The Complete Guide to PCR Failure: From Root Causes to Advanced Troubleshooting for Researchers


Abstract

This guide provides a comprehensive framework for researchers and drug development professionals to understand, diagnose, and resolve Polymerase Chain Reaction (PCR) failures. It explores the fundamental causes of amplification issues, details methodological considerations for various PCR applications, offers systematic troubleshooting protocols for common and unexpected problems, and discusses validation techniques to ensure data accuracy and reproducibility. By synthesizing foundational knowledge with practical optimization strategies and comparative technology analyses, this resource aims to enhance experimental success rates in both basic research and clinical diagnostic settings.

Understanding the Core Principles and Common Causes of PCR Failure

The PCR Process and Critical Points of Failure

The Polymerase Chain Reaction (PCR) is a foundational in vitro technique that revolutionized molecular biology by enabling the exponential amplification of specific DNA sequences. Introduced by Kary Mullis in 1985, for which he was later awarded the Nobel Prize in Chemistry, PCR serves as a cornerstone for biomolecular research and clinical diagnostics [1]. This method allows researchers to generate ample quantities of a targeted DNA segment from minimal starting material, facilitating applications ranging from genetic disorder screening and pathogen detection to forensic analysis and basic research [1] [2]. The core principle involves the thermostable Taq polymerase, isolated from Thermus aquaticus, which synthesizes new DNA strands complementary to the target sequence through repeated thermal cycling [1].

The technique's extreme sensitivity, capable of amplifying 10⁶ to 10⁹ DNA copies from just 1 to 100 ng of input DNA, also renders it susceptible to various failure modes [1]. Challenges such as reaction contamination, suboptimal primer design, and inhibitor carryover can compromise amplification efficiency, specificity, and yield. This guide provides an in-depth examination of the PCR process, systematically analyzes critical points of failure, and offers evidence-based troubleshooting protocols to ensure robust and reliable amplification for researchers, scientists, and drug development professionals.

The Core PCR Process

The standard PCR process is an automated, enzymatic reaction that cycles through three fundamental temperature-dependent steps: denaturation, annealing, and extension. These steps are repeated for 25-40 cycles in a thermal cycler, leading to the exponential amplification of the target DNA region [1] [2].
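The exponential growth described above can be illustrated with a short calculation. This is a minimal sketch that assumes a constant per-cycle efficiency, which real reactions only approximate before reagents become limiting; the function name `theoretical_copies` and the `efficiency` parameter are illustrative and not taken from any cited protocol.

```python
def theoretical_copies(initial_copies: float, cycles: int, efficiency: float = 1.0) -> float:
    """Idealized copy number after a number of cycles.

    efficiency = 1.0 means perfect doubling every cycle (an assumption;
    real reactions plateau in later cycles).
    """
    if not 0.0 <= efficiency <= 1.0:
        raise ValueError("efficiency must be between 0 and 1")
    return initial_copies * (1.0 + efficiency) ** cycles


if __name__ == "__main__":
    # Compare ideal doubling with a reaction running at 90% efficiency.
    for n in (25, 30, 40):
        ideal = theoretical_copies(100, n)
        reduced = theoretical_copies(100, n, efficiency=0.9)
        print(f"{n} cycles: ideal {ideal:.2e}, at 90% efficiency {reduced:.2e}")
```

Even a modest drop in per-cycle efficiency compounds over 25-40 cycles, which is one reason small setup problems can show up as large differences in yield.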

The Three Fundamental Steps

- Denaturation: The reaction mixture is heated to 94–95°C for 20–30 seconds, causing the double-stranded DNA template to separate into single strands by breaking the hydrogen bonds between complementary bases. This provides the necessary single-stranded templates for primer binding [1] [3].

- Annealing: The temperature is lowered to a defined range, typically 55–72°C for 20–40 seconds, allowing the forward and reverse primers to hybridize to their complementary sequences on the single-stranded DNA templates. The optimal annealing temperature is critical for specificity and is usually 3–5°C below the calculated melting temperature (Tm) of the primers [1] [4] [3].

- Extension: The temperature is raised to 72°C, the optimal temperature for Taq polymerase activity. During this step, which lasts ~60 seconds per kilobase of amplicon length, the DNA polymerase synthesizes new DNA strands by adding nucleotides to the 3' ends of the annealed primers, creating double-stranded DNA copies [1] [3].
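The two rules of thumb in the steps above (annealing 3–5°C below the primer Tm, and about 60 seconds of extension per kilobase) can be turned into a small helper. This is a sketch only: the function names are hypothetical, and using the lower Tm of the primer pair as the reference is an assumption rather than a rule stated in the text.

```python
def annealing_range(forward_tm_c: float, reverse_tm_c: float) -> tuple:
    """Suggested (low, high) annealing temperatures in deg C.

    Assumption: the primer with the lower Tm limits the reaction,
    so the 3-5 deg C offset is applied to that value.
    """
    reference_tm = min(forward_tm_c, reverse_tm_c)
    return (reference_tm - 5.0, reference_tm - 3.0)


def extension_seconds(amplicon_bp: int, seconds_per_kb: float = 60.0) -> float:
    """Extension time scaled linearly with amplicon length."""
    if amplicon_bp <= 0:
        raise ValueError("amplicon length must be positive")
    return amplicon_bp / 1000.0 * seconds_per_kb


if __name__ == "__main__":
    low, high = annealing_range(60.0, 62.0)
    print(f"Try annealing between {low} and {high} deg C")
    print(f"Extension for a 1.5 kb amplicon: {extension_seconds(1500)} s")
```

The output is a starting range for empirical optimization (for example with a gradient cycler), not a guaranteed optimum.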

Reaction Components

A successful PCR requires a precise mixture of several key components, each playing a critical role [3]:

- Template DNA: The source DNA containing the target sequence to be amplified.

- Primers: Short, single-stranded DNA oligonucleotides (typically 18-25 nucleotides) that define the 5' and 3' boundaries of the target sequence.

- Thermostable DNA Polymerase (e.g., Taq): The enzyme that catalyzes the synthesis of new DNA strands.

- Deoxynucleoside Triphosphates (dNTPs): The building blocks (dATP, dCTP, dGTP, dTTP) for new DNA synthesis.

- Reaction Buffer: Provides the optimal chemical environment (pH, ionic strength) for polymerase activity.

- Divalent Cations (Mg²⁺): An essential cofactor for DNA polymerase function, with its concentration often requiring optimization [5] [6].

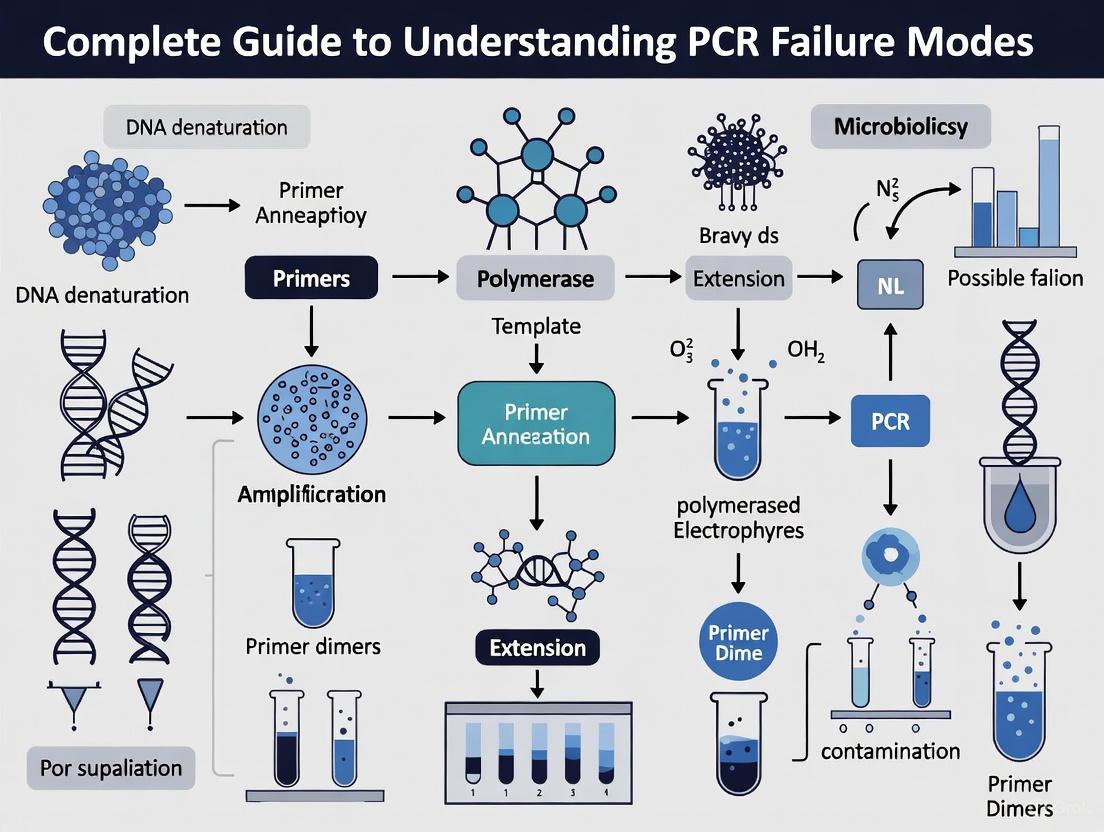

The following diagram illustrates the logical workflow and component relationships in a standard PCR setup.

Critical Points of Failure and Troubleshooting

PCR failures can manifest as absent or low yield, non-specific amplification, or the formation of primer-dimers. Understanding the root causes is essential for effective troubleshooting.

Common PCR Problems, Causes, and Solutions

The table below summarizes the most common PCR failure modes, their potential causes, and recommended solutions.

Table 1: Comprehensive Guide to PCR Troubleshooting

| Problem Symptom | Root Causes | Recommended Solutions |
|---|---|---|
| No Amplification or Low Yield [5] [6] | • Insufficient template DNA quantity/quality [5] • Poor primer design or degradation [5] • Suboptimal Mg²⁺ concentration [5] • Low polymerase activity or amount [5] • PCR inhibitors present (e.g., phenol, EDTA) [1] [5] | • Verify DNA concentration and purity (A260/A280) [6] • Check primer integrity and redesign if necessary [5] • Optimize Mg²⁺ concentration (0.5-5.0 mM) [5] [3] • Increase amount of DNA polymerase [5] • Re-purify DNA template; use inhibitor-tolerant polymerases [1] [5] |
| Non-Specific Bands/Background [5] [6] | • Annealing temperature too low [5] • Excess primers, enzyme, or Mg²⁺ [5] • Too many thermal cycles [5] • Non-specific primer binding | • Increase annealing temperature incrementally [5] • Use a hot-start DNA polymerase [5] [4] • Optimize reagent concentrations [5] • Reduce cycle number [5] • Employ Touchdown PCR [4] |
| Primer-Dimer Formation [5] [6] [3] | • Primer 3'-end complementarity [3] • Excessively high primer concentration [5] • Overlong annealing time or low temperature [5] | • Redesign primers to avoid 3' complementarity [3] • Lower primer concentration (0.1-1 μM) [5] • Increase annealing temperature; shorten annealing time [5] |
| Smearing or Heterogeneous Products [6] | • Degraded template DNA [5] [6] • Excess template DNA [5] • Contamination from previous PCR products [6] • Too long extension time [6] | • Assess DNA integrity by gel electrophoresis [5] • Reduce input DNA quantity [5] • Use separate pre- and post-PCR work areas [6] • Optimize extension time [5] |

Advanced Methodologies for Challenging Targets

Standard PCR protocols often require modification to address specific experimental challenges.

- Hot-Start PCR: This method uses antibody-based, affibody, or chemically modified DNA polymerases that remain inactive at room temperature. This prevents non-specific amplification and primer-dimer formation during reaction setup, which is especially critical for multiplex PCR. The enzyme is activated during the initial high-temperature denaturation step [5] [4].

- Touchdown PCR: This strategy begins with an annealing temperature several degrees above the primer's Tm to ensure high stringency and prevent non-specific binding in the initial cycles. The annealing temperature is then gradually decreased (e.g., 1°C per cycle) until it reaches the optimal temperature. This enriches the desired specific amplicon early in the process [4].

- GC-Rich PCR: Amplifying GC-rich templates (>65%) is challenging due to strong hydrogen bonding and secondary structures. Using PCR additives like DMSO, formamide, or betaine (0.5 M to 2.5 M) helps denature these stable structures. Highly processive and hyperthermostable DNA polymerases that withstand higher denaturation temperatures (e.g., 98°C) are also beneficial [5] [4] [3].

- Long-Range PCR: For targets longer than 5 kb, specialized DNA polymerase blends are used. These blends combine a polymerase with high processivity for efficient elongation and a proofreading enzyme for accuracy. Additionally, extension times must be prolonged according to the amplicon length [5] [4].
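The touchdown strategy in the list above amounts to a simple per-cycle temperature schedule. The sketch below generates one, assuming a fixed step per cycle followed by a hold at the final temperature; the function name and its default values are illustrative assumptions, not settings from a specific instrument or kit.

```python
def touchdown_schedule(start_c: float, final_c: float,
                       step_c: float = 1.0, total_cycles: int = 35) -> list:
    """Annealing temperature for each cycle of a touchdown program.

    The temperature drops by step_c every cycle until it reaches final_c,
    then stays at final_c for the remaining cycles.
    """
    if start_c < final_c:
        raise ValueError("start temperature must not be below the final temperature")
    if step_c <= 0:
        raise ValueError("step must be positive")
    temps = []
    current = start_c
    for _ in range(total_cycles):
        temps.append(current)
        current = max(final_c, current - step_c)
    return temps


if __name__ == "__main__":
    # Example: start 5 deg C above the target annealing temperature of 60 deg C.
    for cycle, temp in enumerate(touchdown_schedule(65, 60, total_cycles=10), start=1):
        print(f"cycle {cycle:2d}: anneal at {temp} deg C")
```

Most thermal cyclers accept this kind of program directly as a per-cycle temperature decrement, so the list is mainly useful for planning and documenting the run.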

The following workflow provides a systematic approach for diagnosing and resolving the most frequent PCR issues.

Essential Protocols for Robust PCR

Basic PCR Setup Protocol

This protocol is adapted for a standard 50 µL reaction volume and serves as a reliable starting point for most applications [3].

- Preparation: Thaw all PCR reagents (except the polymerase) completely and mix thoroughly. Keep them on ice throughout the setup. Wear gloves to prevent contamination.

- Master Mix Preparation: For multiple reactions, prepare a Master Mix in a sterile 1.5 mL microcentrifuge tube to minimize pipetting errors and ensure consistency. Combine the components in the following order:

  - Sterile Nuclease-Free Water (Q.S. to 50 µL)

  - 10X PCR Buffer (5 µL)

  - 10 mM dNTP Mix (1 µL)

  - 25 mM MgCl₂ (volume optimized, typically 1.5-3 µL)

  - 20 µM Forward Primer (1 µL)

  - 20 µM Reverse Primer (1 µL)

  - Template DNA (variable, 1-1000 ng)

  - Taq DNA Polymerase (0.5-2.5 Units)

- Mixing and Aliquoting: Gently mix the Master Mix by pipetting up and down at least 20 times. Dispense the appropriate volume into individual 0.2 mL PCR tubes. Add template DNA to each sample tube. For the negative control, add an equivalent volume of water instead of template.

- Thermal Cycling: Place the tubes in a pre-heated thermal cycler and run the following standard program:

  - Initial Denaturation: 94–95°C for 2–5 minutes.

  - Amplification (25–35 cycles):

    - Denaturation: 94–95°C for 20–30 seconds.

    - Annealing: 55–72°C (primer-specific) for 20–40 seconds.

    - Extension: 72°C for 60 seconds per kilobase.

  - Final Extension: 72°C for 5–10 minutes.

  - Hold: 4–10°C ∞.
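The master mix arithmetic from the setup protocol can be scripted to reduce calculation errors. The sketch below uses the per-reaction volumes listed above and makes several assumptions that are not in the protocol: template is added separately to each tube, the polymerase volume per reaction is 0.25 µL (this depends on the stock concentration of the enzyme used), and a 10% overage is prepared to cover pipetting losses. All function names are hypothetical.

```python
def master_mix(n_reactions: int, template_ul: float = 1.0, mgcl2_ul: float = 1.5,
               polymerase_ul: float = 0.25, reaction_ul: float = 50.0,
               overage: float = 0.10) -> dict:
    """Total volume (in microliters) of each master mix component.

    Template is excluded because it is added to individual tubes.
    polymerase_ul and overage are assumed values; adjust to the reagents in use.
    """
    per_reaction = {
        "10X PCR buffer": reaction_ul / 10.0,
        "10 mM dNTP mix": 1.0,
        "25 mM MgCl2": mgcl2_ul,
        "20 uM forward primer": 1.0,
        "20 uM reverse primer": 1.0,
        "Taq DNA polymerase": polymerase_ul,
    }
    water = reaction_ul - template_ul - sum(per_reaction.values())
    if water < 0:
        raise ValueError("component volumes exceed the reaction volume")
    per_reaction["Nuclease-free water"] = water
    scale = n_reactions * (1.0 + overage)
    return {name: round(volume * scale, 2) for name, volume in per_reaction.items()}


def final_concentration(stock_conc: float, stock_ul: float, reaction_ul: float = 50.0) -> float:
    """Final concentration after dilution, in the same unit as stock_conc."""
    return stock_conc * stock_ul / reaction_ul


if __name__ == "__main__":
    for name, volume in master_mix(8).items():
        print(f"{name:24s} {volume:7.2f} uL")
    print("Final MgCl2 (mM):", final_concentration(25.0, 1.5))
    print("Final primer (uM):", final_concentration(20.0, 1.0))
```

With the listed stocks, 1.5–3 µL of 25 mM MgCl₂ in 50 µL corresponds to 0.75–1.5 mM, and 1 µL of 20 µM primer gives 0.4 µM, both inside the ranges recommended in Table 1.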

Protocol for Primer Design and Optimization

Proper primer design is the single most critical factor for PCR success [3].

- Length: Primers should be 15–30 nucleotides long.

- GC Content: Aim for 40–60% GC content.

- Melting Temperature (Tm): The Tm for both primers should be within 52–65°C, and the final Tms should not differ by more than 5°C.

- 3' End Specificity: The 3' end should ideally terminate in a G or C (GC clamp) to increase priming efficiency, but it should not be complementary to the other primer, to prevent primer-dimer formation.

- Specificity Checks: Avoid long runs of a single base and di-nucleotide repeats. Verify primer specificity by running a BLAST search against the appropriate genome database to ensure they are unique to the target.

- Tools: Utilize reputable online tools like NCBI Primer-BLAST or Primer3 for design and validation [3].
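The design rules above lend themselves to a quick automated pre-check before running a full design tool. The sketch below is illustrative only: the function names are hypothetical, and the Tm estimate uses the Wallace rule (2°C per A/T, 4°C per G/C), a rough approximation that is not part of this guide and is less accurate than the nearest-neighbor models used by Primer3 or Primer-BLAST.

```python
def gc_content(seq: str) -> float:
    """Percentage of G and C bases in the primer."""
    seq = seq.upper()
    return 100.0 * sum(seq.count(base) for base in "GC") / len(seq)


def wallace_tm(seq: str) -> float:
    """Rough Tm estimate in deg C (Wallace rule; an assumption, see lead-in)."""
    seq = seq.upper()
    at = seq.count("A") + seq.count("T")
    gc = seq.count("G") + seq.count("C")
    return 2.0 * at + 4.0 * gc


def check_primer(seq: str) -> list:
    """Return a list of guideline violations (empty if none were found)."""
    seq = seq.upper()
    issues = []
    if not 15 <= len(seq) <= 30:
        issues.append("length outside 15-30 nt")
    if not 40.0 <= gc_content(seq) <= 60.0:
        issues.append("GC content outside 40-60%")
    if seq[-1] not in "GC":
        issues.append("no G or C at the 3' end")
    return issues


def tm_difference(forward: str, reverse: str) -> float:
    """Absolute difference between the two estimated Tm values."""
    return abs(wallace_tm(forward) - wallace_tm(reverse))


if __name__ == "__main__":
    primer = "AGCTGACCTGAGGAGTTCAG"  # made-up sequence for illustration
    print(gc_content(primer), wallace_tm(primer), check_primer(primer))
```

A check like this only screens for obvious guideline violations; specificity still has to be confirmed against the target genome as described above.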

The Scientist's Toolkit: Key Reagents and Materials

The selection of appropriate reagents is fundamental to overcoming common PCR challenges. The following table details essential solutions and their functions.

Table 2: Key Research Reagent Solutions for PCR

| Reagent / Material | Function / Purpose | Application Notes |
|---|---|---|
| Hot-Start DNA Polymerase [5] [4] | Enzyme inactive at room temperature, activated at high temperature. | Critical for: Reducing nonspecific amplification and primer-dimer formation, especially in multiplex PCR. Enables room-temperature setup. |
| PCR Additives / Co-solvents [5] [4] [3] | Modifies DNA melting temperature and reduces secondary structures. | DMSO (1-10%), Formamide (1.25-10%), Betaine (0.5-2.5 M): Essential for amplifying GC-rich templates. Note: They may lower primer Tm. |
| Bovine Serum Albumin (BSA) [6] [3] | Binds to and neutralizes common PCR inhibitors. | Used at 10–100 μg/ml to overcome inhibition from compounds carried over from blood, plant, or soil samples. |
| MgCl₂ or MgSO₄ Solution [5] [3] | Essential cofactor for DNA polymerase activity. | Concentration (0.5-5.0 mM) is critical and often requires optimization. Excess Mg²⁺ can reduce fidelity and cause nonspecific binding. |
| dNTP Mix [5] [3] | Provides the nucleotides (dATP, dCTP, dGTP, dTTP) for DNA synthesis. | Must be used at equimolar concentrations (typically 200 μM of each dNTP) to prevent misincorporation errors. |
| Nuclease-Free Water [1] [3] | Solvent for the reaction, free of contaminating nucleases. | Prevents degradation of primers, template, and PCR products. Essential for reproducible results. |

A deep understanding of the PCR process—from its core principles of thermal cycling and enzymatic synthesis to the nuanced roles of each reaction component—is fundamental for molecular biologists. As this guide has detailed, the critical points of failure are often predictable and manageable. They range from fundamental issues like template integrity and primer design to more subtle optimization requirements for Mg²⁺ concentration and thermal cycling parameters. By applying systematic troubleshooting workflows, leveraging specialized methods like hot-start and touchdown PCR, and utilizing the appropriate reagents from the scientific toolkit, researchers can reliably overcome these challenges. Mastering these aspects ensures the generation of specific, high-yield amplification products, thereby upholding the integrity and reproducibility of experimental data across diverse applications in research and diagnostics.

The success of Polymerase Chain Reaction (PCR) is fundamentally dependent on the quality of the template DNA. Issues related to the integrity, purity, and quantity of the template are predominant causes of PCR failure, leading to unreliable results, failed experiments, and costly delays in research and diagnostic pipelines [6] [5]. For researchers and drug development professionals, a systematic understanding of these failure modes is not merely beneficial—it is essential for robust experimental design and data integrity. This guide provides an in-depth technical examination of template DNA issues, framed within a broader thesis on PCR failure modes, to equip scientists with the knowledge and methodologies to preemptively address these critical challenges.

The Critical Role of Template DNA in PCR

In PCR, the template DNA serves as the blueprint for amplification. The DNA polymerase enzyme relies on this template to synthesize new strands, using primers to define the specific region of interest. The exponential nature of PCR amplification means that any initial imperfections in the template are also amplified, potentially leading to catastrophic failure or misleading results [7].

The core requirements for template DNA are:

- Integrity: The DNA must be structurally intact, with minimal fragmentation or strand breaks, to allow for the full-length amplification of the target sequence.

- Purity: The template solution must be free of contaminants that inhibit the DNA polymerase or interfere with the reaction chemistry.

- Quantity: An optimal and consistent amount of template DNA must be used to ensure efficient amplification without promoting non-specific products.

Failures in any of these areas disrupt the delicate biochemical balance of the PCR reaction. Understanding the specific mechanisms of failure is the first step toward developing effective mitigation strategies.

DNA Integrity: Degradation and Fragmentation

Mechanisms of DNA Degradation

DNA integrity is compromised through several biochemical pathways that cause strand breaks and base modifications. The primary mechanisms include [8]:

- Oxidation: Reactive oxygen species (ROS) modify nucleotide bases, leading to strand breaks. This process is accelerated by exposure to heat or UV radiation.

- Hydrolysis: Water molecules break the phosphodiester bonds in the DNA backbone. This can cause depurination (loss of purine bases), creating abasic sites that stall DNA polymerases.

- Enzymatic Breakdown: Endogenous nucleases, if not properly inactivated during extraction, can rapidly digest DNA.

- Mechanical Shearing: Overly aggressive physical disruption during DNA extraction can fragment DNA, making it unsuitable for long-range PCR.

Impact on PCR and Quantitative Assessment

Degraded DNA directly compromises PCR success. Sheared DNA templates prevent the amplification of longer fragments, as polymerases cannot traverse across breakpoints. Abasic sites and oxidized bases can cause the polymerase to stall or misincorporate nucleotides, leading to truncated products or sequence errors [8] [5].

Table 1: DNA Degradation Pathways and Their Effects on PCR

| Degradation Pathway | Primary Causes | Impact on PCR | Preventive Measures |
|---|---|---|---|
| Oxidation | Heat, UV radiation, reactive oxygen species | Strand breaks; polymerase stalling; false mutations | Use antioxidants; store at -80°C in oxygen-free environment [8] |
| Hydrolysis | Aqueous environments, acidic conditions | Depurination; DNA fragmentation; abasic sites | Store in stable pH buffers; use frozen or anhydrous storage [8] |
| Enzymatic Breakdown | Cellular nucleases (DNases) | Complete DNA digestion; no amplification | Use chelating agents (EDTA); heat inactivation; nuclease inhibitors [8] |
| Mechanical Shearing | Vigorous pipetting, vortexing, bead-beating | DNA fragmentation; inability to amplify long targets | Use gentle isolation methods; optimize homogenization parameters [8] |

Assessment of DNA integrity is typically performed using gel electrophoresis. Intact genomic DNA appears as a tight, high-molecular-weight band, while degraded DNA manifests as a smear of lower molecular weight fragments. For more precise analysis, fragment analyzers or bioanalyzers provide a detailed size distribution profile, which is particularly crucial for next-generation sequencing applications [8].

DNA Purity: Contamination and Inhibition

Common PCR Inhibitors

PCR inhibitors are substances that co-purify with DNA and disrupt the amplification process. They can originate from the original sample (e.g., blood, plant tissue) or be introduced during the DNA extraction process itself [9].

Inhibitors act through several mechanisms:

- Direct Enzyme Inhibition: Certain compounds bind directly to the DNA polymerase, blocking its active site or causing its degradation.

- Cofactor Interference: Inhibitors like EDTA chelate magnesium ions (Mg²⁺), which are essential cofactors for DNA polymerase activity.

- Template Interaction: Substances like humic acid or melanin can bind to the template DNA, preventing primer annealing or polymerase progression [9].

Table 2: Common PCR Inhibitors and Their Sources

| Inhibitor Category | Specific Examples | Common Sources | Mechanism of Inhibition |
|---|---|---|---|
| Organic Compounds | Hemoglobin, lactoferrin, IgG | Blood, serum, plasma | Bind to DNA polymerase [9] |
| Ionic Substances | Heparin | Anticoagulants | Competes with Mg²⁺ binding [9] |
| Plant Compounds | Polyphenols, polysaccharides | Plant tissues | Mimic DNA structure; interfere with polymerase [9] |
| Laboratory Reagents | Phenol, EDTA, SDS, ethanol | DNA extraction kits | Denature polymerase or chelate Mg²⁺ [5] [9] |
| Environmental Samples | Humic acids, heavy metals | Soil, water | Interact with template and polymerase [9] |

Detection and Elimination of Inhibitors

The presence of inhibitors is often suspected when PCR fails despite seemingly adequate DNA concentration. A simple test involves spiking a known, functional PCR reaction with the suspect DNA sample; a reduction in amplification efficiency confirms inhibition [5].

Strategies to overcome inhibition include:

- Dilution: Diluting the template DNA can reduce inhibitor concentration to a level that no longer affects the reaction. A 10- to 100-fold dilution is often effective [9].

- Purification: Re-purifying the DNA using silica-column based methods, ethanol precipitation, or drop dialysis can effectively remove contaminants [5] [10].

- Polymerase Selection: Some DNA polymerases, such as those designed for forensic or plant applications, have higher tolerance to common inhibitors [5] [9].

- Reaction Additives: Adding bovine serum albumin (BSA, 0.1-0.5 μg/μL) can bind to and neutralize inhibitors like phenolics and humic acids. Betaine (0.5-1.5 M) can help destabilize secondary structures and may mitigate some inhibition effects [6].

DNA Quantity: Optimal Input and Quantification

Consequences of Suboptimal Template Quantity

The amount of template DNA in a PCR reaction must be carefully calibrated. Common issues arise from both insufficient and excessive template [5]:

- Insufficient Template (<1 ng for genomic DNA): Leads to no amplification or low yield, as the probability of primer-template encounters is too low for exponential amplification to initiate effectively.

- Excessive Template (>100 ng for genomic DNA): Can lead to non-specific amplification, as the increased number of non-target sequences raises the chance of off-site primer binding. Excess DNA can also carry proportionally more inhibitors.

Accurate Quantification Methods

Accurate DNA quantification is critical for PCR reproducibility. Common methods include:

- Spectrophotometry (A260/A280): Measures absorbance at 260 nm (nucleic acids) and 280 nm (proteins). A pure DNA sample has an A260/A280 ratio of ~1.8. Ratios significantly lower than this suggest protein contamination, while higher ratios may indicate RNA contamination [11].

- Fluorometry: Uses DNA-binding dyes (e.g., PicoGreen) that fluoresce only when bound to DNA. This method is more specific for double-stranded DNA and less susceptible to contaminants than spectrophotometry [6].

- Gel Electrophoresis: Visual assessment of DNA quantity and quality against a DNA mass standard. This method provides a qualitative check of integrity alongside quantification.
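The spectrophotometric check in the list above can be expressed as a short calculation. This sketch relies on the standard conversion of one A260 unit to roughly 50 ng/µL of double-stranded DNA at a 1 cm path length, which is not stated in the text; the cut-offs of 1.7 and 2.0 around the ideal ratio of ~1.8 are illustrative assumptions, as are the function names.

```python
def dsdna_concentration_ng_per_ul(a260: float, dilution_factor: float = 1.0) -> float:
    """Estimate dsDNA concentration from absorbance at 260 nm.

    Assumes 1 A260 unit = 50 ng/uL dsDNA at a 1 cm path length.
    """
    return a260 * 50.0 * dilution_factor


def purity_flag(a260: float, a280: float,
                low_cutoff: float = 1.7, high_cutoff: float = 2.0) -> str:
    """Interpret the A260/A280 ratio; both cut-offs are assumed thresholds."""
    if a280 <= 0:
        raise ValueError("A280 must be positive")
    ratio = a260 / a280
    if ratio < low_cutoff:
        return "possible protein contamination"
    if ratio > high_cutoff:
        return "possible RNA contamination"
    return "acceptable"


if __name__ == "__main__":
    a260, a280 = 0.9, 0.5
    print(f"ratio {a260 / a280:.2f}: {purity_flag(a260, a280)}")
    print(f"concentration: {dsdna_concentration_ng_per_ul(a260, dilution_factor=10)} ng/uL")
```

Because absorbance does not distinguish DNA from RNA or free nucleotides, a fluorometric reading remains the better basis for calculating template input.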

For routine PCR, 10-100 ng of genomic DNA is a standard starting point for a 50 μL reaction. The optimal amount may vary based on template complexity and target abundance [5] [10].
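Converting a measured concentration into a pipetting volume is a frequent source of input errors. The sketch below computes the volume needed for a target mass and flags amounts outside the genomic DNA limits given earlier in this section (below 1 ng or above 100 ng); the function names and the 50 ng default are illustrative assumptions.

```python
def template_volume_ul(concentration_ng_per_ul: float, target_ng: float = 50.0) -> float:
    """Volume of template stock needed to deliver target_ng of DNA."""
    if concentration_ng_per_ul <= 0:
        raise ValueError("concentration must be positive")
    return target_ng / concentration_ng_per_ul


def classify_genomic_input(amount_ng: float) -> str:
    """Classify genomic DNA input using the <1 ng and >100 ng limits."""
    if amount_ng < 1.0:
        return "insufficient"
    if amount_ng > 100.0:
        return "excessive"
    return "within range"


if __name__ == "__main__":
    stock = 25.0  # ng/uL, example value
    print(f"Add {template_volume_ul(stock):.1f} uL for 50 ng of template")
    for amount in (0.5, 50.0, 250.0):
        print(amount, "ng ->", classify_genomic_input(amount))
```

If the calculated volume is too small to pipette accurately, diluting the stock first is usually preferable to pipetting sub-microliter volumes.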

Advanced Protocols for Challenging Samples

The Chloroform-Bead Method for Tough Cell Walls

Mycobacterial species, with their thick, mycolic acid-rich cell walls, present a significant challenge for DNA extraction. A novel Chloroform-Bead (CB) method, validated across 16 laboratories in 2025, demonstrates a universal, high-yield approach [11].

Experimental Workflow:

- Sample Input: A loopful of mycobacterial cells (~10 mg) is transferred to a 2.0 mL screw-cap tube.

- Chemical and Mechanical Lysis: Add 700 μL of 0.1 M NaCl/TE buffer, 500 μL of chloroform, and ~600 mg of 0.2 mm diameter glass beads. Vortex at 2,700 rpm for 7 minutes. Chloroform sterilizes the sample and dissolves lipids, while bead-beating mechanically disrupts the tough cell wall.

- RNase Treatment: Incubate with RNase A for 20 minutes to remove RNA.

- Purification: Perform phenol-chloroform and chloroform extractions using a phase-lock tube for easy separation.

- DNA Precipitation: Precipitate DNA with isopropanol, wash, and resuspend in 100 μL of elution buffer (10 mM Tris-HCl, pH 8.5) [11].

Performance Metrics: This protocol achieved a median DNA yield of 22.2 μg from mycobacteria with high purity (A260/A280 ~1.92), drastically reducing processing time from days to 2 hours while ensuring complete sample sterilization [11].

Optimized Extraction for Degraded or Low-Input Samples

For samples with compromised integrity or limited quantity, such as forensic, ancient DNA, or laser-capture microdissected samples, specialized protocols are required [8].

- Combined Lysis Approaches: Use a combination of chemical agents (e.g., EDTA for demineralization of bone) with controlled mechanical homogenization (e.g., bead beating). This combined approach maximizes recovery while minimizing further damage [8].

- Temperature and pH Control: Maintain digestion temperatures between 55°C and 72°C and carefully control pH throughout extraction to preserve DNA integrity [8].

- Specialized Preservation: For fresh samples, flash-freezing in liquid nitrogen followed by storage at -80°C is the gold standard. When freezing is impossible, use chemical preservatives that stabilize nucleic acids and inhibit nucleases [8].

Diagram: Troubleshooting DNA Extraction for Challenging Samples

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for Template DNA Issues

| Reagent/Material | Function/Application | Technical Notes |
|---|---|---|
| Bead Ruptor Elite | Mechanical homogenizer for tough samples (bone, bacteria) | Allows precise control of speed, cycle duration, and temperature to minimize DNA shearing [8] |
| Hot-Start DNA Polymerase | Reduces non-specific amplification in PCR | Inactive at room temperature; requires high-temp activation. Prevents primer-dimer formation [6] [5] |
| Bovine Serum Albumin (BSA) | PCR additive to counteract inhibitors | Binds to and neutralizes organic inhibitors like phenolics and humics; typical use: 0.1-0.5 μg/μL [6] |
| Phase-Lock Tubes | Facilitates phenol-chloroform extraction | Easy separation of aqueous and organic phases; improves recovery and reduces hands-on time [11] |
| EDTA (Ethylenediaminetetraacetic acid) | Chelating agent in DNA storage buffers | Inhibits nuclease activity by chelating Mg²⁺; common in TE buffer for long-term DNA storage [8] |
| GC Enhancer / Betaine | Additive for difficult templates (GC-rich) | Destabilizes secondary structures; equalizes Tm for more efficient amplification [5] |
| RNase A | Removes RNA contamination from DNA preps | Essential for accurate quantification and purity; used post-lysis in many protocols [11] |

Template DNA integrity, purity, and quantity are not standalone concerns but interconnected variables that collectively determine PCR success. A systematic approach—incorporating rigorous quality control, appropriate quantification methods, and specialized protocols for challenging samples—is fundamental to reliable molecular diagnostics and research. As PCR continues to be a cornerstone technology in life sciences and drug development, a deep and practical understanding of these template-related failure modes will remain an essential component of the scientist's expertise.

Primer Design Flaws and Binding Site Complications

Polymerase Chain Reaction (PCR) is a foundational technique in molecular biology, yet its success is critically dependent on the meticulous design of oligonucleotide primers. Flawed primer design or complications at the primer binding site represent a predominant cause of PCR failure, leading to issues such as no amplification, non-specific products, or primer-dimer formation [12] [6]. These pitfalls can confound experimental results, waste valuable resources, and impede research and diagnostic progress. This guide provides an in-depth technical examination of common primer design flaws and binding site complications, offering researchers a systematic framework for troubleshooting and optimization. By understanding these failure modes, scientists can enhance the reliability and efficiency of their PCR assays, which is particularly crucial in high-stakes environments like drug development and clinical diagnostics.

Common Primer Design Flaws

The design process is the first and most critical defense against PCR failure. Several specific flaws can compromise primer efficacy.

Thermodynamic Instability and Mismatched Melting Temperatures

A fundamental requirement for efficient amplification is that both primers in a pair bind with similar affinity at a common annealing temperature. This is governed by their melting temperature (Tm), the temperature at which half of the DNA duplex dissociates. Paired primers should have Tm values within 1–5°C of each other; a larger difference can result in one primer binding efficiently while the other does not, leading to asymmetric or failed amplification [13] [3]. For quantitative real-time PCR assays, the primer Tm should ideally be between 58–60°C [13]. Furthermore, the 3' end of a primer must be thermally stable to prevent "breathing" (fraying), which can displace the polymerase. Including a G or C base at the 3' end, which forms three hydrogen bonds, effectively "clamps" the end and increases priming efficiency [3] [14].

Table 1: Key Primer Design Parameters and Their Optimal Ranges

| Design Parameter | Optimal Range/Guideline | Consequence of Deviation |
|---|---|---|
| Primer Length | 20–30 nucleotides [3] [14] | Shorter primers reduce specificity; longer primers may have folding issues. |
| Melting Temperature (Tm) | 55–65°C [14]; 52–58°C for conventional PCR [3] | Poor efficiency if too high/low; failed PCR if pair Tm differs >5°C. |
| GC Content | 40–60% [3] [14] | Poor annealing if too low; non-specific binding if too high. |
| 3' End Stability | A G or C base at the 3' end is recommended [3] [14] | "Breathing" or fraying of the ends, reducing polymerase binding. |
| Self-Complementarity | Avoid hairpins and inverted repeats [14] | Primer-dimer formation and self-annealing, reducing target yield. |

Structural Complications: Self-Complementarity and Dimerization

Primers must be specific for the target template and not for themselves or each other. Self-complementarity within a primer can lead to the formation of hairpin loops, which prevents the primer from binding to the template [3]. Similarly, complementarity between the two primers, especially at their 3' ends, facilitates the formation of primer-dimers, where primers anneal to each other and are extended by the polymerase [6]. This consumes reaction reagents and drastically reduces the yield of the desired amplicon. Primer-dimer formation is promoted by high primer concentrations, long annealing times, and low annealing temperatures [6]. Software tools should be used to check for these interactions, and structures with a ΔG (change in free energy) of less than -5 kcal/mol should be avoided [14].
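A first-pass screen for 3'-end complementarity can be done with plain string comparison before turning to thermodynamic tools. The sketch below is deliberately simplified: it only detects perfect antiparallel pairing between the extreme 3' ends of two primers, ignores offset alignments and mismatches, and does not compute ΔG. The function names and the `max_len` parameter are hypothetical.

```python
COMPLEMENT = str.maketrans("ACGT", "TGCA")


def reverse_complement(seq: str) -> str:
    """Reverse complement of a DNA sequence written 5' to 3'."""
    return seq.upper().translate(COMPLEMENT)[::-1]


def three_prime_overlap(primer_a: str, primer_b: str, max_len: int = 8) -> int:
    """Longest k (up to max_len) for which the last k bases of primer_a
    pair perfectly, in antiparallel orientation, with the last k bases
    of primer_b. Returns 0 if the 3' ends do not pair at all.
    """
    a = primer_a.upper()
    rc_b = reverse_complement(primer_b)
    best = 0
    # The 3' ends pair when a suffix of a equals the matching prefix of rc(b).
    for k in range(1, min(max_len, len(a), len(rc_b)) + 1):
        if a[-k:] == rc_b[:k]:
            best = k
    return best


if __name__ == "__main__":
    forward = "AAAAGGCC"  # toy sequences for illustration
    reverse = "TTTTGGCC"
    print("3' overlap:", three_prime_overlap(forward, reverse))
```

Calling the function with the same primer for both arguments gives a crude check for self-dimerization at the 3' end; candidates that pass should still be evaluated with a tool that reports ΔG.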

Sequence-Related Pitfalls

Even primers with appropriate Tm and no self-complementarity can fail if their sequence composition is problematic. Runs of a single base (e.g., AAAAA) or di-nucleotide repeats (e.g., GCGCGC) can cause the primer to "slip" along the template during annealing, leading to mispriming and heterogeneous products [3]. Furthermore, primers designed to low-complexity sequence regions may not be unique in the genome, resulting in amplification of non-target sequences [13]. If an alternative region cannot be selected, one strategy is to use longer primers with a higher Tm to increase specificity, though this may require subsequent optimization of thermal cycling conditions [13].
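Runs and dinucleotide repeats of the kind described above are easy to detect with regular expressions. In this sketch the thresholds (four or more identical bases, three or more copies of a dinucleotide) are illustrative assumptions, since the text gives examples but no numeric limits; the function name is hypothetical.

```python
import re


def find_low_complexity(seq: str, min_run: int = 4, min_dinuc_repeats: int = 3) -> list:
    """Return (kind, matched_text) tuples for single-base runs and
    dinucleotide repeats that meet the given minimum lengths.
    """
    seq = seq.upper()
    # One base followed by at least (min_run - 1) copies of itself.
    run_re = re.compile(r"([ACGT])\1{%d,}" % (min_run - 1))
    # Two different bases followed by at least (min_dinuc_repeats - 1) more copies.
    dinuc_re = re.compile(
        r"([ACGT])(?!\1)([ACGT])(?:\1\2){%d,}" % (min_dinuc_repeats - 1)
    )
    hits = [("run", m.group(0)) for m in run_re.finditer(seq)]
    hits += [("dinucleotide repeat", m.group(0)) for m in dinuc_re.finditer(seq)]
    return hits


if __name__ == "__main__":
    for primer in ("ACGTAAAAACGT", "TTGCGCGCAA", "AGCTGACCTGAGGAGTTCAG"):
        print(primer, find_low_complexity(primer))
```

Flagged primers are not necessarily unusable, but they are good candidates for redesign or for shifting the binding site by a few bases.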

Binding Site Complications and Template Issues

The context in which the primer is designed to bind is as important as the primer itself. Complications at the binding site can thwart even a perfectly designed primer.

Target Secondary Structure

Single-stranded DNA or RNA templates are not linear in solution; they form complex secondary structures such as hairpins and stem-loops through intramolecular base pairing. If a primer binding site is located within such a structure, the energy required to melt the structure may be prohibitive at the assay's annealing temperature, preventing primer binding and causing false negatives [15]. This is a particularly significant challenge in reverse transcription PCR (RT-PCR) where RNA templates are used. Advanced software that uses multi-state thermodynamic models (beyond simple two-state predictions) can solve these coupled equilibria to accurately predict the amount of primer bound to its structured target, thereby improving assay sensitivity [15].

Template Sequence Quality and Inaccuracies

The accuracy of the template sequence used for primer design is paramount. Sequence discrepancies or inaccuracies in public databases can lead to primers that do not match the actual target, resulting in failed assays [13]. This is especially relevant when working with gene families with high homology, or when single nucleotide polymorphisms (SNPs) are present within the primer binding site. A mismatch, particularly at the 3' end of the primer, can severely reduce or prevent polymerase extension. To mitigate this, it is critical to use curated sequences from databases like NCBI and dbSNP and to verify the template sequence through multiple sequencing reactions [13]. If a region with a known SNP must be targeted, one strategy is to increase the primer length without raising the annealing temperature, which allows for more "wobble" or mismatch tolerance [13].

Genomic DNA Contamination and Amplicon Length

When performing RT-PCR or RT-qPCR on RNA templates, a common source of false positives is the amplification of contaminating genomic DNA (gDNA). To prevent this, primers should be designed to span an exon-exon junction (an intron splice site) [13]. This ensures that amplification will only occur from the processed mRNA template, as the amplicon spanning the junction would not exist in the gDNA. For targets without introns (e.g., from bacteria or viruses), rigorous RNA isolation techniques and DNase treatment are necessary [13]. Finally, amplicon length impacts efficiency. For qPCR assays, amplicons should ideally be 50 to 150 bases long for optimal amplification [13]. Designing primers that generate very long amplicons may lead to poor efficiency, requiring optimization of cycling conditions and reaction components.

Diagram 1: A systematic troubleshooting workflow for diagnosing and resolving common PCR failures related to primer design and binding site complications.

Advanced Applications and Emerging Solutions

As PCR technology evolves to meet more complex diagnostic and research needs, the challenges in primer design have become more sophisticated.

Complications in Multiplex PCR

Multiplex PCR, which amplifies multiple targets in a single reaction, is powerful for pathogen detection, target enrichment, and genotyping. However, it introduces unique challenges beyond single-plex PCR. Primer-amplicon interactions are a major cause of false negatives in multiplex panels; a primer intended for one target can cross-hybridize to a different target's amplicon, leading to shortened, non-amplifiable products and depleting reagents [15]. Similarly, the formation of primer-dimers between different primer sets in the reaction is a significant risk, consuming primers and dNTPs and causing reaction failure [15]. A critical, often overlooked, problem is uneven amplification across targets, often caused by varying degrees of target secondary structure, which makes some binding sites inaccessible relative to others [15]. Solving these problems requires sophisticated software that can model all possible intermolecular interactions and the complex folding of all targets and primers simultaneously.

Degenerate Primers and Error Correction

In applications such as amplifying gene families or identifying novel genes from related species, degenerate primers are used. These primers have several possible bases at certain positions, creating a mixture of primer sequences [16]. The degeneracy of a primer is the number of unique sequences in that mixture, obtained by multiplying the number of allowed bases at each position. While powerful, designing effective degenerate primers is computationally complex, as they must match a maximum number of input sequences without promoting non-specific binding. Programs like HYDEN have been developed to tackle this problem, successfully designing primers with degeneracies as high as 10^10 to amplify novel human olfactory receptor genes [16].
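As a small worked example of the degeneracy definition, the sketch below multiplies the number of bases each IUPAC ambiguity code stands for. The IUPAC code table is standard; the function name and the example primer are hypothetical.

```python
# Number of bases represented by each IUPAC nucleotide code
IUPAC_COUNTS = {
    "A": 1, "C": 1, "G": 1, "T": 1,
    "R": 2, "Y": 2, "S": 2, "W": 2, "K": 2, "M": 2,
    "B": 3, "D": 3, "H": 3, "V": 3,
    "N": 4,
}


def degeneracy(primer: str) -> int:
    """Number of distinct sequences encoded by a degenerate primer."""
    total = 1
    for base in primer.upper():
        total *= IUPAC_COUNTS[base]  # raises KeyError on a non-IUPAC symbol
    return total


# Hypothetical primer: two N positions (4 x 4), one R and one Y (2 x 2)
print(degeneracy("ATGNNRY"))
```

For the hypothetical primer ATGNNRY the result is 4 x 4 x 2 x 2 = 64 distinct sequences, so each individual sequence makes up only 1/64 of the primer pool.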

For ultra-sensitive detection of rare alleles, such as circulating tumor DNA in liquid biopsies, errors introduced during PCR itself become a major bottleneck. Methods like SPIDER-seq address this by using a novel bioinformatics approach to track molecular lineages even when barcodes are overwritten during standard PCR cycles [17]. By constructing a peer-to-peer network of barcodes from daughter strands, the method can generate consensus sequences to reduce errors, enabling detection of mutations at frequencies as low as 0.125% [17]. This represents a significant advance over more laborious and costly ligation-based methods.

Experimental Protocols and Validation

Systematic Primer Validation Workflow

A methodical approach to testing and validating primers is essential after in silico design. The following protocol outlines a step-by-step workflow to experimentally confirm primer specificity and efficiency [3].

Reaction Setup: Prepare a master mix for multiple reactions to minimize pipetting error. For a standard 50 µL reaction, combine the following components in order:

- Sterile Nuclease-Free Water (Q.S. to 50 µL)

- 10X PCR Buffer (5 µL)

- 10 mM dNTP Mix (1 µL, final concentration 200 µM of each dNTP)

- 25 mM MgCl₂ (volume varies; start at 1.5-4.0 mM final concentration)

- 20 µM Forward Primer (1 µL, final concentration 0.4 µM)

- 20 µM Reverse Primer (1 µL, final concentration 0.4 µM)

- DNA Template (1-1000 ng, typically 0.5-5 µL)

- DNA Polymerase (0.5-2.5 units, typically 0.5-1 µL)

Mix gently by pipetting up and down 20 times after adding the polymerase [3].
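The volumes in the list above follow from the dilution relation C1·V1 = C2·V2. The sketch below recomputes them for one 50 µL reaction. The function names are hypothetical, and the 1.5 mM Mg²⁺ target, 2 µL of template, and 0.5 µL of polymerase are assumed values picked from within the ranges given above.

```python
def stock_volume_ul(stock_conc: float, final_conc: float,
                    reaction_volume_ul: float = 50.0) -> float:
    """Solve C1*V1 = C2*V2 for V1; both concentrations must share one unit."""
    return final_conc * reaction_volume_ul / stock_conc


def reaction_recipe(mg_final_mM: float = 1.5, template_ul: float = 2.0,
                    polymerase_ul: float = 0.5,
                    reaction_volume_ul: float = 50.0) -> dict:
    """Per-reaction volumes in µL; water fills the remainder (Q.S.)."""
    recipe = {
        "10X buffer": stock_volume_ul(10, 1, reaction_volume_ul),
        # 10 mM stock expressed in µM so it matches the 200 µM target
        "10 mM dNTP mix": stock_volume_ul(10_000, 200, reaction_volume_ul),
        "25 mM MgCl2": stock_volume_ul(25, mg_final_mM, reaction_volume_ul),
        "20 uM forward primer": stock_volume_ul(20, 0.4, reaction_volume_ul),
        "20 uM reverse primer": stock_volume_ul(20, 0.4, reaction_volume_ul),
        "template": template_ul,       # assumed volume
        "polymerase": polymerase_ul,   # assumed volume
    }
    recipe["water"] = reaction_volume_ul - sum(recipe.values())
    return {name: round(volume, 2) for name, volume in recipe.items()}


for name, volume in reaction_recipe().items():
    print(f"{name}: {volume} µL")
```

If the supplied 10X buffer already contains Mg²⁺, the separate MgCl₂ volume has to be reduced accordingly; this sketch assumes a Mg²⁺-free buffer.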

Thermal Cycling with Gradient Annealing: Use a thermal cycler with a gradient function. A basic cycling program includes:

- Initial Denaturation: 94–98°C for 30–120 seconds.

- Amplification (25–35 cycles):

- Denature: 94–98°C for 15–30 seconds.

- Anneal: Gradient from 5°C below to 5°C above the calculated average Tm, for 15–60 seconds.

- Extend: 72°C (for Taq polymerase) for 1 minute per 1 kb of amplicon.

- Final Extension: 72°C for 5–10 minutes [12] [3].

Product Analysis via Gel Electrophoresis: Analyze 5–10 µL of the PCR product on a 1–2% agarose gel stained with an intercalating dye. A successful reaction should show a single, sharp band of the expected size under UV light. The presence of multiple bands indicates non-specific amplification, a smear may suggest degraded template or primers, and no band indicates a complete failure [6] [3].

Research Reagent Solutions

Table 2: Key Reagents for Troubleshooting Primer-Related PCR Failure

| Reagent / Material | Function / Application | Troubleshooting Purpose |

|---|---|---|

| Hot-Start DNA Polymerase | Enzyme activated only at high temperatures. | Prevents non-specific priming and primer-dimer formation during reaction setup [6]. |

| dNTP Mix | Nucleotide building blocks for DNA synthesis. | Ensure fresh, high-quality dNTPs; suboptimal concentration causes low yield [6]. |

| MgCl₂ Solution | Cofactor essential for DNA polymerase activity. | Optimize concentration (0.5-5.0 mM) to address no amplification (increase) or non-specific bands (decrease) [12] [3]. |

| PCR Additives (e.g., BSA, Betaine, DMSO) | Modifiers of nucleic acid stability and melting behavior. | DMSO/Betaine: Destabilize secondary structure in high-GC targets [3]. BSA: Binds to inhibitors in the reaction [6]. |

| TaqMan Probes (for qPCR) | Fluorogenic probes for specific detection. | For qPCR, the probe Tm should be ~10°C higher than the primer Tm [13]. |

| Molecular Grade BSA | Inert protein additive. | Mitigates the effects of PCR inhibitors present in the sample or reaction [6]. |

Diagram 2: The mechanism of primer-dimer formation and its negative impact on PCR efficiency, alongside key preventive strategies.

Primer design is a critical step that dictates the success or failure of PCR experiments. Common flaws, including thermodynamic imbalances, self-complementarity, and poor sequence choices, are often preventable with careful in silico design. Complications at the binding site, such as target secondary structure and template inaccuracies, require a combination of sophisticated software prediction and empirical validation. As PCR applications expand into multiplex panels and rare allele detection, the principles of robust primer design become even more crucial. By adhering to the guidelines, troubleshooting workflows, and experimental protocols detailed in this technical guide, researchers can systematically overcome these challenges, thereby enhancing the reliability and impact of their work in molecular biology and drug development.

The Polymerase Chain Reaction (PCR) is a foundational technique in molecular biology, enabling the exponential amplification of specific DNA sequences. While the thermal cycling protocol is often the visible face of the process, the true biochemical engine lies in the carefully balanced reaction components. The efficacy of this engine is governed by the intricate interplay between the enzyme (DNA polymerase), the reaction buffer, and essential cofactors. For researchers and drug development professionals, a deep understanding of these components is not merely academic; it is critical for diagnosing amplification failures, optimizing assays for novel targets, and ensuring the reliability of results in diagnostic and research applications. This guide delves into the core reaction components, framing them within the context of PCR failure modes to provide a practical resource for troubleshooting and optimization.

The Central Catalyst: DNA Polymerase

DNA polymerase is the central workhorse of the PCR, responsible for synthesizing new DNA strands by incorporating nucleotides complementary to the template. Its characteristics directly determine the success, fidelity, and yield of the amplification reaction.

Key Attributes and Selection Criteria

Selecting the appropriate DNA polymerase is the first critical step in assay design. The choice should be guided by four key attributes, as detailed in Table 1 [18].

- Thermostability: The enzyme must withstand the repeated high-temperature denaturation steps (typically 94–98°C). The half-life at 95°C is a key metric; for example, Taq polymerase has a half-life of approximately 40 minutes at 95°C, while enzymes from hyperthermophilic organisms, like Deep Vent polymerase, can last for hours at these temperatures [19] [18].

- Fidelity: Fidelity refers to the accuracy of nucleotide incorporation. Standard Taq polymerase lacks proofreading (3'→5' exonuclease) activity and has an error rate of approximately 1.8 x 10⁻⁴, or roughly 1 to 2 errors per 10,000 bases incorporated [18]. For applications like cloning or sequencing, high-fidelity polymerases (e.g., Pfu, Q5) with proofreading capabilities are essential, as they can reduce error rates by 1–2 orders of magnitude [20] [18].

- Processivity: This is the number of nucleotides a polymerase can incorporate per binding event. Higher processivity is beneficial for amplifying long targets (>10 kb) or GC-rich regions that can stall the enzyme. Extension speed, a related but distinct property, also varies between enzymes: native Taq incorporates 10–45 nucleotides per second, whereas engineered polymerases like KAPA2G can achieve speeds around 150 nucleotides per second [18].

- Specificity: Specificity minimizes non-specific amplification and primer-dimer formation. Hot-start polymerases are a crucial solution here. They are rendered inactive at room temperature through antibodies or chemical modifications, preventing spurious activity during reaction setup. Activation occurs only after the initial high-temperature denaturation step [6] [5] [18].
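To give the fidelity figures above some practical meaning, the sketch below estimates the chance that a single copying pass over an amplicon introduces no error. It assumes the quoted error rate applies independently to every base incorporated and considers one duplication only, so it illustrates scale rather than predicting the mutation load after a full PCR. The function name and the 1 kb amplicon are hypothetical.

```python
def fraction_error_free(error_rate: float, amplicon_length: int) -> float:
    """Probability that one copy of the amplicon is synthesized without error.

    Assumes independent errors at a constant per-base rate (a simplification).
    """
    return (1.0 - error_rate) ** amplicon_length


TAQ_ERROR_RATE = 1.8e-4                       # value quoted in the text
PROOFREADING_RATE = TAQ_ERROR_RATE / 10       # assumed 10-fold improvement

for label, rate in [("Taq", TAQ_ERROR_RATE), ("proofreading", PROOFREADING_RATE)]:
    print(f"{label}: {fraction_error_free(rate, 1000):.3f} error-free per pass")
```

Under these assumptions roughly 84% of 1 kb copies made by Taq in one pass are error-free, compared with about 98% for an enzyme that is ten times more accurate. The gap widens with amplicon length and with the number of duplications.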

Table 1: DNA Polymerase Selection Guide for Common Applications

| Application | Recommended Polymerase Type | Key Rationale |

|---|---|---|

| Routine Screening / Genotyping | Standard Taq | Fast, robust, and cost-effective for simple amplifications [20]. |

| Cloning, Sequencing, Mutagenesis | High-Fidelity (e.g., Pfu, Q5) | Proofreading activity ensures low error rates in the final product [20] [5] [18]. |

| Complex Samples (e.g., blood, soil) | Inhibitor-Tolerant / High-Processivity | Engineered to maintain activity in the presence of common PCR inhibitors [5]. |

| Long-Range PCR (>10 kb) | Long-Range / High-Processivity | High processivity and thermostability enable full-length synthesis of long amplicons [5] [21]. |

| Multiplex PCR | Stringent Hot-Start | Prevents primer-dimer formation and off-target amplification when multiple primer sets are used [22]. |

Experimental Protocol: Determining Optimal Enzyme Concentration

The amount of DNA polymerase used in a reaction is a key variable that requires optimization beyond the manufacturer's general recommendations.

- Prepare a Master Mix: Create a master mix containing all standard reaction components (1X buffer, 0.2 mM each dNTP, 0.5 µM each primer, template DNA) sufficient for multiple reactions.

- Dilution Series: Prepare a dilution series of the DNA polymerase in the appropriate storage buffer. A typical starting range is 0.5 to 2.5 units per 50 µL reaction [3].

- Setup Reactions: Aliquot the master mix into PCR tubes and add the varying amounts of polymerase from your dilution series.

- Run PCR: Perform amplification using your standard thermal cycling protocol.

- Analysis: Analyze the PCR products by agarose gel electrophoresis. The optimal concentration is the lowest amount that produces a strong, specific amplicon with minimal background. Excessive enzyme can lead to nonspecific products, while insufficient amounts result in low yield (Figure 2) [19].

The Biochemical Environment: Buffer Composition and Additives

The reaction buffer provides the optimal chemical environment for the DNA polymerase to function and for the primers to anneal to the template. Its composition is a frequent source of PCR failure if not properly optimized.

Core Buffer Components

- Tris-HCl: Provides a stable pH, typically around 8.3, which is optimal for polymerase activity [23].

- Potassium Chloride (KCl): A salt concentration of 35–100 mM promotes primer annealing by neutralizing the negative charges on the phosphate backbones of DNA, facilitating the formation of stable primer-template hybrids [3] [23].

Essential Cofactor: Magnesium Ions (Mg²⁺)

Magnesium is arguably the most critical cofactor in PCR. It serves a dual role: it is an essential cofactor for DNA polymerase activity, and it stabilizes the primer-template complex by neutralizing charge repulsion [19] [20]. The optimal concentration of Mg²⁺ is highly dependent on the specific primer-template system and must be determined empirically.

- Low Mg²⁺ Concentration (<0.5 mM): Results in significantly reduced polymerase activity and can lead to complete amplification failure due to a lack of the essential cofactor [20] [23].

- High Mg²⁺ Concentration (>5 mM): Decreases reaction stringency, leading to non-specific amplification and smeared bands on a gel. It can also reduce fidelity by promoting misincorporation of nucleotides [20] [5] [23].

Table 2: Quantitative Effects of Key Reaction Components on PCR Outcome

| Component | Typical Concentration Range | Effect of Low Concentration | Effect of High Concentration |

|---|---|---|---|

| Mg²⁺ | 0.5 - 5.0 mM [23] [24] | No or poor yield [20] [23] | Nonspecific products, smearing, lower fidelity [20] [5] |

| dNTPs (each) | 0.01 - 0.2 mM [19] [24] | Reduced yield, early plateau [19] | Inhibition, misincorporation (if unbalanced) [19] [5] |

| Primers (each) | 0.1 - 1.0 µM [19] [5] | Low or no amplification [19] | Primer-dimer formation, nonspecific binding [19] [5] |

| DNA Template | 1 pg - 1 µg (varies by type) [19] | Low or no amplification [6] | Nonspecific amplification, inhibitors carryover [5] |

Experimental Protocol: Mg²⁺ Titration

Titrating Mg²⁺ is one of the most effective steps in troubleshooting a failed PCR.

- Stock Solution: Prepare a MgCl₂ or MgSO₄ stock solution (e.g., 25 mM). The choice of salt may depend on the polymerase; for instance, Pfu DNA polymerase often works better with MgSO₄ [5].

- Master Mix: Prepare a master mix without Mg²⁺. Include all other components: 1X buffer (without Mg²⁺), dNTPs, primers, template, polymerase, and water.

- Reaction Setup: Aliquot the master mix into a series of PCR tubes. Add the Mg²⁺ stock solution to each tube to create a final concentration gradient, for example: 0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 4.0, and 5.0 mM.

- Amplification and Analysis: Run the PCR and analyze the products by gel electrophoresis. Identify the concentration that yields the strongest specific product with the cleanest background [20] [3].
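The pipetting volumes for the titration series above can be tabulated with a short script. This is a sketch under stated assumptions: a 50 µL reaction volume (the protocol above does not fix one), a 25 mM Mg²⁺ stock, and a water top-up so that every tube ends at the same volume. The function name is hypothetical.

```python
def mg_titration(final_mM_series, stock_mM: float = 25.0,
                 reaction_volume_ul: float = 50.0) -> list:
    """Return (final mM, µL Mg stock, µL water top-up) for each tube."""
    # The tube with the highest Mg concentration needs the most stock;
    # the others receive water to reach the same added volume.
    max_stock_ul = max(final_mM_series) * reaction_volume_ul / stock_mM
    rows = []
    for final_mM in final_mM_series:
        stock_ul = final_mM * reaction_volume_ul / stock_mM
        rows.append((final_mM, round(stock_ul, 2),
                     round(max_stock_ul - stock_ul, 2)))
    return rows


series = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 4.0, 5.0]
for final_mM, stock_ul, water_ul in mg_titration(series):
    print(f"{final_mM} mM: {stock_ul} µL stock + {water_ul} µL water")
```

With these assumptions each tube receives 10 µL of Mg²⁺ stock plus water, so the Mg²⁺-free master mix should be prepared such that each aliquot is 40 µL.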

PCR Additives and Enhancers

Additives are co-solvents used to modify the reaction environment to overcome challenges posed by complex templates.

- DMSO (Dimethyl Sulfoxide): Used at 1-10% (v/v), DMSO disrupts base pairing and is particularly effective for denaturing GC-rich templates (>60% GC) by interfering with secondary structure formation. Concentrations above 2% can inhibit some polymerases [20] [23] [21].

- Betaine: Used at 0.5 M to 2.5 M, betaine (N,N,N-trimethylglycine) equalizes the thermodynamic stability of GC and AT base pairs, facilitating the amplification of GC-rich regions and long targets [20] [23].

- BSA (Bovine Serum Albumin): At concentrations of 10-100 µg/mL, BSA acts as a stabilizer and can bind PCR inhibitors commonly found in complex samples like blood, soil, or plant tissues [6] [22] [23].

- Formamide: Like DMSO, formamide (1-10%) destabilizes DNA secondary structures and can increase stringency, but it can also be inhibitory at higher concentrations [23].

Integrated Troubleshooting: Connecting Components to Failure Modes

PCR failures can often be traced back to the suboptimal performance of one or more reaction components. The following diagram and table provide a systematic approach to diagnosing these failures.

Diagram 1: A diagnostic map linking common PCR failure modes to their potential root causes in reaction components and conditions.

Table 3: Troubleshooting Guide for PCR Failure Modes

| Failure Mode | Primary Component Causes | Recommended Solutions |

|---|---|---|

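| No/Low Yield | Mg²⁺ too low; insufficient polymerase; primer, dNTP, or template concentration too low; inhibitors carried over with the template | Titrate Mg²⁺ upward; increase enzyme, primer, or template within the typical ranges; add BSA or use an inhibitor-tolerant polymerase |

| Non-Specific Bands / Smearing | Mg²⁺ too high; excess polymerase, primers, or template | Titrate Mg²⁺ downward; reduce enzyme, primer, or template amount; use a hot-start polymerase |

| Primer-Dimer Formation | Primer concentration too high; polymerase active during reaction setup | Lower the primer concentration; use a hot-start polymerase |

| Low Fidelity (Errors in Product) | Non-proofreading polymerase; Mg²⁺ too high; unbalanced dNTPs | Use a high-fidelity (proofreading) polymerase; reduce Mg²⁺; use a fresh, equimolar dNTP mix |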

The Scientist's Toolkit: Essential Reagents and Materials

The following table catalogues the key reagents required for setting up and optimizing PCR from the perspective of reaction components.

Table 4: Research Reagent Solutions for PCR Setup and Optimization

| Reagent | Function | Key Considerations |

|---|---|---|

| DNA Polymerase | Catalyzes the template-dependent synthesis of new DNA strands. | Select based on fidelity, thermostability, processivity, and specificity (hot-start) for the application [18]. |

| 10X Reaction Buffer | Provides the optimal pH and ionic strength for polymerase activity and primer annealing. | Often supplied with the enzyme; may or may not contain Mg²⁺ [3] [18]. |

| MgCl₂ or MgSO₄ Solution | Essential cofactor for DNA polymerase; stabilizes primer-template binding. | Concentration must be optimized; the type of salt (Cl vs. SO₄) can affect some polymerases [5] [23]. |

| dNTP Mix | Provides the nucleotide building blocks (dATP, dCTP, dGTP, dTTP) for DNA synthesis. | Must be equimolar and free of degradation from multiple freeze-thaw cycles [19] [5]. |

| Oligonucleotide Primers | Short, single-stranded DNA sequences that define the start and end points of amplification. | Must be well-designed (length, Tm, GC%) and used at an optimized concentration (0.1-1 µM) [19] [3]. |

| Nuclease-Free Water | Solvent for the reaction; ensures no enzymatic degradation of components. | Critical for preventing reaction failure due to contaminating nucleases. |

| PCR Additives (e.g., DMSO, Betaine) | Co-solvents that help denature complex secondary structures in the template DNA. | Use at the lowest effective concentration to minimize polymerase inhibition [20] [23]. |

| Template DNA | The target sequence to be amplified. | Quality and quantity are paramount; must be free of inhibitors [19] [21]. |

A meticulous understanding of PCR reaction components—enzymes, buffer conditions, and cofactors—is fundamental to overcoming the failure modes that researchers routinely encounter. The DNA polymerase dictates the speed, accuracy, and scope of the amplification. The buffer system, critically influenced by Mg²⁺ concentration, creates the biochemical environment for specificity and efficiency. By systematically approaching these components, as outlined in the troubleshooting guides and protocols herein, scientists can transform a failing PCR into a robust and reliable assay. This knowledge is indispensable for advancing research and development in fields ranging from fundamental genetics to targeted drug discovery.

Thermal Cycling Parameters and Their Impact on Specificity

In polymerase chain reaction (PCR) optimization, thermal cycling parameters are the adjustable physical conditions that govern the denaturation, annealing, and extension of DNA during amplification. These parameters—temperature, time, and cycle number—exert a profound influence on reaction specificity, which is the ability to amplify only the intended target sequence without generating non-specific products such as primer-dimers or spurious amplicons [25]. For researchers and drug development professionals, mastering these parameters is not merely beneficial but essential for generating reliable, reproducible data in applications ranging from clinical diagnostics to next-generation sequencing library preparation. Failure to optimize thermal cycling is a primary failure mode in PCR, often leading to inconclusive results, failed experiments, and costly delays [25] [26]. This guide provides an in-depth examination of these critical parameters and their practical optimization for ensuring specificity.

Core Thermal Cycling Parameters

The standard PCR cycle consists of three fundamental steps: denaturation, annealing, and extension. Each step's parameters must be carefully controlled to favor specific amplification.

Denaturation Conditions

Denaturation is the process of separating double-stranded DNA into single strands, making the template accessible to primers. This step is typically performed at 94–98°C for 15 seconds to 3 minutes [27] [26].

- Incomplete denaturation results in poor amplification efficiency because primers and polymerase cannot access the template [27].

- Excessive denaturation (prolonged time or excessively high temperature) can degrade the DNA template and reduce polymerase activity, especially for enzymes less thermostable than Taq [27] [26].

For GC-rich templates (>65% GC content), which form more stable duplexes, a higher denaturation temperature (e.g., 98°C) or a longer initial denaturation time (3-5 minutes) is often necessary for complete strand separation [27]. The presence of buffer additives like DMSO or formamide can facilitate denaturation of such challenging templates [27].

Annealing Temperature Optimization

The annealing step is arguably the most critical for specificity. Here, primers bind to their complementary sequences on the template DNA. The annealing temperature (Ta) must be stringently controlled.

- Too low a Ta permits non-specific binding, where primers anneal to partially complementary sites, leading to amplification of unintended products and background "smearing" [20] [27] [26].

- Too high a Ta prevents primer binding altogether, resulting in low or no yield of the desired product [20].

The optimal Ta is intrinsically linked to the primer melting temperature (Tm), the temperature at which 50% of the primer-DNA duplex dissociates [27]. A standard starting point is to set the Ta 3–5°C below the calculated Tm of the primers [27] [28]. Tm can be calculated using several formulas, with the Nearest Neighbor method being the most accurate as it considers sequence context and reagent concentrations [27].

Table 1: Common Formulas for Calculating Primer Tm

| Formula | Calculation | Considerations |

|---|---|---|

| Basic Rule of Thumb [28] | Tm = 4(G + C) + 2(A + T) | Simple but less accurate; does not account for salt or primer concentration. |

| Salt-Adjusted Formula [27] | Tm = 81.5 + 16.6(log[Na+]) + 0.41(%GC) – 675/primer length | More accurate as it incorporates salt concentration into the calculation. |

| Nearest Neighbor Method [27] | Uses thermodynamic stability of dinucleotide pairs with salt and primer concentrations. | Most accurate method; typically employed by commercial primer design software. |
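The first two formulas in the table are simple enough to compute directly. The sketch below implements them and derives a starting annealing range of 3–5°C below the Tm. It assumes that the logarithm is base 10 and that [Na+] is given in mol/L; the 50 mM default, the function names, and the example 20-mer are illustrative assumptions. The Nearest Neighbor method needs thermodynamic parameter tables and is not reproduced here.

```python
import math


def tm_basic(primer: str) -> float:
    """Rule of thumb: Tm = 4(G + C) + 2(A + T), in °C."""
    seq = primer.upper()
    gc = seq.count("G") + seq.count("C")
    at = seq.count("A") + seq.count("T")
    return 4 * gc + 2 * at


def tm_salt_adjusted(primer: str, na_molar: float = 0.05) -> float:
    """Tm = 81.5 + 16.6*log10[Na+] + 0.41*(%GC) - 675/length, in °C."""
    seq = primer.upper()
    gc_percent = 100.0 * (seq.count("G") + seq.count("C")) / len(seq)
    return (81.5 + 16.6 * math.log10(na_molar)
            + 0.41 * gc_percent - 675.0 / len(seq))


def starting_ta_range(tm: float) -> tuple:
    """Starting annealing window: 5°C to 3°C below the Tm."""
    return (tm - 5.0, tm - 3.0)


primer = "ATGCATGCATGCATGCATGC"  # hypothetical 20-mer, 50% GC
print(tm_basic(primer))
print(round(tm_salt_adjusted(primer), 2))
print(starting_ta_range(tm_basic(primer)))
```

For this hypothetical primer the two formulas differ by more than 10°C (60°C versus about 46.7°C at 50 mM Na+), which illustrates why calculated values are treated as a starting point for empirical optimization.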

Extension Parameters

During extension, the DNA polymerase synthesizes a new DNA strand. The key parameters are temperature and time.

- Temperature: The extension temperature is typically set to the optimum for the polymerase enzyme, often 72°C for Taq polymerase [27] [26].

- Time: Extension time is directly proportional to the length of the amplicon. A general guideline is 1 minute per kilobase (kb) for Taq polymerase [27] [28]. However, "fast" polymerases may require significantly less time [27].

Using an excessively long extension time offers no benefit and can increase opportunities for non-specific amplification [28]. For amplicons shorter than 1 kb, the extension time can be reduced to as little as 15-20 seconds [28].

Cycle Number

The number of PCR cycles (typically 25–35) influences product yield and specificity [27].

- Too few cycles result in insufficient product for detection.

- Too many cycles (>45) promote the accumulation of non-specific products and primer-dimers as reaction components are depleted and the reaction enters a plateau phase. In these late cycles, shorter non-specific products are amplified preferentially and outcompete the longer target [27].
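The trade-off between cycle number and yield can be illustrated with the idealized exponential model N = N0·(1 + E)^n, where E is the per-cycle efficiency (1.0 means perfect doubling). This textbook relation is not stated in the sources cited above and is used here as an assumption; it ignores the plateau phase entirely, so it overestimates yield at high cycle numbers. The function name and input values are hypothetical.

```python
def theoretical_copies(start_copies: float, cycles: int,
                       efficiency: float = 1.0) -> float:
    """Idealized product copies after a number of cycles (no plateau)."""
    return start_copies * (1.0 + efficiency) ** cycles


# Hypothetical input: 100 template copies at 90% per-cycle efficiency
for cycles in (20, 25, 30, 35):
    print(cycles, f"{theoretical_copies(100, cycles, 0.9):.2e}")
```

Because each additional cycle multiplies the product by (1 + E), reagents are exhausted long before 45 cycles in most reactions, which is why extra cycles mainly add background rather than specific product.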

Table 2: Summary of Core Thermal Cycling Parameters and Their Impact on Specificity

| Parameter | Typical / Optimal Range | Effect of Sub-Optimal Condition on Specificity |

|---|---|---|

| Denaturation | 94–98°C, 15 sec - 3 min [27] [26] | Too low/time too short: Incomplete denaturation lowers efficiency. Too high/time too long: Enzyme/template degradation. |

| Annealing Temperature (Ta) | Tm of primers - (3–5°C) [27] [28] | Too low: High non-specific amplification. Too high: Low/no specific yield. |

| Extension Time | 1 min/kb (Taq polymerase) [27] [26] | Too short: Incomplete products. Too long: Increased non-specific products. |

| Cycle Number | 25–35 cycles [27] | Too few: Low product yield. Too many (>45): High non-specific background and plateau. |

The following diagram illustrates the logical relationship between these thermal cycling parameters and the outcome of a PCR assay, highlighting the path to achieving high specificity.

Advanced Optimization Techniques

Empirical Optimization with Gradient PCR

While calculations provide a starting point, the optimal annealing temperature is often determined empirically. A gradient thermal cycler is an indispensable tool for this process, allowing a single PCR run to test a range of annealing temperatures across different wells of the reaction block [27]. The optimal Ta is identified as the highest temperature that produces a strong, specific amplicon band and the lowest background of non-specific products on a gel [20] [27]. Modern "better-than-gradient" thermal cyclers with separate heating/cooling units for different block sections provide superior temperature precision for this optimization [27].

Touchdown PCR

Touchdown PCR is a powerful technique to enhance specificity, particularly when the optimal Ta is unknown [28]. The protocol begins with an annealing temperature 1–2°C above the estimated Tm and systematically decreases the Ta by 0.5–1.0°C every cycle or every few cycles until it reaches a final, lower "touchdown" temperature [28]. The initial high-temperature cycles are highly stringent, favoring only the most perfectly matched primer-template binding. This ensures that the specific target is preferentially amplified during the early stages of the reaction. Once amplified, this specific product outcompetes non-specific targets in subsequent, less stringent cycles, thereby maximizing the yield of the correct product [28].
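The stepwise temperature drop described above can be written out as a per-cycle schedule. The sketch below is one possible parameterization, not a validated protocol: the 62°C start (2°C above an assumed Tm of 60°C), the 55°C final temperature, the 1°C step, and the function name are all assumptions for illustration.

```python
def touchdown_schedule(start_ta: float, final_ta: float, step: float = 1.0,
                       cycles_per_step: int = 1,
                       total_cycles: int = 30) -> list:
    """Annealing temperature for each cycle of a touchdown program."""
    temps = []
    ta = start_ta
    while len(temps) < total_cycles:
        # Hold the current temperature for cycles_per_step cycles.
        for _ in range(cycles_per_step):
            if len(temps) < total_cycles:
                temps.append(round(ta, 1))
        # Step down, but never below the final touchdown temperature.
        ta = max(final_ta, ta - step)
    return temps


schedule = touchdown_schedule(start_ta=62.0, final_ta=55.0)
print(schedule[:10])
```

With these values the annealing temperature falls from 62°C to 55°C over the first eight cycles and then stays at 55°C for the remaining cycles.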

The Scientist's Toolkit: Reagents and Materials

Optimization extends beyond thermal parameters to the chemical composition of the reaction mix. The following table details key reagents and their role in managing reaction specificity.

Table 3: Key Research Reagent Solutions for Optimizing PCR Specificity

| Reagent / Material | Function / Rationale | Optimization Consideration |

|---|---|---|

| High-Fidelity Polymerase (e.g., Pfu, Vent) [25] [20] | Possesses 3'→5' proofreading (exonuclease) activity, which corrects misincorporated nucleotides, lowering error rates by 10-fold compared to Taq [20]. | Essential for cloning, sequencing, and any application requiring high sequence accuracy. Typically has a slower extension rate than Taq. |

| Hot-Start Polymerase [20] [26] | Remains inactive until a high-temperature activation step, preventing primer-dimer formation and non-specific extension during reaction setup at lower temperatures [20]. | A critical tool for improving specificity and yield across many PCR applications. |

| Magnesium Chloride (MgCl₂) [25] [20] [28] | Essential cofactor for DNA polymerase activity. Concentration stabilizes primer-template duplex and affects enzyme fidelity [20]. | Too low (e.g., <0.5 mM): Low efficiency/yield. Too high (e.g., >4 mM): Increased non-specific binding and reduced fidelity. Titrate from 1.5–2.0 mM starting point [20] [28]. |

| dNTPs [28] | Building blocks for new DNA strands. | Concentration affects yield and specificity. Too high (>200 µM): Can reduce specificity. Too low (<50 µM): Reduces yield. A common balance is 50–200 µM each dNTP [28]. |

| Primers [25] [28] | Short DNA sequences complementary to the target, defining the start and end of amplification. | Concentration is critical. Too high (>1 µM): Promotes non-specific binding and primer-dimer formation. Optimal range is typically 0.1–0.5 µM [25] [28]. |

| Buffer Additives (DMSO, Betaine, etc.) [20] [27] | Destabilize DNA secondary structures and homogenize the stability of GC- and AT-rich regions, aiding in denaturation and primer annealing. | Particularly useful for GC-rich templates (>65%). DMSO is typically used at 2–10% and Betaine at 1–2 M. Note: Additives lower the effective Tm, requiring Ta adjustment [20] [27]. |

Experimental Protocol: Gradient PCR for Annealing Temperature Optimization

This protocol provides a detailed methodology for empirically determining the optimal annealing temperature using a gradient thermal cycler [20] [27].

Materials

- Purified DNA template (e.g., 10–40 ng genomic DNA or 1 ng plasmid per reaction).

- Target-specific forward and reverse primers (e.g., 10 µM stock each).

- 2X PCR Master Mix (containing buffer, dNTPs, MgCl₂, and hot-start high-fidelity DNA polymerase).

- Nuclease-free water.

- Gradient thermal cycler.

- Gel electrophoresis equipment.

Procedure

- Calculate Tm: Use the Nearest Neighbor method via primer design software to determine the Tm for your primer pair.

- Prepare Reaction Mix: On ice, prepare a master mix for all reactions plus 10% extra to account for pipetting error.

- Nuclease-free water: To a final volume of 25 µL per reaction.

- 2X PCR Master Mix: 12.5 µL per reaction.

- Forward Primer (10 µM): 0.5 µL per reaction (final conc. 0.2 µM).

- Reverse Primer (10 µM): 0.5 µL per reaction (final conc. 0.2 µM).

- Mix thoroughly by pipetting.

- Aliquot and Add Template: Aliquot 23.5 µL of the master mix into each PCR tube. Add 1.5 µL of DNA template to each tube, cap, and mix by brief centrifugation.

- Program Thermal Cycler: Set up the following program in the gradient thermal cycler.

- Initial Denaturation: 98°C for 30 seconds.

- 35 Cycles:

- Denaturation: 98°C for 10 seconds.

- Annealing: Gradient (e.g., from 55°C to 70°C) for 15 seconds. Set the range so that it spans at least ~5°C above and below the calculated Tm.

- Extension: 72°C for 30 seconds/kb.

- Final Extension: 72°C for 5 minutes.

- Hold: 4°C.

- Run PCR and Analyze: Start the cycler. After completion, analyze 5–10 µL of each reaction using agarose gel electrophoresis. Include a DNA ladder for size determination.
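The master mix arithmetic in the procedure above can be sketched as a short calculation. This is a minimal illustration that uses the per-reaction volumes from the protocol; the function names and the example of 12 reactions are assumptions made here, not part of the cited method.

```python
# Per-reaction volumes in microlitres, taken from the protocol above.
PER_REACTION_UL = {
    "master_mix_2x": 12.5,
    "forward_primer_10uM": 0.5,
    "reverse_primer_10uM": 0.5,
}
FINAL_VOLUME_UL = 25.0
TEMPLATE_UL = 1.5  # added to each tube separately, not part of the master mix


def master_mix_volumes(n_reactions, overage=0.10):
    """Return the total volume (uL) of each master-mix component.

    overage=0.10 adds the 10% extra for pipetting error.
    """
    scale = n_reactions * (1 + overage)
    # Water fills whatever volume is left after reagents and template.
    water_per_rxn = FINAL_VOLUME_UL - TEMPLATE_UL - sum(PER_REACTION_UL.values())
    volumes = {"nuclease_free_water": water_per_rxn * scale}
    for name, vol in PER_REACTION_UL.items():
        volumes[name] = vol * scale
    return {name: round(vol, 2) for name, vol in volumes.items()}


def final_primer_conc_um(stock_um=10.0, added_ul=0.5, final_ul=FINAL_VOLUME_UL):
    """Solve C1 * V1 = C2 * V2 for the final concentration C2."""
    return stock_um * added_ul / final_ul


if __name__ == "__main__":
    for name, vol in master_mix_volumes(12).items():
        print(f"{name}: {vol} uL")
    print("final primer concentration (uM):", final_primer_conc_um())
```

The water volume is derived rather than hard-coded, so changing the template volume or a primer volume keeps the 25 µL final volume consistent.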

Expected Results and Analysis

Visualize the gel under UV light. The optimal annealing temperature will be the highest temperature that produces a single, intense band of the expected size. Lower temperatures will typically show multiple bands or smearing (non-specific products), while higher temperatures will show a decline in the intensity of the specific band until it disappears entirely.
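The selection rule described above (the highest temperature that still gives a single, intense band of the expected size) can be written down as a small helper. This is only a sketch: the lane records, the field names, and the 0.8 relative-intensity cutoff are hypothetical and would come from however the gel is scored in practice.

```python
def pick_annealing_temp(lanes, min_rel_intensity=0.8):
    """Return the highest annealing temperature with one strong target band.

    lanes: list of dicts with keys
      temp_c               - annealing temperature of the lane
      n_bands              - number of visible bands in the lane
      target_rel_intensity - intensity of the expected-size band,
                             relative to the brightest target band on the gel
    min_rel_intensity is an arbitrary illustrative cutoff for "intense".
    Returns None if no lane qualifies.
    """
    candidates = [
        lane["temp_c"]
        for lane in lanes
        if lane["n_bands"] == 1
        and lane["target_rel_intensity"] >= min_rel_intensity
    ]
    return max(candidates) if candidates else None


if __name__ == "__main__":
    # Hypothetical gel scoring for a six-lane gradient.
    example = [
        {"temp_c": 55.0, "n_bands": 3, "target_rel_intensity": 0.90},
        {"temp_c": 58.0, "n_bands": 2, "target_rel_intensity": 1.00},
        {"temp_c": 61.0, "n_bands": 1, "target_rel_intensity": 1.00},
        {"temp_c": 64.0, "n_bands": 1, "target_rel_intensity": 0.95},
        {"temp_c": 67.0, "n_bands": 1, "target_rel_intensity": 0.40},
        {"temp_c": 70.0, "n_bands": 0, "target_rel_intensity": 0.00},
    ]
    print(pick_annealing_temp(example))
```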

Impact of Instrumentation on Thermal Performance

The thermal cycler itself is a critical variable in achieving specificity. Key performance metrics include [29]:

- Temperature Accuracy: How closely the block's actual temperature matches the setpoint.

- Temperature Uniformity: The maximum temperature variance across the entire thermal block. Poor uniformity means reactions in different wells are effectively running under different conditions, compromising reproducibility [29].

- Ramp Rate: The speed at which the block transitions between temperatures. Faster ramp rates reduce overall cycle time and limit the duration reactions spend at non-optimal temperatures, which can improve specificity [29].

Advanced thermoelectric coolers in modern instruments are designed to provide the precise control, fast ramp rates (up to 6–9°C per second), and uniform block temperatures required for highly specific, high-speed PCR [30] [31].
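To give a feel for how ramp rate affects total run time, the following sketch estimates the duration of the gradient protocol above at different ramp rates. It is a deliberately simplified model: it assumes a single ramp rate for both heating and cooling, ignores the initial ramp from ambient temperature and any overshoot or settling time, and the 62°C annealing temperature and 3°C/s comparison value are assumptions chosen for illustration.

```python
def estimated_run_time_s(anneal_c, extension_s, ramp_c_per_s,
                         cycles=35, denature_c=98.0, extend_c=72.0,
                         initial_denature_s=30, denature_s=10,
                         anneal_s=15, final_extension_s=300):
    """Estimate total run time in seconds for a three-step PCR program.

    Hold times default to the gradient protocol above. Ramp time per
    cycle is the total temperature distance travelled divided by the
    ramp rate (same rate assumed for heating and cooling).
    """
    hold_per_cycle = denature_s + anneal_s + extension_s
    ramp_distance_c = (abs(denature_c - anneal_c)     # denature -> anneal
                       + abs(extend_c - anneal_c)     # anneal -> extend
                       + abs(denature_c - extend_c))  # extend -> denature
    ramp_per_cycle = ramp_distance_c / ramp_c_per_s
    return (initial_denature_s
            + cycles * (hold_per_cycle + ramp_per_cycle)
            + final_extension_s)


if __name__ == "__main__":
    for rate in (3.0, 6.0, 9.0):
        minutes = estimated_run_time_s(62.0, 30, rate) / 60
        print(f"{rate} C/s: about {minutes:.1f} min")
```

In this model the hold times are fixed, so the benefit of a faster ramp shows up entirely in the time spent between setpoints, which is also the time reactions spend at non-optimal temperatures.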

Thermal cycling parameters are fundamental levers for controlling PCR specificity. A methodical approach—starting with well-designed primers, calculating theoretical conditions, and empirically refining the annealing temperature, extension time, and reagent concentrations using tools like gradient PCR—is the most robust strategy for overcoming this common PCR failure mode. By systematically integrating these optimization strategies, researchers and drug development professionals can ensure their PCR assays are specific, efficient, and reliable, forming a solid foundation for downstream applications and analyses.

Statistical Predictors of PCR Failure in Large Genomes

The polymerase chain reaction (PCR) is a foundational technique in molecular biology, yet amplifying specific targets from large, complex genomes is often hampered by unexpected failures. In large genomes, the challenge is not merely biochemical but also statistical, driven by the increased probability of non-specific primer binding and other sequence-related factors. This section synthesizes current research into a quantitative framework for predicting PCR failure, as part of the broader effort to understand PCR failure modes. It analyzes the primary statistical predictors, supported by experimental data and practical protocols, so that potential PCR failures can be identified and mitigated before the experiment is run. For researchers, scientists, and drug development professionals, the ability to statistically predict amplification success is critical for efficient experimental design, particularly in genomics, diagnostics, and assay development.

Quantitative Model of PCR Failure

A seminal study developed statistical models to estimate the failure rate of PCR primers using 236 primer sequence-related factors, based on data from over 80,000 PCR experiments involving 1,314 primer pairs [32]. The research concluded that the number of predicted primer-binding sites in the genomic DNA is the most significant factor in determining PCR failure. The most efficient prediction was achieved by the GM1 model, which combines four key factors into a single statistical framework. It is estimated that using the GM1 model can reduce the average failure rate of PCR primers nearly three-fold, from 17% to 6% [32].

Table 1: Key Factors in the GM1 Statistical Model for Predicting PCR Failure

| Factor | Description | Impact on PCR Failure |
|---|---|---|
| Number of Primer-Binding Sites | Quantity of sequences in the genome where the primer is predicted to bind [32]. | The most important predictor; a higher number of binding sites increases the potential for non-specific amplification and reaction failure. |
| Alternative Binding Site Enumeration | Number of binding sites counted using methods that include mismatches (e.g., 1–2 mismatches) [32]. | Improves predictive accuracy by accounting for non-perfect binding, which can still lead to spurious amplification. |
| Thermodynamic Binding Model | Prediction of binding sites using a model that considers binding energy, not just a fixed-length sequence match [32]. | Offers a more biologically realistic assessment of potential off-target binding compared to simple exact-match counting. |
| Primer GC Content | The percentage of guanine and cytosine nucleotides in the primer sequence [32]. | Influences primer melting temperature (Tm) and stability; deviations from an optimal range can hinder specific binding. |
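To make the first, second, and fourth factors in Table 1 concrete, the sketch below counts candidate binding sites for a primer on both strands of a sequence, optionally tolerating mismatches, and computes primer GC content. It is a naive full-length scan written for illustration only; it is not the enumeration method or the thermodynamic model used in the cited study, and the function names and toy sequence are assumptions.

```python
COMPLEMENT = str.maketrans("ACGT", "TGCA")


def reverse_complement(seq):
    """Return the reverse complement of an uppercase DNA string."""
    return seq.translate(COMPLEMENT)[::-1]


def hamming(a, b):
    """Number of mismatching positions between two equal-length strings."""
    return sum(x != y for x, y in zip(a, b))


def count_binding_sites(primer, genome, max_mismatches=0):
    """Count windows where the primer could anneal to either strand.

    A window counts if it matches the primer or its reverse complement
    with at most max_mismatches substitutions (no gaps are considered).
    """
    primer, genome = primer.upper(), genome.upper()
    k = len(primer)
    rc = reverse_complement(primer)
    count = 0
    for i in range(len(genome) - k + 1):
        window = genome[i:i + k]
        if hamming(window, primer) <= max_mismatches:
            count += 1
        # Avoid double-counting when the primer is its own reverse complement.
        if rc != primer and hamming(window, rc) <= max_mismatches:
            count += 1
    return count


def gc_content(primer):
    """GC content of the primer as a percentage."""
    primer = primer.upper()
    return 100.0 * sum(base in "GC" for base in primer) / len(primer)


if __name__ == "__main__":
    toy_genome = "GGACGTACGGGGACGAACGG"  # toy sequence, not real data
    toy_primer = "ACGTAC"                # unrealistically short, for illustration
    print(count_binding_sites(toy_primer, toy_genome, max_mismatches=0))
    print(count_binding_sites(toy_primer, toy_genome, max_mismatches=1))
    print(gc_content(toy_primer))
```

Even in this toy case, allowing a single mismatch increases the number of candidate sites, which is the effect the alternative enumeration factor is meant to capture. A scan like this is quadratic in practice for genome-scale input; real tools rely on indexed search.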

PCR failure can be attributed to two broad categories: errors during the enzymatic amplification process and failures related to primer-template interactions. Understanding these mechanisms is essential for interpreting statistical models and troubleshooting failed reactions.

Enzymatic and Thermal Errors

During amplification, the primary sources of errors are:

- Polymerase Misincorporation: The DNA polymerase can insert an incorrect nucleotide during strand extension. Fidelity varies significantly between enzymes; for instance, KOD polymerase, from Thermococcus kodakarensis (formerly classified as Pyrococcus kodakaraensis), has an error rate of approximately 1.1 errors per 10^6 base pairs, while Thermus aquaticus (Taq) polymerase lacks 3'→5' proofreading exonuclease activity and typically has a higher error rate [33].

- Thermal Damage: Exposure to high temperatures during cycling causes DNA damage. The main types are:

- Depurination (A+G): The loss of purine bases from the DNA backbone, leading to abasic sites that can cause polymerase stalling or misincorporation [33].

- Cytosine Deamination: The conversion of cytosine to uracil, which results in G-C to A-T mutations in subsequent amplification cycles [33].

- Oxidative Damage: For example, the oxidation of guanine to 8-oxoguanine, which can pair with adenine, causing a G-to-T transversion mutation [33].

The rate of thermal damage is significantly higher in single-stranded DNA, which is exposed during the denaturation steps of PCR [33]. This risk can be mitigated by optimizing thermal cycling protocols to minimize the time DNA spends at elevated temperatures.
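As a rough guide to what a per-base error rate means for a finished reaction, the sketch below estimates the fraction of product molecules carrying at least one polymerase-introduced error. It is a simplified model that treats every base copied at every template doubling as an independent chance of error and ignores thermal damage entirely; the 1 kb amplicon and 20 doublings are assumed values, and only the KOD error rate comes from the text above.

```python
def fraction_with_errors(error_rate_per_bp, amplicon_bp, doublings):
    """Estimate the fraction of products with at least one error.

    Model: each of amplicon_bp bases is copied once per doubling, and
    each copy event is an independent chance of misincorporation.
    """
    copy_events = amplicon_bp * doublings
    return 1.0 - (1.0 - error_rate_per_bp) ** copy_events


if __name__ == "__main__":
    kod_rate = 1.1e-6  # errors per base pair, as quoted above [33]
    p = fraction_with_errors(kod_rate, amplicon_bp=1000, doublings=20)
    print(f"about {100 * p:.1f}% of 1 kb products carry an error")
```

Substituting the error rate of a non-proofreading enzyme into the same function shows how quickly the error-bearing fraction grows with amplicon length and doubling number.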

Primer and Template-Dependent Failures

In large genomes, the following factors are major contributors to failure:

- Non-Specific Amplification: This occurs when primers bind to non-target sites in the genome. The GM1 model identifies the sheer number of potential primer-binding sites as the paramount predictor of failure [32]. This is a particular challenge in genomes with high sequence redundancy or repetitive elements.

- Primer Design Flaws: Primers with self-complementarity can form hairpins or dimers, and those with inappropriate melting temperatures (Tm) can lead to inefficient annealing or mis-priming [34].

- Template Quality and Purity: Degraded template DNA or RNA, or the presence of inhibitors in the reaction, can drastically reduce efficiency. For RNA templates in RT-PCR, even partial degradation can skew results if the target region is affected [34]. Furthermore, genomic DNA contamination in RNA preparations is a common pitfall in qRT-PCR [34].

Table 2: Research Reagent Solutions for PCR

| Reagent / Material | Function in the PCR Workflow |
|---|---|
| High-Fidelity DNA Polymerase | Enzymes like KOD or Pfu polymerase offer proofreading (3'→5' exonuclease) activity, which corrects misincorporated nucleotides, resulting in significantly lower error rates than non-proofreading enzymes like Taq [33]. |
| dNTP Mix | A solution containing equimolar concentrations of dATP, dCTP, dGTP, and dTTP, which serve as the building blocks for the new DNA strands synthesized by the polymerase [35]. |
| MgCl₂ Buffer | Provides a stable chemical environment and magnesium ions, which are essential cofactors for DNA polymerase activity. The concentration can affect specificity and yield [35]. |
| Nuclease-Free Water | The solvent for the reaction, free of RNases and DNases that would otherwise degrade the template, primers, or products [35]. |
| DMSO | An additive that can help amplify difficult templates, such as those with high GC content, by reducing secondary structure formation and lowering the DNA melting temperature [35]. |
| DNAzap / DNA Decontamination Solution | Used to decontaminate surfaces and equipment to destroy contaminating DNA amplicons, preventing false positives in subsequent PCRs [34]. |
| RNAlater / RNA Stabilization Solution | A reagent used to immediately stabilize and protect RNA in fresh tissue samples, preventing degradation during storage and handling prior to RNA extraction for RT-PCR [34]. |

Experimental Protocols for Validation and Analysis

Protocol for Validating Primer Specificity

This protocol is particularly important when designing primers for large genomes, where the GM1 model indicates that a high number of predicted binding sites raises the risk of failure.

- Primer Design: Using software (e.g., Primer3), design primers with an optimal Tm (e.g., 55–65°C). Ensure the 3' ends lack self-complementarity. For eukaryotic mRNA targets, design primers to span an exon-exon junction to prevent amplification from genomic DNA [34].

- In Silico Analysis: Use the GM1 model or similar tools to pre-screen primers by calculating the number of binding sites in the reference genome and evaluating the GC content [32].

- Reaction Setup: Prepare a standard 50 µL PCR mixture containing:

- Thermal Cycling:

- Product Analysis: Analyze 2–5 µL of the PCR product by agarose gel electrophoresis to confirm a single amplicon of the expected size. For qRT-PCR, perform a dissociation curve analysis to verify the specificity of the amplification [34].
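The check for 3'-end complementarity in the primer design step can be approximated with a simple string comparison. The sketch below reports the longest stretch over which the 3' ends of two primers pair perfectly in antiparallel orientation; it is a crude heuristic that ignores mismatches, internal pairing, and thermodynamics, and the function names are assumptions rather than features of any particular design tool.

```python
COMPLEMENT = str.maketrans("ACGT", "TGCA")


def reverse_complement(seq):
    """Return the reverse complement of an uppercase DNA string."""
    return seq.translate(COMPLEMENT)[::-1]


def three_prime_overlap(primer_a, primer_b):
    """Length of the longest perfect 3'-end to 3'-end duplex.

    Returns the largest k such that the last k bases of primer_a are
    exactly complementary (antiparallel) to the last k bases of
    primer_b, or 0 if the 3' terminal bases do not pair in that way.
    Pass the same primer twice to check 3' self-complementarity.
    """
    a, b = primer_a.upper(), primer_b.upper()
    for k in range(min(len(a), len(b)), 0, -1):
        if a[-k:] == reverse_complement(b[-k:]):
            return k
    return 0


if __name__ == "__main__":
    # Hypothetical primer ending in a palindromic GGATCC site.
    primer = "ACGTTGCAGGATCC"
    print(three_prime_overlap(primer, primer))
```

A primer pair with a long 3' overlap is a candidate for primer-dimer formation and is usually worth redesigning; what counts as "long" is a judgment call that this sketch leaves to the user.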

Protocol for Controlling for Contamination

To ensure results are not compromised, these controls are mandatory.

- No Template Control (NTC): Includes all PCR reagents except the template DNA, which is replaced with nuclease-free water. The absence of a product confirms reagents are free of contaminating DNA [34].

- No Amplification Control (NAC) / Minus-Reverse Transcriptase Control: For RT-PCR, this reaction includes all components except the reverse transcriptase. The absence of a product indicates the RNA sample is free of contaminating genomic DNA [34].
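The interpretation of these two controls can be summarized as a small decision helper. This is only a sketch of the logic stated above; the function name, argument names, and message wording are illustrative assumptions.

```python
def interpret_controls(ntc_has_product, minus_rt_has_product=None):
    """Return a list of warnings based on the control reactions.

    ntc_has_product      - True if the no template control shows a product
    minus_rt_has_product - True/False for RT-PCR runs, None if not applicable
    An empty list means the controls raised no concerns.
    """
    warnings = []
    if ntc_has_product:
        warnings.append(
            "NTC shows a product: reagents may contain contaminating DNA."
        )
    if minus_rt_has_product:
        warnings.append(
            "Minus-RT control shows a product: RNA sample may contain genomic DNA."
        )
    return warnings


if __name__ == "__main__":
    for message in interpret_controls(ntc_has_product=False,
                                      minus_rt_has_product=True):
        print(message)
```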

Signaling Pathways and Workflow Visualizations

The following diagram illustrates the logical relationship between the sources of PCR failure and the strategies for prediction and mitigation, as informed by the quantitative model and experimental research.

Diagram 1: A logical map of PCR failure modes, their statistical predictors, and corresponding mitigation strategies.

The statistical prediction of PCR failure in large genomes represents a significant advancement in molecular biology experimental design. The GM1 model demonstrates that primer failure is not a random event but a quantifiable outcome driven primarily by the number of primer-binding sites. By integrating this model with a thorough understanding of enzymatic fidelity, thermal degradation, and robust experimental protocols—including stringent controls and optimized reagent systems—researchers can dramatically reduce PCR failure rates. This systematic approach to understanding and mitigating failure modes ensures greater reliability and efficiency in genomic applications, from basic research to drug development.

Selecting Appropriate Methods and Applications for Reliable PCR

The Polymerase Chain Reaction (PCR) is a foundational technique in molecular biology, yet its success is highly dependent on the choice of DNA polymerase. Selecting the wrong enzyme can lead to reaction failure, inaccurate results, and wasted resources. A comprehensive statistical model analyzing over 80,000 PCR experiments identified that the number of predicted primer-binding sites in the genome is the most critical factor in determining PCR failure [37]. This guide provides an in-depth comparison of standard, high-fidelity, and hot-start polymerases, framing the selection within the broader context of PCR failure mode research to empower researchers in making informed decisions for their specific applications.

Understanding why PCR fails is the first step in preventing it. The sources of error are multifaceted and can be broadly categorized as follows:

- Primer-Related Failures: The uniqueness of the primer sequence is paramount. A statistical model developed from 1,314 primer pairs found that the number of predicted primer-binding sites is the most important factor in PCR failure. Models that incorporated the number of binding sites, along with primer GC%, could reduce the average failure rate from 17% to 6% [37].

- Polymerase Errors: DNA polymerases can introduce errors during amplification.

- Base Substitution Errors: These are misincorporations of nucleotides during DNA synthesis. For very accurate polymerases, DNA damage introduced during thermocycling can be a more significant contributor to observed mutations than the polymerase's own base substitution error rate [38].