Resolving Discrepant Results in Microbiological Method Verification: A Practical Guide for Pharmaceutical and Clinical Researchers



Abstract

This article provides a comprehensive framework for resolving discrepant results encountered during the verification and validation of microbiological methods. Tailored for researchers, scientists, and drug development professionals, it bridges the gap between regulatory standards and practical application. The content spans from foundational principles of method validation vs. verification, through systematic methodologies for investigating discrepancies, to advanced troubleshooting strategies for challenging samples and comparative validation techniques. By synthesizing current standards like the ISO 16140 series and practical case studies, this guide aims to equip professionals with the knowledge to ensure the accuracy, reliability, and regulatory compliance of their microbiological testing.

Understanding Discrepant Results: Core Principles and Regulatory Frameworks

Fundamental Definitions and Core Differences

What is the fundamental distinction between verification and validation?

A common and succinct way to distinguish these processes is to ask two critical questions:

- Verification answers the question: "Are we building the product right?" It confirms that a product, service, or system meets its specified design requirements. [1] [2] [3] It is a process focused on internal consistency and correctness. [4] [2]

- Validation answers the question: "Are we building the right product?" It confirms that the product, service, or system fulfills its intended use and meets the needs of the customer and other identified stakeholders in a real-world environment. [5] [1] [2] It is a process focused on external performance and user needs. [4] [2]

In the context of laboratory methods, this translates to:

- Method Validation is a comprehensive process that proves an analytical method is acceptable for its intended use. [6] It is typically required when developing a new method or when significantly modifying an existing one. [7] [6]

- Method Verification is the process of confirming that a previously validated method performs as expected under a specific laboratory's conditions, using its specific analysts and equipment. [8] [7] [6] It is performed when adopting a standard, compendial, or previously validated method. [6]

The table below summarizes the key differences:

| Aspect | Verification | Validation |

|---|---|---|

| Core Question | Are we building the product right? [1] [2] | Are we building the right product? [1] [2] |

| Focus | Conformance to design specifications and requirements. [4] [5] | Fitness for intended use and user needs. [4] [5] |

| Timing | During the development phase. [4] [3] | After development or pre-market. [4] [2] |

| Methods | Inspections, reviews, static analysis, unit testing. [1] [2] | User testing, clinical trials, real-world simulation. [4] [2] |

| Evidence | Objective, quantifiable data against specs. [4] | Real-world performance data and user satisfaction. [4] |

Regulatory Requirements and Standards

What are the key regulatory frameworks governing verification and validation?

Regulatory bodies across various industries mandate rigorous verification and validation processes to ensure product safety, efficacy, and data integrity.

- FDA (U.S. Food and Drug Administration): For medical devices, the FDA's 21 CFR Part 820 (Quality System Regulation) requires manufacturers to establish procedures for both verifying and validating device software. [2] Design verification confirms that design outputs meet design inputs, while design validation ensures the device meets user needs and intended uses. [5] The FDA's General Principles of Software Validation guidance provides detailed instructions, emphasizing that software must meet user needs and intended uses. [2]

- ISO (International Organization for Standardization) and IEC (International Electrotechnical Commission):

- ISO 9001:2015 (Quality management systems) requires organizations to verify and validate product conformity at appropriate stages. [4] [5]

- ISO 13485:2016 (Medical devices) adds specific depth, requiring manufacturers to maintain objective evidence of both design validation and verification. [4] [2]

- IEC 62304 (Medical device software) is an international standard for software lifecycle processes that is widely accepted by the FDA. [2]

- CLIA (Clinical Laboratory Improvement Amendments): In clinical diagnostics, CLIA regulations (42 CFR 493.1253) require laboratories to perform method verification for any unmodified, FDA-cleared or approved test before reporting patient results. [7] This verification must confirm accuracy, precision, reportable range, and reference range. [7]

Troubleshooting Guides and FAQs for Microbiological Method Verification

Frequently Asked Questions in Method Verification and Validation

FAQ 1: Our laboratory is implementing a new, FDA-cleared commercial test kit for detecting Listeria monocytogenes. Do we need to validate or verify the method?

You need to perform a method verification. [7] Since the test is unmodified and FDA-cleared, it has already undergone a full validation by the manufacturer. Your laboratory's responsibility is to verify that the method performs as per the manufacturer's stated performance characteristics in your specific environment, with your personnel, and on your equipment. [7] [6] CLIA regulations require this one-time verification for non-waived (moderate or high complexity) tests before reporting patient results. [7]

FAQ 2: We are validating a new, laboratory-developed molecular method for a novel pathogen. What performance characteristics must we assess?

For a qualitative method like this, a full method validation is required. You must establish several key performance parameters, which are often summarized in a validation report [6]:

| Performance Characteristic | Description & Protocol |

|---|---|

| Accuracy | The agreement of results between the new method and a reference method. Protocol: Test a panel of known positive and negative samples (e.g., 20+ clinical isolates or reference materials) and calculate the percentage agreement. [7] |

| Precision | The closeness of agreement between independent test results under specified conditions. Protocol: Test a minimum of 2 positive and 2 negative samples in triplicate over 5 days by 2 different operators. Calculate the percentage of results in agreement. [7] |

| Specificity | The ability to unequivocally assess the analyte in the presence of interfering components. This includes testing for cross-reactivity with closely related non-target organisms. [5] [6] |

| Limit of Detection (LOD) | The lowest quantity of the target microorganism that can be reliably detected. Protocol: Perform a dilution series of the target organism to determine the lowest concentration that yields a positive result ≥95% of the time. [6] |

| Robustness | A measure of the method's capacity to remain unaffected by small, deliberate variations in method parameters (e.g., incubation temperature, time, reagent lot). [6] |
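The LOD row above can be illustrated with a short calculation. This is a minimal sketch, assuming replicate results are recorded per dilution level as (positives, replicates); the function name `estimate_lod`, the data layout, and the example numbers are hypothetical, and a formal study might instead fit a probit or similar model.

```python
def estimate_lod(hit_counts, min_hit_rate=0.95):
    """Return the lowest concentration in an unbroken run of passing levels.

    hit_counts maps concentration (e.g. CFU/mL) -> (positives, replicates).
    Levels are checked from highest to lowest; the walk stops at the first
    level whose detection rate falls below min_hit_rate.
    Returns None if even the highest level fails.
    """
    lod = None
    for conc in sorted(hit_counts, reverse=True):
        positives, replicates = hit_counts[conc]
        if replicates == 0 or positives / replicates < min_hit_rate:
            break
        lod = conc
    return lod


# Hypothetical dilution series with 20 replicates per level (illustrative only).
series = {
    100.0: (20, 20),
    50.0: (20, 20),
    25.0: (19, 20),
    12.5: (15, 20),
    6.25: (8, 20),
}
lod = estimate_lod(series)
print(f"Estimated LOD: {lod} CFU/mL")
```

Walking down from the highest concentration avoids reporting a low level that happened to pass by chance while a higher level failed.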

FAQ 3: We are getting discrepant results during the verification of a commercial pathogen test on a new food matrix. What should we do?

Discrepant results when applying a validated method to a new matrix (e.g., testing a pathogen in cooked chicken with a test validated for raw meat) often indicate a "fitness-for-purpose" issue. [8] The new matrix may contain substances that inhibit detection, physically impede the test, or alter microbial growth.

Troubleshooting Protocol:

- Matrix Assessment: Determine if the new food matrix falls within the category and subcategory for which the method was originally validated. AOAC guidelines group foods into categories based on similar characteristics. [8]

- Public Health & Detection Risk: Evaluate the public health risk associated with the pathogen-matrix combination and the risk of test failure (e.g., presence of inhibitors like pectin or fat). [8]

- Conduct a Matrix Extension Study: If risks are identified, perform a study beyond basic verification. Follow FDA or AOAC guidelines, which typically involve testing spiked (inoculated) and control samples of the new matrix to demonstrate successful detection. [8]

- Documentation: Document the study design, results, and conclusion that the method is (or is not) fit-for-purpose for the new matrix.

FAQ 4: What is the single most common error laboratories make during verification and validation?

A frequent and critical error is confusing the definitions and applications of verification and validation, leading to the application of one when the other is required. [4] [3] This often manifests as inappropriately substituting a limited verification for a full validation when it is not permitted by regulations, such as when implementing a laboratory-developed test (LDT) or a modified FDA-approved test. [7] [6] This can result in non-compliance, erroneous results, and failed audits. [4] [6]

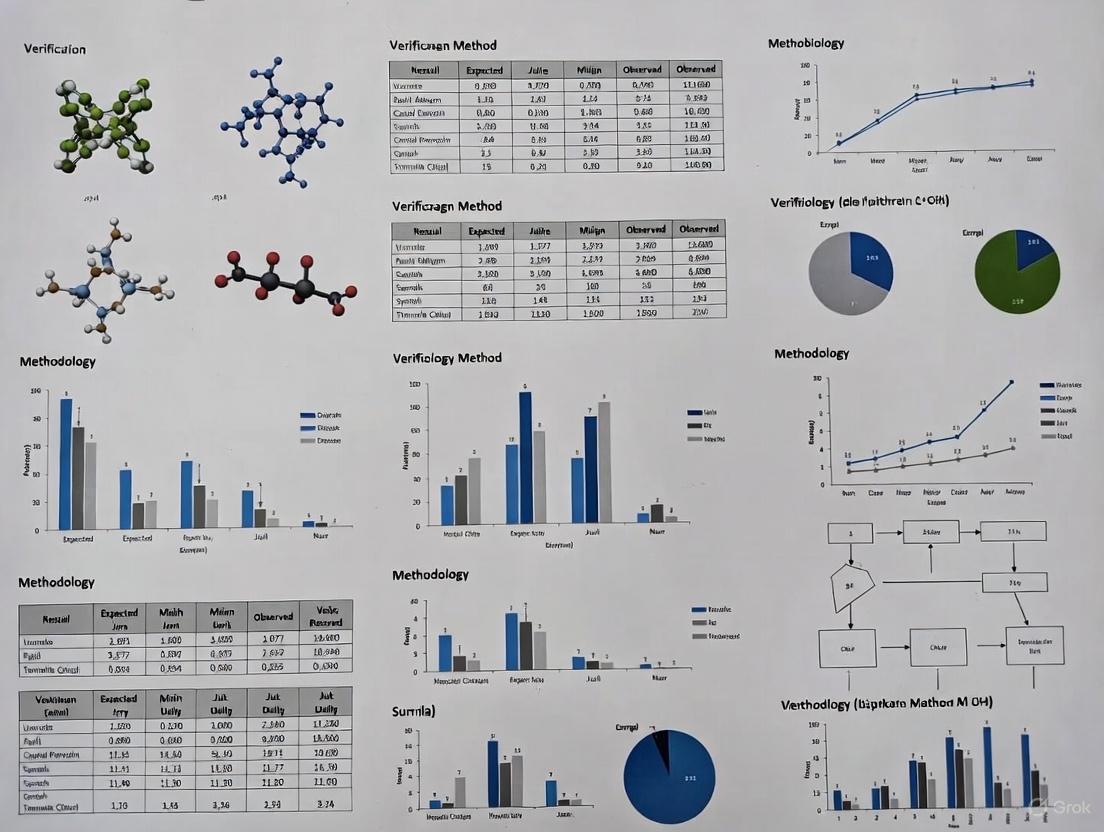

Experimental Workflows and Visualization

The following diagram illustrates the logical sequence and key questions of the integrated Verification and Validation workflow in product development.

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key materials and their functions used in microbiological method verification and validation studies.

| Item | Function in Verification/Validation |

|---|---|

| Reference Strains (ATCC, etc.) | Genetically well-characterized microbial strains used as positive controls, for spiking studies to determine accuracy and LOD, and for testing specificity and cross-reactivity. [7] |

| Clinical or Food Isolates | De-identified real-world samples used to verify or validate method performance against a comparative method and to ensure the test works with the laboratory's typical sample matrix. [7] |

| Certified Reference Materials | Materials with established property values used for calibration and to provide a traceable chain of evidence for accuracy and reportable range studies. [6] |

| Proficiency Test (PT) Samples | Blind samples provided by an external program used to independently assess the laboratory's ability to perform the method correctly and obtain accurate results. [7] |

| Inhibitor Testing Panels | Panels designed to contain substances known to inhibit molecular or cultural methods (e.g., pectin, fats, acids) used to test the robustness and fitness-for-purpose of a method in complex matrices. [8] |

In pharmaceutical and clinical microbiology, reliable and reproducible results are paramount, and method verification and validation provide the foundation for confidence in microbiological testing. However, even with validated methods, laboratories frequently encounter discrepant or ambiguous results that can undermine the effectiveness of quality control and food safety programs [9]. These discrepancies arise from a complex interplay of analytical, technical, and biological factors. The troubleshooting guides and FAQs below help researchers, scientists, and drug development professionals identify root causes and implement effective corrective actions, thereby strengthening the application of microbiological methods [9].

FAQs and Troubleshooting Guides

▸ Analytical & Methodological Factors

1. What are the key validation parameters for qualitative versus quantitative microbiological methods, and why does it matter? Using inappropriate validation parameters for your test type is a common source of methodological failure. The validation requirements differ significantly between qualitative tests (e.g., sterility testing, presence/absence of pathogens) and quantitative tests (e.g., microbial enumeration, bioburden) [10]. Implementing a method validated for a different test category can lead to a lack of sensitivity, precision, or accuracy.

- Troubleshooting Guide:

- Symptom: The method fails to detect low levels of contamination, or quantitative results show high variability and poor agreement with reference methods.

- Investigation: Cross-reference your method's intended use with regulatory validation tables. For example, according to USP <1223>, Limit of Detection (LOD) is required for qualitative but not quantitative tests, while Linearity and Limit of Quantitation (LOQ) are required for quantitative but not qualitative tests [10].

- Resolution: Ensure your validation study and subsequent verification protocols assess the correct parameters as defined in guidelines like USP <1223> or Ph. Eur. 5.1.6 [10].

2. How can poor recovery of environmental isolates during validation lead to future discrepancies? A method might be validated with standard indicator organisms but fail to detect the specific microorganisms contaminating your local environment or unique manufacturing process [11].

- Troubleshooting Guide:

- Symptom: Recurring, unexplained microbial contamination events despite monitoring cultures showing "no growth."

- Investigation: Audit your validation records. Check if environmental isolates from your facility (e.g., from air, water, or surface monitoring) were included in the method suitability and growth promotion testing.

- Resolution: Include known environmental isolates from your facility in validation and ongoing suitability tests to ensure your culture media and methods support their growth [11].

▸ Technical & Operational Factors

1. Why could improper media preparation and handling cause inconsistent results? Deviations from validated media preparation procedures can introduce inhibitory substances or degrade nutrients, making the medium incapable of supporting microbial growth [11].

- Troubleshooting Guide:

- Symptom: Poor recovery (<50%) during growth promotion tests or inconsistent growth of low-inoculum samples.

- Investigation:

- Review media preparation records, including autoclaving cycles and pH checks.

- If media is re-melted, verify the process is captured in the validation. Inquire about techniques; microwaving can create localized hot spots that degrade the medium [11].

- Test the sterility and growth-promoting properties of a freshly prepared batch alongside the suspect batch.

- Resolution: Standardize media preparation in a detailed SOP. Specify holding times and temperatures, and strictly prohibit ad-hoc reheating methods. Validate any reheating process used [11].

2. How can incubation conditions lead to false negatives? The incubation temperature and atmosphere can selectively favor or inhibit the growth of certain microorganisms. An incubator that does not maintain a uniform, specified temperature may fail to support the growth of target organisms [11].

- Troubleshooting Guide:

- Symptom: Failure to recover specific microbial groups (e.g., anaerobes, psychrophiles, or thermophiles) while others grow normally.

- Investigation:

- Calibrate and map the incubator to ensure it maintains the set temperature within a ±1°C range at all internal locations [11].

- Verify atmospheric conditions (e.g., use of anaerobic jars or CO₂ chambers) for fastidious organisms.

- Resolution: Include temperature and atmosphere mapping as part of equipment qualification. Justify incubation temperatures for your specific test and organisms [11].
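The incubator mapping check described above reduces to comparing each probe reading with the set point. The following is a minimal sketch under stated assumptions: the probe locations, readings, and the 32.5 °C set point are hypothetical, and only the ±1 °C tolerance comes from the text.

```python
def out_of_tolerance(readings, setpoint_c, tolerance_c=1.0):
    """Return mapped locations deviating from the set point by more than tolerance_c.

    readings maps a probe location name -> measured temperature in degrees C.
    """
    return {
        location: temp
        for location, temp in readings.items()
        if abs(temp - setpoint_c) > tolerance_c
    }


# Hypothetical five-point mapping of an incubator set to 32.5 degrees C.
mapping = {
    "top-left": 32.4,
    "top-right": 32.6,
    "center": 32.5,
    "bottom-left": 31.2,
    "bottom-right": 32.9,
}
failures = out_of_tolerance(mapping, setpoint_c=32.5)
print(failures or "All mapped locations within tolerance")
```

A real mapping study would log readings over time at each location; the same comparison can then be applied to every time point.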

▸ Biological & Sample-Related Factors

1. How can the inherent properties of microorganisms lead to statistical discrepancies in quantitative tests? At low microbial concentrations, the random distribution of cells in a liquid follows a Poisson distribution rather than a normal distribution, so the relative variability of a count grows as the expected number of cells per aliquot falls. This can lead to significant inaccuracies when performing serial dilutions and counting [11].

- Troubleshooting Guide:

- Symptom: High variance in replicate counts at low concentrations (e.g., near the detection limit). For example, a 0.1 mL aliquot from a sample with 10 CFU/mL contains 1 CFU on average and therefore has a ~37% chance (e⁻¹) of containing zero organisms [11].

- Investigation: Review data from low-count samples for patterns of high variability. Calculate the Poisson-based variance to see if it explains the observed scatter.

- Resolution: Increase the sample volume tested or the number of replicate tests when working with low microbial counts to obtain a more statistically reliable average [11].
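The Poisson effect in this guide can be checked numerically. This is a minimal sketch, assuming cells are randomly and independently distributed in the sample; the function names are illustrative. It reproduces the ~37% figure for a 0.1 mL aliquot at 10 CFU/mL and shows how larger test volumes reduce both the chance of an empty aliquot and the relative scatter of counts.

```python
import math


def prob_zero(conc_cfu_per_ml, volume_ml):
    """Poisson probability that an aliquot contains no organisms: exp(-mean)."""
    expected = conc_cfu_per_ml * volume_ml
    return math.exp(-expected)


def poisson_cv(conc_cfu_per_ml, volume_ml):
    """Coefficient of variation of the count: sqrt(mean) / mean = 1 / sqrt(mean)."""
    expected = conc_cfu_per_ml * volume_ml
    return 1 / math.sqrt(expected)


for volume in (0.1, 1.0, 10.0):
    p0 = prob_zero(10, volume)
    cv = poisson_cv(10, volume)
    print(f"{volume:>5} mL: P(zero) = {p0:.4f}, CV = {cv:.0%}")
```

Because the Poisson variance equals the mean, observed replicate scatter far above this coefficient of variation points to a cause other than sampling statistics.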

2. How can antimicrobial properties in a sample cause low microbial recovery, and how is this neutralized? Pharmaceutical products with inherent antimicrobial activity (e.g., antibiotics) will inhibit microbial growth in the test system, leading to false negatives unless the antimicrobial effect is effectively neutralized [10].

- Troubleshooting Guide:

- Symptom: Consistently low or no recovery from product samples, while positive controls grow normally.

- Investigation: Perform a neutralization validation as described in USP <1227> [10]. This involves testing three groups:

- Product + neutralizing agent + microorganisms

- Neutralizing agent + microorganisms (toxicity control)

- Buffer + microorganisms (positive control)

- Resolution: Validate and use an effective neutralizing agent (e.g., chemical inactivators, specific enzymes, dilution, or membrane filtration) to eliminate the product's antimicrobial effect without being toxic to the microorganisms [10].

Essential Experimental Protocols for Troubleshooting

Protocol: Neutralization Validation

Purpose: To validate that the chosen neutralization method effectively neutralizes the antimicrobial activity of a sample and is not toxic to microorganisms.

Materials:

- Test product

- Selected neutralizing agent(s)

- Broth culture of suitable reference organisms (e.g., Staphylococcus aureus, Pseudomonas aeruginosa)

- Appropriate culture media (liquid and solid)

- Buffered solution

Method:

- Prepare the following test groups in triplicate:

- Test Group: Combine a specified volume of the product with the neutralizing agent and a low inoculum (e.g., <100 CFU) of microorganisms.

- Toxicity Control Group: Combine the neutralizing agent with the same inoculum of microorganisms (without the product).

- Positive Control Group: Combine a buffered solution with the same inoculum of microorganisms.

- Incubate all groups under validated conditions.

- After incubation, enumerate the viable microorganisms from each group (e.g., by plate count).

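- Calculation & Acceptance Criteria: The test is valid only if the Positive Control shows growth. Recovery in the Test Group must be within a specified range (e.g., ≥50%) of the recovery in the Toxicity Control Group, demonstrating that neutralization was effective. Recovery in the Toxicity Control Group must in turn be comparable to recovery in the Positive Control Group, demonstrating that the neutralizing agent itself is not toxic to the microorganisms.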
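The recovery comparison in this protocol can be sketched as follows. This is an illustrative calculation, not a compendial procedure: the function names, the triplicate plate counts, and the reuse of the ≥50% example threshold for the toxicity comparison are assumptions, and the actual limits should come from your validation plan.

```python
from statistics import mean


def percent_recovery(counts, reference_counts):
    """Mean count expressed as a percentage of the mean reference count."""
    reference_mean = mean(reference_counts)
    if reference_mean <= 0:
        raise ValueError("Reference group shows no growth; recovery is undefined.")
    return 100 * mean(counts) / reference_mean


def evaluate_neutralization(test, toxicity_control, positive_control,
                            min_recovery_pct=50.0):
    """Compare the three groups; min_recovery_pct is an assumed example limit."""
    if mean(positive_control) <= 0:
        raise ValueError("Positive control shows no growth; the test is invalid.")
    # Efficacy: does the neutralizer remove the product's antimicrobial effect?
    efficacy = percent_recovery(test, toxicity_control)
    # Toxicity: does the neutralizer itself suppress growth?
    toxicity = percent_recovery(toxicity_control, positive_control)
    return {
        "efficacy_recovery_pct": efficacy,
        "toxicity_recovery_pct": toxicity,
        "neutralization_effective": efficacy >= min_recovery_pct,
        "neutralizer_non_toxic": toxicity >= min_recovery_pct,
    }


# Hypothetical triplicate plate counts (CFU) for each group.
result = evaluate_neutralization(
    test=[42, 38, 45],
    toxicity_control=[55, 60, 52],
    positive_control=[58, 62, 57],
)
for key, value in result.items():
    print(key, value)
```

Keeping the two comparisons separate makes it clear whether a failure stems from incomplete neutralization or from a toxic neutralizing agent.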

Protocol: Verification of an Unmodified, FDA-Cleared Qualitative Test

Purpose: To verify the performance of an unmodified, FDA-cleared/approved qualitative test (e.g., a pathogen detection assay) in your laboratory, as required by CLIA.

Materials:

- New test system and reagents

- A minimum of 20 well-characterized, clinically relevant samples (positive and negative)

- Comparative method (a previously validated method)

Method:

- Accuracy: Test a minimum of 20 positive and negative samples with the new method and compare the results to the comparative method. Calculate percent agreement.

- Precision: Test a minimum of 2 positive and 2 negative samples in triplicate over 5 days by 2 different operators.

- Reportable Range: Verify using at least 3 known positive samples to ensure the method correctly identifies the analyte.

- Reference Range: Verify using at least 20 samples representative of your patient population to confirm the expected "normal" result.

- Acceptance Criteria: Performance must meet the manufacturer's stated claims or laboratory-defined criteria for accuracy, precision, reportable range, and reference range [7].
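The percent agreement called for in the accuracy step can be computed as in the sketch below. It assumes each sample is recorded as a pair of booleans (new method, comparative method) with True meaning positive; the function name and the 20-sample example panel are hypothetical.

```python
def agreement(pairs):
    """Percent agreement between a new method and a comparative method.

    pairs: iterable of (new_result, comparator_result) booleans, True = positive.
    Positive and negative agreement use the comparator's calls as denominators.
    """
    pairs = list(pairs)
    if not pairs:
        raise ValueError("No results supplied.")
    both_pos = sum(1 for new, comp in pairs if new and comp)
    both_neg = sum(1 for new, comp in pairs if not new and not comp)
    comp_pos = sum(1 for _, comp in pairs if comp)
    comp_neg = len(pairs) - comp_pos
    return {
        "overall_pct": 100 * (both_pos + both_neg) / len(pairs),
        "positive_pct": 100 * both_pos / comp_pos if comp_pos else None,
        "negative_pct": 100 * both_neg / comp_neg if comp_neg else None,
    }


# Hypothetical panel: 10 comparator-positive samples (one missed by the new
# method) and 10 comparator-negative samples.
results = [(True, True)] * 9 + [(False, True)] + [(False, False)] * 10
summary = agreement(results)
print(summary)
```

Reporting positive and negative agreement separately shows whether disagreements cluster among positives or negatives, which overall agreement alone can hide.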

Table 1: Key Validation Parameters for Different Microbiological Test Types as per USP <1223> and Ph. Eur. 5.1.6 [10]

| Validation Parameter | Qualitative Tests | Quantitative Tests | Identification Tests |

|---|---|---|---|

| Trueness | - (or used as LOD alternative) | + | + |

| Precision | - | + | - |

| Specificity | + | + | + |

| Limit of Detection (LOD) | + | - (may be required) | - |

| Limit of Quantitation (LOQ) | - | + | - |

| Linearity | - | + | - |

| Range | - | + | - |

| Robustness | + | + | + |

| Equivalence | + | + | - |
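Table 1 can be encoded as a lookup to check a validation plan for gaps. This is a minimal sketch: the dictionary and function names are hypothetical, and the conditional entries in the table ("or used as LOD alternative", "may be required") are treated here as not required, which is a simplifying assumption you may need to reverse for your method.

```python
# Parameters marked "+" in Table 1, keyed by test type.
# Conditional "-" entries are deliberately left out (assumption, see lead-in).
REQUIRED_PARAMETERS = {
    "qualitative": {
        "Specificity", "Limit of Detection", "Robustness", "Equivalence",
    },
    "quantitative": {
        "Trueness", "Precision", "Specificity", "Limit of Quantitation",
        "Linearity", "Range", "Robustness", "Equivalence",
    },
    "identification": {"Trueness", "Specificity", "Robustness"},
}


def missing_parameters(test_type, planned):
    """Return the Table 1 parameters that a validation plan does not yet cover."""
    return sorted(REQUIRED_PARAMETERS[test_type] - set(planned))


plan = ["Specificity", "Robustness"]
print(missing_parameters("qualitative", plan))
```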

Table 2: Performance Criteria for Antimicrobial Susceptibility Testing (AST) Validation as per CLSI [12]

| Performance Measure | Definition | Target Performance Criteria |

|---|---|---|

| Categorical Agreement (CA) | Percentage of identical interpretations (S, I, R) between the new and reference method. | ≥ 90.0% |

| Very Major Error (VME) | New method: Susceptible / Reference method: Resistant | < 3.0% |

| Major Error (ME) | New method: Resistant / Reference method: Susceptible | < 3.0% |

| Minor Error (mE) | New method or reference method: Intermediate, and the other: Susceptible or Resistant | ≤ 10.0% |

| Precision | Agreement between replicates of the same sample. | > 95.0% |


The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials and Reagents for Microbiological Method Validation

| Item | Function in Validation/Troubleshooting |

|---|---|

| Reference Strains (e.g., ATCC strains) | Used as positive controls and indicator organisms for growth promotion testing, accuracy, and precision studies [11]. |

| Environmental Isolates | In-house characterized isolates from the facility's monitoring program; critical for ensuring methods detect the actual resident microflora [11]. |

| Neutralizing Agents (e.g., Diluents, inactivators) | Chemical agents or enzymes used to nullify the antimicrobial effect of a product sample, allowing for accurate microbial recovery [10]. |

| Qualified Culture Media | Nutrient media that has been tested and proven to support the growth of a wide range of microorganisms, ensuring reliable results [11]. |

| Clinical or Contrived Samples | Well-characterized samples (fresh, frozen, or contrived) used to verify method performance against a reference standard in a real-world matrix [12]. |

Troubleshooting Guides

Guide 1: Resolving Discrepant Results in Microbiological Method Verification

Problem: Inconsistent or unexpected results appear when verifying a microbiological method for food testing.

Explanation: Discrepancies can stem from biological factors (e.g., variable microbial distribution), technological issues (e.g., uncalibrated equipment), or human factors (e.g., deviations from a procedure) [9]. A structured troubleshooting approach is essential to identify the root cause.

Solution: A systematic investigation should be conducted, focusing on the following common root causes [9]:

- Sample Issues: Inhomogeneous sample or improper sample storage and handling.

- Method Performance: The laboratory's capabilities with the method or the method's suitability for the specific sample matrix have not been sufficiently verified.

- Equipment and Reagents: Uncalibrated equipment, malfunctioning instruments, or degraded reagents.

- Personnel and Procedures: Inadequate training or failure to follow the Standard Operating Procedure (SOP).

Experimental Protocol for Root Cause Analysis:

- Re-evaluate Method Verification Data: Confirm that the "implementation verification" and "(food) item verification" as per ISO 16140-3 were successfully completed. This proves the laboratory can perform the method correctly on relevant sample types [13].

- Verify Equipment Qualification: Consult CLSI QMS23 to ensure all general laboratory equipment (e.g., balances, centrifuges, pipettes) has a current Performance Qualification (PQ), routine function checks, and calibration verification [14] [15].

- Check Reagent Quality: Confirm that all culture media and reagents have been quality controlled and are within their expiration dates.

- Review Personnel Competency: Ensure analysts have documented training on the method SOP and participate in a proficiency testing program [9].

- Re-test Retained Samples: If possible, re-test any retained original or homogenized sample to investigate potential sample heterogeneity [9].

Guide 2: Troubleshooting Inaccurate Results in Pharmaceutical Assay Validation

Problem: An analytical method for a drug substance fails accuracy acceptance criteria during validation.

Explanation: Accuracy expresses the closeness of agreement between the found value and the accepted true value [16]. Common mistakes during validation include not using a representative sample matrix and setting inappropriate acceptance criteria [17].

Solution: The experimental design for accuracy must mimic real-world samples and include all potential sources of bias.

Experimental Protocol for Assessing Accuracy (based on ICH Q2(R1) and USP <1225>):

- Sample Preparation: Prepare a minimum of nine determinations over at least three concentration levels covering the method's specified range. For a drug product, this is typically done by spiking the placebo with known amounts of the analyte [16].

- Critical Step: Ensure "pseudo-samples" are as close as possible to real samples. For a solid dosage form, this means separately preparing and spiking at least nine individual placebo blends, not making multiple measurements from a single stock solution [17].

- Calculation: Calculate the percentage recovery for each sample. The mean recovery and confidence intervals should meet pre-defined acceptance criteria compatible with the product specification [16] [17].
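The recovery calculation in this protocol can be sketched as follows for the nine-determination design (three levels, three preparations each). The spiked and found amounts are hypothetical, and the hard-coded Student's t value applies only to nine determinations (8 degrees of freedom); supply a different critical value for other sample sizes.

```python
from math import sqrt
from statistics import mean, stdev

# Two-sided 95% Student's t critical value for 8 degrees of freedom (n = 9).
T_CRIT_95_DF8 = 2.306


def recovery_summary(spiked, found, t_crit=T_CRIT_95_DF8):
    """Return per-sample % recovery, the mean, and a confidence interval of the mean.

    t_crit must match the number of determinations (default assumes n = 9).
    """
    if len(spiked) != len(found) or len(spiked) < 2:
        raise ValueError("Need matching spiked/found lists with at least 2 values.")
    recoveries = [100 * f / s for s, f in zip(spiked, found)]
    centre = mean(recoveries)
    half_width = t_crit * stdev(recoveries) / sqrt(len(recoveries))
    return recoveries, centre, (centre - half_width, centre + half_width)


# Hypothetical amounts (mg) at 80%, 100%, and 120% of nominal, each from a
# separately prepared and spiked placebo blend.
spiked = [80, 80, 80, 100, 100, 100, 120, 120, 120]
found = [79.2, 80.4, 79.6, 99.5, 100.8, 99.0, 119.4, 121.2, 118.8]
recoveries, mean_recovery, ci = recovery_summary(spiked, found)
print(f"Mean recovery {mean_recovery:.2f}%, 95% CI {ci[0]:.2f}% to {ci[1]:.2f}%")
```

The interval, not just the mean, is then compared with the pre-defined acceptance criteria.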

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between method validation and verification? A: Validation proves a method is fit-for-purpose, while verification demonstrates a laboratory can competently perform a method that has already been validated [13].

- Validation is the process of establishing, by laboratory studies, that the method's performance characteristics (e.g., accuracy, precision) meet requirements for its intended application [16]. It often involves interlaboratory studies [13].

- Verification is performed by a user laboratory to demonstrate it can get the correct results with a pre-validated method. For microbiological methods, ISO 16140-3 outlines a two-stage process: implementation verification and (food) item verification [13].

Q2: According to USP, must I fully validate a compendial method? A: No. Users of USP methods are not required to validate them but must verify their suitability under actual conditions of use [16]. This typically involves a limited set of tests to confirm the method works as expected in your laboratory with your specific samples.

Q3: How does the IVDR define "Analytical Performance" for an In Vitro Diagnostic (IVD)? A: Under the EU In Vitro Diagnostic Regulation (IVDR, Regulation (EU) 2017/746), analytical performance refers to a device's ability to accurately and reliably detect or measure an analyte. The evaluation must include specific characteristics as outlined in Annex I [18] [19]:

Table: Core Analytical Performance Characteristics under IVDR

| Characteristic | Description |

|---|---|

| Accuracy (Trueness) | Closeness of results to a certified reference value [18]. |

| Precision | Repeatability and reproducibility across runs, operators, and instruments [18]. |

| Analytical Sensitivity (LoD) | The lowest amount of analyte reliably detected [18]. |

| Analytical Specificity | Ability to detect the analyte without interference from other substances [18]. |

| Measuring Range | The interval over which results are valid and linear [18]. |

Q4: My method verification failed. What are the first things I should check? A: Start with the fundamentals of your laboratory's quality system [9]:

- Equipment: Is all equipment properly qualified, calibrated, and maintained? (Refer to CLSI QMS23 for guidance) [14].

- Reagents: Are all reagents, media, and reference standards within their expiry dates and stored correctly?

- Personnel: Are the analysts trained and competent in the technique?

- Procedure: Was the SOP followed exactly? Review the method's "ruggedness" or "robustness" to identify steps that are sensitive to minor variations.

Key Experiments and Data Summaries

Table: Typical Analytical Performance Characteristics from USP <1225> and ICH Q2(R1) [16]

| Performance Characteristic | Definition | Typical Validation Approach |

|---|---|---|

| Accuracy | Closeness to the true value. | Application to a reference standard or spiked samples; minimum 9 determinations over 3 levels. |

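| Precision (Repeatability) | Agreement under repeated measurement. | A minimum of 9 determinations covering the specified range (e.g., 3 concentrations with 3 replicates each) or a minimum of 6 determinations at 100% of the test concentration. |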

| Specificity | Ability to assess the analyte unequivocally. | Demonstration that the procedure is unaffected by impurities, excipients, or other components. |

| Detection Limit (LoD) | Lowest amount of analyte that can be detected. | Analysis of samples with known concentrations; signal-to-noise ratio. |

| Quantitation Limit (LoQ) | Lowest amount of analyte that can be quantified. | Analysis of samples with known concentrations; specified levels of precision and accuracy. |

| Linearity | Ability to obtain results proportional to concentration. | Test a series of samples across the claimed range of the procedure. |

| Range | The interval between upper and lower levels of analyte. | Confirmed by demonstrating acceptable levels of accuracy, precision, and linearity. |

| Robustness | Capacity to remain unaffected by small, deliberate variations. | Testing the influence of small changes in operational parameters (e.g., pH, temperature). |

The Scientist's Toolkit

Table: Essential Research Reagent Solutions for Microbiological Method Verification

| Reagent / Material | Function in Experiment |

|---|---|

| Certified Reference Material (CRM) | Serves as the accepted reference value with known purity/quantity to establish method accuracy [16]. |

| Reference Standard | Used for system suitability testing, calibration, and quantifying the analyte in a sample [16]. |

| Selective Culture Media | Allows for the isolation and enumeration of target microorganisms from a complex sample matrix [13]. |

| Quality Controlled Placebo | The drug product formulation without the active ingredient, used to prepare spiked samples for accuracy studies in drug product analysis [16]. |

| Strain Panels for Specificity | A collection of well-characterized microbial strains used to demonstrate the method's ability to correctly identify the target organism(s). |

Workflow and Relationship Diagrams

[Diagram: Method Selection and Implementation Flow]

[Diagram: Discrepant Result Troubleshooting Path]

Frequently Asked Questions

1. What is the difference between method validation and method verification? Method validation is the initial process that confirms a method's performance characteristics (like specificity and accuracy) for detecting target organisms under a particular range of conditions, often conducted by test kit manufacturers against standards from bodies like AOAC or ISO [8]. Method verification, by contrast, is the testing performed by an individual laboratory to demonstrate that it can successfully execute a previously validated method and correctly obtain the required results before using it for routine testing [8] [7].

2. What does "Fitness-for-Purpose" mean in practice? A method is considered fit-for-purpose when it produces accurate data that allows for correct decisions in its intended application [8]. This is automatically true if the method has been validated for your specific sample matrix (e.g., a specific food type). If the matrix is new or different, the laboratory must evaluate whether the existing validation is relevant or if additional studies are needed to confirm the method's performance [8].

3. How do I set acceptance criteria for a method verification study? Acceptance criteria should be defined in a verification plan before starting the study. For an unmodified, FDA-approved test, the laboratory must verify performance characteristics like accuracy and precision. The acceptance criteria should meet the performance claims stated by the manufacturer or be determined as acceptable by the laboratory director, in line with regulatory standards like CLIA [7].

4. What should I do when my new, more sensitive test gives positive results that the old "gold standard" test misses? This is a common challenge, particularly with nucleic acid amplification tests (NAATs). A practice known as discrepant analysis is often used, in which a third, resolving test is applied only to the discordant results [20]. However, this approach can be statistically biased in favor of the new test. A more rigorous approach is to define a robust reference standard from the start, which could be a combination of several tests or include clinical correlation, and to apply it to all samples uniformly to avoid bias [20].

5. What is a matrix extension study and when is it needed? A matrix extension study is a type of fitness-for-purpose evaluation conducted when a laboratory wants to use a validated method on a sample type (matrix) that was not included in the original validation [8]. This is necessary because some foods contain substances that can interfere with testing. The study typically involves testing spiked and control samples of the new matrix to demonstrate successful detection [8].

Troubleshooting Guides

Problem: Inconsistent Results During Method Verification

Issue: Unacceptable variance during precision testing (e.g., within-run or between-run results do not match).

Step-by-Step Resolution:

- Review Quality Control: Confirm that all quality control (QC) procedures were followed and that controls yielded expected results. Repeat the QC if necessary [7].

- Check Reagents and Materials: Verify that all reagents are within their expiration dates and have been stored correctly. Ensure that new lots of reagents are not a source of variance.

- Evaluate Operator Technique: If the test involves manual steps, ensure all technicians are trained and following the standardized procedure exactly. Review the protocol for any ambiguous steps [7].

- Inspect Instrumentation: Check maintenance logs and performance data for the instruments involved. Run instrument-specific diagnostic and calibration checks.

- Re-assess Samples: Confirm that the samples used for verification are stable and homogeneous. If using clinical samples, ensure they have been stored properly.

- Consult the Plan: Revisit your verification plan's acceptance criteria. If precision continues to fall outside acceptable limits, contact the test manufacturer's technical support for assistance.

Problem: Resolving Discrepant Results with a New Test

Issue: A new, highly sensitive test (e.g., a molecular test) produces positive results that a traditional culture method does not.

Step-by-Step Resolution:

- Do NOT automatically classify: Do not automatically classify all new-test-positive/culture-negative results as "false positives" or all new-test-negative/culture-positive results as "false negatives" [20].

- Define a Resolution Strategy: Plan your approach before starting the evaluation. The best practice is to establish a composite reference standard that does not depend solely on the old test. This could involve [20]:

- Using a second, independent molecular method targeting a different gene.

- Incorporating clinical data from patient charts to confirm active infection.

- Applying the resolving test to a random selection of concordant samples (both positive and negative), not just the discrepant ones, to help assess bias.

- Avoid Dependent Tests: Do not use a resolving test that is methodologically similar to your new test (e.g., a different PCR targeting the same gene), as this can inflate the apparent performance of the new test due to shared weaknesses [20].

- Recalculate Performance: After applying the resolving test, recalculate the new test's sensitivity, specificity, and predictive values with the adjusted "true" status of the samples. Be aware that retesting only the discrepant samples can move the new test's apparent performance in one direction only: the estimates can rise or stay the same but never fall, so interpret them with caution [20].
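The one-directional effect of resolving only discordant samples can be checked with simple arithmetic. The sketch below is a minimal illustration, not a prescribed procedure: the counts are hypothetical, and the function names `sens_spec` and `resolve_discrepants` are invented for this example.

```python
def sens_spec(tp, fp, fn, tn):
    """Return (sensitivity, specificity) as fractions from 2x2 counts."""
    return tp / (tp + fn), tn / (tn + fp)


def resolve_discrepants(tp, fp, fn, tn, fp_resolved_pos, fn_resolved_neg):
    """Reclassify only the discordant cells after a resolving test.

    fp_resolved_pos: new-test-positive/culture-negative samples that the
                     resolving test calls positive (moved from FP to TP).
    fn_resolved_neg: new-test-negative/culture-positive samples that the
                     resolving test calls negative (moved from FN to TN).
    """
    if not (0 <= fp_resolved_pos <= fp and 0 <= fn_resolved_neg <= fn):
        raise ValueError("resolved counts must lie within the discordant cells")
    return (tp + fp_resolved_pos, fp - fp_resolved_pos,
            fn - fn_resolved_neg, tn + fn_resolved_neg)


# Hypothetical counts with culture as the initial reference.
initial = (45, 10, 5, 140)            # tp, fp, fn, tn
resolved = resolve_discrepants(*initial, fp_resolved_pos=8, fn_resolved_neg=2)

sens_before, spec_before = sens_spec(*initial)
sens_after, spec_after = sens_spec(*resolved)
print(f"before: sensitivity={sens_before:.3f}, specificity={spec_before:.3f}")
print(f"after:  sensitivity={sens_after:.3f}, specificity={spec_after:.3f}")
```

Because concordant samples are never retested, no sample can move against the new test, which is why applying the resolving test to a random selection of concordant samples as well gives a fairer picture.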

Experimental Protocols & Data

Protocol: Verification of a Qualitative Microbiological Method

This protocol outlines the methodology for verifying an unmodified, FDA-cleared/approved qualitative test in a single laboratory, as required by standards such as CLIA [7].

1. Purpose

To demonstrate that the laboratory can achieve performance characteristics (Accuracy, Precision, Reportable Range, and Reference Range) for the new method that meet established acceptance criteria.

2. Experimental Design

The verification study should evaluate the following performance characteristics [7]:

- Accuracy: The agreement between the new method and a comparative method.

- Precision: The agreement between repeated measurements of the same sample (within-run, between-run, and between-operator).

- Reportable Range: The range of results that can be reliably reported by the test system.

- Reference Range: The established "normal" or expected result for the tested patient population.

3. Materials and Methods

- Samples: A minimum of 20 clinically relevant isolates or samples is recommended. Use a combination of positive and negative samples. Acceptable sources include [7]:

- Reference materials (e.g., ATCC strains)

- Proficiency test samples

- De-identified clinical samples previously characterized by a validated method

- Procedure:

- Accuracy: Test all samples in parallel using the new method and the comparative (existing validated) method.

- Precision: Test a minimum of 2 positive and 2 negative samples in triplicate, over 5 days, by 2 different operators (if the process is not fully automated) [7].

- Reportable Range: Test a minimum of 3 known positive samples to verify that the system correctly reports results as "Detected" or within the established cutoff values.

- Reference Range: Test a minimum of 20 samples that are known to be negative for the analyte to confirm the expected "normal" result for your patient population [7].

4. Data Analysis and Acceptance Criteria

Calculate the following and confirm they meet the manufacturer's claims or laboratory-defined criteria [7]:

- Accuracy: (Number of results in agreement / Total number of results) x 100

- Precision: (Number of results in agreement across all replicates / Total number of results) x 100
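As a rough illustration of the two calculations above, the following sketch computes percent agreement for an accuracy set and for the full precision design (2 positive and 2 negative samples, triplicate, 5 days, 2 operators). All sample labels, operator names, and results are hypothetical, and `percent_agreement` is a helper name chosen for this example.

```python
from itertools import product


def percent_agreement(observed, expected):
    """Percent of paired results that match: (agreements / total) x 100."""
    if not observed or len(observed) != len(expected):
        raise ValueError("need two non-empty result lists of equal length")
    agreements = sum(o == e for o, e in zip(observed, expected))
    return 100.0 * agreements / len(observed)


# Accuracy: 20 hypothetical samples, comparative method vs. new method.
comparative = ["Detected"] * 10 + ["Not detected"] * 10
new_method = ["Detected"] * 9 + ["Not detected"] * 11   # one positive missed
accuracy = percent_agreement(new_method, comparative)

# Precision: 2 positive + 2 negative samples, 5 days, 2 operators, triplicate.
expected_by_sample = {"P1": "Detected", "P2": "Detected",
                      "N1": "Not detected", "N2": "Not detected"}
runs = list(product(expected_by_sample, range(1, 6), ("op_A", "op_B"), range(1, 4)))
observed_by_run = {run: expected_by_sample[run[0]] for run in runs}
observed_by_run[("P2", 3, "op_B", 2)] = "Not detected"   # one discordant replicate
precision = percent_agreement([observed_by_run[r] for r in runs],
                              [expected_by_sample[r[0]] for r in runs])

print(f"accuracy:  {accuracy:.1f}% of {len(comparative)} results")
print(f"precision: {precision:.1f}% of {len(runs)} results")
```

Enumerating the design this way also makes the size of the precision study explicit: 4 samples x 5 days x 2 operators x 3 replicates gives 120 individual results.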

Table 1: Summary of Verification Criteria for a Qualitative Assay

| Performance Characteristic | Minimum Sample Number/Type | Calculation Method |

|---|---|---|

| Accuracy | 20 positive & negative samples [7] | % Agreement = (Agreements / Total) x 100 [7] |

| Precision | 2 positive & 2 negative, in triplicate over 5 days by 2 operators [7] | % Agreement across all replicates [7] |

| Reportable Range | 3 known positive samples [7] | Confirmation of correct "Detected" result or correct classification relative to cutoffs [7] |

| Reference Range | 20 known negative samples [7] | Confirmation of correct "Not Detected" result [7] |

The Scientist's Toolkit

Table 2: Key Reagents and Materials for Microbiological Method Verification

| Item | Function / Purpose |

|---|---|

| Reference Strains | Well-characterized microbial strains (e.g., from ATCC) used as positive controls to confirm the test's ability to correctly detect the target organism. |

| Clinical Isolates | De-identified, previously characterized patient samples used to assess method performance against real-world, relevant specimens [7]. |

| Proficiency Test (PT) Samples | Commercially provided samples of known but blinded content, used to objectively assess the accuracy and reliability of the testing process [7]. |

| Spiked Samples | Samples of a specific matrix (e.g., food, clinical specimen) that have been inoculated with a known quantity of the target microorganism. Critical for fitness-for-purpose and matrix extension studies [8]. |

| Inhibitor Testing Panels | Samples or reagents designed to contain substances known to potentially inhibit molecular tests (e.g., pectin, fats). Used to evaluate a method's robustness in complex matrices [8]. |

Establishing Fitness-for-Purpose Workflow

The following diagram illustrates the logical decision process for establishing that a method is fit-for-purpose, particularly when dealing with a new sample matrix.

Decision Workflow for Fitness-for-Purpose

A Systematic Approach to Discrepancy Investigation and Resolution

A well-structured verification plan is your first line of defense against discrepant results in the laboratory.

When introducing a new microbiological method to your laboratory, demonstrating its reliability through a robust verification process is a fundamental requirement for routine diagnostics. This process ensures the method performs as expected in your specific hands, with your equipment, and on your patient population. A critical component of this process is planning for how to handle discrepant results—those instances where the new method and the reference method disagree. A proactive plan for their resolution strengthens the entire verification study and ensures the integrity of your laboratory's data.

This guide provides practical, actionable frameworks to help you develop a verification plan that systematically addresses these challenges.

Core Concepts: Verification vs. Validation

A clear understanding of the terms verification and validation is the essential starting point, as it dictates the regulatory and practical scope of your study.

Q: What is the difference between method verification and method validation?

A: The terms are often used interchangeably, but they apply to distinct scenarios:

Method Verification is conducted for unmodified, FDA-cleared or approved tests. It is a one-time study to confirm that the test's established performance characteristics (e.g., accuracy, precision) are successfully demonstrated in your laboratory environment. You are "verifying" the manufacturer's claims [7] [8].

Method Validation is a more extensive process required for non-FDA cleared tests, such as laboratory-developed tests (LDTs), or when an FDA-cleared test has been modified outside the manufacturer's specifications (e.g., using a different specimen type or changing incubation times). Validation aims to establish that the assay works as intended for its new use [7] [21].

Key Components of a Verification Study Design

For an unmodified FDA-approved test, CLIA regulations require laboratories to verify several key performance characteristics. The following table outlines the objectives and strategies for each, with a focus on qualitative and semi-quantitative assays common in microbiology.

Table 1: Key Components and Experimental Design for Verification Studies

| Performance Characteristic | Study Objective | Recommended Experiment & Minimum Sample Size | Acceptance Criteria |

|---|---|---|---|

| Accuracy | Confirm agreement between the new method and a comparative method [7]. | Test a minimum of 20 clinically relevant isolates using a combination of positive and negative samples. Use standards, controls, proficiency test samples, or de-identified clinical samples tested in parallel with a validated method [7]. | The percentage of agreement should meet the manufacturer's stated claims or criteria determined by the lab director [7]. |

| Precision | Confirm acceptable variance within a run, between runs, and between operators [7]. | Test a minimum of 2 positive and 2 negative samples in triplicate over 5 days by 2 different operators. If the system is fully automated, operator variance may not be needed [7]. | The percentage of results in agreement should meet the manufacturer's stated claims or lab director's criteria [7]. |

| Reportable Range | Confirm the test's upper and lower detection limits for reporting results [7]. | Test a minimum of 3 samples. For qualitative assays, use known positive samples. For semi-quantitative assays, use samples near the upper and lower cutoffs [7]. | The laboratory verifies that it can correctly report results (e.g., "Detected," "Not detected") for samples within the manufacturer's specified range [7]. |

| Reference Range | Confirm the "normal" result for your patient population [7]. | Test a minimum of 20 isolates using de-identified clinical or reference samples that represent the standard for your population (e.g., samples negative for MRSA when verifying an MRSA assay) [7]. | The expected result for a typical sample is confirmed. If your patient population differs from the manufacturer's, the range may need to be re-defined with additional testing [7]. |

Troubleshooting Discrepant Results

Despite a well-designed study, discrepant results between the new method and the reference standard are common. A systematic approach to their resolution is critical.

Q: What is a systematic approach to resolving discrepant results?

A: Discrepancies should be investigated through a structured troubleshooting process that evaluates biological, technological, and human factors [9].

Phase 1: Re-confirmation and Repeat Testing

- Action: Repeat the testing on the new system and the reference method using the original sample or a fresh aliquot, following standard operating procedures strictly.

- Goal: Rule out simple errors like pipetting mistakes, sample mix-up, or equipment glitches.

Phase 2: Arbitration with a Reference Method

- Action: Test the discrepant sample using a third, highly reliable ("gold standard") method. This could be a different molecular method (e.g., qPCR), sequencing, or a culture-based method [22].

- Goal: To determine which of the two original methods (new or comparative) provided the correct result. This step is crucial for calculating true accuracy and sensitivity.

Phase 3: Root Cause Analysis

If the new method is consistently at odds with the arbitration method, investigate these common root causes [9]:

Sample Issues:

- Inhibitors: Does the sample matrix contain substances (e.g., pectin, fats, acids) that inhibit detection chemistry? This is a common issue in food testing and clinical specimens [8].

- Matrix Effects: Was the method validated for your specific sample type? A test validated for raw meat may not perform accurately for cooked chicken without a matrix extension study [8].

- Low Microbial Load: The target organism may be present at a concentration near the detection limit of one method but not the other.

Methodology Issues:

- Specificity: The new method may be detecting closely related non-target organisms (cross-reactivity).

- Sensitivity: The new method may lack the ability to detect all strains or variants of the target organism.

Procedural Issues:

- Calibration & Maintenance: Is equipment properly calibrated and maintained?

- Operator Training: Are all personnel thoroughly trained and competent in the new method?

The following workflow provides a visual guide for investigating discrepant results:

Essential Research Reagent Solutions

A successful verification study relies on well-characterized materials. The table below lists essential reagents and their critical functions.

Table 2: Essential Research Reagents for Verification Studies

| Reagent / Material | Function & Importance in Verification |

|---|---|

| Reference Strains | Well-characterized microbial strains (e.g., from ATCC) used as positive controls, for accuracy testing, and to ensure the method detects the intended target. |

| Clinical Isolates | De-identified patient samples that represent the laboratory's typical patient population and microbial diversity. Crucial for verifying reference ranges and accuracy in a real-world context [7]. |

| Proficiency Test (PT) Samples | Blinded samples of known content provided by an external program. They provide an unbiased assessment of the method's and the operator's performance. |

| Internal Control Materials | Substances added to the sample to monitor the entire testing process. For molecular methods, an exogenous internal control (e.g., a non-pathogenic bacterium) can detect the presence of PCR inhibitors, helping to explain false negatives [22]. |

| Quality Control (QC) Organisms | Strains with defined susceptibility profiles or identities used daily or with each test run to ensure the test system is performing within specified limits [23]. |

FAQs on Verification Planning

Q: How do I determine the right sample size for a verification study if the method is for a rare pathogen?

A: While guidelines like 20 positive and 20 negative samples are common for accuracy, this may not be feasible for rare targets. In such cases, use all available clinical samples collected over time. The study design should be justified and documented, stating the limitation. Collaboration with other laboratories to pool samples or the use of commercially available reference materials can also be solutions.

Q: Our laboratory is implementing a new antimicrobial susceptibility test (AST). Are there special considerations?

A: Yes. AST verification is particularly complex. It is crucial to use a broad strain set that includes organisms with well-defined resistance mechanisms. Furthermore, you must decide whether to use FDA breakpoints or CLSI/EUCAST breakpoints, as this impacts the acceptance criteria. CLSI document M52, "Verification of Commercial Microbial Identification and AST Systems," is an invaluable resource for this specific task [7].

Q: Where can I find authoritative protocols for verification studies?

A: Several professional organizations provide detailed guidelines:

- CLSI (Clinical & Laboratory Standards Institute): Documents like EP12-A2 (Qualitative Test Performance), M52 (Microbial ID and AST), and MM03-A2 (Molecular Diagnostics) are essential [7].

- AOAC International: Provides standardized methods for food safety and microbiological testing [8].

- ISO 15189:2022: The international standard for medical laboratories provides requirements for verification and validation [21].

Key Takeaways

- Distinguish between verification and validation to apply the correct regulatory and procedural framework from the start [7] [8].

- Plan your study design around core performance characteristics, using minimum sample sizes as a guide and adjusting for your laboratory's specific context and needs [7].

- Develop a proactive, multi-phase troubleshooting plan for discrepant results that includes repeat testing, arbitration, and a thorough root cause analysis [9] [22].

- Utilize well-characterized reagents and reference standards to ensure the quality and traceability of your verification data [7] [23].

Frequently Asked Questions

What is the fundamental difference between comparing qualitative and quantitative tests? When comparing quantitative tests, you assess the bias and agreement between numerical results. For qualitative tests, which yield categorical results (e.g., positive/negative), the focus shifts to measuring the agreement between the methods, reported as Positive Percent Agreement (PPA) and Negative Percent Agreement (NPA) [24].

When should I report PPA/NPA versus Sensitivity/Specificity? Report PPA and NPA when you are comparing a new candidate method to an existing comparative method. Report Sensitivity and Specificity only when you are evaluating the new method against Diagnostic Accuracy Criteria, that is, the best available reference for determining the true condition of a sample. Using a routine method as the reference for sensitivity/specificity is not recommended [24].

How do I resolve discrepant results between the new and old methods? Discrepant results should be investigated using a referee method, which is a definitive method (like DNA sequencing) that is different from the two being compared. This method is used to assign the true status to samples where the candidate and comparative methods disagree. It is critical that this referee method is applied blindly, without knowledge of the results from the other two methods [25].

What are common pitfalls in planning a method comparison study? Common pitfalls include:

- Insufficient sample size: This leads to imprecise estimates of agreement or performance [24].

- Using an inappropriate reference method: A routine method cannot establish diagnostic accuracy [24].

- Poor sample selection: The samples used should reflect the full spectrum of analytes and matrices that the test will encounter in routine use [24].

Troubleshooting Guides

Problem: Low Agreement in Qualitative Test Comparison

Symptoms: Unexpectedly low Positive Percent Agreement (PPA) or Negative Percent Agreement (NPA) during a method comparison study.

Investigation and Resolution: Follow this logical workflow to diagnose and address the issue.

Detailed Steps:

- Investigate Discrepant Samples: Isolate all samples that produced conflicting results between the candidate and comparative methods. Re-check the raw data for these samples [24].

- Employ a Referee Method: Subject the discrepant samples to a more definitive referee method to determine their true status. This is crucial for understanding where the error lies [25].

- Analyze the Root Cause:

- If the referee method confirms the candidate method's results were incorrect, investigate issues with the candidate method's procedure, reagent quality, or user training.

- If the referee method confirms the comparative method was wrong, it highlights that the original method was an imperfect reference. The calculated PPA/NPA against the original method remains valid for understanding how your lab's results will change, but the performance of the candidate method is likely better than initially calculated.

- In some cases, both methods may be incorrect for a subset of samples, indicating a more complex analytical challenge.

- Take Corrective Action: Based on the root cause, you may need to retrain staff, adjust the candidate method's protocol, or decide that the candidate method is not suitable.

- Report Appropriately: When a referee method is used, the final PPA and NPA can be recalculated based on the truth assigned by the referee, providing a more accurate picture of the candidate method's performance relative to the best available standard.

Problem: High Bias in Quantitative Test Comparison

Symptoms: A significant constant or proportional bias is observed in the difference plot (Bland-Altman plot) when comparing a new quantitative method to a reference method.

Investigation and Resolution:

Detailed Steps:

- Characterize the Bias: Determine if the bias is constant (consistent across all concentration levels) or proportional (increases or decreases with the concentration of the analyte).

- Investigate Constant Bias:

- Action: Review the calibration process. A constant offset often points to an error in calibrator value assignment or an issue with the instrument's blanking procedure.

- Check: Ensure calibrators have been prepared correctly and are traceable to a higher-order standard.

- Investigate Proportional Bias:

- Action: Investigate the assay's specificity. Proportional bias can be caused by antibody cross-reactivity with similar substances or matrix effects where components of the sample interfere with the detection method.

- Check: Run spike-and-recovery experiments to assess matrix effects. Evaluate cross-reactivity by testing structurally similar compounds.

- Final Decision:

- If the bias is consistent and predictable, it may be possible to apply a mathematical correction to the results from the new method.

- If the bias is deemed clinically insignificant or falls within pre-defined acceptable limits, you may decide to accept the method but document the bias clearly in the test's procedures and interpretations.
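One way to make the constant-versus-proportional distinction concrete is to fit a straight line to the differences (new minus reference) against the reference value: an intercept with a near-zero slope suggests constant bias, while a non-zero slope suggests proportional bias. The sketch below assumes ordinary least squares and uses made-up, noise-free values; `fit_line` and `describe_bias` are hypothetical helper names, and a real study would also need confidence intervals before concluding that a slope or intercept differs from zero.

```python
from statistics import mean


def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("x values must not all be identical")
    slope = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sxx
    return slope, my - slope * mx


def describe_bias(reference, candidate):
    """Fit the differences (candidate - reference) against the reference."""
    diffs = [c - r for r, c in zip(reference, candidate)]
    return fit_line(reference, diffs)


# Hypothetical results, e.g. in log10 units.
reference = [1.0, 2.0, 3.0, 4.0, 5.0]
constant_case = [r + 0.3 for r in reference]       # fixed offset of 0.3
proportional_case = [r * 1.1 for r in reference]   # reads 10% high

for label, values in (("constant", constant_case), ("proportional", proportional_case)):
    slope, intercept = describe_bias(reference, values)
    print(f"{label}: slope={slope:.3f}, intercept={intercept:.3f}")
```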

Data Presentation

The following table outlines the core metrics for reporting qualitative method comparisons. PPA and NPA are used for method comparisons, while Sensitivity and Specificity are reserved for evaluations against a diagnostic accuracy standard [24].

| Metric | Calculation Formula | Interpretation |

|---|---|---|

| Positive Percent Agreement (PPA) | (TP / (TP + FN)) × 100% | The candidate method's ability to categorize positive samples the same way the comparative method does. |

| Negative Percent Agreement (NPA) | (TN / (TN + FP)) × 100% | The candidate method's ability to categorize negative samples the same way the comparative method does. |

| Sensitivity | (TP / (TP + FN)) × 100% | The probability that the test will give a positive result for a sample that is truly positive. |

| Specificity | (TN / (TN + FP)) × 100% | The probability that the test will give a negative result for a sample that is truly negative. |

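Abbreviations: TP = True Positive; TN = True Negative; FP = False Positive; FN = False Negative. The formulas for PPA and Sensitivity (and for NPA and Specificity) are identical; what differs is how "true" status is assigned. For PPA and NPA it is the comparative method's result; for Sensitivity and Specificity it is the diagnostic accuracy criteria.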
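The agreement metrics can be computed directly from the four cells of the comparison table. In the sketch below, the cells are named relative to the comparative method, the counts are hypothetical, and the Wilson score interval is an added assumption (the source does not prescribe an interval method); it is included only to show how wide the uncertainty is at small sample sizes.

```python
from math import sqrt


def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a proportion (z=1.96 gives about 95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z * z / n
    centre = (p + z * z / (2 * n)) / denom
    half = z * sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return centre - half, centre + half


def agreement_metrics(both_pos, cand_pos_comp_neg, cand_neg_comp_pos, both_neg):
    """Return PPA and NPA (as fractions) with their Wilson intervals."""
    comp_pos = both_pos + cand_neg_comp_pos    # comparative-method positives
    comp_neg = both_neg + cand_pos_comp_neg    # comparative-method negatives
    return {
        "PPA": (both_pos / comp_pos, wilson_interval(both_pos, comp_pos)),
        "NPA": (both_neg / comp_neg, wilson_interval(both_neg, comp_neg)),
    }


# Hypothetical comparison: 50 comparative positives, 100 comparative negatives.
metrics = agreement_metrics(both_pos=48, cand_pos_comp_neg=3,
                            cand_neg_comp_pos=2, both_neg=97)
for name, (estimate, (low, high)) in metrics.items():
    print(f"{name}: {100 * estimate:.1f}% (95% CI {100 * low:.1f}-{100 * high:.1f}%)")

# Even perfect agreement on 20 samples leaves a wide interval.
low_20, high_20 = wilson_interval(20, 20)
print(f"20/20 agreement: lower bound {100 * low_20:.1f}%")
```

Under these assumptions, 20 of 20 results in agreement still gives a lower bound of only about 84%, which is one reason an insufficient sample size leads to imprecise estimates.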

Key Parameters for Quantitative Assay Comparison

For quantitative tests, the comparison focuses on statistical measures of agreement and bias [24].

| Parameter | Description | Acceptance Criteria |

|---|---|---|

| Passing-Bablok Regression | A non-parametric method used to compare two measurement methods. It is robust to outliers and does not assume a normal distribution of errors. | The 95% confidence interval for the slope should contain 1, and for the intercept, it should contain 0. |

| Bland-Altman Analysis (Difference Plot) | Plots the difference between two methods against their average. It is used to visualize bias and agreement limits. | The mean difference (bias) should be close to zero and within clinically acceptable limits. The 95% limits of agreement should be narrow enough for clinical purposes. |

| Correlation Coefficient (r) | Measures the strength and direction of the linear relationship between two methods. | A high value (e.g., >0.975) indicates strong association, but does not prove agreement. |
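The point that a high correlation coefficient does not prove agreement can be seen with a small worked example. The sketch below computes the Bland-Altman bias, the 95% limits of agreement (bias ± 1.96 × SD of the differences), and Pearson's r for hypothetical paired results; the data and function names are illustrative only, and Passing-Bablok regression is left out because it needs a dedicated implementation.

```python
from math import sqrt
from statistics import mean, stdev


def bland_altman(reference, candidate, z=1.96):
    """Return (bias, lower limit, upper limit) of agreement."""
    diffs = [c - r for r, c in zip(reference, candidate)]
    bias = mean(diffs)
    sd = stdev(diffs)                      # sample SD of the differences
    return bias, bias - z * sd, bias + z * sd


def pearson_r(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


# Hypothetical paired results (e.g. log10 CFU/mL) from five samples.
reference = [2.0, 3.0, 4.0, 5.0, 6.0]
candidate = [2.6, 3.4, 4.5, 5.6, 6.4]

bias, lower, upper = bland_altman(reference, candidate)
r = pearson_r(reference, candidate)
print(f"bias={bias:.2f}, limits of agreement=({lower:.2f}, {upper:.2f}), r={r:.4f}")
```

Here r exceeds 0.99 even though the candidate method reads about 0.5 units high on average, so the correlation alone would hide a bias that may or may not be clinically acceptable.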

The Scientist's Toolkit: Essential Research Reagents & Materials

| Item | Function in Method Comparison |

|---|---|

| Well-Characterized Panel of Samples | A set of clinical samples with values spanning the entire analytical measurement range. Used to assess accuracy, precision, and linearity. |

| Reference Standard | A material of known quantity and purity, often traceable to an international standard. Serves as the benchmark for determining accuracy in quantitative studies [25]. |

| Diagnostic Accuracy Criteria Panel | A panel of samples where the true positive/negative status is definitively known, established by a reference method or clinical outcome. Essential for determining true sensitivity and specificity [24]. |

| Quality Control Materials | Stable materials with known expected values. Used to monitor the precision and stability of both the candidate and comparative methods throughout the validation period. |

Frequently Asked Questions

FAQ 1: Why do we often see discrepant results when comparing data from different laboratories, even when using the same sample type?

Discrepant results between labs are frequently caused by variations in each step of the microbiome workflow [26]. Different methods for sample collection, DNA extraction, library preparation, sequencing, and bioinformatics analysis can introduce substantial bias and error [26]. A prominent example is the discrepant data between two major labs (American Gut and µBiome) in their analyses of the same fecal sample [26]. Standardization across labs is challenging, making direct comparison nearly impossible without the use of common standards [26].

FAQ 2: What are the key regulatory and guidance documents for validating alternative or rapid microbiological methods?

When validating new methods, you should consult relevant pharmacopoeia and technical reports. Key documents include [27]:

- Ph. Eur. general chapter 5.1.6. on alternative methods for control of microbiological quality.

- U.S. Pharmacopeia (USP) chapters <1223> on validation of alternative microbiological methods and <72> on sterility tests, which provides guidance on determining incubation times.

- PDA Technical Report No. 33, which provides guidance on demonstrating comparability between a new method and a compendial method, for both qualitative and quantitative assays [27].

FAQ 3: My differential abundance analysis in a microbiome study yields conflicting results with different statistical methods. How can I improve the replicability of my findings?

Some widely used Differential Abundance Analysis (DAA) methods are known to produce conflicting findings [28]. To improve replicability, consider using simpler, more robust statistical methods. Recent large-scale benchmarking studies suggest that the best performance, considering both consistency and sensitivity, is achieved by [28]:

- Analyzing relative abundances with non-parametric tests like the Wilcoxon test or ordinal regression models.

- Using linear regression or a t-test.

- Analyzing the presence/absence of taxa using logistic regression.

These "elementary" methods have been shown to provide more replicable results across datasets [28].

Statistical Tools for Method Comparability

For quantitative method validation, PDA Technical Report No. 33 proposes recommendations for demonstrating comparability using statistical models. The table below outlines the key parameters and corresponding statistical tools [27].

| Parameter | Description | Recommended Statistical Tools / Approach |

|---|---|---|

| Accuracy | Closeness of agreement between test values and accepted reference values. | Statistical models comparing mean results from new and reference methods. |

| Precision | Closeness of agreement between independent test results under stipulated conditions. | Calculation of standard deviation and variance. |

| Linearity | Ability of the method to obtain test results proportional to the analyte concentration. | Regression analysis. |

| Range | The interval between the upper and lower levels of analyte for which suitable precision and accuracy are demonstrated. | Defined based on linearity and precision data. |

| Limit of Quantitation (LOQ) | The lowest level of analyte that can be quantified with acceptable precision and accuracy. | Determined from precision and accuracy data at low concentrations. |

Experimental Protocol: Validating a Qualitative Rapid Microbiological Method

This protocol outlines the key experiments for validating a qualitative alternative method (e.g., a rapid sterility test) against a compendial method, following Ph. Eur. 5.1.6 and USP <1223> guidelines [27].

1. Goal: To demonstrate that the alternative method is at least equivalent to the compendial method for detecting specified microorganisms.

2. Materials:

- Test Samples: Use the actual product or a placebo spiked with microorganisms.

- Microorganism Panel: A panel of relevant ATCC strains, typically including a mix of aerobic bacteria, anaerobic bacteria, and fungi (e.g., Staphylococcus aureus, Pseudomonas aeruginosa, Bacillus subtilis, Clostridium sporogenes, Candida albicans, Aspergillus brasiliensis).

- Equipment: The alternative method's instrument(s) and materials. Equipment for the compendial method (e.g., incubators, media).

3. Experimental Design & Procedure:

- Sample Inoculation: For each microorganism in the panel, independently inoculate test samples at two levels: a low microbial inoculum (e.g., ≤ 100 CFU) and a high microbial inoculum.

- Testing: Test the inoculated samples (and appropriate negative controls) in parallel using both the alternative method and the compendial method.

- Replication: Repeat the experiment a sufficient number of times (e.g., n=3 or as per validation guidance) to generate statistically sound data.

4. Data Analysis and Acceptance Criteria: The core of the analysis is to demonstrate non-inferiority. The alternative method must detect all microorganisms that the compendial method detects. A statistical analysis (e.g., an equivalence or non-inferiority test) should show that the detection rate of the alternative method is not inferior to that of the compendial method within a pre-defined margin [27].
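
One way to make the non-inferiority criterion concrete is to compute a one-sided lower confidence bound for the difference in detection rates and compare it with the pre-defined margin. The sketch below is a simplified illustration, not the analysis prescribed by the cited guidance: it assumes independent samples, uses a Wald (normal-approximation) interval, and the counts and the 0.20 margin are hypothetical. With small replicate numbers or detection rates at 0% or 100%, an exact or score-based method would be more appropriate.

```python
from statistics import NormalDist

def noninferiority_lower_bound(alt_pos, alt_n, ref_pos, ref_n, alpha=0.05):
    """One-sided lower bound for (alternative - compendial) detection rate.

    Wald interval for two independent proportions; an assumption of this sketch.
    """
    p_alt = alt_pos / alt_n
    p_ref = ref_pos / ref_n
    se = (p_alt * (1 - p_alt) / alt_n + p_ref * (1 - p_ref) / ref_n) ** 0.5
    z = NormalDist().inv_cdf(1 - alpha)
    return (p_alt - p_ref) - z * se

def is_noninferior(alt_pos, alt_n, ref_pos, ref_n, margin, alpha=0.05):
    """Non-inferior if the lower bound of the difference stays above -margin."""
    lower = noninferiority_lower_bound(alt_pos, alt_n, ref_pos, ref_n, alpha)
    return lower > -margin

# Hypothetical low-inoculum results pooled across the panel:
# alternative method 54/60 positive, compendial method 55/60 positive
lower = noninferiority_lower_bound(54, 60, 55, 60)
verdict = is_noninferior(54, 60, 55, 60, margin=0.20)
```

The margin must be fixed in the validation protocol before the data are collected; choosing it afterwards defeats the purpose of the test.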

The Scientist's Toolkit: Essential Research Reagents and Materials

| Item | Function / Explanation |

|---|---|

| Microbiome Reference Materials (e.g., ZymoBIOMICS) | Benchmarking materials with known microbial composition used to assess the accuracy and reproducibility of microbiome measurements across different labs and workflows [26]. |

| ATCC Strains | Certified microbial strains from a culture collection used for method validation studies to ensure the panel of microorganisms is relevant and well-characterized [27]. |

| Culture Media | Growth media used in compendial sterility or bioburden tests, and as a basis for comparison when validating rapid growth-based alternative methods [27] [29]. |

| Validated Rapid Method Kits | Commercial kits for specific rapid methods (e.g., digital PCR, solid phase cytometry, biocalorimetry) that have been developed and optimized for detecting microorganisms in complex samples like cell and gene therapy products [27]. |

Troubleshooting Guides

Bacterial Endotoxin Testing (BET) Troubleshooting

Q1: What are common causes of invalid or Out-of-Specification (OOS) results in the Bacterial Endotoxins Test (BET), and how are they resolved?

Invalid or OOS results require a structured, two-phase investigation as per FDA guidance [30]. The following table summarizes common technical issues and their evidence-based solutions.

Table 1: Common BET Issues and Corrective Actions

| Issue Manifestation | Potential Root Cause | Corrective & Preventive Actions |

|---|---|---|

| Inhibition/Enhancement (Failed Suitability) [31] [32] | Sample matrix interference (e.g., proteins, extreme pH, chelators). | • Perform serial dilution not exceeding the Maximum Valid Dilution (MVD) [30] [31].• Adjust sample pH to 6.5-7.5 [31].• Remove interferents via centrifugation or filtration [31]. |

| Low Endotoxin Recovery (LER) [32] | Endotoxin "masking" by product components (e.g., biologics, charged excipients). | • Conduct hold-time studies to assess recovery over extended periods [32].• Apply strategies from PDA Technical Report No. 82 on LER [32]. |

| Gel-Clot Interpretation Issues [31] | Atypical gel formation (flocculent precipitation); reagent sensitivity problems. | • Invert the tube through 180° in one smooth motion, as per the pharmacopeia, to check that a firm clot remains in place [31].• Verify lysate sensitivity and expiration date [31]. |

| False Positives in Controls [31] | Environmental contamination or apparatus that has not been depyrogenated. | • Work in a laminar flow hood with aseptic technique [31].• Depyrogenate glassware with dry heat at 250°C for at least 30 minutes [31]. |

| Kinetic Assay Abnormalities [31] | Improper temperature control or flawed optical systems. | • Verify thermal block precision (37.0°C ± 0.1°C) [31].• Calibrate spectrophotometers with NIST-traceable standards [31]. |

Experimental Protocol: Conducting a Two-Phase OOS Investigation [30]

- Phase I - Laboratory Investigation: Initiate immediately upon discovering an OOS. The objective is to identify obvious laboratory error.

  - Notify management and Quality Assurance (QA).

  - Document a detailed problem statement.

  - Interview the analyst and review raw data, calculations, and equipment records.

  - If possible, re-measure original preparations. If a clear lab error is found, invalidate the original test.

- Phase II - Full-Scale OOS Investigation: If Phase I is inconclusive, expand the investigation.

  - Review manufacturing records, sampling procedures, and other batches.

  - Perform structured retesting with a pre-defined number of replicates to avoid "testing into compliance."

  - If the OOS is confirmed, the batch must be rejected. The investigation must be documented with conclusions and CAPA.
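
The two-phase logic above can be summarized as a small decision function. This is a hypothetical sketch for illustration only: the class, field names, and outcome strings are assumptions, and the handling of an unconfirmed OOS (referral to QA) is an assumption that goes beyond what the protocol above states.

```python
from dataclasses import dataclass

@dataclass
class OOSFindings:
    lab_error_found: bool                # Phase I: assignable laboratory error
    process_cause_found: bool = False    # Phase II: manufacturing/sampling cause
    retests_within_spec: bool = False    # Phase II: all pre-defined retests pass

def oos_disposition(findings: OOSFindings) -> str:
    """Map investigation findings to an outcome (hypothetical labels)."""
    if findings.lab_error_found:
        # Phase I ends here: the original result is invalidated
        return "invalidate original test"
    if findings.process_cause_found or not findings.retests_within_spec:
        # Phase II confirms the OOS
        return "reject batch"
    # Assumption: an unconfirmed OOS still goes to QA for a documented decision
    return "refer to QA"

phase1_outcome = oos_disposition(OOSFindings(lab_error_found=True))
phase2_outcome = oos_disposition(
    OOSFindings(lab_error_found=False, retests_within_spec=False)
)
```

Whatever the outcome, the investigation record and CAPA remain mandatory; the function only captures the branching, not the documentation.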

Q2: How should a lab validate its BET method for a new product to prevent discrepancies?

A robust method validation is essential for preventing future discrepancies. The core of this is the Inhibition/Enhancement (I/E) Test [32].

Table 2: Key Steps for BET Method Validation

| Step | Description | Acceptance Criterion |

|---|---|---|

| Determine MVD | Calculate the Maximum Valid Dilution: MVD = (Endotoxin Limit × Sample Concentration) / λ, where λ is the labeled lysate sensitivity (gel-clot) or the lowest standard-curve concentration (kinetic methods) [31]. | Dilution must not exceed MVD. |

| Spike Recovery | Test the product at its chosen dilution, spiked with a known endotoxin concentration. | Mean recovery should be within 50-200% of the spiked amount [31]. |

| Confirm Labware | Use only depyrogenated, endotoxin-free tubes, tips, and plates. | Negative controls must confirm the absence of contaminating endotoxins. |
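
The MVD and spike-recovery steps in Table 2 reduce to simple arithmetic. The sketch below assumes the pharmacopoeial form of the MVD, in which λ is the labeled lysate sensitivity (gel-clot) or the lowest standard-curve concentration (kinetic methods). The function names and all numeric values are hypothetical.

```python
def maximum_valid_dilution(endotoxin_limit, sample_concentration, lam):
    """MVD = (endotoxin limit x sample concentration) / lambda.

    Units assumed here: limit in EU/mg, concentration in mg/mL, lambda in EU/mL.
    """
    if lam <= 0:
        raise ValueError("lambda must be positive")
    return endotoxin_limit * sample_concentration / lam

def spike_recovery_percent(spiked_result, unspiked_result, spike_amount):
    """Percent of the added endotoxin recovered in the spiked sample."""
    return 100 * (spiked_result - unspiked_result) / spike_amount

def recovery_acceptable(percent, low=50.0, high=200.0):
    """Check recovery against the 50-200% window stated in Table 2."""
    return low <= percent <= high

# Hypothetical product: limit 0.5 EU/mg, tested at 10 mg/mL, lambda 0.05 EU/mL
mvd = maximum_valid_dilution(0.5, 10.0, 0.05)

# Hypothetical kinetic results (EU/mL): spiked 0.56, unspiked 0.02, spike 0.5
recovery = spike_recovery_percent(0.56, 0.02, 0.5)
ok = recovery_acceptable(recovery)
```

Here the MVD works out to 100, so a 1:50 test dilution would be allowed while 1:200 would not, and the recovery of roughly 108% falls inside the acceptance window.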

Sterility Testing Troubleshooting

Q3: What are the primary sources of sterility test failures, and how can they be controlled?

Sterility test failures can stem from the test process itself or from the product. The investigation must distinguish between the two, that is, between a laboratory-induced false positive and genuine product contamination [33].

Figure 1: Sterility Test Failure Investigation Workflow

Key Control Strategies:

- Water System Control: The water system is a common contamination source. It must be designed to minimize biofilm (e.g., through continuous circulation and regular sanitization) and monitored with appropriate chemical and microbiological attributes [34].

- Process Validation: Drug manufacturing processes must be validated to demonstrate they consistently produce a sterile product. This includes validating all sterilization processes [34].

- Environmental Monitoring: Regular monitoring of air and surfaces in the aseptic processing area is critical for controlling contamination risks [33].

Q4: What advanced methodologies are emerging to improve sterility testing?

Innovative methods are being developed to provide faster results, especially for short shelf-life products like Cell and Gene Therapies (CGTs) [27].

- Rapid Growth-Based Methods: Technologies like biocalorimetry can detect microbial growth within 3 days, significantly faster than the 14-day compendial method, helping to reduce the "vein-to-vein" time for patients [27].

- Digital PCR (dPCR): A dPCR-based approach to sterility testing is under development; it can differentiate between background and positive signals with high specificity [27].

- Automated Plate Reading: Systems using Artificial Intelligence (AI) to automatically read environmental monitoring plates are being piloted, improving efficiency and traceability [27].

Frequently Asked Questions (FAQs)

Q5: Our purified water system keeps yielding B. cepacia. What should we do?

A persistent B. cepacia biofilm indicates a fundamentally deficient water system design or control strategy [34]. The FDA has cited companies for this issue. Remediation requires a comprehensive assessment of the system's design, control, and maintenance. Switching to a continuously circulating system and implementing a robust, ongoing control and monitoring program is often necessary to ensure water consistently meets specifications [34].

Q6: What is the difference between pyrogenicity and endotoxin?

Endotoxin is a specific type of pyrogen (a fever-causing substance), namely lipopolysaccharide (LPS) from Gram-negative bacteria. Pyrogenicity, however, can be either:

- Microbial-mediated: Primarily caused by endotoxins (≈99% of cases), but also by components from Gram-positive bacteria or fungi [35].

- Material-mediated: Caused by chemical agents or other contaminants in the device or drug product itself [35].

No single test detects all pyrogens. The BET detects endotoxins, while the Rabbit Pyrogen Test or the Monocyte Activation Test (MAT) is needed for a broader pyrogen screen [35].

Q7: What equipment validation is required for cGMP sterility testing?

For any equipment used in cGMP testing (e.g., incubators, automated sterility test systems), full Installation, Operational, and Performance Qualification (IOPQ) is required [36].

- IQ: Verifies equipment is received and installed correctly.

- OQ: Confirms it operates according to specifications across its intended range.

- PQ: Demonstrates it performs consistently under actual working conditions to meet pre-defined acceptance criteria [36].

This validation differs from routine calibration and is a foundational expectation in a cGMP quality system.

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for BET and Sterility Testing

| Item | Function & Importance |

|---|---|

| Limulus Amebocyte Lysate (LAL) / Tachypleus Amebocyte Lysate (TAL) | The critical reagent derived from horseshoe crab blood for BET; detects endotoxin via an enzymatic cascade [31]. |

| Recombinant Factor C (rFC) Assay | An animal-free alternative for endotoxin detection using a laboratory-created Factor C protein [35]. |

| Endotoxin Standards | Used to calibrate the BET and perform inhibition/enhancement testing; must be handled without contamination [31]. |

| Bacterial Endotoxins Test (BET) Reagents | Include chromogenic or turbidimetric substrates for kinetic assays, and specific buffers to maintain optimal reaction conditions [30] [31]. |

| Validated Culture Media | For sterility testing, must support the growth of a wide range of microorganisms; growth promotion testing is mandatory [33]. |

| Pyrogen-Free Water | The diluent for all BET reagents and samples; any endotoxin contamination will cause false positives [31] [32]. |

Experimental Workflow for Discrepancy Resolution

The following diagram outlines a generalized, high-level workflow for investigating discrepancies in microbiological quality control, integrating principles from both BET and sterility testing.

Figure 2: General OOS Investigation Flowchart

Advanced Troubleshooting for Challenging Samples and Methods

In the microbiological quality control (QC) of pharmaceuticals, method suitability testing is a critical and often complex process that ensures reliable QC results. A core challenge is overcoming the inherent antimicrobial activity in many finished products, which can be due to active pharmaceutical ingredients (APIs) with antimicrobial properties, added preservatives, or other excipients. If this activity is not properly neutralized during testing, it can lead to false-negative results, creating a dangerous assumption that contaminants are absent. These undetected contaminants can then multiply during product storage or use, resulting in potential health risks for consumers [37].

Method suitability testing evaluates the residual antimicrobial activity of the product being tested to ensure the absence of any inhibitory effects on the growth of microorganisms under the conditions of the test. The goal is to establish a testing method for each raw material or finished product that effectively neutralizes any antimicrobial activity, allowing the expected growth of control microorganisms and ensuring the method can accurately detect organisms in the presence of the product [37]. This section provides troubleshooting guidance and optimized protocols to help researchers overcome these challenges within the broader context of resolving discrepant results in microbiological method verification research.

Troubleshooting Guides

Common Neutralization Challenges and Solutions

Table 1: Troubleshooting Guide for Neutralization Challenges

| Problem | Possible Cause | Recommended Solution | Verification Method |

|---|---|---|---|

| Poor microbial recovery during method suitability testing | Insufficient dilution to overcome antimicrobial activity | Increase dilution factor sequentially (e.g., 1:10, 1:100, 1:200) with diluent warming [37] | Compare recovery to untreated control; target ≥84% recovery [37] |

| Antimicrobial activity persists despite dilution | Product contains preservatives or surfactants | Add chemical neutralizers (1-5% Tween 80, 0.7% lecithin) [37] | Test recovery with neutralizers vs. dilution alone |

| Highly potent antimicrobial products (e.g., antibiotics) | Dilution alone is insufficient | Combine high dilution with membrane filtration and multiple rinsing steps [37] | Use different membrane filter types; verify with multiple rinses |

| Inhibition of specific microorganisms | Method not optimized for all compendial strains | Extend verification to include Burkholderia cepacia and other challenging strains [37] | Include full panel of standard strains in suitability testing |

| Discrepant results between labs | Variation in neutralization protocols | Standardize protocol using harmonized standards (USP <61>, ISO 16140) [13] [38] | Implement interlaboratory comparison studies |
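
The sequential-dilution approach in the first row of the table can be sketched as a recovery calculation against the untreated control. This is a minimal illustration under stated assumptions: the function names and CFU counts are hypothetical, and the default threshold is the ≥84% recovery target quoted in the table.

```python
def percent_recovery(test_cfu, control_cfu):
    """Recovery in the presence of product, relative to the untreated control."""
    if control_cfu <= 0:
        raise ValueError("control count must be positive")
    return 100 * test_cfu / control_cfu

def first_suitable_dilution(counts_by_dilution, control_cfu, threshold=84.0):
    """Return the lowest dilution factor meeting the threshold, else None."""
    for dilution in sorted(counts_by_dilution):
        recovery = percent_recovery(counts_by_dilution[dilution], control_cfu)
        if recovery >= threshold:
            return dilution
    return None

# Hypothetical CFU recovered at 1:10, 1:100, and 1:200; control plate gave 62 CFU
counts = {10: 12, 100: 41, 200: 58}
chosen = first_suitable_dilution(counts, control_cfu=62)
```

With these illustrative counts only the 1:200 dilution clears the threshold. A result of `None` would indicate that dilution alone is insufficient and that chemical neutralizers or membrane filtration should be evaluated, as the later rows of the table suggest.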

Advanced Optimization Strategies