Primer Specificity Validation with BLAST Analysis: A Comprehensive Guide for Biomedical Researchers

This article provides a comprehensive guide for researchers and drug development professionals on validating primer specificity using BLAST analysis.

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on validating primer specificity using BLAST analysis. It covers the foundational principles of why specificity is critical for assay accuracy, detailing common pitfalls like non-specific amplification and primer-dimer formation that can lead to false positives. The guide offers a step-by-step methodological framework for using tools like NCBI Primer-BLAST and interpreting results, alongside advanced troubleshooting and optimization strategies for challenging templates such as GC-rich regions or complex genomes. Furthermore, it explores supplementary in-silico validation techniques and compares BLAST analysis with other bioinformatics tools, empowering scientists to design robust, specific primers essential for reliable PCR outcomes in diagnostics and clinical research.

Why Primer Specificity is Your Most Critical Assay Variable

The Critical Impact of Non-Specific Amplification on Research Outcomes

Non-specific amplification represents a pervasive and critical challenge in molecular biology, capable of undermining the validity of research data, diagnostic results, and drug development processes. This phenomenon occurs when primers or probes bind to unintended nucleic acid sequences, leading to the amplification of off-target products that can generate false positives, reduce assay sensitivity, and compromise quantitative accuracy. The implications extend across diverse fields—from clinical diagnostics to fundamental research—where the integrity of molecular data directly impacts scientific conclusions and translational applications.

Within this context, BLAST (Basic Local Alignment Search Tool) analysis has emerged as an indispensable in silico method for pre-experimental validation of primer and probe specificity. By identifying potential cross-reactions with non-target sequences before laboratory work begins, BLAST analysis serves as a critical first line of defense against the costly consequences of non-specific amplification. This guide systematically compares the impact of non-specific amplification across different research scenarios, provides experimental data demonstrating its effects, and outlines methodology for leveraging BLAST-based validation to enhance research outcomes.

Comparative Analysis of Non-Specific Amplification Across Research Domains

Non-specific amplification manifests differently across experimental contexts, with varying consequences and methodological remedies. The table below summarizes key findings from published studies investigating this phenomenon:

Table 1: Comparative Impact of Non-Specific Amplification Across Research Domains

| Research Domain | Primary Cause of Non-Specificity | Impact on Research Outcomes | Recommended Solution |

|---|---|---|---|

| Gene Expression Studies (qPCR) | Primer-dimer formation; off-target amplification due to homologous sequences | False positive signals; reduced PCR efficiency; invalid quantification of correct products [1] | In silico validation with Primer-BLAST; primer design spanning exon-exon junctions [2] [1] |

| Microbial 16S rRNA Sequencing | Off-target amplification of host (human) DNA when bacterial biomass is low | Wasted sequencing reads (up to 77.2% in breast tumor samples); reduced statistical power for rare taxa [3] | Switch primer sets (V1-V2 region reduces human DNA amplification by 80% compared to V3-V4) [3] |

| Molecular Diagnostics | Flawed primer/probe design with structural incompatibilities and low selectivity | Critical specificity failures; false positive results in clinical samples [4] | Comprehensive in silico analysis (secondary structure prediction, specificity assessment) [4] |

| Isothermal Amplification (EXPAR) | Unconventional DNA polymerase activity interacting with single-stranded templates | Background amplification limiting sensitivity; high limits of detection [5] | Physical separation of template and polymerase until reaction temperature is reached [5] |

The data reveal that non-specific amplification is not a singular problem but rather a collection of related challenges requiring domain-specific solutions. Across all domains, however, a common theme emerges: pre-experimental in silico validation significantly mitigates the risk of non-specific amplification.

Experimental Evidence: Quantifying the Impact of Non-Specific Amplification

Case Study 1: Gene Expression Analysis in Wnt-Pathway Research

A comprehensive survey of 93 validated qPCR assays for genes in the Wnt-pathway demonstrated that amplification of nonspecific products occurs frequently, independent of Cq or PCR efficiency values [1]. Through systematic titration experiments, researchers determined that the occurrence of both low and high melting temperature artifacts depended critically on three factors:

- Annealing temperature

- Primer concentration

- cDNA input

Table 2: Experimental Conditions Leading to Non-Specific Amplification in qPCR

| Experimental Parameter | Effect on Specificity | Optimal Range/Condition |

|---|---|---|

| Primer Concentration | High concentrations increase primer-dimer formation | 1 μM (as used in validated Wnt-pathway assays) [1] |

| cDNA Input | High template concentrations increase off-target amplification | Titration required; 5 ng total RNA equivalents used in validation [1] |

| Annealing Temperature | Lower temperatures promote non-specific binding | 60°C for Wnt-pathway primers [1] |

| Bench Time | Longer pipetting times significantly increase artifacts | Standardize and minimize preparation time [1] |

Experimental Protocol: The researchers designed primers according to specific criteria: length of 19-22 bp, annealing Tm of 60±1°C, ≤1°C difference between primer Tms, limited similarity to other genomic sequences (especially in the last 4 bases at the 3' end), and amplicon size between 70-150 bp [1]. Primer specificity was verified using melting curve analysis, gel electrophoresis, and sequencing of PCR products.
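The design criteria above can be expressed as a small checklist. The following is a minimal sketch, not the authors' pipeline: the `PrimerPair` class, the function name, and the example sequences and Tm values are all hypothetical, and the Tm values are assumed to come from whichever calculator you already use. The 3'-end similarity criterion is not covered here because it requires a database search.

```python
from dataclasses import dataclass


@dataclass
class PrimerPair:
    forward: str          # 5'->3' sequence
    reverse: str          # 5'->3' sequence
    forward_tm: float     # deg C, supplied by an external Tm calculator
    reverse_tm: float     # deg C
    amplicon_length: int  # bp


def check_design_criteria(pair: PrimerPair) -> list[str]:
    """Return the violated criteria; an empty list means every check passed."""
    problems = []
    for name, seq, tm in (
        ("forward", pair.forward, pair.forward_tm),
        ("reverse", pair.reverse, pair.reverse_tm),
    ):
        if not 19 <= len(seq) <= 22:
            problems.append(f"{name} primer length {len(seq)} is outside 19-22")
        if abs(tm - 60.0) > 1.0:
            problems.append(f"{name} primer Tm {tm} is outside 60 +/- 1 deg C")
    if abs(pair.forward_tm - pair.reverse_tm) > 1.0:
        problems.append("Tm difference between primers exceeds 1 deg C")
    if not 70 <= pair.amplicon_length <= 150:
        problems.append(f"amplicon length {pair.amplicon_length} is outside 70-150 bp")
    return problems


# Hypothetical example pair (illustrative sequences, not real primers).
good_pair = PrimerPair("ACGTACGTACGTACGTACGT", "TGCATGCATGCATGCATGCA", 60.4, 59.8, 120)
print(check_design_criteria(good_pair))
```

A pair that passes this checklist still needs the specificity checks (melting curve, gel, sequencing) described above.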

Case Study 2: 16S rRNA Sequencing in Human Microbiome Studies

Research published in Scientific Reports revealed a profoundly underreported artifact in microbial ecology: off-target amplification of human DNA in 16S rRNA gene sequencing [3]. This problem particularly affects samples with low microbial biomass and high host DNA content, such as human biopsies.

Experimental Findings:

- Breast tumor samples amplified with V3-V4 primers showed 77.2% of amplicon sequence variants (ASVs) aligning to the human genome [3]

- Normal breast tissue showed 34.1% human-derived ASVs [3]

- Oesophageal biopsies showed 55.6% human-derived ASVs [3]

- In contrast, primer sets targeting the V1-V2 region reduced human DNA alignment by 80% [3]

Methodological Details: Researchers compared two primer sets (V1-V2 and V3-V4) using the same breast tumor samples. Library preparation involved 25 amplification cycles with NEBNext High Fidelity 2X PCR Master Mix, followed by sequencing on Illumina MiSeq with 2×300 bp chemistry. Bioinformatic analysis involved quality control with FastQC, trimming with Trimmomatic, and resolution into ASVs with DADA2 [3].

Case Study 3: Molecular Diagnostics for Visceral Leishmaniasis

A 2025 study evaluating the specificity of primers and TaqMan MGB probes for Leishmania detection revealed unexpected amplification in all negative control samples, indicating critical specificity failures [4]. The researchers employed both in silico analysis and experimental validation to diagnose and address the problem.

Experimental Protocol:

- Sample Collection: 85 serum samples from domestic dogs and wild animals

- Primer Design: LEISH-1/LEISH-2 primer pair with TaqMan MGB probe

- qPCR Conditions: Standard thermal cycling with fluorescence detection

- Specificity Assessment: Comparison with ELISA results

In Silico Analysis Methodology:

- Multiple sequence alignment using MAFFT

- Secondary structure prediction with RNAfold

- Specificity assessment using Primer-BLAST

- Thermodynamic analysis of oligonucleotides

The investigation revealed that the observed false positives stemmed primarily from probe-related issues rather than primer problems. The researchers subsequently designed a new oligonucleotide set (GIO) that demonstrated superior performance in computational analyses, with improved structural stability and specificity [4].

BLAST Analysis for Primer Specificity Validation: Methods and Tools

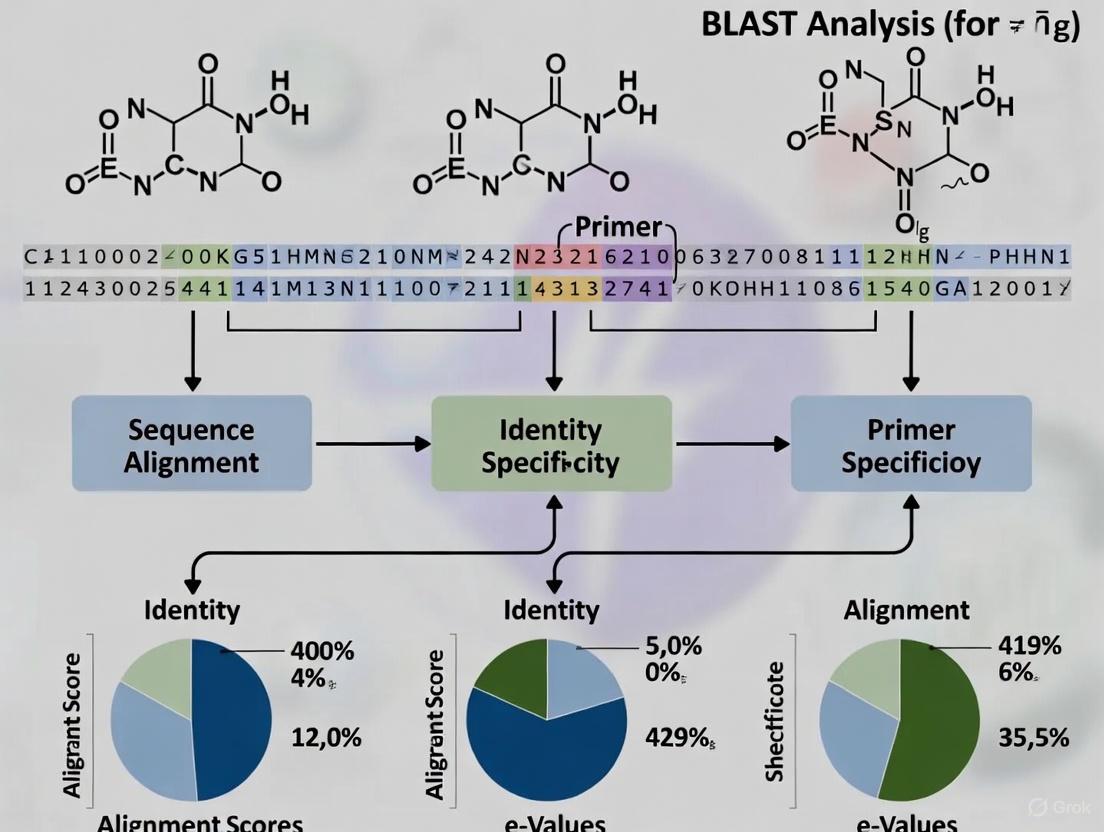

BLAST-based specificity checking represents a powerful approach for identifying potential non-specific amplification before conducting wet lab experiments. The following diagram illustrates the integrated workflow for BLAST-assisted primer design and validation:

Implementation of BLAST-Based Validation

NCBI Primer-BLAST represents the gold standard for in silico primer validation, integrating primer design tools with BLAST search capabilities to ensure target specificity [2]. The tool provides multiple critical parameters for controlling specificity assessment:

- Database Selection: Users can select from specialized databases including RefSeq mRNA, Refseq representative genomes, core_nt, and custom databases [2]

- Organism Restriction: Specificity checking can be limited to particular organisms, improving search speed and relevance [2]

- Exon-Junction Spanning: Primers can be designed to span exon-exon junctions, preventing amplification of genomic DNA [2]

- Mismatch Tolerance: Users can require a minimum number of mismatches to unintended targets, enhancing specificity [2]

AssayBLAST represents a newer tool specifically designed for analyzing large sets of primers and probes simultaneously [6]. Its optimized BLAST parameters include:

- dust = 'no' (disables low-complexity filtering)

- word_size = 7 (increases sensitivity for short sequences)

- gapopen = 10 and gapextend = 6 (prioritizes hits without gaps)

- reward = 5 and penalty = -4 (favors exact matches) [6]

In validation studies, AssayBLAST achieved 97.5% accuracy in predicting probe-target hybridization outcomes compared to experimental microarray data [6].

Essential Research Reagent Solutions for Preventing Non-Specific Amplification

The following toolkit summarizes key laboratory reagents and bioinformatic resources for mitigating non-specific amplification:

Table 3: Research Reagent Solutions for Preventing Non-Specific Amplification

| Reagent/Resource | Function | Specific Application |

|---|---|---|

| Primer-BLAST | In silico primer design with integrated specificity checking | General PCR, qPCR primer design [2] |

| AssayBLAST | Analysis of large primer/probe sets against custom databases | Multiparameter assays, microarray design [6] |

| Hot-Start Polymerases | Inhibit polymerase activity at room temperature | Reduce primer-dimer formation in early PCR stages [1] |

| Exon-Junction Spanning Primers | Distinguish between cDNA and genomic DNA targets | Gene expression studies (qPCR) [2] [1] |

| PrimerBank | Repository of experimentally validated primers | Gene expression detection/quantification (human/mouse) [7] [8] |

| Strand-Displacing Polymerases | Enable isothermal amplification methods | EXPAR, LAMP, HDA applications [5] |

Non-specific amplification presents a multifaceted challenge with significant implications for research integrity across molecular biology, diagnostics, and microbial ecology. The experimental evidence demonstrates that the impact can be quantitative (reduced sensitivity, wasted sequencing capacity) and qualitative (false positives, erroneous conclusions). The case studies highlight that solution strategies must be tailored to specific experimental contexts—whether through primer redesign, alternative primer sets, or modified reaction conditions.

A consistent finding across all domains is the critical importance of comprehensive in silico validation using BLAST-based tools before experimental implementation. Resources such as Primer-BLAST and AssayBLAST provide researchers with powerful, accessible methods to identify potential cross-reactivity and optimize assay specificity. When combined with appropriate laboratory practices—including careful primer design, reaction optimization, and validation—these computational approaches significantly enhance the reliability and reproducibility of molecular assays, ultimately strengthening the foundation of biomedical research and diagnostic development.

In polymerase chain reaction (PCR) experiments, primer specificity is the definitive characteristic that ensures the amplification of the intended target DNA sequence and nothing more. Non-specific amplification occurs when primers anneal to regions other than the designated target, leading to false positives, reduced reaction efficiency, and inaccurate results in downstream analyses [9]. The core challenge in primer design lies in predicting and avoiding these off-target interactions through careful in silico validation before any wet-lab experiment begins.

The two primary manifestations of specificity failures are off-target binding and primer-dimer formation. Off-target binding can occur when even a single primer matches multiple genomic locations, potentially leading to the amplification of unintended sequences, especially in the presence of recent gene duplicates [9]. Primer-dimers are artifacts that form when primers anneal to themselves or to each other through complementary sequences; they consume reaction resources and can outcompete target amplification [10]. This guide compares the predominant methods for validating primer specificity, automated suites like NCBI's Primer-BLAST and manual BLAST analysis, and provides a framework for selecting the optimal strategy for a given validation need.

Comparative Analysis of Specificity Validation Methods

The two principal approaches for confirming primer specificity are using integrated automated tools and conducting manual BLAST searches. The following table summarizes the core characteristics of each method.

Table 1: Comparison of Primer Specificity Validation Methods

| Feature | Automated Tool (e.g., NCBI Primer-BLAST) | Manual BLAST Analysis |

|---|---|---|

| Core Function | Automatically designs primers and/or checks their specificity against a selected database [2] [11] | Allows user-controlled alignment of primer sequences against a custom database to check for mis-priming [9] |

| Primary Use Case | Designing new primer pairs or checking pre-designed pairs for a specific template [11] | In-depth investigation of potential off-target hits, especially for problematic sequences or multiplexing applications [9] |

| Key Advantages | High convenience and speed; integrates primer design with specificity check; provides a list of specific primer pairs; configurable for mRNA/cDNA applications (e.g., exon junction spanning) [2] | Offers maximum control over search parameters and result interpretation; enables concatenated BLAST of both primers to find potential amplicons [9] |

| Critical Parameters | Source organism and database selection; "Primer must span an exon-exon junction" option [2] | -task blastn-short for sensitivity; -dust no -soft_masking false to search the entire genome; custom scoring (e.g., -penalty -3 -reward 1) [9] |

| Limitations | A "black box" process with less user control over the final primer selection algorithm | Steeper learning curve; requires user expertise to set parameters and correctly interpret all hits [9] |

Experimental Protocols for Specificity Assessment

Protocol 1: Specificity Check Using NCBI Primer-BLAST

This protocol is ideal for designing new primers or when a specific template sequence (e.g., an mRNA RefSeq accession) is available [11].

- Access the Tool: Navigate to the NCBI Primer-BLAST submission form.

- Input Template: In the "PCR Template" section, enter the target sequence as an accession number (e.g., an NCBI mRNA reference sequence) or in FASTA format. Using a RefSeq mRNA accession directs the tool to design primers specific to that splice variant [2] [11].

- Set Primer Parameters (Optional): If you have pre-designed primers, enter their sequences in the "Primer Parameters" section. Use the actual sequence (5'→3') for the forward primer (plus strand) and the reverse primer (minus strand) [2].

- Configure Specificity Parameters: This is a critical step for obtaining precise results.

- Organism: Enter the source organism name. This is strongly recommended to speed up the search and ensure relevance [2] [11].

- Database: Select the smallest database that contains your target (e.g., Refseq mRNA) for the most precise results. For broadest coverage, the "nr" database can be used [2] [11].

- Exon Junction Span (for mRNA/cDNA): To ensure amplification is specific to cDNA and not genomic DNA, select the "Primer must span an exon-exon junction" option. This forces at least one primer in a pair to span a junction [2].

- Execute and Analyze: Click "Get Primers." The tool returns a list of primer pairs and their specific PCR products, showing the intended target and any potential off-target amplicons based on the database search [2].

Protocol 2: Specificity Check Using Manual BLASTN

This protocol offers granular control and is suited for verifying pre-designed primers, especially when investigating weak off-target binding or for multiplex PCR assays [9].

- Sequence Preparation: Obtain the sequences (5'→3') for your forward and reverse primers.

- Database Selection: Curate a BLAST database specific to your experiment. For most cases, this means using the genome of your organism of interest rather than a massive, multi-species database. This increases search sensitivity [9].

- Configure BLASTN Parameters: The standard BLASTN settings are not sensitive enough for short primer sequences. Use the following specialized parameters [9]:

- -task blastn-short: Decreases the word size to 7, making the search sensitive enough to find short alignments with mismatches.

- -dust no -soft_masking false: Turns off filters for repetitive or low-complexity regions, ensuring you search the entire genome.

- Custom Scoring: Adjust the scoring system to penalize mismatches heavily, reflecting the strict requirements for primer annealing: -reward 1 -penalty -3 -gapopen 5 -gapextend 2.

- Run BLAST and Interpret Hits: Execute the search and analyze the results.

- Ideal Outcome: A single, high-quality hit per primer in the expected genomic location.

- Check Coordinates: If multiple hits are found, examine their genomic coordinates and orientation. For a primer pair to amplify an off-target product, both primers must bind to opposite strands of the same sequence with their 3' ends facing each other, within an amplifiable distance (typically under 1000 bp) [9].

- Concatenated BLAST: To check for potential amplicons from both primers, concatenate them with a few "NNN" nucleotides in between and BLAST this combined sequence. This can reveal genomic segments where both primers might bind to generate a spurious product [9].
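The coordinate and orientation check in the steps above can be sketched in code. This is a minimal illustration under stated assumptions, not part of the cited protocol: the `Hit` tuple and `candidate_amplicons` function are hypothetical names, hits are assumed to come from BLAST tabular output where a minus-strand hit has a subject start greater than its subject end, and each hit is assumed to cover the full primer so that the subject start corresponds to the primer's 5' end. The 1000 bp cutoff follows the distance mentioned above.

```python
from typing import NamedTuple


class Hit(NamedTuple):
    primer: str   # query ID of the primer
    subject: str  # chromosome or contig ID
    sstart: int   # subject coordinate aligned to the primer's 5' end
    send: int     # subject coordinate aligned to the primer's 3' end


def candidate_amplicons(hits, max_size=1000):
    """Pair plus-strand and minus-strand hits that face each other on the same subject."""
    plus = [h for h in hits if h.sstart <= h.send]
    minus = [h for h in hits if h.sstart > h.send]
    found = []
    for p in plus:
        for m in minus:
            if p.subject != m.subject:
                continue
            size = m.sstart - p.sstart + 1  # span between the two 5' ends
            # p.send < m.send keeps the two 3' ends from overlapping or crossing.
            if p.send < m.send and size <= max_size:
                found.append((p.subject, p.sstart, m.sstart, size, p.primer, m.primer))
    return found


# Hypothetical hits for illustration only.
hits = [
    Hit("fwd", "chr1", 100, 119),    # plus strand
    Hit("rev", "chr1", 400, 381),    # minus strand, 301 bp from fwd
    Hit("fwd", "chr2", 5000, 5019),  # plus strand
    Hit("rev", "chr2", 9000, 8981),  # minus strand, too far away
    Hit("rev", "chr3", 50, 69),      # plus strand only, no partner
]
print(candidate_amplicons(hits))
```

Because any plus-strand hit is paired with any minus-strand hit, the sketch also reports products that a single primer could generate by binding both strands.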

Workflow Visualization for Primer Specificity Analysis

The following diagram illustrates the logical decision pathway and methodologies for the two specificity validation protocols described above.

Successful primer design and validation rely on a suite of in silico and wet-lab resources. The following table details key solutions for this process.

Table 2: Essential Research Reagent Solutions for Primer Design and Validation

| Tool/Reagent | Function/Description | Key Application Notes |

|---|---|---|

| NCBI Primer-BLAST [2] [11] | An integrated tool for designing primers and checking their specificity against nucleotide databases in one step. | The primary tool for designing new target-specific primers. Crucial for designing primers that span exon-exon junctions for cDNA-specific amplification. |

| BLASTN Suite [9] | A standard algorithm for comparing nucleotide sequences. When configured with specific parameters, it is powerful for manual primer specificity checking. | Use -task blastn-short and other specialized parameters for sensitive detection of short, partial primer matches. Essential for in-depth off-target analysis. |

| IDT OligoAnalyzer Tool [10] | A free online tool for analyzing oligonucleotide properties, including melting temperature (Tm), hairpins, self-dimers, and heterodimers. | Screen primer designs for self-complementarity, keeping dimer ΔG weaker (more positive) than -9 kcal/mol. Check Tm to ensure forward and reverse primers are within 2°C of each other. |

| Thermostable DNA Polymerase | Enzyme that catalyzes the synthesis of new DNA strands during PCR. | Selection depends on amplicon length and fidelity requirements. Standard Taq polymerase may perform poorly on long amplicons (typically several kilobases or more) or on targets with high GC content. |

| DNase I (RNase-free) | Enzyme that degrades DNA. | Treat RNA samples before reverse transcription to remove contaminating genomic DNA, which is critical for accurate gene expression analysis via qPCR [10]. |

The comparative data and protocols presented demonstrate that both automated and manual BLAST strategies are essential for a robust primer specificity validation workflow. NCBI Primer-BLAST offers an unparalleled, streamlined solution for most standard applications, particularly when a clear template sequence is defined. Its integration of design and validation accelerates the research process. Conversely, manual BLAST analysis provides the necessary flexibility and depth for troubleshooting difficult primers, designing complex multiplex assays, or when working with non-standard genomes or metagenomic samples.

The choice between methods should be guided by the experimental context. For routine cloning or gene expression analysis (qPCR) of a single transcript variant, Primer-BLAST is typically sufficient and more efficient. However, for applications where the cost of failure is high, such as in diagnostic assay development, or when investigating gene families with high homology, the rigorous, investigator-led approach of manual BLAST is indispensable. Ultimately, defining and ensuring primer specificity is a critical, non-negotiable step in the scientific method of PCR-based research. By leveraging the appropriate tools and understanding their strengths and limitations, researchers can confidently generate reliable, reproducible, and meaningful experimental data.

In polymerase chain reaction (PCR) experiments, the success of DNA amplification hinges on the precise interaction between short synthetic oligonucleotides (primers) and the template DNA. These primer-template interactions are governed by a set of fundamental parameters that collectively determine the efficiency, specificity, and yield of the PCR reaction. Three core parameters—melting temperature (Tm), GC content, and secondary structures—form the foundation of effective primer design. Proper management of these parameters ensures that primers bind specifically to their target sequences while avoiding non-specific amplification and structural complications that can compromise experimental results. The accurate prediction and control of these interactions are particularly crucial in applications requiring high specificity, such as diagnostic assay development, species-specific detection, and multiplex PCR systems. This guide examines these core parameters in detail, providing a comparative analysis of their optimal ranges and experimental implications to assist researchers in designing robust PCR assays.

Core Parameter Analysis: Quantitative Comparison

The interplay between melting temperature, GC content, and secondary structures establishes the thermodynamic framework for primer-template interactions. The table below summarizes the optimal ranges and critical considerations for these core parameters based on established primer design guidelines.

Table 1: Core Parameters Governing Primer-Template Interactions

| Parameter | Optimal Range | Impact on PCR | Consequences of Deviation |

|---|---|---|---|

| Primer Length | 18-25 nucleotides [12] [13] [14] | Balances specificity with binding efficiency | Short primers: Reduced specificity; Long primers: Secondary structure formation |

| Melting Temperature (Tm) | 52-65°C [12] [13]; Ideal: 55-65°C [13] [14] | Determines annealing temperature | Too high: Low product yield; Too low: Non-specific products |

| GC Content | 40-60% [12] [13] [14] | Affects primer stability and Tm | Low: Unstable binding; High: Non-specific binding |

| GC Clamp | 1-2 G/C bases in last 5 bases at 3' end [12] [14] | Stabilizes primer binding at extension point | >3 G/C bases: Increases non-specific priming |

| 3' End Stability | Maximum ΔG of five bases from 3' end [12] | Affects false priming | Unstable 3' end (less negative ΔG): Reduces false priming |

| Tm Difference Between Primer Pair | ≤2-5°C [12] [14] | Ensures synchronous binding | >5°C difference: Can lead to no amplification |

Melting Temperature (Tm) Fundamentals

Melting temperature is the temperature at which 50% of the primer-template duplex dissociates into single strands, and it indicates duplex stability [12] [13]. The Tm directly determines the appropriate annealing temperature (Ta) for PCR cycling. The Rychlik formula is widely used to calculate the optimum annealing temperature:

Ta Opt = 0.3 × (Tm of primer) + 0.7 × (Tm of product) - 14.9 [12]

Here, Tm of primer is the melting temperature of the less stable primer-template pair, and Tm of product is the melting temperature of the PCR product.

This formula accounts for both primer stability and product characteristics, typically resulting in good PCR product yield with minimal false products. For practical applications, the annealing temperature is generally set 2-5°C below the lower Tm of the primer pair [14]. Modern Tm calculations typically employ the nearest neighbor thermodynamic method, which incorporates di-nucleotide pair enthalpy (ΔH) and entropy (ΔS) values with salt corrections, providing superior accuracy compared to simple GC-content based approximations [12].
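As a worked illustration, the two ways of choosing an annealing temperature described above can be written as short functions. This is a sketch only: the function names are hypothetical, and the Tm values in the example are made-up inputs rather than measurements.

```python
def rychlik_ta_opt(tm_primer: float, tm_product: float) -> float:
    """Optimum annealing temperature (deg C) from the Rychlik formula.

    tm_primer:  Tm of the less stable primer-template pair (deg C)
    tm_product: Tm of the PCR product (deg C)
    """
    return 0.3 * tm_primer + 0.7 * tm_product - 14.9


def rule_of_thumb_ta_range(tm_forward: float, tm_reverse: float) -> tuple[float, float]:
    """Annealing temperature range set 2-5 deg C below the lower primer Tm."""
    lower_tm = min(tm_forward, tm_reverse)
    return (lower_tm - 5.0, lower_tm - 2.0)


# Hypothetical inputs: primer Tm of 60 deg C and product Tm of 85 deg C.
print(f"{rychlik_ta_opt(60.0, 85.0):.1f}")  # prints 62.6
print(rule_of_thumb_ta_range(60.4, 59.8))
```

The two estimates can differ by several degrees, which is one reason a gradient PCR is still commonly used to settle on the final annealing temperature.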

GC Content and Distribution

GC content is the percentage of guanine and cytosine bases within the primer sequence. It strongly influences the stability of primer-template binding because each G-C base pair forms three hydrogen bonds, whereas each A-T pair forms only two [13] [15]. The distribution of GC bases throughout the primer is equally important: clusters of G/C bases or long runs of a single nucleotide should be avoided because they can promote mispriming [12] [14]. Specifically, more than three G or C bases within the last five bases at the 3' end should be avoided, as this creates overly strong binding that increases non-specific amplification [12] [13]. A balanced distribution of GC bases ensures stable yet specific binding across the entire primer-template interface.
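The GC-related checks described above are simple enough to compute directly. The following sketch uses hypothetical function names and an illustrative sequence; it reports GC percentage, the number of G/C bases in the last five bases at the 3' end, and the longest run of a single nucleotide.

```python
from itertools import groupby


def gc_content(primer: str) -> float:
    """Percentage of G and C bases in the primer."""
    seq = primer.upper()
    return 100.0 * sum(base in "GC" for base in seq) / len(seq)


def gc_in_3prime_window(primer: str, window: int = 5) -> int:
    """Number of G/C bases within the last `window` bases at the 3' end."""
    return sum(base in "GC" for base in primer.upper()[-window:])


def longest_single_base_run(primer: str) -> int:
    """Length of the longest stretch of one repeated nucleotide."""
    return max(len(list(group)) for _, group in groupby(primer.upper()))


# Illustrative sequence only, not a real primer.
primer = "ATGCATGCATGCATGCATGC"
print(gc_content(primer))               # 50.0
print(gc_in_3prime_window(primer))      # 3
print(longest_single_base_run(primer))  # 1
```

Under the guidelines above, this example sits inside the 40-60% GC range and does not exceed three G/C bases in its 3' window.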

Secondary Structure Considerations

Secondary structures formed by intramolecular or intermolecular interactions can significantly impair primer functionality by reducing primer availability for target binding.

Table 2: Secondary Structure Parameters and Tolerances

| Structure Type | Definition | Stability Tolerance (ΔG) | Impact on PCR |

|---|---|---|---|

| Hairpins | Intramolecular folding within a single primer [12] [15] | -2 kcal/mol (3' end); -3 kcal/mol (internal) [12] | Reduces primer availability; 3' end hairpins most detrimental |

| Self-Dimers | Intermolecular interactions between two identical primers [12] [14] | -5 kcal/mol (3' end); -6 kcal/mol (internal) [12] | Consumes primers; reduces product yield |

| Cross-Dimers | Intermolecular interactions between forward and reverse primers [12] [14] | -5 kcal/mol (3' end); -6 kcal/mol (internal) [12] | Creates primer-dimer artifacts; competes with target amplification |

The stability of these secondary structures is quantified by Gibbs free energy (ΔG), where more negative values indicate more stable, and therefore more problematic, structures [12]. The relationship is defined by ΔG = ΔH - TΔS, where ΔH is the enthalpy change, T is the absolute temperature, and ΔS is the entropy change. Screening tools such as OligoAnalyzer can calculate these ΔG values to help researchers eliminate primers with problematic secondary structures [14].
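To make the ΔG relationship concrete, the sketch below evaluates ΔG = ΔH - TΔS and compares the result with the tolerances listed in Table 2. The function names, the 25°C default temperature, and the ΔH and ΔS inputs are assumptions chosen for illustration; real values would come from a nearest-neighbor thermodynamic calculator.

```python
# Tolerances in kcal/mol, taken from Table 2 above.
TOLERANCE = {
    ("hairpin", "3prime"): -2.0,
    ("hairpin", "internal"): -3.0,
    ("self_dimer", "3prime"): -5.0,
    ("self_dimer", "internal"): -6.0,
    ("cross_dimer", "3prime"): -5.0,
    ("cross_dimer", "internal"): -6.0,
}


def gibbs_free_energy(delta_h_kcal: float, delta_s_cal_per_k: float,
                      temp_c: float = 25.0) -> float:
    """Return delta G in kcal/mol from delta H (kcal/mol) and delta S (cal/(mol*K))."""
    temp_k = temp_c + 273.15  # convert to absolute temperature
    return delta_h_kcal - temp_k * delta_s_cal_per_k / 1000.0


def exceeds_tolerance(delta_g: float, structure: str, position: str) -> bool:
    """True if the structure is more stable (more negative) than the tolerance."""
    return delta_g < TOLERANCE[(structure, position)]


# Hypothetical thermodynamic values for illustration.
dg = gibbs_free_energy(-30.0, -85.0)
print(round(dg, 2))                                  # -4.66
print(exceeds_tolerance(dg, "hairpin", "3prime"))     # True
print(exceeds_tolerance(dg, "self_dimer", "3prime"))  # False
```

The same ΔG value can be acceptable for one structure type and problematic for another, which is why the tolerance depends on both the structure and its position in the primer.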

Experimental Validation and BLAST Analysis Protocols

Specificity Validation Using BLAST Analysis

Ensuring primer specificity is critical for accurate PCR results, particularly when distinguishing between closely related species or genetic variants. The National Center for Biotechnology Information's Primer-BLAST tool represents the gold standard for validating primer specificity, integrating primer design with comprehensive database searching [2] [11]. The following workflow illustrates the specificity validation process:

For pre-designed primers, a concatenation approach can enhance specificity validation. By joining forward and reverse primers with 5-10 "N" nucleotides and searching against an appropriate database, researchers can simultaneously verify both primers binding to the same genomic location with correct orientation and spacing [16]. This method efficiently confirms that the primer pair will generate a single amplicon of the expected size from the intended target.
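Building the concatenated query is straightforward; the sketch below writes it as a FASTA record. The function name, record name, and example sequences are hypothetical, and the primers are joined exactly as given (5'→3'), since the description above does not specify any further transformation.

```python
def concatenated_query(forward: str, reverse: str, spacer_length: int = 5,
                       name: str = "primer_pair") -> str:
    """Join two primers with a run of N bases and return a FASTA record."""
    if not 5 <= spacer_length <= 10:
        raise ValueError("spacer_length should be between 5 and 10 N bases")
    sequence = forward.upper() + "N" * spacer_length + reverse.upper()
    return f">{name}\n{sequence}\n"


# Illustrative (shortened) sequences, not real primers.
print(concatenated_query("ACGT", "TTGA"), end="")
```

The resulting record can be pasted into a BLAST web form or saved to a file and used as the query for a command-line search.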

Specialized BLAST Parameters for Primer Analysis

Standard BLAST parameters are optimized for longer sequences and may lack sensitivity for primer-length queries. The following specialized BLASTN parameters significantly improve detection of potential off-target binding sites for primers [9]:

- Task: blastn-short (decreases word size to 7 for better sensitivity with short sequences)

- Filtering: -dust no -soft_masking false (avoids ignoring repetitive regions)

- Scoring: -penalty -3 -reward 1 (increases mismatch penalty for stricter alignment)

- Gap penalties: -gapopen 5 -gapextend 2 (strongly penalizes gaps in primer alignment)

These parameters enhance the detection of partial matches that could lead to undesirable mis-priming, even with sequences that have only limited similarity [9]. The search should be conducted against the most specific database possible, typically the genome of the organism being studied, to improve sensitivity and reduce false positives [9].
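The parameters listed above can be assembled into a single BLAST+ `blastn` command line. The sketch below only builds and prints the command; it does not run it. The file and database names are placeholders, and the tabular output format (`-outfmt 6`) is an added assumption chosen because it is easy to parse, not something the cited protocol prescribes.

```python
import shlex


def blastn_short_command(query_fasta: str, database: str, out_path: str) -> list[str]:
    """Build a blastn argument list tuned for primer-length queries."""
    return [
        "blastn",
        "-task", "blastn-short",    # word size 7 for short queries
        "-query", query_fasta,
        "-db", database,
        "-dust", "no",              # keep low-complexity regions searchable
        "-soft_masking", "false",
        "-reward", "1",
        "-penalty", "-3",           # penalize mismatches
        "-gapopen", "5",
        "-gapextend", "2",          # penalize gaps
        "-outfmt", "6",             # assumption: tabular output for parsing
        "-out", out_path,
    ]


# Placeholder file and database names.
command = blastn_short_command("primers.fasta", "my_genome_db", "primer_hits.tsv")
print(shlex.join(command))
```

Keeping the command as a list makes it easy to pass to `subprocess.run` later if BLAST+ is installed and a local database has been built.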

Experimental Verification of Primer Performance

After in silico validation, wet-lab experimentation provides the ultimate verification of primer functionality. The following protocol outlines a systematic approach for experimental validation:

- Pilot PCR Optimization: Conduct gradient PCR to determine optimal annealing temperature, typically 2-5°C below the calculated Tm of the primers [14].

- Specificity Assessment: Run PCR products on agarose gels to verify a single amplicon of expected size. Sequence any secondary bands to identify sources of non-specific amplification.

- Efficiency Calculation: For qPCR applications, generate standard curves with serial dilutions of template. Primers with 90-110% amplification efficiency are considered optimal.

- Cross-Reactivity Testing: Test primers against related non-target species or isoforms to confirm specificity, particularly for species-specific assays.
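The efficiency calculation in the protocol above is commonly derived from the slope of the standard curve (Cq plotted against log10 of template quantity) using E = 10^(-1/slope) - 1. The sketch below applies that standard relationship; the function names and the dilution series are hypothetical example data, not results from any cited study.

```python
import math


def standard_curve_slope(quantities, cq_values):
    """Least-squares slope of Cq versus log10(template quantity)."""
    xs = [math.log10(q) for q in quantities]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(cq_values) / len(cq_values)
    numerator = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, cq_values))
    denominator = sum((x - mean_x) ** 2 for x in xs)
    return numerator / denominator


def amplification_efficiency_percent(slope: float) -> float:
    """Efficiency in percent; a slope near -3.32 corresponds to about 100%."""
    return (10 ** (-1.0 / slope) - 1.0) * 100.0


# Hypothetical 10-fold dilution series (template copies) and measured Cq values.
quantities = [1e5, 1e4, 1e3, 1e2, 1e1]
cq_values = [15.0, 18.32, 21.64, 24.96, 28.28]

slope = standard_curve_slope(quantities, cq_values)
efficiency = amplification_efficiency_percent(slope)
print(f"slope={slope:.2f}, efficiency={efficiency:.1f}%")
print("within 90-110%:", 90.0 <= efficiency <= 110.0)
```

In practice, the linearity of the standard curve should be inspected alongside the efficiency, since a poor fit makes the slope unreliable.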

Recent advances in high-throughput primer evaluation, such as the piecewise logistic model implemented in PrimerScore2, enable scoring systems that predict primer performance based on multiple parameters [17]. This approach was validated in a study where 17 out of 19 (89.5%) low-scoring primer pairs demonstrated poor amplification depth, while 18 out of 19 (94.7%) high-scoring pairs showed high depth in NGS libraries [17].

Research Reagent Solutions for Primer Design and Validation

Table 3: Essential Tools and Reagents for Primer Design and Validation

| Tool/Reagent Category | Specific Examples | Primary Function | Application Context |

|---|---|---|---|

| Primer Design Software | Primer-BLAST [2] [11], Primer3 [17], Primer Premier [12] | Automated primer design following established parameters | Standard PCR, qPCR, and specialized PCR applications |

| Specificity Validation Tools | NCBI Primer-BLAST [11], SequenceServer [9], PrimeSpecPCR [18] | Database searching for off-target binding sites | Ensuring species-specific amplification; avoiding cross-homology |

| Secondary Structure Analysis | OligoAnalyzer [14], Primer3 ntthal algorithm [17] | Prediction of hairpins, self-dimers, and cross-dimers | Eliminating primers with problematic intermolecular interactions |

| Thermodynamic Calculation Tools | Primer3 oligotm [17], Nearest-neighbor calculator [12] | Accurate Tm prediction using di-nucleotide values | Determining optimal annealing temperatures |

| Multiplex Primer Design | PrimerPlex [12], PrimerScore2 [17] | Design of multiple primer pairs for simultaneous amplification | SNP genotyping, multiplex PCR panels |

The three core parameters of melting temperature, GC content, and secondary structures collectively govern the fundamental interactions between primers and template DNA in PCR experiments. Through systematic management of these parameters—maintaining Tm between 52-65°C, GC content between 40-60%, and minimizing stable secondary structures—researchers can significantly improve PCR specificity and efficiency. The integration of computational design tools with comprehensive BLAST analysis provides a robust framework for developing primers that meet exacting experimental requirements, particularly for applications demanding high specificity such as diagnostic assays and species identification. As PCR technologies continue to evolve, the precise control of these core interactions remains essential for generating reliable, reproducible results across diverse molecular biology applications.
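
As a rough illustration of screening a candidate against these ranges, the sketch below computes GC content and a melting temperature estimate. Note that it uses the simple Wallace rule (2°C per A/T, 4°C per G/C) as a stand-in, not the nearest-neighbor method listed in Table 3, so the Tm value is only a coarse approximation for short oligos. The function names and the example primer are hypothetical.

```python
def gc_content(seq: str) -> float:
    """Percentage of G and C bases in the sequence."""
    seq = seq.upper()
    return 100.0 * (seq.count("G") + seq.count("C")) / len(seq)


def wallace_tm(seq: str) -> float:
    """Wallace-rule Tm estimate: 2*(A+T) + 4*(G+C). Coarse approximation."""
    seq = seq.upper()
    at = seq.count("A") + seq.count("T")
    gc = seq.count("G") + seq.count("C")
    return 2.0 * at + 4.0 * gc


def within_ranges(seq, tm_range=(52.0, 65.0), gc_range=(40.0, 60.0)):
    """True if both the Tm estimate and GC content fall inside the ranges."""
    tm, gc = wallace_tm(seq), gc_content(seq)
    return tm_range[0] <= tm <= tm_range[1] and gc_range[0] <= gc <= gc_range[1]


primer = "AGCTGACCTGAAGCTGATCA"  # hypothetical 20-mer
print(gc_content(primer), wallace_tm(primer), within_ranges(primer))
```

For design decisions, a nearest-neighbor calculator with the actual salt and primer concentrations should replace the Wallace estimate.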

How BLAST Analysis Predicts and Prevents Experimental Failure

In molecular biology research, experimental failure from non-specific primer binding is a major bottleneck, leading to inconclusive results, wasted reagents, and significant project delays. Ensuring primer specificity is paramount for the accuracy of techniques like PCR. This is where Basic Local Alignment Search Tool (BLAST) analysis becomes an indispensable predictive tool. By computationally screening primers against genomic databases before laboratory experiments, researchers can identify potential off-target binding sites and optimize primer design to prevent failure.

This article frames BLAST analysis within the context of primer specificity validation, comparing its performance against alternative bioinformatics tools and conventional methods without in-silico validation. We objectively evaluate these methodologies based on experimental data, supporting a broader thesis on the critical role of pre-experimental validation in robust scientific research.

The Critical Role of Primer Specificity

The polymerase chain reaction (PCR) is a foundational technique in molecular biology, diagnostics, and drug development. Its success critically depends on the specific binding of designed primers to their intended target DNA sequences. Non-specific amplification occurs when primers bind to non-target regions, leading to false-positive results, erroneous data interpretation, and compromised diagnostic conclusions [19].

The challenges in primer design are compounded by the genomic variability among viral strains and the need for primers that target regions conserved across multiple variants. Conventional primer design methods often rely on manual curation, making them time-consuming and susceptible to researcher bias. Factors such as optimal primer length, GC content, melting temperature, and the potential formation of primer dimers or hairpins further complicate the design process and threaten experimental reliability [19]. Automated, bioinformatics-driven approaches that integrate specificity validation are thus essential for modern molecular biology.

Methodology: Experimental Protocols for Specificity Validation

In-silico BLAST Analysis Protocol

A standard protocol for validating primer specificity using BLAST involves a precise sequence of steps to ensure comprehensive analysis. The following workflow details this procedure, from sequence preparation to final specificity confirmation.

Workflow Description: The process begins with the automated retrieval of relevant plant virus genomic sequences from the NCBI database using tools like Biopython. These sequences undergo Multiple Sequence Alignment (MSA) using algorithms like Clustal Omega to identify conserved regions. A consensus sequence is generated, representing the shared genetic information, which serves as the template for primer design [19].
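
The consensus step can be illustrated with a small, dependency-free sketch. It assumes the sequences have already been aligned (for example by Clustal Omega) and applies a plain majority rule per column; the tie handling, the gap handling, and the function name are assumptions for illustration, not the method of the cited pipeline.

```python
from collections import Counter


def consensus(aligned, gap="-", ambiguous="N"):
    """Majority-rule consensus of equal-length aligned sequences.

    Columns where a gap is the most common character are dropped;
    ties between the two most common characters are written as `ambiguous`.
    """
    if len({len(s) for s in aligned}) != 1:
        raise ValueError("aligned sequences must have equal length")
    out = []
    for column in zip(*aligned):
        ranked = Counter(b.upper() for b in column).most_common(2)
        top, n = ranked[0]
        if len(ranked) > 1 and ranked[1][1] == n:
            out.append(ambiguous)   # no clear majority in this column
        elif top != gap:
            out.append(top)
    return "".join(out)


alignment = ["ATGCC-TA", "ATGCCGTA", "ATGTC-TA"]  # toy alignment
print(consensus(alignment))
```

Primers placed in stretches of the consensus without ambiguous positions are more likely to match all aligned variants.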

Primer design parameters are optimized, after which the critical step of Primer-BLAST analysis is performed. This specialized BLAST tool checks the proposed primers against reference databases to predict potential cross-hybridization with non-target sequences. If off-target binding is predicted, primer parameters are optimized, and the BLAST analysis is repeated. Primers passing this in-silico validation proceed to wet-lab experimental testing, resulting in primers with confirmed high specificity [19].

Conventional Non-Computational Methods

Traditional primer design often relies on manual design using limited sequence information and basic parameters like melting temperature and length, without systematic specificity verification. Gel electrophoresis is the primary method for detecting non-specific amplification, but this occurs post-experiment, after resources have already been consumed. This approach is inherently reactive rather than predictive, making it less efficient and more prone to failure compared to BLAST-based methods [19].

Comparative Performance Analysis

Quantitative Comparison of Validation Methods

The table below summarizes the objective comparison between BLAST-based validation and alternative approaches, based on experimental data and tool capabilities.

Table 1: Performance comparison of primer specificity validation methods

| Method | Specificity Validation Approach | Prevention of Experimental Failure | Time Required | Wet-Lab Validation Success Rate | Key Limitations |

|---|---|---|---|---|---|

| BLAST Analysis | Computational prediction of off-target binding across genomic databases | Predictive (pre-experiment) | Minutes to hours | High (Validated by Primer-BLAST) [19] | Limited by database completeness; does not account for complex secondary structures |

| Alternative Bioinformatics Tools (e.g., AutoPVPrimer) | Integrated random forest classifier & visual dimer analysis [19] | Predictive (pre-experiment) | Minutes (automated) | High (Reported for Tomato Mosaic Virus) [19] | Requires computational expertise; modular pipeline setup |

| Conventional (No In-Silico Validation) | Post-experimental gel analysis | Reactive (post-experiment) | N/A (failure detected after execution) | Variable & Unreliable [19] | High rate of false positives/negatives; resource-intensive troubleshooting |

Case Study: AutoPVPrimer Pipeline for Plant Viruses

The AutoPVPrimer pipeline exemplifies the integration of BLAST analysis into a comprehensive, AI-enhanced workflow for plant virus primer design. In one application targeting the Tomato Mosaic Virus (ToMV), the pipeline successfully designed specific primers by:

- Automated Sequence Retrieval: Using Biopython to gather genomic sequences from NCBI [19].

- Consensus Generation: Creating a consensus sequence from aligned genomes to target conserved regions [19].

- Machine Learning-Optimized Design: Employing a random forest classifier to optimize primer design parameters [19].

- Specificity Validation: Using Primer-BLAST to confirm primer specificity against non-target sequences [19].

This case demonstrates that a methodology incorporating BLAST analysis significantly increases the probability of experimental success by preemptively identifying and eliminating primers with potential for cross-reactivity.

The Scientist's Toolkit: Essential Research Reagents & Solutions

Successful primer design and validation rely on a suite of computational tools and reagents. The following table details these essential components.

Table 2: Essential research reagents and solutions for primer design and validation

| Tool/Reagent | Function | Role in Preventing Experimental Failure |

|---|---|---|

| NCBI Database | Repository of genomic sequences | Provides comprehensive data for target identification and off-target prediction |

| BLAST Suite | Computational tool for sequence similarity search | Identifies potential cross-hybridization sites before experiments |

| Biopython | Python library for bioinformatics | Automates sequence retrieval and analysis tasks |

| Clustal Omega | Multiple sequence alignment tool | Identifies conserved regions for robust primer design across variants |

| primer3-py | Python binding for Primer3 | Automates core primer design based on thermodynamic parameters |

| PCR Reagents | Enzymes, nucleotides, buffers | High-quality reagents ensure efficient amplification after specific primers are designed |

| AutoPVPrimer | AI-enhanced primer design pipeline | Integrates machine learning and BLAST validation for optimized design [19] |

BLAST analysis is a critical step in modern primer design: it predicts and helps prevent experimental failure by identifying non-specific binding risks in silico. Whether used on its own or integrated into advanced pipelines like AutoPVPrimer, BLAST-based validation outperforms conventional methods that lack computational validation in ensuring primer specificity, saving valuable time and resources.

The integration of BLAST analysis into the experimental design workflow, particularly within the context of primer specificity validation research, represents a fundamental shift from reactive troubleshooting to predictive experimental design. This approach significantly enhances the reliability, reproducibility, and efficiency of molecular biology research, directly contributing to more robust scientific outcomes in diagnostics and drug development.

A Step-by-Step Protocol for BLAST-Based Primer Validation

The validation of primer specificity is a critical step in molecular biology research and drug development. For standard primers, conventional BLASTN searches with default parameters are typically sufficient. However, when the query involves short oligonucleotides (e.g., antisense oligonucleotides or ASOs), these default settings often fail to identify significant matches, risking false negatives in specificity analysis. This guide details the essential parameter adjustments required to optimize BLASTN for short oligos, compares its performance against alternative tools, and presents supporting experimental data, framing the discussion within the broader context of primer specificity validation research.

Essential BLASTN Parameters for Short Oligo Analysis

When configuring BLASTN for short queries, specific parameter adjustments are non-negotiable to ensure sensitivity. The table below summarizes the critical parameters and their adjusted values for short oligo searches compared to standard BLASTN.

Table 1: Critical BLASTN Parameter Adjustments for Short Oligonucleotides

| Parameter | Standard blastn Default | Recommended for Short Oligos | Functional Impact |

|---|---|---|---|

| -task | megablast or blastn | blastn-short | Optimizes the entire algorithm for query sequences typically shorter than 30 nucleotides. [20] [21] |

| -word_size | 11 (for blastn task) | 7 | Reduces the length of the initial exact match seed, increasing search sensitivity for short sequences. [20] [21] |

| -dust | yes (or 20 64 1) | no | Disables masking of low-complexity regions, which is crucial as short oligos can be mistaken for such repeats. [20] [21] |

| -evalue | 10 | 1000 - 10000 | Significantly relaxes the E-value threshold to account for the high probability of finding short matches by random chance in large databases. [21] [22] |

| -reward | 2 | 1 | Decreases the reward for a nucleotide match, refining the scoring system for shorter alignment lengths. [20] |

| -penalty | -3 | -3 (typically unchanged) | The penalty for a mismatch remains stringent to maintain specificity. [20] |

The -task blastn-short option is the cornerstone of this configuration. It automatically sets -word_size to 7 and adjusts the scoring parameters for short alignments, which is essential for queries as short as 10-20 bases. [21] Without this task, BLAST may return no hits for short sequences even with a permissive E-value. [21] Disabling the dust filter with -dust no is equally critical, as the default low-complexity masking can incorrectly filter out valid short oligonucleotide sequences. [21]
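
The adjusted parameters from Table 1 can be assembled into a single BLAST+ command line. The sketch below only builds and prints the argument list; the file names, the database name, and the function name are placeholders (assumptions), and actually running the command requires a local BLAST+ installation and a database built with makeblastdb.

```python
import shlex


def blastn_short_command(query_fasta, database, out_path, evalue=1000):
    """Build a blastn argument list using the short-oligo settings from Table 1."""
    return [
        "blastn",
        "-task", "blastn-short",
        "-word_size", "7",
        "-dust", "no",
        "-evalue", str(evalue),
        "-reward", "1",
        "-penalty", "-3",
        "-query", query_fasta,
        "-db", database,
        "-outfmt", "6",          # tabular output, convenient for scripting
        "-out", out_path,
    ]


# Placeholder paths (assumptions): replace with real files before running.
cmd = blastn_short_command("oligos.fasta", "genome_db", "hits.tsv")
print(shlex.join(cmd))

# To execute:
# import subprocess
# subprocess.run(cmd, check=True)
```

Passing the arguments as a list rather than one shell string avoids quoting problems when paths contain spaces.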

Performance Comparison with Alternative Tools

While a customized BLASTN is highly effective, researchers have several tools at their disposal for specificity validation. The following table provides a high-level comparison.

Table 2: Performance and Application Comparison of Specificity Checking Tools

| Tool / Method | Primary Use Case | Key Strength | Key Limitation | Typical Workflow |

|---|---|---|---|---|

| BLASTN (optimized) | Validating pre-designed short oligos (e.g., ASOs). | High flexibility and control over search parameters; can find targets with significant mismatches. [22] | Requires manual parameter tuning; local alignment may not show full primer-target alignment. [22] | Single-step specificity check of a known oligo sequence. |

| Primer-BLAST | De novo design of target-specific primers. | Integrated pipeline: designs primers and checks specificity in one step, using a global alignment for accuracy. [2] [22] | Less suitable for validating pre-designed, non-standard oligos like gapmers. | End-to-end primer design without an existing candidate sequence. |

| In-Silico PCR | Predicting amplicons from a primer pair. | Fast, index-based amplification prediction. | Limited sensitivity for detecting targets with mismatches; requires pre-processed databases. [22] | Rapidly checking the theoretical PCR product of a primer pair. |

Specialized tools like ASOG (AntiSense Oligonucleotide Generator) demonstrate the application of these principles in a dedicated pipeline. ASOG uses BLASTn to systematically detect off-target effects, a critical step in ASO development that relies on properly configured nucleotide searches. [23]

Experimental Protocols and Data

Robust experimental design is essential for generating reliable sequencing data that serves as the foundation for specificity validation.

High-Performance Protocol for Ultra-Short DNA Sequencing

A recent study developed an optimized protocol for sequencing ultra-short DNA fragments (as short as 40 bp) using Oxford Nanopore Technology (ONT), which is crucial for generating reference data for oligo validation. [24] [25] Key methodological adjustments from the standard ONT protocol include:

- Increased DNA Input: Using 250 fmol of dsDNA duplex for library preparation to compensate for lower ligation efficiency. [24] [25]

- Extended Ligation Time: Adapter ligation was performed for 20 minutes using the Quick T4 DNA Ligation Module to improve adapter attachment to short fragments. [24] [25]

- Modified Bead-Based Purification: An increased AMPure XP beads-to-DNA ratio of 1.8x was used to enhance the recovery of short fragments. [24] [25]

This high-performance protocol was benchmarked against the standard ONT protocol, achieving over ten times the sequencing output for 40 bp fragments, thereby providing high-quality data for downstream bioinformatic analysis. [24] [25]
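
To put the 250 fmol input in practical terms, the amount can be converted to mass. The sketch below assumes the common approximation of about 660 g/mol per base pair of dsDNA; exact values depend on sequence and end modifications, and the function name is hypothetical.

```python
AVG_BP_MASS = 660.0  # g/mol per base pair, a common dsDNA approximation


def dsdna_fmol_to_ng(fmol, length_bp):
    """Convert an amount of dsDNA from femtomoles to nanograms."""
    # fmol * (g/mol) gives femtograms; 1 ng = 1e6 fg
    return fmol * length_bp * AVG_BP_MASS / 1e6


mass_ng = dsdna_fmol_to_ng(250, 40)
print(f"250 fmol of a 40 bp duplex is about {mass_ng:.1f} ng")
```

Under this approximation, 250 fmol of a 40 bp duplex corresponds to roughly 6.6 ng, which illustrates how little mass a molar input represents for such short fragments.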

Bioinformatic Analysis Workflow

The following diagram illustrates the typical bioinformatic processing and analysis workflow used to generate and validate short oligonucleotide sequences, from raw data to BLAST analysis.

Diagram 1: Bioinformatic Analysis Workflow

In the final BLAST analysis step, the representative sequences from clustering are used as queries. The parameters -task blastn-short and -dust no are applied to ensure sensitive detection of potential off-target binding sites across the genome. [25] [21]

The Scientist's Toolkit

The following reagents and software are essential for conducting experiments in ultra-short DNA sequencing and analysis.

Table 3: Essential Research Reagents and Software Solutions

| Item Name | Function / Application | Example Product / Source |

|---|---|---|

| Ligation Sequencing Kit | Prepares DNA libraries for nanopore sequencing by end-repairing, adenylating, and ligating adapters. | Oxford Nanopore Ligation Sequencing Kit (e.g., SQK-LSK114) [24] [25] |

| AMPure XP Beads | Solid-phase reversible immobilization (SPRI) beads for size-selective purification and cleanup of DNA libraries. | Beckman Coulter [24] [25] |

| Quick T4 DNA Ligation Module | Enzyme mix for efficient ligation of sequencing adapters to DNA fragments. | New England Biolabs (NEB) [24] [25] |

| BLAST Suite | The standard software package for performing local sequence alignment searches. | NCBI BLAST+ Command Line Applications [20] |

| Primer-BLAST | A web-based tool that integrates primer design with specificity checking using BLAST. | NCBI [2] [22] |

| Dorado Basecaller | Converts raw electrical signal from nanopore sequencers into nucleotide sequences (FASTQ). | Oxford Nanopore Technologies [25] |

Configuring BLASTN with -task blastn-short and -dust no is a fundamental requirement for the accurate specificity validation of short oligonucleotides. This optimized setup, when used within a robust experimental and bioinformatic workflow, provides researchers and drug development professionals with a reliable method to detect off-target effects, thereby de-risking experiments and therapeutic programs. While integrated tools like Primer-BLAST are excellent for standard primer design, a finely tuned BLASTN search remains the most flexible and powerful approach for analyzing pre-designed short oligos, such as ASOs.

In polymerase chain reaction (PCR) experiments, primer specificity is paramount for accurate and reliable results. A critical, yet often overlooked, factor in achieving this specificity is the selection of an appropriate nucleotide database for in silico validation. The database serves as the reference universe against which potential primer binding sites are compared; an incomplete or poorly chosen database can lead to undetected off-target binding and failed experiments. This guide objectively compares the performance and applications of available database options—from comprehensive public collections to focused custom genomes—providing researchers with the data needed to make informed decisions for their primer validation workflows.

Database Options for Primer Specificity Checking

The choice of database directly influences the sensitivity and specificity of your primer validation. The table below summarizes the key database options available in tools like Primer-BLAST and their optimal use cases.

Table 1: Comparison of Databases for Primer Specificity Validation

| Database Name | Description & Content | Best Use Cases | Key Considerations |

|---|---|---|---|

| RefSeq mRNA [2] [22] | Curated mRNA sequences from NCBI's Reference Sequence collection. | - Reverse Transcription PCR (RT-PCR)- Gene expression studies (qPCR) | High quality and non-redundant, but limited to annotated mRNA sequences. |

| RefSeq Representative Genomes [2] | A non-redundant set of the best-quality reference and representative genomes across taxa. | - Cross-species specificity checks- Designing primers for a broad group of organisms | Reduces computational time and complexity by minimizing redundancy. |

| core_nt [2] | The standard nucleotide collection (nr/nt) excluding eukaryotic chromosomal sequences from genome assemblies. | - General purpose specificity checking when a full genomic context is not needed | Faster search speed than the complete nt database [2]. |

| Custom Database [2] | User-defined sequences (FASTA), accession numbers, or genome assembly accessions. | - Metagenomic studies- Pathogen detection in a host background- Validating against proprietary or novel sequences | Offers maximum flexibility and relevance but requires user to provide high-quality sequences [2]. |

| Genomes for selected eukaryotic organisms [2] | RefSeq representative genomes from primary chromosome assemblies only, without alternate loci. | - Eukaryotic genomic DNA PCR- Avoiding false positives from highly similar paralogous genes | Avoids sequence redundancy introduced by including alternate loci, simplifying output [2]. |

Experimental Protocols for Database Selection

The following section details methodologies from published studies that have rigorously tested database performance in primer validation and related genomic analyses.

Protocol 1: Primer Specificity Workflow with Primer-BLAST

Primer-BLAST represents the gold standard for integrating primer design with specificity checking, using a combined BLAST and global alignment algorithm [22].

- Input Template: Provide the target sequence as a FASTA sequence, RefSeq accession, or NCBI gi.

- Database Selection: Choose the appropriate specificity database from the options in Table 1. For organism-specific amplification, it is strongly recommended to enter the organism name to limit the search, which increases speed and relevance [2].

- Algorithm Parameters: The tool uses sensitive BLAST parameters to detect targets with up to 35% mismatches to the primer sequence. Users can adjust the maximum E-value (default is 30,000 for primer-only input) and the number of mismatches allowed in the 3' end to fine-tune stringency [22].

- Analysis: The algorithm performs a MegaBLAST search of the template to identify non-unique regions, instructing Primer3 to place primers in unique regions if possible. Candidate primers are then checked for specificity using a full primer-target alignment against the selected database [22].
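
The mismatch criteria in this protocol can be made concrete with a small sketch that compares a primer with a candidate binding site of equal length, both written 5' to 3' in the primer's orientation. This is an illustration of the idea only, not Primer-BLAST's algorithm: the comparison is ungapped, and the 5-base 3' window and the limit of one 3' mismatch are assumed values chosen for the example.

```python
def mismatch_profile(primer, site, three_prime_window=5):
    """Count mismatches overall and within the 3'-terminal window."""
    if len(primer) != len(site):
        raise ValueError("ungapped comparison needs equal lengths")
    flags = [p != s for p, s in zip(primer.upper(), site.upper())]
    total = sum(flags)
    return {
        "total": total,
        "fraction": total / len(primer),
        "three_prime": sum(flags[-three_prime_window:]),
    }


def may_amplify(primer, site, max_fraction=0.35, max_three_prime=1):
    """Flag a site as a potential target under the illustrative thresholds."""
    prof = mismatch_profile(primer, site)
    return prof["fraction"] <= max_fraction and prof["three_prime"] <= max_three_prime


primer = "AGCTGACCTGAAGCTGATCA"          # hypothetical primer
site_internal = "AGTTGACCTGAAGCTGATCA"   # one mismatch near the 5' end
site_3prime = "AGCTGACCTGAAGCTGAGGT"     # three mismatches at the 3' end
print(may_amplify(primer, site_internal), may_amplify(primer, site_3prime))
```

The first site stays flagged because a single 5'-proximal mismatch rarely prevents extension, while the second is dismissed because its mismatches cluster at the 3' end.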

Protocol 2: Large-Scale Primer Design and Validation with CREPE

For projects requiring high-throughput primer design (e.g., for targeted amplicon sequencing), the CREPE pipeline offers a scalable solution that combines Primer3 with the alignment tool ISPCR [26].

- Input and Primer Design: A custom input file specifying target genomic regions (in BED format) is processed to generate a Primer3 input file. Primer3 then designs forward and reverse primers for each target site [26].

- In Silico PCR and Specificity Check: Designed primer pairs are analyzed using ISPCR, which is configured with parameters optimized for sensitivity (-minGood 15, -tileSize 11, -stepSize 5) to find potential off-target binding sites even with imperfect matches [26].

- Off-Target Assessment: A custom evaluation script processes the ISPCR output. It filters out low-quality alignments (score < 750) and calculates a normalized percent match between off-target and on-target amplicons. Off-targets with an 80-100% match are flagged as high-quality (concerning), while those below 80% are considered low-quality [26].

- Experimental Validation: In the original study, this pipeline achieved a >90% experimental success rate in PCR amplification for primers deemed acceptable by the CREPE evaluation script, demonstrating the practical efficacy of this validation method [26].
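
The off-target assessment step can be sketched as a short filter. The source does not spell out how the normalized percent match is computed, so this example assumes it is the off-target alignment score divided by the on-target score, times 100; that formula, the function name, and the example scores are assumptions, while the 750 and 80% cutoffs come from the protocol above.

```python
def classify_off_targets(on_target_score, off_target_scores,
                         min_score=750, concern_pct=80.0):
    """Filter and label off-target hits by normalized percent match.

    Assumption: percent match = 100 * off_target_score / on_target_score.
    """
    results = []
    for score in off_target_scores:
        if score < min_score:
            continue  # low-quality alignment, filtered out
        pct = 100.0 * score / on_target_score
        label = "high-quality" if pct >= concern_pct else "low-quality"
        results.append((score, round(pct, 1), label))
    return results


labels = classify_off_targets(1000, [990, 760, 500])  # made-up scores
print(labels)
```

Primer pairs with any high-quality off-target would be the ones to redesign or deprioritize before ordering.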

Protocol 3: BLAST-Based Validation for Metagenomic Detection

In sensitive applications like pathogen detection in metagenomic samples, a two-stage validation process is recommended to ensure precision [27].

- First-Pass Classification: Use a fast, heuristic classification tool (e.g., Kraken) with a standard database to process metagenomic reads and make initial taxonomic assignments [27].

- BLAST-Based Validation: Sequences assigned to the taxon of interest (e.g., a specific pathogen) are validated using BLASTN against a comprehensive database (e.g., NCBI nt).

- Result Filtering: The BLAST results are filtered based on optimal parameters (e.g., percent identity, alignment length) determined via simulation. Reads are simulated from genomes of the target taxon, and BLAST parameters are adjusted to maximize the recovery of true positives [27].

- Confirmation: A sequence is confirmed as a true positive only if its best BLAST hit meets the optimized threshold and is within the target taxon [27].
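
The confirmation rule in the last step can be expressed as a small function. This is a minimal sketch that assumes each BLAST hit has already been reduced to a dictionary with taxon, percent identity, alignment length, and bit score; the field names, the example hits, and the threshold values (95% identity, 100 bases) are placeholders, since the protocol derives its thresholds from simulation.

```python
def confirm_read(hits, target_taxon, min_pident=95.0, min_length=100):
    """True if the best hit (highest bit score) passes thresholds and is in the target taxon."""
    if not hits:
        return False
    best = max(hits, key=lambda h: h["bitscore"])
    return (
        best["taxon"] == target_taxon
        and best["pident"] >= min_pident
        and best["length"] >= min_length
    )


# Made-up hits for two reads assigned to a hypothetical target taxon.
read_a = [
    {"taxon": "target", "pident": 99.2, "length": 148, "bitscore": 265.0},
    {"taxon": "relative", "pident": 93.0, "length": 140, "bitscore": 210.0},
]
read_b = [
    {"taxon": "relative", "pident": 98.5, "length": 150, "bitscore": 270.0},
    {"taxon": "target", "pident": 97.0, "length": 150, "bitscore": 255.0},
]
print(confirm_read(read_a, "target"), confirm_read(read_b, "target"))
```

The second read is rejected even though it has a good hit in the target taxon, because its best hit falls outside it.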

Performance and Experimental Data

Independent studies provide quantitative data on the performance of different database and tool combinations.

Table 2: Experimental Performance Metrics of Specificity Checking Tools

| Tool / Pipeline | Methodology | Key Performance Findings |

|---|---|---|

| Primer-BLAST [22] | Primer3 + BLAST/Global Alignment | Effectively detects potential amplification targets with up to 35% mismatches to primers, addressing a key limitation of standard BLAST [22]. |

| CREPE Pipeline [26] | Primer3 + ISPCR (BLAT) | Experimental PCR validation showed over 90% success rate for amplification when primers were pre-screened and deemed acceptable by the pipeline's off-target assessment [26]. |

| BLASTN Validation [27] | BLASTN against nt database | When used to validate heuristic classifier results, this method provides high-precision confirmation of taxonomic assignments in metagenomic samples, though it is computationally intensive [27]. |

Visualization of Workflows

The following diagram illustrates the logical workflow for selecting a database and validating primer specificity, integrating the concepts and protocols discussed.

Database Selection and Primer Validation Workflow

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagents and Computational Tools for Primer Validation

| Item / Resource | Function / Description | Example / Source |

|---|---|---|

| Primer-BLAST | Web-based tool for designing target-specific primers or checking specificity of existing primers. | NCBI (https://www.ncbi.nlm.nih.gov/tools/primer-blast/) [2] [22] |

| CREPE Pipeline | Software for large-scale, parallel primer design and specificity analysis. | GitHub (BreussLabPublic) [26] |

| Reference Genome Sequence | High-quality genomic sequence used as a template for primer design and as a basis for custom databases. | NCBI RefSeq, Ensembl |

| BLAST+ Executables | Command-line version of the BLAST suite for local database searches and custom automation. | NCBI |

| In-Silico PCR Tool (ISPCR) | A tool for rapidly predicting PCR products from a set of primers against a reference genome. | UCSC Genome Browser [26] |

| ART (A.R.T.) | Simulation tool to generate synthetic next-generation sequencing reads for testing and validation. | [27] |

In molecular biology research and drug development, the polymerase chain reaction (PCR) is a foundational technique whose success critically depends on primer specificity. Non-specific primer binding can lead to amplification of unintended targets, compromising experimental results and diagnostic accuracy. The validation of primer specificity has therefore become an essential step in experimental design, with several bioinformatic tools now available to researchers. This guide objectively compares the performance of NCBI Primer-BLAST—a widely used web-based tool—with emerging alternatives for in silico primer validation, supported by experimental data and standardized analysis protocols.

Primer-BLAST combines the primer design capabilities of Primer3 with BLAST-based specificity checking, allowing researchers to either design new target-specific primers or check the specificity of existing primers. Its unique value proposition lies in integrating a global alignment algorithm with BLAST to ensure complete primer-target alignment, enabling detection of targets with significant mismatches (up to 35%) that might still be amplifiable under experimental conditions [22]. This technical implementation addresses a critical limitation of standard BLAST, which uses local alignment and may not return complete match information across the entire primer range.

Comparative Analysis of Primer Specificity Tools

Performance Metrics and Technical Specifications

Table 1: Core Functionality Comparison of Primer Specificity Tools

| Tool Feature | Primer-BLAST | CREPE | AssayBLAST | In-Silico PCR |

|---|---|---|---|---|

| Specificity Algorithm | BLAST + Needleman-Wunsch global alignment | BLAT (BLAST-Like Alignment Tool) | Optimized BLAST searches | BLAT with exact matching focus |

| Primer Design Capability | Integrated (Primer3) | Integrated (Primer3) | No (validation only) | No (validation only) |

| Off-target Detection Sensitivity | High (up to 35% mismatches) | Moderate (configurable) | High (adjusted parameters) | Low (perfect matches default) |

| Throughput Capacity | Single to moderate batches | High (parallel processing) | High (batch processing) | Moderate |

| Graphical Output | Detailed primer mapping | Limited (summary statistics) | Tabular data (matrix format) | Basic (hit/not hit) |

| Strand Specificity Checking | Yes | Not specified | Explicit dual-strand verification | Implicit |

| Experimental Validation | Extensive literature | 90% amplification success [26] | 97.5% microarray accuracy [6] | Limited published data |

Table 2: Technical Specifications and Output Capabilities

| Technical Parameter | Primer-BLAST | CREPE | AssayBLAST |

|---|---|---|---|

| Default Mismatch Tolerance | Up to 35% (7/20 bases) | Configurable (user-defined) | Up to 4 mismatches (default) |

| Graphical Output Elements | Template map, primer positions, exon/intron structure | Chromosomal coordinates, off-target counts | Genome positions, mismatch maps |

| Specificity Report Metrics | Off-target amplicon sizes, mismatch positions, alignment scores | Normalized percent match (80-100% = concerning) | Mismatch counts, strand orientation, Tm values |

| Database Flexibility | Multiple NCBI databases, organism restriction | Custom genome reference files | User-provided target sequences |

| Best Application Context | Standard PCR/qPCR primer design & validation | Targeted amplicon sequencing panels | Multiplex assays, microarray design |

Key Differentiators and Performance Insights

Primer-BLAST's distinctive advantage lies in its sensitive mismatch detection capabilities, employing BLAST parameters with an expect value cutoff of 30,000 (primer-only case) to ensure detection of potential amplification targets despite multiple mismatches [22]. The tool incorporates a two-stage process: first identifying template-specific regions using MegaBLAST, then generating candidate primers with Primer3 placed outside highly similar unintended sequences when possible [22].

Experimental data show that CREPE (CREate Primers and Evaluate) achieves approximately 90% successful amplification for primers deemed acceptable by its evaluation script when validated in targeted amplicon sequencing applications [26]. This performance metric indicates robust prediction capabilities, though direct comparison studies between tools are limited in current literature.

AssayBLAST shows remarkable accuracy in microarray hybridization prediction, achieving 97.5% agreement between in silico predictions and experimental results when validating Staphylococcus aureus microarray assays [6]. This performance is attributed to its dual BLAST search approach (forward and reverse complement sequences) and stringent mismatch counting.
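
The idea of checking both strands with explicit mismatch counting can be illustrated without BLAST on a toy sequence. The sketch below is a brute-force, ungapped scan and not AssayBLAST's implementation; the function names and the example sequences are hypothetical, and the default tolerance of 4 mismatches mirrors the value listed in Table 2.

```python
_COMPLEMENT = str.maketrans("ACGTacgt", "TGCAtgca")


def reverse_complement(seq):
    """Reverse complement of a DNA sequence (A, C, G, T only)."""
    return seq.translate(_COMPLEMENT)[::-1]


def find_sites(probe, target, max_mismatches=4):
    """Return (position, strand, mismatches) for ungapped matches on both strands."""
    sites = []
    queries = (("+", probe.upper()), ("-", reverse_complement(probe).upper()))
    for strand, query in queries:
        for i in range(len(target) - len(query) + 1):
            window = target[i:i + len(query)].upper()
            mm = sum(a != b for a, b in zip(query, window))
            if mm <= max_mismatches:
                sites.append((i, strand, mm))
    return sites


probe = "ACGGTCAT"  # toy 8-mer; real probes are longer
target = "TTTT" + probe + "GGGG" + reverse_complement(probe) + "TT"
print(find_sites(probe, target, max_mismatches=0))
```

A brute-force scan like this is only practical for short targets; for whole genomes the same logic is applied to the hits returned by an indexed search.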

Experimental Protocols for Tool Validation

Standardized Workflow for Specificity Assessment

Table 3: Essential Research Reagent Solutions for Primer Validation Studies

| Reagent/Resource | Function in Validation | Example Sources/Platforms |
|---|---|---|
| Reference Genomes | Specificity database for in silico analysis | NCBI RefSeq, Ensembl, UCSC Genome Browser |
| BLAST Databases | Off-target binding assessment | nr/nt, RefSeq mRNA, RefSeq genomic |
| Oligo Analysis Tools | Primer thermodynamic properties | IDT OligoAnalyzer, Eurofins Genomics Tool |
| Target Sequences | PCR template for primer design | RefSeq mRNAs (NM_ accessions), GenBank records |
| In Silico PCR Tools | Amplicon prediction validation | UCSC In-Silico PCR, ISPCR (command-line) |

Diagram 1: Experimental workflow for comprehensive primer specificity validation using complementary computational tools.

Primer-BLAST Specific Protocol

Objective: Validate primer specificity for mRNA detection while minimizing genomic DNA amplification.

Methodology:

- Template Preparation: Obtain RefSeq mRNA accession (e.g., NM_000600 for human IL-6) [28]. Avoid coding sequence (CDS)-only entries as intron information is required for proper design.

- Parameter Configuration:

  - Set product size range to 70-200 bp for optimal real-time PCR efficiency [28]

  - Define melting temperatures: Min 59°C, Opt 62°C, Max 65°C, with a maximum Tm difference of 3°C

  - Enable intron inclusion with a minimum intron size of 200 bp, so that any product amplified from genomic DNA is longer than the mRNA-derived product and can be recognized as contamination

  - Specify target organism (e.g., Homo sapiens) to restrict specificity checking

- Specificity Assessment: Execute Primer-BLAST with default specificity parameters, which employ an expect value of 30,000 for primer-only cases to ensure sensitive off-target detection [22].

- Output Interpretation: Examine both graphical views and detailed specificity reports as described in Section 4.
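The numeric limits in this protocol can be captured in a small pre-submission check. The sketch below is a minimal illustration: the class and function names are hypothetical, the Tm values are assumed to come from whatever calculator the laboratory already uses, and nothing here calls Primer-BLAST.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DesignLimits:
    """Limits taken from the protocol above; field names are hypothetical."""
    product_min: int = 70
    product_max: int = 200
    tm_min: float = 59.0
    tm_opt: float = 62.0
    tm_max: float = 65.0
    tm_max_diff: float = 3.0


def check_pair(fwd_tm: float, rev_tm: float, product_size: int,
               limits: DesignLimits = DesignLimits()) -> list[str]:
    """Return a list of violations; an empty list means the pair passes."""
    problems = []
    for name, tm in (("forward", fwd_tm), ("reverse", rev_tm)):
        if not limits.tm_min <= tm <= limits.tm_max:
            problems.append(
                f"{name} Tm {tm:.1f} outside {limits.tm_min}-{limits.tm_max}")
    if abs(fwd_tm - rev_tm) > limits.tm_max_diff:
        problems.append(
            f"Tm difference {abs(fwd_tm - rev_tm):.1f} exceeds {limits.tm_max_diff}")
    if not limits.product_min <= product_size <= limits.product_max:
        problems.append(
            f"product size {product_size} outside "
            f"{limits.product_min}-{limits.product_max}")
    return problems


print(check_pair(61.5, 62.8, 120))   # a pair inside every limit
print(check_pair(58.0, 64.0, 250))   # a pair violating several limits
```

Returning a list of violations rather than a single boolean makes it easier to see which parameter to relax when no candidate pair passes.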

Interpretation of Output Reports

Graphical Views Analysis

Primer-BLAST's graphical output provides an intuitive overview of primer binding locations relative to template features. The visualization includes:

- Template Structure: Exon-intron organization for gene sequences, with green bars indicating exons and connecting lines representing introns [29].

- Primer Positioning: Arrows showing primer binding locations and orientation, with forward primers above and reverse primers below the template line.

- Product Span: Amplicon length and position relative to important genomic landmarks.

- Feature Mapping: Critical elements like exon-exon junctions that primers can be designed to span, preventing genomic DNA amplification [2].

In the graphical display, researchers should verify that primer pairs:

- Flank the target region with adequate overlap (typically 15-30 bases into exons)

- Are separated by at least one large intron (>200 bp) on the genomic sequence when the goal is to detect mRNA without amplifying genomic DNA

- Avoid known SNP sites that might create mismatches and reduce amplification efficiency

- Are located in regions without secondary structure that might inhibit binding
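Two of these checks, junction spanning and intron separation, reduce to simple coordinate arithmetic once exon lengths are known. The following sketch assumes 0-based, half-open mRNA coordinates; the exon lengths, primer positions, and minimum flank sizes are hypothetical values chosen for illustration.

```python
from itertools import accumulate


def junction_positions(exon_lengths):
    """0-based mRNA coordinate of the first base after each exon-exon junction."""
    return list(accumulate(exon_lengths))[:-1]


def spans_junction(start, end, junctions, min_left=7, min_right=4):
    """True if primer [start, end) crosses a junction with at least
    `min_left` bases before it and `min_right` bases after it."""
    return any(j - start >= min_left and end - j >= min_right for j in junctions)


def separated_by_intron(fwd_end, rev_start, junctions):
    """True if at least one junction lies between the end of the forward
    primer and the start of the reverse primer (mRNA coordinates)."""
    return any(fwd_end <= j <= rev_start for j in junctions)


exons = [150, 120, 200]                 # hypothetical exon lengths in bases
junctions = junction_positions(exons)   # junctions fall at 150 and 270

print(spans_junction(140, 160, junctions))       # primer straddles the first junction
print(spans_junction(145, 165, junctions))       # too few bases before the junction
print(separated_by_intron(120, 280, junctions))  # primers sit in different exons
```

A primer that spans a junction cannot anneal contiguously to genomic DNA, while a pair separated by an intron yields a longer genomic product; the two functions test these two distinct strategies.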

Specificity Report Interpretation

Diagram 2: Decision framework for interpreting Primer-BLAST specificity reports, highlighting critical mismatch assessment criteria.

The specificity report provides detailed alignment data between primer pairs and potential off-target sequences. Key interpretation elements include:

- Mismatch Significance: Experimental evidence indicates that mismatches at the 3' end of primers (particularly the last 2 bases) significantly reduce amplification efficiency, while 5' mismatches are more tolerable [22]. Primer-BLAST's global alignment ensures all mismatches are identified and positioned.

- Amplicon Context: Off-target products larger than 1000 bp are generally less concerning as PCR efficiency decreases with amplicon size [2].

- Cross-Reactivity Assessment: The tool checks not only forward-reverse pairs but also forward-forward and reverse-reverse combinations that might generate primer-dimer artifacts or amplify unintended targets [2].

- Normalized Match Scoring: Alternative tools like CREPE employ normalized percent match calculations (alignment score/amplicon length) to classify off-targets, with 80-100% match considered high-quality (concerning) off-targets [26].
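The first two criteria above can be combined into a rough triage rule for each predicted off-target product. The sketch below treats them as heuristics rather than hard rules: the 2-base 3' window and the 1000 bp size limit come from the text, while the function names, labels, and sequences are hypothetical. Primer and binding-site strings are assumed to be the same length and written 5' to 3' in the primer's orientation.

```python
def three_prime_mismatches(primer: str, site: str, window: int = 2) -> int:
    """Count mismatches within the last `window` bases at the primer's 3' end."""
    if len(primer) != len(site):
        raise ValueError("primer and site must have the same length")
    return sum(1 for p, s in zip(primer[-window:], site[-window:]) if p != s)


def triage_off_target(fwd: str, fwd_site: str, rev: str, rev_site: str,
                      amplicon_size: int, max_size: int = 1000) -> str:
    """Assign a coarse concern level to one predicted off-target amplicon."""
    if amplicon_size > max_size:
        return "lower concern: long amplicon"
    if three_prime_mismatches(fwd, fwd_site) or three_prime_mismatches(rev, rev_site):
        return "lower concern: 3' mismatch"
    return "concerning: review or redesign"


fwd = "ACGTACGTACGTACGTACGT"   # hypothetical forward primer
rev = "TTGCAAGGCCTTAAGGCCTA"   # hypothetical reverse primer

# Off-target site with a single 5' mismatch in the reverse primer only.
print(triage_off_target(fwd, fwd, rev, "ATGCAAGGCCTTAAGGCCTA", 150))
# Same site, but the mismatch is at the last 3' base.
print(triage_off_target(fwd, fwd, rev, "TTGCAAGGCCTTAAGGCCTT", 150))
# Perfect match for both primers, but a 2500 bp product.
print(triage_off_target(fwd, fwd, rev, rev, 2500))
```

Any hit labeled as lower concern still deserves a look when the assay is quantitative, because reduced efficiency is not the same as no amplification.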

Based on comparative analysis of experimental data and technical capabilities, researchers should select primer specificity tools according to their specific application needs. Primer-BLAST remains the optimal choice for standard PCR and qPCR applications, offering balanced sensitivity and user-friendly interpretation through its integrated graphical and specificity reports. For large-scale sequencing projects involving hundreds to thousands of targets, CREPE provides superior throughput with demonstrated 90% experimental success rates. For multiplex assays and microarray designs, AssayBLAST offers specialized validation with exceptional prediction accuracy (97.5%).

Critical success factors across all platforms include using curated reference databases (RefSeq over nr/nt when possible), implementing organism-restricted searches to improve speed and relevance, and correlating in silico predictions with experimental validation using standardized control templates. Future developments in primer specificity validation will likely focus on machine learning approaches that incorporate experimental amplification efficiency data to refine mismatch tolerance predictions, further bridging the gap between computational prediction and experimental results.

Within molecular biology and clinical diagnostics, the polymerase chain reaction (PCR) is a foundational technique for amplifying specific DNA regions. However, its success is critically dependent on the design of primers that are highly specific to the intended genomic target. Non-specific amplification can lead to false positives, reduced amplification efficiency, and erroneous results in downstream analyses [9]. This challenge is particularly acute in clinical settings, such as the analysis of human biopsy samples, where the target bacterial DNA is vastly outnumbered by human DNA [30].

This case study is situated within a broader thesis on the use of BLAST analysis for primer specificity validation. We objectively compare the performance of primer sets targeting different hypervariable regions of the 16S rRNA gene when applied to human gastrointestinal tract biopsies. The central problem is off-target amplification of human DNA, which can compromise the validity of microbiome profiling. We present experimental data comparing the widely used V4 primers to a modified V1–V2 primer set, evaluating their specificity, taxonomic richness, and overall performance in a challenging clinical sample type.

Primer Design Workflow and Specificity Validation

Designing and validating gene-specific primers is a multi-stage process that integrates bioinformatic tools with experimental verification. The following workflow outlines the critical steps from initial sequence selection to the final specificity check.

Critical Steps in the Workflow

- Identify Target Gene Sequence: The process begins with the selection of an appropriate genetic marker. For bacterial identification and microbiome studies, the 16S ribosomal RNA (rRNA) gene is the standard marker due to its presence in all bacteria and its mix of highly conserved and variable regions [31] [32].

- Select Target Hypervariable Region: The choice of which variable region(s) (V1–V9) of the 16S rRNA gene to amplify is crucial. This decision directly impacts specificity and taxonomic resolution. This case study focuses on comparing the V4 and V1–V2 regions [31] [30].

- Design Primer Candidates: Tools like Primer3 are used to automate the design of primer pairs based on standard parameters, including melting temperature (Tm), GC content, and the absence of secondary structures like hairpins [26].

- Specificity Check with BLAST: Candidate primers are analyzed for specificity using tools like NCBI's Primer-BLAST or custom pipelines like CREPE (CREate Primers and Evaluate). These tools check for unintended binding sites (off-targets) across a specified genomic database [2] [11] [26].

- In silico PCR: Tools like ISPCR (In-Silico PCR) simulate the PCR process to predict all potential amplicons generated by a primer pair in a given genome, providing a score based on primer mismatches [26].

- Evaluate Off-Targets: Potential off-target amplicons are classified. The CREPE pipeline, for instance, labels off-targets with a normalized match percentage of 80–100% as "high-quality off-targets" (HQ-Off), which are concerning, and those below 80% as "low-quality off-targets" (LQ-Off), which are less likely to amplify efficiently [26].

- Experimental Validation: The final, critical step is to test the primers in the lab using real clinical samples to confirm that in silico predictions match experimental results [30].
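The off-target evaluation step lends itself to a short illustration. The sketch below applies the 80% threshold described for CREPE to a list of predicted off-target amplicons and tallies the labels per primer pair. It assumes the normalized match percentage has already been computed by the upstream tool; the function names and input records are hypothetical and this is not CREPE's own code.

```python
from collections import Counter

HQ_THRESHOLD = 80.0  # percent; boundary between HQ-Off and LQ-Off in the text


def classify_off_target(percent_match: float) -> str:
    """Label an off-target amplicon from its normalized match percentage."""
    if not 0.0 <= percent_match <= 100.0:
        raise ValueError("percent_match must be between 0 and 100")
    return "HQ-Off" if percent_match >= HQ_THRESHOLD else "LQ-Off"


def summarize(hits):
    """Tally labels per primer pair.

    hits: iterable of (primer_pair_id, percent_match) tuples, one per
    predicted off-target amplicon.
    """
    summary = {}
    for pair_id, percent in hits:
        summary.setdefault(pair_id, Counter())[classify_off_target(percent)] += 1
    return summary


# Hypothetical in silico PCR results for two primer pairs.
hits = [("pair_1", 95.0), ("pair_1", 62.5), ("pair_2", 80.0)]
for pair_id, counts in summarize(hits).items():
    print(pair_id, dict(counts))
```

A pair with any HQ-Off entries would typically be flagged for redesign or for closer experimental scrutiny, while LQ-Off entries alone are less likely to matter.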

Experimental Protocol: Comparing Primer Performance in Clinical Samples

Sample Collection and DNA Extraction

The experimental data cited in this case study were derived from the analysis of 40 human biopsies from the esophagus, stomach, and duodenum [30]. Total DNA was extracted using a Gram-positive DNA purification kit. DNA concentration was measured using a spectrophotometer, and samples were stored at -80°C until analysis [30].

Primer Sets and PCR Amplification

Two primer sets were compared head-to-head:

- V4 Primers (515F-806R): The widely used primer set from the Earth Microbiome Project, targeting the V4 region [30].

- V1–V2M Primers (68F_M-338R): A modified primer set designed to minimize off-target amplification of human DNA. The forward primer 68F_M was modified from S-D-Bact-0049-a-S-21 to capture Fusobacteriota, whose 16S sequence has a two-base mismatch with the 3' terminus of the original primer [30].

PCR Protocol: Amplification was performed with an initial denaturation at 95°C for 5 minutes, followed by 30 cycles of denaturation at 95°C for 30 seconds, annealing at 55°C for 30 seconds, and extension at 70°C for 3 minutes [31]. Purified amplicons were sequenced on Illumina platforms (HiSeq for V1–V2 and MiSeq for V3–V4 in prior studies) [31].
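As a quick planning aid, the programmed hold time of this cycling profile can be worked out directly. The sketch below uses only the step durations stated above and ignores ramp times and any final extension or hold, which the protocol does not specify; the function name is hypothetical.

```python
def cycling_seconds(initial_denaturation_s, cycles, step_seconds):
    """Total programmed hold time: initial step plus cycles of the listed steps."""
    return initial_denaturation_s + cycles * sum(step_seconds)


# 95°C for 5 min, then 30 cycles of 30 s, 30 s, and 3 min.
total_s = cycling_seconds(5 * 60, 30, [30, 30, 3 * 60])
print(f"{total_s} s = {total_s / 60:.0f} min")
```

With a 3-minute extension step, the cycles account for 120 of the 125 programmed minutes, so the extension time dominates the run length.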

Bioinformatic Analysis

Sequencing data were processed using QIIME2 [31] [30]. Chao1 and Shannon's indices were used to measure alpha diversity. Taxonomy was assigned using a pre-trained Naive Bayes classifier based on the expanded Human Oral Microbiome Database (eHOMD) [31]. Amplicon Sequence Variants (ASVs) aligning to the human genome were identified and filtered out to assess the rate of off-target amplification [30].
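For readers unfamiliar with these metrics, the sketch below computes them from a vector of per-ASV read counts. It is a plain-Python illustration, not the QIIME2 implementation: Shannon's index is computed with the natural logarithm (some tools use base 2), Chao1 uses the bias-corrected form, and the off-target rate is taken as the fraction of ASVs flagged as human. The function names and example counts are hypothetical.

```python
import math


def shannon(counts):
    """Shannon index H = -sum(p * ln p) over ASVs with non-zero counts."""
    total = sum(counts)
    return -sum((c / total) * math.log(c / total) for c in counts if c > 0)


def chao1(counts):
    """Bias-corrected Chao1 richness: S_obs + F1*(F1-1) / (2*(F2+1)),
    where F1 and F2 are the numbers of singleton and doubleton ASVs."""
    observed = sum(1 for c in counts if c > 0)
    singletons = sum(1 for c in counts if c == 1)
    doubletons = sum(1 for c in counts if c == 2)
    return observed + singletons * (singletons - 1) / (2 * (doubletons + 1))


def off_target_fraction(is_human_flags):
    """Fraction of ASVs flagged as aligning to the human genome."""
    flags = list(is_human_flags)
    return sum(flags) / len(flags)


counts = [10, 5, 1, 1, 2]   # hypothetical read counts per ASV
print(f"Shannon: {shannon(counts):.3f}")
print(f"Chao1:   {chao1(counts):.1f}")
print(f"Human ASV fraction: {off_target_fraction([True, True, False, False]):.2f}")
```

Computing the human fraction before filtering, and the diversity indices after, keeps the off-target rate and the richness estimates from contaminating each other.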

Results: Quantitative Comparison of Primer Performance

Off-Target Amplification and Taxonomic Richness

The core of this case study is the direct comparison of the V4 and V1–V2M primer sets. The quantitative data below summarize their performance in clinical biopsy samples.

Table 1: Comparative Performance of V4 vs. V1–V2M Primers in GI Biopsies

| Performance Metric | V4 Primers (515F-806R) | V1–V2M Primers (68F_M-338R) | Experimental Context |
|---|---|---|---|
| Off-Target Human DNA Amplification | Average of 70% of ASVs (up to 98% in some samples) [30] | Dropped to practically zero [30] | Human GI tract biopsies (Esophagus, Stomach, Duodenum) |
| Taxonomic Richness (Alpha Diversity) | Significantly lower [30] | Significantly higher, especially at species level [30] | Esophagus and Duodenum biopsies |
| Detection of Phylum Fusobacteriota | Present | Absent with original V1-V2 primers; detected with modified 68F_M [30] | All biopsy sites |
| Primer Set Redundancy | 515F/806RB combined with 27F/338R covered 89% of all orders [32] | 27F/338R alone showed the highest number of OTUs and read counts [32] | Coastal seawater samples |

Impact on Perceived Microbiome Composition

The choice of primer not only affects quantitative metrics like richness but also qualitatively shapes the observed microbial community structure.

Table 2: Impact of Primer Choice on Microbial Community Profile

| Taxonomic Group | Result with V4 Primers | Result with V1–V2M Primers | Notes |
|---|---|---|---|
| Actinobacteria & Proteobacteria | Lower representation | Significantly higher representation [30] | Impacts understanding of community balance |
| Bacteroidota | Higher representation | Lower representation [30] | Can skew community interpretation |
| Fusobacteriota | Detected | Not detected with the original V1-V2 primer; detected with the modified 68F_M primer [30] | Highlights need for primer optimization |
| Pelagibacterales & Rhodobacterales | Lower OTU detection | Higher OTU detection with 27F/338R and 515F/806RB combo [32] | Marine sample data; shows ecosystem-specific bias |

The Scientist's Toolkit: Essential Research Reagents

The following reagents and tools are essential for executing the experimental protocols cited in this case study.

Table 3: Key Research Reagent Solutions for Primer Validation Studies

| Reagent / Tool | Function / Application | Example / Source |
|---|---|---|
| Gram-positive DNA Purification Kit | Extraction of genomic DNA from complex clinical samples like biopsies. | Lucigen, Biosearch Technologies [30] |
| Herculase II Fusion DNA Polymerase | High-fidelity PCR amplification for preparing sequencing libraries. | Agilent [32] |
| Illumina Sequencing Kits | High-throughput amplicon sequencing on various platforms (MiSeq, HiSeq). | Illumina MiSeq Reagent Kit v3 [32] |
| Primer Design & Specificity Tools | Bioinformatics tools for designing primers and checking for off-target binding. | Primer3 [26], NCBI Primer-BLAST [2] [11], CREPE pipeline [26] |
| 16S rRNA Reference Databases | Curated databases for taxonomic classification of sequencing reads. | expanded Human Oral Microbiome Database (eHOMD) [31], SILVA [32] |

Discussion

BLAST Analysis as a Cornerstone for Specificity Validation