Precision Target Identification for PCR Assays: From Foundational Principles to Advanced Applications

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the critical process of target identification for PCR assays. It explores the foundational principles of selecting and validating genetic targets, delves into advanced methodological applications including multiplexing and variant detection, and offers systematic troubleshooting and optimization protocols. Furthermore, it details rigorous validation frameworks and comparative analyses with alternative diagnostic technologies. By synthesizing current research and established guidelines, this resource aims to equip scientists with the knowledge to design robust, reliable, and clinically impactful PCR-based diagnostics.

Laying the Groundwork: Core Principles of PCR Target Selection and Design

The polymerase chain reaction (PCR) stands as a cornerstone of modern molecular biology, providing an indispensable tool for researchers and clinicians in nucleic acid detection. The evolution of PCR technology from its conventional form to quantitative real-time PCR (qPCR) and digital PCR (dPCR) has created a sophisticated diagnostic landscape where selecting the appropriate methodological approach—qualitative versus quantitative—is paramount to experimental and clinical success [1]. This selection is fundamentally guided by the primary objective of the investigation, whether it requires simple detection of a pathogen's presence or absolute quantification of viral load for patient management. Within the context of target identification for PCR assay research, understanding the operational characteristics, limitations, and appropriate applications of each format is critical for designing robust studies and generating reliable, interpretable data.

The core distinction lies in the nature of the data generated. Qualitative PCR provides a binary yes/no answer regarding the presence of a specific nucleic acid sequence, making it suitable for diagnostic applications where establishing presence or absence of a pathogen or genetic variant is sufficient. In contrast, quantitative PCR (qPCR) measures the amount of a specific DNA or RNA target present in a sample, relating the amplification signal to a standard curve for relative quantification. The emerging technology of digital PCR (dPCR), considered the third generation of PCR, provides absolute quantification of nucleic acids without the need for a standard curve by partitioning a sample into thousands of individual reactions and applying Poisson statistics [1]. This technical progression has progressively enhanced sensitivity, precision, and tolerance to inhibitors, thereby expanding the horizons of molecular diagnostics and research.

Technical Principles and Performance Characteristics

Core Technological Differences

The fundamental divergence between qualitative and quantitative PCR methodologies stems from their underlying detection mechanisms and data output. Conventional qualitative PCR relies on endpoint detection, typically using gel electrophoresis, to confirm the presence or absence of an amplified product. While this approach is straightforward and cost-effective, it lacks the capability to determine the initial amount of target nucleic acid and is susceptible to contamination from post-amplification handling.

Quantitative real-time PCR (qPCR) represents a significant advancement by monitoring the accumulation of amplified DNA in real-time during each cycle of the PCR process. This is achieved through fluorescent reporting systems, such as DNA-intercalating dyes or target-specific fluorescent probes (e.g., TaqMan probes or molecular beacons) [1]. The cycle threshold (Ct), the point at which the fluorescence crosses a predetermined threshold, is used for quantification relative to a standard curve of known concentrations. This allows for the determination of the relative quantity of the target nucleic acid in the sample.

Digital PCR (dPCR) employs a different paradigm based on sample partitioning. The PCR mixture is divided into a large number of parallel nanoreactions—either through water-in-oil droplet emulsification (ddPCR) or microchambers on a solid chip—so that each partition contains either 0, 1, or a few nucleic acid targets according to a Poisson distribution [1]. Following end-point PCR amplification, the fraction of positive partitions is counted, and the absolute target concentration is computed using Poisson statistics, eliminating the need for a standard curve [1]. This calibration-free approach presents powerful advantages for absolute quantification.
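As a minimal sketch of the Poisson correction described above: if a fraction p of partitions is positive, the mean number of target copies per partition is λ = −ln(1 − p), and dividing λ by the partition volume gives copies per microliter of reaction. The function names and the 0.91 nL partition volume in the example are illustrative assumptions, not values taken from the cited work; use the volume specified for your own platform.

```python
import math

def dpcr_copies_per_partition(positive: int, total: int) -> float:
    """Mean target copies per partition (lambda) from the positive fraction."""
    if total <= 0 or not 0 <= positive <= total:
        raise ValueError("need total > 0 and 0 <= positive <= total")
    if positive == total:
        # Every partition is positive, so the Poisson estimate is unbounded.
        raise ValueError("saturated sample: dilute and rerun")
    return -math.log(1.0 - positive / total)

def dpcr_concentration(positive: int, total: int, partition_volume_ul: float) -> float:
    """Target concentration in copies per microliter of reaction mix."""
    return dpcr_copies_per_partition(positive, total) / partition_volume_ul

# Example: 2,600 positive partitions out of 26,000.
# The partition volume (0.00091 µL) is an assumed value for illustration.
lam = dpcr_copies_per_partition(2600, 26000)
conc = dpcr_concentration(2600, 26000, partition_volume_ul=0.00091)
print(f"lambda = {lam:.4f} copies/partition, concentration = {conc:.1f} copies/µL")
```

The logarithm accounts for partitions that received more than one copy, which is why simply counting positive partitions underestimates the target at higher loads.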

Comparative Performance Metrics

The performance characteristics of these PCR methodologies vary significantly, influencing their suitability for different applications. The table below summarizes key analytical parameters based on comparative studies:

Table 1: Comparative Performance of Qualitative PCR, qPCR, and dPCR

| Performance Parameter | Qualitative PCR | Quantitative PCR (qPCR) | Digital PCR (dPCR) |
|---|---|---|---|
| Detection Output | Presence/Absence | Relative Quantification | Absolute Quantification |
| Standard Curve Required | No | Yes | No |
| Sensitivity | Lower | Moderate | Higher [2] |
| Precision | Not applicable | Moderate (Median CV% >4.5%) | High (Median CV%: 4.5%) [2] |
| Dynamic Range | Not applicable | Wide (e.g., 400–50,000 copies/ml for CMV) [3] | Wide with high linearity (R² > 0.99) [2] |
| Tolerance to Inhibitors | Low | Moderate | High [2] |
| Ability for Multiplexing | Limited | Possible | Excellent [2] |
| Key Advantage | Simplicity, cost-effectiveness | Broad dynamic range, established workflows | High sensitivity and precision for low-abundance targets [1] [2] |

A direct comparison of commercial PCR assays for cytomegalovirus (CMV) detection highlights the sensitivity differential. The qualitative AMPLICOR CMV test demonstrated 50% sensitivity (12 of 24 replicates positive) at an input concentration of 63 CMV DNA copies/ml, achieving 100% sensitivity only at 1,000 copies/ml [3]. In contrast, the quantitative COBAS AMPLICOR CMV MONITOR test had a defined lower limit of sensitivity at 400 CMV DNA copies/ml and was linear up to 50,000 copies/ml [3]. Meanwhile, dPCR has demonstrated superior sensitivity and precision compared to qPCR, particularly for detecting low bacterial loads in complex clinical samples like subgingival plaque, where it reduced false negatives at low target concentrations [2].

Application-Oriented Experimental Design

Defining the Objective: A Decision Framework

The choice between qualitative, quantitative (qPCR), and digital (dPCR) approaches should be driven by the primary research or clinical question. The following workflow diagram outlines the key decision points for selecting the appropriate PCR methodology:

Application-Specific Protocol Selection

Pathogen Detection and Viral Load Monitoring

For infectious disease diagnostics, the clinical requirement dictates the technological choice. Qualitative PCR is sufficient for confirming active infection when the pathogen is expected to be present at detectable levels, such as in symptomatic patients. For example, the qualitative AMPLICOR CMV test provides a simple positive/negative result for clinical decision-making [3].

Quantitative PCR is essential when viral load monitoring is needed to assess disease progression or treatment efficacy. In managing AIDS patients with CMV infection, studies have shown that the quantity of CMV DNA in plasma is an independent marker of CMV disease and survival, making qPCR vital for patient management [3]. The quantitative COBAS AMPLICOR CMV MONITOR test with a dynamic range of 400–50,000 copies/ml exemplifies this application [3].

Digital PCR offers advantages in detecting low-level persistent infections, early pathogen detection before reaching clinically significant thresholds, and precise monitoring of minimal residual disease. Its superior sensitivity and precision at low concentrations make it particularly valuable for scenarios where accurate quantification near the limit of detection is critical [2].

Oncology and Liquid Biopsy Applications

In oncology, dPCR has demonstrated particular utility for liquid biopsy applications due to its ability to detect rare genetic mutations within a background of wild-type genes [1]. This capability enables non-invasive monitoring of tumor heterogeneity and treatment response through detection of circulating tumor DNA (ctDNA). The technology's high sensitivity allows for identification of mutant alleles present at very low frequencies, which is often challenging for qPCR. dPCR's partitioning principle minimizes competition between targets in multiplex assays, making it especially effective for analyzing complex clinical samples with multiple potential mutations [2].

Gene Expression Analysis

For gene expression studies using reverse transcription PCR (RT-PCR), qPCR remains the established standard for relative quantification of transcript levels across different samples or experimental conditions. However, dPCR is increasingly employed when absolute copy number of specific transcripts is required without reference to standard curves, particularly for low-abundance transcripts where its precision offers significant advantages.

Experimental Protocols and Methodologies

Detailed dPCR Protocol for Multiplex Pathogen Detection

The following protocol, adapted from a 2025 study on periodontal pathobionts, illustrates a modern dPCR workflow for simultaneous detection and quantification of multiple bacterial targets in clinical samples [2]:

Table 2: Research Reagent Solutions for Multiplex dPCR

| Reagent/Material | Function | Specification/Concentration |
|---|---|---|
| QIAcuity Probe PCR Kit | Provides master mix for probe-based dPCR | Includes 4× Probe PCR Master Mix |
| Specific Primers | Target-specific amplification | 0.4 µM each primer in reaction |
| Double-Quenched Hydrolysis Probes | Target-specific fluorescence detection | 0.2 µM each probe in reaction |
| Restriction Enzyme (Anza 52 PvuII) | Digests genomic DNA to reduce viscosity | 0.025 U/µL in reaction |
| QIAcuity Nanoplate 26k | Microfluidic chip for partitioning | ~26,000 partitions per well |
| QIAcuity Four Instrument | Integrated partitioning, thermocycling, imaging | Multi-channel fluorescence detection |

Sample Preparation and DNA Extraction:

- Subgingival plaque samples are collected using absorbent paper points inserted into periodontal pockets for 10 seconds.

- Samples are pooled into tubes containing 1 mL of reduced transport fluid (RTF) with 10% glycerol and stored at -20°C.

- DNA extraction is performed using the QIAamp DNA Mini kit according to manufacturer's instructions [2].

dPCR Reaction Setup:

- Prepare 40 µL reaction mixtures containing:

  - 10 µL of sample DNA

  - 10 µL of 4× Probe PCR Master Mix

  - 0.4 µM of each specific primer

  - 0.2 µM of each specific probe

  - 0.025 U/µL restriction enzyme

  - Nuclease-free water to volume

- Prepare reaction mixtures in pre-plates, then transfer to QIAcuity Nanoplate 26k 24-well plate

- Seal with QIAcuity Nanoplate Seal
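The final concentrations in the setup above can be converted to pipetting volumes with C1·V1 = C2·V2. The sketch below does this for a three-target reaction (one primer pair and one probe per target, matching the three detection channels used in this protocol). The stock concentrations are hypothetical assumptions chosen for illustration; substitute the values of your own reagents.

```python
REACTION_UL = 40.0  # total reaction volume from the protocol

def volume_from_stock(final_conc, stock_conc, reaction_ul=REACTION_UL):
    """Stock volume (µL) needed to reach final_conc in the reaction (C1*V1 = C2*V2)."""
    return final_conc * reaction_ul / stock_conc

# Assumed stock concentrations (hypothetical, not from the cited protocol)
PRIMER_STOCK_UM = 20.0
PROBE_STOCK_UM = 10.0
ENZYME_STOCK_U_PER_UL = 1.0
N_TARGETS = 3  # triplex assay

mix = {
    "sample DNA": 10.0,
    "4x Probe PCR Master Mix": volume_from_stock(1.0, 4.0),
    "primers (all)": 2 * N_TARGETS * volume_from_stock(0.4, PRIMER_STOCK_UM),
    "probes (all)": N_TARGETS * volume_from_stock(0.2, PROBE_STOCK_UM),
    "restriction enzyme": volume_from_stock(0.025, ENZYME_STOCK_U_PER_UL),
}
# Water fills the remaining volume.
mix["nuclease-free water"] = REACTION_UL - sum(mix.values())

for component, volume in mix.items():
    print(f"{component:26s} {volume:5.2f} µL")
```

If the computed water volume is negative, the assumed stocks are too dilute for a 40 µL reaction and more concentrated stocks are needed.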

dPCR Workflow and Data Acquisition: The dPCR process involves three integrated steps as illustrated below:

Partitioning and Thermocycling:

- The loaded nanoplate undergoes priming and rolling with partitioning of reaction mixture into approximately 26,000 partitions

- Thermocycling conditions:

  - Initial denaturation/enzyme activation: 2 min at 95°C

  - 45 amplification cycles of: 15 s at 95°C and 1 min at 58°C

Endpoint Fluorescence Imaging:

- Image acquisition on multiple channels:

  - Green channel (A. actinomycetemcomitans): Threshold 30 RFU, exposure 500 ms, gain 6 dB

  - Yellow channel (P. gingivalis): Threshold 40 RFU, exposure 500 ms, gain 6 dB

  - Crimson channel (F. nucleatum): Threshold 40 RFU, exposure 400 ms, gain 8 dB

Data Analysis:

- Analyze data using QIAcuity Software Suite

- DNA concentrations automatically calculated according to Poisson distribution

- Apply Volume Precision Factor according to manufacturer's instructions

- A reaction is considered positive if at least three partitions are positive

- For samples with high concentration (>10⁵ copies/reaction), test serial dilutions to avoid signal saturation

Primer and Probe Design Considerations

Effective assay design is critical for both qPCR and dPCR performance. Key considerations include:

Primer Design:

- Keep melting temperatures (Tm) of primer pairs within 2°C of each other

- Maintain primer length between 18-22 base pairs

- Design with GC content of 35-65% without long stretches (>4 bases) of the same nucleotide

- Minimize G/C repeats, especially at the 3' end of the primer

- Check sequences for hairpins, self-dimers, and hetero-dimers

- Verify specificity using NCBI's BLAST tool against the entire genome [4]
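The numeric rules in the primer checklist above (length, GC content, homopolymer runs, Tm matching) are easy to screen automatically before running a structure or BLAST check. The following is a rough sketch: the function names and the two example sequences are hypothetical, and the Tm estimate uses the simple Wallace rule (2 °C per A/T, 4 °C per G/C), which is only a crude approximation. A nearest-neighbor model, as used by dedicated design tools, should be preferred for real assays.

```python
import re

def gc_percent(seq: str) -> float:
    """Percentage of G and C bases in the sequence."""
    seq = seq.upper()
    return 100.0 * (seq.count("G") + seq.count("C")) / len(seq)

def longest_homopolymer(seq: str) -> int:
    """Length of the longest run of a single repeated base."""
    return max(len(m.group(0)) for m in re.finditer(r"(.)\1*", seq.upper()))

def wallace_tm(seq: str) -> int:
    """Crude Tm estimate in °C: 2 per A/T plus 4 per G/C (Wallace rule)."""
    seq = seq.upper()
    at = seq.count("A") + seq.count("T")
    gc = seq.count("G") + seq.count("C")
    return 2 * at + 4 * gc

def check_primer_pair(fwd: str, rev: str) -> list:
    """Return a list of rule violations; an empty list means all checks passed."""
    issues = []
    for name, primer in (("forward", fwd), ("reverse", rev)):
        if not 18 <= len(primer) <= 22:
            issues.append(f"{name}: length {len(primer)} outside 18-22 nt")
        if not 35.0 <= gc_percent(primer) <= 65.0:
            issues.append(f"{name}: GC content {gc_percent(primer):.1f}% outside 35-65%")
        if longest_homopolymer(primer) > 4:
            issues.append(f"{name}: homopolymer run longer than 4 bases")
    if abs(wallace_tm(fwd) - wallace_tm(rev)) > 2:
        issues.append("pair: estimated Tm values differ by more than 2 °C")
    return issues

# Arbitrary example sequences, not primers for any real target.
fwd = "AGCTGACCTGAAGTTCATCTG"
rev = "TCATGACCTTGAGCTAGTCAG"
print(check_primer_pair(fwd, rev) or "all numeric checks passed")
```

This screen does not cover hairpins, dimers, or genome-wide specificity, which still require dedicated tools.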

Probe Design (for hydrolysis probe assays):

- Keep Tm of probe 4-8°C higher than primers

- Maintain probe length between 20-25 base pairs

- Avoid overlapping probe and primer binding sites

- Avoid guanine at the 5' end of the probe due to quenching effects [4]

Specificity Enhancements:

- For RT-qPCR, design primers over an exon-exon junction to specifically quantify mRNA rather than genomic DNA

- Check that primer and probe binding sites do not contain common single nucleotide polymorphisms (SNPs)

The selection between qualitative, quantitative (qPCR), and digital PCR (dPCR) methodologies represents a critical decision point in the design of both clinical diagnostic assays and research experiments. This choice must be guided by a clear understanding of the clinical or research objective, with qualitative approaches serving detection needs and quantitative methods addressing measurement requirements. While qPCR remains the established workhorse for relative quantification across a wide dynamic range, dPCR offers compelling advantages for applications requiring absolute quantification, exceptional sensitivity for low-abundance targets, and precise detection in complex matrices. The continuing evolution of PCR technologies promises further refinement of these capabilities, enabling researchers and clinicians to address increasingly sophisticated questions in molecular diagnostics and personalized medicine. As the field advances, the strategic selection of the appropriate PCR methodology based on well-defined objectives will remain fundamental to generating reliable, clinically actionable data.

The precision of polymerase chain reaction (PCR) assays is fundamentally dependent on the initial and critical step of target gene selection. In the context of target identification for PCR assays research, the chosen genetic target dictates the entire assay's performance, guiding its applicability in clinical diagnostics, drug development, and fundamental research. This process requires a meticulous balance between three core pillars: analytical sensitivity (the ability to detect minimal target amounts), analytical specificity (the ability to distinguish the target from non-target sequences), and target conservation (the reliable presence of the sequence across all relevant strains or isolates). This technical guide details the strategic principles and experimental methodologies for identifying and validating genetic targets that fulfill these stringent criteria, thereby ensuring the development of robust, reliable, and clinically actionable PCR assays.

Core Principles of Target Gene Selection

Sensitivity: Achieving Ultra-Low Limit of Detection

Sensitivity in molecular diagnostics refers to the lowest concentration of a target nucleic acid that an assay can reliably detect. Achieving a low limit of detection (LoD) is paramount for diagnosing early-stage infections or identifying pathogens that persist at very low levels in clinical samples, such as in bloodstream infections where pathogen loads can be as low as 1–2 colony-forming units (CFU) per milliliter [5].

Traditional quantitative PCR (qPCR) often struggles with such ultra-low levels due to limited sensitivity, typically in the range of 0.1 × 10⁴ – 10⁵ copies/mL [5]. To overcome this, advanced methodologies focus on signal amplification rather than, or in addition to, target amplification. A prime example is the development of the TCC method (Target-amplification-free Collateral-cleavage-enhancing CRISPR-CasΦ method). This one-pot assay leverages a dual stem-loop DNA amplifier that works in concert with the CRISPR-CasΦ system. Upon target recognition, a cyclic cleavage and activation cascade is triggered, dramatically amplifying the fluorescent detection signal without a pre-amplification step. This approach has achieved a record-low detection limit of 0.18 attomolar (aM), enabling the detection of pathogenic bacteria at concentrations as low as 1.2 CFU/mL in human serum within 40 minutes [5].
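Molar detection limits such as the 0.18 aM figure above can be related to copy numbers with Avogadro's constant. The short sketch below performs the conversion; the function name is illustrative.

```python
AVOGADRO = 6.02214076e23  # molecules per mole (exact SI value)

def attomolar_to_copies_per_ul(conc_am: float) -> float:
    """Convert a concentration in attomolar (aM) to molecule copies per microliter."""
    mol_per_l = conc_am * 1e-18          # 1 aM = 1e-18 mol/L
    copies_per_l = mol_per_l * AVOGADRO
    return copies_per_l / 1e6            # 1 L = 1e6 µL

print(f"{attomolar_to_copies_per_ul(0.18):.2f} copies/µL")  # prints 0.11 copies/µL
```

At this concentration a single microliter contains, on average, far less than one target molecule, so the volume of sample actually analyzed limits what can be detected.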

Similarly, for tuberculosis diagnosis, the ActCRISPR-TB assay enhances sensitivity through a multi-guide RNA strategy that fine-tunes the balance between cis- and trans-cleavage activity of the Cas12a protein. By selectively using guide RNAs that favor trans-cleavage (signal generation) over cis-cleavage (amplicon degradation), the assay optimizes target accumulation and signal production, achieving a sensitivity of 5 copies/μL [6]. These examples underscore that maximizing sensitivity often requires moving beyond simple PCR to incorporate engineered enzymatic systems and sophisticated reaction dynamics that minimize background noise and maximize signal output.

Specificity: Ensuring Accurate Target Discrimination

Specificity ensures that an assay detects only the intended pathogen or genetic variant and does not cross-react with non-targets, which is critical for accurate diagnosis and treatment. The gold standard for achieving high specificity is the selection of target genes or regions that are unique to the organism of interest.

Pan-genome analysis has emerged as a powerful bioinformatics-driven approach for discovering specific molecular targets. This method involves comparing the entire set of genes from numerous representative genomes of the target species against those of closely related non-target species. A study aiming to find specific targets for Acinetobacter baumannii analyzed 642 genome sequences and screened them against 28 non-A. baumannii strains. The rigorous criteria required candidates to be 100% present in all target strains and completely absent in all non-target strains. This process identified nine highly specific target genes, including outO, ureE, and rplY, which were subsequently validated with 100% specificity in PCR assays [7].

When strict genus- or species-specific genes are elusive, comparative genomics can identify targets with a highly restricted distribution. For instance, in the differentiation of the Enterobacter genus, researchers found that the hpaB gene, while not entirely unique, was absent from the genomes of the most closely related genera, such as Huaxiibacter, Lelliottia, and Silvania. This restricted distribution allowed for the design of a semi-nested PCR assay that accurately identified Enterobacter strains with high specificity [8].

For discriminating between viral variants, such as the Delta and Omicron strains of SARS-CoV-2, allele-specific primers and probes are designed to target unique mutation profiles. These oligonucleotides are engineered to be exquisitely sensitive to single-nucleotide polymorphisms (SNPs), ensuring that they only bind perfectly to and amplify the intended variant, thereby providing a reliable tool for strain identification without the need for whole-genome sequencing [9].

Conservation: Guaranteeing Assay Robustness Across Strains

A robust diagnostic assay must perform consistently across the full spectrum of genetic diversity within a species. A target that is highly variable or absent in even a small fraction of circulating strains can lead to false-negative results and undermine the assay's clinical utility.

The foundation for assessing conservation is a comprehensive analysis of available genomic data. The process for designing the Enterobacter genus-specific primers exemplifies this. The hpaABC gene region was first confirmed to be present in 4,276 Enterobacter RefSeq genomes, with its absence in only two strains (0.047%) attributed to genome incompleteness, confirming it as part of the core genome [8].

Pan-specific primer design is a bioinformatics strategy to create primers that can detect all known genetic variants of a pathogen. This is particularly crucial for viruses with high mutation rates and diversity, such as poliovirus. The workflow involves:

- Collecting a representative set of genome sequences that captures the known diversity of the pathogen.

- Generating a multiple sequence alignment (MSA) using tools like MAFFT.

- Using specialized software (e.g., varVAMP) to identify conserved regions within the MSA that are suitable for primer binding across all genotypes [10].

This approach ensures that the resulting primers are resilient to genetic drift and can detect both known and emerging genotypes, future-proofing the diagnostic assay against viral evolution.

Table 1: Summary of Target Selection Strategies and Performance Metrics

| Pathogen / Application | Selected Target / Method | Selection Strategy | Key Performance Outcome |
|---|---|---|---|
| Acinetobacter baumannii [7] | ureE gene (among 8 others) | Pan-genome analysis (100% presence in target, 100% absence in non-targets) | 100% specificity; qPCR LoD of 10⁻⁷ ng/μL |
| Enterobacter genus [8] | hpaBC gene region | Comparative genomics (restricted distribution in closest relatives) | Accurate genus-level identification via semi-nested PCR |
| SARS-CoV-2 Variants [9] | Allele-specific primers for spike protein mutations | In-silico analysis of variant-specific SNPs | 100% specificity; LoD of 1×10² copies/mL for Omicron/Delta |
| Clinical Pathogens (BSI) [5] | TCC CRISPR-CasΦ with dual stem-loop amplifier | Signal amplification engineering | LoD of 0.11 copies/μL; Detection of 1.2 CFU/mL in serum |
| Mycobacterium tuberculosis [6] | ActCRISPR-TB with multi-gRNA for IS6110 | Engineering asymmetric Cas12a cis/trans cleavage | LoD of 5 copies/μL; 93% sensitivity on respiratory samples |

Experimental Protocols for Target Validation

Protocol 1: Pan-Genome Analysis for Specific Target Discovery

This protocol provides a framework for identifying species-specific targets through computational analysis of genomic datasets.

Materials:

- Hardware: High-performance computing cluster or server.

- Software: Prokka (v1.14.6) for genome re-annotation, Roary (v3.13.0) for pan-genome construction, BLAST+ suite for specificity verification.

- Data: Whole-genome sequences of all available target species strains and a curated set of non-target species strains (from NCBI, ENA).

Method:

- Data Acquisition and Curation: Download and curate a diverse set of genome sequences for the target species (e.g., 642 A. baumannii genomes) and a representative set of non-target genomes (e.g., 28 other species) [7].

- Genome Annotation: Re-annotate all genomes using Prokka to ensure consistent gene calling and annotation across all samples.

- Pan-genome Construction: Input the annotation files into Roary to construct the pan-genome. Use a conservative BLASTP identity cutoff (e.g., 85%) and a requirement for a gene to be present in 99% of isolates to define the "core genome" [7].

- Identification of Candidate Targets: Analyze the Roary output to identify gene clusters that are present in 100% of the target species genomes and 0% of the non-target genomes [7].

- In-silico Specificity Verification: Perform a BLASTN search of the candidate gene sequences against the entire non-redundant nucleotide database (nt) to rule out any unexpected homologies in other organisms.

- Primer Design: Design primers for the validated candidate genes using tools such as Primer3, ensuring they meet standard criteria for PCR (amplicon size 85-125 bp, Tm ~60°C, etc.) [11].
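The candidate-identification step above reduces to a set comparison once a gene presence/absence matrix is available. The sketch below assumes that matrix has already been loaded into a dictionary mapping each gene cluster to the set of genomes that carry it; the loading step, the function name, and the gene and genome labels are all hypothetical and do not reproduce any tool's actual output format.

```python
def find_specific_genes(presence, target_genomes, non_target_genomes):
    """Return gene clusters present in every target genome and in no non-target genome.

    presence: dict mapping gene cluster name -> set of genome IDs carrying it.
    """
    targets = set(target_genomes)
    non_targets = set(non_target_genomes)
    candidates = []
    for gene, genomes in presence.items():
        in_all_targets = targets <= genomes               # 100% of target genomes
        in_no_non_targets = genomes.isdisjoint(non_targets)  # 0% of non-target genomes
        if in_all_targets and in_no_non_targets:
            candidates.append(gene)
    return sorted(candidates)

# Toy data: three target genomes (t1-t3) and two non-target genomes (n1, n2).
presence = {
    "geneA": {"t1", "t2", "t3"},        # specific and fully conserved
    "geneB": {"t1", "t2", "t3", "n1"},  # also found in a non-target
    "geneC": {"t1", "t2"},              # missing from one target genome
    "geneD": {"n1", "n2"},              # non-target only
}
print(find_specific_genes(presence, ["t1", "t2", "t3"], ["n1", "n2"]))
```

Because incomplete assemblies can make a true core gene appear absent, a strict 100% presence rule may discard valid candidates; whether to relax it is a design decision for each project.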

Protocol 2: Analytical Sensitivity and Specificity Testing

Once candidate targets and their associated primers are designed, wet-lab validation is essential.

Materials:

- Strains: A collection of target species strains (e.g., 152 A. baumannii clinical isolates) and a panel of non-target strains for cross-reactivity testing [7].

- Reagents: DNA extraction kit, PCR mix (e.g., 2× PCR Mix), dNTPs, high-fidelity DNA polymerase, primer pairs, qPCR probe if applicable, nuclease-free water.

- Equipment: Thermal cycler, real-time PCR instrument, agarose gel electrophoresis system.

Method:

- DNA Extraction: Extract genomic DNA from all reference and non-target strains using a standardized commercial kit.

- Specificity Testing (Cross-Reactivity):

  - Set up PCR reactions containing each primer pair and DNA from each non-target strain.

  - Amplify and analyze products via agarose gel electrophoresis or qPCR fluorescence. The ideal result is no amplification from any non-target strain [7].

- Limit of Detection (LoD) Determination:

  - Prepare a standard curve using a quantified sample of the target DNA (e.g., from a type strain). Serially dilute the DNA across a range covering high to single-copy concentrations.

  - Run the qPCR assay with these dilutions in replicates (e.g., n=3 or n=5). The LoD is the lowest concentration at which ≥95% of the replicates are positive [9] [6].

  - For absolute quantification, use a standard curve with known copy numbers. The assay's efficiency (E) should be between 90-110%, and the coefficient of determination (R²) should be ≥0.99 [11].
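The efficiency and R² acceptance criteria can be checked directly from a dilution series. The sketch below fits Ct against log10(copy number) by ordinary least squares and derives amplification efficiency from the slope as E = 10^(−1/slope) − 1. The function name and the Ct values are hypothetical illustration data, not measurements from any cited study.

```python
import math

def fit_standard_curve(copies, ct_values):
    """Least-squares fit of Ct vs log10(copies).

    Returns (slope, intercept, r_squared, efficiency), with efficiency as a fraction.
    """
    x = [math.log10(c) for c in copies]
    y = list(ct_values)
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((yi - (slope * xi + intercept)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    r_squared = 1.0 - ss_res / ss_tot
    efficiency = 10 ** (-1.0 / slope) - 1.0  # 1.0 means perfect doubling per cycle
    return slope, intercept, r_squared, efficiency

# Hypothetical 10-fold dilution series (copies per reaction) and mean Ct values.
copies = [1e6, 1e5, 1e4, 1e3, 1e2]
cts = [15.0, 18.3, 21.7, 25.0, 28.4]

slope, intercept, r2, eff = fit_standard_curve(copies, cts)
passes = 0.90 <= eff <= 1.10 and r2 >= 0.99
print(f"slope={slope:.2f}, R^2={r2:.4f}, efficiency={eff * 100:.1f}%, passes={passes}")
```

A slope near −3.32 corresponds to 100% efficiency, since a perfect reaction needs about 3.32 cycles for each 10-fold increase in product.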

Table 2: Key Research Reagent Solutions for Target Selection and Validation

| Item | Function / Application | Examples / Notes |
|---|---|---|
| Bioinformatics Tools | | |
| Roary [7] | Rapid large-scale pan-genome analysis. | Used to identify core genes present in all target strains. |
| Primer3 / Primer3-py [12] [11] | Thermodynamically optimized primer design. | Integral to automated primer design pipelines. |
| varVAMP [10] | Design of pan-specific primers from MSAs. | Crucial for highly variable viral pathogens. |
| MAFFT [12] [10] | Generating multiple sequence alignments. | Creates the input alignments for varVAMP and conservation analysis. |
| Enzymes & Assay Systems | | |
| CasΦ (Cas12j) Protein [5] | CRISPR-based detection; used in TCC assay for signal amplification. | Smaller size (~80 kDa) than Cas12a/Cas13; high sensitivity. |
| High-Fidelity Polymerase [13] | PCR amplification with low error rates for cloning and sequencing. | E.g., Pfu, KOD; possess 3'→5' exonuclease (proofreading) activity. |
| Hot-Start Polymerase [13] | Prevents non-specific amplification prior to PCR cycling. | Reduces primer-dimer formation and improves specificity. |
| Buffer Additives | | |
| DMSO [13] | Reduces secondary structure in high-GC templates. | Typical working concentration: 2-10%. |
| Betaine [13] | Homogenizes DNA melting temperatures for GC-rich templates. | Typical working concentration: 1-2 M. |
| Mg²⁺ Ions [13] | Essential cofactor for DNA polymerase activity. | Concentration requires optimization (typically 1.5-2.5 mM). |

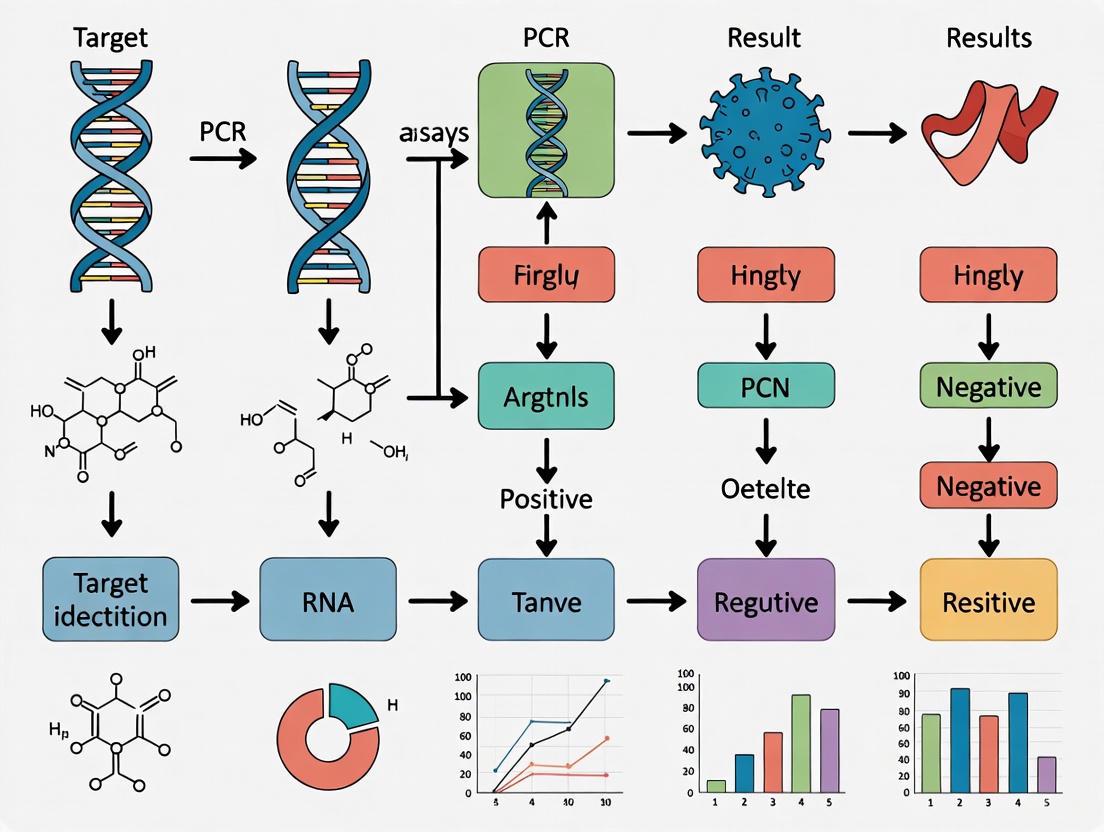

Workflow and Logical Diagrams

The following diagram illustrates the integrated workflow for the selection and validation of a diagnostic target, from initial computational analysis to final clinical application.

Diagram 1: Integrated workflow for target gene selection and assay validation.

The next diagram outlines the reaction mechanism of an advanced CRISPR-based detection system, highlighting the engineering principles that enable ultra-high sensitivity.

Diagram 2: Reaction mechanism of the TCC CRISPR-CasΦ assay.

The accuracy of polymerase chain reaction (PCR) assays is fundamentally dependent on the precise identification of genetic targets and the rational design of oligonucleotide primers. In the context of rapidly evolving pathogens, such as SARS-CoV-2, this process demands a sophisticated approach that leverages large-scale genomic data and computational tools. In-silico analysis, the use of computer simulations to analyze biological data, has become an indispensable methodology for this purpose. By utilizing vast, publicly available genomic repositories like GISAID and GenBank, researchers can ensure that primer sets are specific, sensitive, and resilient to genetic drift. This whitepaper provides an in-depth technical guide for researchers and drug development professionals on employing in-silico analysis and primer design within the broader framework of target identification for PCR assay research. The discussion is framed around practical workflows, experimental protocols, and the essential tools required to develop robust diagnostic and research assays, with illustrations from recent advancements in SARS-CoV-2 variant detection.

The Role of Public Genomic Databases in Target Identification

Public genomic databases serve as the foundational resource for understanding pathogen diversity and identifying conserved regions suitable for assay targeting. Two of the most critical platforms are GISAID and the NCBI sequence database, which includes GenBank.

GISAID: A Platform for Pathogen Genomics

The GISAID Initiative was created to address significant concerns in the scientific community regarding data sharing, specifically the fear of being "scooped" and the failure to properly acknowledge data contributors [14]. Launched in 2008 with the EpiFlu database, GISAID provides an alternative to traditional public-domain archives by upholding submitters' rights and ensuring data is shared rapidly and transparently [14]. Its access model requires registration and agreement to terms of use, which enforces a sharing mechanism that assures reciprocity and protects the integrity of the data for future generations [14].

For SARS-CoV-2, GISAID's EpiCoV database has become an indispensable resource. Data show that an average nation shares approximately 90% of its SARS-CoV-2 genetic sequence data exclusively via GISAID, a trend also observed among the 27 European Union Member States [14]. This comprehensive, timely, and curated data makes GISAID a primary source for identifying circulating variants and designing corresponding detection assays.

The National Center for Biotechnology Information (NCBI) provides a suite of tools and databases, including GenBank, a public-domain archive of nucleotide sequences. Unlike GISAID, access to GenBank is anonymous and does not protect submitter rights in the same way, which was a primary reason for GISAID's creation [14]. Nonetheless, GenBank remains a vast and critical resource.

A key tool available through NCBI is Primer-BLAST. This program integrates primer design with a check for target specificity across sequences in the NCBI database [15]. It allows researchers to design primers that must span exon-exon junctions (to limit amplification to mRNA) and to enforce specificity against a selected organism or a custom database, ensuring that primers do not generate off-target amplicons [15].

Table 1: Comparison of Key Public Databases for Primer Design

| Feature | GISAID | NCBI GenBank |
|---|---|---|
| Primary Focus | Pathogen-specific (e.g., Influenza, SARS-CoV-2) | Comprehensive, all-domain nucleotide sequences |
| Access Model | Registered access with Terms of Use; upholds submitter rights | Open, anonymous access |
| Data Curation | Yes, includes quality control and curation team | Largely automated submission |
| Key Tool Integration | Primary data source for custom pipelines | Integrated with Primer-BLAST for specificity checking |
| Best Use Case | Tracking circulating variants of a specific pathogen; designing variant-specific assays | Broad specificity checking; designing assays for a wider range of targets |

A Workflow for In-silico Primer Design and Validation

The following section outlines a detailed, end-to-end protocol for designing and validating primers using public databases and computational tools.

The diagram below illustrates the logical workflow from data acquisition to final primer selection, integrating both GISAID and NCBI resources.

Detailed Experimental Protocol

Step 1: Data Acquisition and Curation

- Objective: Gather a comprehensive and representative set of genomic sequences for the target pathogen and related non-target organisms.

- Methodology:

- Access GISAID EpiCoV Database: Download full-genome sequences for the pathogen of interest (e.g., SARS-CoV-2). Filter sequences by date, geographic location, and Pango lineage to ensure a dataset representative of current and historical variants [14] [16].

- Access NCBI GenBank: Download sequences for closely related pathogens, human genome sequences, and common commensal microbes to serve as a non-target background for specificity analysis.

- Curate the Dataset: Remove sequences that are incomplete, of low quality, or have an unusually high number of ambiguous bases (N's). This step is crucial for the accuracy of downstream analysis.
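
The curation step can be sketched as a simple filter. This is a minimal illustration, not a prescribed pipeline: the function name and both thresholds (`min_length`, `max_n_fraction`) are assumptions chosen for the example and should be tuned to the pathogen and dataset.

```python
def curate_sequences(records, min_length=29000, max_n_fraction=0.01):
    """Keep sequences that are long enough and contain few ambiguous bases.

    records: dict mapping sequence ID -> nucleotide string.
    min_length and max_n_fraction are illustrative thresholds (assumptions).
    """
    kept = {}
    for seq_id, seq in records.items():
        seq = seq.upper()
        if len(seq) < min_length:
            continue  # treat as an incomplete genome
        n_fraction = seq.count("N") / len(seq)
        if n_fraction > max_n_fraction:
            continue  # too many ambiguous bases (N's)
        kept[seq_id] = seq
    return kept


# Toy example with short sequences and relaxed thresholds
demo = {
    "ok": "ACGT" * 10,
    "short": "ACGT",
    "ambiguous": "ACGTNNNNNN" * 4,
}
curated = curate_sequences(demo, min_length=20, max_n_fraction=0.05)
```

In this toy run only the `ok` record survives, because the other two fail the length and ambiguity checks respectively.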

Step 2: Target Identification and Conserved Region Analysis

- Objective: Identify genomic regions that are conserved within the target pathogen but distinct from non-target sequences.

- Methodology:

- Perform Multiple Sequence Alignment (MSA): Use tools like MAFFT or Clustal Omega to align all downloaded target pathogen sequences. For a large dataset from GISAID, consider using a representative subset to manage computational load.

- Analyze Conservation: From the MSA, identify regions of high conservation (low entropy). These regions are less likely to contain mutations that would cause assay failure.

- Check for Cross-Reactivity: Perform a preliminary BLASTN search of the conserved regions against the non-target sequence dataset (the GenBank sequences gathered in Step 1) to ensure uniqueness to the target pathogen.
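
The conservation analysis can be illustrated with per-column Shannon entropy. The sketch below assumes the alignment is already available as equal-length strings (for example, parsed from MAFFT output); the window size and entropy cutoff are hypothetical values for demonstration.

```python
import math
from collections import Counter


def column_entropy(column):
    """Shannon entropy (bits) of one alignment column, ignoring gaps and N."""
    bases = [b for b in column.upper() if b in "ACGT"]
    if not bases:
        return 2.0  # no informative bases: treat as maximally variable
    total = len(bases)
    counts = Counter(bases).values()
    return -sum((c / total) * math.log2(c / total) for c in counts)


def conserved_windows(aligned_seqs, window=20, max_mean_entropy=0.05):
    """Return 0-based start positions of windows with low mean entropy."""
    length = len(aligned_seqs[0])
    entropies = [
        column_entropy("".join(seq[i] for seq in aligned_seqs))
        for i in range(length)
    ]
    starts = []
    for start in range(length - window + 1):
        mean_entropy = sum(entropies[start:start + window]) / window
        if mean_entropy <= max_mean_entropy:
            starts.append(start)
    return starts
```

Windows returned by `conserved_windows` are candidates only; they still need the cross-reactivity check against non-target sequences described above.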

Step 3: In-silico Primer Design

- Objective: Design candidate primer pairs within the conserved regions identified in Step 2.

- Methodology:

- Use Primer Design Software: Input the chosen conserved genomic region (in FASTA format) into a program like Primer3 or Primer3Plus [16]. These tools automate the selection of primers based on a set of thermodynamic parameters.

- Set Design Parameters: The following table summarizes critical parameters and their recommended values for a robust RT-qPCR assay [15] [16].

Table 2: Key Parameters for In-silico Primer Design

| Parameter | Recommended Value | Rationale |
|---|---|---|
| Primer Length | 18-22 base pairs (bp) | Balances specificity and binding energy. |
| Amplicon Size | 70-200 bp | Ideal for efficient amplification in qPCR. |
| Melting Temperature (Tm) | 58-62°C; < 2°C difference between the forward and reverse primers of a pair | Ensures both primers bind efficiently during the same annealing step. |
| GC Content | 40-60% | Provides stable primer-template binding; avoids high GC regions that can form secondary structures. |
| 3'-End Sequence | Avoid runs of identical nucleotides, especially G/C | Prevents mispriming and improves specificity. |
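
As a rough illustration, the Table 2 criteria can be applied to candidate primers programmatically. In this sketch the Tm is passed in by the caller (for example, taken from Primer3 output) rather than computed, because simple Tm formulas disagree with the nearest-neighbor values these ranges assume. The function names and the 3'-run length of 4 are assumptions for the example.

```python
def gc_content(primer):
    """GC content of a primer as a percentage."""
    primer = primer.upper()
    return 100.0 * (primer.count("G") + primer.count("C")) / len(primer)


def has_3prime_run(primer, run_length=4):
    """True if the last `run_length` bases are all identical (assumed cutoff)."""
    tail = primer.upper()[-run_length:]
    return len(set(tail)) == 1


def check_primer(primer, tm_celsius):
    """Return the Table 2 criteria a primer fails (empty list = passes).

    tm_celsius must come from a thermodynamic model such as the one
    used by the primer design software; it is not estimated here.
    """
    problems = []
    if not 18 <= len(primer) <= 22:
        problems.append("length")
    if not 40.0 <= gc_content(primer) <= 60.0:
        problems.append("gc_content")
    if not 58.0 <= tm_celsius <= 62.0:
        problems.append("tm")
    if has_3prime_run(primer):
        problems.append("3prime_run")
    return problems


def pair_tm_compatible(tm_forward, tm_reverse, max_diff=2.0):
    """True if forward and reverse primer Tm values differ by < max_diff."""
    return abs(tm_forward - tm_reverse) < max_diff
```

A pair would typically be kept only if both primers return an empty list from `check_primer` and `pair_tm_compatible` is true.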

Step 4: Specificity and Sensitivity Check with Primer-BLAST

- Objective: Validate that candidate primer pairs only amplify the intended target.

- Methodology:

- Run NCBI Primer-BLAST: Input the forward and reverse primer sequences into the Primer-BLAST tool [15].

- Configure Specificity Settings:

- Database: Select "RefSeq representative genomes" or a custom database you uploaded from Step 1.

- Organism: Specify the target organism (e.g., "Severe acute respiratory syndrome coronavirus 2") to limit the search and increase speed.

- Exon Junction Span: If targeting mRNA, select "Primer must span an exon-exon junction" to ensure genomic DNA is not amplified [15].

- Analyze Results: The tool will return a list of potential PCR products. A specific primer pair should produce a single, intended amplicon from the target sequence and no significant hits against non-target sequences.

Step 5: Comprehensive In-silico Validation

- Objective: Perform a final, thorough check against all known sequence variations.

- Methodology:

- In-silico PCR against Full Dataset: Use a custom script or tool to perform an in-silico PCR against the entire curated dataset from GISAID. This checks if the primers will bind effectively across all known variants, including Variants of Concern (VOCs).

- Check for Mismatches: Pay special attention to mismatches, particularly at the 3'-end of the primers, as these are most likely to cause amplification failure [17]. Primers with a single 3'-end mismatch in a circulating variant should be rejected.
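
A minimal sketch of the mismatch check follows. It assumes the primer binding site has already been extracted from each variant genome and is given in the same 5'-to-3' orientation as the primer (for a reverse primer, that means the reverse complement of the plus-strand region). The 5-base definition of the "3'-end" window is an assumption, not a value from the cited sources.

```python
def mismatch_positions(primer, site):
    """0-based positions (5'->3' along the primer) where primer and site differ."""
    if len(primer) != len(site):
        raise ValueError("primer and binding site must have equal length")
    return [
        i for i, (p, s) in enumerate(zip(primer.upper(), site.upper()))
        if p != s
    ]


def evaluate_primer(primer, variant_sites, three_prime_window=5):
    """Summarize mismatches per variant and flag any near the 3' end.

    variant_sites: dict mapping variant name -> binding-site sequence.
    three_prime_window: number of 3'-terminal bases treated as critical
    (an illustrative assumption).
    """
    report = {}
    cutoff = len(primer) - three_prime_window
    for name, site in variant_sites.items():
        positions = mismatch_positions(primer, site)
        report[name] = {
            "total_mismatches": len(positions),
            "three_prime_mismatch": any(pos >= cutoff for pos in positions),
        }
    return report
```

Under the rejection rule stated above, a primer would be discarded if any circulating variant reports `three_prime_mismatch` as true.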

Advanced Applications: AI and Multiplexing for Variant Detection

The rapid emergence of SARS-CoV-2 VOCs highlighted the limitations of static primer designs and the need for advanced, adaptive approaches.

AI-Driven Primer Design

A 2023 study demonstrated an innovative pipeline using Evolutionary Algorithms (EAs), a type of Artificial Intelligence (AI), to design primer sets for SARS-CoV-2 and its VOCs (Alpha and Omicron) [16]. The process, which started from sequences in GISAID, was able to deliver primer sets in a matter of hours. The AI was tasked with uncovering specific 21-bp sequences and ranking them by their suitability as primers and their discriminative capacity [16]. The resulting primer set for the main lineage, UtrechtU-ORF3a, showed comparable or superior sensitivity to commercial kits in clinical validation with patient samples [16]. This demonstrates the potential of AI to rapidly respond to evolving pathogen threats.

Designing Multiplex PCR Assays

For pathogens with high mutation rates, a multiplex PCR approach is recommended. This involves designing assays with multiple genetic targets to compensate for the likelihood of mutations in any single target [16] [17]. The U.S. FDA notes that "tests with multiple targets are more likely to continue to perform as described in the test's labeling as new variants emerge" [17]. The workflow for a multiplex assay involves designing several primer sets for different, non-overlapping regions of the genome and validating them simultaneously for specificity and lack of primer-dimer interactions.
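
One simple way to screen a multiplex primer pool for primer-dimer risk is to look for perfect complementarity between the 3' tail of one primer and any stretch of another. The sketch below is a crude heuristic under that assumption; it ignores thermodynamics, and the 5-base tail length is a hypothetical setting. Dedicated tools should be used for final designs.

```python
from itertools import combinations_with_replacement

COMPLEMENT = str.maketrans("ACGT", "TGCA")


def reverse_complement(seq):
    """Reverse complement of a DNA sequence (A, C, G, T only)."""
    return seq.upper().translate(COMPLEMENT)[::-1]


def three_prime_dimer_risk(primer_a, primer_b, tail=5):
    """True if the 3' tail of primer_a can pair perfectly somewhere on primer_b."""
    tail_rc = reverse_complement(primer_a[-tail:])
    return tail_rc in primer_b.upper()


def screen_multiplex(primers, tail=5):
    """Return primer name pairs (including self-pairs) with 3' complementarity.

    primers: dict mapping primer name -> sequence.
    """
    risky = []
    pairs = combinations_with_replacement(primers.items(), 2)
    for (name_a, seq_a), (name_b, seq_b) in pairs:
        if (three_prime_dimer_risk(seq_a, seq_b, tail)
                or three_prime_dimer_risk(seq_b, seq_a, tail)):
            risky.append((name_a, name_b))
    return risky
```

Pairs flagged by `screen_multiplex` are candidates for redesign or for placement in separate reactions.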

Table 3: Research Reagent Solutions for In-silico Primer Design

| Reagent / Tool | Function | Key Features / Notes |
|---|---|---|
| GISAID EpiCoV | Primary data source for pathogen sequences | Provides curated, timely data with submitter rights protection; essential for variant tracking [14]. |
| NCBI GenBank | Comprehensive sequence database | Used for broad specificity checks and accessing non-target sequences [15]. |
| Primer3/Primer3Plus | Core primer design algorithm | Automates primer selection based on user-defined thermodynamic parameters [15] [16]. |
| NCBI Primer-BLAST | Integrated primer design & specificity check | Critical for verifying that primers only amplify the intended target and not other sequences in the database [15]. |
| MAFFT/Clustal Omega | Multiple Sequence Alignment | Identifies conserved genomic regions across a set of pathogen sequences for robust target selection. |
| AI/Evolutionary Algorithms | Advanced primer discovery | Rapidly generates and ranks potential primers from large datasets (e.g., GISAID) for specific targets or variants [16]. |

In-silico analysis, powered by the vast genomic data in public repositories like GISAID and GenBank, has revolutionized the process of PCR primer design. A rigorous workflow encompassing data curation, target identification, rational primer design, and comprehensive in-silico validation is fundamental to developing assays that are both specific and resilient. The integration of advanced techniques, such as AI-driven design and multiplexing, further enhances our ability to respond with agility to evolving pathogens, as starkly demonstrated during the COVID-19 pandemic. By adhering to these detailed methodologies and leveraging the essential tools outlined in this guide, researchers and drug development professionals can significantly improve the accuracy and reliability of their PCR-based assays, thereby strengthening both diagnostic capabilities and fundamental research.

The Role of Mutation Profiling in Emerging Pathogens and Variant Identification

Mutation profiling has become an indispensable tool in the landscape of infectious disease research and public health. The rapid identification of genetic variations in emerging pathogens directly influences the development of precise molecular diagnostics, particularly PCR assays. As pathogens evolve, their mutation profiles serve as critical targets for detection, enabling researchers to design assays that can differentiate between established and novel variants. This technical guide explores the advanced methodologies and computational frameworks that underpin mutation profiling, with a specific focus on their application to PCR assay target selection and validation. The integration of high-throughput sequencing, sophisticated bioinformatics, and innovative molecular techniques creates a powerful paradigm for preempting diagnostic failures and maintaining assay efficacy in the face of pathogen evolution.

Core Technologies in Mutation Detection and Analysis

The technological landscape for mutation profiling is diverse, encompassing methods ranging from targeted detection to whole-genome analysis. Each platform offers distinct advantages in sensitivity, throughput, and applicability to diagnostic development.

Real-Time PCR and Advanced Melting Techniques

High-Resolution Melting (HRM) Analysis provides a rapid, closed-tube method for discriminating sequence variants based on the melting temperature (Tm) profiles of amplified DNA targets. In malaria diagnostics, HRM targeting the 18S SSU rRNA gene yielded a Tm difference of 2.73°C between Plasmodium falciparum and Plasmodium vivax, enough to distinguish the two species, and the results were concordant with sequencing [18]. This technique is particularly valuable for screening known mutation hotspots and confirming primer specificity during PCR assay development.

Competitive Allele-Specific TaqMan PCR (castPCR) represents another advanced approach that combines allele-specific TaqMan qPCR with an MGB oligonucleotide blocker to effectively suppress non-specific amplification from wild-type sequences. This technology enables the detection of somatic mutations with sensitivity down to 1 cancer cell in 1,000 normal cells, though its principles are equally applicable to pathogen variant detection [19]. The castPCR workflow takes approximately three hours from sample to result, providing rapid turnaround for assay validation.

Digital PCR and Next-Generation Sequencing Platforms

Droplet Digital PCR (ddPCR) systems offer absolute quantification of target sequences without requiring standard curves, enabling sensitive detection of rare mutations and copy number variations. The QX Continuum ddPCR System exemplifies this technology, providing enhanced sensitivity for analyzing subtle changes in gene expression and identifying rare mutations that may signify emerging variants [20].
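
Absolute quantification in digital PCR rests on a Poisson correction: if a fraction p of partitions is positive, the mean number of target copies per partition is -ln(1 - p). The sketch below applies that relationship. The default droplet volume of 0.85 nL is an assumption for illustration; the value specified for the instrument in use should be substituted.

```python
import math


def ddpcr_copies_per_microliter(positive, total, droplet_volume_nl=0.85):
    """Poisson-corrected target concentration in the reaction (copies/uL).

    positive: number of positive droplets
    total: number of accepted droplets
    droplet_volume_nl: droplet volume in nanoliters (assumed default)
    """
    if not 0 <= positive < total:
        raise ValueError("need 0 <= positive < total droplets")
    fraction_positive = positive / total
    # Mean copies per droplet under Poisson partitioning
    copies_per_droplet = -math.log(1.0 - fraction_positive)
    # Convert nL to uL (1 nL = 1e-3 uL)
    return copies_per_droplet / (droplet_volume_nl * 1e-3)
```

The function rejects the case where every droplet is positive, because the Poisson estimate is undefined at saturation and the sample would need to be diluted.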

Next-Generation Sequencing (NGS) has revolutionized mutation profiling by enabling comprehensive analysis of entire pathogen genomes. Illumina's NovaSeq X and Oxford Nanopore Technologies platforms deliver improvements in speed, accuracy, and read length, facilitating large-scale surveillance projects [21]. The integration of artificial intelligence with NGS data, through tools like Google's DeepVariant, has further enhanced variant calling accuracy, uncovering patterns that traditional methods might miss [21].

Table 1: Comparison of Major Mutation Profiling Technologies

| Technology | Sensitivity | Throughput | Key Applications in Pathogen Surveillance | Implementation Considerations |
|---|---|---|---|---|
| HRM Analysis | Varies by assay; detects low parasite densities (0.02 parasites/μL) [18] | Moderate | Species differentiation, SNP identification | Requires precise primer design and temperature control |
| castPCR | High (detects 1 mutant in 1,000 wild-type) [19] | High | Specific mutation detection, rare variant identification | Specialized reagents and blocker oligos required |
| ddPCR | High (absolute quantification without standards) [20] | Moderate | Rare mutation detection, copy number variation | Specialized equipment needed; minimal training required |
| NGS | High (comprehensive variant detection) [21] | Very High | Unknown variant discovery, transmission tracking | Bioinformatics expertise required; higher cost per sample |

Computational Frameworks for Variant Identification

The volume and complexity of data generated by modern sequencing technologies necessitate sophisticated computational approaches for variant identification and characterization.

Unsupervised Learning for Emerging Variant Detection

The ICA-Var (Independent Component Analysis of Variants) pipeline represents an innovative unsupervised approach that transforms mutation frequencies in wastewater samples into independent sources with co-varying mutation patterns [22]. This method employs a multivariate independent component analysis to identify time-evolving SARS-CoV-2 variants without prior reliance on characterized reference barcodes. In validation studies, ICA-Var demonstrated earlier detection of emerging variants including EG.5, HV.1, and BA.2.86 compared to reference-based tools like Freyja, achieving this through enhanced statistical power derived from analyzing multiple samples simultaneously [22].

The fundamental advantage of unsupervised approaches lies in their ability to detect novel co-varying mutation patterns not previously associated with known variants. This capability is particularly crucial for identifying truly emergent pathogens or recombinant strains that may not match existing reference sequences.

Genome-Informed Assay Development

A genome-informed approach to assay design leverages comprehensive genomic data to identify new targets for specific detection. In developing a multi-targeted real-time PCR assay for the grapevine pathogen Xylophilus ampelinus, researchers identified novel genomic targets that demonstrated high specificity and sensitivity across different grapevine tissues including leaves, roots, and xylem [23]. The XampBA2 assay emerged with superior performance, offering high diagnostic sensitivity and robustness across diverse plant matrices [23].

This methodology exemplifies the direct translation of mutation profiling data into optimized detection assays, ensuring that diagnostic tools evolve in parallel with their target pathogens.

Multi-Omics Integration and Cloud Computing

Multi-omics approaches combine genomics with transcriptomics, proteomics, metabolomics, and epigenomics to provide a comprehensive view of biological systems [21]. This integration helps link genetic mutations with functional consequences, offering insights into variant behavior and pathogenicity that inform more effective assay design.

The computational demands of multi-omics analysis are substantial, necessitating cloud computing platforms like Amazon Web Services (AWS) and Google Cloud Genomics, which provide scalable infrastructure for storing and processing terabyte-scale datasets [21]. These platforms enable global collaboration among researchers and facilitate the real-time data sharing essential for rapid response to emerging threats.

Experimental Protocols for Mutation Profiling

Protocol 1: High-Resolution Melting Analysis for Pathogen Differentiation

Purpose: To differentiate pathogen species and strains based on sequence variations in target genes.

Materials:

- Extracted DNA from clinical or environmental samples

- HRM-compatible real-time PCR instrument (e.g., LightCycler 96 Instrument, Roche)

- HRM master mix with saturating DNA dye

- Primers that bind conserved sequences flanking the regions that vary between species

Methodology:

- Primer Design: Design primers flanking regions with known sequence variations between target pathogens. For malaria detection, the 18S SSU rRNA region has proven effective [18].

- Reaction Setup: Prepare 20 μL reactions containing 1× HRM master mix, 200 nM primers, and approximately 10 ng DNA template.

- PCR Amplification:

  - Initial denaturation: 95°C for 5 minutes

  - 40 cycles of:

    - Denaturation: 94°C for 45 seconds

    - Annealing: 60°C for 45 seconds

    - Extension: 72°C for 70 seconds

  - Final elongation: 72°C for 10 minutes [18]

- HRM Analysis:

  - Denature at 95°C for 1 minute

  - Renature at 40°C for 1 minute

  - Continuous fluorescence monitoring from 65°C to 95°C with 0.2°C increments

- Data Interpretation: Analyze melting curve shapes and temperature shifts compared to reference strains.

Validation: Compare results with sequencing data. In malaria studies, HRM showed complete agreement with sequencing in species identification [18].
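
For the data interpretation step, Tm is commonly taken as the temperature at which the negative derivative of fluorescence with respect to temperature (-dF/dT) peaks. The sketch below estimates it by finite differences. The `simulate_melt_curve` helper generates a synthetic sigmoid purely for demonstration and is not real instrument data; instrument software typically applies normalization and smoothing that are omitted here.

```python
import math


def simulate_melt_curve(temps, tm, width=0.5):
    """Synthetic sigmoid melt curve for demonstration only (not real data)."""
    return [1.0 / (1.0 + math.exp((t - tm) / width)) for t in temps]


def melting_temperature(temps, fluorescence):
    """Estimate Tm as the midpoint of the interval where -dF/dT is largest."""
    best_tm, best_slope = None, float("-inf")
    for i in range(len(temps) - 1):
        slope = -(fluorescence[i + 1] - fluorescence[i]) / (temps[i + 1] - temps[i])
        if slope > best_slope:
            best_slope = slope
            best_tm = (temps[i] + temps[i + 1]) / 2.0
    return best_tm


# 65 C to 95 C in 0.2 C increments, matching the HRM step above
temps = [65.0 + 0.2 * i for i in range(151)]
tm_a = melting_temperature(temps, simulate_melt_curve(temps, 78.3))
tm_b = melting_temperature(temps, simulate_melt_curve(temps, 81.1))
delta_tm = tm_b - tm_a  # Tm shift between the two simulated amplicons
```

Comparing `delta_tm` against reference strains is the numerical counterpart of inspecting temperature shifts between melting curves.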

Protocol 2: Multi-targeted Real-Time PCR Assay Development

Purpose: To develop a sensitive and specific real-time PCR assay for detecting emerging pathogen variants.

Materials:

- Pure cultures of target and non-target reference strains

- DNA extraction kit (e.g., Qiagen DNA Mini Kit)

- Real-time PCR instrument

- Probe-based real-time PCR master mix

- Designed primer-probe sets

Methodology:

- Target Identification: Conduct genome-wide analysis to identify unique target sequences specific to the pathogen. For X. ampelinus, multiple genomic regions were evaluated [23].

- Primer and Probe Design: Design multiple primer-probe sets targeting different genomic regions.

- Specificity Testing:

- Test against a panel of target and non-target strains

- Verify no cross-reactivity with closely related species

- Sensitivity Determination:

- Perform limit of detection studies with serial dilutions of target DNA

- Establish the minimum detectable copy number

- Assay Validation:

- Test assay performance across different sample matrices

- Evaluate reproducibility through inter- and intra-assay variability tests

- Performance Comparison: Compare with reference methods when available

In the X. ampelinus assay development, three new assays (XampBA2, TXmp22.4, and XampBA7) demonstrated high specificity and sensitivity, with XampBA2 showing the best overall performance [23].

Table 2: Key Research Reagent Solutions for Mutation Profiling Studies

| Reagent/Kit | Primary Function | Application Context | Key Features |
|---|---|---|---|
| TaqMan Mutation Detection Assays [19] | Detection of specific mutations | Cancer research, pathogen variant identification | castPCR technology; detects rare mutations (1:1000) |
| Qiagen DNA Mini Kit [18] | Nucleic acid extraction | General purpose DNA purification from diverse samples | Compatible with blood, tissues, microbial cultures |
| HRM Master Mix [18] | High-resolution melting analysis | Species differentiation, SNP identification | Contains saturating DNA dye for precise melting curves |
| NovaSeq X Reagents [21] | Next-generation sequencing | Comprehensive variant discovery | High-throughput, low per-base cost |

Case Studies in Emerging Pathogen Surveillance

SARS-CoV-2 Variant Detection in Wastewater

Wastewater surveillance has emerged as a critical tool for monitoring SARS-CoV-2 variant dynamics. In a comprehensive study analyzing 3,659 wastewater samples collected over two years from urban and rural locations, researchers implemented the ICA-Var pipeline to identify emerging variants through unsupervised clustering of co-varying mutation patterns [22].

The methodology successfully detected Delta variants in late 2021, Omicron variants throughout 2022, and emerging recombinant XBB variants in 2023. Notably, this approach identified most variants earlier than other computational tools and revealed unique co-varying mutation patterns not associated with any known variant [22]. This demonstrates the power of multivariate methods to boost statistical power and support accurate early detection of emerging pathogens, even in the absence of clinical testing data.

Influenza Subclade K Monitoring

The emergence of influenza subclade K (H3N2) demonstrates the challenge of rapid variant detection for seasonal pathogens. This variant, responsible for severe flu seasons in the Southern Hemisphere, contains seven gene changes on a key segment of the virus that alter its shape, making it harder for immune systems and vaccines to recognize [24].

Early analysis showed that while current vaccines still provided protection, particularly for children (75% effectiveness against hospitalization), effectiveness for adults was lower (30-40%) [24]. This case highlights the critical importance of continuous mutation profiling to inform vaccine composition and diagnostic assay updates in near real-time.

Implementation in PCR Assay Target Identification

The ultimate application of mutation profiling data lies in informing the selection of optimal targets for PCR assay development. This process requires careful consideration of several factors to ensure assay longevity and accuracy.

Target Selection Criteria

Conservation-Variability Balance: Ideal targets should contain sufficiently conserved regions for primer binding while encompassing variable regions for specific variant identification. The 18S SSU rRNA gene in Plasmodium species exemplifies this balance, containing both highly conserved regions for universal primer design and variable regions for species differentiation [18].

Functional Significance: Mutations in functionally significant genes (e.g., the spike gene in SARS-CoV-2) are often maintained by selection and persist in viral populations, making them valuable targets for diagnostic assays.

Multi-target Approaches: Employing multiple targets, as demonstrated in the X. ampelinus assay development, provides redundancy and confirmation, mitigating the risk of diagnostic escape due to further mutation [23]. The XampBA2, TXmp22.4, and XampBA7 assays were designed to work independently, with the recommendation to use multiple assays for confirmation of identification.

Assay Validation Frameworks

Analytical Validation:

- Determine limit of detection (LoD) for each target

- Assess specificity against near-neighbor species

- Evaluate precision and reproducibility

- Establish linearity and dynamic range
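
Linearity and dynamic range are usually assessed from a standard curve of Ct against log10 template quantity. The sketch below fits that line by ordinary least squares and derives amplification efficiency from the slope using E = 10^(-1/slope) - 1, where a slope near -3.32 corresponds to roughly 100% efficiency. The function name and return layout are assumptions for the example.

```python
def standard_curve(log10_copies, ct_values):
    """Fit Ct = slope * log10(copies) + intercept by least squares.

    Returns (slope, intercept, r_squared, efficiency), where efficiency
    is expressed as a fraction (1.0 = 100%).
    """
    n = len(log10_copies)
    mean_x = sum(log10_copies) / n
    mean_y = sum(ct_values) / n
    sxx = sum((x - mean_x) ** 2 for x in log10_copies)
    syy = sum((y - mean_y) ** 2 for y in ct_values)
    sxy = sum(
        (x - mean_x) * (y - mean_y)
        for x, y in zip(log10_copies, ct_values)
    )
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r_squared = (sxy ** 2) / (sxx * syy)
    efficiency = 10 ** (-1.0 / slope) - 1.0
    return slope, intercept, r_squared, efficiency
```

The dynamic range is then the span of dilutions over which the fit stays linear, judged against whatever acceptance criteria the validation plan defines.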

Clinical/Biological Validation:

- Test performance across relevant sample matrices

- Assess capability to detect emerging variants

- Verify concordance with reference methods

- Determine diagnostic sensitivity and specificity

In the malaria HRM study, validation included testing 300 samples from individuals with suspected malaria symptoms, with results compared to both microscopic examination and sequencing [18]. This comprehensive approach confirmed the technique's reliability for species identification.
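
Diagnostic sensitivity and specificity follow directly from a 2x2 comparison against the reference method. The sketch below computes both along with Wilson 95% confidence intervals; the counts used in any call are the caller's own data, and no values from the cited study are assumed here.

```python
import math


def wilson_interval(successes, total, z=1.96):
    """Wilson score interval for a proportion (z=1.96 gives about 95%)."""
    p = successes / total
    denom = 1.0 + z ** 2 / total
    center = (p + z ** 2 / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z ** 2 / (4 * total ** 2)) / denom
    return center - half, center + half


def diagnostic_performance(true_pos, false_pos, true_neg, false_neg):
    """Sensitivity and specificity versus a reference method, with Wilson CIs."""
    positives = true_pos + false_neg   # reference-positive samples
    negatives = true_neg + false_pos   # reference-negative samples
    return {
        "sensitivity": true_pos / positives,
        "specificity": true_neg / negatives,
        "sensitivity_ci": wilson_interval(true_pos, positives),
        "specificity_ci": wilson_interval(true_neg, negatives),
    }
```

Reporting the interval alongside the point estimate makes clear how much the sample size limits the precision of each figure.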

Mutation profiling represents a cornerstone capability in the continuous battle against emerging pathogens and their evolving variants. The integration of sophisticated detection technologies, computational frameworks, and systematic validation protocols creates a robust foundation for PCR assay target identification. As pathogens continue to evolve, the dynamic interplay between mutation surveillance and diagnostic development becomes increasingly critical. The methodologies and case studies presented in this technical guide provide researchers with both the theoretical framework and practical tools to develop resilient detection assays capable of adapting to the relentless pace of pathogen evolution. Future advancements in single-cell genomics, spatial transcriptomics, and AI-powered variant prediction promise to further enhance our capacity to preempt diagnostic gaps and maintain effective surveillance in an ever-changing microbial landscape.

Understanding the Impact of Template Quality and Sample Type on Target Accessibility

In the realm of molecular diagnostics and PCR-based research, the accuracy and sensitivity of an assay are fundamentally dependent on the initial quality of the genetic template and the nature of the sample from which it is derived. Within the broader thesis of target identification for PCR assays, understanding target accessibility—the ease with which primers and polymerase can access and amplify a specific genomic sequence—is paramount. Template quality and sample type are not merely preliminary variables; they are active determinants of this accessibility, influencing hybridization efficiency, polymerase processivity, and ultimately, the reliability of experimental results. This guide provides an in-depth technical examination of how these factors impact PCR performance, offering researchers and drug development professionals detailed methodologies and data-driven strategies to optimize their assays.

The Critical Link Between Template Quality, Sample Type, and Target Accessibility

Target accessibility refers to the physicochemical availability of a nucleic acid sequence for primer binding and polymerase elongation during PCR. High-quality template DNA or RNA, characterized by integrity, purity, and accurate concentration, is a prerequisite for efficient amplification. However, the path to obtaining such a template is heavily influenced by the sample type.

Different sample types present unique challenges and introduce distinct profiles of contaminants and inhibitors. For instance, nasopharyngeal swabs, commonly used for respiratory virus detection, may contain mucus and salts that can co-purify with nucleic acids [25]. In contrast, samples from soil or plants are frequently contaminated with humic acids and phenols, which are potent polymerase inhibitors [13]. The presence of these substances directly compromises target accessibility by several mechanisms:

- Binding to Nucleic Acids: Inhibitors like humic acid can bind directly to the DNA template, creating a physical barrier that blocks primer annealing and polymerase progression.

- Enzyme Inactivation: Substances such as heparin or ionic detergents can irreversibly denature or inhibit the DNA polymerase enzyme.

- Cofactor Chelation: Potent chelators like Ethylenediaminetetraacetic acid (EDTA), often a carryover from DNA extraction protocols, can sequester the essential magnesium (Mg²⁺) cofactor, rendering the polymerase inactive [13].

The sample collection and processing method also plays a crucial role. A study validating a multiplex respiratory panel highlighted that retrospectively collected samples, which had been frozen, sometimes required additional pre-processing steps like centrifugation and washing to remove debris and preservation solutions that could interfere with extraction efficiency. Freshly collected specimens, however, could often be processed directly [25]. This underscores that the sample's history is an integral part of its "type" and must be considered when evaluating template quality and planning an assay.

Impact of Sample Type and Common Inhibitors

The table below summarizes common sample types, their associated inhibitors, and the specific mechanisms by which they impact PCR accessibility and efficiency.

Table 1: Common Sample Types, Inhibitors, and Their Impact on PCR

| Sample Type | Common Inhibitors/Contaminants | Mechanism of PCR Inhibition | Effect on Target Accessibility |
|---|---|---|---|
| Blood & Serum | Heparin, Hemoglobin, Immunoglobulin G | Heparin binds to and inhibits polymerase; heme interferes with fluorescence detection [26]. | Blocks enzyme activity; reduces fluorescence signal, obscuring results. |
| Soil & Plants | Humic Acid, Fulvic Acid, Polyphenols | Bind to nucleic acids and polymerase, creating a physical barrier to amplification [13]. | Prevents primer annealing and polymerase binding to the template. |
| Nasopharyngeal Swabs | Mucus, Salts, Proteins | Increases viscosity, can co-purify with nucleic acids, and may inhibit enzyme function [25]. | Creates a physical barrier; non-specific binding competes with primer binding. |
| Microbial Cultures | Polysaccharides, Proteins from cell lysis | Can co-precipitate with nucleic acids during extraction, interfering with downstream reactions. | Can coat the template, reducing primer and polymerase accessibility. |
| Formalin-Fixed Paraffin-Embedded (FFPE) Tissues | Formaldehyde cross-links, Paraffin | Formaldehyde causes nucleic acid cross-linking and fragmentation [13]. | Creates physical blocks in the template, preventing polymerase processivity. |

Experimental Protocols for Assessing and Ensuring Template Quality

Robust experimental validation is critical for confirming template quality and ensuring the reliability of PCR results. The following protocols provide a framework for this essential process.

Protocol for Determining the Limit of Detection (LOD) in Complex Sample Types

The LOD defines the lowest concentration of a target that can be reliably detected in a specific sample matrix and is a direct measure of an assay's sensitivity under realistic conditions.

- Sample Preparation: Spike a known quantity of the target nucleic acid (e.g., from a cultured isolate or synthetic standard) into the sample matrix of interest (e.g., nasopharyngeal swab medium, soil extract). Use a negative sample matrix, confirmed to be target-free, as the diluent [25] [26].

- Serial Dilution: Create a logarithmic serial dilution (e.g., 1:10) of the spiked sample matrix across a concentration range expected to bracket the LOD.

- Nucleic Acid Extraction: Extract nucleic acids from each dilution using the standard protocol intended for the assay. Include a negative extraction control (nuclease-free water processed through extraction).

- PCR Amplification: Amplify each extracted dilution in a defined number of replicates (a minimum of 20 replicates is recommended for statistical rigor) using the optimized PCR conditions [25] [26].

- Data Analysis: Determine the LOD using probit analysis. The LOD is statistically defined as the concentration at which the target is detected with ≥95% probability [25] [26]. Plot the positive rate against the concentration and fit a probit model to identify this point.
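
The data analysis step can be approximated as follows. This is a simplified sketch: it regresses probit-transformed hit rates on log10 concentration by ordinary least squares, whereas a full probit analysis would use maximum likelihood in a statistics package. Clamping hit rates of 0% or 100% by half a replicate is an assumption made only to keep the transform finite.

```python
import math
from statistics import NormalDist


def probit_lod(concentrations, positives, replicates, target_rate=0.95):
    """Estimate the concentration detected with probability `target_rate`.

    concentrations: tested concentrations (same units as the returned LOD)
    positives: number of positive replicates at each concentration
    replicates: number of replicates tested at each concentration
    """
    norm = NormalDist()
    xs, ys = [], []
    for conc, pos, n in zip(concentrations, positives, replicates):
        # Clamp rates away from 0 and 1 so the probit is finite (assumption)
        rate = min(max(pos / n, 0.5 / n), 1.0 - 0.5 / n)
        xs.append(math.log10(conc))
        ys.append(norm.inv_cdf(rate))
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # Solve probit(target_rate) = slope * log10(LOD) + intercept
    log10_lod = (norm.inv_cdf(target_rate) - intercept) / slope
    return 10 ** log10_lod
```

With hypothetical hit counts of 2, 10, 18, and 20 out of 20 replicates at 1, 10, 100, and 1,000 copies per reaction, the estimate falls between the two highest concentrations, as expected for a 95% detection point.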

Protocol for Evaluating Extraction Efficiency and Purity

This protocol assesses the success of the nucleic acid extraction in removing PCR inhibitors and yielding a pure, amplifiable template.

- Extraction with External Control: During the nucleic acid extraction from the test sample, include a known quantity of a non-competitive exogenous control (e.g., a synthetic DNA or RNA sequence not found in the sample) [25]. This controls for extraction efficiency and inhibition.

- Spectrophotometric Analysis: Measure the concentration and purity of the extracted nucleic acids using a spectrophotometer (e.g., Nanodrop). Key metrics include:

- A260/A280 Ratio: A ratio of ~1.8 indicates pure DNA; ~2.0 indicates pure RNA. Significant deviations suggest protein or other contamination.

- A260/A230 Ratio: A ratio in the range of 2.0-2.2 is ideal. Lower values suggest contamination with salts, EDTA, or carbohydrates.

- Amplification of Controls: Perform real-time PCR amplifying both the external control and an endogenous control (e.g., a housekeeping gene from the sample). Compare the cycle threshold (Ct) values of the external control extracted from the sample versus the control extracted from water.

- Interpretation: A significantly delayed Ct in the sample extract indicates the presence of PCR inhibitors that were not removed during extraction, directly signaling reduced target accessibility.
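
The purity and inhibition checks above can be combined into a single screening function. In this sketch the tolerance around the expected A260/A280 ratio (0.2) and the Ct delay treated as significant (`max_delta_ct`, 1.0 cycle) are hypothetical cutoffs; each laboratory should set its own from validation data.

```python
def assess_template(a260_a280, a260_a230, ct_control_in_sample,
                    ct_control_in_water, nucleic_acid="DNA",
                    ratio_tolerance=0.2, max_delta_ct=1.0):
    """Return a list of quality flags for an extract (empty list = no flags).

    ratio_tolerance and max_delta_ct are illustrative assumptions.
    """
    flags = []
    expected_280 = 1.8 if nucleic_acid.upper() == "DNA" else 2.0
    if abs(a260_a280 - expected_280) > ratio_tolerance:
        flags.append("a260_a280")   # possible protein or other contamination
    if a260_a230 < 2.0:
        flags.append("a260_a230")   # possible salts, EDTA, or carbohydrates
    # Delay of the exogenous control in the sample relative to water
    delta_ct = ct_control_in_sample - ct_control_in_water
    if delta_ct > max_delta_ct:
        flags.append("inhibition")  # inhibitors likely carried over
    return flags
```

An extract that returns the `inhibition` flag is a natural candidate for the dilution or re-extraction strategies discussed later in this guide.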

Workflow Diagram for Template Quality Assessment

The following diagram visualizes the logical workflow for a comprehensive template quality assessment, integrating the protocols described above.

Mitigation Strategies and Optimization Techniques

When template quality is suboptimal or target accessibility is low, several proven strategies can be employed to rescue the assay.

Table 2: Strategies to Overcome PCR Inhibition and Improve Target Accessibility

| Strategy | Description | Use Case Example |
|---|---|---|
| Template Dilution | Diluting the extracted nucleic acid reduces the concentration of inhibitors to a level that no longer affects the polymerase, while often retaining sufficient target for detection [13]. | First-line approach for suspected inhibition; effective against carryover salts and weak inhibitors. |
| Use of Buffer Additives | DMSO (2-10%): Disrupts DNA secondary structures, improving polymerase processivity on GC-rich templates. Betaine (1-2 M): Homogenizes the melting temperature of DNA, aiding in the amplification of templates with varying GC content and long amplicons [13]. | DMSO for GC-rich targets (>65%); Betaine for long-range PCR or complex templates. |
| Alternative Polymerase Selection | High-Fidelity Polymerases (e.g., Pfu, KOD): Possess 3'→5' exonuclease (proofreading) activity for higher accuracy. Hot-Start Taq: Requires heat activation, preventing non-specific primer extension and primer-dimer formation during reaction setup, which is crucial for complex samples [13]. | High-Fidelity for cloning/sequencing; Hot-Start for all sample types to enhance specificity. |
| Modified Extraction Protocols | Incorporating additional wash steps, using specialized inhibitor removal kits, or implementing pre-processing steps like centrifugation to remove debris [25]. | Essential for challenging sample types like soil, plants, or complex clinical matrices like swabs. |
| Mg²⁺ Concentration Optimization | Titrating the concentration of Mg²⁺, a critical polymerase cofactor, between 1.5-4.0 mM. Too little reduces activity; too much promotes non-specific binding and reduces fidelity [13]. | Fine-tuning is required for any new assay or when changing sample types to maximize yield and specificity. |
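One way to judge whether template dilution has relieved inhibition is to compare the observed Ct shift with the shift expected from dilution alone. The sketch below uses the standard qPCR relationship ΔCt = log(D) / log(1 + E) for a dilution factor D and amplification efficiency E (about 3.32 cycles for a 10-fold dilution at 100% efficiency). The function names and the 1-cycle tolerance are assumptions made for illustration.

```python
import math


def expected_ct_shift(dilution_factor, efficiency=1.0):
    """Ct increase expected from dilution alone (efficiency 1.0 = 100%)."""
    return math.log(dilution_factor) / math.log(1.0 + efficiency)


def dilution_relieves_inhibition(ct_neat, ct_diluted, dilution_factor,
                                 efficiency=1.0, tolerance=1.0):
    """True if the diluted template shifts far less than dilution alone predicts.

    A much smaller shift than expected suggests the neat template was inhibited.
    `tolerance` (in cycles) is an assumed margin, not a published criterion.
    """
    observed = ct_diluted - ct_neat
    return observed < expected_ct_shift(dilution_factor, efficiency) - tolerance


# Hypothetical Ct values for a neat extract and its 1:10 dilution.
print(round(expected_ct_shift(10), 2))                 # 3.32
print(dilution_relieves_inhibition(32.0, 32.5, 10))    # inhibited neat template
print(dilution_relieves_inhibition(25.0, 28.3, 10))    # behaves as expected
```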

The Scientist's Toolkit: Essential Reagents for Quality PCR

The following table details key reagents and their critical functions in ensuring high-fidelity PCR amplification, particularly when working with challenging templates.

Table 3: Key Research Reagent Solutions for Optimized PCR

| Reagent / Material | Function / Rationale |
|---|---|
| High-Fidelity DNA Polymerase (e.g., Pfu) | Contains proofreading (3'→5' exonuclease) activity, drastically reducing error rates during amplification, which is critical for sequencing and cloning applications [13]. |
| Hot-Start Taq Polymerase | Remains inactive at room temperature, preventing non-specific amplification and primer-dimer formation during reaction setup. This is vital for maximizing specificity and yield in complex, multi-template reactions [13]. |
| DMSO (Dimethyl Sulfoxide) | A buffer additive that helps denature DNA secondary structures by lowering the template's melting temperature (Tm). This is particularly useful for amplifying GC-rich regions (>65% GC) that are prone to forming stable secondary structures [13]. |
| Betaine | A chemical additive that equalizes the contribution of GC and AT base pairs to DNA stability. This homogenizes the Tm across the template, improving the amplification efficiency of long targets and regions with high secondary structure [13]. |
| Inhibitor-Resistant Reaction Buffers | Specially formulated buffers that can tolerate common inhibitors found in complex samples (e.g., humic acid, heparin, hematin), improving first-pass amplification success rates. |
| Magnesium Chloride (MgCl₂) | The source of Mg²⁺ ions, an essential cofactor for DNA polymerase activity. Its concentration must be optimized for each assay, as it directly influences primer annealing, enzyme fidelity, and overall reaction efficiency [13]. |
| Nucleic Acid Extraction Kits (Inhibitor Removal) | Kits designed for specific sample types (soil, blood, FFPE) that include silica-membrane technology and dedicated wash buffers to remove PCR inhibitors and yield pure nucleic acids. |

Advanced Strategies and Real-World Deployments in PCR Assay Development

In the evolving landscape of molecular diagnostics and pathogen detection, researchers face a persistent challenge: the need to detect an expanding panel of targets with limited instrumentation resources. Target identification for PCR assays therefore demands approaches that maximize information output while keeping platform complexity to a minimum. Multiplex polymerase chain reaction (PCR), defined as the simultaneous amplification of multiple nucleic acid targets in a single reaction vessel, represents a cornerstone methodology for efficient genetic analysis [27]. While conventional multiplexing strategies often rely on expensive multi-channel instruments capable of detecting multiple fluorescent dyes, significant technological advances now enable sophisticated multiplexing on standard single-channel real-time PCR instruments [28] [29].

This technical guide explores two revolutionary approaches that expand detection capabilities without requiring hardware modifications: dynamic melting curve analysis and Multiple Detection Temperature (MuDT) methodology. These techniques represent paradigm shifts in data acquisition and analysis, allowing researchers to extract multidimensional information from a single fluorescent channel [28] [29]. By leveraging the fundamental principles of DNA thermodynamics and probe kinetics, these methods transform limitations into opportunities for innovation within drug development and diagnostic research environments where comprehensive pathogen profiling is essential for therapeutic decision-making.

The imperative for such methodologies is particularly strong in clinical diagnostics, where syndromes like respiratory infections, gastrointestinal illnesses, and bloodstream infections can involve dozens of potential pathogens with overlapping clinical presentations [27] [30]. Syndromic testing approaches, which target comprehensive groupings of pathogens associated with specific clinical syndromes, benefit tremendously from expanded multiplexing capabilities without corresponding increases in instrumentation complexity or cost [30]. This technical guide provides detailed methodologies and experimental protocols to master these advanced multiplexing techniques, ultimately enhancing target identification capabilities within PCR assay research.

Fundamental Principles of Single-Channel Multiplexing

Thermal Discrimination of Amplicons

The foundation of single-channel multiplexing rests on the thermodynamic principle that each unique DNA amplicon exhibits characteristic melting behavior based on its GC content, length, and sequence composition. Conventional post-amplification melting curve analysis has long exploited these differences for product identification, but single-channel multiplexing extends this principle through dynamic analysis during each PCR cycle [28]. When using intercalating dyes like SYBR Green or EvaGreen, fluorescence intensity directly correlates with double-stranded DNA quantity, with sharp decreases occurring at each amplicon's specific melting temperature (Tm) [28].

The critical innovation lies in performing melting curve analysis during the transition from elongation to denaturation phases in each thermal cycle, rather than solely after amplification completion. This continuous fluorescence monitoring (CFM) approach captures hundreds of data points during temperature ramping, generating melting profiles for amplification products in real time [28]. Research demonstrates that a temperature ramping rate of 0.8 K·s⁻¹ provides optimal resolution for distinguishing amplicons with Tm differences as small as 2°C, significantly expanding multiplexing capacity on single-channel instruments [28].

Detection Temperature-Based Discrimination

An alternative approach, termed Multiple Detection Temperature (MuDT), eliminates the need for melting curve analysis entirely by leveraging differential fluorescence intensities at strategically chosen detection temperatures [29]. This method employs hybridization-based chemistry (such as Tagging Oligonucleotide Cleavage and Extension - TOCE) where fluorescence generation requires probe-target duplex formation [29].

The fundamental principle recognizes that at temperatures significantly above a probe's Tm, no fluorescence signal is generated, while at temperatures below Tm, robust fluorescence occurs. By selecting intermediate detection temperatures where high-Tm targets generate fluorescence but low-Tm targets do not, researchers can discriminate multiple targets through strategic temperature control during data acquisition [29]. The MuDT system further enables quantification through ΔRFU (change in Relative Fluorescence Units) analysis between detection temperatures, assigning threshold cycle (Ct) values to individual targets despite shared fluorescence channels [29].
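The ΔRFU idea can be illustrated with a simplified two-target example. The sketch assumes that the high-Tm target contributes the same fluorescence at both detection temperatures, so subtracting the higher-temperature reading from the lower-temperature reading isolates the low-Tm target; a real MuDT implementation may apply additional scaling or correction that is not described here. The function names, RFU values, and threshold are hypothetical.

```python
def split_mudt_signals(rfu_low_temp, rfu_high_temp):
    """Separate two targets sharing one channel.

    rfu_high_temp: per-cycle RFU at the intermediate temperature (high-Tm target only).
    rfu_low_temp:  per-cycle RFU at the lower temperature (both targets).
    Assumes the high-Tm target signal is equal at both temperatures.
    """
    high_tm_signal = list(rfu_high_temp)
    low_tm_signal = [lo - hi for lo, hi in zip(rfu_low_temp, rfu_high_temp)]
    return high_tm_signal, low_tm_signal


def threshold_cycle(signal, threshold):
    """Fractional cycle at which `signal` first crosses `threshold` (cycle 1 = signal[0])."""
    if signal[0] >= threshold:
        return 1.0
    for i in range(1, len(signal)):
        prev, cur = signal[i - 1], signal[i]
        if prev < threshold <= cur:
            # Linear interpolation between cycle i and cycle i + 1.
            return i + (threshold - prev) / (cur - prev)
    return None  # never crossed: target not detected


# Hypothetical baseline-subtracted RFU values for six consecutive cycles.
rfu_72 = [0, 0, 0, 100, 200, 400]
rfu_60 = [0, 0, 50, 250, 500, 800]

high_signal, low_signal = split_mudt_signals(rfu_60, rfu_72)
ct_high = threshold_cycle(high_signal, threshold=100)
ct_low = threshold_cycle(low_signal, threshold=100)
print(ct_high, ct_low)
```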

Figure 1: Fundamental workflows for single-channel multiplexing approaches showing (A) dynamic melting curve analysis and (B) Multiple Detection Temperature (MuDT) methodology.

Experimental Approaches and Methodologies

Dynamic Melting Curve Analysis Protocol

The dynamic melting curve analysis method enables real-time specificity determination and multiplexing by performing melting analysis during each PCR cycle [28]. The following protocol provides a detailed methodology for implementation:

Sample Preparation and Reaction Setup:

- Prepare PCR master mix containing intercalating dye (e.g., SYBR Green I or EvaGreen), DNA polymerase, dNTPs, buffer components, and primers for all targets.

- Include MgCl₂ at a final concentration of 3-5 mM to enhance the fluorescence signal.

- Design primers to generate amplicons with Tm differences of at least 2°C, targeting lengths between 50-150 bp for optimal amplification efficiency [28].

- Load the reactions onto a single-channel real-time PCR instrument.
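Before ordering primers, the amplicon design criteria above can be checked programmatically. The sketch below assumes that predicted amplicon Tm values and lengths are already available from a design tool; the function name and the example panel are hypothetical.

```python
from itertools import combinations


def check_amplicon_panel(amplicons, min_tm_gap=2.0, length_range=(50, 150)):
    """Return a list of design problems for a single-channel melting-curve panel.

    amplicons: dict mapping target name -> (predicted Tm in °C, length in bp).
    Defaults follow the text: Tm gaps of at least 2 °C and lengths of 50-150 bp.
    """
    problems = []
    low, high = length_range
    for name, (_, length) in amplicons.items():
        if not low <= length <= high:
            problems.append(f"{name}: length {length} bp outside {low}-{high} bp")
    for (n1, (tm1, _)), (n2, (tm2, _)) in combinations(amplicons.items(), 2):
        gap = abs(tm1 - tm2)
        if gap < min_tm_gap:
            problems.append(f"{n1}/{n2}: Tm gap {gap:.1f} °C below {min_tm_gap} °C")
    return problems


# Hypothetical three-target panel with predicted Tm (°C) and amplicon length (bp).
panel = {"target_A": (78.0, 90), "target_B": (81.5, 120), "target_C": (82.5, 160)}
issues = check_amplicon_panel(panel)
for issue in issues:
    print(issue)
```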

Thermal Cycling with Continuous Fluorescence Monitoring:

- Initial denaturation: 95°C for 2 minutes

- 40-45 cycles of:

  - Denaturation: 95°C for 15 seconds

  - Annealing: Primer-specific temperature (typically 55-65°C) for 20 seconds

  - Extension: 72°C for 30 seconds

  - Data acquisition: Continuous fluorescence monitoring during slow ramping (0.8 K·s⁻¹) from extension to denaturation temperature [28]

Data Processing and Analysis:

- Convert the instrument-reported temperature to the actual sample temperature using the system-specific time constant (typically ≈1.4 s) and the differential equation dTS/dt = (T − TS)/τ, where TS is the sample temperature, T is the heater temperature, and τ is the system time constant [28].

- Split fluorescence F(t) and temperature TS(t) data into individual PCR cycles.

- Eliminate time parameter to generate fluorescence F as a function of temperature TS for each cycle.

- Calculate the negative derivative of fluorescence with respect to temperature (−dF/dTS) to identify melting peaks.

- Construct amplification curves for each target by plotting −dF/dTS peak heights versus cycle number.

- Determine Ct values for each target using nonlinear curve fitting to the function Y = a/(1 + e^(−(N − b)/c)), where Y is −dF/dTS peak height, N is cycle number, and a, b, c are fitting parameters [28].
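The core of the data processing steps above can be sketched with standard-library Python. The example integrates the lag equation dTS/dt = (T − TS)/τ with a simple forward Euler step, computes −dF/dTS by central differences, and evaluates the sigmoid used for amplification curves. The sampling interval, the synthetic melting curve (Tm = 85°C), and all function names are assumptions for illustration; the nonlinear fit itself would normally be delegated to a least-squares routine and is not shown.

```python
import math


def sample_temperature(heater_temps, dt, tau=1.4):
    """Integrate dTS/dt = (T - TS)/tau with forward Euler; TS starts at T[0]."""
    ts = [heater_temps[0]]
    for t_heater in heater_temps[:-1]:
        ts.append(ts[-1] + dt * (t_heater - ts[-1]) / tau)
    return ts


def negative_derivative(temps, fluor):
    """Return (temperature, -dF/dTS) pairs from central differences."""
    curve = []
    for i in range(1, len(temps) - 1):
        d_temp = temps[i + 1] - temps[i - 1]
        if d_temp > 0:  # skip points where the sample temperature has not moved
            curve.append((temps[i], -(fluor[i + 1] - fluor[i - 1]) / d_temp))
    return curve


def sigmoid(n, a, b, c):
    """Amplification model Y = a / (1 + e^(-(N - b)/c))."""
    return a / (1.0 + math.exp(-(n - b) / c))


# Synthetic ramp from 72 °C to 95 °C at 0.8 K/s, sampled every 10 ms (assumed).
dt, ramp_rate = 0.01, 0.8
heater = [72.0 + ramp_rate * dt * k for k in range(2876)]
sample = sample_temperature(heater, dt)

# Synthetic single-amplicon melting curve centred on 85 °C (assumed shape).
fluorescence = [1.0 / (1.0 + math.exp((t - 85.0) / 0.5)) for t in sample]

melt_curve = negative_derivative(sample, fluorescence)
peak_temp, peak_height = max(melt_curve, key=lambda point: point[1])
lag = heater[-1] - sample[-1]
print(f"Steady-state lag: {lag:.2f} K, melting peak at {peak_temp:.1f} °C")
```

With a constant ramp, the sample lags the heater by approximately the ramp rate multiplied by τ (0.8 K·s⁻¹ × 1.4 s ≈ 1.1 K), which shows why uncorrected temperatures would shift every melting peak by about a degree.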

Table 1: Performance Characteristics of Single-Channel Multiplexing Methods

| Parameter | Dynamic Melting Curve Analysis | MuDT Approach |
|---|---|---|
| Minimum Tm Difference | 2°C [28] | 10°C [29] |
| Maximum Targets Demonstrated | 3 [28] | 2 per channel [29] |
| Data Points per Cycle | Hundreds (continuous monitoring) [28] | 2-3 (discrete temperatures) [29] |
| Ct Determination | Individual for each target [28] | Individual for each target [29] |
| Typical Ramping Rate | 0.8 K·s⁻¹ [28] | Not applicable |
| Detection Temperatures | Not applicable | 60°C, 72°C, 95°C [29] |

MuDT (Multiple Detection Temperatures) Protocol

The MuDT approach enables multiplexing without melting curve analysis by employing strategic detection temperature selection [29]:

Assay Design and Probe Configuration:

- Employ hybridization-based chemistry such as TOCE (Tagging Oligonucleotide Cleavage and Extension), molecular beacons, or FRET probes [29].

- Design probes for each target with minimum Tm differences of 10°C between high and low Tm targets.

- For TOCE chemistry: Design "Pitcher" oligonucleotides that specifically bind targets and release "Extenders" when cleaved. The Extenders serve as primers for "Catcher" templates containing quenched fluorescent molecules [29].

Thermal Cycling with Multiple Detection Steps:

- Initial denaturation: 95°C for 2 minutes

- 40-45 cycles of:

  - Denaturation: 95°C for 15 seconds

  - Annealing and extension: 60°C for 30-60 seconds (with fluorescence acquisition)

  - Additional detection steps: 5 seconds each at strategically chosen temperatures (e.g., 60°C, 72°C, and 95°C) with fluorescence acquisition at each [29]

Data Analysis and Target Quantification:

- For high-Tm target identification: Use fluorescence signals at the intermediate detection temperature (e.g., 72°C) where low-Tm targets generate minimal signal.