PCR Optimization Guide 2025: Proven Strategies for High-Yield, High-Fidelity Results

Abstract

This guide provides new researchers and drug development professionals with a comprehensive framework for mastering Polymerase Chain Reaction (PCR) optimization. It covers foundational principles, from reagent function and primer design to advanced methodological strategies like hot-start and quantitative PCR. The article delivers a systematic troubleshooting guide to resolve common amplification issues and outlines rigorous validation protocols to ensure results are reproducible, specific, and suitable for sensitive downstream applications like cloning and sequencing. By integrating foundational knowledge with practical optimization techniques and validation standards, this resource aims to accelerate experimental success in both research and clinical diagnostics.

PCR Fundamentals: Core Principles and Reaction Components

The Polymerase Chain Reaction (PCR) is a cornerstone technique in molecular biology, enabling the amplification of trace amounts of DNA or RNA into millions of copies for a wide array of applications, from gene cloning and diagnostic testing to forensic analysis and biomedical research [1] [2]. For new researchers, a deep understanding of the core PCR process is the essential first step toward effective experimentation and optimization. This guide provides an in-depth technical examination of the four fundamental steps of a standard PCR—denaturation, annealing, extension, and analysis—framed within the critical context of PCR optimization for robust and reliable results.

The Core Principles of PCR

At its heart, PCR is an enzymatic process that amplifies a specific DNA segment through repeated temperature cycles. Each cycle theoretically doubles the amount of the target DNA fragment, leading to exponential amplification [1]. The process relies on a thermostable DNA polymerase, primers designed to flank the target sequence, and nucleotides to build the new DNA strands [3]. The theoretical yield is $2^N$ copies per starting template molecule, where $N$ is the number of cycles. In practice, reagents are consumed and byproducts accumulate as cycling progresses, eventually leading to a plateau phase where amplification efficiency drops [1]. Understanding and optimizing the reaction components and cycling conditions is key to maximizing yield and specificity before this plateau occurs.
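The doubling rule can be sketched numerically. The snippet below is a minimal illustration, not part of the cited protocols: it generalizes the ideal yield to a per-cycle efficiency `E` between 0 and 1, so that copies scale as (1 + E)^N. The function name and the efficiency parameter are assumptions introduced here, and the model deliberately ignores the plateau phase.

```python
def pcr_copies(initial_copies: float, cycles: int, efficiency: float = 1.0) -> float:
    """Theoretical copy number after `cycles` cycles.

    efficiency=1.0 reproduces perfect doubling (2**N per starting molecule).
    Assumption: efficiency is constant across cycles, so no plateau is modeled.
    """
    if not 0.0 <= efficiency <= 1.0:
        raise ValueError("efficiency must be between 0 and 1")
    return initial_copies * (1.0 + efficiency) ** cycles


ideal = pcr_copies(1, 30)                      # perfect doubling: 2**30
reduced = pcr_copies(1, 30, efficiency=0.9)    # hypothetical 90% efficiency
print(f"ideal: {ideal:.3e} copies; at 90% efficiency: {reduced:.3e} copies")
```

Even a modest drop in per-cycle efficiency compounds over 30 cycles, which is one reason small optimization gains matter.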

Detailed Breakdown of the Four PCR Steps

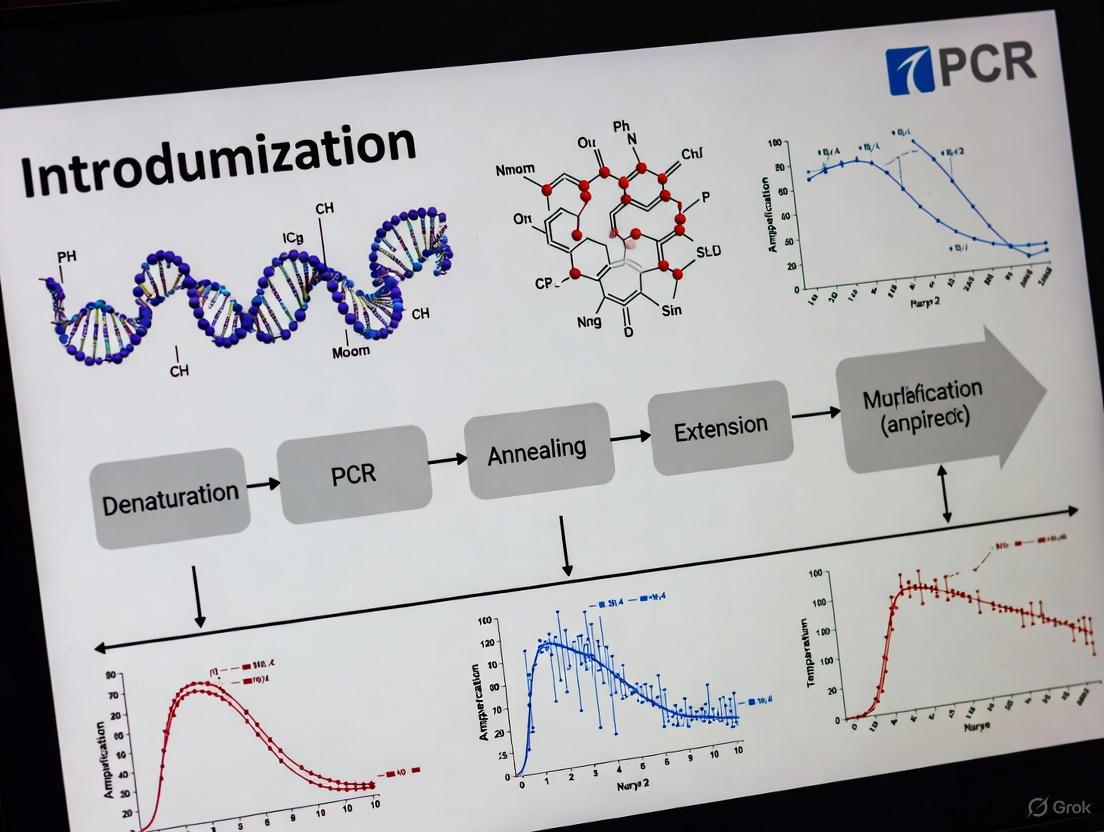

The diagram above illustrates the three temperature-dependent steps of a PCR cycle (denaturation, annealing, and extension), which are repeated 25–40 times to exponentially amplify the target DNA sequence. Analysis is performed after cycling is complete to evaluate the results.

Step 1: Denaturation

The denaturation step is crucial for initiating the reaction by separating the double-stranded DNA template into single strands, providing the necessary template for primer binding.

Process and Purpose: During denaturation, the reaction mixture is heated to a high temperature (typically 94–98°C), which breaks the hydrogen bonds between complementary base pairs of the double-stranded DNA molecule [4] [2]. This results in single-stranded DNA molecules that are accessible to primers in the subsequent annealing step. The initial denaturation at the beginning of the PCR program is often longer (1–3 minutes) to ensure complete separation of complex DNA, such as genomic DNA, and to activate hot-start DNA polymerases [4]. Subsequent denaturation steps in each cycle are shorter, often 10–60 seconds [5].

Optimization Considerations:

- Template Complexity: Mammalian genomic DNA or DNA with high GC content (>65%) may require longer denaturation times or higher temperatures for complete separation [4].

- Enzyme Stability: While Taq polymerase can withstand brief denaturation temperatures, prolonged incubation above 95°C can lead to enzyme denaturation. Highly thermostable enzymes from Archaea are more suitable for challenging templates requiring harsh denaturation conditions [4].

- Additives: Reagents like glycerol, DMSO, or formamide can help denature DNA with strong secondary structures, potentially reducing the need for extreme temperatures or extended times [4].

Step 2: Annealing

In the annealing step, the reaction temperature is lowered to allow primers to bind specifically to their complementary sequences on the single-stranded DNA template.

Process and Purpose: The temperature is rapidly lowered to a value typically between 45°C and 72°C and held for 30 seconds to 2 minutes [4] [5]. This enables the forward and reverse primers to hybridize (anneal) to the target DNA flanking the region to be amplified. The annealing temperature is a critical parameter determined by the primer's melting temperature (Tm), which is the temperature at which 50% of the primer-template duplexes have dissociated [4].

Optimization Considerations:

- Tm Calculation: The simplest formula for estimating Tm is Tm = 4(G + C) + 2(A + T), where G, C, A, and T represent the number of each nucleotide in the primer [4] [6]. A more accurate method is the Nearest Neighbor method, which accounts for salt concentrations [4].

- Annealing Temperature: A common starting point is to set the annealing temperature 3–5°C below the calculated Tm of the primers [4]. If nonspecific amplification occurs, increase the temperature in 2–3°C increments. If yield is low, decrease the temperature similarly [4] [5].

- Primer Design: Primers should be 15–30 nucleotides long with a GC content of 40–60% [5]. The 3' end should be rich in G or C bases to enhance binding stability and reduce mismatches [1] [5]. The Tm for both primers should be similar (within 5°C), and their sequences should be checked to avoid primer-dimer formation [5].

- Universal Annealing: Some advanced reaction buffers are formulated to allow for a universal annealing temperature (e.g., 60°C), simplifying protocol setup and reducing optimization time [4].
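The simple Tm formula and the "3–5°C below Tm" starting rule from the list above can be sketched as follows. This is a rough illustration only: the primer sequences are made up, the function names are introduced here, and basing the starting temperature on the lower of the two primer Tm values is an assumption rather than something the cited sources specify. Nearest-neighbor calculations remain the more accurate option.

```python
def simple_tm(primer: str) -> int:
    """Tm = 4(G + C) + 2(A + T), the simplest estimate quoted above."""
    seq = primer.upper()
    if not seq or set(seq) - set("ACGT"):
        raise ValueError("primer must be a non-empty string of A, C, G, T")
    gc = seq.count("G") + seq.count("C")
    at = seq.count("A") + seq.count("T")
    return 4 * gc + 2 * at


def starting_annealing_temp(forward: str, reverse: str, offset: int = 5) -> int:
    """Starting annealing temperature, `offset` °C below the lower primer Tm.

    Assumption: the lower of the two Tm values is used as the reference.
    """
    return min(simple_tm(forward), simple_tm(reverse)) - offset


fwd = "ATGCATGCATGCATGCATGC"   # hypothetical 20-mer
rev = "ATGCATGCATGCATGCAT"     # hypothetical 18-mer
print(simple_tm(fwd), simple_tm(rev), starting_annealing_temp(fwd, rev))
```

From this starting value, the temperature would then be raised or lowered in 2–3°C steps depending on whether nonspecific products or low yield is observed.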

Step 3: Extension

During extension, the DNA polymerase synthesizes a new DNA strand complementary to the template, starting from the bound primers.

Process and Purpose: The temperature is raised to the optimal range for the DNA polymerase, typically 68–72°C [4] [6]. The enzyme adds nucleotides (dNTPs) to the 3' end of the primer, synthesizing a new DNA strand in the 5' to 3' direction. The extension time depends on the length of the amplicon and the speed of the polymerase. A common guideline is 1 minute per 1000 base pairs for Taq polymerase, though faster enzymes are available [4] [5].

Optimization Considerations:

- Polymerase Selection: Different DNA polymerases have varying extension rates, fidelity (accuracy), and processivity (number of nucleotides added per binding event). For example, Pfu polymerase has proofreading activity (high fidelity) but a slower extension rate than Taq [5].

- Two-Step PCR: If the annealing temperature is within 3°C of the extension temperature, annealing and extension can be combined into a single step, giving a two-step PCR (denaturation, then combined annealing/extension) and a shorter total run time [4].

- Final Extension: A final extension step of 5–15 minutes after the last cycle is often added to ensure all amplicons are fully synthesized and to facilitate proper adenylation (A-tailing) for TA cloning [4].
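The extension-time guideline and the two-step criterion above reduce to simple arithmetic. The sketch below assumes the 1 minute per kb figure quoted for Taq as the default rate and a 72°C extension temperature; both function names are hypothetical, and faster enzymes would need a smaller `sec_per_kb`.

```python
import math


def extension_seconds(amplicon_bp: int, sec_per_kb: float = 60.0) -> int:
    """Extension time in whole seconds for a given amplicon length.

    Default of 60 s/kb follows the Taq guideline quoted above.
    """
    if amplicon_bp <= 0:
        raise ValueError("amplicon length must be positive")
    return math.ceil(amplicon_bp / 1000 * sec_per_kb)


def can_use_two_step(annealing_c: float, extension_c: float = 72.0,
                     window_c: float = 3.0) -> bool:
    """True when annealing is within `window_c` °C of the extension temperature."""
    return abs(extension_c - annealing_c) <= window_c


print(extension_seconds(1500))        # 1.5 kb amplicon at 60 s/kb
print(can_use_two_step(70.0))         # annealing 2 °C below a 72 °C extension
print(can_use_two_step(60.0))         # 12 °C gap: keep three-step cycling
```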

Step 4: Analysis

After thermal cycling, the amplified PCR products must be analyzed to confirm the success of the reaction, including the presence, size, and specificity of the amplicon.

Process and Purpose: The most common method for analyzing conventional PCR products is agarose gel electrophoresis [3] [2]. The DNA fragments are separated by size in an electric field and visualized using fluorescent dyes like ethidium bromide or safer alternatives. The resulting band pattern is compared to a DNA ladder of known sizes to verify the amplicon size.

Optimization and Advanced Techniques:

- Troubleshooting: The absence of a band, smearing, or multiple bands on the gel indicates issues with amplification specificity or efficiency, requiring optimization of primers, Mg²⁺ concentration, or cycling conditions [1] [5].

- Quantitative Analysis: In quantitative real-time PCR (qPCR), amplification is monitored in real-time using fluorescent dyes or probes. The quantification cycle (Cq) is determined, which is the cycle number at which the fluorescence crosses a defined threshold, allowing for precise quantification of the initial DNA template [7].

- High-Resolution Melting (HRM): Following qPCR, HRM analysis can be used to distinguish between PCR products based on their melting temperature (Tm), which is sensitive to sequence, length, and GC content. This is useful for species identification, genotyping, and mutation detection without the need for gel electrophoresis [8].

Critical PCR Components and Optimization

A successful PCR requires careful optimization of its core components. The following table summarizes the key reagents, their functions, and critical optimization parameters.

Table 1: Key Research Reagent Solutions for PCR Optimization

| Reagent | Function | Standard Concentration/Range | Optimization Tips |

|---|---|---|---|

| DNA Polymerase | Enzyme that synthesizes new DNA strands [2]. | Varies by enzyme; e.g., 1.25-2.5 U/50µL reaction [5]. | Use hot-start versions to reduce nonspecific amplification [5]. Select high-fidelity enzymes for cloning [5]. |

| Primers | Short oligonucleotides that define the start and end of the target sequence [3]. | 0.1-1.0 µM each; often optimal at 0.4-0.5 µM [1] [5]. | Avoid primer-dimer formation; ensure Tm values are similar and 3' ends are G/C-rich [1] [5]. |

| Template DNA | The DNA sequence to be amplified. | 10 ng – 1 µg genomic DNA; 10⁴–10⁶ copies [5] [6]. | Ensure high quality and purity. Re-quantify DNA stored for long periods [1]. |

| dNTPs | Nucleotide building blocks (dATP, dCTP, dGTP, dTTP) for new DNA strands [3]. | 20-200 µM of each dNTP [5]. | Use balanced equimolar concentrations. Degraded dNTPs are a common cause of PCR failure. |

| MgCl₂ | Essential cofactor for DNA polymerase activity [5]. | 1.5-2.5 mM (often included in buffer) [5] [6]. | Critical optimization parameter; titrate in 0.5-1 mM increments between 1-8 mM [6]. |

| Buffer | Provides optimal chemical environment (pH, salts) for the reaction [5]. | Usually supplied as 10X concentrate, used at 1X [5]. | May contain additives like (NH₄)₂SO₄ or KCl to enhance specificity and yield. |

| Additives | Enhance amplification of difficult templates (e.g., high GC%) [5]. | DMSO: 1-10%; BSA: ~400 ng/µL [5]. | Use DMSO or formamide for GC-rich templates; BSA can alleviate inhibition from sample contaminants [5]. |

Experimental Protocol: Standard Endpoint PCR

This detailed protocol is adapted from methodologies used in recent research and commercial kits, providing a robust starting point for new researchers [1] [8] [6].

Table 2: PCR Master Mix Setup for a 50 µL Reaction

| Component | Stock Concentration | Final Concentration | Volume per 50 µL Reaction |

|---|---|---|---|

| PCR Buffer | 10X | 1X | 5 µL |

| dNTP Mix | 10 mM each | 200 µM each | 1 µL |

| Forward Primer | 10 µM | 0.4 µM | 2 µL |

| Reverse Primer | 10 µM | 0.4 µM | 2 µL |

| Template DNA | Variable (e.g., 50 ng/µL) | ~100 ng | 2 µL |

| MgCl₂ | 25 mM | 1.5-2.5 mM* | 3-5 µL |

| DNA Polymerase | 5 U/µL | 1.25-2.5 U | 0.25-0.5 µL |

| Nuclease-Free Water | - | - | To 50 µL |

*Concentration may require optimization; if already present in the buffer, do not add extra.
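The volumes in Table 2 follow from C1·V1 = C2·V2. The sketch below recomputes them and scales the recipe into a master mix. It is an illustration under stated assumptions: the buffer is taken to contain no MgCl₂, the low end of the MgCl₂ and polymerase ranges is used by default, and the 10% overage for pipetting loss is a convention added here that does not come from the table. All function names are hypothetical.

```python
REACTION_UL = 50.0


def stock_volume(stock_conc: float, final_conc: float,
                 reaction_ul: float = REACTION_UL) -> float:
    """Solve C1*V1 = C2*V2 for V1; both concentrations must share a unit."""
    return final_conc * reaction_ul / stock_conc


def reaction_recipe(mg_mM: float = 1.5, polymerase_units: float = 1.25,
                    template_ul: float = 2.0) -> dict:
    """Per-reaction volumes (µL) using the stock concentrations of Table 2."""
    recipe = {
        "PCR buffer (10X)": stock_volume(10, 1),
        "dNTP mix (10 mM each)": stock_volume(10_000, 200),   # both in µM
        "Forward primer (10 µM)": stock_volume(10, 0.4),
        "Reverse primer (10 µM)": stock_volume(10, 0.4),
        "MgCl2 (25 mM)": stock_volume(25, mg_mM),             # assumes Mg-free buffer
        "DNA polymerase (5 U/µL)": polymerase_units / 5,
        "Template DNA": template_ul,
    }
    # Water makes up the remainder of the 50 µL reaction.
    recipe["Nuclease-free water"] = REACTION_UL - sum(recipe.values())
    return recipe


def master_mix(recipe: dict, n_reactions: int, overage: float = 0.10) -> dict:
    """Scale everything except template; `overage` is an assumed safety margin."""
    scale = n_reactions * (1 + overage)
    return {name: vol * scale for name, vol in recipe.items()
            if name != "Template DNA"}


for name, vol in master_mix(reaction_recipe(), n_reactions=8).items():
    print(f"{name:28s} {vol:7.2f} µL")
```

Each tube then receives the master mix volume for one reaction (50 µL minus the template volume) before the template is added.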

Procedure:

- Prepare Master Mix: On ice, in a sterile, nuclease-free tube, combine all components except the template DNA. Preparing a master mix reduces pipetting error and the risk of contamination. For reactions assembled individually, a common order of addition is water → primers → template → enzyme-containing PCR mix [1].

- Aliquot and Add Template: Dispense the master mix into individual PCR tubes or a multi-well plate, then add the template DNA to each reaction.

- Thermal Cycling: Place the tubes in a thermal cycler and run the program with the parameters outlined in the following table.

Table 3: Standard Three-Step PCR Thermal Cycling Conditions

| Step | Temperature | Time | Cycles | Purpose |

|---|---|---|---|---|

| Initial Denaturation | 94-98°C | 2-5 minutes | 1 | Complete denaturation of complex DNA and enzyme activation [4] [6]. |

| Denaturation | 94-98°C | 20-60 seconds | 25-35 | Separate newly synthesized DNA strands for the next cycle. |

| Annealing | 45-72°C* | 20-60 seconds | 25-35 | Primer binding to the specific target sequence. |

| Extension | 68-72°C | 20-60 sec/kb** | 25-35 | Synthesis of new DNA strands by the polymerase. |

| Final Extension | 68-72°C | 5-10 minutes | 1 | Complete synthesis of all PCR fragments and A-tailing for cloning [4]. |

| Hold | 4-10°C | ∞ | 1 | Short-term storage of samples. |

*Temperature is primer-specific and must be optimized. **Time depends on amplicon length and polymerase speed.

- Post-PCR Analysis: Analyze 5-10 µL of the PCR product using agarose gel electrophoresis alongside an appropriate DNA size marker to confirm amplification success.
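The cycling conditions in Table 3 can be written down as a small data structure, which makes it easy to estimate run time before programming the thermal cycler. The specific temperatures and times below are illustrative mid-range picks from the table, not a validated protocol, and the estimate ignores ramping between temperatures.

```python
# Illustrative three-step program; every value is an assumption chosen from
# within the ranges of Table 3 and must be optimized for real primers.
PROGRAM = {
    "initial_denaturation": (95, 120),    # (°C, seconds)
    "cycles": 30,
    "cycle_steps": [
        ("denaturation", 95, 30),
        ("annealing", 55, 30),            # primer-specific
        ("extension", 72, 60),            # ~1 kb amplicon at 60 s/kb
    ],
    "final_extension": (72, 300),
}


def hold_time_seconds(program: dict) -> int:
    """Sum of programmed hold times, excluding ramping and the final hold."""
    per_cycle = sum(seconds for _, _, seconds in program["cycle_steps"])
    return (program["initial_denaturation"][1]
            + program["cycles"] * per_cycle
            + program["final_extension"][1])


total = hold_time_seconds(PROGRAM)
print(f"{total} s (about {total / 60:.0f} min) of programmed hold time")
```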

Mastering the four steps of standard PCR—denaturation, annealing, extension, and analysis—provides the foundation upon which all successful molecular biology experiments are built. For the new researcher, a meticulous approach to optimizing each component, from primer design and reagent concentrations to thermal cycling parameters, is not merely a procedural requirement but a critical investment in research efficacy. As PCR technologies continue to evolve with innovations like digital PCR, faster enzymes, and microfluidic platforms, this foundational knowledge will remain essential for adapting to new methods and driving scientific discovery in diagnostics, drug development, and basic research [9] [10].

The Polymerase Chain Reaction (PCR) is a foundational technique in molecular biology, enabling the exponential amplification of specific DNA sequences from minimal starting material [11]. Its development revolutionized fields from medical diagnostics to biomedical research [12]. The power of PCR hinges on the precise interplay of core reaction components, each fulfilling a critical biochemical role. For researchers embarking on PCR optimization, a deep understanding of these components—template DNA, primers, deoxynucleoside triphosphates (dNTPs), and the reaction buffer—is not merely beneficial but essential for experimental success. This guide provides an in-depth technical examination of these core elements, framing their functions within the context of robust, reproducible PCR setup and optimization for drug development and scientific research.

Core Component 1: Template DNA

The template DNA is the target sequence that will be amplified. It can originate from various sources, including genomic DNA (gDNA), complementary DNA (cDNA), or plasmid DNA [13]. The composition and complexity of the DNA directly influence the optimal input amount for efficient amplification.

Quantity and Quality Considerations

The optimal amount of template DNA varies significantly based on its type and complexity. For instance, 0.1–1 ng of pure plasmid DNA is often sufficient, whereas 5–50 ng may be required for more complex genomic DNA in a standard 50 µL reaction [13]. Using too much DNA can increase the risk of nonspecific amplification, while too little can reduce the final product yield. The sensitivity of the DNA polymerase used also influences the required template input; enzymes engineered for higher sensitivity require less starting material [13]. In some protocols, especially those involving gDNA, the template input may be defined by copy number, which can be calculated using Avogadro's constant and the molar mass of the DNA [13]. In theory, PCR can amplify a target from a single DNA copy, but in practice, efficiency is highly dependent on reaction components and parameters [13].
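The copy-number calculation mentioned above can be sketched as follows. The average mass of 650 g/mol per base pair is a common approximation (660 is also used) and is an assumption here, as are the example plasmid size and the approximate haploid human genome size.

```python
AVOGADRO = 6.02214076e23          # molecules per mole
AVG_BP_MASS_G_PER_MOL = 650.0     # assumed average mass of one dsDNA base pair


def dna_copies(mass_ng: float, length_bp: float) -> float:
    """Approximate number of double-stranded DNA molecules in `mass_ng`."""
    if mass_ng < 0 or length_bp <= 0:
        raise ValueError("mass must be non-negative and length positive")
    grams = mass_ng * 1e-9
    return grams * AVOGADRO / (length_bp * AVG_BP_MASS_G_PER_MOL)


# 1 ng of a hypothetical 3 kb plasmid
print(f"{dna_copies(1, 3_000):.2e} copies")
# 10 ng of human gDNA, assuming ~3.1e9 bp per haploid genome
print(f"{dna_copies(10, 3.1e9):.0f} haploid genome copies")
```

The contrast between the two results illustrates why nanogram amounts of genomic DNA carry far fewer target copies than the same mass of plasmid.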

Technical Guidelines for Use

Purification: For best results, template DNA should be of high purity. While unpurified PCR products can sometimes be re-amplified, carryover salts, dNTPs, and primers from the previous reaction can inhibit amplification [13]. Diluting the initial reaction in water or, preferably, purifying the amplicons beforehand is recommended.

Handling: The DNA template should be quantified accurately, typically using spectrophotometric or fluorometric methods. It is crucial to avoid introducing nucleases or contaminants that could degrade the template or inhibit the DNA polymerase.

Core Component 2: Primers

PCR primers are short, synthetic single-stranded DNA oligonucleotides, typically 15–30 bases in length, that are designed to be complementary to sequences flanking the target region [13] [14]. They are the key ingredient that defines the specific DNA sequence to be amplified, serving as the starting point for DNA synthesis by the polymerase [14].

Design Principles for Specificity and Efficiency

Careful primer design is paramount for successful PCR amplification. The following principles should be adhered to:

- Length and Melting Temperature (Tm): Primers should be 15-30 nucleotides long, with a calculated Tm between 55–70°C. The Tms of the forward and reverse primers should be within 5°C of each other to ensure both bind with similar efficiency during the annealing step [13] [15].

- GC Content: The GC content should ideally be between 40–60%, with a uniform distribution of G and C bases to prevent mispriming [13] [16].

- 3' End Specificity: The 3' end of the primer is critical for initiation. It should end in a G or C base (a "GC clamp") to promote stable binding due to stronger hydrogen bonding, but should not contain more than three consecutive G or C bases, as this can promote nonspecific priming [13] [15].

- Secondary Structures: Primers must be checked for self-complementarity (which can form hairpin loops) and complementarity to each other (which can form primer-dimers), particularly at their 3' ends [13] [15].

Table 1: Primer Design Guidelines Summary

| Parameter | Recommended Value | Rationale |

|---|---|---|

| Length | 15–30 nucleotides | Balances specificity and binding efficiency. |

| Melting Temperature (Tm) | 55–70°C (within 5°C for a pair) | Ensures simultaneous primer annealing. |

| GC Content | 40–60% | Provides optimal stability; too high increases nonspecific binding. |

| 3' End | One G or C; avoid >3 consecutive G/C | Promotes specific anchoring and extension; reduces mispriming. |

| Secondary Structures | Avoid self-complementarity and primer-dimer formation | Prevents amplification failure and spurious products. |
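Several of the guidelines in the table above are simple enough to check programmatically. The sketch below covers length, GC content, and the 3' end rules only. It does not screen for hairpins or primer-dimers, for which dedicated primer-design software is the usual route. The function name is hypothetical, and reading the "no more than three consecutive G/C" rule as applying to the terminal run at the 3' end is an interpretation.

```python
def check_primer(primer: str) -> list:
    """Return a list of guideline violations; an empty list means none found."""
    seq = primer.upper()
    if not seq or set(seq) - set("ACGT"):
        raise ValueError("primer must be a non-empty string of A, C, G, T")

    issues = []
    if not 15 <= len(seq) <= 30:
        issues.append("length outside 15-30 nt")

    gc_percent = 100 * (seq.count("G") + seq.count("C")) / len(seq)
    if not 40 <= gc_percent <= 60:
        issues.append(f"GC content {gc_percent:.0f}% outside 40-60%")

    if seq[-1] not in "GC":
        issues.append("3' end is not G or C")

    # Count the uninterrupted run of G/C bases ending at the 3' terminus.
    run = 0
    for base in reversed(seq):
        if base not in "GC":
            break
        run += 1
    if run > 3:
        issues.append("more than three consecutive G/C at the 3' end")
    return issues


print(check_primer("ATGCATGCATGCATGCATGC"))   # hypothetical primer
print(check_primer("ATATATATATGGGGCCCC"))     # long G/C run at the 3' end
```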

Optimization in Reaction Setup

In a standard PCR, primers are typically used at a final concentration of 0.1–1 µM [13]. Higher concentrations can lead to mispriming and nonspecific amplification, while lower concentrations may result in little or no amplification of the desired target. For challenging applications like long PCR or when using degenerate primers, concentrations of 0.3–1 µM are often favorable [13].

Core Component 3: Deoxynucleoside Triphosphates (dNTPs)

Deoxynucleoside triphosphates (dNTPs) are the essential building blocks from which DNA polymerase synthesizes a new DNA strand. The four dNTPs—dATP, dCTP, dGTP, and dTTP—provide the adenine, cytosine, guanine, and thymine nucleotides required for DNA replication [16] [17].

Biochemical Function and Reaction Energetics

DNA polymerase catalyzes the formation of a phosphodiester bond between the 3'-hydroxyl group of the last nucleotide in the growing DNA chain and the 5'-phosphate group of the incoming dNTP [17]. The hydrolysis of the dNTP's triphosphate group into pyrophosphate releases energy, which drives the polymerization reaction forward [17]. The four dNTPs are typically added to the PCR in equimolar amounts to ensure balanced and accurate base incorporation [13] [16].

Concentration and Purity in Optimization

The recommended final concentration for each dNTP in a standard PCR is typically 200 µM [15]. However, concentrations can be adjusted within a 20–200 µM range depending on the application [18]. Lower dNTP concentrations (e.g., 20–40 µM) can increase specificity and, when used with non-proofreading polymerases, improve fidelity [13] [18]. Conversely, higher concentrations may be needed for long PCR fragments but can be inhibitory if they exceed optimal levels [13] [18]. The purity of dNTPs is critical; degraded or impure dNTPs can introduce mutations and reduce amplification efficiency [16].

Table 2: dNTP Concentration Guidelines

| Application | Typical Final Concentration (per dNTP) | Notes |

|---|---|---|

| Standard PCR | 150–200 µM | Standard starting point for most applications. |

| High-Fidelity PCR | 20–50 µM | Reduces misincorporation by non-proofreading enzymes. |

| Long-range PCR | Up to 200 µM | Ensures sufficient building blocks for long fragments. |

| General Range | 20–200 µM | Adjustments may be needed based on other components like Mg²⁺. |

Specialized dNTPs for Advanced Applications

Modified dNTPs expand the utility of PCR in research and diagnostics. For example, dTTP can be partially or fully replaced with deoxyuridine triphosphate (dUTP), so that every amplicon contains uracil. Treating later reactions with uracil-DNA glycosylase (UDG) before cycling then degrades any carryover amplicons from previous reactions, preventing false positives [13]. Other modified dNTPs (e.g., fluorescently labeled or biotinylated) are used for labeling amplicons for downstream applications like sequencing or detection, though the DNA polymerase must be compatible with these modifications [13] [17].

Core Component 4: Reaction Buffer

The PCR buffer provides a stable chemical environment that optimizes the activity and stability of the DNA polymerase. It typically contains a pH buffer, salts, and magnesium ions [18].

Key Constituents and Their Roles

- Magnesium Ions (Mg²⁺): This is a critical cofactor for all DNA polymerases. Mg²⁺ facilitates the binding of the polymerase to the primer-template complex and catalyzes the incorporation of dNTPs during phosphodiester bond formation [13] [18]. The concentration of Mg²⁺ (usually supplied as MgCl₂) is one of the most important variables to optimize, typically ranging from 0.5–5.0 mM [18] [15]. Excessive Mg²⁺ reduces specificity and can promote non-specific amplification, while insufficient Mg²⁺ renders the polymerase inactive [18].

- pH Buffer: Tris-HCl is commonly used to maintain a stable pH, usually around 8.3, which is optimal for Taq polymerase activity [18].

- Potassium Salt (KCl): Potassium chloride, typically at 35–100 mM, helps promote primer annealing by stabilizing the primer-template duplex [18] [15].
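Because Mg²⁺ is one of the most important variables to optimize, a titration series is a common first experiment. The sketch below lists the MgCl₂ stock volume needed for each point across the 0.5–5.0 mM range given above. The 0.5 mM step, the 25 mM stock, the 50 µL reaction volume, and a magnesium-free buffer are all assumptions made for illustration.

```python
def mg_titration(start_mM: float = 0.5, stop_mM: float = 5.0,
                 step_mM: float = 0.5, stock_mM: float = 25.0,
                 reaction_ul: float = 50.0) -> list:
    """Return (final mM, µL of stock) pairs for an MgCl2 titration.

    Assumes the reaction buffer itself contributes no Mg2+.
    """
    n_points = int(round((stop_mM - start_mM) / step_mM)) + 1
    series = []
    for i in range(n_points):
        final_mM = start_mM + i * step_mM          # avoids cumulative float drift
        series.append((final_mM, final_mM * reaction_ul / stock_mM))
    return series


for final_mM, stock_ul in mg_titration():
    print(f"{final_mM:4.1f} mM  ->  {stock_ul:5.2f} µL of 25 mM MgCl2")
```

The water volume in each reaction would be reduced by the same amount so that the total stays at 50 µL.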

Additives and Enhancers

Various additives can be incorporated into the buffer to overcome amplification challenges:

- Dimethyl Sulfoxide (DMSO): Added at 1–10% (though often <5%), DMSO disrupts base pairing, which helps reduce secondary structures in GC-rich templates and effectively lowers the Tm [18] [15]. High concentrations can inhibit Taq polymerase.

- Betaine: Used at 0.5–2.5 M, betaine can enhance the amplification of GC-rich templates and reduce the dependence of DNA Tm on base composition [18] [15].

- Bovine Serum Albumin (BSA): BSA (up to 0.8 mg/mL) can bind to inhibitors present in the DNA sample, such as polyphenols or salts, thereby neutralizing their effects [18].

Integrated PCR Workflow and Component Interaction

The PCR process is a cyclic sequence of temperature changes designed to exploit the functions of each core component.

[Diagram not reproduced: PCR workflow showing where each key component acts.]

The Scientist's Toolkit: Essential Research Reagents

Successful PCR optimization requires not only the core components but also a suite of reliable reagents and tools. The following table details essential materials for setting up and analyzing PCR experiments.

Table 3: Essential Research Reagents for PCR

| Reagent / Material | Function / Application | Key Considerations |

|---|---|---|

| Thermostable DNA Polymerase | Enzyme that synthesizes new DNA strands. | Choice depends on application (e.g., standard, high-fidelity, long-range). Taq is common; Pfu offers proofreading [11] [12]. |

| dNTP Mix | Pre-mixed equimolar solution of dATP, dCTP, dGTP, dTTP. | Sourced as purified, high-quality solutions to ensure fidelity and efficiency. Verify concentration and avoid freeze-thaw cycles [19] [17]. |

| Oligonucleotide Primers | Custom-designed sequences defining the amplification target. | Must be designed with specificity in mind. Require proper resuspension and storage to prevent degradation [14] [15]. |

| Nuclease-Free Water | Diluent for the reaction mixture. | Essential for preventing degradation of reaction components by environmental nucleases. |

| Thermal Cycler | Instrument that automates temperature cycling. | Must be calibrated for accurate and reproducible temperature control across all wells. |

| Agarose & Electrophoresis Equipment | For post-PCR analysis to separate and visualize amplicons by size. | Requires DNA ladder (size standard) for product verification [12]. |

| PCR Additives (e.g., DMSO, BSA) | Enhancers to overcome challenges like GC-richness or sample inhibitors. | Concentration must be optimized, as high levels can be inhibitory [18] [15]. |

Mastering the core components of PCR—template DNA, primers, dNTPs, and buffer—is a fundamental requirement for any researcher seeking to harness the full power of this technique. The journey from a failed reaction to a specific, high-yield amplification often lies in the systematic optimization of these elements. By applying the detailed principles and guidelines outlined in this whitepaper, from precise primer design and dNTP balancing to strategic buffer formulation, scientists can transform PCR from a black-box procedure into a reliably controlled and powerfully adaptable tool. This deep understanding is the bedrock upon which robust, reproducible, and innovative research in drug development and molecular biology is built.

The Polymerase Chain Reaction (PCR) is a foundational technique in molecular biology, enabling the specific amplification of target DNA sequences from minimal starting material [2]. At the heart of every PCR reaction lies the DNA polymerase, an enzyme responsible for synthesizing new DNA strands by adding nucleotides to a growing DNA chain during the extension phase of thermal cycling [20]. Since the introduction of Taq DNA polymerase from Thermus aquaticus in the 1980s, significant advancements have been made in developing specialized DNA polymerases with enhanced properties tailored for specific applications [20]. These developments have transformed PCR from a relatively simple amplification method to a sophisticated tool capable of meeting the rigorous demands of modern research, clinical diagnostics, and therapeutic development.

The selection of an appropriate DNA polymerase represents one of the most critical factors in PCR optimization, directly impacting amplification success, yield, specificity, and sequence accuracy [20]. Researchers now face a choice among conventional polymerases like Taq, high-fidelity enzymes with proofreading capabilities, and specialized hot-start formulations designed to prevent nonspecific amplification. Each polymerase category offers distinct advantages and limitations that must be carefully considered in the context of experimental goals, template characteristics, and downstream applications. This technical guide provides an in-depth comparison of these polymerase classes, equipping researchers with the knowledge needed to make informed decisions for their specific PCR applications within the broader context of PCR optimization.

Key Characteristics of DNA Polymerases

Thermostability

Thermostability refers to a DNA polymerase's ability to withstand the high temperatures required for DNA denaturation during PCR cycling without significant loss of activity. Taq DNA polymerase, derived from the thermophilic bacterium Thermus aquaticus, has a half-life of approximately 40 minutes at 95°C, making it suitable for standard PCR applications [20] [13]. However, polymerases from hyperthermophilic archaea such as Pyrococcus furiosus (Pfu polymerase) exhibit dramatically enhanced thermostability—approximately 20 times more stable than Taq at 95°C [20]. This property is particularly valuable for protocols requiring prolonged high-temperature incubations or for amplifying templates with extensive secondary structure that may require longer denaturation times.
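The half-life figure gives a rough way to estimate how much polymerase activity survives a run. The sketch below assumes simple first-order decay during time spent at 95°C, which is a modeling assumption rather than a claim from the cited sources. Treating "20 times more stable" as a 20-fold longer half-life is likewise an interpretation.

```python
def remaining_activity(minutes_at_95c: float, half_life_min: float = 40.0) -> float:
    """Fraction of activity left after cumulative time at 95 °C.

    Assumes first-order decay; the 40 min default is the Taq half-life
    quoted above.
    """
    if minutes_at_95c < 0 or half_life_min <= 0:
        raise ValueError("time must be non-negative and half-life positive")
    return 0.5 ** (minutes_at_95c / half_life_min)


# Hypothetical run: 2 min initial denaturation + 30 cycles x 30 s = 17 min at 95 °C
taq = remaining_activity(17)
pfu_like = remaining_activity(17, half_life_min=40 * 20)   # assumed 20x half-life
print(f"Taq: {taq:.0%} remaining; 20x more stable enzyme: {pfu_like:.0%} remaining")
```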

Processivity

Processivity defines the number of nucleotides a DNA polymerase can incorporate per single binding event with the template DNA [20]. Highly processive enzymes remain bound to the DNA template for extended periods, incorporating more nucleotides before dissociating. Taq polymerase has moderate processivity and an extension rate of approximately 60 bases per second at 70°C [13]. Early proofreading polymerases like Pfu historically exhibited lower processivity due to competition between polymerization and exonuclease activities, but modern engineered polymerases have addressed this limitation through the incorporation of DNA-binding domains that enhance template affinity without compromising enzymatic activity [20]. Enhanced processivity is particularly beneficial for amplifying long templates (>5 kb), GC-rich sequences that form stable secondary structures, and when PCR inhibitors are present in the sample [20].

Fidelity and Proofreading Activity

Fidelity refers to the accuracy with which a DNA polymerase replicates the template sequence, minimizing misincorporation of incorrect nucleotides [21]. This characteristic is quantified as the error rate, typically expressed as the number of errors per base per duplication event [22]. Fidelity is primarily determined by two mechanisms: selective nucleotide incorporation at the polymerase active site and 3'→5' exonuclease (proofreading) activity that removes misincorporated nucleotides [20] [21].

The geometric constraints of the polymerase active site promote selective incorporation of correct nucleotides through optimal alignment of catalytic groups. When an incorrect nucleotide is incorporated, the resulting suboptimal architecture causes a synthetic delay, increasing the opportunity for the incorrect nucleotide to dissociate before chain elongation continues [21]. Proofreading enzymes contain a separate exonuclease domain that detects and excises misincorporated nucleotides from the 3' end of the growing DNA strand before further extension occurs [21]. Polymerases with robust proofreading capabilities can achieve error rates up to 280 times lower than Taq polymerase [21].

Specificity and Hot-Start Activation

Specificity refers to a polymerase's ability to amplify only the intended target sequence without producing nonspecific byproducts such as primer-dimers or misprimed amplification artifacts [20]. Conventional DNA polymerases exhibit residual activity at room temperature, allowing them to extend primers that have bound to non-target sequences with partial complementarity during reaction setup [23]. These nonspecific products are then amplified throughout subsequent PCR cycles, reducing target yield and compromising downstream applications.

Hot-start technology addresses this limitation by inhibiting polymerase activity during reaction setup until elevated temperatures are reached in the thermal cycler [23]. This inhibition is achieved through various mechanisms including antibody-mediated blocking, chemical modification, Affibody molecules, or aptamers that bind to the enzyme's active site at lower temperatures [23] [24]. When the reaction mixture reaches the initial denaturation temperature (typically >90°C), the inhibitory molecule is denatured or released, restoring full polymerase activity under conditions where primer binding is highly specific [20]. This approach significantly reduces nonspecific amplification and increases target yield, particularly for complex templates or low-copy-number targets [23].

Table 1: DNA Polymerase Characteristics and Their Impact on PCR Performance

| Characteristic | Definition | Impact on PCR | Representative Enzymes |

|---|---|---|---|

| Thermostability | Ability to withstand high temperatures without denaturation | Determines suitability for high-temperature protocols and template denaturation requirements | Pfu (high), Taq (moderate) |

| Processivity | Number of nucleotides incorporated per binding event | Affects amplification efficiency for long templates, GC-rich regions, and in presence of inhibitors | Engineered polymerases (high), Taq (moderate), Pfu (lower) |

| Fidelity | Accuracy of DNA sequence replication | Critical for cloning, sequencing, and mutagenesis; reduces downstream sequencing burden | Q5 (very high), Pfu/Phusion (high), Taq (standard) |

| Specificity | Ability to amplify only intended targets | Reduces nonspecific products and primer-dimers; improves yield and downstream application success | Hot-start formulations (high), Standard polymerases (variable) |

Comparative Analysis of DNA Polymerase Types

Taq DNA Polymerase

Taq DNA polymerase, isolated from Thermus aquaticus, represents the foundational enzyme that enabled the automation of PCR [2]. This 94 kDa enzyme exhibits robust DNA-synthesizing capability with a typical incorporation rate of 60 nucleotides per second at 70°C [13]. Its moderate thermostability (half-life of approximately 40 minutes at 95°C) makes it suitable for standard PCR applications with denaturation temperatures of 94-95°C [13]. A distinctive feature of Taq polymerase is its terminal transferase activity, which adds a single deoxyadenosine (A) to the 3' ends of PCR products. This property is exploited in TA cloning strategies, facilitating direct ligation of PCR products into vectors with complementary 3'-T overhangs.

The primary limitation of Taq polymerase is its relatively low fidelity, with error rates typically ranging from 1.0-20.0×10⁻⁵ errors per base per duplication [22] [21]. This accuracy limitation stems from the absence of 3'→5' proofreading exonuclease activity, leaving the enzyme unable to correct misincorporated nucleotides. Consequently, Taq polymerase is generally unsuitable for applications requiring high sequence accuracy, such as cloning without subsequent sequencing verification, site-directed mutagenesis, or long-amplicon generation where errors accumulate across the sequence.

Table 2: Taq DNA Polymerase Characteristics and Applications

| Property | Specification | Optimal Reaction Conditions | Primary Applications |

|---|---|---|---|

| Origin | Thermus aquaticus | 1.5-2.0 mM Mg²⁺, pH 8.3-9.0 | Routine PCR, genotyping, allele-specific PCR |

| Error Rate | 1.0-20.0×10⁻⁵ errors/bp/duplication [22] | 200 µM each dNTP | Diagnostic applications not requiring sequence perfection |

| Thermal Stability | Half-life ~40 min at 95°C [13] | Denaturation: 94-95°C | Standard-length amplifications (≤5 kb) |

| Incorporation Rate | ~60 nt/second at 70°C [13] | Extension: 68-72°C | TA cloning strategies |

| Special Features | 5'→3' polymerase activity, A-tailing | Annealing: 5°C below primer Tm | Educational demonstrations, routine amplification |

High-Fidelity DNA Polymerases

High-fidelity DNA polymerases represent a significant advancement over Taq through the incorporation of 3'→5' exonuclease (proofreading) activity that dramatically reduces error rates during DNA synthesis [20]. These enzymes typically fall into two categories: naturally occurring polymerases from hyperthermophilic archaea and engineered enzymes optimized through directed evolution. The proofreading mechanism involves enzymatic removal of misincorporated nucleotides from the 3' end of the growing DNA strand before continuing synthesis, improving accuracy by 10-300-fold compared to Taq polymerase [21].

Naturally occurring proofreading enzymes include Pfu (from Pyrococcus furiosus), Pwo (from Pyrococcus woesii), and KOD (from Thermococcus kodakarensis). These polymerases typically achieve error rates in the range of 1.0×10⁻⁶ to 1.2×10⁻⁵ errors per base per duplication [22] [21]. Engineered high-fidelity enzymes such as Phusion, Q5, and Platinum SuperFi II represent further refinements, combining proofreading activity with enhancements to processivity, thermostability, and amplification efficiency. These engineered enzymes can achieve error rates as low as 5.3×10⁻⁷ errors per base per duplication, approaching 300-fold greater accuracy than Taq polymerase [21].

A notable consideration when working with archaeal proofreading polymerases is their inability to amplify uracil-containing templates due to the presence of a uracil-binding pocket that functions as part of a DNA repair mechanism [20]. This property prevents their use in applications requiring dUTP incorporation for carryover prevention or bisulfite-treated DNA analysis. Additionally, some proofreading enzymes exhibit slower synthesis rates compared to Taq polymerase, potentially requiring longer extension times, particularly for long amplicons.

Table 3: High-Fidelity DNA Polymerase Error Rates and Fidelity

| Enzyme | Error Rate (errors/bp/duplication) | Relative Fidelity (Compared to Taq) | Proofreading Activity |

|---|---|---|---|

| Taq | 1.5×10⁻⁴ [21] | 1X | No |

| KOD | 1.2×10⁻⁵ [21] | 12X | Yes |

| Pfu | 5.1×10⁻⁶ [21] | 30X | Yes |

| Deep Vent | 4.0×10⁻⁶ [21] | 44X | Yes |

| Phusion | 3.9×10⁻⁶ [21] | 39X | Yes |

| Q5 | 5.3×10⁻⁷ [21] | 280X | Yes |
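
To make the table easier to apply, the following minimal Python sketch recomputes relative fidelity from the listed error rates and estimates what fraction of amplicons would be free of errors. The Poisson model (expected errors = error rate × amplicon length × doublings) and the function names are illustrative assumptions, not part of the cited measurements. Ratios recomputed from the rounded rates can differ from the tabulated values (for example, Deep Vent gives about 37.5X here versus 44X in the table), presumably because the published ratios were derived from unrounded data.

```python
import math

# Error rates (errors per base per duplication) copied from Table 3.
ERROR_RATES = {
    "Taq": 1.5e-4,
    "KOD": 1.2e-5,
    "Pfu": 5.1e-6,
    "Deep Vent": 4.0e-6,
    "Phusion": 3.9e-6,
    "Q5": 5.3e-7,
}


def relative_fidelity(enzyme, reference="Taq"):
    """Fold improvement in accuracy relative to the reference enzyme."""
    return ERROR_RATES[reference] / ERROR_RATES[enzyme]


def error_free_fraction(enzyme, amplicon_bp, doublings):
    """Estimated fraction of products with no errors.

    Assumption: errors follow a Poisson distribution with mean
    error_rate * amplicon_bp * doublings (a simplified model).
    """
    expected_errors = ERROR_RATES[enzyme] * amplicon_bp * doublings
    return math.exp(-expected_errors)


if __name__ == "__main__":
    for name in ERROR_RATES:
        print(
            f"{name:10s} {relative_fidelity(name):6.1f}X  "
            f"error-free (1 kb, 20 doublings): "
            f"{error_free_fraction(name, 1000, 20):.3f}"
        )
```

Under these assumptions, a 1 kb amplicon after 20 doublings is mostly error-containing with Taq and mostly error-free with Q5, which is the practical reason fewer clones need to be sequenced when a proofreading enzyme is used.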

Hot-Start DNA Polymerases

Hot-start DNA polymerases employ inhibition strategies to prevent enzymatic activity during reaction setup until elevated temperatures are reached in the thermal cycler [23]. This technology addresses a fundamental limitation of conventional PCR: the extension of misprimed sequences and primer-dimers that occur when reactions are assembled at room temperature [24]. By blocking polymerase activity until the first denaturation step, hot-start methods ensure that DNA synthesis initiates only under conditions of high stringency, dramatically improving amplification specificity and yield [23].

Multiple hot-start technologies have been developed, each with distinct mechanisms and performance characteristics. Antibody-based inhibition utilizes monoclonal antibodies that bind to the polymerase's active site, with dissociation occurring during the initial denaturation step (typically 2-3 minutes at 95°C) [23] [24]. This approach offers rapid activation and restoration of full enzymatic activity but introduces exogenous protein into the reaction. Chemical modification methods employ covalently attached inhibitory groups that block enzyme activity, with gradual reactivation occurring over multiple thermal cycles [23]. While offering stringent inhibition and animal-component-free formulation, chemical activation may require longer initialization times (up to 10-15 minutes) and is less suitable for long amplicons (>3 kb) [24].

Alternative approaches include Affibody molecules (engineered binding proteins) and aptamers (inhibitory oligonucleotides) that block polymerase activity until thermal denaturation [23]. These methods offer shorter activation times and animal-component-free formulations but may provide less stringent inhibition compared to antibody-based methods. Recent innovations include heat-activatable primers containing thermolabile phosphotriester modifications at 3'-terminal positions, which block primer extension until converted to natural phosphodiester linkages at elevated temperatures [25].

Diagram 1: Hot-Start PCR activation mechanism and inhibition methods. Polymerase activity is blocked during reaction setup until heat activation enables specific amplification.

Experimental Protocols for Fidelity Assessment

Direct Sequencing Method

The direct sequencing approach provides the most comprehensive assessment of polymerase fidelity by identifying all types of errors (substitutions, insertions, and deletions) across the entire amplified sequence [22]. This method involves amplifying a target sequence, cloning the products, and sequencing individual clones to identify mutations introduced during amplification.

Protocol:

- Template Preparation: Select a well-characterized template (e.g., plasmid containing a target gene of approximately 1-2 kb). Use 25 pg of plasmid DNA per reaction to ensure sufficient amplification cycles for error detection [22].

- PCR Amplification: Perform amplification using standardized conditions: 30 cycles with denaturation at 95°C for 15-30 seconds, annealing at appropriate temperature for 15-30 seconds, and extension at enzyme-specific optimal temperature (1-2 minutes per kb) [22] [26].

- Product Cloning: Purify PCR products using agarose gel extraction or PCR purification kits. Clone into sequencing vector using restriction digestion/ligation or recombination-based cloning systems such as Gateway technology [22].

- Sequencing and Analysis: Sequence 50-100 individual clones using Sanger sequencing. Align sequences to the reference template and identify mutations. Calculate error rate using the formula: Error rate = total mutations / (total bp sequenced × number of doublings) [22].

The number of template doublings can be calculated from the fold-amplification using the equation: Doublings = log₂(fold-amplification) [22]. This method provides a direct measurement of all error types but requires significant sequencing effort to achieve statistical significance for high-fidelity enzymes, particularly those with error rates below 1×10⁻⁶ [21].
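
The two formulas above can be sketched in a few lines of Python. The function names and the example numbers (80 clones of a 1.5 kb insert, 12 mutations, roughly a million-fold amplification) are hypothetical and serve only to show the arithmetic.

```python
import math


def doublings(fold_amplification):
    """Number of template doublings: log2 of the fold-amplification."""
    if fold_amplification <= 1:
        raise ValueError("fold_amplification must be greater than 1")
    return math.log2(fold_amplification)


def error_rate(total_mutations, total_bp_sequenced, n_doublings):
    """Errors per base per doubling, as defined in the protocol above."""
    return total_mutations / (total_bp_sequenced * n_doublings)


if __name__ == "__main__":
    # Hypothetical experiment: 80 clones x 1500 bp sequenced, 12 mutations found,
    # 2**20-fold amplification of the target.
    d = doublings(2 ** 20)
    rate = error_rate(12, 80 * 1500, d)
    print(f"doublings = {d:.1f}, error rate = {rate:.2e} errors/bp/doubling")
```

With these illustrative inputs the result is 5.0×10⁻⁶ errors per base per doubling. Note how few mutations are observed even across 120 kb of sequence, which is why high-fidelity enzymes demand much larger sequencing efforts.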

LacZ-Based Screening Assay

The LacZ fidelity assay provides a higher-throughput alternative for initial fidelity assessment through phenotypic screening of mutations in a reporter gene [21]. This method amplifies the lacZα gene, which produces the α-peptide of β-galactosidase, and clones the products into an appropriate vector.

Protocol:

- Template Amplification: Amplify the lacZα gene (approximately 500 bp) using the polymerase under test conditions. Standardize cycle number (typically 16-25 cycles) and template input across comparisons [21].

- Ligation and Transformation: Ligate PCR products into lacZ-deficient vector and transform into an appropriate Escherichia coli host strain.

- Phenotypic Screening: Plate transformed bacteria on media containing X-gal (5-bromo-4-chloro-3-indolyl-β-D-galactopyranoside) and IPTG (isopropyl β-D-1-thiogalactopyranoside). Colonies containing error-free inserts produce functional β-galactosidase and develop blue color, while those with mutations in critical regions remain white [21].

- Calculation: Determine the mutation frequency as the ratio of white colonies to total colonies. Sequence white colonies to characterize the specific mutations introduced.

While higher-throughput than direct sequencing, this method only detects mutations within the critical 349-base region of the lacZ gene that affect β-galactosidase function, potentially underestimating total error rates [21]. Additionally, not all sequence alterations necessarily disrupt enzyme function, creating potential false negatives.
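
As a rough illustration, the colony counts from this assay can be converted to a mutation frequency as described in the protocol. The second function, which normalizes by the 349 detectable bases and the number of doublings to approximate a per-base error rate, is a commonly used convention that is assumed here rather than stated in the protocol above; the colony counts are hypothetical.

```python
def mutation_frequency(white_colonies, total_colonies):
    """Fraction of colonies carrying a phenotypically detectable mutation."""
    if total_colonies <= 0:
        raise ValueError("total_colonies must be positive")
    return white_colonies / total_colonies


def per_base_error_rate(mut_freq, n_doublings, detectable_sites=349):
    """Approximate errors per base per doubling.

    Assumption: only the 349 detectable bases of the target contribute,
    and mutations accumulate linearly with the number of doublings.
    """
    return mut_freq / (detectable_sites * n_doublings)


if __name__ == "__main__":
    # Hypothetical plate counts: 30 white colonies out of 10,000 total.
    mf = mutation_frequency(30, 10_000)
    print(f"mutation frequency = {mf:.4f}")
    print(f"approx. error rate = {per_base_error_rate(mf, 16):.2e}")
```

Because silent or tolerated changes are not scored, any value obtained this way should be read as a lower bound on the true error rate.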

Next-Generation Sequencing Approaches

Next-generation sequencing (NGS) platforms enable comprehensive fidelity assessment by sequencing millions of PCR products simultaneously, providing statistically robust error rate measurements even for high-fidelity enzymes [21]. Single-molecule real-time (SMRT) sequencing by PacBio is particularly valuable as it sequences individual PCR molecules without an intermediate amplification step that could introduce additional errors.

Protocol:

- Library Preparation: Amplify target sequence (typically 1-2 kb) using standardized conditions. Purify products and prepare sequencing library without bacterial cloning.

- Sequencing: Perform SMRT sequencing to generate circular consensus sequences with high accuracy (background error rate of approximately 9.6×10⁻⁸ errors/base) [21].

- Bioinformatic Analysis: Align sequences to reference template using appropriate algorithms. Identify substitutions, insertions, and deletions. Filter potential sequencing errors using quality scores and consensus requirements.

- Error Rate Calculation: Calculate error rates using the formula: Error rate = total confirmed errors / (total bases sequenced × number of doublings). Include standard deviations from multiple replicates.

This approach provides the most statistically rigorous fidelity measurements for high-fidelity enzymes but requires specialized instrumentation and bioinformatic expertise [21].
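
The error rate calculation with replicate statistics might be sketched as follows. The replicate counts are invented for illustration, and the comparison against the sequencing background simply uses the approximate figure quoted in the protocol; a real pipeline would apply more careful filtering.

```python
from statistics import mean, stdev

# Approximate background error rate of circular consensus reads (from the text).
SEQUENCING_BACKGROUND = 9.6e-8  # errors per base


def replicate_error_rates(replicates, n_doublings):
    """Per-replicate error rates (errors per base per doubling).

    replicates: list of (confirmed_errors, total_bases_sequenced) tuples.
    """
    return [errors / (bases * n_doublings) for errors, bases in replicates]


def above_background(confirmed_errors, total_bases):
    """True if the raw per-base error frequency exceeds the sequencing background."""
    return confirmed_errors / total_bases > SEQUENCING_BACKGROUND


if __name__ == "__main__":
    # Hypothetical triplicate: (confirmed errors, bases sequenced)
    data = [(100, 1e7), (120, 1e7), (110, 1e7)]
    rates = replicate_error_rates(data, n_doublings=20)
    print(f"mean = {mean(rates):.2e}, SD = {stdev(rates):.2e}")
    print("all above background:", all(above_background(e, b) for e, b in data))
```

Reporting the mean together with the standard deviation makes it clear whether two enzymes with similar nominal error rates can actually be distinguished by the experiment.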

Selection Guidelines for Specific Applications

Application-Based Polymerase Selection

Different experimental applications impose distinct requirements on DNA polymerase characteristics, necessitating careful selection to achieve optimal results. The following guidelines outline polymerase recommendations for common research scenarios:

Cloning and Expression Studies: Applications involving gene cloning, protein expression, or functional analysis require maximum sequence accuracy to ensure encoded proteins maintain proper amino acid sequences and biological activity. High-fidelity polymerases with proofreading capability such as Q5, Phusion, or Pfu are strongly recommended [20] [21]. These enzymes provide error rates 10-280 times lower than Taq polymerase, minimizing the need for extensive clone sequencing to identify error-free constructs [21]. For TA cloning specifically, non-proofreading enzymes like Taq may be necessary to generate the required 3'A overhangs, but should be followed by thorough sequence verification.

Diagnostic PCR and Genotyping: Applications focused on target detection, such as pathogen identification, genetic screening, or genotyping, prioritize specificity and robustness over ultimate sequence accuracy. Hot-start Taq formulations (antibody-mediated or chemically modified) provide excellent specificity while maintaining cost-effectiveness for high-throughput applications [23] [2]. The hot-start mechanism prevents false positives from mispriming while offering rapid activation for shorter protocol times.

Long-Range PCR: Amplification of targets >5 kb requires polymerases with high processivity and strong strand displacement activity. Engineered polymerases such as PrimeSTAR GXL or specialty long-range mixes combine proofreading activity with enhanced processivity [20]. These formulations often include accessory proteins that improve polymerase binding and progression through complex template regions.

Quantitative PCR (qPCR): qPCR applications require robust amplification efficiency and minimal primer-dimer formation to ensure accurate quantification. Hot-start polymerase formulations, particularly antibody-mediated systems, provide excellent specificity and consistent CT values across replicates [23] [2]. For high-resolution melting curve analysis, polymerases producing uniform amplicons without spurious products are essential.

High-Throughput and Automated Applications: Robotic liquid handling systems benefit from polymerases with room temperature stability during setup. Modern hot-start formulations, particularly antibody-based and chemical modification methods, maintain inhibition during extended room temperature incubation, enabling reliable high-throughput processing without specialized chilled equipment [23].

Table 4: Polymerase Selection Guide for Specific Applications

| Application | Recommended Polymerase Type | Key Considerations | Alternative Options |

|---|---|---|---|

| Cloning & Mutagenesis | High-fidelity proofreading (Q5, Phusion, Pfu) | Lowest error rate critical for sequence integrity | Standard Taq with extensive sequencing |

| Diagnostic PCR | Hot-start Taq (antibody or chemical) | Specificity, cost-effectiveness, rapid results | Standard Taq with optimized conditions |

| Long Amplicons (>5 kb) | Engineered high-processivity enzymes | Enhanced processivity, strand displacement capability | Polymerase mixtures with processivity factors |

| Quantitative PCR | Hot-start (antibody-mediated) | Minimal primer-dimer, consistent efficiency | Chemically modified hot-start |

| Multiplex PCR | Stringent hot-start (antibody or chemical) | Reduced mispriming with multiple primer pairs | Standard hot-start with optimized Mg²⁺ |

| TA Cloning | Standard Taq | A-tailing activity required | Proofreading enzymes with A-tailing protocol |

Template-Specific Considerations

Template characteristics significantly influence polymerase selection and performance. The following template-specific guidelines ensure optimal amplification across diverse scenarios:

High-GC Content Templates: GC-rich sequences (>65% GC) form stable secondary structures that impede polymerase progression. Polymerases with high processivity and enhanced strand displacement capability are essential [20]. Buffer additives such as DMSO, betaine, or GC enhancers can improve melting of secondary structures. Engineered polymerases with DNA-binding domains often outperform natural enzymes for these challenging templates.

Low-Copy-Number Targets: Amplification of rare targets requires maximal specificity to prevent amplification of nonspecific products that can overwhelm the desired signal. Stringent hot-start formulations (antibody-based or chemical modification) provide the strongest inhibition during reaction setup [23] [24]. Additionally, polymerases with high affinity for template (low Km) improve efficiency with limited starting material.

Complex Templates: Templates with extensive secondary structure, hairpins, or repetitive elements benefit from polymerases with both high processivity and proofreading activity [20]. The combination of efficient strand displacement and high fidelity ensures complete and accurate amplification of challenging regions. Elevated denaturation temperatures possible with hyperthermostable enzymes like Pfu can improve melting of stable structures.

Uracil-Containing Templates: Applications involving dUTP incorporation (for carryover prevention) or bisulfite-treated DNA (for methylation analysis) require polymerases capable of amplifying uracil-containing templates [20]. Most archaeal proofreading enzymes cannot amplify these templates due to uracil-binding pockets, making engineered versions or Taq-based systems necessary.

The Scientist's Toolkit: Essential Research Reagents

Table 5: Essential Reagents for PCR Optimization and Fidelity Assessment

| Reagent/Category | Function/Purpose | Application Notes |

|---|---|---|

| High-Fidelity Polymerases | Accurate DNA synthesis for cloning and sequencing | Q5, Phusion, Pfu for low-error amplification; require dNTP optimization [21] |

| Hot-Start Polymerases | Specificity enhancement through temperature activation | Antibody-based: rapid activation; Chemical: stringent inhibition [23] |

| dNTP Mixtures | Nucleotide substrates for DNA synthesis | 200 µM each dNTP standard; lower concentrations (50-100 µM) may enhance fidelity [26] |

| MgCl₂ Solutions | Cofactor for polymerase activity | 1.5-2.0 mM optimal for Taq; concentration affects specificity and yield [26] [13] |

| Fidelity Assessment Systems | Error rate measurement | lacZ assay for screening; Sanger sequencing for validation; NGS for comprehensive analysis [21] |

| Buffer Additives | Enhancement of specific amplification | DMSO, betaine, formamide for GC-rich templates; BSA for inhibitor resistance [20] |

| Cloning Kits | Downstream application of PCR products | TA cloning for Taq products; blunt-end cloning for proofreading enzymes [22] |

The selection of an appropriate DNA polymerase represents a critical decision point in PCR experimental design, with significant implications for amplification success, data quality, and downstream application performance. Taq DNA polymerase remains suitable for routine applications where ultimate sequence accuracy is not paramount, while high-fidelity enzymes with proofreading capability are essential for cloning, protein expression, and any application requiring precise DNA replication. Hot-start formulations, available through multiple inhibition technologies, provide enhanced specificity across all polymerase classes by preventing nonspecific amplification during reaction setup.

Ongoing advances in DNA polymerase technology, including engineered enzymes that combine high fidelity, processivity, and specificity, continue to expand PCR capabilities. By understanding the fundamental characteristics of each polymerase class and their performance in specific experimental contexts, researchers can make informed selections that optimize results while conserving resources. As PCR maintains its position as a cornerstone technique in molecular biology, appropriate polymerase selection remains fundamental to experimental success across diverse research domains.

Polymerase chain reaction (PCR) is a foundational technique in molecular biology, and its success critically depends on the design of the oligonucleotide primers used to initiate DNA synthesis. Effective primers must specifically bind to the target DNA sequence with high efficiency while avoiding structures that compromise amplification. This guide details the core principles of PCR primer design, providing researchers with a structured framework to create robust assays for applications ranging from basic gene amplification to diagnostic test development.

Core Parameters for Effective Primer Design

The performance of a PCR assay is governed by several key physicochemical properties of the primers. Adhering to the following quantitative ranges ensures optimal binding, specificity, and yield.

Table 1: Core Parameter Guidelines for PCR Primer Design

| Parameter | Recommended Range | Rationale & Impact |

|---|---|---|

| Primer Length | 18–30 nucleotides (nt) [27] [28]; 18–24 nt is often optimal [29] | Balances specificity (longer) with efficient binding and annealing (shorter) [27] [28]. |

| Melting Temperature (Tm) | 55–65°C [30]; 60–64°C is ideal [31]; Primer pairs should be within 2–5°C of each other [27] [31] [30] | Ensures both primers anneal to the template simultaneously under a single reaction temperature [27] [31]. |

| GC Content | 40–60% [27] [28] [5] | Provides stable primer-template binding without promoting non-specific interactions [27] [32]. |

| GC Clamp | Presence of G or C bases at the 3' end | Strengthens local binding via stronger hydrogen bonding, providing a stable start point for polymerase [27] [30] [32]. |

Designing to Avoid Secondary Structures and Mispriming

A well-designed primer must not only bind to its intended target but also avoid unintended interactions with itself, its partner primer, or off-target sequences.

Avoid Secondary Structures: Primers should be screened for hairpins (intra-primer homology), which occur when a region of three or more bases is complementary to another region within the same primer [29]. The stability of these structures is measured by Gibbs free energy (ΔG); any self-dimers or hairpins should have a ΔG weaker (more positive) than –9.0 kcal/mol [31].

Prevent Primer-Dimer Formation: Self-dimers (two same-sense primers annealing) and cross-dimers (forward and reverse primers annealing to each other) consume reagents and reduce yield [29]. This is often caused by inter-primer homology, particularly at the 3' ends [27].

Eliminate Repeated Sequences: Avoid runs of the same nucleotide (e.g., ACCCC) or dinucleotide repeats (e.g., ATATAT), as these can cause mispriming [27] [29].

Ensure Specificity: Always verify primer specificity by performing a sequence similarity search (e.g., NCBI BLAST) against the genome of your organism to ensure they are unique to the intended target [31] [29].
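
Several of the sequence-level rules above lend themselves to a quick automated screen. The sketch below is a minimal illustration, not a replacement for dedicated design software: the Tm estimate uses the simple Wallace rule, 2(A+T) + 4(G+C), which is an assumption chosen for brevity and is only a rough guide; the repeat thresholds (runs of four identical bases, three dinucleotide repeats) are inferred from the examples in the text; and the two primer sequences are hypothetical. Hairpin and dimer ΔG values and BLAST specificity checks are not covered here.

```python
import re


def gc_content(seq):
    """Percentage of G and C bases in the primer."""
    seq = seq.upper()
    return 100.0 * (seq.count("G") + seq.count("C")) / len(seq)


def wallace_tm(seq):
    """Rough Tm estimate in degrees C (Wallace rule, an assumed simplification)."""
    seq = seq.upper()
    at = seq.count("A") + seq.count("T")
    gc = seq.count("G") + seq.count("C")
    return 2 * at + 4 * gc


def has_repeats(seq):
    """True if the primer has a run of 4+ identical bases or 3+ dinucleotide repeats."""
    seq = seq.upper()
    run = re.search(r"([ACGT])\1{3,}", seq)
    dinucleotide = re.search(r"([ACGT]{2})\1{2,}", seq)
    return bool(run or dinucleotide)


def check_primer(seq):
    """Return a pass/fail flag for each sequence-level guideline."""
    seq = seq.upper()
    return {
        "length_18_30": 18 <= len(seq) <= 30,
        "gc_40_60": 40.0 <= gc_content(seq) <= 60.0,
        "gc_clamp": seq[-1] in "GC",
        "no_repeats": not has_repeats(seq),
    }


def tm_compatible(forward, reverse, max_diff=5.0):
    """True if the estimated Tm values of a primer pair differ by at most max_diff."""
    return abs(wallace_tm(forward) - wallace_tm(reverse)) <= max_diff


if __name__ == "__main__":
    fwd = "AGCTGACCTGAAGTTCATCTGC"  # hypothetical forward primer
    rev = "GTCCATGCCGAGAGTGATCC"    # hypothetical reverse primer
    print(check_primer(fwd), check_primer(rev), tm_compatible(fwd, rev))
```

In practice, nearest-neighbor Tm calculations that account for salt and primer concentration give more reliable values than the Wallace rule, so this screen is best treated as a first-pass filter.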

The Primer Design and Validation Workflow

A systematic approach to primer design, from in silico planning to bench-side validation, significantly increases the chance of a successful PCR experiment. The following protocol outlines this process.

Experimental Protocol: Primer Validation and Annealing Temperature Optimization

After designing and obtaining primers, empirical validation is crucial. This protocol uses a gradient PCR to determine the optimal annealing temperature (Ta) [29].

- Primer Resuspension and Dilution: Resuspend the lyophilized primers in sterile TE buffer or nuclease-free water to create a high-concentration stock (e.g., 100 µM). Prepare a working dilution (e.g., 10 µM) for use in PCR reactions [28].

- PCR Master Mix Setup: Assemble reactions using a standard master mix. A typical 50 µL reaction may contain [5]:

- Gradient PCR Cycling:

  - Initial Denaturation: 94–98°C for 1–5 minutes [5].

  - Amplification Cycles (25–35 cycles):

    - Denaturation: 94–98°C for 10–60 seconds.

    - Annealing: Set a temperature gradient across the thermal cycler block, typically from 5–10°C below the calculated average Tm of the primers to a few degrees above it [29]. Maintain for 30 seconds.

    - Extension: 70–80°C (depending on the polymerase) for 1 minute per 1000 bp [5].

  - Final Extension: 70–80°C for 5 minutes [5].

  - Hold: 4°C ∞.

- Analysis: Separate the PCR products by agarose gel electrophoresis. The optimal annealing temperature is the one that produces a single, intense band of the expected size with minimal to no non-specific products or primer-dimer [29].
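
When planning the gradient run described above, it can help to tabulate the annealing temperatures and extension time beforehand. The following sketch assumes a 12-column block with an evenly spaced (linear) gradient and treats "a few degrees above" as 3°C by default; real thermal cyclers may distribute temperatures differently, so the instrument's own gradient preview should take precedence.

```python
import math


def gradient_temperatures(avg_tm, n_columns=12, below=10.0, above=3.0):
    """Evenly spaced annealing temperatures from (Tm - below) to (Tm + above).

    Assumptions: linear gradient, 12 block columns, defaults chosen for illustration.
    """
    if n_columns < 2:
        raise ValueError("a gradient needs at least two columns")
    low = avg_tm - below
    high = avg_tm + above
    step = (high - low) / (n_columns - 1)
    return [round(low + i * step, 1) for i in range(n_columns)]


def extension_seconds(amplicon_bp, seconds_per_kb=60):
    """Extension time at 1 minute per 1000 bp, rounded up to a whole second."""
    return math.ceil(amplicon_bp * seconds_per_kb / 1000)


if __name__ == "__main__":
    temps = gradient_temperatures(avg_tm=60.0)
    print("Annealing gradient (°C):", temps)
    print("Extension time for 1.5 kb amplicon (s):", extension_seconds(1500))
```

Recording which column produced the cleanest band then gives the annealing temperature to carry forward into routine runs.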

The Scientist's Toolkit: Essential Reagents for PCR

A successful PCR experiment relies on a suite of carefully selected reagents, each serving a critical function in the reaction.

Table 2: Essential Research Reagents for PCR

| Reagent / Solution | Function in the Reaction |

|---|---|

| Thermostable DNA Polymerase (e.g., Taq, Pfu) | Enzyme that synthesizes new DNA strands by adding nucleotides to the 3' end of the primers [2] [5]. |

| dNTP Mix (dATP, dTTP, dCTP, dGTP) | The essential building blocks (nucleotides) for the synthesis of new DNA strands [5]. |

| Oligonucleotide Primers (Forward & Reverse) | Short, single-stranded DNA sequences that define the start and end of the target amplicon by binding complementarily to the template [2] [29]. |

| PCR Reaction Buffer (with Mg²⁺) | Provides the optimal chemical environment (pH, salts) for polymerase activity. Mg²⁺ is a critical cofactor for the enzyme [5]. |

| Template DNA | The sample DNA containing the target sequence to be amplified [5]. |

| Nuclease-Free Water | Solvent used to bring the reaction to its final volume, ensuring no enzymatic degradation of reagents [5]. |

Advanced Considerations and Troubleshooting

- GC-Rich Targets: For templates with high GC content (>60%), additives like DMSO (1-10%) or formamide (1.25-10%) can be included in the reaction mix to help disrupt stable secondary structures and improve amplification [5].

- qPCR Probes: When designing hydrolysis (TaqMan) probes for quantitative PCR, the probe should have a Tm that is 5–10°C higher than the primers, be 20–30 bases long, and avoid a guanine (G) base at the 5' end [31].

- Cloning and Modification: For cloning applications, primers can include 5' extensions containing restriction enzyme sites. Typically, 3-4 extra nucleotides are added 5' of the restriction site to allow for efficient enzyme cutting [27].
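
Two of the considerations above are simple enough to express as a short sketch. The helper names, the four-nucleotide `GCGC` pad, and the example sequences are hypothetical; the EcoRI recognition sequence (GAATTC) is used only as a familiar example, and probe and primer Tm values are supplied by the user rather than calculated here.

```python
def add_restriction_site(annealing_seq, site, pad="GCGC"):
    """Build a cloning primer: 5' pad + restriction site + template-specific region.

    The pad (3-4 extra nucleotides) is placed 5' of the site to support cutting.
    """
    return (pad + site + annealing_seq).upper()


def probe_ok(probe_seq, probe_tm, primer_tm):
    """Check a hydrolysis probe against the guidelines in the text."""
    probe_seq = probe_seq.upper()
    return (
        20 <= len(probe_seq) <= 30                 # 20-30 bases long
        and 5.0 <= probe_tm - primer_tm <= 10.0    # Tm 5-10 °C above the primers
        and not probe_seq.startswith("G")          # no G at the 5' end
    )


if __name__ == "__main__":
    primer = add_restriction_site("ATGGCTAGCAAAGGAGAAG", "GAATTC")
    print(primer)
    print(probe_ok("ACCTGAAGTTCATCTGCACCACCG", probe_tm=68.0, primer_tm=60.0))
```

Only the template-specific 3' portion of a tailed primer should be used when estimating the annealing temperature for the first cycles, since the 5' extension does not pair with the original template.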

Within the framework of polymerase chain reaction (PCR) optimization, the precise control of reaction components is paramount for achieving specific and efficient DNA amplification. Among these components, divalent cations, particularly magnesium ions (Mg²⁺), stand out as a critical cofactor that is indispensable for enzymatic activity and reaction fidelity. For new researchers, understanding the role of Mg²⁺ extends beyond recognizing it as a simple buffer ingredient; it is a fundamental regulator of PCR thermodynamics and kinetics. Magnesium ions directly influence DNA polymerase function, primer-template binding stability, and the overall specificity of the amplification process [33] [34]. Consequently, the optimization of Mg²⁺ concentration is not an optional step but a necessary one for successful PCR, especially when dealing with challenging templates such as genomic DNA or GC-rich sequences [35]. This guide provides an in-depth examination of the role of magnesium in PCR, offering detailed methodologies for its optimization to enhance the reproducibility and reliability of experimental results.

Biochemical Mechanisms of Magnesium in PCR

Magnesium ions (Mg²⁺) serve as an essential cofactor for thermostable DNA polymerases, with their role rooted in well-defined biochemical mechanisms. The primary function of Mg²⁺ is to facilitate the catalytic activity of the DNA polymerase enzyme. It does this by forming a coordinate bond between the enzyme's active site and the phosphate groups of the incoming deoxynucleoside triphosphates (dNTPs) [34]. Specifically, the Mg²⁺ ion binds to the alpha phosphate group of a dNTP, enabling the nucleophilic attack by the 3'-hydroxyl group of the primer terminus and the subsequent release of pyrophosphate. This interaction is crucial for the formation of the phosphodiester bond that elongates the DNA chain [13]. Without adequate free Mg²⁺, DNA polymerases remain enzymatically inactive, leading to PCR failure [33] [36].

A secondary, but equally critical, function of Mg²⁺ is to stabilize the interaction between the primer and the template DNA. The phosphate backbone of DNA is negatively charged, creating electrostatic repulsion between two complementary nucleic acid strands. Mg²⁺ ions, being divalent cations, effectively shield these negative charges, reducing repulsion and facilitating the annealing of primers to their target sequences [34] [37]. This charge stabilization increases the melting temperature (Tm) of the DNA duplex. Quantitative analyses have demonstrated a logarithmic relationship between MgCl₂ concentration and DNA melting temperature, with every 0.5 mM increase in MgCl₂ within the 1.5–3.0 mM range raising the Tm by approximately 1.2°C [35]. This dual role in both enzyme catalysis and nucleic acid stabilization makes Mg²⁺ a central player in the PCR process.
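
The per-step figure quoted above can be turned into a quick estimate of how far the Tm moves when MgCl₂ is adjusted. This sketch simply scales the reported value of about 1.2°C per 0.5 mM linearly within the 1.5–3.0 mM window; that linearization is an assumption made for illustration, since the underlying relationship is described as logarithmic, and the function refuses inputs outside the stated range.

```python
def tm_shift(mg_from_mM, mg_to_mM, per_step_c=1.2, step_mM=0.5,
             valid_range=(1.5, 3.0)):
    """Approximate change in Tm (°C) when MgCl2 changes between two concentrations.

    Assumption: a linear 1.2 °C per 0.5 mM scaling, valid only within 1.5-3.0 mM.
    """
    low, high = valid_range
    for conc in (mg_from_mM, mg_to_mM):
        if not low <= conc <= high:
            raise ValueError(f"{conc} mM is outside the {low}-{high} mM range")
    return (mg_to_mM - mg_from_mM) / step_mM * per_step_c


if __name__ == "__main__":
    print(f"1.5 -> 2.5 mM MgCl2: Tm shift of about {tm_shift(1.5, 2.5):+.1f} °C")
```

An estimate like this is mainly useful for deciding whether an annealing temperature chosen at one MgCl₂ concentration needs rechecking after the concentration is changed.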

Table 1: Core Biochemical Functions of Magnesium Ions in PCR

| Function | Molecular Mechanism | Effect on PCR |

|---|---|---|

| Enzyme Cofactor | Binds to dNTPs at the polymerase active site, catalyzing phosphodiester bond formation [34] [13]. | Essential for DNA polymerase activity; without it, DNA elongation cannot proceed. |

| Charge Shielding | Neutralizes the negative charge on the DNA phosphate backbone, reducing electrostatic repulsion [34] [37]. | Stabilizes the primer-template duplex, facilitating proper annealing and increasing melting temperature. |

| Fidelity Regulation | Influences the accuracy of nucleotide incorporation by the DNA polymerase [33] [37]. | Optimal concentrations maximize fidelity; excess Mg²⁺ can reduce enzyme specificity and increase error rates. |

Quantitative Effects and Optimal Concentration Ranges

The concentration of Mg²⁺ is a critical variable that requires precise optimization, as both deficiency and excess can be detrimental to PCR success. A comprehensive meta-analysis of PCR studies established an optimal MgCl₂ concentration range of 1.5 to 3.0 mM for efficient PCR performance [35]. Within this range, a consistent logarithmic relationship with DNA melting temperature is observed, which is fundamental for calculating accurate annealing temperatures [35]. For many standard applications with Taq DNA polymerase, a concentration of 1.5-2.0 mM is often optimal [38].

The requirement for Mg²⁺ is not absolute but is dynamically influenced by the concentrations of other reaction components that can chelate or bind the ion. dNTPs, primers, and the DNA template itself all compete for the available free Mg²⁺ [33] [36]. Notably, dNTPs are strong chelators; therefore, an increase in dNTP concentration must be balanced with an increase in Mg²⁺ concentration to ensure an adequate pool of free ions remains available for the DNA polymerase [13]. Furthermore, the complexity and type of DNA template influence the optimal Mg²⁺ level. Genomic DNA, with its high complexity, often requires higher Mg²⁺ concentrations compared to more straightforward templates like plasmid DNA [35]. The presence of chelating agents from sample preparation, such as EDTA or citrate, can also sequester Mg²⁺, necessitating higher starting concentrations to compensate [33] [34].
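
The balance between total and free Mg²⁺ can be approximated with simple bookkeeping. The sketch below assumes that each dNTP molecule and each EDTA molecule binds one Mg²⁺ ion completely (a 1:1 simplification) and ignores binding by primers and template, so it gives only a rough estimate of the free pool rather than a true equilibrium calculation.

```python
def free_mg_mM(total_mg_mM, dntp_each_uM=200.0, edta_mM=0.0):
    """Rough estimate of free Mg2+ (mM) after chelation by dNTPs and EDTA.

    Assumptions: four dNTPs at equal concentration, 1:1 and complete binding,
    no contribution from primers or template.
    """
    dntp_total_mM = 4 * dntp_each_uM / 1000.0
    return max(0.0, total_mg_mM - dntp_total_mM - edta_mM)


if __name__ == "__main__":
    # Standard reaction: 1.5 mM MgCl2 with 200 µM of each dNTP.
    print(f"free Mg2+ ~ {free_mg_mM(1.5):.2f} mM")
    # Same reaction with 0.1 mM EDTA carried over from the template buffer.
    print(f"free Mg2+ ~ {free_mg_mM(1.5, edta_mM=0.1):.2f} mM")
```

Under these assumptions, a standard reaction with 1.5 mM MgCl₂ and 200 µM of each dNTP leaves roughly 0.7 mM free Mg²⁺, which illustrates why raising dNTP levels or carrying over EDTA calls for a compensating increase in MgCl₂.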

Table 2: Factors Influencing Optimal Magnesium Concentration in PCR

| Factor | Influence on Mg²⁺ Requirement | Practical Consideration |

|---|---|---|

| dNTP Concentration | dNTPs chelate Mg²⁺. Higher [dNTP] requires higher [Mg²⁺] to maintain free ion levels [33] [13]. | Standard dNTP concentration is 200 µM each. Adjust Mg²⁺ if dNTP concentration is altered. |

| Template Type & Complexity | Complex templates (e.g., genomic DNA) require more Mg²⁺ than simple ones (e.g., plasmid DNA) [35]. | Start with 2.0 mM for genomic DNA and 1.5 mM for plasmid DNA, then optimize. |

| Presence of Chelators | Agents like EDTA (from DNA storage buffers) bind Mg²⁺, making it unavailable [33] [37]. | Ensure Mg²⁺ is in excess of EDTA concentration. Use minimal EDTA in template storage buffers. |

| Primer Sequence & Tm | Higher Mg²⁺ stabilizes duplexes, raising the effective Tm and reducing stringency at a given annealing temperature [35]. | If increasing annealing temperature is not an option, slightly reducing Mg²⁺ can increase stringency. |

Deviations from the optimal Mg²⁺ window have clear and predictable consequences. Insufficient Mg²⁺ results in low enzyme activity, yielding little to no PCR product due to inefficient primer extension [34] [38] [37]. Conversely, excess Mg²⁺ reduces the fidelity of the DNA polymerase and decreases reaction specificity. This can lead to non-specific amplification, visible as multiple bands or smears on an agarose gel, because the stabilized primer-template complexes allow primers to bind to incorrect, partially complementary sites on the template DNA [33] [37] [36].

Experimental Optimization Protocols

Magnesium Titration Methodology

A systematic approach to Mg²⁺ optimization is crucial for developing robust PCR assays, particularly for novel targets or challenging templates. The following protocol provides a detailed methodology for determining the optimal MgCl₂ concentration.

- Reaction Setup: Prepare a master mix containing all PCR components except MgCl₂ and the DNA template. Aliquot this master mix into a series of thin-walled PCR tubes. A typical 50 µL reaction will include 1X PCR buffer (without Mg²⁺), 200 µM of each dNTP, 0.1–0.5 µM of each primer, 0.5–2.5 units of DNA polymerase, and a defined amount of template DNA (e.g., 10–100 ng genomic DNA) [38] [15]. Leave room in each aliquot for the variable MgCl₂ volume and the compensating water, so that every reaction reaches the same final volume.

- MgCl₂ Titration: Supplement each reaction tube with MgCl₂ from a stock solution (e.g., 25 mM) to create a concentration gradient. A recommended starting range is 1.0 mM to 4.0 mM in increments of 0.5 mM [38] [37]. For example, to achieve a final concentration of 1.5 mM in a 50 µL reaction, add 3 µL of 25 mM MgCl₂ stock. Include a negative control (no template DNA) for each Mg²⁺ level to detect contamination or primer-dimer formation.

- Thermal Cycling and Analysis: Run the reactions under standard cycling conditions appropriate for the primer set and amplicon size. Analyze the resulting PCR products using agarose gel electrophoresis. The optimal Mg²⁺ concentration is identified as the one that produces a single, intense band of the expected amplicon size with a clean background and minimal to no non-specific products or primer dimers [15] [39].
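
The pipetting volumes for the titration series follow from C₁V₁ = C₂V₂. The sketch below reproduces the worked example from the protocol (3 µL of 25 mM stock for 1.5 mM in 50 µL) across the recommended 1.0–4.0 mM range. The function name `mgcl2_titration` and the water top-up scheme are illustrative assumptions, not part of the cited protocols.

```python
def mgcl2_titration(final_mm_values, reaction_ul=50.0, stock_mm=25.0):
    """Return one dict per tube with MgCl2 stock and water volumes in uL."""
    if not final_mm_values:
        return []
    # C1 * V1 = C2 * V2  ->  V1 = C2 * V2 / C1
    stock_volumes = [c * reaction_ul / stock_mm for c in final_mm_values]
    largest = max(stock_volumes)
    plan = []
    for final_mm, stock_ul in zip(final_mm_values, stock_volumes):
        plan.append({
            "final_mm": final_mm,
            "stock_ul": round(stock_ul, 2),
            # Water top-up so every tube receives the same added volume.
            "water_ul": round(largest - stock_ul, 2),
        })
    return plan


# 1.0 to 4.0 mM in 0.5 mM increments, the starting range given in the text.
series = [1.0 + 0.5 * i for i in range(7)]
for tube in mgcl2_titration(series):
    print(f"{tube['final_mm']:.1f} mM: "
          f"{tube['stock_ul']:.1f} uL stock + {tube['water_ul']:.1f} uL water")
```

With these defaults, each tube receives 8 µL of MgCl₂ plus water in total, so the master mix and template together should be sized to fill the remaining 42 µL.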

Integrated Optimization with Annealing Temperature

Mg²⁺ concentration and annealing temperature (Ta) are interdependent parameters. A higher Mg²⁺ concentration stabilizes the primer-template duplex, which is analogous to lowering the effective Ta. Therefore, optimization should consider both variables simultaneously. The most efficient method is to perform a gradient PCR combined with Mg²⁺ titration [37]. This involves setting up the Mg²⁺ titration series as described and then running the thermal cycler with an annealing temperature gradient across the block. This two-dimensional approach identifies the combination of [Mg²⁺] and Ta that gives specific amplification with high yield. Computational models that integrate Tm, GC content, amplicon length, and dNTP concentration have reported high predictive accuracy (R² = 0.9942 for MgCl₂), providing a theoretical starting point for empirical optimization [40].
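
One way to keep track of the two-dimensional experiment is to map each Mg²⁺ level to a plate row and each gradient temperature to a column. The sketch below assumes a 96-well block (8 rows, 12 columns) with the temperature gradient running across columns; the function name `plate_layout` and the example temperatures are hypothetical, and the gradient orientation should be checked against the specific thermal cycler.

```python
import string


def plate_layout(mg_mm_values, ta_c_values):
    """Map well names (e.g. 'A1') to (Mg2+ in mM, annealing temp in C).

    ASSUMPTION: 96-well block, rows A-H carry Mg2+ levels and
    columns 1-12 carry the annealing temperature gradient.
    """
    if len(mg_mm_values) > 8 or len(ta_c_values) > 12:
        raise ValueError("layout exceeds an 8 x 12 plate")
    layout = {}
    for row_letter, mg_mm in zip(string.ascii_uppercase, mg_mm_values):
        for column, ta_c in enumerate(ta_c_values, start=1):
            layout[f"{row_letter}{column}"] = (mg_mm, ta_c)
    return layout


# Seven Mg2+ levels against an illustrative six-step gradient.
mg_levels = [1.0 + 0.5 * i for i in range(7)]
gradient = [55.0, 57.0, 59.0, 61.0, 63.0, 65.0]
layout = plate_layout(mg_levels, gradient)
print(len(layout), "reactions")
print("B3 ->", layout["B3"])
```

Recording the gel result for each well against this map makes it straightforward to read off the [Mg²⁺] and Ta combination that produced the cleanest band.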

Diagram 1: PCR Magnesium and Temperature Optimization Workflow.

The Scientist's Toolkit: Research Reagent Solutions

A successful PCR experiment relies on high-quality reagents. The following table details essential materials and their specific functions, with a focus on magnesium-related components.

Table 3: Essential Reagents for PCR Optimization with Magnesium

| Reagent | Function/Description | Optimization Consideration |

|---|---|---|

| Thermostable DNA Polymerase (e.g., Taq) | Enzyme that synthesizes new DNA strands. Requires Mg²⁺ as a cofactor for activity [33] [38]. | Use 0.5–2.5 units/50 µL reaction. Higher amounts may tolerate inhibitors but can increase non-specific bands. |

| MgCl₂ Stock Solution (e.g., 25 mM) | Source of divalent magnesium cations (Mg²⁺) for the reaction. | The most common variable for optimization. Supplied separately for fine-tuning [33] [36]. |

| PCR Buffer (Mg²⁺-free) | Provides optimal pH and ionic strength (e.g., Tris-HCl, KCl). | Using a Mg²⁺-free buffer allows for precise, independent control over Mg²⁺ concentration [33]. |

| dNTP Mix | Building blocks (dATP, dCTP, dGTP, dTTP) for new DNA synthesis. | Standard final concentration is 200 µM of each dNTP. They chelate Mg²⁺, so [Mg²⁺] must be in excess [38] [13]. |

| Template DNA | The DNA sample containing the target sequence to be amplified. | Quality is critical. Common amounts: 10–100 ng genomic DNA, 1–10 ng plasmid DNA. Excess template can increase background [38] [13]. |

| Oligonucleotide Primers | Short, single-stranded DNA sequences that define the start and end of the amplicon. | Typical final concentration is 0.1–0.5 µM each. Higher concentrations can promote mispriming [38] [13]. |

Troubleshooting Common Magnesium-Related PCR Issues

Even with a standardized protocol, researchers may encounter suboptimal results. The table below outlines common PCR problems linked to Mg²⁺, their potential causes, and corrective actions.

Table 4: Troubleshooting Guide for Magnesium-Related PCR Issues

| Observed Problem | Potential Cause | Recommended Solution |

|---|---|---|

| No PCR Product | Mg²⁺ concentration is too low; DNA polymerase is inactive [38] [37]. | Increase MgCl₂ concentration in 0.5 mM steps, ensuring it exceeds total [dNTP]. Check for EDTA in template sample. |

| Multiple Bands or Smear | Mg²⁺ concentration is too high, leading to non-specific priming and reduced fidelity [33] [37]. | Decrease MgCl₂ concentration in 0.5 mM steps. Simultaneously, increase the annealing temperature. |

| Primer-Dimer Formation | Excess Mg²⁺ and low annealing temperature facilitate primer-to-primer annealing [34] [39]. | Optimize Mg²⁺ concentration and increase annealing temperature. Ensure 3' ends of primers are not complementary. |

| Low Yield | Suboptimal Mg²⁺ concentration, either too high or too low, reducing efficiency [34]. | Titrate MgCl₂ to find the concentration that maximizes product yield without introducing non-specific products. |

| GC-Rich Template Failure | Stable secondary structures prevent primer binding or polymerase elongation. | Increase Mg²⁺ (e.g., up to 4 mM) to enhance duplex stability. Consider adding 2–10% DMSO or 1–2 M betaine as enhancers [37] [36]. |
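
The first two rows of the table amount to a simple stepping rule, which can be sketched as follows. The observation labels and the function name `next_mg_mm` are hypothetical; the 0.5 mM step comes from the table, and the 1.0–4.0 mM bounds are borrowed from the titration range recommended earlier.

```python
def next_mg_mm(current_mm, observation, step_mm=0.5, low_mm=1.0, high_mm=4.0):
    """Suggest the next MgCl2 concentration (mM) to try after a gel result."""
    if observation == "no_product":
        # Too little Mg2+ leaves the polymerase inactive: step up.
        proposed = current_mm + step_mm
    elif observation in ("multiple_bands", "smear"):
        # Too much Mg2+ promotes non-specific priming: step down.
        proposed = current_mm - step_mm
    else:
        raise ValueError(f"unrecognized observation: {observation!r}")
    # Stay inside the titration window used in this guide.
    return min(max(proposed, low_mm), high_mm)


print(next_mg_mm(1.5, "no_product"))
print(next_mg_mm(3.0, "smear"))
```

This rule covers only the Mg²⁺ adjustment; the accompanying actions in the table, such as raising the annealing temperature or checking for EDTA, still need to be applied by hand.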

Diagram 2: Effect of Magnesium Concentration on PCR Outcome.

Magnesium is far more than a passive component in a PCR buffer; it is a critical cofactor that sits at the crossroads of enzymatic catalysis, nucleic acid thermodynamics, and reaction specificity. Its concentration directly dictates the success or failure of amplification. For researchers embarking on PCR optimization, a methodical titration of MgCl₂ represents one of the most impactful and accessible steps toward achieving robust and reproducible results. By understanding its biochemical roles, recognizing the factors that influence its availability, and applying systematic optimization and troubleshooting protocols, scientists can effectively harness the power of this essential divalent cation to advance their molecular biology research.

Advanced PCR Methods and Their Specific Applications

The Polymerase Chain Reaction (PCR) is a foundational technique in molecular biology, enabling the exponential amplification of specific DNA sequences. However, conventional PCR is often plagued by issues of non-specific amplification and primer-dimer formation, which drastically reduce yield, sensitivity, and reliability [23]. These artifacts typically occur during reaction setup at room temperature, where DNA polymerase retains partial enzymatic activity and can extend primers that are misprimed or bound to each other [41].

Hot-Start PCR represents a critical advancement in PCR optimization by employing specialized mechanisms to inhibit DNA polymerase activity at lower temperatures, preventing unwanted amplification events before thermal cycling begins [23]. This technical guide explores the principles, methodologies, and applications of Hot-Start PCR, providing researchers with comprehensive protocols to enhance assay specificity and efficiency, particularly for demanding applications in diagnostics, genetic testing, and drug development.

The Problem: Non-Specific Amplification in Conventional PCR

In conventional PCR, the reaction mixture is assembled at room temperature, creating conditions where non-specific amplification can occur through two primary mechanisms:

- Mispriming: At lower temperatures, DNA polymerase can extend primers that have bound to template sequences with only partial complementarity [23]. These misprimed products compete with the target amplicon for reaction components, significantly reducing the efficiency of specific amplification [25].

- Primer-Dimer Formation: Primers can bind to each other through complementary sequences, creating short artificial templates that DNA polymerase efficiently amplifies [23]. Primer-dimers consume reaction reagents and can dominate the reaction, especially when target template is limited [25].

Impact on Experimental Results

The consequences of non-specific amplification are particularly pronounced in applications requiring high sensitivity:

- Reduced Target Yield: Non-specific products compete with the desired amplicon for enzymes, nucleotides, and primers [23]

- Compromised Sensitivity: Detection of low-copy number targets becomes unreliable amid background amplification [25]

- Downstream Application Failure: Non-specific products can interfere with cloning, sequencing, and other molecular biology applications [23]

Hot-Start PCR: Fundamental Principles and Mechanisms

Core Concept

Hot-Start PCR employs biochemical modifications to prevent DNA polymerase extension at room temperature, with activation occurring only after an initial high-temperature incubation step [23]. This simple yet powerful principle ensures that the polymerase becomes active only when the reaction temperature provides sufficient stringency for specific primer-template hybridization [41].

Molecular Mechanisms of Popular Hot-Start Technologies

Several biochemical approaches have been developed to implement the Hot-Start principle, each with distinct advantages and considerations:

Table 1: Comparison of Major Hot-Start Technologies

| Technology | Mechanism of Action | Activation Requirements | Advantages | Limitations |

|---|---|---|---|---|

| Antibody-Based | Anti-Taq antibody binds polymerase active site [23] | Initial denaturation (95°C, 2-10 min) [42] | Rapid activation; full enzyme activity restored [23] | Animal-origin components; exogenous proteins in reaction [23] |

| Chemical Modification | Covalent linkage of inhibitory chemical groups [23] | Extended pre-incubation (95°C, 10-15 min) [23] | High stringency; animal-component free [23] | Longer activation time; may affect long amplicons [23] |

| Aptamer-Based | Oligonucleotide aptamers bind and inhibit polymerase [41] | Elevated temperature (>70°C) [43] | Short activation time; animal-component free [23] | Potential reversibility; lower stringency [23] |

| Affibody-Based | Engineered protein domains block active site [23] | Initial denaturation [23] | Low protein content; animal-component free [23] | Potential lower stringency [23] |

| Primer-Based | Thermolabile groups (OXP) block 3' extension [25] | Temperature-dependent deprotection [25] | Targeted inhibition; no polymerase modification needed [25] | Requires specialized primer synthesis [25] |

| Physical Separation | Wax barriers or separate compartments [41] | Wax melting (~70°C) [41] | Simple principle; no specialized reagents [41] | Labor-intensive; potential contamination risk [41] |

Diagram: Hot-Start PCR workflow showing inhibition at room temperature and specific activation during initial denaturation

Experimental Protocols and Methodologies

Standard Hot-Start PCR Protocol

The following protocol is adapted from manufacturer recommendations for antibody-based Hot-Start polymerases [42]:

Reaction Setup (20-50 μL volume):

- Master Mix Preparation: