PCR Mastery: A Scientist's Guide to Fundamentals, Optimization, and Advanced Applications

Abstract

This comprehensive guide provides researchers, scientists, and drug development professionals with an in-depth exploration of Polymerase Chain Reaction (PCR). It covers core principles from reaction components to thermocycling, details methodological considerations for diverse PCR types including qPCR and dPCR, and offers systematic troubleshooting for common pitfalls like contamination and non-specific amplification. The article further validates techniques through comparative analysis of emerging methods, equipping professionals with the knowledge to ensure robust, reproducible, and high-fidelity results in both research and clinical diagnostics.

The Building Blocks of PCR: Core Principles and Reaction Components

The Polymerase Chain Reaction (PCR) is a cornerstone technique of modern molecular biology, enabling the exponential amplification of specific DNA fragments from minute starting quantities. [1] Since its invention by Kary Mullis in 1983 (first published in 1985), PCR has revolutionized fields from clinical diagnostics to basic research. [1] [2] Its success, however, hinges on the precise interplay of five essential components: the template DNA, primers, DNA polymerase, deoxynucleoside triphosphates (dNTPs), and magnesium ions (Mg²⁺). [3] A thorough understanding of the role, optimal concentration, and common pitfalls associated with each component is fundamental for developing robust and reliable PCR-based assays in research and drug development. This guide provides an in-depth technical examination of these core elements, framing them within the context of PCR fundamentals and common experimental challenges.

The Five Essential Components

Template DNA

Role: The template DNA is the target sequence that will be amplified. It provides the architectural blueprint that the primers and DNA polymerase use to synthesize new DNA strands. [3]

Key Considerations: The source, quality, and quantity of template DNA are critical for amplification success. Template DNA can originate from various sources, including genomic DNA (gDNA), complementary DNA (cDNA), or plasmid DNA. [4] The optimal input amount depends on the template's complexity; for instance, 0.1–1 ng of plasmid DNA is often sufficient, while 5–50 ng may be required for gDNA in a 50 µL reaction. [4] [5] Using too much DNA can lead to non-specific amplification and reagent depletion, whereas too little can result in weak or no amplification. [5] The template must also be of high purity, as contaminants like salts, solvents, or proteins can inhibit DNA polymerase. [1] [6] [5] In some applications, direct PCR is performed from crude samples (e.g., cells or tissue lysates), which requires DNA polymerases with high resistance to inhibitors. [7]
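The gap between the plasmid and gDNA input ranges follows from template complexity: the same mass of a small plasmid contains far more target copies than the same mass of a large genome. The sketch below shows that arithmetic. The 650 g/mol average mass per base pair is a common approximation, and the 5 kb plasmid and 3.1 × 10⁹ bp haploid human genome sizes are illustrative assumptions, not values from the cited sources.

```python
AVOGADRO = 6.022e23        # molecules per mole
BP_MASS_G_PER_MOL = 650.0  # approximate average mass of one double-stranded base pair

def template_copies(mass_ng: float, length_bp: float) -> float:
    """Approximate number of double-stranded template molecules in mass_ng of DNA."""
    grams = mass_ng * 1e-9
    moles = grams / (length_bp * BP_MASS_G_PER_MOL)
    return moles * AVOGADRO

# Hypothetical inputs: 1 ng of a 5 kb plasmid vs. 50 ng of human gDNA.
plasmid_copies = template_copies(1.0, 5_000)
genome_copies = template_copies(50.0, 3.1e9)

print(f"plasmid: {plasmid_copies:.2e} copies")
print(f"gDNA:    {genome_copies:.2e} genome copies")
```

Under these assumptions, the plasmid sample carries several orders of magnitude more target copies than the gDNA sample, even though it contains far less DNA by mass.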

Primers

Role: Primers are short, single-stranded DNA oligonucleotides (typically 15–30 bases long) that are designed to be complementary to the sequences flanking the target region. [4] They provide the free 3'-hydroxyl group necessary for DNA polymerase to initiate DNA synthesis. [1]

Key Considerations: Meticulous primer design is arguably the most critical factor for PCR specificity. Poorly designed primers are a common source of PCR failure, leading to issues like primer-dimer formation or amplification of non-target sequences. [5] Table 1 summarizes the fundamental principles of effective primer design. Primers should be checked for self-complementarity (which can cause hairpin loops) and complementarity to each other (which leads to primer-dimer formation). [2] The two primers in a pair should have similar melting temperatures (Tm) to ensure both bind to their respective targets with similar efficiency during the annealing step. [2] [4] Primer concentration is also crucial; high concentrations promote mispriming, while low concentrations yield little product. [4] A range of 0.1–1 µM is generally recommended. [4]

Table 1: Key Guidelines for Primer Design

| Parameter | Recommended Guideline | Rationale |
|---|---|---|
| Length | 15–30 nucleotides | Balances specificity and binding efficiency. [4] |
| GC Content | 40–60% | Ensures stable binding; too high can cause non-specific binding. [2] [4] |
| Melting Temp (Tm) | 55–70°C; primers within 5°C of each other | Allows a single annealing temperature for both primers. [2] [4] |
| 3' End | End with a G or C; avoid >3 G/C in last 5 bases | "Clamps" the end for efficient extension while minimizing mispriming. [2] [4] |
| Specificity | Avoid self-complementarity, direct repeats, and complementarity between primers | Prevents secondary structures (hairpins) and primer-dimer artifacts. [2] [4] |
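The guidelines in Table 1 can be turned into a quick screening script. The sketch below is a simplified illustration: the function names and the two primer sequences are hypothetical. The Tm estimate uses the Wallace rule (2°C per A/T, 4°C per G/C), a rough approximation that is not taken from the cited sources, and dedicated primer-design tools use nearest-neighbor thermodynamics instead. It does not check for hairpins or primer-dimers.

```python
def gc_percent(seq: str) -> float:
    """Percentage of G and C bases in the primer."""
    seq = seq.upper()
    return 100.0 * (seq.count("G") + seq.count("C")) / len(seq)

def wallace_tm(seq: str) -> float:
    """Rough Tm estimate (Wallace rule): 2 C per A/T plus 4 C per G/C."""
    seq = seq.upper()
    at = seq.count("A") + seq.count("T")
    gc = seq.count("G") + seq.count("C")
    return 2.0 * at + 4.0 * gc

def check_primer(seq: str) -> dict:
    """Apply the length, GC content, and 3'-end guidelines from Table 1."""
    seq = seq.upper()
    tail = seq[-5:]
    gc_in_tail = tail.count("G") + tail.count("C")
    return {
        "length_ok": 15 <= len(seq) <= 30,
        "gc_ok": 40.0 <= gc_percent(seq) <= 60.0,
        "gc_clamp": seq[-1] in "GC",   # ends with G or C
        "tail_ok": gc_in_tail <= 3,    # no more than 3 G/C in the last 5 bases
    }

def tm_matched(fwd: str, rev: str, max_diff_c: float = 5.0) -> bool:
    """True if the two estimated Tm values are within max_diff_c of each other."""
    return abs(wallace_tm(fwd) - wallace_tm(rev)) <= max_diff_c

# Hypothetical primer pair, for illustration only.
forward = "AGCTGACCTGAAGTTCATCTGC"
reverse = "ATGGTGCAGATGAACTTCAGG"

for name, primer in (("forward", forward), ("reverse", reverse)):
    print(name, check_primer(primer), f"Tm~{wallace_tm(primer):.0f}C")
print("Tm matched:", tm_matched(forward, reverse))
```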

DNA Polymerase

Role: DNA polymerase is the enzyme that catalyzes the template-directed synthesis of new DNA strands. It adds nucleotides to the 3' end of the annealed primer, extending the complementary strand in the 5' to 3' direction. [1] [3]

Key Considerations: The discovery of thermostable DNA polymerases, like Taq polymerase from Thermus aquaticus, was pivotal for automating PCR. [1] [3] Taq polymerase has a half-life of approximately 40 minutes at 95°C, allowing it to withstand the repeated high-temperature denaturation steps. [4] In a standard 50 µL reaction, 1–2.5 units of enzyme are typically used. [8] [4] However, Taq polymerase lacks proofreading (3'→5' exonuclease) activity, leading to a relatively high error rate, which can be a significant drawback for applications like cloning or sequencing. [3] For such applications, high-fidelity polymerases (e.g., Pfu, Q5) are preferred. [5] Furthermore, hot-start polymerases (inactivated by antibodies or chemical modifications until the initial denaturation step) are widely used to prevent non-specific amplification and primer-dimer formation that can occur during reaction setup at lower temperatures. [6] [7]

Deoxynucleoside Triphosphates (dNTPs)

Role: dNTPs (dATP, dCTP, dGTP, and dTTP) are the essential building blocks from which DNA polymerase synthesizes the new DNA strands. [3]

Key Considerations: The four dNTPs must be provided in equimolar concentrations to ensure faithful and efficient DNA synthesis. [4] [3] A final concentration of 0.2 mM for each dNTP is commonly used and is generally suitable for amplifying a wide range of targets. [4] [3] The concentration of dNTPs is intrinsically linked to the Mg²⁺ concentration, as Mg²⁺ binds to dNTPs in the reaction. [4] Excessively high dNTP concentrations can chelate all available Mg²⁺, inhibiting the polymerase, while concentrations that are too low will limit the yield of the PCR product. [4] For some high-fidelity applications, lower dNTP concentrations (0.01–0.05 mM) can be used to improve fidelity. [4] [3] dNTPs are labile and should be stored at -20°C in neutral pH buffers to prevent degradation. [3]
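The link between dNTP and Mg²⁺ concentrations can be made concrete with a back-of-the-envelope estimate. The sketch below assumes, as a deliberate simplification, that each dNTP molecule sequesters one Mg²⁺ ion and that nothing else in the reaction (EDTA, template, primers) binds magnesium. The function name is hypothetical.

```python
def free_mg_mM(total_mg_mM: float, dntp_each_mM: float, n_dntps: int = 4) -> float:
    """Estimate free Mg2+ assuming 1:1 binding of Mg2+ to every dNTP molecule."""
    total_dntp_mM = dntp_each_mM * n_dntps
    return max(total_mg_mM - total_dntp_mM, 0.0)

# 1.5 mM MgCl2 with the common 0.2 mM of each dNTP (0.8 mM total dNTP).
standard = free_mg_mM(1.5, 0.2)

# Same MgCl2 with an excessive 0.5 mM of each dNTP (2.0 mM total dNTP).
excess_dntp = free_mg_mM(1.5, 0.5)

print(f"standard reaction: ~{standard:.1f} mM free Mg2+")
print(f"excess dNTPs:      ~{excess_dntp:.1f} mM free Mg2+")
```

Under this simplified model, the second case leaves essentially no free Mg²⁺ for the polymerase, which is why raising the dNTP concentration generally requires raising MgCl₂ as well.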

Magnesium Ions (Mg²⁺)

Role: Magnesium ions act as an essential cofactor for DNA polymerase activity. [3] They facilitate the binding of the dNTPs to the enzyme's active site and are directly involved in the catalytic reaction for phosphodiester bond formation. [4] Additionally, Mg²⁺ helps stabilize the double-stranded structure of DNA and the primer-template complex. [4] [3]

Key Considerations: The concentration of Mg²⁺ is one of the most variable parameters in PCR optimization and has a profound impact on reaction efficiency and specificity. It is typically supplied as MgCl₂ in the reaction buffer. [2] A final concentration in the range of 1.5 to 2.5 mM is a common starting point, but optimal concentration must be determined empirically for each primer-template system. [2] [4] Table 2 outlines the effects of incorrect Mg²⁺ concentration. Too little Mg²⁺ results in low enzyme activity and low product yield, while too much Mg²⁺ can stabilize non-specific primer-template interactions, leading to spurious amplification, and can also increase the error rate of non-proofreading polymerases. [4]

Table 2: Effects of Mg²⁺ Concentration on PCR

| Mg²⁺ Level | Impact on PCR Reaction |
|---|---|
| Too Low | Reduced DNA polymerase activity; low or no yield of the desired product. [4] |
| Optimal | High specificity and yield; efficient primer annealing and strand elongation. [2] |
| Too High | Increased non-specific amplification; higher error rate in nucleotide incorporation. [4] |

The PCR Workflow and Component Interaction

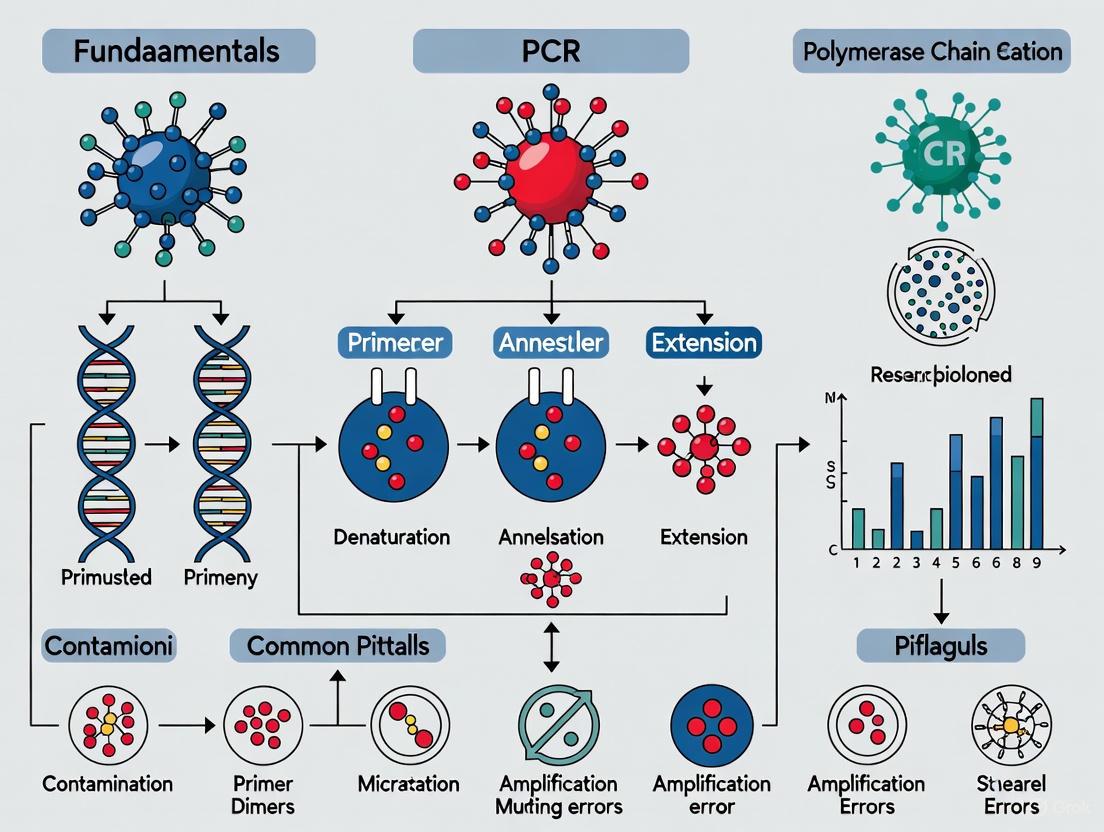

A standard PCR involves a cyclical three-step process: denaturation, annealing, and extension. The interaction of the five core components throughout these steps is illustrated in the workflow below.

Advanced PCR Strategies and Troubleshooting

Common Pitfalls and Research-Ready Solutions

Even with a sound theoretical understanding, PCR experiments can fail. The table below links common problems directly to their underlying causes and provides actionable solutions for researchers.

Table 3: Common PCR Problems and Research Solutions

| Problem | Potential Causes | Proven Solutions & Reagents |
|---|---|---|
| No/Low Yield | Degraded template, inefficient polymerase, low dNTP or Mg²⁺ concentration, incorrect annealing temperature. [6] | Quantify DNA (spectrophotometry or fluorometry); use high-processivity enzymes; titrate Mg²⁺ and dNTPs; optimize with gradient PCR. [6] [5] |
| Non-Specific Bands/Smearing | Low annealing temperature, excess enzyme/primers/Mg²⁺, contaminated primers. [6] | Increase annealing temperature; use hot-start polymerase; titrate primers/enzyme/Mg²⁺; design new primers; use additives like DMSO or BSA. [6] [4] [7] |
| Primer-Dimer | Primer 3'-end complementarity, overlong annealing, high primer concentration. [6] | Redesign primers; increase annealing temperature; use hot-start polymerase; reduce primer concentration. [6] [2] [4] |

Advanced Strategies for Challenging Applications

For demanding research applications, standard PCR conditions are often insufficient. Advanced strategies have been developed to overcome these challenges:

- Hot-Start PCR: This method employs an inactivated DNA polymerase (via antibodies, aptamers, or chemical modification) that is only activated at high temperatures. This prevents non-specific amplification and primer-dimer formation during reaction setup, significantly enhancing specificity. [7]

- Touchdown PCR: This cycling strategy starts with an annealing temperature higher than the primers' Tm and gradually decreases it in subsequent cycles. This ensures that the first, most critical amplifications are highly specific, favoring the desired target over non-specific products. [7]

- GC-Rich PCR: Amplifying GC-rich templates (>65%) is difficult due to stable secondary structures. The use of additives like DMSO, formamide, or betaine helps destabilize these structures. Combining these with highly processive polymerases and higher denaturation temperatures (e.g., 98°C) can enable successful amplification. [2] [7]
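The touchdown strategy described above amounts to a per-cycle annealing schedule. The sketch below generates one such schedule. The starting point of Tm + 5°C, the 1°C decrement per cycle, the final temperature of Tm − 5°C, and the 20 cycles at the final temperature are illustrative assumptions, not parameters prescribed by the cited sources.

```python
import math

def touchdown_schedule(start_c: float, final_c: float, step_c: float = 1.0,
                       cycles_per_step: int = 1, final_cycles: int = 20) -> list:
    """Return the annealing temperature for each cycle of a touchdown program."""
    n_steps = math.ceil((start_c - final_c) / step_c)
    temps = []
    for i in range(n_steps):
        # Stepwise decrease from the starting temperature toward the final one.
        temps.extend([round(start_c - i * step_c, 1)] * cycles_per_step)
    # Remaining cycles run at the final annealing temperature.
    temps.extend([final_c] * final_cycles)
    return temps

primer_tm_c = 60.0  # hypothetical primer Tm
schedule = touchdown_schedule(start_c=primer_tm_c + 5.0, final_c=primer_tm_c - 5.0)

print(f"{len(schedule)} cycles in total")
print("touchdown phase:", schedule[:10])
print("final phase temperature:", schedule[-1])
```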

The Scientist's Toolkit: Essential Research Reagents

Table 4: Essential Reagents for PCR Setup and Analysis

| Reagent / Kit | Function / Application |
|---|---|
| Hot-Start DNA Polymerase | Increases specificity by preventing activity until initial denaturation. Essential for multiplex and high-sensitivity PCR. [7] |
| dNTP Mix (Neutral pH) | Provides balanced, high-purity nucleotides for efficient and accurate DNA synthesis. [3] |
| MgCl₂ Solution | A separate, titratable source of Mg²⁺ for fine-tuning reaction stringency and yield. [2] [4] |
| PCR Additives (DMSO, BSA, Betaine) | Co-solvents and stabilizers to overcome challenges like high GC-content, secondary structures, or the presence of inhibitors. [2] [7] |
| Nucleic Acid Gel Electrophoresis System | Standard method for analyzing PCR amplicon size, quantity, and specificity post-amplification. [1] [8] |

The robust and reproducible amplification of DNA via PCR is a fundamental skill in the molecular scientist's arsenal. Success is not merely a function of following a protocol but hinges on a deep, mechanistic understanding of the five essential components—template DNA, primers, DNA polymerase, dNTPs, and Mg²⁺—and their dynamic interplay. By applying the principles of optimal primer design, meticulous reagent quantification, and strategic optimization outlined in this guide, researchers and drug development professionals can effectively troubleshoot failed experiments, adapt methods for specialized applications, and ensure the generation of high-quality, reliable data that underpins scientific discovery.

The Polymerase Chain Reaction (PCR) is one of the most pivotal techniques in modern molecular biology, enabling the exponential amplification of specific DNA sequences from minimal starting material. Since its development by Kary Mullis in 1983, PCR has become an indispensable tool across diverse fields including basic research, clinical diagnostics, and pharmaceutical development [9]. At the heart of this method lies the thermal cycler, an instrument that automates the precise temperature cycling required for DNA amplification. This technical guide provides a comprehensive examination of the PCR process through the lens of thermal cycler operation, offering laboratory professionals an in-depth understanding of the instrumentation, biochemical processes, and optimization strategies essential for experimental success.

The Core Mechanism of PCR: Three Essential Stages

The PCR process employs repeated cycles of three fundamental temperature-dependent steps to achieve exponential amplification of a target DNA sequence. Each stage performs a distinct biochemical function facilitated by the precise temperature control of the thermal cycler [9] [10].

Denaturation

The initial step of each PCR cycle involves denaturation, where the reaction mixture is heated to a high temperature, typically between 94-98°C [10]. At this elevated temperature, the hydrogen bonds between complementary base pairs in the double-stranded DNA template are broken, resulting in the separation of the DNA into two single strands. This process provides the necessary single-stranded templates for the subsequent priming and extension steps. Incomplete denaturation, often resulting from insufficient temperature or time at the denaturation temperature, can lead to poor amplification efficiency and yield [10].

Primer Annealing

Following denaturation, the temperature is rapidly lowered to the annealing temperature, typically within the range of 50-65°C [10]. During this stage, short, single-stranded DNA primers bind to their complementary sequences on the flanking regions of the target DNA segment. The specificity of this annealing process is critical for successful amplification, as it determines which DNA sequence will be amplified. The optimal annealing temperature is primer-specific and must be carefully optimized—too high a temperature prevents primer binding and reduces yield, while too low a temperature permits non-specific binding and amplification of unintended products [11] [10].

Extension

The final step involves extension or elongation, where the temperature is raised to the optimal working temperature for the DNA polymerase, typically 72°C for Taq polymerase [9] [10]. During this phase, the DNA polymerase binds to the primer-template complexes and synthesizes new complementary DNA strands by adding nucleotides to the 3' ends of the primers in the 5'→3' direction. The duration of the extension step is proportional to the length of the target DNA fragment, with common extension times of 1 minute per kilobase for standard polymerases [11].

These three steps constitute one PCR cycle, and the process is typically repeated for 25-35 cycles, which in theory can generate millions to billions of copies of the target DNA sequence [9].

Figure 1: The Three Fundamental Stages of PCR Amplification. This cyclic process of denaturation, annealing, and extension enables exponential amplification of target DNA sequences over 25-35 cycles.
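The scale of exponential amplification over 25-35 cycles is easy to check numerically. The sketch below uses the standard idealized model, in which each cycle multiplies the product by (1 + E), where E is the per-cycle efficiency (1.0 for perfect doubling). The 90% efficiency case is an illustrative assumption, and real reactions plateau as reagents are depleted.

```python
def amplification_fold(cycles: int, efficiency: float = 1.0) -> float:
    """Theoretical fold amplification after a number of cycles: (1 + E) ** cycles."""
    return (1.0 + efficiency) ** cycles

for n_cycles in (25, 30, 35):
    ideal = amplification_fold(n_cycles)
    realistic = amplification_fold(n_cycles, efficiency=0.9)
    print(f"{n_cycles} cycles: ideal {ideal:.2e}-fold, 90% efficiency {realistic:.2e}-fold")
```

Even a modest drop in per-cycle efficiency compounds into a several-fold difference in final yield, which is one reason small optimization gains matter.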

Thermal Cycler Technology: Instrumentation and Performance Metrics

The thermal cycler is far more than a simple programmable heating block; it is a sophisticated instrument that guarantees the precision, reproducibility, and efficiency of the PCR process. Understanding its components and performance characteristics is essential for optimal experimental design and execution [10].

Critical Instrument Components

- Peltier Elements: Solid-state heat pumps responsible for the rapid and precise heating and cooling of the reaction block, with performance measured by ramp rate (°C/second) [10].

- Thermal Block: The metal block (typically aluminum) that holds the reaction vessels. Its critical performance indicator is temperature uniformity across all wells, ideally within ±0.5°C [10].

- Heated Lid: Maintains temperature (usually 105°C) above the reaction liquids to prevent evaporation and condensation, ensuring reaction volume consistency [10].

- Interface/Software: Enables programming of complex protocols, including gradient functionality for rapid annealing temperature optimization [10].

Key Performance Metrics for Laboratory Applications

When selecting a thermal cycler for research applications, several critical performance metrics must be considered [10]:

Table 1: Essential Performance Metrics for Thermal Cyclers in Research Applications

| Performance Metric | Technical Specification | Impact on PCR Results |
|---|---|---|
| Temperature Accuracy | Typically within ±0.25°C of setpoint | Ensures each reaction step occurs at optimal temperature for enzyme activity and specificity |
| Temperature Uniformity | ±0.5°C across entire block | Prevents well-to-well variation in amplification efficiency and yield |
| Ramp Rate | Up to 6°C/second in advanced systems | Reduces overall run time and limits duration at suboptimal temperatures |
| Block Capacity and Flexibility | 96-well standard, with options for 384-well and dual blocks | Accommodates varying throughput needs and experimental scales |
| Calibration Requirements | Regular calibration with certified temperature probes | Maintains long-term accuracy and reproducibility for regulated environments |
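To see how ramp rate affects run time, the sketch below estimates the duration of a three-step program at two block ramp rates. The protocol (hold times, 58°C annealing, 30 cycles, 25°C starting temperature) is a hypothetical example, and the model assumes a single constant ramp rate for both heating and cooling, which real instruments only approximate.

```python
from dataclasses import dataclass

@dataclass
class Step:
    temp_c: float
    hold_s: float

def run_time_s(initial: Step, cycle: list, cycles: int, final: Step,
               ramp_c_per_s: float, start_temp_c: float = 25.0) -> float:
    """Total run time: hold times plus ramp time between consecutive setpoints."""
    program = [initial] + cycle * cycles + [final]
    total = 0.0
    current = start_temp_c
    for step in program:
        total += abs(step.temp_c - current) / ramp_c_per_s  # time spent ramping
        total += step.hold_s                                # time held at setpoint
        current = step.temp_c
    return total

# Hypothetical protocol for a ~1 kb amplicon (1 min/kb extension).
initial_denaturation = Step(95.0, 120.0)
three_step_cycle = [Step(95.0, 30.0), Step(58.0, 30.0), Step(72.0, 60.0)]
final_extension = Step(72.0, 300.0)

slow = run_time_s(initial_denaturation, three_step_cycle, 30, final_extension, ramp_c_per_s=3.0)
fast = run_time_s(initial_denaturation, three_step_cycle, 30, final_extension, ramp_c_per_s=6.0)

print(f"3 C/s block: {slow / 60:.1f} min")
print(f"6 C/s block: {fast / 60:.1f} min")
```

In this example the hold times dominate, so doubling the ramp rate shortens the run by only a few minutes. Faster ramping pays off most in protocols with short holds.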

The Scientist's Toolkit: Essential PCR Reagents and Their Functions

Successful PCR amplification requires careful formulation of reaction components, each serving specific functions in the amplification process [9] [11] [12].

Table 2: Essential Components of a PCR Reaction Master Mix

| Reagent Component | Typical Concentration | Critical Function | Optimization Notes |
|---|---|---|---|
| DNA Polymerase | 0.5-2.5 units per 50 μL reaction | Enzyme that synthesizes new DNA strands; thermostability essential | Choice depends on application: Taq for routine PCR, high-fidelity enzymes for cloning [11] |
| Primers (Forward & Reverse) | 0.1-0.5 μM each | Sequence-specific oligonucleotides that define amplification targets | Design critical: 18-25 bases, 40-60% GC content; avoid dimers and secondary structures [11] |
| dNTPs | 200 μM each | Nucleotide building blocks (dATP, dCTP, dGTP, dTTP) for DNA synthesis | Balanced concentrations essential to prevent misincorporation errors [12] |
| Magnesium Chloride (MgCl₂) | 1.5-2.5 mM | Cofactor for DNA polymerase; significantly impacts enzyme activity and fidelity | Concentration requires optimization; affects primer annealing and product specificity [12] |
| Reaction Buffer | 1X concentration | Provides optimal pH and ionic conditions for polymerase activity | Often includes additives like DMSO or betaine for challenging templates [12] |
| Template DNA | 1-100 ng (genomic DNA) | Source of target sequence to be amplified | Quality critical: assess via spectrophotometry (A260/280 >1.8); avoid contaminants [11] [12] |
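The final concentrations in Table 2 translate into pipetting volumes through the dilution relationship C₁V₁ = C₂V₂. The sketch below computes master mix volumes for a batch of reactions. The stock concentrations, the Mg-free 10X buffer, the 2 µL template volume, and the 10% overage are all hypothetical choices for illustration and should be replaced with the values for the reagents actually in use.

```python
# name: (stock concentration, desired final concentration), same units within a row.
# All stock values are hypothetical examples.
STOCKS = {
    "10X buffer (X)": (10.0, 1.0),
    "dNTP mix (mM each)": (10.0, 0.2),
    "MgCl2 (mM)": (25.0, 1.5),
    "forward primer (uM)": (10.0, 0.5),
    "reverse primer (uM)": (10.0, 0.5),
    "polymerase (U/uL)": (5.0, 0.025),  # 1.25 U per 50 uL reaction
}

def master_mix(reaction_ul: float = 50.0, n_reactions: int = 1,
               template_ul: float = 2.0, overage: float = 0.10) -> dict:
    """Volumes (uL) of each master mix component; template is added per tube."""
    scale = n_reactions * (1.0 + overage)  # extra volume covers pipetting losses
    volumes = {
        name: final / stock * reaction_ul * scale  # V1 = C2 * V2 / C1
        for name, (stock, final) in STOCKS.items()
    }
    water = (reaction_ul - template_ul) * scale - sum(volumes.values())
    if water < 0:
        raise ValueError("Components exceed the reaction volume; use more concentrated stocks.")
    volumes["water"] = water
    return volumes

mix = master_mix(reaction_ul=50.0, n_reactions=10)
for component, volume_ul in mix.items():
    print(f"{component:22s} {volume_ul:7.2f} uL")
print(f"dispense {50.0 - 2.0:.0f} uL of mix per tube, then add 2 uL template")
```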

Advanced PCR Applications and Corresponding Thermal Cycler Requirements

The fundamental PCR process has been adapted for specialized applications, each with distinct thermal cycling requirements and instrumental considerations [10].

Quantitative PCR (qPCR)

qPCR incorporates fluorescent reporters to monitor amplification in real-time, enabling precise quantification of initial target concentration. This method requires thermal cyclers with integrated optical systems, including excitation light sources and fluorescence detectors. The instruments must provide exceptional temperature stability and uniformity to ensure consistent fluorescence measurements at each cycle [10]. Data analysis involves determining the cycle threshold (Ct), the cycle number at which fluorescence exceeds a defined threshold. Ct is inversely related to the logarithm of the initial target concentration, so a lower Ct indicates more starting template [10] [13].
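The relationship between Ct and starting quantity is usually captured with a standard curve: Ct is regressed on log₁₀ of known input quantities, the slope gives the amplification efficiency via E = 10^(−1/slope) − 1, and unknowns are read off the fitted line. The sketch below illustrates this with an invented dilution series. The data points and function names are hypothetical, not measurements from any cited study.

```python
import math

def fit_standard_curve(quantities: list, cts: list) -> tuple:
    """Least-squares fit of Ct = intercept + slope * log10(quantity)."""
    xs = [math.log10(q) for q in quantities]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(cts) / n
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, cts))
             / sum((x - mean_x) ** 2 for x in xs))
    intercept = mean_y - slope * mean_x
    return slope, intercept

def efficiency(slope: float) -> float:
    """Amplification efficiency from the slope; 1.0 means perfect doubling."""
    return 10 ** (-1.0 / slope) - 1.0

def quantify(ct: float, slope: float, intercept: float) -> float:
    """Estimate the starting quantity of an unknown from its Ct."""
    return 10 ** ((ct - intercept) / slope)

# Invented 10-fold dilution series (copies per reaction) and Ct values.
standards = [1e6, 1e5, 1e4, 1e3, 1e2]
standard_cts = [15.00, 18.32, 21.64, 24.96, 28.28]

slope, intercept = fit_standard_curve(standards, standard_cts)
print(f"slope {slope:.2f}, intercept {intercept:.2f}, efficiency {efficiency(slope):.1%}")
print(f"unknown with Ct 23.3: ~{quantify(23.3, slope, intercept):.0f} copies")
```

A slope near −3.32 corresponds to roughly 100% efficiency, and each 10-fold dilution shifts Ct by about 3.3 cycles.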

Reverse Transcription PCR (RT-PCR)

Designed for RNA analysis, RT-PCR begins with a reverse transcription step where reverse transcriptase synthesizes complementary DNA (cDNA) from RNA templates. Thermal cyclers for this application must accommodate an extended initial incubation at lower temperatures (typically 37-50°C) for cDNA synthesis before transitioning to standard PCR cycling [10].

Digital PCR (dPCR)

dPCR represents an advanced approach for absolute nucleic acid quantification without standard curves. The method works by partitioning samples into thousands of individual reactions, with thermal cyclers specifically designed for endpoint detection and analysis. Systems like the Bio-Rad QX200 Droplet Digital system and Qiagen QIAcuity employ different partitioning technologies (water-oil emulsions vs. nanoplate partitions) but both require precise thermal control for accurate absolute quantification [14].
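Absolute quantification in dPCR is commonly derived from the fraction of positive partitions using a Poisson correction, since some partitions contain more than one target molecule. The sketch below shows that calculation. The partition counts and the 0.85 nL partition volume are illustrative assumptions, and the correct volume depends on the platform and should be taken from the instrument documentation.

```python
import math

def dpcr_copies_per_ul(positive: int, total: int, partition_volume_nl: float) -> float:
    """Target concentration (copies/uL of reaction) from partition counts."""
    if not 0 <= positive < total:
        raise ValueError("positive must be >= 0 and < total (saturated runs cannot be quantified)")
    fraction_positive = positive / total
    # Poisson correction: mean copies per partition.
    copies_per_partition = -math.log(1.0 - fraction_positive)
    partition_volume_ul = partition_volume_nl * 1e-3
    return copies_per_partition / partition_volume_ul

# Hypothetical run: 5,000 positive partitions out of 20,000, assumed 0.85 nL each.
concentration = dpcr_copies_per_ul(positive=5_000, total=20_000, partition_volume_nl=0.85)
print(f"~{concentration:.0f} copies per uL of reaction")
```

Without the correction, counting each positive partition as one copy would underestimate the concentration, and the error grows as the positive fraction rises.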

Optimization Strategies and Troubleshooting Common PCR Issues

Even experienced researchers encounter PCR failures, often stemming from subtle deviations in protocol or reaction components. Systematic optimization and troubleshooting are essential for reliable results [11] [12].

Annealing Temperature Optimization

The annealing temperature is one of the most critical variables requiring optimization. Using an annealing temperature gradient function, available on many modern thermal cyclers, represents the most efficient approach to establish ideal conditions. The recommended starting point is 5°C below the primer melting temperature (Tm), with empirical testing across a range to identify the temperature providing maximum specificity and yield [11] [12].
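A gradient run can be planned by listing the temperature assigned to each block column. The sketch below centers a gradient on Tm − 5°C and spreads it linearly across 12 columns. The 10°C span, the 12-column layout, and the linear spacing are assumptions made for illustration. Actual column temperatures are instrument-specific and should be read from the cycler's software.

```python
def gradient_temps(primer_tm_c: float, columns: int = 12,
                   span_c: float = 10.0, offset_c: float = 5.0) -> list:
    """Evenly spaced annealing temperatures centered on (Tm - offset_c)."""
    center = primer_tm_c - offset_c
    low = center - span_c / 2.0
    step = span_c / (columns - 1)
    return [round(low + i * step, 1) for i in range(columns)]

# Hypothetical primer pair with a Tm of 62 C.
temps = gradient_temps(primer_tm_c=62.0)
for column, temp_c in enumerate(temps, start=1):
    print(f"column {column:2d}: {temp_c:.1f} C")
```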

Addressing Amplification Challenges

- GC-Rich Templates: Stable secondary structures in GC-rich regions (>60% GC content) can hinder amplification. Additives including DMSO (5-20%), formamide (5-20%), or betaine (1-3 M) can help melt these structures and improve results [12].

- AT-Rich Templates: For AT-rich sequences, use longer primers (>22 bp) and consider two-step PCR (combining annealing and extension). Additional MgCl₂ (up to 10 mM) may also enhance amplification [12].

- Long Amplicons: Amplification of fragments >5 kb requires polymerases with proofreading activity and longer extension times. Specialized enzyme blends like TAKARA LA Taq are specifically designed for long-range PCR [12].

Common PCR Pitfalls and Solutions

- Contamination Issues: Use dedicated equipment, filter tips, and separate work areas for PCR setup. Include negative controls (no-template controls) to detect contamination [11].

- Non-specific Amplification: Optimize annealing temperature, reduce template concentration, use hot-start polymerases, or adjust magnesium concentration [11] [12].

- Poor Yield: Check reagent integrity, ensure sufficient cycle numbers, verify primer quality, and optimize template quality and concentration [11].

- No Amplification: Verify polymerase activity, check primer design and specificity, ensure adequate template quality and concentration, and confirm thermal cycler calibration [11] [12].

The thermal cycler stands as a cornerstone technology in molecular biology, transforming the theoretical process of DNA amplification into a robust, reproducible, and automated laboratory technique. Its precision in orchestrating the delicate temperature transitions between denaturation, annealing, and extension directly determines the specificity, efficiency, and yield of PCR amplification. For research and drug development professionals, a comprehensive understanding of thermal cycler operation, performance metrics, and optimization strategies is not merely technical detail but fundamental knowledge required for experimental success. As PCR methodologies continue to evolve with emerging technologies including digital PCR, microfluidics, and rapid cycling systems, the underlying principles of precise thermal control remain constant. Mastery of these principles enables researchers to troubleshoot experimental challenges, validate methodological approaches, and generate reliable, reproducible data that advances scientific discovery and therapeutic development.

In the Polymerase Chain Reaction (PCR), the DNA polymerase enzyme serves as the core engine, catalyzing the synthesis of new DNA strands. The selection of an appropriate DNA polymerase is a critical decision that directly determines the success, accuracy, and efficiency of amplification. Not all polymerases are created equal; they possess distinct characteristics tailored for different applications. For researchers, scientists, and drug development professionals, understanding these differences, particularly in fidelity, thermostability, and specificity, is fundamental to experimental design. This guide provides an in-depth technical examination of DNA polymerases, focusing on the key differentiators between common enzymes like Taq, Pfu, and Hot-Start variants, and their impact on overcoming common PCR pitfalls. Selecting the wrong polymerase can lead to a cascade of problems, from misincorporated mutations in cloned sequences to complete amplification failure, underscoring the necessity of an informed choice [15] [16].

Core Characteristics of DNA Polymerases

The performance of a DNA polymerase in PCR is defined by four key properties: fidelity, thermostability, specificity, and processivity. A thorough understanding of these characteristics is a prerequisite for optimal enzyme selection.

- Fidelity: Fidelity refers to the accuracy of DNA synthesis, quantified as the error rate (number of misincorporated nucleotides per base synthesized per duplication event). DNA polymerases with proofreading activity (3'→5' exonuclease activity) can recognize and excise misincorporated nucleotides, resulting in significantly higher fidelity. Error rates are typically expressed in scientific notation (e.g., 10⁻⁵), where a lower value (a more negative exponent, such as 10⁻⁶ versus 10⁻⁵) indicates higher accuracy [16].

- Thermostability: This is the ability of the enzyme to retain activity after prolonged exposure to the high temperatures required for PCR denaturation steps (typically ~95°C). Enzymes derived from hyperthermophilic organisms, such as Pyrococcus furiosus, exhibit superior thermostability compared to those from Thermus aquaticus [16].

- Specificity: Specificity describes the enzyme's ability to amplify only the intended target sequence, minimizing non-specific products and primer-dimers. Hot-Start polymerases are engineered for enhanced specificity by remaining inactive until a high-temperature activation step, preventing spurious amplification during reaction setup [16] [17].

- Processivity: Processivity is the number of nucleotides a polymerase can incorporate per single binding event. A highly processive enzyme can more efficiently amplify long targets, GC-rich sequences, and templates with complex secondary structures [16].

Table 1: Defining Core Characteristics of DNA Polymerases

| Characteristic | Definition | Impact on PCR | Enzyme Example |
|---|---|---|---|
| Fidelity | Accuracy of nucleotide incorporation during DNA synthesis. | Critical for cloning, sequencing, and mutagenesis; low fidelity introduces mutations. | Pfu, Q5, Phusion [18] [16] |
| Thermostability | Resistance to irreversible inactivation at high temperatures (e.g., 95°C). | Essential for PCR; higher thermostability allows for more cycles and robust amplification. | Pfu (hyperthermostable) [16] |
| Specificity | Ability to amplify only the intended target sequence. | Reduces background and non-specific amplification, leading to cleaner results. | Hot-Start Taq [16] [17] |
| Processivity | Number of nucleotides added per enzyme-binding event. | Important for amplifying long fragments and difficult templates (e.g., high GC%). | Engineered polymerases [16] |

Quantitative Fidelity Comparison of Common DNA Polymerases

Fidelity is often the primary criterion for enzyme selection in applications requiring high accuracy. The error rates of different polymerases can vary by orders of magnitude. The following table synthesizes quantitative fidelity data from direct sequencing and manufacturer specifications, providing a clear comparison for researchers.

Table 2: Error Rate and Fidelity of Common DNA Polymerases

| DNA Polymerase | Proofreading (3'→5' Exo) | Published Error Rate (Errors/bp/duplication) | Fidelity Relative to Taq | Resulting Ends |
|---|---|---|---|---|
| Taq | No | 1.3–20 × 10⁻⁵ [18] | 1x [15] | 3'A Overhang |
| AccuPrime Taq (HF) | No | Not Available | ~9x better [15] | 3'A Overhang |
| OneTaq | Yes (Low) | Not Available | ~2x better [18] | 3'A/Blunt |
| Pfu | Yes | 1–2 × 10⁻⁶ [15] | ~6-10x better [15] | Blunt |
| Phusion | Yes | 4 × 10⁻⁷ (HF Buffer) [15] | ~50x better [15] [18] | Blunt |
| Q5 | Yes | Not Available | ~280x better [18] | Blunt |

The data demonstrate a stark contrast between non-proofreading and proofreading enzymes. Standard Taq polymerase has an error rate on the order of 10⁻⁵ to 10⁻⁴, whereas Pfu and Phusion have published error rates in the 10⁻⁶ to 10⁻⁷ range. Across the proofreading enzymes in Table 2, the reported fidelity improvement over Taq spans roughly 6-fold (Pfu) to about 280-fold (Q5) [15] [18]. This translates to a significantly lower probability of introducing mutations during amplification, which is indispensable for downstream applications like cloning and functional analysis.
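The practical consequence of these error rates can be estimated by asking what fraction of product molecules carries at least one mutation. The sketch below uses a simplified model in which every base has an independent chance of error at each duplication, so the error-free fraction is (1 − error rate) raised to the power (amplicon length × duplications). The 1 kb amplicon and 20 effective duplications are illustrative assumptions. The error rates are the lower Taq value and the Phusion value from Table 2.

```python
def fraction_with_errors(error_rate: float, amplicon_bp: int, duplications: float) -> float:
    """Approximate fraction of product molecules with at least one error."""
    error_free = (1.0 - error_rate) ** (amplicon_bp * duplications)
    return 1.0 - error_free

AMPLICON_BP = 1_000   # hypothetical amplicon length
DUPLICATIONS = 20     # hypothetical number of effective template doublings

taq_fraction = fraction_with_errors(1.3e-5, AMPLICON_BP, DUPLICATIONS)
phusion_fraction = fraction_with_errors(4e-7, AMPLICON_BP, DUPLICATIONS)

print(f"Taq:     ~{taq_fraction:.1%} of products carry at least one error")
print(f"Phusion: ~{phusion_fraction:.1%} of products carry at least one error")
```

Under these assumptions, a substantial fraction of Taq-amplified 1 kb clones would need to be discarded after sequencing, while nearly all high-fidelity products are error-free.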

Experimental Protocols for Determining Fidelity

The fidelity values cited in manufacturer documentation and research papers are derived from rigorous experimental assays. Understanding these methodologies is crucial for critically evaluating the reported data.

LacZ-Based Forward Mutation Assay

This classical method involves amplifying a region of the lacZ gene (which encodes β-galactosidase) and cloning the products into a vector. The plasmid is then transformed into E. coli, and colonies are screened using a colorimetric assay.

- Functional lacZ: Forms blue colonies.

- Mutated lacZ: Loses function and forms white colonies.

The error rate is calculated based on the number of white colonies, and the mutated sequences are often analyzed to determine the types of mutations (mutational spectrum) the polymerase tends to make [15] [16].

Direct Sequencing of Cloned PCR Products

With the reduced cost of DNA sequencing, direct sequencing has become a powerful and straightforward method for fidelity determination.

- Methodology: A target sequence (or multiple diverse targets) is amplified by PCR. The products are cloned, and multiple individual clones are Sanger sequenced. The sequences are then aligned and compared to the known original template sequence to identify any mutations introduced during PCR [15].

- Advantage: This method allows for the interrogation of a vast DNA sequence space, as it is not limited to a single reporter gene and can reveal how sequence context influences error rate [15].
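The sequencing-based calculation can be sketched as follows: the number of observed mutations is divided by the total number of bases sequenced and by the number of template duplications during PCR, with duplications estimated as log₂ of the fold amplification. This follows the general form used in fidelity studies, but the function names and the example numbers below are hypothetical.

```python
import math

def template_duplications(input_ng: float, output_ng: float) -> float:
    """Number of doublings needed to go from input to output target mass."""
    return math.log2(output_ng / input_ng)

def error_rate(mutations: int, clones: int, bp_per_clone: int, duplications: float) -> float:
    """Errors per base pair per duplication from sequenced clones."""
    total_bp_sequenced = clones * bp_per_clone
    return mutations / (total_bp_sequenced * duplications)

# Hypothetical experiment: 1 ng of target amplified to 1,024 ng,
# then 96 clones of a 1,000 bp insert sequenced, with 12 mutations found.
doublings = template_duplications(input_ng=1.0, output_ng=1024.0)
rate = error_rate(mutations=12, clones=96, bp_per_clone=1_000, duplications=doublings)

print(f"{doublings:.0f} duplications, error rate ~{rate:.2e} errors/bp/duplication")
```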

Next-Generation Sequencing (NGS)

NGS offers the most comprehensive approach for fidelity measurement.

- Workflow: PCR amplicons are prepared and directly subjected to NGS, generating millions of sequence reads. Bioinformatic analysis compares these reads to the reference template, providing a highly accurate and detailed profile of the polymerase's error rate and mutational spectrum across the entire amplicon [16].

The following diagram illustrates the logical workflow for selecting a fidelity assay based on experimental goals and resources.

A Practical Guide to DNA Polymerase Selection

Choosing the correct polymerase requires matching the enzyme's properties to the experimental application. The following table provides a consolidated guide to streamline this decision-making process.

Table 3: Polymerase Selection Guide by Application

| Application | Recommended Polymerase Type | Key Rationale | Specific Examples |

|---|---|---|---|

| Routine PCR/Genotyping | Standard or Hot-Start Taq | Cost-effective, robust for simple amplicons; Hot-Start improves specificity. | GoTaq G2, Hot Start Taq [17] |

| Cloning & Site-Directed Mutagenesis | High-Fidelity Proofreading | Low error rate is critical to avoid introducing mutations into the cloned insert. | Q5, Phusion, Pfu [18] [16] [17] |

| Long-Range PCR (>5 kb) | High-Processivity Blends | Engineered for high processivity and stability to synthesize long fragments. | LongAmp Taq, GoTaq Long PCR Master Mix [18] [17] |

| Rapid Colony PCR | Master Mix Formulations | Pre-mixed, convenient, and often contain dyes for direct gel loading. | Various Taq Master Mixes [17] |

| Amplification from Difficult Templates (GC-rich, inhibitors) | High-Processivity / Specialty | High affinity for templates; some are formulated with enhancers to overcome inhibitors. | Platinum II Taq, GC-rich specific kits [16] [19] |

The Hot-Start Advantage

Hot-Start technology is a modification that inhibits the polymerase's activity at room temperature. This is typically achieved through antibody-based inhibition or chemical modification. By preventing activity during reaction setup, Hot-Start polymerases drastically reduce the amplification of non-specific targets and primer-dimers that form at lower temperatures, thereby increasing the yield of the desired product and simplifying troubleshooting [16] [17]. This makes them a superior choice for most applications, especially multiplex PCR and high-throughput setups.

Troubleshooting Common PCR Issues Related to Polymerase Selection

Many common PCR problems can be traced back to a suboptimal choice of DNA polymerase or reaction conditions. The table below outlines key issues, their polymerase-related causes, and evidence-based solutions.

Table 4: Troubleshooting PCR Problems via Polymerase Selection and Optimization

| Observation | Possible Polymerase-Related Cause | Recommended Solution |

|---|---|---|

| No Amplification | Enzyme inhibited by sample contaminants; poor thermostability. | Use a polymerase with high processivity and inhibitor tolerance; verify enzyme thermostability [16] [19]. |

| Non-Specific Bands/High Background | Non-Hot-Start polymerase activity during setup; annealing temperature too low. | Switch to a Hot-Start polymerase; increase annealing temperature [16] [19] [20]. |

| Low Fidelity/Sequence Errors | Use of a low-fidelity polymerase (e.g., Taq); excessive Mg²⁺; too many cycles. | Use a high-fidelity polymerase (e.g., Q5, Pfu); optimize Mg²⁺ concentration; reduce cycle number [19] [20]. |

| Failure to Amplify Long Templates | Low-processivity enzyme; insufficient extension time. | Use a long-range PCR enzyme blend; increase extension time [19] [17]. |

| Primer-Dimer Formation | Non-Hot-Start polymerase extends complementary 3' primer ends during setup. | Switch to a Hot-Start polymerase; optimize primer design and concentration [6] [19]. |

The Scientist's Toolkit: Essential Reagents for PCR and Fidelity Analysis

- High-Fidelity DNA Polymerase (e.g., Q5, Phusion): An engineered enzyme with high intrinsic fidelity and proofreading activity, essential for applications where sequence accuracy is paramount [18] [16].

- Hot-Start DNA Polymerase (e.g., Hot Start Taq, Platinum II Taq): A modified enzyme that remains inactive until a high-temperature step, used to suppress non-specific amplification and primer-dimer formation [16] [17].

- dNTP Mix: A solution containing equimolar concentrations of dATP, dCTP, dGTP, and dTTP. Unbalanced dNTP concentrations can increase the error rate of the polymerase [19] [20].

- Mg²⁺ Solution (MgCl₂ or MgSO₄): A critical cofactor for DNA polymerase activity. Its concentration must be optimized, as excess Mg²⁺ can reduce fidelity and promote non-specific binding [2] [19] [20].

- PCR Enhancers/Additives (e.g., DMSO, Betaine, BSA): Reagents used to improve amplification efficiency of difficult templates (e.g., GC-rich) or to mitigate the effects of PCR inhibitors present in some sample types [2] [6] [19].

- Cloning Vector & Competent Cells: Required for fidelity assays like the lacZ screen or direct sequencing of clones, allowing for the isolation and analysis of individual PCR products [15] [16].

The selection of a DNA polymerase is a fundamental step in PCR experimental design that directly influences the reliability and interpretation of results. Taq polymerase remains a robust and cost-effective choice for routine amplification where ultimate fidelity is not critical. However, for applications demanding high accuracy—such as cloning, sequencing, and mutagenesis—proofreading enzymes like Pfu, Phusion, and Q5 are indispensable due to their error rates that are orders of magnitude lower. Furthermore, the adoption of Hot-Start technology, regardless of fidelity needs, provides a straightforward path to enhanced specificity and yield. By aligning the core characteristics of fidelity, thermostability, specificity, and processivity with the experimental goals, researchers can strategically select the optimal enzyme, thereby avoiding common pitfalls and ensuring the success of their molecular biology workflows.

In the polymerase chain reaction (PCR), template DNA is the genetic material that contains the target sequence to be amplified. It serves as the essential blueprint for DNA polymerase to synthesize new DNA strands. The quality, quantity, and source of the template DNA are foundational factors that directly determine the success or failure of any PCR experiment [1]. Effective utilization of nucleic acids in molecular biology applications—from genetic engineering and drug development to diagnostics and therapeutics—requires precise analysis and manipulation, making the understanding of template DNA paramount [21]. This guide provides an in-depth examination of template DNA sources, optimal input amounts, and rigorous quality assessment methodologies, framed within the critical context of PCR fundamentals and common experimental pitfalls.

Template DNA for PCR can originate from a diverse array of biological materials. The composition and complexity of the DNA source significantly influence the optimal input amount for amplification [4].

- Genomic DNA (gDNA): Extracted from the nucleus of cells, gDNA is a complex template containing the entire genetic complement of an organism. It is commonly isolated from blood, tissue, or cultured cells [22]. Due to its complexity, it typically requires a higher input amount, often in the range of 5–50 ng per 50 µL reaction [4].

- Complementary DNA (cDNA): Synthesized from messenger RNA (mRNA) using the enzyme reverse transcriptase, cDNA represents the expressed gene profile of a cell at a specific time [1]. It is the template of choice for gene expression analysis via reverse transcription PCR (RT-PCR).

- Plasmid DNA: These small, circular, extrachromosomal DNA molecules are commonly used in molecular cloning and recombinant protein expression. Because of their simplicity and high copy number, they require significantly less template, often only 0.1–1 ng per 50 µL reaction [4].

- PCR Products: Previously amplified DNA fragments can be re-amplified. However, unpurified products may contain carryover primers, dNTPs, and salts that can inhibit subsequent reactions. It is generally recommended to purify or dilute these products before re-amplification [4].

Saliva has emerged as a viable and non-invasive source of human DNA, particularly useful in forensic science and pediatric or geriatric populations where blood collection is challenging [23]. Saliva contains exfoliated buccal epithelial cells, with one study reporting a mean DNA yield of 48.4 ± 8.2 μg/mL from saliva samples, which was sufficient for successful Short Tandem Repeat (STR) amplification in 75% of samples despite some protein contamination [23]. This highlights that with proper handling, even suboptimal samples can yield usable DNA for PCR.

Optimal Template Quantity and Calculation

Using the correct amount of template DNA is critical for reaction success. Insufficient template leads to weak or no amplification, while excess template can increase nonspecific amplification and deplete reagents [24] [4].

Recommended Quantities by Template Type

The table below summarizes the optimal template quantities for a standard 50 µL PCR reaction.

Table 1: Optimal Template DNA Quantities for a 50 µL PCR Reaction

| Template Type | Recommended Quantity | Notes | Key Considerations |

|---|---|---|---|

| Plasmid DNA | 0.1–1 ng | Low complexity, high copy number. | Higher amounts can promote nonspecific binding. |

| Genomic DNA | 1 ng–1 µg [25]; 5–50 ng is typical [4] | High complexity, single or low-copy targets. | Requires more template due to the large genome and single-copy target genes. |

| cDNA | 1–100 ng | Derived from mRNA; depends on abundance of target transcript. | Varies significantly with the expression level of the gene of interest. |

| PCR Products | Variable; 1–5 µL of a diluted (1:10 to 1:100) prior reaction. | Purification is recommended to remove inhibitors from the first PCR. | Unpurified products carry over reagents that can inhibit the new reaction. |

Template Copy Number Calculation

In theory, a single molecule of DNA is sufficient for amplification under ideal conditions [4]. In practice, however, amplification efficiency depends on reaction components and polymerase sensitivity. For absolute quantification, especially with gDNA, template amount is sometimes expressed as copy number. The copy number can be calculated using Avogadro's constant (L = 6.022 x 10²³ molecules/mol) and the molar mass of the DNA:

Copy number = L x (mass of DNA input (g) / molar mass of DNA (g/mol))

The molar mass of a double-stranded DNA template is calculated as (number of base pairs) x (660 g/mol/bp). Online tools are available to simplify this calculation, ensuring that a sufficient number of target molecules are present in the reaction to allow for detectable amplification within a reasonable number of cycles [4].
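The calculation above can be sketched in a few lines of Python. The function name and the example inputs (a 5 kb plasmid and a haploid human genome of roughly 3.1 × 10⁹ bp) are assumptions chosen for illustration; the constants are the ones given in the text.

```python
AVOGADRO = 6.022e23      # molecules per mole (L in the formula above)
BP_MOLAR_MASS = 660.0    # g/mol per base pair of double-stranded DNA

def dsdna_copy_number(mass_ng, length_bp):
    """Copy number = L x (mass in g / molar mass in g/mol)."""
    mass_g = mass_ng * 1e-9
    molar_mass = length_bp * BP_MOLAR_MASS
    return AVOGADRO * mass_g / molar_mass

# 1 ng of a hypothetical 5 kb plasmid
plasmid_copies = dsdna_copy_number(1.0, 5_000)

# 10 ng of human gDNA, assuming a haploid genome of ~3.1e9 bp
genome_copies = dsdna_copy_number(10.0, 3.1e9)

print(f"Plasmid: {plasmid_copies:.2e} copies")
print(f"Genomic: {genome_copies:.0f} haploid genome copies")
```

The several orders of magnitude between the two results illustrate why plasmid templates need far less input mass than genomic DNA.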

Assessment of Template DNA Quality

The purity and integrity of template DNA are as critical as its concentration. Contaminants and degradation are major causes of PCR failure.

Quality Assessment Methods

Several techniques are employed to evaluate DNA quality, each with distinct strengths and limitations.

Table 2: Methods for Assessing DNA Quantity and Quality

| Method | Principle | Information Provided | Key Advantages | Key Limitations |

|---|---|---|---|---|

| UV-Vis Spectrophotometry [21] | Measures UV light absorption at 260 nm (nucleic acids), 280 nm (proteins), and 230 nm (salts, organics). | Concentration and purity ratios (A260/A280, A260/A230). | Quick, simple, and requires small sample volumes. | Cannot differentiate between DNA, RNA, and free nucleotides; inaccurate with contaminants. |

| Fluorometry [21] | Fluorescent dyes (e.g., PicoGreen) bind specifically to dsDNA and emit light upon excitation. | Highly specific and sensitive concentration, even for low-abundance samples. | Specific for dsDNA, more sensitive than UV, less affected by contaminants. | Requires specific dyes and equipment; results depend on calibration standards. |

| Agarose Gel Electrophoresis [21] | Separates DNA molecules by size in an electric field within an agarose matrix. | Visual assessment of DNA integrity (degradation) and approximate size and quantity. | Directly visualizes integrity; confirms high molecular weight for gDNA. | Not truly quantitative; time-consuming; requires more sample. |

Interpreting Purity Ratios

Spectrophotometric ratios are key indicators of sample purity:

- A260/A280 Ratio: Assesses protein contamination.

- A260/A230 Ratio: Assesses contamination by salts, carbohydrates, or organic compounds like guanidine or phenol.

It is important to note that the pH and ionic strength of the solvent can affect these ratios, and the blank solution should match the sample buffer [21]. A study on salivary DNA found that while only 45% of samples had optimal A260/A280 ratios (1.6-2.0), 75% still produced successful STR amplifications, indicating that slightly impure DNA can sometimes be used effectively [23].

Detailed Experimental Protocols

Protocol for DNA Extraction from Saliva and Blood

This protocol is adapted from a study comparing DNA yield from saliva and blood [23].

Sample Collection:

- Saliva: Ask subjects to spit 2 mL into a sterile disposable Petri dish. Transfer the saliva to a sterile vial using a sterile pipette.

- Blood: Draw 5 mL of blood using a sterile syringe and store it in a sterile Ethylenediaminetetraacetic acid (EDTA) vial to prevent coagulation.

- Store all samples at -20°C until DNA extraction.

DNA Extraction via Phenol-Chloroform Method:

- Lyse cells in the sample using a buffer containing a detergent like SDS or Triton X-100 [22].

- Add Proteinase K to digest proteins and inactivate nucleases [22].

- Add EDTA to chelate Mg²⁺, an essential cofactor for DNases, thereby protecting DNA from degradation [22].

- Add a mixture of phenol and chloroform to separate DNA from cellular debris. Centrifuge to partition the mixture: the upper aqueous phase contains DNA, the interphase contains denatured proteins, and the lower organic phase contains lipids.

- Carefully transfer the aqueous phase to a new tube.

- Precipitate the DNA by adding cold ethanol or isopropanol.

- Wash the DNA pellet with 70% ethanol to remove residual salts.

- Air-dry the pellet and resuspend it in sterile water or TE buffer.

DNA Quantification and Purity Assessment:

- Dilute 5 µL of the extracted DNA in 995 µL of sterile water (dilution factor = 200).

- Use a UV-Vis spectrophotometer to measure the absorbance at 260 nm, 280 nm, and 230 nm.

- Calculate the DNA concentration and the A260/A280 and A260/A230 ratios as described in previous sections.
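The quantification step can be sketched as follows. This is a minimal example that assumes the widely used conversion of 1.0 A260 unit ≈ 50 ng/µL of double-stranded DNA at a 1 cm path length, which is not stated in the protocol above; the absorbance readings are hypothetical, and the 1.6–2.0 window is the A260/A280 range quoted from the salivary DNA study.

```python
# Assumption: A260 of 1.0 corresponds to ~50 ng/uL dsDNA (1 cm path length).
DSDNA_NG_PER_UL_PER_A260 = 50.0

def dna_concentration_ng_per_ul(a260, dilution_factor=200.0):
    """Concentration of the undiluted stock from the diluted A260 reading."""
    return a260 * DSDNA_NG_PER_UL_PER_A260 * dilution_factor

def purity_ratios(a260, a280, a230):
    """Return (A260/A280, A260/A230)."""
    return a260 / a280, a260 / a230

# Hypothetical readings from the 1:200 dilution described above
a260, a280, a230 = 0.05, 0.027, 0.024

conc = dna_concentration_ng_per_ul(a260)
r280, r230 = purity_ratios(a260, a280, a230)
in_range = 1.6 <= r280 <= 2.0   # range reported in the salivary DNA study

print(f"Stock concentration: {conc:.0f} ng/uL")
print(f"A260/A280 = {r280:.2f}, A260/A230 = {r230:.2f}, in range: {in_range}")
```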

Workflow for Template DNA Assessment

The following diagram illustrates the logical workflow for preparing and assessing template DNA prior to PCR.

The Scientist's Toolkit: Essential Reagents for DNA Analysis

Successful DNA analysis relies on a suite of specialized reagents. The table below details key materials and their functions.

Table 3: Essential Reagents for DNA Extraction and Quality Assessment

| Reagent / Material | Function | Key Considerations |

|---|---|---|

| Proteinase K [22] | A broad-spectrum serine protease that digests proteins and inactivates nucleases during cell lysis. | Essential for breaking down histones and other DNA-associated proteins. |

| EDTA (Ethylenediaminetetraacetic acid) [22] | A chelating agent that binds divalent metal ions like Mg²⁺ and Ca²⁺. | Inactivates DNases by removing their essential cofactor (Mg²⁺), thus protecting DNA from degradation. |

| Phenol-Chloroform [23] [22] | An organic solvent mixture used to separate DNA from other cellular components after lysis. | Proteins and lipids partition into the organic phase or interphase, while DNA remains in the aqueous phase. |

| Ethanol / Isopropanol [22] | Precipitates nucleic acids out of solution. | Used after extraction to concentrate and purify DNA from aqueous solutions. |

| SYBR Green / PicoGreen [21] | Fluorescent dyes that bind specifically to double-stranded DNA (dsDNA). | Used in fluorometric quantification; highly specific and sensitive compared to UV spectroscopy. |

| Agarose [21] | A polysaccharide polymer used to create a porous gel matrix for electrophoresis. | Allows separation of DNA fragments by size when an electric field is applied. |

| Ethidium Bromide (or safer alternatives) | Intercalating dye that binds to DNA and fluoresces under UV light. | Enables visualization of DNA bands in an agarose gel. (Note: Handle with care, safer alternatives are available). |

The reliability of any PCR experiment is fundamentally rooted in the starting material: the template DNA. A comprehensive understanding of the various DNA sources, their optimal quantification, and rigorous assessment of their quality and integrity is not merely a preliminary step but a critical determinant of experimental success. By adhering to standardized protocols for extraction, utilizing the appropriate quantification methods, and meticulously checking for contaminants and degradation, researchers can circumvent common pitfalls and ensure the generation of specific, efficient, and reproducible amplification results. As PCR continues to be a cornerstone technique in research, diagnostics, and therapeutics, mastering the fundamentals of template DNA preparation remains an indispensable skill for all life scientists.

The Polymerase Chain Reaction (PCR) is a foundational technique in molecular biology, and its success critically depends on the design of oligonucleotide primers. Well-designed primers are the cornerstone of specific and efficient DNA amplification, enabling accurate results in gene expression analysis, cloning, diagnostics, and drug development. This guide details the core principles of PCR primer design, providing researchers with the knowledge to avoid common pitfalls and optimize their experimental outcomes. Adherence to these fundamentals ensures the amplification of the intended target with high yield and specificity, forming a reliable basis for downstream applications and research conclusions.

Core Primer Design Parameters

The following parameters form the foundation of effective primer design. Optimizing each one is crucial for successful PCR amplification.

Primer Length

Primer length directly influences specificity and hybridization efficiency.

Table 1: Primer Length Guidelines

| Parameter | Recommended Range | Rationale |

|---|---|---|

| Optimal Length | 18–30 nucleotides [26] [27] [28] | Provides a balance between specificity and efficient annealing. Shorter primers bind more efficiently but may lack specificity. |

| Specificity Consideration | Longer within range (e.g., 24–30 nt) | Increases specificity for complex templates like genomic DNA [27]. |

| Efficiency Consideration | Shorter within range (e.g., 18–22 nt) | Anneal more effectively to the target sequence, potentially requiring fewer PCR cycles [29]. |

Melting Temperature (T_m)

The melting temperature (T_m) is the temperature at which 50% of the DNA duplex dissociates into single strands. It is a critical factor for determining the PCR annealing temperature [29].

Key Guidelines:

- Recommended T_m: Aim for a T_m between 55°C and 75°C, with an ideal range of 60–64°C for standard PCR [26] [28].

- Primer Pair Matching: The T_m for the forward and reverse primers should be within 1–5°C of each other to ensure both primers bind simultaneously with similar efficiency [26] [27] [28].

- T_m Calculation: The T_m is influenced by the primer's length, sequence, and buffer conditions. Simple formulas like T_m = 4(G + C) + 2(A + T) can provide estimates, but for accuracy, use sophisticated algorithms (e.g., nearest-neighbor method) available in online tools that account for specific reaction conditions such as salt and Mg²⁺ concentration [30] [28].
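The simple formula quoted above can be sketched as a quick first-pass check. The primer sequences below are hypothetical, and the function names are illustrative; as the text notes, this estimate ignores salt and Mg²⁺ effects and should not replace a nearest-neighbor calculator.

```python
def gc_content(primer):
    """Percentage of G and C bases in the primer."""
    seq = primer.upper()
    return 100.0 * (seq.count("G") + seq.count("C")) / len(seq)

def simple_tm(primer):
    """Rough estimate using T_m = 4(G + C) + 2(A + T), in degrees C."""
    seq = primer.upper()
    gc = seq.count("G") + seq.count("C")
    at = seq.count("A") + seq.count("T")
    return 4 * gc + 2 * at

# Hypothetical primer pair, for illustration only
forward = "AGCGTACCTGATCGATGCAA"
reverse = "TGCATCGGATACCTGACGTT"

tm_fwd, tm_rev = simple_tm(forward), simple_tm(reverse)
pair_matched = abs(tm_fwd - tm_rev) <= 5   # within the 1-5 degree guideline

print(f"Forward: Tm ~{tm_fwd} C, GC {gc_content(forward):.0f}%")
print(f"Reverse: Tm ~{tm_rev} C, GC {gc_content(reverse):.0f}%")
print(f"Pair matched: {pair_matched}")
```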

GC Content

GC content refers to the percentage of guanine (G) and cytosine (C) bases in the primer sequence.

Table 2: GC Content and Sequence Considerations

| Feature | Recommendation | Reason for Recommendation |

|---|---|---|

| GC Content | 40–60% [26] [27] [29] | Balances primer stability and specificity. |

| GC Clamp | Include a G or C at the 3' end [26]. | Strengthens the binding of the primer's critical 3' end, because G-C base pairs form three hydrogen bonds compared with two for A-T pairs. |

| Sequence Repeats | Avoid runs of 4 or more identical bases (e.g., GGGG) or dinucleotide repeats (e.g., ATATAT) [26] [28]. | Prevents mispriming and slippage, which can lead to non-specific products. |

| Base Distribution | Distribute G/C and A/T residues evenly; avoid high GC concentration at the 3' end [27]. | Prevents stable non-specific binding and promotes uniform hybridization. |
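The sequence rules in Tables 1 and 2 lend themselves to a simple automated screen. The sketch below is one possible implementation, not a substitute for dedicated design software; the function name is hypothetical, and treating three or more consecutive copies of a dinucleotide as a "repeat" is an assumption based on the ATATAT example.

```python
import re

def primer_sequence_flags(primer):
    """Return a list of guideline violations for a primer (empty if none)."""
    seq = primer.upper()
    gc_pct = 100.0 * sum(seq.count(base) for base in "GC") / len(seq)
    flags = []
    if not 18 <= len(seq) <= 30:
        flags.append("length outside 18-30 nt")
    if not 40.0 <= gc_pct <= 60.0:
        flags.append("GC content outside 40-60%")
    if seq[-1] not in "GC":
        flags.append("no G/C at 3' end")
    # Four or more identical bases in a row, e.g. GGGG
    if re.search(r"(.)\1{3,}", seq):
        flags.append("run of 4+ identical bases")
    # Two different bases repeated three or more times, e.g. ATATAT
    # (the threshold of three units is an assumption)
    if re.search(r"(([ACGT])(?!\2)[ACGT])\1{2,}", seq):
        flags.append("dinucleotide repeat (3+ units)")
    return flags

# Hypothetical primers, for illustration only
print(primer_sequence_flags("AGCGTACCTGATCGATGCAA"))
print(primer_sequence_flags("ATATATGGGGCTAGCTAGCC"))
```

Hairpin and dimer screening depends on thermodynamic ΔG calculations and is better left to the analysis tools discussed later in this guide.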

Avoiding Secondary Structures

Primers must be screened for self-complementarity to avoid structures that hinder amplification.

- Hairpins: Intramolecular base pairing within a single primer. Avoid loops with a ΔG value stronger (more negative) than -9.0 kcal/mol [28].

- Self-Dimers: Formed when two identical primers hybridize to each other.

- Cross-Dimers: Formed when forward and reverse primers hybridize together [29].

These structures reduce primer availability, decrease amplification efficiency, and can lead to primer-dimer artifacts, in which the primers themselves are amplified instead of the target [26] [27].

Primer Design and Experimental Workflow

The process of successful PCR amplification extends from in-silico design to empirical validation. The following diagram illustrates the key stages and decision points in this workflow.

Experimental Protocols for Optimization

Even with perfect in-silico design, empirical optimization is often necessary.

Protocol 1: Annealing Temperature Optimization via Gradient PCR

A gradient thermal cycler is used to test a range of annealing temperatures simultaneously [31].

- Calculate T_m: Determine the average T_m of your primer pair using a reliable calculator.

- Set Gradient: Program the thermal cycler with an annealing temperature gradient spanning approximately 5–10°C below to 5°C above the calculated average T_m [32].

- Analyze Results: Run the PCR and analyze the products by gel electrophoresis. The optimal annealing temperature yields the highest amount of the correct specific product with minimal non-specific amplification.
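As a planning aid, the gradient window from the protocol above can be laid out per block column. This sketch assumes a 12-column block with evenly spaced temperatures; real gradient cyclers may distribute temperatures differently, so the instrument's own gradient preview should take precedence. The function name and defaults are illustrative.

```python
def gradient_temperatures(avg_tm, below=10.0, above=5.0, n_columns=12):
    """Evenly spaced annealing temperatures from (Tm - below) to (Tm + above).

    Assumption: linear spacing across `n_columns` block columns.
    """
    low, high = avg_tm - below, avg_tm + above
    step = (high - low) / (n_columns - 1)
    return [round(low + i * step, 1) for i in range(n_columns)]

# Hypothetical primer pair with an average Tm of 62 C
temps = gradient_temperatures(62.0)
for column, temp in enumerate(temps, start=1):
    print(f"Column {column:2d}: {temp:.1f} C")
```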

Protocol 2: Touchdown PCR for Enhanced Specificity

This method begins with an annealing temperature higher than the estimated T_m of the primers and gradually decreases it in subsequent cycles [27].

- Initial Annealing Temperature: Start 5–10°C above the calculated T_m.

- Cycling Program: For the first 10–15 cycles, decrease the annealing temperature by 1°C per cycle.

- Final Cycles: Complete the amplification with 15–20 cycles at the final, lower annealing temperature (e.g., at or slightly below the calculated T_m).

This approach favors the amplification of the specific target in the early cycles, giving it a competitive advantage that is maintained in later cycles.
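The touchdown program described above can be written out cycle by cycle. The sketch below is one way to generate the annealing temperatures, with defaults chosen from within the ranges given in the protocol; the function and parameter names are hypothetical.

```python
def touchdown_schedule(tm, start_offset=10, touchdown_cycles=10,
                       final_cycles=20, step=1.0):
    """Per-cycle annealing temperatures for a touchdown PCR program.

    Starts at tm + start_offset, drops by `step` each cycle during the
    touchdown phase, then holds one further step lower for the final cycles.
    """
    start = tm + start_offset
    touchdown = [start - step * i for i in range(touchdown_cycles)]
    final_temp = touchdown[-1] - step
    return touchdown + [final_temp] * final_cycles

# Hypothetical primer pair with a calculated Tm of 60 C
schedule = touchdown_schedule(60)
for cycle, temp in enumerate(schedule, start=1):
    print(f"Cycle {cycle:2d}: anneal at {temp:.0f} C")
```

With these defaults the program steps from 70°C down to 61°C over ten cycles and then runs twenty cycles at 60°C, the calculated T_m.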

The Scientist's Toolkit: Research Reagent Solutions

Selecting the right reagents is as critical as primer design. The following table outlines key solutions that can streamline PCR setup and improve results.

Table 3: Essential Research Reagents for PCR

| Reagent / Solution | Function & Application |

|---|---|

| Universal Annealing Buffers | Specialized buffers (e.g., with isostabilizing components) that allow a universal annealing temperature (e.g., 60°C) for primers with different T_ms, drastically reducing optimization time [31]. |

| High-Fidelity DNA Polymerases | Enzymes with proofreading activity (3'→5' exonuclease) to correct misincorporated nucleotides during amplification, essential for cloning and sequencing applications. |

| Hot-Start DNA Polymerases | Engineered to be inactive at room temperature, preventing non-specific amplification and primer-dimer formation during reaction setup, thereby increasing specificity and yield [31]. |

| GC-Rich Enhancers / Additives | Reagents like DMSO, betaine, or glycerol that help denature stable secondary structures in GC-rich templates, facilitating primer binding and polymerase progression. |

| Online T_m Calculators | Web-based tools (e.g., from Thermo Fisher, IDT) that use sophisticated algorithms to calculate T_m and annealing temperatures based on specific polymerase and buffer conditions [32] [28]. |

| Primer Design & Analysis Tools | Software (e.g., OligoAnalyzer Tool, PrimerQuest) for designing primers and analyzing parameters like hairpins, self-dimers, and heterodimers [28]. |

Mastering the fundamentals of primer design—length, melting temperature, GC content, and the avoidance of secondary structures—is a non-negotiable skill for researchers relying on PCR. By adhering to the quantitative guidelines and optimization protocols outlined in this guide, scientists can systematically overcome common pitfalls, thereby enhancing the reliability and reproducibility of their experiments. This foundational knowledge, combined with strategic use of modern reagent solutions, empowers robust experimental design and accelerates progress in drug development and fundamental biological research.

Advanced PCR Methodologies: Selecting the Right Tool for Your Application

The Polymerase Chain Reaction (PCR) has revolutionized molecular biology since its introduction in the mid-1980s, providing a powerful method for amplifying specific DNA sequences [33]. This foundational technique has evolved through several generations, each overcoming limitations of its predecessors and expanding the application landscape for researchers and clinicians. While end-point PCR established the basic principle of DNA amplification through thermal cycling, it primarily offered qualitative assessment of target presence or absence through gel electrophoresis [34]. The need for quantification spurred the development of quantitative PCR (qPCR), which enables real-time monitoring of amplification progress through fluorescent detection systems [34] [35]. Most recently, digital PCR (dPCR) has emerged as a third-generation technology that provides absolute nucleic acid quantification without requiring standard curves by employing principles of limiting dilution and Poisson statistics [33].

The evolution of PCR technologies has been paralleled by specialized methodological adaptations designed to address specific experimental challenges. Multiplex PCR enables simultaneous amplification of multiple targets in a single reaction, significantly improving throughput and efficiency while conserving precious samples [36]. Conversely, long-range PCR addresses the technical challenges associated with amplifying extended genomic regions beyond the capabilities of standard polymerases, enabling applications in genome mapping and structural variation studies [12]. This technical guide provides a comprehensive comparison of these core PCR technologies, framing their relative advantages, limitations, and optimal applications within the context of common experimental pitfalls and fundamental principles.

Technology Comparison: Principles and Applications

Core Methodologies and Workflows

End-point PCR, also known as conventional PCR, represents the original amplification technique where DNA is amplified through 25-40 thermal cycles, with the final product analyzed by gel electrophoresis [34]. This approach provides qualitative or semi-quantitative results based on band intensity but lacks precise quantification capabilities [34]. The method suffers from the "plateau effect" where reaction components become limiting, making the final product concentration an unreliable indicator of starting template quantity [37].

Quantitative PCR (qPCR), also called real-time PCR, monitors amplification progress as it occurs through fluorescent detection systems [34] [35]. Two primary detection chemistries are employed: (1) DNA-binding dyes like SYBR Green that intercalate non-specifically into double-stranded DNA, and (2) sequence-specific probes (such as TaqMan) that provide enhanced specificity through hybridization [38]. The critical measurement in qPCR is the quantification cycle (Cq) or threshold cycle (Ct), which represents the cycle number at which fluorescence exceeds a background threshold [34]. This value correlates inversely with the starting template concentration, enabling quantification through comparison with standard curves [35].
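The inverse relationship between Cq and starting quantity is usually exploited through a standard curve: Cq is regressed on log10 of the known quantities, and unknowns are read off the fitted line. The sketch below uses an ordinary least-squares fit and the commonly used efficiency relation E = 10^(-1/slope) - 1, which is not stated in the text above; the dilution series and Cq values are made up for illustration.

```python
import math

def fit_standard_curve(quantities, cq_values):
    """Least-squares fit of Cq = slope * log10(quantity) + intercept."""
    xs = [math.log10(q) for q in quantities]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(cq_values) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, cq_values))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

def amplification_efficiency(slope):
    """Efficiency from the slope; 1.0 means perfect doubling each cycle."""
    return 10 ** (-1.0 / slope) - 1.0

def quantity_from_cq(cq, slope, intercept):
    """Interpolate the starting quantity of an unknown from its Cq."""
    return 10 ** ((cq - intercept) / slope)

# Hypothetical 10-fold dilution series (copies per reaction) and Cq values
standards = [1e6, 1e5, 1e4, 1e3, 1e2]
cqs = [15.0, 18.32, 21.64, 24.96, 28.28]

slope, intercept = fit_standard_curve(standards, cqs)
efficiency = amplification_efficiency(slope)
unknown = quantity_from_cq(21.64, slope, intercept)

print(f"Slope {slope:.2f}, intercept {intercept:.2f}, efficiency {efficiency:.1%}")
print(f"Unknown with Cq 21.64: ~{unknown:.0f} copies")
```

A negative slope confirms the inverse correlation: each 10-fold increase in template lowers the Cq by roughly 3.3 cycles when amplification is close to perfect doubling.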

Digital PCR (dPCR) takes a fundamentally different approach by partitioning a PCR reaction into thousands of individual reactions, with some partitions containing the target molecule and others containing none [34] [33]. Following endpoint amplification, the fraction of positive partitions is counted, and the original target concentration is calculated using Poisson statistics [34]. This partitioning strategy enables absolute quantification without standard curves and significantly improves detection sensitivity for rare targets [14] [37]. dPCR implementations include droplet-based systems (ddPCR) that create water-in-oil emulsions and chip-based systems using microfluidic chambers [39] [33].
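The Poisson correction at the heart of dPCR can be sketched briefly. If a fraction p of partitions is positive, the mean number of target copies per partition is λ = -ln(1 - p), which accounts for partitions that received more than one copy. The partition counts and the 0.85 nL partition volume below are hypothetical inputs, and the function names are illustrative.

```python
import math

def dpcr_copies_per_partition(positive, total):
    """Mean copies per partition (lambda) from the positive fraction."""
    if not 0 <= positive < total:
        raise ValueError("need 0 <= positive < total; saturated runs cannot be quantified")
    fraction_positive = positive / total
    return -math.log(1.0 - fraction_positive)

def dpcr_concentration(positive, total, partition_volume_ul):
    """Target concentration in copies per microliter of partitioned reaction."""
    return dpcr_copies_per_partition(positive, total) / partition_volume_ul

# Hypothetical run: 5,000 positive partitions out of 20,000,
# assuming a partition volume of 0.85 nL (0.00085 uL)
lam = dpcr_copies_per_partition(5000, 20000)
conc = dpcr_concentration(5000, 20000, 0.00085)

print(f"lambda = {lam:.4f} copies per partition")
print(f"concentration = {conc:.0f} copies/uL")
```

Note that λ (about 0.288 here) is higher than the raw positive fraction of 0.25, reflecting partitions that contained multiple target molecules.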

Comparative Performance Characteristics

The selection of appropriate PCR technology depends heavily on experimental requirements, as each method exhibits distinct performance characteristics across metrics including sensitivity, precision, dynamic range, and tolerance to inhibitors.

Table 1: Performance Comparison of Major PCR Technologies

| Parameter | End-Point PCR | Quantitative PCR (qPCR) | Digital PCR (dPCR) |

|---|---|---|---|

| Quantification Capability | Qualitative/Semi-quantitative | Quantitative (relative/absolute) | Absolute quantification |

| Detection Principle | Gel electrophoresis | Real-time fluorescence | Partition counting + Poisson statistics |

| Precision | + | ++ | +++ |

| Dynamic Range | Limited | 5-6 logs | 3-4 logs |

| Sensitivity | Moderate | High | Very high (rare allele detection) |

| Tolerance to Inhibitors | Low | Moderate | High |

| Throughput | + | +++ | ++ |

| Multiplexing Capability | + | + | +++ |

| Standard Curve Required | No | Yes | No |

| Cost Considerations | Low | Moderate | High (instrument) |

Sensitivity and Precision: dPCR demonstrates superior precision and sensitivity, particularly for low-abundance targets [34]. This technology can resolve small copy number differences with much lower coefficients of variation compared to qPCR, making it invaluable for applications requiring detection of rare mutations or slight expression changes [34] [37]. The partitioning approach enriches targets from background, improving both amplification efficiency and tolerance to inhibitors commonly found in complex samples [34].

Dynamic Range and Throughput: qPCR maintains advantages in dynamic range and throughput, efficiently handling samples with concentration variations up to 5-6 orders of magnitude [34]. This broader dynamic range makes qPCR more suitable for measuring large expression differences between targets [34]. For high-throughput applications where similar samples are processed with identical protocols, qPCR typically offers faster processing times and lower per-sample costs [34].

Multiplexing Capabilities: Advanced multiplexing represents a critical capability across PCR platforms. dPCR systems offer enhanced multiplexing capacity, with some platforms supporting up to 5-plex reactions in a single well [39]. qPCR multiplexing traditionally requires multiple fluorescent channels with different probe colors, though recent innovations in single-channel multiplexing combining intercalating dyes with specific probes have expanded possibilities [38].

Applications and Technology Selection Guidelines

The optimal PCR technology selection depends fundamentally on experimental goals and sample characteristics:

qPCR is preferred for:

- High-throughput screening applications

- Gene expression analysis with large dynamic range requirements

- Routine diagnostics with established targets and protocols

- Situations where cost-effectiveness is paramount

dPCR excels in:

- Absolute quantification without standard curves

- Detection of rare targets (mutations, pathogens)

- Copy number variation analysis

- Analyzing samples with PCR inhibitors

- Applications requiring high precision and reproducibility across laboratories

End-point PCR remains relevant for:

- Cloning and sequencing verification

- Educational applications

- Qualitative assessment of target presence

- Applications with minimal quantification requirements

Multiplex PCR provides significant advantages when analyzing multiple targets simultaneously, conserving sample material and reducing processing time [36]. Implementation considerations include careful primer design to minimize interactions and compatibility with detection systems [38].

Long-range PCR amplifies extended regions (≥5 kb) and requires specialized enzyme blends with proofreading activity to maintain processivity and fidelity across large fragments [12].

Experimental Protocols and Methodologies

Quantitative PCR (qPCR) Protocol

Sample Preparation and DNA Quantification:

- Extract DNA using high-quality kits or phenol-chloroform extraction [40]

- Assess DNA integrity using agarose gel electrophoresis [40]

- Quantify DNA concentration spectrophotometrically (e.g., NanoDrop), ensuring A260/280 and A260/230 ratios >1.8 [12]

- Use 1-10 ng of plasmid DNA or 50-250 ng of genomic DNA as template [40]

Reaction Setup:

- Prepare master mix containing:

- Distribute equal volumes to all reaction wells

- Add template DNA to individual reactions, including no-template controls

- Use filter tips and dedicated pipettes to minimize contamination [40]
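The master mix step can be sketched as a simple scaling calculation. The component names and per-reaction volumes below are hypothetical placeholders (the protocol above does not list them), and the 10% overage is an assumed allowance for pipetting loss; substitute the values recommended for your own reagents.

```python
def master_mix(per_reaction_ul, n_reactions, overage=0.10):
    """Scale per-reaction volumes (in uL) to a master mix with pipetting overage."""
    if n_reactions < 1:
        raise ValueError("n_reactions must be at least 1")
    factor = n_reactions * (1.0 + overage)
    return {name: round(vol * factor, 2) for name, vol in per_reaction_ul.items()}


# Hypothetical 20 uL reaction: 18 uL of mix per well, 2 uL template added separately.
PER_REACTION_UL = {
    "2x_qpcr_mix": 10.0,
    "forward_primer_10uM": 0.8,
    "reverse_primer_10uM": 0.8,
    "nuclease_free_water": 6.4,
}

# 24 wells, including no-template controls.
mix = master_mix(PER_REACTION_UL, n_reactions=24)
for name, vol in mix.items():
    print(f"{name}: {vol} uL")
```

Template is deliberately left out of the mix so that it can be added to individual wells, which keeps the no-template controls meaningful.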

Thermal Cycling and Data Acquisition:

- Initial denaturation: 95°C for 2-10 minutes

- 35-45 cycles of:

  - Denaturation: 95°C for 15-30 seconds

  - Annealing: Primer-specific temperature for 15-60 seconds

  - Extension: 72°C for 30 seconds-1 minute (duration depends on amplicon size)

  - Fluorescence acquisition during annealing or extension phase

- Melt curve analysis (if using DNA-binding dyes): 65°C to 95°C with continuous fluorescence monitoring

Data Analysis:

- Set fluorescence threshold in exponential phase of amplification

- Determine Cq values for all samples

- For absolute quantification: Generate standard curve using serial dilutions of known standards

- For relative quantification: Apply ΔΔCq method with reference gene normalization
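The two quantification routes above can be sketched in a few lines. This is a minimal illustration, not a replacement for instrument software: the function names and the dilution-series values are made up for the example, and the ΔΔCq calculation assumes roughly 100% amplification efficiency for both the target and the reference gene.

```python
def fit_standard_curve(log10_quantities, cq_values):
    """Least-squares fit of Cq = slope * log10(quantity) + intercept."""
    n = len(log10_quantities)
    mean_x = sum(log10_quantities) / n
    mean_y = sum(cq_values) / n
    sxx = sum((x - mean_x) ** 2 for x in log10_quantities)
    sxy = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(log10_quantities, cq_values))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def amplification_efficiency(slope):
    """Efficiency from the curve slope: E = 10^(-1/slope) - 1 (1.0 means 100%)."""
    return 10 ** (-1.0 / slope) - 1.0


def quantity_from_cq(cq, slope, intercept):
    """Absolute quantification: invert the standard curve for an unknown sample."""
    return 10 ** ((cq - intercept) / slope)


def fold_change_ddcq(cq_target_test, cq_ref_test, cq_target_ctrl, cq_ref_ctrl):
    """Relative quantification as 2^-ddCq (assumes ~100% efficiency)."""
    dcq_test = cq_target_test - cq_ref_test
    dcq_ctrl = cq_target_ctrl - cq_ref_ctrl
    return 2 ** -(dcq_test - dcq_ctrl)


# Illustrative 10-fold dilution series (log10 copies) with made-up Cq values.
slope, intercept = fit_standard_curve([5, 4, 3, 2, 1],
                                      [15.0, 18.32, 21.64, 24.96, 28.28])
efficiency = amplification_efficiency(slope)
unknown_copies = quantity_from_cq(21.64, slope, intercept)
fold = fold_change_ddcq(24.0, 18.0, 26.0, 18.0)
print(f"slope={slope:.2f}, efficiency={efficiency:.1%}, "
      f"unknown={unknown_copies:.0f} copies, fold change={fold:.1f}")
```

A slope near -3.32 corresponds to a doubling per cycle; slopes far from that value suggest the 2^-ΔΔCq shortcut should be replaced by an efficiency-corrected model.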

Digital PCR (dPCR) Protocol

Reaction Setup:

- Prepare PCR mixture similar to qPCR but with adjusted probe concentrations

- For droplet-based systems (ddPCR):

  - Load sample into droplet generation cartridge

  - Generate 10,000-20,000 droplets per sample [14]

- For nanoplate-based systems:

Partitioning and Amplification:

- Ensure proper partition formation (monodisperse droplets or uniform chambers)

- Transfer partitions to thermocycler (separate instrument for ddPCR, integrated for nanoplate systems)

- Perform endpoint PCR amplification:

  - Initial denaturation: 95°C for 10 minutes

  - 40-45 cycles of:

    - Denaturation: 94°C for 30 seconds

    - Annealing/Extension: 60°C for 60 seconds

  - Final stabilization: 4°C-98°C (depending on platform)

Fluorescence Reading and Analysis:

- For ddPCR: Transfer droplets to reader, analyzing individually as they flow past detector [39]

- For nanoplate dPCR: Image entire plate using multi-channel fluorescence detection [39]

- Set fluorescence thresholds to distinguish positive and negative partitions

- Apply Poisson correction to calculate absolute target concentration:

- Concentration = -ln(1 - p) / V, where p = fraction of positive partitions, V = partition volume
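As a worked instance of the Poisson formula above, the sketch below converts partition counts into a concentration. The function name is illustrative, and the 0.85 nL partition volume is an assumed example value; use the volume specified for your platform.

```python
import math


def dpcr_concentration(positive, total, partition_volume_ul):
    """Target concentration (copies/uL of reaction) as -ln(1 - p) / V."""
    if total <= 0 or not 0 <= positive < total:
        # p = 1 (every partition positive) has no finite Poisson estimate.
        raise ValueError("need 0 <= positive < total")
    p = positive / total
    copies_per_partition = -math.log(1.0 - p)
    return copies_per_partition / partition_volume_ul


# Assumed example: 2,000 positive droplets out of 20,000, 0.85 nL each.
ASSUMED_PARTITION_VOLUME_UL = 0.00085
conc = dpcr_concentration(2000, 20000, ASSUMED_PARTITION_VOLUME_UL)
print(f"{conc:.1f} copies/uL")
```

The logarithm accounts for partitions that received more than one copy, which is why simply counting positives underestimates the target as the positive fraction grows.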

Advanced Applications: Single-Channel Multiplex qPCR

A novel approach combining intercalating dyes with specific probes enables multiplexing within a single fluorescent channel [38]:

Reaction Design:

- Use EvaGreen intercalating dye combined with FAM-labeled probe for one target

- Design second target without probe, distinguished by melting temperature

- Record fluorescence at both denaturation and elongation phases

Data Analysis:

- Use denaturation curve (FAM signal only) to quantify first target

- Calculate second target concentration from difference between elongation and denaturation curves

- Verify specificity through melting curve analysis
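The subtraction step in this analysis can be sketched as follows. This is a deliberately naive illustration: it assumes baseline-corrected fluorescence lists with one value per cycle, subtracts them directly without any scaling between the two reads, and uses a simple threshold crossing to obtain Cq. The source does not describe these details, so the function names, the fluorescence values, and the threshold are all hypothetical.

```python
def difference_curve(elongation, denaturation):
    """Per-cycle difference between elongation-phase and denaturation-phase reads."""
    if len(elongation) != len(denaturation):
        raise ValueError("curves must cover the same cycles")
    return [e - d for e, d in zip(elongation, denaturation)]


def cq_at_threshold(curve, threshold):
    """Fractional cycle (1-based) at which the curve first rises through the threshold.

    Uses linear interpolation between neighbouring cycles; returns None if the
    curve never rises through the threshold.
    """
    for i in range(1, len(curve)):
        low, high = curve[i - 1], curve[i]
        if low < threshold <= high:
            return i + (threshold - low) / (high - low)
    return None


# Made-up baseline-corrected fluorescence values, one per cycle.
denaturation_read = [0, 0, 1, 2, 4, 8]    # probe signal only (first target)
elongation_read = [0, 1, 3, 6, 12, 24]    # probe plus dye signal

second_target_curve = difference_curve(elongation_read, denaturation_read)
cq_first = cq_at_threshold(denaturation_read, threshold=3)
cq_second = cq_at_threshold(second_target_curve, threshold=3)
print(cq_first, cq_second)
```

In practice the difference curve may need correction for dye bound to the first target's amplicon, which is one reason the melting curve check in the last step matters.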

This method effectively doubles throughput capabilities without requiring multiple fluorescence channels, providing an economical alternative to conventional multiplex qPCR [38].

The Scientist's Toolkit: Essential Reagents and Materials

Table 2: Essential Research Reagents for PCR Applications

| Reagent/Material | Function | Application Notes |
|---|---|---|
| DNA Polymerases | Catalyze DNA synthesis | Taq for routine PCR; high-fidelity blends (Q5, Phusion) for long-range and cloning [40] |
| dNTPs | Building blocks for DNA synthesis | Use 200 μM concentration; avoid multiple freeze-thaw cycles [12] |
| Primers | Target sequence recognition | 18-25 bases; 40-60% GC content; validate with gradient PCR [40] |
| Probes | Sequence-specific detection | Hydrolysis (TaqMan) or hybridization formats; optimize concentration [38] |
| Intercalating Dyes | Non-specific DNA detection | SYBR Green, EvaGreen; enable melt curve analysis [38] |
| PCR Buffers | Optimal reaction environment | Contain salts and buffering agents; may include MgCl₂ (1.5-5 mM) [40] |
| Additives | Enhance specificity/yield | DMSO (5-10%) for GC-rich templates; BSA for inhibitor resistance [12] |
| Digital PCR Plates | Reaction partitioning | Nanoplates with 8,500-26,000 partitions; platform-specific [39] |
| Droplet Generation Oil | Creates water-in-oil emulsion | Critical for ddPCR; includes stabilizers to prevent coalescence [39] |

Troubleshooting Common PCR Pitfalls

Contamination Prevention and Control

Contamination represents one of the most significant challenges in molecular diagnostics, particularly for sensitive applications:

- Establish Physical Separation: Perform reagent preparation, sample processing, and product analysis in separate dedicated areas [40]

- Use Barrier Tips: Implement filter-containing pipette tips to minimize aerosol contamination [40]

- Include Controls: Always incorporate no-template controls (NTC) to detect contamination and positive controls to verify reaction efficiency [40] [12]

- Employ Decontamination Solutions: Regularly clean workspaces with DNA degradation solutions (e.g., 10% bleach or commercial products such as DNA AWAY) [40]

- Implement Unidirectional Workflow: Move from "clean" to "dirty" areas without backtracking

Primer and Probe Design Optimization

Poorly designed primers represent a common source of PCR failure:

- Bioinformatic Validation: Use design software (Primer3, NCBI Primer-BLAST) to check for secondary structures, dimers, and specificity [40]

- Length and Composition: Design primers 18-25 bases long with 40-60% GC content [40]

- Temperature Matching: Ensure primer pairs have similar melting temperatures (±1°C)

- Avoid Repetitive Sequences: Exclude regions with runs of identical nucleotides

- Experimental Validation: Test new primers with gradient PCR and melt curve analysis before experimental use [40]
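The length, GC content, Tm matching, and repeat criteria above lend themselves to a quick pre-screen before running dedicated design software. The sketch below is only a rough filter: it estimates Tm with the Wallace rule (2°C per A/T, 4°C per G/C), which is a coarse approximation for short oligos, and the cut-off of four identical bases in a row is an assumed threshold. The primer sequences are invented for the example. It does not replace Primer3 or Primer-BLAST checks for dimers, secondary structure, or specificity.

```python
import re


def gc_percent(seq):
    """Percentage of G and C bases in the primer."""
    seq = seq.upper()
    return 100.0 * (seq.count("G") + seq.count("C")) / len(seq)


def wallace_tm(seq):
    """Rough Tm estimate in °C: 2 * (A + T) + 4 * (G + C)."""
    seq = seq.upper()
    at = seq.count("A") + seq.count("T")
    gc = seq.count("G") + seq.count("C")
    return 2 * at + 4 * gc


def check_primer(seq, max_run=4):
    """Return a list of rule violations for a single primer (empty if none)."""
    issues = []
    if not 18 <= len(seq) <= 25:
        issues.append(f"length {len(seq)} outside 18-25 bases")
    gc = gc_percent(seq)
    if not 40 <= gc <= 60:
        issues.append(f"GC content {gc:.0f}% outside 40-60%")
    # Flag any base repeated more than max_run times in a row (assumed cut-off).
    if re.search(rf"(.)\1{{{max_run},}}", seq.upper()):
        issues.append(f"run of more than {max_run} identical bases")
    return issues


def tm_difference(forward, reverse):
    """Absolute difference between the estimated Tm values of a primer pair."""
    return abs(wallace_tm(forward) - wallace_tm(reverse))


# Invented sequences for illustration only.
forward = "AGCTGACCTGAAGCTGATCG"
reverse = "TCGATCAGCTTCAGGTCAGC"
problem = "GGGGGGCCGCGGCCG"

print(check_primer(forward), wallace_tm(forward), tm_difference(forward, reverse))
print(check_primer(problem))
```

Primers that pass this screen still need the gradient PCR and melt curve validation described in the last bullet.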

Reaction Condition Optimization

Suboptimal reaction conditions frequently cause variable results:

- Annealing Temperature: Determine optimal temperature using gradient PCR, typically 3-5°C below primer Tm [40]

- Magnesium Concentration: Titrate MgCl₂ (1.5-5 mM) as it significantly impacts specificity and yield [12]

- Cycle Number: Limit to 25-35 cycles for standard PCR; excessive cycles increase background [40]

- Template Quality: Verify DNA integrity by gel electrophoresis; avoid repeated freeze-thaw cycles [12]

- Inhibitor Removal: Implement additional purification steps (ethanol precipitation, column purification) if inhibitors are suspected [40]
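For the magnesium titration, the volume of stock to add at each step follows from C1·V1 = C2·V2. The sketch below assumes a 25 mM MgCl₂ stock, a 25 μL reaction, 0.5 mM steps, and a buffer that contains no magnesium of its own; all four are example assumptions to adjust for your reagents.

```python
def stock_volume_ul(final_mm, stock_mm, reaction_ul):
    """Volume of stock needed for a target final concentration (C1*V1 = C2*V2)."""
    if final_mm > stock_mm:
        raise ValueError("final concentration cannot exceed the stock concentration")
    return final_mm * reaction_ul / stock_mm


def mg_titration(start_mm=1.5, stop_mm=5.0, step_mm=0.5,
                 stock_mm=25.0, reaction_ul=25.0):
    """List of (final mM, uL of stock) pairs covering the titration range."""
    n_steps = int(round((stop_mm - start_mm) / step_mm))
    series = []
    for i in range(n_steps + 1):
        final = start_mm + i * step_mm
        series.append((final, round(stock_volume_ul(final, stock_mm, reaction_ul), 2)))
    return series


titration = mg_titration()
for final_mm, volume_ul in titration:
    print(f"{final_mm:.1f} mM MgCl2 -> add {volume_ul} uL of stock")
```

If the buffer already supplies MgCl₂, subtract its contribution from each target concentration before calculating the added volume.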

Digital PCR-Specific Considerations

dPCR introduces unique technical considerations:

- Partition Quality: Monitor for uniform droplet size or chamber filling; variability affects quantification accuracy [39]

- Template Concentration: Optimize to avoid saturation (too many positives) or excessive negatives [34]

- Rain Effect: Address intermediate fluorescence populations through thermal optimization and probe design [39]

- Volume Accuracy: Ensure precise pipetting as variations significantly impact absolute quantification [14]

- Data Interpretation: Apply appropriate Poisson correction, particularly with high positive fractions (>10%) [34]
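The advice on template concentration can be made concrete by looking at how the statistical uncertainty of the Poisson estimate changes with the fraction of positive partitions. The sketch below uses a standard delta-method approximation for the standard error of the mean copies per partition; the function names and the 20,000-partition example are illustrative assumptions, and the estimate ignores other error sources such as partition volume variation.

```python
import math


def copies_per_partition_ci(positive, total, z=1.96):
    """Mean copies per partition with an approximate 95% confidence interval."""
    if not 0 < positive < total:
        raise ValueError("need 0 < positive < total")
    p = positive / total
    lam = -math.log(1.0 - p)
    # Delta method: Var(p) = p(1-p)/n and d(lambda)/dp = 1/(1-p).
    se = math.sqrt(p / (total * (1.0 - p)))
    return lam, lam - z * se, lam + z * se


def relative_uncertainty(positive, total):
    """Half-width of the interval as a fraction of the estimate."""
    lam, low, high = copies_per_partition_ci(positive, total)
    return (high - low) / (2.0 * lam)


# Assumed run of 20,000 partitions at very low, moderate, and near-saturated loading.
for positives in (20, 10000, 19980):
    print(positives, f"{relative_uncertainty(positives, 20000):.1%}")
```

Both extremes are less precise than intermediate loading: very few positives give a noisy count, while near-saturation leaves too few negative partitions to anchor the estimate.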

The evolving landscape of PCR technologies offers researchers an expanding toolkit for nucleic acid analysis, with each method exhibiting distinct advantages for specific applications. qPCR remains the workhorse for high-throughput quantitative analysis, providing robust performance across diverse sample types with established protocols and reagents. dPCR has emerged as a powerful alternative for applications requiring absolute quantification, exceptional sensitivity for rare targets, and superior tolerance to inhibitors. Multiplexing approaches continue to advance, enabling increasingly complex experimental designs within single reactions. Long-range PCR addresses specialized needs for amplifying extended genomic regions.

Technology selection should be guided by experimental priorities: qPCR for dynamic range and throughput, dPCR for precision and absolute quantification, and endpoint PCR for basic qualitative applications. Regardless of platform, attention to fundamental principles—primer design, contamination control, and reaction optimization—remains essential for generating reproducible, publication-quality data. As PCR technologies continue to evolve, researchers can anticipate further improvements in sensitivity, multiplexing capability, and accessibility, expanding the boundaries of molecular analysis across basic research, clinical diagnostics, and biotechnology applications.

Amplifying DNA targets with high guanine-cytosine (GC) content and pronounced secondary structures represents a significant challenge in molecular assay development. These complex targets resist standard polymerase chain reaction (PCR) conditions due to their unique physicochemical properties, often resulting in PCR failure, non-specific amplification, or significantly reduced yield. GC-rich DNA sequences, typically defined as having >60% GC content, exhibit greater thermal stability due to three hydrogen bonds between G-C base pairs compared to two in A-T pairs [41]. This increased stability elevates the melting temperature required for DNA denaturation and promotes the formation of stable secondary structures, such as hairpin loops and stem-loop configurations, that physically impede polymerase progression [42] [43].

Within the context of PCR fundamentals and common pitfalls, these challenges frequently manifest in failed experiments, wasted reagents, and delayed research progress. For researchers working with genomes known for high GC content, such as Mycobacterium tuberculosis (approximately 66% GC) or human promoter regions, these issues become routine obstacles requiring specialized approaches [43]. This technical guide provides a comprehensive framework for overcoming these challenges through optimized primer design, specialized reagents, and tailored experimental protocols validated for complex targets.

Understanding the Fundamental Challenges

Structural and Thermodynamic Barriers

The primary challenge in amplifying GC-rich regions stems from their structural and thermodynamic properties. The increased stability of GC-rich DNA is attributed largely to base stacking interactions, in addition to the extra hydrogen bond in each G-C pair [41]. These stacking interactions create DNA duplexes with melting temperatures that may exceed standard PCR denaturation temperatures (typically 94-95°C). Consequently, denaturation is incomplete, leaving template strands partially annealed and unavailable for primer binding.

Furthermore, these regions readily form intramolecular secondary structures, particularly stable hairpin loops that accumulate during thermal cycling [41]. When primers themselves contain GC-rich sequences, they tend to form self-dimers, cross-dimers, and stem-loop structures that can impede the DNA polymerase's progression along the template molecule, leading to truncated PCR products [43]. GC-rich sequences at the 3' end of primers can also lead to mispriming, where primers bind to partially homologous sequences with reduced stringency.

Biochemical Complications

From a biochemical perspective, DNA polymerases often stall or dissociate when encountering these stable secondary structures. The strong hydrogen bonding in GC-rich templates can cause polymerases to pause, increasing the likelihood of incomplete extension products [44]. This effect is compounded when using standard Taq DNA polymerase, which may lack the processivity required for traversing these challenging regions. Additionally, the high melting temperatures required for GC-rich templates can accelerate enzyme denaturation over multiple cycles, particularly when denaturation temperatures exceed 95°C for extended periods [41].

Optimized Primer Design Strategies

Fundamental Primer Design Parameters

Successful amplification of GC-rich targets begins with meticulous primer design. While standard primer design principles apply, they require stricter adherence and additional considerations for complex templates. The table below summarizes the key parameters for optimal primer design against GC-rich targets.