Navigating Diagnostic Accuracy: A Comprehensive Analysis of False Positives and Negatives in PCR Testing


Abstract

This article provides a systematic evaluation of the factors contributing to false-positive and false-negative results in polymerase chain reaction (PCR) diagnostics, a critical issue for researchers, scientists, and drug development professionals. It explores the foundational principles of diagnostic accuracy, including the relationship between cycle threshold (Ct) values and false-positive rates, where a Ct > 35 can lead to false-positive rates of 15-24% [citation:4]. The content covers methodological advancements from open-source platforms [citation:1] to novel techniques like high-resolution melting (HRM) analysis [citation:8], alongside practical strategies for contamination control and workflow optimization [citation:2][citation:7]. Through comparative analyses with alternative diagnostic methods like blood culture [citation:6] and antigen tests [citation:3], the article validates PCR's clinical utility while addressing its limitations. The synthesis offers a roadmap for enhancing test reliability, guiding future assay development, and improving clinical decision-making in molecular diagnostics.

Understanding the Spectrum and Impact of PCR Diagnostic Errors

In molecular diagnostics, the analytical and clinical performance of a test is fundamentally characterized by its ability to correctly classify true positive and true negative samples. False positives occur when an uninfected individual tests positive, while false negatives occur when an infected individual tests negative [1]. These errors carry significant implications for clinical management, public health interventions, and research validity, particularly in the context of PCR-based diagnostics for infectious diseases like COVID-19.

The reverse transcription polymerase chain reaction (RT-PCR) test emerged as the predominant nucleic acid amplification test (NAAT) for detecting SARS-CoV-2 RNA during the COVID-19 pandemic [1]. While much attention has focused on false negative rates because of their direct impact on disease transmission, false positive results present distinct challenges, particularly in low-prevalence settings, where they make up a much larger share of all positive results [1]. Understanding the mechanisms, rates, and implications of both false positives and false negatives is essential for researchers, laboratory scientists, and drug development professionals working to optimize diagnostic platforms and interpret experimental results.

Core Concepts and Definitions

Fundamental Metrics for Diagnostic Accuracy

The performance of any diagnostic test is evaluated through several key metrics that quantify its ability to distinguish between true states of infection or non-infection:

- Sensitivity: The proportion of truly infected individuals who test positive, reflecting the test's ability to correctly identify infection [1]

- Specificity: The proportion of truly uninfected individuals who test negative, reflecting the test's ability to correctly exclude infection [1]

- False Positive Rate (FPR): The proportion of uninfected individuals who incorrectly test positive

- False Negative Rate (FNR): The proportion of infected individuals who incorrectly test negative [2]

- Positive Predictive Value (PPV): The proportion of positive tests that are true positives [1]

- Negative Predictive Value (NPV): The proportion of negative tests that are true negatives
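As a minimal sketch, the six metrics above can be computed from the four cells of a 2x2 confusion table. The class and function names are illustrative, and the counts are hypothetical (10,000 people at 1% prevalence, tested with 95% sensitivity and 98% specificity), not data from any cited study.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    tp: int  # infected, test positive
    fp: int  # uninfected, test positive
    fn: int  # infected, test negative
    tn: int  # uninfected, test negative


def accuracy_metrics(c: ConfusionCounts) -> dict[str, float]:
    """Return the six diagnostic accuracy metrics defined in the list above."""
    return {
        "sensitivity": c.tp / (c.tp + c.fn),
        "specificity": c.tn / (c.tn + c.fp),
        "fpr": c.fp / (c.fp + c.tn),  # equals 1 - specificity
        "fnr": c.fn / (c.fn + c.tp),  # equals 1 - sensitivity
        "ppv": c.tp / (c.tp + c.fp),
        "npv": c.tn / (c.tn + c.fn),
    }


# Hypothetical counts: 100 infected and 9,900 uninfected people.
example = ConfusionCounts(tp=95, fp=198, fn=5, tn=9702)
metrics = accuracy_metrics(example)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

Sensitivity, specificity, FPR, and FNR depend only on the test, whereas PPV and NPV also depend on how many infected and uninfected people are in the tested population.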

Impact of Disease Prevalence on Predictive Values

The relationship between test performance characteristics and disease prevalence critically influences the clinical utility of diagnostic tests. Positive predictive value demonstrates profound dependence on disease prevalence, wherein the same test with fixed sensitivity and specificity will yield dramatically different PPV across populations with varying infection rates [1].

Table 1: Impact of Prevalence on Positive Predictive Value (Assuming 95% Sensitivity, 98% Specificity)

| Prevalence | Population (n=10,000) | True Positives | False Positives | Positive Predictive Value |
|---|---|---|---|---|
| 10% (Diagnostic) | 1,000 infected, 9,000 uninfected | 950 | 180 | 84.1% |
| 1% (Screening) | 100 infected, 9,900 uninfected | 95 | 198 | 32.4% |
| 0.1% (Population) | 10 infected, 9,990 uninfected | 9.5 | 199.8 | 4.5% |

This mathematical relationship demonstrates that in low-prevalence settings typical of screening programs, even tests with high specificity can produce a majority of false positive results among all positive tests [1]. This has profound implications for the design of screening programs and interpretation of positive results in research settings.
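The prevalence dependence can be checked directly from Bayes' rule. The sketch below is illustrative (the function name `ppv` is an assumption); it uses the 95% sensitivity and 98% specificity assumed in Table 1 and reproduces the table's PPV column to within rounding.

```python
def ppv(prevalence: float, sensitivity: float, specificity: float) -> float:
    """Positive predictive value from prevalence, sensitivity, and specificity."""
    true_pos = prevalence * sensitivity                 # P(infected and positive)
    false_pos = (1 - prevalence) * (1 - specificity)    # P(uninfected and positive)
    return true_pos / (true_pos + false_pos)


for prev in (0.10, 0.01, 0.001):
    print(f"{prev:.1%} prevalence -> PPV {ppv(prev, 0.95, 0.98):.1%}")
```

Because the false positive term scales with the uninfected fraction, it dominates the denominator as prevalence falls, which is why PPV collapses even though specificity is unchanged.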

Quantitative Analysis of False Positives and Negatives in PCR Testing

Documented Rates of False Positive Results

Multiple studies have investigated the frequency and causes of false positive RT-PCR results across different testing environments:

Table 2: Documented False Positive Rates in SARS-CoV-2 RT-PCR Studies

| Study Context | Sample Size | False Positives Among Positive Tests | Key Findings | Citation |
|---|---|---|---|---|
| Asymptomatic screening | 24,717 tests (6,251 asymptomatic) | 6.9% of positive tests | 20 false positives identified through retesting protocol; technologist errors and cross-contamination common causes | [3] |
| Entertainment industry screening | 122,300 tests | 22.6% of positive tests (in investigated subset) | PPV of 77.4%; selection bias toward investigating unexpected positives in asymptomatic individuals with prior negative tests | [1] |
| Quality control protocol | 288 positive tests in asymptomatic unexposed | 6.9% of positive tests | Root cause analysis identified technologist errors and cross-contamination from high viral load specimens | [3] |

A quality assurance review of RT-PCR testing documented that among 24,717 samples tested, 6.9% of positive results in asymptomatic, unexposed individuals were false positives upon retesting [3]. In another analysis of screening programs in the entertainment industry, 54 of 239 positive tests (22.6%) were determined to be false positives, yielding a positive predictive value of 77.4% in that specific context [1].

Documented Rates of False Negative Results

Studies of false negative RT-PCR tests demonstrate variable rates depending on testing timing, specimen quality, and disease severity:

Table 3: Documented False Negative Rates in SARS-CoV-2 RT-PCR Studies

| Study Context | Sample Size | False Negative Rate | Key Findings | Citation |
|---|---|---|---|---|
| Discordant testing analysis | 100,001 tests (95,919 patients) | 9.3% in discordant subgroup | Sensitivity of 90.7%; most false negatives occurred with low viral loads in early infection | [2] |
| Hospitalized patients | 145 confirmed COVID-19 cases | 3.45% initial false negative | False negatives occurred with early testing in moderate illness or late testing in severe illness | [4] |
| Systematic review | Multiple studies | Range of 2-29% | Variability due to sampling timing, specimen type, and assay differences | [2] |

A large discordant testing analysis of 100,001 COVID-19 tests found a false negative rate of 9.3% (sensitivity of 90.7%) in a subgroup of patients with discordant results [2]. Another study of hospitalized patients found that among 145 confirmed COVID-19 cases, 5 (3.45%) had an initial false negative RT-PCR test result [4].
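Rates derived from small counts, such as 5 of 145, carry considerable sampling uncertainty. The sketch below computes a Wilson score interval for a proportion; the cited studies report point estimates only, so the interval is an illustrative addition rather than a published result, and the function name is an assumption.

```python
import math


def wilson_interval(events: int, total: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z=1.96 gives ~95%)."""
    p = events / total
    denom = 1 + z**2 / total
    centre = (p + z**2 / (2 * total)) / denom
    half_width = z * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return centre - half_width, centre + half_width


# Counts from the hospitalized-patient study [4]: 5 initial false negatives in 145 cases.
low, high = wilson_interval(5, 145)
print(f"point estimate {5 / 145:.2%}, approx. 95% interval {low:.2%} to {high:.2%}")
```

The Wilson interval is used here instead of the simpler normal approximation because it behaves better for proportions close to zero.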

Experimental Protocols for Assessing PCR Accuracy

Methodology for Evaluating False Positive Results

Quality Control Retesting Protocol [3]:

- Sample Selection: All specimens from asymptomatic, unexposed persons with SARS-CoV-2 positive tests are identified for retesting

- Retesting Procedure: A second test is performed on the original sample; if "non-detected," a third test is conducted for confirmation

- Root Cause Analysis: For confirmed false positives, a comprehensive investigation includes:

  - Review of amplification curves and cycle threshold (Ct) values

  - Examination of technologists' records and testing paperwork

  - Analysis of specimen location on testing plates relative to high viral load specimens

  - Assessment of potential cross-contamination sources

Clinical Correlation Approach [1]:

- Identification of Unexpected Positives: Positive results in asymptomatic individuals with prior negative PCR tests are flagged for evaluation

- Confirmatory Retesting: Individuals undergo retesting 24+ hours after the positive test on at least two occasions

- Result Interpretation: If both retests are negative, the initial test is classified as a false positive
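The two retesting protocols above can be expressed as small decision functions. This is a sketch under stated assumptions: the names `Call`, `classify_lab_retest`, and `classify_clinical_retests` are hypothetical, and the "unresolved" branch is an assumption because the source does not say how a detected third test is handled.

```python
from enum import Enum
from typing import Optional


class Call(Enum):
    DETECTED = "detected"
    NOT_DETECTED = "not detected"


def classify_lab_retest(second: Call, third: Optional[Call] = None) -> str:
    """Quality control retesting [3]: repeat on the original sample,
    with a third test only when the second is non-detected."""
    if second is Call.DETECTED:
        return "confirmed positive"
    if third is None:
        return "pending third test"
    if third is Call.NOT_DETECTED:
        return "false positive"
    return "unresolved"  # assumption: not specified in the source


def classify_clinical_retests(retests: list[Call]) -> str:
    """Clinical correlation [1]: two retests taken 24+ hours after the positive."""
    if len(retests) < 2:
        return "pending"
    if all(r is Call.NOT_DETECTED for r in retests[:2]):
        return "false positive"
    return "not classified as false positive"


print(classify_lab_retest(Call.NOT_DETECTED, Call.NOT_DETECTED))
print(classify_clinical_retests([Call.NOT_DETECTED, Call.DETECTED]))
```

Note that the two protocols answer slightly different questions: the first retests the same specimen, while the second retests the same person on later days.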

Methodology for Evaluating False Negative Results

Discordant Testing Analysis [2]:

- Cohort Identification: Review laboratory data to identify patients with an initial negative RT-PCR followed by a positive result within 14 days (one incubation period)

- Sample Retrieval: Retrieve stored samples from both negative and positive tests for the same patient

- Multitarget Retesting: Retest negative samples using three alternate RT-PCR assays targeting different genes (E gene, N1/N2 regions of nucleocapsid genes)

- Result Classification: A negative sample is classified as a false negative if repeat testing yields positive results for ≥2 of three gene targets

- Quality Assessment: Test all discordant swabs for human ribonuclease P (RNase P) to assess sample quality and collection adequacy
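The classification rule in the discordant testing analysis (positive on at least two of three gene targets) can be sketched as follows. The function name and return labels are hypothetical, and the way the RNase P result is combined with the target results is an assumption; the source only states that RNase P is tested to assess sample quality.

```python
def classify_initial_negative(targets_detected: dict[str, bool],
                              rnase_p_detected: bool) -> str:
    """Classify a stored, initially negative sample after multitarget retesting."""
    if len(targets_detected) != 3:
        raise ValueError("expected results for exactly three gene targets")
    positives = sum(targets_detected.values())
    if positives >= 2:
        return "false negative"
    if not rnase_p_detected:
        # Assumption: absent human RNase P signal suggests an inadequate specimen.
        return "inadequate sample"
    return "not confirmed as false negative"


retest = {"E": True, "N1": True, "N2": False}
print(classify_initial_negative(retest, rnase_p_detected=True))
```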

Clinical Validation Protocol [5]:

- Analytical Specificity: Test against 23 related virus strains (including human coronaviruses 229E, NL63, OC43, HKU1, MERS-CoV, influenza viruses, adenovirus, rhinovirus, and others) to confirm no cross-reactivity

- Limit of Detection (LOD): Determine using tenfold serial dilutions of viral isolate from known COVID-19 patient; extract RNA and perform RT-PCR in triplicate

- Linearity and Efficiency: Evaluate using plasmids containing cloned target SARS-CoV-2 genes (RdRp and E), serially diluted tenfold from different initial concentrations

- Reproducibility Assessment: Perform intra-assay (triplicate RT-PCR reactions) and inter-assay (repeat testing 3 days later) variability analysis
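For the linearity and efficiency step, a common approach is to fit Ct against log10 template concentration across the tenfold dilution series and derive amplification efficiency from the slope as 10^(-1/slope) - 1. The sketch below uses made-up Ct values, not data from the cited validation [5], and the function name is hypothetical.

```python
def standard_curve(log10_copies: list[float], ct: list[float]) -> tuple[float, float, float]:
    """Least-squares fit of Ct versus log10(copies).

    Returns (slope, intercept, efficiency), where efficiency = 10**(-1/slope) - 1.
    """
    n = len(ct)
    mean_x = sum(log10_copies) / n
    mean_y = sum(ct) / n
    sxx = sum((x - mean_x) ** 2 for x in log10_copies)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(log10_copies, ct))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    efficiency = 10 ** (-1 / slope) - 1
    return slope, intercept, efficiency


# Hypothetical tenfold dilution series: 10^2 to 10^6 copies per reaction.
dilutions = [2.0, 3.0, 4.0, 5.0, 6.0]
ct_values = [40 - 3.32 * x for x in dilutions]  # idealized Ct values
slope, intercept, efficiency = standard_curve(dilutions, ct_values)
print(f"slope {slope:.2f}, intercept {intercept:.1f}, efficiency {efficiency:.1%}")
```

A slope near -3.32 corresponds to roughly 100% efficiency, meaning the amplicon doubles each cycle; slopes far from this value suggest inhibition or poor primer performance.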

Factors Contributing to Diagnostic Errors

Multiple technical and procedural factors can contribute to false positive RT-PCR results [1]:

- Contamination During Sampling: Infected healthcare workers or contaminated surfaces; aerosolization of virus during collection

- Extraction and Amplification Contamination: Aerosolization in containment hoods; amplicon contamination in PCR amplification

- Reagent Contamination: Manufacturers' production facilities where positive controls may contaminate other reagents

- Equipment Contamination: Sample carryover from high viral titer specimens

- Cross-Reactivity: Amplification of non-target genetic material from other coronaviruses or organisms

- Sample Mix-Ups: Mislabeling or transposition of samples

- Software and Data Issues: Instrument software problems; data entry or transmission errors

- Interpretive Variability: Assuming indeterminate results (with Ct values >35 cycles) are positive; non-specific reactions

A root cause analysis of false positive results identified that technologist errors (misplacement of specimens in testing plates) and cross-contamination from high viral load specimens in adjacent wells were common causes [3].
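One way to support this kind of root cause analysis is to flag weak positives that sit next to strong positives on the plate. The sketch below assumes a standard 96-well layout (rows A-H, columns 1-12); the Ct thresholds and function names are hypothetical and would need local validation.

```python
from typing import Optional

ROWS = "ABCDEFGH"  # 96-well plate: rows A-H, columns 1-12


def neighbours(well: str) -> set[str]:
    """Wells that touch the given well, including diagonals."""
    row, col = ROWS.index(well[0]), int(well[1:])
    adjacent = set()
    for d_row in (-1, 0, 1):
        for d_col in (-1, 0, 1):
            r, c = row + d_row, col + d_col
            if (d_row, d_col) != (0, 0) and 0 <= r < len(ROWS) and 1 <= c <= 12:
                adjacent.add(f"{ROWS[r]}{c}")
    return adjacent


def suspect_wells(ct_by_well: dict[str, Optional[float]],
                  strong_ct: float = 20.0, weak_ct: float = 35.0) -> list[str]:
    """Weak positives (Ct >= weak_ct) adjacent to a strong positive (Ct <= strong_ct)."""
    strong = {w for w, ct in ct_by_well.items() if ct is not None and ct <= strong_ct}
    return sorted(
        w for w, ct in ct_by_well.items()
        if ct is not None and ct >= weak_ct and neighbours(w) & strong
    )


# Hypothetical run: None means the target was not detected.
plate = {"B2": 15.0, "B3": 37.2, "F9": 36.5, "A1": None}
print(suspect_wells(plate))  # ['B3']
```

A flagged well is only a candidate for review; adjacency does not prove contamination, so flagged results would still go through retesting.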

Multiple factors contribute to false negative RT-PCR results [2] [4]:

- Suboptimal Specimen Collection: Inadequate sampling technique or inappropriate swab type

- Testing Timing: Testing too early in the disease process before detectable viral replication, or too late during convalescence when viral loads have decreased

- Low Analytic Sensitivity: Assay limitations in detecting low viral concentrations

- Inappropriate Specimen Type: Using upper respiratory samples when infection is predominantly lower respiratory

- Variable Viral Shedding: Natural fluctuations in viral load throughout infection course

- Sample Handling and Transport Issues: Delay in processing or improper storage conditions

- Inhibitory Substances: Presence of PCR inhibitors in the sample matrix

- Primer/Probe Mismatches: Genetic mutations in target regions affecting amplification efficiency

A study of hospitalized COVID-19 patients found that false negative results occurred in two distinct scenarios: (1) patients with moderate disease tested soon after symptom onset, and (2) patients with severe/critical disease who had delayed testing later in their illness course when viral clearance was occurring [4].

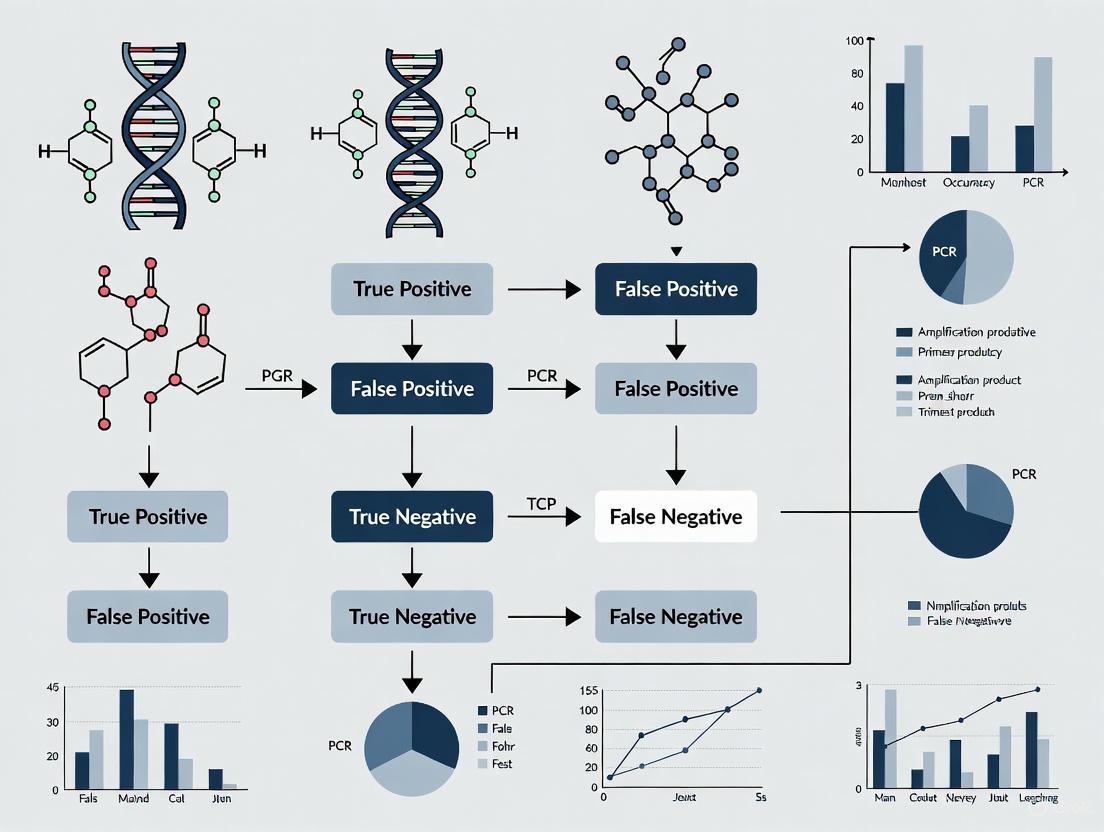

Visualization of Testing Workflows and Quality Control

Diagram 1: PCR Testing Workflow with Quality Control Checkpoints and Potential Error Sources

Diagram 2: False Positive and Negative Result Causes and Impacts

Research Reagent Solutions and Essential Materials

Table 4: Key Research Reagents and Materials for PCR Diagnostic Validation

| Reagent/Material | Function | Example Specifications | Application in Validation |
|---|---|---|---|
| Primers and Probes | Target-specific amplification | WHO-recommended sequences for SARS-CoV-2 RdRp, E, N genes [5] | Specific detection of target pathogen |
| Positive Control Template | Analytical sensitivity determination | Plasmids containing cloned target genes; viral isolates of known titer [5] | Limit of detection studies, assay linearity |
| Negative Control Material | Specificity assessment | Human specimens negative for target pathogen; other related viruses [5] | Cross-reactivity testing, contamination monitoring |
| Nucleic Acid Extraction Kits | RNA isolation and purification | QIAamp Viral RNA Mini kit (QIAGEN) or equivalent [5] | Standardized nucleic acid recovery |
| RT-PCR Master Mix | Enzymatic amplification | AgPath-ID one-step RT-PCR reagents (Applied Biosystems) or equivalent [5] | Consistent reverse transcription and amplification |
| Reference Panels | Analytical performance evaluation | Characterized clinical samples; external quality assessment panels | Inter-laboratory comparison, proficiency testing |
| Inhibition Controls | Detection of PCR inhibitors | Exogenous internal control RNA spiked into samples | Identification of problematic specimens |

Implications for Research and Drug Development

Impact on Clinical Trials and Study Validity

The accuracy of PCR-based diagnostics carries significant implications for research and drug development:

- Patient Enrollment: False positives can lead to inappropriate inclusion of uninfected participants in treatment trials, potentially diluting observed treatment effects

- Endpoint Determination: False negatives may lead to missed endpoints in vaccine or therapeutic trials, reducing statistical power

- Safety Monitoring: Misclassification of infection status can compromise safety assessments in clinical trials

- Epidemiological Studies: Inaccurate test results distort understanding of disease transmission dynamics and risk factors
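The dilution effect on enrollment can be illustrated with a deliberately simple model. The sketch assumes that participants enrolled on a false positive result can show no benefit, so the observed absolute effect is the true effect among infected participants scaled by the PPV of the enrollment test. The 20-point risk reduction is hypothetical, and the 77.4% PPV is borrowed from the screening example in [1] purely for illustration.

```python
def diluted_effect(true_effect: float, ppv: float) -> float:
    """Observed absolute effect when only a fraction `ppv` of enrollees is truly infected.

    Assumption: uninfected enrollees contribute zero treatment effect.
    """
    return true_effect * ppv


observed = diluted_effect(true_effect=0.20, ppv=0.774)
print(f"observed absolute risk reduction: {observed:.1%}")
```

Under this model a smaller observed effect also means a larger sample is needed to reach the same statistical power, which is one reason confirmatory testing before enrollment can be worthwhile.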

Quality Assurance Recommendations

Based on the documented causes of false results, several quality control measures are recommended for research settings:

- Retesting Protocols: Implement automatic retesting of positive results from asymptomatic individuals in low-prevalence settings [1] [3]

- Sample Tracking: Monitor amplification curves and Ct values for consistency; investigate weak positive results with high Ct values [1]

- Plate Layout Optimization: Strategically position high viral load samples to minimize cross-contamination risk to negative controls and low-positive samples [3]

- Multi-target Detection: Employ assays targeting multiple genetic regions to confirm positive results and detect primer/probe mismatches [2]

- External Quality Assessment: Participate in proficiency testing programs to maintain analytical performance standards

The accurate classification of false positives and negatives remains fundamental to evaluating diagnostic test performance in PCR-based testing. Understanding the multifactorial origins of diagnostic errors—from pre-analytical variables to analytical limitations and post-analytical interpretation—is essential for researchers, laboratory professionals, and drug developers. The documented rates of false positive (approximately 6.9-22.6% of positives in screening contexts) and false negative results (approximately 3.45-9.3% in clinical studies) highlight the importance of context-specific test interpretation [1] [2] [3].

Robust quality control measures, including retesting protocols, multi-target confirmation, and comprehensive root cause analysis of discrepant results, are critical for maintaining diagnostic accuracy in both clinical and research settings. As PCR technologies continue to evolve with advancements in multiplexing, digital PCR, and point-of-care applications, the fundamental principles of diagnostic accuracy and error characterization remain essential for valid research outcomes and effective drug development.

Clinical and Economic Consequences of Erroneous PCR Results

Polymerase chain reaction (PCR) testing represents a cornerstone of modern molecular diagnostics, providing unparalleled sensitivity in detecting pathogenic nucleic acids. However, the clinical utility of these tests is fundamentally constrained by their potential to produce erroneous results—both false positives and false negatives. These inaccuracies propagate beyond individual patient harm to impose substantial economic burdens on healthcare systems through unnecessary treatments, extended hospitalizations, and misallocated resources. Within the context of an evolving diagnostic landscape that increasingly incorporates rapid, point-of-care, and syndromic panel PCR testing, a critical examination of error consequences is essential for researchers, laboratory scientists, and drug development professionals. This analysis synthesizes recent evidence to compare the performance of various PCR methodologies, quantify their associated clinical and economic impacts, and delineate evidence-based protocols for error mitigation, thereby providing a framework for optimizing diagnostic strategies in both research and clinical practice.

Mechanisms and Causes of Erroneous PCR Results

False positive PCR results, wherein the test incorrectly indicates the presence of a target pathogen, arise from multiple technical and procedural vulnerabilities. A primary concern is laboratory contamination, which can occur during sample collection, nucleic acid extraction, or PCR amplification through mechanisms such as aerosolized amplicons, contaminated reagents, or carryover from high-titer specimens [1] [6]. The analytical specificity of the primer-probe system is equally critical; cross-reactivity with non-target genetic sequences from closely related pathogens or human genomic material can generate spurious signals [7]. The share of positive results that are false is strongly influenced by disease prevalence. During a period of low COVID-19 prevalence (0.5%), one study found that 84% (26/31) of positive results were likely false positives, yielding a positive predictive value (PPV) of only 16% [8]. This relationship is mathematically inherent; as prevalence decreases, the PPV falls sharply, meaning false positives can substantially outnumber true positives in screening scenarios [1].

Conversely, false negative results—failures to detect a true infection—typically stem from suboptimal assay sensitivity, inadequate sample collection, or the presence of PCR inhibitors in the reaction [6]. The timing of sample collection relative to infection course is also crucial, as viral loads may be below the assay's limit of detection during very early or late stages of illness [1]. The consequences are particularly severe in contagion management, as undetected infected individuals may not be isolated, accelerating community transmission [6]. In clinical care, false negatives can lead to delayed or missed diagnoses, inappropriate treatments, and poor patient outcomes, creating significant liability in both diagnostic and drug development contexts where accurate patient stratification is paramount.

Clinical Consequences of Erroneous Results

Direct Patient Harm and Mismanagement

The clinical implications of erroneous PCR results extend beyond statistical error rates to tangible patient harm. False positives can trigger a cascade of unnecessary interventions, including unindicated antibiotic prescriptions, invasive diagnostic procedures, and delays in identifying the true etiology of a patient's symptoms [8] [7]. Documented cases from COVID-19 testing illustrate these perils: patients with false positive results were inappropriately cohorted with infectious individuals in hospital wards, needlessly exposing them to the virus [8]. Others faced substantial disruptions to essential care, such as being removed from organ transplant waiting lists or experiencing postponed surgeries, creating potentially life-threatening delays [8]. The psychological impact on patients receiving a false diagnosis of a serious infection is another significant consideration, often manifesting as heightened anxiety and distress [7].

Systemic and Public Health Impacts

At a systems level, erroneous results distort epidemiological surveillance by inflating apparent disease incidence and complicating public health response planning [1]. False positives consume limited infection control resources through unnecessary contact tracing, quarantine measures, and environmental decontamination [1]. They also erode trust in diagnostic testing systems among both clinicians and patients, potentially leading to hesitation in adopting new molecular technologies. Conversely, false negatives undermine infection control by providing false reassurance, potentially leading to relaxed safety behaviors and increased transmission risks, particularly in congregate settings [6].

Table 1: Documented Clinical Consequences of False Positive PCR Results

| Consequence Category | Specific Examples | Setting Documented |
|---|---|---|
| Care Disruptions | Delayed surgeries; Removal from transplant lists; Prolonged hospital stays | Hospital pre-admission screening [8] |
| Inappropriate Placement | Cohorting non-infected with infected patients | Hospital infection control [8] |
| Unnecessary Interventions | Additional testing; Unwarranted antibiotic use; Contact tracing | Nursing homes, community screening [8] [1] |
| Resource and Workflow Strain | Staff quarantine; Distraction from other care activities; Administrative burden | Healthcare institutions, production workplaces [8] [1] |

Economic Impact of Diagnostic Inaccuracy

Cost Analyses of Testing Strategies

The economic ramifications of PCR diagnostic accuracy are quantifiable and substantial. A large, propensity-matched US study compared healthcare resource utilization and costs between patients tested for respiratory infections using syndromic RT-PCR with next-day results versus those receiving other or no diagnostic tests. Over six months post-testing, the syndromic RT-PCR cohorts demonstrated significantly lower mean costs across multiple care domains compared to matched subcohorts using culture, other PCR, point-of-care only, or no testing [9] [10]. Specifically, for oropharyngeal infections, the RT-PCR group showed lower costs for total outpatient services ($2,598 vs. $2,970), physician office visits ($624 vs. $689), and emergency department visits ($290 vs. $397) compared to the culture subcohort [9]. These findings highlight how accurate, timely pathogen identification can streamline patient management and reduce downstream healthcare consumption.

The Economic Case for Rapid and Point-of-Care PCR

Economic modeling further supports the value proposition of high-accuracy testing, even at higher per-test costs. A health economic analysis of point-of-care (POC) PCR for influenza-like illnesses found that despite its higher upfront cost, POC PCR saved $196–$269 per patient compared to send-out PCR and rapid antigen strategies, respectively [11]. These savings accrued through reduced downstream resource utilization, including lower rates of hospitalizations and ICU admissions, and a decreased need for repeat testing [11]. Similarly, a cost-effectiveness analysis of rapid, syndromic PCR for hospital-acquired pneumonia (HAP) found lower total ICU costs in the intervention group (£33,149 vs. £40,951 for standard care), despite the additional cost of the PCR test itself [12]. This demonstrates that the clinical efficiencies enabled by rapid, accurate diagnostics—particularly more targeted antibiotic therapy and potentially shorter ICU stays—can offset initial test expenses.

Table 2: Economic Comparisons of PCR Testing Strategies for Respiratory Infections

| Testing Strategy | Economic Outcome | Study Context |
|---|---|---|
| Syndromic RT-PCR (Next-Day Results) | Lower total outpatient, physician visit, and ED costs over 6 months [9] | Oropharyngeal/Respiratory Tract Infections (Propensity-Matched Study) |
| Point-of-Care PCR (e.g., Xpert Xpress) | Saved $196–$269 per patient vs. send-out PCR/antigen strategies [11] | Influenza-Like Illnesses (Cost-Consequence Analysis) |
| Rapid Syndromic PCR (ICU-Based) | Lower total ICU costs (£33,149 vs. £40,951), cost-effective for antibiotic stewardship [12] | Hospital-Acquired and Ventilator-Associated Pneumonia (RCT-Based Economic Evaluation) |

Comparative Performance of PCR Technologies

Evaluating Traditional, Rapid, and Digital PCR Platforms

The diagnostic landscape features multiple PCR platforms with distinct performance characteristics. Traditional real-time RT-PCR (rRT-PCR) remains the gold standard for many applications due to its well-established protocols and high throughput. However, rapid rRT-PCR systems like the STANDARD M10 assay have emerged to address the need for faster turnaround times. In a comparative study of pre-admission screening, the STANDARD M10 demonstrated a mean turnaround time of 2.1 hours with 90% of results reported within 2.9 hours, dramatically faster than the 10.7–17.1 hours required for pooled testing with standard rRT-PCR [13]. The overall agreement between the methods was high (97.3%), supporting the utility of rapid platforms in time-sensitive clinical scenarios such as same-day admissions [13].

Meanwhile, digital PCR (dPCR) platforms like the Lab-On-An-Array (LOAA) system offer potential advantages in sensitivity and reproducibility. An evaluation in Ghana found LOAA had "almost perfect" agreement (κ ≥0.88) with rRT-PCR for detecting RSV, SARS-CoV-2, and Flu B, and good agreement for Flu A (κ = 0.72) [14]. Its superior sensitivity makes dPCR particularly promising for detecting low viral loads, where traditional rRT-PCR might yield false negatives. However, the choice of platform must be context-dependent, balancing factors such as required throughput, turnaround time, cost constraints, and the clinical implications of missed cases versus false positives in a given setting.

The Role of Syndromic Multiplex Panels

Syndromic PCR panels represent a significant advancement by testing for multiple potential pathogens simultaneously. This approach is particularly valuable when clinical presentation does not point to a single causative agent, as is common with respiratory and gastrointestinal infections. The broader diagnostic capture of these panels reduces the need for sequential testing, potentially leading to faster definitive diagnosis and more appropriate initial treatment [7]. From an economic perspective, this efficiency can translate into lower overall costs, as demonstrated by the reduced healthcare utilization in patients receiving syndromic testing for respiratory infections [9]. For gastrointestinal pathogens, panels like the Applied BioCode Gastrointestinal Pathogen Panel (GPP) that utilize barcoded magnetic bead technology can detect 17 targets simultaneously, improving specificity and reducing the risk of cross-reactivity that leads to false positives [7].

Methodologies for Evaluating PCR Performance

Clinical Agreement Studies

A standard methodology for establishing PCR test performance is the clinical agreement study, which compares a new assay against an accepted reference method. The study comparing the STANDARD M10 rapid rRT-PCR to pooled rRT-PCR exemplifies this approach [13]. In this design, paired nasopharyngeal and oropharyngeal swabs were collected from 3,931 patients with low clinical suspicion of COVID-19. One specimen was tested immediately with the STANDARD M10, while the other was transported to a central laboratory for pooled testing using the Allplex SARS-CoV-2 assay. The key performance metrics calculated were positive percent agreement (the analogue of sensitivity), negative percent agreement (the analogue of specificity), and overall agreement, with discrepant results resolved by supplemental testing with alternative PCR assays. This design directly assesses clinical performance in a relevant patient population.
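The agreement metrics named above follow directly from the paired 2x2 table of new-assay versus reference results. The sketch below uses hypothetical counts, not the counts from the STANDARD M10 study [13], and the function and parameter names are illustrative.

```python
def agreement(both_pos: int, new_only_pos: int, ref_only_pos: int, both_neg: int) -> dict[str, float]:
    """Positive, negative, and overall percent agreement against a reference method."""
    total = both_pos + new_only_pos + ref_only_pos + both_neg
    return {
        # Share of reference positives that the new assay also calls positive.
        "ppa": both_pos / (both_pos + ref_only_pos),
        # Share of reference negatives that the new assay also calls negative.
        "npa": both_neg / (both_neg + new_only_pos),
        "overall": (both_pos + both_neg) / total,
    }


# Hypothetical paired results for 1,000 patients.
result = agreement(both_pos=45, new_only_pos=3, ref_only_pos=5, both_neg=947)
print({name: f"{value:.1%}" for name, value in result.items()})
```

The term "agreement" is used rather than sensitivity and specificity because the reference method is itself imperfect; discrepant analysis with a third assay helps decide which of the two results was wrong.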

Digital PCR Evaluation Protocols

The performance evaluation of novel digital PCR systems requires a rigorous comparative design. The assessment of the LOAA dPCR system in Ghana employed a cross-sectional hospital-based study enrolling 356 participants with suspected respiratory illness [14]. Oropharyngeal swabs were tested in parallel using both the LOAA dPCR and an established rRT-PCR assay (FluoroType SARS-CoV-2/Flu/RSV). Viral RNA was extracted using a standardized kit (Qiagen Viral Mini Kit) prior to parallel testing. The dPCR's performance was quantified using standard metrics (sensitivity, specificity, PPV, and NPV) with rRT-PCR as the reference standard. The study also assessed agreement using the kappa statistic (κ) and the area under the curve (AUC), providing a comprehensive profile of the dPCR's operational characteristics under real-world conditions in a resource-limited setting [14].
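Cohen's kappa corrects observed agreement for the agreement expected by chance. The sketch below computes it for a 2x2 table of paired results; the counts are hypothetical and are not taken from the LOAA evaluation [14].

```python
def cohens_kappa(both_pos: int, a_only_pos: int, b_only_pos: int, both_neg: int) -> float:
    """Cohen's kappa for two binary tests applied to the same samples."""
    n = both_pos + a_only_pos + b_only_pos + both_neg
    observed = (both_pos + both_neg) / n
    # Chance agreement from each test's marginal positive and negative rates.
    a_pos, b_pos = both_pos + a_only_pos, both_pos + b_only_pos
    a_neg, b_neg = n - a_pos, n - b_pos
    expected = (a_pos * b_pos + a_neg * b_neg) / n**2
    return (observed - expected) / (1 - expected)


# Hypothetical paired results for 100 samples.
kappa = cohens_kappa(both_pos=40, a_only_pos=5, b_only_pos=5, both_neg=50)
print(f"kappa = {kappa:.3f}")
```

Kappa is more informative than raw agreement when most samples are negative, because two tests that both call nearly everything negative will agree often by chance alone.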

PCR Evaluation Workflow: This diagram illustrates the standard protocol for evaluating a new PCR test's performance against a reference method, including discrepant analysis.

Strategies for Minimizing Erroneous Results

Technical and Laboratory Quality Control

Minimizing false positives requires stringent contamination control throughout the testing process. Key measures include physical separation of pre-PCR, PCR amplification, and post-PCR areas, implementing unidirectional workflow, using dedicated equipment and supplies for each area, and employing rigorous decontamination protocols using reagents like 10% sodium hypochlorite or UV light [6]. Technical enhancements to the PCR process itself include uracil-DNA-glycosylase (UNG) treatment to degrade carryover amplicons from previous reactions, hot-start PCR to prevent non-specific amplification during reaction setup, and touchdown PCR to improve primer specificity [6]. Primer and probe design is equally critical; they should target conserved but pathogen-specific genomic regions and be regularly verified against updated sequence databases to avoid cross-reactivity with newly identified variants or related organisms [6].

Analytical and Operational Approaches

Beyond technical controls, analytical strategies are essential. Laboratories should establish and validate cycle threshold cutoffs for distinguishing true low-positive results from background noise or non-specific amplification [8] [1]. Implementing and consistently using appropriate internal and external controls—including no-template controls to detect contamination and positive extraction controls to verify nucleic acid recovery—is fundamental to monitoring assay performance [6]. From an operational perspective, external quality assurance (EQA) programs provide independent assessment of laboratory performance, while comprehensive training of laboratory personnel in standardized sampling procedures and automated workflows reduces operator-dependent variability [7]. Crucially, in low-prevalence settings or when asymptomatic individuals are screened, clinicians and laboratories should maintain a higher index of suspicion for false positives, particularly for results with high Ct values or those positive for only a single target in a multiplex assay [8] [1]. Such results should trigger confirmation with a repeat test or a different platform before definitive action is taken.
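The confirmation trigger described above can be written as a simple rule. This is a sketch, not a validated algorithm: the class and function names are hypothetical, the default cutoff of 35 cycles is a placeholder that each laboratory would replace with its own validated value, and treating "high Ct" as "every detected target above the cutoff" is an assumption.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PcrResult:
    ct_by_target: dict[str, Optional[float]]  # None means the target was not detected
    asymptomatic: bool


def needs_confirmation(result: PcrResult, low_prevalence: bool,
                       ct_cutoff: float = 35.0) -> bool:
    """Flag positives that warrant a repeat test or a different platform."""
    detected = [ct for ct in result.ct_by_target.values() if ct is not None]
    if not detected:
        return False  # negative result: nothing to confirm under this rule
    if not (low_prevalence or result.asymptomatic):
        return False
    single_target = len(result.ct_by_target) > 1 and len(detected) == 1
    high_ct = min(detected) > ct_cutoff  # assumption: all detected targets are weak
    return single_target or high_ct


weak = PcrResult({"E": 37.1, "N": None}, asymptomatic=True)
strong = PcrResult({"E": 22.0, "N": 23.5}, asymptomatic=True)
print(needs_confirmation(weak, low_prevalence=True))    # True
print(needs_confirmation(strong, low_prevalence=True))  # False
```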

Essential Research Reagents and Solutions

Table 3: Key Research Reagent Solutions for PCR Diagnostic Development

| Reagent / Material | Critical Function | Application Notes |
|---|---|---|
| Primers & Probes | Target-specific amplification and detection | Design for unique genomic regions; regular BLAST verification helps avoid cross-reactivity [6] |
| UNG Enzyme | Prevents amplicon carryover contamination | Degrades uracil-containing PCR products from previous runs; included in many master mixes [6] |
| Hot-Start Polymerase | Increases amplification specificity | Remains inactive until heated, which reduces non-specific priming during setup [6] |
| Nuclease-Free Water | Reaction preparation | Prevents degradation of nucleic acids and reagents by environmental nucleases [6] |
| Bovine Serum Albumin | PCR enhancer | Mitigates the effect of common PCR inhibitors present in clinical samples; typically used at 200-400 ng/µL [6] |
| Validated Transport Media | Preserves sample integrity | Maintains nucleic acid stability during transport/storage; some inhibit nucleases [13] [14] |

False Positive Relationships: This diagram maps primary causes of false positive PCR results to corresponding mitigation strategies and highlights the resulting consequences.

The clinical and economic consequences of erroneous PCR results are non-trivial, affecting patient safety, healthcare costs, and public health efficacy. False positives drive unnecessary interventions and resource consumption, while false negatives undermine infection control and delay appropriate care. The evidence demonstrates that investing in more accurate testing platforms—including rapid syndromic panels and point-of-care PCR—can generate significant downstream savings and improved outcomes, despite higher initial test costs. For researchers and drug developers, this underscores the importance of diagnostic accuracy as a key variable in clinical trial design and therapeutic development pathways. Future efforts should focus on standardizing performance evaluations across platforms, improving primer-probe specificity for emerging variants, and developing even more robust protocols to minimize contamination in high-throughput settings. Through continued refinement of PCR technologies and implementation of stringent quality controls, the diagnostic community can mitigate the risks of erroneous results while maximizing the profound benefits that molecular diagnostics offer modern medicine.

Cycle Threshold (Ct) Values as a Key Predictor of False Positives

Polymerase chain reaction (PCR) diagnostics represent a cornerstone of modern molecular testing, yet their reliability is fundamentally influenced by cycle threshold (Ct) values, which serve as a critical predictor of false-positive outcomes. The Ct value refers to the number of amplification cycles required for the signal of a PCR reaction to cross a predetermined threshold, thereby detecting the target pathogen. This quantitative measure exhibits an inverse relationship with viral load—specimens with high viral concentrations typically yield low Ct values, while those with minimal target material require more amplification cycles, resulting in higher Ct values [15]. Within the context of diagnostic accuracy, false-positive results present a substantial challenge, potentially leading to clinical mismanagement, unnecessary patient isolation, and skewed epidemiological data [16] [17].

The predictive value of any diagnostic test, including PCR, is intrinsically tied to disease prevalence within the tested population. Even tests with high specificity can generate significant proportions of false-positive results when deployed in low-prevalence settings [16] [17]. For SARS-CoV-2 diagnostics, multiple studies have demonstrated that false-positive rates escalate dramatically as Ct values increase, particularly beyond specific thresholds where the detected genetic material may represent non-infectious viral fragments, contamination, or background noise rather than true, replicating virus [18] [19] [20]. This comprehensive analysis examines the experimental evidence establishing Ct values as a key predictor of false positives, compares performance across diagnostic systems, and provides methodological frameworks for researchers seeking to minimize diagnostic inaccuracies in PCR-based testing.
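The dependence of positive predictive value (PPV) on prevalence follows directly from Bayes' rule and can be worked through numerically. The sketch below is illustrative only: the sensitivity, specificity, and prevalence figures are assumed values chosen for the example, not results from the cited studies.

```python
def positive_predictive_value(sensitivity: float,
                              specificity: float,
                              prevalence: float) -> float:
    """PPV = true positives / (true positives + false positives)."""
    true_pos = sensitivity * prevalence
    false_pos = (1.0 - specificity) * (1.0 - prevalence)
    return true_pos / (true_pos + false_pos)


# Same hypothetical assay (95% sensitivity, 99% specificity) in two settings.
for prevalence in (0.005, 0.20):
    ppv = positive_predictive_value(0.95, 0.99, prevalence)
    print(f"prevalence {prevalence:.1%}: PPV {ppv:.1%}")
```

With these assumed inputs, the same assay yields a PPV of roughly 32% at 0.5% prevalence and roughly 96% at 20% prevalence, which is why a positive result from a screening population warrants more caution than one from a symptomatic cohort.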

Experimental Evidence Linking Ct Values and False Positives

SARS-CoV-2 PCR Testing

Multiple large-scale studies have established a definitive correlation between elevated Ct values and increased false-positive rates in SARS-CoV-2 detection. A comprehensive analysis of 1,255 positive or suspected positive results from eleven laboratories using seven different PCR reagents revealed striking stratification of false-positive probabilities by Ct value [19]. When both target genes exhibited Ct values below 30, false positives were a low-probability event, occurring in at most 1.72% of cases. However, when Ct values fell between 30 and 35, significant discrepancies emerged among testing reagents, with false-positive rates ranging from 0% to 9.14% (P < 0.001) [19]. Most notably, when any target gene displayed a Ct value exceeding 35, the false-positive rate surged to 15.58-24.22%, indicating that roughly one in six to one in four positive results may be incorrect in this high Ct range [19].

The relationship between Ct values and infectious potential further substantiates these findings. Research from the French group of Professor Didier Raoult demonstrated that the probability of viral culture positivity—a marker of infectious virus—declines precipitously as Ct values increase [20]. At a Ct threshold of 25, approximately 70% of samples remained positive in cell culture; this percentage dropped to 20% at Ct 30, and plummeted to just 3% at Ct 35. Crucially, no samples with Ct values above 35 demonstrated infectious potential in cell culture, suggesting that high Ct positives frequently detect non-viable viral fragments [20]. These findings align with observations from external quality assessment schemes, where Ct values reported for SARS-CoV-2 detection exhibited substantial inter-protocol variability, with 7.7% of results deviating by more than ±4.0 cycles from respective means—discrepancies attributed to systematic errors that contribute to false-positive interpretations [18].

Beyond SARS-CoV-2: Evidence from Oncology Diagnostics

The association between elevated Ct values and false-positive results extends beyond infectious disease diagnostics into other molecular testing domains. Recent investigations in non-small cell lung cancer (NSCLC) molecular profiling have revealed a high incidence of false positives in EGFR S768I mutation detection using the Idylla qPCR system [21]. This diagnostic inaccuracy carries significant clinical implications, as detection of the S768I mutation directly influences therapeutic decision-making for NSCLC patients. Meticulous comparison with next-generation sequencing (NGS) results demonstrated that numerous S768I "positives" identified via the Idylla qPCR platform represented false positives, particularly when amplification curves exhibited specific characteristics associated with higher Ct values [21]. These findings underscore the broader applicability of Ct value interpretation across diagnostic contexts and highlight the critical importance of confirmatory testing for mutations with substantial therapeutic consequences.

Table 1: False-Positive Rates Stratified by Ct Value Ranges in SARS-CoV-2 PCR Testing

| Ct Value Range | False-Positive Rate | Probability of Infectious Virus | Recommended Action |
|---|---|---|---|
| Ct < 30 | ≤1.72% | High (≈70% at Ct=25) | Report immediately; low false-positive probability |
| 30 ≤ Ct < 35 | 0-9.14% (varies by reagent) | Moderate to Low (≈20% at Ct=30) | Consider reagent-specific performance; potential for false positives |
| Ct ≥ 35 | 15.58-24.22% | Very Low (≈3% at Ct=35) | Retest original sample before reporting; high false-positive probability |
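The stratification in Table 1 can be expressed as a simple lookup. The following sketch copies the ranges and actions from the table; using the highest Ct among the detected targets to choose the stratum is a simplifying assumption, and the names `TABLE_1` and `classify_result` are hypothetical.

```python
from typing import Dict, Sequence, Tuple

# Stratum -> (reported false-positive rate, recommended action), from Table 1.
TABLE_1: Dict[str, Tuple[str, str]] = {
    "Ct < 30": ("<=1.72%", "Report immediately"),
    "30 <= Ct < 35": ("0-9.14% (varies by reagent)",
                      "Consider reagent-specific performance"),
    "Ct >= 35": ("15.58-24.22%", "Retest original sample before reporting"),
}


def classify_result(target_cts: Sequence[float]) -> Tuple[str, str, str]:
    """Map the Ct values of the detected targets to a Table 1 stratum."""
    highest = max(target_cts)  # assumption: the latest target decides
    if highest < 30:
        stratum = "Ct < 30"
    elif highest < 35:
        stratum = "30 <= Ct < 35"
    else:
        stratum = "Ct >= 35"
    rate, action = TABLE_1[stratum]
    return stratum, rate, action


print(classify_result([24.3, 25.1]))
print(classify_result([31.0, 36.2]))
```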

Comparative Performance of Diagnostic Systems

Inter-Laboratory and Inter-Reagent Variability

The reliability of Ct values as predictors of false positives is substantially influenced by both the testing reagents employed and the specific laboratory environments. Analysis of different SARS-CoV-2 testing institutions revealed marked variations in false-positive rates, particularly within intermediate and high Ct value ranges [19]. When initial screening produced Ct values between 30-35 for both target genes, false-positive rates differed significantly across testing institutions (P < 0.001), with some facilities maintaining minimal false positives while others reported rates approaching 10% [19]. These discrepancies likely stem from differences in personnel training, equipment calibration, nucleic acid extraction methods, and contamination control protocols, highlighting the profound impact of operational factors on diagnostic accuracy.

Comparative performance assessment of seven distinct PCR reagents revealed notable variations in false-positive rates, especially within the critical 30-35 Ct range [19]. While Sansure and Daan reagents maintained relatively low false-positive rates (0% and 1.41%, respectively) in this range, other reagents exhibited substantially higher rates, reaching up to 9.14% [19]. These findings underscore the importance of reagent selection and validation for laboratories aiming to minimize false-positive diagnoses, particularly when testing populations with low disease prevalence where the positive predictive value naturally declines. The analytical sensitivity of different PCR systems, typically measured by the limit of detection (LOD) in copies/mL, represents another critical variable influencing Ct value reliability and consequent false-positive probabilities [19].

Table 2: Comparison of PCR Reagent Performance in SARS-CoV-2 Detection

| Reagent Name | Limit of Detection (copies/mL) | False-Positive Rate (Ct 30-35) | Manufacturer |
|---|---|---|---|
| Daan | 200 | 1.41% | Daan Gene Co., Ltd. (Guangzhou, China) |
| Sansure | 200 | 0% | Sansure Biotech Inc. (Changsha, China) |
| BioGerm | 150 | 7.69% | BioGerm Medical Co., Ltd. (Shanghai, China) |
| EasyDiagnosis | 200 | 9.14% | Wuhan EasyDiagnosis Biomedicine Co., Ltd. (Wuhan, China) |
| Zybio | 200 | Data not specified | Zybio Co., Ltd. (Chongqing, China) |
| ZJ | 200 | Data not specified | ZJ Biotech Co., Ltd. (Shanghai, China) |
| Bioperfectus | 350 | Data not specified | Jiangsu Bioperfectus Technologies Co., Ltd. (Jiangsu, China) |

Impact of Cycle Threshold Settings

The Ct cutoff for positivity that each laboratory sets is a fundamental determinant of false-positive rates. Laboratories that accept positives at very high cycle numbers (frequently 40-45 cycles) increase their susceptibility to false-positive results, because minimal background noise and non-specific amplification can produce a signal in late cycles [20] [15]. Empirical evidence suggests that cutoff values of 30-35 cycles better balance detection sensitivity and specificity [20]. Analyses indicate that up to 90% of tests positive at a cutoff of 40 would be negative at a cutoff of 30, illustrating how laboratory-specific protocol choices directly influence false-positive rates and subsequent clinical interpretations [20].
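The effect of moving the positivity cutoff can be illustrated with a small calculation. The Ct values below are invented for demonstration and do not reproduce the published 90% figure; the function name `fraction_reclassified` is likewise hypothetical.

```python
from typing import Sequence


def fraction_reclassified(ct_values: Sequence[float],
                          strict_cutoff: float,
                          lenient_cutoff: float) -> float:
    """Share of lenient-cutoff positives that turn negative at the strict cutoff."""
    lenient_positives = [ct for ct in ct_values if ct <= lenient_cutoff]
    if not lenient_positives:
        return 0.0
    dropped = [ct for ct in lenient_positives if ct > strict_cutoff]
    return len(dropped) / len(lenient_positives)


# Hypothetical Ct values from ten specimens reported positive at a cutoff of 40.
example_cts = [18.5, 24.0, 29.5, 31.2, 33.8, 35.1, 36.7, 37.9, 38.4, 39.6]
print(fraction_reclassified(example_cts, strict_cutoff=30, lenient_cutoff=40))
```

In this made-up data set, seven of the ten positives have Ct values above 30, so 70% would be reported negative under the stricter cutoff.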

This relationship between cycle threshold settings and diagnostic accuracy prompted the World Health Organization (WHO) to issue specific guidance regarding Ct value interpretation [15] [22]. The WHO emphasized that "careful interpretation of weak positive results is needed" and noted that "the distinction between background noise and actual presence of the target virus is difficult to ascertain" at high Ct values [15]. These recommendations align with the established technical guidelines for PCR implementation (MIQE guidelines), which explicitly state that "Cq values higher than 40 are suspect because of the implied low efficiency and generally should not be reported" [15].

Methodological Protocols for Ct Value Analysis

Standardized Experimental Workflow

The following experimental workflow provides a systematic approach for evaluating the relationship between Ct values and false-positive rates in PCR diagnostics:

Sample Collection and Processing:

- Collect respiratory specimens (nasopharyngeal swabs, oropharyngeal swabs, or saliva) in appropriate viral transport media [19] [23].

- Extract nucleic acids using automated extraction systems following manufacturer protocols, incorporating both positive and negative extraction controls to monitor contamination [19].

- For comparative studies, divide each specimen aliquot for parallel testing across different PCR platforms or reagents [19] [21].

PCR Amplification and Detection:

- Utilize real-time PCR instruments with calibrated fluorescence detection capabilities [19].

- Program thermal cycling conditions according to manufacturer specifications for target pathogens, typically including: reverse transcription at 50-55°C for 10-30 minutes, initial denaturation at 95°C for 2-5 minutes, followed by 40-45 cycles of denaturation (95°C for 10-30 seconds) and annealing/extension (55-60°C for 20-60 seconds) [19] [22].

- Implement multiplex reactions detecting at least two target genes to enhance specificity and reduce false positives from single-gene amplification [18] [16].

Data Analysis and Interpretation:

- Set fluorescence thresholds consistently across all runs in the exponential phase of amplification [22].

- Record Ct values for all detected targets, noting specimens with single-gene positivity or delayed amplification (Ct > 35) [19].

- For validation studies, retest all initial positives using alternative PCR platforms, different gene targets, or reference methods such as next-generation sequencing [19] [21].

- Perform statistical analysis using appropriate software (e.g., SPSS, GraphPad Prism) to calculate false-positive rates across Ct value strata and compare performance between reagents or laboratories [19].
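The stratified false-positive calculation in the final step can be sketched without statistical software. This is a minimal example under stated assumptions: each record pairs the highest initial Ct with the outcome of confirmatory retesting, the stratum boundaries follow the 30 and 35 cutoffs used in this article, and the sample records are invented.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def stratum_for(ct: float) -> str:
    """Assign a Ct value to one of three strata."""
    if ct < 30:
        return "Ct < 30"
    if ct < 35:
        return "30 <= Ct < 35"
    return "Ct >= 35"


def false_positive_rates(records: Iterable[Tuple[float, bool]]) -> Dict[str, float]:
    """records holds (initial Ct, confirmed on retest) pairs for initial positives."""
    counts = defaultdict(lambda: [0, 0])  # stratum -> [false positives, total]
    for ct, confirmed in records:
        entry = counts[stratum_for(ct)]
        entry[1] += 1
        if not confirmed:
            entry[0] += 1
    return {stratum: fp / total for stratum, (fp, total) in counts.items()}


# Hypothetical validation data.
records = [(24.1, True), (28.7, True), (31.5, True), (33.0, False),
           (36.2, False), (37.8, True), (38.5, False), (36.9, True)]
for stratum, rate in false_positive_rates(records).items():
    print(f"{stratum}: {rate:.0%}")
```

Comparing rates between reagents or laboratories would additionally require a significance test such as chi-square, which is omitted here.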

Diagram 1: Experimental Workflow for Ct Value Analysis in PCR Diagnostics. This diagram illustrates the standardized protocol for evaluating the relationship between Ct values and false-positive rates, highlighting critical decision points based on Ct value stratification.

Quality Control and Validation Procedures

Implementing rigorous quality control measures is essential for reliable Ct value interpretation and false-positive minimization:

Pre-Analytical Controls:

- Incorporate external negative controls (blank samples) during nucleic acid extraction to detect contamination in reagents or extraction systems [19] [16].

- Include positive extraction controls with known weak viral concentrations (Ct 30-35) to monitor extraction efficiency and amplification sensitivity [19].

Analytical Controls:

- Run no-template controls (NTCs) containing all PCR reagents except template nucleic acid in each amplification batch to identify reagent contamination [19] [16].

- Include positive amplification controls with standardized Ct values (e.g., Ct 25, 30, 35) to verify reaction efficiency and inter-run comparability [18].

- Implement internal control targets (e.g., human housekeeping genes) to identify inhibition and validate sample quality [19].

Post-Analytical Validation:

- Establish laboratory-specific Ct value cutoffs based on internal validation studies comparing PCR results with viral culture positivity or clinical symptom correlation [20].

- Implement mandatory retesting protocols for samples with Ct values above predetermined thresholds (e.g., Ct > 35) using different gene targets or alternative PCR platforms [19] [17].

- Participate in external quality assessment (EQA) schemes to evaluate inter-laboratory performance and identify systematic biases in Ct value reporting [18].
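The analytical controls above translate naturally into an automated run-acceptance check. The sketch below is one possible encoding, not a regulatory standard: the tolerance of 2 cycles around the expected positive-control Ct and the internal-control limit of 35 are assumptions, and both function names are hypothetical.

```python
from typing import Optional


def run_is_valid(ntc_ct: Optional[float],
                 positive_control_ct: Optional[float],
                 expected_positive_ct: float,
                 tolerance: float = 2.0) -> bool:
    """Accept a run only if the NTC is clean and the positive control is on target."""
    if ntc_ct is not None:
        return False  # amplification in the no-template control: contamination
    if positive_control_ct is None:
        return False  # positive control failed to amplify
    return abs(positive_control_ct - expected_positive_ct) <= tolerance


def sample_is_interpretable(internal_control_ct: Optional[float],
                            max_internal_ct: float = 35.0) -> bool:
    """A missing or very late internal control suggests inhibition or poor sampling."""
    return internal_control_ct is not None and internal_control_ct <= max_internal_ct


print(run_is_valid(None, 30.4, expected_positive_ct=30.0))
print(run_is_valid(38.2, 30.4, expected_positive_ct=30.0))
print(sample_is_interpretable(27.5))
```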

Essential Research Reagent Solutions

Table 3: Key Research Reagents for PCR Diagnostic Validation Studies

| Reagent/Category | Specific Examples | Function in False-Positive Research |
|---|---|---|
| Nucleic Acid Extraction Kits | QIAamp Viral RNA Mini Kit, MagMAX Viral/Pathogen Kit | Isolate high-quality RNA/DNA while minimizing cross-contamination between samples |
| Reverse Transcriptase Enzymes | SuperScript IV Reverse Transcriptase, GoScript Reverse Transcriptase | Convert RNA to cDNA with high fidelity and efficiency for subsequent PCR amplification |
| PCR Master Mixes | TaqPath Master Mix, LightCycler Multiplex RNA Virus Master | Provide optimized buffer conditions, enzymes, and dNTPs for sensitive and specific amplification |
| Positive Control Materials | Quantitated RNA transcripts, armored RNA, viral culture supernatants | Establish standard curves, determine limits of detection, and validate assay performance |
| Negative Control Materials | Nuclease-free water, human genomic DNA, respiratory pathogen panels | Identify contamination sources and establish specificity against related pathogens |
| Probe/Primer Sets | CDC N1/N2 primers-probes, WHO recommended targets, E/RdRp/ORF1ab genes | Target conserved genomic regions with varying sensitivity and specificity profiles |
| Inhibition Relief Reagents | T4 Gene 32 Protein, BSA, betaine | Overcome PCR inhibitors in clinical specimens that may cause anomalous Ct values |

Cycle threshold values serve as indispensable predictors of false-positive results across diverse PCR diagnostic applications, from infectious disease detection to oncological mutation profiling. The accumulated experimental evidence demonstrates a consistent pattern: false-positive rates escalate dramatically as Ct values exceed 30, with particularly concerning rates observed beyond Ct 35. This relationship underscores the critical importance of establishing and adhering to laboratory-specific Ct value cutoffs, implementing confirmatory testing protocols for high-Ct results, and maintaining rigorous quality control measures throughout the testing process. For the research community, these findings highlight the necessity of reporting Ct values alongside qualitative results, validating reagent performance across clinically relevant Ct ranges, and developing standardized approaches to Ct value interpretation that balance analytical sensitivity with clinical relevance. As PCR technologies continue to evolve and find new diagnostic applications, the fundamental relationship between Ct values and false-positive risk remains a cornerstone consideration for ensuring diagnostic accuracy and appropriate clinical decision-making.

Molecular diagnostic assays, particularly real-time PCR (qPCR), are foundational tools for detecting and managing infectious diseases. Their success relies on the specific binding of primers and probes to complementary target sequences in the pathogen's genome [24]. However, the sustained transmission and proliferation of pathogens, as witnessed during the COVID-19 pandemic, lead to the emergence of new variants with mutations. This can result in signature erosion, a phenomenon where diagnostic tests developed using earlier genomic sequences of a pathogen fail to detect new variants, causing false negative (FN) results [24]. Such false negatives can have severe consequences for patient care and public health measures, including uncontrolled disease transmission. This article explores signature erosion as a primary source of false negatives, comparing the performance of various assay designs and presenting experimental data on their resilience.

Experimental Approaches to Quantifying Signature Erosion

Understanding the impact of mutations on assay performance requires robust experimental methodologies. The following protocol exemplifies a systematic approach to wet-lab testing of in silico predictions.

Detailed Experimental Protocol for Evaluating Mismatch Impact

A comprehensive study tested 16 PCR assays with over 200 synthetic templates spanning the SARS-CoV-2 genome to assess the impact of mismatches. The methodology can be summarized as follows [24] [25]:

- Assay and Mutation Selection: Assays were selected based on in silico monitoring using tools like the PCR Signature Erosion Tool (PSET), which identifies assays at risk of signature erosion due to emerging mutations. Mutated templates were designed to represent a diverse range of naturally occurring mismatch types (e.g., substitutions, deletions) and locations within the primer and probe binding sites.

- Template Synthesis: Wild-type and mutated template sequences were synthesized as DNA oligos (e.g., gBlock fragments), including flanking sequences to mimic the genomic context.

- qPCR Amplification: Each template (wild-type and mutant) was tested at multiple initial concentrations (e.g., 50, 500, 5000, and 50,000 copies per reaction) in triplicate reactions. A universal qPCR master mix and thermocycling protocol were used to standardize conditions across all assays. The protocol often uses a slightly reduced annealing temperature (e.g., 55°C) to be more permissive of mismatches and thus stress-test the assays.

- Data Analysis: Performance was quantified by comparing the cycle threshold (Ct) values of mutated templates to their wild-type counterparts. The key metric is the ΔCt, calculated as $\Delta Ct = \overline{Ct}_{\text{mutated}} - \overline{Ct}_{\text{wild-type}}$, where each term is the average Ct across replicate reactions. Additional metrics include amplification efficiency, linear regression coefficient (R²), and y-intercept.
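The metrics named in the data analysis step can be computed as in the sketch below. It assumes the conventional standard-curve approach, in which Ct is regressed on log10 of the input copy number and efficiency is derived as 10^(-1/slope) - 1; the cited study does not spell out its exact formulas, and the Ct values shown are invented.

```python
import math
from statistics import mean
from typing import Sequence, Tuple


def delta_ct(mutant_cts: Sequence[float], wild_type_cts: Sequence[float]) -> float:
    """Average Ct of the mutated template minus average Ct of the wild type."""
    return mean(mutant_cts) - mean(wild_type_cts)


def standard_curve(copies: Sequence[float],
                   cts: Sequence[float]) -> Tuple[float, float, float, float]:
    """Least-squares fit of Ct against log10(copies).

    Returns (slope, y_intercept, r_squared, efficiency).
    """
    x = [math.log10(c) for c in copies]
    mean_x, mean_y = mean(x), mean(cts)
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in cts)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, cts))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r_squared = sxy ** 2 / (sxx * syy)
    efficiency = 10 ** (-1.0 / slope) - 1.0  # 1.0 corresponds to 100%
    return slope, intercept, r_squared, efficiency


# Hypothetical triplicate Ct values at one concentration.
print(delta_ct([30.1, 30.3, 30.2], [27.0, 27.2, 27.1]))

# Hypothetical dilution series at the copy numbers used in the protocol.
slope, intercept, r2, eff = standard_curve([50, 500, 5000, 50000],
                                           [33.00, 29.68, 26.36, 23.04])
print(f"slope {slope:.2f}, R^2 {r2:.3f}, efficiency {eff:.1%}")
```

A slope near -3.32 corresponds to an efficiency near 100%, meaning the product doubles each cycle. A mutated template that both shifts Ct upward and flattens the slope is losing efficiency, not just starting later.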

The workflow for this experimental process is outlined below.

The Scientist's Toolkit: Research Reagent Solutions

The table below details key reagents and materials essential for conducting experiments on signature erosion.

| Item | Function in Experiment |
|---|---|
| Synthetic DNA Templates (gBlocks) | Serve as wild-type and mutant target sequences for qPCR amplification, allowing controlled introduction of specific mutations [25]. |
| qPCR Master Mix | A standardized reagent containing DNA polymerase, dNTPs, and buffer to ensure consistent reaction conditions across all tested assays [25]. |
| Primers & Hydrolysis Probes | Oligonucleotides designed to bind specific pathogen sequences. Probes are typically labeled with a fluorophore (e.g., FAM) and a quencher for real-time detection [25]. |
| Real-time PCR Instrument | Equipment to perform thermal cycling and fluorescence detection, enabling measurement of cycle threshold (Ct) values [25]. |
| PSET (PCR Signature Erosion Tool) | An in silico tool that monitors the performance of diagnostic tests against global pathogen sequence databases to predict potential assay failures [24]. |

Quantitative Impact of Mismatches on PCR Performance

The robustness of a PCR assay is not universally compromised by the presence of a mismatch. The impact is highly variable and depends on several factors related to the mismatch's characteristics.

Factors Determining Mismatch Impact

- Position of the Mismatch: Mismatches located closer to the 3' end of the primer, especially within the last 5 nucleotides, generally have a more severe impact on PCR efficiency than those farther away [24].

- Number of Mismatches: While a single mismatch may be tolerated, the accumulation of multiple mismatches within a primer or probe binding site dramatically increases the risk of false negatives. Complete PCR amplification failure is often observed with four or more mismatches [24].

- Type of Mismatch: The specific base change (e.g., A-A, G-A) influences the degree of destabilization. Single mismatches can cause a broad range of Ct value shifts, from minor (<1.5 ΔCt) to severe (>7.0 ΔCt) [24].

- Assay Design and Reaction Conditions: Factors such as primer/probe concentration, GC content, and thermocycling parameters (e.g., annealing temperature) can affect an assay's tolerance for mismatches [24] [25].
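The position and count factors above lend themselves to a simple in silico screen. The sketch below compares a primer with the corresponding template sequence written in the same 5'→3' orientation. It is a simplified illustration, not the PSET algorithm: it handles substitutions only, and the function name, the returned keys, and the example sequences are hypothetical.

```python
from typing import Dict


def mismatch_profile(primer: str, binding_site: str,
                     three_prime_window: int = 5) -> Dict[str, object]:
    """Summarize substitutions between a primer and its binding site.

    Both sequences must be the same length and written 5'->3' in the
    primer's orientation, so the last character is the primer's 3' end.
    """
    if len(primer) != len(binding_site):
        raise ValueError("sequences must be aligned and of equal length")
    length = len(primer)
    positions = [i for i, (p, t) in enumerate(zip(primer.upper(),
                                                  binding_site.upper()))
                 if p != t]
    # Distance 0 is the terminal 3' base of the primer.
    distances = [length - 1 - i for i in positions]
    return {
        "mismatch_count": len(positions),
        "within_3_prime_window": sum(1 for d in distances
                                     if d < three_prime_window),
        "four_or_more": len(positions) >= 4,
    }


primer = "ACGTACGTACGTACGTACGT"
variant_site = "ACGTACGTACGTACGTACGA"  # substitution at the 3'-terminal base
print(mismatch_profile(primer, variant_site))
```

A screen like this only flags candidates for wet-lab testing. As the measured ΔCt ranges show, a single mismatch can be nearly harmless or severe depending on its type and context.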

Comparative Assay Performance Data

Experimental data reveals how different assays respond to various mutation profiles. The following table summarizes performance changes from a study testing multiple assay designs against a panel of mutated SARS-CoV-2 templates [25].

| Mutation Feature | Example Impact on PCR Performance (ΔCt) | Assays Affected |
|---|---|---|
| Single Mismatch | Minor (<1.5) to Severe (>7.0) | Varies by type and position [24] |
| 3'-End Mismatch | Often >7.0 ΔCt (complete blocking possible) | High [24] |
| Multiple Mismatches | Drastic reduction; failure with ≥4 mismatches | High [24] |
| Mismatch in One Primer | Moderate ΔCt shift | Moderate [25] |
| Mismatches in Both Primers | Large ΔCt shift, often leading to false negatives | High [25] |
| Probe Mismatch Only | Often minimal ΔCt shift, may affect fluorescence | Low [25] |

Despite the potential for failure, it is noteworthy that many PCR assays proved to be extremely robust, performing well even with significant signature erosion and the accumulation of mutations over time [24].

Machine Learning and Evolutionary Dynamics

Predictive Modeling for Assay Performance

Given the complexity of factors influencing mismatch impact, machine learning (ML) models offer a promising path toward predicting assay performance degradation. Using large experimental datasets that capture mutation features and resulting ΔCt values, ML models can learn to assess risk.

One study used 13 features—including mismatch position, type, and local sequence context—to train models. The best-performing model achieved a sensitivity of 82% and specificity of 87% in predicting significant performance changes, demonstrating the feasibility of this approach [25]. Key features for prediction included the position of the mismatch and the type of nucleotide change [25].
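For readers evaluating such a classifier, sensitivity and specificity are computed from the model's predictions against measured outcomes. The sketch below uses invented labels, where True means a significant performance change, and does not reproduce the reported 82% and 87% figures.

```python
from typing import Sequence, Tuple


def sensitivity_specificity(actual: Sequence[bool],
                            predicted: Sequence[bool]) -> Tuple[float, float]:
    """Sensitivity = TP / (TP + FN); specificity = TN / (TN + FP)."""
    tp = sum(1 for a, p in zip(actual, predicted) if a and p)
    fn = sum(1 for a, p in zip(actual, predicted) if a and not p)
    tn = sum(1 for a, p in zip(actual, predicted) if not a and not p)
    fp = sum(1 for a, p in zip(actual, predicted) if not a and p)
    return tp / (tp + fn), tn / (tn + fp)


# Hypothetical outcomes for nine mutated templates.
actual = [True, True, True, True, False, False, False, False, False]
predicted = [True, True, True, False, False, False, False, False, True]
sens, spec = sensitivity_specificity(actual, predicted)
print(f"sensitivity {sens:.0%}, specificity {spec:.0%}")
```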

Evolutionary Pressures and Diagnostic Test Escape

The evolution of pathogens in response to human interventions is a major public health challenge. Diagnostic testing itself can act as a selective pressure, favoring variants that avoid detection, a phenomenon known as diagnostic test escape [26].

Mathematical models show that the evolution of detection avoidance is driven by:

- Testing Frequency and Compliance: Imperfect compliance with testing and isolation measures can significantly increase selection for detection-avoidant variants [26].

- Intermediate Testing Rates: Counterintuitively, an intermediate level of testing can select for the highest level of detection avoidance, whereas very high testing rates might drive the pathogen to extinction [26].

- Genomic Signature Conservation: Viruses, particularly those with large genomes, exhibit highly specific genomic signatures that are conserved and distinct from their hosts. This suggests strong evolutionary pressures shape and preserve these signatures, which has implications for designing diagnostics and attenuated vaccines [27].

The relationship between testing regimes and pathogen evolution is complex, as illustrated below.

Signature erosion, driven by the continuous evolution of pathogens, presents a clear and present danger to the reliability of PCR diagnostics, directly leading to false negative results. Experimental evidence shows that the impact of mismatches is not uniform; it depends critically on the mutation's location, type, and multiplicity. While many assays demonstrate remarkable robustness, the risk of failure necessitates continuous monitoring of pathogen evolution. The integration of in silico tools like PSET, wet-lab validation, and emerging machine learning models provides a powerful, multi-faceted strategy to proactively identify vulnerable assays. Furthermore, acknowledging that diagnostic testing itself can shape pathogen evolution underscores the need for thoughtful public health policies that balance detection with the risk of selecting for escape variants. A unified framework for rapid test development and evaluation, informed by these principles, is crucial for preparing for future outbreaks of emerging infections [28].

Cross-contamination and Sample Integrity Issues in Pre-analytical Phases

The pre-analytical phase encompasses all processes from sample collection to the point of PCR amplification, representing the most vulnerable stage for errors in molecular diagnostics. Errors during this phase are a predominant source of false-positive and false-negative results, fundamentally compromising the reliability of PCR-based assays [6] [29]. The integrity of samples and the potential for cross-contamination are particularly critical in diagnostic settings, where erroneous results can directly impact patient care and public health decisions. For instance, during the COVID-19 pandemic, RT-PCR testing in low-prevalence screening settings was found to have a positive predictive value as low as 32.4%, meaning roughly two-thirds of positive results were incorrect in some scenarios [1]. This guide objectively compares the performance of various commercially available solutions and methodological approaches designed to mitigate these pre-analytical challenges, providing researchers with evidence-based data for informed decision-making.

Critical Pre-analytical Factors Affecting PCR Results

Cross-contamination in PCR laboratories primarily originates from two sources: previously amplified PCR products (amplicons) and cross-contamination between clinical samples [6] [30]. Amplicon contamination is particularly problematic because these products exist in extremely high concentrations (millions of copies) and can serve as efficient templates for subsequent amplification, leading to false-positive results [6]. Contamination can occur at multiple stages:

- During sample collection: Swabs may accidentally touch contaminated gloves or surfaces [30].

- Nucleic acid extraction: Aerosolization in containment hoods can spread contaminants [1].

- PCR amplification setup: Carryover of amplicons from previous reactions or contamination of reagents [1] [6].

- Equipment contamination: High viral titer specimens can contaminate equipment, leading to sample carryover [1].

The consequences of such contamination are far-reaching, including unnecessary additional testing and treatments, psychological distress to patients, delays in correct diagnosis, and in pandemic situations, unnecessary quarantine and contact tracing measures [1] [6].

Factors Compromising Sample Integrity

Sample integrity is crucial for obtaining accurate PCR results, and multiple factors in the pre-analytical phase can compromise this integrity:

Improper sample collection: The type of sample collected significantly impacts detection sensitivity. For respiratory viruses like SARS-CoV-2, sputum samples provide the highest accuracy, followed by nasal swabs, while throat swabs are less recommended [30]. The stability of viral RNA also varies across sample types, with nasal swabs generally providing higher stability compared to blood and saliva [30].

Suboptimal storage conditions and time: The stability of viral nucleic acids is highly dependent on storage duration and temperature. Research shows that respiratory viruses remain more stable in saliva collection devices than in transport swab systems when stored at room temperature or 37°C for up to 96 hours [31]. Furthermore, different collection devices vary in their ability to inactivate viruses, impacting both sample integrity and safety for healthcare personnel [31].

RNA degradation issues: RNA molecules are acutely vulnerable to degradation, which can lead to false-negative results or inaccurate quantification in RT-qPCR assays [32]. Degraded RNA samples disproportionately affect the amplification of 5' transcript regions compared to 3' regions due to interruption of cDNA synthesis from the poly-A tail [32].

Comparative Analysis of Methodologies and Products

Contamination Prevention Technologies

Table 1: Comparison of Contamination Prevention Methods

| Method/Product | Mechanism of Action | Effectiveness | Limitations | Implementation Considerations |
|---|---|---|---|---|
| UNG/dUTP System [33] [34] | Incorporation of dUTP in place of dTTP during PCR; UNG enzyme degrades uracil-containing contaminants in subsequent reactions | Effectively eliminates carryover contamination; Complete degradation of contaminants demonstrated [34] | Requires optimization of dUTP:dTTP ratio (e.g., 175 µM dUTP + 25 µM dTTP); Not compatible with bisulfite-treated DNA without modification [33] [34] | Compatible with GoTaq DNA Polymerase systems; Can be incorporated into commercial master mixes |
| Physical Segregation [6] | Separate dedicated areas for pre-PCR, PCR amplification, and post-PCR activities | Fundamental to minimizing cross-contamination; Prevents aerosol transfer between processes | Requires significant laboratory space and infrastructure; May not be feasible in all settings | Unidirectional workflow; Dedicated equipment and lab coats for each area |
| Aseptic Techniques [6] | Strict laboratory hygiene including fresh gloves, controlled pipetting, UV sterilization | Reduces introduction of contaminants from personnel and environment | Dependent on strict staff compliance and training | Regular servicing and calibration of pipettes; Use of sterile labware and filter tips |

The UNG/dUTP system is a biochemical approach to contamination control that has demonstrated high efficacy in preventing carryover contamination. Experimental data show that an optimized mixture of 175 µM dUTP + 25 µM dTTP maintains robust amplification while effectively preventing false positives caused by products of previous amplifications [34]. However, the method requires modification when working with bisulfite-treated DNA used in methylation studies, because bisulfite conversion itself creates uracil residues that UNG would degrade [33]. A modified bisulfite treatment procedure that generates sulfonated DNA has been developed to overcome this limitation [33].

Sample Collection and Stabilization Systems

Table 2: Comparison of Sample Collection and Stabilization Systems

| System Type | Viral Stability | Inactivation Capability | Suitable Storage Conditions | Best Use Applications |
|---|---|---|---|---|
| Saliva Collection Devices (PreAnalytiX) [31] | High stability at RT and 37°C for up to 96 hours | Complete inactivation of enveloped viruses; 10E+4 reduction for adenovirus | Maintains RNA integrity across temperature variations | Multi-virus detection strategies; High-throughput settings |
| Transport Swab Systems (Universal Transport Media) [31] | Moderate stability; decreased over time at elevated temperatures | No inactivation of tested respiratory viruses | Requires stricter temperature control | Direct amplification approaches; When immediate processing is possible |
| Inactivating Additives [31] | Maintains nucleic acid stability while reducing infectivity | Strong reduction of enveloped virus replication; limited effect on non-enveloped viruses | Compatible with various collection devices | When operator safety is paramount; Resource-limited settings |

Comparative studies of pre-analytical properties of different collection systems reveal that saliva collection devices generally provide superior viral RNA/DNA stability compared to traditional transport swab systems, especially when storage at room temperature or elevated temperatures is necessary [31]. Furthermore, certain saliva collection devices completely inactivate enveloped viruses such as influenza A/B and RSV A/B, significantly reducing the infection risk for healthcare personnel during sample handling and processing [31]. This inactivation capability is particularly valuable in pandemic situations or when dealing with highly pathogenic viruses.

RNA Integrity Assessment Methods

Table 3: Comparison of RNA Integrity Assessment Methods

| Method | Principle | Output Metrics | Cost and Accessibility | Advantages | Limitations |
|---|---|---|---|---|---|
| 3':5' Assay [32] | RT-qPCR with primers for 3' and 5' regions of housekeeping genes; ratio indicates degradation | 3':5' ratio (1.0 = intact; >1.0 = degraded); Correlates with RIN values | Low cost; Uses standard lab equipment (qPCR instruments) | Quantitative; Requires small RNA amounts; Species-adaptable | Requires optimization; Dependent on proper primer design |
| Microfluidic Capillary Electrophoresis (e.g., Agilent Bioanalyzer) [32] | Electrophoretic separation and quantification of rRNA fragments | RNA Integrity Number (RIN 1-10); RIN >8 = intact; <5 = degraded | Higher equipment cost; Specialized chips and reagents | Standardized metric; Visual electropherogram output | Higher per-sample cost; Requires specialized equipment |
| Agarose Gel Electrophoresis [32] | Visual assessment of 28S and 18S rRNA band intensities | Qualitative assessment (intact bands vs. smearing) | Low cost; Widely accessible | Simple implementation; No specialized equipment | Qualitative only; Requires substantial RNA amounts |

The 3':5' assay provides a cost-effective, PCR-based alternative for quantitative assessment of RNA integrity that correlates well with established RIN values from microfluidic capillary electrophoresis systems [32]. Experimental data show that 3':5' ratios classify RNA integrity in line with RIN across a spectrum from intact to heavily degraded samples, and that ratio thresholds equivalent to RIN cut-off values can guide sample selection for downstream RT-qPCR analyses [32]. This method is particularly valuable in resource-limited settings or for high-throughput applications where the cost of microfluidic systems may be prohibitive.

Experimental Protocols and Methodologies

UNG Cross-Contamination Prevention Protocol

The following protocol, adapted from published methodologies [33] [34], details the experimental procedure for implementing UNG-mediated carryover prevention:

Reagents and Equipment:

- GoTaq DNA Polymerase or compatible polymerase system

- dUTP:dTTP mixture (optimally 175 µM dUTP:25 µM dTTP)

- Uracil-N-Glycosylase (UNG)

- Standard PCR reagents: dATP, dCTP, dGTP, reaction buffer, primers

- Thermal cycler

Procedure:

- Prepare PCR master mix containing standard components with the substitution of dTTP with the optimized dUTP:dTTP mixture.

- Add UNG enzyme (typically 0.5-1 unit per reaction) to the master mix.

- Aliquot the master mix into reaction tubes and add template DNA.

- Incubate reactions at 25-37°C for 10-15 minutes to allow UNG to degrade any uracil-containing contaminants.

- Begin PCR with an initial denaturation at 95°C for 2-10 minutes, which inactivates UNG and activates the hot-start polymerase.

- Proceed with standard PCR cycling parameters.
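The nucleotide volumes for the master mix in the procedure above can be worked out with the usual dilution relation C1·V1 = C2·V2. The following is a minimal sketch, not part of the published protocol: the 175 µM dUTP and 25 µM dTTP final concentrations come from the text, while the 25 µL reaction volume, the 10 mM stock concentration, the 10% pipetting overage, and the function names are assumptions chosen for illustration.

```python
# Final concentrations from the protocol text (µM).
TARGET_UM = {"dUTP": 175.0, "dTTP": 25.0}


def stock_volume_ul(final_um: float, stock_um: float, reaction_ul: float) -> float:
    """Stock volume (µL) per reaction, from C1 * V1 = C2 * V2."""
    if stock_um <= 0 or reaction_ul <= 0 or final_um < 0:
        raise ValueError("concentrations and volumes must be positive")
    if final_um > stock_um:
        raise ValueError("final concentration cannot exceed stock concentration")
    return final_um * reaction_ul / stock_um


def nucleotide_plan(
    n_reactions: int,
    reaction_ul: float = 25.0,   # assumed reaction volume
    stock_um: float = 10_000.0,  # assumed 10 mM stock for each nucleotide
    overage: float = 0.10,       # assumed 10% extra to cover pipetting loss
) -> dict[str, float]:
    """Total dUTP and dTTP stock volumes (µL) for a batch of reactions."""
    if n_reactions < 1:
        raise ValueError("n_reactions must be at least 1")
    scale = n_reactions * (1.0 + overage)
    return {
        name: stock_volume_ul(conc, stock_um, reaction_ul) * scale
        for name, conc in TARGET_UM.items()
    }


if __name__ == "__main__":
    for name, volume in nucleotide_plan(10).items():
        print(f"{name}: {volume:.2f} µL")
```

Adjusting `stock_um` and `reaction_ul` to match the reagents actually in use is the only change needed; the other dNTPs are left out because the source does not state their concentrations.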

Validation:

- Include negative controls (no template) to confirm absence of contamination.

- Test system with known uracil-containing amplimer to verify degradation efficiency.

- Ensure amplification efficiency matches standard dTTP-based PCR through standard curve analysis.

Experimental data demonstrates that this approach completely eliminates amplification when uracil-containing products are treated with UNG, while robust amplification occurs when UNG is omitted from the reaction [34].
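The standard-curve check listed under Validation can be sketched as follows. Amplification efficiency is commonly estimated from the slope of Cq against log10 of template quantity, using E = 10^(-1/slope) - 1, where E = 1.0 corresponds to perfect doubling per cycle. The function names and the 0.10 tolerance for comparing the dUTP-based and dTTP-based reactions are assumptions for illustration, not values from the cited studies.

```python
import math


def standard_curve_efficiency(quantities, cq_values):
    """Return (slope, efficiency) from a dilution series.

    Fits Cq = slope * log10(quantity) + intercept by least squares,
    then converts the slope to efficiency: E = 10 ** (-1 / slope) - 1.
    """
    if len(quantities) != len(cq_values) or len(quantities) < 2:
        raise ValueError("need at least two matched (quantity, Cq) points")
    if any(q <= 0 for q in quantities):
        raise ValueError("quantities must be positive")
    xs = [math.log10(q) for q in quantities]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(cq_values) / len(cq_values)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("quantities must not all be identical")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, cq_values))
    slope = sxy / sxx
    if slope >= 0:
        raise ValueError("Cq must decrease as template quantity increases")
    return slope, 10 ** (-1.0 / slope) - 1.0


def efficiencies_match(eff_dutp, eff_dttp, tolerance=0.10):
    """True if the two efficiencies differ by no more than the (assumed) tolerance."""
    return abs(eff_dutp - eff_dttp) <= tolerance
```

Running the same tenfold dilution series with the dUTP:dTTP mixture and with dTTP alone, then comparing the two efficiencies, gives a simple numeric criterion for the third validation step.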

3':5' RNA Integrity Assay Protocol

This protocol, adapted from the methodology described in [32], details the procedure for quantitative assessment of RNA integrity:

Reagents and Equipment:

- High-quality RNA samples (A260/A280 >1.8)

- Reverse transcription reagents: anchored oligo-dT primers, reverse transcriptase

- qPCR reagents: SYBR Green or probe-based master mix

- Primers targeting 3' and 5' regions of reference genes (e.g., Pgk1 for rat)

- Real-time PCR instrument

Procedure:

- Reverse transcribe RNA using anchored oligo-dT primers so that cDNA synthesis initiates at the poly-A tail.

- Perform qPCR amplification with two primer sets: one targeting the 3' region and another targeting the 5' region of a stable reference gene.

- Analyze amplification curves and determine Cq values for both amplicons.

- Calculate the 3':5' ratio using the formula: Ratio = 2^(Cq5' - Cq3')

- Compare ratios to established thresholds: Ratio ≈1.0 indicates intact RNA; progressively higher ratios indicate increasing degradation.
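The ratio calculation in the steps above can be sketched in a few lines. The formula Ratio = 2^(Cq5' - Cq3') is taken from the protocol and implicitly assumes both amplicons amplify with about 100% efficiency (a doubling per cycle). The function names are hypothetical, and the acceptance cut-off is deliberately a required argument because the source gives no numeric ratio threshold; it has to be calibrated against RIN values in each laboratory.

```python
def three_five_ratio(cq_5prime: float, cq_3prime: float) -> float:
    """3':5' ratio = 2 ** (Cq5' - Cq3').

    A later 5' Cq (less 5' template) gives a ratio above 1.0,
    which the protocol interprets as increasing degradation.
    """
    return 2.0 ** (cq_5prime - cq_3prime)


def passes_integrity_check(ratio: float, max_ratio: float) -> bool:
    """Accept a sample if its ratio does not exceed a lab-calibrated cut-off.

    max_ratio is an assumption supplied by the user, e.g. the ratio found
    to correspond to the chosen RIN cut-off during validation.
    """
    if ratio <= 0 or max_ratio <= 0:
        raise ValueError("ratios must be positive")
    return ratio <= max_ratio


if __name__ == "__main__":
    # Equal Cq values for both amplicons: ratio of 1.0 (intact RNA).
    print(three_five_ratio(24.0, 24.0))
    # 5' amplicon detected two cycles later: ratio of 4.0.
    print(three_five_ratio(26.0, 24.0))
```

If measured primer efficiencies differ noticeably from 100%, the base of 2 would need to be replaced with the measured amplification factor for each amplicon, which this sketch does not attempt.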

Validation:

- Establish correlation with RIN values using samples with known integrity.

- Determine threshold values for sample acceptance (typically equivalent to RIN >5.0).

- Verify absence of genomic DNA contamination through no-RT controls.

Experimental data shows strong correlation between 3':5' ratios and RIN values across different tissue types, storage conditions, and degradation levels, supporting its use as a reliable integrity assessment method [32].

Research Reagent Solutions

Table 4: Essential Research Reagents for Pre-analytical Quality Control

| Reagent/Category | Specific Examples | Function/Application | Key Considerations |
|---|---|---|---|
| Nucleic Acid Polymerases | GoTaq DNA Polymerase [34] | PCR amplification | Compatibility with dUTP/UNG systems; Robust amplification efficiency |
| UNG Enzyme | Uracil-DNA Glycosylase [33] [34] | Degradation of uracil-containing contaminants | Concentration optimization; Heat-inactivation profile |
| Modified Nucleotides | dUTP:dTTP mixtures [34] | Incorporation into amplicons for contamination control | Optimal ratio with dTTP (e.g., 175 µM:25 µM) for balance of amplification and contamination control |
| Sample Collection Systems | Saliva collection devices (PreAnalytiX, Norgen) [31] | Sample collection, stabilization, and virus inactivation | Viral stability characteristics; inactivation capabilities; compatibility with downstream applications |
| Transport Media | Universal Transport Media (UTM) [31] | Maintain viral viability/nucleic acid integrity during transport | Storage stability; effect on different virus types; compatibility with direct amplification |
| RNA Integrity Assessment Reagents | 3' and 5' primer sets for reference genes [32] | Quantitative evaluation of mRNA degradation | Species-specific design; amplicon size and positioning |
| Nuclease-Free Water | PCR-grade water [35] | Diluent for molecular reactions | Certification as nuclease-free; absence of PCR inhibitors |

Workflow and Process Diagrams

Diagram 1: Pre-analytical Workflow with Risks and Prevention Measures. This diagram illustrates the complete sample journey from collection to analysis, highlighting major contamination risks at each stage and corresponding prevention strategies.

Diagram 2: 3':5' RNA Integrity Assay Principle. This diagram contrasts the experimental outcomes between intact and degraded RNA samples, demonstrating how differential amplification efficiency between 3' and 5' regions quantitatively measures RNA degradation.

The pre-analytical phase represents the most vulnerable stage for errors in PCR-based diagnostics, with cross-contamination and sample integrity issues being predominant sources of false results. This comparative analysis demonstrates that systematic implementation of preventive strategies - including UNG/dUTP systems for amplicon control, appropriate sample collection devices with stabilization properties, and rigorous RNA integrity assessment - can significantly enhance the reliability of molecular diagnostic results. The experimental protocols and quantitative data presented provide researchers with practical methodologies for implementing these quality control measures in various laboratory settings. As PCR technologies continue to evolve and find new applications in research and clinical diagnostics, maintaining vigilance during the pre-analytical phase remains fundamental to generating accurate, reproducible results that can reliably inform scientific conclusions and clinical decisions.

Advanced Methodologies for Enhancing PCR Detection Accuracy

The pursuit of diagnostic accuracy in polymerase chain reaction (PCR) testing centers on minimizing false positives and false negatives. While traditional, commercial "closed" PCR systems dominate clinical laboratories, their proprietary nature can limit reagent flexibility, hinder protocol customization, and raise costs, potentially restricting access and innovation. In contrast, open-source PCR platforms are emerging as an alternative designed around modular hardware, accessible software, and non-proprietary consumables. This comparison guide evaluates the performance of these open-source systems against established commercial alternatives, with a specific focus on experimental data on diagnostic sensitivity and specificity, the core metrics for quantifying erroneous results.