Navigating AST Verification: A 2025 Guide to Breakpoint Updates, Regulatory Compliance, and Emerging Technologies

This article provides a comprehensive roadmap for researchers, scientists, and drug development professionals facing the complex challenges of antimicrobial susceptibility test (AST) verification.

Abstract

This article provides a comprehensive roadmap for researchers, scientists, and drug development professionals facing the complex challenges of antimicrobial susceptibility test (AST) verification. Spanning foundational principles through future prospects, it details the evolving regulatory landscape, including the 2024 FDA final rule on Laboratory-Developed Tests and the major January 2025 FDA recognition of CLSI breakpoints. The content offers practical methodologies for verification and validation, strategies for overcoming financial, technical, and operational barriers, and a comparative analysis of rapid phenotypic and genotypic AST technologies. By synthesizing current standards, innovative solutions, and validation frameworks, this guide aims to equip professionals with the knowledge to ensure testing accuracy, enhance patient care, and combat antimicrobial resistance.

The Evolving Landscape of AST: Regulatory Shifts and the Imperative for Updated Breakpoints

The AMR Crisis and the Critical Role of Accurate AST

Antimicrobial resistance (AMR) is a quantifiable, escalating global crisis that threatens to undo decades of progress in infectious disease control. A 2025 World Health Organization (WHO) report revealed that one in six laboratory-confirmed bacterial infections worldwide were resistant to antibiotic treatments in 2023. Between 2018 and 2023, antibiotic resistance rose in over 40% of the pathogen-antibiotic combinations monitored, with an average annual increase of 5–15% [1]. The human cost is staggering, with AMR contributing to millions of deaths annually, a figure projected to rise without urgent intervention [2] [3].

Within this crisis, Antimicrobial Susceptibility Testing (AST) serves as a critical frontline defense. Accurate and timely AST guides effective therapeutic decisions, helps contain the spread of resistant pathogens, and supports antimicrobial stewardship efforts. However, researchers and clinical scientists face significant challenges in AST verification and implementation. This technical support center addresses these specific experimental and procedural challenges.

Global Resistance Prevalence of Key Pathogens (2023 WHO Data)

The following table summarizes the scale of resistance for critical bacterial pathogens, underscoring the necessity for precise AST [1].

| Bacterial Pathogen | Key Antibiotic Class Resisted | Global Resistance Prevalence | Notes / Regional Variation |
|---|---|---|---|
| Klebsiella pneumoniae | Third-generation cephalosporins | >55% | Leading cause of resistant bloodstream infections; exceeds 70% in the WHO African Region [1]. |
| Escherichia coli | Third-generation cephalosporins | >40% | A major contributor to urinary tract and bloodstream infections [1]. |
| Acinetobacter spp. | Carbapenems | Rising | Carbapenem resistance, once rare, is becoming more frequent, severely limiting treatment options [1]. |
| Staphylococcus aureus | Methicillin (MRSA) | Not stated | Remains a leading cause of hospital-acquired infections [2]. |
| Neisseria gonorrhoeae | Ceftriaxone & Azithromycin | Not stated | First cases of untreatable strains reported, raising major public health concerns [2]. |

Troubleshooting Guides & FAQs

This section provides targeted solutions for common issues encountered during AST experiments and verification processes.

FAQ: Addressing Fundamental AST Challenges

Q1: What are the primary reasons for discrepant results between our in-house AST and reference laboratory tests?

Discrepancies often stem from methodological inconsistencies. Ensure your lab adheres to reference methods such as broth microdilution as per ISO 20776-1:2019 and EUCAST methodology [4]. Other factors include:

- Inoculum density: Variations in the number of bacteria used can significantly impact Minimum Inhibitory Concentration (MIC) results.

- Incubation conditions: Strict control of temperature, atmosphere, and duration is critical.

- Quality control strains: Regularly test using standard control strains (e.g., ATCC strains) to validate procedures and reagents.

Q2: How can we accelerate AST to provide results for critically ill patients faster than the standard 48 hours?

Rapid AST technologies are now emerging to address this exact challenge. Several approaches have received regulatory clearance and can provide results in hours instead of days [5]:

- Single-cell imaging and microfluidics: Systems like the ASTar instrument use high-speed time-lapse microscopy to monitor bacterial replication in miniaturized channels with different antibiotics [5].

- Metabolic VOC detection: Platforms like the Vitek Reveal system use nanosensors to detect volatile organic compounds emitted by growing bacteria, providing AST results in approximately 5 hours [5].

- Direct-from-sample testing: Investigational methods using magnetic nanoparticles to capture bacteria directly from blood samples without pre-incubation can yield results within an hour [5].

Q3: Our lab is implementing a new rapid AST method. What are the key steps for verification?

A robust verification protocol is essential. Key steps include:

- Correlation with reference method: Test a panel of 50-100 well-characterized clinical isolates, including resistant, susceptible, and intermediate strains, in parallel with your new method and the reference broth microdilution method. Calculate essential agreement (EA) and categorical agreement (CA).

- Precision/reproducibility testing: Perform repeat testing on the same samples across different days and by different technologists.

- Challenging with genetically characterized strains: Include isolates with well-defined resistance mechanisms (e.g., blaKPC for carbapenem resistance, mecA for MRSA) to ensure the method detects specific resistance phenotypes accurately [4].
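The agreement metrics named in the first step can be sketched in a few lines. This is a minimal illustration, assuming the usual definitions: essential agreement (EA) means the test MIC falls within one two-fold dilution of the reference MIC, and categorical agreement (CA) means both methods give the same S/I/R category. The function names and the paired results are hypothetical, not taken from any cited standard.

```python
import math

def essential_agreement(test_mic, ref_mic):
    """True when the test MIC is within one two-fold dilution of the reference MIC."""
    return abs(math.log2(test_mic) - math.log2(ref_mic)) <= 1.0 + 1e-9

def agreement_rates(pairs):
    """pairs: list of (test_mic, ref_mic, test_category, ref_category)."""
    n = len(pairs)
    ea = sum(essential_agreement(t, r) for t, r, _, _ in pairs)
    ca = sum(tc == rc for _, _, tc, rc in pairs)
    return {"n": n, "EA_percent": 100.0 * ea / n, "CA_percent": 100.0 * ca / n}

# Hypothetical paired results (MICs in mg/L), for illustration only
example_pairs = [
    (0.5, 0.5, "S", "S"),    # identical MIC, same category
    (1.0, 0.5, "S", "S"),    # one dilution apart: still in essential agreement
    (8.0, 2.0, "R", "S"),    # two dilutions apart and a category mismatch
    (16.0, 16.0, "R", "R"),
]
summary = agreement_rates(example_pairs)
print(summary)
```

In a real study the same calculation would be run per antimicrobial agent, since agreement is normally reported drug by drug rather than pooled.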

Troubleshooting Guide: Common Experimental Pitfalls

| Problem | Possible Cause | Solution |
|---|---|---|
| Poor reproducibility of MIC values | Inconsistent inoculum preparation. | Standardize the culture method and use a densitometer or quantitative plating to verify the inoculum size for each run. |
| Indeterminate or "skip" wells | Contamination or antibiotic degradation. | Check sterility techniques and ensure proper storage and handling of antibiotic panels; avoid freeze-thaw cycles. |
| Failure of quality control strains | Compromised reagents or deviation from standard protocols. | Prepare fresh media and reagents. Re-confirm the identity and viability of the QC strain. |
| New method fails to detect a specific resistance mechanism | The method's inherent limitations for that mechanism. | Understand the mechanism (e.g., enzymatic, efflux). Supplement phenotypic testing with genotypic methods (e.g., PCR) for key resistance genes (e.g., vanA, mcr-1, blaNDM) [2]. |

Experimental Protocols for AST Verification

Protocol 1: Reference Broth Microdilution for MIC Determination

This is the reference standard method for phenotypic AST [4].

Methodology:

- Antibiotic Panel Preparation: Prepare two-fold serial dilutions of the antibiotic in a cation-adjusted Mueller-Hinton broth in a 96-well microtiter plate. The concentration range should cover expected clinical breakpoints.

- Inoculum Standardization: Grow the test isolate to log phase and adjust the turbidity to a 0.5 McFarland standard. Further dilute the suspension to achieve a final inoculum of ~5 x 10^5 CFU/mL in each well.

- Inoculation: Dispense the standardized inoculum into each well of the antibiotic panel. Include growth control (no antibiotic) and sterility control (no inoculum) wells.

- Incubation: Incubate the plate at 35±2°C for 16-20 hours in an ambient atmosphere.

- Reading and Interpretation: The Minimum Inhibitory Concentration (MIC) is the lowest concentration of antibiotic that completely inhibits visible growth of the organism. Interpret results (S/I/R) using current EUCAST clinical breakpoints [4].
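The arithmetic behind the panel and the MIC reading can be sketched as follows. This is an illustration under stated assumptions, not a validated reader: it assumes a 0.5 McFarland suspension holds roughly 1.5 x 10^8 CFU/mL (an approximation that varies by organism), and it reads the MIC conservatively by scanning down from the highest concentration and stopping at the first well with growth, so a clear "skip" well below a turbid well is ignored. The function names and the growth pattern are hypothetical.

```python
# Assumed approximate density of a 0.5 McFarland suspension (organism-dependent)
MCFARLAND_0_5_CFU_PER_ML = 1.5e8
TARGET_CFU_PER_ML = 5e5  # final inoculum per well, as stated in the protocol

# Overall dilution needed to go from the adjusted suspension to the final well density
dilution_factor = MCFARLAND_0_5_CFU_PER_ML / TARGET_CFU_PER_ML

def twofold_series(highest, n_wells):
    """Concentrations (mg/L) from highest to lowest in two-fold steps."""
    return [highest / (2 ** i) for i in range(n_wells)]

def read_mic(concentrations, growth):
    """Lowest concentration with no visible growth, scanning from the top down.

    Returns None when the highest concentration shows growth (MIC above range).
    """
    mic = None
    for conc, grew in sorted(zip(concentrations, growth), reverse=True):
        if grew:
            break  # everything at or below this concentration is treated as growth
        mic = conc
    return mic

# Hypothetical plate row: clear wells at 64-8 mg/L, turbid wells at 4-0.5 mg/L
series = twofold_series(64.0, 8)
growth = [False, False, False, False, True, True, True, True]
mic = read_mic(series, growth)
print(f"dilution factor: {dilution_factor:.0f}x, MIC: {mic} mg/L")
```

The resulting MIC would then be compared against the breakpoint table in use; the growth and sterility control wells must be checked before any row is read.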

Protocol 2: Genetic Verification of Carbapenem Resistance

For isolates showing reduced susceptibility to carbapenems, confirm the presence of acquired carbapenemase genes.

Methodology:

- DNA Extraction: Use a commercial kit to extract genomic DNA from an overnight pure culture of the test isolate.

- PCR Amplification: Perform real-time PCR using primers and probes specific for major acquired carbapenemase genes (e.g., blaKPC, blaNDM, blaOXA-48, blaVIM, blaIMP) as recommended by EUCAST [4].

- Analysis: A positive amplification curve for a specific target confirms the presence of that carbapenemase gene. This genotypic data should be correlated with the phenotypic carbapenem MIC.
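Correlating genotype with phenotype amounts to a small decision table. The sketch below is one hypothetical way to encode it; the category labels and the `correlate` function are illustrative assumptions, and the target list simply repeats the genes named in the PCR step.

```python
# Targets named in the PCR step above
CARBAPENEMASE_TARGETS = {"blaKPC", "blaNDM", "blaOXA-48", "blaVIM", "blaIMP"}

def correlate(phenotype, detected_genes):
    """Compare a carbapenem S/I/R category with the PCR result.

    Returns (assessment, sorted list of carbapenemase genes found).
    """
    found = sorted(CARBAPENEMASE_TARGETS & set(detected_genes))
    non_susceptible = phenotype in {"I", "R"}
    if found and non_susceptible:
        return "concordant: carbapenemase detected", found
    if found:
        return "discordant: gene detected but phenotype susceptible", found
    if non_susceptible:
        return "unexplained: no targeted gene, consider other mechanisms", found
    return "concordant: no carbapenemase detected", found

# Hypothetical isolates for illustration
print(correlate("R", ["blaKPC", "mecA"]))
print(correlate("S", ["blaOXA-48"]))
print(correlate("R", []))
```

Discordant and unexplained results are the ones worth repeating or investigating further, for example for non-targeted genes or non-enzymatic mechanisms.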

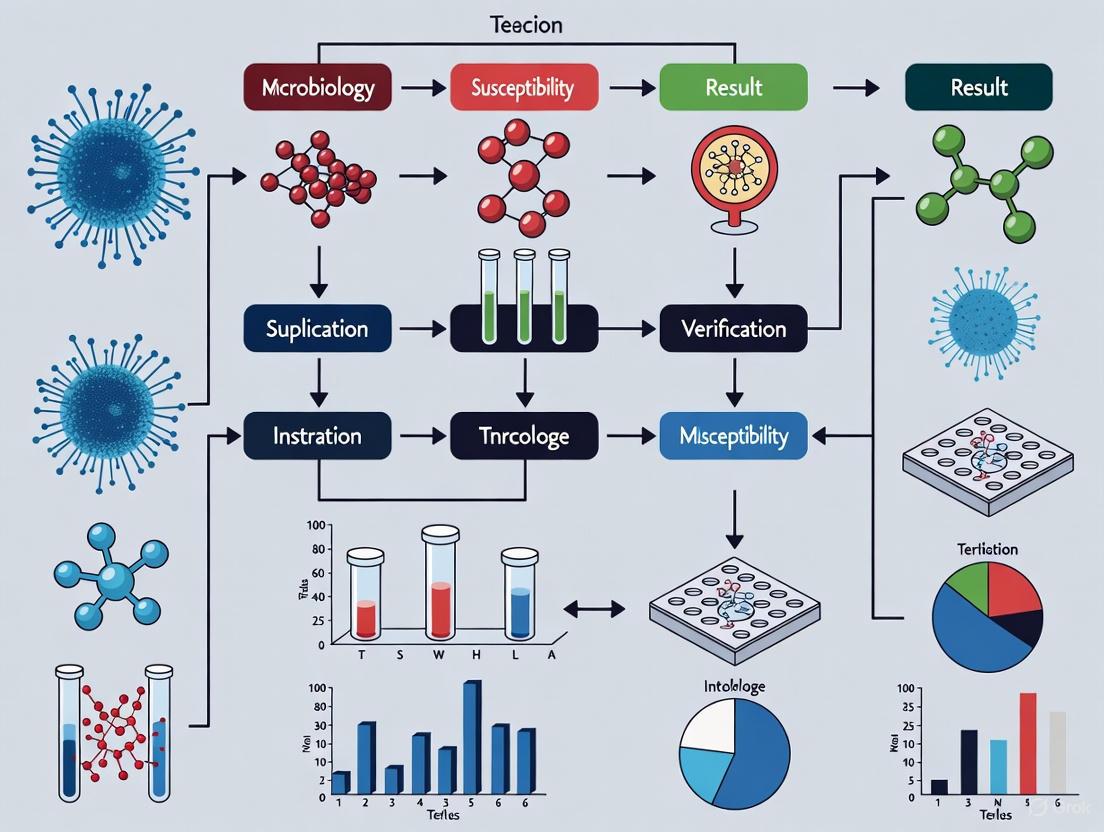

Workflow Visualization

The following diagram illustrates the logical workflow for integrating phenotypic and genotypic AST methods to comprehensively characterize bacterial resistance.

The Scientist's Toolkit: Key Research Reagent Solutions

Essential materials and their functions for establishing robust AST protocols in a research setting.

| Item | Function & Application | Key Considerations |
|---|---|---|
| Cation-Adjusted Mueller-Hinton Broth (CAMHB) | Standardized culture medium for broth microdilution AST. | Ensures consistent ion concentration, which is critical for the activity of aminoglycosides and tetracyclines. |
| Frozen or Lyophilized Antibiotic Panels | Pre-made panels with serial antibiotic dilutions for MIC testing. | Reduces preparation error and improves reproducibility. Check stability and storage conditions. |
| ATCC Quality Control Strains | Reference strains with known MIC ranges (e.g., E. coli ATCC 25922, P. aeruginosa ATCC 27853). | Used for daily or weekly quality control to monitor the performance of AST methods and reagents. |
| PCR Master Mix & Primers/Probes | For genotypic detection of specific resistance determinants (e.g., mecA, vanA, blaKPC). | Enables rapid confirmation of resistance mechanisms and detection of emerging threats like mcr-1 [2]. |
| Standardized Inoculum System | Densitometer or turbidity standard (0.5 McFarland) for preparing a consistent bacterial inoculum. | A critical step; inaccuracy here is a major source of MIC variability. |

In the fight against antimicrobial resistance (AMR), accurate susceptibility testing is a critical pillar of modern medicine and drug development. For researchers and clinical scientists, navigating the landscape of interpretive standards—primarily those from the Clinical and Laboratory Standards Institute (CLSI), the U.S. Food and Drug Administration (FDA), and the European Committee on Antimicrobial Susceptibility Testing (EUCAST)—presents a significant verification challenge. Discrepancies between these standards have historically complicated test validation, regulatory compliance, and the reliable interpretation of data. This guide provides troubleshooting and methodological support for professionals managing these complexities within their research and development workflows.

Frequently Asked Questions (FAQs) and Troubleshooting

Q1: Our laboratory needs to choose a primary standard for antimicrobial susceptibility testing (AST). What are the key differences between CLSI, FDA, and EUCAST?

A: The choice of standard depends on your geographic location, regulatory requirements, and the specific microorganisms you work with. The following table outlines the core characteristics of each major standardizing body.

Table 1: Key Characteristics of Major Antimicrobial Susceptibility Testing Standards

| Standardizing Body | Primary Focus & Audience | Update Cycle | Key Document(s) | Regulatory Status |
|---|---|---|---|---|
| CLSI | Global consensus standards for clinical and research laboratories [6] [7] | Annual [6] | M100 (for aerobic and anaerobic bacteria) [6] | FDA-recognized; the gold standard for many laboratories [6] [8] |
| FDA | Regulatory oversight for the United States; dictates labeling of FDA-cleared devices and antimicrobials [8] [9] | Reviewed every 6 months per the 21st Century Cures Act [9] | Susceptibility Test Interpretive Criteria (STIC) website [8] | Legally required for FDA-cleared AST devices in the U.S. [9] |
| EUCAST | Standards for European countries and those adopting its methodologies [10] | Annual [10] | Clinical Breakpoint Tables (for bacteria and fungi) [10] [11] | Widely adopted in Europe and by many research institutions globally |

Q2: We are encountering discrepancies when applying different breakpoints to the same dataset. How should we resolve this?

A: Discrepancies are a common verification challenge. A systematic approach is required for resolution.

- Define the Context of Testing: First, determine the purpose of your test. Is it for clinical diagnostics supporting patient care in the U.S.? If so, adherence to FDA-recognized breakpoints is necessary for compliant operation of FDA-cleared devices [9]. For research or in regions outside the U.S., CLSI or EUCAST standards may be more appropriate.

- Consult the Most Current Documents: Breakpoints are updated frequently to respond to evolving AMR data. Ensure you are using the latest editions. For example, as of 2025, the FDA fully recognizes the CLSI M100 35th Edition, a significant harmonization step that resolves many previous discrepancies [6] [8] [9].

- Check for Explicit Exceptions: The FDA now generally recognizes the entire CLSI M100 standard, listing only specific exceptions and additions on its website [8] [9]. Always cross-reference the FDA's "Exceptions or Additions" column for your specific drug-bug combination.

- Document Your Rationale: In your research protocols or laboratory standard operating procedures (SOPs), clearly document which standard is used and the justification for its selection, especially if it differs from the device's FDA-cleared claims.
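To see where two standards actually disagree on a dataset, the same MICs can be interpreted under each breakpoint set and the mismatches listed. The sketch below assumes MIC breakpoints expressed as "S at or below `s_max`, R at or above `r_min`"; the two breakpoint sets and the isolate MICs are hypothetical placeholders, not values from CLSI, FDA, or EUCAST tables.

```python
def categorize(mic, bp):
    """S if MIC <= s_max, R if MIC >= r_min, otherwise I."""
    if mic <= bp["s_max"]:
        return "S"
    if mic >= bp["r_min"]:
        return "R"
    return "I"

# Hypothetical breakpoint sets (mg/L); substitute values from the documents in use
BREAKPOINT_SETS = {
    "standard_A": {"s_max": 1.0, "r_min": 4.0},
    "standard_B": {"s_max": 2.0, "r_min": 8.0},
}

def find_discrepancies(mics, breakpoint_sets):
    """Return (isolate, MIC, {standard: category}) for isolates whose category differs."""
    rows = []
    for isolate_id, mic in mics.items():
        cats = {name: categorize(mic, bp) for name, bp in breakpoint_sets.items()}
        if len(set(cats.values())) > 1:
            rows.append((isolate_id, mic, cats))
    return rows

# Hypothetical dataset
mics = {"iso1": 0.5, "iso2": 2.0, "iso3": 4.0, "iso4": 16.0}
discrepancies = find_discrepancies(mics, BREAKPOINT_SETS)
for row in discrepancies:
    print(row)
```

Only isolates with MICs near the breakpoints change category, which is why the resolution steps above focus on context and documentation rather than retesting.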

Q3: How has the FDA's regulation of Laboratory-Developed Tests (LDTs) affected AST, and what does it mean for our research?

A: The FDA's final rule on LDTs, issued in 2024, began phasing out the agency's previous enforcement discretion policy and significantly affects AST methodologies that deviate from FDA-cleared claims [9]. This is highly relevant for research and verification workflows.

- What is an LDT in the AST context? Common scenarios now considered LDTs include:

  - Modifying an FDA-cleared AST device to interpret results with newer CLSI breakpoints not yet on the device's label [9].

  - Using a device for an organism-antimicrobial combination for which it is not cleared [9].

  - Employing AST methods not considered FDA-recognized reference methods (e.g., broth disk elution for certain combinations) [9].

- Navigating the New Rule: The rule includes certain enforcement discretion exceptions. Key exceptions apply to LDTs implemented before May 6, 2024, and to LDTs offered within an integrated healthcare system to meet an unmet medical need for its patients [9]. However, this creates a challenge for reference laboratories serving external clients. The recent FDA recognition of many CLSI standards provides a pathway for manufacturers to update their devices, which in turn reduces the need for laboratories to create LDTs [9].

Q4: What is the basic experimental protocol for verifying a broth microdilution method according to CLSI standards?

A: The broth microdilution method is a reference standard detailed in CLSI document M07 [6]. The steps below provide an overview of the verification process.

Detailed Protocol Steps:

- Principle: This method determines the Minimum Inhibitory Concentration (MIC) of an antimicrobial agent by testing its serial dilutions in a liquid growth medium against a standardized bacterial inoculum. The MIC is the lowest concentration that prevents visible growth.

- Materials and Reagents:

  - Cation-Adjusted Mueller-Hinton Broth (CAMHB): The standard medium for most non-fastidious aerobic bacteria.

  - Sterile Microtiter Plates: 96-well plates containing serial two-fold dilutions of antimicrobials.

  - Standardized Bacterial Inoculum: Adjust a log-phase broth culture or a saline suspension of colonies to a 0.5 McFarland standard, then further dilute to achieve a final inoculum of ~5 x 10^5 CFU/mL in each well.

  - Quality Control (QC) Strains: Use specific strains listed in CLSI M100 (e.g., E. coli ATCC 25922, S. aureus ATCC 29213) to verify the precision and accuracy of the test system [6].

- Procedure:

  - Inoculate the microtiter plates with the prepared bacterial suspension.

  - Incubate the plates aerobically at 35±2°C for 16-20 hours.

  - After incubation, examine each well for visible growth.

- Interpretation:

  - Record the MIC as the lowest antimicrobial concentration that completely inhibits visible growth.

  - Compare the MIC value to the interpretive criteria (breakpoints) in the current CLSI M100 document to categorize the isolate as Susceptible (S), Intermediate (I), or Resistant (R) [6].

  - The QC strain results must fall within the published acceptable ranges for the test to be considered valid [6].
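The QC acceptance rule in the last step lends itself to a simple automated check. The sketch below is illustrative only: the strain names come from the text, but `drug_X` and the MIC ranges are hypothetical placeholders and must be replaced with the published ranges from the current CLSI M100 tables.

```python
# Hypothetical acceptable MIC ranges (mg/L); NOT values from CLSI M100
HYPOTHETICAL_QC_RANGES = {
    ("E. coli ATCC 25922", "drug_X"): (0.25, 1.0),
    ("S. aureus ATCC 29213", "drug_X"): (0.5, 2.0),
}

def qc_failures(qc_results, ranges):
    """Return every QC result that falls outside its acceptable range."""
    failures = []
    for (strain, drug), mic in qc_results.items():
        low, high = ranges[(strain, drug)]
        if not (low <= mic <= high):
            failures.append((strain, drug, mic, (low, high)))
    return failures

# Hypothetical QC results from one run
run = {
    ("E. coli ATCC 25922", "drug_X"): 0.5,
    ("S. aureus ATCC 29213", "drug_X"): 4.0,
}
failures = qc_failures(run, HYPOTHETICAL_QC_RANGES)
run_valid = not failures  # the run is valid only if no QC result is out of range
print("run valid:", run_valid, "| failures:", failures)
```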

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Reagents and Materials for Antimicrobial Susceptibility Testing

| Item | Function/Description | Key Standard for Use |
|---|---|---|
| Cation-Adjusted Mueller-Hinton Broth (CAMHB) | Standardized medium for broth dilution (MIC) tests for aerobic bacteria. Provides consistent ion concentration for reliable antibiotic activity. | CLSI M07 [6] |
| Mueller-Hinton Agar | Standardized medium for disk diffusion testing. Depth and pH are critically controlled. | CLSI M02 [6] |
| Antimicrobial Powder/Premade Panels | High-purity antimicrobial agents for preparing in-house dilution panels or commercial frozen/microtiter panels. | CLSI M07/M100 [6] |
| 0.5 McFarland Standard | Turbidity standard (latex or barium sulfate) to standardize bacterial inoculum density for both dilution and diffusion methods. | CLSI M07/M02 [6] |
| Quality Control (QC) Strains | Frozen or lyophilized reference strains (e.g., ATCC strains) with defined MICs used to monitor the precision and accuracy of the test system. | CLSI M100 [6] |

Visualizing the Breakpoint Application and Regulatory Workflow

The process of conducting AST and applying breakpoints, especially in a regulated environment, involves multiple decision points. The following diagram maps this complex workflow.

The year 2025 marks a transformative period in the landscape of antimicrobial susceptibility testing (AST). In a significant regulatory shift, the U.S. Food and Drug Administration (FDA) has recognized numerous breakpoints published by the Clinical and Laboratory Standards Institute (CLSI), including those for microorganisms representing previously unmet needs [9]. This unprecedented alignment between regulatory standards and clinical guidelines heralds a more pragmatic approach to AST, representing a substantial advancement for researchers, clinical laboratories, and ultimately patient care worldwide [9]. This technical support center provides essential guidance for navigating these changes, offering troubleshooting assistance and detailed protocols to facilitate seamless implementation within research and development workflows.

FAQ: Understanding the 2025 Regulatory Changes

1. What specifically changed with the FDA's recognition of CLSI breakpoints in early 2025?

In January 2025, the FDA updated its Susceptibility Test Interpretive Criteria (STIC) to fully recognize the standards published in several key CLSI documents [12] [9]:

- CLSI M100, 35th Edition (Aerobic and Anaerobic Bacteria)

- CLSI M45, 3rd Edition (Infrequently Isolated or Fastidious Bacteria)

- CLSI M24S, 2nd Edition (Mycobacteria, Nocardia spp., and Other Aerobic Actinomycetes)

- CLSI M43-A, 1st Edition (Human Mycoplasmas)

- CLSI M38M51S, 3rd Edition (Filamentous Fungi)

A critical structural change on the FDA's STIC webpages is that now only exceptions or additions to the recognized CLSI standards are specifically listed, rather than enumerating all recognized CLSI breakpoints [9]. This establishes the CLSI standards as the default recognized criteria unless explicitly noted otherwise.

2. How does this recognition affect the development and verification of Laboratory Developed Tests (LDTs)?

The FDA's recognition provides a clearer pathway for LDTs using these breakpoints, particularly important given the FDA's final rule on LDTs that took effect in 2024 [9]. Prior to this recognition, modifying an FDA-cleared AST device to interpret results with current CLSI breakpoints, or validating a novel AST device for organism-drug combinations without FDA-recognized breakpoints, constituted an LDT requiring FDA oversight. The 2025 recognition significantly reduces these scenarios by aligning FDA-recognized breakpoints with current CLSI standards.

3. What is the practical significance of recognizing CLSI M45 breakpoints?

The recognition of CLSI M45 breakpoints for infrequently isolated or fastidious bacteria addresses a critical unmet need in clinical practice and research [9]. Many of these breakpoints are based on historical data for microorganisms where clinical trial data or contemporary pharmacokinetic-pharmacodynamic studies are unlikely to be conducted. Despite this, they have been used for decades in managing patients with serious infections caused by these organisms. Their formal recognition enables more standardized AST for diverse microbes causing infections and provides a pathway for commercial manufacturers to develop tests for these pathogens [9].

4. How do these changes impact compliance with College of American Pathologists (CAP) requirements?

The College of American Pathologists requires laboratories to implement updated breakpoints within 3 years of their official publication by the FDA or other standards development organization [13]. The 2025 FDA recognition establishes a clear reference point for compliance, requiring laboratories to use these current breakpoints for interpreting antimicrobial susceptibility test results. Effective January 2024, laboratories must use current breakpoints, making it unacceptable to use breakpoints no longer recognized by either FDA or CLSI [13].
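The three-year implementation window is plain date arithmetic, and a laboratory tracking several breakpoint revisions may want to compute it rather than eyeball it. A minimal sketch, assuming the window runs from the publication date to the same calendar day three years later; the function names and the dates used are hypothetical examples, not actual publication dates.

```python
from datetime import date

def implementation_deadline(publication_date, years=3):
    """Same calendar day `years` later; 29 February falls back to 28 February."""
    try:
        return publication_date.replace(year=publication_date.year + years)
    except ValueError:
        # 29 February does not exist in the target year
        return publication_date.replace(year=publication_date.year + years, day=28)

def is_overdue(publication_date, today):
    """True when the implementation window has already closed."""
    return today > implementation_deadline(publication_date)

# Hypothetical publication date, for illustration only
published = date(2025, 1, 15)
print(implementation_deadline(published))        # deadline for this example
print(is_overdue(published, date(2026, 6, 1)))   # still inside the window
```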

Troubleshooting Guide: Implementing Updated Breakpoints

Challenge 1: Verification and Validation of Updated Breakpoints

Problem: Researchers encounter difficulties performing verification or validation studies required to implement updated breakpoints in their systems.

Solution: Utilize the Breakpoint Implementation Toolkit (BIT) developed through a collaboration of CLSI, APHL, ASM, CAP, and CDC [14].

Implementation Steps:

- Documentation: Use BIT Part A to document all breakpoints currently in use across AST instruments, laboratory information systems, and electronic health records [14].

- Gap Analysis: Employ BIT Part B (CLSI vs. FDA Breakpoints spreadsheet) to identify discrepancies between current implementations and newly recognized standards [14].

- Study Documentation: Utilize BIT Part C template to document verification or validation study results, creating evidence for accreditation or regulatory bodies [14].

- Reference Materials: Access CDC and FDA Antibiotic Resistance (AR) Bank isolate sets outlined in BIT Part D for validation studies [14].

- Data Analysis: Use BIT Parts E and F, which include prefilled Excel worksheets with AR Bank data and calculation tools [14].
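The documentation and gap-analysis steps boil down to comparing the breakpoints configured in each system with the current reference values. The sketch below shows that comparison in isolation; it does not reproduce the BIT spreadsheets, and the organism groups, `drug_X`/`drug_Y`, and all breakpoint values are hypothetical.

```python
def breakpoint_gaps(in_use, current):
    """List combinations whose configured breakpoints differ from the current ones.

    Both arguments map (organism_group, drug) -> (s_max, r_min) in mg/L.
    A value of None in the output means the combination is missing on that side.
    """
    gaps = []
    for key in sorted(set(in_use) | set(current)):
        old = in_use.get(key)
        new = current.get(key)
        if old != new:
            gaps.append({"combination": key, "in_use": old, "current": new})
    return gaps

# Hypothetical values, for illustration only
in_use = {
    ("Enterobacterales", "drug_X"): (2.0, 8.0),
    ("Enterobacterales", "drug_Y"): (1.0, 4.0),
}
current = {
    ("Enterobacterales", "drug_X"): (1.0, 4.0),    # revised breakpoint
    ("Enterobacterales", "drug_Y"): (1.0, 4.0),    # unchanged
    ("Acinetobacter spp.", "drug_X"): (2.0, 8.0),  # not yet configured in the lab
}
gaps = breakpoint_gaps(in_use, current)
for gap in gaps:
    print(gap)
```

Each reported gap would then become a candidate for a verification or validation study documented in the toolkit templates.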

Challenge 2: Managing Discrepancies in Recognized Breakpoints

Problem: Despite broader recognition, some specific breakpoints still differ between CLSI and FDA criteria.

Solution: Take a systematic approach to identifying and managing exceptions.

Implementation Steps:

- Consult FDA STIC Table: Regularly check the "Exceptions or Additions to the Recognized CLSI Standard" column in the FDA's antibacterial susceptibility test interpretive criteria table [8].

- Review Notices of Updates: Monitor the FDA's "Notices of Updates" page for the latest changes to recognized standards [12].

- Prioritize Updates: Focus implementation efforts on breakpoints with clinical significance for your specific research focus or patient population.

- Document Justifications: Maintain detailed records when using alternative breakpoints, including input from antimicrobial stewardship teams where appropriate [13].

Challenge 3: Updating Automated AST Systems

Problem: Automated antimicrobial susceptibility testing systems may not immediately incorporate the most recently recognized breakpoints.

Solution: Engage manufacturers proactively and implement interim solutions.

Implementation Steps:

- Manufacturer Communication: Contact AST system manufacturers for specific guidance on breakpoints used and clearance status with their systems [14].

- LIS Modifications: Work with information technology staff to update laboratory information systems where AST interpretations are applied post-testing.

- Verification Studies: Perform verification studies per BIT guidelines when implementing software updates or manual overrides for breakpoint interpretations.

- Quality Control: Enhance quality control procedures to ensure updated breakpoints are correctly applied and functioning as intended.

Experimental Protocols for Breakpoint Verification

Protocol 1: Broth Microdilution Method Verification

Purpose: To verify the accuracy of updated breakpoints using reference broth microdilution methods.

Materials:

- BIT-recommended isolate sets from CDC and FDA AR Bank [14]

- Cation-adjusted Mueller-Hinton broth (for most non-fastidious bacteria)

- CLSI M07-compliant microdilution trays [6]

- Appropriate quality control strains

Methodology:

- Prepare antibiotic stock solutions at appropriate concentrations based on CLSI M100 guidelines [6].

- Perform serial dilutions in broth media to create a concentration range encompassing the breakpoints.

- Inoculate wells with standardized bacterial suspensions (5 × 10⁵ CFU/mL).

- Incubate at 35±2°C for 16-20 hours (standard incubation) or as recommended for specific organisms.

- Read Minimum Inhibitory Concentration (MIC) as the lowest concentration completely inhibiting visible growth.

- Interpret MICs using both previous and updated breakpoints.

- Compare essential and categorical agreement between old and new interpretations.

Troubleshooting:

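- If error rates are unacceptable (verification studies commonly require fewer than 3% major errors and fewer than 3% very major errors, with at least 90% categorical agreement), verify the inoculum preparation methodology.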

- For fastidious organisms, ensure appropriate supplementation of media and incubation conditions per CLSI M45 guidelines [14].
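The error categories used when judging a verification study can be tallied as shown below. The sketch assumes the conventional definitions: a very major error (VME) is a false-susceptible result, with resistant reference isolates as the denominator; a major error (ME) is a false-resistant result, with susceptible reference isolates as the denominator; a minor error involves an intermediate result on one side only, over all isolates. The function name and the result set are hypothetical.

```python
def _percent(numerator, denominator):
    """Percentage that tolerates an empty denominator."""
    return 100.0 * numerator / denominator if denominator else 0.0

def error_rates(results):
    """results: list of (test_category, reference_category), each 'S', 'I', or 'R'."""
    n_total = len(results)
    n_ref_r = sum(ref == "R" for _, ref in results)
    n_ref_s = sum(ref == "S" for _, ref in results)
    vme = sum(test == "S" and ref == "R" for test, ref in results)
    me = sum(test == "R" and ref == "S" for test, ref in results)
    minor = sum(test != ref and "I" in (test, ref) for test, ref in results)
    agree = sum(test == ref for test, ref in results)
    return {
        "VME_percent": _percent(vme, n_ref_r),
        "ME_percent": _percent(me, n_ref_s),
        "minor_percent": _percent(minor, n_total),
        "CA_percent": _percent(agree, n_total),
    }

# Hypothetical study: 10 reference-susceptible and 10 reference-resistant isolates
results = (
    [("S", "S")] * 8 + [("R", "S")] + [("I", "S")]
    + [("R", "R")] * 9 + [("S", "R")]
)
rates = error_rates(results)
print(rates)
```

Because VME and ME use different denominators, a study with few resistant isolates can show a high VME rate from a single discordant result, which is one reason challenge sets are enriched for resistant strains.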

Protocol 2: Disk Diffusion Method Verification

Purpose: To validate updated zone diameter breakpoints for disk diffusion testing.

Materials:

- BIT-recommended isolate sets [14]

- Mueller-Hinton agar plates (appropriate formulations for specific organisms)

- CLSI-compliant antibiotic disks

- Measuring calipers or automated zone readers

Methodology:

- Prepare bacterial suspensions to 0.5 McFarland standard.

- Lawn inoculate agar plates within 15 minutes of standardization.

- Apply antibiotic disks ensuring firm contact with agar surface.

- Incubate at 35±2°C for 16-18 hours (standard) or as recommended.

- Measure zones of inhibition to nearest millimeter.

- Interpret using both previous and updated breakpoint criteria.

- Calculate categorical agreement and identify discrepancies.
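The last two steps can be sketched as follows. Note that zone-diameter logic runs opposite to MIC logic: larger zones indicate susceptibility. The breakpoint values, isolate identifiers, and function names below are hypothetical placeholders rather than values from any CLSI table.

```python
def categorize_zone(zone_mm, bp):
    """S if zone >= s_min, R if zone <= r_max, otherwise I."""
    if zone_mm >= bp["s_min"]:
        return "S"
    if zone_mm <= bp["r_max"]:
        return "R"
    return "I"

# Hypothetical zone-diameter breakpoints (mm)
PREVIOUS = {"s_min": 21, "r_max": 17}
UPDATED = {"s_min": 23, "r_max": 19}

def compare_interpretations(zones, old_bp, new_bp):
    """Return (percent agreement, {isolate: (old_category, new_category)} for changes)."""
    changed = {}
    for isolate_id, zone in zones.items():
        old_cat = categorize_zone(zone, old_bp)
        new_cat = categorize_zone(zone, new_bp)
        if old_cat != new_cat:
            changed[isolate_id] = (old_cat, new_cat)
    agreement = 100.0 * (len(zones) - len(changed)) / len(zones)
    return agreement, changed

# Hypothetical measured zones (mm)
zones = {"iso1": 25, "iso2": 22, "iso3": 18, "iso4": 12}
agreement, changed = compare_interpretations(zones, PREVIOUS, UPDATED)
print(f"agreement: {agreement:.0f}%", changed)
```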

Troubleshooting:

- If trailing growth occurs with certain drug-organism combinations, read inhibition at 80% growth reduction.

- For fastidious organisms, ensure appropriate atmospheric conditions during incubation.

Research Reagent Solutions

Table: Essential Materials for Breakpoint Implementation Studies

| Reagent/Resource | Function/Purpose | Source/Reference |
|---|---|---|
| CDC & FDA AR Bank BIT Isolate Sets | Provides characterized bacterial isolates with known resistance mechanisms for validation studies [14] | BIT Part D [14] |
| CLSI M100, 35th Edition | Definitive reference for current breakpoints, quality control ranges, and testing methodologies [6] | CLSI [6] |
| Breakpoint Implementation Toolkit (BIT) | Comprehensive guide for performing verification studies, documenting results, and implementing updated breakpoints [14] | CLSI/APHL/ASM/CAP/CDC collaboration [14] |
| CLSI M07 Standard | Reference method for broth dilution antimicrobial susceptibility testing [6] | CLSI [6] |
| CLSI M02 Standard | Reference method for disk diffusion antimicrobial susceptibility testing [6] | CLSI [6] |
| CLSI M45, 3rd Edition | Standards for infrequently isolated or fastidious bacteria [14] | CLSI [14] |

Visual Workflows for Breakpoint Implementation

[Figure: Breakpoint Implementation Process]

[Figure: LDT Validation Pathway]

The FDA's 2025 recognition of CLSI breakpoints represents a landmark achievement in standardizing antimicrobial susceptibility testing. This alignment addresses critical challenges in managing antimicrobial resistance by providing researchers and clinical laboratories with clear, contemporary standards that reflect current understanding of resistance mechanisms and treatment outcomes. By leveraging the resources and protocols outlined in this technical support guide, research professionals can navigate this transition effectively, ensuring their work remains at the forefront of antimicrobial stewardship and patient care. The continued collaboration between regulatory bodies and standards organizations promises to further enhance our collective ability to combat the ongoing threat of antimicrobial resistance through scientifically robust and clinically relevant testing methodologies.

The U.S. Food and Drug Administration (FDA) has initiated a significant regulatory shift with its Final Rule on Laboratory Developed Tests, effectively ending its longstanding enforcement discretion policy. The rule, effective July 5, 2024, amends FDA regulations to explicitly state that IVD products are devices under the Federal Food, Drug, and Cosmetic Act "including when the manufacturer of these products is a laboratory" [15]. This redefinition clarifies that LDTs are regulated medical devices, subjecting them to the same oversight as other IVDs [16]. For clinical microbiology laboratories performing Antimicrobial Susceptibility Testing, this change profoundly impacts how test verification, validation, and implementation must be approached.

Understanding the LDT Phaseout Timeline and Requirements

Five-Stage Phaseout Policy

The FDA is implementing a structured, five-stage phaseout of its enforcement discretion policy over four years. Laboratories must meet specific compliance milestones according to the following timeline [16] [15]:

| Stage | Deadline | Key Compliance Requirements |
|---|---|---|
| Stage 1 | May 6, 2025 | Medical device reporting (MDR), corrections and removals reporting, and complaint handling |
| Stage 2 | May 6, 2026 | Establishment registration, device listing, labeling, and investigational use requirements |
| Stage 3 | May 6, 2027 | Quality System Regulation including good manufacturing practices |
| Stage 4 | November 6, 2027 | Premarket review requirements for high-risk LDTs (Class III) |
| Stage 5 | May 6, 2028 | Premarket review requirements for low and moderate-risk LDTs (Class I & II) |
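For compliance planning, the timeline above can be encoded so that the stages already in effect on a given date are listed automatically. The sketch uses only the deadlines from the table; the function name and the abbreviated requirement labels are illustrative choices.

```python
from datetime import date

# (stage number, deadline, abbreviated requirement), taken from the timeline above
PHASEOUT_STAGES = [
    (1, date(2025, 5, 6), "MDR, corrections and removals, complaint handling"),
    (2, date(2026, 5, 6), "registration, listing, labeling, investigational use"),
    (3, date(2027, 5, 6), "Quality System Regulation"),
    (4, date(2027, 11, 6), "premarket review, high-risk LDTs"),
    (5, date(2028, 5, 6), "premarket review, low and moderate-risk LDTs"),
]

def stages_in_effect(on_date):
    """Stage numbers whose deadline has been reached on `on_date`."""
    return [stage for stage, deadline, _ in PHASEOUT_STAGES if on_date >= deadline]

def next_deadline(on_date):
    """The first (stage, deadline, requirement) still ahead of `on_date`, or None."""
    for entry in PHASEOUT_STAGES:
        if on_date < entry[1]:
            return entry
    return None

print(stages_in_effect(date(2027, 6, 1)))
print(next_deadline(date(2027, 6, 1)))
```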

Enforcement Discretion Exceptions

The Final Rule includes limited enforcement discretion for specific LDT categories [16] [15]:

- Pre-May 2024 LDTs: Tests marketed before the rule's issuance that remain unmodified may be exempt from premarket review and QSR requirements

- 1976-Type LDTs: Tests with characteristics common when the Medical Device Amendments were passed

- NYS CLEP-approved LDTs: Tests approved by New York State's Clinical Laboratory Evaluation Program

- VHA and DoD LDTs: Tests manufactured and performed within the Veterans Health Administration or the Department of Defense

AST Verification Under the New Framework

Impact on Antimicrobial Susceptibility Testing

The LDT Final Rule significantly affects common AST practices in clinical microbiology laboratories [9]:

- Modifying FDA-cleared AST devices to interpret results with current breakpoints (CLSI or updated FDA breakpoints)

- Validating new organism-antimicrobial combinations not included in the device's cleared indications

- Implementing novel AST methodologies not considered reference methods (e.g., broth disk elution for colistin)

- Updating breakpoints on legacy systems that were cleared with obsolete interpretive criteria

Breakpoint Recognition Changes

A January 2025 FDA update recognized many breakpoints published by the Clinical and Laboratory Standards Institute, representing a major advancement for AST [9]. The FDA now recognizes standards including:

- CLSI M100 35th edition (aerobic and anaerobic bacteria)

- CLSI M45 3rd Ed (infrequently isolated or fastidious bacteria)

- CLSI M24S 2nd Ed (mycobacteria, Nocardia spp., and other aerobic Actinomycetes)

- CLSI M27M44S 3rd Ed (yeast)

- CLSI M38M51S 3rd Ed (filamentous fungi)

The FDA's revised approach lists only exceptions or additions where no CLSI breakpoints are available, rather than listing all recognized CLSI breakpoints [9].

Troubleshooting Common AST Verification Challenges

Regulatory Compliance Issues

Scenario: Laboratory modified an FDA-cleared AST device to use current CLSI breakpoints rather than the obsolete breakpoints with which the device was originally cleared.

Solution: Under the Final Rule, this modification constitutes an LDT requiring compliance. Laboratories should [17] [9]:

- Document the validation demonstrating equivalent performance to reference methods

- Implement a predetermined change control plan for future breakpoint updates

- Review enforcement discretion exceptions to determine if the modification qualifies

- Consider the integrated health system exception if meeting an unmet need for patients within the same system

Scenario: Laboratory needs to validate AST for a novel antimicrobial-organism combination lacking FDA-recognized breakpoints.

Solution:

- Consult FDA-STIC website for recognized breakpoints and exceptions

- Utilize the FDA-established docket for submitting information to support new interpretive criteria

- Document clinical justification for breakpoint selection based on available pharmacological and microbiological data

- Implement rigorous validation against reference methods when available

Operational Implementation Barriers

Scenario: Financial constraints limit adoption of rapid ID/AST technologies despite clinical benefits.

Solution [19]:

- Calculate total cost of ownership including reagent rental agreements versus capital expenditure

- Document clinical impact through pilot studies showing improved outcomes, reduced length of stay, or better antimicrobial stewardship

- Explore innovative funding models through antimicrobial stewardship programs or hospital quality initiatives

- Consider phased implementation starting with high-impact patient populations

Scenario: Limited technical expertise for implementing rapid AST methodologies during all shifts.

Solution [19]:

- Develop comprehensive training programs with competency assessments for all shifts

- Implement tiered reporting with expert review during off-hours

- Utilize available CLIA frameworks for personnel requirements based on test complexity

- Establish 24/7 consultative support from senior microbiology staff

Frequently Asked Questions

Q1: Can we continue using our current AST methods that were implemented before May 2024?

A1: Yes, with limitations. The Final Rule includes enforcement discretion for LDTs first marketed before May 6, 2024, provided they are not significantly modified. This discretion covers premarket review and most quality system requirements; these tests must still comply with medical device reporting, registration and listing, labeling, and other applicable requirements according to the phaseout schedule [15].

Q2: How does the FDA's recognition of CLSI breakpoints in early 2025 affect our AST verification?

A2: The January 2025 recognition of multiple CLSI standards enables laboratories to use these breakpoints without creating an LDT, provided the AST system manufacturer has updated the device clearance. For legacy systems, verification against these recognized breakpoints may still be considered an LDT if it modifies the device's intended use [9].

Q3: What are the consequences of not complying with the LDT Final Rule for our AST verification?

A3: Non-compliant laboratories may face regulatory action including warnings, fines, or prohibitions on testing. Additionally, results from non-compliant tests may not be reimbursed by payers or accepted for clinical care decisions [16].

Q4: How should we handle breakpoint updates for our AST systems under the new regulations?

A4: FDA guidance recommends implementing a Predetermined Change Control Plan for AST systems to facilitate timely adoption of updated breakpoints. For legacy systems, changes to incorporate new breakpoints require submission of a new 510(k) or compliance with LDT requirements [17].

Q5: Are there any exceptions for public health laboratories performing AST for surveillance?

A5: The Final Rule applies to public health laboratories, potentially affecting tests like ceftazidime-avibactam-aztreonam testing performed by the Antibiotic Resistance Laboratory Network. These laboratories should review the enforcement discretion exceptions and may need to pursue FDA clearance for surveillance tests used for clinical decision-making [9].

Essential Research Reagent Solutions for AST Verification

| Reagent/Component | Function in AST Verification | Regulatory Considerations |
|---|---|---|
| Reference strain panels | Quality control and method comparison | Must be traceable to recognized collections (ATCC, etc.) |
| CLSI reference powders | Establishing reference MIC values | Documentation of source and purity required for validation |
| Quality control strains | Daily monitoring of test performance | Must include susceptible and resistant strains for each drug |
| Cation-adjusted Mueller-Hinton broth | Standardized medium for broth microdilution | Must meet CLSI specifications for composition |
| Supplemental additives | Testing fastidious organisms (e.g., HTM, SBM) | Validation required for each matrix-organism combination |

AST Verification Workflow Under LDT Final Rule

The FDA's LDT Final Rule represents a fundamental shift in the regulatory landscape for antimicrobial susceptibility testing. Laboratories must now navigate a structured four-year phaseout of enforcement discretion while maintaining essential testing services. Success requires understanding the nuanced exceptions, implementing robust quality systems, and proactively planning for breakpoint updates. The recent FDA recognition of CLSI standards provides welcome clarity for many AST applications, but laboratories must still verify their specific implementations comply with the new framework. By approaching these changes systematically and utilizing available resources, laboratories can continue providing critical AST results while meeting enhanced regulatory expectations for test verification.

Antimicrobial resistance (AMR) is a pressing global health crisis; bacterial AMR was associated with an estimated 4.95 million deaths worldwide in 2019 [20]. At the frontline of detecting and combating AMR are clinical microbiology laboratories, which perform antimicrobial susceptibility testing (AST) to guide effective patient treatment. The accuracy of this testing depends critically on using current, clinically relevant interpretive criteria, known as breakpoints. These breakpoints are the pre-established standards that categorize microorganisms as Susceptible (S), Intermediate (I), or Resistant (R) to specific antimicrobial agents based on Minimum Inhibitory Concentration (MIC) measurements or zone diameters [20].

Using obsolete breakpoints introduces significant risk of patient mismanagement. Imagine a patient who transfers between hospitals and receives conflicting susceptibility results for the same bacterial isolate, all because the first facility used outdated breakpoints that incorrectly categorized a resistant organism as susceptible [20]. Such cases underscore why identifying and updating obsolete breakpoints is a foundational compliance requirement for all clinical laboratories. Regulatory bodies have taken notice: since January 1, 2024, the College of American Pathologists (CAP) has required laboratories to use current breakpoints and to implement new breakpoints within three years of their official publication [13] [20].
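The effect of an obsolete breakpoint on a report can be made concrete with a small sketch. The class `MicBreakpoint`, the function `interpret_mic`, and the two breakpoint sets below are hypothetical and chosen only for illustration; real values must come from the current standard for the specific organism and drug.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MicBreakpoint:
    susceptible_max: float  # MIC <= this value is Susceptible (ug/mL)
    resistant_min: float    # MIC >= this value is Resistant (ug/mL)


def interpret_mic(mic: float, bp: MicBreakpoint) -> str:
    """Categorize an MIC as S, I, or R against one breakpoint set."""
    if mic <= bp.susceptible_max:
        return "S"
    if mic >= bp.resistant_min:
        return "R"
    return "I"


# Illustrative values only, not taken from any published standard.
OBSOLETE = MicBreakpoint(susceptible_max=4, resistant_min=16)
CURRENT = MicBreakpoint(susceptible_max=1, resistant_min=4)

for mic in (1, 2, 4, 8):
    print(mic, interpret_mic(mic, OBSOLETE), interpret_mic(mic, CURRENT))
```

With these illustrative numbers, an isolate with an MIC of 4 is reported as susceptible under the older set and resistant under the newer one, which is exactly the kind of conflict described in the transfer scenario.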

Understanding Breakpoint Obsoleteness: Regulatory Frameworks and Updates

The Breakpoint Ecosystem: FDA, CLSI, and EUCAST

In the United States, breakpoint standardization involves multiple key organizations with complementary roles. The U.S. Food and Drug Administration (FDA) regulates drugs and AST devices, requiring FDA clearance for any changes to commercial testing systems [20]. The Clinical and Laboratory Standards Institute (CLSI) is an independent standards development organization that regularly reviews and updates breakpoints based on the latest resistance patterns, pharmacological data, and clinical outcomes [9]. Internationally, the European Committee on Antimicrobial Susceptibility Testing (EUCAST) serves a similar standard-setting function [10].

Historically, disconnects between these organizations created challenges. The FDA was often unable to recognize updated CLSI breakpoints in a timely manner, leading to situations where laboratories continued applying breakpoints more than ten years out of date [9]. A significant regulatory shift occurred in early 2025 when the FDA recognized many CLSI breakpoints across multiple standards, including those for infrequently isolated or fastidious bacteria [9]. This unprecedented alignment heralds a more pragmatic approach to AST regulation and facilitates easier laboratory compliance.

Consequences of Obsolete Breakpoint Usage

- Misleading Susceptibility Reports: Outdated breakpoints may fail to detect emerging resistance mechanisms, leading to "susceptible" categorization for actually resistant organisms [9]

- Suboptimal Patient Therapy: Clinicians relying on obsolete reports may prescribe ineffective antibiotics, potentially leading to treatment failure and adverse outcomes [20]

- Compromised Antimicrobial Stewardship: Inaccurate susceptibility data undermines institutional efforts to combat antimicrobial resistance through appropriate antibiotic use [20]

- Regulatory Non-Compliance: Laboratories using obsolete breakpoints risk citations during CAP inspections for failing to meet current standards [13]

Table: Key Regulatory Milestones in Breakpoint Management

| Date | Regulatory Action | Impact on Laboratories |
|---|---|---|
| 2006 | FDA began requiring use of FDA-recognized breakpoints on cleared devices | Increased regulatory oversight of AST interpretations [9] |
| 2016 | 21st Century Cures Act enabled FDA recognition of CLSI breakpoints | Created pathway for alignment between CLSI and FDA standards [9] |
| 2022 | CAP announced new breakpoint requirements | Mandated documentation of breakpoints in use and plan to update obsolete versions [13] |
| 2024 | CAP requirement for current breakpoints took effect | Required laboratories to use current breakpoints for MIC and disk diffusion tests [13] |
| January 2025 | FDA recognized many CLSI breakpoints across multiple standards | Major alignment between FDA and CLSI, particularly for fastidious organisms [9] |

Step-by-Step Methodology for Identifying Obsolete Breakpoints

Comprehensive System Mapping and Documentation

The first critical step involves creating a complete inventory of where breakpoints are applied throughout the laboratory testing and reporting workflow. Breakpoints may be embedded in multiple systems, each requiring verification [20]:

- Automated AST Instruments: Check the software versions and configuration settings on commercial susceptibility testing platforms

- Laboratory Information System (LIS): Review how susceptibility interpretations are applied and stored within the laboratory's data management system

- Electronic Health Record (EHR): Verify that interpretations displayed to clinicians match those used in the laboratory

- Manual Testing Procedures: Document breakpoints used for manual methods like disk diffusion or gradient diffusion tests

CAP requirement MIC.11380 mandates that laboratories have written criteria for determining and interpreting susceptibility results, reviewed annually [13]. Laboratories should maintain a master spreadsheet documenting each antimicrobial-organism combination and the breakpoint sources applied at each stage of testing and reporting [14].
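One way to use such a master inventory is to check that every system applies the same breakpoints to a given organism-drug combination. The sketch below assumes a hypothetical row layout (organism group, drug, system, S maximum, R minimum, source) and illustrative values; it is not a prescribed format.

```python
from collections import defaultdict

# Hypothetical inventory rows:
# (organism group, drug, system, S <= value, R >= value, documented source)
inventory = [
    ("Enterobacterales", "meropenem", "AST instrument", 1, 4, "current edition"),
    ("Enterobacterales", "meropenem", "LIS", 4, 16, "undocumented"),
    ("Enterobacterales", "meropenem", "EHR", 1, 4, "current edition"),
    ("Enterobacterales", "ceftriaxone", "AST instrument", 1, 4, "current edition"),
    ("Enterobacterales", "ceftriaxone", "LIS", 1, 4, "current edition"),
]


def find_inconsistencies(rows):
    """Return organism-drug combinations whose systems apply different breakpoints."""
    by_combo = defaultdict(dict)
    for organism, drug, system, s_max, r_min, _source in rows:
        by_combo[(organism, drug)][system] = (s_max, r_min)
    return {
        combo: systems
        for combo, systems in by_combo.items()
        if len(set(systems.values())) > 1
    }


for combo, systems in find_inconsistencies(inventory).items():
    print(combo, systems)
```

Here the LIS entry for meropenem disagrees with the instrument and the EHR, so that combination is flagged for review.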

Comparative Analysis Against Current Standards

After mapping existing breakpoints, systematically compare them against current recognized standards. The process differs slightly depending on whether your laboratory follows CLSI or EUCAST standards, though most U.S. laboratories will reference CLSI and FDA resources [20]:

For CLSI-aligned laboratories:

- Obtain the current edition of CLSI M100 (35th edition, 2025)

- Compare each organism-drug combination breakpoint against the corresponding value in M100

- Verify recognition status on the FDA Susceptibility Test Interpretive Criteria (STIC) website

- Note any exceptions or additions the FDA specifies for specific drug-bug combinations

Important consideration: After January 2025, the FDA recognizes most breakpoints published in CLSI M100 35th Edition, M45 3rd Edition, and other specific standards unless exceptions are explicitly listed [9] [8]. The FDA STIC website now primarily lists exceptions to the recognized CLSI standards rather than repeating all recognized breakpoints [9].
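The "recognized unless an exception is listed" logic can be sketched as a lookup that checks exceptions first and falls back to the CLSI value. Everything in the tables below is invented for illustration, and `recognized_breakpoint` is a hypothetical helper, not part of any FDA or CLSI tool.

```python
# Placeholder tables; every entry is invented for illustration.
CLSI_DEFAULTS = {
    ("organism A", "drug X"): {"S_max": 2, "R_min": 8},
    ("organism B", "drug Y"): {"S_max": 1, "R_min": 4},
}

# Exceptions and additions as they might be transcribed from the STIC pages:
#   None   -> the CLSI breakpoint for this combination is not recognized
#   a dict -> criteria the FDA lists itself (exception or addition)
FDA_EXCEPTIONS = {
    ("organism B", "drug Y"): None,
    ("organism C", "drug Z"): {"S_max": 0.5, "R_min": 2},
}


def recognized_breakpoint(organism: str, drug: str):
    """Return (breakpoint or None, basis) with exceptions taking precedence."""
    key = (organism, drug)
    if key in FDA_EXCEPTIONS:
        return FDA_EXCEPTIONS[key], "FDA exception"
    if key in CLSI_DEFAULTS:
        return CLSI_DEFAULTS[key], "CLSI (recognized by default)"
    return None, "no recognized breakpoint"


print(recognized_breakpoint("organism A", "drug X"))
print(recognized_breakpoint("organism B", "drug Y"))
```

Checking the exception table before the default mirrors the order in which a reviewer would read the STIC pages: exceptions override, and silence means the CLSI value stands.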

Table: Common Scenarios Indicating Obsolete Breakpoints

| Scenario | Example | Recommended Action |
|---|---|---|
| Breakpoints match older CLSI editions | Using 2014 carbapenem breakpoints for Enterobacterales | Update to current CLSI M100 35th edition values [20] |
| FDA exceptions not implemented | CLSI ciprofloxacin breakpoints for Acinetobacter not recognized by FDA | Apply FDA exceptions listed on STIC website [9] |
| No breakpoints for established drug-bug combinations | Missing breakpoints for fastidious organisms covered in CLSI M45 | Implement breakpoints now recognized by FDA from M45 3rd Edition [9] |
| AST system not cleared for current breakpoints | Automated instrument uses pre-2025 breakpoints despite FDA recognition of current CLSI standards | Work with manufacturer to update or perform laboratory validation [20] |

Prioritization Framework for Breakpoint Updates

Not all breakpoint updates carry equal urgency. Laboratories should establish a risk-based prioritization system focusing first on updates with the greatest potential clinical impact [20]:

High-Priority Updates:

- Carbapenem breakpoints for Enterobacterales (critical for detecting carbapenemase producers)

- Anti-MRSA agents (vancomycin, daptomycin, linezolid)

- Broad-spectrum cephalosporins and β-lactam/β-lactamase inhibitor combinations

- Organism-drug combinations with documented treatment failures using old breakpoints

Medium-Priority Updates:

- Oral antibiotics with well-established breakpoints

- Organism-drug combinations rarely encountered in your patient population

- Antimicrobials not routinely used in your institution

Documentation Requirement: Any decision not to report a specific drug or to delay breakpoint implementation should be formally documented in laboratory procedures with input from the antimicrobial stewardship team [20].
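The two tiers above can be turned into a simple work queue. This sketch simplifies the criteria to a drug-class label and a treatment-failure flag; the `PendingUpdate` fields, the class labels, and the tie-break on local test volume are assumptions made for illustration, not part of the cited guidance.

```python
from dataclasses import dataclass

# Drug classes treated as high priority, paraphrasing the list above.
HIGH_PRIORITY_CLASSES = {
    "carbapenem",
    "anti-MRSA",
    "broad-spectrum cephalosporin",
    "beta-lactam/beta-lactamase inhibitor",
}


@dataclass
class PendingUpdate:
    organism: str
    drug: str
    drug_class: str
    documented_treatment_failure: bool = False
    annual_isolates: int = 0  # local test volume, used only as a tie-break


def tier(update: PendingUpdate) -> str:
    """Assign 'high' if any high-priority criterion applies, else 'medium'."""
    if update.documented_treatment_failure or update.drug_class in HIGH_PRIORITY_CLASSES:
        return "high"
    return "medium"


def prioritize(updates):
    """Sort high tier first; within a tier, higher local volume first (an assumption)."""
    order = {"high": 0, "medium": 1}
    return sorted(updates, key=lambda u: (order[tier(u)], -u.annual_isolates))


queue = prioritize([
    PendingUpdate("E. coli", "nitrofurantoin", "oral agent", annual_isolates=900),
    PendingUpdate("Enterobacterales", "meropenem", "carbapenem", annual_isolates=400),
    PendingUpdate("organism C", "drug Z", "other", documented_treatment_failure=True, annual_isolates=20),
])
for item in queue:
    print(tier(item), item.organism, item.drug)
```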

Troubleshooting Common Breakpoint Implementation Challenges

Frequently Asked Questions

Q: Our automated AST system hasn't been cleared by the FDA for the latest breakpoints. Can we still update them?

A: Yes, but the process differs. If the breakpoints are FDA-recognized but not yet cleared on your specific system, you can perform a laboratory validation (more extensive) rather than verification (less extensive). This constitutes off-label use of the device but is acceptable with proper documentation [20].

Q: How do we handle situations where CLSI and FDA breakpoints differ?

A: Following the January 2025 updates, most CLSI breakpoints are now FDA-recognized. For the remaining discrepancies, U.S. laboratories must follow FDA breakpoints. However, laboratories may choose to report additional comments noting CLSI interpretations if clinically relevant, with proper documentation and notification to clinicians about the difference [9] [8].

Q: What if our AST panels don't have the testing range to accommodate new breakpoints?

A: This requires contacting your manufacturer representative. Some panels may not include high enough antimicrobial concentrations to detect resistance with newer breakpoints. Manufacturers may provide information about when updated panels will be available or suggest alternative testing methods until the appropriate testing range is available [20].

Q: Are there specific resources to help with the breakpoint update process?

A: Yes, a collaborative Breakpoint Implementation Toolkit (BIT) has been developed by CLSI, APHL, ASM, CAP, and CDC. This toolkit provides templates for documentation, verification protocols, and access to CDC/FDA Antibiotic Resistance Isolate Bank strains for validation studies [14].

Validation and Verification Methodologies

When implementing updated breakpoints, laboratories must perform either verification or validation studies depending on the regulatory status:

Verification Study (for FDA-cleared breakpoints on your system):

- Confirm performance comparable to manufacturer's FDA clearance claims

- Test approximately 30-50 clinical isolates representing susceptible, intermediate, and resistant categories

- Demonstrate ≥90% essential agreement and categorical agreement with reference method

- Document all results using standardized templates like those in the BIT [14]

Validation Study (for off-label use of breakpoints not cleared on your system):

- More extensive evaluation requiring 100+ isolates

- Include challenge set with known resistance mechanisms

- Establish performance specifications for your laboratory

- Requires more rigorous documentation and approval process [20]
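The agreement metrics named above can be computed directly from paired results. The sketch below assumes the common convention that essential agreement means a test MIC within one doubling dilution of the reference MIC, and applies the 90% threshold quoted in the verification list; the function names are hypothetical and this is not the BIT's own calculation.

```python
import math


def dilution_step(mic: float) -> int:
    """Map an MIC to its nearest log2 step so 0.12 and 0.125 land on the same dilution."""
    return round(math.log2(mic))


def essential_agreement(test_mics, ref_mics) -> float:
    """Fraction of isolates whose test MIC is within +/-1 doubling dilution of reference."""
    pairs = list(zip(test_mics, ref_mics))
    hits = sum(abs(dilution_step(t) - dilution_step(r)) <= 1 for t, r in pairs)
    return hits / len(pairs)


def categorical_agreement(test_cats, ref_cats) -> float:
    """Fraction of isolates with the same S/I/R category by both methods."""
    pairs = list(zip(test_cats, ref_cats))
    return sum(t == r for t, r in pairs) / len(pairs)


def meets_threshold(test_mics, ref_mics, test_cats, ref_cats, threshold=0.90) -> bool:
    """True when both EA and CA reach the threshold."""
    return (
        essential_agreement(test_mics, ref_mics) >= threshold
        and categorical_agreement(test_cats, ref_cats) >= threshold
    )


# Made-up paired results for five isolates.
ref_mics = [0.5, 1, 2, 4, 8]
test_mics = [0.5, 2, 2, 16, 8]
print(essential_agreement(test_mics, ref_mics))
```

In this made-up set, one test MIC is two dilutions above the reference, so essential agreement is four of five isolates and the study would not meet a 90% threshold.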

Implementing updated breakpoints requires specific reagents and reference materials to ensure accurate validation and verification studies. The following resources represent the essential toolkit for researchers and laboratory professionals undertaking breakpoint updates:

Table: Research Reagent Solutions for Breakpoint Implementation

| Resource | Function/Application | Source/Availability |
|---|---|---|
| CDC & FDA Antibiotic Resistance Isolate Bank | Provides quality-controlled isolates with characterized resistance mechanisms for validation studies | CDC AR Bank panels; BIT-recommended sets [14] |
| CLSI M100 Supplement | Current breakpoint standards for commonly isolated bacteria | Annual CLSI publication [9] [8] |
| CLSI M45 Document | Breakpoints for infrequently isolated or fastidious bacteria | CLSI standard (3rd Edition recognized by FDA) [9] [8] |
| Breakpoint Implementation Toolkit (BIT) | Templates, protocols, and calculation tools for verification/validation studies | Collaborative resource from CLSI, APHL, ASM, CAP, CDC [14] |
| FDA STIC Website | Official listing of FDA-recognized breakpoints and exceptions | fda.gov/drugs/development-resources/antibacterial-susceptibility-test-interpretive-criteria [8] |
| EUCAST Clinical Breakpoint Tables | International breakpoint standards for global harmonization | eucast.org/clinical_breakpoints [10] |

Identifying and eliminating obsolete breakpoints is not a one-time project but rather an ongoing competency for modern clinical microbiology laboratories. The regulatory landscape has significantly improved with the FDA's 2025 recognition of numerous CLSI standards, creating unprecedented alignment between these two key organizations [9]. However, laboratories must maintain vigilance through established quality management processes.

A sustainable breakpoint management program includes:

- Annual review of all breakpoints against current CLSI M100 and FDA STIC resources

- Proactive communication with AST system manufacturers regarding update timelines

- Collaboration with antimicrobial stewardship and pharmacy teams to prioritize updates

- Documentation of all verification/validation activities using standardized tools

- Monitoring for newly published breakpoints and implementing within the 3-year CAP requirement window
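Tracking the three-year window is simple date arithmetic. The sketch below assumes the deadline is the same calendar date three years after publication; how the window is counted in practice should be confirmed against the CAP checklist, and the function names are hypothetical.

```python
from datetime import date


def implementation_deadline(published: date, years: int = 3) -> date:
    """Same calendar date `years` later (assumed interpretation of the window)."""
    try:
        return published.replace(year=published.year + years)
    except ValueError:
        # 29 February has no counterpart in a non-leap target year.
        return published.replace(year=published.year + years, day=28)


def days_remaining(published: date, today: date) -> int:
    """Days left before the deadline; negative once it has passed."""
    return (implementation_deadline(published) - today).days


print(implementation_deadline(date(2025, 1, 15)))
print(days_remaining(date(2025, 1, 15), date(2027, 1, 15)))
```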

By establishing robust processes for identifying obsolete breakpoints, laboratories not only achieve regulatory compliance but, more importantly, contribute significantly to the global fight against antimicrobial resistance through more accurate detection and reporting of resistance patterns. This foundational work ensures that susceptibility reports provide clinicians with the most current, evidence-based information to guide life-saving therapeutic decisions for patients with serious infections.

A Step-by-Step Framework for AST Verification and Breakpoint Implementation

Troubleshooting Guides

Guide 1: Resolving Discrepancies Between CLSI and FDA Breakpoints

Problem: Observing inconsistent antimicrobial susceptibility test (AST) results between CLSI and FDA interpretive criteria.

Solution: Systematically identify and verify the breakpoints in use.

- Confirm Breakpoint Sources: Use BIT Part A to document all breakpoints currently used in your laboratory, a requirement for College of American Pathologists (CAP) accreditation [14].

- Identify Specific Discrepancies: Consult BIT Part B, which contains a comprehensive spreadsheet comparing all disk diffusion and MIC breakpoints from CLSI M100 (35th Edition) and M45 (3rd Edition) with their corresponding FDA criteria [14]. This allows you to pinpoint exactly where mismatches occur.

- Validate with Isolate Panels: For breakpoints with discrepancies, perform a verification study using the recommended isolate sets from the CDC & FDA Antibiotic Resistance (AR) Isolate Bank, detailed in BIT Part D [14]. Using these standardized isolates ensures your validation is based on well-characterized controls.

Guide 2: Troubleshooting a Failed Breakpoint Verification Study

Problem: Your internal verification study for a new breakpoint yields unacceptable performance or errors.

Solution: Verify your methodology and data analysis using the BIT's structured templates.

- Review Experimental Setup: Ensure you are using the correct AR Bank isolate set as recommended in BIT Part D. Confirm that your AST methodology (e.g., broth microdilution, agar dilution) aligns with CLSI standards [14].

- Check Data Analysis: Use BIT Part F, a prefilled Excel workbook containing MIC results for AR Bank isolates. This template automates calculations, helping you verify your own data analysis process and identify potential calculation errors [14].

- Document for Scrutiny: If your study's results are valid but unexpected, use BIT Part C (the Breakpoint Implementation Summary template) to thoroughly document your entire study process and results. This provides a clear audit trail for any accreditation or regulatory body [14].

Frequently Asked Questions (FAQs)

Q1: What is the Breakpoint Implementation Toolkit (BIT), and who developed it?

A1: The BIT is a comprehensive kit designed to assist clinical laboratories in performing the verification or validation studies required to update their antimicrobial susceptibility testing (AST) breakpoints. It was developed through a collaboration between the Clinical and Laboratory Standards Institute (CLSI), the Association of Public Health Laboratories (APHL), the American Society for Microbiology (ASM), the College of American Pathologists (CAP), and the US Centers for Disease Control and Prevention (CDC) [14].

Q2: My lab's AST system is FDA-cleared. Why do I need to perform breakpoint verification?

A2: As of January 2024, CAP-accredited laboratories are required to use current breakpoints recognized by either CLSI or the FDA. Even if your AST system is FDA-cleared, the breakpoints it uses may not be the most current. The BIT helps you verify that the breakpoints you are applying, regardless of the system, are updated and correct, thus meeting regulatory and accreditation requirements [14].

Q3: Where can I find standardized bacterial isolates for my breakpoint verification study?

A3: The BIT directs users to the CDC and FDA Antibiotic Resistance (AR) Isolate Bank. BIT Part D specifically lists the AR Bank isolate sets that are recommended for use with the toolkit for breakpoint verification and validation studies [14].

Q4: What should I do if the breakpoint I need to verify is not covered by the AR Bank BIT sets?

A4: The toolkit includes BIT Part G, which is a blank form template for data entry. You can use this template to structure your validation or verification studies when using bacterial isolates from sources other than the recommended AR Bank sets [14].

Q5: How does the BIT help with the documentation required for accreditation?

A5: BIT Part C provides a standardized template (the Breakpoint Implementation Summary) for documenting the results of your verification or validation studies. This completed template serves as evidence of your study and can be presented to accreditation or regulatory bodies like CAP [14].

Experimental Protocols & Data

Table 1: Key Components of the Breakpoint Implementation Toolkit (BIT)

Table summarizing the core parts of the BIT and their primary functions in breakpoint management.

| BIT Component | Primary Function | Key Application in the Lab |
|---|---|---|
| Part A: Breakpoints in Use | Document current lab breakpoints | Meet CAP documentation requirements [14] |
| Part B: CLSI vs FDA Breakpoints | Compare breakpoint standards | Identify discrepancies between CLSI M100/M45 and FDA STIC criteria [14] |
| Part C: Breakpoint Implementation Summary | Template for study documentation | Create a report for accreditation bodies [14] |
| Part D: CDC & FDA AR Bank BIT Isolate Sets | List recommended isolate panels | Source quality-controlled organisms for validation studies [14] |
| Part F: AR Bank Data Entry & Calculations | Prefilled Excel worksheet with MIC data | Verify and automate calculation steps during testing [14] |

Table 2: Essential Research Reagent Solutions for AST Verification

Table detailing key materials and reagents used in antimicrobial susceptibility test verification studies.

| Research Reagent / Material | Function in AST Verification |
|---|---|
| CDC & FDA AR Bank Isolate Sets | Provides standardized, quality-controlled bacterial strains with known resistance mechanisms for breakpoint validation [14]. |
| Cation-Adjusted Mueller-Hinton Broth | The standardized growth medium for broth microdilution AST, ensuring consistent bacterial growth and antibiotic activity. |
| Mueller-Hinton Agar Plates | The standardized solid medium for disk diffusion AST, essential for consistent zone of inhibition measurements. |
| Antimicrobial Powder/Standard Disks | The source of the antibiotic agent being tested, with known potency, for preparing custom MIC panels or disk diffusion tests. |

Standard Protocol for Breakpoint Verification Using the BIT

Objective: To verify a new or updated antimicrobial breakpoint using the CLSI Breakpoint Implementation Toolkit.

Methodology:

- Documentation of Baseline: Using BIT Part A, create a complete inventory of all antimicrobial breakpoints currently in use within the laboratory information system (LIS) and AST instruments [14].

- Gap Analysis: Consult BIT Part B to identify any differences between the laboratory's current breakpoints and the most recent versions recognized by CLSI or the FDA. This spreadsheet is the definitive source for comparison [14].

- Acquisition of Verification Isolates: From the list provided in BIT Part D, order the appropriate CDC & FDA AR Bank isolate set for the antibiotic and organism in question. These panels include strains with a range of MICs to adequately challenge the new breakpoint [14].

- Experimental Testing: Perform AST on the acquired isolates using the laboratory's standard method (e.g., broth microdilution, disk diffusion). Testing should be performed in replicates as per standard laboratory quality control procedures.

- Data Analysis and Calculation: Input your experimental MIC results into BIT Part F. This prefilled Excel template will assist in organizing data and performing necessary calculations to compare your results against the expected outcomes from the AR Bank [14].

- Final Documentation and Implementation: Upon successful verification, complete BIT Part C (Breakpoint Implementation Summary). This document summarizes the study rationale, methodology, results, and the final decision to implement the new breakpoint, serving as the primary record for accreditors [14].

Navigating the Evolving Regulatory Framework for AST

The regulatory landscape for Antimicrobial Susceptibility Testing (AST) devices and interpretive criteria has undergone significant changes. Understanding this framework is the first step in engaging with industry partners and assessing a device's clearance status.

The 21st Century Cures Act and Breakpoint Recognition

The 21st Century Cures Act, enacted in 2016, established a more streamlined system for updating Susceptibility Test Interpretive Criteria (STIC), commonly known as breakpoints [18]. The act requires the FDA to post recognized STIC standards online and to update this information at least every six months. For developers, this means that the most current breakpoints are now referenced on FDA webpages rather than in individual drug labels, allowing for more rapid updates in response to emerging antimicrobial resistance [18].

FDA Recognition of CLSI Standards

A pivotal recent development occurred in January 2025, when the FDA recognized numerous breakpoints published by the Clinical and Laboratory Standards Institute (CLSI) [9]. This recognition includes standards for aerobic and anaerobic bacteria (CLSI M100 35th edition), infrequently isolated or fastidious bacteria (CLSI M45 3rd Ed), and various fungi and mycobacteria [9]. This major update provides a pragmatic solution for testing a wider array of microorganisms and enables commercial manufacturers to develop tests for pathogens that were previously not covered by FDA-recognized breakpoints.

The LDT Final Rule and Its Impact

The FDA's final rule on Laboratory-Developed Tests (LDTs), which took effect in 2024, phases out the agency's historical enforcement discretion policy [9]. This ruling has direct implications for clinical laboratories that modify FDA-cleared AST devices, for instance, to interpret results with current CLSI breakpoints that may not yet be recognized by the FDA. Such modifications are now classified as LDTs and are subject to FDA regulatory oversight. Understanding the exceptions to this rule, such as for tests implemented before May 6, 2024, is crucial for compliance [9].

Key Steps for Assessing FDA Clearance Status

Before engaging in a partnership or utilizing an AST system, verifying its FDA clearance status is a critical due diligence step. The following workflow outlines the key steps and decision points in this process.

Consult the FDA's 510(k) Clearances Database

The FDA maintains a public database of devices cleared through the 510(k) premarket notification process [21]. This database is searchable and can be browsed by year. Results are not limited to AST systems: a listing of 2025 clearances, for example, includes devices such as the ORTHO VISION Analyzer and Roche's cobas HIV-1 Quantitative nucleic acid test [22], neither of which performs antimicrobial susceptibility testing, so searches should be narrowed to the specific AST device of interest. When assessing a device, always confirm its 510(k) number, applicant name, and specific cleared device name.
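For laboratories that prefer a programmatic lookup, openFDA's device 510(k) endpoint is one possible route. The sketch below only builds a query URL and does not send a request. The helper name `build_510k_query` is hypothetical, and the product code passed in the example (`"LON"`) is an assumed placeholder that should be confirmed in the FDA product classification database before use.

```python
from urllib.parse import urlencode

OPENFDA_510K = "https://api.fda.gov/device/510k.json"


def build_510k_query(product_code: str, year: int, limit: int = 10) -> str:
    """Build an openFDA search URL for clearances with one product code in one year."""
    search = (
        f'product_code:"{product_code}" AND '
        f"decision_date:[{year}-01-01 TO {year}-12-31]"
    )
    return f"{OPENFDA_510K}?{urlencode({'search': search, 'limit': limit})}"


# "LON" is an assumed example code; verify the correct code for the device type.
url = build_510k_query("LON", 2025)
print(url)
```

Each record returned by this endpoint is expected to carry fields such as `k_number`, `applicant`, and `device_name`, which correspond to the three items the text recommends confirming.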

Verify Recognized Susceptibility Test Interpretive Criteria (STIC)

The FDA-Recognized Antimicrobial Susceptibility Test Interpretive Criteria website is the official source for current breakpoints [18]. Following the January 2025 update, the structure of these webpages has changed. The FDA now defaults to recognizing all breakpoints published in specific CLSI standards unless an exception is explicitly listed [9]. Researchers must cross-reference the breakpoints used in their AST device with those on the FDA's STIC pages to ensure compliance and clinical relevance.
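
The cross-referencing step described here can be sketched as a simple table comparison. The example below is a minimal illustration, not an official tool: the organism groups, drug names, and breakpoint values are hypothetical placeholders, and in practice both tables would be transcribed by hand from the device documentation and the FDA STIC pages.

```python
def find_breakpoint_gaps(device_bp, recognized_bp):
    """Compare breakpoints embedded in a device with recognized ones.

    Both arguments map (organism_group, drug) to (S_max, R_min) in µg/mL.
    Returns a dict describing every combination that needs follow-up.
    """
    report = {}
    for key, current in recognized_bp.items():
        embedded = device_bp.get(key)
        if embedded is None:
            report[key] = "not on device"
        elif embedded != current:
            report[key] = f"device {embedded} vs recognized {current}"
    return report

# Hypothetical placeholder values, not real breakpoints
device_bp = {
    ("Organism group A", "drug_X"): (2, 8),
    ("Organism group A", "drug_Y"): (1, 4),
}
recognized_bp = {
    ("Organism group A", "drug_X"): (1, 4),
    ("Organism group A", "drug_Y"): (1, 4),
    ("Organism group B", "drug_X"): (0.5, 2),
}

gaps = find_breakpoint_gaps(device_bp, recognized_bp)
for combo, note in gaps.items():
    print(combo, "->", note)
```

Any combination that appears in the report is a candidate for discussion with the manufacturer or for a laboratory verification study.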

Confirm Breakpoint Status with Manufacturers

Directly engage with device manufacturers to confirm the specific FDA-cleared breakpoint version embedded in their instrument's software. The College of American Pathologists requires laboratories to update AST breakpoints within three years of FDA recognition [9]. Proactively inquiring about a manufacturer's timeline for implementing newly recognized breakpoints is essential for planning and ensures your testing remains current.
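
For planning purposes, the three-year window can be tracked with basic date arithmetic. The sketch below assumes a hypothetical recognition date (the day of the month is a placeholder) and treats "three years" as the same calendar date three years later; confirm the exact date and counting rule against the applicable accreditation checklist.

```python
from datetime import date

def update_deadline(recognized_on, years=3):
    """Return the calendar date `years` after the recognition date."""
    try:
        return recognized_on.replace(year=recognized_on.year + years)
    except ValueError:
        # Recognition on Feb 29 with a non-leap target year: fall back to Feb 28
        return recognized_on.replace(year=recognized_on.year + years, day=28)

def days_remaining(recognized_on, today):
    """Days left before the deadline (negative if it has passed)."""
    return (update_deadline(recognized_on) - today).days

# Hypothetical recognition date; the source only states "January 2025"
recognized = date(2025, 1, 15)
deadline = update_deadline(recognized)
print("Update breakpoints by:", deadline.isoformat())
print("Days remaining on 2026-01-15:", days_remaining(recognized, date(2026, 1, 15)))
```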

Experimental Protocols for Verification and Compliance

Once a device's clearance status is confirmed, laboratories must perform their own verification studies. The following table summarizes the core components of a standard verification protocol.

Table 1: Core Components of an AST Verification Protocol

| Protocol Component | Description | Key Parameters |
|---|---|---|
| Accuracy Testing | Compare results from the new device/system against a reference method (e.g., CLSI broth microdilution M07) [9]. | Essential Agreement (EA), Category Agreement (CA). |
| Precision Testing | Assess reproducibility of results by testing a panel of isolates in replicates across different days and operators. | % of results within one doubling dilution (for MIC). |
| Quality Control (QC) | Perform QC using standard reference strains (e.g., ATCC strains) as recommended by the manufacturer and CLSI guidelines. | QC ranges must be within specified limits. |
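
The precision parameter in Table 1 can be illustrated with a short calculation. The sketch below makes one assumption that the table does not state: "within one doubling dilution" is measured against the modal MIC of the replicates. The replicate values are invented for illustration.

```python
import math
from collections import Counter

def precision_pct(replicate_mics):
    """Percent of replicate MICs within one doubling dilution of the modal MIC."""
    # Assumption: the mode of the replicates is the comparison point
    mode_mic = Counter(replicate_mics).most_common(1)[0][0]
    within = sum(
        abs(math.log2(mic / mode_mic)) <= 1 + 1e-9 for mic in replicate_mics
    )
    return 100.0 * within / len(replicate_mics)

# Invented replicate MICs (µg/mL) for one isolate across days and operators
replicates = [1, 1, 2, 1, 0.5, 4, 1, 2, 1]
pct = precision_pct(replicates)
print(f"{pct:.1f}% of replicates within one doubling dilution of the mode")
```

Working on the log2 scale keeps the comparison symmetric: 0.5 and 2 µg/mL are each one dilution away from 1 µg/mL, while 4 µg/mL is two dilutions away and counts as a miss.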

Protocol for Verifying an AST System with Updated Breakpoints

This protocol is critical if you are validating the use of more recent CLSI breakpoints on an FDA-cleared device that was originally cleared with older criteria [9].

- Define Scope: Identify the specific organism-drug combinations for which the breakpoint has changed.

- Strain Selection: Assemble a panel of 20-30 well-characterized bacterial isolates. This panel should include strains with MICs at or near the new breakpoints to robustly challenge the interpretive categories.

- Reference Testing: Test the panel using the reference broth microdilution method (CLSI M07) to establish the reference MICs [9].

- Device Testing: Test the same panel using the AST device and its standard operating procedure.

- Data Analysis: Calculate the categorical agreement (CA) and essential agreement (EA) between the device results and the reference results, interpreted with the new breakpoints. A CA of ≥90% is typically required for verification.

- Documentation: Meticulously document all procedures, raw data, and analysis to meet quality standards.
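
The data analysis step can be sketched as follows. This is a minimal example under stated assumptions: all MICs are on-scale numeric values (results such as ">8" would need separate handling), the breakpoints (S ≤ 1, R ≥ 4 µg/mL) and the six-isolate panel are hypothetical, and only the CA threshold mentioned in the protocol is checked.

```python
import math

def interpret(mic, s_max, r_min):
    """Return S, I, or R for an MIC given breakpoints S <= s_max and R >= r_min."""
    if mic <= s_max:
        return "S"
    if mic >= r_min:
        return "R"
    return "I"

def agreement(pairs, s_max, r_min):
    """Compute EA and CA percentages from (reference_mic, device_mic) pairs."""
    ea = ca = 0
    for ref_mic, dev_mic in pairs:
        # EA: device MIC within one doubling dilution of the reference MIC
        if abs(math.log2(dev_mic / ref_mic)) <= 1 + 1e-9:
            ea += 1
        # CA: same interpretive category under the new breakpoints
        if interpret(ref_mic, s_max, r_min) == interpret(dev_mic, s_max, r_min):
            ca += 1
    n = len(pairs)
    return {"EA_pct": 100 * ea / n, "CA_pct": 100 * ca / n}

# Hypothetical (reference, device) MICs in µg/mL; a real panel has 20-30 isolates
panel = [(0.5, 0.5), (1, 2), (4, 4), (2, 2), (8, 2), (0.25, 0.5)]
result = agreement(panel, s_max=1, r_min=4)
passes = result["CA_pct"] >= 90  # threshold taken from the protocol text

print(f"EA = {result['EA_pct']:.1f}%, CA = {result['CA_pct']:.1f}%, pass = {passes}")
```

The pair (1, 2) shows why isolates near the breakpoint matter: the device result is in essential agreement with the reference, yet the category shifts from S to I, so it counts against CA.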

Troubleshooting Guide and FAQs

Q1: Our laboratory wants to update the breakpoints on our FDA-cleared automated AST system to the latest CLSI standards, but the manufacturer has not yet updated the device software. Can we proceed?

A: Yes, but this modification is now classified as a Laboratory-Developed Test (LDT) under the FDA's final rule [9]. You must perform a full validation, as outlined in the experimental protocol above, to ensure patient safety and result accuracy. You should also be aware of the FDA's enforcement discretion for LDTs implemented before May 6, 2024, and those offered within an integrated healthcare system to meet an unmet medical need [9].

Q2: We are testing a rare bacterial species for which no FDA-recognized breakpoints exist. What is the best course of action?

A: The January 2025 FDA update recognized many CLSI standards for infrequently isolated or fastidious bacteria (e.g., M45) [9]. First, check if breakpoints are now available. If not, testing would constitute an LDT. In such cases, using CLSI M45 breakpoints or epidemiological cut-off values (ECOFFs) is the community standard, but a rigorous internal validation is mandatory.

Q3: We found a 510(k) clearance for an AST device, but it does not list the specific organism-antibiotic combination we need. Does this mean we cannot use it?

A: Not necessarily. The device may have been cleared for additional organism-antibiotic combinations in a later 510(k) submission, so confirm the current cleared panel with the manufacturer. If the combination is not cleared, using it would be an "off-label" use, rendering the test an LDT subject to the new rule [9].

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful AST verification and research rely on a foundation of well-characterized materials. The following table details key reagents and their functions.

Table 2: Key Research Reagent Solutions for AST Verification

| Reagent/Material | Function in Experiment | Critical Quality Points |
|---|---|---|
| Reference Bacterial Isolates | To challenge the AST system and ensure accuracy and precision near breakpoints. | Use well-characterized strains from reputable sources (e.g., ATCC). Should include a range of susceptibility profiles. |
| QC Strains (e.g., E. coli ATCC 25922, P. aeruginosa ATCC 27853) | To monitor the day-to-day performance and reproducibility of the AST system. | Must yield MICs within the established QC range for the antibiotic being tested. |
| Cation-Adjusted Mueller-Hinton Broth (CAMHB) | The standard medium for broth microdilution AST, ensuring consistent ion concentration that affects antibiotic activity. | Must be prepared and stored according to CLSI guidelines to avoid divalent cation variation. |
| Antimicrobial Powders | Used to prepare in-house reference trays for broth microdilution. | Source from a certified supplier. Purity and potency must be documented. Proper storage is critical for stability. |
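
The QC criterion in Table 2 (MICs must fall within the established range) lends itself to an automated daily check. The sketch below uses placeholder strain labels, drug names, and ranges; real acceptable ranges must be taken from the current CLSI documents or the manufacturer's instructions.

```python
def qc_failures(observations, qc_ranges):
    """Return QC observations whose MIC falls outside the acceptable range.

    observations: list of (strain, drug, mic) tuples, MIC in µg/mL
    qc_ranges:    dict mapping (strain, drug) to (low, high), inclusive
    """
    failures = []
    for strain, drug, mic in observations:
        low, high = qc_ranges[(strain, drug)]
        if not (low <= mic <= high):
            failures.append((strain, drug, mic, (low, high)))
    return failures

# Placeholder ranges for illustration only, not published QC ranges
qc_ranges = {
    ("QC strain 1", "drug_X"): (0.25, 1.0),
    ("QC strain 2", "drug_X"): (1.0, 4.0),
}
todays_run = [
    ("QC strain 1", "drug_X", 0.5),
    ("QC strain 2", "drug_X", 8.0),
]

failed = qc_failures(todays_run, qc_ranges)
for strain, drug, mic, limits in failed:
    print(f"QC out of range: {strain} / {drug}: MIC {mic} not in {limits}")
```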

FAQs: Navigating AST Verification Challenges

Q1: What is the gold standard reference method for Antimicrobial Susceptibility Testing (AST), and when can it be modified?

A: The standard reference method is broth microdilution (BMD) in cation-adjusted Mueller-Hinton broth (CAMHB), as defined by CLSI M07 and ISO 20776-1 standards [23] [24]. While some novel antimicrobial agents may require scientifically justified modifications to this method to better reflect their clinical activity, any changes must be rigorously validated [23]. Modifications should never be made solely to produce lower Minimum Inhibitory Concentration (MIC) values or to make one agent appear superior to another [24].

Q2: What are the critical considerations for modifying a standard AST method during drug development?

A: The Clinical and Laboratory Standards Institute (CLSI) and the European Committee on Antimicrobial Susceptibility Testing (EUCAST) highlight several key considerations [23]:

- Initiate AST methods evaluation early in the drug development process.

- Use only minimal and scientifically justified adjustments.

- Consult with AST experts when encountering challenges with standard methods.

- Avoid modifications aimed solely at reducing MIC values, as this is scientifically unsound and can mislead.

Q3: What are the risks of unnecessary deviations from reference AST methods?

A: Unnecessary deviations can lead to several significant negative outcomes, including increased development costs, regulatory hurdles, delays in test availability, and reduced clinical adoption of the new test or drug [24]. Adherence to the reference method ensures reliability and facilitates smoother implementation in clinical laboratories [23].

Q4: Where can I find officially recognized susceptibility test interpretive criteria (breakpoints)?

A: The U.S. Food and Drug Administration (FDA) recognizes the breakpoints published in the CLSI Performance Standards for Antimicrobial Susceptibility Testing (M100) [8]. The FDA provides a comprehensive table of antibacterial drugs and the recognized standards that apply to them on its website [8].

Troubleshooting Guides for Common AST Verification Issues

Issue 1: Unexpected MIC Results with a New Antimicrobial Agent

Problem: Your experimental data for a new drug shows unexpectedly high or variable MICs compared to standard agents.

Solution:

- Confirm Methodology: First, rigorously verify that your lab is precisely following the CLSI M07 or ISO 20776-1 reference BMD method without unvalidated alterations [24].

- Review Drug Properties: Investigate if the drug's physicochemical properties (e.g., solubility, stability in CAMHB) necessitate a justified modification of the medium. Consult published literature or regulatory guidance early [23].

- Action: Consult with AST experts or the drug developer to discuss the findings and determine if a method modification is scientifically warranted or if the results accurately reflect the drug's activity [23].

Issue 2: Inconsistent Results Across Different Laboratory Sites

Problem: A standardized verification protocol yields different MIC results when performed in different laboratories.

Solution:

- Audit Reagents: Ensure all labs use the same lot and source of CAMHB and other reagents. Check the preparation and storage conditions of drug stock solutions [23].

- Standardize Inoculum: Verify that the inoculum preparation method (e.g., turbidity standard) is consistently applied across all sites [25].

- Cross-Check Equipment: Calibrate incubators and other equipment to ensure uniform environmental conditions [26].

- Action: Organize a joint session where technologists from different sites perform the test simultaneously to identify and correct procedural discrepancies.
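
Before organizing a joint session, it can help to pinpoint which sites and isolates actually disagree. The sketch below is one possible screening approach, not a prescribed method: it assumes that a site is discrepant when its MIC differs from the cross-site median by more than one doubling dilution, and the isolate IDs, site names, and MICs are invented.

```python
import math
from statistics import median

def flag_site_discrepancies(results, tolerance=1):
    """Flag sites whose MIC deviates from the cross-site median.

    results:   {isolate_id: {site_name: mic_in_ug_per_mL}}
    tolerance: allowed deviation in doubling dilutions (assumed value)
    """
    flags = {}
    for isolate, by_site in results.items():
        # Compare on the log2 scale so each unit equals one doubling dilution
        logs = {site: math.log2(mic) for site, mic in by_site.items()}
        centre = median(logs.values())
        outliers = sorted(
            site for site, value in logs.items() if abs(value - centre) > tolerance
        )
        if outliers:
            flags[isolate] = outliers
    return flags

# Invented multi-site results for two isolates
results = {
    "ISO-1": {"site_A": 1, "site_B": 1, "site_C": 2},
    "ISO-2": {"site_A": 0.5, "site_B": 4, "site_C": 0.5},
}
discrepancies = flag_site_discrepancies(results)
print(discrepancies)
```

A site that is flagged repeatedly across isolates points toward a systematic cause such as reagents, inoculum preparation, or incubation conditions.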

Issue 3: Difficulty in Reproducing Published Reference Ranges

Problem: Your team cannot reproduce the quality control (QC) ranges or reference data provided in the drug's package insert or regulatory documents.

Solution:

- Gather Information: Collect all available documentation, including the approved product labeling and the specific CLSI M100 supplement where interpretive criteria may be listed [8].

- Check for Exceptions: Review the FDA's "Exceptions or Additions to the Recognized CLSI Standard" for the specific drug, as additional testing conditions may be specified [8].

- Action: If the discrepancy persists, contact the drug manufacturer for technical support and report the issue to the relevant standards body (CLSI or EUCAST) to contribute to method refinement [23].

Strategic Planning Workflow for AST Verification

The following diagram outlines a logical workflow for clinical teams to prioritize and manage AST verification updates, based on best practices from CLSI, EUCAST, and regulatory guidance.

Research Reagent Solutions for AST Verification

The following table details essential materials and their functions for establishing reliable AST methods, based on regulatory and standards body requirements [23] [24] [8].

| Item | Function in AST Verification |
|---|---|
| Cation-Adjusted Mueller-Hinton Broth (CAMHB) | The standard medium for broth microdilution (BMD) tests; provides a consistent and defined environment for bacterial growth and antimicrobial activity [24]. |
| Broth Microdilution (BMD) Trays | Multi-well panels used to test multiple concentrations of an antimicrobial agent against a bacterial isolate simultaneously to determine the MIC [24]. |
| CLSI M100 Document | Provides the recognized standards for performance, interpretive criteria (breakpoints), and quality control parameters for AST [8]. |
| Quality Control (QC) Strains | Reference bacterial strains with known MIC ranges used to validate the accuracy and precision of the AST test procedure [8]. |
| Turbidity Standard (e.g., 0.5 McFarland) | Used to standardize the density of the bacterial inoculum suspension, ensuring a consistent number of organisms is used in each test [25]. |

Common AST Method Modification Scenarios

This table summarizes potential scenarios where modifications to the standard method might be considered and the recommended strategic approach, based on joint CLSI/EUCAST guidance [23] [24].

| Scenario | Justification Required? | Recommended Action |
|---|---|---|
| Novel drug requires a specific supplement in the medium to maintain stability or activity. | Yes, with data on drug stability. | Early consultation with regulatory and AST experts is crucial to design a valid and acceptable modified method [23]. |
| Desire to report a lower MIC for a new drug compared to a competitor. | Not applicable: no justification is acceptable. This practice is strongly discouraged and is not scientifically valid [24]. | Adhere to the standard BMD method. Superiority should be demonstrated through clinical outcomes, not artificial MIC manipulation [23]. |