Determining qPCR Assay Limit of Detection: A Comprehensive Guide for Robust Assay Verification

Abstract

This article provides a comprehensive framework for verifying the Limit of Detection (LOD) in quantitative PCR (qPCR) assays, a critical parameter for researchers, scientists, and drug development professionals. It covers the foundational principles of LOD and its distinction from the Limit of Quantification (LOQ), explores methodological approaches for LOD determination including Poisson analysis and the novel PCR-Stop method, details troubleshooting strategies for common pitfalls, and outlines rigorous validation procedures against established guidelines. By integrating foundational knowledge with practical application and validation protocols, this guide aims to empower scientists to achieve reliable, reproducible, and regulatory-compliant qPCR results in both research and clinical settings.

Understanding Limit of Detection: The Foundation of a Reliable qPCR Assay

In analytical science, particularly in fields like pharmaceutical development and clinical diagnostics, determining the lowest amount of an analyte that can be reliably measured is crucial for method validation. Two fundamental parameters in this context are the Limit of Detection (LOD) and Limit of Quantitation (LOQ) [1] [2]. These parameters define the capabilities of an analytical procedure at its lower end and are essential for interpreting results when target analytes are present at very low concentrations [3]. For quantitative real-time PCR (qPCR) assays used in gene therapy development, accurately establishing these limits ensures reliable detection and measurement of genetic material, directly impacting assessments of biodistribution, shedding, and efficacy [4]. Understanding the distinction between LOD and LOQ is not merely academic; it determines whether a result can be used for qualitative detection alone or for precise quantitative analysis, thereby influencing critical decisions in drug development pathways.

Conceptual Definitions and Distinctions

The Limit of Detection (LOD) and Limit of Quantitation (LOQ) are terms used to describe the smallest concentration of a measurand that can be reliably measured by an analytical procedure, but they serve distinct purposes [1].

Limit of Detection (LOD): The LOD is the lowest analyte concentration that can be reliably distinguished from the analytical background or blank, but not necessarily quantified as an exact value [5] [2] [6]. It represents the point at which detection is feasible, answering the question, "Is the analyte present?" The Clinical and Laboratory Standards Institute (CLSI) defines it as "the lowest amount of analyte in a sample that can be detected with (stated) probability, although perhaps not quantified as an exact value" [5]. A detection at this level provides a qualitative yes/no answer.

Limit of Quantitation (LOQ): The LOQ is the lowest concentration at which the analyte can not only be reliably detected but also quantified with stated acceptable levels of precision and accuracy (bias) [1] [5]. The CLSI defines LOQ as "the lowest amount of measurand in a sample that can be quantitatively determined with {stated} acceptable precision and stated, acceptable accuracy, under stated experimental conditions" [5]. At or above the LOQ, the method generates results that are numerically meaningful.

The core distinction is that the LOD concerns identification, while the LOQ concerns measurement [6]. The LOQ is never lower than the LOD: it may coincide with the LOD or lie at a much higher concentration, depending on the analytical technique and the predefined goals for bias and imprecision [1] [7].

Table 1: Core Conceptual Differences between LOD and LOQ

| Feature | Limit of Detection (LOD) | Limit of Quantitation (LOQ) |
|---|---|---|
| Primary Question | Is the analyte present? | How much of the analyte is present? |
| Reliability | Distinguished from blank with stated confidence | Quantified with acceptable accuracy and precision |
| Typical Use | Qualitative detection of impurities | Quantitative determination of impurities |
| Relationship | The foundational limit for detection | LOQ ≥ LOD; always at or above the LOD |

Calculation Methodologies and Experimental Protocols

Several experimental approaches exist for determining LOD and LOQ, each with specific protocols and data requirements. The choice of method depends on the nature of the analysis (instrumental vs. non-instrumental) and regulatory guidelines.

Standard Deviation of the Blank and the Calibration Curve

This common approach utilizes the statistical characteristics of the blank signal and the sensitivity of the analytical method.

Protocol for LOD/LOQ based on Blank Replicates:

- Sample Preparation: Measure a sufficient number of replicates of a blank sample (containing no analyte). The CLSI EP17 guideline recommends 60 replicates for establishing these parameters and 20 for verification [1].

- Data Analysis: Calculate the mean (mean_blank) and standard deviation (SD_blank) of the responses from the blank replicates.

- Calculation:

- Limit of Blank (LoB): This is a prerequisite for LOD calculation and is defined as the highest apparent analyte concentration expected from a blank sample. It is calculated as LoB = mean_blank + 1.645 * SD_blank (assuming a 95% confidence level for a one-tailed test) [1].

- LOD: The LOD is determined by also testing replicates of a sample containing a low concentration of analyte. The LOD is calculated as LOD = LoB + 1.645 * SD_low concentration sample [1].
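The blank-replicate calculation can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a validated implementation: the function names and the replicate values are hypothetical, and far fewer replicates are shown than the 60 that CLSI EP17 recommends for establishing these limits.

```python
import statistics

def limit_of_blank(blank_values, z=1.645):
    """LoB = mean_blank + z * SD_blank (z = 1.645 for a one-tailed 95% level)."""
    return statistics.mean(blank_values) + z * statistics.stdev(blank_values)

def limit_of_detection(lob, low_sample_values, z=1.645):
    """LOD = LoB + z * SD of replicates of a low-concentration sample."""
    return lob + z * statistics.stdev(low_sample_values)

# Hypothetical responses expressed in concentration units (illustrative only).
blank = [0.10, 0.12, 0.08, 0.11, 0.09, 0.10, 0.13, 0.07]
low = [0.30, 0.35, 0.28, 0.33, 0.31, 0.29, 0.36, 0.32]

lob = limit_of_blank(blank)
lod = limit_of_detection(lob, low)
print(f"LoB = {lob:.3f}, LOD = {lod:.3f}")
```

Both functions use the sample standard deviation (n − 1 denominator); whether that or a pooled estimate is appropriate depends on the study design.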

Protocol based on Calibration Curve Slope: This method, recommended by ICH Q2(R1), uses the standard deviation of the response and the slope of the calibration curve [6].

- Calibration: Prepare a calibration curve with samples in the range of the expected detection limit.

- Data Analysis: Calculate the standard deviation (σ) of the response (e.g., from the y-intercepts of regression lines or the residual standard deviation) and the slope (S) of the calibration curve.

- Calculation: LOD = 3.3 × σ / S and LOQ = 10 × σ / S.

The factor 3.3 for LOD is derived from the 5% probability of both Type I (false positive) and Type II (false negative) errors, while the factor 10 for LOQ provides a higher confidence level suitable for quantification [6].
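As a rough sketch of the calibration-curve approach, the snippet below fits an ordinary least-squares line and applies the 3.3 and 10 factors listed for this method in Table 2. It assumes σ is taken as the residual standard deviation of the regression (one of the options mentioned above); the helper names and the calibration data are hypothetical.

```python
import math

def fit_line(x, y):
    """Ordinary least squares. Returns slope, intercept, residual SD (n - 2 dof)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    return slope, intercept, math.sqrt(ss_res / (n - 2))

def lod_loq_from_curve(x, y):
    """LOD = 3.3 * sigma / S and LOQ = 10 * sigma / S, with sigma = residual SD."""
    slope, _, sigma = fit_line(x, y)
    return 3.3 * sigma / slope, 10.0 * sigma / slope

# Hypothetical low-range calibration data (concentration, response).
conc = [1.0, 2.0, 3.0, 4.0, 5.0]
resp = [2.1, 3.9, 6.2, 7.8, 10.1]

lod, loq = lod_loq_from_curve(conc, resp)
print(f"LOD = {lod:.3f}, LOQ = {loq:.3f} (same units as conc)")
```

The sketch assumes a positive slope, as is usual for a response that increases with concentration.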

Signal-to-Noise Ratio

This practical method is frequently used in chromatographic techniques and is applicable when a baseline noise is observable.

- Protocol:

- Data Acquisition: Compare signals from samples with known low concentrations of analyte against the signal of a blank.

- Measurement: The signal-to-noise ratio (S/N) is measured.

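- Establishment of Limits: The LOD is set at the concentration giving S/N ≥ 3 and the LOQ at the concentration giving S/N ≥ 10 [6].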
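A small sketch of how the signal-to-noise criteria might be applied, using the S/N ≥ 3 (LOD) and S/N ≥ 10 (LOQ) thresholds listed in Table 2. The function names are hypothetical, and the sketch assumes noise is expressed as a single baseline amplitude in the same units as the signal height; the exact noise definition varies between instruments and guidelines.

```python
def signal_to_noise(signal_height, noise_amplitude):
    """Ratio of analyte signal height to baseline noise amplitude."""
    if noise_amplitude <= 0:
        raise ValueError("noise_amplitude must be positive")
    return signal_height / noise_amplitude

def classify(sn, lod_ratio=3.0, loq_ratio=10.0):
    """Label a measurement by the S/N thresholds for LOD and LOQ."""
    if sn >= loq_ratio:
        return "quantifiable"
    if sn >= lod_ratio:
        return "detectable"
    return "not detected"

# Hypothetical peak heights against a baseline noise of 10 units.
for height in (25.0, 30.0, 120.0):
    sn = signal_to_noise(height, 10.0)
    print(height, sn, classify(sn))
```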

Empirical Determination in qPCR

For qPCR assays, which have a logarithmic response (Cq values) and cannot produce a Cq for a true negative sample, standard approaches require adaptation [5].

- Protocol for Empirical LOD in qPCR:

- Sample Dilution Series: Prepare a dilution series of the target nucleic acid, covering a range from well above to below the expected detection limit. A 2-fold dilution series is common [5].

- High Replication: Analyze a large number of replicates at each concentration, especially the lower ones (e.g., 64-128 replicates) to obtain a robust statistical model [5].

- Data Analysis (Logistic Regression):

- Define a detection cut-off (e.g., a maximum Cq value).

- For each concentration, calculate the proportion of positive replicates (Cq < cut-off).

- Fit a logistic regression model to the data (proportion detected vs. log2(concentration)).

- The LOD is defined as the concentration at which 95% of the replicates are detected [5] [4]. The LOQ is the lowest concentration quantified with acceptable precision and accuracy, often determined by a predefined %CV (e.g., 20-25%) [1] [4].
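The logistic-regression step can be sketched with the standard library alone. The example below fits a two-parameter logistic model to grouped hit-rate data by Newton-Raphson and solves for the concentration with a 95% detection probability. The function names and the replicate counts are hypothetical; a production analysis would normally use an established statistics package and report confidence intervals.

```python
import math

def fit_logistic(x, n, k, iters=50, tol=1e-10):
    """Fit P(detect) = 1 / (1 + exp(-(b0 + b1*x))) to grouped binomial data.

    x: predictor per level (here log2 concentration)
    n: replicates tested per level, k: positive replicates per level
    """
    b0, b1 = 0.0, 0.0
    for _ in range(iters):
        g0 = g1 = h00 = h01 = h11 = 0.0
        for xi, ni, ki in zip(x, n, k):
            p = 1.0 / (1.0 + math.exp(-(b0 + b1 * xi)))
            resid = ki - ni * p            # gradient contribution
            w = ni * p * (1.0 - p)         # information contribution
            g0 += resid
            g1 += resid * xi
            h00 += w
            h01 += w * xi
            h11 += w * xi * xi
        det = h00 * h11 - h01 * h01
        d0 = (h11 * g0 - h01 * g1) / det   # Newton step: inverse(H) @ g
        d1 = (h00 * g1 - h01 * g0) / det
        b0 += d0
        b1 += d1
        if abs(d0) + abs(d1) < tol:
            break
    return b0, b1

def lod_from_logistic(b0, b1, prob=0.95):
    """Concentration at which the fitted detection probability equals prob."""
    logit = math.log(prob / (1.0 - prob))
    return 2.0 ** ((logit - b0) / b1)

# Hypothetical 2-fold dilution series, 64 replicates per level (copies/reaction).
conc = [0.5, 1, 2, 4, 8, 16]
replicates = [64] * 6
positives = [20, 35, 52, 61, 64, 64]   # replicates with Cq below the cut-off

b0, b1 = fit_logistic([math.log2(c) for c in conc], replicates, positives)
lod95 = lod_from_logistic(b0, b1)
print(f"Estimated LOD (95% detection): {lod95:.2f} copies/reaction")
```

The fit needs at least two concentration levels with mixed outcomes (some positive, some negative replicates); otherwise the maximum-likelihood estimate is not finite.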

Table 2: Comparison of LOD and LOQ Calculation Methods

| Method | Key Inputs | LOD Formula | LOQ Formula | Best Suited For |
|---|---|---|---|---|
| Blank & Low Sample SD [1] | mean_blank, SD_blank, SD_low sample | LoB + 1.645 × SD_low sample | (Not covered by this method) | General clinical chemistry methods |
| Calibration Slope [6] | SD of response (σ), Slope (S) | 3.3 × σ / S | 10 × σ / S | Instrumental methods per ICH guidelines |
| Signal-to-Noise [6] | Signal height, Noise height | S/N ≥ 3 | S/N ≥ 10 | Chromatographic methods (HPLC, GC) |
| Empirical (qPCR) [5] [4] | Replicate detection data | Concentration at 95% detection | Concentration with defined precision (e.g., CV ≤ 20%) | qPCR and other binary/no-threshold methods |

The Scientist's Toolkit: Key Reagents and Materials for qPCR Validation

Establishing LOD and LOQ for a qPCR assay requires specific, high-quality reagents and materials to ensure sensitivity, specificity, and reproducibility.

Table 3: Essential Research Reagent Solutions for qPCR Assay Validation

| Reagent / Material | Function / Role in LOD/LOQ Determination |
|---|---|
| Target Template (e.g., gDNA, Plasmid) | Serves as the standard for creating the dilution series to empirically determine LOD/LOQ. Should be of known concentration and purity [5]. |
| Sequence-Specific Primers & Probes | Critical for assay specificity. Probe-based assays (e.g., TaqMan) are preferred over dye-based (SYBR Green) for higher specificity and reduced false positives, which is crucial at the detection limit [4]. |
| qPCR Master Mix | Contains DNA polymerase, dNTPs, and optimized buffers. Essential for efficient amplification, especially of low-copy-number targets at the LOD [5]. |
| Nuclease-Free Water | Used for preparing dilutions and controls. Must be free of nucleases to prevent degradation of the target template and reagents, which could artificially raise the LOD [4]. |
| No Template Control (NTC) | A critical negative control containing all reagents except the target template. Used to confirm the assay does not generate false-positive signals, ensuring specificity [4]. |

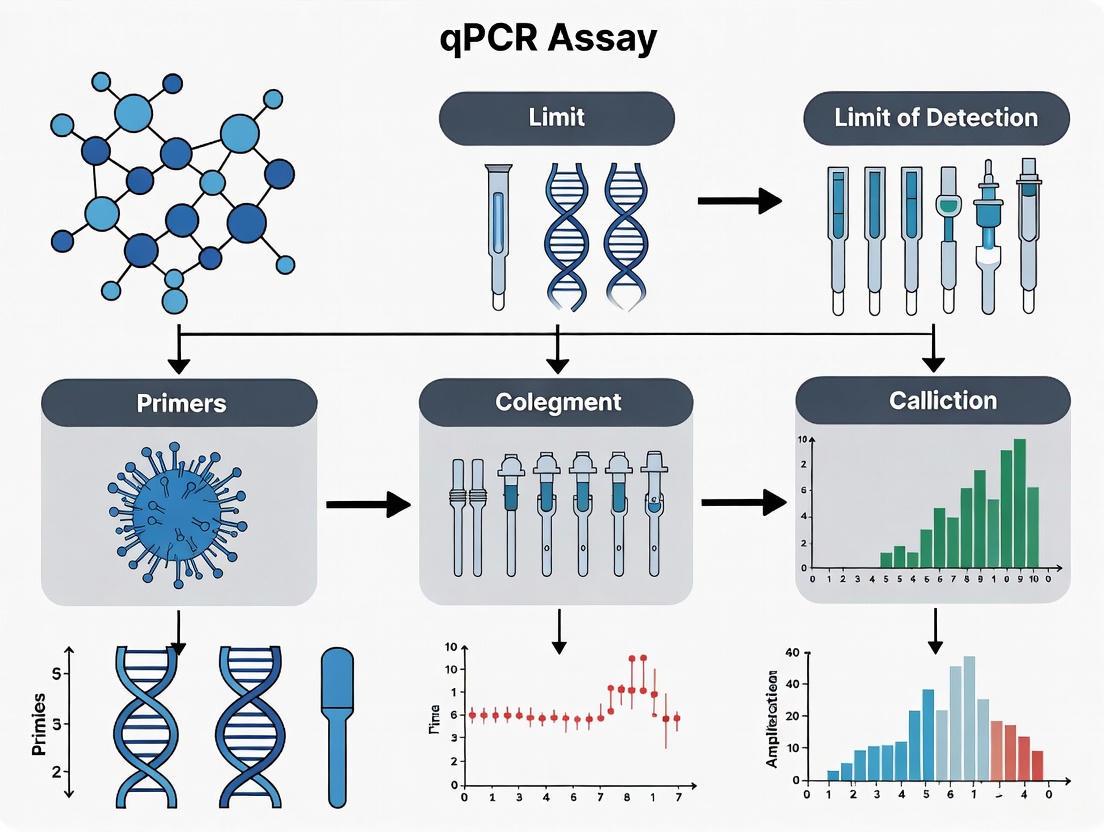

A Workflow for Determination and Verification

The following diagram illustrates a generalized, logical workflow for determining and verifying the LOD and LOQ of an analytical method, incorporating elements from the various protocols.

The distinction between the Limit of Detection (LOD) and the Limit of Quantitation (LOQ) is fundamental in analytical science. The LOD defines the ultimate sensitivity of a method for detecting the presence of an analyte, while the LOQ defines the threshold for reliable numerical quantification with stated precision and accuracy [1] [2] [6]. Selecting the appropriate experimental protocol—whether based on statistical analysis of blank and low-concentration samples, signal-to-noise evaluation, or empirical testing as required for qPCR—is critical for generating defensible data [5] [3]. For researchers and drug development professionals working with sensitive techniques like qPCR in gene therapy, a rigorous and well-documented approach to establishing these parameters ensures that the assay is "fit for purpose," providing confidence in data used to make pivotal decisions about therapeutic safety and efficacy [4].

The Limit of Detection (LOD) represents a fundamental performance characteristic of molecular diagnostic assays, defining the lowest concentration of an analyte that can be reliably distinguished from zero. This parameter directly determines an assay's clinical utility, particularly for early disease detection when pathogen levels are minimal. This review comprehensively examines LOD assessment across multiple nucleic acid amplification platforms, including qPCR, dPCR, LAMP, and MCDA, highlighting how methodological choices during validation impact diagnostic accuracy. We present comparative experimental data demonstrating platform-specific LOD values and provide detailed protocols for establishing reliable detection limits in accordance with international biometrological standards.

In clinical diagnostics and biomedical research, the Limit of Detection (LOD) serves as a critical benchmark for evaluating assay performance, representing the lowest quantity of an analyte that can be reliably detected with a stated probability [8]. Also referred to as "analytical sensitivity," LOD is mathematically defined as the concentration at which a sample tests positive with ≥95% probability, typically established through probit analysis of dilution series with multiple replicates [9] [8]. This parameter fundamentally differs from the Limit of Quantification (LOQ), which represents the lowest concentration that can be measured with acceptable precision and accuracy [8].

The clinical implications of LOD are profound, particularly for infectious disease diagnostics where early detection directly impacts patient management and public health interventions. Assays with superior LOD enable identification of pathogens during initial stages of infection when viral loads are minimal, facilitating timely treatment initiation and infection control measures [10]. For chronic infections like hepatitis B and C, high-sensitivity detection is crucial for identifying carriers with low-level viremia and monitoring treatment response [10] [11]. Similarly, in transplant medicine, sensitive cytomegalovirus (CMV) detection prevents disease progression in immunocompromised patients [8].

LOD requirements vary substantially based on clinical context. Screening tests for blood safety demand exceptional sensitivity to prevent transmission from window-period donations, while monitoring tests for treatment response may prioritize dynamic range over ultimate sensitivity. Understanding these distinctions is essential for appropriate test selection and interpretation in both clinical and research settings.

Comparative LOD Performance Across Detection Platforms

qPCR/dPCR Platforms

Quantitative PCR remains the gold standard for nucleic acid detection due to its well-established protocols and robust performance characteristics. In Japanese encephalitis virus (JEV) detection from piggery wastewater, the ACDP JEV G4 RT-qPCR assay demonstrated an LOD of 2.20–5.70 copies/reaction, significantly outperforming other tested assays which failed to detect JEV in many field samples [12]. The process limit of detection (PLOD), accounting for sample preparation, was 72–282 copies/10 mL of wastewater, highlighting how extraction efficiency impacts overall assay sensitivity [12].

For Haemophilus parasuis (HPS) detection, an optimized qPCR assay targeting the INFB gene achieved an impressive LOD of less than 10 copies/μL, with coefficients of variation consistently below 1% in repeatability testing [13]. This sensitivity proved essential for identifying low bacterial loads in complex clinical samples where interfering substances typically compromise detection.

Multiplex qPCR assays for respiratory pathogens face additional challenges in maintaining sensitivity across multiple targets. A fluorescence melting curve analysis (FMCA)-based multiplex PCR for six respiratory pathogens achieved LODs between 4.94 and 14.03 copies/μL, demonstrating that carefully optimized multiplex assays can maintain sensitivity comparable to single-plex formats [9].

Isothermal Amplification Platforms

Isothermal nucleic acid amplification techniques provide compelling alternatives to PCR, particularly in point-of-care settings. Loop-mediated isothermal amplification (LAMP) for human cytomegalovirus (hCMV) DNA detection demonstrated an LOD of 39.09 copies/reaction (with 95% confidence interval of 25.33–65.84 copies/reaction) [8]. The associated lower limit of quantification was approximately 100 copies/reaction, establishing the dynamic range for both qualitative detection and quantitative measurement [8].

For hepatitis C virus (HCV) detection, an RT-LAMP assay achieved an LOD of 10–20 copies per reaction (3.26 log₁₀ IU/mL) with 94% sensitivity and 100% specificity compared to RT-qPCR [11]. This performance approaches that of laboratory-based qPCR while offering significantly reduced complexity and cost.

Multiple cross displacement amplification (MCDA), a more recent isothermal method, has demonstrated exceptional sensitivity. A novel single-tube multiplex MCDA assay combined with gold nanoparticle-based lateral flow biosensors (AuNPs-LFB) achieved an LOD of 10 copies for both HBV and HCV, matching the analytical sensitivity of standard qPCR while offering rapid, visual interpretation of results [10].

Table 1: Comparative LOD Values Across Molecular Detection Platforms

| Detection Platform | Target | LOD | Reference Method | Clinical Performance |
|---|---|---|---|---|
| RT-qPCR (ACDP JEV G4) | Japanese encephalitis virus | 2.20–5.70 copies/reaction | Field samples from piggery wastewater | Detected JEV in 24/30 field samples vs. 17/30 for comparator assay [12] |
| qPCR | Haemophilus parasuis | <10 copies/μL | Commercial kits & national standards | 100% positive/negative percent agreement with national standard [13] |
| Multiplex FMCA-PCR | Six respiratory pathogens | 4.94–14.03 copies/μL | Commercial RT-qPCR kits | 98.81% agreement with reference method; detected 51.54% pathogen-positive cases [9] |
| LAMP | Human cytomegalovirus | 39.09 copies/reaction (25.33–65.84) | qPCR | Suitable for qualitative detection; LLOQ ~100 copies/reaction [8] |
| RT-LAMP | Hepatitis C virus | 10–20 copies/reaction (3.26 log₁₀ IU/mL) | RT-qPCR | 94% sensitivity, 100% specificity; detection in <40 minutes [11] |
| MCDA-AuNPs-LFB | HBV/HCV | 10 copies | qPCR | 100% sensitivity and specificity concordant with qPCR [10] |

Methodologies for LOD Determination

Experimental Design for LOD Assessment

Robust LOD determination requires carefully constructed dilution series with sufficient replicates at each concentration to enable statistical analysis. For hCMV LAMP assay validation, researchers analyzed 24 replicates at 8 different hCMV DNA concentrations (total 192 samples) to establish reliable LOD estimates with appropriate confidence intervals [8]. This extensive replication accounts for biological and technical variability, providing a biometrologically sound foundation for LOD claims.

The fundamental steps in LOD determination include:

- Preparation of dilution series: Serial dilutions of target nucleic acid (using quantified reference material or characterized clinical samples)

- Replicate testing: Multiple replicates (typically ≥20) at each concentration level to establish detection frequency

- Probit analysis: Statistical modeling of the probability of detection across concentrations to determine the 95% detection point [8] [9]

- Verification: Confirmation of the calculated LOD with independent samples at the determined concentration

For the FMCA-based multiplex respiratory panel, each dilution was assessed in 20 replicates, with LOD formally defined as the concentration detectable with ≥95% probability [9]. This rigorous approach ensures reliable detection limits that hold clinical utility.
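The probit step can be illustrated with a short standard-library sketch. It fits a probit model (detection probability = Φ(b0 + b1 · log10 concentration)) to grouped hit-rate data by Fisher scoring and reads off the 95% detection point. The hit counts are hypothetical and the function names are illustrative; dedicated statistical software would also supply the confidence interval that a formal LOD claim needs.

```python
import math
from statistics import NormalDist

STD_NORMAL = NormalDist()  # standard normal: cdf, pdf, inv_cdf

def fit_probit(x, n, k, iters=100, tol=1e-10):
    """Fisher scoring for P(detect) = Phi(b0 + b1*x) on grouped binomial data."""
    b0, b1 = 0.0, 0.0
    for _ in range(iters):
        g0 = g1 = i00 = i01 = i11 = 0.0
        for xi, ni, ki in zip(x, n, k):
            eta = b0 + b1 * xi
            # Clamp to keep p * (1 - p) away from zero at extreme eta.
            p = min(max(STD_NORMAL.cdf(eta), 1e-12), 1.0 - 1e-12)
            dens = STD_NORMAL.pdf(eta)
            score = (ki - ni * p) * dens / (p * (1.0 - p))
            info = ni * dens * dens / (p * (1.0 - p))
            g0 += score
            g1 += score * xi
            i00 += info
            i01 += info * xi
            i11 += info * xi * xi
        det = i00 * i11 - i01 * i01
        d0 = (i11 * g0 - i01 * g1) / det
        d1 = (i00 * g1 - i01 * g0) / det
        b0 += d0
        b1 += d1
        if abs(d0) + abs(d1) < tol:
            break
    return b0, b1

def lod_from_probit(b0, b1, prob=0.95):
    """Concentration whose fitted detection probability equals prob."""
    return 10.0 ** ((STD_NORMAL.inv_cdf(prob) - b0) / b1)

# Hypothetical dilution series, 20 replicates per level (copies/uL).
conc = [1, 2.5, 5, 10, 20, 40]
tested = [20] * 6
detected = [4, 9, 15, 19, 20, 20]

b0, b1 = fit_probit([math.log10(c) for c in conc], tested, detected)
lod95 = lod_from_probit(b0, b1)
print(f"Probit LOD (95% detection): {lod95:.1f} copies/uL")
```

The verification step then amounts to testing fresh replicates at the estimated concentration and confirming that the observed hit rate is consistent with 95% detection.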

Standard Curve Generation in qPCR

In qPCR assays, LOD determination incorporates standard curve generation using serial dilutions of known concentrations. The standard curve plots Ct values against the logarithm of template concentrations, creating a linear relationship in the quantifiable range [14]. Key parameters include:

- Slope: Used to calculate amplification efficiency: E = [10^(−1/slope) − 1] × 100 [15]

- Linear range: The interval where accurate quantification occurs, bounded by the Lower Limit of Quantification (LLOQ) and the Upper Limit of Quantification (ULOQ)

- R² value: Indicates linearity fit, with values >0.99 considered ideal [14]

The mathematical relationship is expressed as: y = mx + b, where y represents the Ct value, m is the slope, x is the log concentration, and b is the y-intercept [14]. This equation enables quantification of unknown samples based on their Ct values.
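A minimal sketch of these standard-curve calculations follows: a least-squares fit of Ct against log10 concentration, the efficiency derived from the slope as E = [10^(−1/slope) − 1] × 100, and back-calculation of an unknown from its Ct. The function names are illustrative and the data are an idealized, noise-free curve chosen for clarity, so the resulting R² of 1 is not representative of real runs.

```python
def fit_standard_curve(log10_conc, ct):
    """Least-squares fit of Ct = slope * log10(conc) + intercept. Returns slope, intercept, R^2."""
    n = len(ct)
    mean_x = sum(log10_conc) / n
    mean_y = sum(ct) / n
    sxx = sum((x - mean_x) ** 2 for x in log10_conc)
    syy = sum((y - mean_y) ** 2 for y in ct)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(log10_conc, ct))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r_squared = sxy ** 2 / (sxx * syy)
    return slope, intercept, r_squared

def efficiency_percent(slope):
    """E = [10^(-1/slope) - 1] * 100."""
    return (10.0 ** (-1.0 / slope) - 1.0) * 100.0

def quantify(ct, slope, intercept):
    """Invert y = m*x + b to recover the concentration for a measured Ct."""
    return 10.0 ** ((ct - intercept) / slope)

# Idealized 10-fold dilution series: 10^1 to 10^6 copies, slope of -3.32 cycles per log10.
log10_conc = [1, 2, 3, 4, 5, 6]
ct_values = [34.68, 31.36, 28.04, 24.72, 21.40, 18.08]

slope, intercept, r2 = fit_standard_curve(log10_conc, ct_values)
print(f"slope = {slope:.2f}, efficiency = {efficiency_percent(slope):.1f}%, R^2 = {r2:.4f}")
print(f"Ct 28.04 corresponds to about {quantify(28.04, slope, intercept):.0f} copies")
```

Back-calculated values are only meaningful inside the linear range of the curve; a Ct beyond the lowest standard should not be extrapolated.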

Table 2: Essential Components for LOD Determination Experiments

| Component | Specifications | Function in LOD Determination |
|---|---|---|
| Reference Material | Quantified nucleic acids (plasmids, in vitro transcripts, characterized clinical isolates) | Provides known concentration standards for dilution series and standard curve generation [8] [9] |
| Dilution Matrix | Negative sample matrix matching test samples (e.g., naive serum, nuclease-free water) | Maintains consistent reaction conditions across dilution series; identifies matrix effects [12] [11] |
| Amplification Reagents | Polymerase, primers, probes, nucleotides, buffer components | Execute nucleic acid amplification; quality and consistency directly impact LOD [13] [16] |
| Detection System | Fluorescence reader, lateral flow strips, colorimetric indicators | Detect amplification products; sensitivity influences overall assay LOD [10] [11] |
| Statistical Software | Probit analysis capabilities (R, SPSS, specialized validation software) | Calculate LOD with confidence intervals from binary detection data [8] [17] |

Factors Influencing LOD and Diagnostic Accuracy

Reaction Efficiency and Inhibition

PCR efficiency dramatically impacts LOD and quantitative accuracy. Ideal 100% efficiency means DNA doubles each cycle, but real-world assays typically achieve 65–90% due to inhibitors, suboptimal primers, or enzyme limitations [15]. Efficiency (E) is calculated from the standard curve slope: E = [10^(−1/slope) − 1] × 100, with efficiencies of 90–110% (slopes of approximately −3.6 to −3.1) considered acceptable [14].

Efficiency directly affects quantitative results, particularly in gene expression studies using the 2^(−ΔΔCt) method, which assumes perfect efficiency [17]. Efficiency variations between target and reference genes introduce substantial inaccuracies, potentially leading to erroneous biological conclusions [15] [17]. Mathematical approaches like ANCOVA (Analysis of Covariance) offer improved robustness to efficiency variability compared to traditional 2^(−ΔΔCt) calculations [17].
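To make the efficiency assumption concrete, the sketch below compares the classic calculation, which fixes the amplification base at 2, with an efficiency-corrected ratio in which each gene uses its own base (1 + E). The corrected form is a commonly used alternative and is not the ANCOVA approach cited above. The Ct values, efficiencies, and function names are all hypothetical.

```python
def fold_change_ddct(ct_target_ctrl, ct_target_trt, ct_ref_ctrl, ct_ref_trt):
    """Classic 2^(-ddCt): assumes 100% efficiency for target and reference."""
    ddct = (ct_target_trt - ct_ref_trt) - (ct_target_ctrl - ct_ref_ctrl)
    return 2.0 ** (-ddct)

def fold_change_corrected(ct_target_ctrl, ct_target_trt, ct_ref_ctrl, ct_ref_trt,
                          eff_target, eff_ref):
    """Efficiency-corrected ratio; eff_* are fractional efficiencies (1.0 = 100%)."""
    d_target = ct_target_ctrl - ct_target_trt
    d_ref = ct_ref_ctrl - ct_ref_trt
    return (1.0 + eff_target) ** d_target / (1.0 + eff_ref) ** d_ref

# Hypothetical Ct values: target drops 3 cycles on treatment, reference is unchanged.
cts = dict(ct_target_ctrl=28.0, ct_target_trt=25.0, ct_ref_ctrl=20.0, ct_ref_trt=20.0)

naive = fold_change_ddct(**cts)
corrected = fold_change_corrected(**cts, eff_target=0.90, eff_ref=1.00)
print(f"2^(-ddCt): {naive:.2f}-fold; with 90% target efficiency: {corrected:.2f}-fold")
```

With both efficiencies set to 1.0 the two functions return the same value, which is a quick sanity check on the algebra.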

Inhibitors present in clinical samples (hemoglobin, immunoglobulin, urea, heparin) profoundly impact LOD by reducing reaction efficiency [13]. The HPS qPCR assay systematically evaluated 14 endogenous and exogenous interfering substances, finding less than 5% impact on Ct values compared to controls [13]. This rigorous interference testing ensures maintained sensitivity in complex matrices.

Primer/Probe Design and Target Selection

Primer and probe design critically influence assay sensitivity and specificity. For regulated bioanalysis, designing at least three candidate primer/probe sets is recommended, as in silico predictions do not always translate to empirical performance [16]. Specificity verification against host genomes/transcriptomes using tools like NCBI Primer-BLAST is essential, but must be confirmed empirically in target matrices [16].

Strategic target selection can enhance specificity. For gene therapy biodistribution assays, targeting exon-exon junctions or vector-specific sequences distinguishes therapeutic from endogenous sequences [16]. Similarly, the HBV/HCV MCDA assay targeted conserved regions of prevalent genotypes circulating in China, ensuring broad detection capability [10].

For the respiratory multiplex FMCA assay, probes incorporated base-free tetrahydrofuran residues at variable positions, minimizing the impact of subtype sequence variations on melting temperature and maintaining consistent LOD across variants [9].

Sample Processing and Extraction Efficiency

The overall diagnostic process sensitivity depends on both analytical LOD and extraction efficiency from clinical samples. The concept of Process Limit of Detection (PLOD) accounts for sample preparation, representing the lowest concentration detectable in the original sample matrix [12]. For JEV wastewater surveillance, the PLOD of 72–282 copies/10 mL reflected both extraction recovery (14.9–26.6%) and analytical sensitivity [12].

Simplified sample processing can maintain sensitivity while improving point-of-care utility. The HCV RT-LAMP assay detects RNA directly from lysed serum without RNA purification, achieving LOD of 10–20 copies/reaction in under 50 minutes total workflow [11]. This approach eliminates extraction efficiency variability while providing sensitivity adequate for clinical detection.

Diagram 1: LOD Determination Workflow. Critical quality control checkpoints (red) ensure accurate LOD estimation throughout assay development and validation.

The Limit of Detection represents a fundamental assay characteristic with direct implications for diagnostic accuracy and clinical utility. As demonstrated across multiple platforms, LOD values provide critical benchmarks for comparing assay performance and selecting appropriate methodologies for specific clinical scenarios. The continuing evolution of molecular technologies, particularly isothermal amplification methods, promises increasingly sensitive detection capabilities approaching or matching gold standard qPCR while offering simplified workflows suitable for decentralized testing.

Robust LOD determination requires rigorous experimental designs incorporating sufficient replicates, appropriate statistical analyses, and comprehensive evaluation of interfering substances. The mathematical foundations of qPCR efficiency and standard curve generation provide frameworks for understanding sensitivity limitations and optimizing assay performance. As molecular diagnostics continue expanding into public health surveillance, point-of-care testing, and complex multiplex applications, thoughtful consideration of LOD requirements within specific clinical contexts will remain essential for advancing patient care and disease control.

The Limit of Detection (LOD) represents the lowest concentration of an analyte that can be reliably distinguished from zero and is a critical parameter in validating analytical methods across pharmaceutical and clinical diagnostics. Regulatory agencies worldwide establish stringent LOD requirements to ensure product safety, particularly for methods detecting trace contaminants, pathogens, or biomarkers. In pharmaceutical quality control, residual host cell DNA in biological products like vaccines poses potential health risks including tumorigenesis and infectivity, necessitating highly sensitive detection methods [18]. Similarly, clinical diagnostics require validated LODs to ensure reliable pathogen detection for accurate diagnosis and treatment guidance.

The regulatory landscape for diagnostic tests involves multiple frameworks. Laboratory Developed Tests (LDTs) have traditionally been regulated under the Clinical Laboratory Improvement Amendments (CLIA) by the Centers for Medicare & Medicaid Services (CMS) rather than the FDA [19]. However, recent regulatory shifts have created uncertainty, with a March 2025 federal court decision vacating the FDA's Final Rule that would have explicitly classified LDTs as medical devices [20] [19]. This evolving regulatory context underscores the importance of robust LOD validation that meets both scientific and compliance requirements across different jurisdictions and applications.

LOD Requirements Across Regulatory Standards

Pharmaceutical Quality Control Standards

Regulatory authorities worldwide have established specific limits for residual DNA levels in biological products to ensure patient safety. As highlighted in Table 1, the World Health Organization (WHO) and US Food and Drug Administration (FDA) allow up to 10 ng/dose of residual host cell DNA in biological products or vaccines [18]. This threshold represents a risk-based approach that balances safety concerns with manufacturing feasibility.

Table 1: Regulatory Limits for Residual DNA in Biological Products

| Regulatory Body | Limit for Residual DNA | Application Context |
|---|---|---|
| World Health Organization (WHO) | ≤10 ng/dose | Biological products/vaccines |
| US FDA | ≤10 ng/dose | Biological products/vaccines |

Different detection methods offer varying levels of sensitivity for quantifying residual DNA, as compared in Table 2. The quantitative polymerase chain reaction (qPCR) method stands out with a detection limit in the femtogram (fg, 10⁻¹⁵ g) range, making it significantly more sensitive than fluorescent dye, hybridization, or immunoenzymatic methods [18]. This superior sensitivity, combined with its accuracy and precision, has established qPCR as the preferred technique for residual DNA quantification in pharmaceutical quality control, and it is the only method specified in Chapter 509 of the United States Pharmacopoeia [18].

Table 2: Comparison of Residual DNA Detection Methods

| Detection Method | Typical Detection Limit | Regulatory Recognition |
|---|---|---|
| Fluorescent Dye (PicoGreen) | ng (10⁻⁹ g) | Chinese Pharmacopoeia |
| Hybridization | 1-10 pg | - |
| Immunoenzymatic Method | pg (10⁻¹² g) | European Directorate for the Quality of Medicines & HealthCare |
| qPCR Method | fg (10⁻¹⁵ g) | United States Pharmacopoeia (Chapter 509) |

Clinical Diagnostic Performance Standards

While explicit LOD thresholds vary by analyte and clinical context, diagnostic tests must demonstrate sufficient sensitivity to detect clinically relevant concentrations. For infectious disease testing like Haemophilus parasuis (HPS) detection in veterinary medicine, methods must reliably identify pathogens at low concentrations in complex sample matrices [13]. The Clinical Laboratory Improvement Amendments (CLIA) establish quality standards for laboratory testing, focusing on analytical validity including sensitivity, though specific LOD thresholds are typically determined based on clinical requirements rather than fixed regulatory values.

The recent court decision regarding LDT regulation reinforces that CLIA, rather than FDA medical device regulations, continues to govern LDT compliance for now [19]. This maintains a framework where laboratories must validate that their tests meet performance specifications, including LOD, appropriate for the test's intended use, without the specific premarket review requirements that apply to commercially distributed diagnostic kits.

Experimental Approaches for LOD Validation

qPCR Method Development for Residual DNA Detection

A 2025 study developed and validated a qPCR assay for detecting residual Vero cell DNA in rabies vaccines, providing an exemplary protocol for pharmaceutical applications [18]. The researchers targeted two highly repetitive genomic sequences: a "172bp" tandem repeat (6.8×10⁶ copies/haploid genome) and an Alu repetitive sequence (approximately 3×10⁵ copies/haploid genome) [18]. This strategic selection of high-copy number targets enhanced assay sensitivity by increasing the number of potential amplification sites per unit of DNA.

The experimental workflow followed a systematic approach to optimize and validate the method, as illustrated below:

The validation protocol comprehensively assessed critical analytical parameters. For the 172bp sequence target, the method demonstrated excellent linearity with a quantification limit of 0.03 pg/reaction and a detection limit of 0.003 pg/reaction [18]. Precision studies showed relative standard deviation (RSD) across samples ranging from 12.4% to 18.3%, with recovery rates between 87.7% and 98.5% [18]. The method showed no cross-reactivity with common bacterial and cell strains, confirming high specificity for quality control applications.
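The precision and recovery figures quoted above are simple summary statistics. As a hedged illustration, the sketch below computes percent RSD and percent recovery for a set of spiked replicates; the measurements and spike level are hypothetical and are not taken from the cited study.

```python
import statistics

def percent_rsd(values):
    """Relative standard deviation: 100 * sample SD / mean."""
    return statistics.stdev(values) / statistics.mean(values) * 100.0

def percent_recovery(measured, spiked, background=0.0):
    """Recovered fraction of a known spike, after subtracting any unspiked background."""
    return (measured - background) / spiked * 100.0

# Hypothetical replicate results (pg/reaction) for a 0.30 pg/reaction spike.
spike_level = 0.30
replicates = [0.27, 0.31, 0.25, 0.29, 0.28]

rsd = percent_rsd(replicates)
recovery = percent_recovery(statistics.mean(replicates), spike_level)
print(f"RSD = {rsd:.1f}%, mean recovery = {recovery:.1f}%")
```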

Diagnostic qPCR Assay for Pathogen Detection

A separate 2025 study established a qPCR method for detecting Haemophilus parasuis (HPS) in clinical samples from pig farms, demonstrating LOD validation approaches for infectious disease diagnostics [13]. This method targeted the INFB gene of HPS and was specifically optimized to overcome interference from substances present in complex clinical and environmental samples.

The experimental workflow for diagnostic assay validation included several critical stages:

Using a seven-fold dilution series of recombinant plasmid DNA, researchers determined the LOD was less than 10 copies/µL, significantly more sensitive than many existing HPS detection methods [13]. The method demonstrated exceptional precision with coefficient of variation (CV) consistently below 1% in both inter-batch and intra-batch repeatability tests [13]. When testing 248 clinical samples, the assay achieved 100% positive and negative percent agreement with national standards while detecting more positives (9.27%) than commercial kits (6.05%), demonstrating both regulatory alignment and superior diagnostic sensitivity [13].

Essential Research Reagent Solutions

Successful LOD validation requires specific high-quality reagents and materials. Table 3 details essential research reagent solutions for qPCR-based detection methods in both pharmaceutical and diagnostic contexts, compiled from the cited studies:

Table 3: Research Reagent Solutions for qPCR LOD Studies

| Reagent/Material | Function | Application Examples |
|---|---|---|
| Magnetic Bead Nucleic Acid Kits | Extract DNA from complex samples while removing inhibitors | Vaccine samples [18], Clinical specimens [13] |
| Targeted Primers & Probes | Amplify and detect specific sequences with high specificity | Vero cell "172bp" repeats [18], HPS INFB gene [13] |
| qPCR Master Mixes | Provide optimized enzymes, buffers, dNTPs for efficient amplification | RealStar Fast dye qPCR premix [13] |
| Reference DNA Standards | Create standard curves for precise quantification | Vero DNA National Standard [18] |
| Interference Substances | Assess assay robustness against real-world contaminants | Ethanol, isopropanol, biological components [13] |

Comparative Analysis of LOD Validation Approaches

Pharmaceutical and diagnostic applications share common LOD validation principles but differ in their specific implementation requirements. Pharmaceutical quality control emphasizes extreme sensitivity for risk mitigation, with methods like the Vero DNA detection achieving detection limits of 0.003 pg/reaction to comply with the 10 ng/dose regulatory threshold [18]. Diagnostic validation prioritizes reliability in complex sample matrices, as demonstrated by the HPS assay maintaining performance despite interfering substances [13].

Both fields require rigorous specificity testing, precision assessment, and determination of linearity and quantification limits. However, diagnostic validation typically includes more extensive testing with clinical samples to establish performance across diverse real-world conditions. The evolving regulatory landscape for LDTs adds further complexity to diagnostic validation requirements, though the core scientific principles of robust LOD determination remain essential across both fields [19].

In the rigorous world of quantitative PCR (qPCR) assay validation, four key performance parameters form the bedrock of a reliable method: Limit of Detection (LOD), efficiency, linearity, and dynamic range [21] [22]. For researchers and drug development professionals, understanding the individual meaning and, more importantly, the intricate interplay between these parameters is crucial for developing assays that are both sensitive and quantitatively accurate. This guide provides a structured comparison of these parameters, grounded in experimental data and protocols, to inform robust qPCR assay verification.

Foundational Concepts and Their Interrelationships

Before delving into experimental data, it is essential to define the core parameters and understand how they influence one another.

Limit of Detection (LOD) is the lowest amount of analyte that can be detected with a stated probability (typically 95%) but not necessarily quantified as an exact value [5] [21] [1]. It represents the sensitivity threshold of an assay, answering the question, "Is the target there?"

Limit of Quantification (LOQ) is the lowest amount of analyte that can be quantitatively determined with stated acceptable precision and accuracy [5] [21]. The LOQ defines the lower boundary of an assay's quantitative capability and is always at or above the LOD [1].

Efficiency refers to the performance of the qPCR amplification itself. An ideal reaction doubles the target DNA every cycle, resulting in 100% efficiency. Deviations from this ideal can impact the accuracy of quantification across the entire dynamic range.

Linearity describes the ability of an assay to produce results that are directly proportional to the concentration of the analyte across the dynamic range [23]. It is typically assessed via a calibration curve, with the coefficient of determination (R²) indicating how well the data fits a straight line.

Dynamic Range is the concentration interval over which the assay exhibits acceptable linearity, accuracy, and precision [23] [21]. It is bounded at the lower end by the Lower Limit of Quantification (LLOQ) and at the upper end by the Upper Limit of Quantification (ULOQ).

The relationship between these parameters is hierarchical and interdependent. The dynamic range defines the entire usable concentration window of an assay. Within this window, the segment from the LLOQ to the ULOQ must demonstrate acceptable linearity. The LOD resides below the LLOQ, in a region where detection is possible but precise quantification is not. Finally, the foundational element underpinning all of this is amplification efficiency; a non-optimal efficiency will compromise linearity and distort quantification throughout the dynamic range. This logical flow is illustrated below.

Experimental Protocols for Parameter Determination

Protocol for Determining LOD and LOQ

A standard empirical approach for determining LOD involves analyzing a serial dilution of the target analyte with a high number of replicates at each dilution level [24] [1].

- Preparation: Create a primary serial dilution series of the target (e.g., a cloned amplicon or genomic DNA) covering a range from a concentration that is consistently detected down to one that is rarely detected (e.g., from 1000 copies/reaction to 1 copy/reaction using 1:10 dilutions).

- Primary Run: Test each dilution in a small number of replicates (e.g., triplicate) to identify the range where detection becomes inconsistent.

- Secondary Dilution: Perform a secondary, finer serial dilution (e.g., 1:2 dilutions) within the critical range identified in the primary run.

- High-Replicate Testing: Analyze these secondary dilutions in a high number of replicates (e.g., 10-20) [24].

- Data Analysis: Tabulate the detection rate (number of positive replicates/total replicates) at each concentration. The LOD is defined as the lowest concentration at which the detection rate is ≥95% [24]. The LOQ can be determined as the lowest concentration where acceptable precision (e.g., a defined CV) is maintained, often corresponding to the bottom of the linear dynamic range [21].
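The data-analysis step above can be sketched in a few lines of Python. This is a minimal illustration, not a validated procedure: the function name `empirical_lod`, the hit table, and the rule that every higher concentration must also pass are assumptions made for the example.

```python
def empirical_lod(results, required_rate=0.95):
    """Return the lowest concentration meeting the required detection rate.

    `results` maps concentration -> (positive replicates, total replicates).
    As an added assumption, every higher tested concentration must also
    meet the rate, so a single lucky low dilution is not reported as the LOD.
    """
    lod = None
    # Walk from the highest concentration downward; stop at the first failure.
    for conc in sorted(results, reverse=True):
        positives, total = results[conc]
        if positives / total >= required_rate:
            lod = conc
        else:
            break
    return lod


# Hypothetical hit table: copies/reaction -> (positives, replicates)
hit_table = {
    16: (20, 20),
    8: (20, 20),
    4: (19, 20),
    2: (15, 20),
    1: (9, 20),
}

lod = empirical_lod(hit_table)
print(f"Empirical LOD: {lod} copies/reaction")
```

With 20 replicates per level, a 95% detection rate allows at most one negative replicate, which is why the replicate count matters as much as the dilution scheme.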

Protocol for Establishing Linearity and Dynamic Range

The linear dynamic range is established through a calibration curve, which also provides data for calculating amplification efficiency [23].

- Calibration Standards: Prepare a series of standard solutions with known concentrations of the target analyte. A 10-fold dilution series spanning 6-8 orders of magnitude is typical.

- qPCR Run: Analyze all standard samples in replicate using the qPCR assay.

- Curve Fitting: Plot the mean Cq (Quantification Cycle) value for each standard against the logarithm of its known concentration. Perform linear regression analysis on the data.

- Parameter Calculation:

  - Linearity: Assessed by the coefficient of determination (R²). An R² value > 0.99 generally indicates excellent linearity [23].

  - Efficiency: Calculated from the slope of the standard curve using the formula: Efficiency = [10^(-1/slope) - 1] × 100%. An ideal efficiency of 100% corresponds to a slope of -3.32.

  - Dynamic Range: The range of concentrations between the lowest (LLOQ) and highest (ULOQ) standards that meet predefined criteria for linearity, accuracy, and precision.
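The curve-fitting and parameter calculations above can be illustrated with an ordinary least-squares fit. The sketch below uses only the Python standard library; the function name `fit_standard_curve` and the Cq values are hypothetical and chosen purely for illustration.

```python
import math


def fit_standard_curve(concentrations, mean_cqs):
    """Least-squares fit of mean Cq against log10(concentration).

    Returns (slope, intercept, r_squared, efficiency_percent).
    """
    xs = [math.log10(c) for c in concentrations]
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(mean_cqs) / n
    sxx = sum((x - x_bar) ** 2 for x in xs)
    syy = sum((y - y_bar) ** 2 for y in mean_cqs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, mean_cqs))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    r_squared = sxy ** 2 / (sxx * syy)
    # Efficiency = [10^(-1/slope) - 1] x 100%
    efficiency_percent = (10 ** (-1 / slope) - 1) * 100
    return slope, intercept, r_squared, efficiency_percent


# Hypothetical 10-fold dilution series (copies/reaction) and mean Cq values
standards = [1e6, 1e5, 1e4, 1e3, 1e2, 1e1]
cqs = [18.1, 21.4, 24.7, 28.1, 31.4, 34.7]

slope, intercept, r2, eff = fit_standard_curve(standards, cqs)
print(f"slope={slope:.3f}, R2={r2:.4f}, efficiency={eff:.1f}%")
```

In practice the fit would be restricted to the standards that fall inside the intended dynamic range, since points near the LOD tend to pull the slope away from the true value.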

Comparative Analysis of qPCR Performance Data

The following tables summarize experimental data from validated qPCR assays, illustrating the performance parameters in practice.

Table 1: Performance data from a validated qPCR assay for residual Vero cell DNA in rabies vaccines. [18]

| Parameter | Target: "172bp" Sequence | Target: Alu Repetitive Sequence |
|---|---|---|
| Linearity (R²) | Excellent | Not Specified |
| Dynamic Range | 0.3 fg/μL to 30 pg/μL | 3 fg/μL to 300 pg/μL |
| LOD | 0.003 pg/reaction | Not Specified |
| LOQ | 0.03 pg/reaction | Not Specified |
| Precision (RSD) | 12.4% - 18.3% | Not Specified |
| Specificity | No cross-reactivity with bacterial and other cell strains | No cross-reactivity with bacterial and other cell strains |

Table 2: Comparison of LOD and LOQ for different PCR-based platforms from independent studies. [25] [26]

| Application / Platform | Limit of Detection (LOD) | Limit of Quantification (LOQ) | Note |
|---|---|---|---|
| Cyclospora Detection (qPCR) [26] | As few as 5 oocysts in Romaine lettuce | Not Specified | Multi-laboratory validation; 69% detection rate at 5 oocysts |
| Nanoplate dPCR (QIAcuity One) [25] | 0.39 copies/μL input | 54 copies/reaction | Using synthetic oligonucleotides |
| Droplet dPCR (QX200) [25] | 0.17 copies/μL input | 85.2 copies/reaction | Using synthetic oligonucleotides |

The Scientist's Toolkit: Essential Reagents and Materials

A successful qPCR validation study relies on specific, high-quality reagents. The following table details key materials used in the featured Vero cell DNA assay [18].

Table 3: Key research reagent solutions for qPCR assay development and validation.

| Reagent / Material | Function in the Assay | Example from Validation Study |
|---|---|---|
| Cell Line & DNA Standard | Provides the source of target DNA and a calibrated standard for quantification. | Vero cell line from CAS cell bank; Vero DNA Standard from NIFDC [18]. |
| Primers & Probes | Specifically target and amplify a unique genomic sequence for detection. | Primers/Probes for the "172bp" tandem repeat and Alu repetitive sequences [18]. |
| Nucleic Acid Extraction Kit | Isolates and purifies residual DNA from the vaccine matrix. | DNA preparation kit (magnetic beads method) [18]. |
| qPCR Master Mix | Contains essential components for amplification (polymerase, dNTPs, buffer). | In-house detection reagents containing enzymes, buffers, probes, and primers [18]. |
| qPCR Instrument | Performs thermal cycling and real-time fluorescence detection. | SHENTEK-96S qPCR instrument [18]. |

The relationship between LOD, efficiency, linearity, and dynamic range is not merely sequential but deeply synergistic. As the experimental data shows, a well-validated assay like the one for residual Vero DNA demonstrates a wide dynamic range with excellent linearity and a precise LOD, all underpinned by a robust amplification process [18]. When verifying a qPCR assay, these parameters must be evaluated as an interconnected system. Compromising on one—such as poor efficiency—can undermine the reliability of the others, leading to a method that may detect but cannot be trusted to accurately quantify. A thorough understanding of these key performance parameters ensures the development of qPCR assays that generate reliable, reproducible, and defensible data for critical applications in drug development and molecular diagnostics.

Proven Methods for Determining LOD in Your qPCR Workflow

Quantitative Real-Time PCR (qPCR) stands as the most sensitive and specific technique for the detection of nucleic acids, playing a definitive role in gene quantification studies across basic sciences and clinical research [27] [5]. The accuracy of this quantification hinges on proper assay validation, where determining the Limit of Detection (LoD) and Limit of Quantification (LoQ) is critical for assessing assay performance, particularly in diagnostic applications and regulated environments like drug development [5]. The LoD is defined as the lowest amount of analyte in a sample that can be detected with a stated probability, while the LoQ is the lowest amount that can be quantitatively determined with acceptable precision and accuracy under stated experimental conditions [5].

The foundation for determining these parameters is a properly constructed standard curve using the dilution series method. This method enables researchers to validate primer efficiency, define the dynamic range of detection, and establish the sensitivity limits of their qPCR assay, ensuring that subsequent experimental results reflect biological reality rather than technical artifacts [28].

Theoretical Foundations of the qPCR Standard Curve

The Relationship Between Cq Values and Template Concentration

In qPCR, the amplification process follows an exponential function described by the equation \[ Q(n) = Q(0) \times E^n \] where \( Q(n) \) is the quantity of product at cycle \( n \), \( Q(0) \) is the initial template quantity, and \( E \) is the PCR efficiency expressed as the per-cycle amplification factor [27]. The quantification cycle (Cq) value is the estimated cycle number at which the amplification curve crosses a defined threshold, providing an indirect measure of the initial template quantity [27].

When a sample is diluted by a factor \( d \), the relationship becomes \[ Cq = -\frac{\log(d)}{\log(E)} + \frac{\log(T/Q(0))}{\log(E)} \] where \( T \) is the threshold quantity at which Cq is read. This equation indicates that a semi-log plot of Cq versus \( \log(d) \) has a slope of \( -1/\log(E) \), from which the amplification efficiency \( E \) can be derived [27]. This relationship forms the basis for standard curve analysis in qPCR.
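The step from the exponential model to the dilution equation can be written out explicitly. In the sketch below, \( d \) is taken to be the fraction of the original template remaining after dilution (for example, 0.1 for a tenfold dilution) and \( T \) the threshold quantity; both readings of the symbols are assumptions made for this derivation.

```latex
\begin{align*}
T &= d \, Q(0) \, E^{Cq}
  && \text{(threshold reached at cycle } Cq \text{)} \\
\log T &= \log d + \log Q(0) + Cq \, \log E \\
Cq &= -\frac{\log d}{\log E} + \frac{\log\bigl(T/Q(0)\bigr)}{\log E}
\end{align*}
% With base-10 logarithms and E = 2, the slope is -1/\log_{10} 2 \approx -3.32,
% so each tenfold dilution (\log_{10} d decreasing by 1) raises Cq by about 3.32 cycles.
```

The second term does not depend on \( d \), so it acts as the intercept of the standard curve, while the first term carries all of the information about efficiency.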

Understanding PCR Efficiency and Its Implications

Under ideal conditions, PCR amplification proceeds with 100% efficiency, doubling the number of DNA molecules every cycle (\( E = 2 \)). In practice, reactions rarely achieve perfection, and efficiencies between 90% and 110% are generally considered acceptable [28]. Efficiency values outside this range may indicate issues with primer design, reaction inhibition, or suboptimal reaction conditions that require troubleshooting [28].

The accuracy of efficiency estimation is crucial because small errors in efficiency estimation can lead to large miscalculations of initial template quantity. For example, a 0.05 error in E (when E is approximately 2) can result in a 53-110% misestimate after 30 cycles due to the exponential nature of PCR amplification [27].
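The figures quoted above can be reproduced with a short calculation. The sketch below assumes the misestimate refers to the relative error in the cumulative amplification factor E^n when E is off by 0.05; the function name `amplification_error` is illustrative.

```python
def amplification_error(true_e, assumed_e, cycles):
    """Relative error in the cumulative amplification factor E**cycles
    when `assumed_e` is used in place of `true_e`."""
    return (assumed_e / true_e) ** cycles - 1


# E misjudged by +/-0.05 around the ideal value of 2, over 30 cycles
over = amplification_error(2.0, 2.05, 30)
under = amplification_error(2.0, 1.95, 30)

print(f"E overestimated by 0.05:  {over:+.1%}")
print(f"E underestimated by 0.05: {under:+.1%}")
```

The asymmetry between the two results follows from the exponent: the same absolute error in E compounds multiplicatively over every cycle.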

Experimental Protocol: Establishing a Standard Curve for LOD Determination

Preparation of Dilution Series

The process begins with creating a serial dilution of a known DNA standard. This standard can be recombinant plasmid, genomic DNA, or synthesized oligonucleotide with precisely determined concentration [29]. The dilution series should:

- Cover a wide dynamic range, typically 5-6 orders of magnitude (e.g., 5- to 10-fold serial dilutions) [28] [30]

- Include at least five data points to properly define the linear relationship [28] [30]

- Be prepared using accurate pipetting techniques with well-calibrated equipment to minimize dilution errors [28]

For each dilution level, multiple replicates (typically triplicate) are run to assess technical variability and repeatability. Including a negative control (water instead of DNA) is essential to detect potential contamination in the reaction components [28].

qPCR Run Parameters

The qPCR reactions are performed using standardized cycling conditions appropriate for the primer set and detection chemistry. Key considerations include:

- Using consistent reaction volumes and master mix composition across all samples

- Ensuring proper positive and negative controls are included

- Using a sufficient number of cycles (typically 40-50) to detect the most diluted samples [31]

After the run, Cq values are determined for each reaction by setting the threshold in the region of exponential amplification across all amplification plots [5].

Data Analysis and Standard Curve Generation

The Cq values are plotted against the logarithm of the initial template concentration or dilution factor. The resulting data points are fitted to a straight line, which forms the standard curve [30]. Analysis of this curve provides several critical parameters:

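- PCR Efficiency: Calculated from the slope of the standard curve. The per-cycle amplification factor is \( E = 10^{-1/\text{slope}} \) and the percentage efficiency is \( (E - 1) \times 100\% \) [28], so a slope of -3.32 corresponds to \( E = 2 \), or 100% efficiency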

- Coefficient of Determination (R²): Should be > 0.99 to indicate a good linear relationship between Cq values and the logarithm of template concentration [28] [30]

- Standard Deviation of Replicates: Should be within 0.2 cycles for technical replicates to ensure reliable R² and efficiency values [28]
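The replicate criterion in the list above is easy to automate. The following sketch flags dilution levels whose technical replicates exceed the 0.2-cycle standard deviation; the function name `flag_noisy_replicates` and the Cq values are hypothetical.

```python
from statistics import stdev


def flag_noisy_replicates(cq_by_level, max_sd=0.2):
    """Return the dilution levels whose replicate Cq standard deviation
    (sample SD, n-1 denominator) exceeds `max_sd` cycles."""
    return [level for level, cqs in cq_by_level.items() if stdev(cqs) > max_sd]


# Hypothetical triplicate Cq values keyed by copies/reaction
replicates = {
    1e5: [21.40, 21.45, 21.38],
    1e3: [28.10, 28.05, 28.20],
    1e1: [34.2, 35.1, 34.6],
}

noisy = flag_noisy_replicates(replicates)
print("Levels exceeding the SD limit:", noisy)
```

Wider scatter at the lowest level is expected as template numbers approach the stochastic range, so a flagged level often marks the practical lower end of the linear range rather than a pipetting fault.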

The following workflow illustrates the complete process from sample preparation to data analysis:

Determining Limit of Detection and Limit of Quantification

Statistical Approaches for LOD and LOQ Determination

Determining LOD and LOQ in qPCR presents unique challenges because the measured Cq values are linearly related to the logarithm of the target concentration, so the response is logarithmic rather than linear in concentration. Conventional approaches that estimate these parameters by assuming a linear response and normally distributed values on a linear scale are therefore not appropriate [5].

A robust approach involves analyzing multiple replicates across a dilution series of known concentrations, including samples with very low template concentrations. The LOD can be determined as the lowest concentration where a pre-defined proportion of replicates (e.g., 95%) yield a positive amplification signal [5]. Statistical methods such as logistic regression modeling can be applied to estimate the probability of detection at different concentration levels, providing a mathematically rigorous determination of LOD [5].
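As a rough illustration of the logistic regression approach, the sketch below fits a two-parameter logistic model of detection probability against log10(concentration) using Newton-Raphson, then solves for the concentration with a 95% predicted detection rate. It relies only on the standard library; the data, the function names, and the choice of a plain logit link are assumptions, and dedicated statistical software would normally be used and would also report confidence intervals.

```python
import math


def fit_logistic(log_conc, positives, totals, iterations=50):
    """Fit P(detect) = 1 / (1 + exp(-(b0 + b1 * x))) to grouped hit data
    by Newton-Raphson, where x is log10(concentration)."""
    b0, b1 = 0.0, 0.0
    for _ in range(iterations):
        g0 = g1 = h00 = h01 = h11 = 0.0
        for x, k, n in zip(log_conc, positives, totals):
            p = 1.0 / (1.0 + math.exp(-(b0 + b1 * x)))
            w = n * p * (1.0 - p)          # binomial variance weight
            g0 += k - n * p                # score for the intercept
            g1 += (k - n * p) * x          # score for the slope
            h00 += w
            h01 += w * x
            h11 += w * x * x
        det = h00 * h11 - h01 * h01
        # Newton step: solve the 2x2 system H * delta = g
        d0 = (h11 * g0 - h01 * g1) / det
        d1 = (h00 * g1 - h01 * g0) / det
        b0 += d0
        b1 += d1
        if abs(d0) + abs(d1) < 1e-10:
            break
    return b0, b1


def concentration_at(prob, b0, b1):
    """Concentration at which the fitted detection probability equals `prob`."""
    return 10 ** ((math.log(prob / (1.0 - prob)) - b0) / b1)


# Hypothetical data: copies/reaction, positives out of 24 replicates each
copies = [1, 2, 4, 8, 16, 32]
hits = [9, 15, 21, 23, 24, 24]
reps = [24] * len(copies)

b0, b1 = fit_logistic([math.log10(c) for c in copies], hits, reps)
lod95 = concentration_at(0.95, b0, b1)
print(f"Estimated LOD (95% detection): {lod95:.1f} copies/reaction")
```

Unlike the simple hit-rate rule, the fitted curve interpolates between tested concentrations, so the estimate does not have to coincide with one of the dilution levels.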

The LOQ is established as the lowest concentration where quantification meets stated acceptable precision and accuracy requirements, typically assessed through coefficient of variation (CV) calculations. One study established an LOQ of 54 copies/reaction for a nanoplate-based digital PCR system and 85.2 copies/reaction for a droplet-based system using a 3rd degree polynomial model fit [25].

Experimental Design for LOD/LOQ Determination

A comprehensive approach to LOD/LOQ determination involves:

- Testing a wide range of template concentrations with high replication, particularly at the lower end

- Including a sufficient number of negative controls to establish the false positive rate

- Analyzing results using appropriate statistical models that account for the logarithmic nature of qPCR data

- Considering the impact of sample matrix on assay performance by spiking standards into negative sample matrix [32] [5]

One study used a 2-fold dilution series covering 1 to 2048 molecules per reaction with 64-128 replicates per concentration to thoroughly characterize assay performance [5].

Comparative Performance Data: qPCR vs. Digital PCR

Sensitivity and Precision Comparison

Recent comparative studies between qPCR and digital PCR (dPCR) provide valuable insights into their relative performance for quantification applications. The table below summarizes key performance metrics from published studies:

Table 1: Comparison of qPCR and dPCR Performance Characteristics

| Parameter | qPCR | Digital PCR | Experimental Context |
|---|---|---|---|
| Dynamic Range | 8 logs [32] | 6 logs [32] | Using gBlocks for CAR-T manufacturing validation |
| LoD | 32 copies for RCR [32] | 10 copies for RCR [32] | Replication-competent retrovirus detection |
| Data Variation | Up to 20% difference in copy number ratio [32] | Lower variation [32] | Sample comparisons in CAR-T manufacturing |
| Correlation of Linked Genes | R² = 0.78 [32] | R² = 0.99 [32] | Genes linked in one construct |
| Precision (CV) | Not reported | 6-13% for ddPCR, 7-11% for ndPCR [25] | Synthetic oligonucleotide quantification |

Practical Implications for Method Selection

The choice between qPCR and dPCR depends on specific application requirements. dPCR offers advantages for applications requiring high precision and minimal variation, such as CAR-T manufacturing validations where it provides "a less variable and significantly more compact array of regulatory tests" [32]. However, qPCR maintains a wider dynamic range and may be more suitable for applications where target concentrations vary extensively [32].

For environmental monitoring and microbial quantification, dPCR has demonstrated superior resistance to inhibition caused by humic acids and better sensitivity for low-abundance targets [25]. The precision of dPCR makes it particularly valuable for detecting small fold-changes in target concentration and for quantifying targets near the limit of detection.

Advanced Calibration Strategies and Experimental Design

Alternative Calibration Models

Traditional qPCR quantification requires including a standard curve in each instrument run, which consumes significant resources. Advanced calibration strategies offer alternatives:

- Single Model: Traditional approach with a standard curve in each run [29]

- Master Model: A single calibration curve derived from multiple instrument runs [29]

- Pooled Model: Combines data from multiple runs to reduce uncertainty in both slope and intercept parameters [29]

- Mixed Model: Achieves uncertainty estimates similar to the single model while increasing available reaction wells per run [29]

Research indicates that the pooled model can reduce uncertainty in both slope and intercept parameter estimates compared to the traditional single curve approach, potentially improving quantification accuracy while optimizing resource utilization [29].

Efficient Experimental Designs

Alternative experimental designs can streamline qPCR experimentation while maintaining data quality. One proposed approach uses "dilution-replicates instead of identical replicates," where a single reaction is performed on several dilutions for every test sample rather than multiple identical replicates [27]. This design:

- Enables estimation of PCR efficiency for each sample directly

- Eliminates the need for separate efficiency determination experiments

- Provides more flexibility in handling outliers compared to traditional replicate designs [27]

This approach can be particularly valuable for large-scale gene expression studies where reducing operational costs and technical errors is significant [27].

Essential Research Reagent Solutions

Successful implementation of the dilution series method for LOD determination requires careful selection of research reagents and tools. The following table outlines key solutions and their functions:

Table 2: Essential Research Reagent Solutions for qPCR Standard Curve Analysis

| Reagent/Tool | Function | Application Notes |
|---|---|---|
| DNA Standards (gBlocks, recombinant plasmid, genomic DNA) | Calibration reference for standard curve | Must be accurately quantified; choice affects uncertainty [32] [29] |
| Probe-based qPCR Master Mix | Provides enzymes, dNTPs, buffer for amplification | Optimized mixes reduce batch-to-batch variation [31] |
| Primer/Probe Sets | Target-specific amplification | Must be validated for efficiency; typically used at 200-500 nM [31] |
| DNA Purification Kits | Nucleic acid extraction from samples | Efficiency impacts final quantification; QIAamp DNA kits commonly used [31] |
| Restriction Enzymes (e.g., HaeIII, EcoRI) | Enhance access to target sequences | Choice affects precision, especially for high copy number targets [25] |
| Statistical Software (e.g., GenEx) | Data analysis for LOD/LOQ determination | Enables logistic regression modeling for detection probability [5] |

The dilution series method for setting up a standard curve provides the foundation for reliable LOD determination in qPCR assays. The experimental data and comparative analyses presented enable researchers to make evidence-based decisions about their quantification strategies. Key considerations for implementation include:

- Investing appropriate resources in initial standard curve validation to prevent unreliable results in subsequent experiments

- Selecting the appropriate quantification technology (qPCR vs. dPCR) based on the required dynamic range, precision, and sensitivity for the specific application

- Adopting advanced calibration models and experimental designs to optimize resource utilization while maintaining data quality

- Using appropriate statistical approaches for LOD/LOQ determination that account for the logarithmic nature of qPCR data

As molecular technologies continue to evolve, the precise determination of detection and quantification limits remains fundamental to generating reliable, reproducible data in both research and clinical applications. The methodologies and comparisons outlined provide a roadmap for researchers to validate their qPCR assays with confidence, ensuring that results truly reflect biological reality rather than technical artifacts.

Quantitative polymerase chain reaction (qPCR) is a cornerstone technique in molecular biology, clinical diagnostics, and drug development for its ability to precisely quantify nucleic acids. A critical challenge in assay development lies in accurately determining the lower limits of detection and quantification, where traditional calibration curves become less reliable. At very low template concentrations (<10 initial target molecules), the random distribution of DNA molecules follows Poisson statistics, which must be accounted for to validate an assay's true quantitative capabilities [33] [34]. Boundary Limit Analysis using Poisson distribution provides a mathematical framework to confirm whether a qPCR assay can reliably detect and quantify a single DNA molecule and distinguish between integer copy numbers at these low concentrations [34]. This guide compares Poisson analysis with other emerging validation methodologies, providing researchers with experimental data and protocols for rigorous assay verification.

Methodologies for qPCR Boundary Limit Analysis

Poisson Distribution-Based Analysis

Theoretical Basis: Poisson distribution describes the probability of observing k events in a fixed interval when events occur with a known constant mean rate and independently of the time since the last event. In qPCR, it models the distribution of target DNA molecules across replicate reactions at low concentrations [34]. When the average number of target molecules per reaction (λ) is low, the probability of any reaction containing exactly k copies is given by: P(k) = (λ^k * e^-λ) / k!

The proportion of negative reactions (those with zero target molecules, k=0) is P(0) = e^-λ. This relationship allows for the estimation of the actual target concentration in the sample based on the observed fraction of negative reactions [35].
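A brief numerical sketch makes these relationships concrete. It evaluates the Poisson probabilities and inverts P(0) to find the mean copy number needed for a given detection rate, under the idealized assumption that every reaction containing at least one target molecule amplifies. The function names are illustrative.

```python
import math


def poisson_pmf(k, lam):
    """Probability that a reaction contains exactly k target copies."""
    return lam ** k * math.exp(-lam) / math.factorial(k)


def detection_probability(lam):
    """Probability that a reaction contains at least one copy: 1 - P(0)."""
    return 1.0 - math.exp(-lam)


def mean_copies_for(prob):
    """Mean copies per reaction needed so that a fraction `prob` of
    reactions contain at least one copy."""
    return -math.log(1.0 - prob)


for lam in (1, 2, 3, 5):
    print(f"lambda={lam}: P(0)={poisson_pmf(0, lam):.3f}, "
          f"P(detect)={detection_probability(lam):.3f}")

print(f"Mean copies for 95% detection: {mean_copies_for(0.95):.2f}")
```

The last line shows why a 95% LOD cannot fall below roughly three copies per reaction, even for a perfectly efficient assay: at lower means, sampling alone leaves more than 5% of reactions without any template.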

Experimental Protocol for Poisson Analysis:

- Sample Preparation: Prepare a dilution series of the target DNA to yield average concentrations of 1-10 copies per reaction based on spectrophotometric measurements [34].

- Replicate Reactions: Perform a minimum of 60-80 replicate qPCR reactions at each dilution level to achieve sufficient statistical power [33].

- qPCR Amplification: Run all replicates under identical cycling conditions optimized for the specific assay.

- Data Analysis:

  - Record the number of positive and negative reactions for each dilution.

  - Calculate the observed fraction of negative reactions (Pobs).

  - Estimate the actual mean concentration per reaction using λest = -ln(Pobs).

  - Compare λest with the expected concentration based on dilution to assess quantification accuracy [34].
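The data-analysis steps above reduce to a one-line estimator applied at each dilution level. The sketch below uses hypothetical counts from 80 replicates per level; the function name `estimate_lambda` and the handling of the edge cases are assumptions.

```python
import math


def estimate_lambda(negatives, total):
    """Estimate mean copies per reaction from the fraction of negative
    reactions: lambda_est = -ln(P_obs)."""
    if negatives == 0:
        # With no negatives, -ln(0) is undefined; more dilution is needed.
        raise ValueError("no negative reactions: lambda cannot be estimated")
    if negatives == total:
        return 0.0
    return -math.log(negatives / total)


# Hypothetical results: expected copies/reaction -> (negatives, replicates)
observed = {1: (30, 80), 2: (11, 80), 4: (2, 80)}

for expected, (neg, total) in observed.items():
    lam = estimate_lambda(neg, total)
    print(f"expected={expected}, lambda_est={lam:.2f}, ratio={lam / expected:.2f}")
```

The estimate becomes unstable once only a handful of reactions are negative, which is one reason the protocol calls for a large number of replicates at each level.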

Interpretation: A well-validated assay should demonstrate a linear relationship between the expected and observed concentrations across the 1-10 copy range. Significant deviations may indicate issues with amplification efficiency, inhibition, or pipetting inaccuracy [35].

PCR-Stop Analysis

Theoretical Basis: PCR-Stop analysis is a recently developed validation tool that investigates qPCR assay performance during initial amplification cycles in the range >10 initial target molecule numbers (ITMN) [33]. It tests whether DNA duplication follows theoretical doubling during early cycles and whether the assay maintains consistent efficiency from the first cycle.

Experimental Protocol for PCR-Stop Analysis [33]:

- Experimental Setup: Prepare six batches, each containing eight identical replicate reactions with the same target DNA quantity (>10 ITMN).

- Pre-Run Cycles: Subject batches to ascending numbers of pre-run amplification cycles (0 to 5 cycles):

  - Batch 1: Directly placed into cooler (0 cycles)

  - Batch 2: 1 pre-run cycle then cooled

  - Batch 3: 2 pre-run cycles then cooled

  - Up to Batch 6: 5 pre-run cycles then cooled

- Main qPCR Run: Transfer all batches to a real-time PCR thermocycler for a complete run with the full number of cycles.

- Data Analysis Criteria:

  - Efficiency Consistency: Calculate whether DNA duplicates according to pre-runs (ideal: 100% efficiency).

  - Replicate Variation: Determine relative standard deviation (RSD) between the eight samples of each batch.

  - Quantitative Resolution: Assess steady increase of quantification cycle (Cq) values across batches.

  - Qualitative Limit: Note any negative samples indicating detection failure.
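Two of these criteria lend themselves to a simple calculation. The sketch below is one plausible way to compute them and is not taken from the cited method: it assumes each replicate has already been converted to an estimated target quantity, computes the RSD within a batch, and derives a per-cycle efficiency from the slope of log2(mean quantity) against the number of pre-run cycles. All names and values are hypothetical.

```python
import math
from statistics import mean, stdev


def batch_rsd(quantities):
    """Relative standard deviation (%) of replicate quantities in one batch."""
    return stdev(quantities) / mean(quantities) * 100


def pre_run_efficiency(mean_quantities):
    """Per-cycle efficiency (%) from batch means ordered by pre-run cycles
    (0, 1, 2, ...). A slope of 1 in log2 space means perfect doubling."""
    xs = list(range(len(mean_quantities)))
    ys = [math.log2(q) for q in mean_quantities]
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return (2 ** slope - 1) * 100


# Hypothetical estimated quantities for the eight replicates of one batch
batch = [18, 22, 20, 19, 21, 20, 23, 17]
rsd = batch_rsd(batch)

# Hypothetical batch means after 0 to 5 pre-run cycles (perfect doubling)
ideal_means = [20, 40, 80, 160, 320, 640]
eff = pre_run_efficiency(ideal_means)

print(f"Batch RSD: {rsd:.1f}%")
print(f"Pre-run efficiency: {eff:.1f}%")
```

Comparing this early-cycle efficiency with the value obtained from a calibration curve is what exposes assays whose first cycles behave differently from the rest of the run.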

Table 1: Performance Comparison of Two Assays Using PCR-Stop Analysis

| Criterion | Well-Performing prfA Assay | Suboptimal exB Assay (10 ITMN) |
|---|---|---|
| Efficiency from PCR-Stop | 93.7% | 109.6% |
| Efficiency from Calibration Curve | 94.6% | 100.6% |
| Average RSD Across Batches | ~20% | Approaching 300% |
| Linearity (R²) | High (similar to calibration) | 0.6981 (low) |

Digital PCR (dPCR) for Validation

Theoretical Basis: Digital PCR provides absolute quantification by partitioning a sample into thousands of nanoliter-scale reactions, each containing zero, one, or a few target molecules [36] [35]. After PCR amplification, the fraction of positive partitions is counted, and the initial concentration is calculated using Poisson statistics.

Experimental Protocol for dPCR Validation [35]:

- Sample Partitioning: Divide the reaction mix into thousands of partitions using microfluidics (droplets or chips).

- PCR Amplification: Amplify target sequences in partitions through standard thermal cycling.

- Fluorescence Reading: Measure endpoint fluorescence for each partition.

- Threshold Determination: Apply fluorescence threshold to classify partitions as positive or negative.

- Concentration Calculation: Estimate target concentration using Poisson correction: λ = -ln(1 - p), where p is the proportion of positive partitions.
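The concentration calculation in the final step can be sketched as follows. The partition count and partition volume below are hypothetical placeholders, since real values depend on the platform; the function names are likewise illustrative.

```python
import math


def copies_per_partition(positive, total):
    """Poisson-corrected mean copies per partition: lambda = -ln(1 - p)."""
    p = positive / total
    if p >= 1.0:
        # Every partition positive: the sample is too concentrated to quantify.
        raise ValueError("all partitions positive: dilute the sample")
    return -math.log(1.0 - p)


def concentration_copies_per_ul(positive, total, partition_volume_ul):
    """Target concentration in the reaction mix, in copies per microliter."""
    return copies_per_partition(positive, total) / partition_volume_ul


# Hypothetical run: 4,000 positive partitions out of 20,000,
# with an assumed partition volume of 0.85 nL (0.00085 uL)
lam = copies_per_partition(4000, 20000)
conc = concentration_copies_per_ul(4000, 20000, 0.00085)

print(f"lambda = {lam:.4f} copies/partition")
print(f"concentration = {conc:.1f} copies/uL")
```

The correction matters more as the positive fraction rises: at 20% positives the raw fraction underestimates λ by about 10%, because some positive partitions hold more than one copy.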

Advantages and Limitations: dPCR provides high precision without standard curves and is less affected by inhibitors but requires specialized equipment and has a more complex workflow [36]. One study comparing RT-qPCR and RT-droplet digital PCR (ddPCR) for relative quantification of alternatively spliced isoforms found both techniques had similar dynamic range, linearity, limit of blank (LOB), limit of detection (LOD), and limit of quantification (LOQ) at biologically relevant template concentrations [36].

Comparative Performance Data

Method Capabilities and Applications

Table 2: Comparison of qPCR Validation Methods

| Method | Effective Range | Key Parameters | Strengths | Limitations |
|---|---|---|---|---|
| Poisson Analysis | <10 initial target copies [33] [34] | Quantitative resolution, qualitative detection limit | Validates single-molecule detection, confirms integer discrimination | Limited to low copy numbers, requires many replicates |
| PCR-Stop Analysis | >10 initial target copies [33] | Early-cycle efficiency, quantitative resolution, replicate consistency | Tests efficiency from first cycle, reveals enzyme activation issues | Does not cover very low copy number range |
| Digital PCR | Broad range (theoretically 1-10^5 copies) [35] | Absolute quantification, precision, partition efficiency | No standard curve needed, resistant to inhibitors | Specialized equipment, complex workflow, partition variation [35] |
| Calibration Curve (Traditional) | Typically >100 copies | Efficiency (E), coefficient of determination (R²) | Simple, familiar, wide dynamic range | Limited information on low-copy performance |

Experimental Data from Literature

Table 3: Experimental Performance Data from Validation Studies

| Assay / Method | Target | Reported Sensitivity | Precision (RSD) | Reference |
|---|---|---|---|---|
| qPCR for Vero DNA | "172bp" repetitive sequence | LOD: 0.003 pg/reaction; LOQ: 0.03 pg/reaction | 12.4-18.3% | [18] |
| TaqMan qPCR for DEC | Diarrheagenic E. coli virulence genes | Most: 1.60×10¹ copies/μL (stx2: 1.60×10² copies/μL) | Within-group: 0.12-0.88%; Between-group: 0.67-1.62% | [37] |
| Modified qPCR (Mit1C) | Cyclospora cayetanensis in produce | 5 oocysts (69.23% detection rate) | Between-lab variance: ~0 (high reproducibility) | [26] |
| RT-dPCR vs RT-qPCR | BRCA1 isoforms | Same LOB, LOD, LOQ for both methods | Similar precision and reproducibility | [36] |

Experimental Workflows and Signaling Pathways

Poisson Analysis Experimental Workflow

Method Application Ranges and Relationships

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Key Research Reagent Solutions for qPCR Boundary Limit Analysis

| Reagent/Material | Function | Application Notes |
|---|---|---|
| Hot Start Polymerases | DNA amplification with reduced non-specific priming | PCR-Stop analysis helps compare activation of chemically modified vs antibody-complexed enzymes [33] |
| Hydrolysis Probes (TaqMan) | Sequence-specific detection with high specificity | Fluorophore (FAM) and quencher (BHQ1) provide low background; essential for precise Cq determination [37] |
| DNA Extraction Kits (Magnetic Beads) | Isolation of high-purity DNA from samples | Critical for removing inhibitors that affect low-copy amplification efficiency [18] |
| Digital PCR Partitioning Reagents | Formation of nanoliter-scale reaction chambers | Water-in-oil droplets or chip-based partitions for absolute quantification [35] |
| Standard Reference Materials | Calibration and quality control | Certified DNA standards essential for method validation and cross-platform comparison [18] |
| Inhibition-Resistant Master Mixes | Enhanced amplification efficiency | Particularly important for complex matrices in food, clinical, or environmental samples [26] |

Advanced boundary limit analysis utilizing Poisson distribution provides essential validation for qPCR assays at low copy numbers, complementing traditional calibration curves. Each method—Poisson analysis, PCR-Stop, and digital PCR—offers unique strengths for different concentration ranges and application needs. Poisson distribution remains fundamental for establishing true single-molecule detection capabilities, while PCR-Stop analysis reveals crucial information about early-cycle amplification efficiency. Digital PCR emerges as a powerful alternative that inherently incorporates Poisson statistics for absolute quantification. For comprehensive assay validation, researchers should consider implementing multiple approaches to fully characterize assay performance across the entire dynamic range, ensuring reliable results in diagnostic, pharmaceutical, and research applications.

Quantitative PCR (qPCR) stands as one of the most pivotal molecular techniques in modern laboratories, with applications spanning diagnostics, gene expression analysis, and pathogen detection. Despite its widespread adoption, a significant challenge persists in accurately validating assay performance, particularly during the crucial initial amplification cycles where detection issues and inefficiencies often originate. Traditional validation methods like calibration curves provide limited insight into these early amplification events, creating a critical gap in comprehensive qPCR assessment. PCR-Stop analysis emerges as a novel methodological solution specifically designed to investigate amplification efficiency during the first few cycles of qPCR, providing researchers with an essential tool for thorough assay validation and preventing potential misinterpretations of quantitative data [33].

This technique operates at >10 initial target molecule numbers (ITMN) and therefore complements Poisson analysis, which covers <10 ITMN; together the two methods validate an assay across different concentration ranges [33]. By revealing whether a qPCR assay starts immediately with its average efficiency, PCR-Stop analysis provides crucial information about enzyme activation and overall reaction robustness, making it particularly valuable for applications requiring precise quantification, such as relative gene expression analysis using the comparative Ct method (2^(−ΔΔCt)) [33].

Principles and Methodology of PCR-Stop Analysis

Conceptual Framework

The fundamental principle underlying PCR-Stop analysis is the systematic investigation of amplification behavior during the initial qPCR cycles, which are typically inaccessible to standard fluorescence monitoring. The method tests whether DNA duplication follows theoretical doubling across the pre-run cycles, whether variation exists between replicates, and whether qPCR starts with its average efficiency from the first cycle [33]. This validation approach thereby reveals both the quantitative and the qualitative resolution of an assay, along with its specific limitations [33].

In ideal conditions with 100% efficiency, each pre-run cycle would result in exact duplication of the initial template, with no deviation among replicates. However, in practice, deviations occur, and the overall average efficiency typically falls below 100%. PCR-Stop analysis quantifies these deviations, providing researchers with actual precision data of the amplification reaction independent of statistical analysis from calibration curves [33].

Experimental Workflow

The practical execution of PCR-Stop analysis involves a structured approach utilizing standard qPCR laboratory equipment and reagents. The process consists of several methodical steps:

Figure 1: PCR-Stop Analysis Experimental Workflow

The procedure begins with preparation of six batches, each containing eight identical samples with target DNA quantities exceeding the Poisson distribution range (>10 ITMN) [33]. These batches undergo differential pre-amplification treatment: the first batch receives no pre-run cycles and is immediately placed in the cooler, while subsequent batches undergo progressively increasing pre-run cycles (1-5 cycles) of the PCR assay being tested before cooling. Finally, all batches undergo a complete qPCR run simultaneously, allowing direct comparison of amplification patterns across different pre-amplification stages [33].
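As a rough illustration of what this batch design is expected to produce, the sketch below computes the theoretical template quantity in each of the six batches, assuming a constant per-cycle efficiency. The starting quantity of 20 ITMN and the function name `expected_copies` are hypothetical and are not taken from the cited study.

```python
def expected_copies(initial_copies: float, pre_run_cycles: int, efficiency: float = 1.0) -> float:
    """Theoretical copy number after n pre-run cycles: N0 * (1 + E) ** n."""
    if pre_run_cycles < 0:
        raise ValueError("pre_run_cycles must be non-negative")
    return initial_copies * (1.0 + efficiency) ** pre_run_cycles


# Six batches with 0 to 5 pre-run cycles, starting from an assumed 20 ITMN.
ideal = {n: expected_copies(20, n) for n in range(6)}
realistic = {n: expected_copies(20, n, efficiency=0.9) for n in range(6)}

for n in range(6):
    print(f"batch {n + 1}: {n} pre-run cycles, "
          f"ideal {ideal[n]:.0f} copies, at 90% efficiency {realistic[n]:.1f} copies")
```

With perfect doubling, the batch that received five pre-run cycles should contain 32 times the template of the untreated batch. Measured quantities from the final qPCR run can then be compared against this theoretical series.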

Key Performance Criteria

Analysis of PCR-Stop experiments focuses on four critical criteria that collectively define assay performance [33]:

DNA Duplication Accuracy: Measures how closely actual amplification during pre-runs matches theoretical doubling, reflecting consistent efficiency during initial cycles.

Inter-Replicate Variation: Quantified through relative standard deviation (RSD) among the eight samples in each batch, indicating assay consistency and reliability.

Quantitative Resolution: Assessed through steady increase of values across batches and regularity within batches, demonstrating the assay's ability to distinguish different template concentrations.

Qualitative Detection Limits: Determined by presence of negative samples, revealing the boundary where detection fails despite sufficient template concentration.

For a well-performing assay, efficiency calculated from PCR-Stop analysis should closely correlate with efficiency derived from calibration curves. Significant discrepancies indicate underlying issues with amplification consistency during initial cycles that traditional validation methods would miss [33].
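Two of these criteria, inter-replicate variation and duplication accuracy, lend themselves to a short calculation. The sketch below is one plausible way to compute them and is not necessarily the procedure used in the cited study: it takes the RSD of the replicates in a batch, and it estimates an average early-cycle efficiency from the slope of log2(mean quantity) against the number of pre-run cycles. All function names and input values are illustrative assumptions.

```python
import math
import statistics


def rsd_percent(values):
    """Relative standard deviation (%) of the replicate quantities in one batch."""
    mean = statistics.mean(values)
    if mean == 0:
        raise ValueError("mean is zero; RSD is undefined")
    return 100.0 * statistics.stdev(values) / mean


def efficiency_from_batches(batch_means):
    """Estimate per-cycle efficiency E from {pre_run_cycles: mean quantity}.

    Fits log2(quantity) = a + n * log2(1 + E) by least squares, so
    E = 2 ** slope - 1. Batch means must be positive.
    """
    cycles = sorted(batch_means)
    logs = [math.log2(batch_means[n]) for n in cycles]
    x_bar = statistics.mean(cycles)
    y_bar = statistics.mean(logs)
    slope = (sum((x - x_bar) * (y - y_bar) for x, y in zip(cycles, logs))
             / sum((x - x_bar) ** 2 for x in cycles))
    return 2.0 ** slope - 1.0


# Made-up batch means that follow 90% efficiency exactly.
means = {n: 20 * 1.9 ** n for n in range(6)}
print(f"estimated efficiency: {efficiency_from_batches(means):.1%}")
print(f"RSD of one hypothetical batch: {rsd_percent([18, 22, 19, 21, 20, 23, 17, 20]):.1f}%")
```

An efficiency estimated this way can be set beside the calibration-curve efficiency. Batches that contain negative samples need separate handling, because a quantity of zero has no logarithm.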

Comparative Experimental Data

Performance Assessment Across Assay Systems

Implementation of PCR-Stop analysis across different qPCR assays reveals substantial variation in initial amplification efficiency that traditional validation methods fail to detect:

Table 1: PCR-Stop Analysis Performance Comparison Between Different qPCR Assays

| Assay Name | Target | Efficiency from Calibration Curve | Efficiency from PCR-Stop (10 ITMN) | Efficiency from PCR-Stop (100 ITMN) | RSD (%) | Quantitative Resolution |
|---|---|---|---|---|---|---|
| prfA | Listeria monocytogenes | 94.6% | 93.7% | N/A | ~20% | Suitable |
| exB | Salmonella enterica | 100.6% | 109.6% | 93% | ~300% | Limited |
| Ideal Assay | N/A | 100% | 100% | 100% | 0% | Perfect |

The data, adapted from the PCR-Stop study [33], show that the well-validated prfA assay yields consistent efficiencies from the calibration curve (94.6%) and from PCR-Stop analysis (93.7%), with an acceptable RSD of approximately 20% across all batches. In contrast, the exB assay showed marked discrepancies: PCR-Stop analysis gave 109.6% efficiency at 10 ITMN and 93% at 100 ITMN, despite a seemingly optimal 100.6% efficiency from the traditional calibration curve [33]. The exB assay also exhibited a much higher RSD, approaching 300%, indicating poor consistency between replicates [33].

Comparison with Alternative Validation Approaches

PCR-Stop analysis addresses specific limitations inherent in other qPCR validation methods:

Table 2: Comparison of qPCR Validation Methods

| Validation Method | Quantitative Range | Qualitative Resolution | Efficiency Assessment | Practical Complexity |
|---|---|---|---|---|
| PCR-Stop Analysis | >10 ITMN | Yes | Direct measurement | Moderate |
| Poisson Analysis | <10 ITMN | Yes | Limited | High |
| Calibration Curves | Broad dynamic range | No | Statistical estimation | Low |
| Digital PCR | Entire range | Yes | Direct measurement | High |

While Poisson analysis effectively validates quantitative and qualitative resolution in the boundary limit area (<10 ITMN), it provides limited information about actual amplification efficiency [33]. Calibration curves, though useful for determining overall efficiency and linearity across a broad range, reflect only a small statistical sample and offer limited insight into main performance parameters [33]. PCR-Stop analysis uniquely fills the gap for ranges >10 ITMN while providing direct assessment of efficiency during critical initial cycles.

Advantages and Applications in qPCR Assay Validation

Enhanced Sensitivity for Detection Issues

PCR-Stop analysis is well suited to identifying subtle amplification problems that compromise data reliability. Research has shown that simply replacing the polymerase in an established assay can dramatically affect performance, with some substitutions leading to complete amplification failure even though the internal amplification control functioned properly [38]. In extreme cases, polymerase substitution reduced analytical sensitivity by more than 10⁶-fold, emphasizing the critical importance of thorough validation when modifying established protocols [38].

This technical insight is particularly valuable for diagnostic applications where false negatives carry significant consequences. For instance, in validation of H5 influenza virus subtyping RT-qPCR assays, establishing a detection limit of 230 copies/mL with no cross-reactivity with seasonal influenza strains was essential for clinical implementation [39]. Similarly, in multi-laboratory validation of Cyclospora cayetanensis detection methods, the ability to detect as few as five oocysts in Romaine lettuce demonstrated the method's sensitivity for food safety testing [26].

Optimization of Reference Gene Selection

In gene expression studies using relative quantification methods, proper normalization using stable reference genes is paramount for obtaining reliable results. PCR-Stop analysis provides crucial technical validation for reference gene performance across different tissue types and experimental conditions [40].

Research on sweet potato tissues demonstrated significant variation in reference gene stability, with IbACT, IbARF and IbCYC showing the most stable expression across fibrous roots, tuberous roots, stems, and leaves, while IbGAP, IbRPL and IbCOX displayed the highest variability [40]. Such tissue-specific variation in reference gene stability underscores the importance of empirical validation rather than relying on conventional reference genes without proper verification.

Impact on Data Interpretation

The efficiency measurements obtained through PCR-Stop analysis directly affect the accuracy of quantitative interpretations in qPCR experiments. Efficiency values between 85% and 110% are generally considered acceptable; values outside this range point to potential problems with reaction optimization, sample quality, or the presence of inhibitors [41].
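For comparison with PCR-Stop results, calibration-curve efficiency is conventionally derived from the slope of Cq plotted against log10 of the template quantity, using E = 10^(-1/slope) - 1. The sketch below applies that standard relation and checks the result against the 85% to 110% window mentioned above. The function names and example slopes are illustrative assumptions.

```python
import math


def efficiency_from_slope(slope: float) -> float:
    """Amplification efficiency from a standard-curve slope (Cq vs log10 quantity)."""
    if slope >= 0:
        raise ValueError("a standard-curve slope must be negative")
    return 10.0 ** (-1.0 / slope) - 1.0


def is_acceptable(efficiency: float, low: float = 0.85, high: float = 1.10) -> bool:
    """True if the efficiency lies within the commonly cited 85% to 110% window."""
    return low <= efficiency <= high


# A slope of about -3.32 corresponds to perfect doubling (100% efficiency).
for slope in (-1.0 / math.log10(2), -3.6, -4.0):
    eff = efficiency_from_slope(slope)
    print(f"slope {slope:.3f}: efficiency {eff:.1%}, acceptable: {is_acceptable(eff)}")
```

Shallower slopes than about -3.32 give efficiencies above 100%, which can be a sign of inhibition in the more concentrated standards or of pipetting error in the dilution series.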