Demonstrating Equivalence in Microbiological Method Validation: A Strategic Guide for Pharmaceutical Researchers

Abstract

This article provides a comprehensive framework for researchers and drug development professionals to successfully demonstrate equivalence during the validation of alternative and rapid microbiological methods. Aligned with global regulatory guidelines like USP <1223>, Ph. Eur. 5.1.6, and the ISO 16140 series, the content covers foundational principles, methodological applications for sterility testing and environmental monitoring, troubleshooting for complex matrices, and advanced comparative statistical techniques. It addresses current challenges, including the ongoing revision of Ph. Eur. chapter 5.1.6 and the integration of novel technologies like AI and growth-based rapid methods, offering a practical path to regulatory compliance and enhanced product safety.

The Regulatory and Conceptual Bedrock of Method Equivalence

In the pharmaceutical industry, ensuring the safety and quality of products through microbiological testing is paramount. For decades, this relied on traditional, culture-based methods. The emergence of Rapid Microbiological Methods (RMMs) offers significant advantages in speed, sensitivity, and automation [1]. However, adopting these new technologies requires a rigorous demonstration that their performance is equivalent or superior to the compendial methods they are intended to replace. This foundational principle of equivalence forms the core of two key regulatory guidances: the United States Pharmacopeia (USP) General Chapter <1223> and the European Pharmacopoeia (Ph. Eur.) General Chapter 5.1.6.

This guide provides a structured comparison of these two chapters, which serve as critical roadmaps for researchers and drug development professionals validating alternative microbiological methods. The validation process is essential to guarantee that these methods are fit for their intended purpose and provide reliable and accurate results, thereby ensuring patient safety and product quality [1]. As the field continues to evolve, with the Ph. Eur. chapter currently under significant revision, understanding the nuances and convergences between these documents is more important than ever [2].

Regulatory Framework and Scope Comparison

USP General Chapter <1223>

USP <1223>, titled "Validation of Alternative Microbiological Methods," provides a comprehensive framework for the validation of alternative methods within the pharmaceutical industry [1] [3]. Its scope is extensive, covering alternative methods used for microbial enumeration, identification, detection, antimicrobial effectiveness testing, and sterility testing [1]. It encompasses a wide range of technologies, including RMMs, automated methods, and molecular methods such as polymerase chain reaction (PCR) and other nucleic acid amplification techniques [1]. The chapter expects any alternative method to be shown suitable for its intended use and non-inferior to the compendial method [1].

Ph. Eur. General Chapter 5.1.6

The Ph. Eur. General Chapter 5.1.6, titled "Alternative methods for control of microbiological quality," parallels USP <1223> in its mission to facilitate the implementation of RMMs [2]. A revised draft of this chapter was published for public consultation in the first half of 2025, indicating the Ph. Eur. Commission's effort to stay at the forefront of scientific progress in this innovative and diverse field [2]. The revision aims to reflect current methodologies, update implementation guidance, and clarify the responsibilities of both suppliers and users [2]. It provides particular support for the implementation of RMMs for products with a short shelf-life, where faster results are especially beneficial [2].

Table 1: Key Characteristics of USP <1223> and Ph. Eur. 5.1.6

| Feature | USP <1223> | Ph. Eur. 5.1.6 |
|---|---|---|
| Primary Focus | Validation of alternative microbiological methods [1] | Control of microbiological quality using alternative methods [2] |
| Key Applications | Microbial enumeration, identification, detection, antimicrobial effectiveness testing, sterility testing [1] | To be clarified in the revised version, but expected to cover similar applications to USP [2] |
| Core Principle | Demonstration of equivalency and non-inferiority to the compendial method [1] | Facilitates implementation of Rapid Microbiological Methods (RMMs) [2] |
| Current Status | Officially active [1] | Under significant revision; public consultation ended June 2025 [2] |
| Emphasis | Method suitability and meeting predefined acceptance criteria [1] | User and supplier responsibilities; optimization of implementation strategies [2] |

Core Validation Concepts and Experimental Requirements

The demonstration of equivalence is not a single test but a multifaceted process evaluating several key performance characteristics. USP <1223> provides detailed guidance on the validation requirements that form the basis of any experimental study.

Foundational Validation Criteria

According to USP <1223>, the validation of an alternative microbiological method must address several key aspects to prove its acceptability [1]:

- Accuracy: The closeness of test results obtained by the alternative method to the true value.

- Precision: The degree of agreement among individual test results when the method is applied repeatedly to multiple samples.

- Specificity: The ability of the method to unequivocally assess the analyte in the presence of other components that may be expected to be present, such as interfering substances in a product matrix.

- Limit of Detection (LOD): The lowest quantity of the target microorganism that can be detected, but not necessarily quantified, under the stated experimental conditions.

- Limit of Quantification (LOQ): The lowest quantity of the target microorganism that can be quantified with acceptable accuracy and precision.

- Robustness: A measure of the method's capacity to remain unaffected by small, deliberate variations in method parameters.

- Linearity, Repeatability, and Ruggedness are also identified as critical validation elements [1].
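As an illustration of how two of these criteria are commonly turned into numbers, the sketch below expresses accuracy as percent recovery against the compendial method and precision as a coefficient of variation. The replicate counts and the function names are hypothetical, and USP <1223> is not quoted here as prescribing these exact formulas.

```python
import statistics

def percent_recovery(alternative_counts, reference_counts):
    """Accuracy as the mean alternative count relative to the mean reference count (%)."""
    return 100.0 * statistics.mean(alternative_counts) / statistics.mean(reference_counts)

def coefficient_of_variation(counts):
    """Precision as the sample standard deviation divided by the mean (%)."""
    return 100.0 * statistics.stdev(counts) / statistics.mean(counts)

# Hypothetical replicate counts (CFU) obtained from the same inoculated sample.
reference = [52, 48, 55, 50, 47]     # compendial plate counts
alternative = [49, 51, 46, 53, 50]   # alternative method counts

recovery = percent_recovery(alternative, reference)
cv = coefficient_of_variation(alternative)
print(f"recovery = {recovery:.1f}%, CV = {cv:.1f}%")
```

Whether a given recovery or CV is acceptable depends on the acceptance criteria fixed in the validation protocol before testing starts.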

The Equivalency Study and Statistical Analysis

A pivotal component of the validation process is the equivalency study, where the alternative method is directly compared against the compendial method. USP <1223> states that this study should include appropriate data elements, such as the number of replicates, independent tests, and different product lots or matrices tested [1]. Crucially, a statistical analysis must be performed to compare the data generated by the alternative method with the data from the compendial method [1]. To demonstrate non-inferiority, the alternative method must meet the predefined acceptance criteria outlined in USP <1223> [1].
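One common way to frame such a comparison is a one-sided non-inferiority test on paired log10 counts. The sketch below is a minimal illustration under stated assumptions: the 0.3 log10 margin, the 95% confidence level, the data, and the function names are all hypothetical and are not taken from USP <1223>. It also uses a normal quantile for simplicity, whereas a t quantile would be more appropriate for small sample sizes.

```python
import math
import statistics

def noninferiority_lower_bound(alt_log10, ref_log10, confidence=0.95):
    """Lower one-sided confidence bound for the mean paired difference (alt - ref)."""
    diffs = [a - r for a, r in zip(alt_log10, ref_log10)]
    mean_diff = statistics.mean(diffs)
    std_err = statistics.stdev(diffs) / math.sqrt(len(diffs))
    # Normal approximation (assumption); swap in a t quantile for small n.
    z = statistics.NormalDist().inv_cdf(confidence)
    return mean_diff - z * std_err

def is_noninferior(alt_log10, ref_log10, margin=0.3):
    """Non-inferior if the lower bound stays above the (hypothetical) margin."""
    return noninferiority_lower_bound(alt_log10, ref_log10) > -margin

# Hypothetical paired log10 CFU results from the same samples.
ref = [2.00, 2.10, 1.95, 2.05, 2.02, 1.98]
alt = [1.97, 2.08, 1.96, 2.01, 2.00, 1.95]

lower = noninferiority_lower_bound(alt, ref)
print(f"lower bound = {lower:.3f}, non-inferior: {is_noninferior(alt, ref)}")
```

The margin carries the scientific judgment: it states how much lower recovery would still be acceptable, and it should be justified and fixed in the protocol before any data are generated.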

Table 2: Essential Performance Characteristics for Validating Alternative Microbiological Methods

| Performance Characteristic | Experimental Goal | Key Consideration |
|---|---|---|
| Accuracy | Measure proximity to a known reference value [1] | Demonstrates the method's freedom from systematic error. |
| Precision | Determine repeatability and intermediate precision [1] | Assesses the method's random error; often tested with multiple replicates. |
| Specificity | Confirm target detection amidst potential interferents [1] | Critical for testing in complex product matrices. |
| Limit of Detection (LOD) | Identify the lowest detectable level of a microorganism [1] | Particularly important for sterility testing and pathogen detection. |
| Limit of Quantification (LOQ) | Identify the lowest quantifiable level with accuracy and precision [1] | Key for microbial enumeration tests. |
| Robustness | Evaluate resistance to small, deliberate method parameter changes [1] | Ensures the method is reliable during routine use in a lab. |
| Equivalency | Establish non-inferiority to the compendial method via statistical comparison [1] | The core of the validation, requiring a direct head-to-head study. |

Experimental Protocols and Data Generation

Successfully validating an alternative method requires a meticulously planned experimental protocol. The following workflow outlines the key stages, from planning to regulatory submission.

Detailed Experimental Workflow

- Define User Requirement Specification (URS): The process begins by identifying the key requirements from all stakeholders to prepare a URS document. This defines the specific needs of the facility, including whether a qualitative or quantitative method is required [1].

- Instrument Qualification: This stage involves Installation Qualification (IQ), Operational Qualification (OQ), and Performance Qualification (PQ) to verify that the equipment is installed correctly, operates according to the manufacturer's specifications, and meets the performance criteria outlined in the URS [1].

- Method Suitability Testing: Before the full equivalency study, it is critical to verify that the product itself neither interferes with nor enhances the response of the chosen method. This step often references other USP chapters (e.g., <61>, <62>, <63>) to ensure the product matrix does not inhibit or falsely promote microbial growth [1].

- Perform the Equivalency Study: This is the core experimental phase. A statistically sound number of replicates, independent tests, and different product lots are tested in parallel using both the alternative and the compendial method. The data generated is then subjected to statistical analysis to demonstrate non-inferiority [1].

- Assemble the Validation Report: All testing performed, including method parameters, equipment used, and raw data, must be thoroughly documented in a validation report. This report must provide data proving the method's suitability for its intended purpose and be reviewed and approved by appropriate personnel, such as quality assurance [1].

- Regulatory Submission and Ongoing Monitoring: The validated method is submitted for regulatory approval. After implementation, ongoing monitoring and maintenance, including periodic system suitability testing and calibration, are necessary to ensure continued reliable performance [1].

Case Study: Automated ID/AST System Workflow Analysis

A study comparing two automated systems, VITEK 2 and Phoenix, provides a concrete example of experimental data generation for instrument comparison. The study evaluated workflow efficiency and time-to-result for identification and antimicrobial susceptibility testing.

Table 3: Experimental Comparison of Two Automated Microbiology Systems

| Performance Metric | VITEK 2 System | Phoenix System | Statistical Significance (P-value) |
|---|---|---|---|
| Manipulation Time per Batch | 10.6 ± 1.0 minutes | 20.9 ± 1.8 minutes | < 0.001 [4] |
| Mean Time to Result (All Isolates) | 506 ± 120 minutes | 727 ± 162 minutes | < 0.001 [4] |
| ID Correct for Enterobacteriaceae | 137/140 (98%) | 135/140 (96%) | 0.72 [4] |
| Overall Category Agreement (AST) | 97.0% | 97.0% | Not Significant [4] |

The data show that both systems performed with comparable accuracy, while the VITEK 2 system required significantly less manual manipulation time and delivered results faster. This type of quantitative, head-to-head comparison supports the accuracy side of an equivalence claim and documents the operational advantages of an alternative method [4].
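Comparisons of two proportions such as the identification rates above are often evaluated with Fisher's exact test. The sketch below implements a two-sided version using only the standard library. The study summary here does not say which test produced the reported P-value, so the choice of Fisher's test is an assumption. Applied to the identification counts (137/140 versus 135/140), it returns a value close to the reported 0.72.

```python
from math import comb

def fisher_exact_two_sided(a, b, c, d):
    """Two-sided Fisher's exact test for the 2x2 table [[a, b], [c, d]]."""
    row1, row2 = a + b, c + d
    col1 = a + c
    total = comb(row1 + row2, col1)
    # Unnormalised hypergeometric weight for each feasible top-left cell value.
    k_min = max(0, col1 - row2)
    k_max = min(row1, col1)
    weights = {k: comb(row1, k) * comb(row2, col1 - k)
               for k in range(k_min, k_max + 1)}
    observed = weights[a]
    # Sum the probabilities of every table no more likely than the observed one.
    extreme = sum(w for w in weights.values() if w <= observed)
    return extreme / total

# Rows: VITEK 2 and Phoenix; columns: correct and incorrect identifications.
p_value = fisher_exact_two_sided(137, 3, 135, 5)
print(f"p = {p_value:.2f}")
```

Working with exact integer weights avoids floating-point ties when deciding which tables count as at least as extreme as the observed one.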

Essential Research Reagents and Materials

The successful execution of validation experiments relies on a foundation of well-characterized reagents and materials. The following table details key solutions and their functions in microbiological assays.

Table 4: Key Research Reagent Solutions for Microbiological Equivalence Studies

| Research Reagent / Material | Function in Validation Experiments |
|---|---|
| Reference Bacterial Strains (e.g., ATCC strains) | Certified microorganisms used as positive controls and for challenging the alternative method to demonstrate accuracy and specificity [5]. |
| Compendial Culture Media (e.g., Difco Antibiotic Media) | Standardized growth media specified in pharmacopoeial methods (USP <61>, <62>) used as the comparator in equivalency studies [5]. |
| McFarland Standards | Suspensions of predetermined turbidity (e.g., 0.5 McFarland) used to standardize microbial inoculum density, ensuring consistent and reproducible challenge levels [4]. |
| Pure-Grade Reference Powders (e.g., USP-grade antibiotics) | Materials with known potency and purity used as primary standards for quantitative assays, such as potency determinations [5]. |
| Validated Sampling Materials | Sterile swabs, containers, and diluents that do not inhibit microbial growth, critical for sample integrity during method transfer and robustness studies. |

USP <1223> and Ph. Eur. 5.1.6 provide the essential frameworks for demonstrating the equivalence of alternative microbiological methods in the pharmaceutical industry. While USP <1223> offers a well-established and detailed pathway focusing on method suitability and statistical equivalency, the Ph. Eur. is actively modernizing its chapter 5.1.6 to provide clearer implementation strategies, particularly for critical applications like short shelf-life products [1] [2].

For researchers and drug development professionals, the path to successful validation is systematic. It requires a stepwise approach beginning with clear user requirements, moving through rigorous instrument and method qualification, and culminating in a robust, statistically sound equivalency study against the compendial method. As demonstrated by comparative instrument studies, the payoff is not only regulatory compliance but also tangible operational benefits like reduced time-to-result and enhanced workflow efficiency [1] [4]. Adherence to these principles ensures that the adoption of innovative RMMs enhances, rather than compromises, the unwavering commitment to drug safety and quality.

The regulatory landscape for microbiological quality control is undergoing significant transformation, driven by scientific advancement and industry need for faster, more efficient methods. The European Pharmacopoeia (Ph. Eur.) general chapter 5.1.6. "Alternative methods for control of microbiological quality" is currently under revision, with a draft published in Pharmeuropa 37.2 for public consultation until the end of June 2025 [2]. This revision represents a pivotal development in the acceptance and implementation of Rapid Microbiological Methods (RMM), offering a more structured pathway for their adoption, particularly for products with short shelf-lives [2] [6]. The European Directorate for the Quality of Medicines & HealthCare (EDQM) is spearheading this initiative, reinforcing its commitment to staying at the forefront of scientific progress while addressing stakeholder expectations in this fast-moving field [2]. This guide explores these regulatory updates and provides a comparative analysis of RMM performance to aid in demonstrating methodological equivalence.

Revised Ph. Eur. Chapter 5.1.6: Key Changes and Implications

The revised chapter 5.1.6 aims to facilitate the implementation of RMMs, an expanding area of microbiology that is both innovative and diverse [2]. The chapter has undergone significant revisions to reflect current scientific and regulatory thinking.

Major Enhancements in the Draft Revision

- Clarified Responsibilities: The revised chapter more clearly delineates the responsibilities of RMM suppliers and users, providing a defined framework for implementation [2].

- Optimized Implementation Strategies: It includes new information to help users optimize their strategies by capitalizing on suitable tests already performed and evaluating different implementation activities simultaneously [2].

- Updated Validation Guidance: The primary validation subsection has been updated and clarified. Furthermore, the guidance on product-specific validation has been extensively revised and now provides several examples of validation strategies [2].

- Broader Technical Scope: While the chapter previously limited nucleic acid amplification techniques (NAT) mainly to mycoplasma testing, there is ongoing discussion about broadening this scope to include applications such as rapid sterility testing [6].

Stakeholder Feedback and Implementation Challenges

Feedback from industry stakeholders, including the ECA Pharmaceutical Microbiology Working Group, highlights several areas requiring further clarification:

- Resource-Intensive Validation: Stakeholders have noted that the validation requirements remain resource-intensive and have called for more streamlined processes to reduce duplicated work across laboratories [6].

- Comparability Testing Debates: There is ongoing debate regarding the necessity of direct side-by-side testing for comparability, especially when an alternative method demonstrates a theoretical detection limit of one microorganism (1 CFU) [6].

- "Stressed Microorganisms" Reference: Concerns have been raised about references to "stressed microorganisms" without a clear standard for producing pharmaceutical-representative stressed strains [6].

EDQM Certification Project and Support Initiatives

Parallel to the revision of chapter 5.1.6, the EDQM is actively promoting several initiatives to support the pharmaceutical industry in adopting new methodologies.

Proposed Certification System for RMM

A significant proposal emerging from stakeholder feedback is the creation of an EDQM certification system for RMMs [6]. This system would:

- Save time and share validation resources among laboratories

- Avoid duplicated work across different organizations

- Provide a recognized standard for regulatory acceptance

Ongoing EDQM Engagement and Training

The EDQM maintains an active schedule of conferences and training programs to support industry professionals. Recent and upcoming events include:

- "Certified for Success: Using the CEP Procedure to Elevate Quality and Drive..." conference in November 2025 [7]

- The 2025 EDQM virtual training programme for professionals in drug development, R&D, pharmaceutical quality assurance, and quality control [7]

- Publication of new resources clarifying the stepwise process for obtaining a Certificate of Suitability (CEP) or having a change approved [7]

Comparative Performance Analysis of Rapid Microbiological Methods

Workflow and Time Efficiency Comparison: VITEK 2 vs. Phoenix

A comprehensive study evaluating the workflow and performance of two automated systems provides valuable quantitative data for method comparison [4].

Table 1: Workflow and Time Efficiency Comparison Between Two Automated Systems

| Parameter | VITEK 2 System | Phoenix System | Statistical Significance |
|---|---|---|---|
| Mean Manipulation Time per Batch (7 isolates) | 10.6 ± 1.0 minutes | 20.9 ± 1.8 minutes | P < 0.001 [4] |
| Mean Time to Result (All Bacterial Groups) | 506 ± 120 minutes | 727 ± 162 minutes | P < 0.001 [4] |
| Identification Accuracy (Enterobacteriaceae) | 98% (137/140 strains) | 96% (135/140 strains) | P = 0.72 [4] |
| Overall Category Agreement (All isolates) | 97.0% | 97.0% | Not Significant [4] |

The VITEK 2 system demonstrated significantly less manual manipulation time and faster time to results compared to the Phoenix system, while maintaining equivalent identification accuracy [4].

Validation of Soleris System for Yeast and Mold Detection

A 2023 study validated the Soleris automated method for quantitative detection of yeasts and molds in an antacid oral suspension against traditional plate-count methods [8].

Table 2: Validation Parameters for Soleris System for Yeast and Mold Detection

| Validation Parameter | Result | Acceptance Criterion |
|---|---|---|
| Probability of Detection | Statistically equivalent to reference method | P > 0.05 (Fisher's exact test) [8] |
| Limits of Detection and Quantification | Not inferior to reference method | P > 0.05 (Fisher's exact test) [8] |
| Precision | Standard deviation <5, coefficient of variation <35% | Meeting predefined thresholds [8] |
| Accuracy | >70% | Meeting predefined thresholds [8] |
| Linearity | R² >0.9025 | Meeting predefined thresholds [8] |
| Ruggedness | ANOVA, P < 0.05 | Meeting predefined thresholds [8] |

The study concluded that the Soleris technology met all validation criteria to be considered an alternative method for yeast and mold quantification in the specific pharmaceutical matrix tested [8].
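The numeric thresholds reported for precision, accuracy, and linearity lend themselves to a simple automated check. The sketch below computes R² for a linearity series and compares a set of summary metrics against the values listed in the table above. The data, function names, and the way the metrics are passed in are hypothetical and do not reproduce the study's own calculations.

```python
def r_squared(x, y):
    """Squared Pearson correlation between expected and observed values."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    return sxy ** 2 / (sxx * syy)

def check_criteria(std_dev, cv_percent, accuracy_percent, r2):
    """Compare summary metrics with the thresholds reported in the table above."""
    return {
        "precision": std_dev < 5 and cv_percent < 35,
        "accuracy": accuracy_percent > 70,
        "linearity": r2 > 0.9025,
    }

# Hypothetical linearity series: expected vs. observed log10 CFU.
expected = [1.0, 2.0, 3.0, 4.0, 5.0]
observed = [1.1, 1.9, 3.2, 3.9, 5.1]

r2 = r_squared(expected, observed)
results = check_criteria(std_dev=2.6, cv_percent=12.0, accuracy_percent=88.0, r2=r2)
print(f"R^2 = {r2:.4f}", results)
```

Note that the linearity threshold of 0.9025 corresponds to a correlation coefficient of 0.95.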

Experimental Protocols for Method Validation

Protocol for Automated System Evaluation

The comparative study between VITEK 2 and Phoenix systems followed this methodology [4]:

- Bacterial Isolates: 307 fresh clinical isolates comprising 141 Enterobacteriaceae, 22 nonfermenting gram-negative bacilli, 93 Staphylococcus spp., and 51 Enterococcus spp. [4]

- Inoculum Preparation: Bacterial colonies were suspended and adjusted to a 0.5 McFarland standard using a densitometer [4]

- Testing Procedure:

- Reference Methods: API systems (API 20 E, ID 32 GN, API 32 Staph, API 32 Strep) for identification; frozen microdilution panels according to NCCLS guidelines for susceptibility testing [4]

Protocol for Equivalence Validation Study

The Soleris validation study employed this comprehensive approach [8]:

- Study Design: Comparison of Soleris automated method versus traditional plate-count method for quantitative detection of yeasts and molds

- Testing Matrix: Antacid oral suspension (aluminum hydroxide 4% + magnesium hydroxide 4% + simethicone 0.4%)

- Microbial Models: A. brasiliensis and C. albicans as suitable models for molds and yeasts, respectively

- Statistical Analysis: Utilized probability of detection, linear Poisson regression, Fisher's test, and multifactorial analysis of variance (ANOVA) to establish equivalence

- Validation Parameters Assessed: Precision, accuracy, linearity, ruggedness, operative range, and specificity

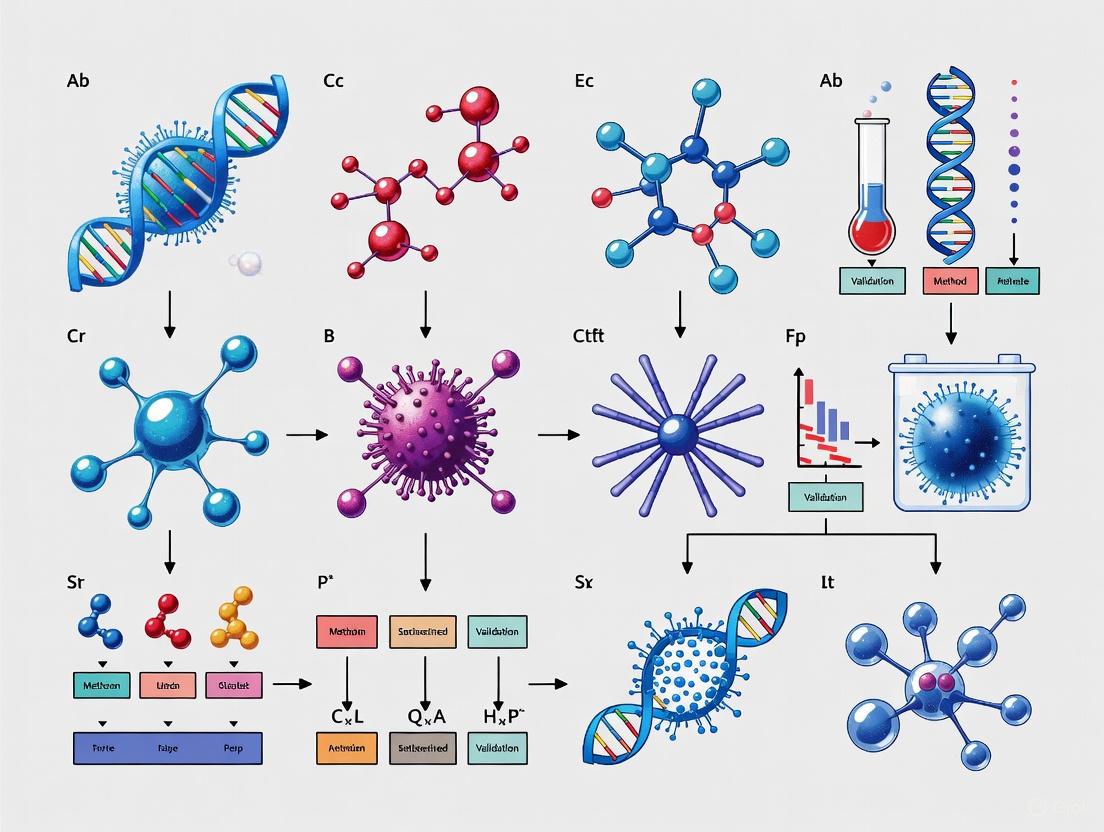

Visualizing the RMM Implementation Pathway

The following workflow diagram illustrates the key stages in implementing an alternative microbiological method according to regulatory guidance:

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagents and Materials for RMM Implementation

| Item | Function/Application | Example from Literature |
|---|---|---|
| Automated Identification Cards | Organism identification through biochemical reactions | VITEK 2 ID-GPC card for gram-positive cocci; ID-GNB card for gram-negative rods [4] |
| Antimicrobial Susceptibility Testing Panels | Determine susceptibility profiles to various antibiotics | VITEK 2 AST-P 523 panel for gram-positive cocci; AST-N021 card for gram-negative bacilli [4] |
| Reference Strains for Quality Control | System verification and performance validation | E. coli ATCC 25922, P. aeruginosa ATCC 27853, S. aureus ATCC 29213 [4] |
| Inoculum Preparation Systems | Standardized microbial suspension preparation | DensiChek Densitometer for VITEK 2; Crystal Spec Nephelometer for Phoenix system [4] |
| Culture Media for Strain Maintenance | Bacterial subculture prior to testing | Columbia agar with 5% defibrinated sheep blood [4] |
| Specialized Detection Kits | Detection of specific resistance mechanisms | ESBL detection kits using combined disk methods [4] |

The ongoing revision of Ph. Eur. chapter 5.1.6 and the proposed EDQM certification project represent significant advancements in creating a more responsive and science-based regulatory framework for rapid microbiological methods. The experimental data presented demonstrate that RMMs can provide equivalent performance to traditional methods while offering substantial improvements in time efficiency. The public consultation, which ran until the end of June 2025, gave stakeholders an opportunity to contribute to the shaping of these important guidelines [2]. The continued collaboration between industry, regulatory bodies, and method suppliers will be essential for realizing the full potential of these innovative technologies in enhancing pharmaceutical quality control.

In the field of food and feed microbiology, reliable test results are paramount for ensuring product safety and quality. The International Organization for Standardization (ISO) 16140 series provides a standardized framework for microbiological method validation and verification, establishing clear protocols that help laboratories, test kit manufacturers, and food business operators implement methods correctly [9]. These standards have gained significant importance in recent years, with parts of the series being endorsed by European Regulation (EC) 2073/2005, making them essential for compliance in food safety testing [10].

A fundamental challenge faced by researchers and scientists is the precise distinction between method validation and method verification—two related but distinct processes that are often incorrectly used interchangeably [11]. This terminology confusion can lead to improper implementation of testing protocols, potentially compromising the reliability of results. Within the ISO 16140 framework, these concepts have clearly defined meanings and purposes: validation proves that a method is fundamentally sound and fit-for-purpose, while verification demonstrates that a particular laboratory can successfully perform that validated method [11] [9]. This guide examines the critical differences between these processes, providing researchers with a clear understanding of ISO 16140 terminology and its practical application in demonstrating methodological equivalence.

Core Concepts: Validation and Verification Defined

What is Method Validation?

Method validation is the process of proving whether the performance characteristics of a particular testing method are suitable for its intended use [11]. More specifically, it determines whether the testing process can accurately detect or quantify specified microorganisms [11]. Validation answers the fundamental question: "Is this method scientifically sound and fit-for-purpose?"

The ISO 16140-2 standard serves as the base protocol for alternative methods validation and is cross-referenced by other parts of the 16140 series [9]. This process typically involves two main phases: a method comparison study and an interlaboratory study [9]. The data generated through validation provides potential end-users with performance data for a given method, enabling them to make informed choices about implementation [9].

What is Method Verification?

Method verification is the confirmation that an individual laboratory or user can properly perform a validated method and that the method performs as specified in the validation study [11]. Unlike validation, which focuses on the method itself, verification focuses on the user of the method [11]. This process is usually conducted on an ongoing basis within a laboratory to ensure the validated method continues to perform as expected [11].

According to the ISO 16140 framework, verification is only applicable to methods that have been previously validated using an interlaboratory study [9]. The protocol for verification is detailed in ISO 16140-3, which provides a harmonized approach for laboratories to demonstrate their competency in implementing validated methods [9] [10].

Comparative Analysis: Key Differences at a Glance

The table below summarizes the fundamental distinctions between method validation and method verification according to the ISO 16140 framework:

Table 1: Core Differences Between Validation and Verification

| Aspect | Validation | Verification |
|---|---|---|
| Primary Focus | The method itself [11] | The user/laboratory implementing the method [11] |
| Central Question | Is the method fit-for-purpose? [11] | Can we perform the method correctly? [11] |
| When Conducted | When a new test method is introduced or when changes are made [11] | Ongoing basis to ensure continued proper performance [11] |
| Typical Performer | Method developer or multiple laboratories [9] | Single user laboratory [9] |
| ISO 16140 Reference | ISO 16140-2 (alternative methods) [9] | ISO 16140-3 (single laboratory verification) [9] |
| Scope of Application | Broad range of foods/categories [9] | Laboratory's specific scope and food items [9] |

The ISO 16140 Series Framework

The ISO 16140 series consists of multiple parts that form a comprehensive network of validation and verification procedures:

Table 2: Parts of the ISO 16140 Series

| Part | Title | Scope and Purpose |
|---|---|---|
| ISO 16140-1 | Vocabulary [9] | Provides definitions and terminology for the series [9] |
| ISO 16140-2 | Protocol for the validation of alternative methods [9] | Base standard for alternative methods validation [9] |
| ISO 16140-3 | Protocol for method verification [9] | Verification of reference/validated methods in a single lab [9] |
| ISO 16140-4 | Protocol for method validation in a single laboratory [9] | Validation without interlaboratory study [9] |
| ISO 16140-5 | Protocol for factorial interlaboratory validation [9] | For non-proprietary methods in specific cases [9] |
| ISO 16140-6 | Protocol for validation of confirmation/typing methods [9] | For alternative confirmation and typing procedures [9] |
| ISO 16140-7 | Protocol for validation of identification methods [9] | For identification methods without reference methods [9] |

Relationship Between Standards

The relationship between validation and verification standards in the ISO 16140 series follows a logical progression, as visualized in the workflow below:

Diagram: Method Validation and Verification Workflow in ISO 16140

Experimental Protocols and Procedures

Validation Protocols (ISO 16140-2)

The validation of alternative methods against reference methods follows rigorous experimental protocols outlined in ISO 16140-2. For qualitative methods, the validation includes determination of Relative Limit of Detection (RLOD), sensitivity, specificity, and accuracy through interlaboratory studies [9]. The RLOD represents the ratio between the limit of detection of the alternative method and the reference method [9].
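Because the RLOD is defined as a simple ratio, it can be sketched in a few lines. The LOD values below are hypothetical, and the function name is illustrative. No acceptability limit is encoded here because the text above does not state one.

```python
def relative_lod(lod_alternative, lod_reference):
    """RLOD: LOD of the alternative method divided by LOD of the reference method."""
    if lod_reference <= 0:
        raise ValueError("reference LOD must be positive")
    return lod_alternative / lod_reference

# Hypothetical LOD estimates in cfu per test portion.
rlod = relative_lod(lod_alternative=0.8, lod_reference=0.6)
print(f"RLOD = {rlod:.2f}")
```

A ratio above 1 means the alternative method needs a higher contamination level than the reference method to reach the same detection probability, while a ratio below 1 means it detects lower levels.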

For quantitative methods, validation includes assessment of the correlation coefficient, the mean difference between methods, and reproducibility comparisons [9]. Amendment 1 of ISO 16140-2, published in September 2024, introduced new calculations for various elements, including qualitative method evaluation and the RLOD of the interlaboratory study [9].

The validation study typically tests a minimum of five different food categories from the fifteen defined categories in Annex A of ISO 16140-2 [9]. When these five categories are validated, the method is regarded as being validated for a "broad range of foods" [9].

Verification Protocols (ISO 16140-3)

The verification process in ISO 16140-3 consists of two distinct stages, each with specific experimental protocols:

Implementation Verification

This first stage demonstrates that the user laboratory can perform the method correctly [9]. The laboratory tests one of the food items evaluated in the validation study to demonstrate that it can obtain similar results [9]. For qualitative methods, this involves determining the estimated limit of detection (eLOD₅₀), the smallest number of microorganisms that can be detected on 50% of occasions [10]. The eLOD₅₀ obtained must be no more than four times the LOD₅₀ value from the validation study, or ≤4 cfu/test portion if no LOD₅₀ is available [10].

For quantitative methods, implementation verification assesses the intralaboratory reproducibility standard deviation (S_IR) [10]. The S_IR value must be no greater than twice the lowest mean value of the interlaboratory reproducibility standard deviation (S_R) observed in the validation study [10].
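The two implementation verification criteria described above can be written as simple checks. This is a minimal sketch in which the function names and input values are hypothetical. It encodes only the limits stated in the text, not the estimation of eLOD₅₀ or the reproducibility standard deviations themselves.

```python
def elod50_acceptable(elod50, validation_lod50=None):
    """Qualitative methods: eLOD50 <= 4 x validation LOD50, or <= 4 cfu if none is available."""
    limit = 4.0 * validation_lod50 if validation_lod50 is not None else 4.0
    return elod50 <= limit

def intralab_reproducibility_acceptable(s_ir, validation_s_r_values):
    """Quantitative methods: S_IR <= 2 x the lowest mean S_R from the validation study."""
    return s_ir <= 2.0 * min(validation_s_r_values)

# Hypothetical values: eLOD50 in cfu/test portion, standard deviations in log10 cfu.
print(elod50_acceptable(2.0, validation_lod50=0.7))            # limit is 2.8
print(elod50_acceptable(3.5))                                  # no LOD50 available, limit is 4
print(intralab_reproducibility_acceptable(0.30, [0.18, 0.25])) # limit is 0.36
```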

Food Item Verification

The second stage demonstrates that the user laboratory can correctly test challenging food items within their specific scope of accreditation [9]. Laboratories test several challenging food items using defined performance characteristics [9].

For qualitative methods, this again uses the eLOD₅₀ approach with the same acceptance criteria [10]. For quantitative methods, food item verification evaluates the estimated bias (ebias) between inoculated samples and the inoculum without sample at three different concentration levels [10]. The absolute difference must be ≤0.5 log₁₀ units [10].
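The estimated bias check for quantitative methods can be sketched as follows. This is an illustration under stated assumptions: the replicate structure, the sign convention (sample minus inoculum), the per-level pass/fail logic, and all data values are hypothetical; only the three-level design and the 0.5 log₁₀ limit come from the text above.

```python
from statistics import mean

EBIAS_LIMIT = 0.5  # log10 units, as stated in the text above


def estimated_bias(sample_log10, inoculum_log10):
    """Mean log10 count of the inoculated food item minus that of the inoculum alone."""
    return mean(sample_log10) - mean(inoculum_log10)


def verify_food_item(levels):
    """Return {level: (ebias, passed)} for each inoculation level."""
    report = {}
    for name, (sample, inoculum) in levels.items():
        ebias = estimated_bias(sample, inoculum)
        report[name] = (ebias, abs(ebias) <= EBIAS_LIMIT)
    return report


# Hypothetical log10 cfu/g results: (inoculated sample, inoculum without sample)
levels = {
    "low": ([2.0, 2.2], [2.3, 2.3]),
    "medium": ([3.9, 4.1], [4.1, 4.1]),
    "high": ([5.2, 5.4], [6.0, 6.0]),
}
report = verify_food_item(levels)
for name, (ebias, passed) in report.items():
    print(f"{name}: ebias = {ebias:+.2f} log10, pass = {passed}")
```

In this made-up data set the high level fails, which would prompt an investigation of matrix effects at that concentration.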

For confirmation methods, verification tests inclusivity (ability to detect target microorganisms) and exclusivity (lack of interference with non-target microorganisms) using five pure target strains and non-target strains with an acceptance limit of 100% concordance with the reference method [10].

Essential Research Reagent Solutions

The following table details key reagents and materials essential for conducting validation and verification studies according to ISO 16140 protocols:

Table 3: Essential Research Reagents for Method Validation and Verification

| Reagent/Material | Function in Validation/Verification | Application Examples |
|---|---|---|
| Reference Method Materials | Provides benchmark for comparison during alternative method validation [9] | Culture media, reagents, and equipment specified in standardized reference methods [9] |
| Certified Reference Strains | Serves as inoculum for determination of LOD₅₀, inclusivity, and exclusivity [10] | Target and non-target microorganisms for verification studies; stressed cultures for validation [10] |
| Defined Food Category Samples | Represents the matrix for method validation across different product types [9] | Food items from the 15 defined categories in ISO 16140-2 Annex A [9] |
| Proprietary Alternative Method Kits | Subject of validation studies against reference methods [9] | Commercial test kits, rapid methods, automated systems [9] [10] |
| Confirmation and Typing Reagents | Used for validation of alternative confirmation procedures per ISO 16140-6 [9] | Biochemical, molecular, or serological reagents for microbial confirmation [9] |

Regulatory Context and Industry Impact

The ISO 16140 series plays a critical role in the regulatory landscape for food safety. In the European Union, Commission Regulation (EC) No 2073/2005 sets out the validation and certification requirements for using alternative methods [9] [10]. This regulatory endorsement makes compliance with ISO 16140 standards essential for food business operators seeking to implement alternative microbiological methods.

The introduction of ISO 16140-3 has particularly impacted laboratories accredited to ISO/IEC 17025:2017, which requires demonstration of method verification [11] [10]. Before this standard, laboratories developed their own verification protocols, leading to variability and potential disputes when different laboratories obtained discordant results [10]. The harmonized protocol in ISO 16140-3 ensures consistent verification practices across laboratories, strengthening confidence in microbiological testing results throughout the food supply chain.

A transition period was established for the implementation of ISO 16140-3, recognizing that some reference methods were not yet fully validated at the time of publication [9]. This transition period allows laboratories to verify these non-validated reference methods according to a specific protocol (Annex F of ISO 16140-3) until the methods are formally validated by standardization organizations [9].

The distinction between validation and verification within the ISO 16140 framework represents a fundamental concept for ensuring reliability in microbiological testing. Validation establishes that a method is scientifically sound and fit-for-purpose, while verification demonstrates that a specific laboratory can properly implement that method. This clear terminology separation, supported by detailed experimental protocols in the various parts of the ISO 16140 series, provides a robust framework for demonstrating methodological equivalence.

For researchers and drug development professionals, understanding these distinctions is essential not only for regulatory compliance but also for maintaining the highest standards of testing accuracy. The harmonized approaches provided by the ISO 16140 standards facilitate global acceptance of microbiological methods, ultimately contributing to enhanced food safety and public health protection in an increasingly complex global food supply chain.

The Analytical Procedure Lifecycle Management (APLM) Approach as per USP <1220>

The Analytical Procedure Lifecycle Management (APLM) approach, formalized in the United States Pharmacopeia (USP) general chapter <1220>, represents a fundamental shift in how analytical procedures are developed, validated, and maintained within the pharmaceutical industry. This systematic framework moves beyond the traditional, often ritualistic, method validation approach to embrace a holistic lifecycle management process based on sound science and risk management [12] [13]. The APLM framework is designed to ensure that analytical procedures remain fit for their intended purpose throughout their entire operational life, providing greater confidence in the quality and reliability of generated data, which is particularly crucial in pharmaceutical development and manufacturing.

The adoption of APLM aligns with the Quality by Design (QbD) principles already established in pharmaceutical process development, applying similarly rigorous, systematic thinking to analytical methods [12]. This approach emphasizes enhanced procedure understanding and control, leading to more robust and reliable methods. For researchers and scientists working on demonstrating equivalence in microbiological method validation, the APLM framework provides a structured, scientifically defensible pathway for comparing alternative methods and generating the necessary validation data to support claims of equivalence or similarity [14].

The Three-Stage APLM Model

USP <1220> structures the analytical procedure lifecycle into three interconnected stages, creating a continuous improvement model with feedback mechanisms at each transition point.

Stage 1: Procedure Design and Development

This initial stage focuses on defining the analytical procedure's requirements and developing a method that meets these needs. The cornerstone of this phase is the Analytical Target Profile (ATP), a predefined objective that explicitly states the procedure's intended purpose and required performance criteria [13]. The ATP defines what the procedure needs to achieve, rather than how it should be performed, and serves as the foundation for all subsequent lifecycle activities. Procedure development then involves selecting the appropriate analytical technique, designing the experimental approach, and conducting systematic studies to understand the method's operational boundaries and critical parameters. Knowledge gained through risk assessments and development experiments is documented to support future lifecycle stages [12].

Stage 2: Procedure Performance Qualification

This stage involves experimental demonstrations that the analytical procedure performs as intended and meets the ATP criteria [12]. Traditionally referred to as "method validation," this phase confirms through laboratory studies that the procedure is fit for purpose. The qualification activities verify various performance characteristics appropriate to the procedure's intended use, which for quantitative methods typically includes parameters such as accuracy, precision, specificity, and range. The data generated provides objective evidence that the procedure consistently produces reliable results that meet the pre-defined ATP standards [13]. At the conclusion of this stage, the procedure's performance is confirmed to be suitable for routine use.

Stage 3: Ongoing Procedure Performance Verification

The final stage ensures continuous monitoring of the analytical procedure during routine use to verify that it remains in a state of control and continues to meet ATP criteria [12]. This represents a significant advancement over traditional approaches, where method performance was often assumed to remain acceptable until a failure occurred. Ongoing verification involves systematically tracking method performance through control charts, system suitability tests, and trend analysis of quality control data. This monitoring provides early detection of potential performance issues or unfavorable trends, enabling proactive interventions before method failure occurs. The stage also includes managing procedure changes through a formal change control process and confirming performance after any modifications [13].
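One simple way to implement the trending described above is a Shewhart-style control chart. The sketch below assumes control limits at the baseline mean ± 3 standard deviations; that rule, the function names, and the data are illustrative assumptions rather than requirements quoted from USP <1220>.

```python
from statistics import mean, stdev


def control_limits(baseline, k=3.0):
    """Return (lower, center, upper) limits from baseline QC results."""
    center = mean(baseline)
    spread = stdev(baseline)
    return center - k * spread, center, center + k * spread


def out_of_control(results, lower, upper):
    """Return (index, value) pairs for results outside the control limits."""
    return [(i, x) for i, x in enumerate(results) if x < lower or x > upper]


# Hypothetical QC sample counts in log10 CFU/g from the qualification stage
baseline = [6.00, 6.05, 5.95, 6.02, 5.98, 6.01, 5.99, 6.03, 5.97, 6.00]
lower, center, upper = control_limits(baseline)

# Hypothetical routine results to be checked against the limits
routine = [6.02, 6.15, 5.99]
flags = out_of_control(routine, lower, upper)
print(f"limits: {lower:.3f} to {upper:.3f}; flagged: {flags}")
```

A flagged point would normally trigger an investigation before the procedure's fitness for purpose is reassessed against the ATP.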

The following workflow diagram illustrates the interconnected nature of these three stages and their key components:

Experimental Application: Comparing Microbiological Enumeration Methods

A practical application of the APLM approach demonstrates its utility in comparing alternative analytical procedures and assessing their equivalence, which is particularly valuable in microbiological method validation research.

Experimental Protocol and Methodology

A case study published in the Journal of Dietary Supplements detailed the application of APLM principles to validate and compare two microbiological enumeration procedures for a Lactobacillus acidophilus probiotic ingredient [14]. The study followed a structured protocol:

- Measurand Definition: The analytical target was defined as the enumeration of viable Lactobacillus acidophilus cells in a single-strain powdered ingredient, expressed as log10 colony-forming units per gram (log10 CFU/g).

- ATP Establishment: The ATP specified the required measurement uncertainty (0.097 log10 CFU/g) and predefined acceptance criteria for method performance characteristics.

- Procedure Comparison: Two established enumeration procedures were evaluated: ISO 20128 and USP <64>.

- Risk Assessment: A formal risk assessment was conducted to identify potential sources of variation and method performance challenges.

- Statistical Analysis: Data analysis included ANOVA for variance component estimation and calculation of tolerance intervals (TI) based on each procedure's measurement uncertainty.

- Equivalence Evaluation: Method equivalence was assessed by comparing the calculated tolerance intervals of both procedures [14].

Comparative Results and Data Analysis

The experimental data generated through this systematic approach provided quantitative comparisons between the two enumeration methods, with results summarized in the table below:

Table 1: Comparison of ISO 20128 and USP <64> Enumeration Methods for L. acidophilus

| Performance Parameter | ISO 20128 Method | USP <64> Method | Acceptance Criteria |
|---|---|---|---|
| Intermediate Precision | 0.062 log10 CFU/g | Not Specified | <0.097 log10 CFU/g |
| Target Measurement Uncertainty | 0.097 log10 CFU/g | 0.097 log10 CFU/g | Not Applicable |
| Tolerance Interval Range | 11.14-11.76 log10 CFU/g | 11.41-11.62 log10 CFU/g | Not Applicable |
| Fitness for Purpose | Demonstrated | Not Fully Demonstrated | Meeting ATP Requirements |

The data revealed that the intermediate precision for the ISO 20128 method (0.062 log10 CFU/g) was well within the target measurement uncertainty (0.097 log10 CFU/g), demonstrating it was fit for purpose [14]. When comparing the two procedures using tolerance intervals, the ISO 20128 method showed a broader range (11.14-11.76 log10 CFU/g) compared to the USP <64> method (11.41-11.62 log10 CFU/g). The observed overlap in tolerance intervals indicated that the methods were similar but not statistically equivalent [14].
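The relationship between the two reported tolerance intervals can be inspected with a few lines of code. This sketch does not reproduce the study's tolerance interval calculation or its decision rule; it only compares the interval endpoints quoted above, and the helper names are hypothetical.

```python
from typing import NamedTuple, Optional


class Interval(NamedTuple):
    lower: float
    upper: float

    @property
    def width(self) -> float:
        return self.upper - self.lower


def overlap(a: Interval, b: Interval) -> Optional[Interval]:
    """Return the overlapping region of two intervals, or None if disjoint."""
    lo, hi = max(a.lower, b.lower), min(a.upper, b.upper)
    return Interval(lo, hi) if lo <= hi else None


def contains(outer: Interval, inner: Interval) -> bool:
    """True if `inner` lies entirely within `outer`."""
    return outer.lower <= inner.lower and inner.upper <= outer.upper


# Tolerance intervals reported in the table above (log10 CFU/g)
iso_20128 = Interval(11.14, 11.76)
usp_64 = Interval(11.41, 11.62)

print("overlap:", overlap(iso_20128, usp_64))
print("USP <64> inside ISO 20128:", contains(iso_20128, usp_64))
print("ISO 20128 inside USP <64>:", contains(usp_64, iso_20128))
print("widths:", round(iso_20128.width, 2), round(usp_64.width, 2))
```

The output shows that the narrower USP <64> interval sits entirely inside the ISO 20128 interval while the reverse is not true, which is consistent with the intervals overlapping without being interchangeable.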

The following diagram visualizes the tolerance interval comparison methodology that forms the basis for this equivalence assessment:

Essential Research Reagent Solutions

Implementing the APLM approach for microbiological method validation requires specific reagents and materials designed to support robust analytical procedures. The following table details key research reagent solutions and their functions in enumeration studies:

Table 2: Essential Research Reagent Solutions for Microbiological Enumeration Studies

| Reagent/Material | Function in Analysis | Application Notes |
|---|---|---|
| Selective Growth Media | Supports growth of target microorganisms while inhibiting competitors | Formulation must be optimized for specific probiotic strains; requires validation for each matrix |
| Reference Strain Cultures | Serves as positive controls for method performance qualification | Certified reference materials with known viability profiles provide highest accuracy |
| Matrix-Matched Calibrators | Establishes quantitative relationship between signal response and microbial count | Critical for establishing method linearity and accuracy across specified range |
| Viability Markers | Distinguishes between live and non-viable microorganisms | Flow cytometry-compatible dyes offer alternative to culture-based methods |
| Sample Stabilization Solutions | Maintains microorganism viability during sample processing and storage | Prevents viability loss between sampling and analysis, reducing measurement uncertainty |

Implications for Demonstrating Method Equivalence

The APLM framework provides a scientifically rigorous foundation for demonstrating method equivalence in microbiological research, offering significant advantages over traditional approaches.

The application of tolerance intervals based on measurement uncertainty, as demonstrated in the case study, offers a statistically sound approach for comparing analytical procedures [14]. This methodology provides a more nuanced understanding of method comparability than simple point estimates or overlapping confidence intervals. The finding that methods can be "similar but not equivalent" has important practical implications for method selection and validation strategies in pharmaceutical development.

For researchers focused on microbiological method validation, the APLM approach facilitates informed decision-making regarding method suitability, transfer, and comparison. The structured documentation and risk assessment requirements create a comprehensive knowledge base that supports regulatory submissions and technical justification of method selection [14] [13]. Furthermore, the ongoing performance verification stage ensures that method performance continues to be monitored during routine use, providing continuous data to support the original equivalence decision or identify when re-evaluation may be necessary.

The integration of APLM principles with statistical tools such as tolerance interval analysis creates a powerful framework for demonstrating method equivalence that aligns with current regulatory expectations and quality standards in pharmaceutical development. This approach represents industry best practice for ensuring the reliability and comparability of analytical data throughout a method's operational lifecycle.

Building a Risk-Based Strategy for Method Selection and Implementation

In regulated industries, selecting and implementing new analytical methods is a critical undertaking where failures can impact product quality, patient safety, and regulatory compliance. A risk-based strategy provides a structured framework to prioritize efforts, focusing resources on the most critical aspects of a method's performance and ensuring robust validation. For microbiological methods, demonstrating equivalence between a new method and a compendial or established reference method is a core requirement, as the results directly influence safety-critical decisions [15].

This guide objectively compares performance assessment approaches and provides the experimental protocols needed to build a rigorous, risk-based strategy for method selection and implementation, framed within the context of demonstrating methodological equivalence.

Core Principles of a Risk-Based Strategy

A risk-based strategy shifts the validation paradigm from a uniformly exhaustive approach to a targeted, scientifically justified one. The core principle is to identify what poses the greatest threat to data integrity or product quality and to focus control measures there [16].

Foundational Steps

The implementation of this strategy rests on a sequence of foundational steps:

- Define Clear Objectives and Scope: Before assessments begin, establish what the method must achieve and the boundaries of the evaluation. This includes understanding primary business objectives, areas of greatest risk, and specific compliance requirements [17].

- Establish a Risk Management Framework: Adopt a structured framework, such as ISO 14971 for medical devices or ISO 31000 for general risk management, to ensure consistency and alignment with industry best practices [17] [16]. These frameworks provide standardized processes for risk identification, analysis, evaluation, and control.

- Select the Right Risk Methodology: The choice of methodology depends on the nature of the risks and available data. A hybrid approach often works best, combining qualitative expert judgment for complex, subjective risks with quantitative, data-driven analysis for objective risk modeling [17].

The Risk-Based Strategy Workflow

The following diagram illustrates the logical workflow for implementing a risk-based strategy for method selection and implementation, integrating core principles and process steps.

Experimental Protocols for Method Comparison

A formal method comparison study is the cornerstone of demonstrating equivalence. A well-designed and carefully planned experiment is key to generating valid results and conclusions [18].

Key Experimental Design Factors

The table below summarizes the critical parameters for designing a robust comparison of methods experiment, drawing from established clinical and laboratory standards [19] [18].

Table: Key Experimental Design Factors for Method Comparison

| Factor | Recommended Protocol | Rationale & Additional Details |
|---|---|---|
| Sample Number | Minimum of 40 samples; preferably 100-200 [19] [18]. | A larger number of samples helps identify unexpected errors due to interferences or sample matrix effects. |
| Sample Type | Authentic patient or product specimens [19]. | Avoids spiked samples where possible to ensure the matrix reflects real-world conditions. |
| Measurement Range | Should cover the entire clinically or analytically meaningful range [18]. | Critical for evaluating method performance across all potential result values. |
| Replication | Duplicate measurements for both test and comparative method are advisable [19] [18]. | Minimizes the effect of random variation and helps identify measurement mistakes. |
| Time Period | Analysis should be performed over multiple days (minimum of 5) and multiple analytical runs [19] [18]. | Ensures the experiment captures typical day-to-day performance variation. |
| Sample Stability | Analyze test and comparative methods within 2 hours of each other [19]. | For unstable analytes, appropriate preservation or faster processing is required. |

The Comparison of Methods Experiment

The purpose of the comparison of methods experiment is to estimate inaccuracy or systematic error (bias) [19]. It is performed by analyzing a set of patient or product samples with both the new method (test method) and a comparative method.

- Selection of Comparative Method: An ideal comparative method is a reference method whose correctness is well-documented. However, most laboratories use a routine method as the comparator. In this case, large differences must be interpreted carefully, and additional experiments may be needed to identify which method is inaccurate [19].

- Defining Acceptable Bias: Before starting the experiment, define the acceptable bias based on performance specifications. These specifications can be derived from models such as the Milano hierarchy, considering the effect on clinical outcomes, biological variation, or state-of-the-art performance [18].

Data Analysis and Performance Metrics

Once data is collected, the analysis phase begins. This involves both graphical and statistical techniques to understand the nature and size of the differences between methods.

Graphical Analysis: The First Essential Step

Graphical presentation of the data is a fundamental first step to visually inspect the agreement and identify outliers or patterns [18].

- Scatter Plots: Plot the results from the test method (y-axis) against the comparative method (x-axis). The line of equality (y=x) can be superimposed. This plot helps visualize the overall agreement and the linearity of the relationship across the measurement range [18].

- Difference Plots (Bland-Altman): Plot the difference between the two methods (test - comparative) on the y-axis against the average of the two methods on the x-axis. This plot is excellent for visualizing the magnitude of differences across the concentration range and identifying any systematic trends, such as increasing bias with higher concentrations [18].
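The quantities behind a difference plot can be computed before any plotting is done. The sketch below is illustrative: the data are hypothetical, and the 95% limits of agreement (bias ± 1.96 standard deviations of the differences) follow the usual Bland-Altman convention, which is an addition not spelled out in the text above.

```python
from statistics import mean, stdev


def bland_altman(test, comparative):
    """Return the x/y values of a difference plot plus bias and limits of agreement."""
    differences = [t - c for t, c in zip(test, comparative)]
    averages = [(t + c) / 2 for t, c in zip(test, comparative)]
    bias = mean(differences)
    sd = stdev(differences)
    return {
        "averages": averages,        # x-axis values
        "differences": differences,  # y-axis values (test - comparative)
        "bias": bias,
        "limits_of_agreement": (bias - 1.96 * sd, bias + 1.96 * sd),
    }


# Hypothetical paired results in log10 CFU/g
test_method = [2.1, 3.0, 4.2, 5.1, 6.0]
comparative_method = [2.0, 3.1, 4.0, 5.0, 6.1]

result = bland_altman(test_method, comparative_method)
low, high = result["limits_of_agreement"]
print(f"bias = {result['bias']:.3f}, limits of agreement = ({low:.3f}, {high:.3f})")
```

The `averages` and `differences` lists can be passed directly to any plotting library to draw the chart itself.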

Statistical Analysis for Estimating Systematic Error

While graphs provide a visual impression, statistical calculations provide numerical estimates of the error. Correlation coefficients and significance tests such as the t-test are inadequate on their own for this purpose: correlation measures association rather than agreement, and a non-significant t-test does not demonstrate that two methods are comparable [18].

Table: Statistical Methods for Method Comparison Analysis

| Statistical Method | Primary Use | Interpretation & Output |
|---|---|---|
| Linear Regression | To estimate constant and proportional systematic error over a wide analytical range [19]. | Slope: Estimates proportional error. Y-intercept: Estimates constant error. Standard Error of the Estimate ($S_{y/x}$): Measures scatter around the regression line. |
| Deming Regression | An alternative to ordinary linear regression that accounts for measurement error in both methods. | More appropriate when the comparative method is not a true reference method with negligible error. |
| Passing-Bablok Regression | A non-parametric method that is robust to outliers and does not require assumptions about error distribution. | Useful for data with non-normal errors or outlier values. |
| Bias (Paired t-test) | To estimate the average systematic error when the analytical range is narrow [19]. | The mean difference between the test and comparative method results. The paired t-test can determine if the bias is statistically significant. |

The systematic error (SE) at a critical decision concentration ($X_c$) is calculated from the regression line ($Y = a + bX$) as follows [19]:

- Calculate the corresponding Y-value: $Y_c = a + bX_c$

- Calculate the systematic error: $SE = Y_c - X_c$
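The two-step calculation above can be sketched with an ordinary least-squares fit. The data and function names are hypothetical, and ordinary least squares is used only for simplicity; it assumes negligible error in the comparative method.

```python
from statistics import mean


def fit_line(x, y):
    """Ordinary least-squares fit; returns (intercept a, slope b)."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    return intercept, slope


def systematic_error(intercept, slope, decision_level):
    """Predicted test-method value at the decision level minus the decision level."""
    predicted = intercept + slope * decision_level
    return predicted - decision_level


# Hypothetical paired results: comparative method (x) and test method (y)
comparative = [1.0, 2.0, 3.0, 4.0, 5.0]
test = [1.15, 2.20, 3.25, 4.30, 5.35]

a, b = fit_line(comparative, test)
se_at_4 = systematic_error(a, b, decision_level=4.0)
print(f"intercept = {a:.3f}, slope = {b:.3f}, SE at 4.0 = {se_at_4:.3f}")
```

Here the intercept estimates the constant error and the slope the proportional error, so the systematic error grows with the decision level whenever the slope differs from 1.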

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key materials and solutions required for a robust microbiological method equivalence study.

Table: Essential Research Reagent Solutions for Microbiological Method Validation

| Item / Reagent | Function in the Experiment |
|---|---|
| Certified Reference Material | Provides a sample with a known, traceable value to act as a truth-bearer for assessing method trueness and calibration. |
| Strain Collections | Well-characterized, certified microbial strains used to challenge the method, ensuring it can accurately detect, identify, or enumerate target organisms. |
| Inhibitor/Interference Solutions | Solutions containing substances like antibiotics, surfactants, or sample matrix components used to test the method's robustness and specificity in the presence of potential interferents. |
| Selective & Non-Selective Growth Media | Used in culture-based methods to assess recovery efficiency, selectivity against non-target organisms, and overall growth promotion. |
| Sample Matrix Simulants | Mimics the composition of the actual product sample (e.g., food homogenate, serum) to validate method performance in the absence of the actual product during preliminary testing. |

Implementing the Strategy: From Assessment to Validation

With risks prioritized and experimental data in hand, the strategy moves to implementation and control.

The output of the risk assessment directly informs the validation strategy and resource allocation [16]:

- High-Risk Functions/Failures: Require comprehensive testing. All systems and subsystems must be thoroughly tested according to a scientific, data-driven rationale. This is similar to the classic, rigorous validation approach.

- Medium-Risk Functions/Failures: Require testing of functional requirements to ensure the item has been properly characterized.

- Low-Risk Functions/Failures: May not require formal testing, but the presence and basic function of the item should be verified.

Continuous Monitoring and Verification

A risk-based strategy is not a one-time event. The final stage is intended to maintain the validated state during routine production and use [16]. This involves:

- Continued Process Verification: Establishing a system to detect unplanned process variations. Data should be evaluated to ensure the process remains in a state of control.

- Tracking Key Risk Indicators (KRIs): Monitoring specific metrics that serve as early warning signals for emerging risks, such as an increase in invalidated results or deviations from calibration curves [20] [17].

- Regular Reviews and Adaptations: The risk landscape and method performance can change. Regular reviews of the strategy, incorporating feedback from audits, out-of-specification results, and technological advances, are essential for continuous improvement [20] [17].

Implementing Equivalence Studies: From Protocol to Practice

In the highly regulated landscape of pharmaceutical development and manufacturing, demonstrating the equivalence of methods, processes, or products is a critical necessity. Whether implementing a rapid microbiological method to replace a traditional pharmacopoeial method, transferring a process between facilities, or developing a biosimilar, robust equivalence studies are fundamental to ensuring that changes do not adversely impact product quality, safety, or efficacy. These studies are grounded in a systematic framework often referred to as a Comparability Protocol: a predefined, comprehensive plan that generates validated evidence to assure that the performance of a new method or product is comparable to, or not inferior to, an established standard [21] [6].

The European Pharmacopoeia (Ph. Eur.) Chapter 5.1.6, which addresses alternative microbiological methods, is currently under significant revision, highlighting the dynamic nature of this field. Stakeholder feedback has emphasized the resource-intensive nature of current validation requirements and sparked technical debates, such as whether comparability can ever be established without direct side-by-side testing, even when an alternative method has a theoretical limit of detection (LOD) of 1 CFU [6]. Furthermore, organizations like AOAC INTERNATIONAL are actively working on revising their microbiological method guidelines (Appendix J), questioning if validation needs differ by use case and whether culture should still be considered the undisputed "gold standard" for confirmation [22]. These ongoing developments underscore the importance of a deeply understood and rigorously applied framework for equivalence testing, making the design of robust studies—featuring parallel testing and clear protocols—more crucial than ever for researchers, scientists, and drug development professionals.

Core Principles: Equivalence Testing vs. Significance Testing

A foundational concept in designing a robust equivalence study is the critical distinction between equivalence testing and traditional significance testing (e.g., a t-test). The two approaches answer fundamentally different questions and their misuse is a common pitfall.

- Significance Testing: A standard t-test poses the question, "Is there a statistically significant difference between the two means?" A resulting p-value > 0.05 indicates that there is insufficient evidence to conclude a difference exists. This is not the same as concluding the two are equivalent. The study might simply have too few replicates or the data may be too variable to detect a meaningful difference [21].

- Equivalence Testing: This approach seeks to answer, "Is the difference between the two means small enough to be practically insignificant?" Instead of testing for a difference of zero, it tests whether the difference lies within a pre-specified, acceptable margin of equivalence. As stated in the United States Pharmacopeia (USP) chapter <1033>, "This is a standard statistical approach used to demonstrate conformance to expectation and is called an equivalence test. It should not be confused with the practice of performing a significance test..." [21].

The most common statistical procedure for demonstrating equivalence is the Two One-Sided Tests (TOST) procedure. In this framework, the null hypothesis is that the means differ by at least a clinically or practically relevant quantity (the equivalence margin). The alternative hypothesis, which the researcher aims to demonstrate, is that the difference between the two methods or products is smaller than this margin. Operationally, TOST at significance level α is equivalent to checking whether the (1 − 2α) confidence interval for the difference in means (the 90% interval when α = 0.05) lies entirely within the predefined equivalence interval [23] [21].
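A minimal sketch of the TOST logic for two independent groups is given below. It makes a deliberate simplification: a normal (z) approximation is used in place of the t distribution so that the example needs only the Python standard library, which is reasonable only for larger samples. The function name, the symmetric margin, and the data are hypothetical.

```python
from math import sqrt
from statistics import NormalDist, mean, stdev


def tost_large_sample(alt, ref, margin, alpha=0.05):
    """Large-sample TOST for the difference in means (alt - ref).

    Uses a normal approximation; for small samples a t-based
    version should be used instead.
    """
    diff = mean(alt) - mean(ref)
    se = sqrt(stdev(alt) ** 2 / len(alt) + stdev(ref) ** 2 / len(ref))
    std_normal = NormalDist()

    # Test 1: H0 diff <= -margin versus H1 diff > -margin
    p_lower = 1.0 - std_normal.cdf((diff + margin) / se)
    # Test 2: H0 diff >= +margin versus H1 diff < +margin
    p_upper = std_normal.cdf((diff - margin) / se)
    p_value = max(p_lower, p_upper)

    # Matching (1 - 2*alpha) confidence interval for the difference
    z = std_normal.inv_cdf(1.0 - alpha)
    ci = (diff - z * se, diff + z * se)

    return {"difference": diff, "ci": ci, "p_value": p_value,
            "equivalent": p_value < alpha}


# Hypothetical log10 CFU results from the two methods
alternative = [5.02, 4.98, 5.05, 4.95, 5.00, 5.03, 4.97, 5.01, 4.99, 5.00]
reference = [5.04, 5.00, 5.07, 4.97, 5.02, 5.05, 4.99, 5.03, 5.01, 5.02]

wide = tost_large_sample(alternative, reference, margin=0.3)
narrow = tost_large_sample(alternative, reference, margin=0.03)
print("margin 0.30:", wide["equivalent"], wide["ci"])
print("margin 0.03:", narrow["equivalent"], narrow["ci"])
```

The same data lead to opposite conclusions under the two margins, which illustrates why the equivalence margin has to be justified before the study is run.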

The Equivalence Testing Workflow: A Conceptual Diagram

The following diagram illustrates the logical flow and decision points in the Two One-Sided Tests (TOST) procedure for establishing equivalence.

Designing a Parallel Study for Method Comparability

A parallel study design, where the alternative and reference methods are applied to separate but comparable sample sets, is a common and powerful approach for demonstrating equivalence, particularly in microbiological method validation.

Key Experimental Protocol: Parallel Comparative Study

The following workflow outlines the key stages in executing a parallel comparative study for microbiological methods, from sample preparation to final statistical analysis.

Detailed Methodology:

- Sample Preparation and Inoculation: The product (e.g., an antacid oral suspension) is artificially contaminated with representative challenge microorganisms (e.g., C. albicans and A. brasiliensis) at three or more different bioburden levels, spanning low, medium, and high concentrations relevant to the specification limits. Using naturally contaminated samples is also an option if available and reproducible [24].

- Sample Allocation: The prepared samples are randomly assigned to two parallel groups. This randomization is crucial to eliminate bias and ensure the groups are comparable before the different methods are applied.

- Parallel Testing: One group of samples is tested using the alternative rapid method (e.g., Soleris Direct Yeast and Mold automated method), while the other, parallel group is tested using the reference pharmacopoeial method (e.g., Plate Count). The study by Ramírez et al. validated the Soleris method by establishing a relationship between detection time (from the alternative method) and colony-forming units (from the reference method) [24].

- Data Collection: For each sample, the relevant quantitative data is recorded. For the alternative method, this may be a detection time, a fluorescence signal, or a quantitative result from an instrument. For the reference method, this is typically a colony count (CFU).

- Statistical Analysis for Equivalence: The collected data is subjected to a suite of statistical analyses to demonstrate equivalence. Key parameters and tests include [24] [22]:

  - Probability of Detection (POD) & Limit of Detection (LOD): The POD is calculated across the different inoculation levels, and the LOD of the alternative method is shown to be statistically similar to the reference method using Fisher's exact test (P > 0.05) [24].

  - Linearity and Model Fit: A linear Poisson regression can be used to model the relationship between the output of the alternative method (e.g., detection time) and the reference method (CFU), with a high coefficient of determination (R² > 0.9025) indicating a strong relationship [24].

  - Analysis of Variance (ANOVA): A multifactorial ANOVA is used to assess the ruggedness of the method and to confirm that the results from the alternative method are in statistical agreement with the reference plating procedure [24].

  - Equivalence Testing (TOST): As described in the principles section, the TOST procedure is applied to confirm that the differences in performance between the two methods are within pre-defined, acceptable equivalence limits [21].
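Two of the tests listed above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the analysis used in the cited studies: the positive/negative counts, the log10 results, and the ±0.3 log10 equivalence limits are all hypothetical, and the TOST uses a normal approximation for brevity. With the small replicate numbers typical of validation work, a t-distribution-based TOST from a statistics package would be the more appropriate choice.

```python
from math import comb, sqrt
from statistics import NormalDist, mean, stdev


def fisher_exact_two_sided(a, b, c, d):
    """Two-sided Fisher's exact test for the 2x2 table [[a, b], [c, d]]."""
    row1, row2, col1 = a + b, c + d, a + c
    denom = comb(row1 + row2, col1)

    def prob(x):
        # Hypergeometric probability of x positives in the first row
        return comb(row1, x) * comb(row2, col1 - x) / denom

    p_obs = prob(a)
    lo, hi = max(0, col1 - row2), min(row1, col1)
    # Sum every table that is no more likely than the observed one
    p = sum(prob(x) for x in range(lo, hi + 1) if prob(x) <= p_obs * (1 + 1e-9))
    return min(1.0, p)


def tost_two_sample(x, y, lower, upper, alpha=0.05):
    """TOST on the difference of means (x - y), normal approximation."""
    diff = mean(x) - mean(y)
    se = sqrt(stdev(x) ** 2 / len(x) + stdev(y) ** 2 / len(y))
    z = NormalDist()
    p_lower = 1 - z.cdf((diff - lower) / se)  # H0: diff <= lower limit
    p_upper = z.cdf((diff - upper) / se)      # H0: diff >= upper limit
    p_value = max(p_lower, p_upper)
    return diff, p_value, p_value < alpha


# Hypothetical POD data at one low inoculum level (positives, negatives)
p_pod = fisher_exact_two_sided(14, 6, 12, 8)  # alternative row, reference row
print(f"Fisher's exact test P = {p_pod:.3f}")

# Hypothetical log10 results from the two parallel groups
alt = [2.01, 1.95, 2.08, 1.99, 2.04, 1.97]
ref = [2.00, 2.03, 1.96, 2.05, 1.98, 2.02]
diff, p_tost, equivalent = tost_two_sample(alt, ref, lower=-0.3, upper=0.3)
print(f"mean difference = {diff:.3f}, TOST P = {p_tost:.4f}, equivalent = {equivalent}")
```

The two tests answer different questions. A Fisher's exact P above 0.05 only means no difference in detection rate was found, whereas a TOST P below 0.05 is positive evidence that the mean difference lies inside the pre-defined limits.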

Quantitative Comparison of Rapid Microbiological Methods

The table below summarizes key validation data from published equivalence studies for various rapid microbiological methods, providing a benchmark for expected performance.

| Method / Technology | Target Microorganism | Matrix | Key Validation Parameters & Results | Reference Method |
|---|---|---|---|---|
| Soleris Direct Yeast & Mold [24] | Yeast (C. albicans) & Mold (A. brasiliensis) | Antacid Oral Suspension | Accuracy: >70%; Precision: CV <35%; Linearity: R² >0.9025; LOD: Statistically equivalent to the reference method (Fisher's exact test, P >0.05) | Plate Count |
| iQ-Check EB [25] | Enterobacteriaceae | Infant Formula & Cereals | Certificate issued for detection in test portions up to 375 grams. | Not Specified |
| Autof ms1000 [25] | Bacteria, Yeasts, Molds (Confirmation) | Isolated Colonies from Agar | Certificate issued for confirmation using MALDI-TOF mass spectrometry. | Reference Culture Methods |
| Petrifilm Bacillus cereus [25] | Bacillus cereus | Food & Animal Feed | Validation according to ISO 16140-2:2016. | ISO 7932:2004 |

Establishing Risk-Based Acceptance Criteria

Setting scientifically justified and risk-based acceptance criteria is the cornerstone of a successful equivalence study. The "equivalence window" used in the TOST procedure should not be arbitrary; it must reflect the potential impact on product quality and patient safety.

Acceptance Criteria Setting Framework

The process for defining these critical equivalence limits is outlined in the following diagram, which moves from regulatory and risk foundations to specific statistical inputs.

Detailed Methodology for Setting Acceptance Criteria:

- Risk-Based Categorization: The product or process parameter under investigation should be assigned a risk level (High, Medium, Low) based on its potential impact on product safety, efficacy, and quality. This is a fundamental principle of ICH Q9 (Quality Risk Management) [21].

- Define Practical Limits (Δ): The upper and lower practical limits (UPL and LPL) that define the equivalence window are set based on this risk categorization. These limits are often defined as a percentage of the specification tolerance (the range between the Upper and Lower Specification Limits, USL and LSL). For example [21]:

  - High Risk: Allow only small practical differences (e.g., 5-10% of tolerance).

  - Medium Risk: Allow moderate differences (e.g., 11-25% of tolerance).

  - Low Risk: Allow larger differences (e.g., 26-50% of tolerance).

- Impact on OOS Rates: A best practice is to evaluate the impact of a shift in the mean equal to the proposed equivalence limit on the potential Out-of-Specification (OOS) rates. Using Z-scores and calculating the area under the normal distribution curve can estimate the resulting parts per million (PPM) failure rate. The acceptance criteria should be chosen to minimize the risk of measurements falling outside the product specification [21].

- Protocol Finalization: The finalized equivalence limit (Δ) is documented in the comparability protocol, providing a pre-defined, scientifically justified target for the equivalence study.
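The link between the risk category, the equivalence limit Δ, and the resulting OOS rate can be made concrete with a short calculation. The sketch below is illustrative only: the specification limits, process mean, and standard deviation are hypothetical, the function names are invented for this example, and the fraction used for each risk level is simply the upper end of the example ranges quoted above. It assumes normally distributed results.

```python
from statistics import NormalDist

# Upper end of each example range in the text, as a fraction of tolerance
RISK_FRACTION = {"high": 0.10, "medium": 0.25, "low": 0.50}


def equivalence_limit(lsl, usl, risk):
    """Equivalence limit (delta) as a fraction of the tolerance USL - LSL."""
    return RISK_FRACTION[risk] * (usl - lsl)


def oos_ppm(mean, sd, lsl, usl):
    """Expected out-of-specification rate in parts per million."""
    dist = NormalDist(mean, sd)
    out_of_spec = dist.cdf(lsl) + (1.0 - dist.cdf(usl))
    return out_of_spec * 1e6


# Hypothetical specification and process values
lsl, usl = 90.0, 110.0
process_mean, process_sd = 100.0, 2.0

delta = equivalence_limit(lsl, usl, "high")
baseline_ppm = oos_ppm(process_mean, process_sd, lsl, usl)
# Worst case allowed by the equivalence window: mean shifted by the full delta
shifted_ppm = oos_ppm(process_mean + delta, process_sd, lsl, usl)

print(f"delta = {delta:.2f}")
print(f"baseline OOS = {baseline_ppm:.2f} PPM, shifted OOS = {shifted_ppm:.2f} PPM")
```

If the shifted PPM value is unacceptable for the product in question, the proposed Δ is too wide and should be tightened before the protocol is finalized.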

The Scientist's Toolkit: Essential Reagents and Materials

A successful equivalence study relies on high-quality, well-characterized materials. The following table details key research reagent solutions and their critical functions in microbiological method validation.

| Item / Reagent | Function in Equivalence Study |
|---|---|
| Challenge Strains | Representative microorganisms (e.g., C. albicans, A. brasiliensis) used to artificially inoculate the product; they must be well-characterized and relevant to the product's bioburden flora [24]. |
| Reference Culture Media | Standardized media prescribed by pharmacopoeial methods (e.g., Plate Count Agar) used for the reference method; essential for cultivating and enumerating microorganisms for comparison [24]. |
| Alternative Method Kits | Ready-to-use reagent kits or cassettes for rapid methods (e.g., Soleris vials, PCR detection kits); their lot-to-lot consistency is critical for method ruggedness [25] [24]. |
| Neutralizing Agents | Components in dilution buffers or media that inactivate antimicrobial properties of the product itself (e.g., in antacids, suspensions), ensuring accurate microbial recovery [24]. |
| Standard Reference Materials | Certified materials with known properties used to calibrate instruments and validate the accuracy of both the alternative and reference methods [22]. |
| Stressed Microorganisms | Challenge populations that have been subjected to sub-lethal stress (e.g., heat, desiccation) to simulate "real-world" injured microbes and challenge the method's detection capability more rigorously [6]. |

Designing a robust equivalence study is a multifaceted process that requires careful planning, from selecting an appropriate parallel design and applying the correct statistical tools like TOST, to justifying risk-based acceptance criteria. The ongoing revisions to key guidelines like Ph. Eur. Chapter 5.1.6 and AOAC's Appendix J highlight a collective industry move towards more streamlined, scientifically sound validation frameworks. By adhering to the structured protocols and principles outlined in this guide—incorporating parallel testing, rigorous statistical analysis for equivalence, and a risk-based approach—researchers and drug development professionals can generate defensible data. This evidence is crucial for demonstrating comparability, thereby facilitating the adoption of innovative methods and ensuring the ongoing quality and safety of pharmaceutical products.

In microbiological method validation research, demonstrating that a new candidate method is equivalent to an established comparative method is a fundamental requirement [26]. This process is critical for ensuring reliable and accurate analytical results, whether for new instrument verification, reagent lot changes, or transitioning to new analytical platforms [26] [27]. The validation framework centers on establishing key performance parameters that collectively prove the method's suitability for its intended purpose.

Within regulatory frameworks such as ICH Q2(R1) and FDA Guidance for Industry, five parameters form the cornerstone of method validation: Accuracy, Precision, Specificity, Limit of Detection (LOD), and Limit of Quantitation (LOQ) [27]. These parameters are assessed through structured comparison studies that evaluate whether a candidate method produces results equivalent to a validated reference method [26]. For microbiological methods specifically, this requires strict adherence to Good Laboratory Practices (GLP) and considerations for adequate repair of sublethal lesions in target organisms, which is particularly crucial when examining processed food samples with potentially low colonization levels [28].

This guide provides a detailed comparison of experimental approaches for establishing these key validation parameters, supported by experimental data and protocols tailored for microbiological applications.

Core Validation Parameters: Definitions and Experimental Design

Accuracy and Precision

Accuracy reflects the closeness of agreement between a measured value and its corresponding true value [27]. Precision describes the closeness of agreement between a series of measurements obtained from multiple sampling of the same homogeneous sample under prescribed conditions [27]. While accuracy measures correctness, precision measures reproducibility and consistency.

Table 1: Experimental Design for Assessing Accuracy and Precision

| Parameter | Experimental Approach | Data Analysis | Acceptance Criteria |
|---|---|---|---|
| Accuracy | Analysis of certified reference materials (CRMs) with known concentrations/spike recovery studies [27]. | Comparison of mean result to true value; calculation of percent recovery or bias [27]. | High accuracy indicates reliable results; recovery within 70-120% often acceptable depending on analyte [27]. |
| Precision | Repeated measurements (replicates) under specified conditions (repeatability, intermediate precision) [26] [27]. | Calculation of standard deviation (SD) and percent coefficient of variation (%CV) [26] [27]. | High precision indicates consistent results; %CV <10-15% often acceptable depending on analyte [27]. |

In comparison studies, accuracy is evaluated through mean difference or bias estimation between candidate and comparative methods [26]. For microbiological methods, this requires careful consideration of how replicates are handled: calculations should be based on the average of each sample's replicates, which reduces the error in the bias estimate [26].
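The calculations named in Table 1 are simple enough to show directly. The following sketch uses hypothetical counts and invented function names; it computes percent recovery against a known spike level, %CV across replicates, and the mean bias between two methods after first averaging each sample's replicates.

```python
from statistics import mean, stdev


def percent_recovery(measured, true_value):
    """Mean measured result as a percentage of the known (spiked) value."""
    return 100.0 * mean(measured) / true_value


def percent_cv(values):
    """Percent coefficient of variation: sample SD relative to the mean."""
    return 100.0 * stdev(values) / mean(values)


def mean_bias(candidate_reps, comparative_reps):
    """Mean difference (candidate - comparative), averaging replicates first."""
    diffs = [mean(c) - mean(r) for c, r in zip(candidate_reps, comparative_reps)]
    return mean(diffs)


# Hypothetical replicate counts (CFU) for a sample spiked at 100 CFU
counts = [92, 105, 98, 101, 95]
print(f"recovery = {percent_recovery(counts, 100):.1f}%")
print(f"%CV = {percent_cv(counts):.1f}%")

# Hypothetical duplicate results for two samples on each method
candidate = [[48, 52], [101, 99]]
comparative = [[50, 50], [104, 100]]
print(f"mean bias = {mean_bias(candidate, comparative):.1f} CFU")
```

Averaging replicates before taking the difference keeps within-sample noise from inflating the apparent bias between methods.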

Specificity

Specificity is the ability to assess unequivocally the analyte in the presence of components that may be expected to be present, such as impurities, matrix components, and other analytes [27]. For microbiological methods, this parameter is crucial in ensuring that detection and enumeration methods correctly identify target organisms without interference from background microflora.

Table 2: Experimental Approaches for Specificity Assessment

| Method Type | Experimental Design | Assessment Criteria |
|---|---|---|
| Detection Methods | Inoculate samples with target organism and potentially interfering microorganisms; assess detection capability in mixed cultures [28]. | Ability to detect target organism without false positives/negatives from interfering flora. |
| Enumeration Methods | Compare recovery of target organism from pure culture versus recovery in presence of background microflora [28]. | Percentage recovery compared to pure culture; minimal inhibition from competing organisms. |
| Identification Methods | Challenge method with closely related non-target organisms; assess misidentification rates [28]. | Correct identification rate; percentage of false positives. |

Specificity validation in food microbiology must account for the "adequacy of repair of sublethal lesions in target organisms," which is particularly important for methods detecting stressed cells in processed foods [28]. The experimental design should include samples with relevant background microflora typical of the food matrix being tested.

Limit of Detection (LOD) and Limit of Quantitation (LOQ)

The Limit of Detection (LOD) represents the lowest concentration of an analyte that can be detected, but not necessarily quantified, under stated experimental conditions. The Limit of Quantitation (LOQ) is the lowest concentration that can be quantified with acceptable accuracy and precision [27].

For microbiological methods, LOD and LOQ validation requires specialized approaches:

- LOD for qualitative microbiological methods: Determined by testing serial dilutions of inoculum with known low levels of target microorganisms. The detection probability should be ≥95% at the claimed detection limit [28].

- LOQ for quantitative microbiological methods: Established by demonstrating acceptable accuracy (e.g., 70-125% recovery) and precision (e.g., %CV <35%) at the claimed quantitation limit across multiple replicates [27].
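As a rough illustration of the two criteria above, the sketch below finds the lowest inoculum level at which the detection probability is at least 95% (and stays so at every higher level tested), then checks the example LOQ criteria of 70-125% recovery and %CV below 35%. The inoculum levels, replicate counts, and function names are hypothetical. A formal LOD estimate would normally fit a POD model rather than read a threshold from a table.

```python
from statistics import mean, stdev


def lowest_level_meeting_pod(results, threshold=0.95):
    """results maps inoculum level -> (positives, replicates).

    Walks from the highest level downward and stops at the first level
    whose POD falls below the threshold.
    """
    lod = None
    for level in sorted(results, reverse=True):
        positives, replicates = results[level]
        if positives / replicates < threshold:
            break
        lod = level
    return lod


def loq_acceptable(counts, nominal, recovery_range=(70.0, 125.0), max_cv=35.0):
    """Check the example LOQ criteria at one claimed quantitation level."""
    recovery = 100.0 * mean(counts) / nominal
    cv = 100.0 * stdev(counts) / mean(counts)
    in_range = recovery_range[0] <= recovery <= recovery_range[1]
    return in_range and cv < max_cv


# Hypothetical qualitative results: CFU per test portion -> (positives, n)
pod_data = {0.5: (9, 20), 1: (15, 20), 5: (19, 20), 10: (20, 20)}
print("claimed LOD level:", lowest_level_meeting_pod(pod_data))

# Hypothetical replicate counts at a claimed LOQ of 10 CFU
print("LOQ criteria met:", loq_acceptable([8, 12, 9, 11, 10], nominal=10))
```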

Comparative Experimental Data: Method Performance Assessment

Quantitative Comparison of Method Performance

Validation studies for demonstrating equivalence require careful planning of comparison pairs—selecting candidate instruments/methods against comparative (reference) instruments/methods [26]. The statistical approaches for comparing these methods depend on the nature of the data and the relationship between methods.

Table 3: Statistical Tools for Method Comparison Studies

| Statistical Method | Application in Method Validation | Data Requirements |
|---|---|---|
| T-Test | Comparing means between two groups/methods [29]. | Normal distribution, equal variances between groups. |
| ANOVA | Comparing means across multiple groups/methods simultaneously [29]. | Normal distribution, homogeneity of variances. |
| Regression Analysis | Evaluating the relationship between candidate and comparative methods; estimating bias as a function of concentration [26] [29]. | Data points spread throughout measuring range. |
| Bland-Altman Difference | Evaluating bias when comparative method is not a reference method [26]. | Paired measurements across sample concentration range. |

When the candidate method measures the analyte differently than the comparative method, the difference between results is often not constant across the concentration range [26]. In these cases, linear regression analysis is used to estimate bias as a function of concentration, providing the best possible estimation for bias [26].
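A short sketch shows how regression turns paired results into a concentration-dependent bias estimate, with a Bland-Altman summary alongside for comparison. The paired log10 values and function names are hypothetical. Ordinary least squares is used here for simplicity, which assumes the comparative method is essentially error-free; when both methods carry comparable measurement error, an errors-in-variables approach such as Deming regression may be more appropriate.

```python
from statistics import mean, stdev


def fit_line(x, y):
    """Ordinary least-squares fit y = slope * x + intercept, plus R^2."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    syy = sum((yi - my) ** 2 for yi in y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    r_squared = sxy ** 2 / (sxx * syy)
    return slope, intercept, r_squared


def bias_at(concentration, slope, intercept):
    """Predicted candidate result minus the comparative value."""
    return slope * concentration + intercept - concentration


def bland_altman(x, y):
    """Mean difference (y - x) and approximate 95% limits of agreement."""
    diffs = [yi - xi for xi, yi in zip(x, y)]
    md, sd = mean(diffs), stdev(diffs)
    return md, md - 1.96 * sd, md + 1.96 * sd


# Hypothetical paired results (log10 CFU): comparative vs. candidate method
comparative = [1.0, 2.0, 3.0, 4.0, 5.0]
candidate = [0.95, 2.02, 3.08, 4.21, 5.27]

slope, intercept, r2 = fit_line(comparative, candidate)
print(f"slope = {slope:.3f}, intercept = {intercept:.3f}, R^2 = {r2:.4f}")
for conc in (1.0, 3.0, 5.0):
    print(f"estimated bias at {conc} log10 = {bias_at(conc, slope, intercept):+.3f}")

md, lower, upper = bland_altman(comparative, candidate)
print(f"Bland-Altman mean difference = {md:+.3f} (limits {lower:+.3f} to {upper:+.3f})")
```

A slope different from 1 means the bias grows or shrinks with concentration. A single mean difference would hide that pattern, which is why regression is preferred in this situation.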

Experimental Protocols for Key Validation Experiments