Comparative Limit of Detection in Microbiological Assays: A Guide for Method Selection, Optimization, and Validation



Abstract

This article provides a comprehensive analysis of Limit of Detection (LOD) studies for microbiological assays, addressing the critical needs of researchers, scientists, and drug development professionals. It explores the foundational principles defining LOD and its impact on diagnostic sensitivity. The scope covers a wide array of traditional and emerging methodologies, including molecular, serological, and microfluidic platforms, highlighting their comparative LOD performance. The content further delves into practical strategies for troubleshooting and optimizing assay precision, and establishes a rigorous framework for the validation and cross-platform comparison of LOD, essential for ensuring reliable data in research, clinical diagnostics, and antimicrobial stewardship.

Understanding Limit of Detection: Core Concepts and Critical Importance in Microbiology

In analytical microbiology and pharmaceutical development, the Limit of Detection (LOD) and Limit of Quantification (LOQ) are fundamental figures of merit that define the operational boundaries of an analytical procedure. The LOD represents the lowest concentration of an analyte in a sample that can be reliably detected, though not necessarily quantified as an exact value, while the LOQ is the lowest concentration that can be quantitatively determined with acceptable precision and accuracy under stated experimental conditions [1]. These parameters are particularly crucial in microbiological assays where natural microbial variability, complex matrices, and the living nature of the analytes present unique challenges not encountered in chemical analysis [1].

Understanding these limits is essential for researchers and drug development professionals when selecting appropriate methods for quality control, environmental monitoring, and sterility testing. Proper determination of LOD and LOQ ensures that analytical methods are fit-for-purpose, providing reliable data for critical decision-making in regulated environments. This guide provides a comprehensive comparison of how these fundamental metrics are defined, determined, and applied across different microbiological assay platforms, supported by experimental data and practical protocols.

Theoretical Foundations and Definitions

Conceptual Framework

The conceptual relationship between blank measurements, LOD, and LOQ can be visualized through their statistical distributions, which is fundamental to understanding how these limits are derived and interpreted in analytical practice.

Regulatory Definitions Across Guidelines

Different regulatory bodies provide specific definitions for LOD and LOQ, though these often share common principles while employing varying terminology.

Table 1: Regulatory Definitions of LOD and LOQ

| Regulatory Body | Limit of Detection (LOD) | Limit of Quantification (LOQ) |

|---|---|---|

| USP <1223> | "The lowest concentration of microorganisms in a test sample that can be detected" [2] | "The lowest number of microorganisms in a test sample that can be enumerated with acceptable accuracy and precision" [2] |

| PDA Technical Report TR33 | "The lowest concentration of microorganisms in a test sample that can be detected" [2] | "The lowest number of microorganisms in a test sample that can be enumerated with acceptable accuracy and precision" [2] |

| European Pharmacopoeia | Defined in E.P. 5.1.6 as part of validation process for alternative microbiological methods [2] | Determined through validation tests with specified confidence levels [2] |

| ICH Q2(R2) | "The lowest concentration of an analyte in a sample that can be reliably detected" [1] | "The lowest concentration of an analyte that can be quantitatively determined with acceptable precision and accuracy" [1] |

Experimental Determination Methods

Statistical Approaches for LOD/LOQ Determination

The determination of LOD and LOQ in microbiological assays requires specialized statistical approaches that account for the unique characteristics of microbial data, including high variability and censored observations (results below detection or quantification limits).

Table 2: Methods for Determining LOD and LOQ in Microbiological Assays

| Method | Approach | Application Context | Key Considerations |

|---|---|---|---|

| Signal-to-Noise Ratio | LOD = 3×(σ/S); LOQ = 10×(σ/S) where σ is blank standard deviation and S is signal intensity [3] | Instrument-based methods (e.g., ATP bioburden, molecular methods) | Requires multiple blank measurements; assumes normal distribution of noise [3] |

| Maximum Likelihood Estimation (MLE) | Fits parametric distribution (typically lognormal) to censored data to estimate parameters [4] | Food microbiology with heavily censored data; quantitative risk assessment | Handles data with >90% below LOQ; implemented in specialized tools like Microbial-MLE [4] |

| Extinction Dilution Testing | Assesses method linearity, LOD, and LOQ through serial dilutions [5] | Culture-based methods; method validation studies | Determines both LOD (lowest detected) and LOQ (lowest quantified with confidence) [5] |

| Poisson Confidence Interval | Uses Poisson statistics and probability intervals for microbial counts [2] | Plate count methods; low microbial concentrations | Accounts for discrete nature of colony counts; appropriate for low count ranges [2] |
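
The signal-to-noise row of Table 2 can be illustrated with a short calculation. The sketch below is a minimal example, not a validated procedure: it assumes that S is a calibration sensitivity (signal change per unit concentration), which is one common reading of the formula, and the blank readings, units, and slope are hypothetical values chosen for illustration.

```python
from statistics import mean, stdev


def lod_loq_from_blanks(blank_signals, sensitivity):
    """Return (LOD, LOQ) in concentration units from replicate blanks.

    blank_signals: replicate instrument readings of a blank (no analyte).
    sensitivity:   signal change per unit concentration (S in Table 2),
                   assumed here to be a calibration slope.
    """
    if len(blank_signals) < 2:
        raise ValueError("need at least two blank replicates")
    if sensitivity <= 0:
        raise ValueError("sensitivity must be positive")
    sigma = stdev(blank_signals)      # sample standard deviation of the blank
    lod = 3 * sigma / sensitivity     # LOD = 3 x (sigma / S)
    loq = 10 * sigma / sensitivity    # LOQ = 10 x (sigma / S)
    return lod, loq


# Hypothetical luminometer blanks (relative light units, RLU) and a
# hypothetical slope of 50 RLU per pg ATP/mL.
blanks = [12.0, 15.0, 11.0, 14.0, 13.0, 12.0, 16.0, 13.0, 14.0, 10.0]
lod, loq = lod_loq_from_blanks(blanks, sensitivity=50.0)
print(f"blank mean = {mean(blanks):.1f} RLU")
print(f"LOD = {lod:.3f} pg ATP/mL, LOQ = {loq:.3f} pg ATP/mL")
```

Because both limits scale with the blank standard deviation, the number of blank replicates and the normality of the noise (noted in the table) directly affect how stable the estimates are.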

Practical Workflow for LOD/LOQ Determination

A standardized experimental approach for determining LOD and LOQ ensures consistent and reliable results across different laboratories and methodologies. The following workflow illustrates the key stages in this determination process.

Comparative Analysis of Microbiological Methods

Method Performance Comparison

Different microbiological methods exhibit varying capabilities for detection and quantification, influenced by their underlying principles, amplification steps, and detection mechanisms.

Table 3: LOD and LOQ Comparison Across Microbiological Methods

| Method Type | Typical LOD | Typical LOQ | Key Applications | Method-Specific Considerations |

|---|---|---|---|---|

| Qualitative Culture Methods | 1 CFU per test portion (25-1500g) [6] | Not applicable (non-quantitative) | Pathogen detection (Salmonella, Listeria, E. coli O157:H7) [6] | Includes enrichment amplification; detects presence but not quantity [6] |

| Quantitative Plate Count | 10-100 CFU/g [6] | 10-100 CFU/g (depending on countable range) [6] | Aerobic plate count, indicator organisms, specific pathogens [6] | Limited by countable range (25-250 colonies); requires serial dilution [6] |

| Most Probable Number (MPN) | 3 MPN/g [6] | 3 MPN/g [6] | Low-level contamination; indicator organisms | Statistical estimate with wide confidence intervals [6] |

| ATP Bioburden (ASTM E2694) | Varies with sample volume and reagent concentration [5] | Varies; can be lower than culture methods for some samples [5] | Metalworking fluid monitoring, condition assessment | Sensitivity increases with filtered volume and reagent concentration [5] |

| Membrane Filtration Culture | 0.001 CFU/mL (with 1000mL sample) [5] | 0.03 CFU/mL (with 1000mL sample) [5] | Low bioburden testing, sterile products | Sensitivity depends on filtration volume; increases with larger volumes [5] |
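
The membrane filtration figures in Table 3 follow from simple arithmetic: a single colony recovered from the filtered volume sets the LOD, and a minimum countable number of colonies sets the LOQ. The sketch below reproduces the 1000 mL values under the assumption that the LOQ corresponds to 30 colonies per filter, a threshold inferred here from the table's 0.03 CFU/mL figure rather than stated in the source.

```python
def filtration_limits(volume_ml, min_detectable_cfu=1, min_countable_cfu=30):
    """Return (LOD, LOQ) in CFU/mL for a membrane filtration test.

    min_countable_cfu=30 is an assumption inferred from Table 3
    (0.03 CFU/mL at 1000 mL), not a value given by the source.
    """
    if volume_ml <= 0:
        raise ValueError("filtered volume must be positive")
    lod = min_detectable_cfu / volume_ml
    loq = min_countable_cfu / volume_ml
    return lod, loq


# Sensitivity improves in proportion to the filtered volume.
for volume in (10, 100, 1000):
    lod, loq = filtration_limits(volume)
    print(f"{volume:>5} mL filtered: LOD = {lod:g} CFU/mL, LOQ = {loq:g} CFU/mL")
```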

Agreement Between Different Methodologies

When comparing alternative methods to reference culture methods, agreement studies provide valuable insights into practical performance. A 2015 study comparing ATP-bioburden (ASTM E2694) with culturable bacterial bioburden demonstrated 81% agreement between the two parameters, which is considered excellent agreement as it exceeds the generally accepted threshold of >70% [5]. This level of agreement supports the use of rapid methods like ATP testing as proxies for traditional culture methods in certain applications, though the ultimate decision depends on specific monitoring objectives and regulatory requirements [5].
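
Percent agreement of the kind quoted above can be computed from paired, categorized results. The following is a minimal sketch that assumes each sample has been assigned to a bioburden category by both methods; the category labels and the ten paired results are hypothetical and do not come from the cited study.

```python
def percent_agreement(method_a, method_b):
    """Percentage of samples that both methods place in the same category."""
    if len(method_a) != len(method_b) or not method_a:
        raise ValueError("need two non-empty result lists of equal length")
    matches = sum(a == b for a, b in zip(method_a, method_b))
    return 100.0 * matches / len(method_a)


# Hypothetical paired classifications for ten samples.
atp_results     = ["low", "low", "med", "high", "med", "low", "high", "med", "low", "high"]
culture_results = ["low", "low", "med", "high", "low", "low", "high", "high", "low", "high"]

agreement = percent_agreement(atp_results, culture_results)
print(f"agreement = {agreement:.0f}%")  # compared against the >70% threshold cited above
```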

Advanced Applications and Research Developments

Handling Censored Data with Maximum Likelihood Estimation

In food microbiology and environmental monitoring, datasets often contain a high percentage of non-detectable values (results below LOD or LOQ), creating censored data that presents analytical challenges. Traditional approaches of ignoring these values or substituting fixed values can lead to overestimation or underestimation of microbial concentrations [4]. The Microbial-Maximum Likelihood Estimation (MLE) tool provides a statistical approach to address this issue by fitting a parametric distribution (typically log-normal) to the observed data, including both detectable and non-detectable values [4]. This approach is particularly valuable for quantitative microbial risk assessment (QMRA), where accurate estimation of low-level contamination is crucial for public health protection.

The Microbial-MLE tool, implemented as an Excel spreadsheet with Solver add-in, offers four sub-tools (QN1, QN2, QN3, QN4) categorized according to the type of microbiological enumeration test and the nature of the data (quantitative or semi-quantitative, with or without values below LOQ) [4]. This user-friendly implementation makes advanced statistical methods accessible to microbiologists without requiring deep mathematical expertise, facilitating more accurate data analysis in food safety and pharmaceutical manufacturing contexts.
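
The idea behind censored-data MLE can be sketched in a few lines. The example below is not the Microbial-MLE tool and does not reproduce its QN1 to QN4 sub-tools; it is a minimal illustration that assumes a log-normal model, left-censoring at a known limit, and a simple shrinking grid search in place of Excel Solver. All function names and data values are hypothetical.

```python
import math


def norm_cdf(z):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def neg_log_likelihood(mu, sigma, log_obs, log_limits):
    """Negative log-likelihood on the log10 scale (constant terms dropped)."""
    nll = 0.0
    for y in log_obs:                      # quantified results: normal density
        z = (y - mu) / sigma
        nll += math.log(sigma) + 0.5 * z * z
    for limit in log_limits:               # censored results: P(value < limit)
        p = norm_cdf((limit - mu) / sigma)
        nll -= math.log(max(p, 1e-300))    # guard against log(0)
    return nll


def fit_censored_lognormal(observed, censor_limits, rounds=40, grid=11):
    """Estimate (mean, sd) of log10 concentration from left-censored data.

    observed:      quantified concentrations (e.g. CFU/g).
    censor_limits: one reporting limit per result recorded as "< limit".
    """
    log_obs = [math.log10(x) for x in observed]
    log_limits = [math.log10(x) for x in censor_limits]
    best_mu = sum(log_obs) / len(log_obs)  # start at the naive mean
    best_ls = 0.0                          # ln(sigma); start at sigma = 1
    half_mu, half_ls = 3.0, 3.0            # search half-widths
    for _ in range(rounds):
        candidates = []
        for i in range(grid):
            mu = best_mu + half_mu * (2 * i / (grid - 1) - 1)
            for j in range(grid):
                ls = best_ls + half_ls * (2 * j / (grid - 1) - 1)
                nll = neg_log_likelihood(mu, math.exp(ls), log_obs, log_limits)
                candidates.append((nll, mu, ls))
        _, best_mu, best_ls = min(candidates)
        half_mu *= 0.7                     # shrink the window around the best point
        half_ls *= 0.7
    return best_mu, math.exp(best_ls)


# Hypothetical data set: four quantified counts and six results below LOQ = 100 CFU/g.
quantified = [120, 250, 400, 900]
below_loq = [100] * 6
mu_hat, sigma_hat = fit_censored_lognormal(quantified, below_loq)
naive_mean = sum(math.log10(x) for x in quantified) / len(quantified)
print(f"censored MLE: mean = {mu_hat:.2f} log10 CFU/g, sd = {sigma_hat:.2f}")
print(f"naive mean of quantified results only = {naive_mean:.2f} log10 CFU/g")
```

The comparison printed at the end shows the point made in the text: discarding the non-detects biases the mean upward, whereas the censored likelihood uses the information that those samples fell below the limit.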

Multidimensional Data Analysis in Novel Platforms

Emerging analytical platforms like electronic noses (eNoses) present unique challenges for LOD and LOQ determination due to their multidimensional output data. Unlike traditional methods that generate a single measurement per sample (zeroth-order data), eNoses produce multiple sensor responses for each sample (first-order data) [7]. Recent research has adapted traditional LOD/LOQ approaches for these complex systems using multivariate data analysis techniques including principal component analysis (PCA), principal component regression (PCR), and partial least squares regression (PLSR) [7].

Application of these methods to beer maturation monitoring demonstrated that different calculation approaches can yield LOD estimates varying by up to a factor of eight for compounds like acetaldehyde, diacetyl, dimethyl sulfide, ethyl acetate, isobutanol, and 2-phenylethanol [7]. For diacetyl specifically, the calculated LOD and LOQ were sufficiently low to suggest potential for process monitoring, highlighting the importance of compound-specific detection limit assessment in complex matrices [7].

Essential Research Reagent Solutions

Table 4: Key Research Reagents and Materials for LOD/LOQ Studies

| Reagent/Material | Function in LOD/LOQ Studies | Application Context |

|---|---|---|

| Certified Reference Materials (CRMs) | Establish calibration curves and determine method accuracy [1] | Chemical and microbiological method validation |

| Selective Growth Media | Enable isolation and quantification of target microorganisms [6] | Culture-based methods; specificity testing |

| Luciferin-Luciferase Reagents | Generate bioluminescent signal proportional to ATP concentration [5] | ATP bioburden methods (ASTM D4012, D7687, E2694) |

| Neutralizing Agents | Inactivate antimicrobial compounds in samples [1] | Bioburden testing of preservative-containing products |

| Matrix-Matched Standards | Account for matrix effects in complex samples [3] | Food, environmental, and biological sample analysis |

| Serial Dilution Buffers | Prepare logarithmic dilutions for extinction dilution studies [5] | Determination of method linear range, LOD, and LOQ |

| Membrane Filters | Concentrate microorganisms from large sample volumes [5] | Enhancing sensitivity of detection methods |

The determination of LOD and LOQ represents a critical component in the validation of microbiological assays, providing essential information about the operational limits and sensitivity of analytical methods. While fundamental definitions are consistent across methodologies, the practical approaches to determining these parameters must be adapted to the specific characteristics of each technology, accounting for factors such as microbial variability, matrix effects, and data structure.

Traditional culture methods, rapid molecular methods, and emerging platforms like eNoses each present unique considerations for detection and quantification limit assessment. Statistical approaches ranging from simple signal-to-noise ratios to advanced maximum likelihood estimation for censored data enable researchers to accurately characterize method performance across these diverse platforms. As microbiological analytical techniques continue to evolve, with increasing emphasis on rapid results and complex data outputs, the fundamental principles of LOD and LOQ determination remain essential for ensuring data reliability in research, pharmaceutical development, and quality control applications.

The Limit of Detection (LOD) is a fundamental analytical parameter defining the lowest concentration of an analyte that can be reliably detected by an analytical method. In microbiological diagnostics, LOD represents the minimal number of microbial organisms or viral particles that a test can identify with reasonable certainty, typically expressed as a concentration such as international units per milliliter (IU/mL) or colony-forming units per milliliter (CFU/mL) [8] [9]. This parameter is distinct from the Limit of Quantification (LOQ), which represents the lowest concentration that can be measured with acceptable precision and accuracy [9]. Understanding these concepts is crucial, as LOD determines whether a pathogen is merely detectable, while LOQ indicates whether it can be precisely quantified for clinical monitoring purposes.

The clinical significance of LOD extends across diagnostic accuracy, therapeutic decision-making, and public health surveillance. In infectious disease management, lower LOD values enable earlier detection of pathogens, facilitating timely intervention before extensive replication or transmission occurs. The precision of LOD determination directly impacts diagnostic reliability, particularly for infections with low microbial loads or during early stages of disease when prompt treatment is most effective [8] [10]. As antimicrobial resistance continues to escalate globally, claiming an estimated 700,000 lives annually with projections reaching 10 million by 2050, the imperative for highly sensitive diagnostic tools has never been more pressing [11] [12].

This analysis examines the critical role of LOD through a comprehensive evaluation of current microbiological assays, their performance characteristics in clinical settings, and their broader implications for antimicrobial stewardship and public health outcomes.

Comparative Performance of Diagnostic Assays: The Critical Role of LOD

HDV-RNA Assay Comparison

A recent national quality control multicenter study evaluating Hepatitis D Virus (HDV) RNA quantification assays revealed substantial variability in LOD performance across commercially available platforms. This comparative investigation assessed nine different assay systems across 30 centers, employing standardized panels including serial dilutions of WHO/HDV standard and clinical samples [8].

Table 1: Comparison of LOD and Performance Characteristics Across HDV-RNA Assays

| Assay System | 95% LOD (IU/mL) | Accuracy (log10 IU/mL difference) | Precision (Intra-run CV) | Linearity (R²) |

|---|---|---|---|---|

| AltoStar | 3 | <0.5 | NR | >0.90 |

| RealStar | 10 (min-max: 3-316) | <0.5 | <20% | >0.90 |

| Bosphore-on-InGenius | 10 | NR | <20% | >0.85 (<1000 IU/mL) |

| RoboGene | 31 (3-316) | <0.5 | NR | >0.90 |

| Nuclear-Laser-Medicine | 31 | <0.5 | NR | >0.90 |

| EuroBioplex | 100 (100-316) | <0.5 | <20% | >0.90 |

NR = Not Reported

The investigation demonstrated that AltoStar exhibited the highest sensitivity with a 95% LOD of 3 IU/mL, followed closely by RealStar and Bosphore-on-InGenius at 10 IU/mL [8]. This variability in LOD (ranging from 3 to 316 IU/mL across different platforms and centers) highlights significant inter-assay and intra-assay heterogeneity that could substantially impact clinical management. Particularly concerning was the finding that some assays showed greater than 1 log10 IU/mL underestimations of viral load, which could lead to inappropriate clinical decisions regarding therapy initiation or modification [8].
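
The accuracy column and the underestimation finding are both expressed as differences on a log10 scale. As a minimal illustration, the sketch below computes that difference for a measured versus expected viral load; the two example values and the 0.5 and 1.0 log10 cut-offs used for labeling are taken from the thresholds mentioned in this section, while the function name and sample numbers are hypothetical.

```python
import math


def log10_difference(measured_iu_ml, expected_iu_ml):
    """Measured minus expected viral load, in log10 IU/mL."""
    return math.log10(measured_iu_ml) - math.log10(expected_iu_ml)


def classify_bias(diff):
    """Label a log10 difference using the thresholds discussed in the text."""
    if abs(diff) < 0.5:
        return "within 0.5 log10"
    if diff <= -1.0:
        return "underestimation of 1 log10 or more"
    return "outside 0.5 log10"


# Hypothetical results for a panel member with an expected load of 1000 IU/mL.
for measured in (800.0, 80.0):
    diff = log10_difference(measured, 1000.0)
    print(f"measured {measured:g} IU/mL: {diff:+.2f} log10 ({classify_bias(diff)})")
```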

The study further revealed that for viral loads below 1000 IU/mL, only four assays (Bosphore-on-InGenius, AltoStar, RealStar, and RoboGene) maintained acceptable linearity (R² > 0.85), emphasizing the particular challenges of reliable quantification at low viral concentrations [8]. This finding has direct implications for monitoring treatment response, where precise quantification of diminishing viral loads is essential for assessing therapeutic efficacy.

Automated High-Throughput Molecular Systems

Comprehensive performance evaluation of high-throughput automated nucleic acid detection systems demonstrates the advancements in LOD consistency achievable through automation. One study of the PANA HM9000 Automated Molecular Detection System reported LOD values of 10 IU/mL for both EBV DNA and HCMV DNA, with exceptional precision (coefficients of variation below 5%) and excellent linearity (correlation coefficient ≥ 0.98) across a wide concentration range [10].
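
The two acceptance figures quoted here, a coefficient of variation below 5% and a correlation coefficient of at least 0.98, are straightforward to compute from replicate and dilution-series data. The sketch below shows one way to do so; the replicate values and dilution series are hypothetical, and the evaluation in [10] may have used different calculations or acceptance rules.

```python
import math
from statistics import mean, stdev


def cv_percent(values):
    """Coefficient of variation (%) of replicate measurements."""
    return 100.0 * stdev(values) / mean(values)


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical replicate results (log10 IU/mL) for one low-positive control.
replicates = [9.8, 10.1, 10.0, 10.2, 9.9]
print(f"CV = {cv_percent(replicates):.2f}%")

# Hypothetical dilution series: expected vs measured log10 IU/mL.
expected = [2.0, 3.0, 4.0, 5.0, 6.0]
measured = [2.1, 2.9, 4.0, 5.1, 5.9]
print(f"r = {pearson_r(expected, measured):.4f}")
```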

This system integrated all critical PCR workflow functions—including sample preprocessing, nucleic acid extraction, PCR setup, and amplification detection—into a fully automated, closed-loop platform [10]. The implementation of advanced biosafety mechanisms including physical partitioning, gradient negative pressure control, HEPA filtration, and UV disinfection enabled contamination-free operation even under continuous high-throughput conditions, addressing key variables that can affect LOD reliability in clinical laboratory settings [10].

The validation followed CLSI guidelines (EP05, EP06, EP07, EP09, EP12, EP17, and EP47) and included a 168-hour continuous operation stress test, processing approximately 2000 samples daily to verify consistent performance under sustained high-throughput conditions [10]. Such rigorous validation approaches provide a model for standardized evaluation of LOD claims across diagnostic platforms.

Methodological Frameworks for LOD Determination

Comparative Approaches to LOD Calculation

The determination of LOD varies significantly depending on methodological approach, which subsequently impacts the reported sensitivity values. A comparative investigation of different LOD calculation methods for HPLC-based analysis found substantial variation in results depending on the methodology employed [13]. The signal-to-noise ratio (S/N) method provided the lowest LOD and LOQ values, while the standard deviation of the response and slope (SDR) method yielded the highest values [13]. This methodological variability underscores the importance of standardizing LOD determination protocols to enable meaningful cross-platform comparisons.
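
For comparison with the signal-to-noise approach, the standard deviation of the response and slope (SDR) method is commonly written as LOD = 3.3σ/S and LOQ = 10σ/S, where σ is the residual standard deviation of a calibration line and S is its slope. The sketch below implements that common formulation; whether the study in [13] used exactly these factors is an assumption, and the calibration data are hypothetical.

```python
import math


def sdr_lod_loq(concentrations, responses):
    """LOD and LOQ from a linear calibration (3.3*sigma/slope, 10*sigma/slope)."""
    n = len(concentrations)
    if n < 3 or n != len(responses):
        raise ValueError("need at least three paired calibration points")
    mx = sum(concentrations) / n
    my = sum(responses) / n
    sxx = sum((x - mx) ** 2 for x in concentrations)
    sxy = sum((x - mx) * (y - my) for x, y in zip(concentrations, responses))
    slope = sxy / sxx
    intercept = my - slope * mx
    # Residual standard deviation with n - 2 degrees of freedom
    ss_res = sum((y - (intercept + slope * x)) ** 2
                 for x, y in zip(concentrations, responses))
    sigma = math.sqrt(ss_res / (n - 2))
    return 3.3 * sigma / slope, 10 * sigma / slope


# Hypothetical calibration: concentration (arbitrary units) vs detector response.
conc = [1, 2, 3, 4, 5]
resp = [2.1, 3.9, 6.2, 7.8, 10.1]
lod, loq = sdr_lod_loq(conc, resp)
print(f"SDR method: LOD = {lod:.3f}, LOQ = {loq:.3f} (concentration units)")
```

Because this estimate depends on the scatter of the whole calibration line rather than on baseline noise alone, it can differ noticeably from a signal-to-noise estimate on the same instrument, which is consistent with the variation between methods described above.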

Following established regulatory criteria, such as FDA guidelines for chromatographic-based pharmaceutical analysis, improves the accuracy and consistency of LOD determination [13]. In clinical microbiology, adherence to CLSI protocols provides a structured framework for validating analytical sensitivity, with specific guidelines (EP05, EP06, EP07, EP09, EP12, EP17, and EP47) offering methodological rigor and clinical relevance for assay validation [10].

Standardized Experimental Protocols for LOD Validation

Robust LOD validation requires systematic experimental approaches. The following workflow outlines a comprehensive protocol adapted from CLSI guidelines for determining and validating LOD in microbiological assays:

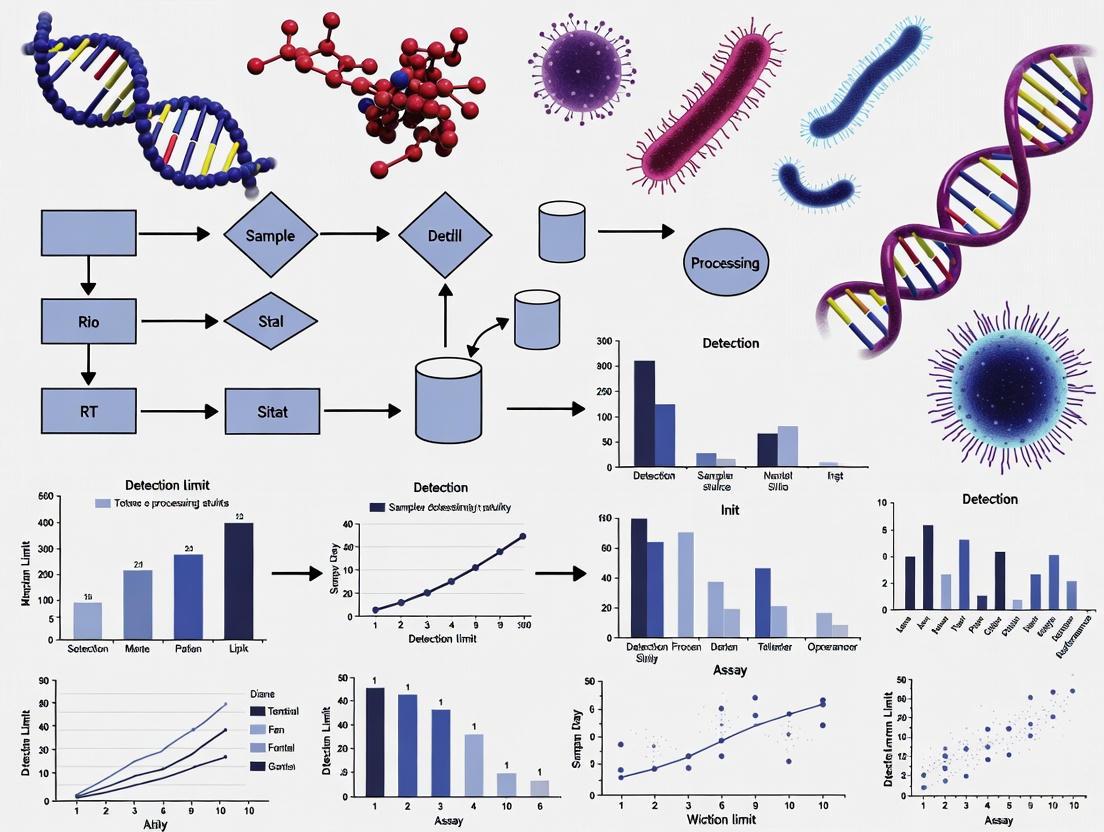

Figure 1: Experimental LOD Validation Workflow

Key components of LOD validation include:

Sample Panel Preparation: Utilize standardized reference materials (e.g., WHO International Standards) serially diluted in appropriate negative matrices to create concentration panels spanning the expected detection limit [8] [10].

Low Concentration Testing: Perform multiple replicates (typically 20-60 measurements) at critical concentrations near the expected LOD to determine the concentration at which ≥95% of tests return positive results [8].

Precision Assessment: Evaluate both intra-assay and inter-assay precision through repeated testing across different lots, operators, and instruments to determine coefficients of variation [8] [10].

Interference Testing: Assess potential cross-reactivity with related organisms or substances that may be present in clinical samples to ensure assay specificity [10].
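
The low concentration testing step above can be sketched as a hit-rate calculation: for each panel concentration, count the positive replicates and report the lowest level that still meets the 95% criterion. This is a simplified empirical approach under stated assumptions; probit regression across the dilution series is a frequently used alternative, and the replicate counts below are hypothetical.

```python
def empirical_lod95(hit_table, required_rate=0.95):
    """Lowest concentration whose hit rate meets the required rate.

    hit_table: {concentration: (positives, replicates)}.
    Concentrations are scanned from high to low and the scan stops at the
    first level that fails, so a LOD is never claimed below a failing level.
    Returns None if even the highest concentration fails.
    """
    lod = None
    for conc in sorted(hit_table, reverse=True):
        positives, replicates = hit_table[conc]
        if positives / replicates >= required_rate:
            lod = conc
        else:
            break
    return lod


# Hypothetical panel: concentration in IU/mL -> (positive results, replicates tested)
panel = {
    100: (20, 20),
    31.6: (20, 20),
    10: (19, 20),
    3.16: (15, 20),
    1: (6, 20),
}
print(f"empirical 95% LOD = {empirical_lod95(panel)} IU/mL")
```

With 20 replicates per level, a single negative result still satisfies the 95% criterion while two do not, which is one reason the replicate count (20 to 60 in the text) strongly influences the reported LOD.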

The HDV-RNA study exemplifies this approach, employing two panels: Panel A comprised 8 serial dilutions of WHO/HDV standard (range: 0.5-5.0 log10 IU/mL), while Panel B included 20 clinical samples (range: 0.5-6.0 log10 IU/mL) tested across 30 centers [8]. This design enabled comprehensive assessment of both analytical and clinical sensitivity across a biologically relevant concentration range.

LOD in Antimicrobial Susceptibility Testing and Stewardship

Diagnostic Stewardship and Antimicrobial Resistance

The critical role of LOD in antimicrobial stewardship extends beyond mere pathogen detection to influencing therapeutic decision-making and resistance containment. Diagnostic stewardship encompasses "ordering the right tests, for the right patient, at the right time" and promotes the judicious use of rapid molecular diagnostic tools to enable appropriate antibiotic therapy while avoiding excessive broad-spectrum antibiotic use [14].

The profound global impact of antimicrobial resistance underscores this importance, with drug-resistant infections causing approximately 700,000 deaths annually and projected to claim 10 million lives yearly by 2050 without effective intervention [11] [12]. In European Union and European Economic Area countries alone, antibiotic-resistant bacteria cause approximately 33,000 deaths annually and close to 900,000 disability-adjusted life years [11].

Rapid diagnostic methods with optimized LOD can significantly impact this crisis by enabling evidence-based treatment decisions. Currently, an estimated 30% of antibiotic prescriptions in Western countries are either unnecessary or suboptimal, often due to diagnostic uncertainty [11]. Furthermore, roughly 50% of antibiotic treatments are initiated with inappropriate antibiotics and without proper pathogen identification [11].

LOD Implications for AST Methodologies

The relationship between LOD and antimicrobial susceptibility testing (AST) methodologies reveals critical intersections between diagnostic sensitivity and therapeutic guidance:

Table 2: AST Methodologies and LOD Implications

| AST Methodology | Turnaround Time | LOD Considerations | Impact on Stewardship |

|---|---|---|---|

| Traditional Culture-Based | 18-48 hours | Dependent on bacterial growth capacity; higher LOD limits early detection | Delays targeted therapy; promotes empirical broad-spectrum use |

| Automated AST Systems | 6-24 hours after isolation | Standardized LOD across platforms | Faster results but still requires initial isolation |

| Molecular AST | 1-6 hours | Can detect resistance genes directly from specimens; potentially lower LOD for specific targets | Rapid detection of resistance mechanisms enables early targeted therapy |

| Novel Rapid Technologies | Minutes to hours | Varies widely by technology; often optimized for speed rather than ultimate sensitivity | Potential for point-of-care implementation and immediate treatment adjustment |

Traditional phenotypic AST methods, while accurate, are inherently limited by their dependence on bacterial growth, requiring prior isolation and resulting in extended turnaround times of 18-48 hours [11] [12]. This delay frequently compels physicians to initiate empirical antimicrobial therapies, with approximately 50% of antibiotic treatments started with inappropriate antibiotics due to lack of proper diagnosis [11].

Molecular methods offer significantly faster turnaround times (1-6 hours) and can detect resistance determinants directly in clinical specimens, potentially bypassing the need for culture [12]. However, these methods are limited to detecting only known resistance mechanisms targeted by specific probes and may overestimate resistance when detection does not correlate with phenotypic expression [12]. The LOD for these molecular targets becomes crucial for early detection of resistance mechanisms, particularly in low-burden infections or during the early stages of infection.

Essential Research Reagents and Methodologies

The execution of robust LOD studies requires specific reagents and methodologies standardized across laboratories. The following table outlines critical components for comparative LOD investigations:

Table 3: Essential Research Reagents for Comparative LOD Studies

| Reagent/Material | Function | Examples/Specifications |

|---|---|---|

| International Standards | Provide standardized reference materials for cross-assay comparison | WHO International Standards (e.g., WHO/HDV standard, WHO HCMV standard) [8] [10] |

| Clinical Sample Panels | Assess real-world performance across biological matrices | Characterized residual clinical samples spanning expected concentration range [10] |

| Negative Matrix Materials | Diluent for standards; assessment of specificity | Pathogen-free plasma, serum, or appropriate biological fluid [8] |

| Nucleic Acid Extraction Kits | Standardize extraction efficiency across platforms | Manufacturer-matched or comparable extraction systems [10] |

| Quality Control Materials | Monitor assay precision and reproducibility | Low-positive controls near LOD, negative controls [8] [10] |

| Reference Methodologies | Provide comparator for new assay validation | Established RT-qPCR platforms, reference culture methods [10] |

The HDV-RNA study exemplifies proper utilization of these research reagents, employing both WHO international standards and clinical samples across multiple centers to enable meaningful cross-platform comparisons [8]. Similarly, the evaluation of the automated molecular system used WHO International Standards for EBV and HCMV alongside clinical samples and national reference materials [10].

Public Health Implications and Future Directions

Population Health Consequences of LOD Variability

The heterogeneity in LOD performance across diagnostic platforms has profound implications for public health surveillance and intervention strategies. Inconsistent detection capabilities can lead to:

- Delayed outbreak recognition due to variable sensitivity in detecting low pathogen concentrations

- Inaccurate incidence estimates that compromise public health planning and resource allocation

- Ineffective containment measures when infected individuals go undetected

- Distorted antimicrobial resistance patterns due to uneven detection of resistant strains

The significant LOD variability observed in the HDV-RNA study (ranging from 3 to 316 IU/mL across different platforms) exemplifies how diagnostic inconsistency could hamper proper viral load quantification, particularly at low concentrations [8]. This variability directly impacts treatment monitoring and assessment of virological response to antiviral therapy, with potential consequences for both individual patient outcomes and population-level management of chronic infections.

Diagnostic Pathways and Public Health Impact

The relationship between LOD performance, diagnostic pathways, and public health outcomes can be visualized as follows:

Figure 2: Diagnostic LOD Impact Pathway

Future Directions and Standardization Needs

Addressing the challenges identified in comparative LOD studies requires concerted efforts across multiple domains:

Assay Improvement: The HDV-RNA study authors emphasized "the need to improve the diagnostic performance of most assays for properly identifying virological response to anti-HDV drugs," a conclusion applicable across infectious disease diagnostics [8].

Method Standardization: Development of universal protocols for LOD determination across diagnostic platforms would facilitate more meaningful comparisons and establish consistent performance expectations [13] [10].

Integrated Methodologies: As noted in evaluations of microbiological methodologies, "integration of multiple methodologies is recommended to overcome the limitations of individual techniques," providing more comprehensive understanding of microbial detection and resistance profiles [15].

Point-of-Care Adaptation: Future technology development should focus on creating "innovative, rapid, accurate, and portable diagnostic tools for AST" that maintain optimal LOD while increasing accessibility [12].

The comprehensive evaluation framework applied to automated molecular systems offers a model for standardized validation, incorporating concordance rate, accuracy, linearity, precision, LOD, interference testing, cross-reactivity, and carryover contamination assessment [10]. Such rigorous approaches ensure that LOD claims translate to reliable clinical performance across diverse laboratory settings.

The Limit of Detection represents far more than a technical analytical parameter—it serves as a fundamental determinant of diagnostic efficacy with cascading implications for clinical management, antimicrobial stewardship, and public health surveillance. Substantial variability in LOD across diagnostic platforms, as demonstrated in the HDV-RNA study where 95% LOD values ranged from 3 to 316 IU/mL, directly impacts patient care through delayed detection, inaccurate quantification, and potential mismanagement of antimicrobial therapy [8].

The critical importance of LOD optimization extends to the global antimicrobial resistance crisis, where improved diagnostic sensitivity contributes to antimicrobial stewardship by enabling rapid pathogen identification and resistance detection [14] [12]. With antimicrobial resistance claiming hundreds of thousands of lives annually and projected to cause greater morbidity in coming decades, the development and implementation of highly sensitive, reproducible diagnostic platforms constitutes an urgent public health priority [11] [12].

Future progress requires standardized validation methodologies, enhanced assay performance particularly at low analyte concentrations, and integration of novel technologies that maintain sensitivity while improving accessibility and speed. Through concerted efforts to optimize and standardize LOD performance across diagnostic platforms, the clinical microbiology community can significantly advance individualized patient care and strengthen collective defenses against the escalating threat of antimicrobial resistance.

In microbiological research and clinical diagnostics, the accurate detection and identification of microbial pathogens are fundamental. Culture-based methods (CFU), polymerase chain reaction (PCR), and serological assays represent three cornerstone methodologies, each with distinct principles, applications, and performance characteristics. The limit of detection (LOD) is a critical parameter that defines the lowest quantity of a microorganism that an assay can reliably detect, directly influencing diagnostic sensitivity and efficacy. Understanding the comparative advantages and limitations of these techniques is essential for selecting the appropriate tool for specific research or clinical scenarios, from food safety and environmental monitoring to managing human infectious diseases. This guide provides an objective comparison of CFU, PCR, and serology, supported by experimental data, to inform researchers, scientists, and drug development professionals in their methodological choices.

Comparative Performance Data

The selection of a diagnostic assay often involves trade-offs between sensitivity, specificity, speed, and cost. The following table summarizes the core performance characteristics of CFU, PCR, and Serology assays, drawing on direct comparative studies.

Table 1: Core Performance Characteristics of Benchmark Assays

| Assay Type | Key Performance Characteristics |
|---|---|
| Culture (CFU) | Considered the "gold standard" due to high specificity and the ability to provide a viable isolate for further analysis (e.g., antibiotic susceptibility testing). However, it is time-consuming (24-48 hours to several days) and has lower sensitivity compared to molecular methods. Its LOD is typically in the range of 10¹ to 10⁴ CFU/g or CFU/mL, depending on the organism and sample matrix [16] [17]. |
| PCR | Highly sensitive and specific, with a rapid turnaround time (a few hours). A comprehensive review found PCR to have the lowest average LOD (6 CFU/mL) compared to other rapid methods [18]. Its performance can be influenced by the sample type; for example, stool samples can contain PCR inhibitors [16]. Real-time PCR (qPCR) is generally more sensitive than conventional PCR [19]. |
| Serology | Detects the host's immune response (antibodies) to an infection, which is useful for diagnosing diseases where the pathogen is difficult to culture or detect directly. It can have high specificity (>90%) and is valuable for single-serum diagnosis. However, its sensitivity can be variable, and it may not distinguish between current and past infections. Combining serology with PCR significantly increases diagnostic sensitivity [20]. |

The quantitative detection limits for these methods can vary significantly based on the target pathogen and sample type. The following table compiles specific LOD data from various experimental studies.

Table 2: Experimental Detection Limits for Various Pathogens and Sample Types

| Target Organism | Sample Type | Culture | PCR | Serology | Citation |
|---|---|---|---|---|---|
| Xylella fastidiosa | Blueberry tissue | - | 6 CFU/mL (avg., multiple PCR types) | - | [18] |
| Xylella fastidiosa | Pure culture | - | 25 fg DNA (≈9 copies) (qPCR) | - | [19] |
| Clostridium difficile | Spiked human stool | 10 CFU/g | 100 CFU/g | - | [16] |
| Campylobacter jejuni | Spiked human stool | 10,000 CFU/g | 100 CFU/g | - | [16] |
| Yersinia enterocolitica | Spiked human stool | 100 CFU/g | 10,000 CFU/g | - | [16] |
| Bordetella pertussis | Clinical (household contacts) | Low sensitivity | Variable by target (IS481 more sensitive than ptxA-Pr) | >90% specificity (single serology, ≥100 EU/mL) | [20] |
| Bacillus cereus | Donor human milk | 24-48 hr incubation (gold standard) | Excellent sensitivity and specificity, fully automated | - | [21] |
| Mycoplasma pneumoniae | Throat swabs | 1 CFU (by culture) | 0.06-2 CFU/μL (19/21 culture+ samples) | - | [22] |
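The mass-to-copy conversion quoted for the pure-culture Xylella fastidiosa row (25 fg of DNA ≈ 9 copies) can be checked with a short calculation. The sketch below is illustrative only: the genome size of roughly 2.6 Mb and the average mass of 650 g/mol per base pair are assumptions introduced here, not values taken from the cited study [19].

```python
AVOGADRO = 6.022e23          # molecules per mole
BP_MASS_G_PER_MOL = 650.0    # assumed average mass of one double-stranded base pair
GENOME_SIZE_BP = 2.6e6       # assumed X. fastidiosa genome size (not from the source)


def dna_mass_to_copies(mass_fg, genome_bp=GENOME_SIZE_BP):
    """Convert a mass of genomic DNA (in femtograms) into genome copies."""
    mass_g = mass_fg * 1e-15
    grams_per_genome = genome_bp * BP_MASS_G_PER_MOL / AVOGADRO
    return mass_g / grams_per_genome


copies = dna_mass_to_copies(25.0)
print(f"25 fg of genomic DNA corresponds to about {copies:.1f} genome copies")
```

Under these assumptions the result lands close to the ≈9 copies reported in the table; a different genome size or base-pair mass shifts the figure slightly.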

Detailed Experimental Protocols

To ensure reproducibility and provide insight into how comparative data are generated, detailed protocols from key cited studies are outlined below.

Protocol 1: Comparison of PCR and Serology for Bordetella pertussis

This study directly compared real-time PCR and single-serum serology for diagnosing pertussis in household contacts of infected infants [20].

- Sample Collection: Nasopharyngeal aspirates/swabs were collected for PCR, and acute and convalescent-phase blood samples were collected for serology.

- PCR Methodology:

  - Targets: Two real-time PCR assays were used on a LightCycler platform: one targeting the multi-copy insertion sequence IS481 and another targeting the pertussis toxin promoter region (ptxA-Pr).

  - Inhibition Control: An internal control DNA (ICD-PT) was spiked into each sample to detect PCR failure.

  - Amplification: Conditions included 50 cycles of 5 s at 95°C, 5 s at 66°C, and 8 s at 72°C.

- Serology Methodology:

  - Technique: Anti-pertussis toxin (PT) IgG was quantified by Enzyme-Linked Immunosorbent Assay (ELISA).

  - Case Definition: A positive single serology was defined as a titer of ≥100 or ≥125 ELISA units (EU)/mL. A positive paired serology was defined as a two- or fourfold change in titer between acute and convalescent sera.

- Data Analysis: Sensitivity, specificity, and performance (Youden index) were calculated by pooling all clinical and laboratory diagnostic information as a composite gold standard.
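The performance measures named in the data analysis step follow directly from a 2×2 table of assay results against the composite gold standard. The sketch below shows the arithmetic; the counts are hypothetical and are not taken from the pertussis study [20].

```python
def diagnostic_metrics(tp, fp, fn, tn):
    """Sensitivity, specificity and Youden index from a 2x2 table.

    tp/fn: gold-standard positives that the assay called positive/negative
    tn/fp: gold-standard negatives that the assay called negative/positive
    """
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        # Youden index J = sensitivity + specificity - 1 (0 = uninformative, 1 = perfect)
        "youden_index": sensitivity + specificity - 1.0,
    }


# Hypothetical counts for illustration only.
metrics = diagnostic_metrics(tp=45, fp=5, fn=15, tn=95)
for name, value in metrics.items():
    print(f"{name}: {value:.2f}")
```

Because the Youden index weights sensitivity and specificity equally, it is a convenient single number for ranking assays, but it hides which of the two is driving the score.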

Protocol 2: Comparison of Molecular and Serological Methods for Xylella fastidiosa

This study compared the detection limits of four molecular techniques and one serological technique for detecting Xylella fastidiosa in blueberry plants [19].

- Sample Preparation: DNA was extracted from leaf petioles and midribs of infected plants. For sensitivity analysis, DNA from a pure bacterial culture was serially diluted and mixed with uninfected blueberry DNA to mimic an infected sample matrix.

- Molecular Methods:

  - Conventional PCR (C-PCR) & Real-time PCR (qPCR): Used the primer set RST 31/33 targeting the RNA polymerase sigma factor.

  - LAMP (Loop-mediated isothermal amplification): An isothermal method performed in a heat block or water bath.

  - AmplifyRP Acceler8: A recombinase-polymerase amplification (RPA)-based assay for on-site detection.

- Serological Method:

  - DAS-ELISA: A double antibody sandwich enzyme-linked immunosorbent assay.

- LOD Determination: The detection limit for each assay was determined as the lowest concentration of the spiked DNA or bacteria that consistently yielded a positive result.

The workflow for a comprehensive comparative study integrating these methods is illustrated below.

Figure 1: Workflow for comparative evaluation of microbiological assays.

Research Reagent Solutions

The execution of CFU, PCR, and serology assays requires specific reagents and materials. The following table details key solutions and their functions as featured in the cited experiments.

Table 3: Key Research Reagents and Their Functions in Microbiological Assays

| Reagent / Material | Function / Application | Example Assay Types |
|---|---|---|
| Selective Culture Media | Supports growth of specific pathogens while inhibiting background flora; essential for viable count (CFU) and isolation. | Culture [16] |
| Primers (e.g., RST 31/33, IS481, ptxA-Pr) | Short, single-stranded DNA sequences designed to bind to and amplify specific target genes of the pathogen. | Conventional PCR, Real-time PCR [20] [19] |
| Probes (e.g., Hydrolysis/TaqMan, Hybridization) | Fluorescently-labeled oligonucleotides that bind specifically to amplified DNA, enabling real-time detection and quantification in qPCR. | Real-time PCR [20] [21] |
| Internal Control DNA | Non-target DNA spiked into samples to monitor for the presence of PCR inhibitors and confirm assay validity. | Real-time PCR [20] |
| Antigens (e.g., Purified Pertussis Toxin) | Immobilized pathogen-derived proteins used to capture specific antibodies from patient serum in an ELISA. | Serology (ELISA) [20] |
| Enzyme Conjugates & Substrates | Enzyme-linked antibodies (e.g., Horseradish Peroxidase) and their colorimetric/chromogenic substrates generate a detectable signal in ELISA. | Serology (ELISA) [19] |
| Nanoparticles (Gold, Magnetic) | Act as visual or electrochemical labels in lateral flow assays (LFIA) or to enhance nucleic acid extraction and amplification efficiency. | LFIA, PCR [18] |

CFU, PCR, and serology each occupy a critical and often complementary niche in the microbiologist's toolkit. Culture remains the unrivaled method for obtaining viable isolates but is constrained by time and sensitivity. PCR offers superior speed and detection limits for direct pathogen identification, while serology provides a window into the host's immune response, which is invaluable for diagnosing certain infections. The experimental data presented demonstrates that the optimal assay choice is not universal but depends heavily on the specific pathogen, sample matrix, and clinical or research question. Furthermore, combining these methodologies, such as using PCR and serology together, can yield the highest diagnostic sensitivity, underscoring the power of an integrated approach in advanced microbiological analysis and drug development.

In the development and validation of microbiological assays, the Limit of Detection (LOD) represents a fundamental performance parameter, defined as the minimum amount of a target pathogen or analyte that can be reliably distinguished from its absence with a specific degree of confidence, typically 95% [23]. Establishing a robust LOD is critical for ensuring diagnostic assays are "fit for purpose," particularly for pathogens with low infectious doses where early and accurate detection directly impacts clinical outcomes and public health interventions [23] [24]. The reliability of any LOD determination study is inherently tied to the quality and appropriateness of the reference materials and standards used throughout the analytical validation process. These materials form the foundational baseline against which assay sensitivity is measured, enabling meaningful comparisons across different methodological platforms and technologies.

The determination of LOD is not a singular concept but part of a family of low-concentration performance metrics. The Limit of Blank (LoB) describes the highest apparent analyte concentration expected from replicates of a blank sample containing no analyte, calculated as LoB = mean_blank + 1.645(SD_blank) assuming a Gaussian distribution [24]. The LOD itself is the lowest analyte concentration likely to be reliably distinguished from the LoB, determined by the formula LOD = LoB + 1.645(SD_low concentration sample) [24]. Beyond detection lies the Limit of Quantitation (LoQ), the lowest concentration at which the analyte can be reliably detected and quantified with predefined goals for bias and imprecision, always greater than or equal to the LOD [24]. Understanding these distinct but related parameters is essential for designing comprehensive comparative studies of microbiological assay performance.

The Critical Role of Reference Materials in LOD Studies

Defining Reference Materials and Standards

Reference materials and standards constitute the cornerstone of reliable LOD determination. In microbiological contexts, these encompass authenticated microbial strains with pre-established concentrations, quantified nucleic acids with known genome copy numbers, and synthetic molecular standards that mimic target genetic sequences [23]. The fundamental characteristic of these materials is their authentication and qualification through polyphasic characterization approaches that establish identity and confirm characteristic traits, making them ideal for determining the detection limit of an assay [23]. Without such properly characterized materials, any LOD determination remains questionable and non-transferable across laboratories.

The selection of appropriate reference materials must reflect the diversity of the target pathogen in clinical or environmental settings. For example, when developing an assay for Clostridioides difficile, it is essential to acquire strains representing the major known toxinotypes to ensure the determined LOD is relevant across clinically relevant variants [23]. This inclusivity testing guards against false negatives that might occur due to sequence variations affecting primer binding or antibody recognition, depending on the assay technology platform employed. Furthermore, the commutability of these materials—their behavior resembling native patient samples—is essential for obtaining clinically relevant LOD values, particularly when establishing LoB and LoD using clinical sample matrices [24].

Key Functions in LOD Determination

Reference materials serve multiple critical functions in LOD determination studies. Primarily, they provide a traceable baseline for analytical sensitivity, allowing different laboratories to benchmark their assays against a common standard [23]. This is particularly important for regulatory submissions where manufacturers must demonstrate adequate detection capabilities for in vitro diagnostic devices [25]. Secondly, they enable method comparison by providing a consistent input material for evaluating different analytical methodologies in terms of prediction ability and detection capability [26]. When different laboratories use the same well-characterized reference materials, the resulting LOD values become directly comparable across technological platforms.

A third crucial function is the facilitation of longitudinal performance monitoring. Using the same reference materials over time allows laboratories to track assay performance drift, identify reagent degradation, and maintain quality assurance protocols. This is especially valuable for molecular assays where amplicon contamination or enzyme activity decline can subtly affect LOD without complete assay failure. Finally, reference materials support troubleshooting and optimization during assay development. When unexpected LOD values are obtained, well-characterized reference materials help isolate whether problems originate from the detection chemistry, sample processing, or other analytical variables, thereby accelerating development cycles.

Types of Reference Materials and Their Applications

The selection of appropriate reference materials varies significantly depending on the assay format, detection technology, and intended application. The table below summarizes the primary categories of reference materials used in LOD determination for microbiological assays.

Table 1: Categories of Reference Materials for LOD Determination in Microbiological Assays

| Material Type | Description | Primary Applications | Key Considerations |
|---|---|---|---|
| Live Microbial Cultures | Viable, authenticated microorganisms with quantified concentration through culture-based methods | Culture-based detection methods, viability assays, infectivity studies | Requires proper storage and handling to maintain viability and concentration; essential for determining clinical LOD in spiked samples |
| Inactivated Microorganisms | Chemically or physically inactivated pathogens retaining structural components | Immunoassays, PCR-based methods where viability is not required | Improved safety profile; stability often enhanced compared to live cultures |
| Quantified Genomic DNA | Extracted nucleic acids with precisely determined concentration and copy number | Molecular assays (PCR, isothermal amplification, NGS) | Quantification method critical (e.g., PicoGreen, RiboGreen, Droplet Digital PCR); must address fragmentation state |
| Synthetic Molecular Standards | Engineered nucleic acid sequences mimicking target regions | Molecular assays, particularly for emerging pathogens or sequence variants | Lacks matrix effects; highly reproducible; may not fully capture extraction efficiency |
| Clinical Matrix Spikes | Reference materials incorporated into appropriate clinical matrices (blood, stool, etc.) | Determining clinical LOD accounting for matrix effects and extraction efficiency | Must mimic native patient samples; commutability assessment essential |

Selection Criteria for Reference Materials

Choosing appropriate reference materials requires careful consideration of multiple factors. Accuracy of quantification is paramount, as any error in the assigned concentration directly propagates to the determined LOD value [23]. The quantification method must be appropriate for the material type, with digital PCR increasingly recognized as the gold standard for nucleic acid quantification due to its absolute counting capability without need for standard curves. Stability under storage conditions and through freeze-thaw cycles is another critical factor, particularly for proficiency testing programs that ship materials to multiple laboratories.

The representativeness of the reference material to actual clinical samples affects the translational relevance of the determined LOD. While purified nucleic acids are excellent for establishing instrumental LOD, they fail to capture the complexities of nucleic acid extraction efficiency from clinical matrices, potentially leading to overly optimistic LOD estimates [23]. Furthermore, the genetic diversity represented in the reference materials should reflect circulating strains, requiring periodic updates to reference panels to maintain clinical relevance, particularly for rapidly mutating pathogens.

Experimental Protocols for LOD Determination

General Workflow for LOD Determination

The determination of LOD follows a systematic workflow that progresses from preliminary range-finding to definitive statistical estimation. The general approach involves serial dilution of quantified reference materials around an expected detection limit followed by extensive replication at each concentration level to establish reliable response curves and statistical distributions. The workflow diagram below illustrates the key stages in this process.

Diagram 1: LOD Determination Workflow

Detailed Step-by-Step Protocol

Preparation of Reference Materials

The initial step involves acquiring or preparing authenticated reference materials with accurately determined concentrations. For microbial cultures, this typically involves enumeration through plate counting or most probable number (MPN) methods. For nucleic acids, quantification using fluorescence-based methods (PicoGreen, RiboGreen) or digital PCR is essential [23]. The material should represent the target of interest—whole organisms for culture-based or antigen assays, genomic DNA for PCR-based methods, or specific protein targets for immunoassays. Proper documentation of the characterization methods and uncertainty estimates for the assigned values is crucial for interpreting subsequent LOD results.

Range-Finding Study

Before undertaking the full LOD determination, a preliminary range-finding study is conducted to identify the approximate detection limit. This involves testing a broad dilution series (e.g., 10-fold dilutions) with fewer replicates (typically 3-5) to identify the concentration range where the assay transitions from consistently detecting to inconsistently detecting the target. This range-finding step is critical for efficiently focusing the more resource-intensive definitive LOD study on the most relevant concentration region, thus optimizing the use of reference materials and laboratory resources.
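A range-finding run can be summarized by locating the two adjacent dilutions between which detection stops being consistent. The sketch below is a minimal illustration with hypothetical triplicate results; the function names and the starting concentration are assumptions made for the example.

```python
def tenfold_series(start, steps):
    """Concentrations of a 10-fold serial dilution, highest first."""
    return [start / 10 ** i for i in range(steps)]


def find_transition(hits):
    """Locate the bracket where detection becomes inconsistent.

    hits maps concentration -> (positives, replicates).
    Returns (first level with a missed replicate, lowest level still fully detected).
    """
    last_full = None
    for conc in sorted(hits, reverse=True):
        positives, replicates = hits[conc]
        if positives == replicates:
            last_full = conc
        else:
            return conc, last_full
    return None, last_full


# Hypothetical range-finding run: 1e5 down to 1 CFU/mL, 3 replicates per level.
levels = tenfold_series(1e5, 6)
positives = [3, 3, 3, 3, 1, 0]
hits = {conc: (pos, 3) for conc, pos in zip(levels, positives)}

lower, upper = find_transition(hits)
print(f"Focus the definitive study between {lower:g} and {upper:g} CFU/mL")
```

The bracket returned here (between the last fully detected level and the first level with a miss) is the region the definitive study then covers with finer dilution steps and more replicates.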

Definitive LOD Study

Once the approximate range is identified, a definitive LOD study is performed with a tighter dilution series (e.g., 2-fold or 3-fold dilutions) around the suspected detection limit. Each dilution level is tested with a sufficient number of replicates (recommended 20-60) to obtain statistically robust estimates of detection frequency and response variability [23] [24]. For assays intended for complex sample matrices, the dilution series should be prepared in the appropriate matrix (e.g., stool for enteric pathogens, blood for bloodstream infections) to account for matrix effects that can influence extraction efficiency and amplification inhibition [23].
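One simple way to read a definitive study is the empirical hit-rate rule: take the lowest concentration at which that level, and every level above it, is detected in at least 95% of replicates. The sketch below illustrates this with a hypothetical 2-fold series and 20 replicates per level; it is one common convention, not necessarily the rule used in the cited studies.

```python
def twofold_series(top, steps):
    """Concentrations of a 2-fold serial dilution, highest first."""
    return [top / 2 ** i for i in range(steps)]


def empirical_lod(hits, min_rate=0.95):
    """Lowest level whose hit rate, and that of all higher levels, is >= min_rate.

    hits maps concentration -> (positives, replicates).
    Returns None if even the highest level misses the target rate.
    """
    lod = None
    for conc in sorted(hits, reverse=True):
        positives, replicates = hits[conc]
        if positives / replicates >= min_rate:
            lod = conc
        else:
            break
    return lod


# Hypothetical definitive study: 100 down to 6.25 CFU/mL, 20 replicates each.
levels = twofold_series(100.0, 5)
positives = [20, 20, 19, 14, 6]
hits = {conc: (pos, 20) for conc, pos in zip(levels, positives)}

print(f"Empirical LOD: {empirical_lod(hits):g} CFU/mL")
```

With 20 replicates, a single miss still satisfies the 95% rule (19/20), which is why larger replicate numbers give a more trustworthy estimate of the true detection frequency.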

Data Analysis and Calculation

The data from the definitive study is analyzed to calculate both the LoB and LOD. The LoB is determined by testing replicates of a blank sample (containing no analyte) and calculating LoB = mean_blank + 1.645(SD_blank), which establishes the threshold above which a signal is considered detected with 95% confidence [24]. The LOD is then determined using replicates of a low-concentration sample and calculating LOD = LoB + 1.645(SD_low concentration sample) [24]. This statistical approach ensures that the LOD represents the concentration at which the signal can be distinguished from both the analytical noise (LoB) and the variability of low-level samples.
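The two formulas above translate directly into code. The sketch below assumes approximately Gaussian measurements and uses the sample standard deviation; the replicate values are hypothetical and serve only to show the calculation.

```python
from statistics import mean, stdev

Z_95 = 1.645  # one-sided 95th percentile of the standard normal distribution


def limit_of_blank(blank_values):
    """LoB = mean_blank + 1.645 * SD_blank."""
    return mean(blank_values) + Z_95 * stdev(blank_values)


def limit_of_detection(blank_values, low_values):
    """LOD = LoB + 1.645 * SD_low, with SD_low from a low-concentration sample."""
    return limit_of_blank(blank_values) + Z_95 * stdev(low_values)


# Hypothetical replicate measurements (same units as the assay readout).
blanks = [1.8, 2.4, 0.9, 3.1, 2.2, 1.5, 2.8, 1.1, 2.0, 2.6]
low_sample = [9.5, 12.1, 10.4, 8.8, 11.7, 10.9, 9.9, 12.6, 10.2, 11.3]

print(f"LoB: {limit_of_blank(blanks):.2f}")
print(f"LOD: {limit_of_detection(blanks, low_sample):.2f}")
```

Note that the mean of the low-concentration sample does not enter the formula; only its spread does, so the low sample should be chosen close to the expected LOD for the SD to be representative.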

Comparative Experimental Data: Case Studies and Applications

Case Study: Clostridioides difficile Assay Development

A representative case study for LOD determination involves developing a PCR-based assay for Clostridioides difficile in stool samples. Researchers first acquired reference strains representing major toxinotypes and quantified the concentration of each culture preparation [23]. Following a range-finding study, they prepared an appropriate dilution series and spiked each dilution into negative stool matrix. After suitable recovery and concentration procedures, at least 20 replicates of each dilution were tested by the PCR assay, with concentrations confirmed by colony counting [23]. The table below presents hypothetical data from such a study, illustrating how LOD would be determined across different toxinotypes.

Table 2: Hypothetical LOD Determination for C. difficile Toxinotypes in Stool Matrix

| Toxinotype | LoB (CFU/mL) | Low Concentration Sample Mean (CFU/mL) | Low Concentration SD (CFU/mL) | Calculated LOD (CFU/mL) | Verified LOD (CFU/mL) |
|---|---|---|---|---|---|
| Toxinotype 0 | 12.5 | 45.2 | 8.7 | 26.8 | 30.0 |
| Toxinotype III | 12.5 | 48.7 | 9.2 | 27.6 | 30.0 |
| Toxinotype V | 12.5 | 52.1 | 10.5 | 29.8 | 35.0 |
| Toxinotype VIII | 12.5 | 43.9 | 11.2 | 30.9 | 35.0 |

This comparative approach reveals whether the assay maintains consistent sensitivity across genetic variants or requires optimization for certain toxinotypes. The slight variation in calculated LOD across toxinotypes could reflect genuine differences in amplification efficiency due to sequence variations, emphasizing the importance of testing diverse reference materials.

Case Study: Hepatitis B Virus Detection Assays

In the context of regulatory science, the reclassification of qualitative hepatitis B virus (HBV) antigen assays, HBV antibody assays, and quantitative HBV nucleic acid-based assays from class III to class II by the FDA illustrates the importance of standardized LOD determination using appropriate reference materials [25]. This reclassification was based on evidence that special controls, including well-defined analytical sensitivity requirements, could provide reasonable assurance of safety and effectiveness [25]. Manufacturers seeking clearance for these devices must demonstrate appropriate LOD using international standards or well-qualified in-house reference panels, enabling more consistent comparison across platforms and facilitating market access for improved diagnostic tools.

Advanced Applications: Detection of Low-Abundance Strains in Microbiome Studies

In cutting-edge microbiome research, methods like ChronoStrain have been developed specifically for profiling low-abundance microbial taxa with strain-level resolution in longitudinal samples [27]. Such algorithms require careful validation using defined microbial communities with known compositions and abundances to establish their limits of detection for specific strains. In benchmarking studies, ChronoStrain demonstrated significantly improved detection of low-abundance strains compared to existing methods like StrainGST and StrainEst, particularly in semi-synthetic benchmarks where ground truth abundances were known [27]. This highlights how proper reference materials enable not just assay validation but also methodological advancement in complex analytical scenarios.

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful LOD determination requires access to a comprehensive toolkit of research reagents and reference materials. The table below details essential components for designing and executing robust LOD studies for microbiological assays.

Table 3: Essential Research Reagent Solutions for LOD Determination Studies

| Reagent Category | Specific Examples | Function in LOD Studies | Key Quality Metrics |
|---|---|---|---|
| Characterized Microbial Strains | ATCC Genuine Cultures, NCTC strains | Provide biologically relevant targets for assay validation; used for spiking studies | Authentication, viability, purity, accurate quantification, genetic characterization |
| Quantified Nucleic Acids | ATCC Genuine Nucleics, WHO International Standards | Enable molecular assay standardization; establish instrumental LOD without extraction variables | Concentration accuracy, purity (A260/280), fragment size distribution, copy number determination |
| Molecular Standards | Synthetic gBlocks, plasmid controls | Specific sequence targets without biological hazard; ideal for quantitative PCR standard curves | Sequence verification, concentration accuracy, stability |
| Clinical Matrices | Characterized negative stool, blood, urine | Provide realistic background for determining clinical LOD; assess matrix inhibition | Commutability, absence of target analyte, appropriate preservation |
| Quantification Assays | PicoGreen, RiboGreen, digital PCR | Precisely determine concentration of reference materials for accurate dilution series | Accuracy, precision, linear range, specificity |
| Extraction Controls | Exogenous internal control viruses, synthetic spike-ins | Monitor extraction efficiency across different matrices and concentrations | Non-interference with target, stability through extraction, distinct detection signal |

Reference materials and standards form the essential foundation for reliable LOD determination in microbiological assays, enabling meaningful comparisons across technologies, laboratories, and time. The selection of appropriate, well-characterized materials directly impacts the translational relevance of determined detection limits, bridging the gap between analytical sensitivity and clinical utility. As methodological advances continue to push detection capabilities to lower limits, with techniques like ChronoStrain demonstrating improved detection of low-abundance taxa [27], the role of reference materials becomes increasingly critical for validation and standardization.

The future of comparative LOD studies will likely see greater adoption of international standards for key pathogens, enhanced digital tools for data sharing and method comparison, and more sophisticated computational approaches for analyzing complex detection data. Throughout these advancements, the fundamental principle remains constant: reliable LOD determination requires a baseline established through authenticated, quantified reference materials that represent the biological and analytical challenges of real-world applications. By adhering to rigorous protocols using these standards, researchers and developers can ensure their microbiological assays deliver detection capabilities truly fit for purpose in clinical, public health, and research settings.

Methodologies in Practice: LOD Performance of Traditional and Emerging Assays

Limit of Detection (LOD) serves as a critical figure of merit for evaluating the analytical sensitivity of molecular diagnostics. This guide provides a systematic comparison of the LOD performance of three major nucleic acid amplification techniques: digital PCR (dPCR), quantitative PCR (qPCR), and isothermal amplification methods, notably Loop-Mediated Isothermal Amplification (LAMP). Drawing from recent experimental studies and statistical analyses, we consolidate quantitative data to inform assay selection for research and drug development, framing the discussion within the broader context of comparative LOD studies for microbiological assays.

In molecular diagnostics, the Limit of Detection (LOD) is defined as the lowest concentration of an analyte that can be consistently detected by a given assay with a high degree of confidence, typically 95% [28] [29]. The accurate determination of LOD is paramount for applications requiring high sensitivity, such as early disease detection, monitoring low-level pathogens, and quantifying residual disease. The fundamental principles of LOD estimation are rooted in statistical methods, often employing probit analysis to calculate the concentration at which 95% of tested samples return a positive result (C95) [28] [29]. While classical approaches sometimes assume a Poisson distribution of target molecules, modern frameworks account for technical and biological variations, such as overdispersion, using distributions like the negative binomial for more accurate LOD estimation [30] [29].
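The classical Poisson assumption mentioned above implies a theoretical floor for any amplification assay: if target copies are distributed at random across reactions, some reactions receive no copy at all. The sketch below works through that arithmetic, under the idealized assumption (made here for illustration) that a single copy in the reaction is always amplified and detected.

```python
import math


def poisson_detection_probability(mean_copies):
    """Probability that a reaction contains at least one target copy."""
    return 1.0 - math.exp(-mean_copies)


def mean_copies_for_detection(probability=0.95):
    """Mean copies per reaction needed to reach the given detection probability."""
    return -math.log(1.0 - probability)


floor_95 = mean_copies_for_detection(0.95)
print(f"Mean copies per reaction for 95% detection: {floor_95:.2f}")
print(f"Detection probability at 1 copy per reaction: "
      f"{poisson_detection_probability(1.0):.2f}")
```

In this idealized model, about three copies per reaction are needed for 95% detection, so reported LODs well below that figure usually reflect a different unit (for example copies per mL of input) rather than sub-Poisson performance. Overdispersed models such as the negative binomial push the requirement higher.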

The evolution of nucleic acid amplification technologies has progressively pushed the boundaries of LOD. The gold-standard qPCR, despite its widespread use, faces limitations in absolute quantification and sensitivity due to its reliance on standard curves and exponential amplification phase measurement [31] [32]. The emergence of dPCR and refined isothermal techniques like LAMP offers promising alternatives, each with distinct advantages and LOD characteristics driven by their underlying mechanisms [33] [34] [35].

Quantitative PCR (qPCR)

qPCR, also known as real-time PCR, is a relative quantification method. It monitors the amplification of a target DNA sequence in real-time using fluorescent reporters. The quantification cycle (Cq), at which the fluorescence crosses a predetermined threshold, is used to determine the initial template concentration by comparison to a standard curve [34] [32]. Its performance is influenced by amplification efficiency and the accuracy of the external standards.
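The standard-curve step can be sketched as a straight-line fit of Cq against log10 of the input copies, from which both the amplification efficiency and unknown concentrations follow. The example below is a minimal illustration with hypothetical standards; the function names are introduced here and do not correspond to any instrument software.

```python
import math


def fit_standard_curve(copies, cq_values):
    """Least-squares fit of Cq = slope * log10(copies) + intercept."""
    x = [math.log10(c) for c in copies]
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(cq_values) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, cq_values))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def amplification_efficiency(slope):
    """Efficiency from the slope; 1.0 means perfect doubling per cycle."""
    return 10 ** (-1.0 / slope) - 1.0


def copies_from_cq(cq, slope, intercept):
    """Interpolate the starting copy number of an unknown from its Cq."""
    return 10 ** ((cq - intercept) / slope)


# Hypothetical 10-fold standards (copies per reaction) and their measured Cq values.
standards = [1e6, 1e5, 1e4, 1e3, 1e2]
measured_cq = [18.1, 21.4, 24.8, 28.1, 31.5]

slope, intercept = fit_standard_curve(standards, measured_cq)
print(f"slope={slope:.3f}, efficiency={amplification_efficiency(slope):.1%}")
print(f"Unknown at Cq 26.0: {copies_from_cq(26.0, slope, intercept):.0f} copies")
```

A slope near -3.32 corresponds to 100% efficiency; any error in the assigned concentrations of the standards propagates directly into every interpolated result, which is the dependence on external standards noted above.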

Digital PCR (dPCR)

dPCR is a third-generation PCR technology that enables absolute quantification of nucleic acids without a standard curve. The core principle involves partitioning a PCR reaction into thousands to millions of individual nanoliter- or picoliter-scale reactions. Following end-point amplification, the fraction of positive partitions is counted, and the absolute concentration is calculated using Poisson statistics [31] [34]. This partitioning allows for the detection of single molecules, significantly enhancing sensitivity and tolerance to PCR inhibitors [34] [32]. Common formats include droplet digital PCR (ddPCR) and microchamber-based dPCR [34].
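The Poisson correction used in dPCR accounts for partitions that received more than one target molecule. The sketch below shows the calculation; the partition count and the 0.85 nL partition volume are illustrative assumptions, since actual values depend on the platform.

```python
import math


def dpcr_concentration(positive, total, partition_volume_ul):
    """Absolute concentration (copies/uL) from partition counts.

    The mean copies per partition is lambda = -ln(1 - positive/total);
    dividing by the partition volume gives copies per microliter of reaction.
    """
    if positive >= total:
        raise ValueError("all partitions positive: sample too concentrated to quantify")
    copies_per_partition = -math.log(1.0 - positive / total)
    return copies_per_partition / partition_volume_ul


# Hypothetical run: 5,000 positive partitions out of 20,000,
# with an assumed partition volume of 0.85 nL (0.00085 uL).
concentration = dpcr_concentration(5000, 20000, 0.00085)
print(f"Estimated concentration: {concentration:.0f} copies/uL")
```

Simply dividing positive partitions by total volume would underestimate the concentration, because a positive partition may hold two or more molecules; the logarithmic term corrects for this.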

Loop-Mediated Isothermal Amplification (LAMP)

LAMP is an isothermal nucleic acid amplification technique that operates at a constant temperature (typically 60-65°C). It utilizes a DNA polymerase with high strand displacement activity and four to six primers that recognize distinct regions of the target DNA, leading to the formation of loop structures that enable self-priming amplification [28] [35]. Its simplicity, speed, and compatibility with point-of-care (POC) settings make it an attractive alternative to PCR-based methods [28]. Digital LAMP (dLAMP) combines the absolute quantification benefits of digital analysis with the operational simplicity of isothermal amplification [35].

The following diagram illustrates the fundamental workflow differences between these three core technologies.

Comparative LOD Performance Data

The following tables consolidate experimental LOD data from recent studies across various targets, providing a direct comparison of the analytical sensitivity of each technology.

Table 1: Direct LOD comparison of molecular assays for SARS-CoV-2 detection [36]

| Assay | Technology Type | Probit LOD (copies/mL) |
|---|---|---|
| Roche Cobas | High-throughput qPCR | ≤ 10 |
| Abbott m2000 | High-throughput qPCR | 53 |
| Hologic Panther Fusion | High-throughput qPCR | 74 |
| CDC Assay (ABI 7500, EZ1) | Laboratory-developed qPCR | 85 |
| DiaSorin Simplexa | Sample-to-answer | 167 |
| GenMark ePlex | Sample-to-answer | 190 |
| Abbott ID NOW | Point-of-care Isothermal | 511 |

Table 2: LOD performance across different technologies and targets

| Target | Technology | Reported LOD | Context |
|---|---|---|---|
| Human CMV DNA | LAMP | 39.09 copies/reaction | Determined with 24 replicates per concentration [28] |
| HIV DNA | dPCR | 75 copies/10⁶ PBMC | LOD₉₅% determined via probit analysis [32] |
| Bacteria Genomic DNA | Digital LAMP (on membrane) | 11 copies/μL | Dynamic range from 11 to 1.1 × 10⁵ copies/μL [35] |
| SARS-CoV-2 Viral RNA | ddPCR | Effectively quantified low amounts | More suitable for determining copy number of reference materials than qPCR [31] |

Detailed Experimental Protocols

To ensure the reliability and reproducibility of LOD studies, standardized experimental protocols and statistical analyses are essential. The following sections outline key methodologies.

LOD Determination for a Qualitative LAMP Assay

A biometrological study for the detection of human Cytomegalovirus (hCMV) DNA provides a robust protocol for LOD determination in isothermal assays [28].

- Sample Preparation: A dilution series of eight different hCMV DNA concentrations was prepared. The concentration of the stock DNA was precisely determined via qPCR performed in 21 parallel replicates.

- Experimental Replication: The LAMP assay was performed with a total of 192 samples, comprising 24 replicates for each of the 8 concentrations. This high level of replication ensures statistical reliability for LOD calculation.

- Amplification Conditions: The LAMP reaction was conducted at a constant temperature of 65°C using a specific primer set targeting the hCMV genome. Fluorescence or turbidity was monitored for endpoint detection.

- Data Analysis: The LOD was calculated as the concentration at which 95% of the test results are positive (C95). This was determined statistically from the binary (detected/not detected) results across the dilution series, yielding an LOD of 39.09 copies/reaction, reported together with its 95% confidence interval [28].

LOD Determination for dPCR and Comparative Analysis with qPCR

A study monitoring total HIV DNA demonstrates a standard approach for evaluating dPCR and comparing it with qPCR [32].

- dPCR Optimization: The dPCR assay was optimized by testing different primers, probe concentrations, and thermocycling conditions. Key criteria included the fluorescence amplitude ratio between positive and negative controls, clear separation of positive/negative partitions, and minimal false positives.

- LOD and LOQ Assessment: The 95% LOD was determined by testing 14 concentrations in multiple replicates, followed by probit analysis. The Limit of Quantification (LOQ) was established as the lowest concentration with acceptable accuracy (98.4% in this study).

- Precision Measurement: Repeatability (intra-assay precision) and reproducibility (inter-assay precision) were evaluated by running replicates at low (100 copies/10⁶ PBMC) and high (1000 copies/10⁶ PBMC) concentrations. The Coefficient of Variation (CV) was calculated for both dPCR and qPCR.

- Result: The dPCR demonstrated significantly better reproducibility than qPCR (CV of 11.9% vs. 24.7% at high concentration, p-value = 0.024), underscoring its advantage for precise longitudinal monitoring [32].
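The precision metric used above, the Coefficient of Variation, is the sample standard deviation expressed as a percentage of the mean. The sketch below shows the calculation only; the replicate values are invented for illustration and are not data from the cited study [32].

```python
from statistics import mean, stdev

def coefficient_of_variation(values):
    """Return the CV in percent: sample standard deviation / mean * 100."""
    return 100.0 * stdev(values) / mean(values)

# Hypothetical replicate measurements (copies/10^6 PBMC) of one sample
# run on two platforms; the numbers are made up for this example.
platform_a = [980, 1050, 1010, 940, 1020]
platform_b = [760, 1240, 1100, 850, 1050]

cv_a = coefficient_of_variation(platform_a)
cv_b = coefficient_of_variation(platform_b)
print(f"platform A CV: {cv_a:.1f}%  platform B CV: {cv_b:.1f}%")
```

A lower CV at the same nominal concentration indicates tighter agreement between replicates, which is the sense in which dPCR is described as more reproducible than qPCR.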

Statistical Determination of LOD Using Probit Analysis

Probit analysis is a standard statistical method for determining the LOD from dilution series data.

- Experimental Design: A series of sample dilutions is prepared, spanning the expected LOD concentration. A sufficient number of replicates (e.g., 20 per concentration) is tested at each level to obtain a reliable binary response rate [36].

- Model Fitting: The proportion of positive results at each concentration is transformed into a "probability unit" (probit). A regression line is fitted to the probits versus the logarithms of the concentrations.

- LOD Calculation: The LOD is defined as the concentration corresponding to a 95% probability of detection on the fitted regression line [36] [28]. On the standard normal scale this corresponds to probit = 1.645 (6.645 in the classical convention that adds 5 to the normal deviate). This method was used, for instance, to establish the LOD for various SARS-CoV-2 assays shown in Table 1 [36].
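The three steps above can be sketched as follows. This is a simplified illustration under stated assumptions: it fits an unweighted least-squares line to the probits, whereas statistical packages typically fit probit models by maximum likelihood, so results near the extremes may differ. The function name, the clamping of 0% and 100% hit rates, and the dilution data are all hypothetical.

```python
import math
from statistics import NormalDist

def probit_lod(concentrations, positives, replicates, detection_prob=0.95):
    """Estimate the concentration detected with probability `detection_prob`.

    Simplified sketch: unweighted linear regression of probit(hit rate)
    on log10(concentration), not a maximum-likelihood probit fit.
    """
    nd = NormalDist()
    xs, ys = [], []
    for conc, hits in zip(concentrations, positives):
        # Clamp 0% and 100% hit rates (an assumption of this sketch)
        # so the inverse normal CDF stays finite.
        p = min(max(hits / replicates, 0.5 / replicates), 1 - 0.5 / replicates)
        xs.append(math.log10(conc))
        ys.append(nd.inv_cdf(p))  # probit on the standard normal scale
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    target = nd.inv_cdf(detection_prob)  # about 1.645 for 95%
    return 10 ** ((target - intercept) / slope)

# Hypothetical dilution series: copies/mL and positives out of 20 replicates.
concs = [5, 10, 20, 40, 80, 160]
hits = [4, 9, 14, 18, 20, 20]
lod = probit_lod(concs, hits, replicates=20)
print(f"Estimated 95% LOD: {lod:.0f} copies/mL")
```

For a reportable LOD, a maximum-likelihood fit with a confidence interval on the estimate would normally replace the simple regression used here.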

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key reagents and materials for molecular assays based on cited studies

| Item | Function / Description | Example Use Case |
|---|---|---|
| Bst 2.0 WarmStart Polymerase | DNA polymerase with strand-displacement activity, crucial for LAMP. | Used in digital LAMP on a membrane for bacterial DNA and MS2 virus quantification [35]. |
| Track-Etched Polycarbonate (PCTE) Membrane | A low-cost substrate containing nano-pores that function as individual reaction chambers. | Served as a disposable platform for partitioning reactions in digital LAMP, costing <$0.10 per piece [35]. |
| Primer-Dye-Primer-Quencher Duplex Probe | A fluorescent probe system that generates high signal-to-noise ratios (e.g., 100x difference). | Employed in digital RT-LAMP to clearly distinguish positive from negative pores [35]. |
| Droplet Digital PCR System (e.g., QX200 from Bio-Rad) | Instrumentation for generating and analyzing water-in-oil droplets for ddPCR. | Used for absolute quantification of viral RNA in SARS-CoV-2 studies and reference material characterization [36] [31]. |
| 8E5 Cell Line | A cell line containing a single, integrated copy of the HIV provirus per cell, used as a quantitative standard. | Served as the standard for HIV DNA quantification in both qPCR and dPCR assays; its stability is critical [32]. |

The comparative analysis of LOD performance reveals a clear technological trajectory toward greater sensitivity and precision in molecular diagnostics. dPCR consistently demonstrates superior performance for applications requiring the highest level of accuracy, absolute quantification, and detection of rare targets, making it particularly valuable for liquid biopsy, viral reservoir monitoring, and reference material characterization [34] [32]. qPCR remains a robust, high-throughput workhorse for many diagnostic applications but shows greater variability, partly attributable to reliance on external standards [32]. Isothermal amplification techniques like LAMP offer an excellent balance of speed, simplicity, and sensitivity, especially suited for point-of-care testing. When combined with a digital format (dLAMP), they can achieve quantification capabilities rivaling dPCR at a potentially lower cost and with simpler instrumentation [28] [35].

Future developments are likely to focus on the integration of artificial intelligence (AI) for fluorescence image analysis and signal interpretation in platforms like dNAAT (digital Nucleic Acid Amplification Testing), which could further enhance precision and automate LOD determination [33]. Furthermore, the ongoing miniaturization and cost reduction of digital systems, including novel platforms like inexpensive membranes for dLAMP, promise to democratize access to ultra-sensitive molecular quantification, ultimately broadening its impact in research, clinical diagnostics, and public health [35].

Point-of-care (POC) testing has revolutionized diagnostic medicine by enabling rapid, on-site detection of pathogens and biomarkers without the need for complex laboratory infrastructure. These platforms are particularly vital for the early detection of infectious diseases, timely medical intervention, and effective public health management, especially in resource-limited settings. Among the most prominent POC technologies are lateral flow assays (LFAs), nucleic acid test strips, and paper-based microfluidic devices, each offering unique advantages in simplicity, cost-effectiveness, and rapid result generation.

A critical performance parameter for evaluating these diagnostic platforms is the limit of detection (LOD), defined as the lowest concentration of an analyte that can be reliably distinguished from zero. The LOD fundamentally determines a test's clinical utility, affecting its ability to identify early infections, detect low pathogen loads, and monitor disease progression. Understanding the factors that influence LOD—including assay design, signal detection methodology, and sample processing—is essential for researchers, scientists, and drug development professionals seeking to develop, validate, and implement these technologies.

This comparison guide provides a systematic evaluation of rapid POC platforms, focusing on their LOD performance characteristics, underlying technological principles, and experimental methodologies. By synthesizing current research data and technical specifications, this analysis aims to support evidence-based selection and optimization of POC diagnostic platforms for specific microbiological assay requirements.

Fundamental Principles and Architectures

Lateral Flow Assays (LFAs) are membrane-based diagnostic platforms that leverage capillary action to transport liquid samples across various zones where target analytes interact with recognition elements (typically antibodies or oligonucleotides). The classic LFA architecture consists of four key components: a sample pad for initial application, a conjugate pad containing labeled detection reagents, a nitrocellulose membrane with immobilized capture lines (test and control), and an absorbent pad that drives fluid flow [37]. The simplicity of this design enables rapid, user-friendly operation without requiring external instrumentation for basic colorimetric detection, making LFAs one of the most widely deployed POC formats globally.

Nucleic Acid Test Strips represent a specialized LFA variant designed specifically to detect amplified DNA or RNA sequences. These systems typically couple isothermal amplification techniques (such as RPA, LAMP, or NASBA) with lateral flow detection. Unlike conventional LFAs that primarily detect antigens or antibodies, nucleic acid strips often employ hybridization-based capture using complementary oligonucleotide probes immobilized on the test line [38]. This approach provides exceptional specificity for sequence-specific detection, making it particularly valuable for pathogen identification, genetic testing, and antimicrobial resistance profiling.

Paper-Based Microfluidic Analytical Devices (μPADs) encompass a broader category of diagnostic platforms that create defined hydrophilic/hydrophobic channels on paper substrates to control fluid movement. These devices enable more complex fluidic manipulations than simple lateral flow, including multiplexed parallel assays, multi-step chemical reactions, and preconcentration steps that can significantly enhance detection sensitivity [39] [40]. The fabrication of μPADs employs various patterning techniques—such as wax printing, photolithography, inkjet printing, and chemical vapor deposition—to create precise microfluidic networks that guide sample flow to specific detection zones [39].

Comparative LOD Performance Analysis

The table below summarizes the typical LOD ranges and performance characteristics of the three POC platform categories across various application domains:

Table 1: Comparative LOD Performance of POC Diagnostic Platforms

| Platform Category | Typical LOD Range | Detection Methods | Key Applications | Amplification Requirement |
|---|---|---|---|---|
| Lateral Flow Assays (LFA) | 1.0 pg/mL - 1.0 ng/mL (proteins) [37] | Colorimetric, Fluorescence, SERS [37] [41] | Infectious diseases (COVID-19, HIV, malaria), pregnancy testing, cardiac markers [42] [37] | Generally not required for high-abundance targets |
| Nucleic Acid Test Strips | 0.24 pg/mL - 40 pM (DNA) [38] [37] | Colorimetric (AuNPs), Fluorescence, Enzymatic detection [38] [43] | Pathogen detection (HIV-1, SARS-CoV-2), genetic markers, food safety testing [38] [43] | Required (RPA, LAMP, PCR) |
| Paper-Based Microfluidics (μPAD) | 13 mg/dL (glucose), 3 ng/mL (TNFα), 150 μg/L (Ni) [40] | Colorimetric, Electrochemical, Fluorescence [39] [40] | Glucose monitoring, cytokine detection, heavy metal detection, multiplexed assays [39] [40] | Target-dependent; often incorporates pre-concentration |

The data reveal significant variability in LOD across platforms, largely influenced by the detection methodology and signal amplification strategies. Conventional colorimetric LFAs typically exhibit higher LODs than nucleic acid-based systems that incorporate pre-amplification steps. However, recent advancements in nanomaterial-based signal enhancement have substantially improved the sensitivity of both LFA and μPAD platforms [41].

For nucleic acid test strips, the LOD is primarily determined by the efficiency of the upstream amplification process rather than the detection step itself. For instance, recombinase polymerase amplification (RPA) coupled with lateral flow detection has demonstrated exceptional sensitivity, achieving detection limits as low as 190 attomoles (1 × 10⁻¹¹ M) of DNA target [38]. This high sensitivity enables the detection of low-abundance targets that would be undetectable with direct antigen assays.
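Because the figure above mixes an amount (attomoles) with a concentration (molar), a short conversion helps relate the two. The sketch below is illustrative only: the helper names are hypothetical, and the implied sample volume is an inference from the arithmetic, assuming both values describe the same reaction. It is not a volume stated in the source.

```python
AVOGADRO = 6.02214076e23  # molecules per mole (exact SI value)

def moles_to_copies(moles):
    """Convert an amount in moles to a number of molecules."""
    return moles * AVOGADRO

def implied_volume_ul(moles, molar_concentration):
    """Volume in microliters that holds `moles` at the given molarity."""
    liters = moles / molar_concentration
    return liters * 1e6

amount_mol = 190e-18    # 190 attomoles
concentration_m = 1e-11  # 1 x 10^-11 M, i.e. 10 pM

copies = moles_to_copies(amount_mol)
volume_ul = implied_volume_ul(amount_mol, concentration_m)
print(f"{copies:.2e} copies in roughly {volume_ul:.0f} uL")
```

Under that assumption, 190 attomoles corresponds to roughly 10⁸ target molecules, which puts the strip-based figure in context next to the copies/mL and copies/reaction values reported for the amplification assays earlier in the article.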

Paper-based microfluidic devices offer intermediate sensitivity but provide superior capabilities for sample processing and multiplexing. The LOD values for μPADs vary considerably depending on the specific application and detection chemistry, with some systems achieving clinically relevant sensitivity for biomarkers like glucose and cytokines [40].

Experimental Protocols and Methodologies

Nucleic Acid Lateral Flow Test with Tailed RPA Primers

This protocol describes a highly sensitive method for detecting DNA targets using recombinase polymerase amplification (RPA) with tailed primers, followed by lateral flow detection without the need for hapten labeling or post-amplification processing [38].

Sample Preparation and Amplification:

- DNA Extraction: Extract target DNA from clinical samples (e.g., blood, saliva, swabs) using appropriate nucleic acid extraction kits. For complex matrices, incorporate a paper-based extraction step using polyvinylpyrrolidone-treated elements to remove amplification inhibitors [44].

- Primer Design: Design tailed RPA primers consisting of a 3' target-specific sequence and a 5' universal tail (approximately 30-40 nucleotides). The forward and reverse primers should contain different universal tails to facilitate subsequent detection.