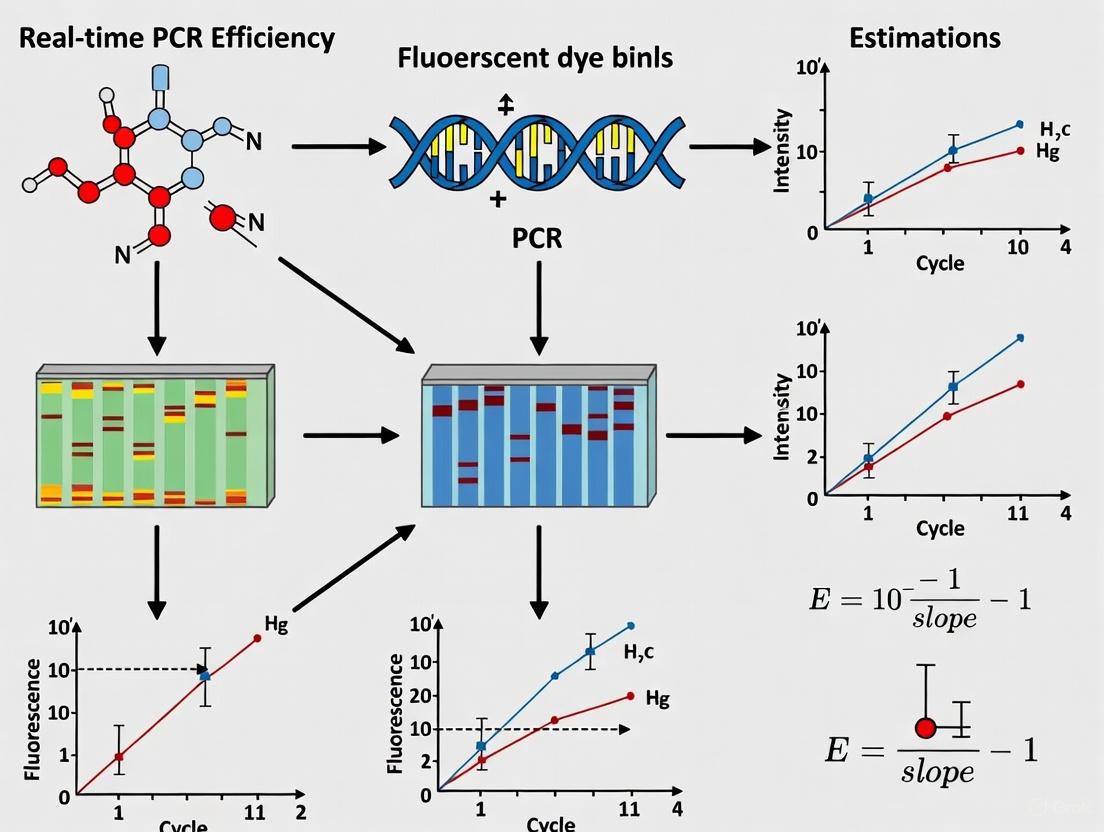

Beyond the Cq Value: A Comprehensive Guide to Accurate Real-Time PCR Efficiency Estimation

Accurate estimation of real-time PCR (qPCR) efficiency is not a mere technical formality but a fundamental prerequisite for reliable gene quantification, pathogen detection, and diagnostic assay validation.

Abstract

Accurate estimation of real-time PCR (qPCR) efficiency is not a mere technical formality but a fundamental prerequisite for reliable gene quantification, pathogen detection, and diagnostic assay validation. This article provides a comprehensive framework for researchers and drug development professionals to master efficiency estimation, from foundational principles to advanced troubleshooting. We explore the critical role of PCR kinetics, compare standard curve and amplification curve-based methods, and address common pitfalls that compromise data integrity. Furthermore, the guide delves into rigorous validation protocols and compares the performance of qPCR with emerging technologies like digital PCR, offering actionable strategies to optimize protocols, ensure regulatory compliance, and enhance the reproducibility of molecular data in biomedical research.

The Critical Role of PCR Efficiency: From Basic Kinetics to Data Integrity

Quantitative Polymerase Chain Reaction (qPCR) is a cornerstone technique in molecular biology, clinical diagnostics, and drug development for precisely measuring nucleic acid concentrations. At the heart of every qPCR experiment lies the PCR kinetic equation, a mathematical model that describes the exponential amplification of DNA throughout the thermal cycling process. This fundamental relationship connects the initial amount of target DNA to the amplified product detected at any cycle, with the reaction efficiency serving as the critical parameter determining quantification accuracy [1].

The foundational equation describing PCR amplification is:

$$N_C = N_0 \times (E + 1)^{C}$$

where $N_C$ is the number of amplicon molecules after $C$ thermocycles, $N_0$ is the initial number of target molecules, and $E$ is the amplification efficiency, ranging from 0 to 1 (or 0% to 100%) [1]. During the exponential phase of PCR, reagents are in excess, enabling consistent amplification efficiency cycle-to-cycle [2]. This phase provides the quantitative data for determining the original template concentration. When the reaction reaches a predefined fluorescence threshold, the equation is rearranged to solve for the initial target quantity:

$$N_0 = \frac{N_t}{(E + 1)^{C_t}}$$

where $C_t$ is the threshold cycle and $N_t$ is the number of amplicon molecules at that fluorescence threshold [1]. This relationship forms the mathematical basis for all qPCR quantification. Because $(E + 1)$ is raised to the power $C_t$, small variations in efficiency have an exponentially growing impact on the calculated initial template quantity ($N_0$).

Defining PCR Efficiency (E) and Its Mathematical Significance

Theoretical Foundation of Amplification Efficiency

PCR efficiency (E) represents the fraction of target molecules that are successfully copied in each PCR cycle [3]. Theoretically, under ideal conditions, each template molecule doubles every cycle, resulting in 100% efficiency (E = 1). This perfect efficiency is rarely achieved in practice due to various factors including reagent limitations, enzyme fidelity, and the presence of inhibitors in biological samples [2] [3]. The remarkable consistency of geometric amplification maintains the original quantitative relationships of the target gene across samples, making this phase essential for accurate quantification [2].

The mathematical relationship between efficiency and quantification becomes evident when examining the logarithmic form of the PCR equation [1]:

$$\log(N_0) = -\log(E + 1) \times C_t + \log(N_t)$$

This equation has the linear form $y = mx + b$, enabling the creation of standard curves for absolute quantification. The slope of the standard curve (Ct plotted against log quantity) relates directly to PCR efficiency through the equation [3] [4] [5]:

$$E = 10^{-1/\text{slope}} - 1$$

A slope of -3.32 corresponds to 100% efficiency, with steeper (more negative) slopes indicating lower efficiency [2]. This mathematical relationship provides the foundation for the most common method of efficiency estimation in qPCR experiments.
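To make the link between the kinetic equation and the -3.32 slope explicit, the short derivation below solves the threshold equation for Ct as a function of log quantity. It uses only the symbols already defined above and assumes base-10 logarithms, as is conventional for standard curves.

```latex
\begin{align*}
N_t &= N_0\,(E+1)^{C_t} \\
\log_{10} N_t &= \log_{10} N_0 + C_t \log_{10}(E+1) \\
C_t &= -\frac{1}{\log_{10}(E+1)}\,\log_{10} N_0 + \frac{\log_{10} N_t}{\log_{10}(E+1)} \\
\text{slope} &= -\frac{1}{\log_{10}(E+1)}
\quad\Longrightarrow\quad E = 10^{-1/\text{slope}} - 1 \\
E = 1:&\quad \text{slope} = -\frac{1}{\log_{10} 2} \approx -3.32
\end{align*}
```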

Impact of Efficiency on Quantification Accuracy

The exponential nature of PCR amplification means that small variations in efficiency dramatically affect quantification results. For example, with perfect doubling (amplification factor E + 1 = 2), a 0.5-cycle error in Ct produces a 29-41% miscalculation, whereas a 0.05 error in the amplification factor produces a 53-110% miscalculation after 30 cycles [6]. This sensitivity underscores why precise efficiency determination is essential for reliable qPCR results, particularly in gene expression studies where fold-change calculations directly shape biological interpretations [3].
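The size of these errors follows directly from the kinetic equation, and a few lines of Python are enough to check them. The sketch below is illustrative only: the function names are made up for this example, and the amplification factor is expressed as the per-cycle fold increase (1 + E).

```python
def reported_to_true_ratio(assumed_factor, true_factor, ct):
    """Ratio of the N0 computed with an assumed per-cycle amplification
    factor (1 + E) to the true N0, for a reaction read at cycle `ct`.

    From N0 = Nt / factor**ct: reported / true = (true / assumed)**ct.
    """
    return (true_factor / assumed_factor) ** ct


def ct_error_ratio(factor, ct_error):
    """Ratio of reported to true N0 when Ct is off by `ct_error` cycles."""
    return factor ** (-ct_error)


# A 0.05 error in the amplification factor around 2.0, read at cycle 30
under = reported_to_true_ratio(2.0, 1.95, 30)  # true factor 1.95, assumed 2.00
over = reported_to_true_ratio(2.0, 2.05, 30)   # true factor 2.05, assumed 2.00

# A 0.5-cycle error in Ct at perfect doubling
late = ct_error_ratio(2.0, +0.5)
early = ct_error_ratio(2.0, -0.5)

for label, ratio in [
    ("true factor 1.95, assumed 2.00", under),
    ("true factor 2.05, assumed 2.00", over),
    ("Ct read 0.5 cycles late", late),
    ("Ct read 0.5 cycles early", early),
]:
    print(f"{label}: reported/true = {ratio:.3f} ({(ratio - 1) * 100:+.0f}%)")
```

The four ratios correspond to the 53%, 110%, 29%, and 41% figures quoted above.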

Table 1: Impact of PCR Efficiency on Quantification Accuracy

| Efficiency Value | Theoretical Slope | Error in N₀ at Ct = 30 if 100% Is Assumed | Practical Implications |
|---|---|---|---|
| 100% (E = 1.0) | -3.32 | Baseline (ideal) | Optimal quantification accuracy |
| 90% (E = 0.9) | -3.58 | 4.7-fold under-estimation | Generally acceptable range |
| 80% (E = 0.8) | -3.92 | 23.6-fold under-estimation | Questionable reliability |
| 110% (E = 1.1) | -3.10 | 4.3-fold over-estimation | Indicates potential experimental error |

Comparative Analysis of Efficiency Estimation Methods

Standard Curve Method

The standard curve method represents the traditional approach for estimating PCR efficiency. This technique involves preparing a dilution series of known template concentrations, amplifying them in parallel with test samples, and plotting Ct values against the logarithm of initial concentrations [7] [2]. The slope of the resulting linear regression is then used to calculate efficiency using the formula $E = 10^{-1/\text{slope}} - 1$ [3] [4].

While widely used, this method has significant limitations. It assumes that efficiency remains constant across all template concentrations, an assumption frequently violated in practice [7] [3]. A 2024 study demonstrated a decreasing trend in efficiency as DNA concentration increased in most cases, likely due to PCR inhibitors [3]. Additionally, standard curves are labor-intensive, require highly concentrated target material that may not be available, and are prone to errors from pipetting inaccuracies, contamination, and inhibitor effects [2] [8]. Recent research highlights that efficiency varies significantly across different qPCR instruments, further complicating standard curve application [8].

Single-Reaction Kinetic Analysis

Alternative methods estimate efficiency from individual amplification curves, eliminating the need for standard curves. These approaches model the exponential phase of amplification using the equation:

$$R_n = R_0 \times (1 + E)^{n}$$

where $R_n$ is the fluorescence signal at cycle $n$ and $R_0$ is the baseline signal [3]. These methods objectively identify the exponential phase of amplification using statistical approaches, such as analyzing standardized residuals to detect significant deviations from linear baseline fluorescence [7].

A 2003 study proposed a computational method that delimits the beginning of exponential observations in PCR kinetics using separate mathematical algorithms for ground fluorescence, non-exponential, and plateau phases [7]. This single-reaction approach provides results of higher accuracy than serial dilution methods and is sensitive to differences in starting target concentrations while resisting researcher subjectivity [7]. These individual-curve methods are particularly valuable for detecting inhibition or other factors that may affect specific samples differently.

Dilution-Replicate Experimental Design

A hybrid approach termed the "dilution-replicate design" replaces traditional identical replicates with dilution replicates for each test sample [6]. This method performs a single reaction on several dilutions for every test sample, creating an individual standard curve per sample without a separate efficiency experiment. The design enables global efficiency estimation by fitting all standard curves simultaneously with a slope equality constraint, improving estimation robustness [6].

This approach offers several advantages: it requires fewer reactions than traditional designs, provides the option to exclude outliers rather than repeating runs, and eliminates the need for separate efficiency estimation or inter-run variation controls [6]. The dilution-replicate method effectively combines elements of both standard curve and single-reaction approaches while addressing some of their respective limitations.

Table 2: Comparison of PCR Efficiency Estimation Methods

| Method | Principles | Advantages | Limitations |
|---|---|---|---|
| Standard Curve | Serial dilutions of known standards; slope-derived efficiency | Familiar to most researchers; established workflow | Labor-intensive; assumes constant efficiency; pipetting errors affect accuracy |
| Single-Reaction Kinetics | Mathematical modeling of exponential phase from individual curves | No standard curves needed; identifies sample-specific issues; high accuracy [7] | Complex calculations; requires specialized software or algorithms |
| Dilution-Replicate Design | Multiple dilutions per sample with global fitting | Fewer reactions; handles outliers well; no separate efficiency estimation [6] | Still requires dilution series; global efficiency assumption |
| Visual Assessment | Parallelism of geometric slopes on log-scale amplification plots | No equations; not affected by pipetting errors [2] | Subjective; no numerical efficiency value |

Experimental Protocols for Efficiency Determination

Standard Curve Protocol for Efficiency Estimation

The following protocol outlines the steps for determining PCR efficiency using the standard curve method, based on established procedures from multiple studies [2] [3] [4]:

1. Template Preparation: Prepare a 5-10 point serial dilution series of known template concentration, typically spanning 6-9 orders of magnitude. Use the same matrix as test samples to maintain consistent background effects.

2. qPCR Amplification: Run all dilution points in triplicate or quadruplicate on the same qPCR plate under identical cycling conditions. Include no-template controls to detect contamination.

3. Data Collection: Record Ct values for each reaction. Manually set a consistent fluorescence threshold within the exponential phase of all amplifications to ensure comparability [4] [1].

4. Standard Curve Construction: Plot mean Ct values against the logarithm of initial template quantities. Perform linear regression analysis to determine the slope and R² value.

5. Efficiency Calculation: Calculate PCR efficiency using the formula $E = 10^{-1/\text{slope}} - 1$. Acceptable efficiency typically ranges from 90-110% (slope of -3.6 to -3.1) [5].

For precise efficiency estimation, recent research recommends at least 3-4 qPCR replicates at each concentration to reduce uncertainty, which can reach 42.5% with single replicates [8]. Larger transfer volumes during dilution series preparation also reduce sampling error [8].
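The regression and efficiency steps of this protocol can be sketched in plain Python. The dilution series and mean Ct values below are invented for illustration, and the helper names are not taken from any qPCR software package; the only formula used from the text is E = 10^(-1/slope) - 1.

```python
import math


def linear_fit(x, y):
    """Ordinary least-squares fit y = slope * x + intercept, with R^2."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r_squared = sxy ** 2 / (sxx * syy)
    return slope, intercept, r_squared


def efficiency_from_slope(slope):
    """Efficiency as a fraction (1.0 = 100%) from a Ct vs log10(quantity) slope."""
    return 10 ** (-1.0 / slope) - 1.0


# Hypothetical 10-fold dilution series (copies per reaction) and the
# mean Ct of the replicates at each point
copies = [1e7, 1e6, 1e5, 1e4, 1e3, 1e2]
mean_ct = [14.1, 17.5, 20.9, 24.3, 27.6, 31.0]

log_copies = [math.log10(c) for c in copies]
slope, intercept, r_squared = linear_fit(log_copies, mean_ct)
efficiency = efficiency_from_slope(slope)

print(f"slope = {slope:.3f}, intercept = {intercept:.2f}, R^2 = {r_squared:.5f}")
print(f"efficiency = {efficiency * 100:.1f}%")
```

Fitting the mean Ct per dilution point mirrors the protocol as written; fitting every replicate individually is an equally reasonable choice and gives a more honest view of the scatter.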

Protocol for Single-Reaction Efficiency Estimation

For methods estimating efficiency from individual amplification curves [7]:

1. Fluorescence Data Export: Export raw fluorescence data from the qPCR instrument for each reaction.

2. Background Phase Identification: Apply linear regression to initial cycles to establish the ground fluorescence phase. Use statistical tests (e.g., studentized residuals) to identify the cycle where fluorescence consistently deviates from background.

3. Exponential Phase Modeling: Fit the exponential growth model $R_n = R_0 \times (1 + E)^{n}$ to the identified exponential phase, or use sigmoidal modeling of the entire amplification curve [3].

4. Parameter Optimization: Iteratively adjust parameters to minimize differences between observed and modeled fluorescence values.

5. Efficiency Calculation: For any two cycles ($a$ and $b$) within the exponential phase, calculate efficiency using $E = (R_{n,a} / R_{n,b})^{1/(C_a - C_b)} - 1$ [3].

This method's advantage lies in its ability to detect efficiency variations between samples, which is particularly valuable when inhibitors or other sample-specific factors may affect amplification [7].
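As a rough illustration of the final two steps, the sketch below estimates efficiency from a window of cycles that is assumed to lie entirely within the exponential phase and to be already background-corrected. It shows both the two-cycle formula from the protocol and a log-linear fit over the whole window. The fluorescence values are synthetic and noise-free, and the function names are hypothetical; identifying the window itself (step 2) is not covered.

```python
import math


def efficiency_two_point(r_a, r_b, c_a, c_b):
    """E = (R_a / R_b) ** (1 / (C_a - C_b)) - 1 for two exponential-phase cycles."""
    return (r_a / r_b) ** (1.0 / (c_a - c_b)) - 1.0


def efficiency_log_linear(cycles, fluorescence):
    """Fit log10(R_n) against cycle number; the slope equals log10(1 + E)."""
    xs = list(cycles)
    ys = [math.log10(r) for r in fluorescence]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
             / sum((x - mean_x) ** 2 for x in xs))
    return 10 ** slope - 1.0


# Synthetic exponential-phase window: R_n = R_0 * (1 + E) ** n with E = 0.9
TRUE_E = 0.9
R0 = 1e-5
window = list(range(16, 23))
signal = [R0 * (1 + TRUE_E) ** n for n in window]

e_two = efficiency_two_point(signal[4], signal[0], window[4], window[0])
e_fit = efficiency_log_linear(window, signal)
print(f"two-point estimate: {e_two:.3f}, log-linear estimate: {e_fit:.3f}")
```

With real data the two estimates will differ, and the regression over several cycles is usually less sensitive to noise in any single reading than the two-point formula.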

Figure 1: Single-Reaction Efficiency Estimation Workflow

Experimental Data on Efficiency Estimation Variability

Method-Dependent Efficiency Variations

Recent comparative studies reveal significant variations in efficiency values depending on the mathematical approach used. A 2024 assessment of 16 genes from Pseudomonas aeruginosa demonstrated that efficiency values differed substantially across mathematical methods [3]. When comparing standard curves to individual-curve approaches, researchers observed that:

- Standard curves yielded efficiency values of approximately 100% in three out of four cases

- Exponential model efficiencies ranged from 1.5-1.79 (50-79%)

- Sigmoidal model efficiencies ranged from 1.52-1.75 (52-75%) [3]

These differences directly impacted normalized expression values, highlighting how methodological choices affect final quantitative results. The study further noted a decreasing trend in efficiency as DNA concentration increased in most cases, suggesting PCR inhibitors may contribute to this observed effect [3].

Inter-Assay Variability in Efficiency Estimation

A comprehensive 2025 study evaluating inter-assay variability of standard curves across 30 independent experiments revealed significant variations even under standardized conditions [4]. Research on seven different virus targets showed:

- All viruses demonstrated adequate efficiency (>90%), but with notable variability between targets

- Norovirus GII showed the highest inter-assay efficiency variability

- SARS-CoV-2 N2 gene exhibited the largest quantitative variability (CV 4.38-4.99%) and the lowest efficiency (90.97%) [4]

This variability persisted despite standardized reagents, conditions, consumables, and operators, supporting the recommendation to include standard curves in every experiment for reliable quantification [4]. These findings align with earlier research showing that efficiency estimation varies significantly across different qPCR instruments, though it remains reproducibly stable on a single platform [8].

Table 3: Experimental Efficiency Variations Across Different Studies

| Study Reference | Targets Analyzed | Efficiency Range | Key Variability Findings |
|---|---|---|---|
| DNA 2024 [3] | 16 bacterial genes | 50-100% depending on method | Efficiency decreases with increasing DNA concentration; method choice significantly impacts quantification |
| Microorganisms 2025 [4] | 7 human viruses | 90.97-100% | Significant inter-assay variability despite standardized conditions; target-dependent differences observed |
| Rutledge & Côté 2003 [1] | 2 nested amplicons | ~100% | Single well-constructed standard curve provides ±6-21% precision depending on Ct |
| Ståhlberg et al. 2003 [7] | SRY plasmid DNA | Not specified | Single-reaction method more accurate than serial dilutions; sensitive to starting concentration differences |

The Scientist's Toolkit: Essential Reagents and Materials

Table 4: Essential Research Reagents for PCR Efficiency Studies

| Reagent/Material | Function in Efficiency Assessment | Implementation Example |
|---|---|---|
| SYBR Green Master Mix | Fluorescent detection of amplicon accumulation | Provides consistent reaction background for kinetic analysis [3] [9] |
| Quantified Standard DNA/RNA | Creates standard curve for absolute quantification | Serial dilutions of purified plasmid or synthetic nucleic acids [4] [1] |
| High-Purity Water | Diluent for standard curves and sample preparation | Minimizes introduction of inhibitors that affect efficiency [3] |
| Optical Reaction Plates | Compatible with fluorescence detection systems | Ensure consistent signal capture across all wells [4] |
| Calibrated Pipetting Systems | Accurate liquid handling for dilution series | Reduces technical variability in standard curve preparation [8] |

The PCR kinetic equation $N_0 = N_t / (E + 1)^{C_t}$ establishes an exponential relationship between efficiency ($E$) and initial target quantification ($N_0$). This mathematical foundation explains why precise efficiency determination is critical for accurate qPCR results. Current research demonstrates that no single efficiency estimation method is universally superior; each approach presents distinct advantages and limitations. The standard curve method provides familiarity but suffers from inherent variability and resource intensiveness [8] [4]. Single-reaction kinetics offer sample-specific efficiency but require sophisticated algorithms [7] [3]. Dilution-replicate designs present a balanced approach but still necessitate template dilution [6].

For researchers and drug development professionals, these findings underscore the importance of methodological transparency in qPCR experiments. Efficiency should not be treated as a constant but as a variable parameter that requires empirical determination and reporting. As the scientific community moves toward more rigorous quantitative standards, understanding the nuances of the PCR kinetic equation and its implementation remains fundamental to generating reliable, reproducible molecular data that advances both basic research and clinical applications.

In quantitative Polymerase Chain Reaction (qPCR), the amplification efficiency (E-value) is not merely a technical parameter but the foundational pillar upon which reliable quantification is built. It represents the fold increase in amplified product during each cycle of the PCR reaction, with a theoretical maximum of 2 (or 100% efficiency), indicating perfect doubling of the target sequence every cycle [10] [2]. Despite two decades of efforts by the qPCR community to promote standardization, most reported qPCR results remain grossly biased due to improper efficiency handling [10]. Inaccurate E-values initiate a cascade of computational errors that propagate through subsequent analysis, ultimately distorting biological conclusions, compromising diagnostic accuracy, and undermining the reproducibility of scientific research [11]. This review systematically evaluates the sources and impacts of efficiency miscalculation and compares modern methodologies for its accurate determination, providing researchers with a framework for robust nucleic acid quantification.

The Mathematical Cascade: How Efficiency Errors Distort Quantification

The Core Kinetic Equation of PCR

The entire quantitative framework of qPCR rests upon the kinetic equation of PCR:

$$N_C = N_0 \times E^{C}$$

where $N_C$ is the number of amplicons after cycle $C$, $N_0$ is the initial target quantity, and $E$ is the amplification efficiency expressed as the fold increase per cycle [10]. Rearranging to calculate the initial target quantity ($N_0 = N_C / E^{C}$) makes it evident that $E$ is raised to the power of the cycle number. Consequently, even minor inaccuracies in E-value estimation are compounded over every cycle, leading to significant miscalculations of initial target concentration [10] [11].

Table 1: Impact of Efficiency Miscalculation on Quantitative Results

| True Efficiency | Assumed Efficiency | Reported Quantity vs. True Quantity | Fold Error after 30 Cycles |
|---|---|---|---|
| 85% (1.85) | 100% (2.00) | Underestimated | 10.4-fold |
| 95% (1.95) | 100% (2.00) | Underestimated | 2.1-fold |
| 105% (2.05) | 100% (2.00) | Overestimated | 2.1-fold |
| 110% (2.10) | 100% (2.00) | Overestimated | 4.3-fold |

Efficiency in Relative Expression Analysis (ΔΔCq Method)

The popular ΔΔCq method for relative gene expression quantification relies critically on the assumption of perfect, equal efficiency between target and reference gene assays [2]. When this assumption is violated, the mathematical simplification that makes ΔΔCq calculation convenient fails. The standard equation:

$$\text{Relative Quantity} = 2^{-\Delta\Delta Cq}$$

implicitly sets efficiency at 2 (100%) for all assays [2]. A modified equation accounts for varying efficiencies:

$$\text{Uncalibrated Quantity} = \frac{E_{\text{target}}^{-Ct_{\text{target}}}}{E_{\text{norm}}^{-Ct_{\text{norm}}}}$$

This correction is essential when target and reference genes amplify with different efficiencies, as failure to apply it introduces systematic errors that render fold-change calculations biologically meaningless [2] [11].
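The effect of the correction is easy to see numerically. The sketch below implements the uncalibrated-quantity expression and divides a test sample by a calibrator sample to obtain a fold change; that final division is a common convention and an assumption here, not something spelled out in the text. The Ct values and the 1.9 efficiency are invented for illustration.

```python
def uncalibrated_quantity(e_target, ct_target, e_norm, ct_norm):
    """Target signal normalized to the reference gene, with each assay's own
    amplification factor (2.0 = 100% efficiency)."""
    return e_target ** (-ct_target) / e_norm ** (-ct_norm)


def fold_change(sample, calibrator, e_target=2.0, e_norm=2.0):
    """Ratio of normalized quantities: test sample over calibrator sample.

    `sample` and `calibrator` are (ct_target, ct_norm) tuples.
    With both factors equal to 2.0 this reduces to 2 ** (-ddCq).
    """
    return (uncalibrated_quantity(e_target, sample[0], e_norm, sample[1])
            / uncalibrated_quantity(e_target, calibrator[0], e_norm, calibrator[1]))


# Hypothetical Ct values: (target gene, reference gene)
treated = (22.0, 18.0)
control = (25.0, 18.0)

ideal = fold_change(treated, control)                     # assumes 100% for both assays
corrected = fold_change(treated, control, e_target=1.9)   # target assay at 90%

print(f"2^-ddCq fold change:            {ideal:.2f}")
print(f"efficiency-corrected fold change: {corrected:.2f}")
```

In this example a three-cycle difference reads as an 8-fold change under the 100% assumption but only about 6.9-fold once the target assay's 90% efficiency is taken into account.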

Methodological Comparison: Approaches for Efficiency Determination

Standard Curve Method

The traditional approach for efficiency determination employs a serial dilution series of a known template to generate a standard curve. The slope of the plot of Cq versus log(quantity) relates to efficiency through the equation:

$$E = 10^{-1/\text{slope}}$$

A slope of -3.32 corresponds to 100% efficiency [2]. While theoretically sound, this method is notoriously prone to practical errors.

Table 2: Comparison of Efficiency Estimation Methods

| Method | Principle | Requirements | Advantages | Limitations |
|---|---|---|---|---|
| Standard Curve | Linear regression of Cq vs. dilution series | 5-7 dilution points, replicates | Familiar, wide dynamic range | Prone to dilution errors, inhibitor effects [2] |
| Visual Assessment | Parallelism of logarithmic amplification curves | Multiple assays on same plate | No standard curve, identifies inhibition | Subjective, no numerical output [2] |
| Single-Reaction (LRE) | Fit to logistic model describing efficiency decay | Sufficient cycles in growth phase | No dilutions, accounts for efficiency decline | Requires sophisticated fitting algorithms [12] |
| Digital PCR | Absolute quantification via Poisson statistics | dPCR platform, partitioning | No standard curve, highest precision | Higher cost, limited throughput [13] [14] |

Single-Reaction Efficiency Estimation

Advanced computational methods now enable efficiency estimation from individual amplification curves, eliminating need for standard curves. These approaches model the entire amplification process, accounting for the characteristic decline in efficiency as reagents become limiting. The Logistic Model (LRE) has demonstrated particular promise, producing a parameter E0 that represents efficiency in the baseline region and agrees closely with calibration-based estimates [12]. Proper implementation requires fitting the raw fluorescence data to a model combining baseline and signal components, typically requiring four to six adjustable parameters in a nonlinear least-squares fit [12]. Statistically, the common practice of "baselining" - separately estimating and subtracting baseline fluorescence before analysis - increases the dispersion of efficiency estimates by approximately 75%, equivalent to tripling the number of reactions needed for equivalent precision [12].
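The link between a 75% increase in dispersion and a tripling of the reaction count can be reconstructed with one line of arithmetic, under the assumption (not stated in the text) that replicate estimates are independent, so the standard error of their mean scales as $1/\sqrt{n}$:

```latex
\[
\frac{1.75\,\sigma}{\sqrt{n'}} = \frac{\sigma}{\sqrt{n}}
\quad\Longrightarrow\quad
n' = 1.75^{2}\,n \approx 3.06\,n
\]
```

Here $\sigma$ is the dispersion of a single efficiency estimate without baselining, $n$ the number of reactions, and $n'$ the number needed to recover the same precision after baselining.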

Digital PCR: An Efficiency-Independent Alternative

Digital PCR (dPCR) represents a paradigm shift in nucleic acid quantification by providing absolute quantification without reliance on amplification efficiency or standard curves [13] [14]. Through partitioning of the reaction mixture into thousands of nanoscale reactions, dPCR enables binary endpoint detection (positive/negative) and application of Poisson statistics to calculate absolute template concentration [13]. Recent comparative studies demonstrate dPCR's superior accuracy, particularly for high viral loads of influenza A, influenza B, and SARS-CoV-2, showing greater consistency and precision than Real-Time RT-PCR [13]. This technology eliminates the cascading effects of efficiency miscalculation by circumventing the entire efficiency-based quantification framework.
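The Poisson step can be written in a few lines. Assuming templates are distributed randomly across partitions, the fraction of negative partitions equals exp(-λ), where λ is the mean number of copies per partition. The partition count, positive count, and partition volume below are hypothetical, and the function names are illustrative rather than any instrument vendor's API.

```python
import math


def copies_per_partition(positive, total):
    """Mean copies per partition from the fraction of positive partitions.

    Poisson: P(negative) = exp(-lam), so lam = -ln(1 - positive / total).
    """
    if total <= 0 or not 0 <= positive < total:
        # With every partition positive the estimate is undefined (saturation)
        raise ValueError("need 0 <= positive < total")
    return -math.log(1.0 - positive / total)


def concentration_copies_per_ul(positive, total, partition_volume_ul):
    """Template concentration in the partitioned reaction mix (copies per µL)."""
    return copies_per_partition(positive, total) / partition_volume_ul


# Hypothetical run: 20,000 partitions of 0.85 nL each, 5,000 of them positive
lam = copies_per_partition(5000, 20000)
conc = concentration_copies_per_ul(5000, 20000, 0.85e-3)

print(f"mean copies per partition: {lam:.4f}")
print(f"concentration: {conc:.1f} copies/µL")
```

No amplification efficiency appears anywhere in the calculation, which is the sense in which dPCR is efficiency-independent: a partition only has to amplify well enough to be scored as positive.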

dPCR Quantification Workflow

Experimental Protocols for Robust Efficiency Determination

Dilution-Replicate Design for Efficiency Estimation

An efficient experimental design alternative to traditional replication uses dilution-replicates instead of identical replicates. In this approach, a single reaction is performed on several dilutions for every test sample, similar to standard curve design but without replicates at each dilution [6]. This design enables simultaneous estimation of both PCR efficiency and initial target quantity through the relationship:

$$Cq = -\frac{\log(d)}{\log(E)} + \frac{\log\left(T / Q(0)\right)}{\log(E)}$$

Where d is the dilution factor, E is efficiency, T is threshold fluorescence, and Q(0) is initial quantity [6]. This approach provides inherent quality control, as outliers at extreme dilutions can be identified and excluded without compromising the entire dataset.
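One way to picture the fit is as a set of parallel lines, one per sample, sharing a single slope of -1/log(E) and differing only in their intercepts. The sketch below is an assumption-laden illustration rather than the published procedure in [6]: it uses a pooled least-squares slope, noise-free synthetic Cq values, and hypothetical sample names.

```python
import math


def fit_shared_slope(samples):
    """Fit Cq = slope * log10(d) + intercept_s with one slope for all samples.

    `samples` maps a sample name to a list of (dilution_factor, cq) pairs.
    Returns the amplification factor E (2.0 = 100%) and per-sample intercepts.
    """
    sxx = 0.0
    sxy = 0.0
    means = {}
    for name, points in samples.items():
        xs = [math.log10(d) for d, _ in points]
        ys = [cq for _, cq in points]
        mean_x = sum(xs) / len(xs)
        mean_y = sum(ys) / len(ys)
        means[name] = (mean_x, mean_y)
        # Pool the within-sample sums so every curve contributes to the slope
        sxx += sum((x - mean_x) ** 2 for x in xs)
        sxy += sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercepts = {name: my - slope * mx for name, (mx, my) in means.items()}
    amplification_factor = 10 ** (-1.0 / slope)
    return amplification_factor, intercepts


def synthetic_curve(intercept, factor, dilutions):
    """Noise-free Cq values following Cq = -log(d)/log(E) + intercept."""
    return [(d, intercept - math.log10(d) / math.log10(factor)) for d in dilutions]


dilutions = [1.0, 0.2, 0.04, 0.008]
samples = {
    "sample_A": synthetic_curve(20.0, 1.9, dilutions),
    "sample_B": synthetic_curve(22.0, 1.9, dilutions),
}

e_hat, intercepts = fit_shared_slope(samples)
# intercept = log(T / Q0) / log(E), so Q0_A / Q0_B = E ** (intercept_B - intercept_A)
ratio_a_to_b = e_hat ** (intercepts["sample_B"] - intercepts["sample_A"])

print(f"estimated amplification factor: {e_hat:.3f}")
print(f"initial quantity ratio A/B: {ratio_a_to_b:.2f}")
```

Because the threshold T cancels in the ratio, relative quantities between samples can be read from the intercepts without knowing T.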

One-Point Calibration with Efficiency Correction

For absolute quantification in diagnostic applications, the recommended approach combines a single undiluted calibrator with known target concentration and efficiency values derived from the amplification curves of both calibrator and unknown samples [11]. This method avoids the dilution errors and matrix effects that confound traditional standard curves while still providing efficiency-corrected results essential for meaningful biological interpretation and diagnostic accuracy [11].
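A minimal sketch of the arithmetic behind this idea follows, derived from the kinetic equation rather than taken from [11]. It assumes the calibrator and the unknown are read at the same fluorescence threshold, so that N0 × E^Cq is equal for both, and that each efficiency has already been estimated from its own amplification curve. All numbers and names are hypothetical.

```python
def one_point_quantity(n0_calibrator, cq_calibrator, e_calibrator,
                       cq_unknown, e_unknown):
    """Initial quantity of an unknown from a single calibrator.

    At a shared threshold:
        n0_calibrator * e_calibrator ** cq_calibrator
            == n0_unknown * e_unknown ** cq_unknown
    Efficiencies are amplification factors (2.0 = 100%).
    """
    return n0_calibrator * e_calibrator ** cq_calibrator / e_unknown ** cq_unknown


# Hypothetical calibrator: 1e5 copies, Cq 20.0
same_efficiency = one_point_quantity(1e5, 20.0, 2.0, 23.0, 2.0)
inhibited_sample = one_point_quantity(1e5, 20.0, 2.0, 23.0, 1.9)

print(f"unknown, both at 100%:        {same_efficiency:.0f} copies")
print(f"unknown, sample at 90% only:  {inhibited_sample:.0f} copies")
```

When both efficiencies are equal the result depends only on the Cq difference; when the unknown amplifies less efficiently, ignoring that difference underestimates its starting quantity.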

Cascading Effects of Inaccurate E-Values

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for qPCR Efficiency Analysis

| Reagent/Material | Function | Considerations for Efficiency |
|---|---|---|
| TaqMan Gene Expression Assays | Predesigned primer-probe sets with guaranteed 100% efficiency | Eliminates efficiency estimation burden [2] |
| Digital PCR Plates/Cartridges | Nanoscale partitioning for absolute quantification | Bypasses efficiency concerns [13] [14] |
| Standard Curve Reference Material | Known concentration template for efficiency calibration | Requires verification of matrix matching [11] |
| Inhibitor-Resistant Master Mix | Enhanced polymerase chemistry tolerant to sample inhibitors | Prevents efficiency depression from sample matrix [15] |
| Nucleic Acid Purification Kits | Remove PCR inhibitors from sample preparations | Maintains optimal reaction efficiency [15] |

Accurate determination of PCR efficiency is not an optional refinement but a fundamental requirement for biologically meaningful qPCR results. The cascading effects of inaccurate E-values exponentially amplify through subsequent calculations, rendering quantitative interpretations unreliable. While traditional standard curve methods remain prevalent, they are susceptible to multiple confounding factors including dilution errors, inhibitor effects, and matrix mismatches [2] [11]. Emerging single-reaction estimation methods, particularly those based on logistic models of the entire amplification curve, offer promising alternatives that avoid these pitfalls while providing efficiency values that closely align with calibration-based estimates [12]. For applications demanding the highest possible precision, digital PCR represents the ultimate solution by eliminating efficiency dependence altogether through absolute quantification based on Poisson statistics [13] [14]. As qPCR continues to play a critical role in basic research, diagnostic applications, and drug development, researchers must prioritize proper efficiency determination and correction to ensure the validity and reproducibility of their conclusions. The MIQE (Minimum Information for Publication of Quantitative Real-Time PCR Experiments) guidelines should be extended to explicitly require reporting of both the methods used to determine PCR efficiency and the calculations used to derive reported target quantities [11].

Quantitative Polymerase Chain Reaction (qPCR) is a cornerstone technique in molecular biology for quantifying nucleic acids. Its reliability, however, hinges on a critical parameter: amplification efficiency [16]. Amplification efficiency refers to the rate at which a target DNA sequence is duplicated during each PCR cycle. The ideal, theoretical maximum is 100% efficiency, meaning the number of DNA molecules doubles perfectly with every cycle (a doubling factor of 2.0) [15]. In practice, a benchmark of 90–110% efficiency is widely considered acceptable for a robust and reliable assay [17] [15] [16]. This range corresponds to a standard curve slope between -3.58 and -3.10 [15]. Efficiency outside this optimal window can lead to significant inaccuracies in quantification, misrepresenting the actual amount of the target sequence in the original sample [6]. This article explores the technical underpinnings of this benchmark and investigates the common experimental factors that cause deviations, thereby bridging the gap between the ideal and the real in qPCR experimentation.

The 90-110% Efficiency Benchmark Explained

The 90-110% efficiency range is not an arbitrary guideline but a practical standard grounded in the mathematical relationship between efficiency and quantification accuracy. The calculation of efficiency is typically derived from the slope of a standard curve generated from a serial dilution of a known template [15] [4]. The formula Efficiency = [10^(-1/slope)] - 1 links the slope directly to the reaction's performance [4].

A reaction with 100% efficiency (-3.32 slope) perfectly doubles each cycle, ensuring that the difference in quantification cycle (Cq) values between successive samples in a 2-fold dilution series is exactly 1 [17]. This precision is crucial for distinguishing small concentration differences. As efficiency decreases, the ability to discriminate between 2-fold dilutions diminishes unless the standard deviation of Cq values is very low (≤ 0.167) [17]. The 90-110% benchmark represents a balance where the impact of minor efficiency variations on final quantification remains manageable for most research purposes, ensuring that the fluorescent signal is directly proportional to the initial DNA concentration over the assay's dynamic range [16].
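The expected Cq spacing between dilution steps follows from the kinetic equation: each f-fold dilution adds log(f) / log(1 + E) cycles. The short sketch below tabulates this for the edges of the 90-110% window; the function name is illustrative and efficiency is given as a fraction.

```python
import math


def delta_cq_per_dilution(efficiency, dilution_fold=2.0):
    """Expected Cq shift per dilution step.

    `efficiency` is a fraction (1.0 = 100%); the per-cycle amplification
    factor is 1 + efficiency.
    """
    return math.log(dilution_fold) / math.log(1.0 + efficiency)


for eff in (0.90, 1.00, 1.10):
    two_fold = delta_cq_per_dilution(eff)
    ten_fold = delta_cq_per_dilution(eff, 10.0)
    print(f"E = {eff:.0%}: dCq per 2-fold = {two_fold:.3f}, per 10-fold = {ten_fold:.3f}")
```

The 10-fold column is simply the magnitude of the standard curve slope, which is why the same efficiency window maps onto the slope range quoted earlier.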

Furthermore, this acceptable range is vital for comparing results across different reactions or laboratories. As highlighted by ThermoFisher, "Ct values from PCR reactions run under different conditions or with different reagents cannot be compared directly" [17]. Adhering to a common efficiency benchmark helps normalize these variables, promoting reproducibility and data integrity in line with the MIQE (Minimum Information for Publication of Quantitative Real-Time PCR Experiments) guidelines [16] [18].

Common Causes of Efficiency Deviations

Deviations from the ideal efficiency range are common in practice and stem from a variety of factors related to reaction chemistry, sample quality, and procedural execution.

Causes of Efficiency Below 90%

- Suboptimal Primer Design: This is a primary cause of poor efficiency. Issues such as primer-dimer formation, self-complementarity (hairpins), or inappropriate melting temperatures (Tm) can severely hinder primer-template annealing and extension, leading to inefficient amplification [15].

- Insufficient Reaction Component Concentration: The concentration of key reagents, including primers, probes, MgCl₂, or dNTPs, may be too low, preventing the polymerase from functioning at its maximum capacity [15].

- Sample Quality and Purity: The presence of polymerase inhibitors in the sample is a major culprit. Common contaminants carried over from nucleic acid isolation steps include ethanol, phenol, and SDS [15]. Other inhibitors like heparin, hemoglobin, and polysaccharides can also bind to or inactivate the polymerase enzyme [15].

Causes of Efficiency Exceeding 110%

While less intuitive, efficiencies significantly above 100% are almost always indicative of underlying problems, rather than a "super-efficient" reaction.

- Presence of PCR Inhibitors: This is the main reason for inflated efficiency values. When inhibitors are present in more concentrated samples, they cause a delay in the Cq value, meaning more cycles are needed for detection than should be. As the sample is diluted, the inhibitors are also diluted, reducing their effect and causing the Cq values to shift closer to the expected values. This flattens the standard curve, resulting in a shallower slope and a calculated efficiency of over 100% [15].

- Fluorescent Background or Non-Specific Amplification: When using intercalating dyes, primer-dimers or non-specific products can generate fluorescence that does not come from the target amplicon. This inflated background signal can distort the early, baseline phase of the amplification plot, leading to an incorrect calculation of efficiency [15].

- Pipetting Errors and Inaccurate Dilution Series: Inconsistent technique when preparing the standard curve dilutions can create a curve that does not accurately reflect the true logarithmic relationship between concentration and Cq, directly impacting the slope and the resulting efficiency calculation [15].
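The inhibitor mechanism described above can be checked numerically. The sketch below is illustrative only: the Cq delays, the intercept of 38 cycles, and the helper names `fit_slope` and `efficiency_from_slope` are assumptions made for this example, not values from the cited sources. It builds an ideal 10-fold dilution series, delays the Cq of the two most concentrated points, and compares the efficiencies computed from each slope.

```python
import math

def fit_slope(xs, ys):
    """Ordinary least-squares slope of ys against xs."""
    mx = sum(xs) / len(xs)
    my = sum(ys) / len(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    return sxy / sxx

def efficiency_from_slope(slope):
    """E = 10^(-1/slope) - 1, as a fraction (1.0 == 100%)."""
    return 10 ** (-1.0 / slope) - 1.0

# Hypothetical 10-fold dilution series: log10(copies) from 6 down to 2.
log_conc = [6, 5, 4, 3, 2]

# Ideal Cq values for a 100%-efficient assay (slope = -1/log10(2)).
ideal_slope = -1.0 / math.log10(2)
intercept = 38.0  # assumed Cq at a single copy, for illustration only
ideal_cq = [intercept + ideal_slope * x for x in log_conc]

# Assumed inhibition: Cq delayed by 1.5 and 0.5 cycles in the two most
# concentrated points, no delay once the inhibitor has been diluted out.
delay = [1.5, 0.5, 0.0, 0.0, 0.0]
inhibited_cq = [cq + d for cq, d in zip(ideal_cq, delay)]

e_ideal = efficiency_from_slope(fit_slope(log_conc, ideal_cq))
e_inhibited = efficiency_from_slope(fit_slope(log_conc, inhibited_cq))
print(f"ideal: {e_ideal:.1%}, with inhibition: {e_inhibited:.1%}")
```

With these assumed delays the fitted slope becomes shallower and the calculated efficiency rises above 110%, even though the simulated chemistry never exceeds perfect doubling.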

Table 1: Summary of Common Causes for qPCR Efficiency Deviations

| Deviation Type | Primary Causes | Underlying Mechanism |
|---|---|---|
| Low Efficiency (<90%) | Suboptimal primer design [15] | Poor annealing/extension; primer-dimer formation |
| Low Efficiency (<90%) | Non-optimal reagent concentrations [15] | Limiting components for polymerase activity |
| Low Efficiency (<90%) | Presence of polymerase inhibitors [15] | Enzyme inactivation or binding |
| High Efficiency (>110%) | PCR inhibition in concentrated samples [15] | Flattened standard curve due to delayed Cq in high-concentration samples |
| High Efficiency (>110%) | Non-specific amplification or primer dimers [15] | Dye-based fluorescence not solely from target |
| High Efficiency (>110%) | Pipetting errors; inaccurate dilutions [15] | Incorrect standard curve slope |

Methodologies for Efficiency Estimation and Validation

Accurately determining qPCR efficiency is a prerequisite for validating any assay. The following experimental protocols are standard in the field.

Standard Curve Method

This is the most common approach for determining amplification efficiency.

- Serial Dilution Preparation: A sample with a known concentration of the target nucleic acid (e.g., a plasmid, PCR product, or synthetic oligonucleotide) is serially diluted. A minimum of 5 logs of template concentration is recommended for a rigorous evaluation, because a wider range makes the fitted slope, and therefore the calculated efficiency, less sensitive to error in any single dilution point [17]. Each dilution is typically run in a minimum of 3 replicates.

- qPCR Run: The dilution series is amplified using the qPCR protocol under validation.

- Data Analysis: The mean Cq value for each dilution is plotted against the logarithm of its initial concentration. A linear regression trendline is fitted to the data points. The slope of this line is used to calculate efficiency using the formula:

E = [10^(-1/slope)] - 1 [15] [4].

- Quality Assessment: The linear dynamic range and the coefficient of determination (R²) must be evaluated. An R² value >0.99 indicates a strong linear relationship and good predictability [17]. The linear range is the span of concentrations where the signal is directly proportional to the input [16].
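The data-analysis and quality-assessment steps can be sketched in a few lines. The triplicate Cq values below are hypothetical, and `linear_fit` is a helper written for this example rather than part of any instrument software. The code averages the replicates, regresses mean Cq on log10 concentration, and reports the slope, the efficiency, and R².

```python
import math

def linear_fit(xs, ys):
    """Return (slope, intercept, r_squared) from ordinary least squares."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    syy = sum((y - my) ** 2 for y in ys)
    slope = sxy / sxx
    intercept = my - slope * mx
    r_squared = (sxy * sxy) / (sxx * syy)
    return slope, intercept, r_squared

# Hypothetical triplicate Cq values keyed by input copies per reaction.
cq_by_copies = {
    1e6: [15.02, 15.10, 14.95],
    1e5: [18.41, 18.35, 18.50],
    1e4: [21.78, 21.70, 21.85],
    1e3: [25.12, 25.20, 25.05],
    1e2: [28.49, 28.60, 28.40],
}

log_conc = [math.log10(c) for c in cq_by_copies]
mean_cq = [sum(v) / len(v) for v in cq_by_copies.values()]

slope, intercept, r2 = linear_fit(log_conc, mean_cq)
efficiency = 10 ** (-1.0 / slope) - 1.0  # E = 10^(-1/slope) - 1
print(f"slope={slope:.3f}  E={efficiency:.1%}  R^2={r2:.4f}")
```

Fitting the replicate means mirrors the procedure in the text; fitting every replicate as its own point is a common alternative that keeps the replicate scatter visible in R².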

Alternative Experimental Designs

Alternative methods can improve efficiency estimation while optimizing resource use. The dilution-replicate design proposes using several dilutions of every test sample instead of identical replicates. This design inherently generates a standard curve for each sample, allowing for direct efficiency estimation without independent curves and providing the option to exclude outliers from specific dilutions [6]. The data from all samples can be fit with a constraint of slope equality to derive a globally estimated PCR efficiency, which benefits from a higher degree of freedom and can be more robust against individual Cq errors [6].
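A minimal sketch of the slope-equality constraint follows, assuming hypothetical Cq values and a pooled within-sample least-squares estimator. The function name `common_slope_fit` and the estimator are illustrative choices, not necessarily the exact procedure of [6]. Every sample keeps its own intercept, while one shared slope gives the global efficiency.

```python
def common_slope_fit(samples):
    """Fit Cq = intercept_i + slope * log10(dilution) with one shared slope.

    `samples` maps a sample name to a list of (log10_dilution, cq) pairs.
    The shared slope is the pooled within-sample least-squares estimate.
    """
    sxy_total = 0.0
    sxx_total = 0.0
    means = {}
    for name, points in samples.items():
        xs = [x for x, _ in points]
        ys = [y for _, y in points]
        mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
        means[name] = (mx, my)
        sxy_total += sum((x - mx) * (y - my) for x, y in points)
        sxx_total += sum((x - mx) ** 2 for x in xs)
    slope = sxy_total / sxx_total
    intercepts = {n: my - slope * mx for n, (mx, my) in means.items()}
    return slope, intercepts

# Hypothetical data: each sample measured once at 1x, 0.1x and 0.01x.
samples = {
    "sample_A": [(0, 20.1), (-1, 23.5), (-2, 26.8)],
    "sample_B": [(0, 24.0), (-1, 27.3), (-2, 30.7)],
    "sample_C": [(0, 18.2), (-1, 21.6), (-2, 24.9)],
}

slope, intercepts = common_slope_fit(samples)
efficiency = 10 ** (-1.0 / slope) - 1.0
print(f"global slope={slope:.3f}, efficiency={efficiency:.1%}")
for name, cq0 in intercepts.items():
    print(f"{name}: fitted Cq at undiluted input = {cq0:.2f}")
```

Because all nine reactions contribute to a single slope, one aberrant Cq shifts the efficiency estimate less than it would in a three-point curve fitted to one sample.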

A Practical Workflow for Efficiency Troubleshooting

The following diagram maps a logical pathway for diagnosing and correcting sub-optimal qPCR efficiency.

The Scientist's Toolkit: Essential Reagents and Materials

Successful qPCR assay development and validation require specific, high-quality reagents and materials. The following table details key components used in the experiments and methodologies cited in this guide.

Table 2: Key Research Reagent Solutions for qPCR Validation

| Reagent / Material | Function / Application | Example Use-Case |

|---|---|---|

| TaqMan Fast Virus 1-Step Master Mix [4] | An optimized ready-to-use mix for reverse transcription and qPCR, minimizing handling and variability. | Used in a 30-experiment study on standard curve variability for virus quantification in wastewater [4]. |

| GeneProof PathogenFree DNA Isolation Kit [19] | For efficient extraction of inhibitor-free DNA from complex clinical samples like tissue biopsies. | Used for DNA extraction from pediatric gastric biopsies prior to H. pylori detection via real-time PCR and HRM [19]. |

| Quantitative Synthetic RNA (from ATCC) [4] | Provides a stable, standardized material for generating consistent standard curves and determining assay limits. | Served as an exogenous positive control and standard for 30 independent RT-qPCR standard curve experiments for 7 different viruses [4]. |

| QIAcuity Digital PCR System [13] | A nanowell-based dPCR platform for absolute quantification without a standard curve, used for method comparison. | Employed in a comparative study with RT-qPCR for quantifying respiratory viruses (Influenza, RSV, SARS-CoV-2) [13]. |

| Seegene Allplex Respiratory Panel [13] | A multiplex Real-Time RT-PCR kit for the simultaneous detection of multiple respiratory pathogens. | Used for the initial detection and stratification of viral loads in respiratory samples in a dPCR vs. RT-qPCR comparison study [13]. |

The 90-110% efficiency benchmark is a pragmatic and scientifically grounded target that ensures the quantitative reliability of qPCR data. While the ideal of 100% efficiency is a valuable guide, real-world experimental conditions—ranging from primer design and reagent quality to the insidious presence of inhibitors—frequently cause deviations. Understanding these causes is the first step toward remediation. By employing rigorous validation methods like the standard curve, adhering to troubleshooting workflows, and utilizing appropriate reagents and controls, researchers can diagnose and correct efficiency problems. This disciplined approach bridges the gap between theoretical ideals and practical reality, ensuring that qPCR remains a robust and trustworthy tool for genetic quantification in research and diagnostics.

The quantitative polymerase chain reaction (qPCR) amplification curve is a fundamental visual representation of the DNA amplification process, charting the accumulation of fluorescence over consecutive cycles. Accurately identifying its distinct phases—ground, exponential, and plateau—is not merely an analytical exercise but a critical prerequisite for reliable gene quantification. The entire premise of qPCR quantification rests on establishing a quantitative relationship between the initial amount of target nucleic acid and the data gathered during the exponential phase [20]. Misidentification of these phases can lead to incorrect efficiency calculations and substantially skewed results, undermining the validity of any downstream analysis [21] [3]. This guide objectively compares the performance of different curve analysis methods, providing a framework for researchers to evaluate these approaches within the broader context of PCR efficiency estimation research.

Phase Characteristics and Quantitative Signatures

Each phase of the qPCR amplification curve possesses unique characteristics and quantitative signatures that researchers must recognize for accurate analysis. The following table summarizes the key attributes of each phase.

Table 1: Characteristics of qPCR Amplification Curve Phases

| Phase | Cycle Range | Fluorescence Trend | PCR Efficiency | Data Utility for Quantification |

|---|---|---|---|---|

| Ground (Linear/Baseline) | Early cycles (typically 1-15) [22] | At or near background level; little change [21] | Not applicable | Fluorescence establishes background level; quantification unreliable [23] |

| Exponential (Log-Linear) | Varies by initial template concentration | Rapid, exponential increase [20] | Constant and maximal [22] | Primary phase for reliable quantification [20] [3]; fluorescence is proportional to starting template [24] |

| Transitional | Follows exponential phase | Increase rate decreases as reagents become limiting [21] | Declining from maximum | Not suitable for quantification due to variable efficiency [21] |

| Plateau | Late cycles (e.g., 30-40) | Fluorescence stabilizes; no significant increase [20] [23] | Near zero; reaction stops [20] | Not useful for data calculation [20]; endpoint fluorescence is not representative of initial template [21] |

The progression through these phases is visually represented in the following amplification curve diagram.

Diagram 1: The four distinct phases of a typical qPCR amplification curve, showing the transition from background fluorescence through exponential growth to reaction saturation.

Experimental Protocols for Phase Identification

Standard Curve Method for Efficiency Determination

The standard curve method is a foundational approach for quantifying PCR efficiency and validating that the threshold for quantification is set within the exponential phase [20].

- Preparation of Standard Dilutions: Create a serial dilution series of a known concentration of target DNA or RNA. Typically, five to six log-fold dilutions are prepared (e.g., 1:10, 1:100, 1:1000) [23] [4].

- qPCR Run: Amplify all standard dilutions and unknown samples in the same qPCR run, ideally using triplicate technical replicates for each dilution point [23].

- Data Collection and Ct Determination: The qPCR instrument software records the fluorescence and calculates the Cycle threshold (Ct) for each reaction, which is the cycle number at which the fluorescence crosses a defined threshold [20].

- Standard Curve Plotting and Efficiency Calculation: Plot the mean Ct value of each standard dilution (y-axis) against the log of its known starting concentration (x-axis). Perform linear regression to obtain the slope of the trendline. The PCR efficiency is then calculated using the formula: Efficiency (%) = (10^(-1/slope) - 1) × 100 [3] [23] [4]. Optimal efficiency falls between 90-110% [23], corresponding to a slope of approximately -3.6 to -3.1.
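The correspondence between the efficiency window and the slope window can be verified directly from the formula. The two helper names below are illustrative.

```python
import math

def slope_for_efficiency(efficiency_pct):
    """Standard-curve slope (Ct per log10 input) implied by an efficiency in %."""
    return -1.0 / math.log10(1.0 + efficiency_pct / 100.0)

def efficiency_for_slope(slope):
    """Efficiency (%) = (10^(-1/slope) - 1) x 100."""
    return (10 ** (-1.0 / slope) - 1.0) * 100.0

for pct in (90, 100, 110):
    print(f"{pct}% efficiency -> slope {slope_for_efficiency(pct):.2f}")
```

Rounded to two decimals, this gives slopes of -3.59, -3.32, and -3.10 for 90%, 100%, and 110% efficiency, which is where the approximate -3.6 to -3.1 window comes from.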

Individual Sample Efficiency Analysis with LinRegPCR

For a method that foregoes standard curves and calculates efficiency from the amplification profile of each sample, the LinRegPCR software provides a robust protocol [24] [22].

- Baseline Correction: The software performs an automated, user-independent baseline subtraction. Unlike instrument software that often uses the noisy ground phase cycles, LinRegPCR uses an iterative approach to determine a baseline value that results in the most data points forming a straight line in a log(fluorescence) versus cycle number plot [22].

- Identification of the Exponential Phase: The start of the exponential phase is identified as the first cycle with a continuous increase in fluorescence. The end is defined by the Second Derivative Maximum (SDM), the cycle where the increase in fluorescence begins to decrease, marking the transition into the plateau phase [22].

- Efficiency Calculation per Assay: The PCR efficiency for each reaction is determined from the slope of the exponential phase (a minimum of three consecutive cycles). To reduce variability, the efficiencies of all reactions for the same target are averaged, yielding a mean PCR efficiency per assay [22] [23].

- Setting a Common Quantification Threshold: A single fluorescence threshold is set within the exponential phase of all reactions, ensuring that Cq values are directly comparable across the entire run [22].
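The core idea of deriving efficiency from a single amplification curve can be sketched as follows. This is not the LinRegPCR algorithm: the baseline is assumed to be known rather than found iteratively, the log-linear window is picked by hand, and the simulated curve (baseline 0.05, efficiency 0.92, a simple saturation term) is hypothetical. The code fits log10 of baseline-corrected fluorescence against cycle number and converts the slope to an efficiency.

```python
import math

def efficiency_from_window(fluorescence, baseline, first_cycle, last_cycle):
    """Estimate per-reaction efficiency from a log-linear window.

    Fits log10(fluorescence - baseline) against cycle number over
    [first_cycle, last_cycle] (1-based, inclusive) and returns 10^slope - 1.
    """
    cycles = list(range(first_cycle, last_cycle + 1))
    logs = [math.log10(fluorescence[c - 1] - baseline) for c in cycles]
    mc = sum(cycles) / len(cycles)
    ml = sum(logs) / len(logs)
    slope = (sum((c - mc) * (lg - ml) for c, lg in zip(cycles, logs))
             / sum((c - mc) ** 2 for c in cycles))
    return 10 ** slope - 1.0

# Simulated reaction: assumed baseline 0.05, true efficiency 0.92, with a
# simple saturation term so the curve flattens into a plateau.
baseline, true_e, f0, plateau = 0.05, 0.92, 1e-7, 10.0
curve = []
for cycle in range(1, 41):
    signal = f0 * (1 + true_e) ** cycle
    curve.append(baseline + signal / (1 + signal / plateau))

# Window chosen by hand to sit below the onset of the plateau.
estimate = efficiency_from_window(curve, baseline, first_cycle=18, last_cycle=22)
print(f"estimated efficiency: {estimate:.3f}")
```

Moving the window toward later cycles lowers the estimate, because the saturation term starts to bend the log-linear segment. That sensitivity is why window selection and baseline correction matter so much in practice.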

Comparative Performance of Analysis Methods

Different mathematical approaches for modeling amplification curves and estimating efficiency can yield varying results, directly impacting DNA quantification and gene expression analysis [3].

Table 2: Comparison of qPCR Efficiency Estimation Methods

| Method | Principle | Efficiency Range Reported | Impact on Quantification | Best Use Cases |

|---|---|---|---|---|

| Standard Curve | Linear regression of log(concentration) vs. Ct from diluted standards [3] | Typically >90% [4] | Assumes constant efficiency for all samples; potential overestimation [3] | Absolute quantification; clinical viral load testing [20] [4] |

| Exponential Model (Individual Curves) | Fitting the exponential phase of individual amplification curves [3] | 65-90% is common [21]; 50-79% in experimental data [3] | Accounts for sample-to-sample variation; more precise relative quantification [24] | High-precision gene expression studies; when standard curves are impractical [24] [22] |

| Sigmoidal Model | Modeling the entire amplification curve (baseline, exponential, plateau) [3] | 52-75% in experimental data [3] | Considers the full reaction kinetics; may better handle suboptimal reactions | Research with variable sample quality; comprehensive kinetic analysis [3] |

| 2^(-ΔΔCt) (Livak) | Assumes ideal doubling efficiency (100%) for all reactions [3] [23] | Fixed at 100% (not calculated) | Can produce significant inaccuracies if true efficiency deviates from 100% [3] | Rapid screening only when target and reference genes are validated to have near-perfect efficiency [23] |

The Scientist's Toolkit: Essential Reagent Solutions

Successful interpretation of amplification curves relies on the quality of reagents and materials used in the qPCR workflow.

Table 3: Essential Research Reagents and Materials for qPCR

| Reagent/Material | Function | Considerations for Curve Analysis |

|---|---|---|

| SYBR Green I Master Mix | DNA-binding dye that fluoresces when bound to double-stranded DNA [20] | Dye chemistry can produce higher background in baseline phase compared to probe-based methods [22]. Use of a passive reference dye (ROX) allows for normalization (Rn) to minimize well-to-well variation [23]. |

| Hydrolysis Probes (TaqMan) | Sequence-specific probes with a fluorophore and quencher; fluorescence increases upon cleavage [20] | Provides higher specificity, reducing background signal from non-specific products. Baseline fluorescence can be higher (up to 10% of final signal) due to incomplete quenching [22]. |

| Primers | Short DNA sequences that define the region to be amplified [20] | Critical for reaction efficiency. Poor design (e.g., primer-dimer formation) can distort the baseline and exponential phases, leading to inaccurate Cq and efficiency values [24]. |

| Hot-Start DNA Polymerase | Enzyme engineered to reduce activity at lower temperatures, preventing non-specific amplification [9] | Minimizes primer-dimer and other non-specific products in early cycles, resulting in a cleaner baseline and more reliable transition into the true exponential phase [9]. |

| Commercial Lysis Buffers (e.g., SIL-B) | For direct PCR from crude samples (e.g., blood) without nucleic acid purification [9] | Reduces processing time but may introduce PCR inhibitors that depress reaction efficiency, flattening the exponential phase and increasing Cq values [9]. |

The precise identification of the ground, exponential, and plateau phases in a qPCR amplification curve is a cornerstone of reliable molecular quantification. As demonstrated, the choice of analysis method—whether standard curve, exponential, or sigmoidal modeling—significantly impacts the calculated PCR efficiency and final quantitative results. Researchers must be aware that efficiency is not an abstract value but a kinetic parameter directly derived from the exponential phase of the curve. For most applications requiring high accuracy, particularly in gene expression analysis or diagnostic validation, methods that calculate efficiency from individual sample curves, such as LinRegPCR, provide superior reproducibility and minimize the influence of inter-assay variability. Ultimately, robust experimental design, combined with a critical approach to curve interpretation, ensures that qPCR data remains a gold standard in quantitative molecular biology.

The cycle quantification (Cq) value, representing the PCR cycle at which a target amplicon is first detected, serves as the foundational data point in quantitative real-time PCR (qPCR) analysis. While this metric provides a convenient starting point for quantification, relying solely on Cq values without proper context of amplification efficiency introduces substantial risks in data interpretation. The prevailing assumption that Cq values directly and consistently correlate with initial template quantity across samples and experiments often fails in practice due to numerous technical and biological variables. This analysis examines the critical limitations of Cq-centric approaches and evaluates methodological frameworks for robust efficiency estimation, providing researchers with strategies to overcome these foundational pitfalls in molecular quantification.

The Critical Role of Amplification Efficiency in qPCR Quantification

Fundamental Relationship Between Cq and Efficiency

In qPCR, the relationship between the Cq value and the initial template quantity is mathematically described by the exponential amplification equation: Q = Q₀ × (1+E)^Cq, where Q represents the product quantity at the Cq value, Q₀ is the initial template quantity, and E is the amplification efficiency (values from 0 to 1, or 0% to 100%) [25] [2]. This equation forms the basis for all subsequent quantification methods. When efficiency is 100% (E=1), the template doubles every cycle, and the relationship becomes Q = Q₀ × 2^Cq. The inverse relationship means that higher initial template concentrations result in lower Cq values, while lower concentrations yield higher Cq values [25].

The critical importance of efficiency becomes apparent when considering that a slight variation in efficiency exponentially impacts calculated template quantities over multiple cycles. For a Cq of 20, the quantities resulting from 100% versus 80% efficiency differ by approximately 8.2-fold [2]. This dramatic effect underscores why accurate efficiency determination is paramount for reliable quantification.
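The 8.2-fold figure follows from the amplification equation: for a fixed product quantity Q at the threshold, the back-calculated Q₀ is Q / (1+E)^Cq, so two assumed efficiencies give starting quantities that differ by ((1+E₁)/(1+E₂))^Cq. A short check, with `fold_error` as an illustrative helper name:

```python
def fold_error(assumed_e, true_e, cq):
    """Ratio of back-calculated starting quantities for two efficiencies."""
    return ((1 + assumed_e) / (1 + true_e)) ** cq

print(f"{fold_error(1.00, 0.80, 20):.1f}-fold")   # (2.0 / 1.8)^20
```

The same function shows how the discrepancy grows with Cq: at a Cq of 30 the ratio for the same two efficiencies exceeds 20-fold.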

Efficiency Estimation Methods and Their Limitations

The standard curve method remains the most widely accepted approach for estimating PCR efficiency [26]. This method involves creating a dilution series of a template with known relative concentrations, then plotting the Cq values against the logarithm of the concentrations. The efficiency is derived from the slope of the resulting line using the equation: E = 10^(-1/slope) - 1 [26] [2]. For a 10-fold dilution series, a slope of -3.32 corresponds to 100% efficiency [2].

However, this method contains inherent limitations. Errors in standard curve slopes are common due to inhibitors, contamination, pipetting inaccuracies, and dilution point mixing problems [2]. These errors can theoretically produce slopes suggesting greater than 100% efficiency, even though geometric efficiency cannot actually exceed 100% [2]. The visual assessment method offers an alternative approach by comparing geometric amplification slopes across assays. Parallel slopes suggest similar efficiencies, while non-parallel slopes indicate varying efficiencies [2].

Table 1: Impact of Amplification Efficiency Errors on Quantification

| Efficiency Error | Impact on Calculated Quantity | Impact After 30 Cycles |

|---|---|---|

| 5% overestimation | 53% overestimation [6] | Substantial overestimation |

| 5% underestimation | 29% underestimation [6] | Substantial underestimation |

| 20% lower efficiency | 8.2-fold difference at Cq 20 [2] | Dramatic miscalculation |

Critical Limitations of Cq-Value-Centric Analysis

Platform-Dependent Variability and Lack of Commutability

Cq values demonstrate significant variability across different qPCR platforms and laboratory setups, fundamentally limiting their direct comparability. A compelling example comes from SARS-CoV-2 testing, where studies revealed different Ct value thresholds correlated with clinical outcomes across different test platforms, even after controlling for other factors [27]. This platform-dependent variability means that Cq values obtained from one system cannot be directly compared to those from another, complicating cross-study comparisons and meta-analyses.

The interquartile ranges of Cq values between different patient groups and testing platforms can overlap by 20-50%, substantially reducing the discriminatory power for individual clinical decision-making [27]. This variability stems from multiple technical sources including different specimen collection devices, nucleic acid extraction methods, genomic targets, and RT-PCR chemistries [27]. Consequently, professional organizations like the Infectious Diseases Society of America (IDSA) and the Association for Molecular Pathology (AMP) jointly recommend against using Cq values for individual patient care decisions with qualitative tests [27].

Unaccounted Statistical Uncertainty and False Positive Rates

A fundamentally overlooked problem in conventional Cq value analysis is the systematic underestimation of statistical uncertainty when amplification efficiency estimates are treated as fixed values rather than distributions. Current efficiency-adjusted ΔΔCq methods typically disregard the uncertainty of the estimated efficiency, effectively assuming infinite precision in efficiency estimation [28]. This statistical oversight produces overly optimistic standard errors, artificially narrow confidence intervals, and deflated p-values that ultimately increase Type I error rates beyond expected significance levels [28].

The consequences of this statistical omission are particularly pronounced in validation studies where false positive control is paramount. When efficiency is determined with inadequate precision, the resulting inference on ΔΔCq values becomes dangerously anti-conservative, potentially leading to erroneous conclusions about differential expression [28]. Proper accounting of efficiency uncertainty through methods like the statistical delta method, Monte Carlo integration, or bootstrapping is necessary to maintain appropriate false positive rates, especially when efficiency estimates are based on limited dilution series or technical replicates [28].
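One of the options named above, bootstrapping, can be illustrated with a simple percentile bootstrap on a standard curve. This sketch uses hypothetical Cq values and resamples technical replicates within each dilution. It is a simplification for illustration, not the mixed-model procedure of [28].

```python
import random

def slope(xs, ys):
    """Ordinary least-squares slope of ys against xs."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    return (sum((x - mx) * (y - my) for x, y in zip(xs, ys))
            / sum((x - mx) ** 2 for x in xs))

def efficiency(xs, ys):
    """E = 10^(-1/slope) - 1 from Cq (ys) versus log10 input (xs)."""
    return 10 ** (-1.0 / slope(xs, ys)) - 1.0

# Hypothetical standard curve: log10(input) -> replicate Cq values.
curve = {
    5: [18.3, 18.5, 18.4],
    4: [21.9, 21.6, 21.8],
    3: [25.0, 25.3, 25.1],
    2: [28.6, 28.3, 28.7],
}

def bootstrap_efficiency(curve, n_boot=2000, seed=1):
    """Percentile bootstrap: resample replicates within each dilution."""
    rng = random.Random(seed)
    estimates = []
    for _ in range(n_boot):
        xs, ys = [], []
        for log_c, cqs in curve.items():
            for cq in rng.choices(cqs, k=len(cqs)):
                xs.append(log_c)
                ys.append(cq)
        estimates.append(efficiency(xs, ys))
    estimates.sort()
    return estimates[int(0.025 * n_boot)], estimates[int(0.975 * n_boot) - 1]

xs = [x for x, cqs in curve.items() for _ in cqs]
ys = [cq for cqs in curve.values() for cq in cqs]
point = efficiency(xs, ys)
low, high = bootstrap_efficiency(curve)
print(f"E = {point:.3f}, 95% bootstrap interval [{low:.3f}, {high:.3f}]")
```

Carrying the whole set of bootstrap efficiencies into the ΔΔCq calculation, rather than only the point estimate, is what widens the final confidence interval to a more honest width.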

Diagram: Statistical Uncertainty Propagation in Cq Value Analysis

Template Sequence-Specific Bias in Multi-Template PCR

In multi-template PCR applications—essential for metabarcoding, microbiome studies, and DNA data storage—sequence-specific amplification efficiencies create substantial quantification bias independent of initial template abundance. Recent research utilizing deep learning models has demonstrated that specific sequence motifs adjacent to priming sites significantly impact amplification efficiency, challenging long-standing PCR design assumptions [29].

This phenomenon manifests as progressively skewed coverage distributions during amplification, where sequences with disadvantaged amplification efficiencies become severely underrepresented [29]. Remarkably, this bias persists even when controlling for GC content, suggesting previously unrecognized sequence-specific factors influence amplification efficiency [29]. Approximately 2% of random sequences demonstrate severely compromised amplification efficiencies as low as 80% relative to the population mean, resulting in their effective disappearance from sequencing data after 60 cycles [29].

The identification of adapter-mediated self-priming as a major mechanism causing low amplification efficiency provides mechanistic insight into this bias [29]. This sequence-dependent efficiency variation fundamentally undermines the assumption that Cq values or read counts directly reflect initial template abundances in multi-template applications, necessitating computational correction or specialized experimental designs.

Experimental Design Considerations for Robust Efficiency Estimation

Optimal Standard Curve Construction

Robust efficiency estimation requires carefully constructed standard curves with appropriate replication and dilution schemes. Evidence-based recommendations indicate that precise efficiency estimation requires standard curves with at least 3-4 qPCR replicates at each concentration [26]. Furthermore, using larger transfer volumes (e.g., 2-10 µL) during serial dilution preparation reduces sampling error and enables calibration across wider dynamic ranges [26].

The ideal standard curve structure for accurate efficiency assessment consists of 7 points with a 10-fold dilution series [2]. However, practical constraints often necessitate modifications. The dilution-replicate experimental design offers an efficient alternative by performing single reactions on several dilutions for each test sample rather than multiple identical replicates [6]. This approach simultaneously estimates both PCR efficiency and initial quantity while providing built-in outlier identification through multiple dilution points [6].

Table 2: Recommended Experimental Designs for Efficiency Estimation

| Parameter | Traditional Approach | Dilution-Replicate Design | Evidence Source |

|---|---|---|---|

| Technical Replicates | 3 identical replicates per sample | Multiple dilutions per sample (no identical replicates) | [6] |

| Dilution Points | 4-5 points for 2-3 independent samples | 3+ dilution points per sample | [6] [2] |

| Minimum Replicates | 3-4 qPCR replicates per concentration | Single reactions per dilution point | [26] |

| Template Choice | Purified PCR product (risk of side reactions) | cDNA library or genomic DNA with target sequence | [26] |

| Volume Transfer | Not specified | 2-10μL to reduce sampling error | [26] |

Template Selection and Quality Considerations

Template choice significantly impacts efficiency estimates and their applicability to experimental samples. Purified PCR products, while convenient, often promote side reactions due to their short length and fail to reflect the effect of flanking sequences that may interfere with PCR through structural interactions [26]. For gene expression analysis, cDNA libraries provide long template molecules with representative secondary structures that better mimic experimental conditions [26]. For DNA quantification, genomic DNA or plasmids containing the gene of interest serve as appropriate standards, preferably after excising a fragment containing the target sequence to eliminate interfering supercoiling effects [26].

Sample quality assessment remains crucial, as inhibitors present in the sample matrix can substantially reduce apparent efficiency. Proper validation includes testing for inhibition through RNA or DNA spikes or serial dilution analysis [26]. Inhibition often manifests as deviation from linearity in concentrated samples, potentially causing unrealistic efficiency estimates exceeding 100% if these points are erroneously included in linear regression [26].

Diagram: Template Selection and Experimental Design Workflow

Advanced Methodological Approaches and Computational Solutions

Linear Mixed Models for Uncertainty Propagation

Linear mixed effects models (LMMs) provide a statistical framework that simultaneously estimates the uncertainty of both efficiency and Cq values, properly accounting for technical and sample-level variability [28]. This approach enables correct propagation of efficiency estimation error into final ΔΔCq estimates, maintaining appropriate false positive rates in hypothesis testing [28]. While the concept of using LMMs in qPCR analysis is not novel, their application combined with statistical methods like the delta method, Monte Carlo integration, or bootstrapping to handle efficiency uncertainty represents an important methodological advancement [28].

The modified ΔΔCq equation incorporating efficiency terms takes the form: Uncalibrated Quantity = e_target^(-Ct_target) / e_norm^(-Ct_norm), where e represents geometric efficiency and Ct represents the threshold cycle for the target and normalizer assays [2]. This formulation allows for differing efficiencies between target and reference genes, addressing a common source of bias in traditional ΔΔCq calculations that assume equal, perfect efficiency [2].
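A small worked example of the efficiency-corrected quantity, using hypothetical Ct values. It assumes that "geometric efficiency" is the per-cycle amplification factor, so that 2.0 corresponds to perfect doubling; the function names and the sample values are illustrative.

```python
def uncalibrated_quantity(e_target, ct_target, e_norm, ct_norm):
    """e_target^(-Ct_target) / e_norm^(-Ct_norm), with e as the per-cycle
    amplification factor (2.0 means perfect doubling; an assumption here)."""
    return e_target ** (-ct_target) / e_norm ** (-ct_norm)

def relative_expression(treated, control):
    """Ratio of uncalibrated quantities: treated sample over calibrator."""
    return uncalibrated_quantity(**treated) / uncalibrated_quantity(**control)

# Hypothetical Ct values; amplification factors of 1.95 and 2.00 are assumed.
treated = dict(e_target=1.95, ct_target=22.0, e_norm=2.00, ct_norm=18.0)
control = dict(e_target=1.95, ct_target=24.0, e_norm=2.00, ct_norm=18.0)

corrected = relative_expression(treated, control)
naive = 2.0 ** -((22.0 - 18.0) - (24.0 - 18.0))  # 2^(-ddCt), assumes E = 100%
print(f"efficiency-corrected: {corrected:.2f}-fold, 2^(-ddCt): {naive:.2f}-fold")
```

With a two-cycle difference in the target, the corrected ratio is 1.95² rather than 2², and the gap between the two calculations widens as the Ct difference grows.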

Deep Learning for Sequence-Specific Efficiency Prediction

Emerging computational approaches leverage deep learning to predict sequence-specific amplification efficiencies directly from DNA sequence information. Recent research demonstrates that one-dimensional convolutional neural networks (1D-CNNs) can accurately predict amplification efficiencies based solely on sequence features, achieving high predictive performance (AUROC: 0.88, AUPRC: 0.44) [29].

These models, trained on synthetic DNA pools with reliably annotated efficiency values, enable the identification of specific motifs associated with poor amplification and facilitate the design of inherently homogeneous amplicon libraries [29]. The CluMo (Motif Discovery via Attribution and Clustering) interpretation framework identifies problematic sequence motifs adjacent to adapter priming sites, revealing adapter-mediated self-priming as a major mechanism causing low amplification efficiency [29]. This approach reduces fourfold the sequencing depth required to recover 99% of amplicon sequences, a significant gain for multi-template PCR applications [29].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Materials for Robust qPCR Efficiency Estimation

| Reagent/Material | Function | Specifications/Quality Controls |

|---|---|---|

| TaqMan Gene Expression Assays | Pre-designed, pre-optimized primer-probe sets | >700,000 assays available; guaranteed 100% efficiency [30] [2] |

| Custom TaqMan Assay Design Tool | Web-based design of custom assays | Superior algorithm for optimal primer-probe design [30] |

| SYBR Green I Master Mix | Fluorescent DNA binding dye for detection | Requires validation of specificity via melt curve analysis [26] |

| Standard Curve Templates | Efficiency estimation reference | cDNA library, genomic DNA, or synthetic templates (gBlocks) [26] |

| DNase Treatment Reagents | gDNA removal from RNA samples | Critical for preventing false positives from contaminating gDNA [30] |

| Nucleic Acid Quality Assessment Tools | Sample quality verification | Spectrophotometric ratios (A260/A280, A260/A230); RNA integrity number [26] |

The limitations of relying solely on Cq values for qPCR quantification stem from multiple sources: efficiency variability across platforms and sequences, unaccounted statistical uncertainty, and template-specific amplification biases. Robust quantification requires moving beyond Cq-centric approaches to incorporate proper efficiency estimation, uncertainty propagation, and sequence-aware design. Methodological frameworks employing linear mixed models, advanced experimental designs, and emerging deep learning approaches offer pathways to overcome these fundamental limitations. As qPCR continues to serve critical roles in both basic research and clinical applications, acknowledging and addressing these foundational pitfalls remains essential for generating reliable, reproducible molecular quantification data.

A Practical Guide to Efficiency Estimation Methods: From Standard Curves to Single-Curve Analysis

In molecular biology, the accuracy of quantitative real-time PCR (qPCR) hinges on a critical component: the standard curve. This method serves as the gold standard for quantifying nucleic acids, providing a reliable benchmark against which the performance of both samples and assays is measured [31]. A gold standard test is defined as the best available diagnostic method under reasonable conditions, against which new tests are compared to gauge their validity [32]. In the context of a broader thesis on evaluating real-time PCR efficiency estimation methods, understanding the construction, interpretation, and limitations of standard curves is fundamental. This guide objectively compares this established methodology with emerging alternatives, such as digital PCR (dPCR), providing the experimental data and protocols researchers and drug development professionals need to make informed methodological choices.

The Fundamental Principles of the qPCR Standard Curve

The qPCR standard curve models the relationship between the quantification cycle (Cq) values and the logarithm of known, serially diluted template concentrations [8]. This curve enables the conversion of raw Cq values from samples with unknown concentrations into absolute quantities. Its two most critical parameters are the slope and the coefficient of determination (R²).

The PCR efficiency (E) is calculated from the slope of the standard curve using the formula: E = [10^(-1/slope)] - 1 [8]. An ideal reaction with 100% efficiency, where the template doubles perfectly every cycle, has a slope of -3.32. In practice, an efficiency between 90% and 110% (slope between -3.58 and -3.10) is generally considered acceptable. The R² value, ideally >0.99, indicates the linearity and reliability of the curve across the dynamic range.
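The slope-to-efficiency conversion above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function names and the example slopes are hypothetical, and the 90% to 110% window is simply the acceptance range quoted in the text.

```python
def efficiency_from_slope(slope: float) -> float:
    """Return PCR efficiency E as a fraction (1.0 means 100%)."""
    if slope >= 0:
        raise ValueError("a standard-curve slope must be negative")
    return 10 ** (-1.0 / slope) - 1.0


def is_acceptable(efficiency: float, low: float = 0.90, high: float = 1.10) -> bool:
    """Check E against the 90-110% window quoted in the text."""
    return low <= efficiency <= high


# Hypothetical slopes, chosen only for illustration.
for slope in (-3.32, -3.45, -3.90):
    e = efficiency_from_slope(slope)
    print(f"slope {slope:.2f}: E = {e:.1%}, acceptable = {is_acceptable(e)}")
```

Because the slope limits in the text are rounded to two decimals, a slope sitting exactly on a quoted limit can land marginally outside the window, so it is safer to test the computed efficiency than the slope itself.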

Building a Robust Standard Curve: Detailed Experimental Protocol

Constructing a precise standard curve requires meticulous attention to each step of the workflow, illustrated below.

Step 1: Preparation of Standard Material

The process begins with a template of known concentration, typically a synthetic oligonucleotide, purified PCR product, or genomic DNA. The initial concentration must be accurately determined using a high-integrity method like spectrophotometry (NanoDrop) or fluorometry (Qubit). This stock solution is then serially diluted, usually in 10-fold steps, to span the entire expected concentration range of the unknown samples, typically covering 5 to 6 orders of magnitude (e.g., from 10^0 to 10^5 copies/µL).
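As a planning aid, the dilution scheme can be written down before any pipetting. The sketch below assumes a hypothetical stock of 10^5 copies/µL, 10-fold steps, and a 100 µL final volume per tube; none of these values come from the cited sources.

```python
def dilution_series(stock_copies_per_ul: float, fold: float = 10, points: int = 6) -> list:
    """Concentration (copies/µL) of each standard, most concentrated first."""
    return [stock_copies_per_ul / fold ** i for i in range(points)]


def transfer_volume(final_volume_ul: float, fold: float = 10) -> float:
    """Volume carried over from the previous tube to reach final_volume_ul."""
    return final_volume_ul / fold


series = dilution_series(1e5)            # assumed stock: 1e5 copies/µL
carry_over = transfer_volume(100.0)      # assumed final volume: 100 µL
diluent = 100.0 - carry_over

for step, conc in enumerate(series):
    print(f"standard {step}: {conc:g} copies/µL")
print(f"each step: {carry_over:g} µL of previous standard + {diluent:g} µL diluent")
```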

Step 2: qPCR Amplification

Each dilution in the series is amplified by qPCR in at least 3-4 technical replicates to account for random pipetting and instrumentation errors [8]. All reactions must be performed under identical conditions: the same reaction mix, the same cycling protocol, and the same plate on the same instrument. The inclusion of a no-template control (NTC) is mandatory to confirm the absence of contamination.

Step 3: Data Analysis and Curve Fitting

The mean Cq value for each standard dilution is plotted against the logarithm of its known concentration. A regression line is fitted to these data points. The slope, y-intercept, and R² value of this line are automatically calculated by the qPCR instrument software. The PCR efficiency is then derived from the slope.
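For readers who want to check what the instrument software reports, the fit can be reproduced with ordinary least squares. The following is a minimal sketch using only the standard library; the function name and the example Cq values are invented for illustration and are not data from the cited studies.

```python
import math


def fit_standard_curve(concentrations, mean_cqs):
    """Least-squares fit of mean Cq against log10(concentration)."""
    x = [math.log10(c) for c in concentrations]
    y = list(mean_cqs)
    n = len(x)
    if n < 3 or n != len(y):
        raise ValueError("need at least three matched standard points")
    x_bar, y_bar = sum(x) / n, sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    syy = sum((yi - y_bar) ** 2 for yi in y)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return {
        "slope": slope,
        "intercept": y_bar - slope * x_bar,
        "r_squared": sxy ** 2 / (sxx * syy),
        "efficiency": 10 ** (-1.0 / slope) - 1.0,
    }


# Hypothetical mean Cq values for five 10-fold standards.
curve = fit_standard_curve([1e5, 1e4, 1e3, 1e2, 1e1],
                           [18.1, 21.5, 24.8, 28.2, 31.5])
for name, value in curve.items():
    print(f"{name}: {value:.4f}")
```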

Step 4: Quantification of Unknowns

The initial concentrations of unknown samples are determined by interpolating their Cq values on the standard curve. The reliability of this quantification is directly dependent on the quality and precision of the standard curve.
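Interpolation amounts to inverting the fitted line Cq = slope × log10(N0) + intercept. The sketch below assumes a hypothetical curve (slope -3.32, intercept 38.0) and hypothetical standard Cq values; it also flags unknowns whose Cq falls outside the range covered by the standards, since those results would be extrapolated rather than interpolated.

```python
def quantity_from_cq(cq: float, slope: float, intercept: float) -> float:
    """Invert Cq = slope * log10(N0) + intercept to recover N0."""
    return 10 ** ((cq - intercept) / slope)


def within_calibrated_range(cq: float, standard_cqs) -> bool:
    """True if the Cq lies between the lowest and highest standard Cq."""
    return min(standard_cqs) <= cq <= max(standard_cqs)


# Assumed curve parameters and standard Cq values, for illustration only.
slope, intercept = -3.32, 38.0
standard_cqs = [21.40, 24.72, 28.04, 31.36, 34.68]

for cq in (24.72, 36.50):
    n0 = quantity_from_cq(cq, slope, intercept)
    status = "interpolated" if within_calibrated_range(cq, standard_cqs) else "extrapolated"
    print(f"Cq {cq:.2f}: about {n0:.3g} copies ({status})")
```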

Key Reagents and Research Solutions

The following table details essential materials and their functions for establishing a reliable qPCR standard curve.

| Item Name | Function/Brief Explanation |
|---|---|
| High-Purity Standard Template | A DNA or RNA fragment of known sequence and concentration. Serves as the absolute reference for quantification. |
| High-Fidelity DNA Polymerase | Enzyme for amplifying the standard template to ensure minimal introduction of errors during PCR product generation. |
| qPCR Master Mix | Optimized buffer containing DNA polymerase, dNTPs, salts, and fluorescent dyes (SYBR Green) or probe systems (TaqMan). |
| Spectrophotometer/Fluorometer | Instrument for accurately quantifying the initial concentration of the standard stock solution. |
| qPCR Thermocycler | Instrument that performs thermal cycling while monitoring fluorescence in real-time to generate Cq values. |

Comparative Performance Data: qPCR vs. Digital PCR

While qPCR is the established workhorse for nucleic acid quantification, digital PCR (dPCR) has emerged as a powerful alternative that eliminates the need for a standard curve. dPCR achieves absolute quantification by partitioning a sample into thousands of individual reactions, counting the positive and negative partitions, and using Poisson statistics to determine the original copy number [13] [31]. The table below summarizes a comparative performance analysis based on recent studies.
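The Poisson step can be illustrated with the standard correction λ = -ln(1 - p), where p is the fraction of positive partitions and λ is the mean number of copies per partition. This is a minimal sketch: the partition counts and the partition volume below are hypothetical and do not describe any particular dPCR platform.

```python
import math


def copies_per_partition(positive: int, total: int) -> float:
    """Mean target copies per partition from the positive fraction."""
    if total <= 0 or not 0 <= positive < total:
        raise ValueError("need 0 <= positive < total (saturated runs are not quantifiable)")
    return -math.log(1.0 - positive / total)


def copies_per_ul(positive: int, total: int, partition_volume_ul: float) -> float:
    """Concentration in the partitioned reaction volume (copies/µL)."""
    return copies_per_partition(positive, total) / partition_volume_ul


# Hypothetical run: 20,000 partitions, 5,000 positive, 0.00085 µL each (assumed).
lam = copies_per_partition(5000, 20000)
conc = copies_per_ul(5000, 20000, 0.00085)
print(f"lambda = {lam:.4f} copies/partition, about {conc:.0f} copies/µL")
```

The correction matters because a positive partition may contain more than one target molecule; simply counting positives would underestimate the copy number as the positive fraction grows.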

Table 1: Comparative analysis of qPCR and dPCR performance characteristics.

| Parameter | Real-Time PCR (qPCR) | Digital PCR (dPCR) |
|---|---|---|
| Quantification Basis | Relative to standard curve [31] | Absolute count of molecules [31] |
| PCR Efficiency | Requires precise estimation [8] | Largely independent of efficiency [13] |
| Precision & Sensitivity | High for moderate to high abundance targets [13] | Superior for low-abundance targets and rare mutations [13] [31] |
| Susceptibility to Inhibitors | Moderate; can affect Cq values [13] | Lower; partitioning reduces inhibitor effects [13] |
| Throughput & Cost | High-throughput, cost-effective [31] | Lower throughput, higher cost per sample [13] |
| Ideal Application | Gene expression, pathogen detection (high titer) [31] | Liquid biopsy, rare allele detection, viral load (low titer) [13] [31] |

A 2025 study on respiratory virus diagnostics during the 2023-2024 tripledemic provided concrete data supporting this comparison. The study found that dPCR "demonstrated superior accuracy, particularly for high viral loads of influenza A, influenza B, and SARS-CoV-2," and showed "greater consistency and precision than Real-Time RT-PCR, especially in quantifying intermediate viral levels" [13]. This highlights dPCR's performance advantages in scenarios demanding high precision, though the study notes its routine use is still limited by higher costs and reduced automation compared to qPCR [13].

Interpreting Results and Troubleshooting a Suboptimal Curve

A precise standard curve is the foundation of reliable data. The following diagram outlines the logical process for diagnosing and resolving common issues based on the curve's parameters.

Robust efficiency estimation is not merely academic; it has direct implications for quantitative accuracy. A study on the imprecision of PCR efficiency estimation found that the uncertainty may be as large as 42.5% if a standard curve with only one qPCR replicate is used [8]. This underscores the necessity of using at least 3-4 technical replicates per concentration to reduce this uncertainty and generate a precise efficiency estimate [8]. Furthermore, the estimated PCR efficiency can vary significantly across different qPCR instruments, indicating that a standard curve generated on one platform should not be assumed to be valid on another [8].
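The effect of replication on the precision of the efficiency estimate can be explored with a small simulation. The sketch below is an illustration under stated assumptions, not a reproduction of the cited study: it assumes a true slope of -3.32, five 10-fold standards, and Gaussian Cq noise with a standard deviation of 0.3 cycles, all of which are hypothetical choices.

```python
import random
import statistics


def efficiency_spread(replicates: int, n_sim: int = 500,
                      cq_sd: float = 0.3, seed: int = 1) -> float:
    """Standard deviation of estimated E across simulated standard curves."""
    rng = random.Random(seed)
    log_conc = [5.0, 4.0, 3.0, 2.0, 1.0]       # five 10-fold standards
    true_slope, intercept = -3.32, 38.0         # assumed "true" curve
    x_bar = sum(log_conc) / len(log_conc)
    sxx = sum((x - x_bar) ** 2 for x in log_conc)
    estimates = []
    for _ in range(n_sim):
        # Average the noisy replicate Cq values at each standard point.
        mean_cqs = []
        for x in log_conc:
            reps = [true_slope * x + intercept + rng.gauss(0.0, cq_sd)
                    for _ in range(replicates)]
            mean_cqs.append(sum(reps) / replicates)
        y_bar = sum(mean_cqs) / len(mean_cqs)
        slope = sum((x - x_bar) * (y - y_bar)
                    for x, y in zip(log_conc, mean_cqs)) / sxx
        estimates.append(10 ** (-1.0 / slope) - 1.0)
    return statistics.stdev(estimates)


for n in (1, 2, 4):
    print(f"{n} replicate(s): SD of estimated E = {efficiency_spread(n):.3f}")
```

Under these assumptions the spread shrinks roughly with the square root of the number of replicates, which is the qualitative point made above; the absolute numbers depend entirely on the assumed noise level.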

The qPCR standard curve remains the gold standard method for nucleic acid quantification due to its robustness, high throughput, and cost-effectiveness. Its proper construction and interpretation, requiring careful dilution series preparation, adequate replication, and critical evaluation of efficiency and linearity, are non-negotiable for generating valid data [8]. However, the emergence of dPCR presents a compelling alternative for applications where absolute quantification without a standard curve, superior precision for low-abundance targets, and reduced susceptibility to inhibitors are paramount [13] [31]. The choice between these technologies is not a matter of one being universally better; it depends on the specific requirements of the experiment, weighing the need for throughput and cost against the demand for ultimate sensitivity and precision. As the field of molecular diagnostics advances, the principles of the standard curve continue to underpin the validation of these new technologies, ensuring that the "gold standard" evolves while maintaining its foundational integrity.

In real-time quantitative PCR (qPCR), the accuracy of quantitative results is fundamentally dependent on the amplification efficiency of the assay. The standard curve method provides a robust, widely practiced approach for determining this critical parameter. Amplification efficiency (E) is defined as the fraction of target templates that is amplified during each PCR cycle; an efficiency of 1 (or 100%) represents a perfect doubling of amplicons every cycle [2] [10].

The relationship between the standard curve's slope and PCR efficiency is mathematically codified in the formula E = 10^(-1/slope) - 1 [33] [34]. This guide objectively compares this method against alternative approaches, evaluating its performance based on precision, practical implementation, and applicability within modern molecular research and drug development.

Core Principles: The Slope-Efficiency Relationship

The Mathematical Foundation

The standard curve is generated from a dilution series of a known template quantity. The threshold cycle (Ct) values obtained from these dilutions are plotted against the logarithm of their starting concentrations. The slope of the resulting trendline is the central component for calculating efficiency [34] [35].

- Ideal Scenario: A slope of -3.32 corresponds to a PCR efficiency of 100% [2] [34]. When the template doubles every cycle, each 2-fold dilution delays the Ct by 1 cycle, so each 10-fold dilution delays it by 1/log10(2) ≈ 3.32 cycles (since log10(2) ≈ 0.301). Because Ct falls as the starting concentration rises, the slope of Ct plotted against log10(concentration) is -3.32.

- Acceptable Ranges: In practice, PCR efficiencies between 90% and 110% (approximately corresponding to slopes between -3.58 and -3.10) are generally considered acceptable for reliable quantification [34] [36]. Efficiencies outside this range can indicate issues with reaction optimization or the presence of inhibitors.
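The slope-efficiency formula follows directly from the kinetic equation given earlier in the article. The short derivation below is a sketch that reuses that equation's symbols (N_0, N_t, C_t, E) and assumes a constant efficiency up to the threshold cycle.

```latex
\begin{align*}
N_t &= N_0\,(1+E)^{C_t} \\
\log_{10} N_t &= \log_{10} N_0 + C_t \,\log_{10}(1+E) \\
C_t &= -\frac{1}{\log_{10}(1+E)}\,\log_{10} N_0 + \frac{\log_{10} N_t}{\log_{10}(1+E)} \\
\text{slope} &= -\frac{1}{\log_{10}(1+E)}
  \quad\Longrightarrow\quad E = 10^{-1/\text{slope}} - 1 \\
E = 1 &\quad\Longrightarrow\quad \text{slope} = -\frac{1}{\log_{10} 2} \approx -3.32
\end{align*}
```

The intercept term shows that the y-intercept depends on both the threshold quantity N_t and the efficiency, which is one reason curves from different instruments or threshold settings are not interchangeable.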

Performance Data and Comparison of Methods

The table below summarizes key performance characteristics and compares the standard curve method to other common approaches for efficiency estimation.

*Table 1:* Comparison of qPCR Efficiency Estimation Methods

| Method Feature | Standard Curve with Dilution Series | PCR Efficiency-Based Calculations (e.g., LinReg, DART-PCR) | Assumption of 100% Efficiency (ΔΔCq Method) |
|---|---|---|---|
| Core Principle | Relies on external dilution series to establish Ct-log(quantity) relationship [35]. | Calculates efficiency from the exponential phase of individual sample amplification curves [33]. | Assumes perfect doubling in every cycle; no experimental determination of actual efficiency [10]. |
| Quantitative Output | Provides a precise efficiency value (E) for the assay [33] [34]. | Provides an efficiency value per sample. | Does not provide an efficiency value; the amplification factor is fixed at 2 (E = 100%). |
| Key Quality Indicators | Slope (ideal: -3.32) and Coefficient of Determination (R²) (should be >0.99) [34] [16]. | Confidence interval of the calculated efficiency. | Not applicable. |
| Major Advantage | Simple, reliable, and provides routine validation of the methodology [35]. | Does not require laborious preparation of a dilution series [33]. | Extreme simplicity and low cost; no extra experiments needed. |
| Major Disadvantage | Requires additional labor, cost, and a suitable sample for the dilution series [2]. | The "black-box" nature of algorithms and sensitivity to baseline setting can affect results [10]. | Introduces significant bias if the true assay efficiency deviates from 100%, leading to inaccurate quantification [10]. |
| Impact of Inhibitors | Can be detected by a sub-optimal slope and R² value. | Can be detected by variations in per-sample efficiency. | Results are severely skewed, as the effect of inhibition is not accounted for. |
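The bias introduced by assuming 100% efficiency can be made concrete with a short calculation. The sketch below compares the fold difference implied by a Cq difference under perfect doubling with the fold difference under the assay's true efficiency; the function names and the example values (a 5-cycle difference, true efficiencies of 90% and 80%) are hypothetical.

```python
def fold_change(delta_cq: float, efficiency: float = 1.0) -> float:
    """Fold difference implied by a Cq difference at a given efficiency."""
    return (1.0 + efficiency) ** delta_cq


def relative_bias(delta_cq: float, true_efficiency: float) -> float:
    """Relative overestimate from assuming E = 100% instead of the true E."""
    assumed = fold_change(delta_cq, 1.0)
    actual = fold_change(delta_cq, true_efficiency)
    return assumed / actual - 1.0


# Hypothetical example: a 5-cycle difference between two samples.
for true_e in (1.0, 0.9, 0.8):
    bias = relative_bias(5.0, true_e)
    print(f"true E = {true_e:.0%}: assuming 100% overestimates by {bias:.1%}")
```

Because the error compounds with every cycle, the same efficiency shortfall produces a larger bias for larger Cq differences.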

Experimental Protocol: Executing a Standard Curve Experiment

A precise standard curve requires meticulous execution. The following protocol details the key steps.

Reagent Solutions and Materials

*Table 2:* Essential Research Reagents for Standard Curve Generation

| Reagent / Material | Function in the Experiment |
|---|---|
| High-Quality DNA Template | Serves as the standard for the dilution series. It should be of known, high concentration and purity (e.g., plasmid, PCR product, gDNA) [34]. |
| Validated Primer Pair | Specifically amplifies the target amplicon. Efficiency testing is a critical step in primer validation itself [34]. |
| qPCR Master Mix | Contains DNA polymerase, dNTPs, buffer, and fluorescent dye (e.g., SYBR Green) or probe, providing the core chemistry for amplification and detection [10]. |
| Nuclease-Free Water | Used for preparing serial dilutions to ensure no enzymatic degradation of the template. |
| Optically Clear Plate & Seals | Ensure consistent fluorescence detection across all wells; cap design can affect signal [35]. |
| Calibrated Precision Pipettes | Critical for achieving accurate and reproducible serial dilutions, which directly impact the slope and R² [8]. |

Step-by-Step Workflow

The following diagram illustrates the end-to-end experimental workflow for determining PCR efficiency via a standard curve.

Detailed Methodology

- Prepare Serial Dilutions: Create a series of template dilutions, typically in 2-fold, 5-fold, or 10-fold steps, covering at least 5 orders of magnitude [33] [16]. A high-quality template and accurate pipetting during this step are paramount. Using a larger transfer volume (e.g., 2-10 µL) when constructing the series can reduce sampling error [8].

- Run qPCR: Amplify each dilution in the series, including a no-template control (NTC). Running technical replicates (at least triplicates) for each dilution point is essential for assessing the repeatability and precision of the Ct values [34] [36].