A Practical Guide to Validating PCR Primer Specificity and Efficiency for Robust Molecular Assays

Abstract

This article provides a comprehensive framework for researchers and drug development professionals to validate PCR primer specificity and efficiency, which are critical for reliable gene expression analysis, diagnostic assay development, and pathogen detection. Covering foundational design principles, methodological applications, advanced troubleshooting, and rigorous validation techniques, the guide synthesizes current best practices and innovative strategies, such as allele-specific designs and pan-genome analysis. By outlining a clear path from in-silico design to experimental confirmation, this resource aims to enhance the accuracy, reproducibility, and translational potential of PCR-based methods in biomedical and clinical research.

Laying the Groundwork: Core Principles of Primer Design for Specificity

The polymerase chain reaction (PCR) stands as one of the most transformative techniques in molecular biology, enabling the amplification of specific DNA sequences with remarkable precision. At the heart of every successful PCR experiment lies the careful selection and optimization of three fundamental primer parameters: melting temperature (Tm), GC content, and length. These interconnected factors collectively govern primer specificity, annealing efficiency, and ultimately, the success of amplification across diverse applications from basic research to clinical diagnostics. Mastering the delicate balance between these parameters requires both theoretical understanding and practical optimization strategies to ensure primers bind specifically to their intended targets while avoiding secondary structures and off-target interactions.

Within the broader context of primer specificity and efficiency research, contemporary investigations continue to reveal new dimensions of primer-template interactions. Recent studies demonstrate that even meticulously designed primers can exhibit sequence-specific amplification biases in multi-template PCR environments, highlighting the need for sophisticated design approaches that account for complex molecular interactions [1]. This guide systematically examines the core primer parameters, provides structured experimental protocols for validation, and introduces advanced computational tools to streamline the primer design process for researchers and drug development professionals.

Core Primer Parameters and Their Interrelationships

Melting Temperature (Tm): The Thermal Blueprint

The melting temperature (Tm) represents the temperature at which 50% of primer-template duplexes dissociate and become single-stranded, serving as a critical determinant for establishing appropriate PCR annealing conditions. The Tm fundamentally dictates the stringency of primer binding, with optimal values typically falling between 55°C and 65°C for standard PCR applications [2] [3]. Perhaps most critically, the forward and reverse primers in a pair should exhibit closely matched Tm values, ideally within 1-2°C of each other, to ensure synchronous binding to the template during the annealing step [3] [4]. Disparities exceeding 5°C frequently result in inefficient amplification and reduced product yield as one primer may anneal less efficiently than its counterpart.

Tm calculation methods have evolved substantially, with modern algorithms incorporating nearest-neighbor thermodynamics and precise salt corrections to enhance prediction accuracy. The modified Allawi & SantaLucia's thermodynamics method, for instance, has been optimized to maximize specificity and yield through parameter adjustment based on empirical performance data [5]. When calculating Tm values using online tools, researchers must input specific reaction conditions—particularly Mg²⁺ concentration (typically 1.5-2.0 mM for Taq DNA polymerase) and monovalent ion concentration—as these significantly impact the results [2] [4]. The relationship between Tm and annealing temperature (Ta) follows a well-established principle: the optimal Ta is generally 5°C below the Tm of the primers for standard polymerases, though this relationship varies with different enzyme systems [2] [3].
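
As a rough illustration of how a Tm estimate feeds into the annealing-temperature rule above, the sketch below uses two simple textbook approximations (the Wallace rule and a basic GC-based formula). It is not the nearest-neighbor method used by the calculators cited here, and it ignores salt and Mg²⁺ effects, so its values can differ from calculator output by several degrees. The function names and the example sequence are hypothetical.

```python
def wallace_tm(primer: str) -> float:
    """Wallace rule: 2 degrees C per A/T plus 4 degrees C per G/C (rough, short oligos)."""
    seq = primer.upper()
    at = seq.count("A") + seq.count("T")
    gc = seq.count("G") + seq.count("C")
    return 2.0 * at + 4.0 * gc


def basic_gc_tm(primer: str) -> float:
    """Basic GC formula: 64.9 + 41 * (G + C - 16.4) / N (no salt correction)."""
    seq = primer.upper()
    gc = seq.count("G") + seq.count("C")
    return 64.9 + 41.0 * (gc - 16.4) / len(seq)


def starting_annealing_temp(tm_forward: float, tm_reverse: float,
                            offset: float = 5.0) -> float:
    """Starting Ta: `offset` degrees below the lower of the two primer Tm values."""
    return min(tm_forward, tm_reverse) - offset


if __name__ == "__main__":
    primer = "ATGCATGCATGCATGCATGC"  # hypothetical 20-mer with 50% GC
    print(wallace_tm(primer), round(basic_gc_tm(primer), 2))
    print(starting_annealing_temp(61.0, 59.5))
```

The two estimates disagree noticeably for the same sequence, which is one reason the text recommends calculators that accept the actual reaction conditions.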

Table 1: Tm Recommendations by Polymerase Type

| Polymerase Type | Optimal Tm Range | Recommended Annealing Temperature | Special Considerations |
|---|---|---|---|
| Standard Taq | 55-65°C | 5°C below lowest primer Tm | Buffer composition affects actual Tm |
| High-Fidelity Enzymes | 60-70°C | Varies by enzyme; often higher than Taq | Follow manufacturer guidelines |
| Platinum SuperFi, Phire | Calculator-specific | Calculated via modified thermodynamics method | Uses adjusted parameters for specificity |
| Platinum II Taq, Phusion Plus | Universal 60°C annealing | Fixed 60°C | Special buffers enable universal annealing |

GC Content: Structural Stability and Specificity

GC content represents the percentage of guanine and cytosine bases within a primer sequence and directly influences duplex stability through enhanced hydrogen bonding between GC pairs compared to AT pairs. The optimal GC content for PCR primers falls within the 40-60% range, with many sources recommending approximately 50% as ideal for maintaining an appropriate balance between binding stability and specificity [2] [3] [4]. This range provides sufficient sequence complexity while minimizing the potential for secondary structure formation that can impede hybridization.

Primers with GC content below 40% may exhibit reduced binding stability and lower Tm values, potentially compromising amplification efficiency. Conversely, primers exceeding 60% GC content are prone to stable secondary structures, including hairpins and self-dimers, and demonstrate increased non-specific binding, particularly to GC-rich regions elsewhere in the genome [2] [3]. Additionally, sequences should avoid stretches of four or more consecutive G residues, which can promote the formation of higher-order structures called G-quadruplexes that interfere with efficient amplification [2]. The distribution of GC bases also merits consideration—ideally, GC residues should be spaced relatively evenly throughout the primer rather than clustered in specific regions [4].
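
The GC-content window and the G-run rule described above are easy to screen for programmatically. The following is a minimal sketch with hypothetical function names; the 40-60% window and the four-G run length are taken from the text, and everything else is an illustrative assumption.

```python
import re


def gc_percent(primer: str) -> float:
    """Percentage of G and C bases in the primer."""
    seq = primer.upper()
    return 100.0 * (seq.count("G") + seq.count("C")) / len(seq)


def has_g_run(primer: str, run_length: int = 4) -> bool:
    """True if the primer contains `run_length` or more consecutive G residues."""
    return re.search("G{%d,}" % run_length, primer.upper()) is not None


def gc_flags(primer: str, low: float = 40.0, high: float = 60.0) -> list:
    """Return human-readable warnings; an empty list means no GC-related flags."""
    flags = []
    gc = gc_percent(primer)
    if gc < low:
        flags.append("GC content %.0f%% is below %.0f%%" % (gc, low))
    elif gc > high:
        flags.append("GC content %.0f%% is above %.0f%%" % (gc, high))
    if has_g_run(primer):
        flags.append("contains a run of four or more G residues")
    return flags
```

For example, `gc_flags("GGGGCCCCGGGGCCCCGGGG")` reports both a GC content above the window and a G-run, while a balanced 50% GC primer without G-runs returns an empty list.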

In specialized applications involving GC-rich templates, researchers may employ buffer additives to ameliorate structural challenges. DMSO (dimethyl sulfoxide), typically used at concentrations of 2-10%, effectively lowers the Tm of DNA templates and helps resolve strong secondary structures [3]. Similarly, betaine (1-2 M) homogenizes the thermodynamic stability of GC-rich and AT-rich regions, often improving yield and specificity in challenging amplifications [3].

Primer Length: Balancing Specificity and Efficiency

Primer length serves as a direct determinant of both specificity and binding efficiency, with most applications utilizing primers between 18 and 30 nucleotides [2] [4]. This range typically provides sufficient sequence information for unique targeting within complex genomes while maintaining practical synthesis efficiency and cost-effectiveness. Within this continuum, specific applications favor different optimal lengths: 18-24 bases is often ideal for standard PCR, while quantitative PCR (qPCR) frequently employs primers at the shorter end of this spectrum [3].

Shorter primers (below 18 bases) risk reduced specificity due to an increased probability of coincidental sequence matches throughout the genome, particularly in organisms with large or complex DNA content. Conversely, excessively long primers (above 30 bases) may exhibit reduced annealing efficiency and increased propensity for secondary structure formation without providing meaningful gains in specificity [3]. The 3' end of the primer demands special attention: the last five bases at the 3' terminus (often called the "core") should include G or C bases to enhance stability and ensure efficient initiation of polymerase extension, but should not be dominated by them or form stable secondary structures that would impede binding [3].
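
A simple way to inspect the 3' terminus is to count G and C bases in the last five positions. The sketch below assumes a common rule of thumb of one to three G/C bases in that window; the exact bounds are an assumption made for illustration, not a value taken from the cited sources, and the function names are hypothetical.

```python
def three_prime_gc_count(primer: str, window: int = 5) -> int:
    """Number of G/C bases within the last `window` bases at the 3' end."""
    tail = primer.upper()[-window:]
    return tail.count("G") + tail.count("C")


def three_prime_ok(primer: str, min_gc: int = 1, max_gc: int = 3) -> bool:
    """True if the 3' window holds between min_gc and max_gc G/C bases (assumed bounds)."""
    return min_gc <= three_prime_gc_count(primer) <= max_gc
```

A primer ending in `GCGCG` would fail this check (five G/C bases in the window), whereas one ending in `TAACG` would pass with two.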

Table 2: Optimal Ranges for Key Primer Parameters

| Parameter | Optimal Range | Suboptimal Conditions | Impact of Deviation |
|---|---|---|---|
| Primer Length | 18-30 bases | <18 bases: Reduced specificity; >30 bases: Reduced annealing efficiency | Increased off-target amplification or failed reactions |
| GC Content | 40-60% (ideal: 50%) | <40%: Low binding stability; >60%: Secondary structures | Poor yield, non-specific products, primer-dimer |
| Tm | 55-65°C | Outside range: Annealing issues | Failed amplification or non-specific binding |
| Tm Difference | ≤2°C between primers | >5°C difference | Asynchronous binding, reduced yield |
| 3' End Stability | GC-rich (but no G-runs) | A/T-rich or self-complementary | Failed polymerase initiation |

Experimental Protocols for Primer Validation

Annealing Temperature Optimization Using Gradient PCR

The empirical determination of optimal annealing temperature represents one of the most critical steps in PCR optimization, as theoretical calculations cannot fully account for the complexity of reaction conditions and template characteristics. The gradient PCR method provides a systematic approach to identify the ideal annealing temperature for each primer-template pair combination.

Protocol:

- Prepare a master mix containing all standard PCR components: template DNA, primers, dNTPs, reaction buffer, and DNA polymerase.

- Aliquot equal volumes of the master mix into individual PCR tubes or a multi-well plate.

- Program the thermocycler with a gradient across the annealing step, typically spanning from 6-10°C below the calculated Tm up to the extension temperature [5].

- Execute the PCR amplification using otherwise standard cycling parameters.

- Analyze the resulting products using agarose gel electrophoresis to assess amplification specificity and yield at each temperature.

Data Interpretation: The optimal annealing temperature produces a single, intense band of the expected amplicon size with minimal to no non-specific amplification. Lower temperatures within the gradient often yield multiple bands or smearing indicative of non-specific binding, while higher temperatures may result in diminished or absent amplification due to insufficient primer annealing [3]. For qPCR applications, the optimal temperature corresponds to the lowest Ct value with the highest fluorescence amplitude, indicating maximal amplification efficiency [2].
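
When planning the gradient, it can help to tabulate the nominal temperature of each block column in advance. The sketch below assumes a linear gradient across 12 columns; real instruments may not be perfectly linear and usually report the actual per-column temperatures, which should take precedence. The function name and default column count are hypothetical.

```python
def gradient_temperatures(low_c: float, high_c: float, n_columns: int = 12) -> list:
    """Evenly spaced nominal temperatures from low_c to high_c across the block."""
    if n_columns < 2:
        raise ValueError("a gradient needs at least two columns")
    step = (high_c - low_c) / (n_columns - 1)
    return [round(low_c + i * step, 1) for i in range(n_columns)]


if __name__ == "__main__":
    # Example: calculated Tm of 60 degrees C, gradient from Tm - 10 up to a 72 degrees C extension
    for column, temp in enumerate(gradient_temperatures(50.0, 72.0), start=1):
        print("column %2d: %.1f" % (column, temp))
```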

Specificity Assessment and Off-target Amplification Testing

Validating primer specificity is essential for applications requiring precise amplification, particularly in diagnostic settings and multiplex assays. Experimental verification complements in silico predictions and reveals actual performance under laboratory conditions.

Protocol:

- Perform PCR amplification using optimized annealing temperatures as determined through gradient analysis.

- Include appropriate controls: no-template control (NTC) to detect contamination, positive control with known template, and potentially a negative control with unrelated DNA.

- Analyze amplification products using high-resolution separation methods such as agarose or polyacrylamide gel electrophoresis.

- For definitive identification, excise the band of expected size and perform Sanger sequencing to confirm exact match to the target sequence.

- In qPCR applications, analyze melt curves following amplification; a single sharp peak indicates specific amplification, while multiple peaks suggest off-target products or primer-dimer formation.
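
The melt-curve criterion in the last step can be approximated by counting peaks in the negative derivative of fluorescence with respect to temperature (-dF/dT). This is a minimal sketch using finite differences on already-smoothed data; the relative height cutoff of 20% is an arbitrary assumption, and instrument software typically applies its own smoothing and peak calling.

```python
def negative_derivative(temps: list, fluor: list) -> list:
    """Finite-difference estimate of -dF/dT between consecutive readings."""
    return [-(fluor[i + 1] - fluor[i]) / (temps[i + 1] - temps[i])
            for i in range(len(temps) - 1)]


def count_melt_peaks(temps: list, fluor: list, min_rel_height: float = 0.2) -> int:
    """Count local maxima of -dF/dT that reach min_rel_height of the tallest peak."""
    d = negative_derivative(temps, fluor)
    if not d or max(d) <= 0:
        return 0  # flat or rising signal: no melting transition detected
    threshold = min_rel_height * max(d)
    peaks = 0
    for i in range(1, len(d) - 1):
        if d[i] > d[i - 1] and d[i] >= d[i + 1] and d[i] >= threshold:
            peaks += 1
    return peaks
```

A return value of 1 corresponds to the single sharp peak expected for a specific product; a value of 2 or more suggests an additional species such as primer-dimer and warrants a closer look at the raw curve.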

Recent advances in large-scale primer validation incorporate deep learning approaches to predict sequence-specific amplification efficiencies. Convolutional neural networks (CNNs) trained on synthetic DNA pools can identify motifs associated with poor amplification, achieving high predictive performance (AUROC: 0.88) [1]. These computational tools help pre-emptively flag primers with potential specificity issues before experimental validation.

Computational Tools for Primer Design and Analysis

The evolution of computational tools has dramatically transformed primer design from a manual, labor-intensive process to an efficient, scalable operation. These tools integrate algorithms for calculating thermodynamic properties, predicting secondary structures, and assessing specificity through genome-wide comparisons.

NCBI Primer-BLAST represents one of the most comprehensive publicly available tools, combining the primer design capabilities of Primer3 with the specificity assessment of BLAST to generate target-specific primers [6]. Users can specify numerous constraints including Tm range, GC content, product size, and organism for specificity checking, with the tool providing both primer sequences and in silico validation against selected databases [6].

For large-scale projects requiring parallel primer design, automated pipelines like CREPE (CREate Primers and Evaluate) offer streamlined solutions by integrating Primer3 with In-Silico PCR (ISPCR) [7]. This platform designs primers for multiple target sites and performs comprehensive specificity analysis, generating annotated output files that include off-target likelihood assessments. Experimental validation of CREPE-designed primers demonstrated successful amplification for over 90% of primers deemed acceptable by the pipeline [7].
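
The idea behind in silico PCR can be illustrated with a deliberately simplified sketch: locate exact matches of the forward primer and of the reverse complement of the reverse primer on one strand, then report the implied product sizes. This is not the algorithm used by ISPCR, CREPE, or Primer-BLAST, all of which tolerate mismatches and search both strands of whole genomes; the function names and the `max_size` default are hypothetical.

```python
_COMPLEMENT = str.maketrans("ACGT", "TGCA")


def reverse_complement(seq: str) -> str:
    """Reverse complement of a DNA sequence (A, C, G, T only)."""
    return seq.upper().translate(_COMPLEMENT)[::-1]


def find_all(haystack: str, needle: str) -> list:
    """All start positions of needle in haystack, including overlapping hits."""
    positions = []
    start = haystack.find(needle)
    while start != -1:
        positions.append(start)
        start = haystack.find(needle, start + 1)
    return positions


def in_silico_amplicons(template: str, forward: str, reverse: str,
                        max_size: int = 2000) -> list:
    """Sizes of products implied by exact primer matches on the given strand."""
    template = template.upper()
    fwd_sites = find_all(template, forward.upper())
    rev_sites = find_all(template, reverse_complement(reverse))
    sizes = []
    for f in fwd_sites:
        for r in rev_sites:
            size = r + len(reverse) - f
            if r >= f and size <= max_size:
                sizes.append(size)
    return sorted(sizes)
```

More than one entry in the returned list indicates multiple possible products on that template, which is the kind of result that would prompt a redesign before any wet-lab work.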

Commercial entities provide curated primer design tools with optimized default parameters. The IDT OligoAnalyzer and PrimerQuest tools incorporate sophisticated algorithms that account for nearest neighbor interactions and user-defined reaction conditions to calculate Tm and assess potential secondary structures [2]. Similarly, Thermo Fisher's Tm Calculator incorporates modified Allawi & SantaLucia's thermodynamics method, with parameters specifically adjusted for different DNA polymerases including Platinum SuperFi, Phusion, and Phire [5].

Table 3: Computational Tools for Primer Design and Analysis

| Tool Name | Primary Function | Key Features | Best For |
|---|---|---|---|
| Primer-BLAST | Primer design + specificity checking | Integrated BLAST search, graphical output | Ensuring target specificity |
| CREPE | Large-scale primer design | Batch processing, ISPCR integration | Targeted amplicon sequencing panels |
| IDT OligoAnalyzer | Primer analysis | Tm calculation, dimer/hairpin prediction | Quick primer quality check |
| Thermo Fisher Tm Calculator | Polymerase-specific Tm | Optimized for specific polymerases | Reaction condition optimization |
| Primer3 | Core primer design | Highly customizable parameters | Building blocks for custom pipelines |

Advanced Considerations for Specialized Applications

Quantitative PCR (qPCR) Probe Design

While sharing fundamental parameters with standard PCR primers, qPCR assays introduce additional considerations for probe design when using hydrolysis (TaqMan) chemistry. qPCR probes should possess a Tm 5-10°C higher than the accompanying primers to ensure probe hybridization prior to primer annealing, thereby guaranteeing that fluorescence measurement occurs specifically from the intended amplification [2]. For optimal fluorescence quenching and signal detection, double-quenched probes incorporating internal ZEN or TAO quenchers provide lower background compared to single-quenched designs, particularly for longer probes [2].

Probe placement should be in close proximity to either the forward or reverse primer binding site without overlapping the primer sequence itself, and a guanine base should be avoided at the 5' end as it can quench fluorophore emission [2]. As with primers, probes must be screened for self-complementarity and secondary structures that might inhibit hybridization or cleavage.
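
The probe rules in this section can be collected into a quick pre-order check. The sketch below takes Tm values that have already been computed by a calculator of choice; it compares the probe Tm to the higher of the two primer Tm values, which is an assumption about how the 5-10°C rule is applied. The function name and messages are hypothetical.

```python
def probe_flags(probe_seq: str, probe_tm: float, primer_tms: tuple,
                min_delta: float = 5.0, max_delta: float = 10.0) -> list:
    """Return warnings for a hydrolysis probe; an empty list means no flags."""
    flags = []
    delta = probe_tm - max(primer_tms)  # assumed reference: the higher primer Tm
    if delta < min_delta:
        flags.append("probe Tm is less than %.0f degrees above the primers" % min_delta)
    elif delta > max_delta:
        flags.append("probe Tm is more than %.0f degrees above the primers" % max_delta)
    if probe_seq.upper().startswith("G"):
        flags.append("5' base is G, which can quench the reporter dye")
    return flags
```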

Multiplex PCR and Variant Detection

Multiplex PCR assays, which amplify multiple targets simultaneously, demand rigorous optimization of all primer parameters to ensure balanced amplification across all targets. In allele-specific PCR for variant detection, as demonstrated in SARS-CoV-2 variant typing, primer-probe sets are designed to target specific mutations such as Ins214EPE, Del 69-70, and L452R found in Omicron and Delta variants [8]. Such assays require exceptional specificity to discriminate between closely related sequences, often achieved through careful positioning of the variant nucleotide at the 3' end of the primer where mismatch recognition is enhanced [8].
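
Positioning the variant nucleotide at the 3' end can be sketched for the simplest case, a single-base substitution on the forward strand. The helper below is hypothetical and does not handle insertions or deletions, which need a different design; the template and coordinates in the usage note are invented for illustration only.

```python
def allele_specific_primer(template: str, variant_index: int, allele: str,
                           length: int = 20) -> str:
    """Forward primer of `length` bases whose 3' terminal base is `allele`.

    variant_index is the 0-based position of the substituted base in template.
    """
    start = variant_index - length + 1
    if start < 0:
        raise ValueError("not enough upstream sequence for the requested length")
    return template.upper()[start:variant_index] + allele.upper()
```

Calling the function once with the reference base and once with the variant base yields a primer pair that differs only at the 3' terminus, so the two primers can be run in parallel reactions against the same reverse primer.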

In large-scale multiplex applications such as targeted amplicon sequencing, computational design tools must accommodate multiple constraints simultaneously. The CREPE pipeline, for instance, optimizes for targeted amplicon sequencing on 150 bp paired-end Illumina platforms by iteratively designing alternative amplicons compatible with the sequencing technology while maintaining specificity [7]. This approach successfully addresses the challenge of designing hundreds to thousands of primer pairs with compatible properties for parallel amplification.

Research Reagent Solutions

The following reagents and tools represent essential components for PCR primer design, validation, and implementation:

High-Fidelity DNA Polymerases (e.g., Pfu, KOD): Possess 3'→5' proofreading activity for high accuracy (error rates as low as 1.5×10⁻⁶ errors/bp), essential for cloning and sequencing applications [3].

Hot Start Taq DNA Polymerases: Require thermal activation, preventing non-specific amplification during reaction setup by inhibiting polymerase activity at room temperature [3].

Buffer Additives:

Magnesium Chloride (MgCl₂) Solutions: Essential polymerase cofactor; typically optimized between 1.5-4.0 mM concentration; significantly impacts enzyme activity, primer annealing, and fidelity [4].

Commercial Primer Design Platforms:

- IDT PrimerQuest Tool: Generates highly customized designs for qPCR assays and PCR primers with comprehensive analysis [2].

- Thermo Fisher Tm Calculator: Calculates polymerase-specific Tm values and recommended annealing temperatures [5].

- Eurofins Genomics Smart Default Primers: Pre-designed parameters following best-practice criteria with >80% full-length purity guarantee [9].

Specificity Verification Tools:

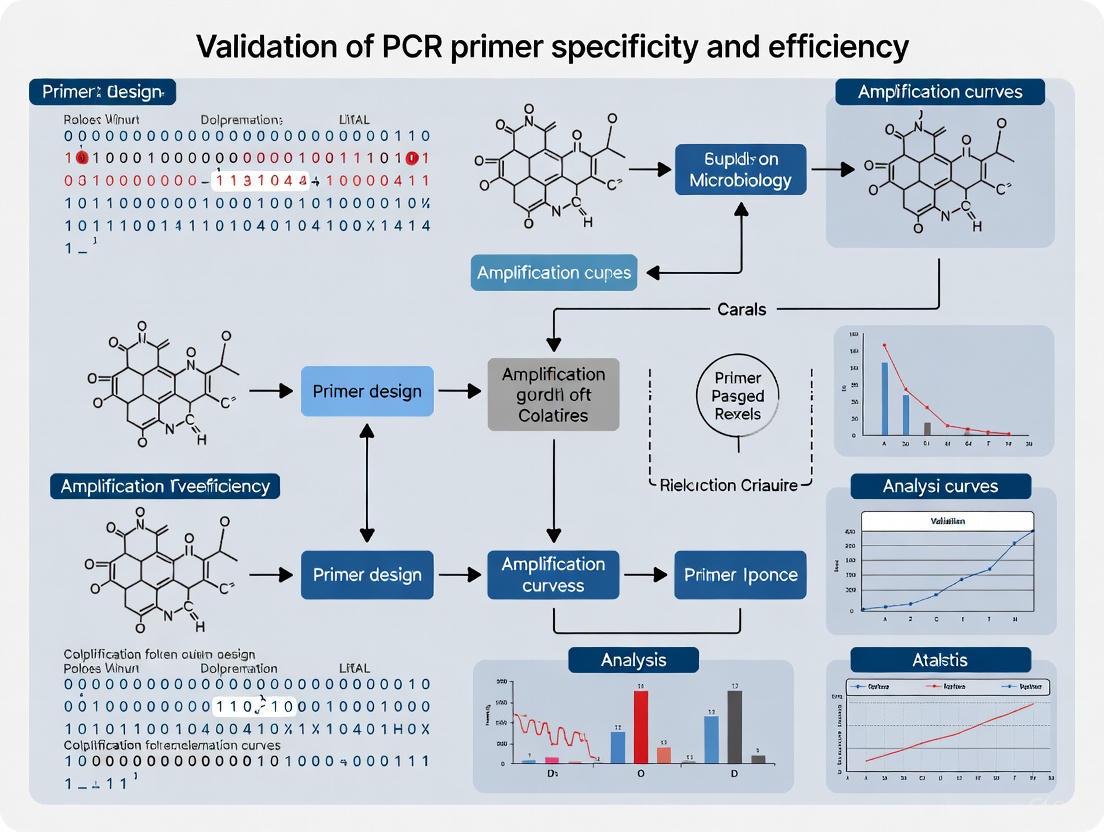

Workflow Diagram: Primer Design and Validation Workflow

This workflow illustrates the systematic process of primer design and validation, highlighting the integration of computational design with experimental optimization. The pathway begins with target sequence definition, progresses through parameter-driven primer generation, incorporates comprehensive in silico analysis, and culminates in laboratory-based validation—a process that can be significantly accelerated through automated pipelines like CREPE that combine design and specificity assessment steps [7].

The meticulous optimization of primer melting temperature, GC content, and length remains foundational to successful PCR assay development. While established guidelines provide robust starting points—primer length of 18-30 bases, GC content of 40-60%, and Tm of 55-65°C with minimal difference between primer pairs—empirical validation remains indispensable for assay robustness. The continuing evolution of computational tools, from established platforms like Primer-BLAST to emerging solutions like CREPE, has dramatically enhanced our capacity to design specific primers at scale, yet laboratory verification through gradient PCR and specificity testing remains the ultimate validation standard.

Looking forward, the integration of deep learning approaches into primer design workflows promises to address persistent challenges in amplification efficiency prediction, particularly for complex multi-template applications [1]. These advances, coupled with improved biochemical formulations and more sophisticated specificity algorithms, will further enhance our ability to design primers that meet the escalating demands of modern molecular diagnostics, synthetic biology, and large-scale genomic studies. By mastering the fundamental parameters outlined in this guide while embracing emerging computational methodologies, researchers can ensure the development of highly specific, efficient, and reliable PCR assays across diverse applications.

The exquisite specificity and sensitivity of the polymerase chain reaction (PCR) and its quantitative variant (qPCR) are fundamentally dependent on the precise binding of primers to their intended target sequences. Among the most critical factors influencing this binding is the propensity of oligonucleotides to form secondary structures, including hairpins, self-dimers, and primer-dimers. These structures are not merely theoretical concerns; they have measurable, detrimental impacts on assay performance by reducing amplification efficiency, depleting available primer concentrations, and generating false-positive signals [10]. In loop-mediated isothermal amplification (LAMP), for instance, the presence of six primers per target significantly increases the likelihood of such interactions, often manifesting as a slowly rising baseline in real-time monitoring—a clear indicator of non-specific amplification and primer sequestration [10].

The thermodynamic stability of these unintended structures dictates their probability of formation. Principles of the nearest-neighbor model, which accounts for the identity and orientation of neighboring base pairs, enable researchers to predict the stability of secondary structures through the calculation of Gibbs free energy (ΔG) [10]. A single thermodynamic parameter derived from these calculations can effectively correlate with the probability of non-specific amplification, providing a quantitative framework for assessing primer quality [10]. This guide objectively compares strategies and tools for preventing these artifacts, providing experimental data and methodologies essential for researchers, scientists, and drug development professionals committed to validating primer specificity and efficiency.

Understanding and Characterizing Secondary Structures

Types and Consequences of Secondary Structures

Secondary structures arise from intramolecular and intermolecular base pairing, leading to several distinct forms that compromise assay integrity. Hairpins (or stem-loops) occur when two regions within a single primer are complementary, causing the molecule to fold back on itself [11]. This structure can physically block the primer from annealing to its template. Self-dimers form when two identical primers hybridize to each other, while cross-dimers (or hetero-dimers) involve hybridization between forward and reverse primers [11] [2]. The most pernicious form, the primer-dimer, results when two primers hybridize and are subsequently extended by DNA polymerase, effectively amplifying the primers themselves rather than the target sequence [10] [11].

The consequences of these structures are quantifiable and severe. In LAMP assays, primers with hairpins possessing 3' complementarity can form self-amplifying structures, leading to non-specific background amplification even without template [10]. This phenomenon depletes reaction components, reduces effective primer concentration, and consequently diminishes assay sensitivity and speed [10]. Similarly, in qPCR, primer-dimer formation generates fluorescent background signal that compromises accurate quantification, particularly in low-template samples [12].
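
Because extendable dimers depend on complementarity at the 3' ends, a crude first screen is to measure the longest perfectly complementary stretch anchored at both 3' termini. The sketch below is a string-matching heuristic only; it does not compute ΔG and will miss internal or gapped pairings that thermodynamic tools detect. Function names are hypothetical.

```python
_COMPLEMENT = str.maketrans("ACGT", "TGCA")


def reverse_complement(seq: str) -> str:
    """Reverse complement of a DNA sequence (A, C, G, T only)."""
    return seq.upper().translate(_COMPLEMENT)[::-1]


def three_prime_overlap(primer_a: str, primer_b: str) -> int:
    """Longest k such that the last k bases of both primers can pair antiparallel."""
    a, b = primer_a.upper(), primer_b.upper()
    longest = 0
    for k in range(1, min(len(a), len(b)) + 1):
        # The 3' tail of a pairs with the 3' tail of b when it equals
        # the reverse complement of that tail.
        if a[-k:] == reverse_complement(b[-k:]):
            longest = k
    return longest
```

Passing the same primer twice checks for self-dimers; passing forward and reverse primers checks for cross-dimers.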

Thermodynamic Principles of Structure Formation

The formation of secondary structures is governed by the fundamental laws of thermodynamics. The nearest-neighbor model provides the most accurate method for predicting the stability of these structures by considering the sequential arrangement of base pairs rather than treating them independently [10]. This model calculates the overall change in Gibbs free energy (ΔG) for the hybridization process, where more negative ΔG values indicate greater stability of the secondary structure [10].
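
The relationship between ΔG, enthalpy, and entropy is ΔG = ΔH - TΔS, which makes the temperature dependence of structure stability explicit. The sketch below evaluates it for user-supplied ΔH and ΔS totals; the example values are hypothetical and are not nearest-neighbor parameters from any published table. The -9 kcal/mol cutoff is the one quoted in this guide.

```python
def delta_g(delta_h_kcal: float, delta_s_cal: float, temp_c: float = 37.0) -> float:
    """Gibbs free energy in kcal/mol from dH (kcal/mol) and dS (cal/(mol*K))."""
    temp_k = temp_c + 273.15
    return delta_h_kcal - temp_k * delta_s_cal / 1000.0


def exceeds_stability_threshold(dg_kcal: float, threshold: float = -9.0) -> bool:
    """True if the structure is more stable (more negative dG) than the threshold."""
    return dg_kcal < threshold


if __name__ == "__main__":
    # Hypothetical duplex: dH = -50 kcal/mol, dS = -140 cal/(mol*K)
    for temp in (25.0, 37.0, 60.0):
        print(temp, round(delta_g(-50.0, -140.0, temp), 3))
```

The same structure becomes less stable (ΔG less negative) as temperature rises, so a structure that looks problematic at room temperature may be negligible at the annealing temperature.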

For PCR applications, the melting temperature (Tm) represents the temperature at which 50% of the DNA duplex dissociates into single strands [11]. This parameter is crucial for determining the optimal annealing temperature (Ta) in PCR cycling conditions. The relationship between these parameters follows established principles: Ta should be set no more than 5°C below the Tm of the primers to ensure specific binding while preventing non-specific amplification [2]. The stability of GC base pairs, which form three hydrogen bonds compared to the two in AT pairs, disproportionately influences both Tm and ΔG, explaining why GC-rich sequences are more prone to stable secondary structures [11].

Table 1: Thermodynamic Parameters Influencing Secondary Structure Formation

| Parameter | Definition | Impact on Secondary Structures | Ideal Range |
|---|---|---|---|
| Gibbs Free Energy (ΔG) | Energy change of structure formation; more negative = more stable | ΔG < -9 kcal/mol indicates problematic stability [2] | > -9 kcal/mol for dimers/hairpins [2] |
| Melting Temperature (Tm) | Temperature where 50% of DNA duplex dissociates | Higher Tm increases risk of secondary annealing [11] | 60-64°C for primers [2] |
| GC Content | Percentage of G and C bases in sequence | GC-rich sequences form more stable structures [11] | 40-60% [11] [2] |
| Annealing Temperature (Ta) | Temperature used for primer binding in PCR | Ta too low permits mismatched annealing [2] | Within 5°C of primer Tm [2] |

Experimental Analysis of Secondary Structure Impact

Experimental Evidence from LAMP Assay Studies

Empirical investigations have quantitatively demonstrated the performance degradation caused by secondary structures. A systematic study examining RT-LAMP primer sets for dengue virus (DENV) and yellow fever virus (YFV) revealed that published primer sets frequently exhibited rising baselines when monitored in real-time with intercalating dyes—a direct consequence of amplifiable primer dimers and hairpin structures [10]. This phenomenon was observed even when using primer design software with standard screening criteria, indicating that common design protocols lack sufficient rigor for detecting these problematic interactions [10].

The experimental protocol employed in this research provides a template for systematic evaluation. Researchers utilized RT-LAMP reaction mixtures containing: 1× Isothermal amplification buffer supplemented to 8 mM Mg²⁺, 1.4 mM each dNTP, 0.8 M betaine, primers at standard concentrations (0.2 µM each F3 and B3; 1.6 µM each FIP and BIP; 0.8 µM each LoopF and LoopB), Bst 2.0 WarmStart DNA polymerase, AMV Reverse Transcriptase, and a LAMP-compatible intercalating dye (SYTO 9, SYTO 82, or SYTO 62) in a 10 µL total reaction volume [10]. Reactions were incubated at 63°C with real-time monitoring using a Bio-Rad CFX 96 instrument [10]. This methodology enabled precise quantification of non-specific amplification through fluorescence kinetics.
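
The primer concentrations listed above translate into pipetting volumes through the dilution relation C1·V1 = C2·V2. The sketch below assumes 100 µM primer stocks, which is an assumption for illustration and not stated in the cited study; the final concentrations and the 10 µL reaction volume are those given in the text.

```python
# Final primer concentrations (µM) for the RT-LAMP reaction described in the text.
FINAL_UM = {"F3": 0.2, "B3": 0.2, "FIP": 1.6, "BIP": 1.6, "LoopF": 0.8, "LoopB": 0.8}


def primer_volumes(final_um: dict, stock_um: float = 100.0,
                   reaction_ul: float = 10.0, n_reactions: int = 1) -> dict:
    """Volume (µL) of each primer stock needed for n_reactions reactions."""
    return {name: conc * reaction_ul * n_reactions / stock_um
            for name, conc in final_um.items()}


if __name__ == "__main__":
    # Per-reaction volumes are too small to pipette accurately, so scale to a mix.
    for name, volume in primer_volumes(FINAL_UM, n_reactions=50).items():
        print("%-6s %.2f µL" % (name, volume))
```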

QUASR Technique for Endpoint Detection

The QUenching of Unincorporated Amplification Signal Reporters (QUASR) technique provides particularly sensitive detection of amplification artifacts. This method utilizes a fluorescently labeled primer paired with a short, complementary quenching probe [10]. Following amplification, unincorporated labeled primers remain quenched, while those incorporated into amplicons produce bright fluorescent signals. When researchers applied this technique to DENV serotypes 1 and 3, they discovered an unexpected self-amplifying hairpin that necessitated additional primer modifications beyond those required to eliminate primer dimers [10]. This finding underscores the necessity of employing multiple detection strategies when validating primer sets, as different methods may reveal distinct artifacts.

Table 2: Experimental Methods for Detecting Secondary Structure Artifacts

| Method | Principle | Sensitivity to Artifacts | Applications |
|---|---|---|---|
| Real-time monitoring with intercalating dyes | Fluorescence increase as dsDNA accumulates | Detects primer-dimer extension products [10] | RT-LAMP, PCR, qPCR |
| QUASR (Endpoint detection) | Fluorescently labeled primers protected from quenchers when incorporated | High sensitivity to specific amplification vs. background [10] | Multiplex LAMP, endpoint detection |
| Gel electrophoresis | Size separation of amplification products | Visualizes primer-dimer bands vs. specific amplicons | Standard PCR validation |
| Thermodynamic analysis | Calculation of ΔG for potential structures | Predicts stability of hairpins and dimers before synthesis [10] | In silico primer design |

Computational Tools for Secondary Structure Analysis

Comparative Analysis of Primer Design and Validation Tools

Several sophisticated software tools are available to predict and prevent secondary structures during the primer design phase. These tools employ algorithms based on the nearest-neighbor model to calculate interaction energies and flag potentially problematic primers.

IDT OligoAnalyzer provides comprehensive analysis capabilities, including hairpin, self-dimer, and hetero-dimer predictions [13]. The tool accepts DNA or RNA sequences and allows adjustment of reaction conditions (oligo concentration, Na⁺, Mg²⁺, dNTP concentrations) to match specific experimental parameters [13]. For suspected structures, it calculates ΔG values, with recommendations that any self-dimers, hairpins, or heterodimers should have ΔG values weaker (more positive) than -9.0 kcal/mol [2].

Thermo Fisher Scientific's Multiple Primer Analyzer enables simultaneous analysis of multiple primer sequences, detecting possible primer-dimers based on user-defined parameters [14]. The tool reports Tm values using a modified nearest-neighbor method and provides information on GC content, molecular weight, and extinction coefficient [14]. The developers note that while this analyzer offers valuable preliminary guidance for selecting primer combinations, it is not conclusive, as primer-dimer formation can vary significantly under actual PCR conditions [14].

NCBI Primer-BLAST combines primer design with specificity validation, searching potential primers against selected databases to ensure they generate PCR products only on intended targets [6]. This tool incorporates specificity checking not only for forward-reverse primer pairs but also for forward-forward and reverse-reverse combinations, providing critical protection against primer-dimer formation [6].

Table 3: Comparison of Computational Tools for Secondary Structure Analysis

| Tool | Primary Function | Strengths | Limitations |
|---|---|---|---|
| IDT OligoAnalyzer [13] | Primer analysis and secondary structure prediction | Comprehensive dimer and hairpin analysis with ΔG values; BLAST integration [13] [2] | Single-sequence focus for some functions |
| Multiple Primer Analyzer [14] | Simultaneous analysis of multiple primers | Batch processing of primer sets; table format input [14] | Preliminary guidance only; requires experimental validation [14] |
| NCBI Primer-BLAST [6] | Primer design with specificity checking | Integrated specificity verification against databases [6] | Less focused on thermodynamic analysis of structures |
| mFold Tool [10] | Hairpin and secondary structure prediction | Detailed folding predictions with visualizations | Requires separate primer design |

Practical Workflow for Computational Validation

A robust computational validation workflow begins with generating candidate primers using design tools such as PrimerQuest or similar applications. These candidates should then be subjected to sequential analysis through the following steps:

- Self-complementarity check: Analyze each primer for potential to form hairpins or self-dimers using OligoAnalyzer or equivalent tools.

- Cross-dimer analysis: Screen all primer combinations (forward-forward, reverse-reverse, forward-reverse) for heterodimer formation.

- Specificity verification: Perform BLAST analysis to ensure primers are unique to the intended target sequence.

- Thermodynamic profiling: Calculate Tm values for all primers to ensure they fall within the optimal range (60-64°C) and differ by no more than 2°C between forward and reverse primers [2].

- Secondary structure reassessment: Re-analyze primers for secondary structures at the reaction temperature (typically 5°C below Tm) rather than at default room temperature.

This multi-step process significantly reduces the likelihood of secondary structure issues, though it does not eliminate the necessity for experimental validation.
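As a rough illustration of the cross-dimer and thermodynamic profiling steps, the sketch below screens a primer pair in plain Python. It is a simplification under stated assumptions: the complementarity check only counts perfect Watson-Crick matches and is a crude stand-in for the ΔG-based predictions of tools such as OligoAnalyzer, and the Tm values are expected to come from a nearest-neighbor calculator run separately. The function names are hypothetical.

```python
def reverse_complement(seq: str) -> str:
    """Return the reverse complement of a DNA sequence (A, C, G, T only)."""
    return seq.upper().translate(str.maketrans("ACGT", "TGCA"))[::-1]


def longest_complementary_run(a: str, b: str) -> int:
    """Longest contiguous stretch of `a` that could base-pair with `b`
    in antiparallel orientation (perfect matches only, no ΔG model)."""
    a, rc_b = a.upper(), reverse_complement(b)
    best = 0
    prev = [0] * (len(rc_b) + 1)
    # Longest common substring between a and the reverse complement of b.
    for i in range(1, len(a) + 1):
        cur = [0] * (len(rc_b) + 1)
        for j in range(1, len(rc_b) + 1):
            if a[i - 1] == rc_b[j - 1]:
                cur[j] = prev[j - 1] + 1
                best = max(best, cur[j])
        prev = cur
    return best


def check_pair_tm(tm_fwd: float, tm_rev: float,
                  tm_range=(60.0, 64.0), max_delta=2.0) -> list:
    """Flag Tm values outside the 60-64 °C window or differing by more than 2 °C.
    Tm values are inputs here; they are not computed by this sketch."""
    issues = []
    for name, tm in (("forward", tm_fwd), ("reverse", tm_rev)):
        if not tm_range[0] <= tm <= tm_range[1]:
            issues.append(f"{name} primer Tm {tm:.1f} is outside the target range")
    if abs(tm_fwd - tm_rev) > max_delta:
        issues.append("forward and reverse Tm differ by more than the allowed delta")
    return issues
```

Calling `longest_complementary_run(primer, primer)` gives a quick self-dimer screen, and calling it on each forward-forward, reverse-reverse, and forward-reverse combination covers the cross-dimer step. Any pair flagged here still deserves a proper thermodynamic analysis before it is discarded.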

Diagram 1: Computational primer design and validation workflow to minimize secondary structures.

Strategic Modifications to Eliminate Secondary Structures

Sequence-Based Redesign Strategies

When computational analysis identifies problematic secondary structures, several strategic modifications can eliminate these issues while maintaining target specificity. Research on LAMP primers demonstrated that minor sequence changes—sometimes involving just one or two bases—could successfully eliminate amplifiable primer dimers and hairpins while preserving or even improving assay performance [10]. These modifications typically involve:

- Bumping priming sites: Slight adjustments to primer binding locations based on thermodynamic predictions can dramatically reduce non-specific background amplification [10]. This approach maintains the overall target region while disrupting the complementarity responsible for dimer formation.

- GC content optimization: Redistribute GC residues to avoid consecutive runs while keeping overall GC content between 40% and 60% [11]. More than three G or C residues at the 3' end of a primer is particularly problematic, because it can promote non-specific binding [11].

- Sequence repositioning: When possible, moving consecutive GC residues toward the center of primers helps prevent secondary structure formation through steric hindrance [11]. This strategy maintains binding energy while reducing self-complementarity.

- Trimming or extending primers: Adjust primer length to eliminate complementary regions while keeping Tm in the optimal range. Longer primers (up to 30 bases) offer higher specificity but may hybridize more slowly, while shorter primers (<18 bases) may lack sufficient specificity [11].
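The GC-related rules above lend themselves to a simple automated screen. The following sketch is illustrative only: the 5-base window used to define "the 3' end" and the maximum tolerated G/C run of 4 are assumptions chosen for the example, not values taken from the cited sources, and the function names are hypothetical.

```python
import re


def three_prime_gc_count(primer: str, window: int = 5) -> int:
    """Count G/C residues in the last `window` bases (window size is an assumption)."""
    tail = primer.upper()[-window:]
    return sum(base in "GC" for base in tail)


def longest_gc_run(primer: str) -> int:
    """Length of the longest uninterrupted stretch of G and/or C."""
    runs = re.findall(r"[GC]+", primer.upper())
    return max((len(run) for run in runs), default=0)


def flag_gc_problems(primer: str, gc_range=(40.0, 60.0),
                     max_3prime_gc: int = 3, max_gc_run: int = 4) -> list:
    """Return human-readable flags for the GC rules described in the text."""
    seq = primer.upper()
    gc_percent = 100.0 * sum(base in "GC" for base in seq) / len(seq)
    flags = []
    if not gc_range[0] <= gc_percent <= gc_range[1]:
        flags.append(f"GC content {gc_percent:.1f}% outside 40-60%")
    if three_prime_gc_count(seq) > max_3prime_gc:
        flags.append("more than three G/C residues at the 3' end")
    if longest_gc_run(seq) > max_gc_run:
        flags.append("long consecutive G/C run")  # threshold is illustrative
    return flags
```

A primer that returns an empty list passes these three checks; a flagged primer is a candidate for the bumping, repositioning, or trimming strategies listed above.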

Experimental Optimization Techniques

Beyond sequence modifications, several experimental approaches can mitigate the impact of secondary structures:

- Temperature optimization: Setting the annealing temperature no more than 5°C below the primer Tm limits tolerance for internal single-base mismatches and partial annealing, both of which lead to nonspecific amplification [2]. For probes in qPCR applications, the Tm should be 5-10°C higher than that of the primers to ensure all target sites are saturated [2].

- Reagent composition adjustments: Mg2+ concentration significantly influences Tm, so any change to it should be accounted for when predicting secondary structure stability [2]. Additionally, additives such as betaine or DMSO can disrupt secondary structures that might form despite computational optimization [10].

- Concentration optimization: Balance primer and DNA concentrations against cycle number to minimize by-product formation while maintaining efficient amplification [11]. Higher primer concentrations increase the probability of dimer formation, particularly in later amplification cycles.

Successful prevention of secondary structures requires both computational tools and practical laboratory resources. The following table summarizes essential materials and their functions in designing and validating secondary-structure-free assays.

Table 4: Research Reagent Solutions for Secondary Structure Prevention

| Tool/Reagent | Function | Application Context |

|---|---|---|

| IDT OligoAnalyzer Tool [13] | Tm calculation, hairpin and dimer prediction | In silico primer design and optimization |

| Thermo Fisher Multiple Primer Analyzer [14] | Batch analysis of primer sets for dimer formation | Screening multiple primer combinations |

| NCBI Primer-BLAST [6] | Primer design with specificity verification | Ensuring target-specific amplification |

| Bst 2.0 WarmStart DNA Polymerase [10] | Isothermal amplification with reduced non-specific activity | LAMP/RT-LAMP assays |

| SYTO intercalating dyes [10] | Real-time monitoring of DNA amplification | Detection of non-specific amplification in real-time |

| Double-quenched probes [2] | Fluorescent detection with reduced background | qPCR with enhanced signal-to-noise ratios |

| Betaine [10] | Additive that disrupts secondary structures | PCR/LAMP with GC-rich templates |

Preventing secondary structures in PCR and related amplification technologies requires a comprehensive, integrated approach combining sophisticated computational design with rigorous experimental validation. The evidence clearly demonstrates that even minor oversights in primer design can lead to quantitatively measurable performance degradation, including reduced sensitivity, specificity, and quantitative accuracy [10] [12]. By employing the strategic modifications, analytical tools, and validation protocols outlined in this guide, researchers can significantly improve assay reliability and reproducibility.

The most successful outcomes invariably result from iterative optimization—beginning with computational predictions informed by thermodynamic principles, followed by systematic experimental validation under conditions that mirror actual application environments. As molecular diagnostics continues to expand into point-of-care testing, environmental monitoring, and food safety applications [11] [15], the imperative for robust, artifact-free primer designs will only intensify. Adherence to these structured approaches for avoiding secondary structures will ensure that PCR-based methods continue to deliver on their promise of exquisite sensitivity and specificity across diverse application domains.

In molecular biology and diagnostic assay development, the accuracy of polymerase chain reaction (PCR) experiments is fundamentally dependent on primer specificity and correct genomic localization. Non-specific amplification can lead to false positives in gene expression analysis, compromised diagnostic results, and invalid experimental conclusions [16]. The challenge of designing primers that exclusively amplify the intended target requires robust computational methods that integrate local alignment algorithms with detailed knowledge of genomic structure. This guide objectively compares the performance of three predominant in silico approaches for primer design and validation: manual BLAST analysis, the integrated tool Primer-BLAST, and the specialized RT-qPCR tool ExonSurfer. By providing a detailed comparison of their methodologies, performance metrics, and experimental applications, this review serves as a strategic resource for researchers selecting appropriate tools for validating PCR primer specificity and efficiency.

Fundamental Principles of Primer Specificity and Localization

The Critical Role of Specificity in Amplification

Primer specificity ensures that amplification occurs only at the intended genomic locus. Even minor mis-priming events can significantly impact PCR efficiency, particularly in quantitative applications where amplification bias can skew results dramatically [16]. In multiplex PCR setups, primers with off-target binding sites can lead to both false positives and false negatives, compromising experimental integrity. The exponential nature of PCR means that even a slight amplification advantage of one template over others results in a drastic reduction in the relative abundance of the disadvantaged template after just a few cycles [1].

Genomic Localization Considerations

The genomic context of primer binding sites profoundly impacts amplification success, requiring different strategies based on template source:

- Genomic DNA Amplification: For amplification from genomic DNA (gDNA), primers should avoid repetitive sequences that cause binding at multiple locations. The distance between primer pairs should be appropriate for the polymerase enzyme used, typically under 1000 nucleotides for standard polymerases [16].

- cDNA Amplification: When amplifying from cDNA (reverse-transcribed mRNA), designing primers to span exon-exon junctions ensures amplification only from spliced transcripts and not contaminating gDNA. This approach provides transcript-specific detection and enables identification of alternative splice variants [16] [17].

- Intron Avoidance: For gDNA templates, primers should not flank large intronic regions, as most polymerases cannot efficiently amplify across extremely long introns (some exceeding 100,000 nucleotides) [16].

Experimental Approaches and Methodologies

Manual BLAST Analysis for Primer Validation

Protocol for Specificity Checking

Manual BLAST analysis provides a flexible approach for validating pre-designed primers. The following optimized protocol ensures maximum sensitivity for detecting potential off-target binding:

- Select Appropriate BLAST Task: Use `-task blastn-short` instead of standard nucleotide BLAST. This decreases the word size parameter to 7, dramatically increasing sensitivity for short sequences like primers [16].

- Disable Filtering: Specify `-dust no -soft_masking false` to prevent BLAST from ignoring repetitive or low-complexity regions that might represent potential binding sites [16].

- Adjust Scoring Parameters: Modify mismatch penalties to reflect PCR constraints: `-penalty -3 -reward 1 -gapopen 5 -gapextend 2`. This scoring stringency better reflects the impact of mismatches on primer annealing [16].

- Database Selection: Restrict searches to relevant organism-specific databases rather than entire repositories like "all of RefSeq." Smaller databases yield stronger E-values and faster results [16].

- Hit Analysis: Examine alignment coordinates and orientations. For potential hits, check that primer pairs are correctly oriented (forward vs. reverse) and within reasonable amplification distance (typically <1000 bp) [16].
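The protocol above can be scripted. The sketch below assembles the `blastn` argument list from the flags named in the protocol and then scans tabular output for hit pairs that could form a product. It assumes the standard 12-column tabular format (`-outfmt 6`), in which a minus-strand hit is reported with a subject start greater than its subject end. File names, function names, and the 1000 bp cutoff default are placeholders, and the pairing logic deliberately ignores mismatch position and 3'-end stability, so it over-reports rather than under-reports.

```python
from collections import defaultdict


def blastn_short_command(query_fasta: str, database: str, out_path: str) -> list:
    """Build an argument list for blastn using the settings from the protocol."""
    return [
        "blastn", "-task", "blastn-short",
        "-query", query_fasta, "-db", database,
        "-dust", "no", "-soft_masking", "false",
        "-penalty", "-3", "-reward", "1", "-gapopen", "5", "-gapextend", "2",
        "-outfmt", "6", "-out", out_path,
    ]


def parse_outfmt6(lines) -> list:
    """Parse default tabular BLAST output; keep only the fields needed here."""
    hits = []
    for line in lines:
        if not line.strip() or line.startswith("#"):
            continue
        f = line.rstrip("\n").split("\t")
        hits.append({"query": f[0], "subject": f[1],
                     "sstart": int(f[8]), "send": int(f[9])})
    return hits


def candidate_amplicons(hits, max_size: int = 1000) -> list:
    """Pair each plus-strand hit with downstream minus-strand hits on the same
    subject and report products no longer than max_size."""
    by_subject = defaultdict(list)
    for hit in hits:
        by_subject[hit["subject"]].append(hit)
    products = []
    for subject, group in by_subject.items():
        plus = [h for h in group if h["sstart"] < h["send"]]
        minus = [h for h in group if h["sstart"] > h["send"]]
        for p in plus:
            for m in minus:
                size = m["sstart"] - p["sstart"] + 1
                if 0 < size <= max_size:
                    products.append((subject, p["query"], m["query"], size))
    return products
```

The command list can be passed to `subprocess.run(..., check=True)` on a machine with BLAST+ installed. Because any plus-strand hit is paired with any minus-strand hit, products primed by a single primer in both orientations are reported alongside conventional forward-reverse products.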

Advanced Concatenation Method

For enhanced specificity checking, concatenate both primers with a spacer sequence (e.g., "NNN") and BLAST this combined sequence. This approach identifies genomic regions where both primers might bind in proximity and correct orientation to generate off-target amplicons, which is particularly useful for detecting recent gene duplicates [16].

Primer-BLAST Integrated Design and Validation

Workflow and Specificity Assurance

Primer-BLAST integrates primer design with specificity validation in a single workflow [18]:

- Template Analysis: The submitted template sequence undergoes MegaBLAST to identify regions with high similarity to unintended targets in the specified database.

- Primer Generation: Primer3 generates candidate primer pairs, prioritizing placement in unique template regions when possible [18].

- Specificity Checking: A combined BLAST and Needleman-Wunsch global alignment algorithm identifies potential amplification targets for each primer pair [18].

- Filtering: Primer pairs are deemed specific only if they have no amplicons on unintended targets within user-defined specificity thresholds.

Figure 1: Primer-BLAST integrated workflow for specific primer design.

Advanced Genomic Localization Features

Primer-BLAST supports sophisticated genomic localization options:

- Exon-Exon Junction Spanning: Primers can be designed to span exon-exon junctions, with control over the minimal number of bases that must anneal to each exon (ensuring binding to the junction region rather than individual exons) [6].

- Intron Spanning: The tool can find primer pairs separated by at least one intron on corresponding genomic DNA, helping distinguish between mRNA and gDNA amplification [6].

- SNP Avoidance: Primers can be automatically designed to avoid known single nucleotide polymorphism sites [18].

ExonSurfer for RT-qPCR Applications

Specialized Workflow for Expression Analysis

ExonSurfer provides a streamlined, specialized workflow for RT-qPCR primer design [17]:

- Junction Selection: Automatically identifies optimal exon junctions based on user-selected transcript isoforms, prioritizing junctions present in all targeted transcripts but absent in non-targeted isoforms.

- Primer Design: Uses primer3-py to design primers with 3' ends placed at exon junctions.

- Two-Stage Specificity Filtering:

- First, aligns primers against mRNA databases to detect cross-hybridization with non-targeted transcripts.

- Second, performs genomic DNA alignment to identify potential gDNA amplification.

- SNP Avoidance: For human genes, automatically masks common SNPs (with optional user disable) during primer design.

Figure 2: ExonSurfer's specialized workflow for RT-qPCR primer design.

Experimental Validation

ExonSurfer's performance has been experimentally validated through designing primers for 26 targets across various gene sizes, expression levels, and transcript variants. Most designed primers accurately amplified their targets without further optimization, confirming the effectiveness of its specificity filters and junction placement strategy [17].

Performance Comparison and Experimental Data

Quantitative Tool Comparison

Table 1: Comprehensive comparison of primer design and validation approaches

| Feature | Manual BLAST | Primer-BLAST | ExonSurfer |

|---|---|---|---|

| Primary Function | Primer validation | Primer design + validation | Specialized RT-qPCR design |

| Specificity Algorithm | BLASTN with optimized parameters | BLAST + Global alignment | Two-step BLAST (mRNA & genomic) |

| Junction Spanning | Manual coordination required | Automated placement | Automated optimal junction selection |

| SNP Avoidance | Manual inspection | Supported | Automated for human genes |

| Organism Support | Virtually unlimited | Comprehensive (NCBI databases) | 7 species (H. sapiens, M. musculus, etc.) |

| Specificity Metrics | E-value, bit score, alignment length | Mismatch thresholds, 3' end constraints | Off-target annotation, isoform discrimination |

| Experimental Validation | User-dependent | Literature documentation [18] | 26 targets tested [17] |

| Best Application | Quick validation, atypical organisms | General purpose specific primer design | High-throughput RT-qPCR studies |

Efficiency and Specificity Performance Data

Recent methodological advances highlight the critical importance of proper primer design for amplification efficiency:

- Amplification Bias: In multi-template PCR, a template with just 5% lower amplification efficiency than average will be underrepresented by approximately half after only 12 PCR cycles, demonstrating how minor efficiency differences create significant quantitative bias [1].

- Sequence-Specific Effects: Deep learning models trained on synthetic DNA pools have identified that sequence-specific amplification efficiencies vary significantly, with approximately 2% of sequences showing very poor amplification efficiency (as low as 80% relative to population mean) regardless of GC content [1].

- Exon Junction Impact: Primers spanning exon-exon junctions effectively prevent gDNA amplification, with ExonSurfer demonstrating successful target-specific amplification for most of 26 tested genes without optimization [17].
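The amplification-bias figure in the first point above follows from simple compounding. The sketch below assumes that "5% lower efficiency" means the template's per-cycle amplification factor is 0.95 times that of the pool average; under that reading the template's share relative to the average shrinks by the same factor every cycle. The function names are illustrative.

```python
import math


def relative_abundance(relative_efficiency: float, cycles: int) -> float:
    """Abundance of a template relative to an average template after `cycles`
    cycles, given its per-cycle amplification factor relative to the average."""
    return relative_efficiency ** cycles


def cycles_to_fraction(relative_efficiency: float, fraction: float) -> float:
    """Number of cycles after which relative abundance drops to `fraction`."""
    return math.log(fraction) / math.log(relative_efficiency)


if __name__ == "__main__":
    # 0.95 ** 12 is roughly 0.54, i.e. close to a two-fold underrepresentation.
    print(round(relative_abundance(0.95, 12), 2))
    # Cycles needed to reach exactly half the average abundance.
    print(round(cycles_to_fraction(0.95, 0.5), 1))
```

The same function shows why the poorly amplifying sequences at 80% relative efficiency matter so much: their relative abundance falls below one tenth of the average within the same 12 cycles.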

Research Reagent Solutions

Table 2: Essential research reagents and resources for primer specificity validation

| Resource | Function | Application Context |

|---|---|---|

| NCBI Primer-BLAST | Integrated specific primer design | General PCR primer design with specificity assurance |

| ExonSurfer | Specialized RT-qPCR primer design | Gene expression analysis with isoform discrimination |

| BLAST+ Suite | Local sequence alignment | Custom validation pipelines and automated analysis |

| SequenceServer | BLAST interface with primer options | Visualization and interpretation of primer hits |

| primer3-py | Python primer design library | Custom primer design applications |

| dbSNP Database | SNP repository | Avoiding polymorphisms in primer binding sites |

| RefSeq Database | Curated sequence collection | Specificity checking against high-quality references |

Selecting the appropriate approach for primer design and validation requires careful consideration of experimental goals and genomic context. Manual BLAST analysis provides maximum flexibility for researcher-directed validation but demands more expertise and time. Primer-BLAST offers the most comprehensive solution for general PCR applications, integrating robust specificity checking with flexible genomic localization options. ExonSurfer excels specifically for RT-qPCR studies where transcript isoform discrimination and gDNA exclusion are paramount. Recent research confirms that sequence-specific effects beyond traditional design parameters significantly impact amplification efficiency, emphasizing the continued importance of sophisticated in silico validation tools for reliable molecular diagnostics and research. As PCR technologies continue evolving toward digital, microfluidic, and point-of-care applications [19], the fundamental requirement for precise primer specificity and correct genomic localization remains constant across platforms.

In molecular biology and diagnostic research, the polymerase chain reaction (PCR) serves as a fundamental technique for amplifying specific DNA sequences. The accuracy and efficiency of PCR amplification are profoundly influenced by the careful selection of genetic targets, which ranges from highly conserved genes common across broad taxonomic groups to strain-specific markers that enable fine-scale differentiation. This selection process directly impacts key performance metrics including specificity, sensitivity, and reproducibility across diverse applications from clinical diagnostics to environmental monitoring.

The expanding availability of genomic data, coupled with advanced bioinformatic tools, has revolutionized our approach to target identification. Researchers can now leverage pangenome analyses and deep learning models to systematically identify optimal genetic markers tailored to their specific detection or differentiation needs. This guide provides a comprehensive comparison of target selection strategies, supported by experimental data and detailed methodologies, to inform evidence-based decision-making for assay development.

Categories of Genetic Targets: From Universal to Specific

Genetic targets for PCR assays can be categorized along a spectrum of specificity, each with distinct applications and performance characteristics. The table below summarizes the primary categories and their defining features.

Table 1: Categories of Genetic Targets for PCR Assays

| Target Category | Definition | Primary Applications | Advantages | Limitations |

|---|---|---|---|---|

| Conserved Genes | Genes with high sequence similarity across species or genera | Species identification, phylogenetic studies | Broad detection capability; well-characterized | May lack resolution for closely related taxa |

| Strain-Specific Markers | Unique sequences identifying specific strains within a species | Outbreak investigation, strain tracking | High discriminatory power; precise identification | Requires extensive genomic knowledge |

| Antibiotic Resistance Genes (ARGs) | Genes conferring resistance to antimicrobial agents | Antimicrobial resistance monitoring, clinical diagnostics | Direct functional relevance; public health importance | Horizontal gene transfer can complicate attribution |

| Structural Variants | Insertions, deletions, or rearrangements in genomic architecture | Genetic mapping, functional gene validation | Often linked to phenotypic differences; stable inheritance | Can be challenging to detect with standard PCR |

Comparative Analysis of Target Selection Strategies

Conserved Gene Targets: Balancing Breadth and Specificity

Conserved genes, particularly those encoding essential cellular functions, have traditionally served as reliable targets for pathogen detection and identification. However, their performance varies significantly depending on the specific gene selected and the microbial group being targeted.

Table 2: Performance Comparison of Conserved Gene Targets in Diagnostic Assays

| Target Gene | Organism | Sensitivity | Specificity | Comparative Findings | Reference |

|---|---|---|---|---|---|

| sodC | Neisseria meningitidis | 100% (49/49 culture-positive isolates) | High (specific to N. meningitidis) | Outperformed ctrA; detected 76.6% vs. 46.7% in carriage samples | [20] |

| ctrA | Neisseria meningitidis | 67.3% (33/49 culture-positive isolates) | Moderate (absent in 16% of carriage isolates) | Produced false negatives due to sequence variations or absence | [20] |

| 16S rRNA | Various bacteria | Variable | Low for closely related species | Multiple copies in genome; overestimates diversity | [21] |

| rpoB | Various bacteria | High | Improved over 16S rRNA | Single copy; better discriminatory power | [21] |

The selection of sodC (Cu-Zn superoxide dismutase gene) over ctrA (capsule transport A gene) for N. meningitidis detection exemplifies how target choice dramatically impacts assay performance. The sodC gene is uniquely suited for diagnostic applications because it is specific to N. meningitidis and not found in other Neisseria species, with no reports of meningococci lacking this gene [20]. In contrast, the ctrA gene is absent in approximately 16% or more of carriage isolates, leading to false-negative results that compromise detection accuracy [20].

Strain-Specific Markers: Precision Through Pangenome Analysis

Advancements in genomic sequencing and bioinformatics have enabled the identification of strain-specific markers through pangenome analysis. This approach involves comparing entire genomic repertoires of multiple strains within a species to identify unique genetic signatures.

Table 3: Strain-Specific Markers Identified Through Pangenome Analysis

| Organism | Marker Type | Identified Markers | Specificity | Application | Reference |

|---|---|---|---|---|---|

| Campylobacter species | Gene-specific | petB, clpX, carB (C. coli); hypothetical proteins (C. jejuni, C. fetus) | ≥90% with minimal cross-reactivity | Species and subspecies differentiation | [22] |

| Erwinia amylovora | Simple Sequence Repeats (SSRs) | 27 SSRs within 26 single-copy genes | High for strain differentiation | Tracking fire blight pathogen strains | [21] |

| Pseudomonas aeruginosa | Hypothetical protein gene | WP_003109295.1 | High across 816 genomes | Food safety monitoring | [15] |

The workflow for identifying strain-specific markers typically begins with pangenome analysis to characterize core and accessory genomes across multiple strains. For Campylobacter species, researchers analyzed 105 high-quality genomes representing 33 species and 9 subspecies using the Roary ILP Bacterial Annotation Pipeline. This approach identified ribosomal genes (rpsL, rpsJ, rpsS, rpmA, etc.) as consistent components of the core genome, while accessory genes showed marked variability suitable for species differentiation [22].

The following diagram illustrates the comprehensive workflow for developing strain-specific markers from genomic data to validated assays:

Antibiotic Resistance Gene Targets: Addressing Sequence Diversity

The accurate detection of antibiotic resistance genes (ARGs) in environmental samples presents unique challenges due to the substantial sequence diversity within these genes. Conventional primer sets often fail to capture this diversity, leading to underestimation of ARG abundance.

A recent study addressing this challenge developed new primer sets for eleven clinically relevant ARGs (aadA, aadB, ampC, blaSHV, blaTEM, dfrA1, ermB, fosA, mecA, qnrS, and tetA(A)). The innovative design strategy involved retrieving all sequences with an orthology grade >70% for each target ARG from the KEGG database, followed by comprehensive multiple sequence alignments using the MAFFT algorithm [23].

This approach resulted in primer sets with significantly improved coverage of ARG diversity compared to conventional designs. When validated on environmental samples (activated sludge, river sediment, and agricultural soils), the optimized qPCR assays demonstrated high amplification efficiency (>90%), good linearity of standard curves (R²>0.980), and excellent reproducibility across experiments [23].
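For readers who want to reproduce the efficiency and linearity figures from their own dilution series, the usual calculation fits quantification cycle (Cq) against log10 of template quantity and converts the slope to efficiency with E = 10^(-1/slope) - 1. The sketch below is a minimal pure-Python version of that standard calculation, not the cited study's analysis code; the function name and input layout are assumptions.

```python
def standard_curve(log10_quantities, cq_values):
    """Least-squares fit of Cq against log10(quantity).

    Returns (slope, intercept, r_squared, efficiency), where efficiency is
    10 ** (-1 / slope) - 1 (1.0 corresponds to 100%, perfect doubling)."""
    n = len(log10_quantities)
    mean_x = sum(log10_quantities) / n
    mean_y = sum(cq_values) / n
    sxx = sum((x - mean_x) ** 2 for x in log10_quantities)
    syy = sum((y - mean_y) ** 2 for y in cq_values)
    sxy = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(log10_quantities, cq_values))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r_squared = (sxy ** 2) / (sxx * syy)
    efficiency = 10 ** (-1.0 / slope) - 1.0
    return slope, intercept, r_squared, efficiency
```

A slope near -3.32 corresponds to about 100% efficiency, so the >90% and R²>0.980 criteria reported above can be checked directly against the third and fourth return values.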

Experimental Protocols for Target Validation

Protocol 1: Pangenome-Based Marker Discovery

Application: Identification of species-specific genetic markers for bacterial differentiation [22]

Materials and Reagents:

- High-quality genome sequences from public databases (NCBI)

- CheckM software for quality assessment

- Roary ILP Bacterial Annotation Pipeline for pangenome analysis

- Geneious or similar software for sequence alignment and primer design

Methodology:

- Data Curation: Collect complete reference genomes for target species and related taxa.

- Quality Filtering: Apply CheckM with thresholds of ≥90% completeness, ≤5% heterogeneity, and ≤5% contamination.

- Pangenome Construction: Use Roary pipeline to identify core and accessory genes across all genomes.

- Marker Selection: Identify genes unique to target species with minimal cross-reactivity to non-target organisms.

- Primer Design: Design primers targeting conserved regions within species-specific markers.

- In Silico Validation: Verify primer specificity against full genome databases.

Validation: The identified markers for Campylobacter species (petB, clpX, carB for C. coli) demonstrated at least 90% specificity with minimal cross-reactivity [22].
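The quality-filtering and marker-selection steps of this protocol reduce to a threshold test and a set comparison. The sketch below illustrates both on plain Python data structures. It assumes the quality statistics and gene presence/absence information have already been exported from CheckM and the pangenome pipeline into dictionaries; the dictionary keys, function names, and default fractions are hypothetical and do not reflect either tool's output format.

```python
def passes_quality(stats: dict, min_completeness: float = 90.0,
                   max_contamination: float = 5.0,
                   max_heterogeneity: float = 5.0) -> bool:
    """Apply the completeness, contamination, and heterogeneity thresholds."""
    return (stats["completeness"] >= min_completeness
            and stats["contamination"] <= max_contamination
            and stats["heterogeneity"] <= max_heterogeneity)


def species_specific_genes(presence: dict, all_genomes: set, target_genomes: set,
                           min_target_fraction: float = 1.0,
                           max_offtarget_fraction: float = 0.0) -> list:
    """Select genes found in most target genomes and few non-target genomes.

    `presence` maps each gene name to the set of genome IDs carrying it."""
    non_targets = all_genomes - target_genomes
    selected = []
    for gene, genomes in presence.items():
        target_frac = len(genomes & target_genomes) / len(target_genomes)
        off_frac = (len(genomes & non_targets) / len(non_targets)
                    if non_targets else 0.0)
        if target_frac >= min_target_fraction and off_frac <= max_offtarget_fraction:
            selected.append(gene)
    return sorted(selected)
```

Relaxing `min_target_fraction` to 0.9 would mirror a "present in at least 90% of target genomes" criterion, at the cost of admitting markers that some strains lack.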

Protocol 2: SSR Marker Identification for Strain Typing

Application: Development of strain-specific simple sequence repeats (SSRs) for bacterial pathogen typing [21]

Materials and Reagents:

- Reference genome sequence

- BLASTX for homology searches

- MISA (MicroSatellite identification tool) for SSR detection

- PCR reagents and capillary electrophoresis equipment

Methodology:

- Single-Copy Gene Identification: Perform BLASTX searches against whole protein databases to identify single-copy genes.

- SSR Screening: Use MISA to identify perfect SSRs within single-copy genes.

- Validation Across Strains: Extract SSR-containing regions from multiple strains to identify length polymorphisms.

- Primer Design: Design primers flanking polymorphic SSR regions.

- Experimental Validation: Test primers across strain collections to confirm discriminatory power.

Validation: In Erwinia amylovora, this approach identified 27 SSRs within 26 single-copy genes from strain CFBP 1430, with five genes showing distinguishable tandem repeat numbers across strains [21].
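To make the SSR screening step concrete, the sketch below finds perfect microsatellites with a regular expression. It is not MISA itself, only a minimal stand-in that follows the same idea of a minimum repeat count per motif length; the default thresholds in `min_repeats` and the function name are assumptions made for this example and should be replaced with the settings used in a given study.

```python
import re


def find_perfect_ssrs(seq: str, min_repeats: dict = None) -> list:
    """Return (start, motif, repeat_count) for perfect tandem repeats.

    `min_repeats` maps motif length to the minimum number of repeat units.
    Start positions are 0-based."""
    if min_repeats is None:
        min_repeats = {1: 10, 2: 6, 3: 5, 4: 5, 5: 5, 6: 5}  # illustrative
    seq = seq.upper()
    hits = []
    for motif_len, n in sorted(min_repeats.items()):
        # One captured unit followed by at least n - 1 further copies.
        pattern = re.compile(r"([ACGT]{%d})\1{%d,}" % (motif_len, n - 1))
        for match in pattern.finditer(seq):
            motif = match.group(1)
            # Skip motifs that are repeats of a shorter unit (e.g. "ATAT").
            if any(motif == motif[:k] * (motif_len // k)
                   for k in range(1, motif_len) if motif_len % k == 0):
                continue
            hits.append((match.start(), motif, len(match.group(0)) // motif_len))
    return sorted(hits)
```

Running this on the same single-copy gene extracted from several strains and comparing the repeat counts at each locus is one simple way to spot the length polymorphisms that the protocol then targets with flanking primers.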

Protocol 3: qPCR Assay Development for Environmental ARG Detection

Application: Detection and quantification of antibiotic resistance genes in environmental samples [23]

Materials and Reagents:

- DNA extracted from environmental samples (e.g., activated sludge)

- Reference bacterial strains carrying target ARGs

- DreamTaq Hot Start DNA Polymerase

- qPCR instrumentation and SYBR Green chemistry

- Primers designed against aligned ARG sequences from KEGG

Methodology:

- Comprehensive Sequence Alignment: Retrieve all sequences for target ARGs from KEGG and align using MAFFT.

- Primer Design: Design primers targeting conserved regions across ARG variants.

- Thermocycling Optimization: Optimize annealing temperatures using gradient PCR.

- Standard Curve Generation: Create standard curves using cloned target genes or reference strains.

- Assay Validation: Determine amplification efficiency, linear dynamic range, and limit of detection.

- Specificity Testing: Verify absence of amplification in non-target organisms.

Validation: The optimized assays demonstrated high amplification efficiency (>90%), good linearity (R²>0.980), and consistent performance across different environmental sample types [23].

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Key Research Reagents and Their Applications in Target Validation

| Reagent/Software | Specific Function | Application Context | Performance Benefit | Reference |

|---|---|---|---|---|

| DreamTaq Hot Start DNA Polymerase | PCR amplification with reduced non-specific binding | ARG detection in environmental samples | Improved specificity for complex samples | [23] |

| Roary ILP Pipeline | Rapid pangenome analysis from genome sequences | Bacterial marker discovery | Identifies core and accessory genes efficiently | [22] |

| MISA Tool | Identification of microsatellites in sequence data | SSR marker development | Automates detection of perfect repeats | [21] |

| CheckM | Quality assessment of microbial genomes | Data curation for pangenome analysis | Ensures high-quality input data | [22] |

| MAFFT Algorithm | Multiple sequence alignment for primer design | ARG primer development | Captures sequence diversity for broad detection | [23] |

| FastDNA Kit | Rapid isolation of microbial DNA from complex samples | Nucleic acid extraction from environmental samples | Efficient lysis of diverse microorganisms | [23] |

The selection of appropriate genetic targets for PCR-based assays requires careful consideration of the specific application, required level of discrimination, and inherent sequence diversity of the target population. Conserved genes like sodC offer reliable detection when broad specificity is needed, while strain-specific markers identified through pangenome analysis provide unprecedented resolution for outbreak investigation and epidemiological tracking.

Emerging approaches that account for natural sequence diversity, such as those developed for antibiotic resistance gene monitoring, demonstrate the importance of comprehensive sequence alignment in primer design. Similarly, the integration of deep learning models to predict amplification efficiency based on sequence features represents the next frontier in optimized assay development [1].

The experimental protocols and comparative data presented in this guide provide a framework for evidence-based target selection, enabling researchers to balance the competing demands of specificity, sensitivity, and practical implementation in molecular assay development.

From Sequence to Assay: Methodologies for Primer Validation and Application

The accuracy of polymerase chain reaction (PCR) and quantitative PCR (qPCR) experiments is fundamentally dependent on the specificity and efficiency of the primers and probes used. In molecular diagnostics, genetics research, and drug development, flawed primer design can lead to false positives, skewed quantification, and failed experiments. In-silico design tools have become indispensable for developing robust molecular assays, allowing researchers to move beyond error-prone manual design to automated, computationally-driven processes that incorporate thermodynamic predictions, cross-reactivity checks, and sophisticated efficiency modeling.

Framed within the broader thesis of validating PCR primer specificity and efficiency, this guide objectively compares leading in-silico primer design tools—with particular focus on PrimerQuest from Integrated DNA Technologies (IDT)—against emerging alternatives and methodologies. We present supporting experimental data, detailed protocols from recent studies, and visualization of workflows to assist researchers, scientists, and drug development professionals in selecting and implementing the most appropriate tools for their specific applications, from basic PCR to complex multi-template amplification and species-specific detection.

Tool Comparison: Features, Capabilities, and Performance

Comprehensive Tool Specifications

Table 1: Feature comparison of major in-silico primer design tools

| Tool Name | Primary Application | Key Features | Customization Parameters | Specificity Validation | Access Method |
|---|---|---|---|---|---|
| PrimerQuest (IDT) | PCR, qPCR, sequencing | Predesigned assays for human, mouse, rat transcriptomes; batch analysis (up to 50 sequences); hydrolysis probe design capability; guaranteed 90%+ efficiency for predesigned assays | ~45 adjustable parameters (Tm, GC%, amplicon size, etc.) | Cross-react searches, secondary structure predictions | Web-based platform [24] |
| CREPE | Large-scale targeted amplicon sequencing | Fusion of Primer3 and ISPCR functionality; automated off-target assessment; custom evaluation script for specificity scoring | Filtering based on off-target quality scores | In-Silico PCR (ISPCR) with BLAT algorithm for imperfect matches | Command-line tool [7] |
| PrimeSpecPCR | Species-specific qPCR | Automated sequence retrieval from NCBI; multiple sequence alignment (MAFFT); multi-tiered specificity testing | Customizable qPCR parameters, taxonomic focus | Specificity testing against NCBI GenBank database | Python toolkit with GUI [25] |
| Deep Learning Models (1D-CNN) | Multi-template PCR efficiency prediction | Sequence-specific amplification efficiency prediction; identification of poor amplification motifs; AUROC: 0.88, AUPRC: 0.44 | Model training on synthetic DNA pools | Motif discovery via CluMo interpretation framework | Research implementation (Python) [1] |

Performance Metrics and Experimental Validation

Table 2: Experimental performance data from tool implementations and studies

| Tool/Method | Application Context | Efficiency/Success Rate | Specificity Performance | Experimental Validation |
|---|---|---|---|---|
| PrimerQuest | General PCR/qPCR | >90% efficiency guaranteed for predesigned assays [24] | Cross-react searches to avoid off-target amplification [24] | IDT internal validation; user-dependent experimental verification |
| CREPE | Targeted amplicon sequencing | >90% successful amplification in experimental testing [7] | High-quality off-target identification (80-100% match) | Validation on 150 bp paired-end Illumina platform |
| Novel Species-Specific Primers | Pseudomonas aeruginosa detection | High sensitivity in standard curve analysis [15] | Specific for P. aeruginosa among various Pseudomonas species | On-site testing on inoculated carrot samples |
| New ARG Primer Sets | Antibiotic resistance gene quantification | Amplification efficiency >90% [23] | Good linearity (R² > 0.980) across environmental samples | Testing on activated sludge, river sediment, agricultural soils |
| GIO Primer Set | Visceral leishmaniasis diagnosis | Pending experimental validation [26] | Superior in-silico specificity vs. LEISH-1/LEISH-2 set | Replaced probe with structural incompatibilities |

Experimental Protocols and Workflows

Standardized Primer Design and Validation Workflow

The following diagram illustrates the comprehensive workflow for in-silico primer design and validation, integrating capabilities from multiple tools discussed in this guide:

[Figure: Workflow for In-Silico Primer Design and Validation]

Protocol: ARG Primer Design and Validation for Environmental Samples

Recent research on antibiotic resistance gene (ARG) detection exemplifies a robust primer design and validation methodology [23]:

- Sequence Acquisition: Retrieve all target gene sequences (e.g., aadA, aadB, ampC, blaTEM, mecA) from Kyoto Encyclopedia of Genes and Genomes (KEGG) with orthology grade >70%

- Multiple Sequence Alignment: Use MAFFT algorithm to align sequences and identify conserved regions

- Primer Design: Design primers using Geneious software with parameters: Tm 55-65°C, primer size 18-22 bp, GC content 40-60%, amplicon size 80-150 bp

- In-Silico Specificity Check: Query full genomes (chromosomes and plasmids) of target and non-target strains to ensure absence of non-specific annealing

- Experimental Optimization: Test primers on genomic DNA from reference strains and environmental sample DNA pools using gradient PCR

- qPCR Validation: Establish standard curves using cloned DNA, genomic DNA, and cell suspensions; require amplification efficiency >90%, R² > 0.980, and demonstrate repeatability across experiments
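The acceptance criteria in the qPCR validation step can be checked with a short calculation. The sketch below is a minimal illustration, not the method used in the cited study: it fits Cq against log10 template quantity by ordinary least squares and derives efficiency from the slope using the standard relation E = 10^(-1/slope) - 1. The function names and the dilution-series values are hypothetical and exist only for illustration.

```python
def standard_curve(log10_quantities, cq_values):
    """Least-squares fit of Cq against log10(template quantity)."""
    n = len(log10_quantities)
    if n != len(cq_values) or n < 3:
        raise ValueError("need at least three paired dilution points")
    mean_x = sum(log10_quantities) / n
    mean_y = sum(cq_values) / n
    sxx = sum((x - mean_x) ** 2 for x in log10_quantities)
    syy = sum((y - mean_y) ** 2 for y in cq_values)
    sxy = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(log10_quantities, cq_values))
    slope = sxy / sxx
    return {
        "slope": slope,
        "intercept": mean_y - slope * mean_x,
        "r_squared": sxy ** 2 / (sxx * syy),
        # A slope of about -3.32 corresponds to perfect doubling (E = 1.0).
        "efficiency": 10 ** (-1.0 / slope) - 1.0,
    }


def passes_acceptance(fit, min_efficiency=0.90, min_r_squared=0.980):
    """Apply the thresholds quoted in the protocol above."""
    return fit["efficiency"] > min_efficiency and fit["r_squared"] > min_r_squared


# Hypothetical 10-fold dilution series (illustrative values, not real data).
log10_copies = [7, 6, 5, 4, 3]
cq = [14.1, 17.5, 20.9, 24.2, 27.6]

fit = standard_curve(log10_copies, cq)
print(f"slope={fit['slope']:.3f}  efficiency={fit['efficiency']:.1%}  "
      f"R^2={fit['r_squared']:.4f}  pass={passes_acceptance(fit)}")
```

Running the same fit on each template type (cloned DNA, genomic DNA, cell suspensions) and on repeated experiments gives a simple way to compare slopes and judge repeatability.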

Protocol: Species-Specific Primer Design with PrimeSpecPCR

For species-specific detection, as demonstrated in Pseudomonas aeruginosa identification [15] and leishmaniasis diagnostic development [26], the workflow proceeds as follows:

- Genome Analysis: Compare 816 publicly available genome sequences to identify conserved, species-specific gene regions

- Consensus Generation: Employ multiple sequence alignment using MAFFT to generate consensus sequences across target species

- Thermodynamic Optimization: Use Primer3-py with parameters for qPCR applications: primer Tm 58-60°C, probe Tm 68-70°C, amplicon size 70-120 bp

- Multi-Tiered Specificity Testing: Test primers against NCBI GenBank database using BLASTN, with particular attention to closely related species

- Structural Analysis: Predict secondary structures using RNAfold to avoid self-complementarity and dimer formation

- Experimental Validation: Test sensitivity and specificity against related species and in spiked samples (e.g., P. aeruginosa-inoculated carrots)
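The idea behind the specificity-testing step can be illustrated with a toy scan for near-matches of a primer on both strands of a sequence. This is a deliberately simplified stand-in for a BLASTN search, not how PrimeSpecPCR or BLAST work internally: it ignores gaps, thermodynamics, and the position of mismatches, and it is only practical for short sequences. The function names and the example sequences are hypothetical.

```python
def reverse_complement(seq):
    """Reverse complement of a DNA string (A, C, G, T, N only)."""
    complement = {"A": "T", "C": "G", "G": "C", "T": "A", "N": "N"}
    return "".join(complement[base] for base in reversed(seq.upper()))


def find_binding_sites(primer, template, max_mismatches=2):
    """Return (start, strand, mismatches) for each near-match of the primer.

    "+" means the primer sequence itself occurs in the given strand;
    "-" means its reverse complement occurs there, i.e. the primer would
    anneal in the opposite orientation.
    """
    primer = primer.upper()
    template = template.upper()
    hits = []
    for strand, query in (("+", primer), ("-", reverse_complement(primer))):
        for start in range(len(template) - len(query) + 1):
            window = template[start:start + len(query)]
            mismatches = sum(1 for a, b in zip(query, window) if a != b)
            if mismatches <= max_mismatches:
                hits.append((start, strand, mismatches))
    return hits


# Hypothetical toy sequences, not real genomic data.
primer = "ATGCGTACGTTAGCCTAGGA"
target = "GGATCC" + primer + "TTTAAA"
non_target = "C" * 40

print("target hits:", find_binding_sites(primer, target))
print("non-target hits:", find_binding_sites(primer, non_target))
```

A candidate primer would be flagged for review if it produced hits in any closely related non-target sequence. In practice, mismatches near the 3' end tend to matter more than those near the 5' end, which a plain mismatch count like this one does not capture.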

Advanced Analysis: Addressing Multi-Template PCR Bias with Deep Learning

Recent research has revealed significant sequence-specific amplification biases in multi-template PCR, challenging conventional primer design approaches. A 2025 study in Nature Communications employed one-dimensional convolutional neural networks (1D-CNNs) to predict sequence-specific amplification efficiencies based solely on sequence information [1].

Key Findings on Amplification Bias

- Progressive Skewing: In multi-template PCR with 12,000 random sequences, coverage distributions broadened progressively over 90 cycles, with approximately 2% of sequences showing very poor amplification efficiency (as low as 80% relative to population mean)

- GC-Independent Effects: Constraining sequences to 50% GC content did not eliminate skewing, indicating factors beyond GC content influence amplification efficiency

- Reproducible Patterns: Poor amplification was reproducible and independent of pool diversity, with consistently poor-performing sequences effectively disappearing after 60 cycles

- Mechanistic Insight: Through the CluMo interpretation framework, specific motifs adjacent to adapter priming sites were identified as major contributors to poor efficiency, challenging long-standing PCR design assumptions
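A short calculation shows why a modest per-cycle disadvantage compounds so quickly. The sketch below rests on a simplifying assumption that is ours, not necessarily the study's model: that "relative efficiency" is a sequence's per-cycle amplification factor divided by the population mean factor, so its abundance relative to the pool scales as that ratio raised to the number of cycles. The function name is hypothetical.

```python
def relative_abundance(relative_efficiency, cycles):
    """Abundance relative to the pool mean after a number of cycles.

    Assumes a constant per-cycle ratio between a sequence's amplification
    factor and the population mean factor (a simplification).
    """
    return relative_efficiency ** cycles


# A sequence amplifying at 80% of the population mean per cycle.
for cycles in (15, 30, 60, 90):
    print(f"{cycles:>2} cycles: {relative_abundance(0.80, cycles):.2e}")
```

Under this assumption, a sequence at 80% relative efficiency falls to roughly one millionth of its initial relative abundance by cycle 60, which is consistent with the observation that such sequences effectively disappear from the pool.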

Implementation Workflow for Efficiency Prediction

[Figure: Deep Learning Approach for Amplification Efficiency]

This deep learning approach demonstrates how modern computational methods can address fundamental limitations in conventional primer design, particularly for complex applications like multi-template PCR used in metabarcoding and DNA data storage.

Research Reagent Solutions and Essential Materials

Table 3: Essential research reagents and materials for in-silico design and experimental validation

| Reagent/Material | Function/Application | Implementation Example |
|---|---|---|
| PrimerQuest Tool | Automated primer and probe design | Design of qPCR assays with ~45 customizable parameters [24] |
| OligoAnalyzer Tool | Thermodynamic analysis of oligonucleotides | Secondary structure prediction, Tm calculation, dimer detection |
| MAFFT Algorithm | Multiple sequence alignment | Generating consensus sequences for species-specific primer design [15] [23] |
| In-Silico PCR (ISPCR) | Specificity validation | Detection of perfect and imperfect off-target matches using BLAT algorithm [7] |
| Primer3 | Core primer design algorithm | Thermodynamically optimized primer selection in CREPE and PrimeSpecPCR [7] [25] |
| NCBI BLAST | Sequence similarity search | Verification of primer specificity against genomic databases [24] |
| Synthetic DNA Pools | Training data for efficiency models | Generation of large, annotated datasets for deep learning models [1] |
| Reference Genomes | Specificity testing | In-silico validation of primer specificity across non-target species [23] |

The evolving landscape of in-silico primer design tools demonstrates a clear trajectory toward more sophisticated, automated, and predictive solutions. While established tools like PrimerQuest provide robust, user-friendly platforms for conventional PCR and qPCR applications with extensive customization options [24], emerging solutions like CREPE [7] and PrimeSpecPCR [25] address specific needs for large-scale and species-specific applications, respectively.

The integration of deep learning approaches, as demonstrated by 1D-CNN models for predicting sequence-specific amplification efficiencies [1], represents the next frontier in addressing persistent challenges like multi-template PCR bias. These approaches not only improve design outcomes but also provide mechanistic insights into the fundamental factors governing amplification efficiency.

For researchers validating PCR primer specificity and efficiency, the current tool ecosystem offers multiple pathways to robust assay development. The choice among platforms depends critically on the specific application—from clinical diagnostics requiring species-specific detection to environmental genomics needing broad-scale amplification of diverse targets. As these tools continue to evolve, incorporating more sophisticated predictive models and expanding genomic databases, they will further reduce the empirical optimization required in molecular assay development, accelerating research across biological disciplines and drug development pipelines.

The validation of a PCR assay is a critical process that ensures the reliability, accuracy, and reproducibility of results in research and diagnostic settings. A rigorously validated assay gives confidence that observed outcomes reflect biological reality rather than methodological artifacts. This validation rests on three fundamental pillars: the use of standard curves to define quantitative capabilities, the implementation of comprehensive controls to monitor for contamination and inhibition, and the meticulous optimization of reaction conditions to maximize specificity and efficiency. Within the broader context of validating PCR primer specificity and efficiency, these experimental setups provide the empirical evidence required to trust an assay's output. Whether for a laboratory-developed test (LDT) or the verification of a commercial kit, the principles outlined here form the foundation of any robust molecular method, directly impacting the integrity of scientific conclusions and the efficacy of drug development pipelines [27].

Reaction Optimization: Establishing the Foundation

Before an assay can be validated, its reaction conditions must be finely tuned. Optimization is the process of balancing numerous chemical and physical parameters to achieve maximum specificity, efficiency, and yield. Inadequate optimization is a primary source of failure, leading to issues such as non-specific amplification, primer-dimer formation, or false negatives [3] [28].

Critical Parameters for Optimization

The following parameters require systematic investigation to establish a robust PCR protocol.

- Primer Design and Concentration: The sequence of the primers is the most significant determinant of assay specificity. Optimal primers are typically 18-24 bases in length, with a GC content of 40-60% and closely matched melting temperatures (Tm), within 1-2°C of each other for the forward and reverse primers. The 3' end should ideally terminate in one or two G or C bases (a GC clamp) to enhance binding stability and ensure efficient extension initiation, while avoiding longer G/C runs at the 3' end, which can promote mispriming. Primer concentrations between 0.2 µM and 1.0 µM are commonly used; concentrations toward the lower end of this range can reduce non-specific product formation [3] [28].

- Annealing Temperature (Ta): The annealing temperature is perhaps the most critical thermal parameter. A temperature that is too low permits non-specific primer binding, while a temperature that is too high can prevent efficient annealing, leading to low or no yield. The most efficient method for determining the optimal Ta is a gradient PCR, which tests a range of temperatures in a single run. A good starting point is to set the Ta 3-5°C below the calculated Tm of the primers [3].

- Mg²⁺ Concentration: Magnesium ions (Mg²⁺) are an essential cofactor for DNA polymerase activity. The typical optimal concentration ranges from 1.5 mM to 2.5 mM. Low Mg²⁺ concentrations reduce enzyme activity and yield, while high concentrations promote non-specific amplification and reduce fidelity. Fine-tuning this parameter through titration is crucial [3].

- Polymerase Selection: The choice of DNA polymerase depends on the application. Standard Taq polymerase is fast and robust for routine screening. For applications requiring high accuracy, such as cloning or sequencing, high-fidelity polymerases (e.g., Pfu, KOD) with 3'→5' proofreading activity are essential, as they can reduce error rates by up to 10-fold compared to Taq. Hot-start polymerases, which require heat activation, are recommended to prevent non-specific amplification during reaction setup [3] [28].
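Several of the numeric guidelines above lend themselves to a quick automated pre-check before any bench work. The following is a minimal sketch under stated assumptions: Tm values are supplied by the user from whatever calculator they prefer (no Tm formula is implied here), the starting annealing window is taken as 3-5°C below the lower of the two primer Tm values, and the gradient is simply evenly spaced between user-chosen limits. All function names and the example primer are hypothetical.

```python
def gc_percent(primer):
    """Percentage of G and C bases in a primer."""
    primer = primer.upper()
    return 100.0 * (primer.count("G") + primer.count("C")) / len(primer)


def check_primer(primer, min_len=18, max_len=24, min_gc=40.0, max_gc=60.0):
    """Return a list of guideline violations (empty if none)."""
    issues = []
    if not min_len <= len(primer) <= max_len:
        issues.append(f"length {len(primer)} outside {min_len}-{max_len} bases")
    gc = gc_percent(primer)
    if not min_gc <= gc <= max_gc:
        issues.append(f"GC content {gc:.1f}% outside {min_gc}-{max_gc}%")
    return issues


def tm_matched(tm_forward, tm_reverse, max_difference=2.0):
    """True if the two primer Tm values are within the allowed difference."""
    return abs(tm_forward - tm_reverse) <= max_difference


def starting_annealing_window(tm_forward, tm_reverse):
    """Starting Ta range: 3-5 degrees C below the lower primer Tm (assumed)."""
    lower_tm = min(tm_forward, tm_reverse)
    return (lower_tm - 5.0, lower_tm - 3.0)


def gradient_steps(low, high, n_steps):
    """Evenly spaced annealing temperatures for a gradient PCR block."""
    if n_steps < 2:
        raise ValueError("a gradient needs at least two temperatures")
    step = (high - low) / (n_steps - 1)
    return [round(low + i * step, 1) for i in range(n_steps)]


# Hypothetical primer and user-supplied Tm values.
forward = "ATGCGTACGTTAGCCTAGGA"
print(check_primer(forward))
print(tm_matched(60.0, 59.0))
print(starting_annealing_window(60.0, 59.0))
print(gradient_steps(50.0, 60.0, 6))
```

A check like this only screens for obvious guideline violations; the optimal Ta and Mg²⁺ concentration still have to be established empirically by gradient PCR and titration.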