A Practical Guide to Reportable Range Verification for Semi-Quantitative Microbiology Tests

This article provides a comprehensive framework for verifying the reportable range of semi-quantitative microbiology tests, a critical requirement for clinical and research laboratories.


Abstract

This article provides a comprehensive framework for verifying the reportable range of semi-quantitative microbiology tests, a critical requirement for clinical and research laboratories. Tailored for researchers, scientists, and drug development professionals, the content spans from foundational regulatory definitions and performance characteristics to step-by-step methodological protocols. It further addresses common troubleshooting scenarios and outlines robust validation procedures, including comparative analyses with quantitative methods. The guide synthesizes current standards from CLSI, CLIA, and ISO to ensure tests meet regulatory compliance and deliver reliable, clinically actionable results.

Understanding Reportable Range: Definitions, Regulations, and Significance for Semi-Quantitative Assays

In clinical microbiology, semi-quantitative methods bridge purely qualitative detection and fully quantitative enumeration, offering a practical way to assess microbial burden in clinical specimens. These methods report results on an ordinal scale (e.g., 0, 1+, 2+, 3+, 4+). The results can be ranked, but the intervals between categories can be of any size and need not be equal across the measuring interval [1]. The reportable range defines the span of these categorical results, from the lowest to the highest, that a test system can reliably produce. Its verification is a critical prerequisite for implementing any new test in routine diagnostics [2] [3]. Within the context of a broader thesis on reportable range verification, this application note covers the definition, verification protocols, and practical implementation of reportable ranges for semi-quantitative microbiology tests. It provides researchers and developers with structured experimental frameworks and performance data.

Defining the Semi-Quantitative Reportable Range

Conceptual Framework and Clinical Utility

The reportable range for a semi-quantitative assay is defined as the set of categorical results that the laboratory establishes as reportable, verified by testing samples that fall within this range [2]. Unlike quantitative tests that report numerical values on a ratio scale, semi-quantitative tests report on an ordinal scale, where results like "occasional," "light," "moderate," and "heavy" growth can be ranked but lack defined, equally sized intervals between categories [1]. This approach is widely used in diagnostic scenarios where precise enumeration is unnecessary, but an estimation of microbial load is clinically valuable, such as in the diagnosis of ventilator-associated pneumonia (VAP) [4] or the assessment of chronic wound bioburden [5].

The clinical utility of these methods comes from their balance of practicality and diagnostic performance. For instance, in VAP diagnosis, two findings make VAP unlikely: the absence of bacteria on a semi-quantitative Gram stain (score of 0), or poor growth (≤1+) in a semi-quantitative culture. Abundant bacteria (≥3+), by contrast, strongly suggest VAP. This enables timely clinical decision-making [4].

Common Scoring Systems and Their Interpretation

Semi-quantitative scoring systems are applied to both direct microscopic examination (e.g., Gram stain) and culture-based methods. The specific criteria, while conceptually similar, vary between these applications. The table below summarizes two common scoring systems documented in the literature.

Table 1: Common Semi-Quantitative Scoring Systems and Their Interpretations

| Test Method | Score | Definition | Typical Interpretation/Correlation |
|---|---|---|---|
| Gram Stain [4] | 0 | No bacteria per oil immersion field | Effectively rules out VAP (90% of samples had bacterial count below diagnostic threshold) [4] |
| | 1+ | <1 bacterium per field | |
| | 2+ | 1-5 bacteria per field | |
| | 3+ | 6-30 bacteria per field | Strongly suggests VAP (94% of samples had bacterial count above diagnostic threshold) [4] |
| | 4+ | >30 bacteria per field | |
| Culture (Four-Quadrant Method) [5] | Occasional | Growth only in first quadrant | Wide range of CFU/g (10²–10⁶ CFU/g), significant overlap with other categories [5] |
| | Light | Growth in first and second quadrants | Mean of 2.5 × 10⁵ CFU/g, a clinically significant level [5] |
| | Moderate | Growth in first, second, and third quadrants | Mean of 5.4 × 10⁶ CFU/g [5] |
| | Heavy | Growth in all four quadrants | Wide range of CFU/g (10⁴–10⁸ CFU/g) [5] |
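The scoring rules in Table 1 are simple enough to encode directly, which can help standardize category assignment during verification. The Python sketch below is illustrative only, and several choices in it are assumptions:

- The function and constant names are hypothetical.
- Scores are represented with an `IntEnum` so that they can be ranked.
- A Gram stain mean that falls between 5 and 6 bacteria per field is scored 3+, because Table 1 defines only integer bands.
- The "No growth" label for a plate without growth is an assumption, because Table 1 does not define it.

```python
from enum import IntEnum


class GramScore(IntEnum):
    """Ordinal Gram stain scores.

    IntEnum provides the ordering needed for cutoffs such as >= 3+; the
    integer codes do not imply equal spacing between categories.
    """
    ZERO = 0   # "0"
    ONE = 1    # "1+"
    TWO = 2    # "2+"
    THREE = 3  # "3+"
    FOUR = 4   # "4+"


def gram_stain_score(mean_per_field: float) -> GramScore:
    """Map the mean bacteria per oil immersion field to a Table 1 score.

    Assumption: a mean between 5 and 6 (e.g. 5.5) is scored 3+, because
    Table 1 only gives integer bands (1-5 and 6-30).
    """
    if mean_per_field < 0:
        raise ValueError("count cannot be negative")
    if mean_per_field == 0:
        return GramScore.ZERO
    if mean_per_field < 1:
        return GramScore.ONE
    if mean_per_field <= 5:
        return GramScore.TWO
    if mean_per_field <= 30:
        return GramScore.THREE
    return GramScore.FOUR


# Four-quadrant culture: category by the number of consecutive quadrants with growth.
QUADRANT_CATEGORIES = {1: "Occasional", 2: "Light", 3: "Moderate", 4: "Heavy"}


def quadrant_category(quadrants_with_growth: int) -> str:
    """Return the Table 1 culture category ("No growth" for 0 is an assumption)."""
    if quadrants_with_growth == 0:
        return "No growth"
    if quadrants_with_growth not in QUADRANT_CATEGORIES:
        raise ValueError("expected 0 to 4 quadrants")
    return QUADRANT_CATEGORIES[quadrants_with_growth]


if __name__ == "__main__":
    print(gram_stain_score(12).name, quadrant_category(2))  # THREE Light
```

Because `GramScore` is ordered, a cutoff check such as `score >= GramScore.THREE` reads the same way as the "≥3+" criteria used in the text.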

Experimental Protocols for Reportable Range Verification

Verifying the reportable range is a fundamental requirement under the Clinical Laboratory Improvement Amendments (CLIA) for any unmodified FDA-cleared test before patient results can be reported [2]. The following protocol provides a detailed framework for this process.

Core Verification Protocol

This protocol is designed to verify that a semi-quantitative test's reportable range performs as established by the manufacturer in the user's specific laboratory environment.

Table 2: Research Reagent Solutions and Essential Materials

| Item/Category | Specification/Function |
|---|---|
| Clinical Isolates & Samples | Minimum of 20 clinically relevant isolates or de-identified clinical samples [2]. |
| Sample Matrix | Should include different sample matrices (e.g., respiratory secretions, tissue biopsies) if applicable to the test's intended use [2] [5]. |
| Culture Media | Various agar types (e.g., Blood agar, Chocolate agar, MacConkey agar, Columbia CNA agar) for isolation and differentiation [5]. |
| Quality Controls | Standards, controls, or proficiency test materials to ensure analytical accuracy [2]. |
| Instrumentation | Standard microbiology lab equipment (e.g., incubators, matrix-assisted laser desorption ionization-time of flight (MALDI-TOF) for organism identification [5]). |
| Inoculation Loops | For standardized streaking in the four-quadrant method [5]. |

Methodology:

- Sample Selection and Preparation: Procure a minimum of three samples that are known to be positive for the analyte [2]. These samples should represent values near the upper and lower ends of the manufacturer's established cutoff values for the categorical scores (e.g., samples expected to yield scores of 1+, 3+, and 4+) [2]. Samples can be derived from reference materials, proficiency tests, or characterized clinical samples.

- Testing Procedure: Process each sample according to the test's standard operating procedure. For a semi-quantitative culture, this involves streaking the sample onto appropriate agar plates using the four-quadrant method to generate isolated colonies for scoring [5].

- Analysis and Interpretation: Incubate plates under specified conditions and assign semi-quantitative scores based on the manufacturer's criteria (e.g., number of quadrants with growth for culture, number of bacteria per field for Gram stain) [4] [5].

- Verification Criteria: The reportable range is considered verified if two conditions hold. First, each tested sample must yield its expected categorical result. Second, the samples together must span the claimed range from its lowest to its highest reportable category (e.g., 1+ to 4+). All results must fall within the manufacturer's defined categories without any "out-of-range" flags [2].
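These verification criteria can be checked mechanically once the expected and observed categories have been recorded. The sketch below makes the following assumptions:

- Results are stored as hypothetical `(sample_id, expected, observed)` tuples.
- The claimed categories are supplied lowest first.
- By default, only the lowest and highest claimed categories must be observed.
- The `require_all` flag is a hypothetical option for laboratories that want every category represented.

```python
from typing import List, Sequence, Tuple

# (sample_id, expected_category, observed_category)
Result = Tuple[str, str, str]


def verify_reportable_range(
    results: Sequence[Result],
    claimed_categories: Sequence[str],
    require_all: bool = False,
) -> Tuple[bool, List[str]]:
    """Return (passed, problems) for a reportable-range verification run.

    claimed_categories is ordered lowest to highest. By default only the two
    ends of the claimed range must be reported; require_all=True demands that
    every claimed category be observed.
    """
    problems: List[str] = []
    for sample_id, expected, observed in results:
        if observed not in claimed_categories:
            problems.append(f"{sample_id}: {observed} is outside the claimed range")
        elif observed != expected:
            problems.append(f"{sample_id}: expected {expected}, observed {observed}")

    reported = {observed for _, _, observed in results}
    if require_all:
        must_cover = list(claimed_categories)
    else:
        must_cover = [claimed_categories[0], claimed_categories[-1]]
    for category in must_cover:
        if category not in reported:
            problems.append(f"no sample reported as {category}")
    return (not problems, problems)


if __name__ == "__main__":
    claimed = ["1+", "2+", "3+", "4+"]
    run = [("RR1", "1+", "1+"), ("RR2", "3+", "3+"), ("RR3", "4+", "4+")]
    print(verify_reportable_range(run, claimed))
    print(verify_reportable_range(run, claimed, require_all=True))
```

For example, three samples observed as 1+, 3+, and 4+ against a claimed range of 1+ to 4+ pass with the default setting. They fail with `require_all=True`, because no sample was reported as 2+.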

Supplemental Verification: Accuracy and Precision

While verifying the reportable range confirms the test can generate the correct categories, assessing accuracy and precision ensures the results are clinically reliable and reproducible.

Accuracy Verification:

- Objective: To confirm acceptable agreement between the new method's results and those from a comparative method.

- Procedure: Test a minimum of 20 samples in parallel, using both the new method and a validated reference method (e.g., quantitative culture) [2]. The samples should be either a combination of positive and negative samples or a range of samples with high to low values.

- Calculation: Calculate the percentage agreement: (Number of results in agreement / Total number of results) × 100. The acceptable percentage should meet the manufacturer's stated claims or a level determined by the laboratory director [2].
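The percentage-agreement calculation translates directly into code. This minimal sketch assumes that the paired categorical results from the new and comparative methods are held in two equal-length lists. The function name is hypothetical. The acceptance threshold remains whatever the manufacturer's claim or the laboratory director specifies.

```python
from typing import Sequence


def percent_agreement(new_method: Sequence[str], comparative: Sequence[str]) -> float:
    """(Number of results in agreement / Total number of results) x 100."""
    if len(new_method) != len(comparative):
        raise ValueError("paired result lists must be the same length")
    if not new_method:
        raise ValueError("no results supplied")
    agree = sum(a == b for a, b in zip(new_method, comparative))
    return 100.0 * agree / len(new_method)


if __name__ == "__main__":
    # Hypothetical 20-sample comparison with one discordant result.
    new = ["Negative"] * 10 + ["2+"] * 10
    reference = ["Negative"] * 10 + ["2+"] * 9 + ["3+"]
    print(percent_agreement(new, reference))
```

With 20 paired results of which 19 agree, the function returns 95.0. That value would then be compared with the laboratory's acceptance criterion.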

Precision Verification:

- Objective: To confirm acceptable within-run, between-run, and operator variance.

- Procedure: Test a minimum of 2 positive and 2 negative samples (or samples with high and low values) in triplicate over 5 days by 2 different operators [2].

- Calculation: Calculate the percentage agreement for repeated measurements. The system is precise if the agreement meets the manufacturer's stated performance specifications [2].
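Because the precision study repeats the same samples across days and operators, it helps to lay out the schedule explicitly and then summarize agreement per sample. The sketch below makes these assumptions, and all names in it are hypothetical:

- Each operator tests each sample in triplicate on each of the 5 days. Some laboratories instead split the days between operators.
- Each sample has a single expected category.

```python
from collections import defaultdict
from itertools import product
from typing import Dict, Iterable, List, Sequence, Tuple

# (sample, day, operator, replicate, result)
Record = Tuple[str, int, str, int, str]


def precision_schedule(
    samples: Sequence[str],
    days: int = 5,
    operators: Sequence[str] = ("Operator A", "Operator B"),
    replicates: int = 3,
) -> List[Tuple[str, int, str, int]]:
    """Planned runs; assumes every operator tests every sample in triplicate each day."""
    return list(product(samples, range(1, days + 1), operators, range(1, replicates + 1)))


def precision_by_sample(records: Iterable[Record], expected: Dict[str, str]) -> Dict[str, float]:
    """Percent of replicates per sample that match the sample's expected category."""
    hits: Dict[str, int] = defaultdict(int)
    totals: Dict[str, int] = defaultdict(int)
    for sample, _day, _operator, _replicate, result in records:
        totals[sample] += 1
        hits[sample] += int(result == expected[sample])
    return {sample: 100.0 * hits[sample] / totals[sample] for sample in totals}


if __name__ == "__main__":
    expected = {"P1": "Positive", "P2": "Positive", "N1": "Negative", "N2": "Negative"}
    schedule = precision_schedule(list(expected))
    records = [(s, d, o, r, expected[s]) for s, d, o, r in schedule]
    records[0] = records[0][:4] + ("Negative",)  # one hypothetical discordant replicate
    print(len(schedule), precision_by_sample(records, expected))
```

Under that assumption, 2 positive and 2 negative samples generate 120 planned results (30 per sample). A single discordant replicate therefore lowers that sample's agreement to about 96.7%.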

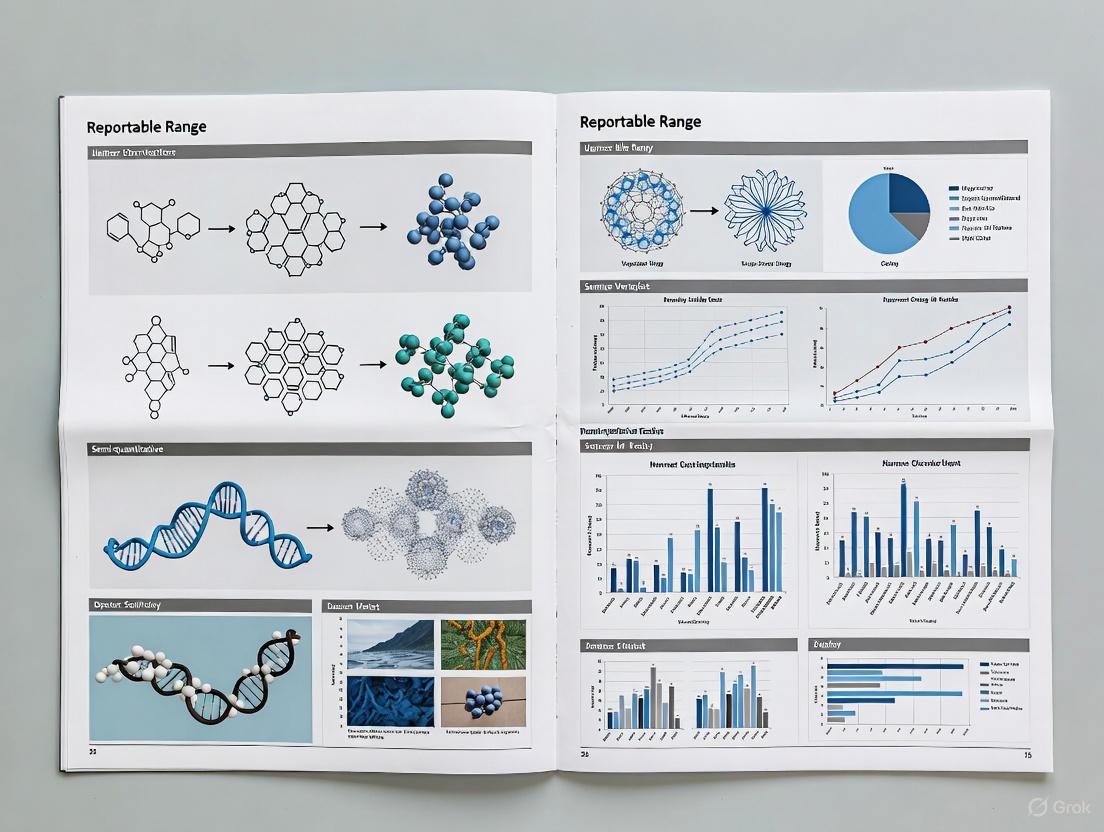

Diagram 1: Reportable range verification workflow.

Data Analysis and Performance Benchmarking

Correlation with Quantitative Cultures

A critical step in understanding the clinical meaning of semi-quantitative categories is to correlate them with quantitative measurements, the gold standard for bacterial load. Studies in different clinical settings report significant positive correlations. They also reveal substantial overlap in the quantitative values that underlie adjacent categories.

Table 3: Correlation Between Semi-Quantitative Scores and Quantitative Cultures

| Study Context | Correlation Finding | Key Clinical Performance Metrics |
|---|---|---|
| Ventilator-Associated Pneumonia (VAP), Endotracheal Aspirates [4] | Semi-quantitative Gram stain scores significantly correlated with log quantitative counts (rs = 0.64, p < 0.0001). | Gram stain ≥1+: sensitivity 95%, specificity 61%, NPV 90%; Gram stain ≥3+: sensitivity 42%, specificity 96%, PPV 94% [4] |
| Chronic Wounds, Tissue Biopsies [5] | Semi-quantitative culture results correlated with log quantitative counts (r = 0.85). | Light growth: mean 2.5 × 10⁵ CFU/g (clinically significant); heavy growth: range 10⁴–10⁸ CFU/g (4-log range) [5] |

Analytical and Diagnostic Performance

The data from these studies highlight the distinct diagnostic strengths of different cutoff points. A low cutoff (e.g., ≥1+) excels as a rule-out test due to its high sensitivity and negative predictive value (NPV). Conversely, a high cutoff (e.g., ≥3+) serves as an excellent rule-in test due to its high specificity and positive predictive value (PPV) [4]. This understanding is crucial for contextualizing the reportable range within clinical decision-making.
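The trade-off between rule-out and rule-in cutoffs becomes concrete when the four diagnostic metrics are computed at each cutoff. The inputs are paired semi-quantitative scores and a reference classification. The data in this sketch are hypothetical and serve only to show the calculation; they are not the study data summarized in Table 3. The function name is also hypothetical.

```python
from typing import Dict, Sequence


def metrics_at_cutoff(
    scores: Sequence[int], reference_positive: Sequence[bool], cutoff: int
) -> Dict[str, float]:
    """Sensitivity, specificity, PPV and NPV when score >= cutoff counts as positive."""
    if len(scores) != len(reference_positive):
        raise ValueError("scores and reference results must be paired")
    tp = fp = tn = fn = 0
    for score, truly_positive in zip(scores, reference_positive):
        test_positive = score >= cutoff
        if test_positive and truly_positive:
            tp += 1
        elif test_positive:
            fp += 1
        elif truly_positive:
            fn += 1
        else:
            tn += 1

    def ratio(num: int, den: int) -> float:
        return num / den if den else float("nan")

    return {
        "sensitivity": ratio(tp, tp + fn),
        "specificity": ratio(tn, tn + fp),
        "ppv": ratio(tp, tp + fp),
        "npv": ratio(tn, tn + fn),
    }


# Hypothetical Gram stain scores (0-4) and hypothetical reference results
# (True = quantitative count above the diagnostic threshold).
SCORES = [0, 0, 1, 1, 2, 3, 3, 4, 4, 0]
REFERENCE = [False, False, False, True, True, True, False, True, True, False]

if __name__ == "__main__":
    for cutoff in (1, 3):
        print(cutoff, metrics_at_cutoff(SCORES, REFERENCE, cutoff))
```

With these hypothetical data, the ≥1+ cutoff gives a sensitivity and NPV of 1.0 but a specificity of only 0.6. Raising the cutoff to ≥3+ increases specificity to 0.8 and PPV to 0.75, at the cost of lowering sensitivity to 0.6. This mirrors the rule-out versus rule-in pattern described above.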

Diagram 2: Diagnostic logic of different score cutoffs.

The reportable range is a foundational element of semi-quantitative microbiology tests, transforming subjective observations into standardized, clinically actionable categorical results. Its robust verification, as outlined in the protocols above, is a regulatory and clinical necessity. While these methods show strong correlation with quantitative standards, researchers and clinicians must be aware of the inherent limitations, including the broad and overlapping quantitative values corresponding to each categorical score. A thorough verification of the reportable range, complemented by assessments of accuracy and precision, ensures that these practical and cost-effective tests provide reliable data to support patient diagnosis and management, forming a critical component of high-quality clinical microbiology practice.

The development and implementation of semi-quantitative microbiology tests operate within a complex framework of regulatory standards and requirements. Three primary systems govern this field: the United States' Clinical Laboratory Improvement Amendments (CLIA), the international ISO 15189 standard for medical laboratories, and the European Union's In Vitro Diagnostic Regulation (IVDR). Understanding the distinctions and overlaps between these frameworks is crucial for researchers, scientists, and drug development professionals aiming to ensure regulatory compliance while advancing diagnostic capabilities. These standards collectively emphasize the need for rigorous verification and validation processes to ensure test reliability, accuracy, and clinical usefulness [6] [7].

Some semi-quantitative microbiology tests use a numerical value (e.g., a Ct value or signal-to-cutoff ratio) to determine a cutoff but report a qualitative result (e.g., "detected" or "not detected"). For these tests, the reportable range is a critical performance characteristic [8]. It defines the lower and upper limits within which the test can reliably report results, and so sets the boundaries of what constitutes a reportable result. Under these evolving regulations, a verified reportable range is fundamental to demonstrating test competence and ensuring patient safety across all regulatory jurisdictions.

Comparative Analysis of Key Regulations

The following table summarizes the core characteristics of the three major regulatory frameworks affecting semi-quantitative microbiology testing.

Table 1: Comparison of CLIA, ISO 15189, and IVDR Frameworks

| Aspect | CLIA | ISO 15189:2022 | IVDR (EU 2017/746) |
|---|---|---|---|
| Geographic Scope | United States [6] | International [6] | European Union [9] |
| Legal Nature | Mandatory for U.S. clinical laboratories [6] | Voluntary accreditation [6] | Mandatory for market access [9] |
| Primary Focus | Regulatory compliance, proficiency testing, quality control [6] | Continuous improvement, risk management, technical competence [10] [6] | Device safety, performance evaluation, post-market surveillance [9] [11] |
| Key Relevance to In-House Tests | Establishes method verification requirements for unmodified FDA-cleared tests [8] | Specifies validation requirements for laboratory-developed tests (LDTs) [3] [7] | Mandates performance evaluation and validation for in-house devices [7] |
| Status/Timeline | Updated personnel requirements effective 2025; new PT limits implemented 2025 [12] [13] | Full implementation required by December 2025 [10] | Progressive rollout with key transition periods through 2027 [9] [11] |

Distinguishing Verification and Validation

A fundamental concept in navigating these regulations is understanding the distinction between verification and validation:

- Verification confirms that a commercially developed test performs as claimed by the manufacturer when used in your specific laboratory environment. It is required for unmodified, FDA-cleared or CE-marked tests under CLIA and ISO 15189 [8] [7].

- Validation is a more extensive process that establishes the performance characteristics of a laboratory-developed test (LDT) or a significantly modified commercial test. It demonstrates the test is fit for its intended purpose and is mandated by ISO 15189 and IVDR for in-house tests [3] [7].

For semi-quantitative tests, the reportable range must be verified for commercial tests and fully validated for LDTs [8].
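The distinction between verification and validation can be summarized as a small decision rule. This is a simplified sketch, not a regulatory determination. The class and function names are hypothetical. In practice, what counts as a significant modification, and how in-house devices are handled under IVDR, must be decided case by case against the applicable standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AssayProfile:
    """Hypothetical description of a test being introduced into the laboratory."""
    commercial: bool                # manufacturer-developed rather than an LDT
    fda_cleared_or_ce_marked: bool  # regulatory status of the marketed version
    significantly_modified: bool    # laboratory changes beyond the manufacturer's instructions


def required_study(assay: AssayProfile) -> str:
    """Return 'verification' or 'validation' following the distinction above."""
    if assay.commercial and assay.fda_cleared_or_ce_marked and not assay.significantly_modified:
        return "verification"
    # LDTs and significantly modified commercial tests need full validation,
    # which establishes (rather than confirms) the reportable range.
    return "validation"


if __name__ == "__main__":
    print(required_study(AssayProfile(True, True, False)))   # unmodified cleared test
    print(required_study(AssayProfile(False, False, False)))  # laboratory-developed test
```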

Updated Regulatory Requirements and Timelines

CLIA Proficiency Testing Updates

CLIA has implemented updated proficiency testing (PT) acceptance criteria, effective January 1, 2025 [13]. These revised limits define acceptable PT performance and can inform the analytical acceptance criteria a laboratory sets during method verification. The following table highlights selected updated CLIA PT criteria for 2025. The examples shown are chemistry and hematology analytes rather than microbiology tests.

Table 2: Selected Updated CLIA Proficiency Testing (PT) Acceptance Limits for 2025

| Analyte or Test | NEW 2025 CLIA PT Criterion | OLD Criterion |
|---|---|---|
| C-reactive protein (HS) | Target Value (TV) ± 1 mg/L or ± 30% (greater) | None (Newly regulated) [13] |
| Creatinine | TV ± 0.2 mg/dL or ± 10% (greater) | TV ± 0.3 mg/dL or ± 15% (greater) [13] |
| Hemoglobin A1c | TV ± 8% | None (Newly regulated) [13] |
| Potassium | TV ± 0.3 mmol/L | TV ± 0.5 mmol/L [13] |
| Cell Identification | ≥ 80% consensus | ≥ 90% consensus [13] |
| Leukocyte Count | TV ± 10% | TV ± 15% [13] |
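Several of the 2025 criteria are written as a fixed amount or a percentage of the target value, whichever is greater. The following is a minimal sketch of that rule. The function names and the target values in the usage example are hypothetical.

```python
def pt_allowable_error(target: float, absolute: float = 0.0, percent: float = 0.0) -> float:
    """Allowable deviation from the target value (TV).

    For criteria written as 'TV ± a or ± p% (greater)', the limit is the
    larger of the fixed amount a and p% of the target value.
    """
    return max(absolute, abs(target) * percent / 100.0)


def pt_result_acceptable(
    result: float, target: float, absolute: float = 0.0, percent: float = 0.0
) -> bool:
    """True if the result lies within the allowable deviation of the target."""
    return abs(result - target) <= pt_allowable_error(target, absolute, percent)


if __name__ == "__main__":
    # Creatinine, 2025 criterion TV ± 0.2 mg/dL or ± 10% (greater); hypothetical targets.
    for tv in (1.0, 4.0):
        print(tv, pt_allowable_error(tv, absolute=0.2, percent=10))
```

For creatinine, a target of 1.0 mg/dL gives an allowable deviation of 0.2 mg/dL, because the fixed limit dominates. A target of 4.0 mg/dL gives 0.4 mg/dL, because the 10% limit dominates. Criteria with only a fixed limit, such as potassium, use the `absolute` argument alone.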

ISO 15189:2022 Key Changes

The updated ISO 15189:2022 standard, with a full implementation deadline of December 2025, introduces several critical changes [10]:

- Integration of POCT Requirements: Requirements for Point-of-Care Testing (POCT) previously outlined in ISO 22870 are now integrated into the main standard, streamlining accreditation for different testing environments [10].

- Enhanced Focus on Risk Management: Laboratories must now implement more robust, proactive processes to identify, assess, and mitigate potential risks to service quality [10].

- Updated Governance and Resource Management: The standard introduces clearer definitions of roles and responsibilities and places greater emphasis on ensuring adequate personnel, equipment, and facilities [10].

IVDR Transition and Challenges

The EU's IVDR (2017/746) is being progressively implemented. While applicable since May 2022, transition periods allow a phased approach for certain devices [9]. 2025 is a pivotal year within this timeline, marking the end of some key transition periods and increasing the need for rigorous performance evaluation of in-house tests [11] [3]. Challenges under IVDR include managing legacy devices, generating sufficient clinical evidence, navigating complex risk classifications (especially for novel diagnostics like AI-based IVDs), and meeting stringent post-market surveillance requirements [11].

Experimental Protocols for Reportable Range Verification

The following protocol provides a detailed methodology for establishing the reportable range of a semi-quantitative microbiology test, such as a PCR assay with a cycle threshold (Ct) cutoff for detection of a specific microbial target [8]. This process is a core component of method verification and validation under CLIA, ISO 15189, and IVDR.

Protocol: Determination of Reportable Range for a Semi-Quantitative Microbiology Test

1. Purpose and Principle: To experimentally verify the lower and upper limits of the reportable range for a semi-quantitative assay and confirm that results within this range are reliable. The reportable range defines the span of analyte concentrations (e.g., from the limit of detection to the upper limit of the linear/measurable range) that can be directly reported without dilution. For a semi-quantitative test, this often involves verifying the cutoff value that distinguishes positive from negative results [8].

2. Scope and Application: This protocol applies to the verification of commercial semi-quantitative tests and the validation of laboratory-developed tests (LDTs) in clinical microbiology. Examples include cartridge-based molecular tests for infectious disease targets or immunoassays for microbial antigens [8] [3].

3. Materials and Reagents

Table 3: Research Reagent Solutions for Reportable Range Verification

| Item | Function in Protocol | Example/Specification |
|---|---|---|
| Certified Reference Material | Provides a standardized analyte of known concentration for accurate range finding. | Microbial genomic DNA, quantified synthetic oligonucleotides, or whole organism standards [3]. |
| Quality Control Materials | Monitors assay performance and precision across the reportable range. | Commercially available positive and negative controls spanning the assay's quantitative scale [8]. |
| Clinical Isolates or Residual Patient Samples | Assesses assay performance with biologically relevant matrices. | De-identified clinical samples previously characterized by a validated method; must be ethically approved for use [8] [3]. |
| Appropriate Culture Media | Supports the growth and viability of microbial organisms used in the study. | Validated media suitable for the fastidiousness of the indicator organisms; pH and osmolality must be specified [14]. |

4. Experimental Procedure

Step 1: Sample Preparation

- Prepare a panel of samples that span the anticipated reportable range. This should include concentrations near the lower limit of detection (LoD), the clinical cutoff, and the upper limit of quantification [8] [3].

- Use a dilution series of a known positive sample in a relevant negative matrix (e.g., negative patient sample or appropriate transport medium).

- For microbial susceptibility or bioburden tests, this may involve a dilution series of a specific microbial strain with known colony-forming units (CFU) [14].

- The minimum number of samples is typically 3-5 for this specific parameter, but more may be needed for a full validation [8].
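Step 1 can be planned by generating a serial dilution of the positive stock and then picking the levels closest to each anchor point: the LoD, the clinical cutoff, and the upper limit. This minimal sketch makes several assumptions:

- The stock concentration and the anchor values are hypothetical.
- "Closest" is judged on a log10 scale.
- The function names are hypothetical.

Real panels may need intermediate (e.g., half-log) dilutions to place a level close enough to the cutoff.

```python
import math
from typing import Dict, List


def dilution_series(stock: float, fold: float, levels: int) -> List[float]:
    """Concentrations stock, stock/fold, stock/fold**2, ... (same units as stock)."""
    if stock <= 0 or fold <= 1 or levels < 1:
        raise ValueError("need stock > 0, fold > 1 and levels >= 1")
    return [stock / fold**i for i in range(levels)]


def nearest_level(series: List[float], anchor: float) -> float:
    """Series member closest to an anchor concentration on a log10 scale."""
    return min(series, key=lambda c: abs(math.log10(c) - math.log10(anchor)))


if __name__ == "__main__":
    # Hypothetical 10-fold series from a 1e7 CFU/mL stock.
    series = dilution_series(1e7, 10, 6)
    anchors: Dict[str, float] = {"LoD": 3e2, "cutoff": 5e3, "upper limit": 5e6}
    print({name: nearest_level(series, value) for name, value in anchors.items()})
```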

Step 2: Testing and Replication

- Test the prepared sample panel in accordance with the laboratory's standard operating procedure for the assay.

- Each concentration level should be tested in at least triplicate to account for assay variability [8].

- Testing should be performed over multiple days (e.g., 3-5 days) and by at least two operators if applicable, to incorporate inter-assay and inter-operator precision into the range assessment [8].

Step 3: Data Collection

- Record the semi-quantitative output for each replicate (e.g., "Detected"/"Not detected" and the corresponding numerical value like Ct value, signal-to-cutoff ratio, or categorical score).

- The data should be structured to allow for the analysis of the consistency of results at each concentration level.
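One way to structure the data so that consistency can be assessed at each concentration level is to keep one record per replicate and then group the records by level. This sketch assumes a hypothetical `Replicate` record that stores the qualitative call alongside the numerical value (Ct, signal-to-cutoff ratio, or coded score). The summary function name is also hypothetical.

```python
import statistics
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, List, Optional


@dataclass(frozen=True)
class Replicate:
    level: float             # nominal concentration of the panel member
    day: int
    operator: str
    detected: bool           # "Detected" (True) / "Not detected" (False)
    value: Optional[float]   # Ct, signal-to-cutoff ratio, or coded score; None if absent


def summarize_by_level(replicates: Iterable[Replicate]) -> List[Dict[str, object]]:
    """One summary row per concentration level, lowest level first."""
    grouped: Dict[float, List[Replicate]] = defaultdict(list)
    for rep in replicates:
        grouped[rep.level].append(rep)
    rows = []
    for level in sorted(grouped):
        reps = grouped[level]
        values = [r.value for r in reps if r.value is not None]
        rows.append({
            "level": level,
            "n": len(reps),
            "detected": sum(r.detected for r in reps),
            "mean_value": statistics.mean(values) if values else None,
            "sd_value": statistics.stdev(values) if len(values) > 1 else None,
        })
    return rows


if __name__ == "__main__":
    data = [
        Replicate(1000.0, 1, "A", True, 30.0),
        Replicate(1000.0, 2, "B", True, 30.2),
        Replicate(10.0, 1, "A", False, None),
    ]
    for row in summarize_by_level(data):
        print(row)
```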

5. Data Analysis and Acceptance Criteria

- Defining the Range: The verified reportable range is the interval between the lowest and highest concentrations of the analyte where the test consistently provides the correct qualitative result and the semi-quantitative numerical value is within the specified performance limits [8].

- Lower Limit: The lower limit should be consistent with the verified LoD. Samples below the LoD should be "Not detected" or have a numerical value below the positive cutoff.

- Upper Limit: The upper limit is the highest concentration at which the assay signal is still reliable and does not show signs of saturation or the "hook effect." Results above this limit may require dilution and re-testing.

- Cutoff Verification: For assays with a clinical cutoff (e.g., positive/negative boundary), samples with concentrations near this cutoff must be tested to confirm the cutoff's robustness. The acceptance criterion is typically 100% concordance with the expected result for samples clearly above or below the cutoff, and a defined level of consistency for samples near the cutoff [8] [3].
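The lower end of the verified range can be located by walking down the concentration levels until the expected-positive calls no longer meet the acceptance criterion. This minimal sketch works under stated assumptions:

- Every panel level is expected to be positive.
- The default criterion is 100% concordance. A lower `required_rate` can be supplied if the laboratory's criterion for near-cutoff samples allows it.
- The highest level tested lies within the measurable range. Saturation or hook effect at the top must still be judged from the numerical values, which this sketch does not do.
- The function name is hypothetical.

```python
from typing import Dict, List, Optional, Tuple


def verified_range(
    results: Dict[float, List[bool]], required_rate: float = 1.0
) -> Optional[Tuple[float, float]]:
    """Return (lowest, highest) level at which expected-positive samples pass.

    Levels are walked from the highest concentration downward; the first
    level whose detection rate falls below required_rate ends the range.
    """
    passing: List[float] = []
    for level in sorted(results, reverse=True):
        calls = results[level]
        if not calls:
            raise ValueError(f"no replicates recorded at level {level}")
        rate = sum(calls) / len(calls)
        if rate < required_rate:
            break
        passing.append(level)
    if not passing:
        return None
    return min(passing), max(passing)


if __name__ == "__main__":
    # Hypothetical detection calls (six replicates per level).
    calls = {
        1e5: [True] * 6,
        1e4: [True] * 6,
        1e3: [True] * 6,
        1e2: [True, True, False, True, True, True],
        1e1: [False] * 6,
    }
    print(verified_range(calls), verified_range(calls, required_rate=0.8))
```

In this hypothetical example, all six replicates are detected at every level from 10⁵ down to 10³, five of six at 10², and none at 10¹. The default criterion therefore gives a verified range of 10³ to 10⁵, while `required_rate=0.8` extends it to 10².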

6. Documentation: The verification report must include the raw data, a summary of results for each concentration level, a description of the samples used, and a definitive statement of the verified reportable range and cutoff value, as approved by the laboratory director [8].

Regulatory Workflow Integration

The following diagram illustrates the decision-making process for determining the required level of evidence (verification vs. validation) under the CLIA, ISO 15189, and IVDR frameworks.

Diagram 1: Decision Flow for Test Verification vs. Validation

Preparing for Compliance

Successfully navigating the convergent requirements of CLIA, ISO 15189, and IVDR necessitates a proactive and strategic approach. Laboratories and manufacturers should consider the following actions:

- Conduct a Gap Analysis: Perform a thorough review of current laboratory practices against the updated requirements of ISO 15189:2022 and the new CLIA personnel and PT rules to identify areas needing enhancement before the December 2025 deadline [10] [12].

- Develop a Comprehensive Transition Plan: Create a detailed plan with clear timelines, assigned responsibilities, and defined milestones for achieving and maintaining compliance. This is especially critical for IVDR transition periods ending in 2025-2027 [11].

- Invest in Training: Ensure all personnel, from laboratory directors to testing staff, are aware of and trained on the updated qualification requirements and the heightened focus on risk management [10] [12].

- Engage with Accreditation Bodies: Early communication with relevant accreditation bodies can provide valuable guidance and help streamline the transition process [10].

The regulatory landscape for semi-quantitative microbiology tests is dynamic, with significant updates to CLIA, ISO 15189, and IVDR converging in the 2025-2027 timeline. A deep understanding of the distinctions between verification and validation is fundamental. The precise determination and documentation of the reportable range is a critical technical requirement that cuts across all these frameworks, serving as a key indicator of a test's reliability. By implementing robust experimental protocols, such as the one detailed herein, and aligning quality management systems with these evolving standards, researchers and drug development professionals can not only ensure regulatory compliance but also foster a culture of quality that enhances patient safety, facilitates market access, and builds a foundation for sustainable growth and innovation in diagnostic medicine.

Within the framework of reportable range verification for semi-quantitative microbiology tests, understanding the interplay between core performance characteristics is paramount for ensuring reliable patient results. Semi-quantitative methods, which report results on an ordinal scale (e.g., rare, few, moderate, many), occupy a unique position between purely qualitative and fully quantitative assays [8] [1]. These tests provide an estimate of microbial abundance, which is crucial for differentiating infection from colonization and guiding treatment decisions [15]. The verification of these methods, as required by standards such as CLIA and ISO 15189, demands a rigorous assessment of accuracy, precision, and reference range to ensure they perform as intended in the specific laboratory environment [8] [3]. This application note details the experimental protocols and analytical frameworks necessary to establish and interrelate these core characteristics, providing a structured approach for researchers and scientists validating semi-quantitative microbiological assays.

Core Concepts and Definitions

Understanding Semi-Quantitative Assays

Semi-quantitative assays yield results on an ordinal scale, where values can be ranked (e.g., 1+, 2+, 3+) but lack the fixed interval sizes of a true ratio scale [1]. The fundamental principle involves estimating the relative quantity of microorganisms, typically through standardized streaking techniques like the four-quadrant method, which produces isolated colonies in successive quadrants correlating with the original microbial load [15]. The result is not an exact count but a categorized estimate of abundance, such as "rare" for very few colonies in the first quadrant or "many" for confluent growth in all quadrants [15]. This ordinal output directly influences how performance characteristics like accuracy and precision are defined and measured, differing from both quantitative methods (which provide a numerical value) and qualitative methods (which provide a binary result) [8].

Key Performance Characteristics

The reliability of a semi-quantitative test is defined by several interconnected performance characteristics:

- Accuracy signifies the closeness of agreement between a test result and an accepted reference value [16]. It confirms the acceptable agreement of results between the new method and a comparative method [8]. For semi-quantitative tests, this is often assessed as the percentage of correct categorical assignments compared to a reference method.

- Precision denotes the closeness of agreement between independent test results obtained under stipulated conditions [16]. It confirms acceptable within-run, between-run, and operator variance [8]. In validation, it is often broken down into repeatability (within-run precision) and intermediate precision (between-run and between-operator variation).

- Reference Range defines the normal or expected result for the tested patient population [8]. For a semi-quantitative test, this establishes the expected ordinal result (e.g., "negative" or "no growth") for a typical sample from a healthy population or a specimen without infection.

- Reportable Range is the range of results that can be reliably reported by the assay, verified by testing samples that fall within this range [8]. For semi-quantitative tests, this encompasses the verified categories (e.g., from "rare" to "many") that the system can distinguish.

The relationship between these characteristics is synergistic. High precision reduces random variation, thereby enhancing the ability to measure true accuracy. A well-defined reference range provides the baseline against which accuracy is judged for negative or normal samples. Ultimately, the verified reportable range is the final expression of a method that has demonstrated acceptable accuracy, precision, and appropriateness for its intended patient population.

Experimental Protocols

Protocol 1: Verification of Accuracy

This protocol is designed to verify the accuracy of a semi-quantitative microbiology test by comparing its results to a reference method.

1. Principle: Accuracy is determined by testing a panel of well-characterized samples and calculating the percentage of results that are in categorical agreement with the results obtained from a reference standard method [8] [16].

2. Materials and Reagents

- A minimum of 20 clinically relevant isolates or samples [8].

- Samples should include a combination of positive and negative samples, and for semi-quantitative assays, a range of samples with high to low values is recommended [8].

- Acceptable specimens can originate from:

  - Reference materials, accredited standards, or controls.

  - Proficiency test samples.

  - De-identified clinical samples previously tested with a validated method.

- Culture media and all standard reagents required for the execution of the new test and the reference method.

3. Procedure

- Sample Preparation: Select and prepare at least 20 samples, ensuring they represent the entire reportable range (e.g., negative, rare, few, moderate, many) [8].

- Parallel Testing: Test all samples using the new semi-quantitative method and the established reference method. Either run both methods in parallel or use samples previously characterized by the reference method [8].

- Blinded Analysis: The analysis of the new method should be performed without knowledge of the reference method results (blinded) to prevent bias.

- Data Recording: Record the results (e.g., 1+, 2+, 3+) from both methods for each sample in a data table.

4. Analysis and Interpretation

- Calculate the percentage agreement (accuracy) using the formula:

Accuracy (%) = (Number of Correct Results in Agreement / Total Number of Results) × 100 [16]

- The acceptance criteria should meet the stated claims of the manufacturer or what the laboratory director determines is acceptable [8]. For instance, the laboratory may set an acceptance criterion of ≥90% categorical agreement.

Table 1: Example Data Collection for Accuracy Verification

| Sample ID | Reference Method Result | New Test Result | Categorical Agreement (Y/N) |
|---|---|---|---|
| S01 | Negative | Negative | Y |
| S02 | 1+ (Rare) | 1+ (Rare) | Y |
| S03 | 2+ (Few) | 2+ (Few) | Y |
| S04 | 3+ (Moderate) | 2+ (Few) | N |
| ... | ... | ... | ... |
| Total | | | % Agreement = (X/20) × 100 |
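Beyond the overall percentage, a cross-tabulation of reference versus new results shows whether discordant results cluster in one direction, such as the under-call of S04 in the example table. This sketch assumes the short category labels shown ("1+" rather than "1+ (Rare)") and a hypothetical set of claimed categories. The function names are also hypothetical.

```python
from collections import Counter
from typing import Dict, Sequence

# Hypothetical claimed categories, lowest first (add "4+" if it is claimed).
CATEGORIES = ["Negative", "1+", "2+", "3+"]
RANK = {category: i for i, category in enumerate(CATEGORIES)}


def cross_tab(reference: Sequence[str], new: Sequence[str]) -> Dict[str, Dict[str, int]]:
    """Rows = reference result, columns = new test result."""
    if len(reference) != len(new):
        raise ValueError("results must be paired")
    counts = Counter(zip(reference, new))
    return {r: {n: counts[(r, n)] for n in CATEGORIES} for r in CATEGORIES}


def discordance_direction(reference: Sequence[str], new: Sequence[str]) -> Dict[str, int]:
    """Count pairs where the new test reports a lower or a higher category."""
    pairs = list(zip(reference, new))
    return {
        "under_called": sum(RANK[n] < RANK[r] for r, n in pairs),
        "over_called": sum(RANK[n] > RANK[r] for r, n in pairs),
    }


# First four rows of the example table above.
REFERENCE = ["Negative", "1+", "2+", "3+"]
NEW_TEST = ["Negative", "1+", "2+", "2+"]

if __name__ == "__main__":
    for row, columns in cross_tab(REFERENCE, NEW_TEST).items():
        print(row, columns)
    print(discordance_direction(REFERENCE, NEW_TEST))
```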

Protocol 2: Verification of Precision

This protocol verifies the precision (repeatability and intermediate precision) of the semi-quantitative test.

1. Principle: Precision is assessed by repeatedly testing a set of samples over multiple days and by different analysts to confirm acceptable variance in results [8] [16].

2. Materials and Reagents

- A minimum of 2 positive and 2 negative samples [8]. For semi-quantitative assays, use a combination of samples with high and low values.

- The samples can be controls, standardized suspensions, or de-identified clinical samples.

- All necessary culture media and laboratory equipment.

3. Procedure

- Sample Selection: Select the positive and negative samples that will be tested in triplicate.

- Within-Run (Repeatability):

  - A single operator tests the 4 selected samples (2 positive, 2 negative) in triplicate within a single run [8].

- Between-Run (Intermediate Precision):

  - Test the same samples in triplicate over 5 days by 2 different operators. If the system is fully automated, operator variance may not need to be assessed [8].

- Data Recording: Record all results from every replicate, run, operator, and day.

4. Analysis and Interpretation

- Calculate the precision for each level of sample (e.g., for each positive and negative sample) using the formula:

Precision (%) = (Number of Results in Agreement / Total Number of Results) × 100 [8].

- For a more quantitative assessment, results can be assigned numerical values (e.g., 1, 2, 3) and the standard deviation or coefficient of variation can be calculated, though this is less common for ordinal data [16].

- The acceptable percentage of precision should meet the manufacturer's claims or laboratory-defined criteria. Consistency in categorical assignment across all replicates and operators is the key indicator of acceptable precision.

Table 2: Example Data Collection for Precision Verification

| Sample | Level | Operator | Day | Replicate 1 | Replicate 2 | Replicate 3 | Within-Run Agreement |
|---|---|---|---|---|---|---|---|
| A | Low (1+) | 1 | 1 | 1+ | 1+ | 1+ | 3/3 (100%) |
| B | High (3+) | 1 | 1 | 3+ | 3+ | 2+ | 2/3 (67%) |
| A | Low (1+) | 2 | 2 | 1+ | 1+ | 1+ | 3/3 (100%) |
| ... | ... | ... | ... | ... | ... | ... | ... |
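The tallies in Table 2 can be reproduced with a short Python sketch. It assumes that "agreement" means a replicate matches the sample's assigned level (e.g., 1+ for sample A); the record layout and function names are hypothetical.

```python
from collections import defaultdict

# Each record: (sample, expected level, operator, day, replicate results).
# Values mirror the rows shown in Table 2; a complete study would include
# every day and operator in the precision design.
runs = [
    ("A", "1+", 1, 1, ["1+", "1+", "1+"]),
    ("B", "3+", 1, 1, ["3+", "3+", "2+"]),
    ("A", "1+", 2, 2, ["1+", "1+", "1+"]),
]


def within_run_agreement(expected, replicates):
    """Return (matching replicates, total replicates) for one run."""
    matches = sum(1 for result in replicates if result == expected)
    return matches, len(replicates)


def pooled_precision(records):
    """Percent agreement per sample, pooled over all runs, days and operators."""
    tallies = defaultdict(lambda: [0, 0])  # sample -> [matches, total]
    for sample, expected, _operator, _day, replicates in records:
        matches, total = within_run_agreement(expected, replicates)
        tallies[sample][0] += matches
        tallies[sample][1] += total
    return {sample: 100.0 * m / t for sample, (m, t) in tallies.items()}


for sample, expected, operator, day, replicates in runs:
    m, t = within_run_agreement(expected, replicates)
    print(f"Sample {sample}, operator {operator}, day {day}: {m}/{t} ({100 * m / t:.0f}%)")

print({s: round(p, 1) for s, p in pooled_precision(runs).items()})
# {'A': 100.0, 'B': 66.7}
```

Pooling per sample keeps each level (low, high, negative) separately visible, which matches calculating precision "for each level of sample".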

Protocol 3: Establishing Reference and Reportable Range

This protocol outlines the process for verifying the reference range and the reportable range for a semi-quantitative assay.

1. Principle

The reference range is verified by testing samples known to represent the "normal" or "negative" state for the laboratory's patient population. The reportable range is verified by demonstrating that the test can correctly categorize samples across all claimed levels, from the lowest (e.g., "rare") to the highest (e.g., "many") [8].

2. Materials and Reagents

- For Reference Range: A minimum of 20 isolates from de-identified clinical samples or reference samples with a result known to be standard for the population (e.g., samples negative for the target pathogen) [8].

- For Reportable Range: A minimum of 3 samples. Use known positive samples for the detected analyte, and for semi-quantitative assays, use a range of positive samples near the upper and lower ends of the manufacturer-determined cutoff values [8].

3. Procedure

- Reference Range Verification:

- Test the 20 negative/normal samples using the new semi-quantitative method.

- Record the results and confirm that at least 95% (19/20) of the results fall within the expected reference range (e.g., "no growth" or "negative") [8].

- Reportable Range Verification:

- Test the panel of samples that are known to span the entire range of reportable categories.

- Ensure the panel includes samples with microbial loads near the cut-offs between categories (e.g., a sample barely qualifying as "few" versus "rare").

- Record the categorical result for each sample.

4. Analysis and Interpretation

- Reference Range: The reference range is the result the laboratory establishes as expected for a typical sample. If the results do not match the manufacturer's claim for the local population, the reference range may need to be redefined using samples from the laboratory's patient population [8].

- Reportable Range: The reportable range is successfully verified if the test correctly identifies and categorizes samples across all claimed levels. The laboratory must confirm that the results for samples at the extremes of the range are reportable and clinically meaningful.
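Both checks in this protocol can be sketched as follows. This assumes a hypothetical four-level claimed scale (rare to many) and uses the ≥95% (19/20) reference-range rule stated in the procedure. Integer arithmetic avoids floating-point edge cases at exactly 95%. The category labels and function names are illustrative only.

```python
# Hypothetical ordinal scale claimed by a manufacturer; replace with the
# categories listed in the package insert.
CLAIMED_CATEGORIES = ["1+ (Rare)", "2+ (Few)", "3+ (Moderate)", "4+ (Many)"]


def reference_range_verified(results, expected="No growth", min_percent=95):
    """Return (verified, count within range) using integer math."""
    if not results:
        raise ValueError("no reference-range results supplied")
    within = sum(1 for r in results if r == expected)
    return 100 * within >= min_percent * len(results), within


def reportable_range_verified(panel, claimed=CLAIMED_CATEGORIES):
    """panel: list of (expected category, observed category) pairs.

    Verified only if every sample is categorized correctly and every claimed
    category is represented in the panel.
    """
    all_correct = all(expected == observed for expected, observed in panel)
    covered = {expected for expected, _ in panel}
    missing = [c for c in claimed if c not in covered]
    return all_correct and not missing, missing


# Example: 19 of 20 reference samples read "No growth".
ref_results = ["No growth"] * 19 + ["1+ (Rare)"]
print(reference_range_verified(ref_results))  # (True, 19)

panel = [(c, c) for c in CLAIMED_CATEGORIES]
print(reportable_range_verified(panel))  # (True, [])
```

Reporting the missing categories, rather than returning only a pass/fail flag, makes it obvious when the panel did not actually span the claimed range.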

Visualizing the Interplay

The following diagram illustrates the logical relationships and workflow between the core performance characteristics and the final reportable range verification in semi-quantitative microbiology.

Diagram 1: Verification Workflow and Dependencies. This chart illustrates how the verification of Accuracy, Precision, and Reference Range converges to establish the Reportable Range, which is foundational for generating reliable patient results. Required sample inputs for each verification step are shown.

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Verification Studies

| Item | Function in Verification | Application Example |
|---|---|---|
| Clinically Relevant Isolates | Serves as the core sample material for verifying accuracy, precision, and reference range. | A panel of 20 well-characterized microbial strains used to assess categorical agreement in accuracy studies [8]. |
| Reference Materials & Controls | Provides a benchmark with known properties to assess the trueness and reliability of the new test method. | Using accredited reference materials or proficiency test samples to establish the correctness of results during accuracy verification [8] [16]. |
| De-identified Clinical Samples | Represents the real-world patient population, crucial for validating the reference range and ensuring the test performs correctly with actual clinical matrices. | Verifying the reference range using 20 de-identified clinical samples known to be negative for the target pathogen [8]. |
| Selective & Non-Selective Culture Media | Supports the growth and isolation of specific or a broad range of microorganisms, forming the basis of the semi-quantitative culture. | Using blood agar plates in the four-quadrant streaking method to estimate microbial load and differentiate organisms [15]. |
| Sterile Inoculation Loops | Ensures a standardized volume of specimen is transferred during the streaking process, which is critical for the reproducibility of semi-quantitative results. | Employing a consistent loop size (e.g., 10 µL) for the initial inoculation in the four-quadrant method to maintain precision [15]. |

Within clinical and research microbiology laboratories, ensuring the reliability of test results is paramount. For researchers and scientists developing drugs and diagnostic assays, understanding the regulatory and technical distinctions between method verification and validation is a fundamental requirement. These processes, though often confused, serve different purposes and are mandated under regulations such as the Clinical Laboratory Improvement Amendments (CLIA) [2].

This primer delineates the critical differences between verification and validation, with a specific focus on the context of reportable range assessment for semi-quantitative microbiology tests. A semi-quantitative test, which uses numerical values (e.g., cycle thresholds, optical density) to determine a cutoff but reports a qualitative result (e.g., "detected"/"not detected"), is common in microbial identification and molecular detection [2]. Establishing and verifying its reportable range is essential for ensuring that results falling within this range are accurate and reportable.

Key Concepts: Verification vs. Validation

The terms "verification" and "validation" are not interchangeable; they describe distinct processes triggered by different circumstances concerning the test method's regulatory status and intended use [2] [17].

Verification is a process for unmodified, FDA-cleared or approved tests. It is a one-time study conducted by the end-user laboratory to confirm that the test's established performance characteristics—such as accuracy, precision, and reportable range—are achieved in the local environment with the lab's own personnel and equipment [2]. The performance claims have already been established and approved; the lab is simply providing evidence that it can successfully reproduce them.

Validation, in contrast, is a more extensive process to establish the performance characteristics of a test method. This applies to laboratory-developed tests (LDTs), non-FDA-cleared tests, or any FDA-approved test that has been modified from the manufacturer's instructions [2]. Modifications can include using different specimen types, sample dilutions, or altering test parameters like incubation times. As the performance of these tests is not pre-established by the FDA, the laboratory must perform a comprehensive validation to prove the test works as intended for its specific use [2].

The table below provides a structured comparison of these two critical processes.

Table 1: Core Differences Between Method Verification and Validation

| Aspect | Verification | Validation |
|---|---|---|
| Definition | Confirming a lab can reproduce manufacturer's claims for an unmodified, FDA-cleared test [2]. | Establishing performance characteristics for a lab-developed test (LDT) or a modified FDA-cleared test [2]. |
| Performed By | End-user laboratory [17]. | Method developer or laboratory implementing the LDT/modified method [2] [17]. |
| Regulatory Context | Required by CLIA for unmodified, non-waived tests before patient reporting [2]. | Required for LDTs and modified methods; results used for regulatory submissions and adoption by standards organizations [2] [17]. |
| Scope of Work | Limited to verifying performance specs like accuracy, precision, and reportable range [2]. | Comprehensive, establishing performance specs like sensitivity, specificity, and reproducibility [17]. |
| Typical Assays | Routine implementation of commercial FDA-cleared kits. | Laboratory Developed Tests (LDTs), research-use-only (RUO) assays applied clinically. |

Diagram 1: Decision Pathway for Verification and Validation. This workflow helps determine whether a verification or validation process is required based on the test's regulatory status and modifications.
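The core of this decision pathway reduces to two questions, sketched below in Python. This is a deliberate simplification: it encodes only the rule stated in this primer (unmodified FDA-cleared/approved test means verification, everything else means validation) and does not model waived tests or other regulatory nuances. The enum and function names are hypothetical.

```python
from enum import Enum


class StudyType(Enum):
    VERIFICATION = "verification"
    VALIDATION = "validation"


def required_study(fda_cleared_or_approved: bool, modified: bool) -> StudyType:
    """Simplified decision rule from the verification/validation comparison.

    - Unmodified FDA-cleared/approved test -> verification.
    - LDT, non-FDA-cleared test, or any modified FDA test -> validation.
    Waived tests and other regulatory nuances are deliberately not modeled.
    """
    if fda_cleared_or_approved and not modified:
        return StudyType.VERIFICATION
    return StudyType.VALIDATION


# A commercial kit used with a new specimen type counts as a modification.
print(required_study(fda_cleared_or_approved=True, modified=False).value)   # verification
print(required_study(fda_cleared_or_approved=True, modified=True).value)    # validation
print(required_study(fda_cleared_or_approved=False, modified=False).value)  # validation
```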

The Scientist's Toolkit: Essential Research Reagents and Materials

Successfully executing verification or validation studies requires carefully selected materials. The following table details key reagents and their functions in these processes, particularly for semi-quantitative microbiology assays.

Table 2: Essential Research Reagents for Verification and Validation Studies

| Reagent / Material | Function in Verification/Validation | Application Example |
|---|---|---|
| Reference Strains | Serve as standardized controls to demonstrate accuracy and precision; crucial for promoting technical competence in microbiology [18]. | Quantifying analyte in a spiked sample to assess reportable range [19]. |
| Chromogenic Media | Selective and differential culture media that allow for presumptive or full identification of target microorganisms based on colony color, streamlining workflows [20]. | Using a single chromogenic agar plate for the detection and enumeration of Listeria species, reducing confirmation steps [21]. |
| Linearity/Calibration Verification Materials | Samples with known concentrations used to verify the upper and lower limits of the reportable range and assay calibration [19] [22]. | Testing a minimum of three levels (low, mid, high) to challenge the entire reportable range of an assay [22]. |
| Proficiency Testing (PT) Samples | External quality assessment samples with known values but unknown to the analyst, used to verify a method's accuracy and technical competence [18]. | Testing PT samples as unknown patient specimens during a verification study to demonstrate accuracy [2]. |
| Characterized Clinical Isolates | De-identified patient samples or well-characterized microbial stocks used to verify performance against a laboratory's specific patient population [2]. | Using a panel of 20 positive and negative isolates to verify the accuracy of a new qualitative PCR assay [2]. |

Experimental Protocol: Reportable Range Verification for a Semi-Quantitative Microbiology Test

The reportable range defines the lowest and highest test results that are reliable and can be reported. For a semi-quantitative test, this often involves verifying the cutoff values that differentiate between positive and negative results or between different levels of positivity [19] [2]. The following protocol outlines a standardized approach for this verification.

Purpose and Principle

The purpose of this experiment is to verify the reportable range of a semi-quantitative microbiology test, confirming that the manufacturer's claimed range—particularly the cutoff values—performs as expected in the user's laboratory environment [2]. The principle involves testing samples with known concentrations near the claimed upper and lower limits of the reportable range and at the critical cutoff to ensure the test system correctly classifies them [19] [2].

Materials and Equipment

- Test system (instrument, reagents) for the semi-quantitative assay.

- A minimum of three levels of test materials: one near the lower end of the reportable range, one near the upper end, and one at the critical cutoff value [2] [22].

- Acceptable materials include: commercial calibration verification kits, proficiency testing samples, control materials with known values, or characterized patient samples [22].

- Standard laboratory equipment (pipettes, timers, biosafety cabinet).

Step-by-Step Procedure

- Sample Preparation: Obtain or prepare samples with known concentrations or characteristics. For a semi-quantitative assay, this involves sourcing or creating samples that fall just above, at, and just below the manufacturer's stated cutoff value, as well as samples at the extremes of the range [2].

- Sample Analysis: Analyze the prepared samples according to the manufacturer's instructions for use. The samples should be processed as routine patient specimens to reflect real-world testing conditions [22].

- Data Collection: Record the raw data (e.g., numerical values, instrument readings) and the final interpreted result (e.g., "detected," "not detected") for each sample.

- Data Analysis: Compare the observed results against the expected results. For the cutoff verification, ensure that samples with analyte levels below the cutoff are classified as negative and those with levels above it are classified as positive. For Ct-based assays, a lower Ct indicates more target, so the numerical comparison is inverted. The results across the range should align with the manufacturer's claims [2].

Data Interpretation and Acceptance Criteria

The reportable range is considered verified if the test system correctly classifies all samples in relation to the cutoff value and produces results across the range that are consistent with the expected values. The laboratory director must define specific acceptance criteria prior to the study, which should meet or exceed the manufacturer's stated performance specifications [2]. Any misclassification of samples near the cutoff may necessitate further investigation, corrective action, or a full validation if the method has been modified.

Diagram 2: Reportable Range Verification Workflow. This outlines the key steps for verifying the reportable range of a test, from sample preparation to final acceptance.
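The cutoff classification check in the procedure can be sketched as follows. The Ct cutoff of 38, the sample readings, and the convention that a value exactly at the cutoff counts as positive are all hypothetical; the package insert defines the real cutoff and boundary rule. A reading of `None` stands for no amplification or no signal.

```python
def classify(value, cutoff, positive_when_below):
    """Interpret a numeric reading against a single cutoff.

    positive_when_below=True suits Ct-style readings (lower Ct = more target);
    False suits signal-to-cutoff style readings. Treating a value exactly at
    the cutoff as positive is an assumption; follow the package insert.
    """
    if value is None:  # no amplification / no signal
        return "Not detected"
    if positive_when_below:
        is_positive = value <= cutoff
    else:
        is_positive = value >= cutoff
    return "Detected" if is_positive else "Not detected"


def check_cutoff_panel(panel, cutoff, positive_when_below):
    """panel: list of (sample_id, reading, expected call). Returns misclassified IDs."""
    return [
        sample_id
        for sample_id, reading, expected in panel
        if classify(reading, cutoff, positive_when_below) != expected
    ]


# Hypothetical Ct cutoff of 38 and hypothetical readings.
panel = [
    ("strong-positive", 22.4, "Detected"),
    ("near-cutoff-positive", 37.1, "Detected"),
    ("near-cutoff-negative", 38.9, "Not detected"),
    ("no-amplification", None, "Not detected"),
]
failures = check_cutoff_panel(panel, cutoff=38.0, positive_when_below=True)
print("Verified" if not failures else f"Investigate: {failures}")  # Verified
```

Returning the misclassified sample IDs, rather than a single flag, points directly at the samples that would need investigation or corrective action.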

For researchers and scientists in drug and diagnostic development, a precise understanding of verification and validation is non-negotiable for regulatory compliance and data integrity. Verification demonstrates that your laboratory can successfully implement a commercially available test, while validation is a more rigorous process to prove a new or modified test is fit for purpose.

Adhering to structured protocols for verifying critical performance characteristics like the reportable range ensures that semi-quantitative microbiology tests generate reliable and clinically meaningful data. This foundational knowledge not only supports the internal quality systems of a laboratory but also bolsters the credibility of research findings and the development of robust diagnostic assays.

Executing Verification: A Step-by-Step Protocol for Semi-Quantitative Test Range

Semi-quantitative assays occupy a critical space in clinical microbiology, providing ordinal results (e.g., 1+, 2+, 3+) or results based on a cutoff value (e.g., cycle threshold (Ct)) rather than precise numerical measurements [8]. Before implementing such assays for patient testing, laboratories must perform verification studies to demonstrate that the test performs acceptably in their specific environment and with their patient population. According to the Clinical Laboratory Improvement Amendments (CLIA), this verification is mandatory for unmodified, FDA-approved tests before patient results can be reported [8]. Unlike purely quantitative tests, verifying semi-quantitative assays requires specialized approaches focusing on categorical agreement and ordinal consistency rather than numerical precision [23]. This document outlines a comprehensive protocol for designing verification studies and defining robust acceptance criteria for semi-quantitative microbiology tests, with particular emphasis on reportable range verification.

Key Verification Parameters and Experimental Design

Defining Verification Parameters

For semi-quantitative assays, four primary analytical performance characteristics require verification as outlined in CLIA regulations [8]. The specific approach for each parameter must be adapted to the categorical nature of these tests.

- Accuracy: This confirms acceptable agreement between the new method's results and those from a comparative method. For semi-quantitative tests, this is not a measure of numerical closeness but of categorical correctness [8] [23].

- Precision: This confirms acceptable reproducibility, assessing within-run, between-run, and operator-to-operator variance. For semi-quantitative assays, precision is measured by the consistency of categorical results across replicates [8].

- Reportable Range: This verifies the assay's ability to correctly categorize samples across its entire claimed range of detection, particularly near established cutoffs that separate ordinal categories (e.g., negative, low positive, high positive) [8].

- Reference Range: This confirms the expected result for a typical sample from the laboratory's specific patient population. For many semi-quantitative infectious disease assays, this may be "not detected" or "negative," but it must be appropriate for the population served [8] [24].

Core Experimental Protocol

The following workflow provides a structured, step-by-step approach for planning and executing a verification study for a semi-quantitative microbiology assay.

Defining Acceptance Criteria and Sample Plans

Accuracy Verification

Accuracy for a semi-quantitative assay is demonstrated by the degree of agreement with a reference method. The experiment should include samples that represent all possible reportable categories.

Experimental Protocol:

- Sample Selection: Select a minimum of 20 clinically relevant samples or isolates [8]. For semi-quantitative assays, ensure this panel includes samples with values spanning the entire range, from high to low, particularly those near critical cutoffs [8].

- Testing Procedure: Test all samples using both the new method (test method) and a validated comparative method (reference method) in parallel.

- Data Analysis: Construct a contingency table (cross-tabulation) comparing the results from the two methods; with k ordinal categories this is a k × k table, which reduces to a 2 × 2 table only for binary results. Calculate the Percent Agreement as (Number of agreements / Total number of tests) × 100 [8]. For more sophisticated analysis, Cohen's Kappa (κ) can be used to measure agreement beyond chance, which is particularly important for ordinal results [23]. A weighted kappa additionally gives partial credit to near-miss disagreements (e.g., 2+ versus 3+).

Acceptance Criteria: The observed percentage of agreement should meet or exceed the manufacturer's stated claims. In the absence of such claims, the laboratory director must define acceptable performance, which should typically be ≥90% agreement or a Cohen's Kappa value ≥0.80, indicating strong agreement [23].
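Cohen's kappa compares observed agreement with the agreement expected by chance from each method's marginal frequencies: κ = (p_o − p_e) / (1 − p_e). The sketch below computes the unweighted form on a hypothetical 20-sample panel. The category labels and counts are illustrative, not study data.

```python
from collections import Counter


def cohens_kappa(method_a, method_b):
    """Unweighted Cohen's kappa for two paired lists of categorical results."""
    n = len(method_a)
    if n == 0 or n != len(method_b):
        raise ValueError("inputs must be non-empty and paired")
    observed = sum(a == b for a, b in zip(method_a, method_b)) / n
    counts_a, counts_b = Counter(method_a), Counter(method_b)
    categories = set(counts_a) | set(counts_b)
    # Chance agreement: sum over categories of the product of marginal proportions.
    expected = sum(counts_a[c] * counts_b[c] for c in categories) / (n * n)
    if expected == 1.0:
        raise ValueError("kappa is undefined when both methods use one identical category")
    return (observed - expected) / (1.0 - expected)


# Hypothetical 20-sample panel: 5 per category, one 3+ read as 2+ by the new test.
reference = ["Neg"] * 5 + ["1+"] * 5 + ["2+"] * 5 + ["3+"] * 5
new_test = ["Neg"] * 5 + ["1+"] * 5 + ["2+"] * 5 + ["2+"] + ["3+"] * 4
kappa = cohens_kappa(reference, new_test)
print(f"kappa = {kappa:.3f}")  # kappa = 0.933
```

Here 95% raw agreement corresponds to κ ≈ 0.93, above the ≥0.80 threshold. For a cross-check, or for the weighted variant, scikit-learn's `cohen_kappa_score` accepts `weights="linear"` or `weights="quadratic"`.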

Precision Verification

Precision testing assesses the assay's reproducibility across multiple runs, days, and operators.

Experimental Protocol:

- Sample Selection: Use a minimum of 2 positive and 2 negative samples. For semi-quantitative assays, select positive samples that represent different ordinal levels (e.g., low positive and high positive) [8].

- Testing Procedure: Test all samples in triplicate, over the course of 5 days, and by 2 different operators. If the system is fully automated, operator variance may not be required [8].

- Data Analysis: Calculate the percent agreement across all replicates and conditions. The coefficient of unalikeability can be a useful statistical tool for measuring variance in categorical data [23].

Acceptance Criteria: The percentage of concordant results should meet the manufacturer's stated precision claims. A common acceptance criterion is ≥90% agreement across all replicates and conditions [8] [23].
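The coefficient of unalikeability can be sketched as the proportion of pairs of distinct observations whose categories differ. The code below uses this pairwise form, dividing by n(n − 1) ordered pairs. Some sources instead use the proportion-based variant 1 − Σpᵢ², which divides by n² and gives slightly smaller values. The replicate data are hypothetical.

```python
from collections import Counter


def coefficient_of_unalikeability(values):
    """Proportion of ordered pairs of distinct observations whose categories differ.

    0.0 means every replicate gave the same category; larger values mean
    more categorical disagreement among replicates.
    """
    n = len(values)
    if n < 2:
        raise ValueError("at least two observations are required")
    counts = Counter(values)
    # n*n ordered pairs in total; sum(k*k) of them (including i == j) match.
    unlike_pairs = n * n - sum(k * k for k in counts.values())
    return unlike_pairs / (n * (n - 1))


# Hypothetical results for one sample: 3 replicates x 5 days x 2 operators = 30 results.
consistent = ["2+"] * 30
one_discordant = ["2+"] * 29 + ["1+"]
print(coefficient_of_unalikeability(consistent))                # 0.0
print(round(coefficient_of_unalikeability(one_discordant), 4))  # 0.0667
```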

Reportable Range Verification

The reportable range for a semi-quantitative assay is the span of results—such as the range of Ct values or signal-to-cutoff ratios—within which the laboratory can reliably assign the correct categorical result (e.g., "Detected," "Not detected," "Low," "High") [8] [24].

Experimental Protocol:

- Sample Selection: Verify the range using a minimum of 3 samples. These should be known positive samples, specifically chosen to challenge the upper and lower boundaries of the manufacturer's established cutoffs [8].

- Testing Procedure: Analyze these samples in multiple replicates to confirm that each consistently falls within the established reportable range and is reported in the correct category.

- Data Analysis: The reportable range is considered verified if the tested samples that fall within the range are assigned the correct categorical result, and samples near the boundaries do not show erratic categorization.

Acceptance Criteria: 100% of samples tested that are within the claimed reportable range should produce a reportable result consistent with the manufacturer's specifications [8].
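The 100% acceptance rule and the check for erratic categorization can be sketched as shown below. The sample IDs, category labels, and replicate results are hypothetical. "Correct" requires every replicate to match the expected category. "Consistent" separately flags samples whose replicates disagree with each other.

```python
def evaluate_reportable_range(panel):
    """panel: {sample_id: (expected category, [replicate categories])}.

    Returns (verified, per-sample report). Verification here requires 100% of
    replicates to match the expected category; 'consistent' separately flags
    erratic categorization among replicates.
    """
    report = {}
    for sample_id, (expected, replicates) in panel.items():
        report[sample_id] = {
            "consistent": len(set(replicates)) == 1,
            "correct": all(r == expected for r in replicates),
        }
    verified = bool(report) and all(entry["correct"] for entry in report.values())
    return verified, report


# Hypothetical panel of three samples near the claimed boundaries.
panel = {
    "lower-boundary": ("Low positive", ["Low positive"] * 3),
    "upper-boundary": ("High positive", ["High positive"] * 3),
    "near-cutoff": ("Low positive", ["Low positive", "Not detected", "Low positive"]),
}
verified, report = evaluate_reportable_range(panel)
print(verified)               # False
print(report["near-cutoff"])  # {'consistent': False, 'correct': False}
```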

Reference Range Verification

The reference interval (RI) is the range of results expected for a healthy reference population. For a semi-quantitative MRSA assay, for example, the reference range for a healthy nasal swab would be "Not Detected" [8] [24].

Experimental Protocol (Limited Validation):

- Sample Selection: Obtain 20 samples from healthy individuals representative of the laboratory's patient population. These can be de-identified clinical samples or commercial reference materials [8] [24].

- Testing Procedure: Analyze the 20 samples using the new test method.

- Data Analysis: Tally the number of results that fall outside the manufacturer's provided reference interval.

Acceptance Criteria (CLSI C28-A Guideline): The reference range is considered validated if no more than 2 of the 20 samples (≤10%) fall outside the proposed reference interval. If 3 or more results fall outside, a second set of 20 samples can be tested. If, again, 3 or more results from the second set fall outside the interval, the laboratory should consider establishing its own population-specific reference range [24].
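The two-stage decision described above can be expressed as a small function. It assumes sets of 20 samples and encodes only the rule as summarized here: at most 2 results outside the interval passes, and a second set is tested after a failure. It is not a substitute for the guideline itself. The function name and return strings are hypothetical.

```python
def reference_interval_decision(first_set_outside, second_set_outside=None, max_outside=2):
    """Two-stage check as summarized above for 20-sample sets.

    first_set_outside / second_set_outside: number of the 20 results falling
    outside the claimed reference interval.
    """
    if first_set_outside <= max_outside:
        return "verified with first set"
    if second_set_outside is None:
        return "test a second set of 20 samples"
    if second_set_outside <= max_outside:
        return "verified with second set"
    return "establish a population-specific reference range"


print(reference_interval_decision(1))     # verified with first set
print(reference_interval_decision(3))     # test a second set of 20 samples
print(reference_interval_decision(3, 2))  # verified with second set
print(reference_interval_decision(4, 3))  # establish a population-specific reference range
```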

The table below summarizes the core parameters for designing a verification study for a semi-quantitative microbiology assay.

Table 1: Verification Study Parameters for Semi-Quantitative Assays

| Performance Characteristic | Minimum Sample Number & Type | Experimental Replicates & Conditions | Key Statistical Methods | Acceptance Criteria |
|---|---|---|---|---|
| Accuracy [8] | 20 samples spanning high to low values | Single test by test and reference method | Percent Agreement, Cohen's Kappa [23] | ≥90% agreement or per manufacturer's claim |
| Precision [8] | 2 positive + 2 negative (with different ordinal levels) | Triplicate, 5 days, 2 operators | Percent Agreement, Coefficient of Unalikeability [23] | ≥90% agreement across all conditions |
| Reportable Range [8] | 3 samples near upper/lower cutoffs | Multiple replicates | Categorical consistency check | 100% reportable and correct categorization |
| Reference Range [24] | 20 samples from healthy reference population | Single test per sample | Dixon's Q test or Tukey Fence for outliers [24] | ≤2/20 (10%) results outside claimed range |

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful execution of a verification study requires careful selection of characterized samples and statistical tools.

Table 2: Essential Research Reagents and Materials

| Item | Function in Verification | Specific Examples & Notes |
|---|---|---|
| Characterized Clinical Isolates | Serve as positive and negative samples for accuracy and precision studies. | Can be obtained from culture collections, proficiency testing materials, or archived patient samples (de-identified). |
| Commercial Reference Materials | Provide standardized samples with known assigned values for challenging the reportable range. | Quantitative standards, control materials, or panels from diagnostic manufacturers. |
| Proficiency Test Samples | Independent, external samples used to objectively assess accuracy. | Samples from CAP, QCMD, or other proficiency testing providers. |
| Statistical Software Packages | Perform advanced analyses for categorical data, including Cohen's Kappa and outlier detection. | R, SPSS, MedCalc, or GraphPad Prism. CLSI guidelines provide foundational methods [8] [24]. |
| Outlier Detection Tools | Identify and manage aberrant data points that could skew the verification results. | Dixon's Q Test (for small n) or Tukey Fence Method (for larger datasets) [24]. |
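The Tukey fence method flags values lying more than 1.5 interquartile ranges beyond the first or third quartile. The sketch below applies it to hypothetical numeric readings, such as signal values underlying a semi-quantitative call, using `statistics.quantiles` from the Python standard library. Quartile conventions differ between software packages, so fences may shift slightly from those reported by R or MedCalc. Dixon's Q test is not shown because it requires a table of critical values.

```python
import statistics


def tukey_outliers(values, k=1.5):
    """Flag values outside [Q1 - k*IQR, Q3 + k*IQR].

    Quartiles come from statistics.quantiles (default 'exclusive' method);
    other quartile conventions can move the fences slightly.
    """
    if len(values) < 2:
        raise ValueError("at least two values are needed to estimate quartiles")
    q1, _median, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high]


# Hypothetical numeric readings (e.g., signal values) from a reference set.
readings = [10, 11, 12, 12, 13, 13, 14, 30]
print(tukey_outliers(readings))  # [30]
```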

A meticulously designed verification plan with predefined, statistically sound acceptance criteria is fundamental to implementing reliable semi-quantitative microbiology tests. By adhering to the structured protocols for accuracy, precision, reportable range, and reference range outlined in this document, laboratories can ensure their assays perform robustly within the local testing environment. This process not only fulfills regulatory requirements but also underpins the quality of patient results and supports confident clinical decision-making.

The reliability of semi-quantitative microbiology tests in clinical diagnostics is fundamentally dependent on rigorous verification procedures before routine use. International standards demand that these verifications demonstrate a test's accuracy, precision, and reportable range within the specific laboratory where it will be implemented [8]. A cornerstone of this process is the strategic selection of clinically relevant isolates and appropriate controls. This protocol provides detailed application notes for selecting these critical biological materials, specifically framed within the verification of the reportable range for semi-quantitative tests, such as those reporting results as "Detected/Not Detected" with an associated numerical cutoff value (e.g., cycle threshold (Ct)) [8]. Proper sample selection ensures that the verification study accurately reflects the test's performance against the pathogens and resistance mechanisms most relevant to the patient population, thereby supporting reliable antimicrobial stewardship and effective patient care [3].

Research Reagent Solutions

The following table details essential materials and reagents required for executing the sample selection and verification protocols described in this document.

Table 1: Essential Research Reagents and Materials

| Item | Function/Explanation |
|---|---|
| Clinical Isolates | Well-characterized microbial strains from clinical specimens; used to challenge the test across its reportable range and ensure it detects real-world pathogens [8] [3]. |
| Reference Materials | Certified controls or standards (e.g., from ATCC); used to establish baseline performance and accuracy of the new test method [8]. |
| Proficiency Test Samples | Externally provided samples with known but blinded values; used for unbiased assessment of test accuracy [8]. |
| Sterile Collection Swabs & Containers | Pre-sterilized consumables for collecting and transporting specimen samples (e.g., oropharyngeal swabs, urine containers) without introducing contamination [25]. |
| Transport Media | Liquid or semi-solid media designed to preserve the viability of microorganisms from the time of collection to laboratory testing [25]. |
| Quality Control (QC) Organisms | Specific strains with defined expected results; used for daily monitoring to ensure the test system performs within established parameters post-verification [8]. |

Experimental Protocols

Protocol 1: Selection of Clinically Relevant Isolates

Objective: To strategically select a panel of microbial isolates that ensures the semi-quantitative test is verified against a clinically representative and analytically challenging set of samples.

Methodology:

- Define the Scope: Clearly identify the microbial targets (e.g., specific species, resistance mechanisms like ESBL or MRSA) detected by the semi-quantitative test [26] [27].

- Establish a Minimum Sample Number: A minimum of 20 clinically relevant isolates per target organism or resistance mechanism is recommended for verification studies [8]. For a comprehensive reportable range verification, include a range of samples from high to low values relative to the test's cutoff [8].

- Source the Isolates: Obtain isolates from a variety of sources to ensure robustness. Acceptable sources include culture collections, proficiency testing materials, and de-identified archived patient samples.

- Prioritize Epidemiological Relevance: Select isolates that reflect the local or intended-use epidemiology. For instance, if verifying an ESBL test, ensure the panel includes prevalent genotypes like CTX-M-type enzymes [27]. The panel should include:

  - Strong Positive Isolates: Samples with analyte concentrations significantly above the cutoff.

  - Weak Positive Isolates: Samples with analyte concentrations near the positive cutoff to challenge the test's detection limit.

  - Negative Isolates: Samples without the target analyte.

- Include Challenging Samples: Intentionally include isolates with closely related non-target organisms or common interfering substances to verify analytical specificity.

The following diagram illustrates the logical workflow for the selection of clinically relevant isolates.

Protocol 2: Preparation and Use of Controls

Objective: To establish and implement a system of controls that monitor the performance of the test throughout the verification process, ensuring the validity of reported results.

Methodology:

- Determine Control Types:

  - Positive Control: Contains the target analyte at a concentration known to produce a positive result. It verifies that the test can detect the target when present.

  - Negative Control: Lacks the target analyte. It verifies that the test does not produce false-positive signals.

  - Internal Control (if applicable): A control incorporated into each sample to monitor the entire testing process, including extraction and amplification, identifying sample-specific inhibition.

- Source Controls: Controls can be purchased as commercial quality control materials or prepared in-house using characterized strains. If preparing in-house, use strains different from those used in the clinical isolate panel to ensure independence.

- Define Acceptance Criteria: Before starting the verification, pre-define the expected results for each control (e.g., "Positive control must be 'Detected' with a Ct value between 20-25"; "Negative control must be 'Not Detected'") [8].

- Integration into Runs: Controls must be included in every batch of tests run during the verification study. For semi-quantitative tests, it is critical to include a control with an analyte concentration near the clinical decision cutoff to ensure reproducible classification [8].
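The pre-defined control criteria can be encoded so that each run is accepted or rejected mechanically. This sketch uses the example criteria given above (positive control "Detected" with Ct 20 to 25; negative control "Not Detected"). The `ControlResult` record, the control names, and the Ct values are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ControlResult:
    name: str
    call: str            # "Detected" or "Not Detected"
    ct: Optional[float]  # None when no amplification is observed


def positive_control_ok(result, ct_low=20.0, ct_high=25.0):
    """Example criterion from the text: 'Detected' with Ct between 20 and 25."""
    return result.call == "Detected" and result.ct is not None and ct_low <= result.ct <= ct_high


def negative_control_ok(result):
    """Negative control must be 'Not Detected'."""
    return result.call == "Not Detected"


def run_is_acceptable(positive, negative):
    """Results from a run are interpretable only if both controls pass."""
    return positive_control_ok(positive) and negative_control_ok(negative)


pos = ControlResult("PC-lot-A", "Detected", 23.4)
neg = ControlResult("NC-lot-A", "Not Detected", None)
print(run_is_acceptable(pos, neg))  # True
print(run_is_acceptable(ControlResult("PC-lot-A", "Detected", 27.9), neg))  # False
```

A near-cutoff control could be handled the same way, with its own pre-defined Ct window.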

Data Presentation and Analysis

The selection of isolates should be documented with detailed metadata. The following table provides a template for summarizing the characteristics of a verification panel for a semi-quantitative MRSA detection test.

Table 2: Example Panel Composition for a Semi-Quantitative MRSA Detection Test

| Isolate Category | Number of Isolates | Source (e.g., Wound, Blood) | Phenotypic Profile | Genotypic Characterization | Expected Result (Detected/Not Detected) |
|---|---|---|---|---|---|
| MRSA (mecA+) | 15 | Various (e.g., 8 wound, 7 blood) | Oxacillin R, Cefoxitin R | mecA gene positive | Detected |
| MSSA (mecA-) | 10 | Various (e.g., 5 nares, 5 tissue) | Oxacillin S, Cefoxitin S | mecA gene negative | Not Detected |
| Coagulase-Negative Staphylococci (MR) | 5 | Blood cultures | Cefoxitin R | mecA gene positive | Detected* |
| Other Gram-Positive Cocci | 5 | Various | N/A | N/A | Not Detected |

*Note: "Detected" is the expected result only if the test's claim includes all mecA-positive staphylococci.
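Once the panel has been tested, observed calls can be compared with the expected calls in the table. The sketch below computes positive percent agreement (PPA), negative percent agreement (NPA), and overall agreement. It assumes the mecA-positive coagulase-negative staphylococci are expected to be "Detected" (i.e., the test claims all mecA-positive staphylococci); the observed calls are hypothetical, and `agreement_metrics` is an illustrative helper, not part of any published protocol.

```python
def agreement_metrics(expected, observed, positive="Detected"):
    """Positive, negative, and overall percent agreement of observed vs. expected calls."""
    if len(expected) != len(observed) or not expected:
        raise ValueError("need two non-empty call lists of equal length")
    tp = fn = fp = tn = 0
    for exp, obs in zip(expected, observed):
        if exp == positive and obs == positive:
            tp += 1  # expected Detected, called Detected
        elif exp == positive:
            fn += 1  # expected Detected, missed
        elif obs == positive:
            fp += 1  # expected Not Detected, called Detected
        else:
            tn += 1  # expected Not Detected, called Not Detected
    ppa = tp / (tp + fn) if (tp + fn) else float("nan")
    npa = tn / (tn + fp) if (tn + fp) else float("nan")
    overall = (tp + tn) / len(expected)
    return {"PPA": ppa, "NPA": npa, "overall": overall}


# Expected calls from the panel: 15 MRSA + 5 mecA-positive CoNS are "Detected",
# 10 MSSA + 5 other Gram-positive cocci are "Not Detected".
expected = ["Detected"] * 20 + ["Not Detected"] * 15
# Hypothetical observed calls: one MRSA isolate missed, all negatives correct.
observed = ["Detected"] * 19 + ["Not Detected"] + ["Not Detected"] * 15
print(agreement_metrics(expected, observed))
```

Reporting PPA and NPA separately, rather than only overall agreement, keeps a missed positive from being masked by a large number of correct negatives.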

The overall verification workflow, from planning to analysis, integrates the selection of isolates and controls into a cohesive whole.

Analyzing Look-Back Periods for Resistance

When verifying tests for antimicrobial resistance mechanisms, the epidemiological relevance of isolates can be informed by analyzing historical data. Research indicates that a 12-month look-back period for prior multidrug-resistant (MDR) cultures provides a clinically relevant and statistically sound basis for predicting current resistance patterns, balancing precision and recall [26]. The following table summarizes the performance of a 1-year look-back period for common resistance mechanisms, which can guide the inclusion of isolates from patients with relevant histories.

Table 3: Predictive Performance of a 1-Year Look-Back Period for Prior MDR Culture [26]

| Mechanism of Resistance | Precision (PPV) at 1 Year | Recall (Sensitivity) at 1 Year | Odds Ratio (p-value) |
|---|---|---|---|
| MRSA | 0.93 | 0.32 | 15.87 (p=0.1) |
| VRE | 0.61 | 0.24 | 14.89 (p=0.1) |
| ESBL | 0.67 | 0.45 | 52.90 (p=0.05) |
| AmpC | 0.34 | 0.26 | 21.70 (p=0.08) |
| CRE | 0.37 | 0.40 | 61.12 (p=0.04) |

PPV: Positive Predictive Value. Data adapted from a study comparing look-back periods for MDR mechanisms [26].
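The three metrics in the table derive from a 2×2 cross-tabulation of "prior MDR culture within the look-back window" against "current isolate carries the mechanism". The sketch below shows that arithmetic. The cell definitions are an assumption about how such a study would be tabulated, and the counts are hypothetical, not taken from [26].

```python
def lookback_metrics(tp, fp, fn, tn):
    """Precision, recall, and odds ratio from a 2x2 table.

    tp: prior MDR culture in window, current isolate resistant
    fp: prior MDR culture in window, current isolate not resistant
    fn: no prior MDR culture in window, current isolate resistant
    tn: no prior MDR culture in window, current isolate not resistant
    """
    if fp == 0 or fn == 0:
        # A zero off-diagonal cell makes the plain odds ratio undefined;
        # continuity corrections exist but are not applied here.
        raise ValueError("odds ratio undefined with a zero off-diagonal cell")
    precision = tp / (tp + fp)          # PPV
    recall = tp / (tp + fn)             # sensitivity
    odds_ratio = (tp * tn) / (fp * fn)  # cross-product ratio
    return precision, recall, odds_ratio


# Hypothetical counts for illustration only.
print(lookback_metrics(tp=30, fp=10, fn=60, tn=900))
```

The example shows why a high odds ratio can coexist with low recall: most resistant isolates may come from patients with no prior MDR culture in the window.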

For reportable range verification of semi-quantitative microbiology tests, an appropriate sample size and replication strategy is fundamental to obtaining scientifically valid, regulatory-compliant results. Such verification studies are required by standards such as the Clinical Laboratory Improvement Amendments (CLIA) before new tests are implemented for patient diagnostics [8]. A poorly designed study with an inadequate sample size risks both Type I errors (false positives) and Type II errors (false negatives), leading to incorrect conclusions about a test's performance [28]. This application note provides researchers and drug development professionals with structured protocols and data-driven frameworks for establishing statistically powerful sample sizes and replicate numbers, so that verification studies yield reliable and generalizable data.

Statistical Foundations: Power, Error, and Effect Size

Core Statistical Concepts

The foundation of any sample size calculation rests on understanding the interplay between several key statistical parameters, which are crucial for hypothesis testing [28].

- Null and Alternative Hypotheses (H₀ and H₁): The null hypothesis (H₀) typically states that there is no effect or difference (e.g., the new test does not perform differently from the reference method). The alternative hypothesis (H₁) is the researcher's prediction of an effect.

- Type I and Type II Errors: A Type I error (α) occurs when a true H₀ is incorrectly rejected (a false positive). A Type II error (β) occurs when a false H₀ is not rejected (a false negative). The probability of correctly rejecting a false H₀ is the statistical power (1-β) [28].

- Significance Level (α) and P-value: The significance level, conventionally set at α=0.05, is the threshold risk of a Type I error the researcher is willing to accept. The P-value is the probability, assuming H₀ is true, of obtaining a result at least as extreme as the one observed; H₀ is rejected when the P-value falls below α [28].

- Effect Size (ES): The ES quantifies the magnitude of a phenomenon or the strength of a relationship, independent of sample size. Larger effect sizes require smaller samples to detect, and vice versa [28].

The diagram below illustrates the logical workflow and relationships between these core concepts when planning a study.

The Critical Relationship Between Parameters

The parameters of α, power, effect size, and sample size are intrinsically linked. The ideal power for a study is generally considered to be 0.8 (or 80%), with an α of 0.05 [28]. However, these are arbitrary conventions, and the balance between them must be considered in the context of the study's goals. For instance, in situations where the consequences of a false positive are severe, a lower α (e.g., 0.01) may be justified [28]. It is crucial to determine these parameters a priori, as an inadequate sample size is a primary cause of low power, increasing the risk of Type II errors and rendering the study incapable of detecting a true effect, even if it exists [28].
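The trade-off between α, power, effect size, and sample size can be made concrete with a normal approximation. The sketch below computes the approximate power of a two-sided, two-sample z-test for a difference in means; it assumes equal group sizes and a known common standard deviation, and it ignores the negligible contribution of the opposite rejection tail. The function name is illustrative.

```python
from math import sqrt
from statistics import NormalDist


def power_two_means(n_per_group, sigma, d, alpha=0.05):
    """Approximate power of a two-sided, two-sample z-test for a mean difference d."""
    z = NormalDist()
    z_crit = z.inv_cdf(1 - alpha / 2)         # e.g., 1.96 for alpha = 0.05
    se = sigma * sqrt(2 / n_per_group)        # standard error of the difference
    return z.cdf(abs(d) / se - z_crit)


# Same effect size (d = 0.5 SD), varying sample size and alpha.
for n in (20, 63, 100):
    print(n, round(power_two_means(n, sigma=1.0, d=0.5), 3))
print("alpha=0.01, n=63:", round(power_two_means(63, 1.0, 0.5, alpha=0.01), 3))
```

With 63 per group the power is close to the conventional 0.80, while tightening α to 0.01 at the same sample size lowers power substantially, illustrating why these parameters must be fixed together a priori.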

Establishing Minimums for Verification Studies

For semi-quantitative microbiological tests, verification studies must demonstrate performance across several defined characteristics. The following table summarizes the minimum sample and replicate requirements based on regulatory standards and best practices [8].

Table 1: Minimum Sample and Replicate Requirements for Verification of Semi-Quantitative Microbiology Tests

| Performance Characteristic | Minimum Sample Number | Replication Requirements | Key Specifications |
|---|---|---|---|
| Accuracy | 20 clinically relevant isolates [8] | Single test per sample, compared to a reference method [8] | Use a combination of positive and negative samples. For semi-quantitative assays, include a range of samples with high to low values near the cutoff [8]. |
| Precision | 2 positive and 2 negative samples [8] | Tested in triplicate, for 5 days, by 2 different operators [8] | If the system is fully automated, operator variance testing may not be required [8]. |
| Reportable Range | 3 samples [8] | Verification of the upper and lower limits [8] | Use known positive samples. For semi-quantitative assays, use samples near the manufacturer's established cutoff values [8]. |
| Reference Range | 20 isolates [8] | Single test per sample [8] | Use de-identified clinical or reference samples that represent the laboratory's normal patient population [8]. |
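The precision requirement translates into a concrete testing schedule. The sketch below enumerates it, assuming a fully crossed design in which each operator tests every sample in triplicate on each of the 5 days; the sample and operator labels are hypothetical, and laboratories that interpret the requirement differently (for example, splitting days between operators) should adjust the parameters.

```python
from itertools import product


def precision_schedule(samples, days=5, operators=("Operator A", "Operator B"), replicates=3):
    """Enumerate every (day, operator, sample, replicate) test in a fully crossed design."""
    return [
        {"day": day, "operator": op, "sample": s, "replicate": rep}
        for day, op, s, rep in product(
            range(1, days + 1), operators, samples, range(1, replicates + 1)
        )
    ]


samples = ["POS-1", "POS-2", "NEG-1", "NEG-2"]  # 2 positive + 2 negative (hypothetical IDs)
schedule = precision_schedule(samples)
print(len(schedule))  # total tests under the fully crossed assumption

# Fully automated system: operator variance may not need testing, so use a single operator.
print(len(precision_schedule(samples, operators=("Instrument",))))
```

Generating the schedule explicitly also gives a ready-made worksheet for recording each result against its day, operator, and replicate.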

These minima provide a baseline for regulatory compliance. However, achieving adequate statistical power may require larger sample sizes, which should be determined via a formal power analysis based on pilot data or established effect sizes [29].

Experimental Protocol for Sample Size Determination and Verification

Protocol 1: A Priori Sample Size Calculation

This protocol outlines the steps for calculating a sample size before commencing a verification study.

1. Define the Hypothesis and Primary Endpoint:

- Clearly state the null and alternative hypotheses.

- Identify the primary outcome variable (e.g., percent agreement, correlation coefficient).

2. Choose the Statistical Test:

- Select the test that will be used to analyze the primary endpoint (e.g., paired t-test for means, chi-square test for proportions).

3. Set the Error Tolerances and Power:

- Establish the significance level (α), typically 0.05.

- Set the desired power (1-β), typically 0.80 or 0.90 [28].

4. Estimate the Effect Size (ES):

- The ES can be estimated from pilot data, previous published studies, or defined based on the minimal clinically or technically important difference. For microbiome studies, this might be a meaningful difference in microbial abundance or diversity [29].

5. Calculate the Sample Size:

- Use the gathered parameters in statistical software or nomograms to compute the required sample size [28]. The table below provides simplified formulas for different study types.

Table 2: Sample Size Calculation Formulas for Common Study Designs

| Study Type | Formula | Variable Explanations |
|---|---|---|
| Comparison of Two Proportions [28] | n = [2p(1-p)(Zα/2 + Z1-β)²] / (p1 - p2)² | p1, p2: proportions in groups 1 and 2; p: average of p1 and p2; Zα/2: 1.96 for α=0.05; Z1-β: 0.84 for 80% power; n: number per group (equal group sizes). |
| Comparison of Two Means [28] | n = [2σ²(Zα/2 + Z1-β)²] / d² | σ: pooled standard deviation; d: difference between the two group means; n: number per group. |
| Correlation Study [28] | n = [(Zα/2 + Z1-β)² / C²] + 3 | C: 0.5 × ln[(1+r)/(1-r)], where r is the expected correlation coefficient. |
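The formulas can be evaluated directly rather than read from nomograms. The sketch below implements the three designs with exact Z quantiles from Python's standard library. For two proportions it uses the pooled per-group form for two equal-sized groups, n = 2p(1-p)(Zα/2 + Z1-β)²/(p1 - p2)², where p is the average of p1 and p2; the two-group results are per group, and all results are rounded up to whole samples. Function names and example inputs are illustrative.

```python
from math import ceil, log
from statistics import NormalDist


def _z_sum(alpha, power):
    """Z(alpha/2) + Z(1 - beta) for a two-sided test."""
    z = NormalDist()
    return z.inv_cdf(1 - alpha / 2) + z.inv_cdf(power)


def n_two_proportions(p1, p2, alpha=0.05, power=0.80):
    """Per-group n to detect a difference between proportions p1 and p2."""
    p = (p1 + p2) / 2  # average (pooled) proportion
    return ceil(2 * p * (1 - p) * _z_sum(alpha, power) ** 2 / (p1 - p2) ** 2)


def n_two_means(sigma, d, alpha=0.05, power=0.80):
    """Per-group n to detect a mean difference d with pooled SD sigma."""
    return ceil(2 * sigma ** 2 * _z_sum(alpha, power) ** 2 / d ** 2)


def n_correlation(r, alpha=0.05, power=0.80):
    """Total n to detect an expected correlation r (Fisher z transformation)."""
    c = 0.5 * log((1 + r) / (1 - r))
    return ceil(_z_sum(alpha, power) ** 2 / c ** 2 + 3)


# Illustrative inputs, e.g., agreement of 90% vs. 70%.
print(n_two_proportions(0.90, 0.70))
print(n_two_means(sigma=1.0, d=0.5))
print(n_correlation(0.3))
```

Using `NormalDist.inv_cdf` avoids rounding the Z values to 1.96 and 0.84, so results may differ by one sample from hand calculations.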

Protocol 2: Executing a Verification Study for Reportable Range

This protocol details the experimental workflow for verifying the reportable range of a semi-quantitative microbiology test, integrating the required sample sizes and replicates.

Objective: To verify that the test's reportable range (e.g., "Detected" or "Not detected" calls defined by a cycle threshold (Ct) cutoff) performs as claimed by the manufacturer within your laboratory environment [8].

Materials and Equipment:

- The new semi-quantitative test system (e.g., PCR instrument).

- Comparative method (reference standard).

- A minimum of 3 unique clinical or reference samples [8].

- Relevant culture media, diluents, and disposables.

Procedure:

- Verification Plan: Document a plan detailing the study design, number and type of samples, acceptance criteria, and timeline. This plan must be reviewed and signed by the laboratory director [8].

- Sample Selection and Preparation: Select samples that are known to be positive for the analyte. For a semi-quantitative assay, include samples with values near the upper and lower ends of the range defined by the manufacturer's established cutoff values [8].

- Testing: Test each selected sample using both the new method and the established comparative method.

- Data Analysis and Acceptance: Calculate the percentage agreement between the two methods. The reportable range is considered verified if the results from the new method fall within the established parameters (e.g., correct classification as "Detected" or "Not detected" based on the cutoff) [8].
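The classification and agreement steps can be sketched as follows. This assumes a Ct at or below the cutoff is reported as "Detected" and no amplification as "Not Detected"; whether the boundary is inclusive must be taken from the manufacturer's instructions. The cutoff of 35 and the three near-cutoff samples are hypothetical.

```python
from typing import Optional, Sequence


def classify(ct: Optional[float], cutoff: float) -> str:
    """Qualitative call from a Ct value; ct=None means no amplification."""
    # Assumption: Ct at or below the cutoff is reported as Detected.
    if ct is not None and ct <= cutoff:
        return "Detected"
    return "Not Detected"


def percent_agreement(new_calls: Sequence[str], reference_calls: Sequence[str]) -> float:
    """Percentage of samples where the new method matches the comparative method."""
    if len(new_calls) != len(reference_calls) or not reference_calls:
        raise ValueError("need two non-empty call lists of equal length")
    matches = sum(a == b for a, b in zip(new_calls, reference_calls))
    return 100.0 * matches / len(reference_calls)


CUTOFF = 35.0  # hypothetical manufacturer Ct cutoff
# Hypothetical near-cutoff samples: (new-method Ct, comparative-method call)
samples = [(33.8, "Detected"), (34.9, "Detected"), (36.2, "Not Detected")]
new_calls = [classify(ct, CUTOFF) for ct, _ in samples]
ref_calls = [ref for _, ref in samples]
print(new_calls, percent_agreement(new_calls, ref_calls))
```

Samples placed just either side of the cutoff are the most informative, since they are the ones most likely to be misclassified if the cutoff does not transfer to the local environment.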

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table catalogues key reagents and materials critical for successfully conducting verification studies in microbiology.

Table 3: Essential Research Reagent Solutions for Microbiological Verification

| Reagent/Material | Function/Application |
|---|---|
| Clinical Isolates & Reference Strains | Serve as positive and negative controls for accuracy, specificity, and reference range studies. They provide a known baseline for comparing test performance [8] [16]. |
| Selective & Non-Selective Culture Media | Used for the recovery and isolation of challenge microorganisms. Critical for assessing medium appropriateness and specificity [16] [30]. |
| Standardized Reference Materials | Includes controls, proficiency test samples, and certified biological standards. Used for accuracy and precision testing, providing a benchmark for measurement comparison [8]. |
| De-identified Clinical Samples | Authentic samples that represent the laboratory's patient population. Essential for verifying reference ranges and ensuring the test performs correctly with real-world sample matrices [8]. |
| Quality Control (QC) Organisms | A defined set of microorganisms used for ongoing precision and robustness testing, ensuring the test performs consistently over time and across operators [16]. |

Establishing a statistically sound sample size and an appropriate number of replicates is not a mere regulatory checkbox but a fundamental component of rigorous scientific practice in microbiology. By integrating the principles of statistical power with the practical requirements for verifying semi-quantitative tests, researchers can design studies that are efficient, ethical, and capable of producing reliable, defensible conclusions. The frameworks and protocols provided here offer an actionable pathway for scientists to ensure their work on reportable range verification meets the highest standards of quality and contributes meaningfully to diagnostic microbiology and drug development.

In clinical microbiology and pharmaceutical development, semi-quantitative tests provide critical results that use numerical values to determine an acceptable cutoff but ultimately report a qualitative result, such as "detected" or "not detected" [8]. A common example is the use of a cycle threshold (Ct) cutoff in real-time polymerase chain reaction (PCR) assays for pathogen detection [8]. Verification of the reportable range, which includes these cutoffs, is a mandatory requirement under the Clinical Laboratory Improvement Amendments (CLIA) for any unmodified FDA-approved test before it can be implemented for patient testing [8]. This process ensures that the test performs in line with the manufacturer's established performance characteristics within the specific user's environment. The core challenge lies in robustly calculating agreement between the new method and a comparative method and in empirically verifying the cutoff values that define the test's positive and negative categories. This application note provides detailed protocols and data analysis frameworks to address this challenge, ensuring the reliability of semi-quantitative tests in routine diagnostics and drug development.

Core Data Analysis Protocols

For semi-quantitative assays, the verification process focuses on confirming key performance characteristics through specific data analysis approaches. The essential calculations and their interpretations are detailed below.

Table 1: Essential Calculations for Verification of Semi-Quantitative Assays

| Parameter | Calculation Formula | Interpretation | Minimum Sample Guidance [8] |
|---|---|---|---|