A Comprehensive Method Verification Plan Template for Clinical Microbiology Laboratories

This article provides a structured framework for developing and executing a robust method verification plan in clinical microbiology laboratories.

Abstract

This article provides a structured framework for developing and executing a robust method verification plan in clinical microbiology laboratories. Tailored for researchers, scientists, and drug development professionals, it demystifies CLIA and ISO 15189 requirements, offering a step-by-step guide from foundational concepts and study design to troubleshooting and final validation. The content synthesizes current standards from CLSI, USP, and ICH to equip laboratories with a practical template for verifying unmodified FDA-cleared tests, ensuring reliability, compliance, and patient safety.

Laying the Groundwork: Understanding Verification Requirements and Regulatory Standards

In clinical microbiology laboratories, the processes of method verification and validation are fundamental to ensuring the accuracy, reliability, and regulatory compliance of diagnostic tests. While often used interchangeably, these terms represent distinct activities with different regulatory requirements and implementation scenarios. Understanding the distinction between verification (confirming that a test performs as claimed by the manufacturer) and validation (establishing that a lab-developed or modified test performs appropriately for its intended use) is critical for laboratory professionals navigating the complex landscapes of the Clinical Laboratory Improvement Amendments (CLIA) and the In Vitro Diagnostic Regulation (IVDR) in the European Union [1] [2].

The regulatory environment for in vitro diagnostics (IVDs) is evolving significantly. IVDR implementation continues through key transition periods, while CLIA has introduced updated personnel requirements and proficiency testing standards effective in 2025 [3] [4] [5]. Within this framework, clinical microbiology laboratories must establish robust protocols for verifying commercial tests and validating laboratory-developed tests (LDTs), particularly as laboratories increasingly implement molecular methods such as next-generation sequencing and complex multiplex PCR panels that may fall outside FDA-cleared indications [1] [2].

This application note provides detailed guidance for distinguishing between verification and validation requirements, designing appropriate experimental protocols, and implementing compliant processes within clinical microbiology laboratories operating under CLIA and IVDR frameworks.

Regulatory Background

CLIA Requirements

The Clinical Laboratory Improvement Amendments (CLIA) establishes quality standards for all laboratory testing in the United States. CLIA requires that laboratories perform method verification for any non-waived test system (moderate or high complexity) before reporting patient results [2] [6]. For unmodified FDA-cleared or approved tests, laboratories must verify that performance specifications for accuracy, precision, reportable range, and reference range are comparable to those established by the manufacturer and appropriate for the laboratory's patient population [2] [6]. For modified FDA-cleared tests or laboratory-developed tests (LDTs), CLIA requires a more extensive validation process to establish performance specifications [2].

Recent updates to CLIA regulations effective in 2025 have revised personnel qualifications and updated proficiency testing acceptance criteria [4] [5]. These changes include more specific educational requirements for laboratory directors and testing personnel, with updated definitions for "laboratory training or experience" requiring that experience be obtained in CLIA-compliant facilities [5].

IVDR Requirements

The In Vitro Diagnostic Regulation (IVDR, EU 2017/746) represents a significant regulatory shift in the European Union, with full implementation ongoing through 2025-2027 [3]. IVDR imposes stricter requirements for clinical evidence, performance evaluation, and post-market surveillance for all IVD devices [3].

Under IVDR, most laboratories performing in-house tests must comply with ISO 15189 requirements for verification and validation [1]. IVDR specifically mandates that laboratories validate their in-house tests according to established performance evaluation requirements, with documentation demonstrating the test's analytical and clinical performance [1] [3]. The regulation also introduces a risk-based classification system (Class A-D) that determines the level of regulatory control, with genetic tests like those used in clinical microbiology typically classified as Class C (high risk) [3].

Key Differences Between Verification and Validation

The fundamental distinction between verification and validation lies in their purpose and scope. Verification confirms that a commercially developed test performs according to the manufacturer's claims when implemented in a specific laboratory setting. In contrast, validation establishes performance characteristics for tests developed or significantly modified by the laboratory itself [1] [2].

Table 1: Comparison of Method Verification vs. Validation

| Feature | Verification | Validation |
|---|---|---|
| Definition | Confirming performance of commercial tests [1] | Establishing performance of lab-developed or modified tests [1] |
| When Required | Introducing unmodified FDA-cleared/CE-marked tests [1] [2] | Developing LDTs or modifying commercial tests [1] [2] |
| Regulatory Basis | CLIA for FDA-cleared tests; ISO 15189 for CE-IVD [1] | CLIA for LDTs; IVDR & ISO 15189 for in-house tests in EU [1] |
| Scope | Less extensive - confirms manufacturer claims [1] | More extensive - establishes full performance [1] |
| Examples | Implementing a commercial CE-marked PCR assay [1] | Developing an in-house NGS test for oncology [1] |

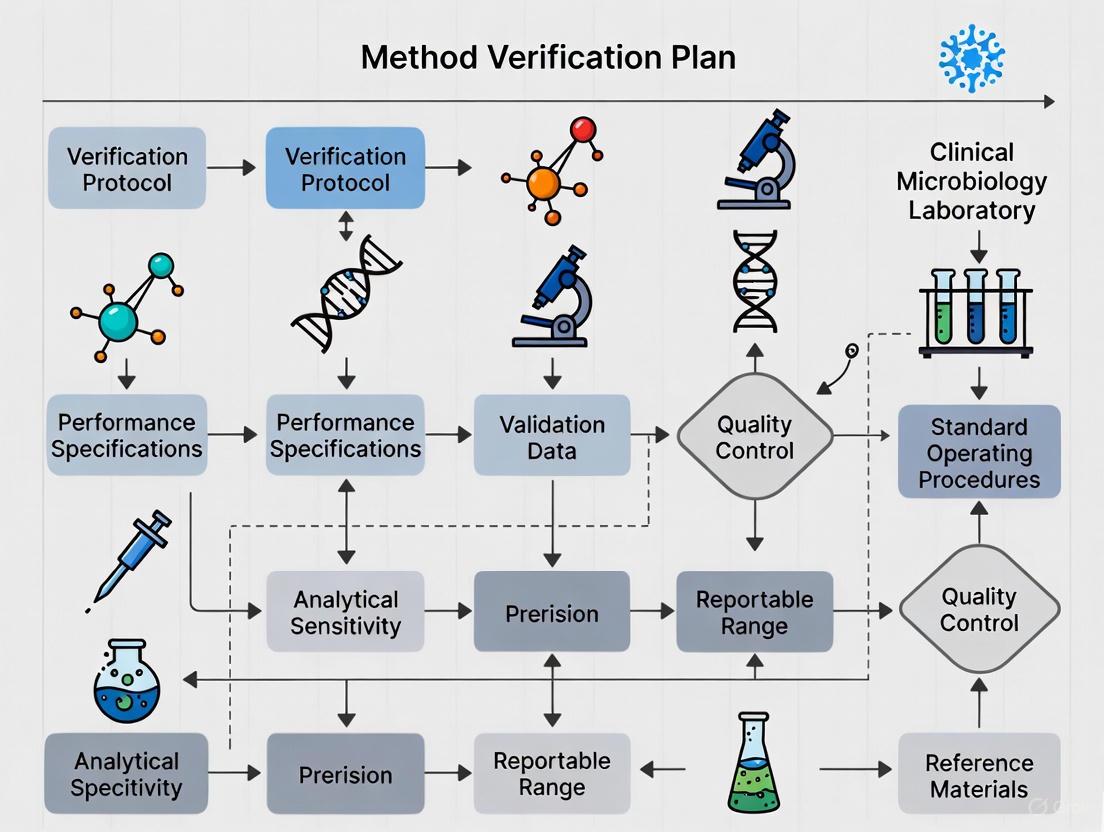

The decision pathway for determining whether verification or validation is required can be visualized through the following workflow:

Method Verification Protocols

Verification Study Design

For unmodified FDA-cleared or CE-marked tests, verification must confirm that the test performs according to manufacturer specifications in your laboratory environment. The verification study should evaluate accuracy, precision, reportable range, and reference range appropriate for your patient population [2] [6].

For qualitative tests (e.g., pathogen detection), focus on verifying analytical sensitivity and specificity. For quantitative tests (e.g., microbial load determination), verify precision, accuracy, and reportable range. For semi-quantitative tests (e.g., antimicrobial susceptibility testing with breakpoints), verify both quantitative cutoffs and qualitative categorization [2].

Sample Planning and Acceptance Criteria

Adequate sample planning is essential for meaningful verification results. The following table summarizes recommended sample sizes and types for verifying qualitative microbiological assays:

Table 2: Sample Planning Guide for Verification of Qualitative Microbiological Assays

| Performance Characteristic | Minimum Sample Recommendation | Sample Types | Acceptance Criteria |
|---|---|---|---|
| Accuracy | 20 clinically relevant isolates [2] | Combination of positive and negative samples; can include standards, controls, reference materials, proficiency test samples, or de-identified clinical samples [2] | Meet manufacturer's stated claims or laboratory director-defined criteria [2] |
| Precision | 2 positive and 2 negative samples tested in triplicate for 5 days by 2 operators [2] | Controls or de-identified clinical samples; for semi-quantitative assays, include samples with high to low values [2] | Meet manufacturer's stated claims or laboratory director-defined criteria [2] |
| Reportable Range | 3 samples [6] | Known positive samples for detected analyte; for semi-quantitative assays, samples near upper and lower cutoff values [2] | Laboratory-established reportable result (e.g., Detected/Not detected) verified across range [2] |
| Reference Range | 20 isolates [2] | De-identified clinical samples or reference samples representing laboratory's patient population [2] | Representative of laboratory's patient population; may need redefinition if manufacturer range isn't appropriate [2] |

Experimental Methodologies

Accuracy Assessment

Accuracy verification confirms acceptable agreement between the new method and a comparative method [2]. For a qualitative PCR assay for pathogen detection:

- Select a minimum of 20 clinically relevant bacterial isolates representing both positive and negative targets [2]

- Test samples in parallel with the new method and a previously validated method

- Calculate percent agreement: (Number of results in agreement / Total number of results) × 100 [2]

- Compare observed agreement to manufacturer claims or establish laboratory-specific acceptance criteria
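The percent-agreement formula above can be sketched in Python as follows. This is a minimal illustration, assuming each sample yields one categorical result (for example "Detected" or "Not detected") from each method; the function name `percent_agreement` and the example data are hypothetical.

```python
def percent_agreement(new_results, comparator_results):
    """Percent agreement = (results in agreement / total results) x 100."""
    if len(new_results) != len(comparator_results):
        raise ValueError("each sample needs a result from both methods")
    if not new_results:
        raise ValueError("no results supplied")
    agreeing = sum(1 for new, ref in zip(new_results, comparator_results) if new == ref)
    return 100.0 * agreeing / len(new_results)


# Hypothetical data: 20 isolates, one discordant result (sample 10)
comparator = ["Detected"] * 10 + ["Not detected"] * 10
new_method = ["Detected"] * 9 + ["Not detected"] * 11
print(f"Percent agreement: {percent_agreement(new_method, comparator):.1f}%")  # 95.0%
```

The observed value is then compared with the manufacturer's claim or the laboratory director's criterion; the sketch deliberately leaves that threshold to the laboratory.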

Precision Evaluation

Precision verification confirms acceptable within-run, between-run, and operator variance [2]. For a microbial identification system:

- Select 2 positive and 2 negative control materials (can include clinical isolates)

- Test each sample in triplicate over 5 separate days

- Utilize 2 different operators if the process involves manual steps

- Calculate within-run, between-run, and total precision

- Express precision as percent agreement for qualitative tests or coefficient of variation for quantitative measurements [2]
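The two precision summaries named in the last step might be computed as below. This sketch assumes results are already collected per sample, and it pools all runs and operators into one overall figure; it does not partition within-run and between-run components, which would require a variance-components (nested ANOVA) analysis not shown here. All function names and data are hypothetical.

```python
import statistics


def qualitative_precision(calls_by_sample, expected_by_sample):
    """Percent of all replicate calls that match each sample's expected result."""
    total = concordant = 0
    for sample_id, calls in calls_by_sample.items():
        for call in calls:
            total += 1
            if call == expected_by_sample[sample_id]:
                concordant += 1
    return 100.0 * concordant / total


def coefficient_of_variation(values):
    """CV (%) = sample standard deviation / mean x 100, for quantitative readouts."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)


# Hypothetical qualitative study: 4 samples x 30 calls (3 replicates x 5 days x 2 operators)
expected = {"POS1": "Detected", "POS2": "Detected", "NEG1": "Not detected", "NEG2": "Not detected"}
calls = {sample: [result] * 30 for sample, result in expected.items()}
calls["POS2"][7] = "Not detected"  # one discordant replicate
print(f"Qualitative precision: {qualitative_precision(calls, expected):.1f}%")

# Hypothetical quantitative replicates (e.g. Ct values for one control level)
print(f"CV: {coefficient_of_variation([25.1, 25.3, 24.9, 25.2, 25.0]):.2f}%")
```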

Method Validation Protocols

Validation Study Design

Validation is required for laboratory-developed tests (LDTs) or significantly modified commercial tests [1] [2]. The validation process is more extensive than verification and must establish all performance characteristics de novo. A comprehensive validation study for a microbiology LDT should include assessment of analytical sensitivity (detection limit), analytical specificity (including interfering substances), precision, reportable range, reference range, and accuracy [6].

For molecular LDTs such as laboratory-developed PCR assays, also include evaluation of amplification efficiency, linear dynamic range for quantitative assays, and robustness to minor variations in testing conditions [2].
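Amplification efficiency is conventionally derived from the slope of a standard curve (Ct plotted against log10 input) as E = 10^(-1/slope) - 1, so a slope near -3.32 corresponds to roughly 100% efficiency. The sketch below assumes a 10-fold dilution series with known log10 copy numbers; the function names and example values are hypothetical.

```python
import math


def least_squares(x, y):
    """Ordinary least-squares slope and intercept."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def amplification_efficiency(log10_input, ct_values):
    """Percent efficiency from the standard-curve slope: E = 10^(-1/slope) - 1."""
    slope, _ = least_squares(log10_input, ct_values)
    return 100.0 * (10 ** (-1.0 / slope) - 1.0)


# Hypothetical dilution series (log10 copies per reaction) and mean Ct values
log10_copies = [6, 5, 4, 3, 2]
mean_ct = [17.9, 21.2, 24.6, 27.9, 31.2]
print(f"Efficiency: {amplification_efficiency(log10_copies, mean_ct):.1f}%")
```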

Sample Planning and Acceptance Criteria

Validation requires more extensive sample testing to establish performance characteristics across clinically relevant ranges. The following table outlines minimum sample recommendations for validation studies:

Table 3: Sample Planning Guide for Validation of Laboratory-Developed Tests

| Performance Characteristic | Minimum Sample Recommendation | Experimental Design | Acceptance Criteria |
|---|---|---|---|
| Reportable Range/Linearity | 5 specimens with known values tested in triplicate [6] | Samples spanning claimed reportable range including low, medium, and high concentrations | Establish linear range with coefficient of determination (R²) >0.98 |
| Precision | 20 replicate determinations on at least two levels of control materials [6] | Within-run, between-run, and between-operator comparisons for qualitative tests; CV determination for quantitative tests | Total error < allowable total error based on clinical requirements |
| Accuracy/Method Comparison | 40 patient specimens analyzed by both new and comparison method [6] | Method comparison using clinical samples analyzed by both LDT and reference method | Deming regression showing no significant bias compared to reference method |
| Analytical Sensitivity | Blank and spiked specimen each analyzed 20 times [6] | Limit of detection (LOD) determination using diluted positive samples | 95% detection rate at claimed LOD |
| Analytical Specificity | Testing against cross-reactive organisms and potentially interfering substances [6] | Evaluation of interference from hemolysis, lipemia, common medications, and cross-reactivity with related organisms | No significant interference at clinically relevant concentrations |
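For the linearity and method-comparison rows, the R² statistic and a Deming regression fit could be computed as below. This is a minimal sketch: `delta` is the assumed ratio of the two methods' error variances (1.0 treats them as equal), and deciding whether bias is "significant" would additionally require confidence intervals for the slope and intercept (for example by jackknife), which are not shown. The method-comparison example uses only 6 hypothetical specimens for brevity, not the 40 recommended above; all names and data are hypothetical.

```python
import math


def _centered_sums(x, y):
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    return mean_x, mean_y, sxx, syy, sxy


def r_squared(x, y):
    """Coefficient of determination for a straight-line fit of y on x."""
    _, _, sxx, syy, sxy = _centered_sums(x, y)
    return sxy ** 2 / (sxx * syy)


def deming(x, y, delta=1.0):
    """Deming regression slope and intercept.

    delta = error variance of the y method / error variance of the x method.
    """
    mean_x, mean_y, sxx, syy, sxy = _centered_sums(x, y)
    d = syy - delta * sxx
    slope = (d + math.sqrt(d ** 2 + 4 * delta * sxy ** 2)) / (2 * sxy)
    return slope, mean_y - slope * mean_x


# Hypothetical linearity data: assigned values vs mean of triplicate measurements
assigned = [1.0, 2.0, 3.0, 4.0, 5.0]
measured = [1.02, 1.98, 3.05, 3.97, 5.01]
print(f"R^2 = {r_squared(assigned, measured):.4f}")

# Hypothetical method comparison: reference method (x) vs LDT (y)
reference = [2.1, 3.4, 4.0, 5.2, 6.8, 7.5]
ldt = [2.0, 3.6, 4.1, 5.0, 7.0, 7.4]
slope, intercept = deming(reference, ldt)
print(f"Deming slope = {slope:.3f}, intercept = {intercept:.3f}")
```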

Experimental Methodologies

Detection Limit (Analytical Sensitivity) Experiment

For establishing the detection limit of a qualitative LDT for pathogen detection:

- Prepare a series of dilutions from a known positive sample with high concentration

- Analyze each dilution in replicates of 20 [6]

- Identify the lowest concentration where ≥19/20 (95%) replicates test positive

- Confirm this detection limit with at least 3 independent sample preparations

- Document the claimed detection limit with supporting data
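The dilution-series logic above can be sketched as follows, assuming the positive counts from the 20-replicate runs are already tabulated per concentration. One design choice here is an assumption rather than part of the cited protocol: the function walks down from the highest concentration and stops at the first level that misses 19/20, so an isolated passing level below a failing one is not reported as the limit. The result remains a candidate until it is confirmed with independent preparations. Names and data are hypothetical.

```python
def detection_limit(positives_by_concentration, required_positives=19):
    """Lowest concentration at which it, and every higher concentration tested,
    has at least `required_positives` positive replicates (19/20 = 95%)."""
    lod = None
    for concentration in sorted(positives_by_concentration, reverse=True):
        if positives_by_concentration[concentration] >= required_positives:
            lod = concentration
        else:
            break  # stop at the first failing level rather than skipping over it
    return lod


# Hypothetical dilution series: concentration (copies/mL) -> positives out of 20 replicates
series = {1000: 20, 500: 20, 250: 19, 125: 16, 62.5: 9}
print(f"Candidate detection limit: {detection_limit(series)} copies/mL")
```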

Interference Testing (Analytical Specificity)

To evaluate potential interfering substances for a microbiology assay:

- Select potentially interfering substances relevant to the test (e.g., hemoglobin for blood culture, mucus for respiratory tests, antimicrobial agents)

- Prepare test samples with and without interferent at clinically relevant concentrations

- Include samples with structurally or genetically related microorganisms that might cross-react

- Analyze paired samples (with/without interferent) in triplicate

- Consider the test acceptable if results for samples with and without interferent show no significant differences [6]
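One way to tabulate the paired comparison is sketched below. It assumes the assay also reports a quantitative signal such as a Ct value and that the laboratory has defined an allowable mean shift; the 1.0-cycle limit in the example is purely hypothetical, not a recommended criterion, and a formal statistical test of the difference is not shown. Function names and data are hypothetical.

```python
from statistics import mean


def calls_unchanged(calls_without, calls_with):
    """True if every paired qualitative call is the same with and without interferent."""
    if len(calls_without) != len(calls_with):
        raise ValueError("paired replicates must have equal length")
    return all(a == b for a, b in zip(calls_without, calls_with))


def mean_shift(signal_without, signal_with, allowable_shift):
    """Mean signal difference (with - without) and whether it is within the allowable shift."""
    shift = mean(signal_with) - mean(signal_without)
    return shift, abs(shift) <= allowable_shift


# Hypothetical triplicate Ct values for one target, without and with hemoglobin added
ct_without = [28.1, 28.3, 28.2]
ct_with = [28.6, 28.4, 28.5]
shift, acceptable = mean_shift(ct_without, ct_with, allowable_shift=1.0)  # hypothetical limit
print(f"Mean Ct shift: {shift:+.2f}, within limit: {acceptable}")
print(calls_unchanged(["Detected"] * 3, ["Detected"] * 3))
```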

The Scientist's Toolkit: Essential Research Reagents

Successful verification and validation studies require carefully selected reagents and materials. The following table outlines essential research reagent solutions for microbiology method evaluation:

Table 4: Essential Research Reagent Solutions for Method Verification and Validation

| Reagent/Material | Function in Verification/Validation | Application Examples |
|---|---|---|
| Certified Reference Materials | Provides standardized samples with known characteristics for accuracy assessment | Quantification of microbial loads; quality control for molecular assays |
| Clinical Isolates | Represents real-world samples for performance evaluation; essential for inclusivity testing | Panel for analytical sensitivity/specificity; accuracy studies with diverse strains |
| Molecular Grade Water | Serves as negative control in molecular assays; diluent for sample preparation | PCR negative controls; preparation of sample dilutions |
| Interferent Stocks | Evaluates assay robustness against common interfering substances | Hemoglobin, lipids, mucus testing for analytical specificity |
| Nucleic Acid Extraction Kits | Standardizes sample preparation component of molecular tests | Evaluation of extraction efficiency in LDT validations |
| Proficiency Testing Materials | Provides externally validated samples for accuracy assessment | Inter-laboratory comparison; twice-yearly CLIA requirement [4] |
| Quality Control Panels | Monitors ongoing assay performance post-implementation | Daily QC monitoring; trend analysis for precision |

Navigating Current Regulatory Challenges

IVDR Implementation in 2025

With key IVDR transition periods ending in 2025, laboratories must focus on several challenging areas. Performance evaluation requirements for in-house tests under IVDR Annex I require comprehensive clinical evidence, potentially including data from clinical performance studies [3]. Risk classification challenges are particularly relevant for microbiology tests, with genetic tests typically classified as Class C under IVDR Rule 3 [3]. For legacy devices, transition periods extend through 2027-2028, but laboratories must maintain technical documentation that remains audit-ready [3].

CLIA Updates for 2025

Recent CLIA updates include revised personnel requirements with more specific educational pathways and updated definitions for laboratory training and experience [5]. Proficiency testing acceptance criteria have been updated for multiple analytes, with changes implemented January 1, 2025 [4]. Laboratories must ensure their verification and validation protocols align with these updated standards.

Special Considerations for Microbiology

Microbiology presents unique verification and validation challenges compared to other laboratory disciplines. Verification of antimicrobial susceptibility testing methods requires careful consideration of organism selection, interpretation against FDA versus CLSI breakpoints, and correlation with clinical outcomes [2]. For molecular microbiology assays, verification and validation must address extraction efficiency, amplification inhibitors, and strain genetic diversity that might affect performance [2].

Method verification and validation represent distinct but complementary processes in the clinical microbiology laboratory. Verification confirms that commercial tests perform as claimed by the manufacturer in your specific laboratory environment, while validation establishes performance characteristics for laboratory-developed or significantly modified tests. With evolving regulatory landscapes including IVDR implementation and CLIA updates, laboratories must maintain robust, well-documented processes for both activities. By implementing the protocols and strategies outlined in this application note, clinical microbiology laboratories can ensure regulatory compliance while providing accurate, reliable test results essential for patient care.

In clinical microbiology, introducing new instruments or assays, or implementing major changes to existing procedures, requires a rigorous assessment to ensure reliable patient results. This process is governed by a critical distinction between method verification and method validation [2]. Understanding this distinction is fundamental to regulatory compliance and quality patient care.

Method verification is a one-time study confirming that a test performs according to the manufacturer's established performance characteristics when used as intended in your laboratory. It applies to unmodified FDA-cleared or approved tests [2]. In contrast, method validation is a more extensive process to establish that an assay works as intended for non-FDA cleared tests, such as laboratory-developed tests (LDTs), or when modifications are made to an FDA-approved test [2]. Such modifications can include using different specimen types, sample dilutions, or altering test parameters like incubation times, all of which could affect assay performance.

Scenarios Requiring Verification or Validation

Navigating the requirements for new tests and changes can be complex. The table below outlines common laboratory scenarios and the required level of assessment.

Table 1: Guidance on When Verification or Validation is Required

| Laboratory Scenario | Type of Assessment Required | Key Rationale |
|---|---|---|
| Implementing a new, unmodified FDA-cleared test | Verification [2] | Confirms the test performs as stated by the manufacturer in your laboratory environment. |
| Implementing a laboratory-developed test (LDT) or a modified FDA-cleared test | Validation [2] | Establishes performance characteristics for a test without existing manufacturer claims for your specific use. |
| Major change in procedure or instrument relocation | Verification [2] | Ensures the change or new location has not adversely affected the test's performance. |
| Updating antimicrobial susceptibility testing (AST) breakpoints on an FDA-cleared device | Validation (treated as an LDT) [7] | Modifying an FDA-cleared device to use current CLSI breakpoints is considered to create a laboratory-developed test. |
| Implementing sterility testing under current Good Manufacturing Practices (cGMP) | Equipment qualification (IQ/OQ/PQ) [8] | cGMP standards require Installation, Operational, and Performance Qualification for equipment used in manufacturing. |

The regulatory landscape is dynamic. A significant recent development is the FDA's final rule on Laboratory Developed Tests (LDTs), which began phasing in during 2024 [7]. This rule subjects LDTs to greater FDA oversight. Consequently, modifying an FDA-cleared AST device to interpret results with current CLSI breakpoints (if the device was cleared with older, obsolete breakpoints) is now explicitly classified as creating an LDT, thus requiring a full validation by the laboratory [7].
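For the laboratory-test rows of Table 1, the decision reduces to a simple rule, sketched below. This is a simplification under stated assumptions: it ignores the cGMP equipment-qualification row and jurisdiction-specific nuances such as IVDR in-house requirements, and the function and scenario names are hypothetical. Because regulatory status can change, the input flags should reflect the laboratory's current determination for each test.

```python
def required_assessment(fda_cleared: bool, modified: bool) -> str:
    """Simplified rule from Table 1: validation for LDTs and for any modification
    of an FDA-cleared test; verification for unmodified FDA-cleared tests,
    including relocations or major procedural changes that leave the test unmodified."""
    if not fda_cleared or modified:
        return "Validation"
    return "Verification"


# Hypothetical scenarios: (FDA-cleared?, modified?)
scenarios = {
    "New unmodified FDA-cleared PCR panel": (True, False),
    "Instrument relocation, test unmodified": (True, False),
    "Laboratory-developed test": (False, False),
    "Current CLSI breakpoints applied to FDA-cleared AST device": (True, True),
}
for name, (cleared, modified) in scenarios.items():
    print(f"{name}: {required_assessment(cleared, modified)}")
```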

The following workflow diagram provides a decision pathway to help determine whether a verification or validation is needed for a new test or procedure.

Core Performance Characteristics for Verification

For unmodified FDA-cleared tests, verification studies must confirm several core performance characteristics as required by the Clinical Laboratory Improvement Amendments (CLIA) [2]. The specific experiments and acceptance criteria should align with the test's intended use and the laboratory's patient population.

Table 2: Core Performance Characteristics and Verification Protocols for Qualitative and Semi-Quantitative Assays

| Performance Characteristic | Objective | Minimum Sample Recommendation | Acceptance Criteria |
|---|---|---|---|
| Accuracy [2] | Confirm agreement between the new method and a comparative method. | 20 clinically relevant isolates (combination of positive and negative for qualitative; high to low values for semi-quantitative). | Meets manufacturer's stated claims or laboratory director-defined criteria. |
| Precision [2] | Confirm acceptable within-run, between-run, and operator variance. | 2 positive and 2 negative samples, tested in triplicate for 5 days by 2 operators. | Meets manufacturer's stated claims or laboratory director-defined criteria. |
| Reportable Range [2] | Confirm the acceptable upper and lower limits of the test system. | 3 known positive samples (for qualitative) or samples near the upper/lower cutoff (for semi-quantitative). | The laboratory-defined reportable result (e.g., "Detected", "Not detected", Ct value cutoff) is verified. |
| Reference Range [2] | Confirm the normal result for the tested patient population. | 20 isolates using de-identified clinical samples or reference samples. | The manufacturer's reference range is verified as representative. If not, the lab must redefine it. |

Experimental Protocol for Accuracy and Precision

The following protocol provides a step-by-step guide for conducting accuracy and precision studies, which are foundational to any verification plan.

Accuracy Verification Protocol

- Sample Selection: Obtain a minimum of 20 clinically relevant isolates. For qualitative assays, use a combination of positive and negative samples. For semi-quantitative assays, use a range of samples with high to low values [2].

- Source Material: Acceptable specimens can include standardized controls, reference materials, proficiency test samples, or de-identified clinical samples previously tested with a validated method [2].

- Testing Procedure: Test all samples in parallel using the new method (test method) and the established comparative method (reference method).

- Data Analysis: Calculate the percentage of agreement: (Number of results in agreement / Total number of results) × 100 [2].

- Acceptance Criteria: The calculated percentage of agreement must meet the performance claims stated by the manufacturer or criteria defined by the laboratory director.
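Because 20 samples is a small study, it can help to report a confidence interval alongside the percent agreement. The sketch below uses the Wilson score interval; this is a supplementary analysis, not a requirement stated in the protocol above, and the function name is hypothetical. With 19 of 20 results in agreement, the 95% interval spans roughly 76% to 99%, which shows how much uncertainty remains after a 20-sample study.

```python
import math


def wilson_interval(agreeing, total, z=1.96):
    """Wilson score confidence interval for a proportion (z = 1.96 gives ~95%)."""
    p = agreeing / total
    denom = 1 + z ** 2 / total
    center = (p + z ** 2 / (2 * total)) / denom
    half_width = (z / denom) * math.sqrt(p * (1 - p) / total + z ** 2 / (4 * total ** 2))
    return center - half_width, center + half_width


low, high = wilson_interval(19, 20)
print(f"19/20 = 95.0% agreement, 95% CI {100 * low:.1f}% to {100 * high:.1f}%")
```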

Precision Verification Protocol

- Sample Selection: Select a minimum of 2 positive and 2 negative samples. For semi-quantitative assays, use samples with high and low values [2].

- Testing Procedure: Test each sample in triplicate, over the course of 5 days, using two different operators. If the system is fully automated, operator variance may not be required [2].

- Data Analysis: Calculate the percentage of agreement for the repeated measurements across all runs and operators: (Number of concordant results / Total number of results) × 100.

- Acceptance Criteria: The precision percentage must meet the manufacturer's stated claims or laboratory director-defined criteria.
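The precision design can be laid out as a run schedule before testing begins. The sketch below assumes each operator tests every sample in triplicate on each of the 5 days, giving 4 × 3 × 5 × 2 = 120 results; the protocol wording could also be read with operators sharing the days, so the factors should be adjusted to the laboratory's interpretation. Passing a single "operator" for a fully automated system reflects the note that operator variance may not be required. Function and sample names are hypothetical.

```python
from itertools import product


def precision_schedule(samples, days=5, operators=("Operator A", "Operator B"), replicates=3):
    """One row per planned test: day, operator, sample, replicate number."""
    return [
        {"day": day, "operator": operator, "sample": sample, "replicate": rep}
        for day, operator, sample, rep in product(
            range(1, days + 1), operators, samples, range(1, replicates + 1)
        )
    ]


samples = ["POS1", "POS2", "NEG1", "NEG2"]
manual = precision_schedule(samples)
automated = precision_schedule(samples, operators=("Analyzer",))
print(len(manual), len(automated))  # 120 60
print(manual[0])
```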

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful verification and validation studies rely on well-characterized materials. The following table details essential reagents and resources for executing these studies.

Table 3: Key Research Reagent Solutions for Verification & Validation

| Reagent / Resource | Function in Verification/Validation | Application Example |
|---|---|---|
| Reference Materials & Controls [2] | Serve as objective benchmarks for assessing accuracy and precision. | Using standardized controls from ATCC or other recognized sources to verify a new microbial identification system. |
| Proficiency Test (PT) Samples [2] | Provide an external performance check with pre-characterized samples. | Using archived PT samples with known results to challenge the reportable range of a new qualitative PCR assay. |
| De-identified Clinical Samples [2] | Represent the real-world patient population for verifying reference ranges and accuracy. | Using stored, characterized patient isolates to validate updated breakpoints on an AST system. |
| CLSI Standards (e.g., M07, M100) [7] [2] | Provide reference methods and interpretive criteria (breakpoints) for AST. | Using CLSI M07 broth microdilution as the reference method when validating a new automated AST system. |
| Verification Plan Template [9] | Provides a structured document outlining study design, samples, and acceptance criteria. | Customizing a quantitative validation plan template to detail the verification protocol for a new quantitative HBV nucleic acid test [10]. |

Navigating Regulatory and Standards Frameworks

Adherence to evolving regulatory requirements and international standards is paramount. Key frameworks impacting clinical microbiology include:

- CLIA Regulations: Mandate verification for all non-waived, unmodified FDA-cleared tests before patient results can be reported [2].

- FDA Recognition of CLSI Breakpoints: In a significant 2025 update, the FDA recognized many CLSI breakpoints (from M100, M45, M24S, etc.), simplifying the regulatory path for using current breakpoints with AST devices [7].

- ISO Standards: The ISO 16140 series provides a structured protocol for method validation and verification in the food and feed chain, with part 3 specifically dedicated to verification in a single laboratory [11]. While focused on the food chain, its principles are informative for clinical labs.

- cGMP for Equipment: When performing testing under current Good Manufacturing Practices (e.g., for product sterility testing), equipment must undergo a formal validation process known as Installation, Operational, and Performance Qualification [8].

Clinical microbiology laboratories operate within a stringent regulatory ecosystem to ensure the quality, accuracy, and reliability of diagnostic testing. Three cornerstone organizations establish the critical standards governing this field: the Clinical and Laboratory Standards Institute (CLSI), the International Organization for Standardization through its ISO 15189 standard, and the United States Pharmacopeia (USP). These frameworks collectively address method validation, quality management systems, and microbiological control, forming the foundation for laboratory compliance and patient safety. Adherence to these guidelines is not merely a regulatory exercise but a fundamental component of diagnostic excellence, impacting every phase from test selection and verification to routine patient reporting and quality control [12] [13].

For researchers and drug development professionals, understanding the interplay between these standards is crucial for designing robust verification plans, developing new diagnostic products, and ensuring that laboratory data meets stringent regulatory scrutiny. This article delineates the roles of these key organizations and provides actionable protocols for implementing their requirements within the context of a clinical microbiology laboratory.

Core Regulatory Frameworks and Their Synergy

The following table summarizes the primary focus and key documents for each major standards organization relevant to clinical microbiology.

Table 1: Key Standards Organizations and Their Primary Guidance

| Organization | Primary Focus | Key Documents/Guidelines |
|---|---|---|
| CLSI | Method evaluation, verification, and antimicrobial susceptibility testing standards [14] [15]. | EP07 (Interference Testing), EP12 (Qualitative Performance), M52 (Verification of ID/AST Systems), M100 (AST Breakpoints) [12] [2] [16]. |
| ISO | Quality Management System (QMS) and technical competence for medical laboratories [13]. | ISO 15189:2022 (Medical laboratories—Requirements for quality and competence) [13]. |
| USP | Microbiological quality control for pharmaceuticals, compounding, and dietary supplements [17]. | <61> Microbial Enumeration, <62> Specified Microorganisms, <71> Sterility Tests, <1112> Microbial Contamination Control [17]. |

These frameworks are highly complementary. CLSI provides the detailed technical protocols for test verification and performance, while ISO 15189 establishes the overarching quality management system in which these tests are performed. USP standards, though more directly applicable to pharmaceutical manufacturing and compounding, provide critical guidance on microbiological control that supports laboratory reagent quality and sterility assurance. Laboratories aiming for the highest level of recognition often seek accreditation to ISO 15189, which can be combined with CLIA requirements in comprehensive programs like A2LA's "Platinum Choice Accreditation Program" [13].

Experimental Protocols for Method Verification

Method verification is a mandatory process under regulations like the Clinical Laboratory Improvement Amendments (CLIA) for any non-waived test system before patient results are reported [2]. The process confirms that a test's performance characteristics, as established by the manufacturer, are accurately reproduced in the user's laboratory environment.

Distinction Between Verification and Validation

A critical first step is determining whether a verification or a validation is required:

- Verification: A one-time study for unmodified, FDA-cleared or approved tests. It demonstrates that the test performs in line with the manufacturer's established performance characteristics when used as intended [2].

- Validation: A more extensive process meant to establish that an assay works as intended. This is required for laboratory-developed tests (LDTs) and modified FDA-approved tests [2].

The following workflow outlines the key decision points and stages for planning and executing a method verification study in clinical microbiology.

Verification of Qualitative and Semi-Quantitative Assays

Most tests in clinical microbiology are qualitative or semi-quantitative. The table below outlines the minimum verification criteria as required by CLIA and detailed in CLSI guidelines.

Table 2: Method Verification Criteria for Qualitative/Semi-Quantitative Assays [2]

| Performance Characteristic | Minimum Sample Recommendation | Acceptable Specimen Types | Data Analysis |
|---|---|---|---|
| Accuracy | 20 clinically relevant isolates (positive and negative) [2]. | Standards/controls, reference materials, proficiency test samples, de-identified clinical samples [2]. | (Number of results in agreement / Total results) × 100 [2]. |
| Precision | 2 positive and 2 negative samples, tested in triplicate for 5 days by 2 operators [2]. | Controls or de-identified clinical samples [2]. | (Number of results in agreement / Total results) × 100 [2]. |
| Reportable Range | 3 known positive samples [2]. | Samples with analytes detected; for semi-quantitative, use samples near cutoff values [2]. | Verify that results fall within the laboratory's established reportable range (e.g., "Detected," "Not detected") [2]. |
| Reference Range | 20 isolates [2]. | De-identified clinical samples or reference samples representing the lab's patient population [2]. | Confirm the manufacturer's reference range is appropriate for the laboratory's patient population [2]. |

Specific Protocol for Microbial Identification and AST Systems

For instrument-based microbial identification and antimicrobial susceptibility testing systems, CLSI M52 provides essential recommendations.

- Scope: This guideline covers the verification of FDA-cleared microbial identification systems and antimicrobial susceptibility testing (AST) systems, and can also be applied to manual methods such as disk diffusion [16].

- Key Focus Areas:

  - Accuracy of Identification: Testing a range of organisms that represents the system's claimed database.

  - AST Categorization Agreement: Ensuring the system correctly categorizes isolates as Susceptible, Intermediate, or Resistant compared with a reference method.

  - Implementation of Alternative Breakpoints: Appendix B of M52 addresses studies for implementing updated CLSI breakpoints that may differ from those approved by the FDA for the commercial system [16].
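A minimal sketch of how categorical agreement might be tallied for an AST verification, assuming each isolate yields exactly one S/I/R category from the reference method and one from the new system. The discrepancy labels (very major, major, minor) are the error terms commonly used in CLSI AST evaluations; this sketch only counts them, and the applicable error-rate calculations and acceptance limits should be taken from CLSI M52 itself. The function names are hypothetical.

```python
from collections import Counter

# Interpretive categories handled by this sketch (SDD and other categories omitted).
CATEGORIES = {"S", "I", "R"}


def classify_pair(reference: str, new: str) -> str:
    """Label one isolate's (reference, new system) result pair."""
    if reference not in CATEGORIES or new not in CATEGORIES:
        raise ValueError(f"unexpected categories: {reference!r}, {new!r}")
    if reference == new:
        return "agree"
    if reference == "R" and new == "S":
        return "very major"  # new system falsely reports susceptible
    if reference == "S" and new == "R":
        return "major"       # new system falsely reports resistant
    return "minor"           # one of the two results is Intermediate


def categorical_agreement(pairs):
    """Return (percent categorical agreement, tally of pair labels)."""
    tally = Counter(classify_pair(ref, new) for ref, new in pairs)
    total = sum(tally.values())
    if total == 0:
        raise ValueError("no isolates supplied")
    return 100.0 * tally["agree"] / total, tally


# Four hypothetical isolates: two agree, one minor and one very major discrepancy.
percent, tally = categorical_agreement([("S", "S"), ("R", "R"), ("S", "I"), ("R", "S")])
print(percent, dict(tally))
```

Keeping the per-isolate labels, rather than only the overall percentage, makes it easier to review each discordant isolate individually before sign-off.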

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful method verification and quality control rely on high-quality, standardized reagents and materials. The following table details essential items and their functions in the verification process.

Table 3: Essential Reagents and Materials for Verification Studies

| Item | Function/Application | Relevant Standards |
|---|---|---|
| Reference Microorganisms | Serve as standardized controls for accuracy and precision studies of identification and AST systems [17]. | USP, CLSI M52 [17] [16]. |
| Endotoxin Reference Standard | Used for validation of the Bacterial Endotoxins Test to ensure parenteral products meet endotoxin limits [17]. | USP <85> [17]. |
| Competency Testing Kits | Used for verifying the competency of personnel and processes in surface sampling and other monitoring activities [17]. | USP <1116> [17]. |
| Clinical Isolates & De-identified Samples | Used as patient-like samples for verifying accuracy, reportable range, and reference range [2]. | CLSI M52, EP12 [2] [16]. |
| Quality Control Strains | Used for daily or routine QC monitoring of instruments and media to ensure ongoing performance [15]. | CLSI AST Standards [15]. |

The integrated application of CLSI, ISO 15189, and USP standards provides a robust framework for ensuring the quality and reliability of work in clinical microbiology laboratories. CLSI's method evaluation protocols offer the technical "how-to" for verifying test performance. ISO 15189 establishes the overarching quality management system that ensures sustained competency and continuous improvement. USP standards underpin the quality and sterility of critical reagents and products used in testing and pharmaceutical preparation.

For researchers and scientists, a deep understanding of these frameworks is indispensable. It allows for the development of a comprehensive method verification plan template that is not only compliant with regulatory and accreditation requirements but also scientifically sound, thereby safeguarding patient care and supporting the advancement of diagnostic technologies.

In clinical microbiology laboratories, accurately determining the nature of an assay—whether qualitative, quantitative, or semi-quantitative—is a critical first step before method verification or validation. This classification directly influences the study design, performance characteristics evaluated, and statistical analyses employed. Method verification studies are required by the Clinical Laboratory Improvement Amendments (CLIA) for all non-waived systems before patient results can be reported, making proper assay classification essential for regulatory compliance [2]. Understanding these categories ensures that laboratory professionals select appropriate verification protocols that accurately demonstrate a test's performance characteristics within their specific operational environment.

The terms validation and verification, while sometimes used interchangeably, represent distinct processes. A validation establishes that an assay works as intended for laboratory-developed methods or modified FDA-approved tests. In contrast, a verification is a one-time study for unmodified FDA-approved or cleared tests, demonstrating that the test performs according to established characteristics when used as intended by the manufacturer [2]. This application note provides detailed guidance for classifying assays and executing appropriate verification protocols within the framework of clinical microbiology research.

Fundamental Assay Classifications

Clinical laboratory testing methods are divided into three main categories based on the results they report. Each category corresponds to a specific scale of measurement in metrology, which determines the appropriate statistical analyses and quality indicators [18].

Qualitative Assays

- Definition: Assays that provide a binary, categorical result without magnitude (e.g., "detected/not detected," "positive/negative").

- Measurement Scale: Nominal scale, where results are names or categories that cannot be ordered or ranked by size [18].

- Common Examples: Pathogen detection by lateral flow immunoassay, detection of specific genetic markers by PCR reported without cycle threshold (Ct) values, cultural characteristics for preliminary organism identification.

- Key Consideration: For nominal properties, only equality matters; the cut-off value between categories cannot be arbitrarily changed without affecting the test's fundamental classification performance [18].

Quantitative Assays

- Definition: Assays that provide a numerical value with units, representing a continuous measurement.

- Measurement Scale: Typically ratio scale, with equally sized units, a natural zero point, and constant ratio between quantity values [18].

- Common Examples: Bacterial colony counts (CFU/mL), minimum inhibitory concentration (MIC) values, viral load measurements, enzyme activity levels.

- Key Consideration: This is the highest measurement scale where all statistical methods apply, including calculation of mean, standard deviation, and confidence intervals [18].

Semi-Quantitative Assays

- Definition: Assays that use numerical values to determine cutoffs but report qualitative results or ranked categories.

- Measurement Scale: Ordinal scale, where results can be ordered by size but units may not be identical across the measuring interval [18].

- Common Examples: Cycle threshold (Ct) values in PCR with established cutoffs for detection, agglutination tests graded as 1+/2+/3+/4+, antigen tests with signal-to-cutoff ratios.

- Key Consideration: These methods provide more information than qualitative tests but typically have poorer quality indicators for trueness, precision, and detectability than fully quantitative methods [18].
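The defining feature of a semi-quantitative assay, a numerical value converted into a reported category through a cutoff, can be sketched as below. This assumes a PCR-style Ct readout where a lower Ct means more target; the "at or below the cutoff" rule and the cutoff value are hypothetical placeholders, and the real rule must come from the manufacturer's instructions.

```python
from typing import Optional


def categorize_ct(ct: Optional[float], cutoff: float) -> str:
    """Convert a Ct value into a qualitative call using a fixed cutoff.

    Lower Ct means more target. In this sketch a Ct at or below the cutoff is
    reported as "Detected"; None represents no amplification.
    """
    if ct is None or ct > cutoff:
        return "Not detected"
    return "Detected"


HYPOTHETICAL_CUTOFF = 38.0  # placeholder; use the manufacturer's stated cutoff

for ct in (22.4, 37.9, 38.6, None):
    print(ct, categorize_ct(ct, HYPOTHETICAL_CUTOFF))
```

Samples with Ct values close to the cutoff (here 37.9 and 38.6) are the ones most likely to change category between runs, which is why this document recommends samples near the cutoff when verifying the reportable range of semi-quantitative assays.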

Table 1: Comparative Analysis of Assay Types in Clinical Microbiology

| Characteristic | Qualitative Assays | Semi-Quantitative Assays | Quantitative Assays |
|---|---|---|---|
| Result Type | Binary/categorical | Ranked categories or ordinal values | Numerical with units |
| Measurement Scale | Nominal | Ordinal | Ratio |
| Statistical Analysis | Sensitivity, specificity, predictive values | Non-parametric statistics, rank-based tests | Mean, SD, correlation, regression |
| Data Presentation | Contingency tables, prevalence | Ordered categories, thresholds | Continuous numerical values |
| CLIA Verification Focus | Accuracy, precision at cut-off | Accuracy across categories, reportable range | Accuracy, precision, reportable range, reference range |
| Example Methods | Rapid strep test, HIV rapid test | PCR with Ct values, agglutination tests | MIC testing, bacterial counts |
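For qualitative assays, the contingency-table analysis listed above can be sketched as follows. When the comparator is not a true reference standard, sensitivity and specificity are often reported as positive and negative percent agreement instead; this sketch uses that framing. The class and function names are hypothetical, and results are assumed to be simple positive/negative booleans.

```python
from dataclasses import dataclass


@dataclass
class TwoByTwo:
    """New-method result (rows) against comparator result (columns)."""
    both_pos: int      # new +, comparator +
    new_pos_only: int  # new +, comparator -
    new_neg_only: int  # new -, comparator +
    both_neg: int      # new -, comparator -

    def positive_agreement(self) -> float:
        """Percent of comparator-positive samples also positive by the new method."""
        return 100.0 * self.both_pos / (self.both_pos + self.new_neg_only)

    def negative_agreement(self) -> float:
        """Percent of comparator-negative samples also negative by the new method."""
        return 100.0 * self.both_neg / (self.both_neg + self.new_pos_only)

    def overall_agreement(self) -> float:
        total = self.both_pos + self.new_pos_only + self.new_neg_only + self.both_neg
        return 100.0 * (self.both_pos + self.both_neg) / total


def tabulate(pairs) -> TwoByTwo:
    """Build the table from (new_positive, comparator_positive) boolean pairs."""
    counts = {(True, True): 0, (True, False): 0, (False, True): 0, (False, False): 0}
    for new, comparator in pairs:
        counts[(bool(new), bool(comparator))] += 1
    return TwoByTwo(counts[(True, True)], counts[(True, False)],
                    counts[(False, True)], counts[(False, False)])


# Hypothetical panel: 10 comparator positives (one missed) and 10 comparator negatives.
table = tabulate([(True, True)] * 9 + [(False, True)] + [(False, False)] * 10)
print(table.positive_agreement(), table.negative_agreement(), table.overall_agreement())
```

Reporting positive and negative agreement separately shows where discordance occurs, which a single overall percentage can hide.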

Method Verification Requirements by Assay Type

The Clinical Laboratory Improvement Amendments (CLIA) require laboratories to verify specific performance characteristics for unmodified FDA-approved tests before implementing them for patient testing. The verification requirements differ based on whether the assay is qualitative, quantitative, or semi-quantitative [2].

Verification of Qualitative Assays

For qualitative assays, CLIA requires verification of accuracy, precision, reportable range, and reference range [2]. The following protocols provide detailed methodologies for meeting these requirements:

Accuracy Verification Protocol:

- Sample Requirements: Minimum of 20 clinically relevant isolates or samples, combining both positive and negative samples [2].

- Sample Types: Standards, controls, reference materials, proficiency test samples, or de-identified clinical samples tested previously or in parallel with a validated method [2].

- Calculation Method: (Number of results in agreement / Total number of results) × 100 [2].

- Acceptance Criteria: Should meet the manufacturer's stated claims or laboratory director's determination [2].

Precision Verification Protocol:

- Sample Requirements: Minimum of 2 positive and 2 negative samples tested in triplicate for 5 days by 2 operators [2].

- Automated Systems: If the system is fully automated, user variance testing may not be required [2].

- Calculation Method: (Number of results in agreement / Total number of results) × 100 [2].

- Acceptance Criteria: Should meet the manufacturer's stated claims or laboratory director's determination [2].

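Reportable Range Verification Protocol:

- Sample Requirements: Minimum of 3 known positive samples [2].

- Sample Types: Samples with the analyte detected; for semi-quantitative assays, use samples near the cutoff values [2].

- Evaluation: Verify that results fall within the laboratory's established reportable range (e.g., "Detected," "Not detected") [2].

Reference Range Verification Protocol:

- Sample Requirements: Minimum of 20 isolates [2].

- Sample Types: De-identified clinical samples or reference samples representing the laboratory's patient population [2].

- Evaluation: Confirm that the manufacturer's reference range is appropriate for the laboratory's patient population; if it is not, additional testing is needed to redefine it [2].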

Verification of Quantitative Assays

Quantitative assays require verification of the same performance characteristics but with different experimental approaches focused on numerical results:

- Accuracy Verification: Method comparison studies using at least 40 patient samples compared to a reference method [9].

- Precision Verification: Within-run, between-run, and between-operator testing following established CLSI guidelines [9].

- Reportable Range Verification: Testing samples with concentrations at or near the upper and lower limits of the measuring interval to verify the assay's reportable range [2].

- Reference Range Verification: Establishing normal values for the tested patient population using appropriate statistical methods [9].

Verification of Semi-Quantitative Assays

Semi-quantitative assays require a hybrid approach, combining elements from both qualitative and quantitative verification protocols:

- Accuracy Verification: Use a range of samples with high to low values to verify correct categorization across the entire reporting spectrum [2].

- Precision Verification: Test samples representing different categories in triplicate over multiple days to ensure consistent classification [2].

- Reportable Range Verification: Use a range of positive samples near the upper and lower ends of the manufacturer-determined cutoff values [2].

- Reference Range Verification: Use de-identified clinical samples or reference samples with known expected results for the laboratory's patient population [2].

Experimental Design and Workflow

The following diagram illustrates the decision pathway for determining assay type and selecting the appropriate verification protocol:

Data Analysis and Presentation

Proper data analysis and presentation methods vary significantly by assay type and must be selected accordingly:

Table 2: Data Analysis and Presentation Methods by Assay Type

| Analysis Type | Appropriate Quantitative Analysis | Presentation Format |
|---|---|---|
| Univariate Analysis | Descriptive statistics (range, mean, median, mode, standard deviation) | Graphs (line graphs, histograms), charts (pie chart, descriptive table) [19] |
| Univariate Inferential Analysis | t-test, chi-square | Summary tables of test results, contingency table [19] |
| Bivariate Analysis | t-tests, ANOVA, chi-square | Summary tables; contingency tables [19] |
| Multivariate Analysis | ANOVA, MANOVA, chi-square, correlation, regression | Summary tables [19] |

For quantitative data presentation, several principles should be followed. Tables should be numbered consecutively and given brief, self-explanatory titles. Column and row headings should be clear and concise, and data should be presented in a logical order (e.g., by size, importance, chronology, alphabet, or geography). Percentages or averages that are to be compared should be placed as close together as possible. Tables should not be too large, and vertical arrangements are generally preferable because most readers find them easier to scan than horizontal ones [20].

For frequency distribution of quantitative data, histograms provide a pictorial diagram consisting of a series of rectangular and contiguous blocks. The class intervals are represented along the horizontal axis (width of the column), while frequencies are represented along the vertical axis (length of the column). The area of each column depicts the frequency, which is why columns touch each other without space between them [20].
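The construction described above, contiguous equal-width class intervals with one count per interval, can be sketched as follows. The colony counts and interval settings are hypothetical, and the text bars stand in for the plotted columns.

```python
def frequency_distribution(values, start, width, n_bins):
    """Count values into contiguous, equal-width class intervals [lower, upper)."""
    counts = [0] * n_bins
    for value in values:
        index = int((value - start) // width)
        if not 0 <= index < n_bins:
            raise ValueError(f"value {value} lies outside the class intervals")
        counts[index] += 1
    # Each interval starts exactly where the previous one ends, so the bars touch.
    return [((start + i * width, start + (i + 1) * width), counts[i]) for i in range(n_bins)]


# Hypothetical colony counts from six plates, binned into four intervals of width 10.
for (lower, upper), count in frequency_distribution([12, 18, 25, 31, 33, 47], 10, 10, 4):
    print(f"{lower}-{upper}: {'#' * count}")
```

Because every interval has the same width, the height of each bar is proportional to its area, so the bars can be compared directly.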

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Materials for Method Verification Studies

| Reagent/Material | Function in Verification Studies |
|---|---|
| Reference Materials | Provide known values for accuracy determination and calibration verification [2] |
| Quality Controls (QC) | Monitor precision and detect systematic errors during verification studies [2] |
| Proficiency Test Samples | External assessment of method performance compared to peer laboratories [2] |
| Clinical Isolates | Cultured microorganisms representing target pathogens for clinical relevance [2] |
| De-identified Clinical Samples | Patient specimens that maintain biological matrix without privacy concerns [2] |
| Standard Strains | ATCC or reference strains with well-characterized properties for comparison [9] |

Implementation Workflow for Assay Verification

The following workflow diagram outlines the comprehensive process for planning and executing a method verification study in clinical microbiology:

Successful implementation of a new assay requires careful documentation throughout the verification process. The verification plan should include the type and purpose of the study, test purpose and method description, detailed study design (including number and types of samples, quality control procedures, replicates, and performance characteristics), materials and equipment needed, safety considerations, and expected timeline for completion [2]. This plan must be reviewed and signed by the laboratory director before commencement of the verification study. Following successful verification, ongoing quality monitoring is essential to ensure the test continues to meet performance requirements throughout its implementation lifetime [2].

Building Your Verification Protocol: A Step-by-Step Template and Study Design

In clinical microbiology laboratories, method verification is a standard and required practice before reporting patient results from any new, unmodified FDA-cleared or approved test system. This process, mandated by the Clinical Laboratory Improvement Amendments (CLIA) for non-waived systems (tests of moderate or high complexity), serves as a one-time study to demonstrate that a test performs according to the manufacturer's established performance characteristics within the operator's specific environment [2]. Verification is distinctly different from validation; the latter is a more extensive process required for laboratory-developed tests (LDTs) or modified FDA-approved tests to establish that an assay works as intended [2] [21]. A well-structured verification plan is crucial for ensuring that laboratory tests are reliable, accurate, and ready for diagnostic use, ultimately safeguarding patient care.

Core Components of a Verification Plan

A comprehensive verification plan acts as a formal protocol, ensuring that all regulatory and performance requirements are met before a new test is implemented. The plan must be reviewed and signed off by the laboratory director and typically includes the following core elements [2]:

- Type of Verification and Purpose of Study: Clearly state whether the activity is a verification (for an unmodified FDA-approved test) or a validation (for an LDT or modified test). Define the primary objective of the study.

- Purpose of Test and Method Description: Describe the clinical application of the test and provide a detailed description of the methodology.

- Details of Study Design: This is the most critical section, specifying the number and types of samples, quality assurance and control procedures, number of replicates, days, and analysts, the performance characteristics to be evaluated, and the acceptance criteria for each.

- Materials, Equipment, and Resources: List all necessary reagents, instruments, and other resources required to perform the verification.

- Safety Considerations: Outline any specific safety protocols relevant to the test or specimens.

- Expected Timeline for Completion: Provide a projected timeline for finalizing the verification study.
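One way to keep these elements consistent across studies is to hold them in a structured record that can be checked before testing starts. The sketch below is a hypothetical illustration, not a prescribed format: the class and field names are assumptions, and the only rule it enforces is the one stated in this document, that the plan must be complete and signed by the laboratory director before the study begins.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class VerificationPlan:
    """Hypothetical record holding the core plan elements listed above."""
    study_type: str        # "verification" or "validation"
    purpose: str
    test_description: str
    study_design: dict     # samples, QC, replicates, days, analysts, criteria
    materials: List[str] = field(default_factory=list)
    safety: str = ""
    timeline: str = ""
    director_signature: Optional[str] = None

    def __post_init__(self):
        if self.study_type not in ("verification", "validation"):
            raise ValueError("study_type must be 'verification' or 'validation'")

    def missing_elements(self) -> List[str]:
        """Names of plan elements that are still empty."""
        elements = {
            "purpose": self.purpose,
            "test_description": self.test_description,
            "study_design": self.study_design,
            "materials": self.materials,
            "safety": self.safety,
            "timeline": self.timeline,
        }
        return [name for name, value in elements.items() if not value]

    def ready_to_start(self) -> bool:
        """Testing begins only once the plan is complete and signed by the director."""
        return not self.missing_elements() and self.director_signature is not None


plan = VerificationPlan("verification", "Verify new assay", "Method description",
                        {"accuracy": {"samples": 20}}, ["reagents"], "BSL-2", "4 weeks")
print(plan.missing_elements(), plan.ready_to_start())  # complete but not yet signed
```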

The following workflow outlines the key stages in developing and executing a method verification plan:

Purpose: Verification vs. Validation

A fundamental first step is determining whether a verification or a validation is required. The terms are not interchangeable, and the required rigor and scope of the study differ significantly [2].

- Verification: This is conducted for unmodified, FDA-cleared or approved tests. It is a confirmation process, providing evidence that the test performs as claimed by the manufacturer in the user's laboratory setting. It is a one-time study [2] [21].

- Validation: This is a more extensive process required for laboratory-developed tests (LDTs) or modified FDA-approved tests. Any change to the manufacturer's instructions, such as using different specimen types, sample dilutions, or altering test parameters like incubation times, constitutes a modification. Validation establishes that the assay works as intended for its specific use case [2].
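The decision rule above reduces to two questions: is the test FDA-cleared or approved, and has anything in the manufacturer's instructions been changed? A minimal sketch, with a hypothetical function name and free-text modification labels:

```python
def required_study(fda_cleared: bool, modifications: list) -> str:
    """Decide between verification and validation as described above.

    `modifications` lists departures from the manufacturer's instructions,
    e.g. ["new specimen type"]; an empty list means the test is unmodified.
    """
    if fda_cleared and not modifications:
        return "verification"
    # LDTs (not FDA-cleared/approved) and modified FDA-cleared tests need validation.
    return "validation"


print(required_study(True, []))                     # unmodified FDA-cleared test
print(required_study(True, ["longer incubation"]))  # modified FDA-cleared test
print(required_study(False, []))                    # laboratory-developed test
```

Recording the specific modifications, rather than a yes/no flag, documents why a validation was chosen.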

Experimental Design: Performance Characteristics

The study design must detail the experiments to verify key performance characteristics as required by CLIA regulations. The specific approach depends on whether the assay is qualitative, quantitative, or semi-quantitative [2]. The following table summarizes the core characteristics and the minimum sample suggestions for qualitative and semi-quantitative assays, which are common in microbiology.

Table 1: Verification Criteria for Qualitative and Semi-Quantitative Assays [2]

| Performance Characteristic | Objective | Minimum Sample Suggestions | Acceptance Criteria |
|---|---|---|---|
| Accuracy | Confirm agreement between the new method and a comparative method. | 20 clinically relevant isolates (combination of positive and negative). | Meets manufacturer's stated claims or as determined by the lab director. |
| Precision | Confirm acceptable within-run, between-run, and operator variance. | 2 positive and 2 negative samples, tested in triplicate for 5 days by 2 operators. | Meets manufacturer's stated claims or as determined by the lab director. |
| Reportable Range | Confirm the upper and lower limits of what the test system can report. | 3 known positive samples (for qualitative) or samples near cutoff values (for semi-quantitative). | The laboratory's defined reportable result (e.g., "Detected," "Not detected") is verified. |
| Reference Range | Confirm the normal result for the tested patient population. | 20 isolates from de-identified clinical or reference samples. | The reference range is confirmed as appropriate for the laboratory's patient population. |

Defining Acceptance Criteria

Acceptance criteria are the predefined benchmarks that determine the success or failure of the verification study. These criteria should be established before testing begins and documented in the verification plan [2]. Typically, the primary reference for acceptance criteria is the manufacturer's stated performance claims for the test. Where manufacturer claims are unavailable or deemed insufficient, the laboratory director is responsible for establishing and documenting appropriate acceptance criteria based on laboratory needs and clinical requirements [2]. For accuracy and precision, the results (calculated as the percentage of results in agreement) must meet or exceed these predefined benchmarks.

Detailed Experimental Protocols

This section provides detailed methodologies for key experiments cited in the verification plan.

Protocol for Verifying Accuracy

Objective: To confirm the acceptable agreement of results between the new method and a comparative method [2].

Materials:

- New test system and instrumentation.

- Comparative method (a previously validated method).

- A minimum of 20 clinically relevant isolates or samples [2].

- Appropriate sample types: standards, controls, reference materials, proficiency test samples, or de-identified clinical samples [2].

Procedure:

- Select samples that represent the expected range of analytes, including positive and negative samples.

- Test each sample using both the new method and the comparative method.

- Ensure testing is performed according to each method's standard operating procedure.

Data Analysis:

- Compare the results from the new method to those from the comparative method.

- Calculate the percent agreement using the formula: (Number of results in agreement / Total number of results) × 100 [2].

- Compare the calculated percentage to the predefined acceptance criteria.
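The accuracy calculation and the comparison against a predefined benchmark can be sketched as below. The 20-sample panel and the 90% criterion are hypothetical placeholders; in practice the criterion comes from the manufacturer's claims or the laboratory director, as described in the acceptance criteria section.

```python
def percent_agreement(new_results, comparative_results):
    """(Number of results in agreement / Total number of results) x 100."""
    if len(new_results) != len(comparative_results):
        raise ValueError("every sample needs a result from both methods")
    if not new_results:
        raise ValueError("no results supplied")
    agree = sum(1 for new, comp in zip(new_results, comparative_results) if new == comp)
    return 100.0 * agree / len(new_results)


def meets_criterion(observed: float, required: float) -> bool:
    """True when observed agreement meets or exceeds the predefined benchmark."""
    return observed >= required


# Hypothetical 20-sample panel with one discordant result.
comparative = ["Detected"] * 10 + ["Not detected"] * 10
new = ["Detected"] * 9 + ["Not detected"] * 11
observed = percent_agreement(new, comparative)
print(observed, meets_criterion(observed, required=90.0))  # 90% is a placeholder criterion
```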

Protocol for Verifying Precision

Objective: To confirm acceptable variance within a run, between runs, and between different operators [2].

Materials:

- New test system and instrumentation.

- A minimum of 2 positive and 2 negative samples [2].

- These can be controls or de-identified clinical samples.

Procedure:

- Two different operators should perform the testing.

- Each operator tests the selected samples in triplicate.

- This testing is repeated over the course of 5 days to capture between-run variability [2].

- If the system is fully automated, testing for user variance may not be required [2].

Data Analysis:

- Compile all results from the different runs and operators.

- Calculate the overall percent agreement for each sample level as described in the accuracy protocol.

- Compare the calculated percentages to the predefined acceptance criteria for precision.
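Under the reading of the procedure above (each of 2 operators tests every sample in triplicate on each of 5 days), 2 positive and 2 negative samples generate 4 × 3 × 2 × 5 = 120 results, or 60 when a fully automated system removes the operator factor. The sketch below enumerates that run schedule and computes per-sample agreement; sample IDs and operator labels are hypothetical.

```python
from itertools import product


def precision_schedule(samples, days=5, operators=("Operator 1", "Operator 2"), replicates=3):
    """List every (day, operator, sample, replicate) test implied by the design."""
    return [
        {"day": day, "operator": op, "sample": s, "replicate": rep}
        for day, op, s, rep in product(range(1, days + 1), operators, samples,
                                       range(1, replicates + 1))
    ]


def agreement_by_sample(observed, expected):
    """Percent of each sample's results (across all runs) matching its expected result."""
    return {
        sample: 100.0 * sum(result == expected[sample] for result in results) / len(results)
        for sample, results in observed.items()
    }


panel = ["POS-1", "POS-2", "NEG-1", "NEG-2"]  # hypothetical sample IDs
print(len(precision_schedule(panel)))                             # two operators
print(len(precision_schedule(panel, operators=("Instrument",))))  # fully automated
```

Generating the schedule up front gives a checklist of every expected result, so missing runs are visible before the data analysis starts.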

The Scientist's Toolkit

A successful verification study relies on more than just the instrument and reagents. The following table details key resources and their functions in the verification process.

Table 2: Essential Research Reagent Solutions and Resources for Verification

| Item / Resource | Function / Purpose in Verification |
|---|---|
| Clinical and Laboratory Standards Institute (CLSI) Guidelines | Provides authoritative standards and guidelines for designing and evaluating verification studies (e.g., EP12, M52, MM03) [2]. |
| Reference Materials & Controls | Well-characterized samples (from standards, PT panels, or commercial controls) used as a benchmark for assessing accuracy and precision [2]. |
| De-identified Clinical Samples | Real patient samples used to verify performance in a matrix representative of the laboratory's routine workload and patient population [2]. |
| Verification Plan Template | A customizable document that ensures all necessary components of the verification are planned, executed, and documented consistently [9] [22]. |
| Calculation Spreadsheets | Tools for performing standardized calculations for accuracy, precision, and other parameters, reducing human error and improving efficiency [9]. |
| Individualized Quality Control Plan (IQCP) | A framework for developing a quality control plan tailored to the specific test and laboratory environment, extending beyond initial verification [2]. |

Method verification is a mandatory practice for clinical laboratories, required by the Clinical Laboratory Improvement Amendments (CLIA) before implementing new, unmodified FDA-approved tests for patient reporting [2]. A cornerstone of a robust verification study is the appropriate selection of clinically relevant isolates and matrices, coupled with a sample size sufficient to demonstrate that the test performs as claimed within your specific laboratory environment [2]. This document provides detailed application notes and protocols for establishing the sample size and selection criteria for method verification in clinical microbiology, framed within a comprehensive method verification plan template.

Core Principles of Sample Size Calculation

The Importance of Adequate Sample Size

An appropriately calculated sample size is an essential component of any research or verification study, ensuring scientific validity and ethical use of resources [23] [24]. An inadequate sample size can lead to underpowered studies that fail to detect the true performance characteristics of a test, so that valid findings are missed or spurious results are accepted [23] [24]. At the other extreme, an excessively large sample size wastes resources and time and may unnecessarily consume valuable clinical specimens [24].

Prerequisites for Sample Size Determination

The calculation of sample size requires several key components to be defined during the initial planning phase of the study, as outlined in Table 1 [24].

Table 1: Key Components for Sample Size Calculation

| Component | Description | Typical Values in Medical Research |
|---|---|---|
| Type I Error (α) | The probability of falsely rejecting the null hypothesis (i.e., falsely detecting a difference when none exists). Also known as the significance level [23] [24]. | 0.05 or 0.01 [24] |
| Power (1-β) | The probability of correctly rejecting a false null hypothesis (i.e., correctly detecting a true effect) [23] [24]. | 80% or higher [24] |
| Effect Size | The smallest clinically relevant difference in the outcome that the study aims to detect [23] [24]. | Determined from previous studies, pilot data, or clinical experience [24] |
| Variance/Standard Deviation | The variability of the outcome measure within the population [23] [24]. | Obtained from previous studies, pilot data, or published literature [24] |

These components are used in various statistical formulas tailored to specific study designs (e.g., cross-sectional, case-control, clinical trials) [24]. For verification studies, the "effect size" is often related to the performance criteria you aim to verify, such as a minimum threshold for accuracy.

Sample Size for Different Study Types in Microbiology

The required sample size and the formula used for its calculation depend on the objective of the study and the type of data being generated. Clinical microbiology verification often involves qualitative or semi-quantitative assays.

Cross-Sectional Studies (e.g., Prevalence or Accuracy)

For studies aiming to estimate a proportion, such as the accuracy of a test or the prevalence of a microorganism, the following sample size formula is applicable [23]:

$$ n = \frac{Z^2 P(1-P)}{d^2} $$

where:

- $n$ = sample size

- $Z$ = statistic corresponding to the level of confidence (e.g., 1.96 for 95% confidence)

- $P$ = expected prevalence or proportion (e.g., expected accuracy)

- $d$ = precision (margin of error)

The expected proportion ($P$) significantly influences the required sample size. Table 2 demonstrates how different values of $P$ and precision ($d$) affect the sample size [23].

Table 2: Sample Size Calculation for Different Prevalences and Precision Levels (95% Confidence)

| Precision (d) | Assumed P = 0.05 | Assumed P = 0.20 | Assumed P = 0.60 |
|---|---|---|---|
| 0.01 | 1825 | 6147 | 9220 |
| 0.04 | 114 | 384 | 576 |
| 0.10 | 18 | 61 | 92 |

For rare events (very low P), the precision should be chosen carefully, often as a fraction of the prevalence, to avoid crude estimates [23].
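The formula can be checked directly against Table 2. This is a minimal sketch of the normal-approximation formula given above, with $Z$ defaulting to 1.96 (95% confidence); the function name is hypothetical. Table 2's entries are rounded to the nearest whole number, whereas rounding up is the more conservative choice because it guarantees the margin of error is no wider than $d$.

```python
import math


def sample_size_proportion(p: float, d: float, z: float = 1.96) -> float:
    """n = Z^2 * P * (1 - P) / d^2 for estimating a proportion to within +/- d."""
    if not 0 < p < 1 or d <= 0:
        raise ValueError("p must lie in (0, 1) and d must be positive")
    return z ** 2 * p * (1 - p) / d ** 2


# Reproduce Table 2, whose entries are rounded to the nearest whole number.
for d in (0.01, 0.04, 0.10):
    print(d, [round(sample_size_proportion(p, d)) for p in (0.05, 0.20, 0.60)])

# Rounding up instead guarantees the margin of error is no wider than d.
print(math.ceil(sample_size_proportion(0.05, 0.04)))
```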

Experimental and Multi-Centre Trials

In experimental settings, such as comparing a new microbiological method against a reference standard, more complex designs are used. For multi-centre trials, which increase recruitment rate and generalisability, sample size calculation must account for between-centre heterogeneity using mixed models [25]. Failure to account for this clustering can lead to underpowered studies [25]. A key consideration is that block randomisation, used to balance treatment groups within centres, can result in unbalanced treatment allocations if centre sizes are small and block lengths are large, which may necessitate a larger overall sample size to maintain power [25].

Sampling Methods for Isolate and Matrix Selection

Selecting the right samples is as crucial as determining the right number. The goal is to ensure the study population (the selected samples) is representative of the target population for which the test will ultimately be used [26].

Probability Sampling Methods

Probability sampling methods give every subject in the target population a known, non-zero chance of being selected (an equal chance under simple random sampling), maximizing representativeness [26].

- Simple Random Sampling: The basic method where a sampling frame (a list of all units) is available, and samples are drawn randomly [26]. This is ideal if a comprehensive repository of all available isolates is accessible.

- Stratified Random Sampling: The population is divided into homogeneous subgroups (strata) based on a characteristic like bacterial species, specimen type (e.g., urine, sputum), or patient demographics. A random sample is then drawn from each stratum [26]. This ensures adequate representation of minority or clinically important subgroups.
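Stratified random sampling from an isolate repository can be sketched as below, assuming each record carries a stratum label such as specimen type. This sketch draws an equal number per stratum; proportional allocation is an equally valid design choice. The repository contents and function name are hypothetical.

```python
import random
from collections import defaultdict


def stratified_sample(isolates, stratum_of, per_stratum, seed=None):
    """Draw a simple random sample of `per_stratum` isolates from every stratum."""
    rng = random.Random(seed)  # a fixed seed makes the panel selection reproducible
    strata = defaultdict(list)
    for isolate in isolates:
        strata[stratum_of(isolate)].append(isolate)
    panel = {}
    for name, members in strata.items():
        if len(members) < per_stratum:
            raise ValueError(f"stratum {name!r} has only {len(members)} isolates")
        panel[name] = rng.sample(members, per_stratum)
    return panel


# Hypothetical repository of (isolate ID, specimen type) records.
repository = ([(f"U{i:03d}", "urine") for i in range(40)]
              + [(f"S{i:03d}", "sputum") for i in range(15)]
              + [(f"W{i:03d}", "wound swab") for i in range(12)])
for stratum, chosen in stratified_sample(repository, lambda rec: rec[1], 5, seed=42).items():
    print(stratum, [isolate_id for isolate_id, _ in chosen])
```

Recording the seed alongside the verification documentation allows the same panel to be regenerated during review.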

Non-Probability Sampling Methods

In clinical practice, a perfect sampling frame rarely exists, making non-probability methods more common, though they require careful implementation to avoid bias [26].

- Convenience Sampling: Involves enrolling subjects according to their availability and accessibility [26]. For a lab, this might mean using all consecutive clinical isolates received during the verification study period. While convenient and inexpensive, investigators must be cautious that this sample does not systematically differ from the broader target population [26].

- Judgmental Sampling: The investigator selects samples based on specific characteristics deemed important for the study [26]. In microbiology, this is a key strategy for ensuring a verification panel includes isolates with specific resistance mechanisms (e.g., ESBLs, carbapenemases) or a range of colony morphologies.

The following workflow diagram (Figure 1) illustrates the decision process for selecting a sampling method for a verification study.

Figure 1. Sampling Method Selection Workflow. This diagram outlines the logical process for choosing an appropriate sampling strategy based on data availability and study objectives.

Practical Protocols for Verification Studies

For a clinical microbiology laboratory verifying an unmodified FDA-cleared test, CLIA regulations require verification of accuracy, precision, reportable range, and reference range [2]. The following protocols provide detailed methodologies.

Protocol 1: Verifying Accuracy for a Qualitative Assay

Purpose: To confirm the acceptable agreement of results between the new method and a comparative method [2].

Sample Size and Selection:

- Minimum Sample Number: A minimum of 20 clinically relevant isolates is recommended [2].

- Sample Types: Use a combination of positive and negative samples. These can be sourced from standards or controls, reference materials, proficiency test samples, or de-identified clinical samples previously tested with a validated method [2].

- Matrix Considerations: Include different sample matrices (e.g., sputum, urine, swabs) if the test claims to support them.

Methodology:

- Test all selected samples using the new method.

- Compare the results to those obtained from the reference method.

- Calculate the percentage agreement: (Number of results in agreement / Total number of results) × 100.

- Compare the calculated percentage to the manufacturer's stated claims or a laboratory-director-defined acceptance criterion.

Protocol 2: Verifying Precision for a Semi-Quantitative Assay

Purpose: To confirm acceptable within-run, between-run, and operator variance [2].

Sample Size and Selection:

- Minimum Sample Number: A minimum of 2 positive and 2 negative samples [2].

- Sample Types: For semi-quantitative assays, use a combination of samples with high, medium, and low values (e.g., different colony counts or cycle threshold values). These can be controls or de-identified clinical samples [2].

Methodology:

- Test each sample in triplicate.

- Perform this testing over 5 days.

- Employ 2 different operators to perform the testing (if the system is not fully automated).

- Calculate the percentage agreement for results across all replicates, days, and operators.

- Compare the calculated percentage to the manufacturer's stated claims or a laboratory-director-defined acceptance criterion.
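The precision design multiplies quickly, so enumerating every run up front helps confirm that nothing is skipped. The Python sketch below assumes that each of the 2 operators tests every sample in triplicate on each of the 5 days; the protocol does not specify how replicates are split between operators. The operator labels and result strings are hypothetical. Agreement here means agreement with each sample's expected qualitative result.

```python
from itertools import product
from typing import Dict, List, Sequence, Tuple

# One run = (sample_id, day, operator, replicate)
Run = Tuple[str, int, str, int]


def planned_runs(
    samples: Sequence[str],
    days: int = 5,
    operators: Sequence[str] = ("op1", "op2"),
    replicates: int = 3,
) -> List[Run]:
    """Enumerate every sample x day x operator x replicate combination."""
    return list(product(samples, range(1, days + 1), operators, range(1, replicates + 1)))


def precision_agreement(results: Dict[Run, str], expected: Dict[str, str]) -> float:
    """Percentage of all replicate results that match each sample's expected result."""
    if not results:
        raise ValueError("no results recorded")
    matches = sum(observed == expected[run[0]] for run, observed in results.items())
    return 100.0 * matches / len(results)


expected = {"P1": "POS", "P2": "POS", "N1": "NEG", "N2": "NEG"}
runs = planned_runs(list(expected))
print(len(runs))  # 120 results: 4 samples x 5 days x 2 operators x 3 replicates

# Illustrative data: every replicate matches except one false positive
results = {run: expected[run[0]] for run in runs}
results[("N2", 5, "op2", 3)] = "POS"
print(f"{precision_agreement(results, expected):.1f}%")  # 99.2%
```

For a fully automated system, passing a single operator label collapses the operator dimension without changing the rest of the calculation.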

Protocol 3: Verifying Reportable and Reference Ranges

- Reportable Range: Verify using a minimum of 3 samples. For qualitative assays, use known positive samples. For semi-quantitative assays, use positive samples near the upper and lower cutoff values defined by the manufacturer [2].

- Reference Range: Verify using a minimum of 20 isolates. Use de-identified clinical samples or reference samples whose expected results are known to be typical of the laboratory's patient population. If the manufacturer's range does not fit that population, additional testing is needed to redefine it [2].

The Scientist's Toolkit: Research Reagent Solutions

The following table details essential materials and their functions for conducting a method verification study in clinical microbiology.

Table 3: Essential Research Reagents and Materials for Verification Studies

| Item Category | Specific Examples | Function in Verification |
|---|---|---|
| Characterized Isolates | ATCC strains, proficiency test panels, archived clinical isolates with whole-genome sequence data | Serve as positive and negative controls; provide ground truth for accuracy assessment. |
| Clinical Matrices | Sputum, urine, blood, swabs in transport media | Assess test performance across different sample types as claimed by the manufacturer. |
| Quality Controls | Commercial positive/negative controls, internal controls | Monitor the daily performance and reliability of the test system during verification. |
| Reference Method Materials | Culture media, susceptibility testing discs/materials, PCR reagents for a validated lab-developed test | Provide the comparator result for establishing accuracy. |
| Data Analysis Software | Statistical software (e.g., R, SPSS, EP Evaluator) | Calculate performance metrics (e.g., % agreement, CV%) and perform statistical comparisons. |

The entire process of planning and executing the sample size and selection components of a verification study can be summarized in the following workflow (Figure 2).

Figure 2. Method Verification Workflow. This diagram illustrates the three-phase process for establishing sample size and selection, from initial planning through execution and final reporting.

A scientifically sound method verification study in clinical microbiology hinges on a statistically justified sample size and a deliberate strategy for selecting clinically relevant isolates and matrices. By applying the principles, formulas, and protocols outlined in this document, researchers and laboratory professionals can create a verification plan that is both compliant with regulatory standards and robust enough to ensure the reliability of patient test results. Proper planning at this stage adds transparency and credibility to the verification process and ensures the new test is safely and effectively implemented in the clinical laboratory.

Method verification is a mandatory, one-time study required by the Clinical Laboratory Improvement Amendments (CLIA) for unmodified, FDA-approved laboratory tests before patient results can be reported [2]. It is a critical process that demonstrates a test performs according to the manufacturer's established performance characteristics within a specific laboratory's operational environment. This process is distinct from method validation, which is a more extensive process to establish performance specifications for non-FDA cleared tests, such as laboratory-developed tests (LDTs) or modified FDA-approved tests [2] [27]. For clinical microbiology laboratories, which primarily utilize qualitative and semi-quantitative assays, a structured verification plan is essential for ensuring reliable test performance and, ultimately, high-quality patient care.

The following workflow outlines the core decision-making process for embarking on method verification:

Core Performance Characteristics: Verification Protocols

CLIA regulations mandate that laboratories verify specific performance characteristics for non-waived (moderate- or high-complexity) test systems [2]. The following sections provide detailed protocols for verifying the four core characteristics: accuracy, precision, reportable range, and reference range.

Accuracy

Accuracy confirms the acceptable agreement of results between the new method and a comparative method [2].

- Objective: To confirm that the new method provides results that agree with a previously validated method or a reference method.

- Principle: A set of clinical samples is tested in parallel using both the new method and the comparative method. The results are compared to determine the percentage of agreement.

- Sample Requirements:

- Number: A minimum of 20 clinically relevant isolates or samples is recommended [2].

- Composition: For qualitative assays, use a combination of positive and negative samples. For semi-quantitative assays, use a range of samples with high to low values [2].

- Sources: Acceptable specimens can include standards, controls, reference materials, proficiency test samples, or de-identified clinical samples tested in parallel with a validated method [2].

- Procedure:

- Test all selected samples using the new method and the comparative method.

- Ensure testing is performed within the stability period of the samples.

- Record all results for comparison.

- Data Analysis: Calculate the percentage agreement as (Number of results in agreement / Total number of results) × 100.

- Acceptance Criteria: The percentage agreement should meet the performance claims stated by the manufacturer or a criterion determined by the laboratory director [2].

Precision

Precision confirms acceptable variance within a run (repeatability), between runs, and between operators [2].

- Objective: To verify the reproducibility and repeatability of the test method.

- Principle: The same samples are tested repeatedly under defined conditions to measure the inherent random variation of the test system.

- Sample Requirements:

- Number: A minimum of 2 positive and 2 negative samples [2].

- Composition: Use controls or de-identified clinical samples. For semi-quantitative assays, include samples with high and low values.

- Procedure:

- Test the selected samples in triplicate.

- Repeat this process over 5 days.

- Involve 2 different operators in the testing process. If the system is fully automated, operator variance may not be required [2].

- Data Analysis: Calculate the percentage agreement for qualitative results. For quantitative data, calculate the coefficient of variation (CV).

- Acceptance Criteria: The observed precision should meet the manufacturer's stated claims or the laboratory's predefined goals, such as a CV of less than one-quarter of the allowable total error (ATE) [2] [27].
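For numeric results, the coefficient of variation and the one-quarter-of-ATE goal can be checked as follows. This Python sketch uses the sample standard deviation from the standard-library `statistics` module. The replicate values and the 10% ATE are illustrative assumptions, not recommended targets; the `fraction` parameter lets a laboratory substitute its own predefined goal.

```python
import statistics
from typing import Sequence


def coefficient_of_variation(values: Sequence[float]) -> float:
    """CV% = sample standard deviation / mean x 100."""
    mean = statistics.mean(values)
    if mean == 0:
        raise ValueError("CV is undefined when the mean is zero")
    return 100.0 * statistics.stdev(values) / mean


def cv_meets_goal(cv_pct: float, ate_pct: float, fraction: float = 0.25) -> bool:
    """True if the CV is below the chosen fraction (default one-quarter) of the ATE."""
    return cv_pct < fraction * ate_pct


replicates = [25.0, 25.2, 24.8]  # illustrative replicate measurements of one sample
cv = coefficient_of_variation(replicates)
ate = 10.0  # hypothetical allowable total error, in percent
print(f"CV = {cv:.2f}%; goal CV < {0.25 * ate:.2f}%; met: {cv_meets_goal(cv, ate)}")
# CV = 0.80%; goal CV < 2.50%; met: True
```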

Reportable Range

The reportable range verification confirms the acceptable upper and lower limits of the test system [2].

- Objective: To verify that the test can accurately measure analytes across the entire range of values claimed by the manufacturer.

- Principle: Samples with known values at the extremes and within the manufacturer's declared range are tested to confirm they are reported correctly.

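- Sample Requirements:

- Number: A minimum of 3 samples [2].

- Composition: For qualitative assays, use known positive samples. For semi-quantitative assays, use positive samples near the upper and lower cutoff values defined by the manufacturer [2].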

- Procedure:

- Test the selected samples using the new method.

- Ensure that the lowest and highest samples fall within the reportable range.

- Data Analysis: The reportable range is verified by confirming that the results for all tested samples fall within the laboratory's established reportable criteria (e.g., "Detected," "Not detected," or a specific cycle threshold (Ct) value) [2].

- Acceptance Criteria: All results should be accurately reported across the verified range.
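A reportable-range check can be expressed as a rule applied to every result. The Python sketch below accepts a qualitative call only if it is one of the recognized report categories, and a Ct value only if it falls between assumed lower and upper limits. The category set mirrors the examples in the text; the Ct limits of 12 and 40 are hypothetical stand-ins for the manufacturer's cutoffs.

```python
from typing import Sequence, Tuple, Union

Result = Union[str, float]

# Categories taken from the examples in the text; adjust to the assay's report format
QUALITATIVE_CATEGORIES = {"Detected", "Not detected"}


def within_reportable_range(result: Result, ct_limits: Tuple[float, float]) -> bool:
    """A qualitative call must be a recognized category; a Ct value must lie within the limits."""
    if isinstance(result, str):
        return result in QUALITATIVE_CATEGORIES
    low, high = ct_limits
    return low <= result <= high


def verify_reportable_range(
    results: Sequence[Result], ct_limits: Tuple[float, float], min_samples: int = 3
) -> bool:
    """True if at least min_samples results were tested and all are reportable."""
    if len(results) < min_samples:
        raise ValueError(f"need at least {min_samples} samples, got {len(results)}")
    return all(within_reportable_range(r, ct_limits) for r in results)


limits = (12.0, 40.0)  # hypothetical lower and upper Ct limits
print(verify_reportable_range([14.3, 38.6, "Detected"], limits))  # True
print(verify_reportable_range([14.3, 41.2, "Detected"], limits))  # False
```

This checks only that each result is reportable. Whether each known positive was reported as positive still needs a separate comparison against expected results.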

Reference Range

Reference range verification confirms the normal or expected result for the tested patient population [2].

- Objective: To confirm that the reference interval provided by the manufacturer is appropriate for the laboratory's patient population.

- Principle: Test samples from individuals who are representative of the "normal" or "negative" condition for the laboratory's patient population.

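- Sample Requirements:

- Number: A minimum of 20 isolates or samples [2].

- Composition: Use de-identified clinical samples or reference samples whose expected results are known to be typical of the laboratory's patient population [2].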

- Procedure:

- Test the selected samples using the new method.

- Record the results.

- Data Analysis: The reference range is verified if a pre-defined percentage (e.g., ≥90%) of the results align with the expected normal or negative condition.

- Acceptance Criteria: The manufacturer's reference range is accepted when the pre-defined agreement criterion is met. If the range does not represent the laboratory's patient population, the laboratory must redefine it by testing additional samples from its specific population [2].
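The reference-range decision reduces to a proportion compared against a threshold. This Python sketch uses the example threshold of 90% and the 20-sample minimum from the text as defaults, and assumes "Not detected" is the expected result for the normal or negative population; all three should be replaced by the values in the approved plan.

```python
from typing import Sequence


def reference_range_verified(
    results: Sequence[str],
    expected: str = "Not detected",
    threshold_pct: float = 90.0,
    min_samples: int = 20,
) -> bool:
    """True if at least threshold_pct of results match the expected normal/negative result."""
    if len(results) < min_samples:
        raise ValueError(f"need at least {min_samples} samples, got {len(results)}")
    matching = sum(r == expected for r in results)
    return 100.0 * matching / len(results) >= threshold_pct


print(reference_range_verified(["Not detected"] * 18 + ["Detected"] * 2))  # True (90%)
print(reference_range_verified(["Not detected"] * 17 + ["Detected"] * 3))  # False (85%)
```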

The following workflow summarizes the experimental design for these core verification studies:

Experimental Design and Data Interpretation

Structured Verification Plan

A written verification plan, reviewed and approved by the laboratory director, is the foundation of a successful study [2]. This plan should include:

- Type and Purpose: Clearly state whether it is a verification or validation and the reason for the study.

- Test Method Description: State the purpose of the test and describe its methodology.

- Study Design: Specify the number and type of samples, quality control procedures, number of replicates, days of testing, and personnel involved.

- Performance Characteristics and Acceptance Criteria: List each characteristic being verified and the predefined criteria for acceptability.

- Resources and Timeline: Outline required materials, equipment, and the expected timeline for completion [2].
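Laboratories that track plans electronically could represent the elements listed above as a structured record. The Python sketch below is one hypothetical way to do that: a dataclass with one field per plan element, a helper that lists elements still left empty, and a gate that is satisfied only when every element is filled in and director approval is recorded. The field names are assumptions, not a prescribed format.

```python
from dataclasses import dataclass, field, fields
from typing import Dict, List


@dataclass
class VerificationPlan:
    """Hypothetical record mirroring the plan elements listed above."""
    type_and_purpose: str = ""
    test_method_description: str = ""
    study_design: str = ""
    acceptance_criteria: Dict[str, str] = field(default_factory=dict)  # characteristic -> criterion
    resources_and_timeline: str = ""
    director_approved: bool = False

    def missing_sections(self) -> List[str]:
        """Names of plan elements that are still empty."""
        return [
            f.name
            for f in fields(self)
            if f.name != "director_approved" and not getattr(self, f.name)
        ]

    def ready_to_start(self) -> bool:
        """True only when every element is filled in and the director has approved the plan."""
        return not self.missing_sections() and self.director_approved


plan = VerificationPlan(type_and_purpose="Verification of an unmodified FDA-cleared assay")
print(plan.missing_sections())
# ['test_method_description', 'study_design', 'acceptance_criteria', 'resources_and_timeline']
print(plan.ready_to_start())  # False
```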

The table below consolidates the key parameters for designing verification studies for qualitative and semi-quantitative assays in clinical microbiology.

Table 1: Method Verification Study Design for Qualitative/Semi-Quantitative Assays

| Performance Characteristic | Minimum Sample Number | Sample Type & Composition | Experimental Replication | Acceptance Criteria |
|---|---|---|---|---|
| Accuracy [2] | 20 | Clinically relevant isolates; mix of positive and negative samples. | Single test per sample versus comparative method. | Meets manufacturer's claims or director-defined percentage agreement. |
| Precision [2] | 2 positive, 2 negative | Controls or clinical samples; range of values for semi-quantitative. | Triplicate testing over 5 days by 2 operators. | Meets manufacturer's claims or director-defined percentage agreement/CV. |
| Reportable Range [2] | 3 | Known positive samples; near cutoff values for semi-quantitative. | Single test per sample. | All results fall within established reportable parameters. |
| Reference Range [2] | 20 | De-identified clinical/negative samples representing "normal". | Single test per sample. | Confirmation of manufacturer's range for the local patient population. |
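Because the minimums in Table 1 are easy to lose track of as a draft plan changes, they can be encoded as a small lookup and checked automatically. The Python sketch below does only that. The dictionary keys are hypothetical labels, and meeting the minimums does not by itself show that the sample set is clinically appropriate.

```python
from typing import Dict

# Minimum sample numbers transcribed from Table 1; precision is split into its two parts
TABLE1_MINIMUMS: Dict[str, int] = {
    "accuracy": 20,
    "precision_positive": 2,
    "precision_negative": 2,
    "reportable_range": 3,
    "reference_range": 20,
}


def shortfalls(planned: Dict[str, int]) -> Dict[str, int]:
    """Additional samples needed per characteristic; an empty dict means every minimum is met."""
    return {
        name: minimum - planned.get(name, 0)
        for name, minimum in TABLE1_MINIMUMS.items()
        if planned.get(name, 0) < minimum
    }


draft = {
    "accuracy": 24,
    "precision_positive": 2,
    "precision_negative": 2,
    "reportable_range": 3,
    "reference_range": 15,
}
print(shortfalls(draft))  # {'reference_range': 5}
```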

Troubleshooting Common Issues

Laboratories may encounter challenges during verification. Here are solutions to common problems:

- Precision Issues: If day-to-day precision fails, check for outliers, repeat the study, select different QC materials, or compare the CV to the current method's performance [27].

- Accuracy Issues: Investigate outliers, recalibrate both assays, or change reagent lots. If high-concentration samples are unavailable, consider spiked samples or historical proficiency testing samples [27].

- Reportable Range Issues: If the range cannot be verified, use different diluents, try a new lot of linearity material, or use serially diluted patient samples. Truncating the analytical measurement range within the approved limits is also an option and is not considered a modification [27].

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful method verification relies on carefully selected materials. The following table details key reagents and resources essential for executing the verification protocols.

Table 2: Essential Research Reagent Solutions for Method Verification

| Reagent / Material | Function in Verification | Application Examples |
|---|---|---|
| Reference Materials & Panels | Serves as a benchmark for accuracy and reportable range studies. | Quantified microbial panels for AST verification; characterized strain panels for molecular assay accuracy [2]. |