A Comprehensive Guide to Validating Template Quality and Quantity for Robust and Reproducible PCR

Abstract

Accurate assessment of DNA template quality and quantity is a critical prerequisite for successful Polymerase Chain Reaction (PCR), directly impacting the sensitivity, specificity, and reliability of results in research and diagnostic applications. This article provides a comprehensive framework for researchers and drug development professionals, covering foundational principles, advanced methodological approaches, systematic troubleshooting, and rigorous validation strategies. By integrating current best practices and emerging technologies like digital PCR, this guide aims to empower scientists to optimize their PCR workflows, overcome common challenges with degraded or complex samples, and ensure data integrity for biomedical and clinical research.

The Critical Role of Template Integrity in PCR Success

Why Template Quality and Quantity are Non-Negotiable for PCR

PCR is a foundational technique in molecular biology, yet its success depends heavily on two pre-analytical factors: the quality and the quantity of the template DNA. Primer design and cycling conditions often receive the most attention, but rigorous validation of the template is the first step toward accurate, reproducible, and efficient results in downstream applications from basic research to drug development. This guide compares the performance of different template preparation methods and qualities, providing a framework for scientists to optimize this crucial parameter.

The Direct Impact of Template on Amplification Efficiency

The integrity and concentration of the DNA template directly influence the kinetics and outcome of the PCR reaction. Suboptimal templates can introduce biases that compromise data integrity, particularly in sensitive applications.

Research demonstrates that sequence-specific factors in the template itself can lead to drastic differences in amplification efficiency during multi-template PCR, a common scenario in next-generation sequencing library prep. In one study, a small subset of sequences (around 2%) exhibited amplification efficiencies as low as 80% relative to the population mean. This minor disadvantage led to their near-complete disappearance from the sequencing data after just 60 cycles, skewing abundance data and potentially leading to false negatives [1].
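
To see how a modest per-cycle disadvantage compounds, the sketch below assumes a simple exponential model in which each template multiplies by (1 + efficiency) every cycle. It also reads "80% relative to the population mean" as an efficiency of 0.8 against a mean of 1.0 (perfect doubling). Both the model and that reading are illustrative assumptions, not the exact method of the cited study [1], and the function name is hypothetical.

```python
def relative_abundance(eff_seq: float, eff_mean: float, cycles: int) -> float:
    """Fold change in a sequence's share of the pool after `cycles` cycles.

    Assumes every template multiplies by (1 + efficiency) per cycle.
    """
    return ((1.0 + eff_seq) / (1.0 + eff_mean)) ** cycles


# Illustrative values only: efficiency 0.8 versus a pool mean of 1.0.
for n in (15, 30, 60):
    print(f"after {n} cycles: {relative_abundance(0.8, 1.0, n):.4f} of starting share")
```

Under these assumptions the disadvantaged sequence keeps roughly 0.2% of its starting share after 60 cycles, which is consistent with the near-complete disappearance described above.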

Furthermore, the physical quality of the template is paramount. The presence of co-purified inhibitors from biological samples—such as humic acid, phenols, or heparin—can directly inhibit polymerase activity. Similarly, carryover EDTA from extraction protocols can chelate the essential Mg²⁺ cofactor, bringing the reaction to a halt [2].

The table below summarizes the core consequences of poor template quality and quantity:

| Parameter | Optimal Range | Consequence of Deviation |
|---|---|---|
| Template Quantity (Human Genomic DNA) | 10 ng (abundant genes) to 100 ng [3] | Too Low: Inadequate amplification, false negatives. Too High: Non-specific amplification, reagent depletion, inhibition [3]. |
| Template Purity (A260/A280 Ratio) | Approximately 1.8 [4] | Low Ratio: Protein contamination, inhibited reactions [2] [4]. |
| Presence of Inhibitors | None | Direct inhibition of DNA polymerase, leading to reaction failure or reduced yield [2]. |
| Template Integrity | High molecular weight, non-degraded | Degraded DNA provides fragmented templates, preventing amplification of long targets [3]. |
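
The mass range in the table can be translated into template copies, which is often the more meaningful quantity. The sketch below is a rough conversion that assumes an average of about 650 g/mol per base pair of double-stranded DNA and a haploid human genome of about 3.1 billion base pairs. Both constants are approximations and the function name is hypothetical.

```python
AVOGADRO = 6.022e23          # molecules per mole
BP_MASS_G_PER_MOL = 650.0    # approximate average mass of one dsDNA base pair
HUMAN_HAPLOID_BP = 3.1e9     # approximate haploid human genome size (assumption)


def genome_copies(mass_ng: float, genome_bp: float = HUMAN_HAPLOID_BP) -> float:
    """Approximate number of genome copies contained in `mass_ng` of dsDNA."""
    grams = mass_ng * 1e-9
    moles = grams / (genome_bp * BP_MASS_G_PER_MOL)
    return moles * AVOGADRO


for ng in (1, 10, 100):
    print(f"{ng} ng is roughly {genome_copies(ng):,.0f} haploid genome copies")
```

With these constants, 1 ng of human genomic DNA corresponds to roughly 300 haploid genome copies, so the 10 to 100 ng range spans on the order of a few thousand to a few tens of thousands of copies of a single-copy target.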

Experimental Comparison: Template Preparation Methods

The method used to generate DNA templates, especially for advanced applications like in vitro transcription (IVT) for mRNA synthesis, has a significant impact on PCR efficiency and final product yield. A systematic comparison between conventional plasmid-derived DNA and PCR-generated DNA templates reveals critical performance differences.

A 2025 study designed a GFP-encoding DNA construct optimized for IVT. Linear DNA templates were prepared using two distinct methods [5]:

- Enzymatic Linearization: Circular DNA plasmid was propagated in bacterial culture, extracted, purified, and digested with a high-fidelity restriction enzyme (HindIII-HF).

- PCR Amplification: A bacteria-free method where linear DNA templates were generated via PCR using a high-fidelity DNA polymerase (PrimeSTAR Max).

The resulting DNA templates from both methods were then used in IVT reactions to synthesize mRNA. The DNA and mRNA yields, as well as the quality and immunogenicity of the final mRNA-LNP vaccines, were rigorously compared [5].

Performance Data and Results

The PCR-based method demonstrated clear advantages in speed and yield while maintaining high-quality output, as summarized in the table below.

| Performance Metric | Plasmid-Derived DNA (Enzymatic Linearization) | PCR-Generated DNA | Experimental Findings |
|---|---|---|---|
| Template Preparation Time | Several days [5] | ~4-6 hours [5] | PCR-based method is significantly faster, eliminating need for bacterial culture [5]. |
| DNA Template Yield | Baseline | ~30% higher [5] | PCR method produced a greater mass of DNA template for IVT [5]. |
| Transcribed mRNA Yield | Baseline | Higher [5] | Increased DNA template yield from PCR method translated to higher mRNA production [5]. |
| Final Product Integrity & Immunogenicity | High-quality mRNA; robust immune response in mice [5] | Equivalent high quality; robust and comparable immune response in mice [5] | Both methods produced mRNA-LNPs with comparable physicochemical properties and efficacy [5]. |

The study concluded that PCR-generated DNA templates offer a rapid, efficient, and cost-effective alternative to plasmid-based methods, without compromising the quality or biological activity of the final product [5].

The Scientist's Toolkit: Essential Reagents for Reliable PCR

A successful PCR relies on a suite of carefully optimized reagents beyond the template itself. The following table details key components and their functions for setting up robust reactions.

| Reagent / Tool | Function & Importance | Optimal Concentration / Type |
|---|---|---|
| DNA Polymerase | Enzyme that synthesizes new DNA strands; choice impacts fidelity, processivity, and specificity. | 0.2-0.5 µL per standard reaction; "Hot Start" versions are recommended to prevent non-specific amplification [3] [4]. |
| Primers | Short DNA sequences that define the start and end of the target amplicon. | 0.1-1.0 µM each; designed with 40-60% GC content and a G or C at the 3' end [3] [4]. |
| dNTPs | Deoxynucleotide triphosphates (dATP, dCTP, dGTP, dTTP); the building blocks for new DNA. | 20-200 µM of each dNTP; equimolar concentrations are critical [3]. |
| Magnesium (Mg²⁺) | Essential cofactor for DNA polymerase activity; concentration dramatically affects efficiency and fidelity. | 1.5-2.0 mM; requires titration as too little reduces yield and too much lowers fidelity [2] [3]. |
| Buffer Additives | Chemicals that help resolve template secondary structures, especially in GC-rich sequences. | DMSO (1-10%), Formamide (1.25-10%), or Betaine; these help even out the stability of GC-rich and AT-rich regions [2] [3]. |

Validating Template Quality: A Practical Workflow

Establishing a standardized workflow for template validation is essential for laboratory rigor and reproducibility. The following protocol outlines the key steps.

Detailed Experimental Protocol for Template Validation

- Quantification: Precisely measure the concentration of the DNA or RNA template using a spectrophotometer (e.g., NanoDrop). Record the concentration in ng/µL [6].

- Purity Assessment: Using the same spectrophotometer, check the absorbance ratios. An A260/A280 ratio of ~1.8 indicates pure DNA, while a lower ratio suggests protein contamination. The A260/A230 ratio should be above 2.0 to indicate freedom from chemical contaminants like salts or phenol [4].

- Integrity Evaluation: Run the template on an agarose gel. Intact genomic DNA should appear as a single, high-molecular-weight band. Degraded DNA will show a smear. For PCR products, a single, sharp band of the expected size should be visible [3].

- Inhibitor Testing (Spike-In Assay): Perform a parallel PCR reaction with a known, well-amplifying control template spiked into the test sample. If the control fails to amplify, it indicates the presence of PCR inhibitors in the sample preparation [2].

- Input Optimization: Set up a series of PCR reactions with a logarithmic dilution series of the template (e.g., 1 ng, 10 ng, 100 ng). Use this to determine the concentration that yields the strongest specific product with the least background, establishing the optimal template input for your specific reaction conditions [3] [4].
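
The quantification and purity steps above can be sketched as a small check on spectrophotometer readings. The sketch uses the standard conversion of about 50 ng/µL per A260 unit for double-stranded DNA at a 1 cm path length. The acceptance window around 1.8 for A260/A280 and all names are illustrative assumptions, so adjust them to your own laboratory's criteria.

```python
from dataclasses import dataclass

DSDNA_NG_PER_UL_PER_A260 = 50.0  # standard dsDNA conversion at 1 cm path length


@dataclass
class Reading:
    a260: float
    a280: float
    a230: float
    dilution_factor: float = 1.0


def assess(reading: Reading, window_280=(1.7, 2.0), min_230=2.0) -> dict:
    """Return concentration, purity ratios, and any purity flags raised."""
    conc = reading.a260 * DSDNA_NG_PER_UL_PER_A260 * reading.dilution_factor
    r280 = reading.a260 / reading.a280
    r230 = reading.a260 / reading.a230
    flags = []
    if r280 < window_280[0]:
        flags.append("low A260/A280: possible protein contamination")
    elif r280 > window_280[1]:
        flags.append("high A260/A280: check for RNA carryover")
    if r230 < min_230:
        flags.append("low A260/A230: possible salt or phenol carryover")
    return {"conc_ng_per_ul": conc, "a260_a280": r280,
            "a260_a230": r230, "flags": flags}


print(assess(Reading(a260=1.0, a280=0.55, a230=0.45)))
```

A sample that raises no flags still needs the integrity, inhibitor, and input-titration steps, since absorbance ratios say nothing about fragmentation or about inhibitors that do not absorb in this range.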

For researchers and drug development professionals, the message is clear: overlooking template quality and quantity introduces an untenable risk to experimental validity. As demonstrated, the choice of template preparation method can significantly impact yield and workflow efficiency, while the presence of inhibitors or degraded material can lead to complete reaction failure. By adopting the systematic validation protocols and performance comparisons outlined here, scientists can ensure their PCR results are a true reflection of biology, not an artifact of poor template preparation. Making template validation a non-negotiable step in every PCR workflow is a fundamental prerequisite for rigorous and reproducible science.

Validating the quality and quantity of nucleic acid templates is a critical prerequisite for generating reliable, reproducible data in polymerase chain reaction (PCR) research. Among the essential parameters, DNA degradation, purity, and copy number stand out as fundamental determinants of experimental success. Failures in accurately defining these metrics can lead to highly variable, inaccurate, and ultimately meaningless results, particularly in complex applications like multi-template PCR [7]. This guide objectively compares the performance of leading methodologies and technologies used to assess these key parameters, providing researchers and drug development professionals with a structured framework for template qualification.

Section 1: DNA Purity Assessment and Cleanup

Defining Purity and Its Impact on Downstream Applications

DNA purity refers to the absence of contaminants that can inhibit enzymatic reactions, including proteins, salts, organic compounds, and other impurities. The presence of these contaminants can significantly compromise PCR efficiency, cloning success, and sequencing reliability. Spectrophotometric ratios (A260/280 and A260/230) serve as standard purity indicators, with ideal values typically around 1.8 and 2.0-2.3, respectively [8].

Comparative Performance of DNA Cleanup Methodologies

While various cleanup methods exist, spin-column-based kits represent a widely used standard in molecular biology workflows. The following table summarizes best practices for maximizing DNA purity and yield using this technology.

Table 1: DNA Cleanup Best Practices for Optimal Purity

| Process Step | Key Actions for Success | Common Pitfalls to Avoid |
|---|---|---|
| Binding | Maintain sample volume between 20-100 µL [8]; use kit-specific binding buffers [8]; add extra alcohol for small fragments (<50 bp) [8] | Do not exceed column binding capacity [8]; avoid skipping incubation steps [8] |
| Washing | Perform all recommended wash steps [8]; centrifuge for full recommended time [8] | Do not allow column tip to contact flow-through [8]; do not rush the washing process [8] |
| Elution | Use recommended elution buffers (e.g., 10 mM Tris, pH 8.5) [8]; pre-warm buffer (50°C) for large fragments (>10 kb) [8]; apply buffer to center of matrix and incubate ≥1 minute [8] | Do not use acidic, nuclease-free water without pH adjustment [8]; avoid shortening incubation times [8] |

Section 2: DNA Degradation Analysis

Quantifying Degradation in Forensic and Research Contexts

DNA degradation involves the fragmentation of nucleic acids, which reduces the effective template copy number available for amplification. In forensic science, the Degradation Index (DI) from quantification kits like the Quantifiler HP provides a valuable metric for estimating DNA fragmentation [9]. Research demonstrates that DI accurately predicts allele detection rates in Short Tandem Repeat (STR) profiling, enabling scientists to adjust input DNA quantities to maximize PCR recovery from compromised samples [9]. It is crucial to note that different degradation patterns (e.g., fragmentation vs. UV irradiation) can differentially impact STR and Y-STR profiles even at identical DI values [9].
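
As a minimal sketch of how a degradation index can be derived, the function below assumes DI is the ratio of the concentration measured with a short amplicon target to the concentration measured with a long amplicon target, so that values near 1 indicate intact DNA and larger values indicate fragmentation. The function name and example concentrations are hypothetical, and the exact targets and interpretation thresholds should be taken from the kit documentation.

```python
def degradation_index(small_target_ng_per_ul: float,
                      large_target_ng_per_ul: float) -> float:
    """Ratio of short-target to long-target concentration (assumed DI definition)."""
    if large_target_ng_per_ul <= 0:
        # Long target not detected: treat as maximally degraded.
        return float("inf")
    return small_target_ng_per_ul / large_target_ng_per_ul


# Hypothetical readings: short target 0.50 ng/µL, long target 0.05 ng/µL.
print(f"DI = {degradation_index(0.50, 0.05):.1f}")
```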

Methodologies for Protein Degradation Assessment

While not directly analogous to DNA degradation, protein degradation studies offer insights into quantitative assessment methodologies. A modified SDS-PAGE technique, which eliminates the standard heating step to prevent additional protein breakdown, can be used to quantify the degree of protein degradation during cleaning process validation for biologics manufacturing [10]. This approach provides good linearity across a wide concentration range (from 5x to 1/80x working concentration) and enables quantitative analysis when paired with gel analysis software [10]. Alternative methods include dual fluorescent reporter systems (e.g., GFP/mCherry) for quantifying cellular protein degradation kinetics in live cells [11].

Section 3: DNA Copy Number Validation

Digital PCR Platforms: A Comparative Analysis

Digital PCR (dPCR) has emerged as a powerful tool for the absolute quantification of gene copy numbers. A 2025 study directly compared the performance of two major dPCR platforms, the QX200 droplet digital PCR (ddPCR) from Bio-Rad and the QIAcuity One nanoplate-based digital PCR (ndPCR) from QIAGEN, using both synthetic oligonucleotides and DNA from the ciliate Paramecium tetraurelia [12].

Table 2: Performance Comparison of dPCR Platforms for Copy Number Analysis

| Performance Parameter | QIAcuity One (ndPCR) | QX200 (ddPCR) |
|---|---|---|
| Limit of Detection (LOD) | 0.39 copies/µL input [12] | 0.17 copies/µL input [12] |
| Limit of Quantification (LOQ) | 1.35 copies/µL input (54 copies/reaction) [12] | 4.26 copies/µL input (85.2 copies/reaction) [12] |
| Dynamic Range | Linear trend from <0.5 to >3000 copies/µL input [12] | Linear trend from <0.5 to >3000 copies/µL input [12] |
| Precision (CV with HaeIII enzyme) | CVs between 1.6% and 14.6% [12] | All CVs < 5% [12] |
| Accuracy (vs. expected copies) | Consistently lower than expected, especially at higher concentrations [12] | Consistently lower than expected, but with slightly better agreement than ndPCR [12] |

The study also highlighted that the choice of restriction enzyme (e.g., HaeIII vs. EcoRI) significantly impacted precision, particularly for the QX200 system [12]. Both platforms demonstrated the ability to generate reproducible, linear copy number estimates from an increasing number of ciliate cells.

dPCR as a Superior Alternative to qPCR and PFGE

For copy number variation (CNV) analysis, ddPCR has been validated as a highly accurate and precise method. When measuring the multiallelic DEFA1A3 gene, ddPCR showed 95% concordance with the gold standard Pulsed Field Gel Electrophoresis (PFGE), with copy numbers differing by only 5% on average [13]. In contrast, quantitative PCR (qPCR) was only 60% concordant with PFGE and underestimated copy numbers by an average of 22% [13]. This establishes ddPCR as a robust, high-throughput, and cost-effective alternative for clinical CNV enumeration.

Alternative and Historical Methods for Copy Number Analysis

Several other techniques exist for copy number assessment, each with distinct advantages and limitations:

- Array-based Comparative Genomic Hybridization (CGH): This method uses differentially labeled test and reference DNAs co-hybridized to arrays. While it revolutionized CNV detection, its resolution is limited by the number and distribution of probes, and it only provides a relative quantification compared to a reference [14].

- Competitive Genomic PCR (CGP): A moderate-throughput PCR-based technique where test and reference DNAs are ligated to different adaptors before competitive PCR. This method allows for high-resolution analysis of specific genomic regions and has demonstrated accurate quantification of low-level copy number changes [15].

Experimental Protocols

Protocol 1: Assessing dPCR Performance for Copy Number Quantification

This protocol is adapted from a cross-platform evaluation of dPCR systems [12].

- Sample Preparation: Prepare serial dilutions of synthetic oligonucleotides or DNA extracted from a model organism (e.g., Paramecium tetraurelia) with known cell counts.

- Restriction Digestion: Digest DNA samples with appropriate restriction enzymes (e.g., HaeIII or EcoRI) to ensure accessibility of target sequences.

- dPCR Setup: Partition the reaction mix according to the platform's specifications (nanoplates for QIAcuity or droplets for QX200) using recommended master mixes and probe-based assays.

- Amplification and Reading: Perform end-point PCR using a thermal cycler protocol optimized for the assay, followed by fluorescence reading (imaging for ndPCR, droplet flow for ddPCR).

- Data Analysis: Use the platform's proprietary software to calculate absolute copy numbers/µL based on Poisson statistics. Determine precision (Coefficient of Variation) and accuracy (deviation from expected values) across replicates.
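
The data analysis step above rests on a Poisson correction: because a partition can hold more than one copy, the mean copies per partition is estimated from the fraction of negative partitions rather than by counting positives directly. The sketch below illustrates that calculation and the coefficient of variation across replicates. The partition volume is an assumption that must be replaced by the value specified for your platform, and the function names are hypothetical, not part of any vendor software.

```python
import math
from statistics import mean, stdev


def copies_per_ul(positive: int, total: int, partition_volume_nl: float) -> float:
    """Poisson-corrected concentration in copies per µL of reaction."""
    if positive >= total:
        raise ValueError("all partitions positive: sample too concentrated")
    lam = -math.log(1.0 - positive / total)    # mean copies per partition
    return lam / (partition_volume_nl * 1e-3)  # convert nL to µL


def cv_percent(values) -> float:
    """Coefficient of variation across replicate measurements, in percent."""
    return 100.0 * stdev(values) / mean(values)


# Hypothetical replicate wells; 0.85 nL per droplet is an assumed volume.
replicates = [copies_per_ul(p, 20000, 0.85) for p in (1510, 1482, 1533)]
print([round(c, 1) for c in replicates], f"CV = {cv_percent(replicates):.1f}%")
```

Note that this yields copies per µL of reaction; converting to copies per µL of input sample requires multiplying by the ratio of reaction volume to template volume.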

Protocol 2: Modified SDS-PAGE for Protein Degradation Quantification

This protocol is used to quantify the degree of protein degradation, relevant for cleaning validation in biologics [10].

- Gel Preparation: Construct polyacrylamide gels (e.g., 7.5% for antibodies) using a gel preparation kit for optimal consistency.

- Sample Preparation: Mix protein samples with SDS-PAGE loading buffer. Crucially, omit the standard 90-95°C heating step to avoid confounding the analysis with heat-induced degradation.

- Electrophoresis: Load samples alongside a molecular weight marker and run the gel at constant voltage until adequate separation is achieved.

- Staining and Analysis: Stain the gel with Coomassie Blue or a similar stain. Use gel analysis software (e.g., GelAnalyzer) to calculate the molecular weight and volume of protein bands, which allows for quantification of degradation residues.

Visualized Workflows and Pathways

DNA Copy Number Analysis Decision Workflow

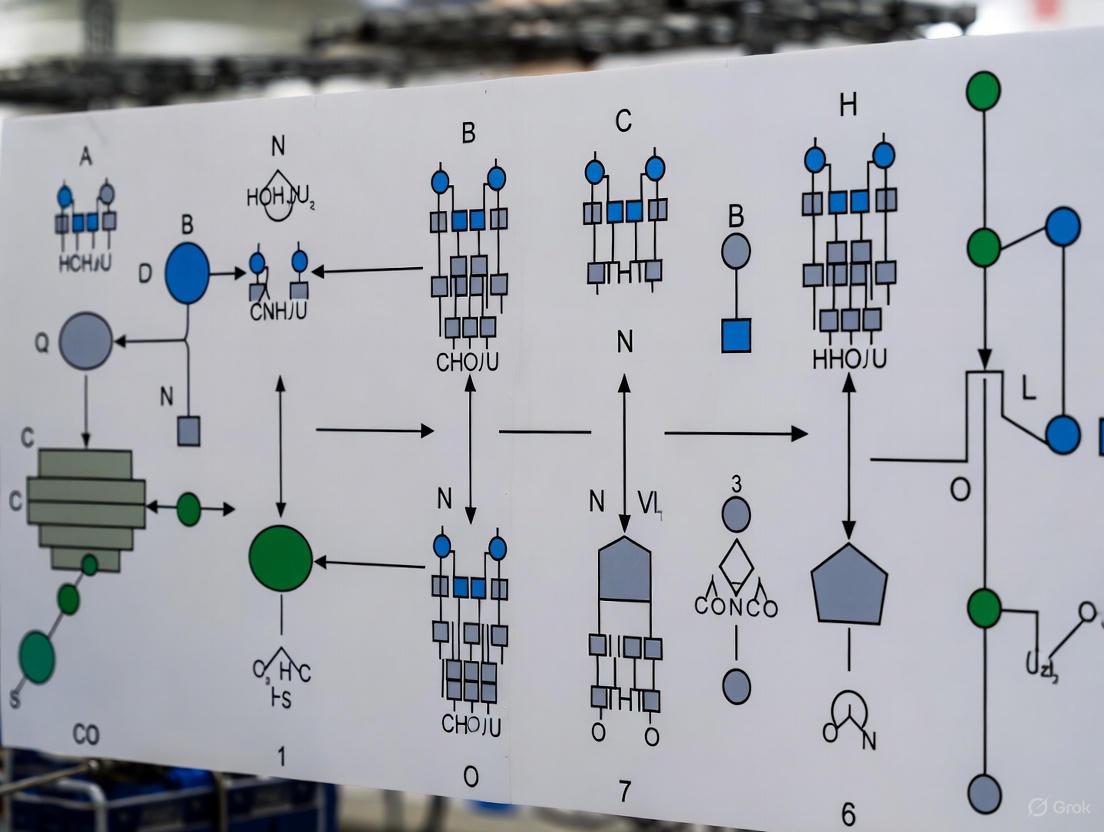

The following diagram outlines a logical pathway for selecting the appropriate methodology based on research goals, resources, and required resolution.

Research Reagent Solutions

The following table details key materials and reagents essential for the experiments and analyses described in this guide.

Table 3: Essential Research Reagents for Template Quality Assessment

| Reagent / Kit | Primary Function | Key Features / Applications |
|---|---|---|
| Monarch Spin PCR & DNA Cleanup Kit (NEB #T1130) [8] | Purifies DNA from PCR reactions. | Binds up to 5 µg DNA; elution in 5-20 µL; effective for fragments from 50 bp to 25 kb. |
| Quantifiler HP DNA Quantification Kit [9] | Quantifies human DNA and assesses degradation. | Provides a Degradation Index (DI) to guide PCR input for degraded forensic samples. |
| QX200 Droplet Digital PCR System (Bio-Rad) [12] [13] | Absolutely quantifies DNA copy number. | Partitions samples into ~20,000 droplets; high concordance with PFGE for CNV analysis. |
| QIAcuity One Digital PCR System (QIAGEN) [12] | Absolutely quantifies DNA copy number. | Nanoplate-based partitioning; high precision comparable to droplet-based systems. |
| Restriction Enzyme HaeIII [12] | Digests DNA prior to copy number analysis. | Can improve precision in dPCR assays for certain targets compared to other enzymes (e.g., EcoRI). |

The rigorous validation of template DNA through the assessment of its degradation, purity, and copy number is not merely a preliminary step but a cornerstone of reliable molecular research. As evidenced by comparative studies, technological advancements like dPCR offer superior accuracy and precision for copy number quantification compared to traditional qPCR, approaching the gold-standard reliability of PFGE but with much higher throughput [12] [13]. Similarly, standardized cleanup protocols and the strategic use of metrics like the Degradation Index are critical for managing sample purity and integrity [8] [9]. By systematically applying the methodologies and comparisons outlined in this guide, researchers can make informed, evidence-based decisions to ensure their data is both robust and reproducible, thereby strengthening the foundation of scientific discovery and diagnostic development.

The integrity of the DNA template is a foundational element governing the success and reliability of any polymerase chain reaction (PCR). Suboptimal template quality or quantity introduces significant biases and errors that propagate through molecular workflows, compromising data integrity in research, diagnostics, and drug development. Within the broader thesis of validating template quality and quantity for PCR research, this guide objectively compares the performance outcomes associated with optimal versus suboptimal templates. We synthesize current experimental data to delineate the consequences—false negatives, inaccurate quantification, and non-specific amplification—across various PCR applications. The focus is on providing researchers and scientists with a clear, evidence-based comparison of how template-related parameters influence key performance metrics, supported by detailed methodologies and empirical findings.

False Negatives: Erosion of Diagnostic and Detection Sensitivity

False negative results, where a target sequence is present but not amplified, represent a critical failure, especially in clinical diagnostics and pathogen detection. Experimental data consistently show that sequence mismatches between the template and PCR primers are a primary cause.

Experimental Data on Mismatch-Induced Amplification Failure

A comprehensive study investigating SARS-CoV-2 PCR assays provides quantitative evidence on how mismatches lead to false negatives. The research tested 16 different diagnostic assays against over 200 synthetic templates engineered with naturally occurring mutations [16].

Table 1: Impact of Primer-Template Mismatches on PCR Efficiency

| Mismatch Characteristic | Impact on Cycle Threshold (Ct) | Effect on Amplification Efficiency | Experimental Findings |
|---|---|---|---|
| Single mismatch >5 bp from 3' end | Minor shift (<1.5 cycles) | Moderate reduction; often tolerated | Most assays performed without drastic reduction [16] |
| Single mismatch at 3' end | Severe shift (>7.0 cycles) | Substantial reduction or reaction failure | Specific mismatches (A-A, G-A, A-G, C-C) show greatest impact [16] |
| Multiple mismatches (≥4) | Complete reaction blocking | PCR amplification effectively blocked | Complete inhibition observed, leading to false negatives [16] |
| Critical residue variations | Variable Ct shifts depending on position | Can cause false negatives in specific assays | Identified critical positions and mutation types that most impact performance [16] |
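
The Ct shifts in the table are easier to interpret when converted to an apparent loss of detectable template. The sketch below assumes ideal exponential amplification, where each cycle multiplies the product by (1 + efficiency), so a delay of ΔCt cycles corresponds to a (1 + efficiency)^ΔCt fold reduction. The function name is hypothetical and the default efficiency of 1.0 (perfect doubling) is an idealization.

```python
def fold_reduction(delta_ct: float, efficiency: float = 1.0) -> float:
    """Apparent fold loss of template signal implied by a Ct delay."""
    return (1.0 + efficiency) ** delta_ct


for shift in (1.5, 7.0):
    print(f"delta Ct {shift}: about {fold_reduction(shift):.1f}-fold reduction")
```

Under this idealization, a 1.5-cycle shift is a loss of a little under 3-fold, while a 7-cycle shift is a 128-fold loss, enough to push a low-titer sample past the assay's cutoff.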

Experimental Protocol: Validating In Silico Predictions of False Negatives

The wet lab testing methodology from the SARS-CoV-2 study provides a robust protocol for assessing mismatch impact [16]:

- Assay Selection and Template Design: Sixteen EUA-authorized SARS-CoV-2 PCR assays were selected based on their genomic distribution. Over 200 synthetic DNA templates were designed to mirror naturally occurring viral variants, introducing specific mismatches within primer and probe binding sites.

- PCR Amplification and Data Collection: Each assay was run against the full panel of mutant templates using standardized reaction conditions. Key metrics recorded included:

  - Cycle threshold (Ct) values across multiple template concentrations

  - Amplification efficiency calculations

  - Change in melting temperature (ΔTm) of primer-template hybrids

  - Y-intercept values from standard curves

- Performance Analysis: The impact of mismatches was quantified by comparing the Ct values, efficiencies, and y-intercepts of mutant templates to the perfectly matched wild-type control. The data was used to identify critical residues and mismatch types that most severely impacted assay performance.
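
The efficiency and y-intercept metrics listed above are conventionally obtained from a standard curve: Ct is regressed on log10 of template input, and efficiency follows from the slope as 10^(-1/slope) - 1. The sketch below shows that calculation with a plain least-squares fit. The dilution series is made-up illustrative data and the function name is hypothetical.

```python
import math


def standard_curve(copies, ct_values):
    """Fit Ct = slope * log10(copies) + intercept; return slope, intercept, efficiency."""
    x = [math.log10(c) for c in copies]
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(ct_values) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, ct_values))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    efficiency = 10 ** (-1.0 / slope) - 1.0  # 1.0 corresponds to perfect doubling
    return slope, intercept, efficiency


# Hypothetical 10-fold dilution series for a perfectly matched template.
copies = [1e2, 1e3, 1e4, 1e5]
cts = [33.4, 30.0, 26.7, 23.4]
slope, intercept, eff = standard_curve(copies, cts)
print(f"slope={slope:.2f} intercept={intercept:.1f} efficiency={eff:.2f}")
```

Running the same fit on a mutant template and comparing its intercept and efficiency with the wild-type values gives the kind of per-mismatch comparison described in the performance analysis step.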

This experimental approach confirmed that while many assays are robust to single mismatches, specific critical positions can lead to signature erosion and false negative results, validating in silico predictions [16].

Figure 1: Pathway to False Negative Results from Template-Primer Mismatches.

Inaccurate Quantification: Skewed Abundance Data in Multi-Template PCR

In applications requiring precise nucleic acid quantification—such as gene expression analysis, microbiome studies, and DNA data storage—template-dependent amplification bias systematically distorts abundance measurements.

Deep Learning Reveals Sequence-Specific Amplification Bias

Research employing synthetic DNA pools and deep learning has quantitatively demonstrated how amplification efficiency varies significantly by sequence, independent of traditional factors like GC content [1]. In a serial amplification experiment tracking 12,000 random sequences over 90 PCR cycles, a progressive skewing of coverage distribution occurred. A small subset of sequences (~2%) exhibited very poor amplification efficiency (as low as 80% relative to the mean), causing their effective disappearance from the pool after 60 cycles [1]. This bias was reproducible and persisted even when sequences were constrained to 50% GC content, indicating that other sequence-specific factors are at play.

Digital PCR Platform Performance in Accurate Quantification

Digital PCR (dPCR) offers a pathway to more absolute quantification, but platform choice and experimental setup influence precision and accuracy. A 2025 study compared the QX200 droplet digital PCR (ddPCR) from Bio-Rad with the QIAcuity One nanoplate digital PCR (ndPCR) from QIAGEN using synthetic oligonucleotides and DNA from the ciliate Paramecium tetraurelia [12].

Table 2: Performance Comparison of Digital PCR Platforms

| Performance Metric | QIAcuity One ndPCR (QIAGEN) | QX200 ddPCR (Bio-Rad) | Experimental Context |
|---|---|---|---|
| Limit of Detection (LOD) | 0.39 copies/µL input | 0.17 copies/µL input | Synthetic oligonucleotides [12] |
| Limit of Quantification (LOQ) | 1.35 copies/µL input | 4.26 copies/µL input | Synthetic oligonucleotides [12] |
| Accuracy (Deviation from Expected) | Consistently lower than expected | Consistently lower than expected, but slightly better agreement than ndPCR | Across dilution series of synthetic oligonucleotides [12] |
| Precision (Coefficient of Variation) | 7-11% (above LOQ) | 6-13% (above LOQ) | Across dilution series of synthetic oligonucleotides [12] |
| Impact of Restriction Enzyme (EcoRI) | CV: 0.6% - 27.7% | CV: 2.5% - 62.1% | DNA from 10-100 ciliate cells; high variability [12] |
| Impact of Restriction Enzyme (HaeIII) | CV: 1.6% - 14.6% | CV: <5% for all cell numbers | DNA from 10-100 ciliate cells; improved precision [12] |

Experimental Protocol: Tracking Amplification Bias with Synthetic Pools

The protocol for quantifying sequence-specific amplification efficiency is as follows [1]:

- Synthetic DNA Pool Design and Synthesis: A pool of 12,000 random DNA sequences is synthesized, each flanked by common adapter sequences (e.g., truncated Truseq adapters) for priming.

- Serial PCR Amplification: The pool is subjected to multiple consecutive PCR reactions (e.g., 6 reactions of 15 cycles each). An aliquot is taken for sequencing after each reaction series to track the changing abundance of every sequence over a total of up to 90 cycles.

- Sequencing and Coverage Analysis: High-throughput sequencing is performed on each aliquot. The read coverage for each sequence is normalized and analyzed across time points.

- Efficiency Calculation: For each sequence, a simple exponential model is fit to its coverage data over the PCR cycles. The model yields two parameters: the initial synthesis bias and the sequence-specific amplification efficiency (ε). Sequences with efficiencies significantly below the population mean are identified as poorly amplifying.

This method revealed that specific sequence motifs adjacent to priming sites, which can lead to mechanisms like adapter-mediated self-priming, are major contributors to poor amplification efficiency [1].
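
The efficiency calculation step in the protocol above can be approximated with a log-linear least-squares fit of each sequence's normalized coverage against cycle number. This is a simplified stand-in chosen for illustration, not necessarily the fitting procedure used in the study [1]. Because coverage is normalized to the pool, the fitted per-cycle ratio is relative to the population mean. All names and data are hypothetical.

```python
import math


def fit_relative_efficiency(cycles, rel_coverage):
    """Fit rel_coverage = bias * ratio**cycles on a log scale.

    Returns (bias, ratio): the initial synthesis bias and the per-cycle
    amplification ratio relative to the pool mean.
    """
    y = [math.log(c) for c in rel_coverage]
    n = len(cycles)
    mean_x, mean_y = sum(cycles) / n, sum(y) / n
    sxx = sum((x - mean_x) ** 2 for x in cycles)
    sxy = sum((x - mean_x) * (yi - mean_y) for x, yi in zip(cycles, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return math.exp(intercept), math.exp(slope)


# Hypothetical sequence that starts at 1.2x mean coverage and loses 10% per cycle.
cycles = [15, 30, 45, 60]
coverage = [1.2 * 0.9 ** c for c in cycles]
bias, ratio = fit_relative_efficiency(cycles, coverage)
print(f"initial bias={bias:.2f}, per-cycle ratio={ratio:.2f}")
```

Sequences whose fitted ratio falls well below 1 are the candidates for poor amplification; separating the intercept from the slope keeps synthesis bias from being mistaken for amplification bias.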

Non-Specific Amplification and Allelic Dropout: Challenges in Forensic and Low-Template PCR

The analysis of degraded or low-concentration DNA templates, common in forensic science and ancient DNA studies, introduces artifacts like allelic dropout and non-specific amplification, complicating profile interpretation.

Reduced PCR Volume and Its Impact on Low Template DNA

A 2025 study on forensic genetics evaluated the effects of reducing PCR volumes (from a standard 25 µL down to 3 µL) when analyzing low template DNA (LTDNA) samples using GlobalFiler and Yfiler Plus kits [17]. The results demonstrated that for controlled samples with sufficient DNA, complete genetic profiles could be obtained even at drastically reduced volumes of 12, 6, or 3 µL. However, for true LTDNA samples (0.01 ng/µL), reducing the amplification volume led to a proportional increase in allelic dropouts. The study concluded that the absolute amount of available DNA is the limiting factor, not the reaction volume itself [17].

Experimental Protocol: Allelic Dropout Assessment in Reduced Volume PCR

The forensic protocol for testing the limits of LTDNA analysis is detailed below [17]:

- Sample Preparation: Controlled samples (commercial positive control DNA) and low template DNA samples (e.g., extracted from blood swabs and quantified to 0.01-0.02 ng/µL) are prepared.

- PCR Amplification at Various Volumes: The same DNA extracts are amplified in replicate reactions across different total reaction volumes: 25 µL (standard), 12 µL, 6 µL, and 3 µL. The biochemical ratios of reagents to DNA are kept constant.

- Capillary Electrophoresis: Amplification products are separated and detected via capillary electrophoresis (e.g., on a 3500 Genetic Analyzer).

- Profile Analysis and Artifact Scoring: The resulting genetic profiles are analyzed for:

  - Allelic Dropout: The failure to amplify one allele at a heterozygous locus, recorded per locus and per reaction.

  - Drop-in: The sporadic, random amplification of an allele not present in the true profile, typically from contamination.

  - Profile Completeness: The percentage of expected alleles successfully detected.

  - Peak Height and Balance: The fluorescence intensity of alleles and the balance between heterozygous alleles.

This protocol establishes the boundaries of reliable analysis for challenging forensic samples and highlights the stochastic effects associated with minimal template amounts [17].
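
The stochastic effects mentioned above can be illustrated with a simple sampling model. The sketch assumes about 6.6 pg of DNA per diploid human genome, one copy of each heterozygous allele per genome, and Poisson sampling of molecules into the reaction, so the chance that an allele is entirely absent is exp(-expected copies). The template fraction of 40% of the reaction volume is a hypothetical value, and the model gives only a lower bound on dropout because it ignores amplification and detection losses.

```python
import math

DIPLOID_GENOME_PG = 6.6  # approximate mass of one diploid human genome (assumption)


def expected_allele_copies(conc_ng_per_ul: float, template_volume_ul: float) -> float:
    """Expected copies of one heterozygous allele added to the reaction."""
    mass_pg = conc_ng_per_ul * template_volume_ul * 1000.0
    return mass_pg / DIPLOID_GENOME_PG


def sampling_dropout_probability(allele_copies: float) -> float:
    """Poisson probability that zero copies of the allele were pipetted."""
    return math.exp(-allele_copies)


TEMPLATE_FRACTION = 0.4  # hypothetical share of the reaction volume that is template
for total_ul in (25, 12, 6, 3):
    copies = expected_allele_copies(0.01, total_ul * TEMPLATE_FRACTION)
    p = sampling_dropout_probability(copies)
    print(f"{total_ul} µL reaction: {copies:.1f} copies, sampling dropout {p:.3g}")
```

Because reagent-to-DNA ratios are held constant, shrinking the reaction shrinks the number of template molecules with it, which is why dropout rises as volume falls even though the concentration is unchanged.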

Figure 2: Analysis Challenges and Artifacts in Low Template DNA PCR.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents and Materials for PCR Template Quality Assessment

| Reagent/Material | Primary Function | Application Example |
|---|---|---|
| Synthetic Oligonucleotide Pools | Provides a defined, diverse template set for quantifying sequence-specific bias and training prediction models. | Deep learning model training to predict amplification efficiency from sequence [1]. |
| Digital PCR Platforms (ddPCR/ndPCR) | Enables absolute quantification of nucleic acids by partitioning reactions, reducing the impact of amplification efficiency differences. | Precise gene copy number estimation in environmental samples; cross-platform performance validation [12]. |
| Restriction Enzymes (e.g., HaeIII) | Digests DNA to break up complex structures or tandem repeats, improving primer access and amplification uniformity. | Enhanced precision in gene copy number quantification of ciliate DNA, especially for ddPCR [12]. |
| Commercial Multiplex PCR Kits (e.g., GlobalFiler) | Optimized reagent mixtures for simultaneous amplification of multiple loci, critical for complex sample types. | Standardized and optimized profiling of forensic and low-template DNA samples [17]. |
| Unique Molecular Identifiers (UMIs) | Molecular barcodes added to templates pre-amplification to tag and track original molecules, correcting for amplification bias and duplicates. | Mitigating skewed abundance data in quantitative sequencing applications [1]. |

The experimental data and comparisons presented underscore that template quality and quantity are not mere preliminary parameters but are active determinants of PCR performance across diverse fields. False negatives, driven by primer-template mismatches, compromise diagnostic reliability. Amplification biases, revealed through deep learning and synthetic pool experiments, invalidate quantitative conclusions in multi-template applications. Furthermore, the stochastic artifacts of allelic dropout and non-specific amplification in low-template analyses pose significant interpretation challenges. A comprehensive validation strategy must therefore integrate template quality assessment, platform-specific performance understanding, and robust experimental design, including the use of digital PCR, UMIs, and optimized protocols, to ensure the generation of rigorous and reproducible data.

Validating the quality and quantity of nucleic acid templates is a critical prerequisite for successful polymerase chain reaction (PCR) research. Compromised templates remain a significant source of experimental variability, leading to reduced sensitivity, quantification inaccuracies, and complete amplification failure. This guide objectively examines the primary sources of template compromise—degradation, inhibitors, and complex matrices—and compares the performance of relevant PCR technologies and methodologies in overcoming these challenges.

Understanding Template Degradation

Template degradation involves the fragmentation of high-molecular-weight DNA into smaller pieces. This occurs through enzymatic, chemical, or physical processes that break the phosphodiester backbone of nucleic acids.

Causes and Consequences

DNA degradation is a progressive process influenced by multiple factors [18]:

- Environmental Exposure: Ultraviolet radiation, fluctuating temperatures, and pH shifts.

- Sample Handling: Repeated freezing and thawing of DNA samples, leaving them at room temperature, or exposure to heat and physical shearing.

- Sample Origin: DNA extracted from formalin-fixed, paraffin-embedded (FFPE) tissue samples is notoriously degraded.

- Inadequate Purification: Inefficient purification that leaves residual nucleases active.

The critical consequence of degradation is the reduction in amplifiable template. As the average size of the DNA fragments approaches the length of the target amplicon, the probability of having an intact template spanning the entire region drops significantly. Research demonstrates that nucleotide incorporation initially increases with moderate DNA fragmentation but then declines sharply when the DNA becomes highly degraded [19]. In forensic and clinical settings, this manifests as allelic dropout—the failure to detect an allele in a genetic profile because the DNA template is broken within the amplicon region [17].
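A rough way to see why longer amplicons fail first is a random-breakage model. The sketch below assumes that strand breaks fall independently and uniformly along the DNA, so the chance that a target of a given length is unbroken is approximately exp(-amplicon length / mean fragment length). The model, the function name `fraction_intact`, and the example fragment sizes are illustrative assumptions and are not taken from the cited studies.

```python
import math


def fraction_intact(amplicon_bp, mean_fragment_bp):
    """Approximate fraction of target copies with no break inside the amplicon.

    Assumes breaks occur independently with probability 1/mean_fragment_bp
    per base, which is a simplification of real degradation processes.
    """
    if amplicon_bp <= 0 or mean_fragment_bp <= 0:
        raise ValueError("lengths must be positive")
    return math.exp(-amplicon_bp / mean_fragment_bp)


# Compare a short and a long amplicon across increasingly degraded templates.
for mean_len in (10000, 1000, 300, 150):
    short = fraction_intact(100, mean_len)
    long_ = fraction_intact(400, mean_len)
    print(f"mean fragment {mean_len:>5} bp: "
          f"100 bp target intact {short:.2f}, 400 bp target intact {long_:.2f}")
```

Under these assumptions, a template with a mean fragment length near the amplicon length retains only about a third of its amplifiable copies, which is consistent with the preference for short amplicons on degraded samples.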

Detection and Assessment

Gel electrophoresis is a fundamental method for assessing degradation. Intact genomic DNA appears as a tight, high-molecular-weight band, while degraded DNA shows a characteristic smear toward lower molecular weights [18]. The degree of smearing correlates with the extent of fragmentation.

The Problem of PCR Inhibitors

PCR inhibitors are substances that co-purify with nucleic acids and interfere with the amplification process. They can originate from the sample itself, the collection method, or reagents used during extraction and purification [20].

Table 1: Common PCR Inhibitors and Their Sources

| Inhibitor Category | Specific Examples | Common Sources |

|---|---|---|

| Blood Components | Hemoglobin, Immunoglobulin G (IgG), Lactoferrin | Blood, tissue samples [21] |

| Soil Components | Humic Acid, Fulvic Acid | Soil, sediment, plants [21] |

| Food Components | Polyphenols, Fats, Bile Salts | Various food matrices [22] |

| Extraction Reagents | Phenol, Ethanol, Sodium Dodecyl Sulfate (SDS), EDTA | Laboratory purification processes [20] |

The mechanisms of inhibition are diverse [21]:

- Enzyme Inactivation: Inhibitors like phenol and IgG can bind directly to the DNA polymerase, disrupting its activity.

- Nucleic Acid Binding: Substances such as humic acid and polyphenols interact with the DNA template, preventing its denaturation or the primer annealing step.

- Cofactor Sequestration: Chelating agents like EDTA bind magnesium ions (Mg²⁺), which are essential cofactors for DNA polymerase activity [23] [20].

Impact on Different PCR Technologies

Digital PCR (dPCR) demonstrates greater resilience to inhibitors compared to quantitative real-time PCR (qPCR). Because dPCR relies on end-point, binary measurements rather than amplification kinetics, it provides more accurate quantification in the presence of inhibitors that delay amplification [21]. Studies show that complete inhibition occurs at significantly higher concentrations of humic acid in dPCR compared to qPCR [21]. The partitioning step in dPCR may also reduce the local concentration of inhibitors in reaction chambers containing DNA templates, facilitating more efficient amplification [21].
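The end-point, binary readout translates into a concentration through a Poisson correction: if a fraction p of partitions is positive, the mean number of copies per partition is -ln(1 - p). The sketch below applies that standard calculation. The function name, the droplet counts, and the nominal 1 nL partition volume are assumptions for illustration; instrument-specific partition volumes should be substituted in practice.

```python
import math


def dpcr_copies_per_ul(positive, total, partition_volume_nl=1.0):
    """Estimate target copies per microliter of reaction from dPCR counts.

    Uses the Poisson relation lambda = -ln(1 - p), where p is the fraction
    of positive partitions and lambda the mean copies per partition.
    """
    if total <= 0 or not 0 <= positive < total:
        raise ValueError("need 0 <= positive < total (saturated runs cannot be quantified)")
    p = positive / total
    copies_per_partition = -math.log(1.0 - p)
    partition_volume_ul = partition_volume_nl * 1e-3
    return copies_per_partition / partition_volume_ul


# Hypothetical run: 5,000 positive partitions out of 20,000.
estimate = dpcr_copies_per_ul(5000, 20000)
print(f"{estimate:.1f} copies/uL of reaction")
```

Because the estimate depends only on whether each partition crossed the threshold, a delayed but still detectable amplification in an inhibited partition does not change the count, which is the property the paragraph above describes.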

Challenges of Complex Sample Matrices

Complex matrices like food, soil, and forensic samples present a dual challenge: they often contain low amounts of target DNA amidst a high background of non-target DNA and potent PCR inhibitors.

Food Matrices

Pathogen detection in food is complicated by the presence of PCR inhibitors from the food itself and the inherent low pathogen levels. For instance, washes from foods like bean sprouts, cilantro, and beef can contain compounds that suppress amplification [22]. Without effective template preparation, enrichment cultures are often necessary to increase target concentration, adding time and complexity.

Forensic and Environmental Matrices

Forensic samples from crime scenes may contain traces of human DNA mixed with inhibitors from soil, fabric dyes, or other environmental contaminants [21]. Similarly, environmental samples targeting microbiota or pathogens are rich in humic and fulvic acids, which are major inhibitors [21].

Comparative Experimental Data and Solutions

Template Preparation Methods

Effective template preparation is the first line of defense. FTA filter cards provide a universal method for rapid template preparation from complex samples. These cards are impregnated with chelators and denaturants that lyse microbial cells on contact, sequestering and preserving DNA within the membrane while allowing washes to remove PCR inhibitors [22].

Table 2: Sensitivity of PCR Detection from Pure Cultures Using FTA Filters vs. Boiling

| Bacterial Species | Target Gene | Detection Limit (FTA Filter) | Detection Limit (Boiling) |

|---|---|---|---|

| Shigella flexneri | ipaH | 40 CFU | 40 CFU |

| Salmonella enterica | invA | 30 CFU | 30 CFU |

| Listeria monocytogenes | hemolysin | 200 CFU | >200 CFU* |

*Boiling is less effective for lysing gram-positive bacteria like Listeria, highlighting a limitation of simple lysis methods [22].

When applied to foods artificially contaminated with S. flexneri, the FTA filter method enabled consistent detection with similar sensitivity to pure cultures, effectively overcoming PCR interference from the food matrices [22].

PCR Volume Optimization

For low-template DNA (LTDNA) samples, such as those encountered in forensic science, reducing PCR volume is a strategy to improve sensitivity. A study comparing the GlobalFiler and Yfiler Plus kits found that reducing amplification volumes from a standard 25 µL down to 12, 6, or 3 µL while maintaining biochemical ratios could yield complete genetic profiles from optimal control DNA [17].

However, for true low-template DNA extracts, the key limiting factor is the absolute amount of DNA available, not the volume. The same study reported that volume reduction in low-concentration DNA extracts proportionally increased the incidence of allelic dropout [17]. This indicates that while volume reduction can be a useful optimization tool, it cannot compensate for insufficient template quantity.
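A back-of-the-envelope calculation shows why volume reduction cannot rescue a low-concentration extract. The sketch below assumes roughly 6.6 pg of DNA per human diploid genome, a template volume that is a fixed fraction of the reaction volume (0.6 here, a hypothetical value chosen only to keep ratios constant), and Poisson sampling of template molecules. It estimates only the chance that an allele is absent from the tube altogether; real dropout rates are higher because amplification of very few copies is itself stochastic.

```python
import math

PG_PER_DIPLOID_GENOME = 6.6  # approximate mass of one human diploid genome


def allele_copies(conc_ng_per_ul, reaction_ul, template_fraction=0.6):
    """Expected copies of one allele at a heterozygous locus in the reaction.

    template_fraction is the assumed share of the reaction volume that is
    DNA extract; each diploid genome contributes one copy of each allele.
    """
    template_pg = conc_ng_per_ul * 1000.0 * reaction_ul * template_fraction
    return template_pg / PG_PER_DIPLOID_GENOME


def p_allele_absent(mean_copies):
    """Poisson probability that zero copies of the allele were pipetted."""
    return math.exp(-mean_copies)


for volume in (25, 12, 6, 3):
    n = allele_copies(0.01, volume)
    print(f"{volume:>2} uL reaction: ~{n:4.1f} copies per allele, "
          f"P(allele absent) = {p_allele_absent(n):.2e}")
```

Under these assumptions the expected copy number falls in direct proportion to the reaction volume, so the smallest reactions start from only a handful of molecules per allele.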

Platform Performance: dPCR vs. qPCR

Digital PCR platforms offer advantages for challenging samples, and different systems have been rigorously compared.

Table 3: Comparison of Two Digital PCR Platforms for GMO Quantification

| Validation Parameter | Bio-Rad QX200 (ddPCR) | Qiagen QIAcuity (ndPCR) |

|---|---|---|

| Technology | Droplet-based (water-oil emulsion) | Nanoplate-based (microfluidic chips) |

| Partitioning | ~20,000 droplets of 1 nL | 26,000 partitions of 1 nL |

| Limit of Detection (LOD) | ~0.17 copies/µL input [12] | ~0.39 copies/µL input [12] |

| Limit of Quantification (LOQ) | ~4.26 copies/µL input [12] | ~1.35 copies/µL input [12] |

| Impact of Restriction Enzyme | Precision significantly improved with HaeIII vs. EcoRI [12] | Precision less affected by choice of restriction enzyme [12] |

| Key Finding | Duplex assays equivalent to singleplex qPCR; suitable for collaborative trial validation [24] | Duplex assays equivalent to singleplex qPCR; suitable for collaborative trial validation [24] |

A separate study comparing the same two platforms for quantifying gene copy numbers in protists found both achieved high precision, with the QX200 ddPCR system showing a slightly better agreement with expected values for synthetic oligonucleotides [12]. Both platforms showed a strong linear response when quantifying DNA from increasing cell numbers of Paramecium tetraurelia.

Experimental Protocols for Validation

Protocol: Assessing Template Degradation via Gel Electrophoresis

This protocol helps determine if DNA fragmentation is a source of PCR failure [18].

- Prepare a 1% Agarose Gel: Dissolve 1 g of agarose in 100 mL of 0.5x TBE buffer. Add ethidium bromide (0.2 µg/mL) or a similar DNA stain after cooling.

- Load Samples: Mix 1–2 µL of DNA extract with 6x loading dye. Load alongside a DNA molecular weight ladder.

- Electrophorese: Run the gel at 5-6 V/cm until the dye front has migrated sufficiently.

- Visualize: Image the gel under UV light. A single, tight high-molecular-weight band indicates intact DNA. A smear descending toward the lower molecular weights indicates degradation.

Protocol: FTA Filter-Based Template Preparation

This method is effective for preparing PCR templates from bacterial cultures or complex food matrices [22].

- Sample Application: Apply a 10 µL aliquot of a bacterial culture or a concentrated food wash to an FTA filter.

- Dry and Lyse: Dry the filter on a heating block at 56°C for 15–20 minutes. The chemicals in the filter lyse cells and denature proteins.

- Wash: To remove inhibitors and cell debris, wash the filter spot twice with 100-200 µL of FTA purification buffer for 2 minutes per wash, followed by two 2-minute washes with 10 mM Tris-HCl (pH 8.0) containing 0.1 mM EDTA.

- Dry and Punch: Air-dry the filter. Use a 6-mm diameter hole punch to remove a disc from the spotted area.

- PCR Amplification: Use the filter disc directly as a template in a PCR reaction.

Workflow Diagram for Troubleshooting Template Compromise

The following diagram outlines a logical workflow for diagnosing and addressing issues related to template compromise.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 4: Essential Reagents and Kits for Managing Template Compromise

| Reagent / Kit | Function / Application | Key Consideration |

|---|---|---|

| FTA Filter Cards | Rapid collection, lysis, and preservation of DNA from complex samples (e.g., food, bacteria). | Effectively removes many PCR inhibitors; template is stable for storage [22]. |

| Inhibitor-Tolerant DNA Polymerases | Engineered polymerases or enzyme blends designed to resist a wide range of inhibitors. | Superior performance with blood, soil, and plant-derived templates compared to standard Taq [21]. |

| PrepFiler BTA Forensic DNA Extraction Kit | Automated extraction of DNA from forensic samples, including those with inhibitors. | Optimized for challenging, low-level DNA samples and integrates with automated systems [17]. |

| Quantifiler Trio DNA Quantification Kit | qPCR-based kit to quantify human DNA and assess PCR inhibition and degradation in a single assay. | Provides a "DNA Degradation Index" and "Inhibition Indicator" to pre-emptively flag sample issues. |

| BSA (Bovine Serum Albumin) | PCR additive that binds to inhibitors like phenolic compounds and IgG, neutralizing their effects [20]. | A simple, cost-effective way to ameliorate inhibition in many sample types. |

| Chelex Resin | Chelating resin used in rapid, simple DNA extraction protocols. | Commonly used in forensic science to bind metal ions that facilitate degradation, but may not remove all inhibitors [21]. |

Successful PCR-based research and diagnostics hinge on the integrity and purity of the starting template. Degradation, inhibitors, and complex matrices represent significant hurdles that can be overcome through a combination of robust sample preparation, informed choice of technology, and careful validation. The experimental data presented here demonstrate that while qPCR remains a powerful workhorse, dPCR platforms offer distinct advantages for absolute quantification in inhibited samples. Methodologies like FTA filter preparation provide a broadly applicable and effective means to purify templates from complex backgrounds. Ultimately, validating template quality and quantity is not a single step but an integrated process, from sample collection to data analysis, that ensures results are both reliable and meaningful.

Advanced Techniques for Quantifying and Qualifying DNA Templates

Accurate DNA quantification is a fundamental requirement in molecular biology, serving as a critical gatekeeper for downstream applications such as polymerase chain reaction (PCR), sequencing, and cloning [25]. The validation of template quality and quantity forms the cornerstone of reliable, reproducible research, particularly in pharmaceutical development and clinical diagnostics where results directly impact drug candidate selection and patient management [26]. Two predominant methodologies have emerged for nucleic acid quantification: spectrophotometry, which measures light absorption, and fluorometry, which detects fluorescent emission from dye-bound DNA [27] [28]. Within the context of PCR research, the choice between these methods significantly impacts data integrity, as each technique possesses distinct strengths and limitations in assessing concentration and purity [29]. This guide provides an objective comparison of these technologies, supported by experimental data, to inform researchers in selecting the optimal quantification approach for their specific applications.

Fundamental Principles: How the Technologies Work

Spectrophotometry: Measuring Light Absorption

Spectrophotometry operates on the Beer-Lambert law, which states that the absorbance of light by a solution is directly proportional to the concentration of the absorbing substance [27]. In nucleic acid quantification, a beam of light at 260 nanometers (nm)—the wavelength at which DNA bases absorb most strongly—is passed through the sample. The instrument measures the amount of light absorbed, which is then used to calculate the DNA concentration [25] [29]. A key advantage of spectrophotometry is its ability to provide purity assessments through absorbance ratios. The 260/280 nm ratio indicates protein contamination (with a value of 1.8 considered pure for DNA), while the 260/230 nm ratio detects organic compound or salt contamination (with a value greater than 1.5 indicating good quality) [25] [29]. However, a significant limitation is its inability to discriminate between double-stranded DNA (dsDNA), single-stranded DNA (ssDNA), and RNA, as all nucleic acids absorb at 260 nm [25].

Fluorometry: Detecting Fluorescent Emission

Fluorometry employs a fundamentally different process based on fluorescence. This three-stage mechanism involves (1) excitation: a fluorophore (DNA-binding dye) absorbs light at a specific wavelength; (2) excited-state lifetime: the fluorophore resides in a transient excited state (typically 1-10 nanoseconds); and (3) emission: the fluorophore returns to its ground state, emitting light at a longer, lower-energy wavelength [28]. This energy difference between excitation and emission is known as the Stokes shift, which is fundamental to the technique's sensitivity as it allows emission photons to be detected against a low background, isolated from excitation photons [28]. Fluorometric DNA quantification uses dyes that selectively bind to dsDNA, such as PicoGreen, and emit fluorescence proportional to the DNA concentration [25] [30]. This specificity for dsDNA is a critical advantage for PCR applications, where the amplifiable template is double-stranded.

Table 1: Core Principles and Instrumentation

| Feature | Spectrophotometry | Fluorometry |

|---|---|---|

| Measurement Principle | Absorbance of UV light at 260 nm [27] | Emitted fluorescence from dye-bound DNA [27] |

| Physical Basis | Beer-Lambert Law [27] | Stokes Shift [28] |

| DNA Specificity | Detects all nucleic acids (dsDNA, ssDNA, RNA) [25] | High specificity for dsDNA with selective dyes [25] [31] |

| Purity Assessment | Yes (260/280 nm and 260/230 nm ratios) [29] | No [29] |

| Key Instrument Components | UV light source, monochromator, sample cuvette, detector [27] | Excitation light source, emission and excitation filters, detector [28] |

The diagram above illustrates the fundamental workflows for both spectrophotometry and fluorometry, highlighting their distinct mechanisms of action.

Performance Comparison: Experimental Data and Findings

Concentration Accuracy and Sample Purity

Multiple studies have systematically compared the performance of spectrophotometric and fluorometric quantification methods, revealing consistent patterns. A 2022 study analyzed seven different DNA samples using both a NanoDrop spectrophotometer and three fluorometric kits (AccuGreen, AccuClear, and Qubit). It found that for most samples, the measured concentration was close to the supplier-specified 10 ng/μL, with no significant variance between analysts. However, a key finding was that the spectrophotometer tended to overestimate DNA concentration compared to fluorometric methods, particularly for fish DNA samples [25].

This overestimation by spectrophotometry is frequently reported in the literature. Research on DNA extracted from processed foods found that "spectrophotometry was found to overestimate, whereas fluorometry underestimated the amount of extracted DNA" when compared to quantitative PCR (qPCR), which measures amplifiable DNA [29]. The overestimation is largely attributed to the fact that spectrophotometry detects all nucleic acids, including any contaminating RNA, ssDNA, and free nucleotides, as well as interference from co-extracted chemicals that also absorb light at 260 nm [25] [29]. In contrast, fluorometry specifically quantifies dsDNA via binding dyes, making it more reflective of the actual amplifiable template in PCR [31].

Impact of DNA Degradation and Complex Matrices

The accuracy of quantification is further complicated when analyzing degraded DNA or samples from complex matrices, such as processed foods. A study on maize DNA degraded by sonication or heat treatment found that the quantification method directly impacted qPCR results for genetically modified (GM) content. qPCR reactions based on spectrophotometric (A260) quantification reported different GM percentages (e.g., 1.14% and 2.15% for sonicated samples) compared to those based on fluorometric quantification (0.861% and 1.74%). The study concluded that fluorometric quantification yielded more accurate GM content determinations at higher concentrations, likely because it provided a more reliable estimate of the amplifiable DNA template added to the qPCR reaction [30] [31].

Furthermore, research on processed foods demonstrated that chemical residues from both the extraction reagents and the food matrix itself contribute to erroneous A260 readings, leading to significant overestimation of DNA concentration. The study concluded that "spectrophotometry is not recommended as a suitable method to determine the concentration and purity of DNA extracted from processed foods" [29].

Table 2: Comparative Performance in Experimental Conditions

| Experimental Condition | Spectrophotometric Performance | Fluorometric Performance | Key Supporting Findings |

|---|---|---|---|

| Intact, Pure DNA | Concentration tends to be overestimated [25]. | Provides specific dsDNA concentration [25]. | Measured values closer to expected concentration with fluorometry [25]. |

| Degraded DNA | Overestimates amplifiable template [30] [31]. | More accurately reflects amplifiable template [30] [31]. | qPCR results based on fluorometry were more accurate for GM content [31]. |

| Complex Matrices (e.g., Processed Food) | Prone to overestimation due to chemical interference at A260 [29]. | Less susceptible to non-DNA chemical interference [29]. | Fluorometry showed better correlation with amplifiability in qPCR [29]. |

| Presence of Contaminants (Proteins, RNA) | Purity ratios (260/280, 260/230) indicate contamination [29]. | Dyes are highly specific for dsDNA; not affected by RNA/protein [31]. | Fluorometry does not assess purity, but its measurement is specific to dsDNA [25] [29]. |

Methodologies: Detailed Experimental Protocols

Protocol for Spectrophotometric DNA Quantification (e.g., NanoDrop)

This protocol outlines the standard procedure for quantifying DNA using a microvolume spectrophotometer.

- Instrument Initialization: Clean the measurement pedestal with a lint-free tissue. Initialize and blank the instrument using the same elution buffer as the DNA samples (e.g., nuclease-free water, TE buffer) [29].

- Sample Measurement: Apply 1-2 μL of the DNA sample directly onto the lower measurement pedestal. Close the arm to position the upper pedestal, creating a column of liquid between the two surfaces.

- Data Acquisition: Initiate the measurement. The instrument will measure absorbance at multiple wavelengths, including 260 nm (for DNA), 280 nm (for protein), and 230 nm (for salts/organics).

- Data Analysis: Record the concentration (in ng/μL) calculated from the A260 reading. Assess sample purity by examining the 260/280 and 260/230 ratios. Acceptable purity ranges are typically 1.7–2.0 for 260/280 and >1.5 for 260/230 [25] [29].

- Cleaning: Wipe the pedestals clean with a lint-free tissue after each measurement to prevent cross-contamination.
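The data-analysis step of this protocol can be sketched as follows. The conversion factor of 50 ng/µL per absorbance unit for dsDNA at a 1 cm path length is the widely used convention; the acceptance ranges are the ones quoted in the protocol above. The function name, the argument names, and the example absorbance readings are hypothetical, and the readings are assumed to be already normalized to a 1 cm path.

```python
DSDNA_NG_PER_UL_PER_A260 = 50.0  # conventional factor for dsDNA, 1 cm path


def assess_a260(a230, a260, a280, dilution_factor=1.0):
    """Convert path-normalized absorbance readings into concentration and purity.

    Returns the dsDNA-equivalent concentration (ng/uL), both purity ratios,
    and flags using the 1.7-2.0 (260/280) and >1.5 (260/230) ranges.
    """
    concentration = a260 * DSDNA_NG_PER_UL_PER_A260 * dilution_factor
    ratio_260_280 = a260 / a280
    ratio_260_230 = a260 / a230
    return {
        "ng_per_ul": concentration,
        "260/280": ratio_260_280,
        "260/230": ratio_260_230,
        "protein_ok": 1.7 <= ratio_260_280 <= 2.0,
        "salts_organics_ok": ratio_260_230 > 1.5,
    }


# Hypothetical readings for a clean sample and a contaminated one.
clean = assess_a260(a230=0.10, a260=0.20, a280=0.11)
dirty = assess_a260(a230=0.40, a260=0.20, a280=0.15)
print(clean)
print(dirty)
```

Note that the concentration is a dsDNA equivalent: RNA, ssDNA, and other compounds absorbing at 260 nm all contribute to the value, which is the overestimation issue discussed earlier.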

Protocol for Fluorometric DNA Quantification (e.g., Qubit Assay)

This protocol describes the workflow for the highly specific Qubit dsDNA HS Assay.

- Working Solution Preparation: Prepare the assay working solution by diluting the fluorometric dye 1:200 in the provided assay buffer. Mix thoroughly by vortexing. The amount prepared depends on the number of samples and standards [25].

- Standard Preparation: Prepare the standard curve by pipetting 190 μL of working solution into each of two tubes and adding 10 μL of the provided standard #1 or standard #2. Mix by vortexing.

- Sample Preparation: For each unknown sample, pipette 199 μL of working solution into a tube and add 1 μL of the DNA sample. Mix by vortexing. Note: Sample volume may be adjusted depending on concentration.

- Incubation and Measurement: Incubate all tubes at room temperature for 2-5 minutes protected from light. Read the standards and samples in the fluorometer according to the manufacturer's instructions.

- Data Analysis: The instrument uses the standard curve to calculate and report the DNA concentration of the unknown samples directly in ng/μL.
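The volumes in this protocol reduce to simple arithmetic, sketched below. The helper names are hypothetical, the one spare tube of overage is an assumption, and the back-calculation assumes the reader reports the concentration in the 200 µL assay tube in ng/mL; a reading that is already corrected for sample volume should not be converted again.

```python
def working_solution(n_samples, n_standards=2, per_tube_ul=200.0, extra_tubes=1):
    """Volumes of dye and buffer for a 1:200 working solution.

    Budgets per_tube_ul for every sample and standard tube, plus
    extra_tubes of overage to cover pipetting losses (an assumption).
    """
    total_ul = (n_samples + n_standards + extra_tubes) * per_tube_ul
    dye_ul = total_ul / 200.0
    return {"total_ul": total_ul, "dye_ul": dye_ul, "buffer_ul": total_ul - dye_ul}


def stock_concentration_ng_per_ul(tube_ng_per_ml, sample_ul, assay_ul=200.0):
    """Back-calculate the original sample concentration from the assay-tube value."""
    if sample_ul <= 0:
        raise ValueError("sample volume must be positive")
    return tube_ng_per_ml * (assay_ul / sample_ul) / 1000.0


# Eight unknowns plus the two standards.
mix = working_solution(8)
print(mix)

# Hypothetical tube reading of 50 ng/mL from 1 uL of sample.
stock = stock_concentration_ng_per_ul(50.0, 1.0)
print(f"{stock:.1f} ng/uL in the original sample")
```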

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents and Kits for DNA Quantification

| Item Name | Function/Application | Specific Example(s) |

|---|---|---|

| Microvolume Spectrophotometer | Measures nucleic acid concentration and purity using minimal sample volume (1-2 µL). | NanoDrop instruments [25] [29]. |

| Fluorometer | Quantifies DNA concentration with high sensitivity and specificity via DNA-binding dyes. | Qubit Fluorometer [25] [27]. |

| dsDNA-Specific Fluorescence Kits | Provide the intercalating dye and standards required for fluorometric quantification. | Qubit dsDNA HS Assay Kit, AccuGreen High Sensitivity Kit, AccuClear Ultra High Sensitivity Kit [25]. |

| Nucleic Acid Extraction Kits | Isolate DNA from various biological sources; choice of kit impacts yield and purity. | Kits for processed foods, forensic samples, cell cultures [29]. |

| Fluorescent Reference Standards | Calibrate fluorescence measurements across different instruments and time points. | High-precision fluorescent microsphere standards, fluorescent standard solutions [28]. |

The choice between spectrophotometry and fluorometry for DNA quantification is application-dependent. Based on the experimental data and principles discussed, the following recommendations are provided to validate template quality and quantity for PCR research:

- Use Spectrophotometry for Initial Quality Control: Its ability to provide concentration and purity ratios (260/280 and 260/230) makes it valuable for a quick initial assessment of a nucleic acid sample. It readily identifies significant contamination from proteins, salts, or organic compounds that could inhibit PCR [25] [29].

- Employ Fluorometry for Accurate PCR Template Quantification: For any application requiring precise knowledge of amplifiable dsDNA concentration—such as preparing templates for qPCR, next-generation sequencing, or cloning—fluorometry is the superior choice. Its specificity for dsDNA prevents overestimation due to RNA, ssDNA, or nucleotides, leading to more consistent and reliable PCR results [25] [30] [31].

- Adopt a Combined Approach for Critical Applications: If sample volume permits, the most robust strategy is to use both methods. Spectrophotometry provides a purity profile, while fluorometry gives an accurate measure of amplifiable dsDNA concentration. This combined data offers the most comprehensive picture of template quality and quantity [25].

- Prioritize Fluorometry for Challenging Samples: For degraded DNA, samples from complex matrices (like processed foods), or any material where purity is a known concern, fluorometry is strongly recommended. Spectrophotometric measurements under these conditions are often inaccurate and poorly correlated with PCR amplifiability [29] [31].

In conclusion, while spectrophotometry offers speed and purity information, fluorometry provides the specificity and accuracy required for robust PCR validation. Understanding the strengths and limitations of each method allows researchers to make informed decisions, ensuring the integrity of their molecular biology data.

Quantitative real-time PCR (qPCR) is a cornerstone technique in molecular biology, clinical diagnostics, and drug development. Its accuracy hinges on the integrity and purity of the nucleic acid template, as inhibitors co-purified from biological samples can severely compromise amplification efficiency and lead to erroneous quantification [32]. This guide objectively compares established and emerging methodologies for assessing template quality and overcoming amplification inhibition, providing a structured framework for validation within PCR research. We present experimental data and standardized protocols to empower researchers in selecting appropriate strategies for their specific applications, ensuring data reliability and reproducibility in accordance with updated MIQE guidelines [33] [34].

Core Principles: Amplification Efficiency and Inhibition Mechanisms

Amplification efficiency is a fundamental parameter in qPCR, describing the fraction of target molecules that is copied during each PCR cycle. Ideal efficiency (100%) corresponds to a perfect doubling per cycle (an amplification factor of 2.0), with values between 90-110% generally considered acceptable [32]. Efficiency is most accurately determined from the slope of a standard curve (Cq plotted against the log10 of template quantity) generated from a serial dilution, using the formula: Efficiency (%) = [10^(-1/slope) - 1] × 100 [35]. A deviation from the acceptable range directly impacts quantification accuracy.
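The efficiency calculation can be sketched with an ordinary least-squares fit of Cq against log10 template quantity, followed by the formula given above. The function name and the dilution-series values below are hypothetical and chosen only to illustrate the arithmetic.

```python
def standard_curve_efficiency(log10_quantities, cq_values):
    """Fit Cq = slope * log10(quantity) + intercept and derive efficiency (%).

    Efficiency follows the formula in the text: (10 ** (-1 / slope) - 1) * 100.
    """
    n = len(log10_quantities)
    if n != len(cq_values) or n < 3:
        raise ValueError("need at least three matched dilution points")
    mean_x = sum(log10_quantities) / n
    mean_y = sum(cq_values) / n
    sxx = sum((x - mean_x) ** 2 for x in log10_quantities)
    sxy = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(log10_quantities, cq_values))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    efficiency_pct = (10 ** (-1.0 / slope) - 1.0) * 100.0
    return slope, intercept, efficiency_pct


# Hypothetical 10-fold dilution series: 1e5 down to 1e1 copies per reaction.
log_copies = [5, 4, 3, 2, 1]
cq = [18.4, 21.7, 25.0, 28.3, 31.6]

slope, intercept, efficiency = standard_curve_efficiency(log_copies, cq)
print(f"slope {slope:.2f}, intercept {intercept:.1f}, efficiency {efficiency:.1f}%")
```

A slope near -3.32 corresponds to 100% efficiency; slopes steeper than about -3.6 or shallower than about -3.1 fall outside the 90-110% window.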

PCR inhibitors are substances that interfere with the amplification reaction through various mechanisms, including DNA polymerase inactivation, nucleic acid degradation, or chelation of essential co-factors like Mg²⁺ [36] [2]. Common inhibitors include hemoglobin (blood), heparin (clinical samples), humic acids (environmental samples), and polysaccharides (plants) [32]. Their effects manifest in qPCR outputs as delayed quantification cycle (Cq) values, reduced amplification efficiency, abnormal amplification curves, or complete amplification failure [32].

Detecting Inhibition: A Practical Workflow

A systematic approach is required to diagnose the presence of inhibitors in a qPCR reaction.

- Internal PCR Controls (IPCs): The most robust method involves spiking a known quantity of a non-target control sequence into each reaction. A delayed Cq value for the IPC in a sample compared to a no-inhibitor control indicates the presence of inhibitors [32].

- Standard Curve Analysis: A serial dilution of the sample DNA itself can be run. A significant change in the slope of the standard curve (outside the -3.1 to -3.6 range) or a non-linear dilution series suggests inhibition is affecting PCR efficiency [32].

- Sample Dilution: Diluting the template (e.g., 1:5, 1:10) and re-running the qPCR can reveal inhibition. At 100% efficiency, a 1:5 dilution should delay Cq by about 2.3 cycles and a 1:10 dilution by about 3.3 cycles. A Cq shift clearly smaller than expected for the dilution factor, or a lower Cq after dilution, indicates that dilution has reduced the inhibitor concentration, thereby improving amplification [36].
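The dilution check can be made quantitative. In an uninhibited reaction, diluting the template by a factor D delays Cq by log(D) / log(1 + E) cycles, where E is the efficiency expressed as a fraction (about 2.3 cycles for a 1:5 dilution at E = 1.0). The sketch below flags a sample when the observed delay falls short of that expectation. The function names and the 0.5-cycle tolerance are hypothetical and should be set from a laboratory's own replicate variability.

```python
import math


def expected_cq_shift(dilution_factor, efficiency=1.0):
    """Cq delay expected from dilution alone, for efficiency given as a fraction."""
    return math.log(dilution_factor) / math.log(1.0 + efficiency)


def dilution_suggests_inhibition(cq_neat, cq_diluted, dilution_factor,
                                 tolerance=0.5, efficiency=1.0):
    """Return True when the diluted sample amplifies earlier than dilution predicts.

    tolerance (in cycles) is an assumed allowance for run-to-run Cq variation.
    """
    observed_shift = cq_diluted - cq_neat
    expected_shift = expected_cq_shift(dilution_factor, efficiency)
    return observed_shift < expected_shift - tolerance


# Hypothetical readings for two samples, each re-run at a 1:5 dilution.
print(expected_cq_shift(5))
print(dilution_suggests_inhibition(30.0, 30.5, 5))  # shift of only 0.5 cycles
print(dilution_suggests_inhibition(25.0, 27.3, 5))  # shift close to expectation
```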

Comparative Analysis of Template Preparation and Inhibition Management Strategies

This section compares the performance of different template preparation methods and inhibitor mitigation strategies, supported by experimental data.

Traditional DNA Extraction vs. Direct PCR Methods

A key decision point is whether to use purified DNA or direct sample lysates.

Table 1: Comparison of Template Preparation Methods for qPCR

| Method | Procedure Summary | Key Performance Findings | Advantages | Limitations |
|---|---|---|---|---|
| Traditional DNA Extraction [37] | Column-based or liquid-phase purification to isolate DNA from other cellular components. | High purity template; consistent PCR efficiency when successful [37]. | High-quality template; removes most inhibitors. | Potential DNA loss (up to 83% in forensic samples); additional time and cost [37]. |
| Direct PCR with Sample Lysate (GG-RT PCR) [37] | Whole blood mixed with water, heated at 95°C for 20 min, and centrifuged. Lysate supernatant used directly. | All target genes successfully amplified with 1:10 and 1:5 dilutions; PCR efficiency for ACTB and PIK3CA was 20% and 14% lower, respectively, vs. purified DNA [37]. | Cost-effective; rapid; prevents DNA loss during extraction [37]. | Lower PCR efficiency for some targets; requires optimization of lysate dilution [37]. |

The GG-RT PCR data demonstrate that direct PCR from lysate is feasible, but a side-by-side comparison of PCR efficiency shows a measurable performance gap for some targets (20% lower for ACTB and 14% lower for PIK3CA) relative to purified DNA [37].

Evaluating Strategies to Overcome PCR Inhibition

For samples known to contain inhibitors, several chemical and physical strategies can be employed to restore amplification.

Table 2: Efficacy of Common Inhibitor Mitigation Strategies

| Strategy | Proposed Mechanism of Action | Reported Effectiveness | Considerations |
|---|---|---|---|
| Sample Dilution (1:10) [36] | Reduces inhibitor concentration below a critical threshold. | Eliminated false negative results in inhibited wastewater samples [36]. | Also dilutes the target DNA, potentially reducing sensitivity [36]. |
| Bovine Serum Albumin (BSA) [36] [38] | Binds to inhibitors, preventing their interaction with the polymerase. | Significantly improved PCR robustness; lowered failure rates to 0.1% in buccal swab samples [38]. Effective in wastewater [36]. | Can cause foaming in automated liquid handlers [38]. |
| T4 Gene 32 Protein (gp32) [36] | Binds to single-stranded DNA and inhibitors like humic acids. | Most significant method for removing inhibition in wastewater; used at 0.2 μg/μL [36]. | Higher cost compared to BSA. |
| Inhibitor-Tolerant Master Mix [32] | Proprietary enzyme formulations and buffers designed to be resistant to common inhibitors. | Delivers consistent, sensitive amplification in challenging samples (blood, soil) [32]. | Commercial solution; cost may be higher than standard mixes. |
| Digital PCR (dPCR) [39] | Partitions reaction into thousands of nanoreactions, reducing the effective inhibitor concentration in positive partitions. | Accurate quantification possible with higher levels of humic acid/heparin vs. qPCR [39]. | Different platform; higher cost per reaction; longer setup time [36]. |

The data shows that the most effective strategy can depend on the sample type and inhibitor. For instance, in wastewater, gp32 outperformed other additives, whereas BSA proved highly effective for high-throughput processing of buccal swabs [36] [38].

Experimental Protocols for Validation

The first protocol, direct qPCR from whole blood lysate (the GG-RT PCR method [37]), is designed for EDTA-treated whole blood and eliminates the DNA extraction step.

Key Reagent Solutions:

- EDTA-treated Whole Blood

- Nuclease-free Distilled Water

- SYBR Green I Master Mix (e.g., Roche LightCycler 480 SYBR Green I Master)

- Sequence-Specific Primers

Methodology:

- Sample Preparation: Mix 100 μL of whole blood with 400 μL of distilled water (a 1:5 dilution of blood in water).

- Heat Lysis: Incubate the diluted blood sample at 95°C for 20 minutes. Vortex the sample 2-3 times during incubation.

- Clarification: Centrifuge the heat-treated sample at 14,000 rpm for 5 minutes.

- Template Preparation: Carefully collect the supernatant. This is the blood lysate, which can be used directly or further diluted (1:5 or 1:10 in water) for qPCR.

- qPCR Setup: Perform real-time PCR in a final volume of 10 μL, containing 2.5 μL of the blood lysate (or diluted lysate) and 5 pmol of each primer.

- Thermal Cycling: Use the following conditions: 95°C for 10 min, followed by 40 cycles of 95°C for 15 s and 60-61°C for 30 s.
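The reaction setup above implies two quantities that are worth checking when optimizing lysate dilution: the final primer concentration and the nominal fraction of whole blood carried into each reaction. The Python sketch below works through that arithmetic. The function names are hypothetical, and the calculation assumes the lysate supernatant has the same nominal dilution as the blood-water mixture, which ignores material removed by centrifugation.

```python
def primer_concentration_um(primer_pmol: float, reaction_volume_ul: float) -> float:
    """Final primer concentration in µM (pmol per µL is numerically equal to µM)."""
    return primer_pmol / reaction_volume_ul


def blood_fraction_in_reaction(initial_dilution: float, extra_dilution: float,
                               template_ul: float, reaction_ul: float) -> float:
    """Nominal fraction of the reaction volume that is original whole blood.
    Dilutions are fold factors: 5 means a 1:5 dilution, 1 means undiluted."""
    return (1 / initial_dilution) * (1 / extra_dilution) * (template_ul / reaction_ul)


# Values taken from the protocol text: 5 pmol of each primer and 2.5 µL of
# template in a 10 µL reaction, with a stated 1:5 initial dilution of blood.
print(primer_concentration_um(5, 10))  # 0.5 µM of each primer

for extra in (1, 5, 10):  # lysate used directly, or diluted 1:5 or 1:10
    fraction = blood_fraction_in_reaction(5, extra, 2.5, 10)
    print(f"additional 1:{extra} dilution -> {fraction * 100:.2f}% blood (v/v)")
```

Under these assumptions the reaction contains nominally 5%, 1%, or 0.5% blood by volume, which gives a sense of how much inhibitor load each lysate dilution removes.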

The second protocol evaluates PCR enhancers by testing the efficacy of different additives in restoring amplification from an inhibited sample.

Key Reagent Solutions:

- Extracted Nucleic Acids (from inhibited sample)

- qPCR Master Mix

- PCR Enhancers: BSA, T4 gp32, DMSO, Formamide, Tween-20, Glycerol

Methodology:

- Preparation of Enhancer Stocks: Prepare stock solutions of the enhancers to be tested.

- Reaction Setup: Set up qPCR reactions containing the inhibited sample and the candidate enhancer at various concentrations. For example:

- BSA: Common final concentrations from 0.1 to 0.5 μg/μL.

- T4 gp32: A final concentration of 0.2 μg/μL was found effective [36].

- DMSO: Typically 2-10% (v/v).

- Control Reactions: Include a positive control (uninhibited sample) and a negative control (no template). Also, run a sample with no enhancer as an inhibited control.

- qPCR Run: Perform qPCR using the standard cycling conditions for the assay.

- Analysis: Compare the Cq values, amplification curves, and PCR efficiency of reactions with and without enhancers. A significant decrease in Cq and normalization of the amplification curve in the enhancer-containing reaction indicates successful inhibition relief.
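The analysis step can be sketched as a comparison of mean Cq values against the inhibited control. The Python example below is illustrative only: the function name, the condition labels, and all Cq values are hypothetical and are not data from the cited studies.

```python
from statistics import mean


def cq_improvement(cq_replicates: dict, inhibited_key: str = "no enhancer") -> list:
    """Rank enhancer conditions by how many cycles earlier they amplify than the
    inhibited control. A positive value means a lower (better) mean Cq."""
    baseline = mean(cq_replicates[inhibited_key])
    gains = {
        condition: baseline - mean(values)
        for condition, values in cq_replicates.items()
        if condition != inhibited_key
    }
    # Largest improvement first
    return sorted(gains.items(), key=lambda item: item[1], reverse=True)


# Hypothetical triplicate Cq values for one inhibited sample
example = {
    "no enhancer": [34.8, 35.2, 35.0],
    "BSA 0.2 ug/uL": [31.9, 32.1, 32.0],
    "gp32 0.2 ug/uL": [30.4, 30.6, 30.5],
    "DMSO 5%": [34.5, 34.7, 34.6],
}

for condition, gain in cq_improvement(example):
    print(f"{condition}: {gain:.1f} cycles earlier than the inhibited control")
```

A Cq gain alone is not sufficient evidence of relief. The amplification curve shape and the positive control should also be checked, as described in the analysis step, and reactions with no amplification need separate handling because they have no Cq to average.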

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents for qPCR Template Quality Assessment

| Item | Function/Application | Example Use-Case |
|---|---|---|
| Inhibitor-Tolerant Polymerase | Enzyme formulations resistant to common inhibitors in complex samples. | GoTaq Endure qPCR Master Mix for reliable amplification from blood or soil [32]. |
| Bovine Serum Albumin (BSA) | Protein additive that binds to a wide range of PCR inhibitors. | Overcoming sporadic inhibition in buccal swab-derived DNA [38]. |
| T4 Gene 32 Protein (gp32) | Single-stranded DNA binding protein that counteracts potent inhibitors like humic acids. | Optimized detection of SARS-CoV-2 in wastewater samples [36]. |
| SYBR Green I Master Mix | Intercalating dye for real-time detection of amplified DNA. | Used in the GG-RT PCR protocol for direct amplification from blood lysate [37]. |
| Internal PCR Control (IPC) | A known, non-target sequence spiked into reactions to detect inhibition. | Differentiating true target absence from PCR failure [32]. |

Workflow Visualization: Direct qPCR from Blood Lysate

The following diagram illustrates the streamlined GG-RT PCR workflow for direct qPCR from whole blood, highlighting its key advantage in reducing processing steps.

Accurate qPCR quantification is inextricably linked to template quality. This guide has compared multiple approaches, from direct lysis methods to sophisticated chemical enhancers, for managing template-related challenges. The experimental data demonstrates that while direct PCR methods like GG-RT PCR offer compelling speed and cost benefits, they may involve trade-offs in amplification efficiency compared to purified DNA [37]. The choice of inhibitor mitigation strategy—be it dilution, additive use, or inhibitor-tolerant master mixes—should be informed by the sample type and the specific inhibitors present [36] [32] [38]. Adherence to MIQE guidelines [33] [34] in reporting sample processing, assay validation, and data analysis is non-negotiable for ensuring the reliability and reproducibility of qPCR results in critical research and diagnostic contexts.

Digital PCR (dPCR) represents a transformative third generation of polymerase chain reaction technology, following conventional PCR and real-time quantitative PCR (qPCR). The core principle of dPCR involves partitioning a PCR reaction mixture into thousands to millions of individual nanoliter-scale reactions, so that each partition contains either 0, 1, or a few nucleic acid target molecules according to a Poisson distribution. Following end-point PCR amplification, the fraction of positive partitions is counted, allowing for absolute quantification of the target nucleic acid without the need for a standard curve through direct application of Poisson statistics. This fundamental approach provides dPCR with significant advantages in precision, sensitivity, and robustness compared to earlier PCR generations [40] [41].

The historical development of dPCR began with foundational work in limiting dilution PCR. In 1999, the term "digital PCR" was formally coined by Bert Vogelstein and colleagues, who developed a workflow using limiting dilution across 96-well plates combined with fluorescence readout to detect RAS oncogene mutations in colorectal cancer patients. The technology has since evolved substantially with advancements in microfluidics, leading to commercial platforms that enable efficient partitioning through water-in-oil droplet emulsification (droplet digital PCR or ddPCR) or microchamber arrays (chip-based dPCR). These technological improvements have addressed early limitations of practicability and cost while enhancing precision and scalability [40] [41].

In clinical and research contexts, dPCR has emerged as a powerful tool for applications requiring high sensitivity and absolute quantification. Its capabilities are particularly valuable in liquid biopsy approaches for cancer monitoring, infectious disease diagnostics, pathogen detection in environmental surveillance, and analysis of rare genetic variants. The technology's superior performance characteristics position it as an ideal methodology for validating template quality and quantity in PCR research, especially when analyzing complex samples or targets present at low concentrations [42] [41] [43].

Fundamental Principles and Advantages of dPCR

Core Technological Principles

The operational workflow of digital PCR consists of four critical steps: partitioning, amplification, fluorescence reading, and quantitative analysis. During partitioning, the PCR mixture containing the sample is divided into thousands to millions of discrete compartments using either droplet-based or chip-based systems. In droplet digital PCR (ddPCR), the sample is dispersed into numerous nanoliter-sized droplets within an immiscible oil phase, typically generating 20,000 droplets per reaction. Alternatively, chip-based systems utilize microfabricated arrays of microscopic wells to achieve physical separation of reaction volumes. Following partitioning, each compartment undergoes traditional PCR amplification through thermal cycling. Crucially, the amplification follows an end-point measurement approach rather than real-time monitoring, with fluorescence intensity measured after completion of all cycles [40] [41].

The quantitative analysis phase applies Poisson statistics to calculate the initial target concentration from the proportion of positive partitions. The fundamental calculation follows the formula: Concentration = -ln(1 - p) / V, where p is the proportion of positive partitions and V is the partition volume, so that -ln(1 - p) is the mean number of target copies per partition. This statistical correction accounts for the possibility of multiple target molecules occupying a single partition, enabling absolute quantification without reference to standards. This approach contrasts sharply with qPCR, which relies on comparison to standard curves and on quantification cycle (Cq, also called threshold cycle or Ct) values measured during the exponential phase, which are relative rather than absolute [40] [44].
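As a worked illustration of the formula above, the following Python sketch converts partition counts into a concentration. The function name and the example numbers are assumptions for illustration. In particular, the partition volume of 0.85 nL is an assumed value, and the actual volume depends on the platform.

```python
import math


def dpcr_concentration(positive: int, total: int, partition_volume_ul: float) -> float:
    """Target concentration in copies per µL of reaction, using
    Concentration = -ln(1 - p) / V with p = positive / total."""
    if not 0 <= positive < total:
        # p == 1 (all partitions positive) makes the estimate undefined
        raise ValueError("need 0 <= positive < total")
    p = positive / total
    copies_per_partition = -math.log(1.0 - p)  # Poisson mean (lambda)
    return copies_per_partition / partition_volume_ul


# Hypothetical run: 5,000 positive partitions out of 20,000,
# assuming 0.85 nL (0.00085 µL) per partition.
concentration = dpcr_concentration(5000, 20000, 0.00085)
print(f"{concentration:.1f} copies/µL")  # roughly 338 copies/µL
```

Simply dividing positive partitions by total volume would give about 294 copies/µL here. The Poisson correction raises the estimate because some positive partitions contained more than one target molecule.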

Key Performance Advantages

The unique architecture of dPCR confers several significant advantages over previous PCR generations. Absolute quantification without standard curves eliminates potential variability introduced by standard curve preparation and interpolation, enhancing reproducibility across experiments and laboratories. The massive sample partitioning provides exceptional sensitivity for detecting rare targets, with studies demonstrating reliable detection of mutant alleles at frequencies as low as 0.001% in a background of wild-type sequences. dPCR also shows superior tolerance to PCR inhibitors. Because each partition is scored only as positive or negative at the end point, a partial loss of amplification efficiency that would delay the Cq in a bulk qPCR reaction has less effect on the final count, provided that positive partitions still reach the detection threshold [40] [41].

The precision of dPCR stems from its digital nature, with binary (positive/negative) endpoint detection minimizing variability associated with amplification efficiency differences. This precision is particularly valuable for detecting small fold-changes in target concentration, such as in gene expression studies or viral load monitoring. Additionally, dPCR typically offers a dynamic range of about 5 orders of magnitude, set largely by the number of partitions analyzed, enabling accurate quantification of both low-abundance and high-abundance targets in the same experimental setup. These technical advantages make dPCR particularly suited for applications requiring high accuracy, sensitivity, and reproducibility [44] [41].
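The link between partition counts and precision can be made concrete with a confidence interval on the Poisson estimate. The Python sketch below uses a normal approximation to the binomial proportion of positive partitions and then transforms the bounds to copies per partition. This is one common simplification, not the method of any specific instrument software, and the function name and partition counts are illustrative assumptions.

```python
import math


def copies_per_partition_ci(positive: int, total: int, z: float = 1.96):
    """Return (lower, estimate, upper) for the mean copies per partition.
    Uses a normal approximation for p = positive / total, which becomes
    unreliable when very few partitions are positive or negative."""
    if not 0 < positive < total:
        raise ValueError("need 0 < positive < total")
    p = positive / total
    se = math.sqrt(p * (1.0 - p) / total)
    p_low = max(p - z * se, 0.0)
    p_high = min(p + z * se, 1.0 - 1e-12)  # keep the logarithm defined
    return tuple(-math.log(1.0 - x) for x in (p_low, p, p_high))


# Hypothetical runs with 20,000 partitions at low, moderate, and high occupancy
for positive in (20, 5000, 19980):
    low, estimate, high = copies_per_partition_ci(positive, 20000)
    relative_width = (high - low) / estimate
    print(f"{positive:>5} positive: lambda = {estimate:.4f} "
          f"(95% CI {low:.4f} to {high:.4f}, relative width {relative_width:.0%})")
```

The interval is narrowest at intermediate occupancy and widens when almost no partitions, or almost all partitions, are positive. This is why the usable quantification range depends on the number of partitions.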

dPCR Workflow: From sample partitioning to absolute quantification.

Comparative Performance Analysis: dPCR vs. qPCR

Sensitivity and Detection Limits

Multiple studies have demonstrated the superior sensitivity of dPCR compared to qPCR across various applications. A comprehensive 2024 meta-analysis examining circulating tumor HPV DNA (ctHPVDNA) detection across oropharyngeal, cervical, and anal cancers revealed significant differences in sensitivity between platforms. Next-generation sequencing (NGS) showed the highest sensitivity at 94%, followed by dPCR at 81%, while qPCR demonstrated substantially lower sensitivity at 51%. This analysis, encompassing 36 studies and 2,986 patients, established that dPCR significantly outperforms qPCR (P < 0.001) in detecting low-abundance nucleic acid targets in complex clinical samples [42].