A Comprehensive Guide to Overcoming PCR Bias in 16S rRNA Sequencing for Robust Microbiome Research

Abstract

16S rRNA gene sequencing is a cornerstone of microbiome research, yet its accuracy is fundamentally challenged by PCR amplification bias, which distorts microbial community representation and threatens the validity of downstream analyses. This article provides a systematic framework for researchers and drug development professionals to understand, quantify, and mitigate these biases. Drawing on the latest methodological advances and benchmarking studies, we explore the foundational sources of bias from DNA extraction to primer design, detail wet-lab and bioinformatic correction strategies, and offer a comparative evaluation of sequencing technologies and analysis pipelines. Our guide delivers actionable protocols and troubleshooting insights to enhance data fidelity, ensuring that ecological metrics and biomarker discovery in biomedical studies are both reliable and reproducible.

Understanding the Enemy: Deconstructing the Sources and Impact of PCR Bias

FAQ: Understanding and Troubleshooting PCR Bias

What is PCR bias and why is it a problem in 16S rRNA sequencing?

PCR bias refers to the distortion of microbial community composition that occurs during the polymerase chain reaction (PCR) amplification of 16S rRNA genes. This happens because DNA templates from different bacterial species amplify with varying efficiencies, causing their relative abundances in the final sequencing data to misrepresent the actual abundances in the original sample. This is a critical problem because it can lead to incorrect biological conclusions about community structure and diversity [1] [2]. The bias primarily manifests in two ways: 1) the formation of spurious sequences (artifacts) that inflate diversity estimates, and 2) the skewing of template distribution, where some taxa are overrepresented while others are suppressed [1] [3].
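The skewing effect described above can be sketched with a toy simulation. This is a minimal illustration, assuming idealized exponential amplification with a fixed per-cycle efficiency for each taxon; the taxon names, starting copy numbers, and efficiency values are hypothetical and not taken from the cited studies.

```python
def simulate_pcr(start_abundance, efficiency, cycles):
    """Return relative abundances after `cycles` of idealized exponential PCR.

    start_abundance: dict taxon -> starting template copies
    efficiency: dict taxon -> per-cycle efficiency in [0, 1]
                (1.0 would mean perfect doubling every cycle)
    """
    amplified = {
        taxon: copies * (1.0 + efficiency[taxon]) ** cycles
        for taxon, copies in start_abundance.items()
    }
    total = sum(amplified.values())
    return {taxon: n / total for taxon, n in amplified.items()}


# Hypothetical two-taxon community with a true 50:50 starting ratio.
start = {"taxon_A": 1000, "taxon_B": 1000}
eff = {"taxon_A": 0.95, "taxon_B": 0.90}  # illustrative values, not measured

for n_cycles in (0, 15, 35):
    rel = simulate_pcr(start, eff, n_cycles)
    print(n_cycles, {t: round(v, 3) for t, v in rel.items()})
```

Even a small efficiency difference compounds with every cycle in this model, which is why the observed ratio drifts further from the true ratio as the cycle number increases.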

What are the main types of PCR artifacts and how do they affect my data?

PCR artifacts are erroneous sequences generated during amplification that do not correspond to any real organism in the sample. The primary types are:

- Taq Polymerase Errors: Incorrect base incorporations by the DNA polymerase, which create single-nucleotide variants that can be mistaken for novel, rare taxa [1] [4].

- Chimeras: Hybrid sequences formed when an incomplete PCR product from one template acts as a primer on a different, but related, template during a subsequent cycle. In one study, 13% of the sequences in a standard 35-cycle library were chimeric [1] [4].

- Heteroduplex Molecules: Mismatched double-stranded DNA molecules formed by annealing similar, but not identical, sequences from different templates. These can lead to incorrect base calls or failure to sequence [1].

These artifacts artificially inflate the observed microbial diversity, making the community appear more complex than it truly is [1] [4].

My mock community results don't match the expected composition. What could be causing this?

Discrepancies between expected and observed mock community compositions are a direct measure of bias in your pipeline. The following table summarizes key quantitative biases reported in the literature:

Table 1: Documented Biases in Microbial Community Profiling

| Source of Bias | Observed Effect | Reference |
|---|---|---|
| GC Content | Negative correlation between genomic GC-content and observed relative abundance. | [5] |
| DNA Extraction | Using different DNA extraction kits produced "dramatically different results"; error rates from bias exceeded 85% in some samples. | [6] |
| PCR Amplification | Preferential amplification of specific templates by over 3.5-fold has been observed reproducibly. | [2] |
| Primer Mismatch | A single nucleotide mismatch between primer and template can lead to up to 10-fold preferential amplification. | [2] |
| PCR Artifacts | A standard 35-cycle PCR led to 76% unique sequences in a library, versus 48% when using a modified, lower-cycle protocol. | [1] |

The bias is often systematic. For example, one study found that species belonging to Proteobacteria were consistently underestimated, while many from Firmicutes were overestimated [5].

How can I modify my wet-lab protocol to minimize PCR bias?

Several experimental strategies can significantly reduce the introduction of bias:

- Limit PCR Cycles: Reduce the number of amplification cycles as much as possible. Bias accumulates with each cycle [1] [2]. One effective protocol is to run a limited number of cycles (e.g., 15), followed by a reconditioning PCR step (a few additional cycles in a fresh reaction mixture), which was shown to drastically reduce heteroduplex molecules and chimeras [1].

- Optimize Polymerase and PCR Conditions: The choice of polymerase and reaction setup matters. Using a high-fidelity polymerase can reduce errors [4]. Furthermore, increasing the initial denaturation time from 30 to 120 seconds has been shown to improve the amplification of templates with high GC content [5].

- Use Degenerate Primers: Primers with degenerate bases can help mitigate bias caused by sequence variation in the primer binding sites across different taxa, allowing for more uniform amplification [7].

- Standardize DNA Extraction: Be aware that the DNA extraction method itself is a major source of bias. Choose and consistently use a kit that has been validated for your sample type [6].
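The degenerate-primer strategy in the list above can be illustrated by counting primer-template mismatches with IUPAC ambiguity codes. This is a minimal sketch: the IUPAC table is standard, but the function name and the short primer and binding-site sequences are made up for illustration and do not correspond to a specific published primer.

```python
# IUPAC nucleotide codes mapped to the bases each one can represent.
IUPAC = {
    "A": "A", "C": "C", "G": "G", "T": "T",
    "R": "AG", "Y": "CT", "S": "CG", "W": "AT",
    "K": "GT", "M": "AC", "B": "CGT", "D": "AGT",
    "H": "ACT", "V": "ACG", "N": "ACGT",
}


def count_mismatches(primer, site):
    """Count positions where `site` is not covered by the primer.

    Both sequences are written 5'->3' in the same orientation and must have
    equal length. Degenerate codes in the primer match any base they encode.
    """
    if len(primer) != len(site):
        raise ValueError("primer and site must have the same length")
    return sum(
        base not in IUPAC[code]
        for code, base in zip(primer.upper(), site.upper())
    )


# Hypothetical sequences for illustration only.
plain_primer = "GTGCCAGCAGCC"
degenerate_primer = "GTGYCAGCMGCC"  # Y = C/T, M = A/C
variant_site = "GTGTCAGCCGCC"       # binding site of a divergent taxon

print(count_mismatches(plain_primer, variant_site))
print(count_mismatches(degenerate_primer, variant_site))
```

A fuller check would also weight mismatches by their distance from the 3' end, since mismatches there tend to affect extension most.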

Are there computational methods to correct for PCR bias after sequencing?

Yes, computational correction is an active area of research and can be applied post-sequencing.

- Log-Ratio Linear Models: These models, which build on classical work by Suzuki and Giovannoni, can correct for non-primer-mismatch bias (NPM-bias). The core idea is that the ratio of any two taxa changes predictably with each PCR cycle. By using a calibration experiment with different cycle numbers, you can model and correct this bias [2].

- Reference-Based Bias Correction: This involves creating a "mock community" with known starting ratios or using a pre-defined reference set. By sequencing this reference alongside your samples, you can calculate taxon-specific correction factors and apply them to your environmental data [8].

- Quality Filtering and Denoising: Rigorous quality control pipelines are essential. Tools like PyroNoise can correct base-call errors in raw sequencing data, reducing the overall error rate from ~0.0060 to ~0.0002 [4]. Chimera detection software such as UCHIME is also critical; in one study it reduced chimeras from ~8% to ~1% [4].

- Cluster Sequences at 99% Similarity: To account for the majority of Taq polymerase errors, cluster your sequences into Operational Taxonomic Units (OTUs) at a 99% sequence similarity cutoff instead of the traditional 97%. This was shown to group most artificial variants with their true parent sequence [1].
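The reference-based correction idea from the list above can be sketched as follows. This is a simplified illustration, assuming that bias acts as a constant multiplicative factor per taxon and that the factor measured on the mock community transfers to the study samples; the function names and numbers are hypothetical, and this is not the specific method of [8].

```python
def correction_factors(expected, observed):
    """Per-taxon factor = expected / observed relative abundance in the mock."""
    return {
        taxon: expected[taxon] / observed[taxon]
        for taxon in expected
        if observed.get(taxon, 0) > 0  # taxa that dropped out cannot be rescaled
    }


def apply_correction(sample, factors):
    """Rescale a sample's abundances and renormalize them to sum to 1."""
    scaled = {t: a * factors.get(t, 1.0) for t, a in sample.items()}
    total = sum(scaled.values())
    return {t: v / total for t, v in scaled.items()}


# Hypothetical mock community: known 50:50 mix that was observed as 70:30.
mock_expected = {"taxon_A": 0.5, "taxon_B": 0.5}
mock_observed = {"taxon_A": 0.7, "taxon_B": 0.3}
factors = correction_factors(mock_expected, mock_observed)

# Hypothetical study sample measured with the same protocol.
sample_observed = {"taxon_A": 0.4, "taxon_B": 0.6}
print(apply_correction(sample_observed, factors))
```

Taxa absent from the mock community keep a factor of 1.0 here, which is a design simplification: their bias remains uncorrected.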

Experimental Protocols

Protocol 1: Modified PCR Amplification to Reduce Artifacts

This protocol, adapted from a 2005 study, is designed to constrain the accumulation of PCR artifacts including chimeras, heteroduplexes, and polymerase errors [1].

- First-Stage PCR: Set up your initial PCR reaction as normal. Amplify for only 15 cycles.

- Reconditioning PCR: Transfer a small aliquot of the first-stage PCR product (e.g., 1-2 µl) into a fresh PCR mix. Perform an additional 3 cycles of amplification.

- Clone and Sequence: Proceed with library construction, cloning, and sequencing of the final product.

This method resulted in a greater than two-fold decrease in spurious sequence diversity and an increase in library coverage from 24% to 64% compared to a standard 35-cycle protocol [1].

Protocol 2: Calibration Experiment for Computational Bias Correction

This protocol, based on a 2021 methodology, provides the data needed to fit a log-ratio linear model and correct for PCR NPM-bias [2].

- Create a Calibration Sample: Prior to PCR, pool aliquots of extracted DNA from every study sample into a single, representative pooled sample.

- Split and Amplify: Split the calibration sample into multiple aliquots. Amplify each aliquot for a predetermined range of PCR cycles (e.g., 15, 20, 25, 30 cycles).

- Sequence: Sequence all aliquots of the calibration sample alongside your actual study samples.

- Model and Correct: Use a computational tool like the R package fido to fit a log-ratio linear model. The model uses the calibration data to infer the original sample composition (intercept) and the taxon-specific amplification efficiencies (slope), which are then used to correct the bias in the study samples [2].
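The intercept-and-slope idea in the final step can be sketched for a single pair of taxa with ordinary least squares. This is a minimal illustration on synthetic, noise-free data and is not the fido API; the function name, the starting ratio, and the per-cycle gain are assumptions made for the example.

```python
import math


def fit_log_ratio(cycles, ratios):
    """Least-squares fit of log(ratio) = intercept + slope * cycles."""
    y = [math.log(r) for r in ratios]
    n = len(cycles)
    mean_x = sum(cycles) / n
    mean_y = sum(y) / n
    sxx = sum((x - mean_x) ** 2 for x in cycles)
    sxy = sum((x - mean_x) * (yi - mean_y) for x, yi in zip(cycles, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


# Synthetic calibration data: taxon A / taxon B ratio at each cycle number.
cycles = [15, 20, 25, 30]
true_ratio = 2.0               # hypothetical pre-PCR ratio
per_cycle_gain = 1.95 / 1.90   # hypothetical relative efficiency per cycle
observed_ratios = [true_ratio * per_cycle_gain ** c for c in cycles]

intercept, slope = fit_log_ratio(cycles, observed_ratios)
estimated_start_ratio = math.exp(intercept)  # extrapolated to zero cycles
print(round(estimated_start_ratio, 3), round(math.exp(slope), 4))
```

Real data are compositional, noisy, and involve many taxa, so a dedicated multivariate model is needed in practice; the sketch only shows why extrapolating to zero cycles recovers the starting ratio.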

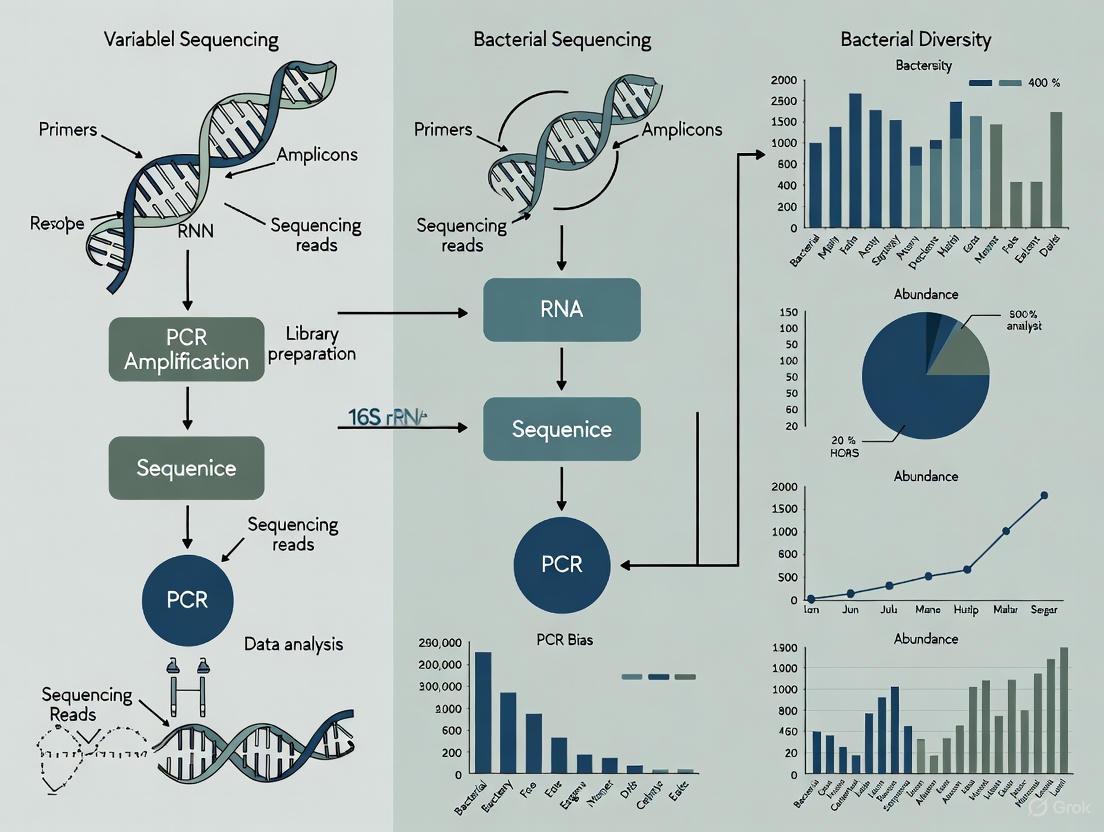

Workflow Visualization

The following diagram illustrates the integrated experimental and computational workflow for mitigating PCR bias, as described in the protocols and FAQs.

Research Reagent Solutions

Table 2: Key Reagents for Managing PCR Bias

| Reagent / Tool | Function in Bias Mitigation | Key Consideration |
|---|---|---|
| High-Fidelity DNA Polymerase | Reduces sequence errors caused by incorrect nucleotide incorporation during amplification. | Lower error rate compared to standard Taq polymerase. |
| Mock Community Standards | Provides a ground-truth standard with known composition to quantify bias in your entire workflow. | Essential for validating both wet-lab and computational protocols. |
| Degenerate Primers | Contains mixed bases at variable positions to bind more efficiently to a wider range of template sequences. | Helps overcome bias from primer-template mismatches. |
| Bead-Based Cleanup Kits | Purifies PCR products to remove primers, enzymes, and salts before the next step (e.g., reconditioning PCR). | Critical for protocol steps that involve transferring amplicons between reactions. |
| Droplet Digital PCR (ddPCR) | Provides absolute quantification of target genes without relying on amplification cycles, used for creating calibrated mock communities. | Can be used to establish true starting ratios for a reference-based correction model [8]. |

Frequently Asked Questions (FAQs)

1. How does my choice of DNA extraction kit affect my microbiome results? The DNA extraction method is a major source of bias in microbiome studies. Different kits can produce dramatically different results because they vary in their efficiency at lysing the cell walls of different bacterial types. For instance, mechanical disruption (bead-beating) is crucial for breaking open tough Gram-positive bacterial cells, while chemical lysis alone may preferentially release DNA from Gram-negative bacteria. One study found that, compared to the PowerSoil kit, using a Qiagen kit increased the observed proportion of Enterococcus by about 50% while suppressing the observed proportions of Neisseria, Bacillus, Pseudomonas, and Porphyromonas [6].

2. Why do primer mismatches cause bias, and how can I minimize their effect? Primer mismatches occur when the "universal" primers used in PCR do not perfectly complement the 16S rRNA gene of all bacteria in your sample. Even a single nucleotide mismatch, especially near the 3' end of the primer, can significantly reduce amplification efficiency. This bias is introduced primarily in the first few PCR cycles. To minimize it, you can:

- Use optimized, non-degenerate primer sets designed with computational tools that maximize coverage and matching efficiency across the bacterial domain [9].

- Target different variable regions with separate primer sets, as bias is dependent on primer position [3].

3. My sample has bacteria with a wide range of genomic GC-content. How will this impact my data? Genomic GC-content correlates negatively with observed relative abundances in 16S rRNA sequencing [10]. This means that species with high GC-content in their genome are often underestimated, while those with low GC-content are overestimated. This bias is largely attributed to the lower efficiency of PCR amplification for GC-rich templates. You can mitigate this by optimizing your PCR conditions, such as increasing the initial denaturation time, which has been shown to improve the detection of high-GC% species [10].

4. What is the best way to monitor and correct for bias in my workflow? The most robust method is to use a mock community—a defined mix of known bacterial strains—alongside your experimental samples. By sequencing this mock community with your chosen protocol, you can quantify the bias introduced at every step and identify which taxa are being over- or under-represented [6] [11]. For advanced users, computational models built from mock community data or calibration experiments can then be applied to correct the bias in your actual samples [2].

Troubleshooting Guide

Use the following table to diagnose and address common problems related to the major sources of bias.

| Problem Symptom | Potential Cause | Corrective Action & Experimental Optimization |
|---|---|---|
| Under-representation of Gram-positive bacteria (e.g., Firmicutes, Actinobacteria) | Inefficient cell lysis during DNA extraction, often due to inadequate mechanical disruption of tough cell walls. | Implement rigorous mechanical lysis: Use a repeated bead-beating protocol with a mixture of different bead sizes (e.g., 0.1 mm zirconia/silica beads with larger glass beads) to ensure comprehensive cell breakage [11]. |
| Spurious or unexpected absence of specific taxa | Primer mismatch, where the "universal" primers have poor binding efficiency to the 16S rRNA gene of certain bacteria. | Re-evaluate primer choice: Use in-silico tools (e.g., mopo16S, DegePrime) to select primer pairs with maximal coverage and minimal matching-bias for your target environment [9]. Validate with a mock community containing the missing taxa [12]. |
| Over-estimation of low GC% species and under-estimation of high GC% species | PCR amplification bias against templates with high genomic GC-content. | Optimize PCR conditions: Increase the initial denaturation time (e.g., from 30 s to 120 s) and/or use PCR additives like DMSO or betaine to facilitate denaturation of GC-rich templates [10]. Limit PCR cycles to the minimum necessary [2]. |
| High variation between technical replicates or different sample batches | Inconsistent DNA extraction efficiency or PCR amplification, often a result of manual protocol deviations or reagent degradation. | Standardize and automate: Use master mixes for PCR to reduce pipetting error. Introduce detailed SOPs with highlighted critical steps and use checklists. Include a positive control (e.g., a mock community) in every batch to monitor consistency [13] [11]. |
| Inaccurate community structure compared to a known standard | Cumulative bias from multiple sources (extraction, primers, PCR). | Employ a bias quantification and correction protocol: Use a multi-step calibration experiment involving a pooled sample amplified for different cycle numbers to model and computationally correct for PCR bias using log-ratio linear models [2]. |

Experimental Protocols for Bias Assessment and Mitigation

Protocol 1: Using Mock Communities to Quantify Total Workflow Bias

This protocol helps you characterize the total bias introduced by your entire workflow, from DNA extraction to sequencing.

- Acquire or Create a Mock Community: Obtain a commercially available, well-defined mock community (e.g., from BEI Resources or Zymo Research) comprising genomic DNA from 20 or more bacterial species with known, even composition [10] [6].

- Process the Mock Community: Subject the mock community to your standard DNA extraction, library preparation, and sequencing protocol. Include it as a control in every sequencing run.

- Data Analysis:

- Process the sequencing data through your standard bioinformatics pipeline.

- Compare the observed relative abundances of each species to the expected abundances.

- Calculate the coefficient of variation across replicates to assess reproducibility [10].

- The deviation from the expected composition is a direct measure of the bias introduced by your protocol for those specific taxa.
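The data-analysis steps above can be sketched in a few lines. This is a minimal illustration, assuming relative abundances are already available per taxon; the function names and the example values are hypothetical, and the log2 ratio is just one common way to express deviation from the expected composition.

```python
import math
import statistics


def log2_bias(expected, observed):
    """log2(observed / expected) per taxon; 0 means no bias, +1 means 2x over."""
    return {
        taxon: math.log2(observed[taxon] / expected[taxon])
        for taxon in expected
        if observed.get(taxon, 0) > 0  # dropouts have no finite log ratio
    }


def coefficient_of_variation(values):
    """Sample standard deviation divided by the mean (needs >= 2 values)."""
    return statistics.stdev(values) / statistics.mean(values)


# Hypothetical even mock community of four taxa, sequenced in triplicate.
expected = {"A": 0.25, "B": 0.25, "C": 0.25, "D": 0.25}
replicates = [
    {"A": 0.40, "B": 0.30, "C": 0.20, "D": 0.10},
    {"A": 0.42, "B": 0.28, "C": 0.19, "D": 0.11},
    {"A": 0.38, "B": 0.31, "C": 0.21, "D": 0.10},
]

mean_observed = {t: statistics.mean(r[t] for r in replicates) for t in expected}
print({t: round(b, 2) for t, b in log2_bias(expected, mean_observed).items()})
print({t: round(coefficient_of_variation([r[t] for r in replicates]), 3)
       for t in expected})
```

The log2 bias captures accuracy per taxon, while the coefficient of variation captures precision across replicates; a protocol can score well on one and poorly on the other.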

Protocol 2: A Calibration Experiment to Isolate and Correct PCR NPM-Bias

This advanced protocol, adapted from Silverman et al. (2021), helps measure and correct for PCR bias from non-primer-mismatch sources (NPM-bias) [2].

- Create a Calibration Sample: Pool aliquots of extracted DNA from all study samples into a single, representative pool.

- Set Up Cycle Gradient PCR: Split this pooled sample into multiple aliquots. Amplify each aliquot for a different number of PCR cycles (e.g., 15, 20, 25, 30 cycles), keeping all other conditions identical.

- Sequence and Model: Sequence all aliquots and build a log-ratio linear model where the microbial composition is the dependent variable and the PCR cycle number is the independent variable.

- Apply the Model: The intercept of this model estimates the true composition before PCR bias, and the slope represents the taxon-specific amplification efficiencies. This model can then be applied to correct the data from your actual experimental samples.
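The reasoning behind the intercept and slope can be written out for a single pair of taxa. This is a simplified sketch with notation introduced here for illustration (it is not the notation of [2]): let $a_i$ be the pre-PCR abundance of taxon $i$, $b_i$ its per-cycle amplification efficiency, and $w_i(n)$ its abundance after $n$ cycles, assuming $w_i(n) = a_i\, b_i^{\,n}$.

$$
\log\frac{w_i(n)}{w_j(n)} \;=\; \log\frac{a_i}{a_j} \;+\; n\,\log\frac{b_i}{b_j}
$$

Under this assumption the log-ratio is linear in the cycle number $n$: the intercept $\log(a_i/a_j)$ is the unbiased starting log-ratio, and the slope $\log(b_i/b_j)$ is the relative efficiency of the two taxa. If both taxa amplify equally well, the slope is zero and the ratio is unaffected by cycle number.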

The diagram below illustrates the key sources of bias in the 16S rRNA amplicon sequencing workflow and the corresponding strategies to mitigate them.

Research Reagent Solutions

The following table lists key reagents and materials essential for mitigating bias in 16S rRNA sequencing studies.

| Item | Function in Bias Mitigation |
|---|---|
| Mock Communities (e.g., from BEI Resources, ZymoBIOMICS) | Defined mixes of bacterial strains or DNA used as positive controls to quantify bias and validate protocols [10] [11]. |
| Mechanical Lysis Beads (Zirconia/Silica, 0.1 mm) | Essential for the efficient and unbiased lysis of tough bacterial cell walls (e.g., Gram-positive) during DNA extraction [11]. |
| Optimized Primer Pairs (e.g., 515F-806R, 341F-785R) | Primer sets selected for high coverage and low matching-bias against target populations, often identified via computational tools [12] [9]. |
| High-Fidelity DNA Polymerase | Reduces PCR-introduced errors and can improve amplification efficiency across diverse templates [10]. |
| PCR Additives (e.g., DMSO, Betaine) | Assist in denaturing difficult templates, helping to mitigate amplification bias against high GC-content sequences [10]. |
| Stabilization Buffers (e.g., OMNIgene·GUT, DNA/RNA Shield) | Preserve microbial community composition at room temperature, preventing shifts due to bacterial growth post-collection [11]. |

Troubleshooting Guides and FAQs

FAQ: Understanding and Overcoming Bias in 16S rRNA Sequencing

1. What are the most significant sources of bias that affect diversity metrics in 16S rRNA sequencing?

The most significant biases originate from the experimental workflow itself. Key sources include:

- PCR Amplification Bias: The PCR step can skew the true biological representation due to varying amplification efficiency of different templates. This is influenced by primer selection, number of PCR cycles, and polymerase error rates, which can create spurious sequences [14] [1] [15].

- Variable 16S rRNA Gene Copy Number: The number of 16S rRNA genes varies per bacterial genome (from one to ~10), meaning a bacterium with more copies will be overrepresented in the final data compared to its true biological abundance [16].

- Sequencing Errors and Cross-Talk: Sequencing errors and index hopping (cross-talk) can introduce spurious Operational Taxonomic Units (OTUs) or Amplicon Sequence Variants (ASVs), artificially inflating richness metrics [14] [16].

- Sample DNA Concentration: Samples with lower DNA concentration have been demonstrated to have significantly increased technical variation across sequencing runs, which reduces reproducibility [17].
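The copy-number effect listed above can be illustrated with a simple normalization. This is a minimal sketch, assuming the 16S copy number per genome is known for each taxon (for example from a reference database); the function name, taxon labels, and copy numbers are hypothetical.

```python
def normalize_by_copy_number(read_counts, copies_per_genome):
    """Divide reads by 16S copies per genome, then renormalize to sum to 1."""
    adjusted = {
        taxon: read_counts[taxon] / copies_per_genome[taxon]
        for taxon in read_counts
    }
    total = sum(adjusted.values())
    return {taxon: value / total for taxon, value in adjusted.items()}


# Hypothetical example: equal cell numbers, but taxon_A carries 7 gene copies.
reads = {"taxon_A": 700, "taxon_B": 100}
copies = {"taxon_A": 7, "taxon_B": 1}

print(normalize_by_copy_number(reads, copies))
```

The correction is only as good as the copy-number estimates, which are uncertain for taxa without sequenced genomes.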

2. How does PCR bias specifically impact alpha diversity metrics?

PCR bias distorts the underlying abundance distribution of species in a sample, which directly impacts alpha diversity metrics [16].

- Richness (e.g., Observed Features, Chao1): PCR errors and chimeras create spurious sequences, artificially inflating richness estimates. Conversely, primer mismatches can prevent some species from amplifying at all, leading to an underestimation of true richness [14] [16].

- Evenness/Dominance (e.g., Simpson, Berger-Parker): The preferential amplification of certain templates over others during PCR disrupts the actual abundance ratios. This can make a community appear more or less even than it truly is [18].

- Information Metrics (e.g., Shannon Index): Since these metrics incorporate both richness and evenness, they are affected by both the inflation of spurious taxa and the distortion of abundance profiles [18] [16].
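The effect on each metric family above can be made concrete with a small calculation. This is an illustrative sketch using standard textbook definitions (natural-log Shannon index, Gini-Simpson index as 1 minus the sum of squared proportions); the count vectors are hypothetical.

```python
import math


def proportions(counts):
    """Relative abundances of the taxa that were actually observed."""
    total = sum(counts)
    return [c / total for c in counts if c > 0]


def richness(counts):
    return sum(1 for c in counts if c > 0)


def shannon(counts):
    return -sum(p * math.log(p) for p in proportions(counts))


def simpson(counts):
    """Gini-Simpson index: 1 - sum(p_i^2)."""
    return 1.0 - sum(p * p for p in proportions(counts))


def berger_parker(counts):
    """Proportion of the single most abundant taxon."""
    return max(proportions(counts))


true_counts = [250, 250, 250, 250]                   # even four-taxon community
skewed_counts = [700, 150, 100, 50]                  # after preferential amplification
skewed_with_artifacts = skewed_counts + [1, 1, 1]    # plus spurious singletons

for label, counts in [("true", true_counts),
                      ("skewed", skewed_counts),
                      ("skewed + artifacts", skewed_with_artifacts)]:
    print(label, richness(counts), round(shannon(counts), 3),
          round(simpson(counts), 3), round(berger_parker(counts), 3))
```

In this toy case, skewed amplification lowers the evenness-sensitive metrics while the spurious singletons raise richness, so the two distortions push different metrics in different directions.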

3. Can we quantify the technical variation introduced by sequencing runs compared to biological variation?

Yes, studies have directly compared this. Research sequencing nearly 1000 samples across 18 runs found that while technical variation exists, biological variation was significantly higher than technical variation due to sequencing runs [17]. This underscores that while technical bias is a critical confounder, it does not typically eclipse the strong biological signals present in well-designed studies.

4. What is a "mock community" and why is it important for troubleshooting?

A mock community is a synthetic mixture of genomic DNA from known microorganisms. It serves as a positive control to benchmark your entire wet-lab and bioinformatics pipeline.

- By comparing your sequencing results to the known composition of the mock community, you can assess the accuracy (how close the measured abundances are to the true abundances) and precision (technical variation across replicates) of your workflow [17]. A simplified mock community may show limitations in accuracy but can still demonstrate robust precision [17].

Troubleshooting Guide: Diagnosing Diversity Metric Anomalies

Problem: Inflated Richness Estimates

| Observation | Potential Cause | Solution |
|---|---|---|
| High number of rare OTUs/ASVs, particularly singletons (sequences appearing only once). | PCR errors and sequencing errors creating spurious sequences [14]. | Implement strict quality filtering and denoising algorithms (e.g., DADA2, Deblur) [17] [14]. Use positive controls to estimate error rates. |
| | Index hopping (cross-talk) between samples on a sequencing run [16]. | Use dual-indexed primers and bioinformatic tools to filter reads with non-matching index pairs. |
| | Chimeric sequences formed during PCR [14] [1]. | Use chimera detection software (e.g., UCHIME) as part of your bioinformatics pipeline. Reduce PCR cycle numbers to minimize chimera formation [14] [1]. |

Problem: Unreliable or Skewed Beta Diversity Results

| Observation | Potential Cause | Solution |
|---|---|---|
| Samples cluster based on sequencing run or DNA extraction batch rather than biological groups. | Batch effects from technical processing. High technical variation in low-DNA-concentration samples [17]. | Include positive controls in every batch to correct for run-to-run variation. Standardize DNA input concentrations across samples where possible. |
| | PCR bias differentially affecting samples with different community compositions. | Use a modified PCR protocol with fewer cycles (e.g., 15-18 instead of 35) and include a "reconditioning PCR" step to reduce heteroduplex molecules [1]. |
| Poor separation between groups in UniFrac analysis. | Sparse data with many zeroes, often due to incomplete sampling or amplification dropouts. | Ensure adequate sequencing depth per sample. Be aware that primer choice can affect which taxa are amplified [15]. |

Table 1: Impact of Modified PCR Protocols on PCR Artifacts (as demonstrated in [1])

| PCR Protocol | Number of Cycles | % Chimeric Sequences | % Unique 16S rRNA Sequences | Estimated Total Sequences (Chao-1) |
|---|---|---|---|---|
| Standard | 35 | 13% | 76% | 3,881 |
| Modified (with reconditioning step) | 15 + 3 | 3% | 48% | 1,633 |
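The Chao-1 column in the table above is a richness estimate that depends heavily on how many sequences are seen once or twice, which is why spurious singletons inflate it. A minimal sketch of the classic estimator follows, with the bias-corrected form used as a fallback when there are no doubletons; whether [1] computed it in exactly this form is an assumption, and the counts are hypothetical.

```python
from collections import Counter


def chao1(counts):
    """Chao1 richness estimate from a list of per-sequence abundances.

    Classic form:   S_obs + F1^2 / (2 * F2)
    Fallback (F2 = 0): S_obs + F1 * (F1 - 1) / 2
    where F1 and F2 are the numbers of singletons and doubletons.
    """
    observed = [c for c in counts if c > 0]
    s_obs = len(observed)
    freq = Counter(observed)
    f1, f2 = freq[1], freq[2]
    if f2 > 0:
        return s_obs + (f1 * f1) / (2 * f2)
    return s_obs + f1 * (f1 - 1) / 2


# Hypothetical library: adding a few spurious singletons raises the estimate.
clean_library = [10, 5, 2, 2, 1]
noisy_library = clean_library + [1, 1, 1]

print(chao1(clean_library), chao1(noisy_library))
```

Because the singleton count enters as a square, the estimate grows much faster than the number of artifacts added.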

Table 2: Impact of Sample Type and DNA Concentration on Technical Variation (as demonstrated in [17])

| Sample Type | Relative DNA Concentration | Technical Variation (Precision) Across Runs |
|---|---|---|
| Stabilized Fecal Samples | Highest | Lowest |
| Fecal Swab Samples | Medium | Medium |
| Oral Swab Samples | Lowest | Highest |

Experimental Protocols for Bias Mitigation

Protocol 1: Modified PCR Amplification to Reduce Artifacts

This protocol is adapted from research that significantly reduced chimeras and spurious sequences [1].

- First PCR Stage: Perform a limited-cycle PCR amplification. Use 15 cycles with your standard 16S rRNA gene primers.

- Reconditioning PCR: Transfer a small aliquot (e.g., 1 µL) of the first PCR product to a fresh PCR mixture. Perform an additional 3 amplification cycles.

- Rationale: The limited cycles reduce the accumulation of polymerase errors and chimeras. The reconditioning step resolves heteroduplex molecules: in a fresh reaction mixture with excess primers, strands denature and are copied into fully matched duplexes rather than reannealing to similar but non-identical strands [1].

Protocol 2: Using Positive and Negative Controls

Including controls is non-negotiable for quantifying and correcting bias [17] [19].

- Mock Community (Positive Control): Include a commercially available or custom-built mock community of known composition in every DNA extraction and sequencing batch. This allows you to measure accuracy and precision.

- Negative Control: Include a blank (e.g., water) that undergoes the entire process from DNA extraction onward. This allows you to identify and bioinformatically subtract contaminating sequences.

- Analysis: Use the data from the mock community to understand the error profile of your run. The negative control reveals contaminating taxa that should be removed from all samples.
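The negative-control step above can be sketched as a simple filter. This is a deliberately crude illustration, assuming per-taxon read counts are available for the blank and for each sample; the function names, the read threshold, and the taxon labels are hypothetical, and statistical contaminant-identification methods are generally preferable to a fixed cutoff.

```python
def flag_contaminants(negative_control, min_reads=10):
    """Taxa with at least `min_reads` reads in the blank are flagged."""
    return {taxon for taxon, reads in negative_control.items() if reads >= min_reads}


def remove_taxa(sample, taxa_to_remove):
    """Return a copy of the sample without the flagged taxa."""
    return {t: n for t, n in sample.items() if t not in taxa_to_remove}


# Hypothetical read counts.
blank = {"taxon_X": 120, "taxon_A": 2}
sample = {"taxon_A": 5000, "taxon_B": 3000, "taxon_X": 150}

contaminants = flag_contaminants(blank, min_reads=10)
print(remove_taxa(sample, contaminants))
```

The threshold keeps a genuinely abundant taxon (here taxon_A, with only 2 reads in the blank) from being discarded because of low-level cross-talk into the control.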

Workflow and Relationship Diagrams

Diagram 1: This workflow maps critical points where bias is introduced during 16S rRNA sequencing and identifies specific mitigation strategies to employ at each step.

Diagram 2: This diagram illustrates the logical cascade of how a single source of bias, PCR amplification bias, propagates through the data to impact various alpha and beta diversity metrics.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Robust 16S rRNA Sequencing

| Item | Function | Key Consideration |
|---|---|---|
| DNA Stabilization Buffer (e.g., OMNIgene·GUT kit) | Preserves microbial DNA at ambient temperature post-collection, minimizing changes before extraction [17]. | Critical for field studies or clinical settings where immediate freezing is not possible. |
| PowerSoil DNA Isolation Kit | DNA extraction kit optimized for difficult environmental and stool samples; effective at removing PCR inhibitors [17]. | Consistency in DNA extraction method is vital to minimize batch effects. |
| Mock Community Standard (e.g., ZymoBIOMICS) | Defined mix of microbial genomes used as a positive control to quantify accuracy and precision of the entire workflow [17]. | Should be included in every processing batch to track and correct for technical variation. |
| High-Fidelity DNA Polymerase | PCR enzyme with proofreading activity to reduce polymerase errors during amplification [14]. | Lower error rates help prevent the creation of spurious sequences that inflate richness. |
| Dual-Indexed PCR Primers | Primers with unique barcodes on both ends to allow multiplexing and robust demultiplexing, reducing index hopping [14]. | Essential for accurately assigning sequences to the correct sample in multiplexed runs. |
| Magnetic Bead Cleanup Kits | For post-PCR cleanup and size selection to remove primer dimers and other unwanted fragments [13] [19]. | Prevents adapter-dimer contamination from overwhelming the sequencing run. |

In 16S rRNA sequencing research, accurately interpreting microbial community data requires a clear understanding of the technical errors introduced during experimental workflows. Both PCR amplification and sequencing processes generate artifacts that can significantly distort microbial diversity estimates and taxonomic composition. This guide provides a structured approach to identifying, troubleshooting, and mitigating these distinct error types within the context of overcoming PCR bias in 16S rRNA studies.

FAQ: Fundamental Distinctions

What is the primary difference between a PCR artifact and a sequencing error? PCR artifacts are generated during the amplification process and include chimeras, heteroduplex molecules, and polymerase errors. These artifacts alter the molecular composition of your amplicon pool before sequencing even begins. In contrast, sequencing errors occur during the nucleotide detection process on the sequencing platform itself, resulting in incorrect base calls in your read data [4].

Why can't bioinformatics fix all these problems later? While bioinformatic tools are essential for error reduction, they cannot completely compensate for biases introduced during wet lab procedures. PCR bias, such as the preferential amplification of certain templates, alters the actual relative abundance of sequences in your sample. Once this distortion occurs, it becomes embedded in your data and cannot be fully computationally corrected, leading to potentially skewed biological interpretations [2] [6].

How do I know if my observed "rare biosphere" is real or technical error? The "rare biosphere" is particularly vulnerable to inflation by technical errors. High rates of unique sequences (e.g., >60% singletons) strongly suggest significant contamination by PCR errors or sequencing noise. Clustering sequences into 99% similarity groups has been shown to effectively collapse most Taq polymerase errors while retaining biological variants, providing a more realistic estimate of true diversity [1].
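A quick way to check a library against the singleton warning sign above is to tabulate how many distinct sequences occur exactly once. This is a minimal sketch, assuming reads are available as plain strings after quality filtering; the function name and toy reads are hypothetical. Both denominators are reported because "percent singletons" can mean a share of distinct sequences or a share of reads.

```python
from collections import Counter


def singleton_stats(reads):
    """Summarize how much of a read set consists of sequences seen once."""
    counts = Counter(reads)
    singletons = sum(1 for c in counts.values() if c == 1)
    return {
        "distinct_sequences": len(counts),
        "singleton_sequences": singletons,
        "fraction_of_distinct": singletons / len(counts),
        "fraction_of_reads": singletons / len(reads),
    }


# Toy read set: one dominant sequence plus four single-copy variants.
reads = ["ACGT"] * 6 + ["ACGA", "ACGC", "TCGT", "GGGT"]
print(singleton_stats(reads))
```

Three of the four singletons in this toy set differ from the dominant sequence by a single base, the pattern expected from polymerase or sequencing errors rather than from distinct organisms.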

Troubleshooting Guide: Identifying and Resolving Common Issues

Problem 1: Overestimated Diversity and High Singleton Count

- Symptoms: An unexpectedly high number of unique sequences (singletons) that inflate alpha-diversity metrics; low library coverage.

- Primary Causes:

- Solutions:

- Wet Lab: Reduce PCR cycle number. Use high-fidelity polymerases. Include a "reconditioning PCR" step (3 additional cycles in a fresh mix) to reduce heteroduplex molecules [1].

- Bioinformatics: Employ denoising algorithms (DADA2, Deblur) to generate Amplicon Sequence Variants (ASVs) or cluster sequences into 99% Operational Taxonomic Units (OTUs) to collapse technical variants [1] [20].

Problem 2: Chimeric Sequences Creating False Taxa

- Symptoms: Detection of novel, low-abundance taxa that are phylogenetically unexpected; sequences that align well to two different parent taxa.

- Primary Cause: Chimeras, formed when an incomplete PCR product from one template acts as a primer on another template, creating a hybrid molecule [4] [21].

- Solutions:

- Wet Lab: Limit PCR cycles. Use robust polymerase with high processivity. The reconditioning PCR step can significantly reduce chimeras [1].

- Bioinformatics: Apply chimera detection tools like UCHIME against a reference database. One study showed this reduced chimeras from 8% in raw reads to just 1% post-filtering [4] [21].

Problem 3: Skewed Microbial Community Composition (PCR Bias)

- Symptoms: Consistent over- or under-representation of specific taxonomic groups compared to known expectations (e.g., from mock communities); poor reproducibility between labs.

- Primary Causes:

- Primer-Template Mismatches: Especially critical in the first few PCR cycles [2].

- Variable Amplification Efficiency (PCR NPM-Bias): Differences in amplification efficiency between templates persisting beyond initial cycles, leading to a log-ratio linear distortion of true abundances [2].

- DNA Extraction Bias: The DNA extraction kit and protocol can dramatically alter observed community structure [6].

- Solutions:

- Wet Lab: Use well-validated, universal primers. Standardize DNA extraction protocols across a study. For precise quantification, employ a calibration experiment with pooled samples amplified for different cycle numbers to model and correct for bias [2] [12].

- Bioinformatics: Apply computational corrections using log-ratio linear models derived from calibration experiments to mitigate PCR NPM-bias [2].

Problem 4: Low Library Yield or High Adapter-Dimer Contamination

- Symptoms: Insufficient library concentration for sequencing; a prominent peak at ~70-90 bp on an electropherogram (adapter dimers).

- Primary Causes:

- Poor Input Quality: Degraded DNA or contaminants (phenol, salts) inhibiting enzymes.

- Inefficient Ligation/Amplification: Suboptimal adapter-to-insert ratios, inefficient ligase, or too few PCR cycles.

- Inadequate Cleanup: Failure to remove adapter dimers during size selection [13].

- Solutions:

- Wet Lab: Re-purify input DNA, check quality metrics (260/280 ~1.8). Titrate adapter concentrations. Optimize bead-based cleanup ratios to retain fragments and remove dimers [13].

- QC: Use fluorometric quantification (Qubit) over UV absorbance (NanoDrop). Always inspect the library profile with a Bioanalyzer or TapeStation [13].

The table below summarizes key quantitative findings on error rates and the efficacy of mitigation strategies from the literature.

Table 1: Quantifying Errors and Mitigation Efficacy in 16S rRNA Sequencing

| Error Type | Reported Frequency | Effective Mitigation Strategy | Impact After Mitigation |

|---|---|---|---|

| Taq Polymerase Errors | Error rate ~3.3 × 10⁻⁵ per nt/duplication [1] | Clustering at 99% similarity | ~80% of lineages shared between libraries, vs. significant differences at 100% [1] |

| Chimeras | 13% in standard (35-cycle) library [1] | Modified protocol (15 cycles + reconditioning) & UCHIME | Reduced to 3% [1]; from 8% down to 1% in another study [4] [21] |

| Sequencing Errors (Pyrosequencing) | Average error rate 0.0060 per base [4] | PyroNoise flowgram denoising | Overall error rate reduced to 0.0002 [4] |

| PCR Bias (NPM-Bias) | Can skew abundance estimates by a factor of 4 or more [2] | Log-ratio linear model correction | Allows for estimation and mitigation of bias without mock communities [2] |
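
To put the Taq error rate from Table 1 in perspective, the probability that a molecule carries at least one polymerase error after d duplications of an L-nt amplicon is 1 - (1 - r)^(L·d). The sketch below evaluates this; the 250 nt amplicon length is an assumption for illustration, and because not every molecule in a final library has been copied in every cycle, the result is best read as an upper-end estimate.

```python
def fraction_with_error(rate_per_nt_per_dup, amplicon_len, duplications):
    """Probability that a molecule copied `duplications` times carries >= 1 error."""
    error_free = (1.0 - rate_per_nt_per_dup) ** (amplicon_len * duplications)
    return 1.0 - error_free

TAQ_RATE = 3.3e-5   # per nt per duplication, as reported in Table 1
AMPLICON_LEN = 250  # nt; assumed for illustration

for dups in (15, 35):
    p = fraction_with_error(TAQ_RATE, AMPLICON_LEN, dups)
    print(f"{dups} duplications: {p:.1%} of molecules expected to carry an error")
```

Under these assumptions the fraction rises from roughly 12% at 15 duplications to roughly 25% at 35, which is consistent with the advice to limit cycle number.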

Experimental Protocols for Error Characterization

Protocol 1: Using Mock Communities to Quantify Total Workflow Bias

Mock communities with known composition are the gold standard for quantifying total bias in your workflow [6].

- Acquire or Create a Mock Community: Use a defined mix of genomic DNA from 20-30 bacterial strains. Complexity should reflect your study system [20] [6].

- Process in Parallel: Subject the mock community to your standard 16S rRNA gene sequencing pipeline—DNA extraction, PCR, sequencing—alongside your experimental samples.

- Sequence and Analyze: Sequence the mock community and process the data identically to your samples.

- Compare to Ground Truth: Compare the observed composition (taxonomic relative abundances) to the known expected composition. The discrepancy is your total procedural bias, allowing you to identify which taxa are over/under-represented in your data [6].
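
The final comparison step can be sketched as a per-taxon log2 ratio of observed to expected relative abundance. This is a minimal illustration; the taxon names and read counts are hypothetical, and the function is not taken from any cited tool.

```python
import math

def log2_bias(observed, expected):
    """Per-taxon log2(observed / expected) relative abundance; 0 means no bias."""
    obs_total = sum(observed.values())
    exp_total = sum(expected.values())
    bias = {}
    for taxon, exp_count in expected.items():
        obs_frac = observed.get(taxon, 0) / obs_total
        exp_frac = exp_count / exp_total
        # Taxa that were expected but not detected are reported as -inf.
        bias[taxon] = math.log2(obs_frac / exp_frac) if obs_frac > 0 else float("-inf")
    return bias

# Hypothetical even mock community and hypothetical read counts.
expected = {"Taxon_A": 1, "Taxon_B": 1, "Taxon_C": 1, "Taxon_D": 1}
observed = {"Taxon_A": 5000, "Taxon_B": 2500, "Taxon_C": 1250, "Taxon_D": 1250}

bias = log2_bias(observed, expected)
for taxon, value in bias.items():
    print(f"{taxon}: log2 bias = {value:+.2f}")
```

Positive values indicate over-representation and negative values under-representation. Reads assigned to taxa outside the mock community still count toward the observed total, so heavy contamination will shift every value downward.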

Protocol 2: A Calibration Experiment to Measure PCR NPM-Bias

This protocol measures and corrects for bias specifically introduced during mid-to-late PCR cycles [2].

- Create a Calibration Sample: Pool aliquots of extracted DNA from all study samples. This ensures representation of all relevant taxa.

- Aliquot and Amplify: Split the pooled sample into multiple aliquots. Amplify each aliquot for a different number of PCR cycles (e.g., 15, 20, 25, 30).

- Sequence and Model: Sequence all aliquots. Use a log-ratio linear model (e.g., with the fido R package) to relate the observed composition to the cycle number. The model's intercept estimates the true composition prior to PCR bias, and the slope estimates the taxon-specific amplification efficiencies [2].

- Apply Correction: Use this model to correct for PCR bias in your actual study samples.
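
The modelling step in this protocol can be illustrated with ordinary least squares on additive log-ratios. This is only a sketch of the idea: it is not the model implemented in the package named above, it assumes strictly positive counts, and all simulated values and function names are hypothetical.

```python
import numpy as np

def fit_log_ratio_model(cycles, counts, ref=0):
    """Fit log(count_i / count_ref) = intercept_i + slope_i * cycles for each taxon.

    cycles: shape (n_samples,); counts: shape (n_samples, n_taxa), all > 0.
    Returns (intercepts, slopes), each of shape (n_taxa,); the reference taxon is 0.
    """
    cycles = np.asarray(cycles, dtype=float)
    counts = np.asarray(counts, dtype=float)
    log_ratios = np.log(counts) - np.log(counts[:, [ref]])
    design = np.column_stack([np.ones_like(cycles), cycles])
    coef, *_ = np.linalg.lstsq(design, log_ratios, rcond=None)
    return coef[0], coef[1]

def intercepts_to_proportions(intercepts):
    """Convert additive log-ratio intercepts back to proportions summing to 1."""
    weights = np.exp(intercepts)
    return weights / weights.sum()

# Simulated, noise-free calibration data (all values hypothetical).
true_props = np.array([0.5, 0.3, 0.2])
efficiency = np.array([1.90, 1.95, 1.80])   # per-cycle fold amplification
cycles = np.array([15, 20, 25, 30])
amplified = true_props * efficiency ** cycles[:, None]
counts = amplified / amplified.sum(axis=1, keepdims=True) * 1e5

intercepts, slopes = fit_log_ratio_model(cycles, counts)
estimated = intercepts_to_proportions(intercepts)
print("estimated pre-PCR composition:", np.round(estimated, 3))
```

With noise-free data the intercepts recover the starting composition and the slopes recover the log efficiency ratios relative to the reference taxon. Real count data are sparse and noisy, which is why a dedicated statistical model is preferable in practice.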

Workflow and Decision Diagrams

The following diagram illustrates the logical pathway for diagnosing the source of technical errors in 16S rRNA sequencing data.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagents and Tools for Error Mitigation

| Item | Function & Importance | Considerations |

|---|---|---|

| High-Fidelity DNA Polymerase | Reduces nucleotide misincorporation during PCR, lowering erroneous sequence variants. | Essential for limiting polymerase errors, a major source of inflated diversity [1]. |

| Validated Primer Panels | Ensure broad, unbiased amplification of the target taxonomic group. | Primer choice is a major source of bias; different variable regions (V4, V3-V4, etc.) yield different taxonomic profiles [12]. |

| Mock Community Standards | Provides ground-truth for quantifying total bias (extraction, PCR, sequencing) in the workflow. | Should be of sufficient and relevant complexity for the study system [20] [6]. |

| Magnetic Bead Cleanup Kits | For efficient size selection and removal of adapter dimers and primer artifacts. | Critical for clean library prep; the bead-to-sample ratio must be optimized to prevent loss of desired fragments [13]. |

| Fluorometric Quantification Kits (Qubit) | Accurately measures concentration of double-stranded DNA. | More reliable for NGS library quantification than UV absorbance, which can overestimate due to contaminants [13]. |

| Bioinformatic Tools: DADA2, Deblur, UNOISE3 | Denoising algorithms that correct sequencing errors and output Amplicon Sequence Variants (ASVs). | ASV methods offer high resolution but can over-split; evaluate against mock data [20]. |

| Bioinformatic Tools: UCHIME | Reference-based algorithm for detecting and removing chimeric sequences. | Highly effective at removing a major source of spurious OTUs/ASVs [4] [21]. |

In clinical microbiome research, the 16S rRNA gene sequencing technique is a cornerstone for identifying bacterial populations and understanding their role in health and disease. However, the accuracy of this method is fundamentally compromised by multiple, cascading sources of bias. These biases, introduced at every stage from sample collection to computational analysis, can severely distort the microbial profile, leading to incorrect biological interpretations and flawed clinical conclusions. This case study delves into the primary sources of these biases, presents data on their quantitative impact, and provides a troubleshooting guide to help researchers identify, mitigate, and correct for these errors in their own studies.

The journey from a biological sample to microbial community data is fraught with potential distortions. The major sources of bias can be categorized into wet-lab (experimental) and dry-lab (computational) processes.

Wet-Lab Experimental Biases

- DNA Extraction Bias: The method used to break open bacterial cells and extract DNA is a major confounder. Protocols differ in their lysis efficiency, meaning some tough-to-lyse bacteria (e.g., Gram-positive with thick peptidoglycan layers) may be systematically underrepresented compared to easy-to-lyse (e.g., Gram-negative) bacteria [22] [11]. This is one of the most significant sources of bias in microbiome analysis.

- PCR Amplification Biases: The polymerase chain reaction, used to amplify the target 16S gene region, introduces several errors:

- Stochasticity: In early PCR cycles, the random amplification of individual molecules can cause dramatic skews in final sequence representation, especially when starting template concentrations are low [23].

- Polymerase Errors: DNA polymerase enzymes can incorporate incorrect nucleotides, creating erroneous sequences that are often confined to low copy numbers [23].

- GC Bias: Sequences with very high or low GC content may amplify with different efficiencies, though one study found this to have a minor effect compared to stochasticity [23].

- Template Switching: This rare event can create chimeric sequences—artifactual hybrids from two different parent sequences—which inflate diversity estimates [23] [22].

- Index Misassignment (Index Hopping): In high-throughput multiplexed sequencing, a small percentage of reads can be misassigned to the wrong sample due to errors during cluster generation on the flow cell. This creates false positive rare taxa and can significantly inflate alpha diversity in simple communities [24].

Dry-Lab Computational Biases

- Reference Database Errors: The accuracy of taxonomic classification is entirely dependent on the quality of the reference database. Common issues include:

- Incorrect Taxonomic Labelling: Sequences may be misidentified due to submitter error [25].

- Database Contamination: Reference sequences can be contaminated with host, vector, or other non-target DNA, leading to false taxonomic assignments [25].

- Taxonomic Underrepresentation: The database may lack sequences for the true microbes in your sample, preventing their accurate identification [25].

Table 1: Quantitative Impact of Different PCR Biases in a Low-Input Amplicon Pool

| Source of Bias | Relative Impact on Sequence Representation | Key Characteristics |

|---|---|---|

| PCR Stochasticity | Major | The dominant force skewing representation; effect is most pronounced with low starting quantities of DNA [23]. |

| Polymerase Errors | Moderate | Very common in later PCR cycles, but these erroneous sequences typically remain at low copy numbers [23]. |

| GC Bias | Minor | Variable amplification efficiency based on GC content; found to have a minor effect in one experimental system [23]. |

| Template Switching | Minor (Rare) | Creates chimeric sequences; rate increases with higher input cell numbers but remains a rare event [23] [22]. |
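
The dominance of stochasticity at low input can be illustrated with a small branching-process simulation. This is a toy model under stated assumptions (two templates at equal starting copy number, each molecule copied with a fixed probability per cycle); the parameter values are hypothetical and not taken from the cited study.

```python
import numpy as np

def simulate_fraction(n0_each, cycles, efficiency, rng):
    """Amplify two templates starting at equal copy number; return the final fraction of A."""
    a = b = n0_each
    for _ in range(cycles):
        # Each molecule is copied with probability `efficiency` in each cycle.
        a += rng.binomial(a, efficiency)
        b += rng.binomial(b, efficiency)
    return a / (a + b)

def fraction_sd(n0_each, replicates=200, cycles=20, efficiency=0.8, seed=0):
    """Standard deviation of the final fraction of A across replicate reactions."""
    rng = np.random.default_rng(seed)
    fractions = [simulate_fraction(n0_each, cycles, efficiency, rng)
                 for _ in range(replicates)]
    return float(np.std(fractions))

low_input_sd = fraction_sd(n0_each=5)
high_input_sd = fraction_sd(n0_each=5000)
print(f"SD of final fraction: low input {low_input_sd:.4f}, high input {high_input_sd:.4f}")
```

Although both templates have identical efficiency, the replicate-to-replicate spread is much larger when each starts from only a few molecules, because chance events in the first cycles are locked in by later amplification.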

How can experimental design and controls correct for technical bias?

A carefully designed experiment with appropriate controls is the first and most crucial line of defense against technical biases.

Essential Experimental Controls

- Mock Community Controls: These are commercially available standards comprising a defined mix of bacterial cells or DNA from known species. They should be included in every batch of sample processing, from DNA extraction to sequencing. By comparing your results from the mock community to its known composition, you can directly measure the bias introduced by your entire workflow and correct for it computationally [22] [26].

- Negative Controls: These include "no-template" controls (NTC) for PCR and blanks for DNA extraction. They are critical for identifying contaminants from reagents, kits, or the laboratory environment, which is especially important for low-biomass samples [22] [11].

- Positive Controls: A well-characterized sample (e.g., from a previous run or a different study) processed alongside new samples helps monitor technical variation and batch effects across different processing rounds [11].

Optimized Wet-Lab Protocols

- Standardized Storage: For fecal samples, immediate freezing at -80°C is considered the gold standard. When logistics are challenging, stabilization buffers (e.g., OMNIgene·GUT, Zymo DNA/RNA Shield) can limit microbial composition changes at room temperature, though they do not perfectly replicate frozen samples [11].

- Mechanical Lysis: Use a rigorous bead-beating step with a mix of different bead sizes during DNA extraction to ensure the efficient lysis of a wide range of bacteria, particularly tough-to-break Gram-positive species [11].

- Minimize PCR Cycles: Using the minimum number of PCR cycles necessary during library preparation reduces the accumulation of errors, chimeras, and the skewing effects of stochasticity and primer bias [23] [11]. One study suggests 25 cycles as an optimal parameter [11].

Table 2: Troubleshooting Guide for Common Sequencing Preparation Issues

| Problem Category | Typical Failure Signals | Common Root Causes | Corrective Actions |

|---|---|---|---|

| Sample Input / Quality | Low library complexity, degraded DNA | Sample contaminants (salts, phenol), inaccurate quantification, degraded nucleic acids [13]. | Re-purify input sample; use fluorometric quantification (Qubit) over UV absorbance; check 260/280 and 260/230 ratios [13]. |

| Amplification / PCR | High duplicate rate, overamplification artifacts, bias | Too many PCR cycles; polymerase inhibitors; primer exhaustion or mispriming [13]. | Minimize PCR cycles; use high-fidelity polymerase; titrate primers; avoid overcycling weak products [23] [13] [11]. |

| Post-Sequencing Data | Inflated diversity, false positive rare taxa | Index misassignment; chimeric sequences; database errors [25] [24]. | Use dual-indexed primers; employ bioinformatic chimera removal (e.g., DADA2); use curated databases [24] [26]. |

What computational tools can debias microbiome data?

Once data is generated, bioinformatic preprocessing is vital to remove technical artifacts before biological interpretation.

Preprocessing and Denoising

- Denoising Algorithms: Tools like DADA2 and Deblur are used to correct sequencing errors and infer exact amplicon sequence variants (ASVs), providing a higher resolution and more reproducible output than older clustering methods (OTUs) [26].

- Chimera Removal: Denoising pipelines like DADA2 include steps to identify and remove chimeric sequences, which are artifacts of the PCR process and not real biological sequences [22] [26].

Innovative Computational Correction Methods

- Morphology-Based Bias Correction: A 2025 study demonstrated that extraction bias per bacterial species is predictable by bacterial cell morphology (e.g., cell wall structure, shape). Using mock communities, researchers can create a model that corrects for observed biases in environmental samples based on these morphological properties, significantly improving the accuracy of the resulting microbial compositions [22].

The following diagram illustrates the core workflow of this novel computational correction method.

A practical example: How to implement an alternative, less biased method

The CAPRA (Gene Capture and Random Amplification) protocol offers an alternative to traditional PCR that mitigates primer bias. It separates the enrichment of target genes from their amplification.

Principle: Instead of using two primers for exponential amplification, a single biotinylated capture probe enriches the target gene (e.g., rpoC). The enriched genes are then amplified using random hexamers in a non-exponential manner, which preserves quantitative ratios more faithfully.

Step-by-Step Methodology:

Gene Capture:

- Use a biotinylated oligonucleotide capture probe designed for a conserved region of a universal, single-copy gene (e.g., the FDGDQMA amino acid motif in the rpoC gene).

- Hybridize the probe to sheared, denatured genomic DNA from the sample.

- Bind the probe-DNA hybrid to streptavidin-coated magnetic beads and wash away non-target DNA.

Random Amplification:

- Amplify the captured, single-stranded DNA using a fully degenerate hexanucleotide primer and a DNA polymerase.

- This linear (non-exponential) amplification step generates sufficient quantity of the enriched target for downstream analysis (e.g., cloning, microarray hybridization) without the skewing effects of exponential PCR.
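
Why non-exponential amplification preserves ratios can be seen with a toy calculation. The two growth models below are simplifying assumptions for illustration only (they are not taken from the CAPRA protocol): exponential growth multiplies copies by (1 + e) each round, whereas linear growth adds e copies per original template each round.

```python
def exponential_yield(n0, efficiency, rounds):
    """Copies after exponential amplification: every molecule is a template."""
    return n0 * (1.0 + efficiency) ** rounds

def linear_yield(n0, efficiency, rounds):
    """Copies after linear amplification: only the original molecules are templates."""
    return n0 * (1.0 + efficiency * rounds)

# Two templates at equal starting abundance with slightly different efficiencies
# (hypothetical values).
n0, e_a, e_b, rounds = 1000, 0.95, 0.85, 25

exp_ratio = exponential_yield(n0, e_a, rounds) / exponential_yield(n0, e_b, rounds)
lin_ratio = linear_yield(n0, e_a, rounds) / linear_yield(n0, e_b, rounds)
print(f"A:B ratio after {rounds} rounds: exponential {exp_ratio:.2f}, linear {lin_ratio:.2f}")
```

In this toy setting a true 1:1 ratio drifts to roughly 3.7:1 under exponential amplification but stays near 1.1:1 under linear amplification, since the linear distortion can never exceed the efficiency ratio itself.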

The workflow below outlines the key steps of this method and its advantage over conventional PCR.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials and Reagents for Bias-Aware Microbiome Research

| Item | Function | Example Use-Case |

|---|---|---|

| ZymoBIOMICS Microbial Community Standards | Defined mock communities of known composition (even or staggered) used as positive controls to measure and correct for technical bias across the entire workflow [22] [11] [24]. | Quantifying the combined bias of DNA extraction and PCR amplification in a batch of samples. |

| OMNIgene·GUT / Zymo DNA/RNA Shield | Sample stabilization buffers that preserve microbial composition at room temperature for several days, facilitating sample transport when immediate freezing is not feasible [11]. | Large-scale, multi-center clinical studies where maintaining a cold chain is logistically challenging. |

| Bead Beating Tubes with Zirconia/Silica Beads | For mechanical cell disruption during DNA extraction, ensuring efficient lysis of a broad range of bacteria, including tough Gram-positive species [11]. | Standardizing DNA extraction from diverse sample types (e.g., soil, stool, water) to improve comparability. |

| High-Fidelity DNA Polymerase | Enzyme with proofreading activity to minimize the introduction of errors during PCR amplification [23]. | Reducing polymerase errors in amplicon sequences, especially when a higher number of cycles is unavoidable. |

| Dual-Indexed PCR Primers | Primers with unique barcodes on both ends to minimize the effect of index misassignment (index hopping) during sequencing [24]. | Preventing cross-talk between samples in a multiplexed sequencing run, thereby protecting the integrity of rare biosphere data. |

FAQs

Q1: My sequencing results show a high number of rare taxa. How can I tell if they are real or artifacts? A1: This is a critical challenge. A high prevalence of rare taxa can be a red flag for index misassignment or contamination. To verify, check your negative controls—if the same rare taxa appear there, they are likely artifacts. Using a mock community can help you benchmark the expected rate of false positives. Furthermore, employing a sequencing platform with a lower published index-hopping rate (e.g., DNBSEQ-G400) can reduce this issue [24].

Q2: I am seeing batch effects in my data. What are the most likely causes? A2: Batch effects are often introduced by changes in reagent lots, different personnel performing the extractions, or running PCR on different days. The most robust solution is to process cases and controls randomly across all batches. Using a positive control (like a mock community or a well-characterized sample) in every batch allows you to detect and statistically correct for these effects during analysis [11].

Q3: Why can't I just use more PCR cycles to get more DNA from my low-biomass sample? A3: While increasing PCR cycles boosts yield, it comes at a high cost. Overcycling exponentially amplifies minor contaminants in reagents, increases the formation of chimeras, and exacerbates the stochastic skewing of sequence abundances. This can completely distort the true biological signal. It is better to optimize DNA extraction for higher yield and use the minimum number of PCR cycles necessary for library preparation [23] [13] [11].

Q4: My bioinformatician says my data has a lot of "chimeras." What does this mean and how did they form? A4: Chimeras are artificial DNA sequences created when an incompletely extended PCR fragment acts as a primer on a different template in a subsequent cycle. They are common in multi-template PCR reactions like 16S sequencing and falsely inflate diversity estimates. They form during PCR, and their rate can increase with higher cycle numbers. The solution is to use bioinformatic tools like DADA2 or UCHIME that are designed to detect and remove these artifactual sequences from your dataset [23] [22] [26].
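
The negative-control check described in A1 can be sketched as a simple filter. This is a minimal illustration with hypothetical taxon names, counts, and threshold; it only flags taxa for review and does not replace a statistical contamination model.

```python
def flag_possible_contaminants(sample_counts, negative_control_counts, min_control_reads=1):
    """Split a sample's taxa into (suspect, retained) based on presence in negative controls."""
    suspect, retained = {}, {}
    for taxon, count in sample_counts.items():
        if negative_control_counts.get(taxon, 0) >= min_control_reads:
            suspect[taxon] = count
        else:
            retained[taxon] = count
    return suspect, retained

# Hypothetical read counts for one sample and the pooled negative controls.
sample = {"Taxon_A": 12000, "Taxon_B": 8000, "Taxon_C": 15, "Taxon_D": 9}
negatives = {"Taxon_C": 40}

suspect, retained = flag_possible_contaminants(sample, negatives)
print("review as possible artifacts:", sorted(suspect))
```

Abundant genuine taxa can also appear in controls at low levels through cross-talk, so raising `min_control_reads` or comparing relative abundances between samples and controls may be needed before discarding anything.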

Bench-Tested Strategies: Wet-Lab and Bioinformatic Methods to Counteract Bias

Polymerase chain reaction (PCR) amplification is an integral yet problematic step in 16S rRNA gene sequencing, with bias introduced by differing amplification efficiencies between templates representing a substantial source of error [2]. Degenerate primers—oligonucleotide pools containing mixed nucleotide sequences at specific positions—have been widely adopted to improve the amplification of templates containing sequence variations in their primer-binding sites [27]. While these primers aim to increase coverage across diverse taxonomic groups, they simultaneously introduce multiple forms of bias that can distort microbial community representation [27] [2]. This technical support center provides troubleshooting guidance for researchers navigating the complexities of degenerate primer usage within 16S rRNA sequencing workflows, framed within the broader context of overcoming PCR bias.

Frequently Asked Questions (FAQs)

What are degenerate primers and how do they work?

Degenerate primers are pools of oligonucleotide sequences that contain mixed bases (such as R for A/G, Y for C/T, or N for A/C/T/G) at specific positions within their sequence. This design strategy accounts for natural genetic variation in conserved genomic regions across different microorganisms. The primary intent is to create a primer mixture where at least one variant will perfectly match the primer-binding site of a wider range of target organisms, thereby increasing the taxonomic coverage during PCR amplification [27] [28].
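
The pool encoded by a degenerate primer can be enumerated from the standard IUPAC nucleotide codes. In the sketch below the primer string is a V4 forward primer sequence as commonly published for 515F; treat it as an assumption and verify it against your own primer source. The function name is hypothetical.

```python
from itertools import product

# Standard IUPAC nucleotide ambiguity codes.
IUPAC = {
    "A": "A", "C": "C", "G": "G", "T": "T",
    "R": "AG", "Y": "CT", "S": "CG", "W": "AT", "K": "GT", "M": "AC",
    "B": "CGT", "D": "AGT", "H": "ACT", "V": "ACG", "N": "ACGT",
}

def expand_degenerate(primer):
    """Return every concrete oligonucleotide encoded by a degenerate primer."""
    return ["".join(bases) for bases in product(*(IUPAC[b] for b in primer.upper()))]

primer = "GTGYCAGCMGCCGCGGTAA"  # assumed 515F sequence; contains Y (C/T) and M (A/C)
variants = expand_degenerate(primer)
print(f"degeneracy = {len(variants)}")
for v in variants:
    print(v)
```

Degeneracy multiplies with every mixed position, so a primer with several N positions quickly becomes a pool in which each individual oligonucleotide is present at a small fraction of the nominal concentration.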

What specific problems do degenerate primers cause?

While designed to improve coverage, degenerate primers introduce several significant issues:

- Reduced Amplification Efficiency: Computational modeling and experimental measurements reveal that degenerate primers reduce PCR efficiency well before generating a substantial product pool, performing worse than non-degenerate primers even when amplifying non-consensus targets [27].

- Distorted Community Representation: Mismatched primers may anneal at low temperatures but not be extended efficiently, acting as reaction inhibitors. Furthermore, as best-matching oligonucleotides are incorporated in early PCR cycles, functional primers become progressively depleted, unpredictably biasing subsequent amplification [27].

- Off-target Amplification: High degeneracy can increase the risk of amplifying non-target DNA, including host DNA in clinical samples. One study showed that commonly used degenerate primers targeting the V4 region aligned to the human genome in up to 70% of amplicon sequence variants in gastrointestinal biopsy samples [29].

Are there alternatives to using fully degenerate primers?

Yes, several alternative approaches can mitigate the biases associated with fully degenerate primers:

- Thermal-Bias PCR: A novel single-reaction protocol uses only two non-degenerate primers with a large difference in annealing temperatures to isolate targeting and amplification stages, allowing proportional amplification of mismatched targets [27].

- Reduced Cycle Two-Step PCR: Alternative protocols separate a degenerate template-targeting stage from a non-degenerate library amplification stage, though these require cleaning intermediate samples and add labor and reagent costs [27].

- Computational Correction: Log-ratio linear models can be applied to estimate and correct for PCR amplification bias in microbiota datasets by modeling the relationship between starting template ratios and amplification efficiencies [2].

- Primer Optimization Tools: Bioinformatics tools like "Degenerate primer 111" systematically add degenerate bases to existing primers to maximize coverage for specific target microorganisms without unnecessarily increasing overall degeneracy [28].

How does primer choice impact the detection of specific taxa?

Primer selection dramatically influences which taxa are detected and their apparent abundance. Different variable regions (V-regions) of the 16S rRNA gene exhibit varying taxonomic resolutions for different bacterial groups [12]. For instance:

- Some primer pairs may completely miss specific phyla (e.g., Bacteroidetes was missed using primers 515F-944R) [12].

- Primers targeting the V1-V2 region were found to miss Fusobacteriota due to a two-base mismatch, requiring primer modification for detection [29].

- The estimated microbial composition varies significantly across primer pairs targeting different variable regions, making cross-study comparisons problematic [12].

Troubleshooting Guides

Problem: Low Taxonomic Coverage Despite Using Degenerate Primers

Issue: Your sequencing results show missing taxonomic groups that you know should be present in your samples.

Solutions:

- In Silico Validation: Before wet-lab work, use tools like TestPrime in the SILVA database to evaluate your primer set's coverage against your target microorganisms. This helps identify mismatches before experimental work [28].

- Strategic Degeneracy: Use tools like "Degenerate primer 111" to add degenerate bases strategically. This tool iteratively generates new primers that maximize coverage for specific uncovered target microorganisms without unnecessarily increasing overall degeneracy that would impact efficiency [28].

- Variable Region Selection: Consider switching the variable region you are amplifying. Studies show that no single variable region perfectly captures all diversity, and the optimal region depends on your sample type and target organisms [12] [29]. For example, V1-V2 primers demonstrated superior performance for human biopsy samples with minimal human DNA off-target amplification compared to V4 primers [29].
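
The idea behind in silico primer validation can be sketched as counting primer-template mismatches while honoring IUPAC degeneracy. This is a simplified stand-in for dedicated tools, not a reimplementation of them: it assumes the primer and the binding site are given in the same 5'→3' orientation and at equal length, and the primer and site sequences shown are illustrative assumptions.

```python
# Standard IUPAC nucleotide ambiguity codes as sets of allowed bases.
IUPAC_SETS = {
    "A": {"A"}, "C": {"C"}, "G": {"G"}, "T": {"T"},
    "R": {"A", "G"}, "Y": {"C", "T"}, "S": {"C", "G"}, "W": {"A", "T"},
    "K": {"G", "T"}, "M": {"A", "C"}, "B": {"C", "G", "T"}, "D": {"A", "G", "T"},
    "H": {"A", "C", "T"}, "V": {"A", "C", "G"}, "N": {"A", "C", "G", "T"},
}

def count_mismatches(primer, site):
    """Count positions where the site's base is not allowed by the primer's IUPAC code."""
    if len(primer) != len(site):
        raise ValueError("primer and binding site must have the same length")
    return sum(base not in IUPAC_SETS[code]
               for code, base in zip(primer.upper(), site.upper()))

primer = "GTGYCAGCMGCCGCGGTAA"          # assumed V4 forward primer sequence
matching_site = "GTGCCAGCAGCCGCGGTAA"   # covered by the degenerate positions
variant_site = "GTGCCAGCAGCTGCGGTAA"    # hypothetical site with one C->T change

print(count_mismatches(primer, matching_site), count_mismatches(primer, variant_site))
```

Running this over the binding sites of all target taxa in a reference alignment gives a rough coverage estimate; mismatches near the 3' end generally matter more than those near the 5' end, which this simple count does not capture.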

Problem: High Off-Target Amplification

Issue: A significant portion of your sequencing reads aligns to non-target DNA (e.g., host DNA in clinical samples).

Solutions:

- Primer Specificity Evaluation: Check your primer sequences for similarity to non-target genomes. One study found that the widespread off-target amplification of human DNA with V4 primers was due to significant alignment with the Homo sapiens mitochondrion haplogroup [29].

- Alternative Primer Sets: Implement primer sets with lower off-target potential. For human biopsy samples, a modified V1-V2 primer set (V1-V2M) reduced human DNA alignment to practically zero while providing higher taxonomic richness [29].

- Reduced PCR Cycles: Limit PCR cycle numbers to the minimum necessary, as late amplification cycles can exacerbate minor off-target products. However, note that one study found that simply reducing cycles did not strongly affect amplification bias and actually made the association between taxon abundance and read count less predictable [7].

Problem: Distorted Community Representation

Issue: Your microbial composition data does not match expected profiles from mock communities or other quantification methods.

Solutions:

- Thermal-Bias PCR Protocol: Implement this alternative to degenerate primers, which uses two non-degenerate primers with different annealing temperatures in a single reaction [27].

- Procedure: The protocol exploits a large difference in annealing temperatures to isolate targeting and amplification stages. Early cycles use a lower annealing temperature to allow priming even to mismatched templates, while later cycles use a higher annealing temperature for efficient amplification of products that now have perfectly matching primer-binding sites [27].

- Advantage: Allows stable amplification of targets containing substantial mismatches while maintaining proportional representation of community members without intermediate processing steps [27].

- Bias Correction Models: Apply computational correction using log-ratio linear models as proposed in [2].

- Procedure: Pool aliquots of extracted DNA from each study sample. Split this pooled sample into aliquots and amplify each for a predetermined number of PCR cycles (covering a wide range). Sequence these samples and use log-ratio linear models to estimate and correct for taxon-specific amplification efficiencies in your data [2].

- Advantage: Can mitigate PCR bias from non-primer-mismatch sources (NPM-bias) which can skew estimates of microbial relative abundances by a factor of 4 or more [2].

Problem: Inconsistent Results Between Studies

Issue: Your results cannot be directly compared with other studies using different primer sets or protocols.

Solutions:

- Standardized Mock Communities: Always include sufficiently complex mock communities in your sequencing runs. These serve as internal standards to detect aberrancies and normalize data across studies [12] [20].

- Cross-Validation: Independently validate performance for your specific sample type. Microbial profiles generated using different primer pairs need independent validation as performance varies by environment [12].

- Consistent Bioinformatics: Use consistent clustering methods (OTUs vs. ASVs) and reference databases, as these significantly impact taxonomic assignment and comparability [12] [20]. ASV methods (like DADA2) provide consistent outputs but may over-split, while OTU methods (like UPARSE) achieve clusters with lower errors but with more over-merging [20].

Performance Comparison of Common 16S rRNA Primer Sets

Table 1: Taxonomic coverage and performance metrics of commonly used primer sets

| Primer Set | Target Region | Key Features | Coverage | Reported Limitations |

|---|---|---|---|---|

| 515F-806R (Parada-Apprill) [28] [29] | V4 | Earth Microbiome Project recommended | 83.6% Bacteria, 83.5% Archaea [28] | High off-target human DNA amplification (avg. 70% ASVs in biopsies) [29] |

| 341F-785R (Klindworth et al.) [27] [28] | V3-V4 | Commonly used for bacterial communities | Varies by sample type | Degenerate primer issues: reduced efficiency, distorted representation [27] |

| 27F-338R [12] [29] | V1-V2 | Lower off-target amplification | Varies by sample type | Requires modification (V1-V2M) to capture Fusobacteriota [29] |

| BA-515F-806R-M1 [28] | V4 | Improved version with strategic degeneracy | Increased coverage of target microorganisms | Customized for specific target microorganisms |

Impact of PCR Cycle Number on Amplification Bias

Table 2: Effects of PCR protocol modifications on amplification bias

| Protocol Modification | Effect on Bias | Implementation Considerations |

|---|---|---|

| Reduced PCR Cycles (from 32 to 16) [7] | Less effect than expected; association between abundance and read count became less predictable | Requires optimization for each sample type; may reduce sensitivity |

| Increased Template Concentration (from 15ng to 60ng) [7] | Moderate improvement in abundance recovery | Requires higher DNA input; not feasible for low-biomass samples |

| Degenerate vs. Non-degenerate Primers [27] | Non-degenerate primers outperformed degenerate ones even for non-consensus targets | Challenges conventional wisdom; thermal-bias PCR offers alternative |

| Two-Step Amplification Protocols [27] | Can separate targeting from amplification stages | Adds substantial labor and reagent costs; requires clean-up steps |

Experimental Protocols

Protocol 1: Thermal-Bias PCR for Proportional Amplification

Background: This protocol addresses the fundamental flaw in degenerate primer usage by employing a temperature-based approach to handle sequence mismatches rather than sequence degeneracy [27].

Procedure:

- Reaction Setup: Prepare PCR reactions using only two non-degenerate primers. The primers should be designed with a large inherent difference in their optimal annealing temperatures.

- Thermal Cycling:

- Initial Denaturation: 95°C for 2-5 minutes

- 25-35 Cycles of:

- Denaturation: 95°C for 30 seconds

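- Annealing: Use a lower annealing temperature (e.g., 45-50°C) for the first 5-10 cycles to permit initial priming even to templates with mismatches, then switch to a higher annealing temperature for the remaining cycles so that products carrying perfectly matching primer-binding sites amplify efficiently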

- Extension: 72°C for 30-60 seconds per kb

- Final Extension: 72°C for 5-10 minutes

- Mechanism: The low initial annealing temperature allows priming to mismatched templates. Once amplified, these products now contain perfectly matching primer-binding sites for efficient amplification in subsequent cycles.

Advantages: Single-reaction protocol, no intermediate processing, maintains proportional representation of rare community members, avoids inefficiencies of degenerate primers [27].
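
The two-phase cycling schedule can be written out explicitly to check cycle counts before programming a thermocycler. The sketch below is illustrative only: the function name is hypothetical, hold times are omitted, and the 65°C high-stringency annealing temperature is an assumed value that is not given in the protocol above.

```python
def thermal_bias_program(low_cycles, high_cycles, low_anneal_c, high_anneal_c,
                         denature_c=95, extend_c=72):
    """Return the cycling program as a list of (stage, temperature_C) steps in run order."""
    if high_anneal_c <= low_anneal_c:
        raise ValueError("later cycles should anneal at a higher temperature")
    program = [("initial_denaturation", denature_c)]
    for cycle in range(low_cycles + high_cycles):
        # Early cycles tolerate mismatches; later cycles amplify matched products.
        anneal = low_anneal_c if cycle < low_cycles else high_anneal_c
        program += [("denaturation", denature_c),
                    ("annealing", anneal),
                    ("extension", extend_c)]
    program.append(("final_extension", extend_c))
    return program

# 10 low-stringency cycles at 48 C, then 20 cycles at 65 C (65 C is an assumed value).
program = thermal_bias_program(low_cycles=10, high_cycles=20,
                               low_anneal_c=48, high_anneal_c=65)
annealing_temps = [temp for stage, temp in program if stage == "annealing"]
print(f"{len(annealing_temps)} cycles; annealing temperatures: {sorted(set(annealing_temps))}")
```

Keeping the schedule as data makes it easy to vary the number of low-stringency cycles across a pilot experiment and record exactly what was run.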

Protocol 2: Computational Correction for Amplification Bias

Background: This method uses a calibration experiment and log-ratio linear models to estimate and correct for PCR bias in existing datasets [2].

Procedure:

- Calibration Sample Preparation: Prior to PCR, pool aliquots of extracted DNA from each study sample into a single pooled calibration sample.

- Cycle Gradient PCR: Split the calibration sample into multiple aliquots and amplify each aliquot for a different, predetermined number of PCR cycles (e.g., 15, 20, 25, 30 cycles).

- Sequencing and Analysis: Sequence all cycle-gradient samples alongside your main samples.

- Model Fitting: Apply log-ratio linear models to the cycle-gradient data to infer:

- The relative abundance of each taxon prior to PCR bias (intercept)

- The relative efficiencies with which each taxon is amplified (slope)

- Bias Correction: Use these calculated efficiencies to correct the relative abundances in your main experimental samples.

Advantages: Does not require mock communities or isolate libraries, corrects for both primer-mismatch and non-primer-mismatch bias sources [2].
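The model-fitting and correction steps can be illustrated with a simplified stand-in for the approach in [2]. This sketch assumes ordinary least squares on centered log-ratio (CLR) transformed counts rather than the full statistical model of the original method; the function names and the pseudocount are hypothetical choices for illustration.

```python
import numpy as np


def clr(counts, pseudocount=0.5):
    """Centered log-ratio transform of a (libraries x taxa) count matrix."""
    logged = np.log(np.asarray(counts, dtype=float) + pseudocount)
    return logged - logged.mean(axis=1, keepdims=True)


def fit_pcr_bias(cycles, counts, pseudocount=0.5):
    """Regress CLR abundance on cycle number, one line per taxon.

    Returns (intercept, slope): intercept estimates the pre-PCR CLR abundance,
    slope the per-cycle log efficiency relative to the community geometric mean.
    """
    x = np.asarray(cycles, dtype=float)
    design = np.column_stack([np.ones_like(x), x])
    coef, *_ = np.linalg.lstsq(design, clr(counts, pseudocount), rcond=None)
    return coef[0], coef[1]


def correct_sample(counts, slope, n_cycles, pseudocount=0.5):
    """Subtract the fitted bias from one sample and return corrected proportions."""
    z = clr(np.atleast_2d(counts), pseudocount)[0] - slope * n_cycles
    p = np.exp(z - z.max())  # shift by the maximum for numerical stability
    return p / p.sum()
```

Here `cycles` holds the cycle number used for each calibration aliquot and `counts` the matching taxon count table; `n_cycles` is the cycle number used for the study samples. Because the slopes are expressed on the CLR scale, they describe efficiency relative to the community average, not absolute per-cycle efficiency.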

Research Reagent Solutions

Table 3: Essential materials and tools for degenerate primer optimization

| Reagent/Tool | Function | Application Notes |
|---|---|---|
| SILVA Database [12] [28] | Curated database of aligned ribosomal RNA sequences | Use TestPrime function for in silico primer coverage evaluation |
| "Degenerate primer 111" Tool [28] | Script for strategically adding degenerate bases to existing primers | Improves coverage of specific target microorganisms without excessive degeneracy |
| Mock Communities (e.g., ABRF-MGRG, HC227) [27] [20] | Genomic DNA mixtures with known composition | Essential for validating protocol performance and quantifying bias |
| High-Fidelity Polymerases | PCR amplification with lower error rates | Reduces introduction of sequence errors during amplification |
| DADA2 [20] | Denoising algorithm for Amplicon Sequence Variants (ASVs) | Provides higher resolution than OTU-based methods; corrects sequencing errors |

Workflow Visualization

[Figure: Primer Selection Impact on Community Representation]

[Figure: Computational Bias Correction Workflow]

DNA extraction is a critical first step in 16S rRNA gene sequencing that directly determines the accuracy and reliability of microbiome research outcomes. The extraction process influences DNA yield, integrity, and most importantly, the representative inclusion of all microbial taxa present in a sample. Variations in extraction efficiency, particularly between Gram-positive and Gram-negative bacteria due to differences in cell wall structure, can introduce significant PCR bias in downstream analyses, ultimately skewing the perceived microbial community structure. This technical guide provides a comprehensive comparison of DNA extraction kits and protocols, offering troubleshooting advice to help researchers overcome these challenges and obtain more accurate, reproducible results in their microbiome studies.

FAQ: DNA Extraction and 16S rRNA Sequencing

Q1: Why does DNA extraction method impact 16S rRNA sequencing results?

Different DNA extraction methods vary in their efficiency at lysing diverse bacterial cell types. Gram-positive bacteria, with their thick peptidoglycan cell walls, are more difficult to lyse compared to Gram-negative bacteria with thinner walls. Protocols without robust mechanical lysis or specialized chemical treatments can under-represent Firmicutes and other Gram-positive taxa, introducing significant bias into your microbial community profiles [30] [31]. The DNA extraction method has been demonstrated to strongly affect the detection of bacterial communities and subsequent 16S rRNA amplicon sequencing results.

Q2: How can I minimize host DNA contamination in samples with high human-to-bacterial DNA ratios?

For human biopsy samples, blood, or other low-biomass samples, use primer sets that minimize off-target amplification of human DNA. Primers targeting the V1-V2 region have demonstrated significantly less off-target amplification compared to V4 primers, which can generate up to 70% human DNA amplicons in some biopsy samples [29]. Additionally, consider extraction protocols that incorporate steps to reduce host DNA, such as selective lysis of human cells or enzymatic degradation of human DNA prior to microbial lysis.

Q3: What is the optimal sample storage and handling procedure prior to DNA extraction?

Maintain sample sterility, freeze samples immediately at -20°C or -80°C, and avoid freeze-thaw cycles. For temporary storage, 4°C is suitable; alternatively, use preservation buffers to maintain sample integrity for hours to days before freezing [19]. Consistent handling across all samples in a study is crucial to prevent technical variation from obscuring biological signals.

Q4: How important are controls in DNA extraction for microbiome studies?

Essential. Always include:

- Negative controls (extraction blanks) to identify contamination from reagents or the environment

- Positive controls (mock communities with known composition) to verify extraction efficiency and detect biases [12] [30]

Mock communities should be of sufficient complexity and include both Gram-positive and Gram-negative bacteria to properly validate your extraction protocol.
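One simple way to put extraction blanks to work is to compare each taxon's mean relative abundance in the blanks with its mean relative abundance in the real samples. The sketch below is a crude heuristic under stated assumptions, not a validated decontamination method: the function name, the threshold, and the taxon names in the example are illustrative only.

```python
def flag_contaminants(sample_libraries, blank_libraries, ratio_threshold=1.0):
    """Flag taxa at least as abundant (relatively) in blanks as in samples.

    Each library is a dict mapping taxon name to read count.
    """
    def mean_relative_abundance(libraries):
        non_empty = [lib for lib in libraries if sum(lib.values()) > 0]
        means = {}
        for lib in non_empty:
            depth = sum(lib.values())
            for taxon, reads in lib.items():
                means[taxon] = means.get(taxon, 0.0) + reads / depth / len(non_empty)
        return means

    in_samples = mean_relative_abundance(sample_libraries)
    in_blanks = mean_relative_abundance(blank_libraries)
    return sorted(
        taxon for taxon, blank_mean in in_blanks.items()
        if blank_mean > 0 and blank_mean >= ratio_threshold * in_samples.get(taxon, 0.0)
    )


# Illustrative counts only.
samples = [{"Bacteroides": 900, "Ralstonia": 100}, {"Bacteroides": 950, "Ralstonia": 50}]
blanks = [{"Bacteroides": 20, "Ralstonia": 80}]
suspects = flag_contaminants(samples, blanks)
```

Flagged taxa are candidates for closer inspection rather than automatic removal, since low-level cross-contamination from real samples can also appear in blanks.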

Q5: Should I use bead beating in my DNA extraction protocol?

Bead beating is generally recommended for more comprehensive lysis of diverse bacteria, particularly Gram-positive species. However, the intensity and duration must be optimized: excessive bead beating can shear DNA from easily lysed bacteria and reduce DNA quality [30] [31]. Standardize bead-beating parameters across all samples in a study for reproducible results. Alternative lysis methods, such as alkaline/heat/detergent combinations, can also provide consistent lysis across bacterial populations without mechanical shearing [31].

Troubleshooting Common DNA Extraction Issues

Problem: Low DNA Yield

Potential Causes and Solutions:

- Insufficient lysis: Increase bead-beating intensity/duration or incorporate enzymatic pre-treatment (lysozyme, mutanolysin) for Gram-positive bacteria

- Sample quantity too low: Optimize sample input; some protocols work efficiently with as little as 10 mg [31]

- Inhibitors present: Add additional purification steps or use inhibitor removal kits

- DNA loss during purification: Include carrier molecules during precipitation steps

Problem: Under-Representation of Gram-Positive Bacteria

Potential Causes and Solutions:

- Insufficient cell disruption: Implement harsher lysis conditions; bead beating generally improves Gram-positive recovery [30]

- Protocol too gentle: Try the novel 'Rapid' alkaline/heat/detergent protocol, which provides more uniform lysis across bacterial types [31]

- Validation needed: Always test your protocol with mock communities containing both Gram-positive and Gram-negative bacteria

Problem: Inconsistent Results Between Samples

Potential Causes and Solutions:

- Variable bead beating: Ensure consistent tube positioning and filling in bead beaters

- Inconsistent sample handling: Standardize sample weighing, homogenization, and processing times

- Operator variation: Implement detailed SOPs and training for all personnel

- Reagent lot variation: Where possible, use the same reagent lots for entire studies

Problem: Poor DNA Quality Affecting PCR Amplification

Potential Causes and Solutions:

- DNA shearing: Reduce mechanical lysis intensity or duration

- Inhibitors co-purified: Add additional wash steps or use clean-up kits

- Degraded samples: Ensure proper storage conditions and avoid repeated freeze-thaw cycles

- Low purity: Check A260/280 ratios; optimize purification methods

Comparative Analysis of DNA Extraction Methods

Performance Metrics Across Extraction Methods

The following table summarizes key performance characteristics of different DNA extraction approaches based on comparative studies:

Table 1: Comparison of DNA Extraction Method Characteristics

| Method Type | Gram-Positive Efficiency | DNA Yield | DNA Quality | Reproducibility | Throughput |
|---|---|---|---|---|---|
| Bead Beating Protocols | High [30] | Variable | Moderate (shearing) | Moderate | Moderate |
| Enzymatic Lysis | Low to Moderate [31] | Low to Moderate | High | High | High |
| Alkaline/Heat/Detergent | High [31] | High | High | High | High |
| Spin Column Kits | Variable by kit | Variable | High | High | High |

Kit Performance Comparison

Table 2: Commercial DNA Extraction Kit Performance Comparison

| Kit/Protocol | DNA Yield | Gram-Positive Efficiency | Alpha Diversity | Best Application |
|---|---|---|---|---|
| DNeasy PowerLyzer PowerSoil (QIAGEN) | High [30] | High [30] | High [30] [32] | Complex samples (stool) |
| NucleoSpin Soil (Macherey-Nagel) | Moderate [30] | Moderate | Moderate | Environmental samples |
| ZymoBIOMICS DNA Mini | Moderate [30] | Moderate | Moderate | Standard microbiome samples |
| Novel 'Rapid' Protocol | High [31] | High [31] | High [31] | High-throughput studies |

Experimental Protocols for Method Validation

Protocol 1: Standardized DNA Extraction with Bead Beating

This protocol is adapted from the HMP protocol and has been widely used in microbiome studies [30]:

- Sample Preparation: Weigh 180-220 mg of frozen stool sample into a sterile tube

- Lysis: Add lysis buffer and perform bead beating with 0.1 mm glass beads for 2-5 minutes

- Incubation: Incubate at 70°C for 10-15 minutes

- Precipitation: Add inhibitor removal solution and centrifuge

- DNA Binding: Transfer supernatant to spin column and centrifuge

- Washing: Perform two wash steps with wash buffers

- Elution: Elute DNA in 50-100 μL elution buffer

- Quality Control: Measure DNA concentration, purity (A260/280), and fragment size
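The spectrophotometric part of the quality-control step can be sketched as a small helper. It relies on two widely used conventions: an A260 of 1.0 corresponds to roughly 50 ng/μL of double-stranded DNA at a 1 cm path length, and an A260/280 ratio near 1.8 indicates DNA largely free of protein. The function name and the acceptance window of 1.7-2.0 are assumptions and should be adjusted to your laboratory's criteria.

```python
def dna_qc(a260, a280, dilution_factor=1.0, purity_range=(1.7, 2.0)):
    """Estimate dsDNA concentration and check A260/280 purity.

    Assumes a 1 cm path length; purity_range is an illustrative window.
    """
    if a280 <= 0:
        raise ValueError("a280 must be positive")
    concentration = a260 * 50.0 * dilution_factor  # ng/uL of dsDNA
    ratio = a260 / a280
    low, high = purity_range
    return {
        "ng_per_ul": concentration,
        "a260_280": ratio,
        "purity_ok": low <= ratio <= high,
    }


# Example reading from an undiluted eluate.
result = dna_qc(a260=0.5, a280=0.27)
```

Absorbance-based readings overestimate concentration when RNA or other contaminants are present, so fluorometric quantification is a useful cross-check for low-yield extractions.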

Protocol 2: Novel 'Rapid' Alkaline/Heat/Detergent Protocol

This non-bead-beating protocol provides uniform lysis across bacterial populations [31]:

- Sample Input: Transfer 10 mg or less of sample to a 96-well plate format

- Lysis: Add alkaline lysis buffer (KOH-based) with detergents

- Heat Treatment: Incubate at 65-95°C for 5-15 minutes

- Neutralization: Add neutralization buffer

- Direct PCR: Use lysate directly for 16S rRNA gene amplification or proceed to purification

- Optional Purification: Clean up with standard silica-based columns if needed

This protocol enables rapid transfer and simultaneous lysis of 96 samples, reducing sample handling time 20-fold compared to manual methods [31].

Protocol 3: Validation Using Mock Communities

Always validate your chosen extraction protocol with mock communities:

- Select Appropriate Mock: Choose a mock community with both Gram-positive and Gram-negative bacteria (e.g., ZymoBIOMICS Microbial Community Standard)

- Parallel Processing: Extract mock community alongside your samples using the same protocol

- Sequencing and Analysis: Sequence the mock community and compare observed composition to expected composition

- Bias Assessment: Calculate extraction efficiency for different bacterial types and adjust protocol if significant biases are detected
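The bias-assessment step can be made concrete by expressing each mock member's recovery as a log2 ratio of observed to expected relative abundance, then averaging by cell-wall type. This is a minimal sketch: the function names are hypothetical, and the expected fractions must come from the mock community's documentation rather than from this example.

```python
import math


def log2_bias(observed_counts, expected_fractions):
    """log2(observed / expected) relative abundance for each mock member.

    Reads from taxa absent from the expected list (e.g. contaminants) are ignored.
    """
    total = sum(observed_counts.get(taxon, 0) for taxon in expected_fractions)
    if total == 0:
        raise ValueError("no reads assigned to any expected mock member")
    bias = {}
    for taxon, expected in expected_fractions.items():
        observed = observed_counts.get(taxon, 0) / total
        # A member that was not detected at all gets negative infinity.
        bias[taxon] = math.log2(observed / expected) if observed > 0 else float("-inf")
    return bias


def mean_bias_by_group(bias, groups):
    """Average log2 bias per group, e.g. Gram-positive vs Gram-negative."""
    grouped = {}
    for taxon, value in bias.items():
        grouped.setdefault(groups[taxon], []).append(value)
    return {group: sum(values) / len(values) for group, values in grouped.items()}
```

A strongly negative mean for the Gram-positive group relative to the Gram-negative group points to incomplete lysis and suggests revisiting bead-beating or enzymatic pre-treatment conditions.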

Research Reagent Solutions

Table 3: Essential Reagents for Optimized DNA Extraction

| Reagent/Category | Function | Examples/Alternatives |
|---|---|---|
| Lysis Matrix | Mechanical cell disruption | 0.1 mm glass beads, ceramic beads, zirconia/silica beads |
| Enzymatic Additives | Enhanced lysis of tough cells | Lysozyme, mutanolysin, proteinase K |
| Inhibitor Removal | Remove PCR inhibitors | PTB, silica columns, size exclusion chromatography |
| Binding Matrices | DNA purification | Silica membranes, magnetic beads, cellulose matrices |
| Alkaline Lysis Solutions | Chemical lysis | KOH/NaOH with detergent combinations [31] |
| Stool Preprocessing | Standardization | Stool preprocessing devices (SPD) for consistent homogenization [30] |

Optimizing DNA extraction is fundamental to reducing PCR bias in 16S rRNA sequencing studies. The selection of an appropriate extraction method must balance efficiency across diverse bacterial types, DNA quality, and practical considerations like throughput and cost. Based on current evidence, protocols incorporating either rigorous bead beating or the novel alkaline/heat/detergent approach provide the most comprehensive lysis of both Gram-positive and Gram-negative bacteria. Most importantly, researchers should validate their chosen method with mock communities and maintain strict consistency throughout their study to ensure reproducible, reliable microbiome profiling results.

FAQs: Understanding and Minimizing PCR Chimeras

Q1: What are PCR chimeras and why are they a critical problem in 16S rRNA sequencing?

PCR chimeras are hybrid DNA molecules formed when an incomplete extension product from one template acts as a primer on a different, related template during subsequent PCR cycles [33]. In 16S rRNA sequencing, they are a major source of artifacts because they can be falsely interpreted as novel bacterial species, thereby inflating apparent microbial diversity. One study found that chimeras can constitute over 45% of sequences in some libraries, significantly skewing diversity estimates [33] [4].